U.S. Pat. No. 12,172,075

Systems and Methods for Programming Movements Of Player-Controlled Avatars in Video Games

Assignee: Activision Publishing, Inc.

Issue Date: October 23, 2023

Abstract

Systems and methods are described for imparting dynamic, non-linear and realistic movement and look and feel to a player's/virtual character's first-person limbs and hand-held object model, and for procedurally animating a first-person virtual camera such that it simulates the movement of a camera handheld by the player/virtual character. To impart chaotic or random motion to the first-person limbs and hand-held object model, a first module defines and implements first and second two-dimensional mass-spring-damper systems, each of which is linked to the player's/virtual character's view vector. Procedural animation or rotational shake is implemented by a second module by applying a coherent noise function to each of the six axes of the first-person virtual camera.

Description

DETAILED DESCRIPTION

The present specification is directed towards multiple embodiments. The following disclosure is provided in order to enable a person having ordinary skill in the art to practice the invention. Language used in this specification should not be interpreted as a general disavowal of any one specific embodiment or used to limit the claims beyond the meaning of the terms used therein. The general principles defined herein may be applied to other embodiments and applications without departing from the spirit and scope of the invention. Also, the terminology and phraseology used is for the purpose of describing exemplary embodiments and should not be considered limiting. Thus, the present invention is to be accorded the widest scope encompassing numerous alternatives, modifications and equivalents consistent with the principles and features disclosed. For the purpose of clarity, details relating to technical material that is known in the technical fields related to the invention have not been described in detail so as not to unnecessarily obscure the present invention.

The term “a multi-player online gaming environment” or “massively multiplayer online game” may be construed to mean a specific hardware architecture in which one or more servers electronically communicate with, and concurrently support game interactions with, a plurality of client devices, thereby enabling each of the client devices to simultaneously play in the same instance of the same game. Preferably the plurality of client devices number in the dozens, preferably hundreds, preferably thousands. In one embodiment, the number of concurrently supported client devices ranges from 10 to 5,000,000 and every whole number increment or range therein. Accordingly, a multi-player gaming environment or massively multi-player online game is a computer-related technology, a non-generic technological environment, and should not be abstractly considered a generic method of organizing human activity divorced from its specific technology environment.

In various embodiments, a computing device includes an input/output controller, at least one communications interface and system memory. The system memory includes at least one random access memory (RAM) and at least one read-only memory (ROM). These elements are in communication with a central processing unit (CPU) to enable operation of the computing device. In various embodiments, the computing device may be a conventional standalone computer or alternatively, the functions of the computing device may be distributed across multiple computer systems and architectures.

In some embodiments, execution of a plurality of sequences of programmatic instructions or code enables or causes the CPU of the computing device to perform various functions and processes. In alternate embodiments, hard-wired circuitry may be used in place of, or in combination with, software instructions for implementation of the processes of systems and methods described in this application. Thus, the systems and methods described are not limited to any specific combination of hardware and software.

The term “module”, “application” or “engine” used in this disclosure may refer to computer logic utilized to provide a desired functionality, service or operation by programming or controlling a general purpose processor. Stated differently, in some embodiments, a module, application or engine implements a plurality of instructions or programmatic code to cause a general purpose processor to perform one or more functions. In various embodiments, a module, application or engine can be implemented in hardware, firmware, software or any combination thereof. The module, application or engine may be interchangeably used with unit, logic, logical block, component, or circuit, for example. The module, application or engine may be the minimum unit, or part thereof, which performs one or more particular functions.

The term “coherent noise” used in this disclosure may refer to any type of smooth pseudorandom noise. Coherent noise is generated by a coherent-noise function, which may be defined by the following characteristics: a) passing in the same input value will always return the same output value, b) a small change in the input value will produce a small change in the output value, and c) a large change in the input value will produce a seemingly random change in the output value.
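A minimal sketch of a one-dimensional coherent-noise function that satisfies properties a) through c), assuming a gradient-noise construction: a pseudorandom slope at each integer lattice point, blended with a quintic fade curve. The hash constants and function names are illustrative assumptions, not part of the specification.

```python
import math


def _lattice_gradient(i: int, seed: int = 0) -> float:
    """Deterministic pseudorandom gradient in [-1, 1] for integer lattice point i."""
    # Illustrative integer hash: the same (i, seed) always yields the same value (property a).
    h = (i * 374761393 + seed * 668265263) & 0xFFFFFFFF
    h = ((h ^ (h >> 13)) * 1274126177) & 0xFFFFFFFF
    h ^= h >> 16
    return (h / 0xFFFFFFFF) * 2.0 - 1.0


def _fade(t: float) -> float:
    # Quintic fade 6t^5 - 15t^4 + 10t^3: smooth blend with zero slope at t = 0 and t = 1.
    return t * t * t * (t * (t * 6.0 - 15.0) + 10.0)


def coherent_noise_1d(x: float, seed: int = 0) -> float:
    """1D gradient noise: deterministic, continuous and smooth, zero at integer inputs."""
    i0 = math.floor(x)
    t = x - i0
    # Each neighbouring lattice point contributes gradient * signed distance.
    n0 = _lattice_gradient(i0, seed) * t
    n1 = _lattice_gradient(i0 + 1, seed) * (t - 1.0)
    # Small changes in x give small changes in output (property b); inputs far apart
    # land on unrelated lattice gradients and look random (property c).
    return n0 + (n1 - n0) * _fade(t)
```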

The term “Perlin Noise” used in this disclosure may refer to a type of coherent noise that is the sum of several coherent-noise functions of ever-increasing frequencies and ever-decreasing amplitudes. Each coherent-noise function that is part of a Perlin Noise function is called an octave.

The term “octave” used in this disclosure may refer to one of the coherent-noise functions in a series of coherent-noise functions that are added together to form Perlin Noise. These coherent-noise functions are called octaves because each octave has, by default, double the frequency of the previous octave. The number of octaves controls the amount of detail of Perlin Noise. Adding more octaves increases the detail of Perlin Noise, with the added drawback of increasing the calculation time.

In embodiments, the three translational motion axes (translation in x, y, and z) are orthogonal to each other. In embodiments, a 3D body can be rotated about three orthogonal axes, which may be referred to as “pitch”, “roll”, and “yaw”. It should be noted, however, that in some embodiments, when an offline transform is performed on calculations in a system defined by three orthogonal axes to semantically convert them into angles (i.e., the value in each axis is multiplied by 360 degrees so that the values can be referred to as “angles in pitch, yaw, and roll”), the values in yaw, pitch, and roll (Euler angles) may not be orthogonal to each other. Thus, in embodiments, the axes used for translation calculations are not orthogonal to the axes used for rotation calculations.

The term “amplitude” used in this disclosure may refer to a maximum absolute value that a specific coherent-noise function can output.

The term “frequency” used in this disclosure may refer to a number of cycles per unit length that a specific coherent-noise function outputs.

The term “persistence” used in this disclosure may refer to a multiplier that determines how quickly the amplitudes diminish for each successive octave in a Perlin Noise function. The amplitude of each successive octave is equal to the product of the previous octave's amplitude and the persistence value. Increasing the persistence produces “rougher” Perlin Noise.
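The octave, amplitude, frequency and persistence definitions combine as in this sketch, which sums octaves of a simple coherent-noise function. As an assumption for brevity, the base function is 1D value noise (pseudorandom values at integer lattice points, blended with smoothstep) rather than Ken Perlin's gradient noise; all names are hypothetical.

```python
import math


def value_noise_1d(x: float, seed: int = 0) -> float:
    """Coherent 1D value noise in [-1, 1] (illustrative integer hash + smoothstep)."""
    def lattice(i: int) -> float:
        h = (i * 374761393 + seed * 668265263) & 0xFFFFFFFF
        h = ((h ^ (h >> 13)) * 1274126177) & 0xFFFFFFFF
        h ^= h >> 16
        return (h / 0xFFFFFFFF) * 2.0 - 1.0

    i0 = math.floor(x)
    t = x - i0
    s = t * t * (3.0 - 2.0 * t)  # smoothstep weight between neighbouring lattice values
    return lattice(i0) + (lattice(i0 + 1) - lattice(i0)) * s


def perlin_1d(x: float, octaves: int = 4, frequency: float = 1.0,
              amplitude: float = 1.0, persistence: float = 0.5, seed: int = 0) -> float:
    """Sum of octaves: each doubles the frequency and scales amplitude by persistence."""
    total = 0.0
    for octave in range(octaves):
        # A different seed per octave decorrelates the layers.
        total += amplitude * value_noise_1d(x * frequency, seed + octave)
        frequency *= 2.0          # next octave: double the previous frequency
        amplitude *= persistence  # next amplitude = previous amplitude * persistence
    return total
```

With the defaults (4 octaves, persistence 0.5) the octave amplitudes are 1, 0.5, 0.25 and 0.125, so the output magnitude never exceeds 1.875. Raising the persistence toward 1 keeps the high-frequency octaves louder, giving the “rougher” noise the persistence definition describes.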

The term “first-person” used in this disclosure may refer to a visual perspective that is presented to a human player of a video game. In the first-person, the visual perspective is designed to make the human player feel as if his or her own eyes are viewing the presented scene.

Therefore, in a “first-person” video game, the graphical perspective is rendered from the viewpoint of the human player's virtual character, or a viewpoint from a camera mounted on the body of the human player's virtual character, which typically means the graphical perspective visually presents any portion of the virtual character, such as the limbs or objects (such as, for example, weapons) being held by the virtual character, that would naturally enter into the virtual character's field of view.

In a video game with a first-person perspective, a player uses inputs such as gamepads, joysticks, mice, trackpads, etc., to navigate and look around a game world. In some cases, a targeting crosshair, placed in the center of the player's viewpoint, may be used to represent the player's focus or, in cases where the player may be holding a virtual weapon, where ammunition would impact if fired from the virtual character's weapon. In this context, the term “dead zone” used in this disclosure may indicate how responsive the human player's controls are when moving the virtual character's viewpoint and, with it, the crosshairs. With a small dead zone, the “Torso Anchor” will move immediately when the human player manipulates his controls. A larger dead zone requires the player to move the controls farther from their point of rest in order to move the crosshairs on a display screen.

The term “game time” used in this disclosure may refer to a timer that starts when a match begins and advances at a constant rate for all players. The game time is deterministic across the one or more game servers and all clients. The system references important events in terms of their game time (for example, “40 damage was applied to player 3 at game time 135468”). Thus, game time is a constantly advancing timer in milliseconds indicating how much time has elapsed since the match began. In one embodiment, game time is specific to an instance of a game and only common to all players of that specific game instance. In another embodiment, game time is universal across all instances of games being played such that, regardless of when a specific game instance was initiated, it would reference the same clock as another game instance which may have begun earlier or later in time.

In the description and claims of the application, each of the words “comprise,” “include,” and “have,” and forms thereof, are not necessarily limited to members in a list with which the words may be associated. It should be noted herein that any feature or component described in association with a specific embodiment may be used and implemented with any other embodiment unless clearly indicated otherwise.

As used herein, the indefinite articles “a” and “an” mean “at least one” or “one or more” unless the context clearly dictates otherwise.

Overview

FIG. 1 illustrates an embodiment of a multi-player online gaming or massively multiplayer online gaming system/environment 100 in which the systems and methods of the present specification may be implemented or executed. The system 100 comprises a client-server architecture, where one or more game servers 105 are in communication with one or more client devices 110 over a network 115. Players and non-players, such as computer graphics artists or designers, may access the system 100 via the one or more client devices 110. The client devices 110 comprise computing devices such as, but not limited to, personal or desktop computers, laptops, Netbooks, handheld devices such as smartphones, tablets, and PDAs, gaming consoles and/or any other computing platform known to persons of ordinary skill in the art. Although three client devices 110 are illustrated in FIG. 1, any number of client devices 110 can be in communication with the one or more game servers 105 over the network 115.

The one or more game servers 105 can be any computing device having one or more processors and one or more computer-readable storage media such as RAM, hard disk or any other optical or magnetic media. The one or more game servers 105 include a plurality of modules operating to provide or implement a plurality of functional, operational or service-oriented methods of the present specification. In some embodiments, the one or more game servers 105 include or are in communication with at least one database system 120. The database system 120 stores a plurality of game data associated with at least one game that is served or provided to the client devices 110 over the network 115. In some embodiments, the one or more game servers 105 may be implemented by a cloud of computing platforms operating together as game servers 105.

In accordance with aspects of the present specification, the one or more game servers 105 provide or implement a plurality of modules or engines such as, but not limited to, a master game module 130, an object and arms movement (OAM) module 132, and a virtual camera movement (VCaM) module 134. In some embodiments, the one or more client devices 110 are configured to implement or execute one or more of a plurality of client-side modules, some of which are the same as or similar to the modules of the one or more game servers 105. For example, in some embodiments each of the player and non-player client devices 110 executes a client-side game module 130′ (also referred to as client game module 130′) that integrates a client-side object and arms movement module 132′ and a client-side virtual camera movement module 134′.

In some embodiments, the at least one non-player client device 110g is used by the computer graphics artist or designer to log into the one or more game servers 105 (via the client game module 130′) and execute the modules 132 and 134 on the server to customize, adjust or modulate, offline, a first plurality of parameters associated with a virtual character's torso and handheld object orientations and a second plurality of parameters associated with procedural animation or rotational shake motion of a virtual character's/player's virtual camera. The tuned first and second pluralities of parameters are stored in the at least one database 120.

While various aspects of the present specification are being described with reference to functionalities or programming distributed across multiple modules or engines 132 and 134, it should be appreciated that, in some embodiments, some or all of the functionalities or programming associated with these modules or engines may be integrated within fewer modules or in a single module, such as, for example, in the master game module 130 itself on the server side and in the client game module 130′ on the client side.

In embodiments, the master game module 130 is configured to execute an instance of an online game to facilitate interaction of the players with the game. In embodiments, the instance of the game executed may be synchronous, asynchronous, and/or semi-synchronous. The master game module 130 controls aspects of the game for all players and receives and processes each player's input in the game. In other words, the master game module 130 hosts the online game for all players, receives game data from the client devices 110 and transmits updates to all client devices 110 based on the received game data so that the game, on each of the client devices 110, represents the most updated or current status with reference to interactions of all players with the game. Thus, the master game module 130 transmits game data over the network 115 to the client devices 110 for use and rendering by the game module 130′ to provide local versions and current status of the game to the players.

On the client side, each of the one or more player client devices 110 implements the game module 130′ that operates as a gaming application to provide a player with an interface between the player and the game. The game module 130′ generates the interface to render a virtual environment, virtual space or virtual world associated with the game and enables the player to interact in the virtual environment to perform a plurality of game and other tasks and objectives. The game module 130′ accesses game data received from the game server 105 to provide an accurate representation of the game to the player. The game module 130′ captures and processes player inputs and interactions within the virtual world or environment and provides updates to the game server 105 over the network 115.

The database system 120 described herein may be, include, or interface to, for example, an Oracle™ relational database sold commercially by Oracle Corporation. Other databases, such as Informix™, DB2 (Database 2) or other data storage, including file-based, or query formats, platforms, or resources such as OLAP (On Line Analytical Processing), SQL (Structured Query Language), a SAN (storage area network), Microsoft Access™ or others may also be used, incorporated, or accessed. The database system 120 may comprise one or more such databases that reside in one or more physical devices and in one or more physical locations.

Object and Arms Movement (OAM) Module

In various embodiments, the OAM module or engine 132 implements a plurality of instructions or programmatic code to enable the movement of a virtual character's arms and hand-held object to be perceived, from a first-person perspective, as sufficiently chaotic or random, thereby imparting a dynamic, non-linear and realistic look and feel to the movement of the virtual character and hand-held object. To impart this realistic, chaotic or random motion, the module 132 defines and implements a double two-dimensional (2D) mass-spring-damper system. In some embodiments, the double 2D mass-spring-damper system comprises a first mass-spring-damper unit or sub-system defined to model movement of the virtual character's arms and a second mass-spring-damper unit or sub-system defined to model movement of the virtual character's hand-held object.

In some embodiments, each of the first and second mass-spring-damper units or sub-systems is associated with, linked, anchored or coupled to the virtual character's view vector in a way that generates a perception that the first mass-spring-damper unit or sub-system is linked to the virtual character's torso or shoulder and the second mass-spring-damper unit or sub-system is linked to a tip of the hand-held object such as, but not limited to, a weapon or gun.

It should be appreciated that the nature of the double 2D mass-spring-damper system ensures that, when applied, the movement of the virtual character's arms and object does not feel too linear. At the same time, the chaotic movement generated by the first and second spring systems is bounded by the dampers in these spring systems: the faster the mass elements of the first and second spring systems move, the greater the frictional force the dampers apply against them.

In embodiments, the two 2D mass-spring-damper systems are modeled with the applied force (that is, the force generated by movement of the virtual character) directed to the tip of an object, such as a weapon or gun, so that it leads the object, as opposed to what happens in reality, where the force is applied to the handle of the gun. It should be appreciated that all of the steps, algorithms, or other processes described herein are implemented in the form of programmatic instructions, stored in a non-transient memory, and executed by at least one processor in the video game client or hosting server.

A 2D Mass-Spring-Damper Unit or Sub-System

A damped harmonic oscillator, or a mass-spring-damper system, is a system where a motion between an anchor point and a moving mass is controlled by a spring and a damper variable. The force on the spring increases as the anchor and mass move farther apart. The damper variable generates a frictional force that opposes any motion of the mass, and its frictional force increases as the relative velocity between the anchor and mass increases. In embodiments of the present specification, the anchor is not fixed in space. The anchor is moved by game logic and the mass essentially follows it around via the forces of the mass-spring-damper system, as described herein. Accordingly, the programmatic instructions taught herein, when executed, determine a first location of an anchor point based on one or more parameters of the game or data generated in the course of the game, apply the mass-spring-damper logic described herein, and determine a position and orientation of the mass based upon the applied mass-spring-damper logic.

To integrate a motion of a mass-spring-damper unit over time in one dimension, an equation for a damped harmonic oscillator is used which is implemented based on data generated for a frame of the video game that is currently being rendered and used to generate a relative velocity and a relative displacement for a mass and an anchor in a subsequent frame of the video game. More specifically, the client device or server determines, for a first frame of a predefined duration, an offset or displacement between a mass and an anchor and a relative velocity between the mass and the anchor. The client device or server then determines, for a subsequent frame of a predefined duration, an offset or displacement between the mass and the anchor and the relative velocity between the mass and the anchor, assuming that the time duration of the subsequently rendered frame is equal to that of the first frame. This process serves to advance the calculation of a mass-spring-damper system along a straight line forward in time using the Forward Euler Method of numerical integration.

$$a = -k\,x_0 - c\,v_0$$

$$v_1 = a\,t + v_0$$

$$x_1 = v_1\,t + x_0$$

where $a$ is the relative acceleration between the mass and anchor; $k$ is a spring coefficient or constant; $c$ is a damper coefficient or constant; $x_0$ is the current offset between the mass and anchor; $v_0$ is the current relative velocity between the mass and anchor; $t$ is the time step between the current frame and the next frame; $v_1$ is the next frame's relative velocity between the mass and anchor; and $x_1$ is the next frame's offset between the mass and anchor.
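A direct transcription of these three equations, assuming unit mass (the equations carry no mass term, so $k$ and $c$ can be read as per-unit-mass coefficients); the function name is hypothetical. Because $x_1$ is computed from the updated $v_1$ rather than $v_0$, this ordering is what numerical-methods texts usually call semi-implicit (symplectic) Euler; a strict Forward Euler step would use $x_1 = v_0 t + x_0$.

```python
def spring_step_explicit(x0: float, v0: float, k: float, c: float, t: float):
    """One update of a damped spring using the three equations above; returns (x1, v1).

    x0, v0: current offset and relative velocity between mass and anchor.
    k, c:   spring and damper coefficients (unit mass assumed).
    t:      time step to the next frame, in seconds.
    """
    a = -k * x0 - c * v0  # relative acceleration from the current state
    v1 = a * t + v0       # advance velocity with the current acceleration
    x1 = v1 * t + x0      # advance offset with the *updated* velocity
    return x1, v1
```

With k = 100 and c = 10, a 0.01 s frame from x0 = 1, v0 = 0 moves the offset gently to x1 = 0.99, but a single 0.3 s hitch from the same state gives v1 = −30 and x1 = −8, an overshoot eight times larger than the starting offset.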

However, the aforementioned calculations of v1 and x1 are unstable at variable frame rates; that is, the relative velocities and offsets calculated using the mass-spring-damper system would appear to jitter unexpectedly if successive rendered frames had slightly different durations.

In accordance with some embodiments, the client device or server implements a Backward Euler Method of numerical integration to calculate the relative velocity (v1) and relative offset (x1) for a more stable result in the case of variable frame rates. The Backward Euler Method starts from the assumption that the next rendered frame's acceleration between mass and anchor (in contrast to the current relative acceleration) is already known, and the equations are then solved using the information available from the current rendered frame.

For example, the equations to integrate a damped harmonic oscillator in one dimension or axis become:

$$a_1 = -k\,x_1 - c\,v_1$$

(Backward Euler; note that $x_1$ and $v_1$ are unknowns.) Expressing $v_1$ in terms of values available in the current frame ($x_0$, $v_0$, and $t$):

$$v_1 = a_1\,t + v_0$$

Substituting for $a_1$ from above:

$$v_1 = (-k\,x_1 - c\,v_1)\,t + v_0$$

Substituting $x_1 = v_1\,t + x_0$ and simplifying:

$$v_1 = \frac{v_0 - k\,t\,x_0}{1 + k\,t^2 + c\,t}$$

$$x_1 = v_1\,t + x_0$$

where $a_1$ is the next rendered frame's acceleration between the mass and anchor.

In the client or server, programmatically determining x1 and v1 using the Backward Euler Method defines a mass-spring-damper system that is much more tolerant of irregular values for ‘t’ over time (that is, for variable or uneven frame rates), and the result is a well-behaved damped spring system. While the aforementioned equations and calculations, based on the Backward Euler Method, are for a one dimensional (1D) mass-spring-damper system, for higher dimensions the calculations are performed independently for each axis. Stated differently, the 2D mass-spring-damper unit or system of the present specification is a 1D mass-spring-damper system in the X axis, and another instance of the same 1D system in the Y axis.
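A sketch of the closed-form Backward Euler step and of a 2D unit built from two independent 1D springs (pitch and yaw), assuming unit mass as in the equations above. Function names are hypothetical, and the frame-time list is an arbitrary uneven sequence chosen for illustration.

```python
def spring_step_backward_euler(x0: float, v0: float, k: float, c: float, t: float):
    """One Backward Euler step of a unit-mass damped spring; returns (x1, v1).

    Closed-form solution of a1 = -k*x1 - c*v1, v1 = a1*t + v0, x1 = v1*t + x0.
    """
    v1 = (v0 - k * t * x0) / (1.0 + k * t * t + c * t)
    x1 = v1 * t + x0
    return x1, v1


def spring_step_2d(offset, velocity, k, c, t):
    """A 2D unit is two independent 1D springs: index 0 = pitch, 1 = yaw (radians)."""
    pitch, pitch_vel = spring_step_backward_euler(offset[0], velocity[0], k, c, t)
    yaw, yaw_vel = spring_step_backward_euler(offset[1], velocity[1], k, c, t)
    return (pitch, yaw), (pitch_vel, yaw_vel)


# Uneven frame durations in seconds, including a 0.3 s hitch, repeated 20 times.
frame_times = [0.016, 0.033, 0.3, 0.008, 0.016] * 20
offset, velocity = (0.2, -0.1), (0.0, 0.0)
for dt in frame_times:
    offset, velocity = spring_step_2d(offset, velocity, k=100.0, c=10.0, t=dt)
```

The offsets decay toward zero despite the hitch. Because the denominator $1 + k t^2 + c t$ grows with $t$, a long frame shrinks the correction: for $x_0 = 1$, $v_0 = 0$, $k = 100$, $c = 10$ and $t = 0.3$ s this step gives $x_1 = 4/13 \approx 0.31$, whereas the explicit equations earlier in this section give $x_1 = -8$ for the same inputs.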

It should be noted that the module 132 implements the first and second 2D mass-spring-damper units or sub-systems to operate in Euler angles; namely, the X-axis represents pitch, and the Y-axis represents yaw. Therefore, offsets or displacements (being angular in nature) in the equations are in radians, and velocities are angular velocities in radians per second. Consequently, the calculated angular displacements in the first and second spring systems at a given time are applied to the virtual character's limbs and objects to establish more realistic first-person movements.

Determining Orientation of a Virtual Character's Limbs and Object During Gameplay

FIG. 2A is a flowchart of a plurality of exemplary steps of a method 200a of dynamically determining the orientation or position of a virtual character's limbs, particularly arms, and object as the virtual character is turned by a player during gameplay, in accordance with some embodiments of the present specification. In embodiments, the human player's virtual character is characterized by a torso having a mass and an anchor. In embodiments, the player's virtual character is holding an object that is characterized by a mass and an anchor. In some embodiments, the method 200a is implemented by the OAM module 132, in one or more game servers 105, in data communication with a client game module 130′ on the player's client device 110 of FIG. 1. As discussed earlier in this specification, each of the first and second mass-spring-damper units or sub-systems is associated with, linked, anchored or coupled to the virtual character's view vector or viewpoint.

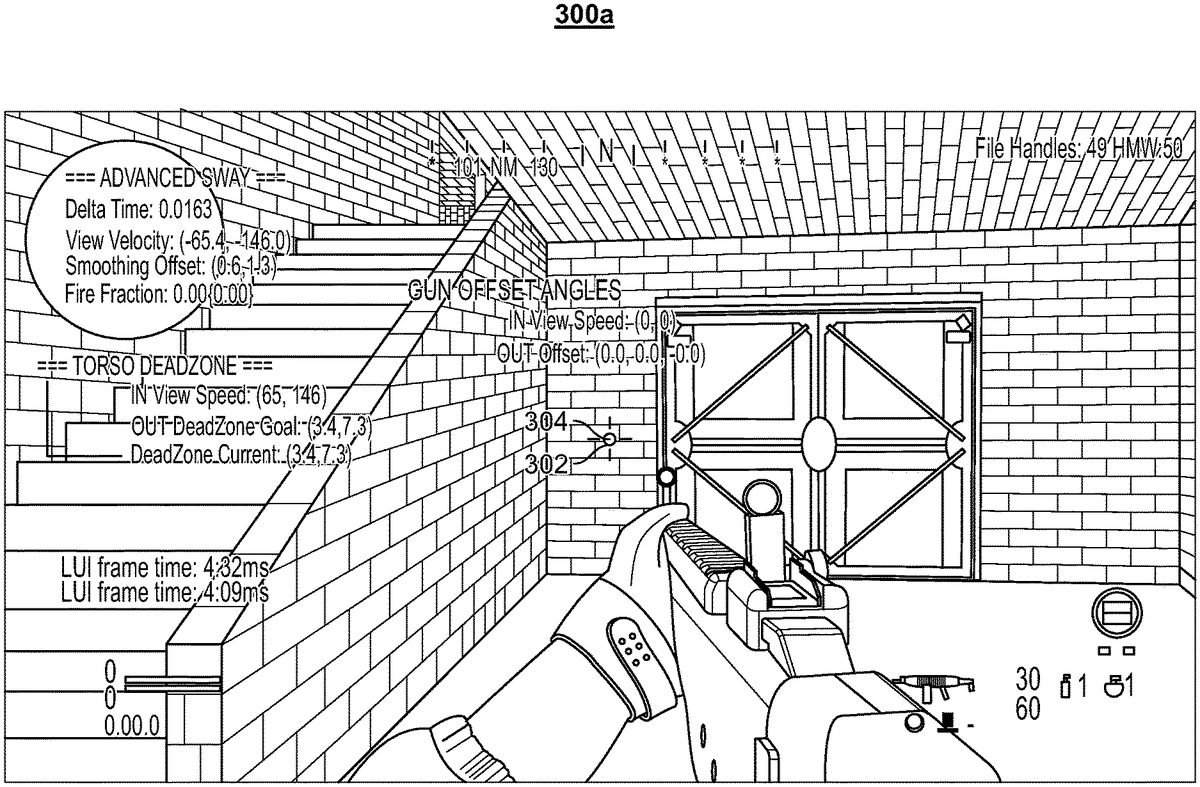

At step 202, the module 132 calculates a current “Torso Anchor” orientation from the virtual character's view vector orientation and view vector angular velocity. As shown in FIG. 3A, while the virtual character's angular view velocity is zero, the “Torso Anchor” orientation 302 (represented by a first sphere 302 in the figure) is aligned with the player's view vector or viewpoint 304 (represented by a white sphere 304 placed in the center of a targeting crosshair); that is, the “Torso Anchor” orientation 302 is centered in the middle of the screen 300a.

Referring now to FIG. 3B, when the virtual character has a non-zero view vector angular velocity (that is, the player is turning or moving his virtual character), a dead zone 306 (represented by a rectangle in the figure) of a size or area is considered or generated around the virtual character's moving or turning view vector 304 orientation, as shown in screen 300b. In some embodiments, the size or area of the dead zone 306 is a function of the virtual character's view angular velocity. In some embodiments, the “Torso Anchor” orientation 302 is dragged, moved or turned along with the virtual character's moving or turning view vector 304 only when the “Torso Anchor” orientation 302 hits a wall of the dead zone 306. Therefore, the faster the virtual character turns, the farther the “Torso Anchor” orientation 302 falls behind or lags the virtual character's view vector 304.
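A minimal one-axis sketch of this dead zone behavior, assuming the dead zone is centered on the view vector and that its size is the full width of the zone, so the anchor may lag by at most half of it before being dragged. The function name `update_torso_anchor` is hypothetical.

```python
def update_torso_anchor(anchor: float, view: float, dead_zone_size: float) -> float:
    """Keep the Torso Anchor inside a dead zone centred on the view angle (one axis).

    Assumes dead_zone_size is the zone's full width in degrees.
    """
    half = dead_zone_size / 2.0
    lag = anchor - view
    if lag > half:     # anchor hit the zone's upper wall: drag it along with the view
        return view + half
    if lag < -half:    # anchor hit the zone's lower wall
        return view - half
    return anchor      # still inside the zone: the anchor stays put
```

Applied separately to pitch and yaw each frame, turning at 2 degrees per frame with a 10 degree dead zone leaves the anchor 5 degrees behind the view once it reaches the wall. Because the ‘view speed to dead zone size’ curve passes through (0, 0), a view at rest eventually drives the dead zone size to zero, and the clamp then pulls the anchor back onto the view vector as in FIG. 3A.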

In some embodiments, a plurality of steps is implemented in order to determine a dead zone size in an axis. FIG. 2B is a flowchart of a plurality of steps of a method 200b of using the virtual character's view vector angular velocity (in degrees per second) in an axis in order to determine a dead zone size (in degrees) in that axis. In embodiments, the method 200b is implemented by the module 132.

Referring now to FIG. 2B, at step 203a, the virtual character's current view vector angular velocity is smoothed in each axis using a function, such as a first-order infinite impulse response filter that applies weighting factors which decrease exponentially, referred to as an Exponential Moving Average function. The weighting for each older datum decreases exponentially, never reaching zero, thereby weighting new data more than older data. Data corresponding to a ‘dead zone blend duration’ parameter 406 (FIG. 4), customized by a graphics designer, determines an amount of time over which the smoothing is performed. The smoothing process generates a “smoothed view velocity” that is less dependent on the instantaneous motions of the virtual character and instead more generally represents the recent motion of the virtual character.
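Step 203a in one axis might look like the following, assuming the ‘dead zone blend duration’ acts as the time constant of a frame-rate-independent exponential moving average with weight 1 − exp(−dt / duration). The specification does not give the exact formula, and the function name is hypothetical.

```python
import math


def smooth_view_velocity(smoothed: float, current: float, dt: float,
                         blend_duration: float) -> float:
    """Exponential moving average of view angular velocity (deg/s), one axis.

    Assumes 'dead zone blend duration' is the EMA time constant in seconds.
    """
    if blend_duration <= 0.0:
        return current  # no smoothing requested
    # Weight given to the newest sample; older samples decay by exp(-dt / duration)
    # per frame, so their influence shrinks exponentially but never reaches zero.
    alpha = 1.0 - math.exp(-dt / blend_duration)
    return smoothed + alpha * (current - smoothed)
```

Deriving the weight from `dt` means two 0.125 s frames produce the same smoothed value as one 0.25 s frame, so the result does not depend on frame rate.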

At step 203b, data corresponding to the parameters ‘in max view speed’ 410, ‘out max dead zone size’ 412 and ‘view speed to dead zone size’ 408 (FIG. 4) are used to perform a nonlinear mapping from the “smoothed view velocity” to a “goal dead zone size”. The ‘view speed to dead zone size’ 408 sub-asset can be modeled as a two-dimensional (2D) graph in both the X and Y axes, with a point at (0, 0), a point at (1, 1), and designer-placed points in between. With suitably placed points, this allows relatively low view vector speeds to have a large impact on dead zone size and higher view vector speeds to have a diminishingly smaller impact on dead zone size.
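A sketch of step 203b, assuming the ‘view speed to dead zone size’ sub-asset is evaluated as a piecewise-linear curve (a production asset might use a spline instead) and that speeds are normalized by ‘in max view speed’ and clamped to [0, 1]. Function and argument names are hypothetical.

```python
from bisect import bisect_right


def goal_dead_zone_size(smoothed_speed: float, in_max_view_speed: float,
                        out_max_dead_zone_size: float,
                        curve_points: list[tuple[float, float]]) -> float:
    """Map a smoothed view speed (deg/s) to a goal dead zone size (deg), one axis.

    curve_points stands in for the 'view speed to dead zone size' asset: (x, y) pairs
    sorted by x on [0, 1], starting at (0, 0) and ending at (1, 1).
    """
    # Normalise the speed into the curve's [0, 1] input range.
    x = min(abs(smoothed_speed) / in_max_view_speed, 1.0)
    xs = [p[0] for p in curve_points]
    i = bisect_right(xs, x) - 1  # index of the segment that starts at or below x
    if i >= len(curve_points) - 1:
        y = curve_points[-1][1]
    else:
        (x0, y0), (x1, y1) = curve_points[i], curve_points[i + 1]
        y = y0 + (y1 - y0) * (x - x0) / (x1 - x0)  # linear interpolation in segment
    return y * out_max_dead_zone_size
```

With hypothetical curve points (0, 0), (0.2, 0.6), (1, 1), an ‘in max view speed’ of 180 deg/s and an ‘out max dead zone size’ of 12 deg, a speed of 18 deg/s yields 3.6 deg and 36 deg/s yields 7.2 deg, while every speed from 36 to 180 deg/s adds only the remaining 4.8 deg.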

At step 203c, after the “goal dead zone size” is calculated, smoothing is performed from the “current dead zone size” toward the “goal dead zone size”. This prevents the dead zone from changing too rapidly in response to quick virtual character movements. This smoothing is defined by the ‘dead zone adjust rate’ 414 of FIG. 4. In some embodiments, the smoothing corresponds to a linear tracking algorithm in which the rate of dead zone change is expressed in degrees per second.
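Step 203c's linear tracking can be sketched as a rate-limited move toward the goal, assuming the ‘dead zone adjust rate’ is given in degrees per second; the function name is hypothetical.

```python
def track_dead_zone_size(current: float, goal: float, adjust_rate: float,
                         dt: float) -> float:
    """Move the current dead zone size toward the goal by at most adjust_rate * dt."""
    max_change = adjust_rate * dt  # largest change allowed this frame, in degrees
    delta = goal - current
    if abs(delta) <= max_change:
        return goal  # close enough to land exactly on the goal this frame
    return current + max_change if delta > 0 else current - max_change
```

Unlike the exponential smoothing of step 203a, this approaches the goal at a constant speed and reaches it in finite time.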

At step 203d, the game is rendered in accordance with the determined “goal dead zone size”.

Since the dead zone size is controlled by the virtual character's angular view velocity, this also prevents the “Torso Anchor” from snapping back to center if the player were to rapidly move his mouse back and forth. It should be appreciated that the “Torso Anchor” is driven by the player's (or virtual character's) motion and the dead zone.

Referring back to FIG. 2A, at step 204, the module 132 calculates a current “Torso Mass” orientation from the current “Torso Anchor” orientation determined earlier at step 202. In embodiments, a first 2D mass-spring-damper system, representing pitch and yaw in Euler angles, is used to connect the current “Torso Mass” orientation to the current “Torso Anchor” orientation. The “Torso Mass” follows the “Torso Anchor” due to the physics of the first 2D mass-spring-damper system. FIG. 3C shows the “Torso Mass” orientation 308 (represented by a second sphere 308 in the figure) with reference to the dead zone 306, the “Torso Anchor” orientation 302 and the virtual character's view vector 304 in screen 300c.

At step 206, the module 132 calculates a current “Object Anchor” orientation from the virtual character's view vector angular velocity and the “Torso Mass” orientation determined earlier at step 204. In some embodiments, the module 132 is configured to calculate the current “Object Anchor” orientation by adding a first angular offset or displacement to the current “Torso Mass” orientation. In some embodiments, the first angular offset or displacement is calculated by scaling the virtual character's view vector angular velocity in the corresponding axis. As a result, the current “Object Anchor” orientation or position leads ahead of the current “Torso Mass” orientation or position in the direction the virtual character is turning. FIG. 3D shows the current “Object Anchor” orientation 310 (represented by a third sphere 310 in the figure) with reference to the “Torso Mass” orientation 308 (represented by a second sphere 308 in the figure), the dead zone 306, the “Torso Anchor” orientation 302 and the virtual character's view vector 304 in screen 300d.
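Step 206 reduces to a per-axis sum: the “Torso Mass” orientation plus the view vector angular velocity scaled in each axis. A sketch, assuming orientations are (pitch, yaw) tuples in degrees and angular velocity is in degrees/second; `lead_scale` is a hypothetical per-axis tuning value that the specification does not name.

```python
def object_anchor(torso_mass, view_angular_velocity, lead_scale):
    """Return the "Object Anchor" as (pitch, yaw).

    torso_mass: current "Torso Mass" orientation in degrees.
    view_angular_velocity: view vector angular velocity in degrees/second.
    lead_scale: hypothetical per-axis scale (seconds) that turns angular
        velocity into the first angular offset, so the anchor leads ahead
        of the torso mass in the direction of the turn.
    """
    return tuple(t + s * w
                 for t, w, s in zip(torso_mass, view_angular_velocity, lead_scale))
```

When the view stops rotating, the offset term vanishes and the anchor coincides with the “Torso Mass”.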

At step 208, the module 132 calculates a current “Object Mass” orientation from the current “Object Anchor” orientation determined earlier at step 206. In embodiments, a second 2D mass-spring-damper system, representing pitch and yaw in Euler angles, is used to connect the current “Object Mass” orientation to the current “Object Anchor” orientation. FIG. 3E shows the current “Object Mass” orientation 312 (represented by a fourth sphere 312 in the figure) with reference to the “Object Anchor” orientation 310, the “Torso Mass” orientation 308, the dead zone 306, the “Torso Anchor” orientation 302 and the virtual character's view vector 304 in screen 300e.

At step 210, the module 132 uses the offset of the “Torso Mass” orientation from the view vector orientation as angles to pivot the virtual character's arms and object assembly near the virtual character's shoulder. In other words, the module 132 calculates a second angular offset between the “Torso Mass” orientation and the view vector orientation, and applies it as a rotation to the virtual character's arms and object model at the virtual character's shoulder.

Finally, at step 212, the module 132 uses the offset of the “Object Mass” orientation from the view vector orientation as angles to pivot the virtual character's arms and object assembly at a point on the object specified by a computer graphics designer. In other words, the module 132 calculates a third angular offset between the “Object Mass” orientation and the view vector orientation, and applies it as a rotation to the virtual character's arms and object model at the designer-specified pivot point on the object. A computer graphics designer or artist may specify the pivot point by configuring the value of parameter 436 in FIG. 4.
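Steps 210 and 212 each take the difference between a mass orientation and the view vector. The sketch below computes both offsets, wrapping each difference into [-180, 180) degrees so that crossing the 0/360 boundary does not produce a large spurious rotation. The wrapping and the dictionary keys are assumptions; actually applying the rotations to a skeleton (at the shoulder and at the designer pivot point 436) is left to the engine.

```python
def wrap_degrees(angle):
    """Wrap an angle into [-180, 180) degrees."""
    return (angle + 180.0) % 360.0 - 180.0


def angular_offset(mass_orientation, view_orientation):
    """Per-axis (pitch, yaw) offset of a mass orientation from the view vector."""
    return tuple(wrap_degrees(m - v)
                 for m, v in zip(mass_orientation, view_orientation))


def sway_rotations(torso_mass, object_mass, view):
    """Return the two rotations the engine would apply to the view model."""
    return {
        # Second angular offset: applied at the shoulder (step 210).
        "shoulder": angular_offset(torso_mass, view),
        # Third angular offset: applied at the designer pivot point (step 212).
        "object_pivot": angular_offset(object_mass, view),
    }
```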

In some embodiments, the virtual character's object is one that can be aimed (for example, a gun, arrow, javelin, spear, telescope, etc.). It should be appreciated that such an object's line-of-sight orientation is substantially a function of the angular offset of the object mass as well as the angular offset of the torso mass. In some embodiments, when the player (or the virtual character) aims down the object's line of sight, the module 132 temporarily blends out all procedural movement in the gameplay scene to ensure that the object is aligned with the virtual character's view vector.

In accordance with some aspects of the present specification, the module 132 is configured to generate at least one GUI (Graphical User Interface) to enable a graphics artist or designer to customize one or more parameters, properties or characteristics associated with a virtual character's torso and object (gun, in a non-limiting example) orientations. The at least one GUI is generated in response to the graphics designer's request through a client game module 130′ on his client device 110g (FIG. 1).

FIG. 4 shows an exemplary GUI 400 that displays a plurality of customizable parameters associated with a virtual character's torso and handheld gun orientations, in accordance with some embodiments of the present specification. The GUI 400 displays a checkbox 402 which, when checked by a graphics designer, enables the module 132 to implement the sway motion method 200 of FIG. 2 for a virtual character's first-person arms and object model during gameplay.

A first portion 400a displays a first plurality of parameters related to a virtual character's torso orientation or position. In some embodiments, the first plurality of parameters comprises:
- Dead zone blend duration 406: this parameter determines the amount of time over which a virtual character's current view velocity is smoothed in each axis. Higher values of the parameter 406 result in slower dead zone shape change, making the virtual character's arms and object model (or view model) less sensitive to sudden motions, and vice versa. In some embodiments, this smoothing is applied before performing a non-linear mapping from a “smoothed view velocity” to a “goal dead zone size”.
- View speed to dead zone size 408: this parameter is utilized in creating a nonlinear mapping between view vector speeds and dead zone size.
- In max view speed 410: this parameter is utilized in creating a nonlinear mapping between view vector speeds and dead zone size.
- Out max dead zone size 412: this parameter is utilized in creating a nonlinear mapping between view vector speeds and dead zone size.
- Dead zone adjust rate 414: this parameter drives a simple smoothing algorithm applied to the dead zone size after nonlinear mapping. The “current dead zone size” in each axis blends towards the resulting “goal dead zone size” in each axis at a rate specified by the parameter 414. Higher values of the parameter 414 result in a snappier dead zone response to virtual character movements.
- Torso mass 416: this parameter determines the mass or inertia of the “Torso Mass” itself. Higher values of the parameter 416 slow down the entire mass-spring-damper system by adding inertia without affecting the characteristic behavior of a specific set of tuning values. That is, if a designer creates a certain ‘look’ or ‘behavior’ that is simply moving too fast or too slow, the designer can adjust this ‘mass’ value 416 to make it behave the same, only slower or faster.
- Spring constants 418: this parameter determines the spring constant ‘k’ of the first two-dimensional mass-spring-damper system. The spring constant represents how stiff the spring is; stiffer (more difficult to stretch) springs have higher spring constants.
- Damper constants 420: this parameter determines the damper constant ‘c’ of the first two-dimensional mass-spring-damper system. The damper constant represents a frictional force that opposes any motion of the torso mass. Higher damper constants result in higher frictional forces.

Each of the parameters 410, 412, 414, 416, 418 and 420 is configured to have first and second values corresponding to pitch and yaw rotational motions.

A second portion 400b displays a second plurality of parameters related to a gun orientation or position, the gun being handheld by the virtual character. In some embodiments, the second plurality of parameters comprises:
- Gun orientation blend duration 422: this parameter determines a smoothing value for a virtual character's view velocity in each axis before performing nonlinear mapping.
- View speed to gun offset 424: this parameter is utilized in creating a nonlinear mapping between view vector speeds and gun offset. Higher values of this parameter result in slower dead zone shape change, making the virtual character's arms and gun (object) model (or view model) less sensitive to sudden motions.
- In max view speed 426: this parameter is utilized in creating a nonlinear mapping between view vector speeds and gun offset.
- Out max gun offset 428: this parameter is utilized in creating a nonlinear mapping between view vector speeds and gun offset.
- Gun mass 430: this parameter determines the mass or inertia of the “Gun Mass” itself. Higher values of the parameter 430 slow down the entire mass-spring-damper system by adding inertia without affecting the characteristic behavior of a specific set of tuning values. That is, if a designer creates a certain ‘look’ or ‘behavior’ that is simply moving too fast or too slow, the designer can adjust this ‘mass’ value 430 to make it behave the same, only slower or faster.
- Spring constants 432: this parameter determines the spring constant ‘k’ of the second two-dimensional mass-spring-damper system. The spring constant represents how stiff the spring is; stiffer (more difficult to stretch) springs have higher spring constants.
- Damper constants 434: this parameter determines the damper constant ‘c’ of the second two-dimensional mass-spring-damper system. The damper constant represents a frictional force that opposes any motion of the gun mass. Higher damper constants result in higher frictional forces.
- Gun pivot point 436: this parameter specifies a pivot point on the gun around which the resulting “Gun Mass” rotation is applied. In embodiments, rotation from the “Torso Mass” is applied at the shoulder, while rotation from the “Gun Mass” is applied at the pivot point 436 on the virtual character's arms and object model (or view model).
- Yaw-to-roll scale 438: this parameter introduces a small amount of roll (rotation about the z axis) on the virtual character's arms and object model at the specified pivot point. The parameter 438 is a scale that is multiplied by the current frame's “Gun Mass” rotation y component (yaw) to calculate the “Gun Mass” rotation z component (roll). This effectively makes the gun roll into sharp turns. Higher values make the gun roll more as it turns.

Each of the parameters 426, 428, 430, 432 and 434 is configured to have first and second values corresponding to pitch and yaw rotational motions.

A third portion 400c displays a third plurality of parameters related to fire blending. The third plurality of parameters is directed towards customizing a characteristic of the entire mass-spring-damper system while the player (or the virtual character) is firing, such as tightening it, so that the weapon appears better aligned with the virtual character's view vector while shooting. (Note that bullets are technically emitted from the camera and not the gun.) In some embodiments, the third plurality of parameters (‘Fire’ settings) comprises:
- Fire duration 440: the duration, after firing a bullet, during which the system uses the settings of the third plurality of parameters.
- Fire start blend duration 442: the duration over which to blend to the settings of the third plurality of parameters when starting to fire.
- Fire finish blend duration 444: the duration over which to blend out of the settings of the third plurality of parameters once the duration 440 has elapsed.
- Fire smoothing rate 446: the virtual character's view vector is smoothed at this rate to calculate a base orientation of the virtual character's arms while firing, in units of radians/second per radian of displacement between the current and goal orientations.
- Fire torso dead zone scale 448: this parameter scales the torso dead zone by this value while firing.
- Fire torso-to-gun scale 450: this parameter scales the final gun orientation (that is, the difference between the “Gun Mass” and the virtual character's view vector) by this value while firing.
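A sketch of how the ‘Fire’ settings might be blended, assuming a weight that ramps in over the fire start blend duration 442, holds until the fire duration 440 elapses, and ramps out over the fire finish blend duration 444, and assuming the start blend is no longer than the fire duration. The fire smoothing rate 446, expressed in radians/second per radian of displacement, is read here as a first-order (exponential) approach toward the goal. All function names are hypothetical.

```python
import math


def fire_blend_weight(t_since_fire, fire_duration, start_blend, finish_blend):
    """Weight in [0, 1] for the 'Fire' settings, t seconds after the last shot.

    Assumes start_blend <= fire_duration: ramp in (442), hold until the fire
    duration (440) elapses, then ramp out (444).
    """
    if t_since_fire < 0.0:
        return 0.0
    if t_since_fire < start_blend:
        return t_since_fire / start_blend
    if t_since_fire < fire_duration:
        return 1.0
    out_t = t_since_fire - fire_duration
    if out_t < finish_blend:
        return 1.0 - out_t / finish_blend
    return 0.0


def lerp(a, b, w):
    return a + (b - a) * w


def apply_fire_settings(dead_zone, gun_offset, w,
                        fire_dead_zone_scale, fire_torso_to_gun_scale):
    """Blend the torso dead zone (448) and gun orientation (450) scales by w."""
    dz = dead_zone * lerp(1.0, fire_dead_zone_scale, w)
    gun_scale = lerp(1.0, fire_torso_to_gun_scale, w)
    return dz, tuple(g * gun_scale for g in gun_offset)


def smooth_toward(current, goal, rate, dt):
    """First-order approach: d(current)/dt = rate * (goal - current).

    `rate` is in radians/second per radian of displacement (446); the exact
    solution over one frame is an exponential decay of the remaining gap.
    """
    return goal + (current - goal) * math.exp(-rate * dt)
```

Scales below 1.0 shrink the dead zone and pull the gun toward the view vector, which is one way to read the "tightening" the specification describes.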

The third plurality of parameters of the GUI 400 enables weapons to be tuned to have a unique feel per weapon class. For example, missile launchers can be made to feel very heavy, with a slow sway that pivots primarily around the shoulder.

Virtual Camera Movement (VCaM) Module

In various embodiments, the virtual camera movement module or engine 134 implements a plurality of instructions or programmatic code to procedurally animate the virtual camera such that it simulates the movement of a camera handheld by the virtual character. In some embodiments, the module 134 implements procedural camera animation (or camera shake behavior) by applying coherent noise, such as Perlin Noise, to each of the six axes of a first-person virtual camera, that is, the X, Y, and Z axes in translation (forward/backward, left/right, up/down) and Yaw, Pitch, and Roll in rotation. An embodiment uses six octaves of Perlin Noise sampled in one dimension for each of the six camera axes. Alternate embodiments may apply other coherent noise functions, such as Simplex Noise, or may use a plurality of layered sin() functions.
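As an illustration of the coherent noise described above, here is a minimal 1D gradient (Perlin-style) noise with a fractal sum over octaves. The `frequency`, `amplitude`, and `persistence` arguments mirror the camera shake parameters; `lacunarity` (the per-octave frequency multiplier, 2.0 here) and the random gradient table are assumptions of this sketch, not details from the specification.

```python
import math
import random


class Perlin1D:
    """1D gradient noise: random slopes at integer lattice points."""

    def __init__(self, seed=0, size=256):
        rng = random.Random(seed)
        self.size = size
        self.grad = [rng.uniform(-1.0, 1.0) for _ in range(size)]

    def noise(self, x):
        i0 = math.floor(x)
        t = x - i0                                         # position in cell
        u = t * t * t * (t * (t * 6.0 - 15.0) + 10.0)      # quintic fade
        d0 = self.grad[i0 % self.size] * t                 # left lattice point
        d1 = self.grad[(i0 + 1) % self.size] * (t - 1.0)   # right lattice point
        return 2.0 * (d0 + u * (d1 - d0))                  # lies within [-1, 1]


def fractal_noise(perlin, x, octaves=6, frequency=1.0, amplitude=1.0,
                  persistence=0.5, lacunarity=2.0):
    """Sum octaves; persistence sets how fast amplitude falls per octave."""
    total, freq, amp = 0.0, frequency, amplitude
    for _ in range(octaves):
        total += amp * perlin.noise(x * freq)
        freq *= lacunarity
        amp *= persistence
    return total
```

Because every octave is smooth, samples taken at nearby times differ only slightly, which is the frame-to-frame coherence relied on here; raising `persistence` gives the high-frequency octaves more weight and therefore a rougher shake.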

In accordance with some aspects, the module 134 is configured to sample Perlin Noise every frame of gameplay, generating a value for the time corresponding to each frame. The module 134 then directly uses the generated value as an offset to one of the six axes of the local player's (or virtual character's) virtual camera. Persons of ordinary skill in the art would appreciate that a distinguishing characteristic of Perlin Noise is that adjacently sampled values are similar, resulting in smooth transitions from frame to frame, even though the overall result is pseudorandom. This property is what makes it a type of coherent noise.

In embodiments, the module 134 is configured to dynamically adjust or modulate one or more of a plurality of parameters (also referred to as ‘camera shake parameters’) input to the Perlin Noise function. In some embodiments, the plurality of parameters comprises a number of octaves (this value never changes and is always six in each axis, in accordance with some embodiments), amplitude, frequency, and persistence. In some embodiments, amplitude, frequency, and persistence are the parameters that are adjusted or modulated according to what the player (or the virtual character) is doing while playing the game to change the overall camera shake behavior.

In some embodiments, the one or more of the plurality of parameters provided to the Perlin Noise calculation are adjusted or modulated in direct proportion to the virtual character's linear and angular velocity in the game. That is, the one or more of the plurality of parameters respond linearly to player actions. So, for example, sprinting quickly through a game map will create a much faster, stronger, and rougher camera shake than slowly walking through a game level would. As another example, higher movement speed can affect pitch rotational shake roughness more than it affects roll rotational shake.

In some embodiments, the one or more of the plurality of parameters can also respond non-linearly to virtual character actions or movements. For example, some of the plurality of parameters will greatly increase when the virtual character accelerates from 10 units per second to 100 units per second but will only increase slightly in the range from 100 units per second to 250 units per second.

In accordance with aspects of the present specification, the one or more of the plurality of parameters can be controlled by graphics artists or designers on a per-axis basis. In some embodiments, the plurality of parameters can alternatively be controlled directly from designer-written game script, for example to add shake to a scene as the virtual character sits in a helicopter on the ground and the helicopter's rotors are spinning up in preparation for takeoff. In this case, the virtual character's velocity does not change but it is still desirable to add camera shake.

In accordance with some aspects of the present specification, the module 134 is configured to generate at least one GUI (Graphical User Interface) to enable a graphics artist or designer to customize one or more parameters, properties or characteristics associated with procedural animation or rotational shake motion of a player's (or virtual character's) virtual camera. The at least one GUI is generated in response to the graphics designer's request through a client game module 130′ on his client device 110g (FIG. 1).

FIG. 5 shows an exemplary GUI 500 that displays a plurality of customizable parameters associated with procedural animation or rotational shake motion of a player's (or virtual character's) virtual camera, in accordance with some embodiments of the present specification. In some embodiments, the rotational shake motion of the virtual camera mimics a camera handheld by the player (or the virtual character). The GUI 500 displays a checkbox 501 which, when checked by a graphics designer, enables the module 134 to implement the handheld camera rotational motion of method 600 of FIG. 6 during gameplay.

A first portion 500a displays a first plurality of parameters related to the camera rotational shake or motion. In some embodiments, the first plurality of parameters comprises: frequency 502, amplitude 504, persistence/roughness 506 and view-move scale combine mode 508. In some embodiments, the parameter 508 has one of first, second or third attributes/options, which correspond to ‘maximum’, ‘average’ or ‘multiply’, respectively. To calculate final values for frequency 502, amplitude 504, and persistence 506, the system independently calculates these values from the virtual character's movement speed (that is, translation speed through the virtual world) and from the virtual character's angular view velocity (that is, rotation velocity). Once each of the three parameters 502, 504 and 506 has been calculated from both the movement speed and the angular view velocity, the combine mode 508 determines how the two resulting values for each parameter are combined. Thus, if the first option of ‘maximum’ is chosen for the parameter 508, the system uses, for each of the frequency 502, amplitude 504, and persistence 506 parameters, the larger of the value derived from movement speed and the value derived from angular view velocity. If the second option of ‘average’ is chosen, the system uses the average of the two values for each parameter. If the third option of ‘multiply’ is chosen, the system multiplies the two values and uses the product for each parameter. Each of the parameters 502, 504, 506 and 508 is configured to have first, second and third values corresponding to pitch, yaw and roll rotational motions.
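The combine mode 508 can be sketched as a small reduction applied to each shake parameter, assuming each of frequency, amplitude, and persistence has already been derived once from movement speed and once from angular view velocity. The enum and function names are hypothetical.

```python
from enum import Enum


class CombineMode(Enum):
    MAXIMUM = "maximum"
    AVERAGE = "average"
    MULTIPLY = "multiply"


def combine(from_move, from_view, mode):
    """Combine one shake parameter derived from movement and from view rotation."""
    if mode is CombineMode.MAXIMUM:
        return max(from_move, from_view)
    if mode is CombineMode.AVERAGE:
        return 0.5 * (from_move + from_view)
    if mode is CombineMode.MULTIPLY:
        return from_move * from_view
    raise ValueError(f"unknown combine mode: {mode}")


def combine_shake_params(move_params, view_params, mode):
    """Apply the combine mode (508) to frequency, amplitude and persistence."""
    return {name: combine(move_params[name], view_params[name], mode)
            for name in ("frequency", "amplitude", "persistence")}
```

With ‘multiply’, either source can suppress a parameter entirely when its derived value is zero, whereas ‘maximum’ lets the stronger source dominate.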

A second portion 500b displays a second plurality of parameters that define modifiers to the frequency 502, amplitude 504 and persistence 506 based on the virtual character's movement. In some embodiments, the second plurality of parameters comprises:
- Modifier 510: this parameter is configured as a drop-down list of options such as, for example, ‘speed control’ and ‘script control’. Under ‘speed control’, the virtual character's movement speed/velocity is used to control the frequency 502, amplitude 504 and persistence 506 values. Under ‘script control’, arbitrary game logic can directly supply values to the system. That is, scripters can choose to increase shake intensity as a stationary helicopter's rotors begin to spin, for example.
- Non-linear map 512: this parameter controls whether the second plurality of parameters respond non-linearly to virtual character movement. In some embodiments, RumbleGraph assets are used to define the non-linear mapping of the second plurality of parameters to a virtual character's movement. A RumbleGraph asset defines the non-linear mapping from [0, 1] on the X axis to [0, 1] on the Y axis.
- In speed blend in time 514: when the virtual character's current movement speed is less than a goal movement speed, the blend time 514 is used in a smoothing function, such as a type of Exponential Moving Average. Increasing the value 514 makes the shake parameters change more slowly in response to virtual character motion.
- In speed blend out time 516: when the virtual character's current movement speed is greater than the goal movement speed, the blend time 516 is used in a smoothing function, such as a type of Exponential Moving Average. Increasing the value 516 makes the shake parameters change more slowly in response to virtual character motion.
- In player move speed 518: inputs to the non-linear map must be in the range [0, 1]. The value 518 specifies a maximum movement speed so that a value in that range can be calculated from the virtual character's current movement speed. That is, the virtual character's current movement speed divided by the ‘in player move speed’ 518 is used as the input to the non-linear map. Setting the value 518 above the virtual character's maximum in-game speed may result in the maximum frequency 502, amplitude 504 and persistence 506 values never being reached. The value 518 should generally be set to the virtual character's maximum expected speed in-game.
- Out frequency scale 520: from its input, the non-linear map produces an output value in [0, 1]. The output value is multiplied by the value of the parameter 520 to produce a final frequency scale value used for sampling Perlin Noise. Higher values result in faster shaking.
- Out amplitude scale 522: from its input, the non-linear map produces an output value in [0, 1]. The output value is multiplied by the value of the parameter 522 to produce a final amplitude scale value used for sampling Perlin Noise. Higher values of the parameter 522 result in greater shake amplitude (larger overall movement on screen).
- Out persistence scale 524: from its input, the non-linear map produces an output value in [0, 1]. The output value is multiplied by the value of the parameter 524 to produce a final persistence scale value used for sampling Perlin Noise. Higher values of the parameter 524 result in rougher shake, in other words, less smooth and with more high-frequency detail.
- Out blend in time 526 and Out blend out time 528: the parameters 526, 528 are similar to the parameters 514, 516, respectively, but control the blend in/out speed of the resulting current frequency scale, amplitude scale, and persistence scale values calculated from the virtual character's movement speed. For example, if the previous amplitude scale value was 1.1 and the current frame's goal amplitude scale value is 1.3 (calculated from the virtual character's current movement speed mapped through the RumbleGraph non-linear function), the blend in time 526 would be used to blend the previous amplitude scale value toward the goal. In other words, even though the current frame's goal amplitude scale is 1.3, the final amplitude scale value for the current frame may actually be about 1.2, since the blend in time 526 must be respected. This provides another means of smoothing out rapid changes in amplitude, frequency, and persistence.
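The ‘speed control’ chain of the second portion 500b can be sketched as: smooth the movement speed with asymmetric blend times (514/516), normalize by ‘in player move speed’ 518 and clamp to [0, 1], pass the result through the non-linear map, multiply by the out scales (520 to 524), then smooth the resulting scales again with the out blend times (526/528). The exponential-moving-average form `1 - exp(-dt / blend_time)` and representing a RumbleGraph asset as a plain callable are assumptions; the same structure would apply to the angular-view-velocity chain of the third portion 500c.

```python
import math
from dataclasses import dataclass, field
from typing import Callable


def ema_toward(current, goal, blend_in, blend_out, dt):
    """Exponential smoothing with separate times for rising and falling."""
    blend_time = blend_in if current < goal else blend_out
    if blend_time <= 0.0:
        return goal
    alpha = 1.0 - math.exp(-dt / blend_time)
    return current + alpha * (goal - current)


@dataclass
class SpeedShakeModifier:
    in_blend_in: float            # 514
    in_blend_out: float           # 516
    in_player_move_speed: float   # 518: speed that maps to 1.0
    out_frequency_scale: float    # 520
    out_amplitude_scale: float    # 522
    out_persistence_scale: float  # 524
    out_blend_in: float           # 526
    out_blend_out: float          # 528
    # Stand-in for a RumbleGraph asset: [0, 1] -> [0, 1]. Identity by default.
    nonlinear_map: Callable[[float], float] = lambda x: x
    smoothed_speed: float = 0.0
    scales: list = field(default_factory=lambda: [0.0, 0.0, 0.0])

    def update(self, move_speed, dt):
        """Return (frequency, amplitude, persistence) scales for this frame."""
        self.smoothed_speed = ema_toward(self.smoothed_speed, move_speed,
                                         self.in_blend_in, self.in_blend_out, dt)
        x = min(max(self.smoothed_speed / self.in_player_move_speed, 0.0), 1.0)
        y = self.nonlinear_map(x)
        goals = (y * self.out_frequency_scale,
                 y * self.out_amplitude_scale,
                 y * self.out_persistence_scale)
        self.scales = [ema_toward(cur, goal, self.out_blend_in,
                                  self.out_blend_out, dt)
                       for cur, goal in zip(self.scales, goals)]
        return tuple(self.scales)
```

In the 1.1 to 1.3 example above, a single `ema_toward(1.1, 1.3, ...)` call returns a value strictly between the two, which is the "about 1.2" behavior the blend in time 526 is meant to produce.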

A third portion 500c displays a third plurality of parameters that define modifiers to the frequency 502, amplitude 504 and persistence 506 based on the movement of the virtual character's view vector orientation. In some embodiments, the third plurality of parameters comprises:
- Modifier 530: this parameter is configured as a drop-down list of options such as, for example, ‘speed control’ and ‘script control’. Under ‘speed control’, the virtual character's angular view speed/velocity is used to control the frequency 502, amplitude 504 and persistence 506 values. Under ‘script control’, arbitrary game logic can directly supply values to the system.
- Non-linear map 532: this parameter controls whether the third plurality of parameters respond non-linearly to virtual character view orientation movement. In some embodiments, RumbleGraph assets are used to define the non-linear mapping of the third plurality of parameters to a virtual character's view orientation movement or angular view velocity. A RumbleGraph asset defines the non-linear mapping from [0, 1] on the X axis to [0, 1] on the Y axis.
- In speed blend in time 534: when the virtual character's current angular view velocity is less than a goal angular view velocity, the blend time 534 is used in a smoothing function, such as a type of Exponential Moving Average. Increasing the value 534 makes the shake parameters change more slowly in response to virtual character view rotation.
- In speed blend out time 536: when the virtual character's current angular view velocity is greater than the goal angular view velocity, the blend time 536 is used in a smoothing function, such as a type of Exponential Moving Average. Increasing the value 536 makes the shake parameters change more slowly in response to virtual character view rotation.
- In player view speed 538: inputs to the non-linear map must be in the range [0, 1]. The value 538 specifies a maximum angular view velocity so that a value in that range can be calculated from the virtual character's current angular view velocity. That is, the virtual character's current angular view velocity divided by the ‘in player view speed’ 538 is used as the input to the non-linear map. Setting the value 538 above the virtual character's maximum in-game angular view velocity may result in the maximum frequency 502, amplitude 504 and persistence 506 values never being reached. The value 538 should generally be set to the virtual character's maximum expected angular view velocity in-game.
- Out frequency scale 540: from its input, the non-linear map produces an output value in [0, 1]. The output value is multiplied by the value of the parameter 540 to produce a final frequency scale value used for sampling Perlin Noise. Higher values result in faster shaking.
- Out amplitude scale 542: from its input, the non-linear map produces an output value in [0, 1]. The output value is multiplied by the value of the parameter 542 to produce a final amplitude scale value used for sampling Perlin Noise. Higher values of the parameter 542 result in greater shake amplitude (larger overall movement on screen).
- Out persistence scale 544: from its input, the non-linear map produces an output value in [0, 1]. The output value is multiplied by the value of the parameter 544 to produce a final persistence scale value used for sampling Perlin Noise. Higher values of the parameter 544 result in rougher shake, in other words, less smooth and with more high-frequency detail.
- Out blend in time 546 and Out blend out time 548: the parameters 546, 548 are similar to the parameters 526, 528, respectively.

A Method of Imparting Procedural Animation or Realistic Shake Motion to a Virtual Camera Handheld by a Player/Virtual Character

FIG. 6 is a flowchart of a plurality of exemplary steps of a method 600 of imparting procedural animation or shake motion to a handheld virtual camera associated with a virtual character in a video game, in accordance with some embodiments of the present specification. In some embodiments, the method 600 is implemented by the VCaM module 134, in one or more game servers 105, in data communication with a client game module 130′ on the player's client device 110 of FIG. 1. As discussed earlier in this specification, the procedural motion of the virtual camera is defined with reference to six camera axes, that is, the X, Y, and Z axes in translation (forward/backward, left/right, up/down) and Yaw, Pitch, and Roll in rotation.

At step 602, the module 134 uses the virtual character's current linear velocity, angular velocity, and ‘script state’ to calculate current amplitude, frequency, and persistence parameter values for input to a Perlin Noise function. ‘Script state’ means that the module 134 is configured to utilize one or more events or states in the gameplay itself to directly affect the camera shake motion. This is akin to the ‘script control’ option of the modifier parameter 510 of FIG. 5, which enables arbitrary game logic (events or states) to directly supply values to the module 134. For example, designers can choose to increase camera shake intensity as a stationary helicopter's rotors begin to spin (a game logic event or state).

At step 604, the module 134 samples the Perlin Noise function, for a frame of gameplay, using the amplitude, frequency and persistence parameters along with the current ‘game time’ in order to generate a camera angular offset value in one axis (one dimension). An embodiment uses six octaves of Perlin Noise sampled in one dimension.

At step 606, the module 134 scales the generated value by a designer-specified scale value for one of the six camera axes. The scaled value is then used as an offset to that axis of the virtual character's virtual camera.

Steps 602, 604 and 606 are repeated to impart procedural animation or camera shake motion to each of the remaining five camera axes.

Finally, at step 608, the module 134 moves the orientation of the virtual camera based on the scaled generated values.
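A per-frame sketch of steps 604 to 608, assuming the step 602 parameters have already been computed for each axis. For brevity it uses layered sin() functions, the alternative coherent source mentioned for the module 134, instead of Perlin Noise; the per-axis phases, the 2.0 frequency multiplier between layers, and all names are illustrative assumptions.

```python
import math
from dataclasses import dataclass

AXES = ("x", "y", "z", "yaw", "pitch", "roll")


@dataclass
class ShakeParams:
    frequency: float
    amplitude: float
    persistence: float


def layered_sine_noise(t, phase, octaves, frequency, amplitude, persistence):
    """Sum of sines: each layer doubles frequency and scales amplitude."""
    total, freq, amp = 0.0, frequency, amplitude
    for k in range(octaves):
        # Arbitrary per-layer phase shift so the layers do not peak together.
        total += amp * math.sin(2.0 * math.pi * freq * t + phase + 1.7 * k)
        freq *= 2.0
        amp *= persistence
    return total


def camera_shake_offsets(game_time, params, axis_scales, octaves=6):
    """Steps 604-606 for all six axes: sample, then apply the designer scale."""
    offsets = {}
    for i, axis in enumerate(AXES):
        p = params[axis]
        raw = layered_sine_noise(game_time, phase=1.3 * i, octaves=octaves,
                                 frequency=p.frequency, amplitude=p.amplitude,
                                 persistence=p.persistence)
        offsets[axis] = raw * axis_scales[axis]
    return offsets


def apply_offsets(base_pose, offsets):
    """Step 608: offset the camera's base pose on every axis."""
    return {axis: base_pose[axis] + offsets[axis] for axis in AXES}
```

Swapping `layered_sine_noise` for a fractal Perlin sampler with the same arguments would match the primary embodiment more closely; the per-axis loop and scaling would stay the same.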

The above examples are merely illustrative of the many applications of the system of the present specification. Although only a few embodiments of the present specification have been described herein, it should be understood that the present specification might be embodied in many other specific forms without departing from the spirit or scope of the specification. Therefore, the present examples and embodiments are to be considered as illustrative and not restrictive, and the specification may be modified within the scope of the appended claims.

Claims

- A method of programming a virtual character in a video game, wherein the virtual character is characterized by a torso having a torso mass and a torso anchor, the method being implemented by at least one server executing a plurality of programmatic instructions and comprising: generating, in at least one graphical user interface, one or more first parameter inputs for customizing an orientation of the torso; receiving responses to said one or more first parameter inputs; generating, in the at least one graphical user interface, one or more second parameter inputs for customizing characteristics of a first two-dimensional mass-spring-damper system, wherein the first two-dimensional mass-spring-damper system connects the torso orientation to an orientation of the torso anchor; receiving responses to said one or more second parameter inputs; and rendering the virtual character in accordance with the first parameter inputs and the second parameter inputs.

- The method of claim 1, wherein the virtual character is holding an object and wherein the object is characterized by an object mass and an object anchor.

- The method of claim 2, further comprising generating, in the at least one graphical user interface, one or more third parameter inputs for customizing an orientation of the object.

- The method of claim 3, further comprising receiving responses to said one or more third parameter inputs.

- The method of claim 4, further comprising generating, in the at least one graphical user interface, one or more fourth parameter inputs for customizing characteristics of a second two-dimensional mass-spring-damper system, wherein the second two-dimensional mass-spring-damper system connects the object orientation to an orientation of the object anchor, and receiving responses to said one or more fourth parameter inputs.

- The method of claim 1, wherein the one or more first parameters comprise at least one of a dead zone blend duration, a view speed to dead zone size, an in max view speed, an out max dead zone size, a dead zone adjust rate, or a torso mass.

- The method of claim 1, wherein the one or more second parameters include a spring constant or a damper constant of the first two-dimensional mass-spring-damper system.

- The method of claim 3, wherein the one or more third parameters comprise at least one of an object orientation blend duration, a view speed to object offset, an in max view speed, an out max object offset, an object mass, an object pivot point, or a yaw-to-roll scale.

- The method of claim 1, wherein the one or more fourth parameters include a spring constant or a damper constant of the second two-dimensional mass-spring-damper system.

- The method of claim 5, wherein the first two-dimensional mass-spring-damper system determines a first value indicative of a pitch and a second value indicative of a yaw and the second two-dimensional mass-spring-damper system determines a third value indicative of a pitch and a fourth value indicative of a yaw.

- A system for programming a virtual character in a video game, wherein the virtual character is characterized by a torso having a torso mass and a torso anchor, the system comprising: at least one server comprising at least one processor configured to execute a plurality of programmatic instructions wherein, when executed, the plurality of programmatic instructions: generates, in at least one graphical user interface, one or more first parameter inputs for customizing an orientation of the torso; receives responses to said one or more first parameter inputs; generates, in the at least one graphical user interface, one or more second parameter inputs for customizing characteristics of a first two-dimensional mass-spring-damper system, wherein the first two-dimensional mass-spring-damper system connects the torso orientation to an orientation of the torso anchor; receives responses to said one or more second parameter inputs; and renders the virtual character in accordance with the first parameter inputs and the second parameter inputs.

- The system of claim 11, wherein the virtual character is holding an object and wherein the object is characterized by an object mass and an object anchor.

- The system of claim 12, wherein, when executed, the plurality of programmatic instructions further generates, in the at least one graphical user interface, one or more third parameter inputs for customizing an orientation of the object.

- The system of claim 13, wherein, when executed, the plurality of programmatic instructions further receives responses to said one or more third parameter inputs.

- The system of claim 14, wherein, when executed, the plurality of programmatic instructions further generates, in the at least one graphical user interface, one or more fourth parameter inputs for customizing characteristics of a second two-dimensional mass-spring-damper system, wherein the second two-dimensional mass-spring-damper system connects the object orientation to an orientation of the object anchor, and receives responses to said one or more fourth parameter inputs.

- The system of claim 11, wherein the one or more first parameters comprise at least one of a dead zone blend duration, a view speed to dead zone size, an in max view speed, an out max dead zone size, a dead zone adjust rate, or a torso mass.

- The system of claim 11, wherein the one or more second parameters include a spring constant or a damper constant of the first two-dimensional mass-spring-damper system.

- The system of claim 13, wherein the one or more third parameters comprise at least one of an object orientation blend duration, a view speed to object offset, an in max view speed, an out max object offset, an object mass, an object pivot point, or a yaw-to-roll scale.

- The system of claim 15, wherein the one or more fourth parameters include a spring constant or a damper constant of the second two-dimensional mass-spring-damper system.

- The system of claim 15, wherein the first two-dimensional mass-spring-damper system determines a first value indicative of a pitch and a second value indicative of a yaw and the second two-dimensional mass-spring-damper system determines a third value indicative of a pitch and a fourth value indicative of a yaw.
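
The claims above recite a two-dimensional mass-spring-damper system that connects an orientation (torso or held object) to an anchor orientation, is tuned by a mass, a spring constant and a damper constant, and yields one pitch value and one yaw value. The following is a minimal, non-authoritative sketch of such a system, not the patentee's implementation. The class and method names (`MassSpringDamper2D`, `step`), the choice of damping on the absolute angular velocity, the semi-implicit Euler integrator, the fixed time step and the use of degrees are all assumptions made for illustration.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class MassSpringDamper2D:
    """Hypothetical 2D mass-spring-damper pulling (pitch, yaw) toward an anchor orientation."""
    mass: float      # e.g. the torso mass or the held-object mass
    spring_k: float  # spring constant (stiffness of the pull toward the anchor)
    damper_c: float  # damper constant (resistance to angular velocity)
    pitch: float = 0.0       # current pitch, degrees (assumed unit)
    yaw: float = 0.0         # current yaw, degrees (assumed unit)
    vel_pitch: float = 0.0   # pitch angular velocity, degrees per second
    vel_yaw: float = 0.0     # yaw angular velocity, degrees per second

    def step(self, anchor_pitch: float, anchor_yaw: float, dt: float) -> tuple[float, float]:
        """Advance one time step of length dt (seconds) and return (pitch, yaw)."""
        # Each axis is an independent 1D oscillator: F = -k * displacement - c * velocity.
        acc_pitch = (-self.spring_k * (self.pitch - anchor_pitch)
                     - self.damper_c * self.vel_pitch) / self.mass
        acc_yaw = (-self.spring_k * (self.yaw - anchor_yaw)
                   - self.damper_c * self.vel_yaw) / self.mass
        # Semi-implicit Euler: update velocity first, then position with the new velocity.
        self.vel_pitch += acc_pitch * dt
        self.vel_yaw += acc_yaw * dt
        self.pitch += self.vel_pitch * dt
        self.yaw += self.vel_yaw * dt
        return self.pitch, self.yaw
```

In this sketch, the torso and the held object would each get their own instance (for example, `MassSpringDamper2D(mass=1.0, spring_k=60.0, damper_c=8.0)` for the torso and a lighter, separately tuned instance for the object), both stepped every frame toward their respective anchors. The damping ratio ζ = c / (2√(k·m)) governs the feel: values below 1 let the limbs overshoot and wobble after a sharp turn, while values at or above 1 settle without oscillation.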

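The claims name several tuning parameters (dead zone blend duration, view speed to dead zone size, in max view speed, out max dead zone size, dead zone adjust rate, view speed to object offset, out max object offset, yaw-to-roll scale) without defining how they combine. The helpers below are one plausible reading, offered only as a hypothetical sketch: they assume that "in max"/"out max" describe a clamped linear remap from view speed to an output, that the adjust rate limits how quickly a value moves toward its target, that a dead zone lets the view rotate some distance before dragging its anchor, and that yaw-to-roll scale banks the held object in proportion to its yaw offset. All function names are invented for illustration.

```python
def view_speed_to_output(view_speed: float, in_max_view_speed: float, out_max: float) -> float:
    """Hypothetical clamped linear remap: |view speed| in [0, in_max] -> [0, out_max]."""
    if in_max_view_speed <= 0.0:
        raise ValueError("in_max_view_speed must be positive")
    t = min(abs(view_speed) / in_max_view_speed, 1.0)
    return t * out_max


def approach(current: float, target: float, rate: float, dt: float) -> float:
    """Move current toward target by at most rate * dt (assumed meaning of 'adjust rate')."""
    max_delta = rate * dt
    delta = target - current
    if abs(delta) <= max_delta:
        return target
    return current + max_delta if delta > 0 else current - max_delta


def apply_dead_zone(view_angle: float, anchor_angle: float, dead_zone_size: float) -> float:
    """Hypothetical dead zone: the anchor holds still until the view leaves the zone,
    then is dragged along so it stays exactly dead_zone_size behind the view."""
    diff = view_angle - anchor_angle
    if abs(diff) <= dead_zone_size:
        return anchor_angle
    return view_angle - dead_zone_size if diff > 0 else view_angle + dead_zone_size


def yaw_to_roll(yaw_offset: float, yaw_to_roll_scale: float) -> float:
    """Hypothetical: roll the held object in proportion to its yaw offset."""
    return yaw_offset * yaw_to_roll_scale
```

Under these assumptions, a frame update might compute a target dead zone size with `view_speed_to_output(view_speed, in_max_view_speed, out_max_dead_zone_size)`, ease the live size toward it with `approach(...)` at the dead zone adjust rate, feed the resulting anchor from `apply_dead_zone(...)` into the torso's mass-spring-damper, and derive the object's roll from its yaw with `yaw_to_roll(...)`.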