U.S. Pat. No. 12,161,936

INFORMATION PROCESSING APPARATUS HAVING DUAL MODES OF VIDEO GAME OBJECT LAUNCH, AND COUNTERPART METHOD AND SYSTEM

Assignee: Nintendo Co., Ltd.

Issue Date: January 17, 2024

Abstract

An information processing apparatus includes controlling movement of any of first objects arranged on a field, controlling movement of a second object fed to any of the first objects, and carrying out control such that a first object satisfying prescribed proximity relation with the second object among the first objects acquires the second object. The controlling movement of a second object includes ejecting the second object to the field from a prescribed position in a direction determined based on an accepted first user operation input and moving the second object over the field in accordance with an accepted second user operation input. The controlling movement of any of the first objects moves any of the first objects toward the second object ejected to the field and moves any of the first objects toward the second object that moves over the field.

Description

DETAILED DESCRIPTION OF NON-LIMITING EXAMPLE EMBODIMENTS

This embodiment will be described in detail with reference to the drawings. The same or corresponding elements in the drawings have the same reference characters allotted and description thereof will not be repeated.

A. Configuration of Information Processing Apparatus

FIG. 1 is a diagram illustrating a hardware configuration of an information processing apparatus 100 based on an embodiment. By way of example, a configuration where the information processing apparatus according to the embodiment is mounted as a game device will be described.

As shown in FIG. 1, information processing apparatus 100 may be any computer. Information processing apparatus 100 may be, for example, a portable (also referred to as mobile) device such as a portable game device, a portable telephone, or a smartphone, a stationary apparatus such as a personal computer or a home game console, or a large apparatus such as an arcade game machine for a commercial purpose.

The hardware configuration of information processing apparatus 100 is outlined below.

Information processing apparatus 100 includes a CPU 102 and a main memory 108. CPU 102 is an information processor that performs various types of information processing in information processing apparatus 100. CPU 102 performs the various types of information processing by using main memory 108.

Information processing apparatus 100 includes a storage 120. Storage 120 stores various programs (which may include not only a game program 122 but also an operating system) executed in information processing apparatus 100. Any storage (storage medium) accessible by CPU 102 is adopted as storage 120. For example, a storage embedded in information processing apparatus 100 such as a hard disk or a memory, a storage medium attachable to and removable from information processing apparatus 100 such as an optical disc or a cartridge, or a combination of a storage and a storage medium as such may be adopted as storage 120. In such a case, a game system representing an exemplary information processing system including information processing apparatus 100 and any storage medium may be configured.

Game program 122 includes computer-readable instructions for performing game processing as will be described later. The game program may also include a program that establishes data communication with a not-shown server and a program that establishes data communication with another information processing apparatus as a part of game processing.

Information processing apparatus 100 includes an input unit 110 that accepts an instruction from a user, such as a button or a touch panel. Information processing apparatus 100 includes a display 104 that shows an image generated through information processing. In the present example, a configuration in which a touch panel representing input unit 110 is provided on display 104, which is a screen, will be described. Without being limited to the configuration, various input forms and representation forms can be adopted.

Information processing apparatus 100 includes a network communication unit 106. Network communication unit 106 may be connected to a not-shown network and may perform processing for data communication with an external apparatus (for example, a server or another information processing apparatus).

Information processing apparatus 100 may be implemented by a plurality of apparatuses. For example, information processing apparatus 100 may be implemented by a main body apparatus including CPU 102 and an apparatus including input unit 110 and/or display 104, which are separate from each other. For example, in another embodiment, information processing apparatus 100 may be implemented by a main body apparatus and a terminal device including input unit 110 and display 104, or by a main body apparatus and an operation apparatus including input unit 110. Information processing apparatus 100 may employ a television as a display apparatus, without including display 104.

In another embodiment, at least some of the information processing performed in information processing apparatus 100 may be performed as being distributed among a plurality of apparatuses that can communicate over a network (a wide range network and/or a local network).

B. Functional Configuration for Implementing Game Processing

FIG. 2 is a diagram illustrating a functional block of information processing apparatus 100 based on the embodiment. Referring to FIG. 2, information processing apparatus 100 includes a first movement controller 200, a second movement controller 201, an acquisition unit 206, and a virtual camera movement controller 208.

First movement controller 200 controls movement of any of character objects (first objects) arranged on a game field. Second movement controller 201 controls movement of an item object (a second object) fed to any of the character objects. Acquisition unit 206 carries out control such that a character object satisfying prescribed proximity relation with an item object among the character objects acquires the item object.

Second movement controller 201 includes a first feeding unit 202 and a second feeding unit 204. First feeding unit 202 accepts a first user operation input and ejects an item object to the game field from a prescribed position in a direction determined based on the first user operation input. Second feeding unit 204 accepts a second user operation input and moves an item object over the game field in accordance with the second user operation input. In the present example, the first and second user operation inputs include a touch operation input to a touch panel provided in display 104. Specifically, the first user operation input includes an operation to cancel touching after moving a touch position (which is referred to as a flick operation in the present example). The second user operation input includes an operation to move the touch position (which is referred to as a touch movement operation in the present example).

First movement controller 200 moves any of the character objects toward the item object ejected to the game field by first feeding unit 202. First movement controller 200 moves any of the character objects toward the item object moved over the game field by second feeding unit 204.

Virtual camera movement controller 208 arranges a virtual camera to satisfy prescribed positional relation with the game field. Virtual camera movement controller 208 moves the position of the virtual camera in parallel to the game field, for example, in response to a touch movement operation (a third user operation input) onto the game field.

Virtual camera movement controller 208 moves the position of the virtual camera with respect to the game field while it maintains an attitude in accordance with a pinch operation input (pinch-in or pinch-out) (a fourth user operation input) to the game field. By way of example, the pinch operation input refers to an operation input by a user to touch the touch panel with two fingers and change a distance between the fingers. An operation to decrease spacing as in pinching something between two fingers is also referred to as “pinch-in”. An operation to increase spacing by moving two fingers to spread is also referred to as “pinch-out”. In the present example, in a pinch-in operation, virtual camera movement controller 208 moves the position of the virtual camera to come closer to the game field while it maintains the attitude. In a pinch-out operation, virtual camera movement controller 208 moves the position of the virtual camera to move away from the game field while it maintains the attitude.
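By way of illustration only, the pinch handling described above may be sketched as follows. The function names, the `step` parameter, and the assumption that the virtual camera faces the game field (so that moving along its view direction brings it closer) are hypothetical and are not taken from the embodiment.

```python
import math


def pinch_kind(start, end):
    """Classify a two-finger gesture.

    start and end are pairs of (x, y) finger positions at the beginning
    and at the end of the gesture (hypothetical representation).
    """
    d0 = math.dist(start[0], start[1])
    d1 = math.dist(end[0], end[1])
    if d1 < d0:
        return "pinch-in"   # spacing between the fingers decreased
    if d1 > d0:
        return "pinch-out"  # spacing between the fingers increased
    return "none"


def move_camera(position, forward, kind, step=1.0):
    """Translate the camera along its unit view direction.

    Only the position changes; the attitude (forward) is left as is.
    As in the present example, pinch-in approaches the game field and
    pinch-out moves away from it.
    """
    sign = {"pinch-in": 1.0, "pinch-out": -1.0}.get(kind, 0.0)
    return tuple(p + sign * step * f for p, f in zip(position, forward))
```

Because only a translation is applied, the attitude of the virtual camera is maintained by construction.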

C. Overview of Game Processing

Game processing provided by execution of game program 122 according to the embodiment will now generally be described.

Game program 122 according to the embodiment provides a training game in which a user feeds an item object to character objects. By way of example, as an item object is fed to a character object, an event advantageous for the user during progress of a game may occur. For example, a level of the character object or the user may be increased, or the character object or the user may develop a special skill or acquire various items.

D. Exemplary Representation on Screen in Game Processing

Exemplary representation on a screen and an exemplary operation in game processing provided by execution of game program 122 according to the embodiment will now be described. By way of example, exemplary representation on the screen is provided on display 104 of information processing apparatus 100.

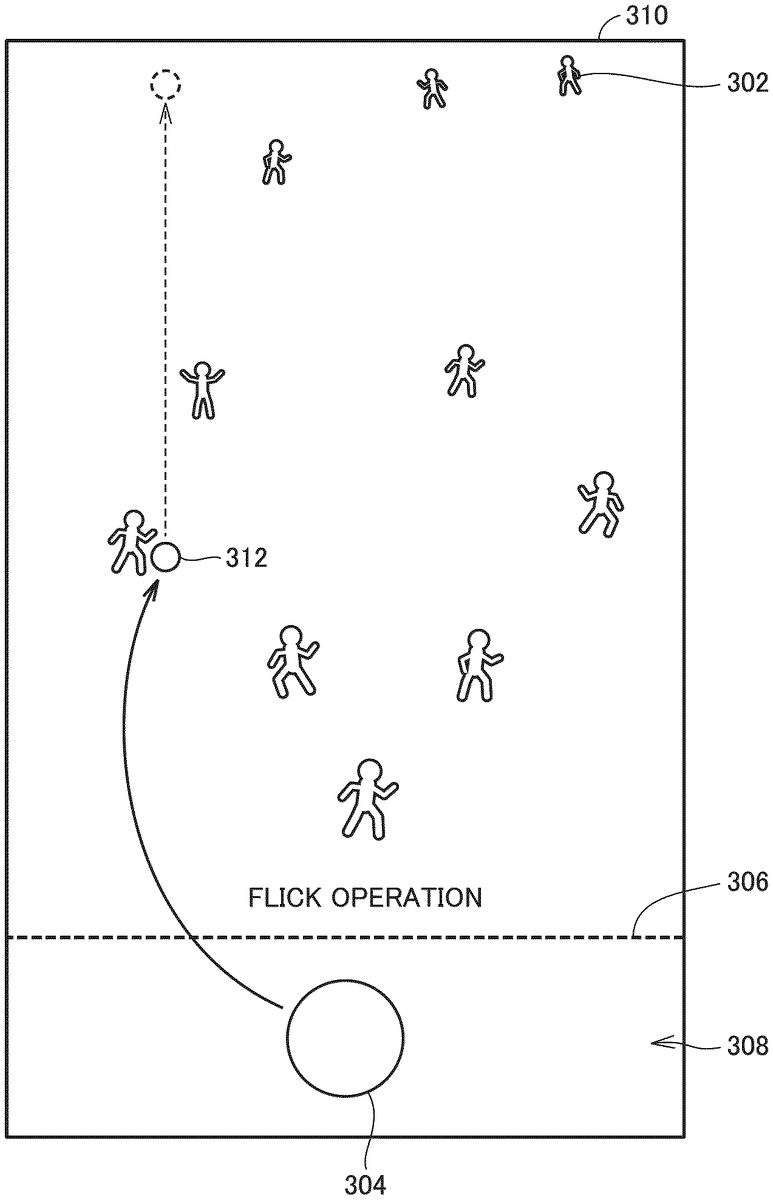

FIG. 3 is a diagram illustrating a screen 300 in a game in the game processing provided by game program 122 based on the embodiment.

As shown in FIG. 3, character objects 302 are arranged on screen 300. In the present example, the game field is set within a three-dimensional virtual space. By way of example, a horizontal ground is set and character objects 302 are arranged on the ground. By way of example, ten character objects 302 are shown. A virtual camera is arranged at a position where the game field is obliquely looked down, and character objects 302 located in an upper portion on screen 300 are assumed as character objects far from a user and character objects 302 in a lower portion are assumed as character objects close to the user. The game field does not have to be the ground and may be a space having a height. In the present example, though a character object is arranged on the game field which is the ground by way of example, the character object does not necessarily have to be on the ground, and for example, the character object may be flying.

FIG. 3 shows a virtual boundary line 306. Virtual boundary line 306 may be shown on screen 300. An area below virtual boundary line 306 on screen 300 is set as an initial area 308.

In initial area 308, an item object 304 is shown at an initial position which is a central position. Item object 304 can be moved by touching by a user. Specifically, as the user laterally moves item object 304 while the user touches item object 304, item object 304 laterally moves within initial area 308 in accordance with a touch position. When the user cancels touching of item object 304, item object 304 returns to the central position which is the initial position. When the user moves item object 304 in an upward/downward direction while the user touches item object 304, item object 304 moves within or beyond initial area 308. When the user provides a flick operation input to cancel a touch position at a position beyond virtual boundary line 306, the item object is ejected to the game field in accordance with the flick operation input.

When the user touches item object 304 and thereafter moves the touch position beyond virtual boundary line 306 while the user touches the item object, item object 304 moves over the game field in accordance with the touch position. When the user cancels touching, the item object returns to the initial position.

In the present example, item object 304 can be fed to character object 302 in two feeding schemes.

A first feeding scheme refers to such a flick operation input that the user touches item object 304 and thereafter cancels touching at a position beyond virtual boundary line 306. In this case, the item object is ejected to the game field in accordance with the flick operation input. The ejected item object moves over the game field. Character object 302 acquires item object 304 when it satisfies prescribed proximity relation with the item object.

A second feeding scheme refers to such a case that the user touches item object 304 and thereafter further moves the touch position beyond virtual boundary line 306 after lapse of a prescribed period or longer by way of example. In this case, the item object moves over the game field in accordance with the touch position. Character object 302 acquires the item object when it satisfies prescribed proximity relation with the item object.

Therefore, the user can arbitrarily select a scheme for feeding item object 304 and zest of the game can be enhanced.

Though the flick operation input to cancel touching at a position beyond virtual boundary line 306 is described as the first feeding scheme, without being limited to the flick operation input, for example, another operation input to arbitrarily determine a direction may be applicable. For example, item object 304 may be ejected from the initial position in a direction toward a touch position. Though an operation to move the touch position at a position beyond virtual boundary line 306 is described as the second feeding scheme, without being limited as such, for example, another operation input to arbitrarily determine a direction may be applicable.
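By way of illustration only, selection between the two feeding schemes may be sketched as below. The `TouchState` fields, the action names, and the value of `HOLD_THRESHOLD` (standing in for the prescribed period) are hypothetical and are not specified in the embodiment.

```python
from dataclasses import dataclass

HOLD_THRESHOLD = 0.5  # seconds; hypothetical value for the "prescribed period"


@dataclass
class TouchState:
    beyond_boundary: bool  # touch position is beyond virtual boundary line 306
    held_beyond: float     # seconds the touch has stayed beyond the line
    released: bool         # touching was canceled at this time point


def feeding_action(t: TouchState) -> str:
    """Return the action for the item object given the current touch state."""
    if not t.beyond_boundary:
        # Within initial area 308: follow the touch, or snap back on release.
        return "return_to_initial" if t.released else "move_in_initial_area"
    if t.held_beyond >= HOLD_THRESHOLD:
        # Second feeding scheme: the item follows the touch over the field,
        # and returns to the initial position when touching is canceled.
        return "return_to_initial" if t.released else "drag_over_field"
    # First feeding scheme: canceling touching beyond the line ejects the item.
    return "eject" if t.released else "pending"
```

For instance, `feeding_action(TouchState(True, 0.1, True))` yields `"eject"`, whereas a touch held beyond the line for 0.8 seconds yields `"drag_over_field"`.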

FIG. 4 is a diagram illustrating feeding item object 304 in the first feeding scheme in the game processing provided by game program 122 based on the embodiment.

FIG. 4 shows an example in which the user provides the flick operation input to touch item object 304 on a screen 310 and thereafter cancel touching at a position beyond virtual boundary line 306. In this case, item object 304 is ejected to the game field in accordance with the flick operation input. In the present example, the user (or an avatar of the user) is assumed to possess a plurality of item objects 304. The user can eject item objects 304 one by one to the game field in accordance with the flick operation input.

In the present example, an item object 312 is ejected as one of item objects 304. Item object 312 is ejected from the prescribed position and moved over the game field through inertia by way of example. In the present example, item object 312 moves in a direction away from the user on the game field.

Item object 312 may be erased when it moves out of an area shown on screen 310 as an area on the game field. Alternatively, when item object 312 is present on the game field for a prescribed period of time without being acquired by character object 302, item object 312 may be erased. Erased item object 312 may again be supplied as item object 304 possessed by the user.

For example, the user is assumed to possess ten item objects 304. When all of ten item objects 304 are ejected, the number of item objects 304 becomes 0. In other words, the number of item objects 304 decreases each time of ejection. When the number of item objects becomes 0, item object 304 cannot be ejected. At this time, no item object 304 may be shown at the initial position.
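By way of illustration only, the count of possessed item objects may be tracked as sketched below. The class name `ItemStock` and its methods are hypothetical.

```python
class ItemStock:
    """Number of item objects 304 possessed by the user (hypothetical helper)."""

    def __init__(self, count):
        self.count = count

    def can_eject(self):
        # Ejection is possible only while at least one item is possessed.
        return self.count > 0

    def eject(self):
        """Consume one item for ejection; return False when none is left."""
        if not self.can_eject():
            return False
        self.count -= 1
        return True

    def resupply(self):
        """Return an erased, unacquired item object to the stock."""
        self.count += 1
```

With ten possessed items, ten calls to `eject()` succeed and an eleventh returns `False`, and a call to `resupply()` corresponds to the optional case where an erased item object is supplied again.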

FIG. 5 is a diagram illustrating control of movement of character object 302 with respect to item object 312 fed in the first feeding scheme based on the embodiment.

FIG. 5 shows an example in which the user provides the flick operation input to touch item object 304 on a screen 320 and thereafter cancel touching at a position beyond virtual boundary line 306. In this case, item object 312 is ejected to the game field from a prescribed position in accordance with the flick operation input.

First movement controller 200 controls movement of character object 302.

When character object 302 is within a first range with item object 312 being defined as the reference, first movement controller 200 moves character object 302 in a direction toward item object 312.

When character object 302 is moved in the direction toward item object 312 and satisfies prescribed proximity relation (within a range 314 which indicates a prescribed distance or shorter from character object 302 in the present example), it acquires item object 312. When character object 302 acquires item object 312, item object 312 is erased.

When character object 302 falls out of the first range after it starts moving toward item object 312, because of a high moving speed of item object 312 over the field, movement of character object 302 toward item object 312 may be stopped.

Though movement of one character object 302 is described in the present example, a plurality of character objects 302 may be moved. Specifically, when there are a plurality of character objects 302 within the first range with item object 312 being defined as the reference, the plurality of character objects 302 may be moved toward item object 312. When one of the plurality of character objects 302 satisfies prescribed proximity relation with item object 312, that character object 302 acquires item object 312 and item object 312 is erased. Therefore, with erasure of item object 312, remaining character object(s) 302 of the plurality of character objects 302 may stop moving toward item object 312.

Character object 302 may start moving toward item object 312, for example, from a time point of ejection of item object 312, from a time point when item object 312 is ejected and rolls over the game field, or from a time point when item object 312 stops moving.

A size of range 314 representing prescribed proximity relation can be changed as appropriate. Though range 314 is shown as an annular range in the present example, it does not have to be annular but may be in a rectangular shape or another shape.
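By way of illustration only, one update of the movement control for the first feeding scheme may be sketched as below on a two-dimensional field. The constants `FIRST_RANGE`, `ACQUIRE_RANGE`, and `STEP`, as well as the function name, are hypothetical, and a distance test is assumed for the prescribed proximity relation.

```python
import math

FIRST_RANGE = 5.0    # hypothetical radius of the first range around the item
ACQUIRE_RANGE = 0.5  # hypothetical prescribed distance corresponding to range 314
STEP = 1.0           # hypothetical distance a character moves per update


def update_characters(characters, item):
    """Advance every character by one update.

    characters is a list of mutable [x, y] positions and item is an (x, y)
    position. Returns the index of the character that acquires the item,
    or None when no character is close enough yet.
    """
    for i, pos in enumerate(characters):
        dx, dy = item[0] - pos[0], item[1] - pos[1]
        dist = math.hypot(dx, dy)
        if dist > FIRST_RANGE:
            continue  # outside the first range: the character does not move
        if dist <= ACQUIRE_RANGE:
            return i  # proximity relation satisfied: the item is acquired
        move = min(STEP, dist)  # never overshoot the item
        pos[0] += dx / dist * move
        pos[1] += dy / dist * move
    return None
```

Once an index is returned, the caller would erase the item object, so that the remaining character objects no longer have a target to move toward.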

FIG. 6 is a diagram illustrating feeding item object 304 in the second feeding scheme in the game processing provided by game program 122 based on the embodiment.

As shown in FIG. 6, on a screen 400, after the user touches item object 304, the user moves the touch position beyond virtual boundary line 306 while the user keeps touching. In this case, an item object 322 moves over the game field in accordance with the touch position. When the user cancels touching, item object 322 is erased and item object 304 is shown at the initial position.

FIG. 7 is a diagram illustrating control for movement of character object 302 with respect to item object 322 fed in the second feeding scheme based on the embodiment.

As shown in FIG. 7, on a screen 410, after the user touches item object 304, the user moves the touch position beyond virtual boundary line 306 while the user keeps touching. In this case, item object 322 moves over the game field in accordance with the touch position.

When character object 302 is within a second range with item object 322 being defined as the reference, first movement controller 200 moves character object 302 toward item object 322.

By way of example, three character objects 302 move toward item object 322. A range larger than the first range can be set as the second range. Character object 302 far from item object 322 can also move toward item object 322. Though an example in which character object 302 within the second range moves is described in the present example, in the second feeding scheme, all character objects 302 may move toward item object 322.

In the present example, when the user possesses ten item objects 304, for example, three character objects 302 that satisfy prescribed proximity relation with item object 322 can all acquire item object 322. In other words, item object 322 can also be regarded as a set of item objects 304. Each time item object 322 satisfies prescribed proximity relation with character object 302 and is acquired, the number of possessed item objects 304, which is initially ten, is decreased by one. When the number of possessed item objects 304 becomes 0, item object 322 is erased. In another example, each one of possessed item objects 304 may be moved over the field as item object 322. In other words, in this case, when any character object 302 acquires item object 322, item object 322 is erased.
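By way of illustration only, treating item object 322 as a set of possessed item objects may be sketched as below. The function name and the representation of character objects as list entries are hypothetical.

```python
def feed_dragged_item(stock, characters_in_proximity):
    """Let each character in proximity take one item while stock remains.

    stock is the number of possessed item objects 304. Returns a tuple of
    (characters that acquired an item, remaining stock, whether item
    object 322 is erased because the stock reached 0).
    """
    acquirers = []
    for character in characters_in_proximity:
        if stock == 0:
            break  # nothing left to acquire
        acquirers.append(character)
        stock -= 1  # the count decreases by one per acquisition
    return acquirers, stock, stock == 0
```

With ten possessed items and three character objects in proximity, all three acquire an item and seven items remain, so item object 322 continues to be shown.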

As described above, in feeding item object 304 to a plurality of character objects 302, the user can perform an operation to eject item object 312 in any direction in the first feeding scheme and zest of the game can be enhanced. Character object 302 moves toward ejected item object 312. Therefore, for example, even when there is no character object 302 in a direction in which item object 312 is ejected, character object 302 is more likely to acquire item object 312. Therefore, decline in motivation of the user for an operation to eject item object 312 can be suppressed.

In the first feeding scheme, however, when the user intends to feed item object 304 to a specific character object 302, the user may not be able to do so due to deviation of the set direction of ejection of item object 312 or acquisition of ejected item object 312 by unintended character object 302.

In the second feeding scheme, item object 322 can be moved to any position on the game field in accordance with an operation by the user. Therefore, item object 322 can be moved to the vicinity of a specific character object. A situation intended by the user can thus be created and zest of the game can be enhanced. On the other hand, with movement of item object 322, other character objects 302 also move, and hence the user has to control a path for movement of item object 322 so as to avoid acquisition of item object 322 by unintended character object 302, which can give zest to an operation to move item object 322. Zest of the game can thus be enhanced by providing different operability or zest in each of the first and second feeding schemes.

E. Description of Scheme for Feeding Item Object

FIGS. 8A and 8B are diagrams illustrating an operation onto the touch panel according to the embodiment.

A method of setting a direction of ejection and a speed of ejection of item object 304 will be described with reference to FIG. 8A. A position of ejection of item object 304 is set at a prescribed position Q on a first virtual plane L1 shown with a three-dimensional coordinate within a three-dimensional space which will be described later.

In the present example, on touch panel 13, touching is canceled at a point q2 (x2, y2) on a touch panel coordinate system by way of example. At this time, a point q1 (x1, y1) which is a touch position a prescribed time period before the time point of cancelation of touching is referred to. Then, a vector v (vx, vy) that connects point q1 and point q2 to each other is calculated based on point q1 (x1, y1) and point q2 (x2, y2). vx and vy are calculated as vx = x2 - x1 and vy = y2 - y1.

Then, a three-dimensional vector V (Vx, Vy, Vz) within the three-dimensional space is calculated by coordinate conversion of two-dimensional vector v (vx, vy). Coordinate conversion from two-dimensional vector v (vx, vy) to three-dimensional vector V (Vx, Vy, Vz) is carried out based on a prescribed function. Vz may be set to a constant.

Referring to FIG. 8B, first virtual plane L1 is set at a prescribed position with respect to a position P of the virtual camera. In the present example, item object 312 is ejected in the direction of ejection shown with three-dimensional vector V from prescribed position Q on first virtual plane L1.

Specifically, when the user touches item object 304 and moves the touch position beyond virtual boundary line 306 while the user keeps touching, item object 304 moves over first virtual plane L1 with that movement. Movement over first virtual plane L1 encompasses not only movement of item object 304 as digging its way in first virtual plane L1 but also movement as being in contact with the first virtual plane or movement while the item object maintains prescribed positional relation. Then, when touching by the user is canceled, item object 304 is ejected with a position of item object 304 at that time being defined as prescribed position Q. In the present example, prescribed position Q moves over first virtual plane L1. Prescribed position Q is not limited to a position on first virtual plane L1 at the time point of cancelation of touching but may be a position in the vicinity thereof, a position at a time point before that time point, or a position calculated separately based on point q2 (x2, y2). Prescribed position Q may be fixed. By way of example, with a specific position on first virtual plane L1 corresponding to the position of item object 304 which is the initial position being defined as prescribed position Q, item object 312 may be ejected from that position. In the present example, the speed of ejection is set in accordance with magnitude of three-dimensional vector V by way of example. Therefore, for example, when point q1 (x1, y1) is distant from point q2 (x2, y2), the speed of ejection of item object 312 is high and the item object reaches a point far from the position of ejection.
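By way of illustration only, setting of the direction of ejection and the speed of ejection described with reference to FIGS. 8A and 8B may be sketched as below. The embodiment states only that a prescribed function converts v into V and that Vz may be a constant. The linear scaling by `SCALE`, the value of `VZ`, and the function names are therefore assumptions.

```python
import math

SCALE = 0.01  # hypothetical scale from touch panel units to virtual space units
VZ = 1.0      # Vz set to a constant (the value itself is an assumption)


def flick_vector(q1, q2):
    """Two-dimensional vector v = (x2 - x1, y2 - y1) from point q1 to point q2."""
    return (q2[0] - q1[0], q2[1] - q1[1])


def to_3d(v):
    """Stand-in for the prescribed function: scale vx and vy, use constant Vz."""
    return (v[0] * SCALE, v[1] * SCALE, VZ)


def ejection(q1, q2):
    """Return (unit direction of ejection, speed of ejection) for a flick."""
    big_v = to_3d(flick_vector(q1, q2))
    speed = math.sqrt(sum(c * c for c in big_v))  # magnitude of V
    direction = tuple(c / speed for c in big_v)   # speed > 0 because VZ != 0
    return direction, speed
```

Under these assumptions a longer flick, that is, point q1 being more distant from point q2, gives a larger magnitude of V and hence a higher speed of ejection.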

FIG. 9 is a diagram illustrating relation of the game field with the first virtual plane and the second virtual plane set within the three-dimensional virtual space according to the embodiment.

Referring to FIG. 9, a game field F is set within the three-dimensional virtual space. The virtual camera (a position P0) is arranged at a position satisfying prescribed positional relation with game field F. The virtual camera (position P0) is provided obliquely above game field F. A range of game field F imaged by the virtual camera (position P0) is shown on display 104.

First virtual plane L1 is set at a position satisfying prescribed positional relation with the virtual camera (position P0) within the three-dimensional virtual space. A second virtual plane L2 is provided in parallel to game field F by way of example.

As described above, first virtual plane L1 is a plane used in ejection of item object 312. Specifically, item object 312 is ejected from prescribed position Q on first virtual plane L1. The direction of ejection and the speed of ejection of item object 312 are calculated based on two-dimensional vector v in accordance with a flick operation onto touch panel 13 as described with reference to FIGS. 8A and 8B.

When the user gives a flick operation input to touch item object 304 and thereafter cancel touching at a position beyond virtual boundary line 306, first feeding unit 202 calculates prescribed position Q on first virtual plane L1, the direction of ejection, and the speed of ejection in accordance with a touch coordinate on touch panel 13 at the time of the flick operation. First feeding unit 202 ejects item object 312 to the game field and controls movement of item object 312.

Second virtual plane L2 is a plane used in movement of item object 322 by the second feeding unit. Specifically, item object 322 moves over second virtual plane L2. Second feeding unit 204 moves item object 322 to a position on second virtual plane L2 set in accordance with a touch coordinate on touch panel 13. Movement over second virtual plane L2 encompasses not only movement of item object 322 as crossing second virtual plane L2 but also movement as being in contact with the second virtual plane or movement while the item object maintains prescribed positional relation. Item object 312 ejected from the first virtual plane may be controlled to move over second virtual plane L2. In other words, as item object 312 moves over second virtual plane L2, item object 312 may be shown as moving over game field F.

When the user touches item object 304 and thereafter moves the touch position beyond virtual boundary line 306, second feeding unit 204 converts a touch coordinate on touch panel 13 and calculates a position on second virtual plane L2. By way of example, when a duration of touching after the touch position is moved beyond virtual boundary line 306 exceeds a prescribed period, second feeding unit 204 arranges item object 322 at a calculated position on second virtual plane L2 and carries out movement control for moving the item object over second virtual plane L2 in accordance with a touch position.
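By way of illustration only, one possible way of converting a touch coordinate into a position on second virtual plane L2 is to intersect a ray from the virtual camera with the plane. The embodiment does not state how the conversion is carried out, so the ray casting below, the choice of the y axis as the height axis, and the function name are all assumptions. The ray through the touch coordinate is assumed to have been obtained elsewhere.

```python
def ray_plane_hit(origin, direction, plane_height):
    """Intersect a ray with the horizontal plane y = plane_height.

    origin is the camera position (x, y, z) and direction is the ray
    through the touch coordinate. Returns the hit point, or None when the
    ray is parallel to the plane or points away from it.
    """
    oy, dy = origin[1], direction[1]
    if dy == 0:
        return None  # parallel to the plane: no intersection
    t = (plane_height - oy) / dy
    if t < 0:
        return None  # the plane lies behind the ray origin
    return tuple(o + t * d for o, d in zip(origin, direction))
```

For a camera at (0, 10, 0) looking along (0, -1, 1), the ray reaches a plane at height 0 at the point (0, 0, 10), which would then serve as the position of item object 322.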

Therefore, switching between the virtual planes over which the item object is moved is made in accordance with an operation input from the user. Specifically, in the first feeding scheme, first virtual plane L1is used. In the second feeding scheme, second virtual plane L2is used.

F. Description of Movement of Virtual Camera

FIG. 10 is a diagram illustrating movement (No. 1) of the virtual camera in the game processing provided by game program 122 based on the embodiment.

As shown in FIG. 10, virtual camera movement controller 208 moves the virtual camera (position P0) in parallel to game field F in accordance with a movement operation input (third user operation input) for movement with respect to game field F. When touching is canceled after the movement operation input, movement of the virtual camera is stopped. In the present example, the virtual camera is moved to a position P1.

In the present example, first virtual plane L1 is also moved such that relative positional relation is not varied with movement of position P of the virtual camera under the control by virtual camera movement controller 208. In other words, when item object 304 is located and shown on first virtual plane L1, a position where item object 304 is shown is not varied in spite of movement of position P of the virtual camera. Therefore, a wide range can be set as the game field without impairing operability of the user and zest of the game can be enhanced.

FIG. 11 is a diagram illustrating movement (No. 2) of the virtual camera in the game processing provided by game program 122 based on the embodiment.

As shown in FIG. 11, virtual camera movement controller 208 moves position P0 of the virtual camera toward or away from game field F, while maintaining the attitude of the virtual camera, in accordance with a pinch operation input (pinch-in or pinch-out) (a fourth user operation input) to the game field. In the present example, position P0 of the virtual camera is moved to a position P2 in accordance with the pinch operation input (pinch-in).

In the present example, first virtual plane L1 is also moved so that its positional relation relative to the virtual camera does not vary as position P of the virtual camera moves under the control of virtual camera movement controller 208. Therefore, the game field can be shown as being zoomed in or out without impairing operability for the user, and zest of the game can be enhanced.
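The relation between movement of the virtual camera and first virtual plane L1 in FIGS. 10 and 11 can be sketched as follows. This is a minimal illustration under stated assumptions, not the implementation of the embodiment: game field F is assumed to be the plane y = 0, and the names `Camera`, `l1_offset`, `pan`, and `zoom` are hypothetical. Storing the item position relative to the camera is one simple way to keep the position where the item object is shown unchanged.

```python
from dataclasses import dataclass

Vec3 = tuple[float, float, float]


def add(a: Vec3, b: Vec3) -> Vec3:
    """Component-wise sum of two 3D vectors."""
    return (a[0] + b[0], a[1] + b[1], a[2] + b[2])


@dataclass
class Camera:
    position: Vec3   # position P of the virtual camera
    view_dir: Vec3   # unit viewing direction (attitude); never changed here
    l1_offset: Vec3  # assumed fixed offset from the camera to the item on plane L1

    def item_world_position(self) -> Vec3:
        # The item on L1 is stored relative to the camera, so it follows the camera.
        return add(self.position, self.l1_offset)

    def pan(self, dx: float, dz: float) -> None:
        # Movement operation input: move in parallel to the field (assumed y = 0).
        self.position = add(self.position, (dx, 0.0, dz))

    def zoom(self, amount: float) -> None:
        # Pinch operation input: move along the viewing direction, attitude unchanged.
        d = self.view_dir
        self.position = add(self.position, (d[0] * amount, d[1] * amount, d[2] * amount))


cam = Camera(position=(0.0, 10.0, 0.0), view_dir=(0.0, -1.0, 0.0), l1_offset=(0.0, -2.0, 1.0))
cam.pan(3.0, 4.0)  # corresponds to P0 -> P1 in FIG. 10
cam.zoom(5.0)      # corresponds to moving toward the field as in FIG. 11
```

Because `l1_offset` is never modified, the item keeps the same position relative to the camera after both operations, while its world position changes with the camera.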

G. Control of Representation of Item Object

FIGS. 12A and 12B are diagrams illustrating control of representation of an item object based on the embodiment. As shown in FIGS. 12A and 12B, representation of the item object may be varied between the first feeding scheme and the second feeding scheme.

FIG. 12A is a diagram illustrating representation of item object 312 in accordance with the first feeding scheme. By way of example, a finger 150 of the user who operates item object 304 is shown. In the first feeding scheme, item object 304 to be ejected is shown at a touch position, which is a position touched by the user.

When the user touches item object 304 and thereafter moves finger 150 while the user keeps touching, item object 312 is shown as following the touch position. When touching is canceled from this state, item object 312 is shown as moving away from the touch position by ejection.

Immediately after the user touches item object 304, the user may be notified of acceptance of a touch input, for example, by emphasized representation such as an increase in size of item object 304 or flashing of item object 304.

FIG. 12B is a diagram illustrating representation of item object 322 in accordance with the second feeding scheme. By way of example, finger 150 of the user who operates item object 304 is shown. In the second feeding scheme, item object 322 is shown at a position different from the touch position, which is the position touched by the user.

Specifically, in the second feeding scheme, item object 304 is shown at a position not superimposed on the touch position, which is the position touched by the user with finger 150. In the second feeding scheme, item object 322 moves over second virtual plane L2 and is distant from the virtual camera. Therefore, item object 322 is shown with a small size. By not allowing the position where item object 304 is shown to be superimposed on the touch position, a situation in which item object 322 is hidden by finger 150 and difficult to see, for example, can be avoided. With such representation, the user can also readily identify the second feeding scheme.

By way of example, item object 322 is moved to a position on second virtual plane L2 where the item object is shown at the upper left of the touch coordinate where the operation to touch with finger 150 is performed. Without being limited as such, the item object may be shown at another position.

H. Processing Procedure in Game Processing

A processing procedure in the game processing provided by execution of game program 122 according to the embodiment will now be described. Each step is performed by execution of game program 122 by CPU 102.

FIG. 13 is a flowchart illustrating processing by first movement controller 200 provided by game program 122 based on the embodiment.

Referring to FIG. 13, first movement controller 200 determines whether or not character object 302 has been touched (step S2).

When first movement controller 200 determines in step S2 that the character object has been touched (YES in step S2), it performs first movement control processing (step S4). Details of first movement control processing will be described later.

Then, first movement controller 200 determines whether or not to quit the game (step S6).

When first movement controller 200 determines in step S6 to quit the game (YES in step S6), the process ends (end).

When first movement controller 200 determines in step S6 not to quit the game (NO in step S6), the process returns to step S2 and the processing above is repeated.

When first movement controller 200 determines in step S2 that the character object has not been touched (NO in step S2), it determines whether or not there is an item object on the game field (step S8).

When first movement controller 200 determines in step S8 that there is an item object on the game field (YES in step S8), it performs second movement control processing (step S10). Details of second movement control processing will be described later.

Then, the process proceeds to step S6. When first movement controller 200 determines not to quit the game (NO in step S6), the process returns to step S2 and the processing above is repeated.

When first movement controller 200 determines in step S8 that there is no item object on the game field (NO in step S8), it performs normal movement control processing (step S12). First movement controller 200 may move character object 302 in a random direction as normal movement control processing.

Then, the process proceeds to step S6. When first movement controller 200 determines not to quit the game (NO in step S6), the process returns to step S2 and the processing above is repeated.
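The branching in FIG. 13 can be summarized by the following sketch. It is only an illustration of the order of the determinations in steps S2, S8, and S6; the function names, the string labels, and the `quit_after` parameter standing in for the quit determination are all hypothetical.

```python
def first_movement_controller_step(character_touched: bool, item_on_field: bool) -> str:
    """Return which processing FIG. 13 selects for one pass of the loop."""
    if character_touched:                      # step S2: YES
        return "first_movement_control"        # step S4
    if item_on_field:                          # step S8: YES
        return "second_movement_control"       # step S10
    return "normal_movement_control"           # step S12


def run(frames, quit_after: int):
    """Repeat the step until the (hypothetical) quit condition of step S6 holds."""
    log = []
    for i, (touched, item) in enumerate(frames):
        log.append(first_movement_controller_step(touched, item))
        if i + 1 >= quit_after:                # step S6: YES
            break
    return log


log = run([(True, False), (False, True), (False, False), (True, True)], quit_after=3)
```

Note that a touch on a character object takes priority over the presence of an item object, since step S2 is evaluated before step S8.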

FIG. 14 is a flowchart illustrating first movement control processing by first movement controller 200 based on the embodiment. Referring to FIG. 14, first movement controller 200 selects a touched character object (step S20). Then, first movement controller 200 moves selected character object 302 in accordance with movement of the touch position (step S22).

Then, first movement controller 200 determines whether or not touching has been canceled (step S24). When first movement controller 200 determines that touching has been canceled (YES in step S24), it arranges selected character object 302 at a position on the game field corresponding to the touch position where touching has been canceled.

Then, the process ends (return).

When first movement controller 200 determines that touching has not been canceled (NO in step S24), the process returns to step S22 and the processing above is repeated.

In other words, first movement controller 200 can move character object 302 in accordance with a flick operation input for character object 302. The user can thus select any of character objects 302 and move the selected character object to any position, and zest of the game is improved.

Specific character object 302 can thus be selected and moved to a position in the vicinity of item object 312 or moved away therefrom.

Selected character object 302 may not be permitted to move to the initial position where item object 304 is provided. Thus, item object 304 cannot directly be fed to selected character object 302. In other words, since item object 304 should be fed in either of the first and second feeding schemes, measures for feeding item object 304 to intended character object 302 are required and zest of the game can be maintained.

FIG. 15 is a flowchart illustrating second movement control processing by first movement controller 200 based on the embodiment.

Referring to FIG. 15, first movement controller 200 determines whether or not there is item object 312 fed in the first feeding scheme on the game field (step S80).

When first movement controller 200 determines that there is item object 312 fed in the first feeding scheme on the game field (YES in step S80), it determines whether or not character object 302 is present within the first range set with item object 312 being defined as the reference (step S82).

When first movement controller 200 determines in step S82 that character object 302 is present within the first range (YES in step S82), it performs movement processing for acquisition for that character object 302 (step S86). First movement controller 200 controls character object 302 to move toward item object 312. At this time, character object 302 may move toward item object 312 at a speed higher than the moving speed in normal movement control processing.

Then, first movement controller 200 quits the process (return).

When first movement controller 200 determines in step S82 that character object 302 is not present within the first range (NO in step S82), it performs normal movement control processing (step S90). First movement controller 200 may move character object 302 in a random direction as normal movement control processing.

When first movement controller 200 determines that there is no item object 312 fed in the first feeding scheme on the game field (NO in step S80), it means that there is item object 322 fed in the second feeding scheme on the game field (that is, on second virtual plane L2), and first movement controller 200 determines whether or not character object 302 is within the second range set with item object 322 being defined as the reference (step S87).

When first movement controller 200 determines in step S87 that character object 302 is present within the second range (YES in step S87), it performs movement processing for acquisition for that character object 302 (step S86). First movement controller 200 controls character object 302 to move toward item object 322. A manner or a speed of movement for acquisition by character object 302 may be the same as or different from that in movement toward item object 312 in the first feeding scheme.

Then, first movement controller 200 quits the process (return).

When first movement controller 200 determines in step S87 that character object 302 is not present within the second range (NO in step S87), it performs normal movement control processing (step S88). Processing thereafter is similar to the above.

Then, first movement controller 200 quits the process (return).
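The range determinations in steps S82 and S87 can be illustrated as below. This is a sketch under assumptions: positions are treated as two-dimensional coordinates on the game field, the ranges are circular, and the default values of `first_range` and `second_range` are hypothetical placeholders rather than values taken from the embodiment.

```python
import math


def second_movement_control(char_pos, item_pos, scheme: str,
                            first_range: float = 2.0,
                            second_range: float = 6.0) -> str:
    """Decide between movement for acquisition and normal movement.

    scheme is "first" for item object 312 (ejected) and "second" for
    item object 322 (placed on second virtual plane L2).
    """
    distance = math.dist(char_pos, item_pos)
    # Steps S82 / S87: pick the range that matches the feeding scheme.
    limit = first_range if scheme == "first" else second_range
    if distance <= limit:
        return "move_for_acquisition"   # step S86
    return "normal_movement"            # steps S90 / S88


near_first = second_movement_control((0.0, 0.0), (3.0, 0.0), "first")
near_second = second_movement_control((0.0, 0.0), (3.0, 0.0), "second")
```

With these placeholder values, the same character object ignores an ejected item 3 units away but moves toward an item placed in the second feeding scheme at the same distance.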

FIG. 16 is a flowchart illustrating processing by second movement controller 201 provided by game program 122 based on the embodiment.

Referring to FIG. 16, second movement controller 201 determines whether or not an item object has been touched (step S30).

When second movement controller 201 determines in step S30 that item object 304 has been touched (YES in step S30), the process proceeds to next step S31.

When second movement controller 201 determines in step S30 that item object 304 has not been touched (NO in step S30), the state in step S30 is maintained.

Then, in step S31, second movement controller 201 determines whether or not the touch position where item object 304 has been touched is within the initial area.

When second movement controller 201 determines in step S31 that the touch position where item object 304 has been touched is within the initial area (YES in step S31), it moves item object 304 in accordance with the touch position (step S32). When second movement controller 201 determines that the touch position where item object 304 has been touched is within initial area 308 as described with reference to FIG. 3, it laterally moves item object 304 in accordance with the touch position within initial area 308.

Then, second movement controller 201 determines whether or not touching onto item object 304 has been canceled (step S34).

When second movement controller 201 determines in step S34 that touching onto item object 304 has been canceled (YES in step S34), it moves item object 304 to the initial position (step S35). Then, the process ends (end). When second movement controller 201 determines that touching onto item object 304 has been canceled as described with reference to FIG. 3, it moves item object 304 to the initial position.

When second movement controller 201 determines in step S34 that touching has not been canceled (NO in step S34), the process returns to step S31 and the processing above is repeated.

When second movement controller 201 determines in step S31 that the touch position where item object 304 has been touched is not within the initial area, that is, the touch position has moved beyond virtual boundary line 306 (NO in step S31), it determines whether or not touching onto item object 304 continues for a prescribed period or longer on the outside of the initial area (step S36).

When second movement controller 201 determines in step S36 that touching onto item object 304 continues for the prescribed period or longer on the outside of the initial area (YES in step S36), it performs second feeding control processing (step S38). Details of second feeding control processing will be described later. Then, the process proceeds to step S34.

When second movement controller 201 determines in step S36 that touching onto the item object does not continue for the prescribed period or longer on the outside of the initial area (NO in step S36), it performs first feeding control processing (step S40). Details of first feeding control processing will be described later. Then, the process ends (end).
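The selection between the feeding schemes in steps S31 and S36 can be sketched as follows. The function name, the string labels, and the default `prescribed_period_s` are hypothetical; the embodiment does not state a concrete value for the prescribed period.

```python
def select_feeding_processing(in_initial_area: bool,
                              held_outside_s: float,
                              prescribed_period_s: float = 0.5) -> str:
    """Return the processing chosen while item object 304 is being touched.

    held_outside_s is how long touching has continued outside the initial area.
    """
    if in_initial_area:                          # step S31: YES
        return "move_within_initial_area"        # step S32
    if held_outside_s >= prescribed_period_s:    # step S36: YES
        return "second_feeding_control"          # step S38
    return "first_feeding_control"               # step S40


inside = select_feeding_processing(True, 0.0)
quick = select_feeding_processing(False, 0.1)
held = select_feeding_processing(False, 0.8)
```

A touch that has only just moved beyond virtual boundary line 306 is handled by first feeding control processing, whereas a touch that remains outside the initial area for the prescribed period or longer is handled by second feeding control processing.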

FIG. 17 is a flowchart illustrating first feeding control processing by first feeding unit 202 based on the embodiment.

Referring to FIG. 17, first feeding unit 202 arranges an item object at a position on first virtual plane L1 based on the touch position (step S50). Then, first feeding unit 202 determines whether or not touching has been canceled (step S52). When first feeding unit 202 determines that touching has been canceled (YES in step S52), it sets the position of item object 304 at that time point as the prescribed position (step S54). As described with reference to FIG. 8, when the user touches item object 304 and moves the touch position beyond virtual boundary line 306 while the user keeps touching, item object 304 moves over first virtual plane L1 with that movement. When touching by the user is canceled, first feeding unit 202 sets the position of item object 304 at that time as prescribed position Q.

Then, first feeding unit 202 sets the direction of ejection and the speed of ejection (step S55). With the touch position where touching has been canceled being defined as point q2 and with the touch position a prescribed time period before the time point of cancellation being defined as point q1 as described with reference to FIG. 8, first feeding unit 202 sets the direction of ejection and the speed of ejection based thereon.

Then, first feeding unit 202 performs processing for ejecting item object 312 from prescribed position Q in accordance with the set direction of ejection and speed of ejection (step S56).

Then, first feeding unit 202 controls item object 312 subjected to ejection processing to reach second virtual plane L2 through inertia and to move over second virtual plane L2 (step S58).

Then, the process ends (return).

When first feeding unit 202 determines that touching has not been canceled (NO in step S52), the process proceeds to “A”. In other words, the process returns to step S31 in FIG. 16 and the processing above is repeated until touching is canceled.

There may be a case in which the position of point q2 and the position of point q1 are the same (or substantially the same) and two-dimensional vector v cannot be calculated. For example, the user may move the touch position out of the initial area, temporarily stop the operation, and cancel touching (for example, an operation to stop moving a finger on the outside of the initial area for a while and thereafter simply release the finger).

In this case as well, first feeding unit 202 may set prescribed position Q based on the touch position where touching has been canceled and eject the item object from that position. By way of example, when first feeding unit 202 is unable to calculate two-dimensional vector v, it sets the direction of ejection and the speed of ejection to initial values set in advance and ejects the item object from prescribed position Q. Alternatively, when first feeding unit 202 is unable to calculate two-dimensional vector v, it may quit ejection processing. In this case, the item object may return to the initial position.
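One way to derive the direction of ejection and the speed of ejection from points q1 and q2, including the fallback to initial values, is sketched below. It assumes that the direction is the unit vector from q1 to q2 and that the speed is the length of two-dimensional vector v divided by the prescribed time period; the embodiment only states that both are set based on q1 and q2, so this mapping, the function name, and the default values are hypothetical.

```python
import math


def ejection_parameters(q1, q2, dt: float,
                        default_direction=(0.0, 1.0),
                        default_speed: float = 1.0,
                        eps: float = 1e-6):
    """Return (direction, speed) for ejection from prescribed position Q.

    q1: touch position a prescribed time period dt before cancellation.
    q2: touch position where touching has been canceled.
    """
    vx, vy = q2[0] - q1[0], q2[1] - q1[1]   # two-dimensional vector v
    length = math.hypot(vx, vy)
    if length < eps:
        # q1 and q2 are (substantially) the same: v cannot be calculated,
        # so fall back to initial values set in advance.
        return default_direction, default_speed
    return (vx / length, vy / length), length / dt


direction, speed = ejection_parameters((0.0, 0.0), (3.0, 4.0), dt=0.1)
fallback = ejection_parameters((1.0, 1.0), (1.0, 1.0), dt=0.1)
```

The alternative described above, in which ejection processing is quit and the item object returns to the initial position, could be expressed by returning a sentinel value instead of the defaults.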

FIG. 18 is a flowchart illustrating second feeding control processing by second feeding unit 204 based on the embodiment. Referring to FIG. 18, second feeding unit 204 arranges item object 322 at the position on second virtual plane L2 resulting from coordinate conversion based on the touch position (step S60). Then, the process ends (return). Specifically, when the user touches item object 304 and thereafter moves the touch position beyond virtual boundary line 306 as described with reference to FIG. 9, second feeding unit 204 converts a touch coordinate where touch panel 13 was touched and calculates the position on second virtual plane L2. Second feeding unit 204 arranges item object 322 at the calculated position on second virtual plane L2. Second feeding unit 204 thus carries out movement control to move item object 322 to any position on second virtual plane L2 in accordance with the touch position.
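The embodiment does not specify how the touch coordinate is converted into a position on second virtual plane L2. One common approach, shown here purely as an assumed illustration, is to cast a ray from the virtual camera through the touched point and intersect it with the plane. The sketch assumes that second virtual plane L2 is a horizontal plane y = `plane_height` and that the ray direction for the touch coordinate has already been obtained; the function name and parameters are hypothetical.

```python
def touch_ray_to_plane(camera_pos, ray_dir, plane_height: float):
    """Intersect a touch ray with the horizontal plane y = plane_height.

    Returns the intersection point, or None when the ray is parallel to the
    plane or the plane lies behind the camera.
    """
    cx, cy, cz = camera_pos
    dx, dy, dz = ray_dir
    if dy == 0.0:
        return None                  # ray parallel to the plane
    t = (plane_height - cy) / dy     # ray parameter at the plane
    if t < 0.0:
        return None                  # intersection behind the camera
    return (cx + t * dx, plane_height, cz + t * dz)


point = touch_ray_to_plane((0.0, 10.0, 0.0), (0.0, -1.0, 1.0), plane_height=0.0)
```

Showing item object 322 at a position offset from the touch coordinate, such as the upper left, could be achieved by shifting the touch coordinate before the ray is computed.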

FIG. 19 is a flowchart illustrating acquisition processing by acquisition unit 206 based on the embodiment.

Referring to FIG. 19, acquisition unit 206 determines whether or not character object 302 is present in the vicinity of an item object (step S100). Specifically, as described with reference to FIG. 5, acquisition unit 206 determines whether or not character object 302 is present within range 314 with item object 312 or 322 being defined as the reference.

When acquisition unit 206 determines in step S100 that the character object is not present in the vicinity of the item object (NO in step S100), the state in step S100 is maintained.

When acquisition unit 206 determines in step S100 that character object 302 is present in the vicinity of the item object (YES in step S100), character object 302 in the vicinity acquires the item object (step S102). When acquisition unit 206 determines that character object 302 is present within range 314 with item object 312 or 322 being defined as the reference, it performs processing to allow character object 302 to acquire the item object. Specifically, as the item object is fed to character object 302, an event advantageous for the user during progress of the game may occur.

Then, acquisition unit 206 performs processing for erasing the item object (step S104). Specifically, when item object 312 is acquired in the first feeding scheme, acquisition unit 206 performs processing for erasing acquired item object 312. Thus, another character object 302 is unable to acquire the item object.

Then, the process ends (end).

When item object 322 is acquired in the second feeding scheme in an example in which the user possesses a single item object 304, acquisition unit 206 performs processing for erasing item object 322. When the user possesses at least two item objects 304, acquisition unit 206 decreases the number of possessed item objects by one but does not have to erase item object 322. In this case, when the number of item objects 304 possessed by the user becomes 0, acquisition unit 206 erases item object 322.

Then, the process ends (end).
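The difference in erasure between the two feeding schemes can be sketched as below. The class `ItemState`, its fields, and the scalar `distance` argument are hypothetical simplifications; in particular, the sketch leaves the possessed count untouched in the first feeding scheme, which is an assumption because the passage above does not state when that count is decreased for an ejected item.

```python
from dataclasses import dataclass


@dataclass
class ItemState:
    scheme: str            # "first" (item object 312) or "second" (item object 322)
    possessed: int         # number of item objects 304 possessed by the user
    on_field: bool = True  # whether the item object is still shown on the field


def acquire(item: ItemState, distance: float, acquisition_range: float) -> bool:
    """Apply steps S100 to S104 once; return True when acquisition occurred."""
    if not item.on_field or distance > acquisition_range:
        return False                 # step S100: NO
    if item.scheme == "first":
        item.on_field = False        # step S104: erase acquired item object 312
    else:
        item.possessed -= 1          # second scheme: decrease the possessed count
        if item.possessed <= 0:
            item.on_field = False    # erase item object 322 only when none remain
    return True


placed = ItemState(scheme="second", possessed=2)
results = [acquire(placed, 0.5, 1.0) for _ in range(3)]
```

In the second feeding scheme, the placed item object therefore stays on the field and can be acquired repeatedly until the number of possessed item objects reaches 0.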

FIG. 20 is a flowchart illustrating movement control by virtual camera movement controller 208 based on the embodiment. Referring to FIG. 20, virtual camera movement controller 208 determines whether or not the game field has been touched (step S70).

When virtual camera movement controller 208 determines in step S70 that the game field has been touched (YES in step S70), it determines whether or not a touch movement operation has been performed (step S71).

When virtual camera movement controller 208 determines in step S71 that the touch movement operation has been performed (YES in step S71), it moves the position of the virtual camera in parallel to the game field in accordance with the touch movement operation (step S72).

Then, the process ends (end).

When virtual camera movement controller 208 determines in step S71 that the touch movement operation has not been performed (NO in step S71), it determines whether or not a pinch operation has been performed (step S74).

When virtual camera movement controller 208 determines in step S74 that the pinch operation has been performed (YES in step S74), it moves the position of the virtual camera in a direction toward or away from the game field in accordance with the pinch operation while maintaining the attitude of the virtual camera (step S76).

Then, the process ends (end).

Modification

FIG. 21 is a diagram illustrating a functional block of information processing apparatus 100 based on a modification of the embodiment. Referring to FIG. 21, information processing apparatus 100 based on the modification of the embodiment differs from the functional block in FIG. 2 in further including a third movement controller 205. Since the configuration is otherwise similar to the configuration in FIG. 2, detailed description will not be repeated.

Third movement controller 205 controls movement of an interfering object (a third object) arranged on the game field. Acquisition unit 206 carries out control such that a character object among a plurality of character objects or the interfering object that satisfies prescribed proximity relation with an item object acquires the item object.

When the interfering object acquires the item object, the event advantageous for the user during progress of the game that occurs by acquisition of the item object by the character object does not occur. Instead, no event may occur or an event disadvantageous for the user during progress of the game may occur.

FIG. 22 is a diagram illustrating a screen 500 in the game in the game processing provided by game program 122 based on the modification of the embodiment.

As shown in FIG. 22, on screen 500, an interfering object 303 is arranged together with character objects 302 and item object 312. Though the present example describes arrangement of a single interfering object 303, a plurality of interfering objects 303 may be provided.

Interfering object 303 interferes with acquisition of item object 312 by character object 302, for example, by acquiring item object 312. Since interfering object 303 is provided, ejection or the like of item object 312 should be controlled so as not to allow interfering object 303 to acquire item object 312, which adds zest to the game.

Third movement controller 205 controls movement of interfering object 303.

When interfering object 303 is within a third range with item object 312 being defined as the reference, third movement controller 205 moves interfering object 303 toward item object 312. The third range may be the same as or different from the first range or the second range. Third movement controller 205 may move interfering object 303 toward item object 312 without providing a range. Interfering object 303 may be moved toward item object 322, without being limited to item object 312. Then, interfering object 303 may acquire item object 322.

When interfering object 303 moves toward item object 312 and satisfies prescribed proximity relation (by way of example, item object 312 is at a prescribed distance or shorter from interfering object 303), it acquires item object 312. When interfering object 303 acquires item object 312, item object 312 is erased.

When interfering object 303 falls out of the third range after it starts moving toward item object 312 because of a high speed of item object 312 that moves over the field, interfering object 303 may stop moving toward item object 312. Though interfering object 303 is moved in the present example, it may be fixed.

Interfering object 303 may move toward item object 312 at a speed higher than a moving speed of character object 302 (a moving speed in normal movement control processing or a moving speed in movement toward item object 312 or 322).

Interfering object 303 may be eliminated from the game field, for example, by being touched. At this time, interfering object 303 may be transformed into an item object or interfering object 303 may produce an item object. The number of item objects that appear at this time may be varied, for example, based on the number of item objects acquired by interfering object 303.
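Movement of the interfering object toward an item object can be illustrated by the following per-frame sketch. It assumes two-dimensional positions on the game field, a circular third range, and a fixed step per frame; the function name and all parameter names are hypothetical.

```python
import math


def interfering_step(pos, item_pos, third_range: float,
                     speed: float, acquire_distance: float):
    """Advance the interfering object by one frame.

    Returns (new_position, acquired).
    """
    dx, dy = item_pos[0] - pos[0], item_pos[1] - pos[1]
    dist = math.hypot(dx, dy)
    if dist <= acquire_distance:
        return pos, True            # proximity relation already satisfied
    if dist > third_range:
        return pos, False           # outside the third range: no pursuit
    step = min(speed, dist)         # do not overshoot the item object
    new_pos = (pos[0] + dx / dist * step, pos[1] + dy / dist * step)
    remaining = math.hypot(item_pos[0] - new_pos[0], item_pos[1] - new_pos[1])
    return new_pos, remaining <= acquire_distance


far = interfering_step((0.0, 0.0), (10.0, 0.0), 5.0, 1.0, 0.5)
chasing = interfering_step((0.0, 0.0), (4.0, 0.0), 5.0, 1.0, 0.5)
caught = interfering_step((0.0, 0.0), (1.0, 0.0), 5.0, 1.0, 0.5)
```

Because the range is re-evaluated on every call, an item object that moves quickly enough to leave the third range causes the interfering object to stop its pursuit, as described above.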

I. Additional Aspects

A method of calculating the direction of ejection and the speed of ejection of item object 312 in the first feeding scheme, based on the touch position a prescribed time period before cancellation of touching and the touch position where touching has been canceled, is described above. Without being limited as such, for example, first feeding unit 202 may control movement of item object 312 in the first feeding scheme in consideration of a path or variation in speed until reaching the touch position where touching has been canceled.

Though an example is described above in which item object 312 that has moved out of the area shown on screen 310 as the area on the game field, or item object 312 that has been present on the game field for a prescribed period of time, is supplied again and the number of item objects 304 returns to the original number, the number does not have to return to the original number.

Though an example in which virtual camera movement controller 208 controls the position of the virtual camera in accordance with the touch movement operation or the pinch operation is described, the position of the virtual camera may be controlled in accordance with another operation. For example, the position of the virtual camera may be controlled by touching the game field a plurality of times. Without being limited to a touch input, the position of the virtual camera may be controlled by an operation onto a direction input key or a button. Alternatively, in response to touching of character object 302 a plurality of times, virtual camera movement controller 208 may bring the virtual camera closer to touched character object 302 and zoom in on that character object.

When virtual camera movement controller 208 moves the position of the virtual camera, first virtual plane L1 is also moved. So long as relative positional relation with item object 304 is not varied, however, item object 304 may be moved. Without being limited to movement processing, the position of the virtual camera may be set by new calculation or the like. When a distance of movement of the virtual camera is long and first virtual plane L1 or item object 304 enters, for example, the game field, first virtual plane L1 or item object 304 may not be allowed to move any more. Furthermore, variation in relative positional relation with item object 304 with movement of the position of the virtual camera may be permitted.

Though a scheme in which first movement controller 200 moves character object 302 in accordance with the flick operation input is described above, without being limited to such an operation input, the character object may be moved in accordance with another operation input.

Processing for facilitating acquisition of the item object by specific character object 302 may be performed. For example, when specific character object 302 is selected by being touched (a sixth user operation input), a moving speed of specific character object 302 toward item object 312 or 322 that is present at the time of selection or will be present in a prescribed period after selection may be increased. Alternatively, the first range, the second range, or prescribed proximity relation set for specific character object 302 may be set to be larger than when the specific character object is not selected. Alternatively, specific character object 302 may be moved toward item object 312 or 322 regardless of the first range or the second range. In order to inform the user of which specific character object 302 has been selected or the fact of selection of character object 302, a prescribed notification function may be performed or specific character object 302 may take a prescribed action. Simultaneously or alternatively, processing for not allowing character object 302 other than specific character object 302 to acquire item object 312 or 322 or processing for making acquisition by character object 302 other than specific character object 302 difficult may be performed.

A single type of character object 302 does not have to be provided but many types of character objects may be provided. At this time, an effect at the time of acquisition of item object 312 or 322 may be different or identical depending on the type of character objects 302.

Though a scheme in which initial area 308 defined by virtual boundary line 306 is provided and second movement controller 201 performs first feeding control processing or second feeding control processing based on whether or not the touch position moves beyond virtual boundary line 306 is described above, initial area 308 does not have to be provided. For example, a scheme in which second movement controller 201 performs first feeding control processing or second feeding control processing based on whether or not the touch position corresponds to the initial position, that is, whether or not the touch position has moved from the initial position, may be applicable.

Though a scheme for performing second feeding control processing when a prescribed period or longer has elapsed since the touch position moved beyond virtual boundary line 306 is described above, limitation as such is not intended. For example, second feeding control processing may be performed when a duration of touching exceeds a prescribed period, regardless of virtual boundary line 306. Alternatively, second feeding control processing may be performed when the touch position does not move for a period longer than a prescribed period while touching is continued (including an example in which the touch position substantially does not move) regardless of whether or not the touch position moves beyond virtual boundary line 306. In addition, second feeding control processing may be performed depending on a prescribed condition or input, without being limited to the duration of touching.

Though a scheme in which first movement controller 200 moves character object 302 toward item object 312 or 322 when positional relation between character object 302 and item object 312 or 322 satisfies prescribed relation (being within the first or second range by way of example) is described above, another condition may be added. By way of example, a state of character object 302 may be added as the condition. For example, when the level of character object 302 cannot be increased from the current level, character object 302 may not be allowed to move toward the item object.

Though an example in which first movement controller 200 can move all character objects 302 toward item object 322 while item object 322 is located on the game field is also described above, in this case as well, at least one of character objects 302 may not be allowed to move.

Though an example in which the first range and the second range with item object 312 or 322 being defined as the reference are different between the first feeding scheme and the second feeding scheme is described above, the first range and the second range may be set with character object 302 being defined as the reference. Alternatively, the first range and the second range may be set individually for each character object 302. The second individual range set for each character object may be larger than the first range.

Though a scheme in which first movement controller 200 moves character object 302 toward item object 312 or 322 when positional relation between character object 302 and item object 312 or 322 satisfies prescribed relation (being within the first or second range by way of example) is described above, a scheme in which the range is not set is also applicable. For example, first movement controller 200 may determine whether or not to move the character object toward item object 312 or 322 based on various conditions such as preference of character object 302, an attribute of the item object, or a state of the game field and carry out movement control based on the determination. When an identical condition is satisfied, even character object 302 more distant from item object 322 in the second feeding scheme than from item object 312 in the first feeding scheme may be moved.

Though an example in which prescribed proximity relation for acquiring an item object is based on a condition identical in the first feeding scheme and the second feeding scheme is described above, without being limited as such, the condition may be different. For example, a range of acquisition may be different.

Though the virtual three-dimensional space is described above, the virtual two-dimensional space is also similarly applicable.

Though an example in which an item object is moved over the first virtual plane by means of the first feeding unit and an item object is moved over the second virtual plane by means of the second feeding unit is described above, processing for controlling movement of an item object by using different virtual planes is applicable also to an application other than the game of the present example, in which an item object is fed to a character object. For example, the processing is applicable also to a game in which an item object is ejected or arranged in the game field regardless of a character object.

While certain example systems, methods, devices, and apparatuses have been described herein, it is to be understood that the appended claims are not to be limited to the systems, methods, devices, and apparatuses disclosed, but on the contrary, are intended to cover various modifications and equivalent arrangements included within the spirit and scope of the appended claims.

Claims

- An information processing system: a memory and at least one processor configured to perform operations comprising: arranging a plurality of first objects on a field; setting a first position on the field based on a first user operation, the first position being a position where one of the plurality of first objects that satisfies first positional relation with the position is caused to acquire a second object; moving the first position on the field based on a second user operation; canceling the first position based on a third user operation; decreasing an amount of the second object possessed by a user based on acquisition by one of the plurality of first objects; canceling the first position when the decreased amount of the second object satisfies a prescribed condition; and maintaining the first position when the decreased amount of the second object does not satisfy the prescribed condition.

- The information processing system according to claim 1, wherein the prescribed condition is that the amount of the second object is zero.

- The information processing system according to claim 1, wherein a third object indicating the second object is displayed at the first position.

- The information processing system according to claim 1, wherein the plurality of first objects are moved toward the first position.

- The information processing system according to claim 4, wherein one of the plurality of first objects that cannot acquire the second object is not moved toward the first position.

- The information processing system according to claim 1, wherein the first user operation is a touch operation, the second user operation is an operation to move a touch position while the touch operation is maintained, and the third user operation is a cancellation operation to cancel the touch operation.

- The information processing system according to claim 1, wherein based on a fourth user operation, a third object indicating the second object is arranged on the field and the amount of the second object possessed by the user is decreased.

- The information processing system according to claim 7, wherein one of the plurality of first objects that satisfies second positional relation with the arranged third object is caused to acquire the second object.

- The information processing system according to claim 8, wherein the plurality of first objects are moved toward the arranged third object.

- A method of controlling an information processing system comprising: arranging a plurality of first objects on a field; setting a first position on the field based on a first user operation, the first position being a position where one of the plurality of first objects that satisfies first positional relation with the position is caused to acquire a second object; moving the first position on the field based on a second user operation; canceling the first position based on a third user operation; decreasing an amount of the second object possessed by a user based on acquisition by one of the plurality of first objects; canceling the first position when the decreased amount of the second object satisfies a prescribed condition; and maintaining the first position when the decreased amount of the second object does not satisfy the prescribed condition.

- The method of controlling an information processing system according to claim 10, wherein the prescribed condition is that the amount of the second object is zero.

- The method of controlling an information processing system according to claim 10, further comprising displaying a third object indicating the second object at the first position.

- The method of controlling an information processing system according to claim 10, further comprising moving the plurality of first objects toward the first position.

- The method of controlling an information processing system according to claim 13, further comprising not moving one of the plurality of first objects that cannot acquire the second object toward the first position.

- The method of controlling an information processing system according to claim 10, wherein the first user operation is a touch operation, the second user operation is an operation to move a touch position while the touch operation is maintained, and the third user operation is a cancellation operation to cancel the touch operation.

- The method of controlling an information processing system according to claim 10, further comprising: arranging a third object indicating the second object on the field based on a fourth user operation; and decreasing the amount of the second object possessed by the user.

- The method of controlling an information processing system according to claim 16, further comprising causing one of the plurality of first objects that satisfies second positional relation with the arranged third object to acquire the second object.

- The method of controlling an information processing system according to claim 17, further comprising moving the plurality of first objects toward the arranged third object.

- A non-transitory computer readable storage medium having an information processing program stored thereon, the information processing program being executable by at least one computer to perform operations comprising: arranging a plurality of first objects on a field; setting a first position on the field based on a first user operation, the first position being a position where one of the plurality of first objects that satisfies first positional relation with the position is caused to acquire a second object; moving the first position on the field based on a second user operation; canceling the first position based on a third user operation; decreasing an amount of the second object possessed by a user based on acquisition by one of the plurality of first objects; canceling the first position when the decreased amount of the second object satisfies a prescribed condition; and maintaining the first position when the decreased amount of the second object does not satisfy the prescribed condition.

- An information processing apparatus: a memory and at least one processor configured to perform operations comprising: arranging a plurality of first objects on a field; setting a first position on the field based on a first user operation, the first position being a position where one of the plurality of first objects that satisfies first positional relation with the position is caused to acquire a second object; moving the first position on the field based on a second user operation; canceling the first position based on a third user operation; decreasing an amount of the second object possessed by a user based on acquisition by one of the plurality of first objects; canceling the first position when the decreased amount of the second object satisfies a prescribed condition; and maintaining the first position when the decreased amount of the second object does not satisfy the prescribed condition.
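
For illustration only, and without limiting the claims, the lifecycle of the first position recited above (set, move, cancel, and cancel-or-maintain after acquisition) might be sketched as below. The class `FeedingPosition` and its method names are hypothetical. The sketch assumes that the prescribed condition is the amount reaching zero, as in the dependent claims, and additionally assumes that a position cannot be set while the amount is zero, which the claims do not state.

```python
from typing import Optional, Tuple

Position = Tuple[float, float]


class FeedingPosition:
    """Hypothetical tracker for the first position and the user's item amount."""

    def __init__(self, amount: int) -> None:
        self.amount = amount                       # amount of the second object possessed
        self.position: Optional[Position] = None   # None means no first position is set

    def set(self, pos: Position) -> None:
        """First user operation (e.g. a touch): set the first position."""
        if self.amount > 0:  # assumption: nothing to feed means nothing to set
            self.position = pos

    def move(self, pos: Position) -> None:
        """Second user operation (e.g. a drag): move an existing first position."""
        if self.position is not None:
            self.position = pos

    def cancel(self) -> None:
        """Third user operation (e.g. releasing the touch): cancel the position."""
        self.position = None

    def on_acquired(self) -> None:
        """A first object acquired the second object at the first position."""
        if self.position is None or self.amount <= 0:
            return
        self.amount -= 1
        if self.amount == 0:      # assumed prescribed condition: amount is zero
            self.position = None  # cancel the first position
        # otherwise the first position is maintained


feed = FeedingPosition(amount=2)
feed.set((1.0, 1.0))
feed.move((2.0, 3.0))
feed.on_acquired()
position_after_first = feed.position
feed.on_acquired()
position_after_second = feed.position
```

Here the first position survives the first acquisition because one unit remains, and is canceled after the second acquisition because the amount has reached zero.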
