U.S. Pat. No. 12,138,539

INTERACTIONS BETWEEN CHARACTERS IN VIDEO GAMES

Assignee: SQUARE ENIX LTD.

Issue Date: April 27, 2022

Abstract

A computer-readable recording medium including a program which is executed by a computer apparatus to provide a video game comprising a virtual game world presented to a player of the video game, and a plurality of characters in the virtual game world including a first character, and a second character controlled by the player, the program causing the computer apparatus to function as: a state indicator generating unit configured to generate a state indication, wherein the state indication is an indication of an emotional or physical state of the first character; and a coupling indicator generating unit configured to generate a coupling indication, wherein the coupling indication is an indication of a coupling occurring between the emotional or physical state of the first character and an emotional or physical state of the second character.

Description

DETAILED DESCRIPTION OF PREFERRED EMBODIMENTS

The present embodiments represent the best ways known to the Applicant of putting the invention into practice. However, they are not the only ways in which this can be achieved.

Embodiments of the present invention provide an emotional or physical state coupling routine that is incorporated within a video game. The video game is provided as a computer program. The computer program may be supplied on a computer-readable medium (e.g. a non-transitory computer-readable recording medium such as a CD or DVD) having computer-readable instructions thereon.

Alternatively, the computer program may be provided in a downloadable format, over a network such as the Internet, or may be hosted on a server.

With reference to FIG. 1, the video game program may be executed on a video game apparatus 10, such as a personal computer or a video game console. The video game apparatus 10 comprises a display screen 12 on which the video game is displayed, and a control unit 14 which typically includes at least a Central Processing Unit (CPU), a Read Only Memory (ROM) and a Random Access Memory (RAM). The control unit 14 may also include a Graphics Processing Unit (GPU) and a sound processing unit. The display screen 12 and the control unit 14 may be provided in a common housing, or may be separate connected units. The video game apparatus 10 also includes one or more user input devices by which the user can control a player character in the game. Such a user input device may comprise, for example, a mouse, a keyboard, a hand-held controller (e.g. incorporating a joystick and/or various control buttons), or a touchscreen interface integral with the display screen 12 (e.g. as in the case of a smartphone or a tablet computer). The video game apparatus 10 may be connected to a network such as the Internet, or may be a stand-alone apparatus that is not connected to a network.

Alternatively, with reference to FIG. 2, the video game program may be executed within a network-based video game system 20. The video game system 20 comprises a server device 22, a communication network 24 (e.g. the Internet), and a plurality of user terminals 26 operated by respective users. The server device 22 communicates with the user terminals 26 through the communication network 24. Each user terminal 26 may comprise a network-connectable video game apparatus 10 as described above, such as a personal computer or a video game console, or a smartphone, a tablet computer, or some other suitable piece of user equipment. The video game program may be executed on the server 22, which may stream user-specific game content (e.g. video in real time) to each of the plurality of user terminals 26. At each user terminal the respective user can interact with the game and provide input that is transmitted to the server 22, to control the progress of the game for the user. Alternatively, for a given user, the video game program may be executed within the respective user terminal 26, which may interact with the server 22 when necessary.

In either case, the video game progresses in response to user input, with the user input controlling a player character. The user's display screen may display the player character's field of view in the game world in a “first-person” manner, preferably in three dimensions, and preferably using animated video rendering (e.g. photorealistic video rendering), in the manner of a virtual camera.

Alternatively, the user's display screen may display the player character and other objects or characters in the game world in a “third-person” manner, again preferably in three dimensions, and preferably using animated video rendering (e.g. photorealistic video rendering), in the manner of a virtual camera.

FIG. 3 is a block diagram showing the configuration of the video game apparatus 10 shown in FIG. 1, in the case of the game being executed on such apparatus. It will be appreciated that the contents of the block diagram are not exhaustive, and that other components may also be present.

As illustrated, the control unit 14 of the video game apparatus 10 includes an input device interface 102 to which an input device 103 (e.g. a mouse, a keyboard or a hand-held controller, e.g. incorporating a joystick and/or various control buttons, as mentioned above) is connected, a processor (e.g. CPU) 104, and an image generator (e.g. GPU) 111 to which a display unit 12 is connected.

The control unit 14 also includes memory (e.g. RAM and ROM) 106, a sound processor 107 connectable to a sound output device 108, a DVD/CD-ROM drive 109 operable to receive and read a DVD or CD-ROM 110 (both being examples of a computer-readable recording medium), a communication interface 116 connectable to the communication network 24 (e.g. the Internet), and data storage means 115 via which data can be stored on a storage device (either within or local to the video game apparatus 10, or in communication with the control unit 14 via the network 24). For a stand-alone (not network connected) video game apparatus, the communication interface 116 may be omitted.

The video game program causes the control unit 14 to take on further functionality of a user interaction indication generating unit 105, a virtual camera control unit 112, a state indicator generating unit 113, and a coupling indicator generating unit 114.

An internal bus 117 connects components 102, 104, 105, 106, 107, 109, 111, 112, 113, 114, 115 and 116 as shown.

FIG. 4 is a block diagram showing the configuration of the server apparatus 22 shown in FIG. 2, in the case of the game being executed within a network-based video game system. It will be appreciated that the contents of the block diagram are not exhaustive, and that other components may also be present.

As illustrated, the server apparatus 22 includes a processor (e.g. CPU) 204, and an image generator (e.g. GPU) 211, memory (e.g. RAM and ROM) 206, a DVD/CD-ROM drive 209 operable to receive and read a DVD or CD-ROM 210 (both being examples of a computer-readable recording medium), a communication interface 216 connected to the communication network 24 (e.g. the Internet), and data storage means 215 via which data can be stored on a storage device (either within or local to the server apparatus 22, or in communication with the server apparatus 22 via the network 24).

The video game program causes the server apparatus 22 to take on further functionality of a user interaction indication generating unit 205, a virtual camera control unit 212, an emotion indicator generating unit 213, and an emotion coupling indicator generating unit 214.

An internal bus 217 connects components 204, 205, 206, 209, 211, 212, 213, 214, 215 and 216 as shown.

Via the communication interface 216 and the network 24, the server apparatus 22 may communicate with a user terminal 26 (e.g. video game apparatus 10) as mentioned above, during the course of the video game. Amongst other things, the server apparatus 22 may receive user input from the input device 103 of the video game apparatus 10, and may cause video output to be displayed on the display screen 12 of the video game apparatus 10.

State Indication and Coupling Indication Generation

In accordance with the present disclosure a video game comprises a virtual game world, and an indication of an emotional or physical state of a first character in the virtual game world is generated. Also generated is an indication of a coupling between the emotional or physical state of the first character and an emotional or physical state of a second, player-controlled, character.

It will be appreciated that whilst a character in a video game does not actually experience emotions as such, from the perspective of the player it is as if the characters are fully-fledged emotional beings. The emotional state of a character in the virtual game world is a useful storytelling device, in a manner analogous to that of the expression of a human actor in a play. For example, a character may appear angry, sad, happy, joyful, or any other emotion that can be represented in a video game—such as, but not limited to, fear, anxiety, surprise, love, remorse, guilt, paranoia or disgust. Similarly, whilst a character in a video game does not have a physical state as such, it will be appreciated that this term refers to a physical state assigned to the character by the video game program. The physical state experienced by a character may affect the interactions between the character and the virtual game world. For example, a physical state of a character may be drunkenness, visual impairment, cognitive impairment, mobility impairment, deafness, temperature (e.g. feeling hot or cold), pain, or any other physical state that can be represented in a video game.

In the present embodiments the first character may be described as a non-player character. However, as will be described in more detail below, the first character may instead be a character controlled by another player.

It will be appreciated that a so-called ‘non-player character’ is a computer-controlled character and that a ‘player character’ is a character controlled by a player using a compatible user input device, such as the input device 103 illustrated in FIG. 3.

In the following description and the accompanying drawings, the term ‘non-player character’ may be abbreviated as ‘NPC’, and the term ‘player character’ may be abbreviated as ‘PC’.

As will be described in more detail below, the present disclosure provides a computer-readable recording medium including a program which is executed by a computer apparatus to provide a video game comprising a virtual game world presented to a player of the video game, and a plurality of characters in the virtual game world including a first character, and a second character controlled by the player. With reference in passing to the procedural flow diagram of FIG. 24, the program causes the state indicator generating unit 113/213 to generate (2301) a state indication, wherein the state indication is an indication of an emotional or physical state of the first character. The program also causes the coupling indicator generating unit 114/214 to generate (2302) a coupling indication, wherein the coupling indication is an indication of a coupling occurring between the emotional or physical state of the first character and an emotional or physical state of the second character.

Further, in some embodiments, and as will be discussed in greater detail below, the program may cause the interaction indication generating unit 105/205 to generate an indication that the player may provide user input to initiate coupling of the first character to the second character.

Moreover, in some embodiments, and as also discussed in greater detail below, the program may cause the perception indication unit 117/217 to generate an indication of an entity perceived by the first character.

First Embodiment

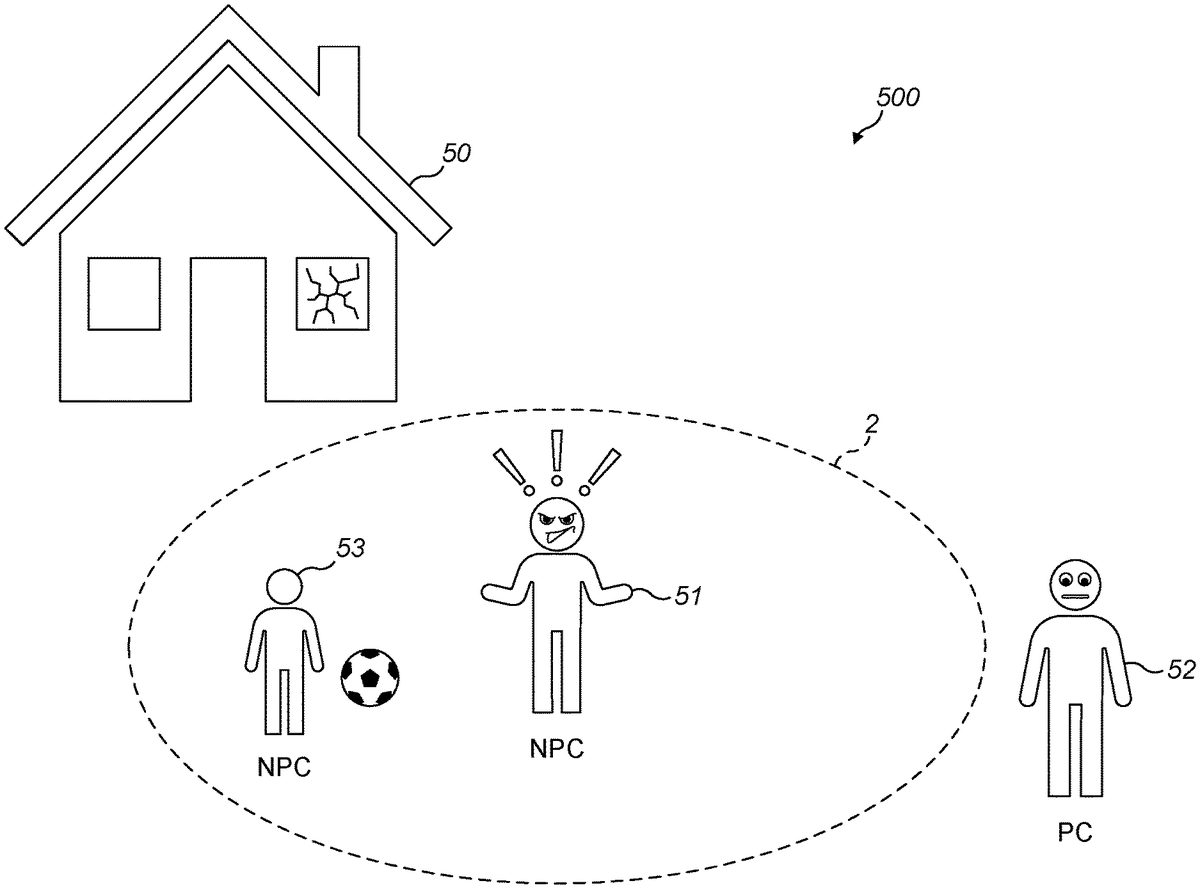

FIGS. 5 and 6 schematically show some exemplary in-game content (as would be presented on-screen to a user), namely a virtual game world 500 comprising an adult NPC 51, a small child NPC 53, a player character 52, and a house 50 with a broken window. In this scenario, the adult NPC 51 is angry that the small child NPC 53 has broken the window of the house whilst playing with a ball. In FIG. 5, the player character 52 is initially standing outside of a boundary 2 indicated by the dashed line. In FIG. 6, the player character has moved inside of the boundary 2.

In practice, a scene such as that illustrated in FIGS. 5 and 6 (and likewise in subsequent figures) may be generated by the image generator 111/211 and virtual camera control unit 112/212, under the control of processor 104/204 (see FIGS. 3 and 4). It will of course be appreciated that, in the present figures, black and white line drawings are used to represent what would typically be displayed to the user as rendered video (preferably photorealistic video rendering) in the game.

The boundary 2 represents a predetermined threshold distance around the adult NPC 51. In accordance with the present disclosure, and as will be described in more detail below, when the player character 52 crosses the boundary 2 to become closer than the predetermined distance from the adult NPC 51, the emotional state of the player character 52 becomes coupled to the emotional state of the adult NPC 51, such coupling being controlled by the processor 104/204.

Whilst the boundary 2 is illustrated in FIGS. 5 and 6 as being generally circular, it will be appreciated that the predetermined threshold distance may depend on the direction from which the player character 52 approaches the NPC 51 (in such cases, the boundary would not be represented by a circle, but by another shape, and possibly by an irregular shape). It will also be appreciated that the boundary may in fact correspond to a boundary in three-dimensional space, in which case the boundary may be represented by, for example, a sphere or hemisphere around the adult NPC 51.
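
By way of illustration only, a direction-dependent threshold and a spherical boundary might be sketched as follows. The function names, the cosine blend between a `front` and a `back` distance, and the numeric values are assumptions made for this sketch and are not taken from the disclosure.

```python
import math

def threshold_for_direction(approach_angle, front=6.0, back=3.0):
    """Threshold distance as a function of approach angle (radians).

    Hypothetical rule: 'front' applies directly ahead of the NPC and
    'back' directly behind it, with a smooth blend in between.
    """
    blend = (math.cos(approach_angle) + 1.0) / 2.0  # 1 ahead, 0 behind
    return back + (front - back) * blend

def inside_boundary_2d(npc_pos, npc_facing, pc_pos):
    """True if the player character lies inside the non-circular boundary."""
    dx, dy = pc_pos[0] - npc_pos[0], pc_pos[1] - npc_pos[1]
    approach_angle = math.atan2(dy, dx) - npc_facing
    return math.hypot(dx, dy) < threshold_for_direction(approach_angle)

def inside_sphere(npc_pos, pc_pos, radius):
    """Three-dimensional case: a spherical boundary around the NPC."""
    return math.dist(npc_pos, pc_pos) < radius
```

With these assumed values, a player character five units in front of the NPC is inside the boundary, whereas one five units behind it is not.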

Whilst the boundary 2 is shown in FIGS. 5 and 6 (and some of the later figures) to help clarify the concept of the predetermined threshold distance, it will be appreciated that the boundary 2 need not necessarily be presented to the player. In other words, the boundary 2 need not necessarily be shown on the screen. However, if the boundary 2 is to be shown on the screen then the coupling indicator generating unit 114/214 is configured to generate a graphical indication of the predetermined threshold distance in the virtual game world, that is visible on the user's screen.

Although, in the figures, exclamation mark symbols are shown by the heads of certain characters to highlight that those characters are experiencing an emotion of some kind, it will be appreciated that such symbols need not be used in the game itself.

To recap, in the virtual game world 500 of FIGS. 5 and 6, the small child NPC 53 has broken a window of the house 50 with a ball, causing the nearby adult NPC 51 to become angry. Initially, as shown in FIG. 5, the player character 52 is outside of the boundary 2, and so the player character 52 is further than the predetermined threshold distance from the adult NPC 51. Therefore, in the situation illustrated in FIG. 5, the emotional state of the player character 52 is not coupled to the emotional state of the adult NPC 51.

FIG. 6 shows a development of the situation illustrated in FIG. 5 in which the player character 52 is now inside the boundary 2, and is closer than the predetermined threshold distance from the adult NPC 51. As a result, the emotional state of the player character 52 is now coupled to the emotional state of the adult NPC 51. Since the adult NPC 51 is angry, the player character 52 also becomes angry, mirroring the emotional state of the adult NPC 51.

In the embodiment illustrated in FIGS. 5 and 6, the emotional state of the adult NPC 51 is indicated by a facial expression of the adult NPC 51. Similarly, the emotional state of the player character 52 is indicated by the facial expression of the player character 52. Other methods of indicating the emotional state of a player character 52 or NPC 51 will be described in more detail below.

FIG. 7 is a procedural flow diagram of an emotional state coupling routine according to the first embodiment.

In step 701, the routine causes the state indicator generating unit 113/213 to generate an indication of an emotional state experienced by a character. For example, as shown in FIGS. 5 and 6, the indication of the emotional state may comprise, but is not limited to, a particular facial expression.

In step 702, the routine causes the coupling indicator generating unit 114/214 to evaluate the distance between the player character 52 and the character 51 experiencing the emotional state. In the example illustrated in FIGS. 5 and 6, the adult NPC 51 is the character experiencing the emotional state (the emotional state of anger), and so the distance between the player character 52 and the adult NPC 51 is evaluated. It will of course be appreciated that the evaluated distance corresponds to a distance in the virtual game world, and may be evaluated using any suitable method, such as by performing a calculation using a coordinate system within the virtual game world.

In step 703, the routine causes the coupling indicator generating unit 114/214 to determine whether the distance evaluated in step 702 is less than a threshold distance. In this embodiment, the threshold distance is a predetermined threshold distance stored in the data storage means 115/215. For example, the threshold distance may be determined in advance by the game designer.

If the result of the determination in step 703 is that the evaluated distance is greater than or equal to the threshold distance, then the routine proceeds along the path marked ‘No’, and the processing returns to step 702. It will be appreciated that, in this case, the routine may wait for a short period of time (e.g. half a second) before causing the coupling indicator generating unit 114/214 to re-evaluate the distance between the player character 52 and the character 51 experiencing the emotional state, in order to avoid continuously performing the evaluation of step 702, which may otherwise cause excessive processing load within the control unit 14 or server 22.

If the result of the determination in step 703 is that the evaluated distance is less than the threshold distance, then the routine proceeds along the path marked ‘Yes’, and the processing proceeds to step 704.

In step 704, the routine causes the processor 104/204 to couple the emotional state of the player character 52 to the indicated emotional state. In the example illustrated in FIG. 6, the emotional state of the player character 52 is coupled to the emotional state of the adult NPC 51, such that the player character 52 becomes angry, mirroring the emotional state of the adult NPC 51.
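
Steps 701 to 704 may be sketched, purely by way of example, as a polling loop. The `Character` class, the `wait` callback, the `max_polls` bound (added only so that the sketch terminates) and the string used as a state indication are assumptions of this sketch rather than features of the disclosed routine.

```python
import math
from dataclasses import dataclass

@dataclass
class Character:
    name: str
    position: tuple
    emotional_state: str = "neutral"

def coupling_routine(npc, pc, threshold, wait=lambda seconds: None,
                     poll_interval=0.5, max_polls=100):
    """Return (state_indication, coupled) after at most max_polls checks."""
    # Step 701: generate a state indication (a string stands in for a
    # facial expression here).
    indication = f"{npc.name} looks {npc.emotional_state}"
    for _ in range(max_polls):
        # Step 702: evaluate the distance in game-world coordinates.
        distance = math.dist(pc.position, npc.position)
        # Step 703: compare against the predetermined threshold.
        if distance < threshold:
            # Step 704: couple, so the player character mirrors the NPC.
            pc.emotional_state = npc.emotional_state
            return indication, True
        # 'No' branch: pause before re-evaluating to limit processing load.
        wait(poll_interval)
    return indication, False
```

In a real-time setting `time.sleep` could be passed as `wait`; a game engine would more plausibly schedule the re-evaluation on a timer rather than block.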

FIG. 8 shows a modification of FIG. 6 in which an indication of the coupling between the emotional state of the player character 52 and the emotional state of the NPC 51 is additionally generated. In this example, the indication comprises a glow effect 58 around the NPC 51, and a corresponding glow effect 59 around the player character 52. Various methods of providing an indication of a coupling between two characters in the virtual game world will be described in more detail below. Beneficially, in this example, the player is provided with an indication that the emotional state of the player character 52 is coupled to the emotional state of the NPC 51, and so the player is able to understand that the change in the emotional state of the player character 52 is due to this coupling. Whilst in the example of FIG. 8 the indication of the coupling is shown around both the NPC 51 and the player character 52, it will be appreciated that the coupling may instead be shown only around the player character 52, or only around the NPC 51. Moreover, the indication need not necessarily be a graphical indication. For example, the indication may comprise a sound.

Second Embodiment

FIGS. 9 and 10 show some further exemplary in-game content (as would be presented on-screen to a user), namely a virtual game world 800 comprising an NPC 81, a player character 82, and a tree 80. In this case, the NPC 81 has an anxious expression, as the NPC 81 is perceiving there to be a monster 83 in the tree 80 (see FIG. 10). Within the context of the game, the monster 83 is in the mind of the NPC 81 and, although perceived as ‘real’ from the point of view of the NPC 81 and thereby causing the NPC 81 to be anxious, is not real from the point of view of the player character 82 or any other character in the virtual game world.

As shown in FIG. 9, the monster 83 is not initially visible to the user, and only becomes visible to the user once the player character 82 has coupled with the NPC 81, as shown in FIG. 10, upon which the player character 82 then sees the game world (including the monster 83) as though through the eyes of the NPC 81. Throughout this process, what is presented to the user on-screen corresponds to what the player character 82 sees (including what the player character 82 sees as though through the eyes of the NPC 81).

Thus, in FIG. 9 the player character 82 is initially standing outside of a boundary 2 indicated by the dashed line. In FIG. 10, the player character 82 has moved inside of the boundary 2, thereby coupling with the NPC 81 and causing the imaginary monster 83 to be revealed. The boundary 2 represents a predetermined threshold distance around the NPC 81.

In accordance with the present disclosure, when the player character 82 crosses the boundary 2 to become closer than the predetermined distance from the NPC 81, the player character 82 becomes coupled to the NPC 81 and an indication of an entity (e.g. monster 83) perceived by the NPC 81 is generated by the perception indication unit 117/217. As in the illustrated example, the entity perceived by the NPC 81 may be an entity perceived visually by the NPC 81 that is not actually a ‘real’ entity in the virtual game world. For example, if the NPC 81 is hallucinating, the NPC 81 may perceive an entity that is not perceived by other characters in the virtual game world under normal conditions.

Further, FIG. 10 shows a development of the situation of FIG. 9 in which the player character 82 is now inside the boundary 2, and is closer than the predetermined threshold distance from the NPC 81. As a result, the player character 82 becomes coupled to the NPC 81, and an indication 83 of the entity (monster 83) perceived by the NPC 81 is generated (i.e. revealed) by the perception indication unit 117/217.

To recap, in this example, the monster 83 is not considered to be a ‘real’ entity in the game world, and is considered to exist only in the mind of the NPC 81. In other words, the monster 83 exists in the imagination of the NPC 81, and would not normally be perceived by the player character 82 or any other character in the virtual game world. However, when the player character 82 is coupled to the NPC 81, the indication of the entity 83 perceived by the NPC 81 is generated, and so the player is able to identify the entity that is causing the NPC 81 to become anxious. In other words, the virtual game world presented to the player is modified by the perception indication unit 117 to include the entity perceived by the NPC 81, once the player character 82 has coupled to the NPC 81.

FIG. 11 is a procedural flow diagram of a coupling routine for generating an indication of an entity perceived by a character, according to the second embodiment.

In step 1001, the routine causes the state indicator generating unit 113/213 to generate an indication of an emotional state experienced by the NPC 81. For example, as shown in FIGS. 9 and 10, the indication is the facial expression of the NPC 81, indicating that the NPC 81 is anxious.

In step 1002, the routine causes the coupling indicator generating unit 114/214 to evaluate the distance between the player character 82 and the character 81 experiencing the emotional state. In this example, the character experiencing the emotional state is the NPC 81, who is experiencing the emotional state of anxiety. It will of course be appreciated that the evaluated distance corresponds to a distance in the virtual game world, and may be evaluated using any suitable method, such as by performing a calculation using a coordinate system within the virtual game world.

In step 1003, the routine causes the coupling indicator generating unit 114/214 to determine whether the distance evaluated in step 1002 is less than a threshold distance. In this embodiment, the threshold distance is a predetermined threshold distance stored in the data storage means 115/215. For example, the threshold distance may be determined in advance by the game designer.

If the result of the determination in step 1003 is that the evaluated distance is greater than or equal to the threshold distance, then the routine proceeds along the path marked ‘No’, and the processing returns to step 1002. It will be appreciated that, in this case, the routine may wait for a short period of time (e.g. half a second) before causing the coupling indicator generating unit 114/214 to re-evaluate the distance between the player character 82 and the character 81 experiencing the emotional state, in order to avoid continuously performing the evaluation of step 1002, which may otherwise cause excessive processing load within the control unit 14 or server 22.

If the result of the determination in step 1003 is that the evaluated distance is less than the threshold distance, then the routine proceeds along the path marked ‘Yes’, and the processing proceeds to step 1004.

In step 1004, the routine causes the processor 104/204 to couple the player character 82 to the character 81 experiencing the emotional state, and the processing proceeds to step 1005.

In step 1005, an indication of an entity perceived by the character experiencing the emotional state is generated. In the example illustrated in FIG. 10, the indication is an indication of the monster 83 perceived by the NPC 81.
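
One possible way to model steps 1004 and 1005 is to keep entities that exist only in an NPC's mind separate from the 'real' game world, and to add them to what is presented only while the player character is coupled. The class and field names below (`Npc`, `perceived_only`, `coupled_to`) are hypothetical and serve only this sketch.

```python
from dataclasses import dataclass, field
from typing import Optional

@dataclass
class Npc:
    name: str
    # Entities that exist only in this NPC's mind (e.g. a hallucination).
    perceived_only: list = field(default_factory=list)

@dataclass
class PlayerCharacter:
    coupled_to: Optional[Npc] = None

def entities_to_present(world_entities, pc):
    """Entities shown to the player: the real world, plus whatever the
    coupled NPC perceives (step 1005)."""
    shown = list(world_entities)
    if pc.coupled_to is not None:
        shown.extend(pc.coupled_to.perceived_only)
    return shown

# Usage: before coupling only the tree is presented; after step 1004
# the monster perceived by the NPC is revealed as well.
npc = Npc("NPC", perceived_only=["monster"])
pc = PlayerCharacter()
before = entities_to_present(["tree"], pc)
pc.coupled_to = npc  # step 1004
after = entities_to_present(["tree"], pc)
```

Keeping the perceived entities on the NPC rather than in the world list means that other characters are unaffected, consistent with the monster not being 'real' for them.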

FIG. 12 shows a variant of FIG. 10 in which an indication of the coupling between the player character 82 and the NPC 81 is additionally generated. In this example, the indication comprises a glow effect 110 around the NPC 81 and a corresponding glow effect 111 around the player character 82. Various methods of providing an indication of a coupling between two characters in the virtual game world will be described in more detail below. Beneficially, in this example, the player is provided with an indication that the player character 82 is coupled to the NPC 81, and so the player is able to understand that the indication 83 of the monster has been generated due to this coupling.

FIG. 13 is a modified version of the procedural flow diagram of FIG. 11. Steps 1201 to 1204 correspond to steps 1001 to 1004 of FIG. 11, respectively, and will not be described again here. Similarly, step 1206 corresponds to step 1005 of FIG. 11.

In step 1205, an indication of the coupling between the player character 82 and the NPC 81 experiencing the emotional state is generated. In the example shown in FIG. 12, this indication comprises a graphical indication 110 around the NPC 81 and a corresponding graphical indication 111 around the player character 82. Whilst in the example of FIG. 12 the indication of the coupling is shown around both the NPC 81 and the player character 82, it will be appreciated that the coupling may instead be shown only around the player character 82, or only around the NPC 81. Moreover, the indication need not necessarily be a graphical indication. For example, the indication may comprise a sound.
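
The options described for step 1205 (both characters, player character only, NPC only, or a non-graphical indication such as a sound) could be captured in a small selection function. The enumeration, the `kind` strings and the tuple output format are assumptions of this sketch.

```python
from enum import Enum

class Target(Enum):
    BOTH = "both"
    PC_ONLY = "pc_only"
    NPC_ONLY = "npc_only"

def coupling_indications(pc_name, npc_name, target=Target.BOTH, kind="glow"):
    """Return a list of (kind, character) pairs describing what to emit."""
    if kind == "sound":
        # A sound is not attached to a particular character in this sketch.
        return [("sound", None)]
    characters = []
    if target in (Target.BOTH, Target.NPC_ONLY):
        characters.append(npc_name)
    if target in (Target.BOTH, Target.PC_ONLY):
        characters.append(pc_name)
    return [(kind, name) for name in characters]
```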

Third Embodiment

FIG. 14 shows two sequential scenes 130, 131 of a virtual game world comprising an NPC 134, a player character 135 and a briefcase 132. The NPC 134 has an anxious expression.

In the first scene 130, the player is able to determine that the NPC 134 is anxious, based on the expression on the face of the NPC 134. The briefcase 132 is a ‘real’ object in the game world that can be seen by both the NPC 134 and the player character 135.

In the second scene 131, the player character 135 has become coupled to the NPC 134, and as a result an indication 133 (e.g. a glow effect) has been generated around the briefcase 132 by the perception indication unit 117/217. The player character 135 may become coupled to the NPC 134 as a result of a scripted or random event, or in response to any other suitable trigger, such as crossing a boundary or threshold as described above. The indication 133 identifies to the player that the briefcase is causing the NPC 134 to be anxious. For example, the characters 134/135 may be in an airport and the briefcase 132 may be a suspicious, unattended item of luggage, causing the NPC 134 to become anxious.

An indication (e.g. 133) of an entity perceived by the NPC 134 may comprise more than a simple graphical indication around the entity. For example, the player may be provided with the thoughts of the NPC regarding the entity. In this example, the player may hear a line of dialogue such as “That bag looks very suspicious. Perhaps I should alert the authorities.” The line of dialogue may be provided in text and/or audible form. In one example, the dialogue may be presented to the player when the player directs the virtual camera of the video game towards the briefcase 132.
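
As a rough sketch of the camera-directed trigger, the dialogue might be presented when the angle between the camera's forward vector and the direction to the entity falls below some tolerance. The 15-degree tolerance, the function names and the vector representation are assumptions; the disclosure does not specify how "directed towards" is determined.

```python
import math

def camera_faces(camera_pos, camera_forward, target_pos, max_angle_deg=15.0):
    """True if the target lies within max_angle_deg of the camera's forward axis."""
    to_target = [t - c for t, c in zip(target_pos, camera_pos)]
    dist = math.sqrt(sum(v * v for v in to_target))
    fwd_len = math.sqrt(sum(v * v for v in camera_forward))
    if dist == 0.0 or fwd_len == 0.0:
        return False
    cos_angle = sum(a * b for a, b in zip(to_target, camera_forward)) / (dist * fwd_len)
    return cos_angle >= math.cos(math.radians(max_angle_deg))

def thought_to_present(coupled, camera_pos, camera_forward, entity_pos, line):
    """Return the NPC's line of dialogue only while coupled and looking at the entity."""
    if coupled and camera_faces(camera_pos, camera_forward, entity_pos):
        return line
    return None
```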

The indication 133 of the entity (e.g. briefcase 132) perceived by the NPC 134 may indicate that the player may interact with the entity (e.g. briefcase 132) in the virtual game world. For example, in scene 131 the indication may further or alternatively comprise a button prompt indicating that the user may open the briefcase 132 to inspect the contents of the briefcase.

In contrast to the example shown in FIG. 10, the briefcase 132 causing the NPC 134 to be anxious is a ‘real’ object in the game world, as opposed to the monster 83 of FIG. 10 which exists only in the virtual mind of the NPC 81. Therefore, the briefcase 132 can be perceived by both the player character 135 and the NPC 134, even before the player character 135 is coupled to the NPC 134.

In this example, when the player character 135 is coupled to the NPC 134, the emotional state of the player character 135 is coupled to the emotional state of the NPC 134, in addition to the generation of the indication 133 identifying the briefcase 132. However, it will be appreciated that this need not necessarily be the case, and that the indication 133 identifying the entity causing the NPC 134 to be anxious may be generated without the emotional or physical state of the player character 135 being coupled to that of the NPC 134.

In other variants, the entity that is causing the first character to be emotionally affected may be a ‘real’ object within the game world, but one that is initially not visible to the player, e.g. due to being hidden or obscured by another object. In such cases the perception indication unit 117/217 may be configured to reveal the entity to the player. This may include applying some kind of on-screen highlighting to the entity, or moving the entity into a visible position, or making the other object that is obscuring the entity become at least partially transparent, thereby enabling the player to see said entity on-screen.

Coupling or State Indications

FIGS.15ato15dshow exemplary graphical indications that may be used to indicate the emotional or physical state of a character (i.e. a state indication) or, in the case ofFIGS.15a,15band15d, a coupling indication, as may be employed in the above-described embodiments.

FIG.15ashows an example of a so-called “visible aura” around a character. In this example the visible aura is in the form of a glow effect140. The glow effect140may be coloured, or may simply be an increase in the brightness of an area around the character. The glow effect140may also be displayed as a distortion effect around the character, such as a heat haze effect. Whilst inFIG.15athe glow effect140is shown entirely surrounding the character, this need not necessarily be the case, and alternatively it may only partially surround the character.

For example, when the effect is used to indicate a coupling of the player character52to the adult NPC51shown inFIGS.5and6, the effect may only surround a part of the player character52that is closest to the NPC51. For instance, if the player character52is standing outside of the boundary2representing the predetermined threshold distance, but the player character has an outstretched arm that extends inside of the boundary2, then only the part of the player character that is inside the boundary may be surrounded by the glow effect140(i.e. the part of the outstretched arm that is less than the predetermined threshold distance from the adult NPC51).
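By way of illustration only, the partial glow described above could be sketched as follows. The sketch assumes that the player character is represented by a set of named body-part positions in a two-dimensional game world; this representation, the function name `parts_inside_boundary` and the numeric values are hypothetical and are not taken from the described embodiments.

```python
import math

def parts_inside_boundary(part_positions, npc_position, threshold):
    """Return the names of body parts closer than `threshold` to the NPC.

    `part_positions` maps a hypothetical body-part name to an (x, y) position.
    Only the returned parts would be surrounded by the glow effect.
    """
    inside = []
    for name, (x, y) in part_positions.items():
        distance = math.hypot(x - npc_position[0], y - npc_position[1])
        if distance < threshold:
            inside.append(name)
    return inside

# Hypothetical layout: the torso is outside the boundary, one arm reaches inside.
player_parts = {
    "torso": (6.0, 0.0),
    "left_hand": (6.5, 0.0),
    "right_hand": (4.5, 0.0),  # outstretched arm reaching toward the NPC
}
glowing = parts_inside_boundary(player_parts, npc_position=(0.0, 0.0), threshold=5.0)
```

In this hypothetical layout only the outstretched hand lies inside the boundary, so only that part would receive the glow effect.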

FIG.15billustrates a border141around the character. In one example, the border141may be a simple outline drawn around the character, and could be drawn using any suitable shape, such as a multi-pointed star, a circle, or such like.

FIG.15cshows a thought bubble142indicating that a character is sad. In this example, the thought bubble142comprises an image showing a sad expression on the face of a character. However, this need not necessarily be the case, and the thought bubble could instead, for example, comprise text indicating the emotional state of the character.

FIG.15dshows an indication143below the character. In this example, the indication is shown as a circle143below and surrounding the character (e.g. projected on the ground). The indication143may correspond to, for example, a change in brightness, a change in colour, or a distortion effect.

Graphical indications of the form shown inFIGS.15a,15band15dmay be used to indicate that a player-controlled character is coupled to an NPC (such as the coupling indicated by arrow A inFIG.6, or indicated by arrow B inFIGS.10and12), or to indicate an entity perceived by a character in the virtual game world (such as the monster83illustrated in the tree80ofFIG.12, or the briefcase132ofFIG.14). Such graphical indications may be applied to either the player character or the NPC, or both, to indicate that they are coupled.

FIGS.16aand16bshow an example in which a player character155is closer than a predetermined threshold distance (indicated by the boundary2) from an NPC154, and the player character155has therefore coupled to the NPC154. The coupling between the NPC154and the player character155is indicated by the graphical indication150around the NPC154and a corresponding graphical indication151around the player character155. In this example, as the player character155moves within the boundary, the graphical indications150,151are modified based on the distance between the NPC154and the player character155.

More particularly, as shown inFIG.16a, the NPC154and the player character155are initially separated by a distance D1in the virtual game world, indicated by the arrow. The NPC154and the player character155are surrounded by corresponding graphical indications150,151of a first size.

In the development illustrated in FIG. 16b, the NPC 154 and the player character 155 of FIG. 16a have moved closer together, and are now separated by a distance D2 in the virtual game world (smaller than distance D1), indicated by the arrow. As a result of the decrease in distance between the NPC 154 and the player character 155, the size of the graphical indications 152, 153 around the NPC 154 and the player character 155 has been increased.

The increased size of the graphical indications152,153inFIG.16bmay simply be a visual aid to reinforce the fact that the characters are coupled. Alternatively, in other variants, the increased size of the graphical indication may denote an increased strength of coupling, which for example enables the player character155to perceive greater insight into the emotions of the NPC154.

In variants in which the coupling indication or state indication comprises a sound, the sound may increase in volume as the distance between the player character155and the NPC154decreases. Similar to the above example, such an increase in volume may simply be to reinforce the fact that the characters are coupled, or may denote an increased strength of coupling, as outlined above.
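As a non-limiting sketch, the dependence of the indication on distance could be expressed as a simple mapping from distance to a scale factor. The linear interpolation, the function name `aura_scale` and the default scale limits are assumptions made for illustration; the embodiments only require that the size (or volume) increases as the distance decreases.

```python
def aura_scale(distance, threshold, min_scale=1.0, max_scale=2.0):
    """Return a scale factor for the indication, or None when not coupled.

    The characters are treated as coupled only when `distance` is smaller
    than `threshold`. The scale grows linearly from `min_scale` at the
    boundary to `max_scale` at zero distance (an assumed mapping).
    """
    if distance >= threshold:
        return None
    closeness = 1.0 - max(distance, 0.0) / threshold
    return min_scale + (max_scale - min_scale) * closeness
```

The same factor could equally be applied to the volume of an audible indication rather than to the size of a graphical one.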

FIG.17shows two sequential scenes160,161of a virtual game world comprising an NPC163and player character164. The NPC163has an expression indicating that the NPC is angry. In scene160the player character164is standing outside of a boundary2that indicates a predetermined threshold distance around the NPC163.

In scene161, the player character164is now closer than the predetermined threshold distance from the NPC163and so the emotional state of the player character164has become coupled to that of the NPC163, as indicated by arrow E. In this example, a sound162is generated to indicate the coupling between the emotional states.

It will be appreciated that the indication that the emotional state of the player character164is coupled to the emotional state of the NPC163need not necessarily be restricted to only a sound or only a graphical indication, but may instead comprise a combination of sound(s) and graphical indication(s). For example, as shown in scene161, the angry expression on the face of the player character164is a graphical indication of the coupling, and the sound162is an audible indication of the coupling.

FIG.18shows an illustrative example of an indication lookup table, to which the routines of certain embodiments may refer. The first column of the table indicates a threshold distance between a player character and a character experiencing an emotional state. For example, the distance may correspond to the distance between the NPC154and the player character155illustrated inFIGS.16aand16b. The second column of the table shows an indication type that is associated with the distance in the same row, and the third column indicates a corresponding intensity of the indication. The intensity of a graphical indication may correspond to the size, brightness or colour of the indication. The intensity of an audible indication may correspond to a volume of the indication.

In the example shown in FIG. 18, distances D1 to D5 are such that: D1 > D2 > D3 > D4 > D5

The table indicates that an indication of a first type, “1 (glow, no audio)”, having an intensity of 1, is associated with a threshold distance D1. Similarly, the table indicates that an indication of a first type “1 (glow, no audio)”, having an intensity of 2, is associated with a threshold distance D2. When the player character is at a distance that is less than or equal to distance D1, but greater than distance D2, a determination is made to generate an indication of the first type (glow, no audio) and having an intensity of 1. When the player character is at a distance that is less than or equal to distance D2and greater than distance D3, a determination is made to generate an indication of the first type (glow, no audio) and having an intensity of 2. Corresponding determinations may be made based on distances D3and D4. Since in this example D5is the shortest distance in the table, the corresponding indication (of the second type, having an intensity of 2) is generated simply when the distance between the player character and the NPC is smaller than distance D5.
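Purely as an illustrative sketch, a lookup of this kind could be implemented as below. The numeric distances, the rows for D3 and D4, and the label used for the second indication type are placeholders, since the description above only specifies the rows for D1, D2 and D5. For simplicity the sketch applies a "less than or equal to" comparison at every threshold, including D5.

```python
# Rows are (threshold_distance, indication_type, intensity), ordered from the
# largest threshold (D1) to the smallest (D5). All numeric distances are
# hypothetical, and the D3/D4 rows and the second type's label are assumed.
INDICATION_TABLE = [
    (10.0, "1 (glow, no audio)", 1),  # D1
    (8.0, "1 (glow, no audio)", 2),   # D2
    (6.0, "1 (glow, no audio)", 3),   # D3 (assumed row)
    (4.0, "2 (assumed type)", 1),     # D4 (assumed row)
    (2.0, "2 (assumed type)", 2),     # D5
]

def lookup_indication(distance):
    """Return (type, intensity) for the smallest threshold that is >= distance.

    Returns None when the distance exceeds the largest threshold (D1), in
    which case no indication is generated.
    """
    selected = None
    for threshold, indication_type, intensity in INDICATION_TABLE:
        if distance <= threshold:
            selected = (indication_type, intensity)
        else:
            break  # thresholds only get smaller from here on
    return selected
```

With these placeholder values, a distance between D2 and D1 selects the first row, and a distance exactly equal to D2 selects the second row, consistent with the determinations described above.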

FIG.19depicts a variant of the situation illustrated inFIGS.5and6, and shows two sequential scenes180,181of a virtual game world comprising an NPC183and player character184. The NPC183has an expression indicating that the NPC183is angry.

In scene180, an indication182that the player may provide user input to initiate a coupling between an emotional state of the player character184and an emotional state of the NPC183is also shown. In this example, the indication182is a graphical indication comprising a button prompt, generated by the user interaction indication generating unit105/205, indicating that the player may press a particular button or key on an input device (such as input device103illustrated inFIG.3) to initiate the coupling.

More generally, the user interaction indication generating unit105/205may be configured to generate an indication that the player may provide user input to initiate coupling of the emotional or physical state of the player character184to the emotional or physical state of another character (e.g. NPC183).

In scene181, the player has pressed the button indicated by the button prompt182, and as a result the emotional state of the player character184has become coupled to that of the NPC183, as indicated by arrow F. Therefore, the player character184has become angry, mirroring the emotional state of the NPC183.

FIG. 20 depicts a variant of the situation illustrated in FIGS. 9 and 10, and shows two sequential scenes 190, 191 of a virtual game world comprising an NPC 194 and player character 195. The NPC 194 has an expression indicating that the NPC 194 is scared.

In scene190, an indication196that the player may provide user input to couple the player character195to the NPC194in order to generate an indication of an entity193perceived by the NPC194is also shown. In this example, the indication196is a graphical indication comprising a button prompt, generated by the user interaction indication generating unit105/205, indicating that the player may press a particular button or key on an input device (such as input device103illustrated inFIG.3) to initiate the coupling.

In scene191, the player has pressed the button indicated by the button prompt196, and as a result the player character195has become coupled to the NPC194, as indicated by arrow F, and the indication of an entity193perceived by the NPC194has been generated by the perception indication unit117/217. In the example shown inFIG.20, the entity perceived by the NPC194is the monster193in the tree192.

In the cases ofFIGS.19and20, the indication that the player may provide user input to couple the player character to the NPC is by means of a visual (on-screen) button prompt (e.g.182and196). However, in alternative embodiments the indication may take the form of a sound.

Behavioural Coupling

FIG.21shows a further example of a coupling of an emotional state of a player character203to an emotional state of an NPC202. InFIG.21, two sequential scenes200,201of a virtual game world comprising an NPC202and a player character203are shown. In scene200, the NPC202is experiencing an emotional state of anger, and is speaking a line of dialogue204. The emotional state of the NPC202may be reflected in the voice acting provided for the line of dialogue204.

In scene 201, the emotional state of the player character 203 has become coupled to the emotional state of the NPC 202. As a result, the player character 203 has become angry, mirroring the emotional state of the NPC 202. Moreover, in this example, the coupling also causes the player character 203 to mirror the speech of the NPC 202. Therefore, the player character 203 speaks a line of dialogue 205 based on the line of dialogue 204 spoken by the NPC 202. For example, the player character 203 may speak exactly the same line of dialogue spoken by the NPC 202. The voice actor for the player character 203 may deliver the line of dialogue in substantially the same manner as the voice actor for the NPC 202.

FIG.22shows an example of a coupling between an action performed by an NPC212and an action performed by a player character213. InFIG.22, two sequential scenes210,211of a virtual game world comprising an NPC212and a player character213are shown. In scene210, the NPC212is experiencing an emotional state of anger, and is gesturing in an angry manner. For example, the NPC212may be angrily shaking his or her fist.

In scene211the emotional state of the player character213has become coupled to the emotional state of the NPC212. As a result, the player character213has become angry, mirroring the emotional state of the NPC212. Moreover, in this example, the coupling also causes the player character213to mirror an action performed by the NPC212. Therefore, the player character213performs the angry gesture made by the NPC212. For example, when the player character213is coupled to the NPC212, and the NPC212angrily waves a fist, the player character213also angrily waves a fist. The player may experience a total or partial loss of control over the player character213due to the coupling between the actions of the NPC212and the player character213.
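One possible way of mirroring an action on a coupled character is sketched below for illustration. The `Character` data structure, the `npc_performs` function and the action name are hypothetical; the embodiments do not prescribe any particular data model.

```python
from dataclasses import dataclass, field
from typing import List, Optional

@dataclass
class Character:
    name: str
    emotion: str = "neutral"
    performed: List[str] = field(default_factory=list)
    coupled_to: Optional["Character"] = None

def npc_performs(npc, action, others):
    """Record an NPC action and mirror it on every character coupled to that NPC."""
    npc.performed.append(action)
    for other in others:
        if other.coupled_to is npc:
            other.emotion = npc.emotion     # the emotional state is mirrored
            other.performed.append(action)  # the action is mirrored as well

npc = Character("NPC 212", emotion="angry")
player = Character("player character 213")
bystander = Character("bystander")  # hypothetical character that is not coupled
player.coupled_to = npc
npc_performs(npc, "shake_fist", [player, bystander])
```

A total or partial loss of player control could be modelled by giving such mirrored actions priority over the player's own inputs while the coupling persists.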

Interactive Coupling

FIG.23shows (by means of four consecutive panels, A to D) an example of an interactive minigame with which the player may interact to modify the emotional or physical state of an NPC.

In panel A, an NPC220and a player character221are shown. The NPC220is experiencing an emotional state of anger, and the emotional state of the NPC220is coupled to the emotional state of the player character221as indicated by the arrow K.

In panel B, an interactive minigame222is shown. In one example, the minigame may be a puzzle or skill-based game.

By interacting with the minigame, the player is able to influence the emotional state of either the player character221or the NPC220. In this example, the player is able to influence the emotional state of the player character221by interacting with the minigame. Since the emotional state of the player character221is coupled to that of the NPC220, the change in the emotional state of the player character221caused by the player will then be reflected in the emotional state of the NPC220.

In other variants, the player's interaction with the minigame may directly influence the emotional state of the NPC220, irrespective of any effect on the emotional state of the player character221.

The minigame may be played with the NPC220as a co-player or opponent (but not necessarily so). For instance, the minigame may be a game that the NPC220gains pleasure from playing, or which has nostalgic significance for the NPC220, and thus playing the minigame has the effect of altering the emotional state of the NPC220.

In panel C, the player has successfully completed the minigame 222 and so the player character 221 has become less angry. As a result, as shown in panel D, the NPC 220 becomes less angry due to the coupling of the emotional state of the player character 221 to the emotional state of the NPC 220.
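The propagation shown in panels C and D could, as one minimal sketch, be modelled as follows. Representing anger as an integer level and the amount of relief granted by the minigame are assumptions made only for this illustration.

```python
def complete_minigame(player_anger, relief):
    """Return (player_anger, npc_anger) after a successfully completed minigame.

    Anger is modelled as a hypothetical integer level, where 0 means calm.
    """
    new_player_anger = max(0, player_anger - relief)
    # Because the emotional states are coupled, the NPC's anger follows
    # the player character's anger.
    new_npc_anger = new_player_anger
    return new_player_anger, new_npc_anger

player_anger, npc_anger = complete_minigame(player_anger=3, relief=2)
```

In the variant where the minigame influences the NPC directly, the reduction would instead be applied to the NPC's level without passing through the player character.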

In another example, the minigame may simply comprise a selection of one of a number of dialogue options. For example, the NPC220may speak a line of dialogue, and the player may select a dialogue option for the player character221to speak in reply. Each of the dialogue options may result in a particularly positive or negative effect on the emotional state of the player character221or the NPC220, and in one example the player may be required to deduce which of the dialogue options will have the most positive effect based on the story of the video game.

Coupling of Physical States

The above described embodiments and examples have been described mainly by reference to an emotional state of the player character or the NPC. However, it should be appreciated that instead of coupling or indicating the emotional states of the player character and an NPC, the physical state of the characters may instead be indicated or coupled. The user interaction indication generating unit105/205may therefore be configured to generate an indication that the player may provide user input to initiate coupling of the physical state of the player character to the physical state of the NPC.

For example, with reference toFIG.5, adult NPC51may be experiencing a physical state such as pain, or may be experiencing a visual or cognitive impairment. When the player character52is inside the boundary2indicating the predetermined threshold distance, as shown inFIG.6, the physical state of the adult NPC51may couple to the physical state of the player character52. For example, when the NPC51is experiencing pain, the player character52may also experience that pain.

In other examples, when the physical state of the NPC51is coupled to the physical state of the player character52, the control of the player character by the player is affected. In one example the NPC51is experiencing a state of drunkenness, the player character52also experiences a state of drunkenness, and the player character becomes difficult to control. For example, the video game may simulate random inputs by the player, such that it is difficult for the player to control the player character to move in a straight line.
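A simulation of random inputs of this kind might, for example, add bounded noise to the movement input supplied by the player. The following sketch assumes a two-axis movement input in the range -1 to 1; the function name `perturb_input` and the `intensity` parameter are hypothetical.

```python
import random

def perturb_input(move_x, move_y, intensity, rng):
    """Add random noise to a movement input; an intensity of 0 leaves it unchanged.

    `rng` is a random.Random instance, passed in so that results can be
    reproduced. The outputs are clamped to the assumed input range [-1, 1].
    """
    def clamp(value):
        return max(-1.0, min(1.0, value))

    noisy_x = move_x + rng.uniform(-intensity, intensity)
    noisy_y = move_y + rng.uniform(-intensity, intensity)
    return clamp(noisy_x), clamp(noisy_y)

# The player pushes straight ahead, but the coupled character drifts sideways.
drunk_x, drunk_y = perturb_input(0.0, 1.0, intensity=0.5, rng=random.Random(0))
```

The `intensity` value could be tied to the strength of the coupling, so that control degrades gradually rather than abruptly.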

In another example, the coupling of the physical state of the player character52to the physical state of the NPC51affects the presentation of the virtual game world to the player. For instance, if the NPC51is experiencing visual impairment such as short-sightedness or blindness, the player character52may also experience the visual impairment due to the coupling of the physical state of the player character52to the physical state of the NPC51. As a result, the game world presented to the player may become blurred or darkened. Alternatively, if the NPC51is experiencing an auditory impairment such as deafness, the player character52may also experience the auditory impairment due to the coupling of the physical state of the player character52to the physical state of the NPC51. As a result, the audio (e.g. sounds and music from the virtual game world) played to the player may become distorted or may decrease in volume.

Summary

To summarise some of the main concepts from the present disclosure,FIG.24shows a procedural flow diagram of a coupling routine for generating an indication of a coupling between a player-controlled character and a character experiencing an emotional or physical state.

In step 2301, an indication of an emotional or physical state experienced by a character is generated.

In step 2302, an indication of a coupling occurring between a player-controlled character and the character experiencing the indicated emotional or physical state is generated.

Modifications and Alternatives

Detailed embodiments and some possible alternatives have been described above. As those skilled in the art will appreciate, a number of modifications and further alternatives can be made to the above embodiments whilst still benefiting from the inventions embodied therein. It will therefore be understood that the invention is not limited to the described embodiments and encompasses modifications apparent to those skilled in the art lying within the scope of the claims appended hereto.

For example, in the above described embodiments and examples a coupling between a player character and another character has been described—e.g. the coupling of the emotional state of the player character to the emotional state of another character, or the coupling of the player character to another character to generate an indication of an entity perceived by the other character. It will be appreciated that these couplings may simply represent the natural empathy of the player character. Alternatively, the coupling could represent a supernatural ability of the player character to perceive or experience the emotions, thoughts and perceptions of other characters.

From the above, it will be appreciated that an emotional or physical state of a character, such as the emotional state of anger experienced by the non-player character51inFIGS.5and6, may be indicated (by a state indication generated by the state indicator generating unit113/213) in the virtual game world simply by the facial expression of the character. Alternatively, the emotional state of a character may be indicated by another type of state indication, such as (but not limited to) one of the graphical indications illustrated inFIGS.15ato15d. In one example, the state indication may be generated when the player character is closer than a predetermined threshold distance in the virtual game world to the other character. This predetermined threshold distance may be the same as, or different from, the predetermined threshold distance illustrated inFIGS.5and6, and may be used to determine whether the emotional or physical state of the player character is to be coupled to the emotional or physical state of the other character.
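For illustration, the use of two thresholds (one for generating the state indication and one for the coupling) could be sketched as below. The function name `active_indications` and the numeric values in the usage note are hypothetical, and the two thresholds may equally be set to the same value.

```python
def active_indications(distance, state_threshold, coupling_threshold):
    """Return the list of indications to generate at the given distance.

    Each indication is generated only when the player character is closer
    than the corresponding predetermined threshold distance.
    """
    active = []
    if distance < state_threshold:
        active.append("state")
    if distance < coupling_threshold:
        active.append("coupling")
    return active
```

With a state threshold of 10 and a coupling threshold of 5, a player character at a distance of 7 would see the state indication only, and at a distance of 3 would see both indications.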

In the above described embodiments and examples the player character has been described as interacting with an NPC. However, the player character may alternatively interact in a similar manner with a character controlled by another human player. For example, the player may be provided with an indication of an emotional or physical state of the character controlled by the other player. In one example, each player may select one of a number of emotional or physical states to be assigned to their corresponding player character, and such emotional or physical states may then be shared with the player character of the other player by means of a coupling process as described herein.

Claims

- A non-transitory computer-readable recording medium storing instructions thereon, wherein the instructions when executed by one or more processors cause the one or more processors to: provide a video game comprising a virtual game world presented to a player of the video game, and a plurality of characters in the virtual game world including a first character that is not controlled by the player, and a second character controlled by the player;generate, for display, a state indication indicating an emotional or physical state of the first character;and generate, for display, a coupling indication indicating a coupling occurring between the emotional or physical state of the first character and an emotional or physical state of the second character when the second character is closer than a first predetermined threshold distance in the virtual game world from the first character;wherein the instructions cause the one or more processors to generate the state indication when the second character is closer than a second predetermined threshold distance in the virtual game world from the first character;and wherein the instructions cause the one or more processors to generate a first graphical indication of the first predetermined threshold distance in the virtual game world, a second graphical indication of the second predetermined threshold distance in the virtual game world, or both the first graphical indication and the second graphical indication.

- A non-transitory computer-readable recording medium storing instructions thereon, wherein the instructions when executed by one or more processors cause the one or more processors to: provide a video game comprising a virtual game world presented to a player of the video game, and a plurality of characters in the virtual game world including a first character that is not controlled by the player, and a second character controlled by the player;generate, for display, a state indication indicating an emotional or physical state of the first character;and generate, for display, a coupling indication indicating a coupling occurring between the emotional or physical state of the first character and an emotional or physical state of the second character;wherein the state indication comprises a graphical indication in the form of a visible aura that at least partially surrounds the first character;and wherein the visible aura increases in size or intensity as a distance between the first character and the second character decreases.

- A non-transitory computer-readable recording medium storing instructions thereon, wherein the instructions when executed by one or more processors cause the one or more processors to: provide a video game comprising a virtual game world presented to a player of the video game, and a plurality of characters in the virtual game world including a first character that is not controlled by the player, and a second character controlled by the player;generate, for display, a state indication indicating an emotional or physical state of the first character;and generate, for display, a coupling indication indicating a coupling occurring between the emotional or physical state of the first character and an emotional or physical state of the second character;wherein the state indication comprises a graphical indication in the form of a visible aura that at least partially surrounds the first character, and a corresponding graphical indication in the form of a visible aura that at least partially surrounds the second character;and wherein one or both of the visible auras increases in size or intensity as a distance between the first character and the second character decreases.

- A non-transitory computer-readable recording medium storing instructions thereon, wherein the instructions when executed by one or more processors cause the one or more processors to: provide a video game comprising a virtual game world presented to a player of the video game, and a plurality of characters in the virtual game world including a first character, and a second character controlled by the player;generate, for display, a state indication indicating an emotional or physical state of the first character;couple the emotional or physical state of the second character to the emotional or physical state of the first character when the second character is closer than a first predetermined threshold distance in the virtual game world from the first character;generate, for display, a coupling indication indicating the coupling between the emotional or physical state of the first character and the emotional or physical state of the second character when the second character is closer than a second predetermined threshold distance in the virtual game world from the first character;and generate a graphical indication of the first predetermined threshold distance or the second predetermined threshold distance in the virtual game world.

- A non-transitory computer-readable recording medium storing instructions thereon, wherein the instructions when executed by one or more processors cause the one or more processors to: provide a video game comprising a virtual game world presented to a player of the video game, and a plurality of characters in the virtual game world including a first character, and a second character controlled by the player;generate, for display, a state indication indicating an emotional or physical state of the first character, the state indication comprising a first visible aura that at least partially surrounds the first character and increases in size or intensity as a distance between the first character and the second character decreases;couple the emotional or physical state of the second character to the emotional or physical state of the first character when the second character is closer than a first predetermined threshold distance in the virtual game world from the first character;and generate, for display, a coupling indication indicating the coupling between the emotional or physical state of the first character and the emotional or physical state of the second character.

- The non-transitory computer-readable recording medium according to claim 5, wherein the coupling indication comprises a second visible aura that at least partially surrounds the second character and increases in size or intensity as the distance between the first character and the second character decreases.
