U.S. Pat. No. 11,986,728

GAME PROCESSING METHOD AND RECORD MEDIUM

Assignee: Koei Tecmo Games Co Ltd

Issue Date: March 29, 2022

Abstract

A game processing method executed by an information processing device configured to perform transmission/reception of signals to/from a display part configured to be attachable to a head of a player to display a virtual image superimposed on an image in real space, the method includes causing the display part to display an image of a virtual object, adjusting at least one of a position or an orientation of the virtual object relative to the image in real space, based on an input of the player, causing the display part to display an image of a virtual game character so as to be arranged coinciding with the adjusted at least one of the position or the orientation of the virtual object, and hiding the image of the virtual object when the image of the game character is displayed.

Description

DETAILED DESCRIPTION OF THE EMBODIMENTS

First to fourth embodiments of the present invention will now be described with reference to the drawings.

Referring first to FIG. 1, an example of the overall configuration of a game system 1 common to the embodiments will be described. As shown in FIG. 1, the game system 1 includes an information processing device 3, a game controller 5, and a head mounted display 7. The game controller 5 and the head mounted display 7 are each connected to the information processing device 3 so as to be capable of communicating (sending/receiving signals) by wire or wirelessly.

The information processing device 3 is e.g. a non-portable game console. However, the information processing device 3 is not limited thereto and may be e.g. a portable game console integrally having an input part, a display part, etc. Besides game consoles, the information processing device 3 may be a device manufactured and sold as a computer, such as a server computer, a desktop computer, a laptop computer, or a tablet computer, or a device manufactured and sold as a telephone, such as a smartphone, a cellular phone, or a phablet.

By mounting the information processing device 3 onto the head mounted display 7, the information processing device 3 and the head mounted display 7 may be integrally configured. In the case that the head mounted display 7 has the same functions as the information processing device 3, the information processing device 3 may be omitted.

The player performs various operation inputs using the game controller 5. In the example shown in FIG. 1, the game controller 5 includes e.g. a cross key 9, a plurality of buttons 10, a joystick 11, a touch pad 12, etc.

The head mounted display 7 is a display device wearable on the user's head or face to implement so-called mixed reality (MR). The head mounted display 7 includes a transmissive display part 13 and displays thereon a virtual image in relation to a game generated by the information processing device 3, superimposed on an image in real space.

Referring next to FIGS. 2 and 3, an example of the schematic configuration of the head mounted display 7 will be described. As shown in FIG. 2, the head mounted display 7 includes the display part 13, a line-of-sight direction detection part 15, a position detection part 17, an audio input part 19, an audio output part 21, a manual operation input part 23, an information acquisition part 25, and a control part 27.

The display part 13 is configured from e.g. a transmissive (see-through) liquid crystal display or organic EL display. The display part 13 displays thereon, as e.g. a holographic video, a virtual image in relation to a game generated by the information processing device 3, superimposed on an image in real space that can be seen through. The virtual image may be either a 2D image or a 3D image, and either a still image or a moving image. The display part 13 may instead be of a non-transmissive type, in which case a virtual image generated by the information processing device 3 is superimposed and displayed on an image in real space captured by e.g. a camera.

The line-of-sight direction detection part 15 detects the line-of-sight direction of the player. As used herein, the “line-of-sight direction of the player” refers to the direction obtained by combining the eye orientation and the head orientation of the player. For example, in the case that the player turns his head to the right from a reference direction and then turns his eyes further to the right, the line-of-sight direction is the sum of the head angle to the right and the eye angle to the right with respect to the reference direction. The line-of-sight direction detection part 15 includes an eye direction detection part 29 and a head direction detection part 31.
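As a minimal sketch of the angle addition described above, assuming that both orientations are expressed as horizontal (yaw) angles in degrees with rightward turns positive, the combination could be written as follows. The function name and the wrap-around to the range from -180 to 180 degrees are illustrative assumptions, not part of the embodiment.

```python
def line_of_sight_yaw(head_yaw_deg: float, eye_yaw_deg: float) -> float:
    """Combine head and eye yaw (degrees, rightward positive) into one angle.

    head_yaw_deg is measured from the reference direction; eye_yaw_deg is
    measured from the frontal direction of the head. The result is wrapped
    to [-180, 180) so that it stays a valid direction (assumed convention).
    """
    total = head_yaw_deg + eye_yaw_deg
    return (total + 180.0) % 360.0 - 180.0


# Head turned 30 degrees right, eyes a further 15 degrees right.
combined = line_of_sight_yaw(30.0, 15.0)
```

A full implementation would combine three-dimensional rotations rather than a single angle; the one-axis form is used here only because it mirrors the example in the text.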

The eye direction detection part 29 detects an eye orientation of the player. The eye orientation is detected as e.g. a relative direction (angle) with respect to the frontal direction of the head. The eye orientation detection technique is not particularly limited, and various detection techniques can be employed. For example, the eye direction detection part 29 may be composed of an infrared LED, an infrared camera, etc. In this case, the infrared camera captures an image of the eyes irradiated with light from the infrared LED. From the captured image, the control part 27 may determine the player's eye orientation based on the position of the pupil relative to the position of the corneal reflex, where the reference point is the position on the cornea of the reflected infrared LED light (corneal reflex) and the moving point is the pupil. Alternatively, the eye direction detection part 29 may be composed of a visible light camera, etc. From an image of the player's eye captured by the visible light camera, the control part 27 may calculate the eye orientation based on the position of the iris relative to the eye's inner corner, where the reference point is the eye's inner corner and the moving point is the iris (the so-called black of the eye).

The head direction detection part 31 detects a head orientation (face orientation) of the player. The head orientation is detected as e.g. a direction (vector) in a static coordinate system in real space, recognized by a space recognition process described later. The head direction detection technique is not particularly limited, and various detection techniques can be adopted. For example, the head mounted display 7 may include an accelerometer or a gyro sensor so that the control part 27 calculates the head direction of the player based on the detection result of the sensor.

The position detection part 17 detects a head position of the player. The head position detection technique is not particularly limited, and various detection techniques can be employed. For example, a plurality of cameras and a plurality of depth sensors may be disposed around the head mounted display 7 so that the depth sensors recognize the player's surrounding space (real space) and the control part 27 recognizes the player's head position in the surrounding space based on the detection results of the cameras. Alternatively, a camera may be disposed outside the head mounted display 7, with marks such as light emitting parts placed on the head mounted display 7, so that the external camera detects the player's head position.

The audio input part 19 is configured from e.g. a microphone to input the player's voice or other external sounds. The input player's voice is recognized as words through e.g. an audio recognition process effected by the control part 27, which in turn executes a process based on the recognized voice. The type of an input external sound is recognized through e.g. the audio recognition process effected by the control part 27, which in turn executes a process based on the recognized sound type.

The audio output part 21 is configured from e.g. a speaker to output audio to the player's ears. For example, characters' voices, sound effects, background music (BGM), etc. are output.

The manual operation input part 23 is configured from e.g. a camera to detect a movement of the player's hand and input it as a manual operation. The control part 27 executes a process based on the input manual operation. This enables the player to perform various operations by hand movements. Specifically, as shown in FIG. 3, possible operations include e.g.: a “tap” operation of touching the tips of the thumb and the index finger of the right hand together once; a “double-tap” operation of touching them together twice; and a “rotate” operation of extending the index finger of the right hand upward and rotating it. In addition to these, a wide variety of manual operations may be feasible.

The information acquisition part 25 connects to the Internet, etc. by e.g. wireless communication, to acquire various types of information from an information distribution site that distributes information, a database, etc. The information acquisition part 25 can acquire e.g. information related to date and time, weather, news, entertainment (concert and movie schedules, etc.), shopping (bargain sales, etc.), or the like. The information acquisition part 25 may acquire e.g. current position information of the player based on signals from GPS satellites.

The control part 27 executes various processes based on detection signals of various types of sensors. The various processes include e.g. an image display process, a line-of-sight direction detection process, a line-of-sight adjustment process, a position detection process, the space recognition process, the audio recognition process, an audio output process, a manual operation input process, and an information acquisition process. In addition to these, a wide variety of processes may be executable.

The information processing device 3 generates or changes a virtual image to be displayed on the display part 13, to represent mixed reality (MR), based on the results of processes effected by the line-of-sight direction detection part 15, the position detection part 17, the audio input part 19, the manual operation input part 23, the information acquisition part 25, etc. of the head mounted display 7.

Referring next to FIGS. 4 to 11, an exemplary outline will be described of a game according to this embodiment, i.e. a game that is provided by the information processing device 3 executing a game program and a game processing method of the present invention.

The game according to this embodiment enables the player to communicate with a virtual game character who seems to the player to exist in real space, by superimposing an image of the game character on an image in real space. The arrangement, posture, movement, behavior, etc. of the game character vary in accordance with various operation inputs made by the player (e.g. movements of line of sight and head, manual operation, voice, operation input with the game controller 5). The game character may be a virtual character or object having eyes and its type is not particularly limited. The game character may be e.g. a human male character, a human female character, a non-human animal character, a virtual creature character other than human beings and animals, or a robot other than creatures.

FIG. 4 shows an exemplary flow of a game that is executed by the control part 27 of the head mounted display 7 and the information processing device 3.

At step S5, the control part 27 determines whether the player wears the head mounted display 7 for the first time. The control part 27 may cause the display part 13 to display a message asking whether this is the first wear and may make the determination based on the player's input (YES or NO). If it is the first wear (step S5: YES), the procedure goes to next step S10. If it is not the first wear (step S5: NO), the procedure goes to step S15 described later.

At step S10, the control part 27 executes the line-of-sight adjustment process. The line-of-sight adjustment technique is not particularly limited. For example, the control part 27 may display markers on the display part 13 at a total of 5 locations, i.e. at a center location of the player's field of view and at 4 locations in the vicinity of the four corners, so that line-of-sight information is detected while the player is gazing at the markers.
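For illustration only, one simple way to use the five-marker data is to estimate a constant offset between the measured gaze point and the marker being gazed at. The embodiment does not specify the adjustment technique, so the normalized marker coordinates, the margin value, the function names, and the constant-offset model below are all assumptions.

```python
def calibration_markers(margin=0.1):
    """Return five marker positions in normalized view coordinates (0..1):
    the center plus one near each corner. The margin is an assumed value."""
    lo, hi = margin, 1.0 - margin
    return [(0.5, 0.5), (lo, lo), (hi, lo), (lo, hi), (hi, hi)]


def estimate_gaze_offset(markers, measured):
    """Average (marker - measured) over all samples.

    Assumes the detection error is a constant shift, which is a
    simplification chosen for this sketch only.
    """
    if not markers or len(markers) != len(measured):
        raise ValueError("need one measured gaze point per marker")
    n = len(markers)
    dx = sum(m[0] - g[0] for m, g in zip(markers, measured)) / n
    dy = sum(m[1] - g[1] for m, g in zip(markers, measured)) / n
    return dx, dy


markers = calibration_markers()
# Hypothetical raw gaze samples, each shifted by the same detection error.
measured = [(x + 0.02, y - 0.01) for x, y in markers]
offset = estimate_gaze_offset(markers, measured)
```

Adding the returned offset to later raw gaze samples would correct them under this assumed model.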

At step S15, the control part 27 displays a home menu screen on the display part 13.

At step S20, the control part 27 determines whether to start a game. For example, the control part 27 may make the determination based on whether the game is activated on the home menu screen by the player. The procedure waits at this step S20 until the game starts (step S20: NO). If the game is started (step S20: YES), the procedure goes to next step S25.

At step S25, the control part 27 executes the space recognition process of recognizing real space around the player using e.g. the depth sensors or cameras. This allows generation of a static coordinate system (a coordinate system corresponding to real space) that does not change even when the position of the head mounted display 7 changes as the player moves around.

At step S30, the information processing device 3 displays a virtual object on the display part 13. The virtual object is displayed e.g. at the center of the field of view of the display part 13. In the depth direction, the virtual object is displayed at a position near a collision point between the direction that the display part 13 faces (the direction of the player's head) and real space recognized at step S25. In the case that the player changes the direction of his head, the display position of the virtual object in the field of view remains at the center, whereas only the position of the virtual object in the depth direction changes in accordance with real space. Alternatively, the position in the depth direction may be fixed at a preset position. The player may be able to adjust the position in the vertical and horizontal directions in the field of view or the position in the depth direction. The type of the virtual object is not particularly limited. For example, it can be a simple shaped object such as a cube, a rectangular parallelepiped, or a sphere, or a virtual object imitating an object that actually exists, such as a chair or a table. A game character itself may be displayed.
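The collision point mentioned above can be sketched as a ray cast from the head position along the head direction. The sketch below assumes, purely for illustration, that the recognized real space is reduced to a single flat surface (e.g. a wall or a floor); an actual space recognition result would typically be a mesh, and the function name is hypothetical.

```python
def ray_plane_hit(origin, direction, plane_point, plane_normal):
    """Return the point where a ray hits a plane, or None if it misses.

    origin, direction: head position and head direction (3-tuples).
    plane_point, plane_normal: any point on the surface and its normal.
    """
    dot = lambda a, b: sum(x * y for x, y in zip(a, b))
    denom = dot(direction, plane_normal)
    if abs(denom) < 1e-9:
        return None  # ray runs parallel to the surface
    to_plane = tuple(p - o for p, o in zip(plane_point, origin))
    t = dot(to_plane, plane_normal) / denom
    if t < 0:
        return None  # surface lies behind the player
    return tuple(o + t * d for o, d in zip(origin, direction))


# Head at 1.6 m height looking straight ahead at a wall 3 m away.
hit = ray_plane_hit((0.0, 1.6, 0.0), (0.0, 0.0, 1.0),
                    (0.0, 0.0, 3.0), (0.0, 0.0, -1.0))
```

The virtual object would then be drawn at or slightly in front of the returned point while staying at the center of the field of view.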

At step S35, the information processing device 3 determines whether a game character is selected by the player. The procedure waits at this step S35 until the game character is selected (step S35: NO). If the game character is selected (step S35: YES), the procedure goes to next step S40.

FIG. 5 shows an example of a character selection screen. In the example shown in FIG. 5, rectangular parallelepiped virtual objects 33A to 33C are displayed superimposed on an image of the player's room that is real space. In this example, the virtual objects 33A to 33C are displayed e.g. in the vicinity of a sofa. The virtual objects 33A, 33B, and 33C correspond to game characters A, B, and C, respectively, and have respectively different colors. The player can switch among the virtual objects 33A, 33B, and 33C in order, as shown in FIG. 5, e.g. by the tap operation described above or by a switching operation through the game controller 5. The virtual objects may also be switched by calling a character name aloud. With the virtual object corresponding to a desired game character being displayed, the player can select the game character e.g. by the double-tap operation or by a decision operation through the game controller 5.
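The switching and selection behavior described for FIG. 5 could be sketched as a small state holder, under the assumption that recognized hand operations arrive as the strings "tap" and "double_tap". The class name, method names, and string values are hypothetical and chosen only for this example.

```python
class CharacterSelector:
    """Cycle through candidate characters on tap; decide on double-tap."""

    def __init__(self, characters):
        if not characters:
            raise ValueError("at least one character is required")
        self.characters = list(characters)
        self.index = 0        # which virtual object is currently displayed
        self.selected = None  # set once the player decides

    @property
    def displayed(self):
        return self.characters[self.index]

    def on_operation(self, operation):
        """Handle one recognized operation and return the selection, if any."""
        if self.selected is not None:
            return self.selected  # selection already decided (step S35: YES)
        if operation == "tap":
            self.index = (self.index + 1) % len(self.characters)
        elif operation == "double_tap":
            self.selected = self.displayed
        return self.selected


selector = CharacterSelector(["A", "B", "C"])
selector.on_operation("tap")                  # display switches from A to B
chosen = selector.on_operation("double_tap")  # B is selected
```

Switching by voice or through the game controller 5 would map onto the same two transitions.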

Referring back to FIG. 4, at step S40, the information processing device 3 determines whether the position and the orientation of the virtual object are decided by the player. The procedure waits at this step S40 until the position and the orientation of the virtual object are decided (step S40: NO). If the position and the orientation of the virtual object are decided (step S40: YES), the procedure goes to next step S45.

FIG. 6 shows an example of a position adjustment screen of a virtual object. As shown in FIG. 6, e.g. by changing the orientation of his head, the player can vertically and horizontally adjust the position, relative to real space, of the virtual object 33A that is displayed at the center of the field of view of the display part 13. Since the display position of the virtual object 33A corresponds to the display position of the game character A, the player adjusts the position of the virtual object 33A so that the game character A is arranged at a desired position.

FIG. 7 shows an example of an orientation adjustment screen of the virtual object. As shown in FIG. 7, the player can adjust the orientation of the virtual object 33A by rotating it around a vertical axis e.g. by the rotate operation made by the hand movement described above or a rotational operation made through the game controller 5. Since the orientation of the virtual object 33A corresponds to the orientation of the game character A, the player adjusts the orientation of the virtual object 33A so that the game character A faces a desired direction.
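As a hedged sketch of the rotation around a vertical axis, assume a coordinate system in which the y axis points up and the virtual object's facing direction is a 3-component vector. The axis convention, the sign of the angle, and the function name are assumptions made for this example.

```python
import math


def rotate_about_vertical(forward, angle_deg):
    """Rotate a facing vector around the vertical (y) axis.

    With the assumed convention, a positive angle turns a vector that
    points along +z toward +x. The vertical component is unchanged.
    """
    x, y, z = forward
    a = math.radians(angle_deg)
    cos_a, sin_a = math.cos(a), math.sin(a)
    return (x * cos_a + z * sin_a, y, -x * sin_a + z * cos_a)


# Turn an object that faces +z by a quarter turn.
new_forward = rotate_about_vertical((0.0, 0.0, 1.0), 90.0)
```

Because only the yaw changes, the object stays upright, which matches the requirement that the game character later stands or sits upright at the adjusted pose.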

After completing adjustment of the position and orientation of the virtual object relative to real space, the player can decide the position and orientation of the virtual object by performing the double-tap operation or the decision operation through the game controller 5.

Referring back to FIG. 4, at step S45, the information processing device 3 displays the game character such that the game character is arranged coinciding with the position and orientation of the virtual object. FIG. 8 shows an example of a display screen of a game character. In this example, a female game character 37 as the game character A is displayed e.g. in a standing posture in front of a sofa.

At step S50, the information processing device 3 executes a communication process enabling communication between the player and the game character. The player can communicate with the game character in various modes. For example, by moving around in real space, the player can move around the displayed game character and view or talk to the game character from different directions.

The player can e.g. touch a game character. FIG. 9 shows an example of a game screen in the case of touching a game character. As shown in FIG. 9, an aiming line 41 is emitted from a left hand 39 of the player. When the aiming line 41 falls on a touchable area of the game character 37, it changes color so that the player can identify the touchable area. By e.g. closing the left hand while the color is changed, the player can touch the game character 37. At this time, the game character 37 may take an action in response to the touch, such as e.g. being pleased, blushing with embarrassment, being displeased, or speaking given lines. In FIG. 9, the aiming line 41 falls on the right hand of the game character 37. By closing the left hand in this state, the player can touch (hold) the right hand of the game character 37. In FIG. 9, the image of real space is not shown (the same applies to FIGS. 10 and 11 described later).

Although not shown, with the aiming line 41 falling on the head of the game character 37, the player can stroke the head of the game character 37 e.g. by holding the left hand 39 substantially horizontal to the floor and then moving it in the left-right direction. At this time, the game character 37 may take an action in response to the stroke on the head, such as e.g. rejoicing, being spoiled, or uttering a sound such as “Ehehe”.

In the case that the player e.g. waves his right hand toward the game character 37, the game character 37 may take an action of waving back while saying a line such as “Bye bye”. In the case that the player e.g. gives the game character 37 a peace sign, the game character 37 may take an action of e.g. returning a peace sign to the player while speaking a predetermined line such as “Peace!”.

When the player e.g. utters “hand fan” with his right hand moved forward, the right hand switches to a hand fan mode. FIG. 10 shows an example of a game screen in the hand fan mode. As shown in FIG. 10, when the player waves (fans with) a right hand 43 toward the game character 37 in the hand fan mode, a virtual wind occurs in a direction corresponding to the direction of fanning with the right hand 43, with the result that flexible objects accompanying the game character 37, e.g. hair or clothes, flutter in the wind. In FIG. 10, e.g. hair 45 of the game character 37 is fluttering in a direction corresponding to the virtual wind. At this time, the game character 37 may take an action in response to the wind, such as e.g. standing ready or giving a shriek. In addition to or in place of the right hand 43, an image of a hand fan may be displayed.

When the player e.g. utters “Spray” with his right hand moved forward, the right hand switches to a spray mode. FIG. 11 shows an example of a game screen in the spray mode. As shown in FIG. 11, when in the spray mode the player e.g. bends a second joint of an index finger 47 of the right hand from the state where the index finger 47 is extended upward, a spray 49 of mist is fired at the game character 37. The spray 49 may be displayed or undisplayed. As a result, the skin of the game character 37 and accompanying objects (e.g. hair, clothes, etc.) become wet. Since the spray 49 is fired every time the index finger 47 is bent, the wet state gradually progresses, and the game character 37 becomes drenched when the spray is repeated many times. In FIG. 11, e.g. a face 51 and clothes 53 of the game character 37 are wet. At this time, the game character 37 may take an action in response to getting wet, such as e.g. standing ready, giving a scream, or getting angry.

The player may call out to the game character 37. For example, in the case that the player calls a name, the game character 37 may make a reply. In the case that the player utters a compliment such as “cute” or “pretty”, the game character 37 may be pleased or blush with embarrassment. For example, in the case that the player utters “High cheese”, the game character 37 may get into a pose for a photograph such as e.g. giving a peace sign or making a smile. Otherwise, in response to various calls from the player, the game character 37 may show various facial expressions and gestures, such as laughing, getting troubled or angry, etc. or may speak lines.

Referring back to FIG. 4, at step S55, it is determined whether to end the game. If the game is not over (step S55: NO), the procedure returns to step S50. On the other hand, if the game is over (step S55: YES), this flowchart comes to an end.
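The flow of FIG. 4 described in steps S5 to S55 can be summarized by the following sketch, which only records the order in which steps are visited. It assumes that the waiting steps S20, S35, and S40 are satisfied on their first check, and the function name and parameters are hypothetical.

```python
def run_flow(first_wear: bool, communication_rounds: int) -> list:
    """Return the sequence of step labels visited in one pass of FIG. 4.

    communication_rounds is how many times step S50 runs before step S55
    decides that the game is over; S50 always runs at least once.
    """
    if communication_rounds < 1:
        raise ValueError("step S50 runs at least once")
    steps = ["S5"]
    if first_wear:
        steps.append("S10")  # line-of-sight adjustment only on first wear
    # Home menu, game start, space recognition, virtual object display,
    # character selection, pose decision, character display.
    steps += ["S15", "S20", "S25", "S30", "S35", "S40", "S45"]
    for _ in range(communication_rounds):
        steps += ["S50", "S55"]  # communicate, then check for game end
    return steps


visited = run_flow(first_wear=True, communication_rounds=2)
```

In an actual implementation each label would correspond to a process executed by the control part 27 or the information processing device 3, and the waiting steps would poll for player input.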

Although the case has been described above where the game character 37 is displayed in a standing posture, display may be made such that the game character 37 is arranged coinciding with the position and orientation of a real object, e.g. such that the game character 37 is sitting on an actual chair. Details of this process will now be described.

4. First Embodiment

In the first embodiment, details will be described of the processes effected in the case that a game character is arranged coinciding with the position and orientation of a real object. The processes of this embodiment are executed when mode switching is achieved e.g. by the player giving a call such as “Let's sit down” in the communication process at step S50 described hereinbefore. Although not described below, in this embodiment, the player may make the various communications that have been described at step S50 with a game character, after arranging the game character coinciding with the position and orientation of the real object.

4-1. Functional Configuration of Information Processing Device

Referring first to FIG. 12, an example of a functional configuration of the information processing device 3 according to the first embodiment will be described. Although the configuration of the head mounted display 7 is not shown in FIG. 12, the head mounted display 7 has the same configuration as that shown in FIG. 2 described above.

As shown in FIG. 12, the information processing device 3 includes an object selection processing part 55, an object display processing part 57, an object adjustment processing part 59, a character posture setting processing part 61, a first character display processing part 63, and an object hide processing part 65.

The object selection processing part 55 selects a kind of a virtual object, based on an input of the player. Virtual objects are prepared in advance that imitate various real objects existing in real space. The player may be able to select a desired virtual object from among the prepared virtual objects. The virtual objects may include e.g. virtual seat objects such as a chair, a sofa, a ball, a cushion, etc., virtual furniture objects such as a table, a chest, etc., virtual tableware objects such as a glass, a cup, a plate, etc., and virtual wall objects against which the game character can lean. For example, if the game character is desired to be seated on a real seat object such as a chair, a sofa, a ball, a cushion, etc. in real space, the player selects a virtual seat object.

The object display processing part 57 causes the display part 13 to display an image of the virtual object selected by the object selection processing part 55, superimposed on an image in real space.

The object adjustment processing part 59 adjusts at least one of the position or the orientation of the virtual object relative to the image in real space, based on the player's input. For example, if the game character is desired to be seated on a real seat object, the object adjustment processing part 59 adjusts at least one of the position or the orientation of the virtual seat object relative to the real seat object contained in the image in real space, based on the player's input. As described above, the player can vertically and horizontally adjust the position relative to the real space of the virtual object displayed at the center of the field of view of the display part 13, e.g. by changing the orientation of his head. The position of the virtual object in the depth direction is a position near the collision point between the direction of the player's head and the real space, as described above. The position in the depth direction along the direction of the line of sight may be adjustable e.g. by a manual operation or an operation made through the game controller 5. The player can adjust the orientation of the virtual object e.g. by the rotate operation via the hand movement or the rotational operation made through the game controller 5. The player may adjust both the position and the orientation of the virtual object or only one of the position and the orientation.

The character posture setting processing part 61 sets the posture of a game character, based on the type of the virtual object selected by the object selection processing part 55. For example, in the case that the selected object is a virtual seat object such as a chair, a sofa, a ball, a cushion, etc., a sitting posture is set. In the case that the selected object is a virtual table object, a posture of being seated facing the player with the virtual table object in between is set. In the case that the selected object is a virtual tableware object such as a glass, a cup, a plate, etc., a posture of touching it is set. In the case that the selected object is a virtual wall object, a posture of leaning against the virtual wall object is set.
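The correspondence between object type and posture described above amounts to a lookup, sketched below. The string keys, the posture labels, and the fallback to a standing posture for unlisted types are assumptions for illustration; the embodiment names only the four cases.

```python
# Object type -> posture, following the four cases in the text.
# Keys and values are hypothetical labels chosen for this sketch.
POSTURE_BY_OBJECT_TYPE = {
    "seat": "sit",                  # chair, sofa, ball, cushion
    "table": "sit_facing_player",   # seated with the table in between
    "tableware": "touch",           # glass, cup, plate
    "wall": "lean",                 # lean against the wall
}


def posture_for(object_type: str) -> str:
    """Return the posture for a selected virtual object type.

    Unknown types fall back to "stand" (an assumed default).
    """
    return POSTURE_BY_OBJECT_TYPE.get(object_type, "stand")


posture = posture_for("seat")
```

Keeping the mapping in data rather than in branching code makes it straightforward to add further object types later.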

The first character display processing part 63 (an example of a character display processing part) causes the display part 13 to display thereon an image of a virtual game character such that it is arranged coinciding with the at least one of the position or the orientation of the virtual object that has been adjusted by the object adjustment processing part 59. For example, in the case that at least one of the position or the orientation of a virtual seat object is adjusted with respect to a real seat object, the first character display processing part 63 causes the display part 13 to display an image of a game character thereon so that the game character is arranged coinciding with at least one of the position or the orientation of the real seat object. The first character display processing part 63 causes the display part 13 to display thereon an image of a game character that takes the posture set by the character posture setting processing part 61.

The object hide processing part 65 hides the image of the virtual object when the first character display processing part 63 causes the image of the game character to be displayed.
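Taken together, the character display and object hide processes can be sketched as copying the adjusted pose of the virtual object to the game character and then toggling visibility. The data classes, field names, and the representation of orientation as a single yaw angle are assumptions made for this example.

```python
from dataclasses import dataclass


@dataclass
class Pose:
    position: tuple   # (x, y, z) in the static coordinate system
    yaw_deg: float    # orientation around the vertical axis (assumed form)


@dataclass
class SceneItem:
    name: str
    pose: Pose
    visible: bool = True
    posture: str = "stand"


def place_character(virtual_object: SceneItem, character: SceneItem,
                    posture: str) -> None:
    """Arrange the character at the virtual object's adjusted pose,
    apply the posture, show the character, and hide the virtual object."""
    character.pose = Pose(virtual_object.pose.position,
                          virtual_object.pose.yaw_deg)
    character.posture = posture
    character.visible = True
    virtual_object.visible = False


# Hypothetical adjusted pose of a virtual chair aligned with a real chair.
chair = SceneItem("virtual_seat", Pose((1.0, 0.0, 2.5), 90.0))
hero = SceneItem("character", Pose((0.0, 0.0, 0.0), 0.0), visible=False)
place_character(chair, hero, posture="sit")
```

After the call, only the character is drawn, so it appears to sit on the real chair that the virtual chair was aligned with.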

The division of processes among the processing parts described hereinabove is only an example. For example, the processes may be executed by a smaller number of processing parts (e.g. one processing part) or by further subdivided processing parts. The functions of the processing parts are implemented by a game program run by a CPU 301 (see FIG. 38 described later). However, some of them may be implemented by an actual device such as a dedicated integrated circuit (e.g. an ASIC or FPGA) or other electric circuits. The processing parts described above need not all be mounted on the information processing device 3. Some or all of them may be mounted on the head mounted display 7 (e.g. the control part 27). In such a case, the control part 27 of the head mounted display 7 acts as an example of the information processing device.

4-2. Screen Example of Virtual Object Adjustment and Character Display

Referring next to FIGS. 13 to 19, examples of a screen will be described on which the position and orientation of a virtual object are adjusted to display a game character.

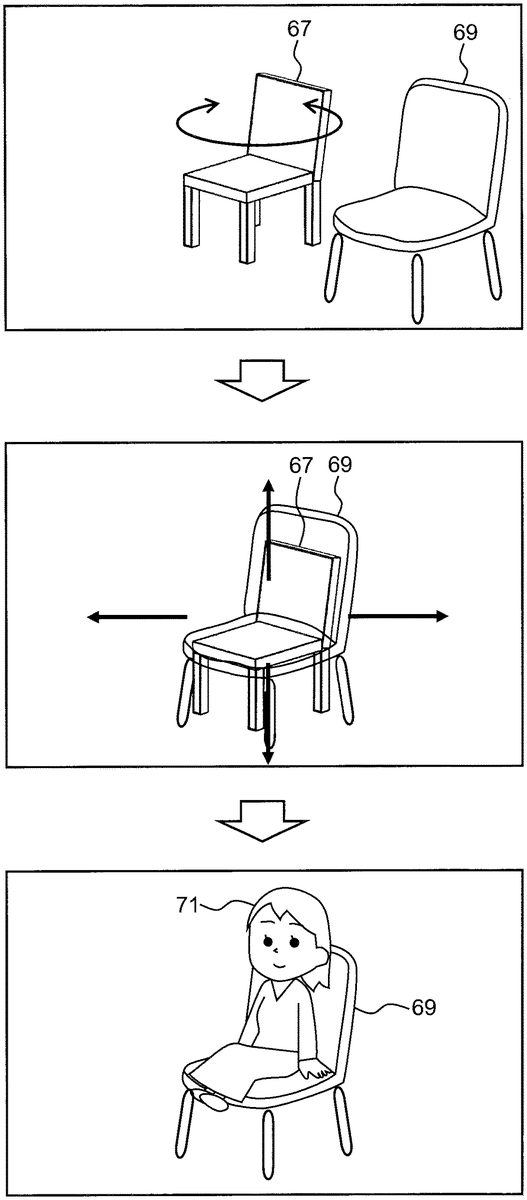

In the example shown in FIG. 13, e.g. a virtual seat object 67 of a chair with a backrest is being selected by the player. As shown in the upper part of FIG. 13, adjustment is made so that the orientation of the virtual seat object 67 coincides with the orientation of a real seat object 69 that is a chair with a backrest existing in real space. Next, as shown in the middle part of FIG. 13, adjustment is made so that the position of the virtual seat object 67 coincides with the position of the real seat object 69. At this time, adjustment may be made so that, for example, the virtual seat object 67 and the real seat object 69 coincide in seat height. The adjustments of the orientation and position may be made in the reverse order to the above, or may be made in parallel (the same applies to FIGS. 14 to 19). Subsequently, as shown in the lower part of FIG. 13, a game character 71 set to a sitting posture is arranged so as to coincide with the adjusted position and orientation of the virtual seat object 67, with the virtual seat object 67 becoming hidden. This enables the game character 71 to be displayed as if the game character 71 were sitting on the real seat object 69.

In the example shown inFIG.14, e.g. a virtual seat object73of a balance ball is being selected by the player. The virtual seat object73is displayed together with an indication of its orientation. As shown in the upper part ofFIG.14, adjustment is made so that the orientation of the virtual seat object73coincides with the orientation of a real seat object75that is a balance ball existing in real space. In this example, the real seat object75is of a ball shape having no orientation. The player may therefore adjust the orientation so that the front of the game character faces the direction in which the character is to face. Next, as shown in the middle part ofFIG.14, adjustment is made so that the position of the virtual seat object73coincides with the position of the real seat object75. At this time, adjustment may be made so that for example, the virtual seat object73and the real seat object75coincide in seat (top surface) height. Subsequently, as shown in the lower part ofFIG.14, a game character77set to a sitting posture is arranged so as to coincide with the adjusted position and orientation of the virtual seat object73, with the virtual seat object73becoming hidden. This enables the game character77to be displayed as if the game character77were sitting on the real seat object75.

In the example shown inFIG.15, e.g. a virtual seat object79of a seated cushion is being selected by the player. As shown in the upper part ofFIG.15, adjustment is made so that the orientation of the virtual seat object79coincides with the orientation of a real seat object81that is a seated cushion existing in real space. Next, as shown in the middle part ofFIG.15, adjustment is made so that the position of the virtual seat object79coincides with the position of the real seat object81. At this time, adjustment may be made so that for example, the virtual seat object79and the real seat object81coincide in seat height. Subsequently, as shown in the lower part ofFIG.15, a game character83set to a sitting posture is arranged so as to coincide with the adjusted position and orientation of the virtual seat object79, with the virtual seat object79becoming hidden. This enables the game character83to be displayed as if the game character83were sitting on the real seat object81.

Although not shown, it is also possible, for example, to seat the game character on a square cushion that allows sitting in four directions so that the game character faces one direction, in the same manner as above, with the player seated adjacent to the game character and facing another direction.

In the example shown inFIG.16, e.g. a virtual object85of a hugging pillow is being selected by the player. As shown in the upper part ofFIG.16, adjustment is made so that the orientation of the virtual object85coincides with the orientation of a real object87that is a hugging pillow existing in real space. At this time, adjustment may be made so that for example, the virtual object85and the real object87coincide in longitudinal direction (axial direction). Next, as shown in the middle part ofFIG.16, adjustment is made so that the position of the virtual object85coincides with the position of the real object87. At this time, adjustment may be made so that for example, the virtual object85and the real object87coincide in top surface height or center position. Subsequently, as shown in the lower part ofFIG.16, a game character89set to a posture lying and holding a pillow is arranged so as to coincide with the adjusted position and orientation of the virtual object85, with the virtual object85becoming hidden. This enables the game character89to be displayed as if the game character89were hugging the real object87.

In the example shown inFIG.17, e.g. a virtual object91of a glass with a straw is being selected by the player. As shown in the upper part ofFIG.17, adjustment is made so that the orientation of the virtual object91coincides with the orientation of a real object93that is a glass with a straw existing in real space. At this time, adjustment may be made so that for example, the virtual object91and the real object93coincide in straw direction. Next, as shown in the middle part ofFIG.17, adjustment is made so that the position of the virtual object91coincides with the position of the real object93. At this time, adjustment may be made so that for example, the virtual object91and the real object93coincide in straw height. Subsequently, as shown in the lower part ofFIG.17, a game character95set to a posture touching a glass and holding a straw in its mouth is arranged so as to coincide with the adjusted position and orientation of the virtual object91, with the virtual object91becoming hidden. This enables the game character95to be displayed as if the game character95were holding the real object93in its hand and drinking.

In the example shown inFIG.18, e.g. a virtual object97of a table is being selected by the player. As shown in the upper part ofFIG.18, adjustment is made so that the orientation of the virtual object97coincides with the orientation of a real object99that is a table existing in real space. At this time, adjustment may be made so that for example, the virtual object97and the real object99coincide in longitudinal direction. Next, as shown in the middle part ofFIG.18, adjustment is made so that the position of the virtual object97coincides with the position of the real object99. At this time, adjustment may be made so that for example, the virtual object97and the real object99coincide in top surface height. Subsequently, as shown in the lower part ofFIG.18, a game character101set to a posture e.g. confronting the player with a table in between and putting its hands or elbows on the table is arranged so as to coincide with the adjusted position and orientation of the virtual object97, with the virtual object97becoming hidden. This enables the game character101to be displayed as if e.g. the game character101were eating together with the player. By making the same adjustment for glasses, plates, etc. placed on the table, in addition to the table, it becomes possible to give a display as if the game character were drinking or eating while holding a glass, a plate, or the like in its hand. This enables the player to feel as if he were on a date.

In the example shown inFIG.19, e.g. a virtual object103of a wall is being selected by the player. As shown in the upper part ofFIG.19, adjustment is made so that the orientation of the virtual object103coincides with the orientation of a real object105that is a wall existing in real space. At this time, adjustment may be made so that for example, the virtual object103and the real object105are parallel to each other. Next, as shown in the middle part ofFIG.19, adjustment is made so that the position of the virtual object103coincides with the position of the real object105. At this time, adjustment may be made so that for example, the virtual object103and the real object105coincide in wall surface (position in the depth direction). Subsequently, as shown in the lower part ofFIG.19, a game character107set to a posture leaning against a wall is arranged so as to coincide with the adjusted position and orientation of the virtual object103, with the virtual object103becoming hidden. As a result, if e.g. the player puts a right hand109against the wall, the player can get a feeling of banging his hand against the wall.

4-3. Process Procedure Executed by Information Processing Device

Referring next toFIG.20, an example of a process procedure will be described that is executed by the CPU301of the information processing device3according to the first embodiment.

At step S110, the information processing device3allows the object selection processing part55to select a type of a virtual object based on an input of the player.

At step S120, the information processing device3allows the object display processing part57to display an image of the virtual object selected at step S110, superimposed on an image in real space by the display part13.

At step S130, the information processing device3allows the object adjustment processing part59to adjust at least one of the position or the orientation relative to the image in real space of the virtual object displayed at step S120, based on an input of the player.

At step S140, the information processing device3allows the character posture setting processing part61to set the posture of the game character, based on the type of the virtual object selected at step S110.

At step S150, the information processing device3allows the first character display processing part63to cause the display part13to display thereon an image of the game character that takes the posture set at step S140, so as to be arranged coinciding with the position or the orientation of the virtual object adjusted at step S130.

At step S160, the information processing device3allows the object hide processing part65to hide the image of the virtual object. This ends the flowchart.
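The flow of steps S110 to S160 can be sketched roughly as follows. This is a minimal illustration only, not the patented implementation: the `Pose` and `Scene` types, the posture table, the function name `run_first_embodiment`, and the representation of player input as a list of (position delta, yaw delta) pairs are all assumptions introduced here.

```python
from dataclasses import dataclass
from typing import Optional, Tuple

# Hypothetical table for step S140: posture chosen from the object type.
POSTURE_BY_OBJECT_TYPE = {
    "chair": "sitting",
    "balance_ball": "sitting",
    "hugging_pillow": "lying_holding_pillow",
    "glass_with_straw": "touching_glass_holding_straw",
    "table": "hands_on_table",
    "wall": "leaning_against_wall",
}

@dataclass
class Pose:
    position: Tuple[float, float, float]  # real-space coordinates (assumed units)
    yaw_deg: float                        # orientation about the vertical axis

@dataclass
class Scene:
    object_type: Optional[str] = None
    object_pose: Optional[Pose] = None
    object_visible: bool = False
    character_posture: Optional[str] = None
    character_pose: Optional[Pose] = None

def run_first_embodiment(object_type, adjustments):
    scene = Scene()
    # S110: select the type of virtual object from the player's input.
    scene.object_type = object_type
    # S120: display the virtual object superimposed on real space.
    scene.object_pose = Pose((0.0, 0.0, 0.0), 0.0)
    scene.object_visible = True
    # S130: apply the player's position/orientation adjustments.
    for (dx, dy, dz), dyaw in adjustments:
        x, y, z = scene.object_pose.position
        scene.object_pose = Pose(
            (x + dx, y + dy, z + dz),
            (scene.object_pose.yaw_deg + dyaw) % 360.0,
        )
    # S140: set the character posture from the selected object type.
    scene.character_posture = POSTURE_BY_OBJECT_TYPE[object_type]
    # S150: place the character so it coincides with the adjusted object.
    scene.character_pose = scene.object_pose
    # S160: hide the virtual object once the character is displayed.
    scene.object_visible = False
    return scene
```

For instance, calling `run_first_embodiment("chair", [((1.0, 0.0, 0.5), 90.0)])` would leave the character in a sitting posture at the adjusted pose, with the virtual chair hidden.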

The process procedure is a mere example. At least some processes of the procedure may be deleted or changed, or processes other than the above may be added. The order of at least some processes of the procedure may be changed. The plural processes may be integrated into a single process. For example, steps S120, S130, and S140may be executed in the reverse order or may be executed simultaneously in parallel.

4-4. Effect of First Embodiment

As set forth hereinabove, the game program of this embodiment causes the information processing device3configured to perform transmission/reception of signals to/from the display part13configured to be attachable to the player's head to display a virtual image superimposed on an image in real space to function as: the object display processing part57causing the display part13to display an image of a virtual object; the object adjustment processing part59adjusting at least one of the position or the orientation of the virtual object relative to an image in real space, based on the player's input; the first character display processing part63causing the display part13to display an image of a virtual game character so as to be arranged coinciding with the adjusted at least one of the position or the orientation of the virtual object; and the object hide processing part65hiding the image of the virtual object when the image of the game character is displayed.

In this embodiment, an image of a virtual object is displayed superimposed on an image in real space by the display part13, and at least one of the position or the orientation of the virtual object relative to the image in real space is adjusted by the player's input. An image of a virtual game character is displayed superimposed on the image in real space by the display part13so as to be arranged coinciding with the adjusted position or orientation of the virtual object, rendering the virtual object image hidden.

This enables the game character to be displayed so as to be arranged coinciding with the position and the orientation of the actual object, by adjusting the position and the orientation of the virtual object to coincide with those of the actual object existing in real space. Accordingly, it becomes possible for the player to feel as if the game character were present in real world, with the result that the player can feel as if he were sharing his experience in real world with the game character.

In this embodiment, the object adjustment processing part59may adjust at least one of the position or the orientation of the virtual seat object relative to the real seat object contained in the image in real space, based on the player's input, while the first character display processing part63may cause the display part13to display an image of the game character so that the game character is arranged coinciding with at least one of the position or the orientation of the real seat object.

In this case, the game character can be displayed as if it were seated on an actual real seat object existing in real space. This enables the player to communicate with e.g. a game character seated on a real chair, allowing the player to feel as if the game character were present in real world.

In this embodiment, the game program may cause the information processing device3to further function as the object selection processing part55that selects the type of the virtual object, based on the player's input.

In this case, it becomes possible for the player to select the type of the virtual object matching the type of the actual object that exists in real space. For example, in the case that a chair exists in real space, the game character can be displayed as if it were seated on the actual chair, by selecting an object of a virtual chair and adjusting the position and orientation of the object of the virtual chair so as to coincide with those of the actual chair. Hence, the player can feel more realistic as if the game character were present in real world.

In this embodiment, the game program may cause the information processing device3to further function as the character posture setting processing part61that sets the posture of a game character, based on the selected type of the virtual object. In such a case, the first character display processing part63may cause the display part13to display thereon an image of a game character taking the set posture.

In this case, the game character can be displayed taking a posture that corresponds to the type of the virtual object selected by the player. For example, in the case that the player selects an object of a glass with a straw, after taking a posture of e.g. putting its hand on the glass and holding the straw in its mouth, the game character can be displayed so as to be arranged coinciding with the position and orientation of the actual glass with a straw. The player can thus feel more realistic as if the game character were present in real world.

4-5. Modification Example of First Embodiment

In the first embodiment described above, the adjusted position and orientation of the virtual object may be registrable so that the action of the game character can be changed based on the registered contents.

FIG.21shows an example of a functional configuration of the information processing device3according to this modification example. As shown inFIG.21, the information processing device3includes an object register processing part111and a first character action setting processing part113, in addition to the configuration shown inFIG.12.

The object register processing part111registers at least one of the position or the orientation of a virtual object adjusted by the object adjustment processing part59, into an appropriate record medium (e.g. a RAM305, a recording device317, etc., seeFIG.38described later).

The first character action setting processing part113(an example of a character action setting processing part) sets an action of a game character, based on the at least one of the position or the orientation of the virtual object registered by the object register processing part111. The “action” includes a game character's movement, body motion, facial expression and gesture, utterance, etc. The first character display processing part63causes the display part13to display an image of a game character so that the game character takes the action set by the first character action setting processing part113.

FIG.22shows an example of real space encompassing virtual objects to be registered.FIG.22is a diagram of e.g. the player's room where there are arranged a sofa115, a table117, a chest119, a television121, a calendar123, a window125, etc. The player selects a virtual object that corresponds to each real object, and performs the adjustment of the position and the orientation to register the type, position, orientation, etc. of each virtual object. This enables the game character to take actions in accordance with the arrangement of the furniture, etc. in the player's room. For example, the game character can take a wide variety of actions in accordance with the registered contents, such as e.g.: being seated at a place of the sofa115; sitting in front of the television121and looking toward it; putting its elbows or drinking or eating at the place of the table117; making a motion to open a drawer in front of the chest119; looking at the calendar123and saying a line such as “It's an anniversary soon”; or looking out in front of the window125and saying a line “It's a nice day today, isn't it?”.
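As a rough illustration of how registered contents might drive the game character's action, the sketch below stores the type, position, and orientation of each adjusted virtual object and picks an action from the nearest registered object. The class and function names, the action table, and the nearest-object rule are assumptions made for this example; the description above does not specify how an action is selected.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class RegisteredObject:
    object_type: str
    position: tuple   # (x, y, z), assumed real-space coordinates
    yaw_deg: float    # registered orientation

# Hypothetical actions keyed by object type, paraphrasing the examples above.
ACTIONS_BY_TYPE = {
    "sofa": "sit down",
    "television": "sit in front and watch",
    "table": "rest elbows, eat or drink",
    "chest": "open a drawer",
    "calendar": "look and mention an upcoming anniversary",
    "window": "look outside and comment on the weather",
}

class ObjectRegistry:
    """Plays the role of the record medium holding registered objects."""

    def __init__(self):
        self._objects = []

    def register(self, object_type, position, yaw_deg):
        obj = RegisteredObject(object_type, tuple(position), yaw_deg)
        self._objects.append(obj)
        return obj

    def nearest(self, point):
        # Squared Euclidean distance is enough for choosing the minimum.
        if not self._objects:
            return None
        return min(
            self._objects,
            key=lambda o: sum((a - b) ** 2 for a, b in zip(o.position, point)),
        )

def choose_action(registry, character_position):
    """Assumed rule: act on whichever registered object is closest."""
    target = registry.nearest(character_position)
    if target is None:
        return None
    return target, ACTIONS_BY_TYPE.get(target.object_type, "idle")
```

With a sofa registered at the origin and a window five units away, a character standing near the window would be assigned the window action under this rule.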

According to this modification example, by adjusting and registering the positions and orientations of the virtual objects e.g. in accordance with the arrangement of the furniture, etc. in his room, the player enables the game character to take actions in accordance with the arrangement of the furniture, etc. It therefore becomes possible for the player to feel as if the game character were present in his room, bringing about a feeling felt as if the player were sharing his real experience with the game character.

5. Second Embodiment

In a second embodiment, details will be described of processes effected in the case of setting the line-of-sight direction of the game character based on the line-of-sight direction of the player. The process contents of this embodiment are executed in the communication process at step S50described above.

5-1. Functional Configuration of Information Processing Device

Referring first toFIG.23, description will be given of an example of a functional configuration of the information processing device3according to the second embodiment. Although inFIG.23the configuration of the head mounted display7is not shown, the head mounted display7has the same configuration as that shown inFIG.2described above.

As shown inFIG.23, the information processing device3includes a line-of-sight direction setting processing part127, a second character display processing part129, an ancillary information acquisition processing part131, and a second character action setting processing part133.

The line-of-sight direction setting processing part127sets the line-of-sight direction of a virtual game character having eyes, based on the line-of-sight direction of the player detected by the line-of-sight direction detection part15of the head mounted display7. As described above, the “virtual game character having eyes” is not limited to a human being and includes e.g. a non-human animal character, a virtual creature character other than human beings and animals, and a robot other than creatures. As described above, the “line-of-sight direction of the player” is the direction including both the eye orientation and the head orientation of the player. The line-of-sight direction setting processing part127sets the line-of-sight direction of the game character, based on both the detected orientation of eyes and orientation of head of the player. The “line-of-sight direction of a game character” refers to a combined direction of eyes' orientation, head's orientation, and body's orientation of the game character.

The line-of-sight direction setting processing part127may set the line-of-sight direction of the game character e.g. so as to follow the detected line-of-sight direction of the player. “To follow” means, e.g. when the player and the game character confront each other (e.g. when the angle of intersection of the front direction of the player with the front direction of the game character is greater than 90 degrees), that the line-of-sight direction of the game character intersects with the line-of-sight direction of the player at a position (e.g. intermediate position) between the game character and the player. It means, e.g. when the player and the game character are side by side (e.g. when the front direction of the player and the front direction of the game character are parallel or intersect at an angle of 90 degrees or less), that the line-of-sight direction of the game character and the line-of-sight direction of the player become parallel to each other. In the case that the detected line-of-sight direction of the player is a direction corresponding to the game character, the line-of-sight direction setting processing part127may set the line-of-sight direction of the game character to a direction corresponding to the player.
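The "follow" rule described above can be sketched in a top-down 2D view as follows. This is an assumption-laden illustration: the function name `character_gaze`, the 10-degree tolerance for deciding that the player is looking at the character, and the choice of the intersection point (a point on the player's gaze ray at half the player-to-character distance) are hypothetical; only the 90-degree criterion comes from the text. The player and the character are assumed to be at distinct positions.

```python
import math

def _sub(a, b):
    return (a[0] - b[0], a[1] - b[1])

def _norm(v):
    length = math.hypot(v[0], v[1])
    return (v[0] / length, v[1] / length)

def _angle_deg(a, b):
    a, b = _norm(a), _norm(b)
    dot = max(-1.0, min(1.0, a[0] * b[0] + a[1] * b[1]))
    return math.degrees(math.acos(dot))

def character_gaze(player_pos, player_front, player_gaze,
                   char_pos, char_front, look_at_tolerance_deg=10.0):
    """Return a unit vector for the game character's line of sight."""
    to_char = _sub(char_pos, player_pos)
    # Player is looking toward the character: the character looks back.
    if _angle_deg(player_gaze, to_char) <= look_at_tolerance_deg:
        return _norm(_sub(player_pos, char_pos))
    # Confronting (front directions intersect at more than 90 degrees):
    # aim at a point on the player's gaze ray so the two lines of sight
    # intersect between the player and the character.
    if _angle_deg(player_front, char_front) > 90.0:
        half = math.hypot(to_char[0], to_char[1]) / 2.0
        g = _norm(player_gaze)
        target = (player_pos[0] + g[0] * half, player_pos[1] + g[1] * half)
        return _norm(_sub(target, char_pos))
    # Side by side (parallel or 90 degrees or less): gaze in parallel.
    return _norm(player_gaze)
```

In the confronting case the character's gaze turns to the same side as the player's as seen from the player, while in the side-by-side case the returned vector is simply the player's normalized gaze direction.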

The second character display processing part129(an example of the character display processing part) causes the display part13to display an image of the game character superimposed on an image in real space so that the game character changes its line-of-sight direction based on the line-of-sight direction of the game character set by the line-of-sight direction setting processing part127. At this time, the second character display processing part129displays the image of the game character so that the game character changes its line-of-sight direction by changing at least one of the eyes' orientation, the head's orientation, or the body's orientation of the game character. Hence, the game character may change its line-of-sight direction by only the eyes' orientation or may change it by: the eyes' orientation and the head's orientation; the eyes' orientation and the body's orientation; or the head's orientation and the body's orientation without changing the eyes' orientation. The game character may change its line-of-sight direction by all of the eyes' orientation, the head's orientation, and the body's orientation.

FIGS.24and25show examples of the case that the line-of-sight direction of the game character is changed following the line-of-sight direction of the player.FIGS.24and25do not show a game play screen appearing on the display part13but are explanatory views explaining relationships between the game character's line-of-sight direction and the player's line-of-sight direction (the same applies toFIGS.28and29described later).FIG.24shows examples of the case that the player and the game character confront each other. As shown in the upper part ofFIG.24, in the case that a line-of-sight direction137of a player135turns to e.g. the right when viewed from the player, a line-of-sight direction141of a game character139turns to the right when viewed from the player so as to intersect the line-of-sight direction137of the player135, to follow the player's motion. As shown in the middle part ofFIG.24, in the case that the line-of-sight direction137of the player135turns to e.g. the left when viewed from the player, the line-of-sight direction141of the game character139turns to the left when viewed from the player so as to intersect the line-of-sight direction137of the player135, to follow the player's motion. As shown in the lower part ofFIG.24, in the case that the line-of-sight direction137of the player135turns toward the game character139, the line-of-sight direction141of the game character139turns toward the player135, to look at each other.

FIG.25shows examples of the case that the player and the game character are side by side. As shown in the upper part ofFIG.25, in the case that the line-of-sight direction137of the player135turns to e.g. the right when viewed from the front of the player, the line-of-sight direction141of the game character139changes so as to be substantially parallel to the line-of-sight direction137of the player135, to follow the player's motion. As shown in the middle part ofFIG.25, in the case that the line-of-sight direction137of the player135turns to e.g. the left when viewed from the front of the player, the line-of-sight direction141of the game character139changes so as to be substantially parallel to the line-of-sight direction137of the player135, to follow the player's motion. As shown in the lower part ofFIG.25, in the case that the line-of-sight direction137of the player135turns toward the game character139, the line-of-sight direction141of the game character139turns toward the player135, to look at each other.

Although not shown, also in the case that the line-of-sight direction137of the player135changes to the direction of elevation or depression, the line-of-sight direction141of the game character139follows so as to intersect the line-of-sight direction137of the player135. Although in the above the case has been described where the game character139changes its line-of-sight direction141by changing, e.g. the direction of its eyes, the line-of-sight direction141may be changed by changing the head's orientation or the body's orientation instead of or in addition to the eyes' orientation. For example, when the line-of-sight direction137of the player135changes to a small extent, the eyes' orientation of the game character may be changed. When changing to a middle extent, the head's orientation instead of or in addition to the eyes' orientation may be changed. When changing to a great extent, the body's orientation instead of or in addition to the eyes' orientation or the head's orientation may be changed.
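The graded response described above (eyes for a small change, head for a middle change, body for a great change) might look like the following sketch. The threshold values of 15 and 45 degrees and the function name `parts_to_move` are hypothetical, and this version picks the "in addition to" variant rather than the "instead of" variant.

```python
def parts_to_move(gaze_change_deg, small_max_deg=15.0, middle_max_deg=45.0):
    """Choose which parts of the game character turn for a given change
    in the player's line-of-sight direction (thresholds are assumptions)."""
    change = abs(gaze_change_deg)
    if change <= small_max_deg:
        # Small change: only the eyes move.
        return ("eyes",)
    if change <= middle_max_deg:
        # Middle change: the head turns in addition to the eyes.
        return ("eyes", "head")
    # Great change: the body turns as well.
    return ("eyes", "head", "body")
```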

Referring back toFIG.23, the ancillary information acquisition processing part131connects through e.g. a communication device323(seeFIG.38described later) to a network NW such as the Internet, to acquire various types of information from an information distribution site that distributes information, a database, etc. The ancillary information acquisition processing part131may acquire e.g. current position information of the player based on signals from GPS satellites. The ancillary information acquisition processing part131may receive and acquire information detected by various sensors of the head mounted display7or information acquired by the information acquisition part25. The ancillary information acquisition processing part131can acquire e.g. information related to date and time, weather, news, entertainment (concert and movie schedules, etc.), shopping (bargain sale, etc.), or the like.

The second character action setting processing part133sets an action of a game character, based on information acquired by the ancillary information acquisition processing part131. The “action” includes the game character's movement, facial expression and gesture, utterance, etc. The second character display processing part129causes the display part13to display an image of the game character so that the game character takes the action set by the second character action setting processing part133.

The second character action setting processing part133enables the game character to take e.g. the following actions. For example, by disposing a luminance sensor on the head mounted display7, when a shooting star is seen while the player is looking up at the night sky, the game character also allows its line of sight to follow as the player changes his line-of-sight direction toward the shooting star. At this time, the shooting star may be identified based on detection information of the luminance sensor, and the game character may utter a line in accordance with the situation, such as “The stars are beautiful!”, “There was a shooting star again!”, etc. Similarly, while the player is watching fireworks, the game character also allows its line of sight to follow as the player changes his line-of-sight direction toward the fireworks. At this time, the fireworks may be identified based on detection information of the luminance sensor, and the game character may utter a line in accordance with the situation, such as “The fireworks are beautiful!”, etc.

For example, when a house chime rings, the game character also allows its line of sight to follow as the player changes his line-of-sight direction toward the entrance. At this time, sound of the chime may be identified based on detection information obtained by the audio input part19, and the game character may utter a line in accordance with the situation, such as “Looks like someone is here”.

By combining plural pieces of acquired information together, more advanced communication can be achieved between the player and the game character. For example, by adding time zone information to the detection information of the luminance sensor, it can be determined whether the light is the sun's light or the star's light. By further adding the current position information from GPS and the line-of-sight direction information to the detection information of the luminance sensor and the time zone information, it is also possible to determine which constellation the starlight comes from. This enables the game character to tell e.g. a story about the constellation.
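The idea of combining the luminance detection with time zone information can be illustrated with a very small sketch. The function name `classify_light` and the hour boundaries for "night" are assumptions chosen for the example; a real system would likely also use the position and line-of-sight information mentioned above.

```python
def classify_light(bright_spot_detected, local_hour, night_start=19, night_end=5):
    """Guess the source of a detected light from the local hour (0-23).

    The night window (19:00 to 05:00) is a hypothetical default.
    """
    if not bright_spot_detected:
        return None
    is_night = local_hour >= night_start or local_hour < night_end
    return "starlight" if is_night else "sunlight"
```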

In the case that e.g. news distribution information in accordance with the current position information is acquired to get e.g. coupon information that can be used at a nearby shop, and further when the player makes an utterance like “I'm hungry”, the contents of the utterance may be identified based on the detection information of the audio input part19, allowing the game character to make a proposal like “The lunch there is cheap”.

In the case that e.g. the current position information is about a movie theater, e.g. show schedule information of the movie theater may be acquired to identify a currently playing movie so that if it is a horror movie, the game character can be scared or if it is a comedy movie, the game character can laugh. This enables the player to get a feeling like enjoying a movie together with the game character.

By acquiring the current position information when watching fireworks described above, it becomes possible to identify the location of the firework display and tell e.g. a story about the firework display.

The processes, etc. effected by the processing parts described hereinabove are not limited to the example of sharing these processes. For example, they may be processed by a smaller number of processing parts (e.g. one processing part) or may be processed by further subdivided processing parts. The functions of the processing parts are implemented by a game program run by the CPU301(seeFIG.38described later). However, for example, some of them may be implemented by an actual device such as a dedicated integrated circuit such as ASIC or FPGA, other electric circuits, etc. The processing parts described above are not limited to the case that all of them are mounted on the information processing device3. Some or all of them may be mounted on the head mounted display7(e.g. control part27). In such a case, the control part27of the head mounted display7acts as an example of the information processing device.

5-2. Process Procedure Executed by Information Processing Device

Referring then toFIG.26, an example of a process procedure will be described that is executed when setting and changing the line-of-sight direction of the game character based on the player's line-of-sight direction by the CPU301of the information processing device3according to the second embodiment.

At step S210, the information processing device3acquires information on the player's line-of-sight direction detected by the line-of-sight direction detection part15of the head mounted display7.

At step S220, by the line-of-sight direction setting processing part127, the information processing device3sets the line-of-sight direction of a game character based on the player's line-of-sight direction.

At step S230, by the second character display processing part129, the information processing device3causes the display part13to display an image of the game character so that the game character changes its line-of-sight direction based on the line-of-sight direction of the game character set at step S220. This flowchart thus comes to an end.

The process procedure is a mere example. At least some processes of the procedure may be deleted or changed, or processes other than the above may be added. The order of at least some processes of the procedure may be changed. The plural processes may be integrated into a single process.

5-3. Effect of Second Embodiment

As set forth hereinabove, the game program of this embodiment causes the information processing device3configured to perform transmission/reception of signals to/from the display part13configured to be attachable to the player's head to display a virtual image superimposed on an image in real space and the line-of-sight direction detection part15detecting the player's line-of-sight direction to function as: the line-of-sight direction setting processing part127setting the line-of-sight direction of a virtual game character having eyes, based on the detected player's line-of-sight direction; and the second character display processing part129causing the display part13to display an image of the game character so that the game character changes its line-of-sight direction based on the set line-of-sight direction of the game character.

In this embodiment, the line-of-sight direction of a game character is set based on the player's line-of-sight direction. An image of the game character is generated so that the game character changes its line-of-sight direction to the set direction. The generated image is displayed superimposed on an image in real space by the display part13. As a result, in the case that the player changes his line-of-sight direction in response to an event in real world, the line-of-sight direction of the game character can be changed so as to follow the change. The player can thus feel as if he were sharing his experience in real world with the game character.

In this embodiment, the line-of-sight direction setting processing part127may set the line-of-sight direction of the game character so as to follow the detected player's line-of-sight direction.

In this case, when the player changes his line-of-sight direction in response to an event in real world, the line-of-sight direction of the game character can be changed so as to follow the line-of-sight direction. This enables the player to feel as if he were watching the same thing in real world together with the game character, increasing the sense of sharing.

In this embodiment, when the detected player's line-of-sight direction is a direction corresponding to the game character, the line-of-sight direction setting processing part127may set the line-of-sight direction of the game character to a direction corresponding to the player.

In this case, e.g. when the player looks toward the game character while the line-of-sight direction of the game character is following the player's line-of-sight direction, the game character also looks toward the player, making it possible for the two to gaze at each other and producing the feeling of being on a date with a lover.
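As one possible sketch of the two behaviors described above (following the player's line of sight, and looking back at the player when the player looks at the game character), the setting may be expressed as follows. The two-dimensional positions, the function name `set_character_gaze`, and the threshold `look_at_threshold_deg` are assumptions introduced only for illustration; the embodiment does not specify how "a direction corresponding to the game character" is judged.

```python
import math
from typing import Tuple

Vec2 = Tuple[float, float]


def _normalize(v: Vec2) -> Vec2:
    length = math.hypot(v[0], v[1])
    if length == 0.0:
        raise ValueError("direction must have non-zero length")
    return (v[0] / length, v[1] / length)


def _angle_deg(a: Vec2, b: Vec2) -> float:
    a, b = _normalize(a), _normalize(b)
    dot = max(-1.0, min(1.0, a[0] * b[0] + a[1] * b[1]))
    return math.degrees(math.acos(dot))


def set_character_gaze(
    player_pos: Vec2,
    player_gaze: Vec2,
    character_pos: Vec2,
    look_at_threshold_deg: float = 10.0,  # assumed tolerance, not from the source
) -> Vec2:
    to_character = (character_pos[0] - player_pos[0],
                    character_pos[1] - player_pos[1])
    if _angle_deg(player_gaze, to_character) <= look_at_threshold_deg:
        # The player is looking at the character: the character looks back.
        return _normalize((player_pos[0] - character_pos[0],
                           player_pos[1] - character_pos[1]))
    # Otherwise the character's line of sight follows the player's.
    return _normalize(player_gaze)
```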

In this embodiment, the second character display processing part129may display an image of the game character so that the game character changes its line-of-sight direction by changing at least one of the eyes' orientation, the head's orientation, or the body's orientation of the game character.

In this case, when the set line-of-sight direction of the game character changes to a small extent, the eyes' orientation may be changed. When changing to a middle extent, the head's orientation instead of or in addition to the eyes' orientation may be changed. When changing to a great extent, the body's orientation instead of or in addition to the eyes' orientation or the head's orientation may be changed. In this manner, by changing the mode of motion of changing the line-of-sight direction of the game character in accordance with the extent of change of the set line-of-sight direction of the game character, more natural expression becomes possible.
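The graded selection of eyes, head, and body described above may be sketched, for example, as follows. The function name `parts_to_turn` and the angular limits of 15 degrees and 60 degrees are hypothetical values chosen for illustration; the embodiment only distinguishes small, middle, and great extents of change. The sketch takes the "in addition to" variant, in which a larger change also moves the parts used for smaller changes.

```python
from typing import Tuple


def parts_to_turn(
    change_deg: float,
    eye_limit_deg: float = 15.0,   # assumed upper bound of a "small" change
    head_limit_deg: float = 60.0,  # assumed upper bound of a "middle" change
) -> Tuple[str, ...]:
    magnitude = abs(change_deg)
    if magnitude <= eye_limit_deg:
        # Small change: only the eyes' orientation is changed.
        return ("eyes",)
    if magnitude <= head_limit_deg:
        # Middle change: the head's orientation is changed in addition to the eyes'.
        return ("eyes", "head")
    # Great change: the body's orientation is changed as well.
    return ("eyes", "head", "body")
```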

5-4. Modification Example of Second Embodiment

In the second embodiment described above, in the case of allowing the line-of-sight direction of the game character to follow the player's line-of-sight direction, the face of the game character may be made easily visible to the player.

FIG.27shows an example of a functional configuration of the information processing device3according to this modification example. As shown inFIG.27, the information processing device3includes a time difference setting processing part143in addition to the configuration shown inFIG.23described above.

The time difference setting processing part143sets a predetermined time difference between the change of the player's line-of-sight direction and the change of the line-of-sight direction of the game character in the case that the player's line-of-sight direction detected by the line-of-sight direction detection part15of the head mounted display7changes from a direction not corresponding to the game character to a direction corresponding to the game character. The “predetermined time difference” may be set to e.g. approx. 1 sec to several sec.

FIG. 28 shows examples of changes of the line-of-sight direction of a game character and the player's line-of-sight direction occurring when a time difference is set in the case that e.g. the player and the game character are side by side. As shown in the upper part of FIG. 28, in the case that the line-of-sight direction 137 of the player 135 turns to e.g. the right when viewed from the front of the player, the line-of-sight direction 141 of the game character 139 changes so as to be substantially parallel to the line-of-sight direction 137 of the player 135, to follow the player's motion. Next, as shown in the middle part of FIG. 28, in the case that the line-of-sight direction 137 of the player 135 turns toward the game character 139, the line-of-sight direction 141 of the game character 139 does not change during the time difference set by the time difference setting processing part 143. Then, after the elapse of the time difference, as shown in the lower part of FIG. 28, the line-of-sight direction 141 of the game character 139 turns toward the player 135, so that the two gaze at each other.

According to this modification example, in the case that e.g. the player looks toward the game character while changing the line-of-sight direction of the game character so as to follow the player's line-of-sight direction, the game character looks toward the player after the elapse of the predetermined time difference. This enables the player to see and check, for a while, the appearance of the game character looking at the same thing as himself together, making it possible to further enhance the player's feeling of sharing the experience in real world with the game character.
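A minimal sketch of the time difference described in this modification example is given below. The class name `TimeDifferenceGaze`, the returned mode strings, and the default delay of 1.5 sec are assumptions for illustration only; the text states merely that the time difference may be approx. 1 sec to several sec.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class TimeDifferenceGaze:
    delay_sec: float = 1.5  # assumed value within "approx. 1 sec to several sec"
    _look_started_at: Optional[float] = None

    def update(self, now: float, player_looks_at_character: bool) -> str:
        if not player_looks_at_character:
            # Player looks elsewhere: the character follows the player's gaze.
            self._look_started_at = None
            return "follow_player"
        if self._look_started_at is None:
            # Player has just turned toward the character: start the timer.
            self._look_started_at = now
        if now - self._look_started_at < self.delay_sec:
            # During the time difference the character's gaze does not change.
            return "hold"
        # After the time difference elapses, the character looks at the player.
        return "look_at_player"
```

Calling `update` once per frame with the current time yields the three phases shown in the upper, middle, and lower parts of FIG. 28 in order.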

In addition to the above technique, the line-of-sight direction setting processing part 127 may set the line-of-sight direction of the game character based on either the player's eyes' orientation or head's orientation detected by the line-of-sight direction detection part 15 of the head mounted display 7, e.g. based on the head's orientation.

FIG.29shows examples of changes of the line-of-sight direction of the game character and the player's line-of-sight direction in this case. As shown in the upper part ofFIG.29, in the case that a head's orientation137aof the player135turns to e.g. the right when viewed from the front of the player, the line-of-sight direction141of the game character139changes so as to be substantially parallel to the head's orientation137aof the player135, to follow the player's motion. Next, as shown in the lower part ofFIG.29, in the case that the player135turns only his eyes' orientation137btoward the game character139without changing the head's orientation137a, the line-of-sight direction141of the game character139does not change. This enables the player to visually recognize the appearance of the game character139looking at the same thing as himself together, by changing only his eyes' orientation.

According to this modification example, the line-of-sight direction of the game character can be changed so as to follow e.g. only the head's orientation of the player. In this manner, by separately detecting the eyes' orientation and head's orientation of the player to control the line-of-sight direction of the game character, the player can perform a so-called “flickering” action of changing only the eyes' orientation to see and check the appearance of the game character while e.g. allowing the line-of-sight direction of the game character to follow the head's orientation of the player, making it possible to further enhance the player's feeling of sharing the experience in real world with the game character.
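The separate handling of the head's orientation and the eyes' orientation may be sketched as follows. The names `PlayerGaze` and `character_yaw`, the use of yaw angles in degrees, and the convention that the eyes' orientation is expressed relative to the head are all assumptions made for this sketch.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PlayerGaze:
    head_yaw_deg: float  # head's orientation in real space
    eye_yaw_deg: float   # eyes' orientation relative to the head (assumed convention)


def character_yaw(gaze: PlayerGaze, follow: str = "head") -> float:
    if follow == "head":
        # The character follows only the head's orientation, so a movement of
        # the eyes alone leaves the character's line of sight unchanged.
        return gaze.head_yaw_deg
    if follow == "eyes":
        # Alternative: follow the combined direction in which the eyes point.
        return gaze.head_yaw_deg + gaze.eye_yaw_deg
    raise ValueError(f"unknown follow mode: {follow!r}")
```

With `follow="head"`, turning only the eyes toward the game character, as in the lower part of FIG. 29, returns the same yaw as before the eye movement.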

6. Third Embodiment

In a third embodiment, description will be given of the case that wind conditions are detected in real space around the player to change the form of deformation of flexible objects (e.g. hair, clothes, etc.) accompanying the game character in accordance with the detected wind conditions. The process contents of this embodiment are executed in the communication process at step S50 described above. For example, they may be executed when switching to the hand fan mode.

6-1. Functional Configuration of Information Processing Device

Referring first to FIG. 30, description will be given of examples of a configuration of the head mounted display 7 and a functional configuration of the information processing device 3, according to the third embodiment. In addition to the configuration shown in FIG. 30, the head mounted display 7 has the same configuration as that in FIG. 2 described above, which is omitted from FIG. 30.

As shown inFIG.30, the head mounted display7includes a wind detection part145. The wind detection part145detects wind conditions in real space around the player. The wind detection part145detects, as the “wind conditions”, at least one of air flow, wind velocity, or wind direction. The wind detection part145may be disposed on any one of a front surface, side surfaces, and a rear surface, or may be disposed on a plurality of locations. The wind detection part145may be disposed integrally with the head mounted display7, or may be disposed as a discrete element so as to be capable of transmission/reception of signals.

The wind detection part145includes an air flow detection part147, a wind velocity detection part149, and a wind direction detection part151. The air flow detection part147is e.g. an air flow meter and detects an air flow around the player. The wind velocity detection part149is e.g. an anemometer and detects a wind velocity around the player. The wind direction detection part151is e.g. an anemoscope and detects a wind direction around the player. The air flow detection part147, the wind velocity detection part149, and the wind direction detection part151need not be separate elements. For example, like a wind direction anemometer, two or more detection parts may be integrally assembled.

The information processing device 3 includes a third character display processing part 153, an object deformation processing part 155, a third character action setting processing part 157, and the ancillary information acquisition processing part 131.

The third character display processing part153(an example of the character display processing part) causes the display part13to display a virtual game character superimposed on an image in real space.

The object deformation processing part155changes the form of deformation of a flexible object accompanying the game character, based on the wind conditions (at least one of the air flow, the wind velocity, or the wind direction) detected by the wind detection part145of the head mounted display7. The “flexible object” is e.g. hair of the game character, or clothes, swimwear, etc. worn by the game character. In the case that the game character is a female character, it may include a part of body, such as bust. The “form of deformation” is e.g. the extent of deformation, the direction of deformation, sway, vibration, turn, etc. In the case that the flexible object is e.g. clothes, swimwear, etc., it may include tear, damage, coming apart, etc.
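As a rough sketch of how the extent and direction of deformation might be derived from the detected wind velocity and wind direction, consider the following. The function name `sway_offset`, the linear relation between wind velocity and displacement, and the parameters `flexibility` and `max_offset` are assumptions; the embodiment does not prescribe any particular deformation model.

```python
import math
from typing import Tuple


def sway_offset(
    wind_speed_mps: float,
    wind_direction_deg: float,
    flexibility: float = 0.1,  # assumed displacement per unit wind velocity
    max_offset: float = 1.0,   # assumed saturation of the deformation
) -> Tuple[float, float]:
    if wind_speed_mps < 0.0:
        raise ValueError("wind speed must be non-negative")
    # Extent of deformation grows with wind velocity up to a saturation limit.
    magnitude = min(wind_speed_mps * flexibility, max_offset)
    # Direction of deformation is taken along the direction the wind blows toward.
    angle = math.radians(wind_direction_deg)
    return (magnitude * math.cos(angle), magnitude * math.sin(angle))
```

The returned offset could be applied, for instance, to the tip of a strand of hair or the hem of clothing, with a larger `flexibility` for lighter objects.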

The third character action setting processing part157(an example of the character action setting processing part) sets an action of a game character so as to change based on the wind conditions (at least one of the air flow, the wind velocity, or the wind direction) detected by the wind detection part145of the head mounted display7. The third character display processing part153causes the display part13to display an image of the game character so that the game character takes the action set by the third character action setting processing part157.

The ancillary information acquisition processing part 131 is the same as that of the second embodiment, and therefore will not be described again. The third character action setting processing part 157 sets an action of the game character, based on information acquired by the ancillary information acquisition processing part 131. The third character display processing part 153 causes the display part 13 to display an image so that the game character takes the action set by the third character action setting processing part 157.

FIGS. 31 and 32 show examples of the game screen in this embodiment. The example shown in FIG. 31 is the case where an event creating a wind around the player occurs in the real world, with the strength of the wind being relatively weak. Although the event creating a wind is not particularly limited, it is e.g. fanning with the player's hand or a hand fan, exhaling through the mouth, receiving a wind from an electric fan, blowing air from a hairdryer, taking in outside air, etc. In the case of the fanning motion, exhaling, blowing air from a hairdryer, etc., the wind detection part 145 may be disposed as a discrete element separately from the head mounted display 7 so as to be able to detect those winds. As shown in FIG. 31, hair 161 of a game character 159 is fluttering along the direction of the wind. At this time, the game character 159 may be allowed to say a line such as “It's cool and comfortable!” in accordance with the strength of the wind.

The example shown inFIG.32is the case where an event creating a wind around the player occurs in real world, with the strength of the wind being relatively strong. As shown inFIG.32, hair161of the game character159is greatly fluttering and a skirt163is turning up. At this time, the game character159may take an action such as holding down the skirt163with a shriek, being ashamed, etc.
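The difference between the weak-wind case of FIG. 31 and the strong-wind case of FIG. 32 may be sketched as a simple selection based on wind velocity. The function name `wind_reaction`, the dictionary keys, and the thresholds `weak_min` and `strong_min` are hypothetical; the embodiment does not state numerical boundaries between weak and strong wind.

```python
from typing import Dict


def wind_reaction(
    wind_speed_mps: float,
    weak_min: float = 0.5,    # assumed lower bound of a noticeable wind
    strong_min: float = 5.0,  # assumed lower bound of a "relatively strong" wind
) -> Dict[str, str]:
    if wind_speed_mps >= strong_min:
        # Strong wind: hair flutters greatly, the skirt turns up, and the
        # character reacts by holding the skirt down.
        return {"hair": "flutter_greatly", "skirt": "turn_up",
                "action": "hold_down_skirt"}
    if wind_speed_mps >= weak_min:
        # Weak wind: hair flutters along the wind and the character comments.
        return {"hair": "flutter", "skirt": "still",
                "action": "say: It's cool and comfortable!"}
    # No appreciable wind: no deformation and no wind-related action.
    return {"hair": "still", "skirt": "still", "action": "none"}
```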