U.S. Pat. No. 11,962,820

IMAGE GENERATION APPARATUS, IMAGE GENERATION METHOD, AND PROGRAM INDICATING GAME PLAY STATUS

Assignee: Sony Interactive Entertainment Inc.

Issue Date: February 9, 2021


Abstract

Provided are an image generation apparatus, an image generation method, and a program for generating an image indicative of play status of a game in which two-dimensional objects representative of information to be offered to a viewing audience at the destination of delivery are clearly expressed. An image acquisition section acquires a game image indicative of the content to be displayed on a display device, the game image representing at least the play status of a game in which a virtual three-dimensional object placed in a virtual three-dimensional space is viewed from a point of view in the virtual three-dimensional space. The image acquisition section also acquires a delivery target two-dimensional image indicating a two-dimensional object targeted for delivery, the delivery target two-dimensional image having the same resolution as that of an image to be delivered. A resizing section resizes the game image to the resolution of the delivery target two-dimensional image so as to generate a resized game image. An image generation section generates an image that combines the resized game image with the delivery target two-dimensional image.

Description

DESCRIPTION OF EMBODIMENT

One preferred embodiment of the present invention is described below with reference to the accompanying drawings.

FIG. 1 is a view depicting an example of a computer network 11 related to one embodiment of the present invention. FIG. 2 is a view depicting a typical configuration of an entertainment system 10 related to the embodiment of the present invention. FIG. 3A is a view depicting a typical configuration of an HMD 12 related to the embodiment of the present invention. FIG. 3B is a view depicting a typical configuration of an entertainment apparatus 14 related to the embodiment of the present invention.

As depicted in FIG. 1, in the present embodiment, multiple entertainment systems 10 (entertainment systems 10a and 10b in the example of FIG. 1) are connected to the computer network 11 such as the Internet. Thus, the entertainment systems 10a and 10b can communicate with each other via the computer network 11.

As depicted in FIG. 2, each entertainment system 10 related to the present embodiment includes the HMD 12, the entertainment apparatus 14, a repeating apparatus 16, a display device 18, a camera/microphone unit 20, and a controller 22.

The HMD 12 related to the present embodiment includes a processor 30, a storage section 32, a communication section 34, an input/output section 36, a display section 38, a sensor section 40, and an audio output section 42, as depicted in FIG. 3A, for example.

The processor 30 is a program-controlled device such as a microprocessor that operates in accordance with programs installed in the HMD 12, for example.

The storage section 32 is a storage element such as a ROM (Read Only Memory) or a RAM (Random Access Memory), for example. The storage section 32 stores the programs, among others, that are executed by the processor 30.

The communication section 34 is a communication interface such as a wireless LAN (Local Area Network) module, for example.

The input/output section 36 is an input/output port such as an HDMI (High-Definition Multimedia Interface; registered trademark) port, a USB (Universal Serial Bus) port, and/or an AUX (Auxiliary) port.

The display section 38 is disposed on the front side of the HMD 12. For example, the display section 38 is a display device such as a liquid crystal display or an organic EL (Electroluminescence) display that displays videos generated by the entertainment apparatus 14. The display section 38 is housed in the enclosure of the HMD 12. The display section 38 may, for example, receive a video signal output by the entertainment apparatus 14 and repeated by the repeating apparatus 16, and output the video represented by the received video signal. The display section 38 related to the present embodiment is arranged to display a three-dimensional image by presenting a right-eye image and a left-eye image, for example. Alternatively, the display section 38 may display only two-dimensional images, with no arrangement for presenting three-dimensional images.

The sensor section 40 includes, for example, sensors such as an acceleration sensor and a motion sensor. The sensor section 40 outputs motion data indicative of measurements such as the rotation amount and movement amount of the HMD 12 to the processor 30 at a predetermined frame rate.

The audio output section 42, typically constituted by headphones or speakers, outputs sounds represented by audio data generated by the entertainment apparatus 14. The audio output section 42 receives an audio signal output by the entertainment apparatus 14 and repeated by the repeating apparatus 16, for example, and outputs sounds represented by the received audio signal.

The entertainment apparatus 14 related to the present embodiment is a computer such as a game console, a DVD (Digital Versatile Disc) player, or a Blu-ray (registered trademark) player, for example. The entertainment apparatus 14 related to the present embodiment generates videos and sounds by executing game programs or by reproducing content, the game programs and the content being stored internally or recorded on optical disks, for example. Further, the entertainment apparatus 14 related to the present embodiment outputs a video signal representing the generated video and an audio signal representing the generated sound to the HMD 12 and to the display device 18 via the repeating apparatus 16.

The entertainment apparatus 14 related to the present embodiment includes a processor 50, a storage section 52, a communication section 54, and an input/output section 56, as depicted in FIG. 3B, for example.

The processor 50 is a program-controlled device such as a CPU (Central Processing Unit) that operates in accordance with programs installed in the entertainment apparatus 14, for example. The processor 50 related to the present embodiment also includes a GPU (Graphics Processing Unit) that renders images in frame buffers based on graphics commands and data supplied from the CPU.

The storage section 52 is a storage element such as a ROM or a RAM, or a hard disk drive, for example. The storage section 52 stores the programs, among others, that are executed by the processor 50. Further, the storage section 52 related to the present embodiment has areas allocated as frame buffers in which images are rendered by the GPU.

The communication section 54 is a communication interface such as a wireless LAN module, for example.

The input/output section 56 is an input/output port such as an HDMI (registered trademark) port or a USB port.

The repeating apparatus 16 related to the present embodiment is a computer that repeats video and audio signals output from the entertainment apparatus 14 and outputs the repeated video and audio signals to the HMD 12 and to the display device 18.

The display device 18 related to the present embodiment is, for example, a display section such as a liquid crystal display that displays videos represented by the video signal output from the entertainment apparatus 14.

The camera/microphone unit 20 related to the present embodiment includes a camera 20a that captures an image of a subject and outputs the captured image to the entertainment apparatus 14, and a microphone 20b that acquires ambient sounds, converts the acquired sounds into audio data, and outputs the audio data to the entertainment apparatus 14. Further, the camera 20a related to the present embodiment constitutes a stereo camera.

The HMD 12 and the repeating apparatus 16 are capable of exchanging data with each other in a wired or wireless manner, for example. The entertainment apparatus 14 and the repeating apparatus 16 are interconnected by an HDMI cable or by a USB cable, for example, and are capable of exchanging data with each other. The repeating apparatus 16 and the display device 18 are interconnected by an HDMI cable, for example. The entertainment apparatus 14 and the camera/microphone unit 20 are interconnected by an AUX cable, for example.

The controller 22 related to the present embodiment is an operation input apparatus used for performing operation input to the entertainment apparatus 14. Using the controller 22, the user may carry out diverse kinds of operation input by pressing arrow keys or buttons or by tilting operating sticks on the controller 22. In the present embodiment, the controller 22 outputs the input data corresponding to the operation input to the entertainment apparatus 14. Also, the controller 22 related to the present embodiment includes a USB port. When connected with the entertainment apparatus 14 by a USB cable, the controller 22 can output the input data to the entertainment apparatus 14 in a wired manner. The controller 22 related to the present embodiment further includes a wireless communication module or a similar arrangement capable of outputting the input data wirelessly to the entertainment apparatus 14.

In the present embodiment, for example, a video representing the play status of a game is generated by a game program executed by the entertainment apparatus 14a included in the entertainment system 10a. This video is displayed on the display device 18a viewed by the player of the game. The video is also resized to a resolution suitable for delivery and then overlaid with two-dimensional objects, such as letters, pictorial figures, or symbols, targeted for delivery. The video overlaid with these two-dimensional objects is delivered to the entertainment system 10b via the communication section 54a of the entertainment apparatus 14a over the computer network 11, and is displayed on the display device 18b included in the entertainment system 10b. In the description that follows, the video displayed on the display device 18a will be referred to as the player video, and the video displayed on the display device 18b as the viewing audience video.

FIG. 4 is a view depicting a typical player image 60, which is a frame image included in the player video. FIG. 5 is a view depicting a typical viewing audience image 62, which is a frame image included in the viewing audience video. In the present embodiment, as described above, the player image 60 depicted in FIG. 4 and the viewing audience image 62 depicted in FIG. 5 have different resolutions.

The player image 60 in FIG. 4 includes character information 64a and character information 64b representing game characters. The player image 60 also includes status information 66a and status information 66b indicative of the status of the game characters, such as their names, ranks, and lives. For example, the status information 66a represents the status of the game character indicated by the character information 64a, and the status information 66b represents the status of the game character indicated by the character information 64b.

On the other hand, the viewing audience image 62 depicted in FIG. 5 includes command history information 68a and command history information 68b, in addition to the character information 64a, the character information 64b, the status information 66a, and the status information 66b. For example, the command history information 68a indicates a history of commands input by the player operating the game character represented by the character information 64a, and the command history information 68b indicates a history of commands input by the player operating the game character represented by the character information 64b.

In the present embodiment, as described above, part of the content of the player image 60 is common to the viewing audience image 62, and part of it differs from the viewing audience image 62.

How the player image 60 and the viewing audience image 62 are generated is explained below in more detail.

FIG. 6 is an explanatory diagram explaining an example of generating the player image 60 and the viewing audience image 62 in the present embodiment. As depicted in FIG. 6, four frame buffers 70 (70a, 70b, 70c, and 70d) are allocated in the storage section 52a of the entertainment apparatus 14a in the present embodiment. Each of the four frame buffers 70 may be implemented with a technique such as double buffering or triple buffering, for example.
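Double or triple buffering lets a renderer draw one frame while a compositor reads the previous one. The Python sketch below is a generic triple-buffer model, not the apparatus's implementation; the class name `TripleBuffer`, its methods, and the representation of a frame as a plain 2-D list are all hypothetical.

```python
class TripleBuffer:
    """Hypothetical triple-buffered frame buffer.

    The renderer draws into the back buffer and publishes it with
    finish_frame(); the compositor calls latest_frame() at its own rate.
    With three buffers the renderer never waits for the compositor.
    """

    def __init__(self, width, height):
        self._buffers = [[[0] * width for _ in range(height)] for _ in range(3)]
        self._front = 0     # buffer currently read by the compositor
        self._ready = None  # newest completed frame not yet picked up
        self._back = 1      # buffer the renderer is drawing into

    def back_buffer(self):
        return self._buffers[self._back]

    def finish_frame(self):
        # Publish the finished frame. If an older frame was still unread, it is
        # dropped and its buffer becomes the next back buffer.
        self._ready = self._back
        self._back = next(i for i in range(3) if i not in (self._front, self._ready))

    def latest_frame(self):
        # Switch to the newest completed frame, if any, and return it.
        if self._ready is not None:
            self._front, self._ready = self._ready, None
        return self._buffers[self._front]


# Usage: each of the frame buffers 70a to 70d could be one such instance.
scene = TripleBuffer(4, 3)
scene.back_buffer()[0][0] = 1   # "render" a frame
scene.finish_frame()
print(scene.latest_frame()[0][0])
```

Dropping an unread frame (rather than queueing it) keeps the compositor on the newest image, which suits a display running at a fixed rate.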

In the frame buffer 70a, a frame image indicative of system-related information is rendered at a predetermined frame rate, the image being generated by execution of a system program, such as the operating system, that is different from the game programs. Here, a frame image indicating two-dimensional objects such as letters, pictorial figures, or symbols may be rendered in the frame buffer 70a.

In the frame buffer 70b, a frame image indicating the play status of a game is rendered at a predetermined frame rate, for example. For example, the frame buffer 70b may hold a frame image indicative of the play status of a game in which virtual three-dimensional objects representing the game characters in a virtual three-dimensional space are viewed from a point of view in that virtual three-dimensional space. In the examples of FIGS. 4 and 5, the frame image indicating the character information 64a and the character information 64b corresponds to the image rendered in the frame buffer 70b.

In the frame buffer 70c, a frame image indicative of information regarding the user interface of the game is rendered at a predetermined frame rate, for example. For example, a frame image indicating user-interface information, such as explanations of input operations for the game and status information regarding the game characters, may be rendered in the frame buffer 70c. Here, a frame image indicating two-dimensional objects such as letters, pictorial figures, or symbols may be rendered in the frame buffer 70c. In the examples of FIGS. 4 and 5, the frame image indicating the status information 66a and the status information 66b corresponds to the image rendered in the frame buffer 70c.

In the frame buffer 70d, an image representing information to be offered to the audience viewing the play status of the game is rendered, for example. This information is not offered to the game players. Here, a frame image indicating two-dimensional objects such as letters, pictorial figures, or symbols may be rendered in the frame buffer 70d. In the example of FIG. 5, the frame image indicating the command history information 68a and the command history information 68b corresponds to the image rendered in the frame buffer 70d.

In the example of FIG. 6, as described above, the image generated by execution of the system program is rendered in the frame buffer 70a, and the images generated by execution of the game program are rendered in the frame buffers 70b, 70c, and 70d.

Here, in the present embodiment, the frame images rendered in the frame buffers 70a to 70d may have the same resolution or different resolutions. In the ensuing description, it is assumed, for example, that the frame images rendered in the frame buffers 70a and 70c have approximately the same resolution, which is the highest, that the frame image rendered in the frame buffer 70d has the next-highest resolution, and that the frame image rendered in the frame buffer 70b has the lowest resolution.

For example, generating a frame image indicating the play status of a game in which virtual three-dimensional objects placed in a virtual three-dimensional space are viewed from a point of view in that space requires high-load processes such as raycasting to be performed at a predetermined frame rate. The resolution of this frame image is thus limited by the processing capacity of the entertainment apparatus 14 that executes the game program. Because the play status of the game is often expressed by graphics, a slightly blurred rendering of the game play status is highly likely to be acceptable. With this taken into account, it is assumed in the ensuing description that the frame image rendered in the frame buffer 70b has the lowest resolution, as described above.

The resolution of the frame image rendered in the frame buffer 70b may be arranged to vary with the play status of the game. For example, when an image that imposes a high rendering load is generated, the resolution of that image may be lowered.
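One way to let the resolution of the frame buffer 70b follow the rendering load is a simple feedback rule on measured frame time. The Python sketch below is a hypothetical controller; the 16.7 ms budget (60 frames per second), the step size, and the scale limits are assumptions rather than values from the embodiment.

```python
def next_render_scale(scale, frame_ms, budget_ms=16.7, step=0.1,
                      min_scale=0.5, max_scale=1.0):
    """Return the render scale for the next game-scene frame (hypothetical rule).

    scale    -- current fraction of the base resolution used for frame buffer 70b
    frame_ms -- time the last frame took to render, in milliseconds
    """
    if frame_ms > budget_ms:
        scale -= step            # heavy frame: render fewer pixels next time
    elif frame_ms < 0.8 * budget_ms:
        scale += step            # clear headroom: restore detail
    # Clamp to the allowed range; rounding stops floating-point drift.
    return max(min_scale, min(max_scale, round(scale, 2)))


def scene_resolution(base_w, base_h, scale):
    """Pixel dimensions of the game-scene frame at the given scale."""
    return int(base_w * scale), int(base_h * scale)


# Usage: two slow frames lower the scale; two fast frames raise it again.
scale = 1.0
for ms in (22.0, 21.0, 12.0, 12.0):
    scale = next_render_scale(scale, ms)
    print(scale, scene_resolution(1920, 1080, scale))
```

The dead band between 80% and 100% of the budget keeps the scale from oscillating on every frame.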

A low resolution may blur two-dimensional objects such as letters, pictorial figures, or symbols placed in the frame image, which may make it difficult for the user to understand the content of that image. With this taken into consideration, the resolution of the frame image rendered in the frame buffer 70c or 70d is set higher than the resolution of the frame image rendered in the frame buffer 70b.

Also, in the ensuing description, it is assumed that the resolution of the delivered video is lower than the resolution of the video displayed on the display device 18a. Thus, the resolution of the frame image rendered in the frame buffer 70d is set lower than the resolution of the frame image rendered in the frame buffer 70c, as described above.

In the example of FIG. 6, an image 78 is generated by a compositor 76 combining an image 72 stored in the frame buffer 70b with an image 74 stored in the frame buffer 70c. Here, the compositor 76 resizes the image 72 to the resolution of the image 74, for example; the image 72 is enlarged in this case. The compositor 76 thus generates the image 78 that combines the image 74 with the resized image 72. The image 78 indicates the content of both the image 72 and the image 74.
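The resize-then-combine step of the compositor 76 can be sketched in Python as follows. The sketch assumes images are lists of rows of premultiplied RGBA tuples in the range 0 to 1, uses nearest-neighbour scaling, and draws the user-interface image on top with the Porter-Duff "over" operator; the embodiment specifies neither the scaling filter nor the blending rule, and the function names are hypothetical.

```python
def resize_nearest(img, width, height):
    """Nearest-neighbour resize of a 2-D list of pixels to width x height."""
    src_h, src_w = len(img), len(img[0])
    return [[img[y * src_h // height][x * src_w // width] for x in range(width)]
            for y in range(height)]


def over(src, dst):
    """Porter-Duff 'over' for premultiplied RGBA: src is drawn on top of dst."""
    k = 1.0 - src[3]
    return tuple(s + d * k for s, d in zip(src, dst))


def compositor_76(image_72, image_74):
    """Enlarge game-scene image 72 to the resolution of UI image 74, then draw
    image 74 over it, giving image 78 at image 74's resolution."""
    height, width = len(image_74), len(image_74[0])
    scaled_72 = resize_nearest(image_72, width, height)
    return [[over(image_74[y][x], scaled_72[y][x]) for x in range(width)]
            for y in range(height)]


CLEAR = (0.0, 0.0, 0.0, 0.0)
BLUE = (0.0, 0.0, 1.0, 1.0)
WHITE = (1.0, 1.0, 1.0, 1.0)

# Usage: a 2x2 scene enlarged under a 4x4 UI layer with one opaque white pixel.
image_72 = [[BLUE, BLUE], [BLUE, BLUE]]
image_74 = [[CLEAR] * 4 for _ in range(4)]
image_74[0][3] = WHITE
image_78 = compositor_76(image_72, image_74)
```

Because only image 72 is resampled, the letters and symbols in image 74 keep their original pixels.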

Further, an image 84 is generated by a compositor 82 combining the image 78 with an image 80 stored in the frame buffer 70d. Here, the compositor 82 resizes the image 78 to the resolution of the image 80, for example; the image 78 is reduced in size in this case. The compositor 82 thus generates the image 84 that combines the image 80 with the resized image 78. The image 84 indicates the content of both the image 78 and the image 80.
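When the compositor 82 reduces image 78, averaging blocks of pixels (a box filter) is one common choice, since every source pixel contributes instead of some being skipped. The Python sketch below assumes an integer reduction factor that is the same horizontally and vertically, and premultiplied RGBA tuples; the function names and the filter choice are assumptions, not part of the described embodiment. Image 80 itself is copied pixel for pixel and never resampled.

```python
def downscale_box(img, factor):
    """Average each factor x factor block of pixels.

    Assumes both image dimensions are divisible by `factor`.
    """
    h, w = len(img), len(img[0])
    out = []
    for y in range(0, h, factor):
        row = []
        for x in range(0, w, factor):
            block = [img[y + dy][x + dx] for dy in range(factor) for dx in range(factor)]
            # zip(*block) groups the R, G, B and A channels across the block.
            row.append(tuple(sum(ch) / len(block) for ch in zip(*block)))
        out.append(row)
    return out


def compositor_82(image_78, image_80):
    """Reduce image 78 to the resolution of image 80 and draw image 80 on top."""
    factor = len(image_78) // len(image_80)
    reduced_78 = downscale_box(image_78, factor)
    result = []
    for top_row, base_row in zip(image_80, reduced_78):
        # Premultiplied 'over': top + base * (1 - top alpha), channel by channel.
        result.append([tuple(t + b * (1.0 - top[3]) for t, b in zip(top, base))
                       for top, base in zip(top_row, base_row)])
    return result


RED, BLACK, CLEAR = (1.0, 0.0, 0.0, 1.0), (0.0, 0.0, 0.0, 1.0), (0.0, 0.0, 0.0, 0.0)

# Usage: a 4x4 image 78 reduced to sit under a 2x2 image 80.
image_78 = [[RED, BLACK, RED, RED] for _ in range(2)] + [[RED] * 4 for _ in range(2)]
image_80 = [[CLEAR, RED], [CLEAR, CLEAR]]
image_84 = compositor_82(image_78, image_80)
```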

Further, an image 90 is generated by a compositor 88 combining the image 78 with an image 86 stored in the frame buffer 70a. Here, the compositor 88 generates the image 90 that combines the image 78 with the image 86, for example. The image 90 indicates the content of both the image 78 and the image 86. In this case, it is assumed, for example, that the resolution of the image 90 is the same as that of the image 74 rendered in the frame buffer 70c.

Further, an image 94 is generated by a compositor 92 combining the image 84 with the image 86 stored in the frame buffer 70a. Here, the compositor 92 resizes the image 86 to the resolution of the image 84, for example; the image 86 is reduced in size in this case. The compositor 92 thus generates the image 94 that combines the image 84 with the resized image 86. The image 94 indicates the content of both the image 84 and the image 86. It is assumed here that the resolution of the image 94 is the same as that of the image 80 rendered in the frame buffer 70d.

The image 90 is then displayed as the player image 60 on the display device 18a. The image 94 is transmitted as the viewing audience image 62 to the entertainment system 10b via the communication section 54a, and is displayed on the display device 18b viewed by the audience at the destination.
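The whole FIG. 6 arrangement can be summarized as four compositing steps. The Python sketch below wires them together under stated assumptions: nearest-neighbour scaling, premultiplied RGBA pixels, and the system layer (image 86) drawn on top of everything else. The helper names are hypothetical, and the sample layer sizes only respect the resolution ordering assumed in the text.

```python
def size(img):
    """(width, height) of a 2-D list of pixels."""
    return len(img[0]), len(img)


def resize(img, width, height):
    """Nearest-neighbour resize (the filter is an assumption of this sketch)."""
    src_w, src_h = size(img)
    return [[img[y * src_h // height][x * src_w // width] for x in range(width)]
            for y in range(height)]


def compose(base, overlay, out_size):
    """Bring both layers to out_size, then draw overlay over base.

    A layer already at out_size is used as-is, so it is never resampled.
    """
    w, h = out_size
    b = base if size(base) == out_size else resize(base, w, h)
    o = overlay if size(overlay) == out_size else resize(overlay, w, h)
    return [[tuple(op + bp * (1.0 - o[y][x][3]) for op, bp in zip(o[y][x], b[y][x]))
             for x in range(w)] for y in range(h)]


def fig6_pipeline(img72, img74, img80, img86):
    """Hypothetical wiring of the compositors in FIG. 6."""
    img78 = compose(img72, img74, size(img74))   # compositor 76: enlarge game scene
    img84 = compose(img78, img80, size(img80))   # compositor 82: reduce 78, keep 80 as-is
    img90 = compose(img78, img86, size(img74))   # compositor 88: player image 60
    img94 = compose(img84, img86, size(img80))   # compositor 92: viewing audience image 62
    return img90, img94


CLEAR, BLUE, WHITE = (0.0, 0.0, 0.0, 0.0), (0.0, 0.0, 1.0, 1.0), (1.0, 1.0, 1.0, 1.0)
img72 = [[BLUE] * 2 for _ in range(2)]    # lowest resolution: game scene (70b)
img74 = [[CLEAR] * 8 for _ in range(8)]   # highest: game UI (70c)
img86 = [[CLEAR] * 8 for _ in range(8)]   # highest: system layer (70a)
img80 = [[CLEAR] * 4 for _ in range(4)]   # delivery resolution: audience-only (70d)
img80[0][0] = WHITE                       # e.g. one pixel of command-history text
player, audience = fig6_pipeline(img72, img74, img80, img86)
```

Image 80 enters the audience image at its native resolution, and no path leads from it to the player image.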

Resizing an image overlaid with two-dimensional objects can make those objects unclear. For example, reducing an image on which two-dimensional objects are superposed can smudge them, and enlarging such an image can blur them. In the example of FIG. 6, the content of the image 80 rendered in the frame buffer 70d is neither enlarged nor reduced when placed in the image 94, as described above. Thus, the example of FIG. 6 can generate an image indicative of the play status of a game in which the two-dimensional objects representing the information to be offered to the viewing audience at the destination of delivery are clearly expressed.
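The loss of sharpness can be seen in a one-dimensional cross-section of text. In the Python sketch below, a two-pixel stroke that is not aligned with the 2:1 sampling grid turns into half-intensity pixels when reduced by pair averaging, and a hard 0-to-1 edge becomes a ramp of intermediate values when enlarged by linear interpolation. Both filters are illustrative choices; the embodiment does not name a resampling method.

```python
def downscale_2x(row):
    """Halve a row of intensities by averaging neighbouring pairs."""
    return [(row[i] + row[i + 1]) / 2 for i in range(0, len(row) - 1, 2)]


def upscale_linear(row, n):
    """Resample a row to n samples by linear interpolation (end points aligned).

    Requires len(row) >= 2 and n >= 2.
    """
    m = len(row)
    out = []
    for i in range(n):
        t = i * (m - 1) / (n - 1)      # position in source coordinates
        j = min(int(t), m - 2)         # left neighbour index
        f = t - j                      # weight of the right neighbour
        out.append(row[j] * (1 - f) + row[j + 1] * f)
    return out


def smudged(row):
    """Count samples that are neither fully off (0) nor fully on (1)."""
    return sum(1 for v in row if 0.0 < v < 1.0)


stroke = [0, 1, 1, 0, 0, 0]   # crisp two-pixel stroke, misaligned with the 2:1 grid
edge = [0.0, 1.0]             # hard edge between two pixels
print(downscale_2x(stroke), smudged(downscale_2x(stroke)))
print(upscale_linear(edge, 5), smudged(upscale_linear(edge, 5)))
```

The original stroke has no intermediate values, while both resampled versions do; this is the smudging and blurring the text describes.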

For example, the command history information 68a and the command history information 68b presumably serve as a very useful reference for audience members planning to play the game later, so displaying such command histories in the video viewed by the audience benefits the viewing audience. On the other hand, the command histories are of little use to the players who actually input the commands, and the players could in fact be annoyed by command histories shown in the player video.

In the example of FIG. 6, as described above, the command history information 68a and the command history information 68b may be controlled to appear in the viewing audience image 62 but not in the player image 60. Thus, in the example of FIG. 6, the players can play the game without being distracted by the command history information 68a or 68b while the viewing audience is offered the command history information 68a and 68b.
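The rule that the command history reaches only the audience amounts to a per-layer visibility tag. The Python sketch below records such tags for the FIG. 6 layers and derives, for each output, the layers it draws. The tag names and data layout are hypothetical; in FIG. 6 the rule is realized by how the compositors are wired rather than by explicit tags.

```python
PLAYER, VIEWERS = "player", "viewers"

# Hypothetical visibility tags for the FIG. 6 layers, listed bottom to top.
FIG6_LAYERS = [
    ("70b game scene (character information 64)", frozenset({PLAYER, VIEWERS})),
    ("70c game UI (status information 66)", frozenset({PLAYER, VIEWERS})),
    ("70d command history 68", frozenset({VIEWERS})),
    ("70a system information", frozenset({PLAYER, VIEWERS})),
]


def layers_for(target, layers):
    """Layer names, in draw order, that may appear in the image for `target`."""
    return [name for name, visible_to in layers if target in visible_to]


player_layers = layers_for(PLAYER, FIG6_LAYERS)
viewer_layers = layers_for(VIEWERS, FIG6_LAYERS)
```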

FIG. 7 is an explanatory diagram explaining another example of generating the player image 60 and the viewing audience image 62 in the present embodiment.

In the example of FIG. 7, the frame images rendered in the frame buffers 70a to 70d are similar to those rendered in the frame buffers 70a to 70d in the example of FIG. 6 and thus will not be discussed further.

In the example of FIG. 7, for example, an image 102 is generated by a compositor 100 combining an image 96 stored in the frame buffer 70b with an image 98 stored in the frame buffer 70c. Here, the compositor 100 resizes the image 96 to the resolution of the image 98, for example; the image 96 is enlarged in this case. The compositor 100 thus generates the image 102 that combines the image 98 with the resized image 96. The image 102 indicates the content of both the image 96 and the image 98.

Further, an image 108 is generated by a compositor 106 combining the image 96 stored in the frame buffer 70b with an image 104 stored in the frame buffer 70d. Here, the compositor 106 resizes the image 96 to the resolution of the image 104, for example; the image 96 is enlarged in this case. The compositor 106 thus generates the image 108 that combines the image 104 with the resized image 96. The image 108 indicates the content of both the image 96 and the image 104.

Further, an image 114 is generated by a compositor 112 combining the image 102 with an image 110 stored in the frame buffer 70a. Here, the compositor 112 generates the image 114 that combines the image 102 with the image 110, for example. The image 114 indicates the content of both the image 102 and the image 110. It is assumed here that the image 114 has the same resolution as the image 98 rendered in the frame buffer 70c, for example.

Also, an image 118 is generated by a compositor 116 combining the image 108 with the image 110 stored in the frame buffer 70a. Here, the compositor 116 resizes the image 110 to the resolution of the image 108, for example; the image 110 is reduced in size in this case. The compositor 116 thus generates the image 118 that combines the image 108 with the resized image 110. The image 118 indicates the content of both the image 108 and the image 110. It is assumed here that the image 118 has the same resolution as the image 104 rendered in the frame buffer 70d, for example.

The image 114 is then displayed as the player image 60 on the display device 18a. The image 118 is transmitted as the viewing audience image 62 to the entertainment system 10b via the communication section 54a, and is displayed on the display device 18b viewed by the audience at the destination.

In the example of FIG. 7, as described above, the content of the image 104 rendered in the frame buffer 70d is neither enlarged nor reduced when placed in the image 118. Thus, the example of FIG. 7 also generates an image representing the play status of the game in which the two-dimensional objects denoting the information to be offered to the audience at the destination of delivery are clearly expressed.

Also, in the example of FIG. 7, the content of the frame image stored in the frame buffer 70c appears in the player image 60 but not in the viewing audience image 62. Further, the content of the frame image stored in the frame buffer 70d appears in the viewing audience image 62 but not in the player image 60.

For example, it is undesirable to reveal information related to the players' privacy, such as their names, to the viewing audience. It is thus preferable that such information be prevented from appearing in the viewing audience video.

In the example of FIG. 7, as discussed above, information related to privacy, for example, can be controlled to appear in the player image 60 but not in the viewing audience image 62. Thus, in the example of FIG. 7, information that is not to be revealed to the viewing audience is prevented from being viewed by the audience.
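Because the FIG. 7 routing is fixed by which compositor consumes which buffer, the privacy property can be checked mechanically. The Python sketch below models the wiring as a dependency graph and walks it to find every frame buffer that contributes to an output; the graph encoding and the function names are hypothetical.

```python
# Hypothetical encoding of the FIG. 7 wiring: each composited image maps to
# the inputs its compositor combines.
FIG7_GRAPH = {
    "image 102": ["frame buffer 70b", "frame buffer 70c"],  # compositor 100
    "image 108": ["frame buffer 70b", "frame buffer 70d"],  # compositor 106
    "image 114": ["image 102", "frame buffer 70a"],         # compositor 112 -> player image 60
    "image 118": ["image 108", "frame buffer 70a"],         # compositor 116 -> audience image 62
}


def contributing_buffers(node, graph):
    """Return every frame buffer whose content reaches `node`."""
    if node not in graph:          # a leaf is a frame buffer
        return {node}
    found = set()
    for source in graph[node]:
        found |= contributing_buffers(source, graph)
    return found


def check_not_leaked(graph, output, private_buffers):
    """Raise ValueError if any private buffer contributes to `output`."""
    leaked = contributing_buffers(output, graph) & set(private_buffers)
    if leaked:
        raise ValueError(f"{output} would show private layers: {sorted(leaked)}")


# Usage: status information 66 (e.g. player names) is rendered in frame buffer 70c.
check_not_leaked(FIG7_GRAPH, "image 118", ["frame buffer 70c"])   # passes silently
```

Running the same check against a FIG. 6-style graph, where the status layer feeds image 78 and therefore both outputs, would raise an error.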

Also, in the example of FIG. 7, as in the example of FIG. 6, the command history information 68a and the command history information 68b may be controlled to appear in the viewing audience image 62 but not in the player image 60. Thus, in the example of FIG. 7 as well, the players can play the game without being distracted by the command history information 68a or 68b while the viewing audience is offered the command history information 68a and 68b.

FIG. 8 is an explanatory diagram explaining an example of generating the player image 60, the viewing audience image 62, and an HMD image in the present embodiment. In the example of FIG. 8, an HMD image is generated, besides the player image 60 and the viewing audience image 62, as a frame image included in the video to be displayed on a display section 38a of an HMD 12a included in the entertainment system 10a.

In the example of FIG. 8, the frame image indicating the play status of the game is rendered in the frame buffer 70a at a predetermined frame rate. Here, for example, a three-dimensional image indicating the play status of a game in which virtual three-dimensional objects representing the game characters placed in a virtual three-dimensional space are viewed from a point of view in that virtual three-dimensional space can be rendered as the frame image at a predetermined frame rate.

In the frame buffer 70b, a frame image indicative of system-related information is rendered at a predetermined frame rate, the frame image being generated by execution of a system program, such as the operating system, that is different from the game programs. Here, a frame image indicating two-dimensional objects such as letters, pictorial figures, or symbols may be rendered in the frame buffer 70b.

In the frame buffer 70c, a frame image that differs from the frame image rendered in the frame buffer 70a and that indicates the play status of the game is rendered at a predetermined frame rate, for example. Here, for example, a two-dimensional image that corresponds to the above-mentioned three-dimensional image and indicates the play status of the game in which virtual three-dimensional objects representing the game characters placed in a virtual three-dimensional space are viewed from a point of view in that virtual three-dimensional space can be rendered in the frame buffer 70c. In another example, an image indicating what the virtual three-dimensional space looks like when viewed from a point of view different from that of the frame image rendered in the frame buffer 70a may be rendered in the frame buffer 70c.

In the frame buffer 70d, a frame image indicating system-related information is rendered at a predetermined frame rate, for example; this frame image is also generated by execution of the system program but differs from the frame image rendered in the frame buffer 70b. Here, for example, an image indicative of information not to be viewed by anybody but the person wearing the HMD 12a may be rendered in the frame buffer 70b, and an image indicative of information that may be viewed by people other than the person wearing the HMD 12a may be rendered in the frame buffer 70d. Also, an image indicative of information not to be viewed by the person wearing the HMD 12a may be rendered in the frame buffer 70d. Here, a frame image indicating two-dimensional objects such as letters, pictorial figures, or symbols may be rendered in the frame buffer 70d.

In the example of FIG. 8, as described above, the images generated by execution of the system program are rendered in the frame buffers 70b and 70d, and the images generated by execution of the game program are rendered in the frame buffers 70a and 70c.

In the ensuing description, it is assumed, for example, that the frame images rendered in the frame buffers 70b and 70d have approximately the same resolution, which is the highest, that the frame image rendered in the frame buffer 70c has the next-highest resolution, and that the frame image rendered in the frame buffer 70a has the lowest resolution.

In the example of FIG. 8, an image 126 is generated by a compositor 124 combining an image 120 stored in the frame buffer 70a with an image 122 stored in the frame buffer 70b, for example. Here, the compositor 124 resizes the image 120 to the resolution of the image 122, for example; the image 120 is enlarged in this case. The compositor 124 thus generates the image 126 that combines the image 122 with the resized image 120. The image 126 indicates the content of both the image 120 and the image 122.

Further, an image 134 is generated by a compositor 132 combining an image 128 stored in the frame buffer 70c with an image 130 stored in the frame buffer 70d. Here, the compositor 132 resizes the image 128 to the resolution of the image 130, for example; the image 128 is enlarged in this case. The compositor 132 thus generates the image 134 that combines the image 130 with the resized image 128. The image 134 indicates the content of both the image 128 and the image 130.

The image 126 is then displayed as the HMD image on the display section 38a of the HMD 12a. The image 134 is displayed as the player image 60 on the display device 18a. It is also transmitted as the viewing audience image 62 to the entertainment system 10b via the communication section 54a, where it is displayed on the display device 18b viewed by the audience at the destination. The image 134 may preferably be resized in a manner suitable for delivery before being transmitted to the entertainment system 10b.
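The text does not say how the image 134 is resized for delivery. One possibility is a box filter, which averages each block of source pixels into one output pixel. This sketch assumes the delivery resolution is an integer fraction of the resolution of the image 134. The `downscale_box` name and the factor of 2 are illustrative assumptions.

```python
from typing import List, Tuple

Pixel = Tuple[int, int, int, int]   # (R, G, B, A), 0-255; assumed pixel format
Image = List[List[Pixel]]           # indexed as image[y][x]


def downscale_box(src: Image, factor: int) -> Image:
    """Shrink `src` by an integer `factor`, averaging each factor x factor block."""
    h, w = len(src), len(src[0])
    if factor < 1 or h % factor or w % factor:
        raise ValueError("image size must be a positive multiple of factor")
    out: Image = []
    for by in range(0, h, factor):
        row: List[Pixel] = []
        for bx in range(0, w, factor):
            block = [src[y][x]
                     for y in range(by, by + factor)
                     for x in range(bx, bx + factor)]
            # Integer mean of each channel over the block.
            row.append(tuple(sum(p[c] for p in block) // len(block) for c in range(4)))
        out.append(row)
    return out


# A 4x4 stand-in for the image 134 with alternating white and black columns.
WHITE, BLACK = (255, 255, 255, 255), (0, 0, 0, 255)
image_134 = [[WHITE, BLACK, WHITE, BLACK] for _ in range(4)]
delivered = downscale_box(image_134, 2)   # 2x2 image for delivery
```

With averaging, every source pixel contributes to the output. A nearest-neighbour shrink, by contrast, can skip a thin stroke entirely.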

FIG. 9 is an explanatory diagram illustrating another example of generating the player image 60, the viewing audience image 62, and the HMD image in the present embodiment.

In the example of FIG. 9, the frame images rendered in the frame buffers 70a to 70c are similar to those rendered in the frame buffers 70a to 70c in the example of FIG. 8, and thus will not be discussed further.

In the example of FIG. 9, a frame image indicating system-related information is rendered in the frame buffer 70d at a predetermined frame rate. This image is generated by execution of the system program, for example, and differs from the frame image rendered in the frame buffer 70b. Here, for example, an image indicative of information to be offered to the audience viewing the play status of the game may be rendered in the frame buffer 70d. Alternatively, an image indicative of information not to be viewed by the person viewing the display device 18a or wearing the HMD 12a may be rendered in the frame buffer 70d. A frame image indicating two-dimensional objects such as letters, pictorial figures, or symbols may be rendered in the frame buffer 70d.

In the example of FIG. 9, an image 142 is generated by a compositor 140 combining an image 136 stored in the frame buffer 70a with an image 138 stored in the frame buffer 70b. Here, the compositor 140 resizes the image 136 to the resolution of the image 138, for example. In this case, the image 136 is enlarged. The compositor 140 thus generates the image 142, which combines the image 138 with the resized image 136 and indicates the content of both the image 136 and the image 138.

Similarly, an image 150 is generated by a compositor 148 combining an image 144 stored in the frame buffer 70c with an image 146 stored in the frame buffer 70d. Here, the compositor 148 resizes the image 144 to the resolution of the image 146, for example. In this case, the image 144 is enlarged. The compositor 148 thus generates the image 150, which combines the image 146 with the resized image 144 and indicates the content of both the image 144 and the image 146. It is assumed here, for example, that the image 150 has the same resolution as the image 146 rendered in the frame buffer 70d.

The image 142 is then displayed as the HMD image on the display section 38a of the HMD 12a. The image 144 is displayed as the player image 60 on the display device 18a. The image 150 is transmitted as the viewing audience image 62 to the entertainment system 10b via the communication section 54a, where it is displayed on the display device 18b viewed by the audience at the destination.

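The example of FIG. 9 thus makes it possible to generate an image indicative of the play status of the game in which the two-dimensional objects representing the information to be offered to the audience at the destination of delivery are clearly depicted. This works because those objects are rendered in the frame buffer 70d at the resolution of the delivered image, and only the game image is resized.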
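The toy comparison below illustrates this point. It assumes nearest-neighbour scaling and RGBA pixels, and the function names are hypothetical. First, a one-pixel mark is drawn in a two-dimensional image at the delivery resolution, and the game image is enlarged before combining, as the compositor 148 does. In that case the mark survives intact. Second, the same mark is drawn at the game image's lower resolution and then enlarged along with it, and it becomes a coarse block. The second path is a hypothetical contrast, not something the embodiment describes.

```python
from typing import List, Tuple

Pixel = Tuple[int, int, int, int]   # (R, G, B, A), 0-255; assumed pixel format
Image = List[List[Pixel]]           # indexed as image[y][x]


def resize_nearest(src: Image, width: int, height: int) -> Image:
    """Nearest-neighbour resize (assumed resampling method)."""
    src_h, src_w = len(src), len(src[0])
    return [[src[y * src_h // height][x * src_w // width] for x in range(width)]
            for y in range(height)]


def blend_over(bottom: Image, top: Image) -> Image:
    """Straight-alpha blend of `top` over an opaque `bottom` of the same size."""
    return [[tuple((t * tp[3] + b * (255 - tp[3])) // 255
                   for t, b in zip(tp[:3], bp[:3])) + (255,)
             for tp, bp in zip(trow, brow)]
            for trow, brow in zip(top, bottom)]


def count(image: Image, pixel: Pixel) -> int:
    """Number of pixels in `image` equal to `pixel`."""
    return sum(px == pixel for row in image for px in row)


GRAY, WHITE, CLEAR = (128, 128, 128, 255), (255, 255, 255, 255), (0, 0, 0, 0)
game = [[GRAY, GRAY], [GRAY, GRAY]]          # low-resolution game image

# FIG. 9 style: the mark is drawn at the 4x4 delivery resolution.
overlay_4x4 = [[CLEAR] * 4 for _ in range(4)]
overlay_4x4[1][1] = WHITE                    # one-pixel mark
native = blend_over(resize_nearest(game, 4, 4), overlay_4x4)

# Hypothetical contrast: the mark can only be drawn at 2x2, then everything is enlarged.
overlay_2x2 = [[CLEAR] * 2 for _ in range(2)]
overlay_2x2[0][0] = WHITE
upscaled = resize_nearest(blend_over(game, overlay_2x2), 4, 4)
```

In `native` the mark still covers a single pixel. In `upscaled` it has grown to a 2x2 block, so any detail finer than the game image's pixel grid is lost.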

Note that, in the present embodiment, the viewing audience image 62 is preferably encoded before being transmitted to the entertainment system 10b.
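The embodiment does not name an encoder. As a placeholder only, the sketch below packs a frame as a four-byte size header followed by zlib-compressed RGBA bytes. A real delivery path would more likely use a video codec. `encode_frame` and `decode_frame` are hypothetical names.

```python
import struct
import zlib
from typing import List, Tuple

Pixel = Tuple[int, int, int, int]   # (R, G, B, A), 0-255; assumed pixel format
Image = List[List[Pixel]]           # indexed as image[y][x]


def encode_frame(image: Image) -> bytes:
    """Stand-in encoder: big-endian width/height header + zlib-compressed RGBA."""
    h, w = len(image), len(image[0])
    raw = bytes(c for row in image for px in row for c in px)
    return struct.pack(">HH", w, h) + zlib.compress(raw)


def decode_frame(payload: bytes) -> Image:
    """Inverse of encode_frame, as the receiving entertainment system might apply it."""
    w, h = struct.unpack(">HH", payload[:4])
    raw = zlib.decompress(payload[4:])
    pixels = [tuple(raw[i:i + 4]) for i in range(0, len(raw), 4)]
    return [pixels[y * w:(y + 1) * w] for y in range(h)]


# A flat 8x8 stand-in for the viewing audience image 62.
image_62 = [[(10, 20, 30, 255)] * 8 for _ in range(8)]
payload = encode_frame(image_62)
```

The round trip is lossless, so `decode_frame(payload)` reproduces `image_62` exactly. A lossy video codec would instead trade exactness for a much lower bit rate.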

The functions of the entertainment apparatus 14a included in the entertainment system 10a of the present embodiment are explained further below, together with the processes that the entertainment apparatus 14a performs. The explanation focuses on the generation of images to be delivered to the entertainment system 10b.

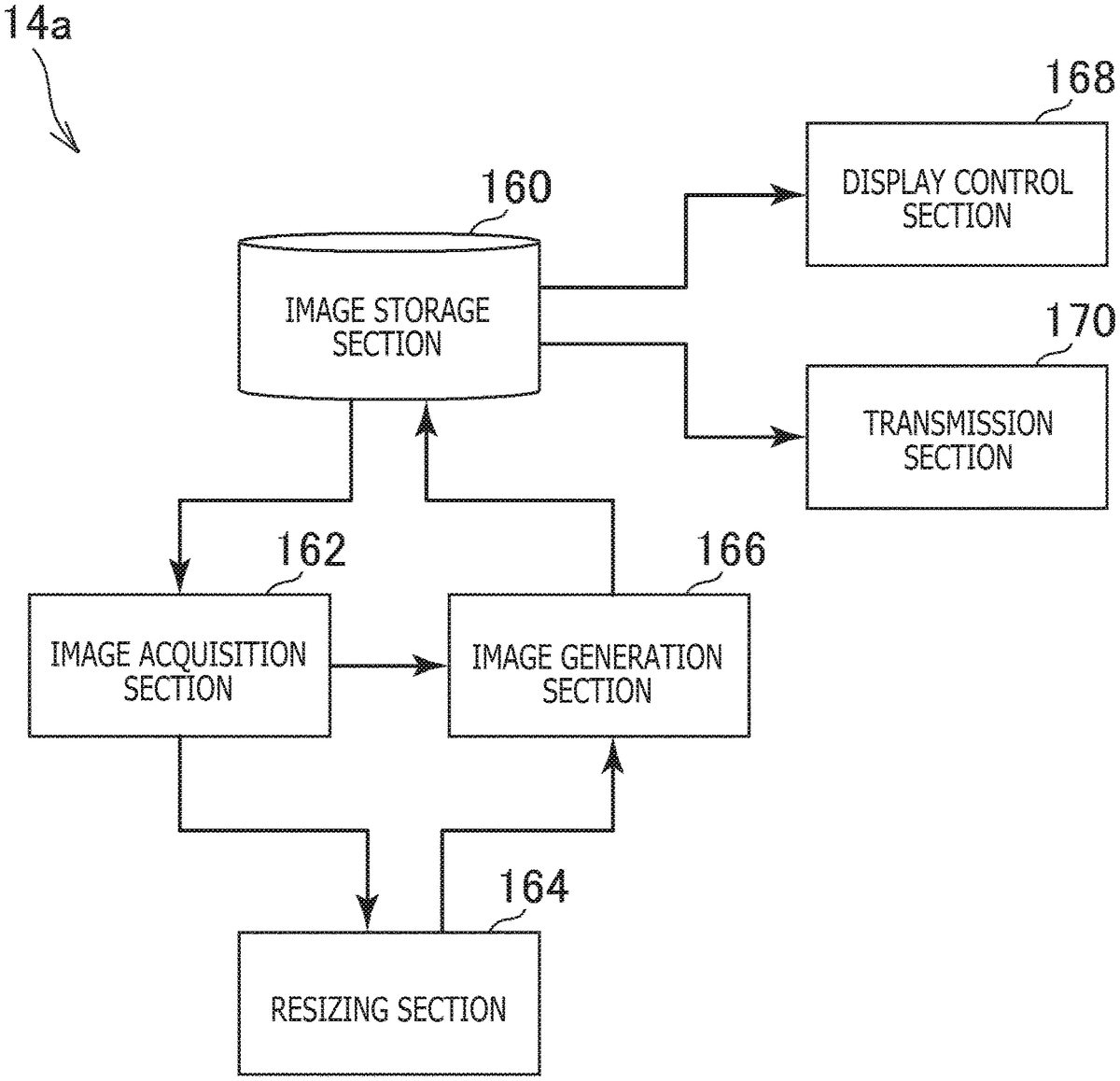

FIG. 10 is a functional block diagram depicting typical functions of the entertainment apparatus 14a of the present embodiment. Not all of the functions depicted in FIG. 10 need to be implemented, and functions other than those depicted in FIG. 10 may be implemented in the entertainment apparatus 14a of the present embodiment.

As depicted in FIG. 10, the entertainment apparatus 14a functionally includes, for example, an image storage section 160, an image acquisition section 162, a resizing section 164, an image generation section 166, a display control section 168, and a transmission section 170. The image storage section 160 is implemented mainly using the storage section 52. The image acquisition section 162, the resizing section 164, and the image generation section 166 are implemented mainly using the processor 50. The display control section 168 is implemented mainly using the processor 50 and the input/output section 56. The transmission section 170 is implemented mainly using the processor 50 and the communication section 54. In the present embodiment, the entertainment apparatus 14a serves as an image generation apparatus that generates the images to be delivered.
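To make the division of labour concrete, the FIG. 10 blocks can be mapped onto a single class, one method or attribute per section. This is a hypothetical sketch. The class and method names, the dictionary-backed storage, nearest-neighbour resizing, and straight-alpha blending are all assumptions. The display and transmission targets are injected as callables so the example runs without hardware.

```python
from typing import Callable, Dict, List, Tuple

Pixel = Tuple[int, int, int, int]   # (R, G, B, A), 0-255; assumed pixel format
Image = List[List[Pixel]]           # indexed as image[y][x]


class ImageGenerationApparatus:
    """Hypothetical mapping of the FIG. 10 functional blocks onto one class."""

    def __init__(self, show: Callable[[Image], None], send: Callable[[Image], None]) -> None:
        self.storage: Dict[str, Image] = {}  # image storage section 160
        self._show = show                    # sink behind display control section 168
        self._send = send                    # sink behind transmission section 170

    def store(self, key: str, image: Image) -> None:
        self.storage[key] = image

    def acquire(self, *keys: str) -> List[Image]:
        """Image acquisition section 162."""
        return [self.storage[k] for k in keys]

    @staticmethod
    def resize(src: Image, width: int, height: int) -> Image:
        """Resizing section 164 (nearest-neighbour, an assumption)."""
        src_h, src_w = len(src), len(src[0])
        return [[src[y * src_h // height][x * src_w // width] for x in range(width)]
                for y in range(height)]

    def generate(self, lower: Image, upper: Image) -> Image:
        """Image generation section 166: resize `lower` to `upper`, blend `upper` on top."""
        h, w = len(upper), len(upper[0])
        base = self.resize(lower, w, h)
        out: Image = []
        for y in range(h):
            row: List[Pixel] = []
            for x in range(w):
                top, bottom = upper[y][x], base[y][x]
                a = top[3]
                rgb = tuple((t * a + b * (255 - a)) // 255
                            for t, b in zip(top[:3], bottom[:3]))
                row.append(rgb + (255,))
            out.append(row)
        return out

    def display(self, image: Image) -> None:
        """Display control section 168."""
        self._show(image)

    def transmit(self, image: Image) -> None:
        """Transmission section 170."""
        self._send(image)


# Usage: combine a 1x1 game image with a 2x2 delivery overlay and transmit it.
GREEN, CLEAR, WHITE = (0, 255, 0, 255), (0, 0, 0, 0), (255, 255, 255, 255)
shown: List[Image] = []
sent: List[Image] = []
app = ImageGenerationApparatus(shown.append, sent.append)
app.store("70c", [[GREEN]])
app.store("70d", [[WHITE, CLEAR], [CLEAR, CLEAR]])
composite = app.generate(*app.acquire("70c", "70d"))
app.store("composite", composite)
app.display(app.storage["70c"])
app.transmit(composite)
```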

The above-mentioned functions may be implemented by the processor 50 executing programs that are installed in the entertainment apparatus 14, which serves as a computer, and that include commands corresponding to these functions. The programs may be supplied to the entertainment apparatus 14 on computer-readable information storage media such as optical disks, magnetic disks, magnetic tapes, magneto-optical disks, or flash memories, or may be transmitted to it via the Internet, for example.

In the present embodiment, for example, the image storage section 160 stores images. The images stored in the frame buffers 70a to 70d in the examples described above correspond to the images stored in the image storage section 160. For example, new frame images are stored at a predetermined frame rate in each of the frame buffers 70a to 70d included in the image storage section 160.

Also, in the present embodiment, for example, the image storage section 160 stores the images generated by the image generation section 166.

In the present embodiment, for example, the image acquisition section 162 acquires the images stored in the image storage section 160, such as the images stored in the frame buffers 70a to 70d or the images generated by the image generation section 166.

Here, for example, the image acquisition section 162 may acquire a game image indicative of the content to be displayed on the display device 18a. This game image represents at least the play status of the game in which virtual three-dimensional objects placed in a virtual three-dimensional space are viewed from a point of view in that space. For example, the image 78 in the example of FIG. 6, the image 96 stored in the frame buffer 70b in the example of FIG. 7, and the image 144 stored in the frame buffer 70c in the example of FIG. 9 correspond to this game image.

The image acquisition section 162 may also acquire a delivery target two-dimensional image indicating two-dimensional objects targeted for delivery. This image has the same resolution as the images to be delivered. For example, the image 80 stored in the frame buffer 70d in the example of FIG. 6, the image 104 stored in the frame buffer 70d in the example of FIG. 7, and the image 146 stored in the frame buffer 70d in the example of FIG. 9 correspond to this delivery target two-dimensional image.

The image acquisition section 162 may also acquire a three-dimensional space image indicative of the play status of the game. For example, the image 72 stored in the frame buffer 70b in the example of FIG. 6 corresponds to this three-dimensional space image.

The image acquisition section 162 may further acquire a display target two-dimensional image indicating two-dimensional objects targeted for display on the display device 18a. This image has the same resolution as the images to be displayed on the display device 18a. For example, the image 74 stored in the frame buffer 70c in the example of FIG. 6 and the image 98 stored in the frame buffer 70c in the example of FIG. 7 correspond to this display target two-dimensional image.

In the present embodiment, for example, the resizing section 164 generates a resized game image by resizing the game image to the resolution of the delivery target two-dimensional image. For example, the function of the compositor 82 in the example of FIG. 6, the function of the compositor 106 in the example of FIG. 7, and the function of the compositor 148 in the example of FIG. 9 correspond to this function of the resizing section 164.

The resizing section 164 may also generate a resized three-dimensional space image by resizing the three-dimensional space image to the resolution of the display target two-dimensional image. For example, the function of the compositor 76 in the example of FIG. 6 corresponds to this function of the resizing section 164.

The resizing section 164 may also generate a first resized game image by resizing the game image to the resolution of the delivery target two-dimensional image. It may further generate a second resized game image by resizing the game image to the resolution of the display target two-dimensional image. For example, the functions of the compositors 100 and 106 in the example of FIG. 7 correspond to this function of the resizing section 164. In this case, the compositor 106 generates the first resized game image, and the compositor 100 generates the second resized game image.
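The essential point of the first and second resized game images is that both are produced directly from the same game image, rather than one being derived from the other. A minimal sketch follows. It assumes nearest-neighbour scaling and hypothetical resolutions for the image 98 (display target) and the image 104 (delivery target).

```python
from typing import List, Tuple

Pixel = Tuple[int, int, int, int]   # (R, G, B, A), 0-255; assumed pixel format
Image = List[List[Pixel]]           # indexed as image[y][x]


def resize_nearest(src: Image, width: int, height: int) -> Image:
    """Nearest-neighbour resize (assumed; the text does not name a method)."""
    src_h, src_w = len(src), len(src[0])
    return [[src[y * src_h // height][x * src_w // width] for x in range(width)]
            for y in range(height)]


RED, BLUE = (255, 0, 0, 255), (0, 0, 255, 255)
game_image = [[RED, BLUE], [BLUE, RED]]   # stands in for the image 96

DISPLAY_RES = (4, 4)    # assumed (width, height) of the display target image 98
DELIVERY_RES = (6, 6)   # assumed (width, height) of the delivery target image 104

# Both resized images are derived directly from the same game image.
first_resized = resize_nearest(game_image, *DELIVERY_RES)   # role of compositor 106
second_resized = resize_nearest(game_image, *DISPLAY_RES)   # role of compositor 100
```

Scaling from the original each time avoids stacking the artefacts of two successive resampling steps. Those artefacts would appear if the delivery image were produced by resizing the already-resized display image.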

In the present embodiment, for example, the image generation section 166 generates a composite image by combining multiple images stored in the image storage section 160, and stores the generated composite image in the image storage section 160.

Here, the image generation section 166 may generate an image that combines the resized game image with the delivery target two-dimensional image. For example, the image 84 in the example of FIG. 6, the image 108 in the example of FIG. 7, and the image 150 in the example of FIG. 9 correspond to this composite image.

The image generation section 166 may also generate the game image by combining the resized three-dimensional space image with the display target two-dimensional image. For example, the image 78 in the example of FIG. 6 corresponds to the game image generated in this manner.

The image generation section 166 may further combine the first resized game image with the delivery target two-dimensional image to generate an image indicative of the content to be delivered. For example, the image 108 in the example of FIG. 7 corresponds to this image. Further, the image generation section 166 may generate an image that combines the second resized game image with the display target two-dimensional image. For example, the image 102 in the example of FIG. 7 corresponds to this image.

In the present embodiment, for example, the display control section 168 causes the display device 18a to display the image generated by the image generation section 166.

In the present embodiment, for example, the transmission section 170 transmits the image generated by the image generation section 166 to the entertainment system 10b.

A typical flow of a process performed repeatedly at a predetermined frame rate by the entertainment apparatus 14a of the present embodiment is explained below with reference to the flowchart of FIG. 11. This process example corresponds to the details explained earlier with reference to FIG. 6.

First, the image acquisition section 162 acquires the image 72 stored as a frame image in the frame buffer 70b and the image 74 stored as a frame image in the frame buffer 70c (S101).

Then, the resizing section 164 resizes the image 72 to the resolution of the image 74 (S102).

Then, the image generation section 166 generates the image 78 that combines the image 74 with the resized image 72, and stores the image 78 in the image storage section 160 (S103).

Then, the image acquisition section 162 acquires the image 78 stored in the image storage section 160 and the image 80 stored as a frame image in the frame buffer 70d (S104).

Then, the resizing section 164 resizes the image 78 to the resolution of the image 80 (S105).

Then, the image generation section 166 generates the image 84 that combines the image 80 with the resized image 78, and stores the image 84 in the image storage section 160 (S106).

Then, the image acquisition section 162 acquires the image 86 stored as a frame image in the frame buffer 70a and the image 78 stored in the image storage section 160 (S107).

Then, the image generation section 166 generates the image 90 that combines the image 78 with the image 86, and stores the image 90 in the image storage section 160 (S108).

Then, the image acquisition section 162 acquires the image 86 stored as a frame image in the frame buffer 70a and the image 84 stored in the image storage section 160 (S109).

Then, the resizing section 164 resizes the image 86 to the resolution of the image 84 (S110).

Then, the image generation section 166 generates the image 94 that combines the image 84 with the resized image 86, and stores the image 94 in the image storage section 160 (S111).

Then, the display control section 168 performs control to display the image 90 stored in the image storage section 160 on the display device 18a (S112).

Then, the transmission section 170 transmits the image 94 stored in the image storage section 160 to the entertainment system 10b (S113). Here, for example, the transmission section 170 may encode the image 94 before transmitting it to the entertainment system 10b.

Control then returns to S101. In this process example, the processing from S101 to S113 is thus performed repeatedly at a predetermined frame rate.
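Steps S101 to S113 can be condensed into a per-frame function, sketched below with several assumptions. The frame buffers are modelled as a dictionary, and the layering order (the image 86 drawn on top of the images 78 and 84) is assumed. Scaling is nearest-neighbour, blending uses straight alpha, and encoding is omitted. All function names are hypothetical.

```python
from typing import Callable, Dict, List, Tuple

Pixel = Tuple[int, int, int, int]   # (R, G, B, A), 0-255; assumed pixel format
Image = List[List[Pixel]]           # indexed as image[y][x]


def resize_to(src: Image, like: Image) -> Image:
    """Nearest-neighbour resize of `src` to the resolution of `like` (assumed method)."""
    src_h, src_w = len(src), len(src[0])
    h, w = len(like), len(like[0])
    return [[src[y * src_h // h][x * src_w // w] for x in range(w)] for y in range(h)]


def combine(bottom: Image, top: Image) -> Image:
    """Blend `top` over an opaque `bottom` of the same resolution (straight alpha)."""
    return [[tuple((t * tp[3] + b * (255 - tp[3])) // 255
                   for t, b in zip(tp[:3], bp[:3])) + (255,)
             for tp, bp in zip(trow, brow)]
            for trow, brow in zip(top, bottom)]


def run_frame_fig11(fb: Dict[str, Image],
                    display: Callable[[Image], None],
                    transmit: Callable[[Image], None]) -> None:
    """One pass of S101-S113; `fb` maps frame buffer names to their current frames."""
    img72, img74, img80, img86 = fb["70b"], fb["70c"], fb["70d"], fb["70a"]
    img78 = combine(resize_to(img72, img74), img74)   # S101-S103
    img84 = combine(resize_to(img78, img80), img80)   # S104-S106
    img90 = combine(img78, img86)                     # S107-S108 (same resolution assumed)
    img94 = combine(img84, resize_to(img86, img84))   # S109-S111
    display(img90)                                    # S112
    transmit(img94)                                   # S113 (encoding omitted)


RED, BLUE, GREEN = (255, 0, 0, 255), (0, 0, 255, 255), (0, 255, 0, 255)
WHITE, CLEAR = (255, 255, 255, 255), (0, 0, 0, 0)
image_80 = [[CLEAR] * 4 for _ in range(4)]
image_80[3][3] = GREEN
fb = {
    "70b": [[RED]],                              # image 72 (lowest resolution)
    "70c": [[WHITE, CLEAR], [CLEAR, CLEAR]],     # image 74
    "70d": image_80,                             # image 80 (delivery resolution)
    "70a": [[CLEAR, BLUE], [CLEAR, CLEAR]],      # image 86
}
shown: List[Image] = []
sent: List[Image] = []
run_frame_fig11(fb, shown.append, sent.append)
```

In an actual loop this function would be called once per frame at the predetermined frame rate, with `fb` refreshed each time.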

A typical flow of another process performed repeatedly at a predetermined frame rate by the entertainment apparatus 14 of the present embodiment is explained next with reference to the flowchart of FIG. 12. This process example corresponds to the details explained earlier with reference to FIG. 7.

First, the image acquisition section 162 acquires the image 96 stored as a frame image in the frame buffer 70b and the image 98 stored as a frame image in the frame buffer 70c (S201).

The resizing section 164 then resizes the image 96 to the resolution of the image 98 (S202).

Then, the image generation section 166 generates the image 102 that combines the image 98 with the resized image 96, and stores the image 102 in the image storage section 160 (S203).

Then, the image acquisition section 162 acquires the image 96 stored as a frame image in the frame buffer 70b and the image 104 stored as a frame image in the frame buffer 70d (S204).

Then, the resizing section 164 resizes the image 96 to the resolution of the image 104 (S205).

Then, the image generation section 166 generates the image 108 that combines the image 104 with the resized image 96, and stores the image 108 in the image storage section 160 (S206).

Then, the image acquisition section 162 acquires the image 110 stored as a frame image in the frame buffer 70a and the image 102 stored in the image storage section 160 (S207).

Then, the image generation section 166 generates the image 114 that combines the image 102 with the image 110, and stores the image 114 in the image storage section 160 (S208).

Then, the image acquisition section 162 acquires the image 110 stored as a frame image in the frame buffer 70a and the image 108 stored in the image storage section 160 (S209).

Then, the resizing section 164 resizes the image 110 to the resolution of the image 108 (S210).

Then, the image generation section 166 generates the image 118 that combines the image 108 with the resized image 110, and stores the image 118 in the image storage section 160 (S211).

Then, the display control section 168 performs control to display the image 114 stored in the image storage section 160 on the display device 18a (S212).

Then, the transmission section 170 transmits the image 118 stored in the image storage section 160 to the entertainment system 10b (S213). Here, for example, the transmission section 170 may encode the image 118 before transmitting it to the entertainment system 10b.

Control then returns to S201. In this process example, the processing from S201 to S213 is thus performed repeatedly at a predetermined frame rate.
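Steps S201 to S213 can be sketched in the same way. Unlike the FIG. 11 flow, the game image 96 is resized twice, independently, once to the display resolution and once to the delivery resolution. The assumptions are the same as before: dictionary-modelled frame buffers, nearest-neighbour scaling, straight-alpha blending, the image 110 drawn on top, no encoding, and hypothetical names.

```python
from typing import Callable, Dict, List, Tuple

Pixel = Tuple[int, int, int, int]   # (R, G, B, A), 0-255; assumed pixel format
Image = List[List[Pixel]]           # indexed as image[y][x]


def resize_to(src: Image, like: Image) -> Image:
    """Nearest-neighbour resize of `src` to the resolution of `like` (assumed method)."""
    src_h, src_w = len(src), len(src[0])
    h, w = len(like), len(like[0])
    return [[src[y * src_h // h][x * src_w // w] for x in range(w)] for y in range(h)]


def combine(bottom: Image, top: Image) -> Image:
    """Blend `top` over an opaque `bottom` of the same resolution (straight alpha)."""
    return [[tuple((t * tp[3] + b * (255 - tp[3])) // 255
                   for t, b in zip(tp[:3], bp[:3])) + (255,)
             for tp, bp in zip(trow, brow)]
            for trow, brow in zip(top, bottom)]


def run_frame_fig12(fb: Dict[str, Image],
                    display: Callable[[Image], None],
                    transmit: Callable[[Image], None]) -> None:
    """One pass of S201-S213; `fb` maps frame buffer names to their current frames."""
    img96, img98, img104, img110 = fb["70b"], fb["70c"], fb["70d"], fb["70a"]
    img102 = combine(resize_to(img96, img98), img98)      # S201-S203
    img108 = combine(resize_to(img96, img104), img104)    # S204-S206: resized from img96 again
    img114 = combine(img102, img110)                      # S207-S208 (same resolution assumed)
    img118 = combine(img108, resize_to(img110, img108))   # S209-S211
    display(img114)                                       # S212
    transmit(img118)                                      # S213 (encoding omitted)


RED, BLUE, GREEN = (255, 0, 0, 255), (0, 0, 255, 255), (0, 255, 0, 255)
WHITE, YELLOW, CLEAR = (255, 255, 255, 255), (255, 255, 0, 255), (0, 0, 0, 0)
image_98 = [[CLEAR] * 4 for _ in range(4)]
image_98[0][0] = WHITE
image_104 = [[CLEAR] * 6 for _ in range(6)]
image_104[5][5] = GREEN
image_110 = [[CLEAR] * 4 for _ in range(4)]
image_110[3][0] = YELLOW
fb = {"70b": [[RED, BLUE], [BLUE, RED]], "70c": image_98,
      "70d": image_104, "70a": image_110}
shown: List[Image] = []
sent: List[Image] = []
run_frame_fig12(fb, shown.append, sent.append)
```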

A typical flow of another process performed repeatedly at a predetermined frame rate by the entertainment apparatus 14 of the present embodiment is explained next with reference to the flowchart of FIG. 13. This process example corresponds to the details explained earlier with reference to FIG. 8.

First, the image acquisition section 162 acquires the image 120 stored as a frame image in the frame buffer 70a and the image 122 stored as a frame image in the frame buffer 70b (S301).

Then, the resizing section 164 resizes the image 120 to the resolution of the image 122 (S302).

Then, the image generation section 166 generates the image 126 that combines the image 122 with the resized image 120, and stores the image 126 in the image storage section 160 (S303).

Then, the image acquisition section 162 acquires the image 128 stored as a frame image in the frame buffer 70c and the image 130 stored as a frame image in the frame buffer 70d (S304).

Then, the resizing section 164 resizes the image 128 to the resolution of the image 130 (S305).

Then, the image generation section 166 generates the image 134 that combines the image 130 with the resized image 128, and stores the image 134 in the image storage section 160 (S306).

Then, the display control section 168 performs control to display the image 126 stored in the image storage section 160 on the display section 38a of the HMD 12a (S307).

The display control section 168 then performs control to display the image 134 stored in the image storage section 160 on the display device 18a (S308).

Then, the transmission section 170 transmits the image 134 stored in the image storage section 160 to the entertainment system 10b (S309). Here, for example, the transmission section 170 may encode the image 134 before transmitting it to the entertainment system 10b.

Control then returns to S301. In this process example, the processing from S301 to S309 is thus performed repeatedly at a predetermined frame rate.

A typical flow of another process performed repeatedly at a predetermined frame rate by the entertainment apparatus 14 of the present embodiment is explained next with reference to the flowchart of FIG. 14. This process example corresponds to the details explained earlier with reference to FIG. 9.

First, the image acquisition section 162 acquires the image 136 stored as a frame image in the frame buffer 70a and the image 138 stored as a frame image in the frame buffer 70b (S401).

Then, the resizing section 164 resizes the image 136 to the resolution of the image 138 (S402).

Then, the image generation section 166 generates the image 142 that combines the image 138 with the resized image 136, and stores the image 142 in the image storage section 160 (S403).

Then, the image acquisition section 162 acquires the image 144 stored as a frame image in the frame buffer 70c and the image 146 stored as a frame image in the frame buffer 70d (S404).

Then, the resizing section 164 resizes the image 144 to the resolution of the image 146 (S405).

Then, the image generation section 166 generates the image 150 that combines the image 146 with the resized image 144, and stores the image 150 in the image storage section 160 (S406).

Then, the display control section 168 performs control to display the image 142 stored in the image storage section 160 on the display section 38a of the HMD 12a (S407).

The display control section 168 then performs control to display the image 144 stored as a frame image in the frame buffer 70c on the display device 18a (S408).

Then, the transmission section 170 transmits the image 150 stored in the image storage section 160 to the entertainment system 10b (S409). Here, for example, the transmission section 170 may encode the image 150 before transmitting it to the entertainment system 10b.

Control then returns to S401. In this process example, the processing from S401 to S409 is thus performed repeatedly at a predetermined frame rate.
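Steps S401 to S409 can be sketched under the same assumptions: dictionary-modelled frame buffers, nearest-neighbour scaling, straight-alpha blending, no encoding, and hypothetical names. What distinguishes this flow is the routing. The display device 18a receives the image 144 unchanged, so the delivery-only content in the frame buffer 70d reaches only the transmitted image 150.

```python
from typing import Callable, Dict, List, Tuple

Pixel = Tuple[int, int, int, int]   # (R, G, B, A), 0-255; assumed pixel format
Image = List[List[Pixel]]           # indexed as image[y][x]


def resize_to(src: Image, like: Image) -> Image:
    """Nearest-neighbour resize of `src` to the resolution of `like` (assumed method)."""
    src_h, src_w = len(src), len(src[0])
    h, w = len(like), len(like[0])
    return [[src[y * src_h // h][x * src_w // w] for x in range(w)] for y in range(h)]


def combine(bottom: Image, top: Image) -> Image:
    """Blend `top` over an opaque `bottom` of the same resolution (straight alpha)."""
    return [[tuple((t * tp[3] + b * (255 - tp[3])) // 255
                   for t, b in zip(tp[:3], bp[:3])) + (255,)
             for tp, bp in zip(trow, brow)]
            for trow, brow in zip(top, bottom)]


def run_frame_fig14(fb: Dict[str, Image],
                    show_hmd: Callable[[Image], None],
                    show_display: Callable[[Image], None],
                    transmit: Callable[[Image], None]) -> None:
    """One pass of S401-S409; `fb` maps frame buffer names to their current frames."""
    img142 = combine(resize_to(fb["70a"], fb["70b"]), fb["70b"])   # S401-S403
    img150 = combine(resize_to(fb["70c"], fb["70d"]), fb["70d"])   # S404-S406
    show_hmd(img142)           # S407: HMD 12a
    show_display(fb["70c"])    # S408: image 144 shown as-is on the display device 18a
    transmit(img150)           # S409: to the entertainment system 10b (encoding omitted)


RED, BLUE, GREEN = (255, 0, 0, 255), (0, 0, 255, 255), (0, 255, 0, 255)
WHITE, CLEAR = (255, 255, 255, 255), (0, 0, 0, 0)
image_146 = [[CLEAR] * 4 for _ in range(4)]
image_146[0][0] = GREEN                      # audience-only information
fb = {
    "70a": [[RED]],                          # image 136
    "70b": [[WHITE, CLEAR], [CLEAR, CLEAR]], # image 138
    "70c": [[RED, BLUE], [BLUE, RED]],       # image 144 (game image)
    "70d": image_146,                        # image 146 (delivery resolution)
}
hmd_frames: List[Image] = []
display_frames: List[Image] = []
sent: List[Image] = []
run_frame_fig14(fb, hmd_frames.append, display_frames.append, sent.append)
```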

Note that the present invention is not limited to the embodiment described above.

Note also that the specific character strings and numerical values in the foregoing description and in the accompanying drawings are only examples and do not limit the present invention.

Claims

- An image generation apparatus comprising: a game image acquisition section configured to acquire a game image indicative of content to be displayed on a player video display device and an audience video display device, the game image representing at least play status of a game in which a virtual three-dimensional object placed in a virtual three-dimensional space is viewed from a point of view in the virtual three-dimensional space; a player information image acquisition section configured to acquire a player information image indicative of content to be displayed on one or more of the player video display device and the audience video display device; an audience information image acquisition section configured to acquire an audience information image indicative of content to be displayed on the audience video display device and not on the player video display device; a player video display device delivery target two-dimensional image acquisition section configured to acquire a player video display device delivery target two-dimensional image indicating a two-dimensional object targeted for delivery to the player video display device, the delivery target two-dimensional image having a same resolution as that of an image to be delivered to the player video display device; an audience video display device delivery target two-dimensional image acquisition section configured to acquire an audience video display device delivery target two-dimensional image indicating a two-dimensional object targeted for delivery to the audience video display device, the delivery target two-dimensional image having a same resolution as that of an image to be delivered to the audience video display device; a game image resizing section configured to resize the game image to the resolution of the player video display device delivery target two-dimensional image so as to generate a player video display device resized game image, and to resize the game image to the resolution of the audience video display device delivery target two-dimensional image so as to generate an audience video display device resized game image; and a delivery image generation section configured to generate a player video display device image for display on the player video display device that combines the player video display device resized game image with the player video display device delivery target two-dimensional image and to generate an audience video display device image for display on the audience video display device that combines the audience video display device resized game image with the audience video display device delivery target two-dimensional image; wherein the player video display device image includes the player information image content and not the audience information image content, and the audience video display device image includes the player information image content and the audience information image content; and wherein the player video display device image and the audience video display device image have different resolutions.

- The image generation apparatus according to claim 1, further comprising: a three-dimensional space image acquisition section configured to acquire a three-dimensional space image indicating the play status of the game; a display target two-dimensional image acquisition section configured to acquire a display target two-dimensional image indicating a two-dimensional object targeted for display on the display device, the display target two-dimensional image having a same resolution as that of an image to be displayed on the display device; a three-dimensional space image resizing section configured to resize the three-dimensional space image to the resolution of the display target two-dimensional image so as to generate a resized three-dimensional space image; and a game image generation section configured to generate the game image by combining the resized three-dimensional space image with the display target two-dimensional image.

- The image generation apparatus according to claim 2, further comprising: a first frame buffer configured to store the three-dimensional space image; a second frame buffer configured to store the display target two-dimensional image; and a third frame buffer configured to store the delivery target two-dimensional image.

- The image generation apparatus according to claim 1, further comprising: a display target two-dimensional image acquisition section configured to acquire a display target two-dimensional image indicating a two-dimensional object targeted for display on the display device, the display target two-dimensional image having a same resolution as that of an image to be displayed on the display device, wherein the game image resizing section resizes the game image to the resolution of the delivery target two-dimensional image so as to generate a first resized game image, the game image resizing section resizes the game image to the resolution of the display target two-dimensional image so as to generate a second resized game image, the delivery image generation section generates an image that combines the first resized game image with the delivery target two-dimensional image, and a display image generation section that generates an image that combines the second resized game image with the display target two-dimensional image is further included.

- The image generation apparatus according to claim 4, further comprising: a first frame buffer configured to store the game image; a second frame buffer configured to store the display target two-dimensional image; and a third frame buffer configured to store the delivery target two-dimensional image.

- The image generation apparatus according to claim 1, wherein the two-dimensional object is a letter, a pictorial figure, or a symbol.

- An image generation method comprising: acquiring a game image indicative of content to be displayed on a player video display device and an audience video display device, the game image representing at least play status of a game in which a virtual three-dimensional object placed in a virtual three-dimensional space is viewed from a point of view in the virtual three-dimensional space; acquiring a player information image indicative of content to be displayed on one or more of the player video display device and the audience video display device; acquiring an audience information image indicative of content to be displayed on the audience video display device and not on the player video display device; acquiring a player video display device delivery target two-dimensional image indicating a two-dimensional object targeted for delivery to the player video display device, the delivery target two-dimensional image having a same resolution as that of an image to be delivered to the player video display device; acquiring an audience video display device delivery target two-dimensional image indicating a two-dimensional object targeted for delivery to the audience video display device, the delivery target two-dimensional image having a same resolution as that of an image to be delivered to the audience video display device; resizing the game image to the resolution of the player video display device delivery target two-dimensional image so as to generate a player video display device resized game image, and resizing the game image to the resolution of the audience video display device delivery target two-dimensional image so as to generate an audience video display device resized game image; and generating a player video display device image for display on the player video display device that combines the player video display device resized game image with the player video display device delivery target two-dimensional image, and generating an audience video display device image for display on the audience video display device that combines the audience video display device resized game image with the audience video display device delivery target two-dimensional image; wherein the player video display device image includes the player information image content and not the audience information image content, and the audience video display device image includes the player information image content and the audience information image content; and wherein the player video display device image and the audience video display device image have different resolutions.

- A non-transitory, computer readable storage medium containing a program, which when executed by a computer, causes the computer to conduct an image generating method by carrying out actions, comprising: acquiring a game image indicative of content to be displayed on a player video display device and an audience video display device, the game image representing at least play status of a game in which a virtual three-dimensional object placed in a virtual three-dimensional space is viewed from a point of view in the virtual three-dimensional space; acquiring a player information image indicative of content to be displayed on one or more of the player video display device and the audience video display device; acquiring an audience information image indicative of content to be displayed on the audience video display device and not on the player video display device; acquiring a player video display device delivery target two-dimensional image indicating a two-dimensional object targeted for delivery to the player video display device, the delivery target two-dimensional image having a same resolution as that of an image to be delivered to the player video display device; acquiring an audience video display device delivery target two-dimensional image indicating a two-dimensional object targeted for delivery to the audience video display device, the delivery target two-dimensional image having a same resolution as that of an image to be delivered to the audience video display device; resizing the game image to the resolution of the player video display device delivery target two-dimensional image so as to generate a player video display device resized game image, and resizing the game image to the resolution of the audience video display device delivery target two-dimensional image so as to generate an audience video display device resized game image; and generating a player video display device image for display on the player video display device that combines the player video display device resized game image with the player video display device delivery target two-dimensional image, and generating an audience video display device image for display on the audience video display device that combines the audience video display device resized game image with the audience video display device delivery target two-dimensional image; wherein the player video display device image includes the player information image content and not the audience information image content, and the audience video display device image includes the player information image content and the audience information image content; and wherein the player video display device image and the audience video display device image have different resolutions.

Disclaimer: Data collected from the USPTO and may be malformed, incomplete, and/or otherwise inaccurate.