U.S. Pat. No. 11,918,914

TECHNIQUES FOR COMBINING USER'S FACE WITH GAME CHARACTER AND SHARING ALTERED CHARACTER

Assignee: Sony Interactive Entertainment Inc.

Issue Date: January 8, 2022

Abstract

A technique presents on a display a computer game character and a first scannable code (SC) scannable by a camera of at least a first user apparatus to cause a server to download to the first user apparatus a photo app operable to generate a photo of a first user and send the photo to the server, which alters the character in accordance with the photo. The character with the face altered is presented along with a second SC that can be scanned to cause the server to download to the first user apparatus a share app operable to select a second user apparatus to send an image of the character with the face altered in accordance with the photo such that the second user apparatus can display the character with the face altered in accordance with the photo.

Description

DETAILED DESCRIPTION

This disclosure relates generally to computer ecosystems including aspects of consumer electronics (CE) device networks such as but not limited to computer game networks. A system herein may include server and client components which may be connected over a network such that data may be exchanged between the client and server components. The client components may include one or more computing devices including game consoles such as Sony PlayStation® or a game console made by Microsoft or Nintendo or other manufacturer, virtual reality (VR) headsets, augmented reality (AR) headsets, portable televisions (e.g., smart TVs, Internet-enabled TVs), portable computers such as laptops and tablet computers, and other mobile devices including smart phones and additional examples discussed below. These client devices may operate with a variety of operating environments. For example, some of the client computers may employ, as examples, Linux operating systems, operating systems from Microsoft, or a Unix operating system, or operating systems produced by Apple, Inc., or Google. These operating environments may be used to execute one or more browsing programs, such as a browser made by Microsoft or Google or Mozilla or other browser program that can access websites hosted by the Internet servers discussed below. Also, an operating environment according to present principles may be used to execute one or more computer game programs.

Servers and/or gateways may be used that may include one or more processors executing instructions that configure the servers to receive and transmit data over a network such as the Internet. Or a client and server can be connected over a local intranet or a virtual private network. A server or controller may be instantiated by a game console such as a Sony PlayStation®, a personal computer, etc.

Information may be exchanged over a network between the clients and servers. To this end and for security, servers and/or clients can include firewalls, load balancers, temporary storages, and proxies, and other network infrastructure for reliability and security. One or more servers may form an apparatus that implements methods of providing a secure community, such as an online social website or gamer network, to network members.

A processor may be a single- or multi-chip processor that can execute logic by means of various lines such as address lines, data lines, and control lines and registers and shift registers.

Components included in one embodiment can be used in other embodiments in any appropriate combination. For example, any of the various components described herein and/or depicted in the Figures may be combined, interchanged, or excluded from other embodiments.

“A system having at least one of A, B, and C” (likewise “a system having at least one of A, B, or C” and “a system having at least one of A, B, C”) includes systems that have A alone, B alone, C alone, A and B together, A and C together, B and C together, and/or A, B, and C together.

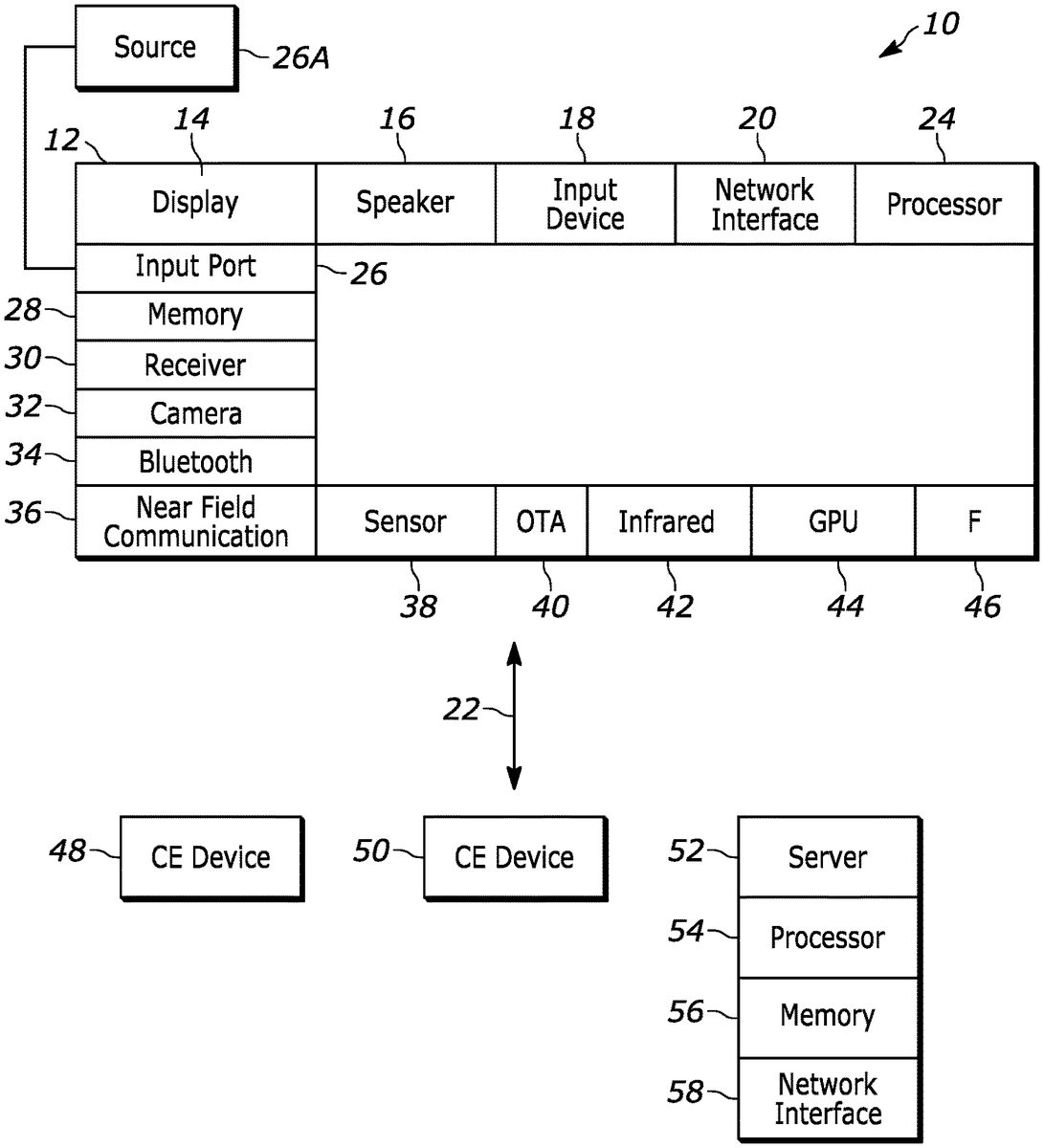

Now specifically referring to FIG. 1, an example system 10 is shown, which may include one or more of the example devices mentioned above and described further below in accordance with present principles. The first of the example devices included in the system 10 is a consumer electronics (CE) device such as an audio video device (AVD) 12 such as but not limited to an Internet-enabled TV with a TV tuner (equivalently, a set top box controlling a TV). The AVD 12 alternatively may also be a computerized Internet-enabled (“smart”) telephone, a tablet computer, a notebook computer, a head-mounted device (HMD) and/or headset such as smart glasses or a VR headset, another wearable computerized device, a computerized Internet-enabled music player, computerized Internet-enabled headphones, a computerized Internet-enabled implantable device such as an implantable skin device, etc. Regardless, it is to be understood that the AVD 12 is configured to undertake present principles (e.g., communicate with other CE devices to undertake present principles, execute the logic described herein, and perform any other functions and/or operations described herein).

Accordingly, to undertake such principles the AVD 12 can be established by some or all of the components shown in FIG. 1. For example, the AVD 12 can include one or more touch-enabled displays 14 that may be implemented by a high definition or ultra-high definition “4K” or higher flat screen. The touch-enabled display(s) 14 may include, for example, a capacitive or resistive touch sensing layer with a grid of electrodes for touch sensing consistent with present principles.

The AVD 12 may also include one or more speakers 16 for outputting audio in accordance with present principles, and at least one additional input device 18 such as an audio receiver/microphone for entering audible commands to the AVD 12 to control the AVD 12. The example AVD 12 may also include one or more network interfaces 20 for communication over at least one network 22 such as the Internet, a WAN, a LAN, etc. under control of one or more processors 24. Thus, the interface 20 may be, without limitation, a Wi-Fi transceiver, which is an example of a wireless computer network interface, such as but not limited to a mesh network transceiver. It is to be understood that the processor 24 controls the AVD 12 to undertake present principles, including the other elements of the AVD 12 described herein such as controlling the display 14 to present images thereon and receiving input therefrom. Furthermore, note the network interface 20 may be a wired or wireless modem or router, or other appropriate interface such as a wireless telephony transceiver, or Wi-Fi transceiver as mentioned above, etc.

In addition to the foregoing, the AVD 12 may also include one or more input and/or output ports 26 such as a high-definition multimedia interface (HDMI) port or a universal serial bus (USB) port to physically connect to another CE device and/or a headphone port to connect headphones to the AVD 12 for presentation of audio from the AVD 12 to a user through the headphones. For example, the input port 26 may be connected via wire or wirelessly to a cable or satellite source 26a of audio video content. Thus, the source 26a may be a separate or integrated set top box, or a satellite receiver. Or the source 26a may be a game console or disk player containing content. The source 26a when implemented as a game console may include some or all of the components described below in relation to the CE device 48.

The AVD 12 may further include one or more computer memories/computer-readable storage media 28 such as disk-based or solid-state storage that are not transitory signals, in some cases embodied in the chassis of the AVD as standalone devices or as a personal video recording device (PVR) or video disk player either internal or external to the chassis of the AVD for playing back AV programs or as removable memory media or the below-described server. Also, in some embodiments, the AVD 12 can include a position or location receiver such as but not limited to a cellphone receiver, GPS receiver and/or altimeter 30 that is configured to receive geographic position information from a satellite or cellphone base station and provide the information to the processor 24 and/or determine an altitude at which the AVD 12 is disposed in conjunction with the processor 24. The component 30 may also be implemented by an inertial measurement unit (IMU) that typically includes a combination of accelerometers, gyroscopes, and magnetometers to determine the location and orientation of the AVD 12 in three dimensions, or by event-based sensors.

Continuing the description of the AVD 12, in some embodiments the AVD 12 may include one or more cameras 32 that may be a thermal imaging camera, a digital camera such as a webcam, an event-based sensor, and/or a camera integrated into the AVD 12 and controllable by the processor 24 to gather pictures/images and/or video in accordance with present principles. Also included on the AVD 12 may be a Bluetooth transceiver 34 and other Near Field Communication (NFC) element 36 for communication with other devices using Bluetooth and/or NFC technology, respectively. An example NFC element can be a radio frequency identification (RFID) element.

Further still, the AVD 12 may include one or more auxiliary sensors 38 (e.g., a pressure sensor, a motion sensor such as an accelerometer, gyroscope, cyclometer, or a magnetic sensor, an infrared (IR) sensor, an optical sensor, a speed and/or cadence sensor, an event-based sensor, a gesture sensor (e.g., for sensing gesture commands)) that provide input to the processor 24. For example, one or more of the auxiliary sensors 38 may include one or more pressure sensors forming a layer of the touch-enabled display 14 itself and may be, without limitation, piezoelectric pressure sensors, capacitive pressure sensors, piezoresistive strain gauges, optical pressure sensors, electromagnetic pressure sensors, etc.

The AVD 12 may also include an over-the-air (OTA) TV broadcast port 40 for receiving OTA TV broadcasts providing input to the processor 24. In addition to the foregoing, it is noted that the AVD 12 may also include an infrared (IR) transmitter and/or IR receiver and/or IR transceiver 42 such as an Infrared Data Association (IrDA) device. A battery (not shown) may be provided for powering the AVD 12, as may be a kinetic energy harvester that may turn kinetic energy into power to charge the battery and/or power the AVD 12. A graphics processing unit (GPU) 44 and field programmable gate array (FPGA) 46 also may be included. One or more haptics/vibration generators 47 may be provided for generating tactile signals that can be sensed by a person holding or in contact with the device. The haptics generators 47 may thus vibrate all or part of the AVD 12 using an electric motor connected to an off-center and/or off-balanced weight via the motor's rotatable shaft so that the shaft may rotate under control of the motor (which in turn may be controlled by a processor such as the processor 24) to create vibration of various frequencies and/or amplitudes as well as force simulations in various directions.

Still referring to FIG. 1, in addition to the AVD 12, the system 10 may include one or more other CE device types. In one example, a first CE device 48 may be a computer game console that can be used to send computer game audio and video to the AVD 12 via commands sent directly to the AVD 12 and/or through the below-described server while a second CE device 50 may include similar components as the first CE device 48. In the example shown, the second CE device 50 may be configured as a computer game controller manipulated by a player or a head-mounted display (HMD) worn by a player. The HMD may include a heads-up transparent or non-transparent display for respectively presenting AR/MR content or VR content.

In the example shown, only two CE devices are shown, it being understood that fewer or more devices may be used. A device herein may implement some or all of the components shown for the AVD 12. Any of the components shown in the following figures may incorporate some or all of the components shown in the case of the AVD 12.

Now in reference to the aforementioned at least one server 52, it includes at least one server processor 54, at least one tangible computer readable storage medium 56 such as disk-based or solid-state storage, and at least one network interface 58 that, under control of the server processor 54, allows for communication with the other devices of FIG. 1 over the network 22, and indeed may facilitate communication between servers and client devices in accordance with present principles. Note that the network interface 58 may be, e.g., a wired or wireless modem or router, Wi-Fi transceiver, or other appropriate interface such as, e.g., a wireless telephony transceiver.

Accordingly, in some embodiments the server 52 may be an Internet server or an entire server “farm” and may include and perform “cloud” functions such that the devices of the system 10 may access a “cloud” environment via the server 52 in example embodiments for, e.g., network gaming applications. Or the server 52 may be implemented by one or more game consoles or other computers in the same room as the other devices shown in FIG. 1 or nearby.

The components shown in the following figures may include some or all components shown in FIG. 1. Any user interfaces (UI) described herein may be consolidated and/or expanded, and UI elements may be mixed and matched between UIs.

FIG. 2 illustrates a display 200 such as any display described herein, e.g., a TV on which video games are presented, presenting a UI 202 under control of a main application (“app”) that in the non-limiting example shown includes game windows 204 presenting video or still images from respective computer games. Below the windows 204 are feature windows 206 representing respective categories of games such as “new”, “popular with friends”, “epic moments” (representing much-repeated or viewed segments of games or representing segments of games the user has “liked”), and “trophy time”, representing games or game segments in which the user or other users won prizes.

Automatically or upon user selection, a UI 300 shown in FIG. 3 may be presented on the display 200. In the non-limiting example of FIG. 3, a character window 302 is shown in which a character of a computer game appears in a still image or video. The user may select the clip being presented, or it may be selected automatically for the user. Likewise, the user may select the character from the game to be altered, or it may be selected automatically for the user.

Also, a scannable code (SC) 304 such as a quick response (QR) code or barcode or other code appears that can be scanned by a camera of, e.g., a user apparatus such as a smart phone. A prompt 306 may also appear to prompt the user to scan the code for a specific purpose, in the example shown, to upload a photo (“selfie”) of the user for purposes to be shortly described.

Indeed, and turning now to FIG. 4, at block 400 a user manipulating, e.g., a user apparatus such as a phone operates the camera on the phone to scan the code 304 shown in FIG. 3. This causes the phone to open an application, termed herein a “selfie app”, which is a web app that permits a photo the user takes of himself at block 404 to be uploaded to a server such as the server 52 shown in FIG. 1 when the user selects, at block 406, an “upload” button presented on the phone by the selfie app. Scanning the code essentially invokes a uniform resource locator (URL) of a hypertext transfer protocol (HTTP) web page hosted by the cell phone, the server, or a combination of the two.
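As a minimal sketch of what the first scannable code 304 might carry, the Python below builds the URL that the code would encode and parses it back on the server side. The host `example.com`, the `/selfie` path, and the `session` and `character` query parameters are hypothetical; the disclosure says only that scanning invokes the URL of an HTTP web page. Turning the string into a QR image would be left to a QR encoder, which is not shown.

```python
from urllib.parse import parse_qs, urlencode, urlparse

# Hypothetical base URL of the selfie web app (assumption, not from the disclosure).
SELFIE_APP_BASE = "https://example.com/selfie"


def build_selfie_url(session_id: str, character_id: str) -> str:
    """Return the URL to encode in the first scannable code (e.g., a QR code).

    The session and character parameters are assumptions that let the server
    associate the uploaded photo with the display and the chosen character.
    """
    query = urlencode({"session": session_id, "character": character_id})
    return f"{SELFIE_APP_BASE}?{query}"


def parse_selfie_url(url: str) -> dict:
    """Server side: recover the parameters from a scanned URL."""
    params = parse_qs(urlparse(url).query)
    return {key: values[0] for key, values in params.items()}
```

Keeping the identifiers in the URL means the selfie app needs no login step to know where its upload belongs; a production design would likely also add an expiring token so that a photographed code cannot be reused indefinitely.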

The server receives the image of the user and at block 408 alters the face and, if desired, the body of the character shown at 302 in FIG. 3 in accordance with the image of the user. In other embodiments the user apparatus alters the face and, if desired, the body of the character. In other embodiments the server cooperates with the user apparatus to alter the face and/or body. In some embodiments the user may provide input to decide whether the game or clip is to be presented in low- or high-quality video and thus take less or more time, respectively, to modify with the user's face.

In a non-limiting example, a Python script known as “Faceswap” may be used in which a library takes source video and merges the selfie onto a face in the video.

In non-limiting examples the image of the user may be blended with that of the game character. Blending may be accomplished using any appropriate blending algorithm. For example, the user and character images may be blended by averaging corresponding pixel values of the two bitmaps to produce an average image, by using layer masks, or by alpha blending, in which a composite of two images is derived by combining pixel color values based on pixel transparency (alpha) values, typically on a pixel-by-pixel basis. Blending may be of facial features only, or a full-body image of the user may be blended with a full-body image of the character.
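The alpha blending and averaging described above can be sketched in a few lines of Python, assuming both images are already aligned and flattened into equal-length lists of 8-bit RGB tuples (face detection and alignment, which a tool such as Faceswap would handle, are not shown). An alpha of 0.5 everywhere reproduces plain averaging, and an alpha list that is 1.0 inside the face region and 0.0 elsewhere acts as a simple layer mask.

```python
from typing import List, Sequence, Tuple

Pixel = Tuple[int, int, int]  # 8-bit RGB


def alpha_blend(user: Sequence[Pixel], character: Sequence[Pixel],
                alpha: Sequence[float]) -> List[Pixel]:
    """Composite user pixels over character pixels, one alpha value per pixel.

    alpha = 1.0 keeps the user pixel, 0.0 keeps the character pixel, and
    0.5 gives the plain average of the two images.
    """
    if not (len(user) == len(character) == len(alpha)):
        raise ValueError("images and alpha mask must have the same number of pixels")
    out: List[Pixel] = []
    for u, c, a in zip(user, character, alpha):
        if not 0.0 <= a <= 1.0:
            raise ValueError("alpha values must lie in [0, 1]")
        # Per-channel linear interpolation: a * user + (1 - a) * character.
        out.append(tuple(round(a * uc + (1.0 - a) * cc) for uc, cc in zip(u, c)))
    return out
```

Feathering the mask (alpha ramping from 1.0 to 0.0 over a few pixels at the face boundary) is a common way to hide the seam between the user's face and the character's head.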

FIG. 5 illustrates a UI 500 that may be presented on the display 200 in which a still or video image 502 of the game character appears side by side with an image 504 of the user as uploaded to the server at block 406 in FIG. 4. Then, after the operation at block 408 in FIG. 4, a UI 600 (FIG. 6) may be presented on the display 200 showing an altered game character 602 that is altered in accordance with the user's image such that at least the face of the character 602 is based on the image of the user. The game can execute with the character 602 moving according to the game program under control of a game controller, except that the face of the character appears to be that of the user.

The UI 600 may also present a second code 604 that can be scanned to share an image or images of the game character 602 altered in accordance with the image of the user. A prompt 606 may be presented to this effect.

Accordingly, at block 700 in FIG. 7 a user may manipulate his apparatus such as a phone to scan the code 604 shown in FIG. 6. This causes, at block 702, the user apparatus to open a share app shown in FIG. 8.

More specifically, FIG. 8 illustrates a user apparatus 800 that has scanned the code 604 in FIG. 6 and as a result has presented a UI 802 in accordance with the share app. The UI 802 presents a scene 804 from the computer game along with the title 806 of the game and a share selector 808. Selection of the share selector 808 may cause a UI 900 shown in FIG. 9 to appear on the user apparatus 800. The UI 900 may include a prompt 902 to check out a video, under which may be selectors 904, each selectable to select a recipient such as a friend from a friends list stored in the apparatus 800. A transmission mode selector 906 may be selected to designate the mode of transmission to the friend selected using a selector 904. Transmission modes may include, without limitation, instant messaging or other types of telephony messaging, transmission through a Web browser, etc.

Once the user selects the recipient(s) and transmission mode, the recipient's network address, email address, or phone number, as appropriate for the selected transmission mode, is accessed and data are sent to an apparatus 1000 associated with the recipient and shown in FIG. 10. The recipient apparatus 1000 presents a scene 1002 of the computer game showing the game character altered in accordance with the user's image as shown at 602 in FIG. 6. The title 1004 of the game and/or character may be presented along with the name 1006 of the sending user.
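A minimal sketch of the address lookup performed once a recipient and transmission mode are chosen. The `Friend` record, the mode names, and the mode-to-address mapping are all hypothetical; the disclosure names instant or telephony messaging and Web-browser transmission as example modes, and network address, email address, and phone number as example destinations.

```python
from dataclasses import dataclass
from typing import Dict, Optional


@dataclass
class Friend:
    """Hypothetical friends-list entry stored on the sharing apparatus."""
    name: str
    network_address: Optional[str] = None
    email: Optional[str] = None
    phone: Optional[str] = None


# Hypothetical transmission modes and the Friend field each one needs.
MODE_TO_FIELD: Dict[str, str] = {
    "instant_message": "phone",
    "email": "email",
    "web": "network_address",
}


def resolve_destination(friend: Friend, mode: str) -> str:
    """Return the address appropriate for the selected transmission mode."""
    try:
        field = MODE_TO_FIELD[mode]
    except KeyError:
        raise ValueError(f"unsupported transmission mode: {mode!r}") from None
    address = getattr(friend, field)
    if not address:
        raise ValueError(f"{friend.name} has no {field} for mode {mode!r}")
    return address


def build_share_message(sender: str, game_title: str, clip_url: str) -> dict:
    """Payload a recipient apparatus could render as in FIG. 10 (hypothetical shape)."""
    return {"sender": sender, "title": game_title, "clip": clip_url}
```

Failing loudly when the chosen friend lacks the needed address lets the share app grey out or hide modes that cannot reach that friend.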

FIG. 11 encapsulates the technique discussed above. The main app 1100 fetches videos at 1102 from a server 1104 such as the server 52 shown in FIG. 1. The game video or videos may be presented on the display 200 shown in FIG. 2 and described above. The user employs his apparatus 800 to scan the first code 304 as shown at 1106 to execute the selfie app, which sends the image of the user to the server as indicated at 1108.

In response, as indicated at 1110 the server sends the image to the display 200 for presentation. The user image and IDs of the game video whose character is being altered are exchanged between the server and display as indicated by the arrows 1112. The display consequently fetches the altered video that includes the character altered according to the user image as indicated at 1114 and presents the second code 604, which is scanned by the user apparatus 800 to invoke the share app as indicated by the arrow 1116.
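The message flow of FIG. 11 can be modeled with a toy in-memory server, shown below as a sketch only. The class and method names, the session ID used to tie a selfie to a display, and the pluggable `alter` function are assumptions for illustration; the disclosure does not specify the server's interface, and a real deployment would expose these steps over HTTP.

```python
import itertools
from typing import Callable, Dict

# Hypothetical alteration hook: takes (game_video, user_image) and returns the
# altered video. A real system would run face swapping or blending here.
AlterFn = Callable[[bytes, bytes], bytes]


class FaceSwapServer:
    """Toy in-memory stand-in for the server 1104 of FIG. 11 (names hypothetical)."""

    def __init__(self, alter: AlterFn) -> None:
        self._alter = alter
        self._videos: Dict[str, bytes] = {}   # video ID -> original game video
        self._selfies: Dict[str, bytes] = {}  # session ID -> uploaded user image
        self._next_id = itertools.count(1)

    def add_video(self, video: bytes) -> str:
        """Register a game video; its ID is what the main app lists and fetches (1102)."""
        video_id = f"v{next(self._next_id)}"
        self._videos[video_id] = video
        return video_id

    def fetch_video(self, video_id: str) -> bytes:
        return self._videos[video_id]

    def upload_selfie(self, session_id: str, image: bytes) -> None:
        """Selfie app upload (1108)."""
        self._selfies[session_id] = image

    def get_selfie(self, session_id: str) -> bytes:
        """Image sent back to the display for presentation (1110)."""
        return self._selfies[session_id]

    def altered_video(self, session_id: str, video_id: str) -> bytes:
        """Display supplies session and video IDs (1112) and fetches the result (1114)."""
        return self._alter(self._videos[video_id], self._selfies[session_id])
```

For instance, `FaceSwapServer(alter=lambda video, face: video + b"+" + face)` gives a placeholder server whose `altered_video("tv-42", clip_id)` simply concatenates the two byte strings, which is enough to exercise the flow without any image processing.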

The techniques described above may be performed in real time or non-real time. Text input by the user, and voice spoken by the user and picked up by a microphone, may also be morphed onto the game character, as set forth further below.

FIG. 12 illustrates a display 1200 such as any display herein including, e.g., the display of the user apparatus on which a UI 1202 can be presented. In the non-limiting example shown, the UI includes an image 1204 of a game character either as provided by the game designer or as modified consistent with disclosure above to have the face of the user.

A prompt 1206 may be presented to allow the user to change native dialog of the game associated with the character 1204 to use the user's name (by selecting selector 1208) or another fanciful name input by the user (by selecting selector/input field 1210). Thereafter, as the game and/or selected clip of the game is presented, the dialog played on audio speakers is changed such that whenever the native name of the character 1204 is to be uttered, the name selected by the user is played instead.
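Assuming the native dialog is available as text (for example, a script that is later synthesized to speech, or subtitles), the name substitution could be sketched as a whole-word replacement. The function name and the sample character name "Rook" are hypothetical, and the audio side of the swap is not shown.

```python
import re
from typing import List, Sequence


def rename_in_dialog(lines: Sequence[str], native_name: str, chosen_name: str) -> List[str]:
    """Replace whole-word occurrences of the character's native name in dialog text.

    Word boundaries leave longer words that merely start with the name intact,
    and a replacement function keeps backslashes in the chosen name from being
    read as regex escapes.
    """
    if not native_name:
        raise ValueError("native_name must be non-empty")
    pattern = re.compile(rf"\b{re.escape(native_name)}\b")
    return [pattern.sub(lambda _match: chosen_name, line) for line in lines]
```

With `native_name="Rook"` and `chosen_name="Sam"`, "Rook's sword is broken." becomes "Sam's sword is broken.", while "The Rookery is quiet." is left unchanged.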

FIG. 13 illustrates a display 1300 such as any display herein including, e.g., the display of the user apparatus on which a UI 1302 can be presented. In the non-limiting example shown, the UI includes an image 1304 of a game character either as provided by the game designer or as modified consistent with disclosure above to have the face of the user.

A prompt 1306 may be presented to allow the user to create a GIF file by, e.g., selecting a selector 1308. In response, the clip is converted to an image file in the .gif format.
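Converting a clip to GIF usually means lowering the frame rate and assigning each kept frame a delay. The sketch below, with hypothetical names, computes only that plan. GIF89a stores frame delays in hundredths of a second, so the delay is rounded to whole centiseconds and playback speed can drift slightly from the target. Encoding the pixels into the .gif file would be done by an image library and is not shown.

```python
import math
from typing import List, Tuple


def gif_frame_plan(num_frames: int, source_fps: float,
                   target_fps: float) -> Tuple[List[int], int]:
    """Choose which clip frames to keep for a GIF and the delay between them.

    Returns (frame_indices, delay_cs), where delay_cs is in hundredths of a
    second, the unit GIF uses for per-frame delays.
    """
    if num_frames <= 0 or source_fps <= 0 or target_fps <= 0:
        raise ValueError("frame count and frame rates must be positive")
    target_fps = min(target_fps, source_fps)     # never invent frames
    step = source_fps / target_fps               # source frames per GIF frame
    count = math.floor((num_frames - 1) / step) + 1
    indices = [int(i * step) for i in range(count)]
    delay_cs = max(1, round(100 / target_fps))
    return indices, delay_cs
```

Lower target rates shrink the file roughly in proportion to the number of frames dropped, which matters for GIFs sent over messaging apps.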

FIG. 14 illustrates a display 1400 such as any display herein including, e.g., the display of the user apparatus on which a UI 1402 can be presented. In the non-limiting example shown, the UI includes an image 1404 of a game timeline, from start (on the left) to finish (on the right), with plural frames 1406 in the timeline at the times they appear in the game when played as a video. A slider 1408 may be provided and can be moved left and right along the timeline as indicated by the dashed arrows 1410 to select a frame 1406 at which to start the clip discussed in FIGS. 1-11 above. A stop frame also can be selected to select the end of the clip.
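Mapping the slider 1408 to a frame and cutting the clip between the start and stop frames can be sketched as follows, assuming the slider reports a normalized position from 0.0 (left, start of the game) to 1.0 (right, end). The function names are hypothetical.

```python
from typing import List, Sequence, TypeVar

T = TypeVar("T")


def slider_to_frame(position: float, num_frames: int) -> int:
    """Map a slider position in [0, 1] to the nearest frame index on the timeline."""
    if num_frames <= 0:
        raise ValueError("timeline has no frames")
    position = min(max(position, 0.0), 1.0)  # clamp drags past either end
    return round(position * (num_frames - 1))


def select_clip(frames: Sequence[T], start_pos: float, stop_pos: float) -> List[T]:
    """Return the frames between the start and stop slider positions, inclusive."""
    start = slider_to_frame(start_pos, len(frames))
    stop = slider_to_frame(stop_pos, len(frames))
    if start > stop:
        start, stop = stop, start  # tolerate the stop handle left of the start handle
    return list(frames[start:stop + 1])
```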

FIG. 15 illustrates that a palette can be provided to allow the end user to change visual attributes of the face of the character.

With more specificity, FIG. 15 illustrates a display 1500 such as any display herein including, e.g., the display of the user apparatus on which a UI 1502 can be presented. In the non-limiting example shown, the UI includes an image 1504 of a game character either as provided by the game designer or as modified consistent with disclosure above to have the face of the user.

Color selectors 1506 may be provided that can be selected by the user to define the color of the face of the character 1504. Age selectors 1508 may be provided that can be selected by the user to define the age of the face of the character 1504 (with more wrinkle lines indicating older age, etc., such that a first number of wrinkle lines is imposed on the face when a younger age is selected and a second number of wrinkle lines greater than the first number is imposed on the face when an older age is selected).
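The age selectors described above require that an older selection yield more wrinkle lines than a younger one. One way to satisfy that is a clamped linear mapping, sketched below; the age range of 18 to 90 and the cap of 12 lines are illustrative assumptions, and the selectable ages should be spaced far enough apart that their rounded counts actually differ.

```python
def wrinkle_lines_for_age(age: int, min_age: int = 18, max_age: int = 90,
                          max_lines: int = 12) -> int:
    """Number of wrinkle lines to draw on the character's face for a selected age.

    The result never decreases as age increases, so an older selection never
    gets fewer lines than a younger one; ages at or below min_age get none.
    All default values are hypothetical.
    """
    if max_age <= min_age:
        raise ValueError("max_age must exceed min_age")
    clamped = min(max(age, min_age), max_age)
    fraction = (clamped - min_age) / (max_age - min_age)
    return round(fraction * max_lines)
```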

FIG. 16 illustrates a display 1600 such as any display herein including, e.g., the display of the user apparatus on which a UI 1602 can be presented. In the non-limiting example shown, the UI includes an image 1604 of a game character either as provided by the game designer or as modified consistent with disclosure above to have the face of the user.

The UI 1602 may include a selector 1606 selectable to allow a user to dub the user's voice onto dialog to be spoken by the character. The user may record a clip of his voice as indicated at 1608 by means of, e.g., a microphone on the user apparatus. The voice characteristics of the recorded voice are used to modify the voice of the character 1604.
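The disclosure does not say which voice characteristics are extracted from the recording. Assuming pitch is one of them, the sketch below estimates the fundamental frequency of the recorded clip by plain time-domain autocorrelation (a simple, standard technique chosen here for illustration, not one named in the disclosure) and converts the ratio between the user's pitch and the character's pitch into a shift in semitones. The function names and the 60 to 400 Hz search range are assumptions, and pitch-shifting the character's audio is not shown.

```python
import math
from typing import Sequence


def estimate_pitch_hz(samples: Sequence[float], sample_rate: int,
                      fmin: float = 60.0, fmax: float = 400.0) -> float:
    """Rough fundamental-frequency estimate by autocorrelation.

    Tries every lag corresponding to a frequency between fmin and fmax and
    returns sample_rate / lag for the lag with the largest correlation.
    """
    min_lag = int(sample_rate / fmax)
    max_lag = int(sample_rate / fmin)
    if len(samples) <= max_lag:
        raise ValueError("recording too short for the requested pitch range")
    mean = sum(samples) / len(samples)
    x = [s - mean for s in samples]  # remove DC offset before correlating
    best_lag, best_score = min_lag, -math.inf
    for lag in range(min_lag, max_lag + 1):
        score = sum(x[i] * x[i + lag] for i in range(len(x) - lag))
        if score > best_score:
            best_lag, best_score = lag, score
    return sample_rate / best_lag


def semitone_shift(target_hz: float, source_hz: float) -> float:
    """Semitones to shift a voice at source_hz so that its pitch lands at target_hz."""
    if target_hz <= 0 or source_hz <= 0:
        raise ValueError("frequencies must be positive")
    return 12.0 * math.log2(target_hz / source_hz)
```

Plain autocorrelation is easy to follow but can lock onto a multiple of the true period on real speech; production pitch trackers add normalization and voicing checks.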

Also, if desired the user may select a selector 1610 to have the character 1604 speak the game dialog native to the game, but in the user's voice if desired, or a selector 1612 to have the character 1604 speak a dialog created by the user by selecting at 1614 either to dictate the desired dialog into a microphone of the user apparatus, or to input text at 1616 that is converted to speech and used for the dialog of the character 1604 or another character.

FIG. 17 illustrates that an end user can select a template to scale text, emphasize words to animate, and coordinate text to part of a video clip. With greater specificity, FIG. 17 shows a display 1700, such as any display herein including, e.g., the display of the user apparatus, on which a UI 1702 can be presented. The UI 1702 can include an image of a game character either as provided by the game designer or as modified consistent with disclosure above to have the face of the user.

The UI 1702 can include text size selectors 1704 to enable a user to select the size and, if desired, location of text to be presented on the display during game play as typed in by the user or received from a friend. The UI 1702 also may include animation selectors 1706 to allow a user to define what text terms are to be animated or colored or otherwise highlighted during game play, including types of words and specific words themselves as defined by the user in a field 1708.
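A template of the kind described for FIG. 17 might bundle text size, position, the words to animate, and the part of the clip the text is tied to. The sketch below, in which every field and function name is hypothetical, flags the user-chosen words in a caption and checks whether the overlay is visible at a given clip time; rendering and animation are not shown, and matching by word type is left out.

```python
import re
from dataclasses import dataclass
from typing import List, Set


@dataclass
class TextTemplate:
    """Hypothetical overlay settings a user might pick with selectors 1704 and 1706."""
    size_pt: int             # text size chosen with a size selector
    position: str            # e.g. "top" or "bottom"; layout names are assumptions
    animate_words: Set[str]  # specific words typed into field 1708
    start_s: float           # clip time (seconds) at which the text appears
    end_s: float             # clip time (seconds) at which the text disappears

    def is_visible(self, t: float) -> bool:
        """True if the overlay should be on screen at clip time t."""
        return self.start_s <= t <= self.end_s


@dataclass
class Token:
    text: str
    animate: bool


def style_caption(caption: str, template: TextTemplate) -> List[Token]:
    """Split a caption into words and flag the ones to animate or highlight.

    Matching is case-insensitive and ignores punctuation at either end of a word.
    """
    wanted = {w.lower() for w in template.animate_words}
    tokens: List[Token] = []
    for word in caption.split():
        core = re.sub(r"^\W+|\W+$", "", word).lower()
        tokens.append(Token(word, core in wanted))
    return tokens
```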

Machine learning may be used to put friends together, in which a game console can capture a video of the period of a game in which, for example, a trophy was won and share the video between friends on a friends list of a user apparatus.

While the particular embodiments are herein shown and described in detail, it is to be understood that the subject matter which is encompassed by the present invention is limited only by the claims.

Claims

- A device, comprising: at least one storage device that is not a transitory signal and that comprises instructions executable by at least one processor to cause the at least one processor to: present on at least one display at least one computer game comprising at least a first character; present on the display at least a first scannable code (SC) scannable by a camera of at least a first user apparatus to cause at least one server to download to the first user apparatus a photo application (app); the photo app being operable to generate a photo of at least a first user and send the photo to the at least one server; receive from the at least one server an image of the first character with a face altered in accordance with the photo; present the character with the face altered in accordance with the photo on the at least one display; present on the display at least a second SC scannable by a camera of the first user apparatus to cause the at least one server to download to the first user apparatus a share app operable to select a second user apparatus to send an image of the character with the face altered in accordance with the photo such that the second user apparatus can display the character with the face altered in accordance with the photo.

- The device of claim 1, wherein the display comprises a TV and the first and second user apparatuses comprise mobile phones.

- The device of claim 1, wherein the SC comprise quick response (QR) codes.

- The device of claim 1, comprising the at least one processor.

- The device of claim 4, comprising the at least one server.

- The device of claim 5, comprising the first and second user apparatus.

- The device of claim 1, wherein the instructions are executable to present, with the first SC, a prompt to scan the first SC to upload the photo.

- The device of claim 1, wherein the instructions are executable to present, with the second SC, a prompt to scan the second SC to share the photo.

- A method, comprising: presenting on at least one display at least one computer game comprising at least a first character; presenting on the display at least a first scannable code (SC) scannable by a camera of at least a first user apparatus to cause at least one server to download to the first user apparatus a photo application (app); the photo app being operable to generate a photo of at least a first user and send the photo to the at least one server; receiving from the at least one server an image of the first character with a face altered in accordance with the photo; presenting the character with the face altered in accordance with the photo on the at least one display; presenting on the display at least a second SC scannable by a camera of the first user apparatus to cause the at least one server to download to the first user apparatus a share app operable to select a second user apparatus to send an image of the character with the face altered in accordance with the photo such that the second user apparatus can display the character with the face altered in accordance with the photo.

- The method of claim 9, wherein the display comprises a TV and the first and second user apparatuses comprise mobile phones.

- The method of claim 9, wherein the SC comprise quick response (QR) codes.

- The method of claim 9, comprising presenting, with the first SC, a prompt to scan the first SC to upload the photo.

- The method of claim 9, comprising presenting, with the second SC, a prompt to scan the second SC to share the photo.

- A device comprising: at least one processor programmed with instructions to: present on at least one display at least one video comprising at least a first character; present on the display at least a first scannable code (SC) scannable by a camera of at least a first user apparatus to invoke on the first user apparatus a photo application (app); the photo app being operable to generate a photo of at least a first user and upload the photo; receive an image of the first character with a face altered in accordance with the photo; present the character with the face altered in accordance with the photo on the at least one display; and present on the display at least a second SC scannable by a camera of the first user apparatus to invoke on the first user apparatus a share app operable to select a second user apparatus to send an image of the character with the face altered in accordance with the photo such that the second user apparatus can display the character with the face altered in accordance with the photo.

- The device of claim 14, wherein the display comprises a TV and the first and second user apparatuses comprise phones.

- The device of claim 14, wherein the SC comprise quick response (QR) codes.

- The device of claim 14, comprising the first and second user apparatus.

- The device of claim 14, wherein the instructions are executable to present, with the first SC, a prompt to scan the first SC to upload the photo.

- The device of claim 14, wherein the instructions are executable to present, with the second SC, a prompt to scan the second SC to share the photo.
