U.S. Pat. No. 11,911,700

SPORT GAME SYSTEM, COMPUTER-READABLE NON-TRANSITORY STORAGE MEDIUM HAVING STORED THEREIN SPORT GAME PROGRAM, SPORT GAME APPARATUS, AND SPORT GAME PROCESSING METHOD

Assignee: NINTENDO CO., LTD.

Issue Date: July 7, 2022

Abstract

A player character is moved based on an operation performed on a direction input device, and the position of a virtual camera is controlled based on the position of the player character. A swing direction in which an operation device has been swung is determined based on data from an inertial sensor, and the player character is caused to perform a swing action of swinging in a direction according to the swing direction. Then, a moving object is moved based on collision determination between the swing action and the moving object, thereby controlling a sport game using the moving object.

Description

DETAILED DESCRIPTION OF NON-LIMITING EXAMPLE EMBODIMENTS

Hereinafter, the exemplary embodiment will be described.

A game system according to an example of the exemplary embodiment is described below. An example of a game system 1 according to the exemplary embodiment includes a main body apparatus (an information processing apparatus, which functions as a game apparatus main body in the exemplary embodiment) 2, a left controller 3, and a right controller 4. Each of the left controller 3 and the right controller 4 is attachable to and detachable from the main body apparatus 2. That is, the game system 1 can be used as a unified apparatus obtained by attaching each of the left controller 3 and the right controller 4 to the main body apparatus 2. Further, in the game system 1, the main body apparatus 2, the left controller 3, and the right controller 4 can also be used as separate bodies (see FIG. 2). Hereinafter, first, the hardware configuration of the game system 1 according to the exemplary embodiment is described, and then, the control of the game system 1 according to the exemplary embodiment is described.

FIG. 1 shows an example of the state where the left controller 3 and the right controller 4 are attached to the main body apparatus 2. As shown in FIG. 1, each of the left controller 3 and the right controller 4 is attached to and unified with the main body apparatus 2. The main body apparatus 2 is an apparatus for performing various processes (e.g., game processing) in the game system 1. The main body apparatus 2 includes a display 12. Each of the left controller 3 and the right controller 4 is an apparatus including operation sections with which a user provides inputs.

FIG. 2 shows an example of the state where each of the left controller 3 and the right controller 4 is detached from the main body apparatus 2. As shown in FIGS. 1 and 2, the left controller 3 and the right controller 4 are attachable to and detachable from the main body apparatus 2. It should be noted that hereinafter, the left controller 3 and the right controller 4 will occasionally be referred to collectively as a “controller”.

FIG. 3 is six orthogonal views showing an example of the main body apparatus 2. As shown in FIG. 3, the main body apparatus 2 includes an approximately plate-shaped housing 11. In the exemplary embodiment, a main surface (in other words, a surface on a front side, i.e., a surface on which the display 12 is provided) of the housing 11 has a substantially rectangular shape.

It should be noted that the shape and the size of the housing 11 are discretionary. As an example, the housing 11 may be of a portable size. Further, the main body apparatus 2 alone or the unified apparatus obtained by attaching the left controller 3 and the right controller 4 to the main body apparatus 2 may function as a mobile apparatus. The main body apparatus 2 or the unified apparatus may function as a handheld apparatus or a portable apparatus.

As shown in FIG. 3, the main body apparatus 2 includes the display 12, which is provided on the main surface of the housing 11. The display 12 displays an image generated by the main body apparatus 2. In the exemplary embodiment, the display 12 is a liquid crystal display device (LCD). The display 12, however, may be a display device of any type.

The main body apparatus 2 includes a touch panel 13 on the screen of the display 12. In the exemplary embodiment, the touch panel 13 is of a type capable of receiving a multi-touch input (e.g., electrical capacitance type). However, the touch panel 13 may be of any type, and may be, for example, of a type capable of receiving a single-touch input (e.g., resistive film type).

The main body apparatus 2 includes speakers (i.e., speakers 88 shown in FIG. 6) within the housing 11. As shown in FIG. 3, speaker holes 11a and 11b are formed on the main surface of the housing 11. Sounds from the speakers 88 are outputted through the speaker holes 11a and 11b.

Further, the main body apparatus 2 includes a left terminal 17, which is a terminal for the main body apparatus 2 to perform wired communication with the left controller 3, and a right terminal 21, which is a terminal for the main body apparatus 2 to perform wired communication with the right controller 4.

As shown in FIG. 3, the main body apparatus 2 includes a slot 23. The slot 23 is provided on an upper side surface of the housing 11. The slot 23 is so shaped as to allow a predetermined type of storage medium to be attached to the slot 23. The predetermined type of storage medium is, for example, a dedicated storage medium (e.g., a dedicated memory card) for the game system 1 and an information processing apparatus of the same type as the game system 1. The predetermined type of storage medium is used to store, for example, data (e.g., saved data of an application or the like) used by the main body apparatus 2 and/or a program (e.g., a program for an application or the like) executed by the main body apparatus 2. Further, the main body apparatus 2 includes a power button 28.

The main body apparatus 2 includes a lower terminal 27. The lower terminal 27 is a terminal for the main body apparatus 2 to communicate with a cradle. In the exemplary embodiment, the lower terminal 27 is a USB connector (more specifically, a female connector). Further, when the unified apparatus or the main body apparatus 2 alone is mounted on the cradle, the game system 1 can display on a stationary monitor an image generated by and outputted from the main body apparatus 2. Further, in the exemplary embodiment, the cradle has the function of charging the unified apparatus or the main body apparatus 2 alone mounted on the cradle. Further, the cradle has the function of a hub device (specifically, a USB hub).

FIG. 4 is six orthogonal views showing an example of the left controller 3. As shown in FIG. 4, the left controller 3 includes a housing 31. In the exemplary embodiment, the housing 31 has a vertically long shape, i.e., is shaped to be long in an up-down direction shown in FIG. 4 (i.e., a z-axis direction shown in FIG. 4). In the state where the left controller 3 is detached from the main body apparatus 2, the left controller 3 can also be held in the orientation in which the left controller 3 is vertically long. The housing 31 has such a shape and a size that when held in the orientation in which the housing 31 is vertically long, the housing 31 can be held with one hand, particularly, the left hand. Further, the left controller 3 can also be held in the orientation in which the left controller 3 is horizontally long. When held in the orientation in which the left controller 3 is horizontally long, the left controller 3 may be held with both hands.

The left controller 3 includes a left analog stick (hereinafter, referred to as a “left stick”) 32 as an example of a direction input device. As shown in FIG. 4, the left stick 32 is provided on a main surface of the housing 31. The left stick 32 can be used as a direction input section with which a direction can be inputted. The user tilts the left stick 32 and thereby can input a direction corresponding to the direction of the tilt (and input a magnitude corresponding to the angle of the tilt). It should be noted that the left controller 3 may include a directional pad, a slide stick that allows a slide input, or the like as the direction input section, instead of the analog stick. Further, in the exemplary embodiment, it is possible to provide an input by pressing the left stick 32.
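As a rough illustration of how a stick tilt could be turned into a direction and a magnitude, the following minimal sketch may help. The function name `stick_to_direction`, the normalized axis range of [-1, 1], and the dead zone threshold are assumptions made for this example; the description above does not specify any of them.

```python
import math
from typing import Tuple


def stick_to_direction(x: float, y: float,
                       deadzone: float = 0.1) -> Tuple[Tuple[float, float], float]:
    """Convert raw stick axes (assumed to lie in [-1, 1]) to (unit direction, magnitude).

    The dead zone is a hypothetical parameter: tilts smaller than it are
    treated as no input.
    """
    length = math.hypot(x, y)
    magnitude = min(length, 1.0)  # clamp diagonal tilts to 1.0
    if magnitude < deadzone:
        return (0.0, 0.0), 0.0
    # Direction follows the direction of the tilt; magnitude follows its angle.
    return (x / length, y / length), magnitude


direction, magnitude = stick_to_direction(0.0, 0.5)
print(direction, magnitude)
```

Separating direction from magnitude lets the direction steer the character while the magnitude scales the movement speed, which is one common way to use an analog stick.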

The left controller 3 includes various operation buttons. The left controller 3 includes four operation buttons 33 to 36 (specifically, a right direction button 33, a down direction button 34, an up direction button 35, and a left direction button 36) on the main surface of the housing 31. Further, the left controller 3 includes a record button 37 and a “−” (minus) button 47. The left controller 3 includes a first L-button 38 and a ZL-button 39 in an upper left portion of a side surface of the housing 31. Further, the left controller 3 includes a second L-button 43 and a second R-button 44, on the side surface of the housing 31 on which the left controller 3 is attached to the main body apparatus 2. These operation buttons are used to give instructions depending on various programs (e.g., an OS program and an application program) executed by the main body apparatus 2.

Further, the left controller 3 includes a terminal 42 for the left controller 3 to perform wired communication with the main body apparatus 2.

FIG. 5 is six orthogonal views showing an example of the right controller 4. As shown in FIG. 5, the right controller 4 includes a housing 51. In the exemplary embodiment, the housing 51 has a vertically long shape, i.e., is shaped to be long in the up-down direction shown in FIG. 5 (i.e., the z-axis direction shown in FIG. 5). In the state where the right controller 4 is detached from the main body apparatus 2, the right controller 4 can also be held in the orientation in which the right controller 4 is vertically long. The housing 51 has such a shape and a size that when held in the orientation in which the housing 51 is vertically long, the housing 51 can be held with one hand, particularly the right hand. Further, the right controller 4 can also be held in the orientation in which the right controller 4 is horizontally long. When held in the orientation in which the right controller 4 is horizontally long, the right controller 4 may be held with both hands.

Similarly to the left controller 3, the right controller 4 includes a right analog stick (hereinafter, referred to as a “right stick”) 52 as a direction input section. In the exemplary embodiment, the right stick 52 has the same configuration as that of the left stick 32 of the left controller 3. Further, the right controller 4 may include a directional pad, a slide stick that allows a slide input, or the like, instead of the analog stick. Further, similarly to the left controller 3, the right controller 4 includes four operation buttons 53 to 56 (specifically, an A-button 53, a B-button 54, an X-button 55, and a Y-button 56) on a main surface of the housing 51. Further, the right controller 4 includes a “+” (plus) button 57 and a home button 58. Further, the right controller 4 includes a first R-button 60 and a ZR-button 61 in an upper right portion of a side surface of the housing 51. Further, similarly to the left controller 3, the right controller 4 includes a second L-button 65 and a second R-button 66.

Further, the right controller 4 includes a terminal 64 for the right controller 4 to perform wired communication with the main body apparatus 2.

FIG. 6 is a block diagram showing an example of the internal configuration of the main body apparatus 2. The main body apparatus 2 includes components 81 to 91, 97, and 98 shown in FIG. 6 in addition to the components shown in FIG. 3. Some of the components 81 to 91, 97, and 98 may be mounted as electronic components on an electronic circuit board and accommodated in the housing 11.

The main body apparatus 2 includes a processor 81. The processor 81 is an information processing section for executing various types of information processing to be executed by the main body apparatus 2. For example, the processor 81 may be composed only of a CPU (Central Processing Unit), or may be composed of a SoC (System-on-a-chip) having a plurality of functions such as a CPU function and a GPU (Graphics Processing Unit) function. The processor 81 executes an information processing program (e.g., a game program) stored in a storage section (specifically, an internal storage medium such as a flash memory 84, an external storage medium attached to the slot 23, or the like), thereby performing the various types of information processing.

The main body apparatus 2 includes the flash memory 84 and a DRAM (Dynamic Random Access Memory) 85 as examples of internal storage media built into the main body apparatus 2. The flash memory 84 and the DRAM 85 are connected to the processor 81. The flash memory 84 is a memory mainly used to store various data (or programs) to be saved in the main body apparatus 2. The DRAM 85 is a memory used to temporarily store various data used for information processing.

The main body apparatus 2 includes a slot interface (hereinafter, abbreviated as “I/F”) 91. The slot I/F 91 is connected to the processor 81. The slot I/F 91 is connected to the slot 23, and in accordance with an instruction from the processor 81, reads and writes data from and to the predetermined type of storage medium (e.g., a dedicated memory card) attached to the slot 23.

The processor 81 appropriately reads and writes data from and to the flash memory 84, the DRAM 85, and each of the above storage media, thereby performing the above information processing.

The main body apparatus 2 includes a network communication section 82. The network communication section 82 is connected to the processor 81. The network communication section 82 communicates (specifically, through wireless communication) with an external apparatus via a network. In the exemplary embodiment, as a first communication form, the network communication section 82 connects to a wireless LAN and communicates with an external apparatus, using a method compliant with the Wi-Fi standard. Further, as a second communication form, the network communication section 82 wirelessly communicates with another main body apparatus 2 of the same type, using a predetermined method for communication (e.g., communication based on a unique protocol or infrared light communication). It should be noted that the wireless communication in the above second communication form achieves the function of enabling so-called “local communication” in which the main body apparatus 2 can wirelessly communicate with another main body apparatus 2 placed in a closed local network area, and the plurality of main body apparatuses 2 directly communicate with each other to transmit and receive data.

The main body apparatus 2 includes a controller communication section 83. The controller communication section 83 is connected to the processor 81. The controller communication section 83 wirelessly communicates with the left controller 3 and/or the right controller 4. The communication method between the main body apparatus 2 and the left controller 3 and the right controller 4 is discretionary. In the exemplary embodiment, the controller communication section 83 performs communication compliant with the Bluetooth (registered trademark) standard with the left controller 3 and with the right controller 4.

The processor 81 is connected to the left terminal 17, the right terminal 21, and the lower terminal 27. When performing wired communication with the left controller 3, the processor 81 transmits data to the left controller 3 via the left terminal 17 and also receives operation data from the left controller 3 via the left terminal 17. Further, when performing wired communication with the right controller 4, the processor 81 transmits data to the right controller 4 via the right terminal 21 and also receives operation data from the right controller 4 via the right terminal 21. Further, when communicating with the cradle, the processor 81 transmits data to the cradle via the lower terminal 27. As described above, in the exemplary embodiment, the main body apparatus 2 can perform both wired communication and wireless communication with each of the left controller 3 and the right controller 4. Further, when the unified apparatus obtained by attaching the left controller 3 and the right controller 4 to the main body apparatus 2 or the main body apparatus 2 alone is attached to the cradle, the main body apparatus 2 can output data (e.g., image data or sound data) to the stationary monitor or the like via the cradle.

Here, the main body apparatus 2 can communicate with a plurality of left controllers 3 simultaneously (in other words, in parallel). Further, the main body apparatus 2 can communicate with a plurality of right controllers 4 simultaneously (in other words, in parallel). Thus, a plurality of users can simultaneously provide inputs to the main body apparatus 2, each using a set of the left controller 3 and the right controller 4. As an example, a first user can provide an input to the main body apparatus 2 using a first set of the left controller 3 and the right controller 4, and simultaneously, a second user can provide an input to the main body apparatus 2 using a second set of the left controller 3 and the right controller 4.

The main body apparatus 2 includes a touch panel controller 86, which is a circuit for controlling the touch panel 13. The touch panel controller 86 is connected between the touch panel 13 and the processor 81. On the basis of a signal from the touch panel 13, the touch panel controller 86 generates data indicating the position at which a touch input has been performed, for example, and outputs the data to the processor 81.

Further, the display 12 is connected to the processor 81. The processor 81 displays a generated image (e.g., an image generated by executing the above information processing) and/or an externally acquired image on the display 12.

The main body apparatus 2 includes a codec circuit 87 and speakers (specifically, a left speaker and a right speaker) 88. The codec circuit 87 is connected to the speakers 88 and a sound input/output terminal 25 and also connected to the processor 81. The codec circuit 87 is a circuit for controlling the input and output of sound data to and from the speakers 88 and the sound input/output terminal 25.

The main body apparatus 2 includes a power control section 97 and a battery 98. The power control section 97 is connected to the battery 98 and the processor 81. Further, although not shown in FIG. 6, the power control section 97 is connected to components of the main body apparatus 2 (specifically, components that receive power supplied from the battery 98, the left terminal 17, and the right terminal 21). Based on a command from the processor 81, the power control section 97 controls the supply of power from the battery 98 to the above components.

Further, the battery 98 is connected to the lower terminal 27. When an external charging device (e.g., the cradle) is connected to the lower terminal 27, and power is supplied to the main body apparatus 2 via the lower terminal 27, the battery 98 is charged with the supplied power.

FIG. 7 is a block diagram showing examples of the internal configurations of the main body apparatus 2, the left controller 3, and the right controller 4. It should be noted that the details of the internal configuration of the main body apparatus 2 are shown in FIG. 6 and therefore are omitted in FIG. 7.

The left controller 3 includes a communication control section 101, which communicates with the main body apparatus 2. As shown in FIG. 7, the communication control section 101 is connected to components including the terminal 42. In the exemplary embodiment, the communication control section 101 can communicate with the main body apparatus 2 through both wired communication via the terminal 42 and wireless communication not via the terminal 42. The communication control section 101 controls the method for communication performed by the left controller 3 with the main body apparatus 2. That is, when the left controller 3 is attached to the main body apparatus 2, the communication control section 101 communicates with the main body apparatus 2 via the terminal 42. Further, when the left controller 3 is detached from the main body apparatus 2, the communication control section 101 wirelessly communicates with the main body apparatus 2 (specifically, the controller communication section 83). The wireless communication between the communication control section 101 and the controller communication section 83 is performed in accordance with the Bluetooth (registered trademark) standard, for example.
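The selection between wired and wireless communication described above can be pictured with a very small sketch. The names `Transport` and `select_transport` are hypothetical and exist only for this illustration; actual controller firmware is not described at this level in the text.

```python
from enum import Enum


class Transport(Enum):
    WIRED = "wired"        # via the terminal (e.g., terminal 42 of the left controller)
    WIRELESS = "wireless"  # not via the terminal (e.g., Bluetooth)


def select_transport(attached_to_main_body: bool) -> Transport:
    """Pick the communication method from the attachment state.

    Attached: communicate via the terminal. Detached: communicate wirelessly.
    """
    return Transport.WIRED if attached_to_main_body else Transport.WIRELESS


print(select_transport(True).value)
print(select_transport(False).value)
```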

Further, the left controller 3 includes a memory 102 such as a flash memory. The communication control section 101 includes, for example, a microcomputer (or a microprocessor) and executes firmware stored in the memory 102, thereby performing various processes.

The left controller 3 includes buttons 103 (specifically, the buttons 33 to 39, 43, 44, and 47). Further, the left controller 3 includes the left stick 32. Each of the buttons 103 and the left stick 32 outputs information regarding an operation performed on itself to the communication control section 101 repeatedly at appropriate timings.

The left controller 3 includes inertial sensors. Specifically, the left controller 3 includes an acceleration sensor 104. Further, the left controller 3 includes an angular velocity sensor 105. In the exemplary embodiment, the acceleration sensor 104 detects the magnitudes of accelerations along predetermined three axial (e.g., xyz axes shown in FIG. 4) directions. It should be noted that the acceleration sensor 104 may detect an acceleration along one axial direction or accelerations along two axial directions. In the exemplary embodiment, the angular velocity sensor 105 detects angular velocities about predetermined three axes (e.g., the xyz axes shown in FIG. 4). It should be noted that the angular velocity sensor 105 may detect an angular velocity about one axis or angular velocities about two axes. Each of the acceleration sensor 104 and the angular velocity sensor 105 is connected to the communication control section 101. Then, the detection results of the acceleration sensor 104 and the angular velocity sensor 105 are outputted to the communication control section 101 repeatedly at appropriate timings.

The communication control section 101 acquires information regarding an input (specifically, information regarding an operation or the detection result of the sensor) from each of the input sections (specifically, the buttons 103, the left stick 32, and the sensors 104 and 105). The communication control section 101 transmits operation data including the acquired information (or information obtained by performing predetermined processing on the acquired information) to the main body apparatus 2. It should be noted that the operation data is transmitted repeatedly, once every predetermined time. The interval at which the information regarding an input is transmitted to the main body apparatus 2 may or may not be the same for each of the input sections.

The above operation data is transmitted to the main body apparatus 2, whereby the main body apparatus 2 can obtain inputs provided to the left controller 3. That is, the main body apparatus 2 can determine operations on the buttons 103 and the left stick 32 based on the operation data. Further, the main body apparatus 2 can calculate information regarding the motion and/or the orientation of the left controller 3 based on the operation data (specifically, the detection results of the acceleration sensor 104 and the angular velocity sensor 105).
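To make the idea of operation data more concrete, the sketch below shows one possible shape for a single sample and a deliberately crude way to estimate motion from the angular velocity readings. The `OperationData` fields, the button bitmask, the units, and the per-axis accumulation in `accumulate_rotation` are all assumptions for illustration; the text does not specify the data layout or the calculation actually used.

```python
from dataclasses import dataclass
from typing import Iterable, Tuple

Vec3 = Tuple[float, float, float]


@dataclass(frozen=True)
class OperationData:
    """One hypothetical operation data sample sent by a controller."""
    buttons: int                 # bitmask of pressed buttons (assumed encoding)
    stick: Tuple[float, float]   # stick tilt, each axis assumed in [-1, 1]
    acceleration: Vec3           # acceleration sensor result along x, y, z
    angular_velocity: Vec3       # angular velocity about x, y, z (rad/s assumed)


def accumulate_rotation(samples: Iterable[OperationData], dt: float) -> Vec3:
    """Sum angular velocity * dt per axis over a run of samples.

    This is only a rough measure of how far the controller turned about each
    axis; it is not a full orientation estimate, which would need to account
    for the order of rotations and sensor drift.
    """
    rx = ry = rz = 0.0
    for sample in samples:
        wx, wy, wz = sample.angular_velocity
        rx += wx * dt
        ry += wy * dt
        rz += wz * dt
    return (rx, ry, rz)


samples = [OperationData(0, (0.0, 0.0), (0.0, -9.8, 0.0), (0.0, 0.0, 0.5))
           for _ in range(4)]
print(accumulate_rotation(samples, dt=0.5))
```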

The left controller 3 includes a power supply section 108. In the exemplary embodiment, the power supply section 108 includes a battery and a power control circuit. Although not shown in FIG. 7, the power control circuit is connected to the battery and also connected to components of the left controller 3 (specifically, components that receive power supplied from the battery).

As shown in FIG. 7, the right controller 4 includes a communication control section 111, which communicates with the main body apparatus 2. Further, the right controller 4 includes a memory 112, which is connected to the communication control section 111. The communication control section 111 is connected to components including the terminal 64. The communication control section 111 and the memory 112 have functions similar to those of the communication control section 101 and the memory 102, respectively, of the left controller 3. Thus, the communication control section 111 can communicate with the main body apparatus 2 through both wired communication via the terminal 64 and wireless communication not via the terminal 64 (specifically, communication compliant with the Bluetooth (registered trademark) standard). The communication control section 111 controls the method for communication performed by the right controller 4 with the main body apparatus 2.

The right controller 4 includes input sections similar to the input sections of the left controller 3. Specifically, the right controller 4 includes buttons 113, the right stick 52, and inertial sensors (an acceleration sensor 114 and an angular velocity sensor 115). These input sections have functions similar to those of the input sections of the left controller 3 and operate similarly to the input sections of the left controller 3.

The right controller 4 includes a power supply section 118. The power supply section 118 has a function similar to that of the power supply section 108 of the left controller 3 and operates similarly to the power supply section 108.

[Outline of Game Processing of Exemplary Embodiment]

Next, the outline of an operation of game processing executed by the game system 1 according to the exemplary embodiment will be described. As described above, in the game system 1, the left controller 3 and the right controller 4 are attachable to and detachable from the main body apparatus 2. When a game is played in the state where the left controller 3 and the right controller 4 are attached to the main body apparatus 2, a game image is outputted to the display 12. When the main body apparatus 2 alone, in the state where the left controller 3 and the right controller 4 are detached from the main body apparatus 2, is attached to the cradle, the main body apparatus 2 can also output the game image to a stationary monitor or the like via the cradle. In the exemplary embodiment, description is given of an example case where the game is played in the latter mode. That is, the main body apparatus 2 alone, in the state where the left controller 3 and the right controller 4 are detached from the main body apparatus 2, is attached to the cradle, and the main body apparatus 2 outputs the game image and the like to the stationary monitor or the like via the cradle. In addition, a case where the user plays the game while holding the left controller 3 with the left hand and the right controller 4 with the right hand is assumed.

[Assumed Game]

A game assumed in the exemplary embodiment is a sport game in which teams compete with each other in a virtual three-dimensional space. Specifically, the game is a team-versus-team sport game in which an impact is given to a moving object to move the moving object, each team raises its score by satisfying predetermined conditions, and a winning team and a losing team are determined based on their scores at the end of the game when a time limit has expired. In the exemplary embodiment, a soccer game is assumed as an example of the sport game. Therefore, a soccer ball object (hereinafter, simply referred to as “ball”) is assumed as the above moving object. The above impact is, for example, a kick, heading, etc., performed on the ball by an athlete character object corresponding to an athlete in soccer. Of athlete character objects, a player character object that is an object to be operated by the user is simply referred to as “player character” in the following description. Meanwhile, the athlete character objects other than the player character are referred to as NPCs (Non-Player Characters).
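The rule that the winner is decided from the scores once the time limit has expired can be sketched as follows. The function name `decide_result`, the team names, and the handling of equal scores as a draw are assumptions for this example; the text does not say how a tie is resolved.

```python
from typing import Dict, Optional


def decide_result(scores: Dict[str, int], elapsed_s: float,
                  time_limit_s: float) -> Optional[str]:
    """Return the winning team once the time limit has expired.

    Returns None while the game is still running. Returning "draw" on equal
    scores is an assumption made only for this sketch.
    """
    if elapsed_s < time_limit_s:
        return None  # game still in progress
    best = max(scores.values())
    leaders = [team for team, points in scores.items() if points == best]
    return leaders[0] if len(leaders) == 1 else "draw"


print(decide_result({"red": 2, "blue": 1}, elapsed_s=300.0, time_limit_s=300.0))
```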

The exemplary embodiment is also applicable to sport games other than the soccer game, namely sport games simulating sports (mainly, ball games) in which a moving object is moved as in the aforementioned soccer. Examples of such sport games include tennis, volleyball, badminton, and hockey.

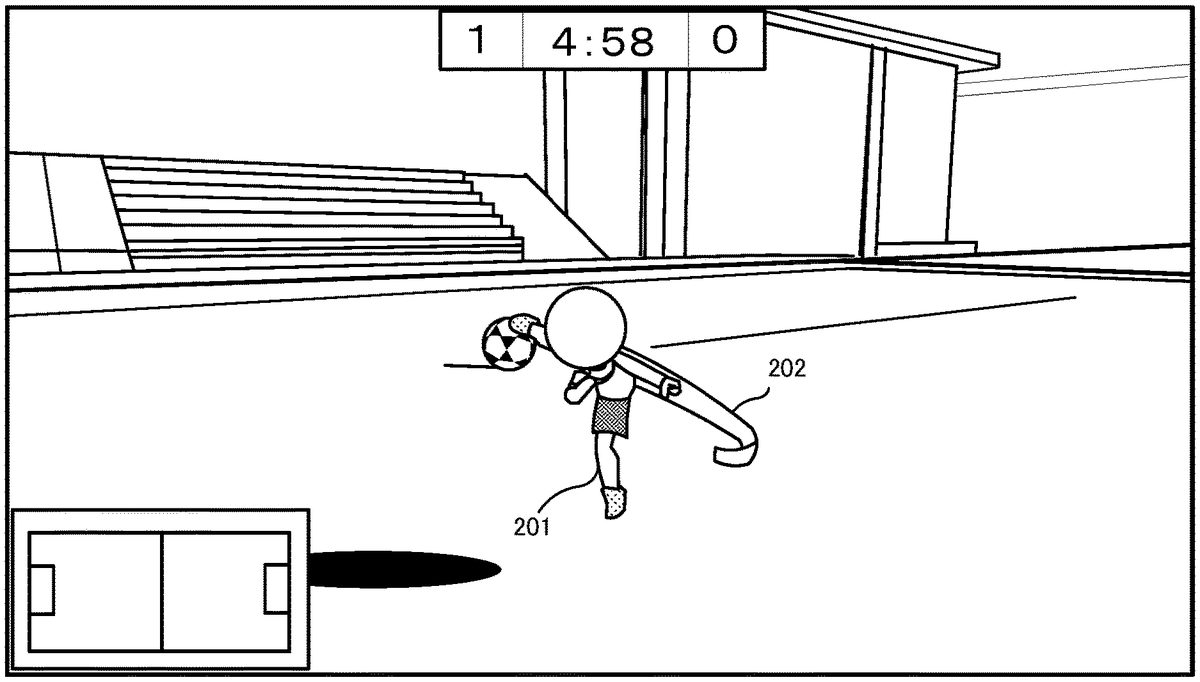

FIG. 8 shows an example of a screen of the soccer game assumed in the exemplary embodiment. This soccer game basically progresses on a screen in a third-person view. That is, a virtual camera is located at a position a predetermined distance apart from a player character 201 (located behind the player character 201 in a default setting), and the game screen is displayed such that the whole body of the player character 201 is included in the field of view of the virtual camera. In addition, the virtual camera moves along with movement of the player character 201 while keeping the predetermined distance.
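A minimal sketch of a camera that stays a fixed distance behind the player character might look like the following. The function name `follow_camera_position`, the y-up coordinate convention, and the choice to keep the camera at the character's height are assumptions for illustration only.

```python
from typing import Tuple

Vec3 = Tuple[float, float, float]


def follow_camera_position(player_pos: Vec3,
                           view_dir_xz: Tuple[float, float],
                           distance: float) -> Vec3:
    """Place the camera `distance` behind the player along the viewing direction.

    view_dir_xz is assumed to be a unit vector on the horizontal (x, z) plane
    giving the direction in which the camera looks. Calling this every frame
    with the current player position keeps the distance constant.
    """
    px, py, pz = player_pos
    dx, dz = view_dir_xz
    return (px - dx * distance, py, pz - dz * distance)


# Player at (1, 0, 5), camera looking along +z, 3 units behind the player.
print(follow_camera_position((1.0, 0.0, 5.0), (0.0, 1.0), 3.0))
```

A real implementation would typically also raise the camera and tilt it so that the whole body of the character stays in view; those offsets are omitted here.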

[Multi-Player Game]

The soccer game assumed in the exemplary embodiment is playable by multiple players through a network. More specifically, a plurality of game systems 1 (main body apparatuses 2) are communicably connected to each other, and each of the participant users of the soccer game takes charge of one of the members (athlete players) of the teams to play the game. Therefore, in the soccer game assumed in the exemplary embodiment, each user who participates in the game operates his/her own player character 201 as an athlete in the soccer team to which the user belongs. Specifically, each user can play the game as follows. That is, each user, at his/her own discretion, freely moves the player character 201 on a soccer field (hereinafter simply referred to as “field”) constructed in the virtual game space, and earns a point by causing the player character 201 to shoot a ball into a goal object (hereinafter, simply referred to as “goal”). That is, this game allows the user to experience, in a simulated manner, the feeling of acting as a member of a team in an actual soccer game. In the following description, athlete character objects operated by other users are also described as NPCs.

[Communication/Connection Form]

A supplemental description regarding a communication form between the game systems 1 will be given. In the exemplary embodiment, a communication form in which the game systems 1 communicate with each other via the Internet is assumed. However, in another embodiment, the main body apparatuses 2 may be connected so as to be directly and wirelessly communicable with each other. Moreover, in the exemplary embodiment, one user uses one game system 1 (one screen). In another embodiment, screen splitting may be used such that a plurality of users can simultaneously play the game by using one game system 1.

[Operation Method]

Next, an operation method in the soccer game will be described. In the soccer game, the following operation method is used. First, regarding movement of the player character 201, the user can freely move the player character 201 on the field by using a direction input to the left stick 32. Moreover, the user can cause the player character 201 to perform an action of swinging a leg, by performing an operation of swinging the right controller 4 (hereinafter referred to as “swing operation”). Hereinafter, the action of swinging a leg is referred to as a kick action. FIG. 9 shows an example of the game screen on which the player character 201 is performing the kick action. FIG. 9 shows an example of the kick action performed when the user swings the right controller 4 from the lower right side to the upper left side. On the game screen shown in FIG. 9, the player character 201 swings its right leg substantially obliquely upward. On this screen, a swing effect image 202 indicating the direction in which the leg is swung (kick direction) is also displayed. The swing effect image 202 is a band-like effect image indicating a (three-dimensional) trajectory of the swing.

In the exemplary embodiment, the kick direction of the kick action is determined based on the swing direction of the swing operation and the imaging direction of the virtual camera. Specifically, using the front of the imaging direction of the virtual camera as a reference, a direction according to the swing direction is used as a kick direction. That is, the kick direction in the virtual game space is determined such that the swing direction viewed from the player corresponds to the kick direction viewed on the screen. Although the direction of the player character201is not used as a reference, when the player character201performs a kick action, the player character201is turned such that its forward direction coincides with the imaging direction, and thereafter starts the kick action. As a result of the kick action, when the leg of the player character201collides with the ball, the player character201kicks the ball and thereby moves the ball.FIG.10shows an example of the game screen on which the leg of the player character201collides with the ball through the kick action. InFIG.10, the player character201is located at the same position as inFIG.9, and the ball is located at a position just ahead of and near the player character201. InFIG.10, a collision effect203is also displayed. The collision effect203visually indicates that, as a result of the kick action, the leg of the player character201has collided with the ball, that is, has kicked the ball.FIG.11shows an example of the game screen indicating the state where the kicked ball is moved in a direction that is a little diagonally left-forward with respect to the player character201. InFIG.11, a ball movement effect204indicating a trajectory of movement of the kicked ball is also displayed. InFIG.11, the player character201, which has ended the kick action, is just before landing on the ground. 
As described later in detail, the position and the direction of the virtual camera are automatically controlled according to movement of the ball such that the ball is displayed near the center of the screen.
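
The relationship between the swing direction, the imaging direction of the virtual camera, and the kick direction described above can be illustrated with a minimal sketch. The function name `kick_direction`, the yaw convention (yaw 0 faces the z-axis positive direction), and the specific formula (the camera's forward vector tilted by the two-dimensional swing vector) are assumptions made for illustration only; the description does not state the actual calculation.

```python
import math


def kick_direction(camera_yaw_deg, swing_xy):
    """Map a 2D swing direction (as seen by the user) to a 3D kick direction.

    Assumptions (hypothetical, not from the description): yaw 0 faces +z,
    the y axis is up, and the kick direction is the camera's forward vector
    offset by the swing vector along the camera's right and up axes.
    """
    yaw = math.radians(camera_yaw_deg)
    forward = (math.sin(yaw), 0.0, math.cos(yaw))
    # For a camera facing +z, screen-right is taken as -x, matching the
    # description in which a rightward movement is an x-axis negative one.
    right = (-math.cos(yaw), 0.0, math.sin(yaw))
    up = (0.0, 1.0, 0.0)
    sx, sy = swing_xy
    raw = tuple(f + sx * r + sy * u for f, r, u in zip(forward, right, up))
    # forward, right, and up are orthonormal, so the length is at least 1.
    length = math.sqrt(sum(c * c for c in raw))
    return tuple(c / length for c in raw)
```

Under these assumptions, a swing from the lower right to the upper left, such as `kick_direction(0.0, (-1.0, 1.0))`, yields a direction that points forward, upward, and toward the left of the screen, so that the swing direction viewed by the user corresponds to the kick direction viewed on the screen.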

In the soccer game, a plurality of motions regarding the kick action (hereinafter referred to as “kick motions”) are prepared. For example, different motion data are prepared for a kick motion of kicking the ball with the right leg and a kick motion of kicking the ball with the left leg. These motion data are stored in association with the kick directions. A motion according to the kick direction is selected as appropriate and displayed (reproduced).

The contents of movement (ejection angle and movement path) of the ball kicked by the kick action are determined based on the kick direction and the way of contact. In addition, a flying distance and a moving velocity of the ball are determined based on the strength of the swing in the swing operation regarding the kick action. For example, the greater the strength of the swing is, the stronger the kick is, and the farther the ball flies.

As described above, the basic operations in the soccer game include: causing the player character 201 to move by using the left stick 32; and causing the player character 201 to perform a kick action by the swing operation of the right controller 4. A method for calculating a swing direction and a method for determining a ball movement content will be described later in detail.

Next, a supplemental description regarding operations other than the aforementioned operations during the soccer game will be given. First, regarding the aforementioned kick action, if the user twists his/her wrist (rotation of the right controller 4 around the z axis) during the swing operation, the trajectory of movement of the ball can be curved according to the angle of the twist. In addition to the kick action, the user can cause the player character 201 to perform a heading action. In the exemplary embodiment, the user can cause the player character 201 to perform a heading action by simultaneously swinging the right controller 4 and the left controller 3. This simultaneous swing need not be exactly “simultaneous” in terms of time, and the swings may be regarded as “simultaneous” when the right controller 4 and the left controller 3 are swung at substantially the same timing even if there is some time lag.

The user can cause the player character 201 to jump by pressing the B-button 54. Regarding movement of the player character 201, the user can cause the player character 201 to dash by operating the left stick 32 while pressing the ZL-button 39.

Regarding the heading action and the dash, consumption of stamina may be taken into account. That is, a “stamina” parameter is prepared as one of the parameters regarding the player character 201 (in this case, a stamina gauge or the like may be displayed on the screen). A predetermined amount of stamina consumption may be required for a heading action or a dash.

[Control of Virtual Camera]

Next, control of the virtual camera in the soccer game will be described. As described above, in the soccer game, the game screen is expressed in a third-person view. Therefore, basically, the position and the direction of the virtual camera are controlled such that the player character 201 is always included in the field of view of the virtual camera. Moreover, in the soccer game, in order to prevent the user from losing sight of the ball, the virtual camera is controlled such that the imaging direction of the virtual camera (direction of the virtual camera) follows the position of the ball to some extent.

First, a default state of the virtual camera will be described. FIG. 12 shows a positional relationship, as a default state, between the virtual camera and the player character 201. In the default state, the virtual camera is located at a position that is on the back side of the player character 201 and is a predetermined distance apart from the player character 201. In the default state, the virtual camera is in an orientation such that the imaging direction thereof is orthogonal to a y axis of a virtual space coordinate system. A height (position on the y axis) of the virtual camera in the default state is set to a height such that, when the player character 201 is not floating in the air (when it is in contact with the field), the head of the player character 201 is displayed near the center of the game screen.

When the player character 201 moves in a z-axis positive direction of the virtual space coordinate system from the state shown in FIG. 12, the virtual camera also moves and follows the player character 201 from behind while keeping the predetermined distance.

Next, a virtual camera direction follow-up control will be described. For example, it is assumed that, from the state shown inFIG.12, only the ball has moved rightward (in an x-axis negative direction of the virtual space coordinate system) while the player character201has not moved. In this case, the virtual camera is controlled as follows. That is, the position and the direction of the virtual camera are changed such that the imaging direction follows the position of the ball as shown inFIG.13. Specifically, the position of the virtual camera is changed so as to have a movement trajectory that rotates around the player character201while keeping the predetermined distance (i.e., the player character201is always inside the field of view of the virtual camera). The direction of the virtual camera is controlled such that the ball is displayed at substantially the center of the game screen. This allows the ball to be always displayed in the game screen, and prevents the user from losing sight of the ball.

When both the player character 201 and the ball are moving, the direction of the virtual camera is controlled such that the ball is substantially in front of the imaging direction as shown in FIG. 13, while the position of the virtual camera is changed according to the position of the player character 201.
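
As a minimal sketch of how the position of the virtual camera may be derived from the position of the player character and the imaging direction while keeping the predetermined distance, consider the following. The function name `camera_position`, the yaw convention (yaw 0 faces the z-axis positive direction), and the numeric defaults for `distance` and `height` are hypothetical values chosen for illustration.

```python
import math


def camera_position(player_pos, camera_yaw_deg, distance=6.0, height=1.5):
    """Place the camera behind the player character along the imaging direction.

    Assumptions (hypothetical): yaw 0 faces +z, the camera height is a fixed
    y coordinate, and `distance` is the predetermined horizontal distance.
    """
    yaw = math.radians(camera_yaw_deg)
    forward_x, forward_z = math.sin(yaw), math.cos(yaw)
    px, _py, pz = player_pos
    # Step back from the player along the imaging direction so the player
    # character stays inside the field of view.
    return (px - distance * forward_x, height, pz - distance * forward_z)
```

With these assumptions, changing only the yaw moves the camera along a circle around the player character, and changing only the player position translates the camera by the same amount, which corresponds to the two behaviors described above.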

Here, a principle of a control for causing the direction of the virtual camera to follow the ball in the soccer game, will be described.FIG.14illustrates the principle. First, in the virtual space coordinate system, a target point211is set at a position a predetermined distance apart in a vertically upward direction from the position of the ball. The target point is calculated as three-dimensional coordinates. Furthermore, a follow-up determination area212which is a two-dimensional rectangular area is set on an xy plane (projection plane) of a virtual camera coordinate system. In the processing of the soccer game, the direction of the virtual camera is changed as needed such that the target point211falls within the follow-up determination area212on the xy plane in the virtual camera coordinate system. For example, as shown inFIG.15, when the target point is on the left side of the follow-up determination area212, the direction of the virtual camera is changed such that the follow-up determination area212moves leftward. The direction of the virtual camera is not instantaneously changed, but is changed over a predetermined time such that the follow-up determination area212gradually approaches the target point. At this time, the greater the distance between the target point211and the follow-up determination area212is, the greater the angle of change, per unit time, in the direction of the virtual camera becomes (i.e., the greater the distance from the ball is, the higher the follow-up speed of the direction of the virtual camera becomes).
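
The follow-up principle above can be sketched as a per-frame calculation on the projection plane. In the sketch below, the function name `follow_up_turn`, the proportional gain `gain_deg`, the area centered on the origin of the projection plane, and the sign convention (a negative x value means the target point is on the left) are all assumptions; the description only states that the angle of change per unit time grows with the distance between the target point and the follow-up determination area.

```python
def follow_up_turn(target_xy, half_width=1.0, half_height=1.0,
                   gain_deg=30.0, dt=1.0 / 60.0):
    """Return the (yaw, pitch) change in degrees for one frame.

    Assumptions (hypothetical): the follow-up determination area is a
    rectangle centered on the projection-plane origin, and the turn rate is
    proportional to how far the target point lies outside that rectangle.
    """
    def overshoot(value, half):
        # Signed distance from the nearest edge; 0.0 when inside the area.
        clamped = max(-half, min(half, value))
        return value - clamped

    tx, ty = target_xy
    dx = overshoot(tx, half_width)
    dy = overshoot(ty, half_height)
    return (gain_deg * dx * dt, gain_deg * dy * dt)
```

When the target point is inside the area, both components are zero and the direction of the virtual camera is left unchanged; the farther the target point lies outside the area, the larger the per-frame change becomes.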

In a case where the target point 211 is included in the follow-up determination area 212, the operation of causing the direction of the virtual camera to follow the ball is stopped in the exemplary embodiment. Therefore, the follow-up operation of the direction of the virtual camera can be stopped even when the target point 211 is not located in the center of the follow-up determination area 212, for example, when the target point 211 is located near an outer frame of the follow-up determination area 212. In the soccer game, the ball is constantly moving in many cases. If the virtual camera were caused to exactly follow the ball such that the ball is always displayed in the center of the screen, the game screen could become difficult to view. Therefore, in the exemplary embodiment, the follow-up operation is stopped when the ball comes roughly near the center of the game screen. In this regard, in another embodiment, for example, a control of changing the direction of the virtual camera may be performed at a gentler pace than in the case where the target point 211 is outside the follow-up determination area 212, until the target point 211 is located at a center point of the follow-up determination area 212.

The above-described virtual camera direction follow-up control is also applicable to a height direction (a y-axis direction of the virtual camera coordinate system), with the following exceptional control. FIG. 16 illustrates a principle of this control. In the exemplary embodiment, when a y-axis position (height from the ground) of a center point of the ball in the virtual space coordinate system is equal to or lower than a first height 213, a control is also performed such that the y-axis position of the target point 211 is not lower than a second height 214. For example, when the center point of the ball is present at a position equal to or higher than the first height 213 from the ground, the y-axis position of the target point 211 is set at a position equal to or higher than the second height 214 as shown in FIG. 17.

Meanwhile, as shown in FIG. 18, when the height of the center point of the ball from the ground is equal to or lower than the first height 213, the target point 211 is set such that the y-axis position thereof is not lower than the second height 214. In this case, a linear distance between the center point of the ball and the target point 211 may be longer than that in the case of FIG. 17. That is, when the ball is close to the ground to a certain extent, the virtual camera direction follow-up control in the y-axis direction is not performed. Thus, for example, in a scene in which the ball continuously bounces at a low position, the game screen is prevented from appearing to shake up and down with the bouncing ball, thereby providing an easy-to-view game screen.
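
The height handling of the target point can be sketched as a simple lower bound. The function name `target_point_y` and the numeric values of `offset`, `first_height`, and `second_height` are hypothetical; they are chosen here so that the second height equals the first height plus the offset, which keeps the result continuous at the first height.

```python
def target_point_y(ball_center_y, offset=1.0, first_height=2.0,
                   second_height=3.0):
    """Return the y-axis position of the target point for a given ball height.

    Assumptions (hypothetical values): the target point normally sits
    `offset` above the ball center, and is held at `second_height` or higher
    while the ball center is at or below `first_height`.
    """
    raised = ball_center_y + offset
    if ball_center_y <= first_height:
        # Low ball: do not let the target point drop below the second height,
        # so low bounces do not move the camera up and down.
        return max(raised, second_height)
    return raised
```

In this sketch, a ball bouncing between heights 0.3 and 1.9 always yields the same target height, whereas a ball at height 4.0 yields a target height of 5.0.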

Next, a camera control using the right stick 52 will be described. In this soccer game, basically, the follow-up control for causing the ball to be always included in the game screen as described above is automatically performed. Aside from this, the user can temporarily change the direction of the virtual camera by operating the right stick 52. That is, while a direction input is being made with the right stick 52, the direction of the virtual camera can be changed in a direction according to the input direction. For example, when the right stick 52 is tilted to the right, the direction of the virtual camera can be rotated rightward while the right stick 52 is tilted. However, in the processing of this soccer game, from the viewpoint of preventing the user from losing sight of the ball, the range of this rotation (movable range) is limited. Specifically, as shown in FIG. 19, assuming that the forward direction of the virtual camera when the input to the right stick 52 is neutral is 0°, a range of 120° on each of the left side and the right side is set as a movable range of the virtual camera due to the right stick 52. The reason is as follows. That is, in this soccer game (in relation to the angle of view or the like of the virtual camera), the above range prevents the player character 201 and the ball from going outside of the game screen. The value of 120° is an example, and any value may be used as appropriate according to the angle of view or the like of the virtual camera as long as the player character 201 and the ball are prevented from going outside of the game screen.
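
The limited movable range can be sketched as a clamp applied to the manually rotated angle. The function name `update_manual_yaw`, the rotation speed `speed_deg_per_s`, and the sign convention (a positive stick value rotates the camera rightward) are assumptions; only the 120° limit on each side comes from the description, and it is itself given as an example value.

```python
def update_manual_yaw(current_deg, stick_x, speed_deg_per_s=90.0,
                      dt=1.0 / 60.0, limit_deg=120.0):
    """Rotate the camera by the right-stick input and clamp to the range.

    Assumptions (hypothetical): `current_deg` is measured from the neutral
    forward direction (0 degrees), and `stick_x` is in the range -1.0 to 1.0.
    """
    candidate = current_deg + stick_x * speed_deg_per_s * dt
    # Keep the rotation within the movable range on both sides.
    return max(-limit_deg, min(limit_deg, candidate))
```

Holding the stick fully to the right therefore rotates the camera until it reaches 120° and then keeps it there. The return to the neutral direction when the input is released is not modeled in this sketch.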

Furthermore, when a direction input is caused by the right stick52, a control as follows is also performed regarding the automatic virtual camera direction follow-up control as described above. That is, first, when such an input has occurred, there is a high possibility that the user wants to confirm the situation in the direction to which the virtual camera is directed. Therefore, in this case, a control of maintaining the direction of the virtual camera is performed while the automatic virtual camera direction follow-up control as described above is temporarily inhibited. Specifically, when a direction input is caused by the right stick52, the size of the follow-up determination area212is temporarily changed according to the input direction to prevent the automatic follow-up.FIG.20andFIG.21show an example of the follow-up determination area212in a case where the ball stops and the right stick52is tilted to the right.FIG.20shows a positional relationship between the ball, the target point211, and the follow-up determination area212as viewed from the virtual camera (the player character201is omitted).FIG.21is an overhead view of the positional relationship shown inFIG.20. In the example shown inFIG.20, the follow-up determination area212has a shape obtained by extending, to the right, the breadth of the follow-up determination area212shown inFIG.14, according to the rightward direction input to the right stick52(magnitude of tilting of the right stick52). Since the follow-up determination area212is increased according to the input direction of the right stick52, even when the imaging direction of the virtual camera is rotated rightward, the target point211is maintained to be inside the follow-up determination area212. This inhibits the automatic virtual camera direction follow-up operation as described above, and maintains the virtual camera facing to the right according to the input of the user.

When the direction input to the right stick 52 is canceled (returned to neutral), the follow-up determination area 212 is no longer extended. Moreover, when the direction input to the right stick 52 is neutral and the target point 211 falls within the follow-up determination area 212, the follow-up determination area 212 is reduced. Thus, the automatic follow-up control is naturally resumed.
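
The extension and reduction of the follow-up determination area can be sketched for a single edge. The sketch below handles only the right edge for a rightward input; the function name `update_right_edge`, the values of `max_extension` and `shrink_step`, and the gradual shrink toward the initial size are assumptions made for illustration. The other edges would be handled symmetrically.

```python
def update_right_edge(current_right, base_right, stick_x, target_x,
                      max_extension=4.0, shrink_step=0.5):
    """Return the new right edge of the follow-up determination area.

    Assumptions (hypothetical): `stick_x` > 0 is a rightward tilt in the
    range 0.0 to 1.0, the extension is proportional to the tilt, and the
    area shrinks by `shrink_step` per call once the input is neutral and
    the target point lies at or left of the current right edge.
    """
    if stick_x > 0.0:
        # Rightward input: extend the area according to the tilt magnitude.
        return base_right + stick_x * max_extension
    if target_x <= current_right:
        # Neutral input and target inside: reduce toward the initial size.
        return max(base_right, current_right - shrink_step)
    # Neutral input but target still outside: leave the edge as it is.
    return current_right
```

While the area is extended, the target point stays inside it even after the camera has rotated rightward, so the automatic follow-up remains inhibited; once the input returns to neutral, the area returns to its initial size and the follow-up resumes.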

In another embodiment, when the target point 211 is inside the follow-up determination area 212, a control of changing the direction of the virtual camera at a gentle pace until the target point 211 comes to the center of the follow-up determination area 212 may be performed.

In the exemplary embodiment, the automatic follow-up is temporarily inhibited by enlarging the follow-up determination area 212 in response to an input to the right stick 52. In this regard, in another embodiment, while an input to the right stick 52 is maintained, a control of degrading follow-up performance may be performed instead of completely inhibiting the follow-up operation.

[Control of Other Behaviors]

In the processing of the soccer game, the following control is performed regarding a behavior of the player character 201, in addition to the aforementioned processing. That is, when the positional relationship between the player character 201 and the ball satisfies a predetermined condition, a control of directing the line of sight of the player character 201 toward the ball is also performed. For example, the predetermined condition is that the distance between the player character 201 and the ball is equal to or smaller than a predetermined distance and that a difference in angle between the forward direction of the player character 201 and the direction from the player character 201 to the position of the ball is equal to or smaller than a predetermined angle. This allows the user to easily visually recognize the direction in which the ball is present.
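
The example condition above, combining a distance threshold and an angle threshold, can be sketched as follows. The function name `should_look_at_ball` and the threshold values `max_distance` and `max_angle_deg` are hypothetical; the description only refers to a predetermined distance and a predetermined angle.

```python
import math


def should_look_at_ball(player_pos, forward, ball_pos,
                        max_distance=5.0, max_angle_deg=60.0):
    """Decide whether the line of sight should be directed toward the ball.

    Assumptions (hypothetical thresholds): positions and the forward
    direction are 3D tuples in the virtual space coordinate system.
    """
    to_ball = tuple(b - p for b, p in zip(ball_pos, player_pos))
    distance = math.sqrt(sum(c * c for c in to_ball))
    forward_len = math.sqrt(sum(c * c for c in forward))
    if distance == 0.0 or forward_len == 0.0:
        return False  # The angle is undefined for a zero-length vector.
    if distance > max_distance:
        return False
    cos_angle = sum(f * t for f, t in zip(forward, to_ball))
    cos_angle /= forward_len * distance
    # Guard against rounding slightly outside the domain of acos.
    cos_angle = max(-1.0, min(1.0, cos_angle))
    return math.degrees(math.acos(cos_angle)) <= max_angle_deg
```

For example, with the player character at the origin facing the z-axis positive direction, a ball three units straight ahead satisfies the condition, whereas a ball three units directly to the side (a 90° difference) or ten units ahead does not.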

[Details of Soccer Game Processing of Exemplary Embodiment]

Next, soccer game processing according to the exemplary embodiment will be described in more detail with reference to FIGS. 22 to 33.

[Data to be Used]

First, various data to be used for the soccer game processing will be described.FIG.22is a memory map showing an example of various data stored in the DRAM85of the main body apparatus2. The DRAM85of the main body apparatus2stores therein a game program301, image data302, operation data303, reception data304received from another apparatus, player character relevant data305, NPC relevant data306, motion data307, kick motion correspondence data308, a ball movement parameter309, a virtual camera parameter310, target point data311, follow-up determination area data312, game situation data313, and the like.

The game program 301 is a program for executing the soccer game processing.

The image data 302 is data of various images to be used in the soccer game processing. The image data 302 includes modeling data of a three-dimensional model, two-dimensional image data for background, and the like.

The operation data303is obtained from the left controller3and the right controller4, and indicates the content of a user's operation.FIG.23shows an example of a data structure of the operation data303. The operation data303includes at least digital button data321, right stick data322, left stick data323, right inertial sensor data324, and left inertial sensor data325. The digital button data321is data indicating pressed states of various buttons included in the controller. The right stick data322is data for indicating the content of an operation performed to the right stick52. Specifically, two-dimensional data of x, y is included. The left stick data323is data for indicating the content of an operation performed to the left stick32. The right inertial sensor data324is data indicating detection results of the inertial sensors such as the acceleration sensor114and the angular velocity sensor115of the right controller4. Specifically, 3-axis acceleration data and 3-axis angular velocity data are included. The left inertial sensor data325is data indicating detection results of the inertial sensors such as the acceleration sensor104and the angular velocity sensor105of the left controller3. In the following description, the right inertial sensor data324and the left inertial sensor data325may occasionally be referred to collectively as “inertial sensor data”.
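
The structure of the operation data described above can be sketched with plain data classes. The class and field names below, and the use of a dictionary keyed by button name for the digital button data, are assumptions for illustration; the description only states which items are included.

```python
from dataclasses import dataclass, field
from typing import Dict, Tuple

Vec2 = Tuple[float, float]
Vec3 = Tuple[float, float, float]


@dataclass
class InertialSensorData:
    """Detection results of one controller's inertial sensors."""
    acceleration: Vec3 = (0.0, 0.0, 0.0)      # 3-axis acceleration
    angular_velocity: Vec3 = (0.0, 0.0, 0.0)  # 3-axis angular velocity


@dataclass
class OperationData:
    """Hypothetical layout mirroring the items listed for the operation data."""
    digital_buttons: Dict[str, bool] = field(default_factory=dict)
    right_stick: Vec2 = (0.0, 0.0)  # two-dimensional x, y data
    left_stick: Vec2 = (0.0, 0.0)
    right_inertial: InertialSensorData = field(default_factory=InertialSensorData)
    left_inertial: InertialSensorData = field(default_factory=InertialSensorData)
```

For instance, `OperationData(digital_buttons={"B": True}, left_stick=(0.0, 1.0))` would represent a frame in which the B-button is pressed while the left stick is tilted forward, with both controllers otherwise at rest.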

Referring back to FIG. 22, the reception data 304 from another apparatus is game data received from another game system 1, and is used for controlling the progress of the soccer game. Specifically, operation data 303 and the like of another game system 1 (indicating the operation content of the corresponding user) are received and stored in the reception data 304.

The player character relevant data305is data used for controlling the player character201.FIG.24shows an example of a data structure of the player character relevant data305. The player character relevant data305includes at least current position data331, forward direction data332, line-of-sight direction data333, and a currently-executed action334. The current position data331is data indicating the current position of the player character201in the virtual game space. The current position data331is indicated as three-dimensional coordinates in the virtual space coordinate system, for example. The forward direction data332is data indicating in which direction the front of the player character201faces in the virtual space coordinate system. The line-of-sight direction data333is data indicating a direction of the line of sight of the player character201. In other words, the line-of-sight direction data333is data indicating the orientation of the head of the player character201. The currently-executed action334is data indicating whether or not the player character201is currently executing an action of performing a predetermined motion based on the motion data307described later. If the player character201is currently executing such an action, the currently-executed action334indicates the content of the action (motion). In the exemplary embodiment, as examples of the predetermined action, “(plural kinds of) kick actions” and “heading action” are described. When none of these actions is currently executed, “non-action” is set in the currently-executed action334. For example, when the player character201is just running, this running is regarded as “non-action”.

Referring back to FIG. 22, the NPC relevant data 306 is data relating to characters (athletes) other than the player character 201. Although not shown in FIG. 22, the NPC relevant data 306 includes table data indicating which user takes charge of which athlete character in the game play through the network. Furthermore, data similar to the player character relevant data 305, which is generated for each NPC based on the reception data 304 from another apparatus, is stored in the NPC relevant data 306.

The motion data307defines predetermined motions of the player character201. Specifically, the motion data307is data defining the contents of motions regarding the kick actions (kick motions) and the content of a motion regarding the heading action (heading motion). In addition, in the exemplary embodiment, a plurality of kick motions according to the aforementioned swing direction are defined.FIG.25shows an example of a data structure of the motion data307. The motion data307includes at least motion ID341and motion content342. The motion ID341is an ID for uniquely identifying the corresponding motion. In the exemplary embodiment, each of the plurality of kick motions is represented in a form of “kick (number)”. Regarding the heading motion, only one type of heading motion is used in the exemplary embodiment. In another embodiment, plural types of heading motions may be prepared. In the exemplary embodiment, when the player character201is currently executing a kick action or a heading action, the corresponding motion ID341is set in the currently-executed action334.

Referring back to FIG. 22, the kick motion correspondence data 308 is data defining the association of each kick motion with the corresponding swing direction. This data is used for determining one kick motion from among the plural types of kick motions on the basis of the swing direction. FIG. 26 shows an example of a data structure of the kick motion correspondence data 308. The kick motion correspondence data 308 includes motion ID 351 and corresponding swing direction 352. The motion ID 351 corresponds to the motion ID 341 of the motion data 307. In the exemplary embodiment, only motion IDs regarding the kick motions are included in the kick motion correspondence data 308. The corresponding swing direction 352 is data defining a swing direction for which the kick motion indicated by the corresponding motion ID 351 is used. Although described later in detail, in the exemplary embodiment, the swing direction is calculated as a two-dimensional vector. Therefore, as the content of the corresponding swing direction 352, for example, a direction expressed as a two-dimensional vector, or a predetermined range including a plurality of directions, is defined (e.g., a range from 40° to 50° in a diagonally upper-right direction is defined by a two-dimensional vector).
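
Selecting one kick motion from the swing direction can be sketched as a lookup over angular ranges. The table contents below (four quadrant-wide ranges and the motion IDs `kick1` to `kick4`) and the function name `select_kick_motion` are hypothetical; the actual ranges and IDs are not given in the description.

```python
import math

# Hypothetical correspondence table: (motion ID, lower bound, upper bound),
# with angles in degrees measured counterclockwise from the rightward
# direction, each range including its lower bound only.
KICK_MOTION_TABLE = [
    ("kick1", 0.0, 90.0),     # toward the upper right
    ("kick2", 90.0, 180.0),   # toward the upper left
    ("kick3", 180.0, 270.0),  # toward the lower left
    ("kick4", 270.0, 360.0),  # toward the lower right
]


def select_kick_motion(swing_xy):
    """Return the motion ID whose range contains the swing direction."""
    x, y = swing_xy
    if x == 0.0 and y == 0.0:
        return None  # No direction can be derived from a zero vector.
    angle = math.degrees(math.atan2(y, x)) % 360.0
    if angle >= 360.0:
        angle = 0.0  # Guard against rounding up to exactly 360 degrees.
    for motion_id, low, high in KICK_MOTION_TABLE:
        if low <= angle < high:
            return motion_id
    return None
```

A swing toward the upper right, such as `(1.0, 1.0)`, corresponds to 45° and therefore selects `kick1` in this hypothetical table. A finer table, for example with a dedicated entry for the range from 40° to 50°, could be substituted without changing the lookup.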

Referring back to FIG. 22, the ball movement parameter 309 is a parameter for controlling movement of the ball. The ball movement parameter 309 includes: data indicating the current position of the ball in the virtual game space; and parameters indicating the moving direction, the moving velocity, etc., of the ball from the current position. The content of the ball movement parameter 309 is changed as appropriate by collision with a predetermined athlete character object, and movement of the ball is controlled, for each frame, based on the parameter.
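
A per-frame update based on such a parameter can be sketched as follows. The class name `BallMovementParameter`, its field layout, and the straight-line update are assumptions for illustration; gravity, bouncing, curving, and collision handling are deliberately omitted.

```python
from dataclasses import dataclass
from typing import Tuple

Vec3 = Tuple[float, float, float]


@dataclass
class BallMovementParameter:
    """Hypothetical layout: current position, moving direction, and speed."""
    position: Vec3
    direction: Vec3  # assumed to be a unit vector
    speed: float     # distance moved per second


def step_ball(ball, dt):
    """Advance the ball along its moving direction for one frame."""
    new_position = tuple(
        p + d * ball.speed * dt for p, d in zip(ball.position, ball.direction)
    )
    return BallMovementParameter(new_position, ball.direction, ball.speed)
```

In this sketch, a collision with an athlete character object would simply replace `direction` and `speed` before the next call to `step_ball`.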

The virtual camera parameter 310 is data for designating the position, the imaging direction, etc., of the virtual camera (in the exemplary embodiment, the angle of view is fixed). The position and the direction of the virtual camera are controlled, for each frame, based on the parameter.

The target point data 311 is data indicating the target point 211 as shown in FIG. 14, etc. The follow-up determination area data 312 is data defining the size and the shape of the follow-up determination area 212 as shown in FIG. 14, etc. As an initial value of the follow-up determination area 212, a substantially square shape having a predetermined size is defined, for example.

The game situation data 313 is data for managing the progress of the soccer game. Specifically, the game situation data 313 includes data indicating the scoring status of each team, the remaining game time, and the like. Determination as to whether the game has ended, and win/loss determination, are performed based on the data.

In addition to the above data, various data required for the game processing are generated as appropriate, and are stored in the DRAM 85.

[Details of Processing Executed by Processor 81]

Next, the soccer game processing according to the exemplary embodiment will be described in detail. FIG. 27 is a flowchart showing the soccer game processing in detail. Before this processing starts, preparations for starting the soccer game, such as network connection with other game systems 1, grouping of users into teams, and position assignment, have already been made. If the number of participants is insufficient, NPCs corresponding to the shortfall may be AI-controlled. In this case, the AI may be treated as “other users”. A process loop of steps S2 to S12 shown in FIG. 27 is repeatedly executed for each frame.

The flowchart is merely an example of the processing. Therefore, the order of process steps may be changed as long as the same result is obtained. In addition, the values of variables and thresholds used in determination steps are also merely examples, and other values may be used according to need.

[Game Start Process]

In FIG. 27, first, in step S1, the processor 81 executes a game start process. Specifically, the processor 81 executes an initialization process for initializing various data to be used in the following processes. In addition, the processor 81 arranges the athlete character objects in the virtual game space. Furthermore, the processor 81 performs a representation process for game start, and thereafter, arranges the virtual camera at the aforementioned default position (behind the player character 201).

[Reception of Data from Another Game System]

Next, in step S2, the processor 81 receives game data related to another user from another game system 1, and stores the data as reception data 304 from another apparatus.

Next, in step S3, the processor 81 acquires operation data 303 (of its own game system 1). In subsequent step S4, the processor 81 executes a player character control process for controlling the player character 201 on the basis of the operation data.

[Player Character Control Process]

FIG.28is a flowchart showing the player character control process in detail. InFIG.28, in step S21, the processor81, with reference to the currently-executed action334, determines whether or not the player character201is currently executing a predetermined action (in this example, a kick action or a heading action). More specifically, the processor81determines whether or not the content of the currently-executed action334is “non-action”. When the content is not “non-action”, the processor81determines that the player character201is currently executing a predetermined action. As a result of the determination, when the player character201is not currently executing a predetermined action (NO in step S21), in step S22, the processor81determines whether or not a movement operation input for the player character201has been made, on the basis of the operation data303. Since the movement operation is an input operation to the left stick32in this example, the processor81performs this determination with reference to the left stick data323. Here, a jump operation and a dash operation are also treated as movement operations. Therefore, whether or not the B-button54has been pressed (jump operation) or whether or not the ZL-button39has been pressed (dash operation) is also determined.

As a result of the determination, when a movement operation input has been made (YES in step S22), in step S23, the processor 81 updates the content of the current position data 331 on the basis of the content of the movement operation input, and moves the player character 201 on the basis of the updated current position data 331. Meanwhile, when a movement operation input has not been made (NO in step S22), the process in step S23 is skipped and the processing proceeds to the next step.

Next, in step S24, the processor 81, with reference to the operation data 303 (inertial sensor data), determines whether or not a heading operation has been performed. Here, a supplemental description will be given for determination of the heading operation. In the exemplary embodiment, the heading operation is a user's operation of simultaneously swinging the right controller 4 and the left controller 3. In the exemplary embodiment, whether or not such a swing operation has occurred is determined as follows. That is, regarding the left controller 3, when an action of “downward swing” of a predetermined magnitude or more has been detected, it is determined that the swing operation regarding the simultaneous swing has occurred. Meanwhile, regarding the right controller 4, if an action of swinging the right controller 4 with a predetermined magnitude or more is detected regardless of the swing direction, it is determined that the swing operation regarding the simultaneous swing has occurred. The above determination is made in consideration of ease of the operation. Regarding the left controller 3, the left stick 32 is mainly operated. Meanwhile, unlike the left controller 3, the swing operation is a main operation for the right controller 4 because the swing operation is also required for a kick action described later. Therefore, for example, if a motion of “downward swing” similar to that of the left controller 3 were required of the right controller 4, the simultaneous swing could become harder to establish, because the kick action described later is also performed by the swing operation. Therefore, the swing direction of the right controller 4 is not taken into consideration, and the simultaneous swing is established when a swing motion of a predetermined magnitude or more has occurred. Thus, ease of the heading operation is enhanced. Therefore, even a user's operation of swinging the left controller 3 downward while the user is swinging the right controller 4 can cause the heading operation to be established, which prevents the user from missing a sudden chance to head the ball.
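
The asymmetric check described above can be sketched as follows. This is a minimal illustration under stated assumptions, not the implementation of the embodiment: the `Swing` record, the direction labels, and the threshold value are hypothetical names introduced here, and the sketch does not model how the swing magnitude and direction are derived from inertial sensor data.

```python
from dataclasses import dataclass


@dataclass
class Swing:
    """Hypothetical summary of one controller's swing in the current frame."""
    magnitude: float  # swing strength derived from inertial sensor data
    direction: str    # coarse label such as "down", "up", "left", "right"


SWING_THRESHOLD = 1.0  # hypothetical stand-in for the "predetermined magnitude"


def is_heading_operation(left: Swing, right: Swing,
                         threshold: float = SWING_THRESHOLD) -> bool:
    """Return True when the simultaneous swing (heading operation) is established."""
    # Left controller: must be a downward swing of sufficient magnitude.
    left_ok = left.direction == "down" and left.magnitude >= threshold
    # Right controller: any direction counts, only the magnitude is checked.
    right_ok = right.magnitude >= threshold
    return left_ok and right_ok
```

Because the right controller's direction is ignored, a call such as `is_heading_operation(Swing(1.5, "down"), Swing(1.2, "up"))` is accepted, which mirrors the ease-of-operation rationale given above.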

As a result of the determination, when a heading operation has occurred (YES in step S24), in step S32, the processor 81 sets the motion ID 341 corresponding to heading, in the currently-executed action 334. Thereafter, the processor 81 ends the player character control process.

Meanwhile, when a heading operation has not occurred (NO in step S24), in step S25, the processor 81 determines whether or not a swing operation (regarding the right controller 4) has occurred. Regarding the swing operation occurrence determination, in the exemplary embodiment, a start point in time and an end point in time of a swing operation are specified focusing on an xy component of an angular velocity, thereby determining whether or not a swing operation has occurred. Specifically, it is determined that a swing operation has started when the length of a vector regarding the xy component of the angular velocity (hereinafter referred to as “angular velocity xy vector”) has exceeded a predetermined threshold. Thereafter, when the length becomes less than the predetermined threshold, the processor 81 determines that the swing operation has ended. With this end of the swing operation, the processor 81 determines that the swing operation has occurred (the swing operation has been done). In order to perform this determination, a history of inertial sensor data corresponding to several tens of frames in the past is stored as appropriate in the DRAM 85, although not shown in the figures. The processor 81 determines the start and the end of the swing operation on the basis of the history, thereby determining whether or not the swing operation has occurred. In the following description, a period from the start to the end of one swing operation is referred to as “swing period”. Regarding the predetermined threshold used for determining the start and the end of the swing operation, the same value may be used for determining the start and the end, or different values may be used.
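
The start and end determination above can be sketched as a scan over the stored history. This is a minimal sketch, assuming the history is available as a sequence of (x, y) angular velocity components per frame; the function name, the parameter names, and the threshold values are hypothetical.

```python
import math
from typing import Optional, Sequence, Tuple


def find_swing_period(history: Sequence[Tuple[float, float]],
                      start_threshold: float,
                      end_threshold: Optional[float] = None
                      ) -> Optional[Tuple[int, int]]:
    """Return (start_frame, end_frame) of the first completed swing, or None.

    history holds the xy components of the angular velocity, oldest frame first.
    """
    if end_threshold is None:
        # The same value may be used for both the start and the end.
        end_threshold = start_threshold
    start = None
    for i, (wx, wy) in enumerate(history):
        length = math.hypot(wx, wy)  # length of the angular velocity xy vector
        if start is None:
            if length > start_threshold:
                start = i            # swing has started
        elif length < end_threshold:
            return (start, i)        # swing has ended, so it has occurred
    return None                      # no swing, or a swing still in progress
```

A swing that has started but not yet dropped below the end threshold returns `None`, which corresponds to the swing operation being treated as having occurred only at its end.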

As a result of the determination in step S25, when a swing operation has not occurred (NO in step S25), the process is advanced to step S27 described later. Meanwhile, when a swing operation has occurred (YES in step S25), the processor 81 executes a kick action setting process in step S26.

[Kick Action Setting Process]

FIG. 29 is a flowchart showing the kick action setting process in detail. In this process, a swing direction is calculated as a two-dimensional vector, and a kick motion according to this swing direction is determined. Any method may be used for calculating the swing direction. In the exemplary embodiment, the swing direction is calculated through the following process. First, in step S41, the processor 81 extracts, from among a plurality of pieces of right inertial sensor data 324 within the swing period, right inertial sensor data 324 in which the orientation of the right controller 4 in the z-axis positive direction (see FIG. 5) is closest to the horizontal, and stores this data as “reference sensor data” in the DRAM 85.

Next, in step S42, the processor 81 extracts, from among the plurality of pieces of right inertial sensor data 324 within the swing period, right inertial sensor data 324 in which the length of the angular velocity xy vector is the largest, and stores this data as “maximum sensor data” in the DRAM 85.

Next, in step S43, the processor 81 calculates a tilt value (roll value), around the z axis, of the right controller 4 in the reference sensor data, and stores the value as “reference roll value” in the DRAM 85. Regarding the tilt value, for example, it is assumed that a state where the surface, of the right controller 4, having the right stick 52 faces vertically upward is 0, rotation to the right is expressed by a positive value, and rotation to the left is expressed by a negative value. Then, the tilt value may be calculated as a value within a range from +1 to −1.

When the reference roll value is calculated, in the exemplary embodiment, the reference roll value is corrected so as to be reduced as the z-axis positive direction of the right controller 4 moves away from the horizontal (in the real space). Regarding the phrase “away from the horizontal”, for example, it can be said that an orientation (of the right controller 4) at which the z-axis positive direction faces vertically upward or downward in the real space is the orientation farthest from the horizontal. For example, if the right controller 4 is rotated around the z axis while the z-axis positive direction thereof faces vertically upward, this rotation is a roll rotation from the controller's perspective, but can be recognized as a yaw rotation from the perspective of the user's hand. Therefore, the above correction is made in order to make the rotation less likely to be recognized as a roll rotation, as the z-axis positive direction of the right controller 4 is closer to the vertically upward or downward direction.
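
One way to realize such a correction is to scale the roll value by how close the z axis is to the horizontal. The cosine-style weighting below is an assumption chosen for illustration; the embodiment states only that the value is reduced as the z-axis positive direction moves away from the horizontal. The function name and the coordinate convention (third component vertical) are likewise hypothetical.

```python
import math


def corrected_roll(raw_roll, z_axis_world):
    """Scale a roll value by how close the controller's z axis is to horizontal.

    z_axis_world: (x, y, up) components of the controller's z-axis positive
    direction in real-space coordinates. The third component is assumed to be
    the vertical one (an assumption of this sketch).
    """
    x, y, up = z_axis_world
    norm = math.sqrt(x * x + y * y + up * up)
    if norm == 0.0:
        raise ValueError("z_axis_world must be a non-zero vector")
    # 1.0 when the z axis is horizontal, 0.0 when it points straight up or down.
    horizontal_weight = math.hypot(x, y) / norm
    return raw_roll * horizontal_weight
```

With this weighting, a roll value measured while the z axis points straight up or down is reduced to zero, so a rotation that the user would perceive as yaw contributes nothing as roll.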

Next, in step S44, on the basis of a time lag between the maximum sensor data and the reference sensor data and the angular velocities in these data, the processor 81 calculates a (relative) angle of change between these data. Furthermore, the processor 81 adds the angle of change to the reference roll value to calculate a roll value at the time of the maximum sensor data, and stores the value as “maximum roll value” in the DRAM 85.

Next, in step S45, the processor 81 rotates the angular velocity xy vector in the maximum sensor data by an amount of the maximum roll value to calculate a swing direction vector, and stores the swing direction vector in the DRAM 85.

The aforementioned swing direction calculation process can be regarded as a process of calculating a swing direction as a two-dimensional vector, in which a rotation, in consideration of a difference in orientation around the z axis caused by individual differences in the manner of holding the right controller 4 during the swing operation, is added to the angular velocity xy vector obtained when the momentum of the swing is maximum. For example, it is assumed that the user swings his/her hand to the upper right. In this case, although this swing is a swing in the upper-right direction from the user's perspective, there is a possibility that the value of the xy vector from the controller's perspective varies depending on a tilt around the z axis caused by the manner of holding the right controller 4 at that time, resulting in a possibility that the swing direction cannot be accurately determined. Therefore, in the above process, such a difference in the manner of holding the controller is corrected, and the swing direction is calculated as a two-dimensional vector.
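
Steps S41 to S45 can be sketched as follows. This is a simplified illustration under several assumptions that the text does not confirm: roll is handled as an angle in radians rather than a value from +1 to −1, the angle of change in step S44 is approximated by trapezoidal integration of the z-axis angular velocity, and the rotation sign convention is arbitrary. The `Sample` record and its field names are hypothetical.

```python
import math
from dataclasses import dataclass
from typing import Sequence, Tuple


@dataclass
class Sample:
    """Hypothetical per-frame record of right inertial sensor data."""
    t: float                          # timestamp in seconds
    gyro: Tuple[float, float, float]  # angular velocity (x, y, z), rad/s
    z_elevation: float                # angle of the z axis above horizontal, rad
    roll: float                       # roll estimate around the z axis, rad


def swing_direction(samples: Sequence[Sample]) -> Tuple[float, float]:
    """Return the swing direction as a 2D vector for one swing period."""
    if not samples:
        raise ValueError("swing period must contain at least one sample")
    # S41: reference sensor data = sample whose z axis is closest to horizontal.
    ref_i = min(range(len(samples)), key=lambda i: abs(samples[i].z_elevation))
    # S42: maximum sensor data = sample with the longest angular velocity xy vector.
    max_i = max(range(len(samples)),
                key=lambda i: math.hypot(samples[i].gyro[0], samples[i].gyro[1]))
    # S43: reference roll value (only the reference sample's roll is used).
    reference_roll = samples[ref_i].roll
    # S44: angle of change between the two samples, integrated from z angular velocity.
    lo, hi = sorted((ref_i, max_i))
    delta = 0.0
    for a, b in zip(samples[lo:hi], samples[lo + 1:hi + 1]):
        delta += 0.5 * (a.gyro[2] + b.gyro[2]) * (b.t - a.t)
    if max_i < ref_i:
        delta = -delta  # maximum sample precedes the reference sample
    max_roll = reference_roll + delta
    # S45: rotate the angular velocity xy vector by the maximum roll value.
    wx, wy = samples[max_i].gyro[0], samples[max_i].gyro[1]
    c, s = math.cos(max_roll), math.sin(max_roll)
    return (c * wx - s * wy, s * wx + c * wy)
```

The point of the sketch is the structure: the roll is read where it is most trustworthy (z axis near horizontal), carried forward or backward in time to the moment of peak swing, and then used to undo the tilt caused by the manner of holding the controller.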

Next, in step S46, the processor 81 determines a motion ID 341 of a kick motion according to the swing direction on the basis of the kick motion correspondence data 308 and the calculated swing direction.
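
As an illustration of step S46, the lookup can be sketched as choosing the table entry whose direction is nearest to the swing direction. The table layout, the four sectors, and the motion ID strings below are hypothetical; this section does not specify the actual content of the kick motion correspondence data 308.

```python
import math

# Hypothetical correspondence table: (sector centre in degrees, motion ID).
KICK_MOTIONS = [
    (0.0, "kick_right"),
    (90.0, "kick_up"),
    (180.0, "kick_left"),
    (270.0, "kick_down"),
]


def select_kick_motion(direction, table=KICK_MOTIONS):
    """Return the motion ID whose sector centre is nearest to the swing direction."""
    # Angle of the 2D swing direction vector, normalized to [0, 360).
    angle = math.degrees(math.atan2(direction[1], direction[0])) % 360.0

    def angular_distance(centre):
        d = abs(angle - centre) % 360.0
        return min(d, 360.0 - d)  # shortest way around the circle

    return min(table, key=lambda entry: angular_distance(entry[0]))[1]
```

A finer table (for example eight sectors) would work with the same function, since only the nearest sector centre is consulted.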

Next, in step S47, the processor 81 sets the determined motion ID 341 in the currently-executed action 334. This allows the player character 201 to perform a kick action according to the swing direction. This is the end of the kick action setting process.

Referring back to FIG. 28, next, the processor 81 executes a process of controlling the line of sight of the player character 201. That is, in step S27, the processor 81 determines whether or not the positional relationship between the player character 201 and the ball satisfies a predetermined condition. This predetermined condition is, for example, a condition that the distance between the player character 201 and the ball is within a predetermined distance and that a difference in angle between the forward direction of the player character and the position of the ball is within a predetermined range. That is, the processor 81 determines whether or not the positional relationship satisfies a condition that the ball can be seen from the player character 201. As a result of the determination, when the predetermined condition is satisfied (YES in step S27), in step S28, the processor 81 sets the line-of-sight direction data 333 such that the head (line of sight) of the player character 201 is directed to the ball. Then, the processor 81 controls the orientation of the head of the player character 201 on the basis of the line-of-sight direction data 333. When the predetermined condition is not satisfied (NO in step S27), the process in step S28 is skipped. Then, the player character control process is ended.
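
The condition checked in step S27 can be sketched as a distance test followed by an angle test. This is a minimal sketch assuming positions and the forward direction are expressed on a 2D ground plane; the function name, parameter names, and limit values are hypothetical.

```python
import math


def ball_is_visible(char_pos, char_forward, ball_pos,
                    max_distance, max_angle_deg):
    """Return True when the ball is close enough and within the angular range."""
    dx = ball_pos[0] - char_pos[0]
    dy = ball_pos[1] - char_pos[1]
    distance = math.hypot(dx, dy)
    if distance > max_distance:
        return False  # ball is farther than the predetermined distance
    if distance == 0.0:
        return True   # ball is at the character's position
    forward_len = math.hypot(char_forward[0], char_forward[1])
    if forward_len == 0.0:
        raise ValueError("char_forward must be a non-zero vector")
    # Angle between the forward direction and the direction to the ball.
    cos_angle = (dx * char_forward[0] + dy * char_forward[1]) / (distance * forward_len)
    cos_angle = max(-1.0, min(1.0, cos_angle))  # guard against rounding error
    return math.degrees(math.acos(cos_angle)) <= max_angle_deg
```

When the function returns True, the caller would set the line-of-sight direction toward the ball; otherwise the head orientation is left unchanged.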

Meanwhile, when the result of the determination in step S21 is that the player character 201 is currently executing a predetermined action (YES in step S21), in step S29, the processor 81 controls the action of the player character 201 so as to perform a predetermined motion corresponding to the motion ID 341 set in the currently-executed action 334. In the exemplary embodiment, if the forward direction of the player character 201 is not directed in the imaging direction of the virtual camera when the player character 201 starts a predetermined kick motion, the processor 81 makes the forward direction coincide with the imaging direction and then controls the player character 201 so as to start the predetermined kick motion. For example, if a swing operation (kick action) has been performed while the back side of the player character 201 faces the imaging direction of the virtual camera, the processor 81 turns the player character 201 such that the front side thereof faces the imaging direction and then causes the player character 201 to start the kick motion. As a result, the swing direction coincides with the kick direction. The reason for the above control is as follows. That is, the kick motion (kick direction) serves as feedback indicating the result of the swing operation. Therefore, in order to correctly inform the user of the result of the swing operation, the direction of the player character 201 is made to coincide with the imaging direction of the virtual camera when the kick motion is started.

Next, in step S30, the processor 81 determines whether or not the motion regarding the action being currently executed has ended. As a result of the determination, when the motion has not yet ended (NO in step S30), the processor 81 ends the player character control process. In this case, control (reproduction) of the motion being currently executed is continued also in a process regarding the next frame. On the other hand, when the motion has ended (YES in step S30), in step S31, the processor 81 sets “non-action” in the currently-executed action 334. Then, the processor 81 ends the player character control process.
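
The per-frame handling of the currently-executed action (steps S21 and S29 to S31) can be sketched as a small state holder. This is an illustrative sketch only: the class name, the frame-count representation of motion length, and the motion ID strings are hypothetical and are not taken from the embodiment.

```python
NON_ACTION = "non-action"


class ActionState:
    """Hypothetical holder for the currently-executed action of one character."""

    def __init__(self):
        self.current = NON_ACTION
        self.frames_left = 0

    def start(self, motion_id, duration_frames):
        """Set a motion ID as the currently-executed action."""
        self.current = motion_id
        self.frames_left = duration_frames

    def update(self):
        """Advance one frame. Return True if an action was being executed (S21)."""
        if self.current == NON_ACTION:
            return False            # NO in S21: caller handles movement and swings
        self.frames_left -= 1       # S29: continue reproducing the motion
        if self.frames_left <= 0:   # S30: has the motion ended?
            self.current = NON_ACTION  # S31: reset to "non-action"
        return True
```

While `update()` returns True, movement and swing inputs are not processed for that frame, which matches the flow in which steps S22 to S28 are bypassed during an action.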

[NPC Control Process]

Referring back to FIG. 27, next, in step S5, the processor 81 executes an NPC control process. Specifically, the processor 81 calculates the current position and direction of each NPC on the basis of reception data 304 from another apparatus, and stores the data as NPC relevant data 306. Furthermore, the processor 81 executes, for each NPC, the same process as the aforementioned player character control process on the basis of the NPC relevant data 306, thereby causing the NPC to move and perform a kick action or the like.

[Ball Collision Determination Process]

Next, in step S6, the processor 81 executes a ball collision determination process. In this process, mainly, whether or not the ball collides with any athlete character object is determined, and when there is a collision, the ball movement parameter 309 is set as appropriate. FIG. 30 is a flowchart showing the ball collision determination process in detail. In FIG. 30, first, in step S51, the processor 81 determines whether or not the ball has collided with any athlete character object. When there is no collision (NO in step S51), the processor 81 ends the ball collision determination process.

On the other hand, when there is a collision (YES in step S51), in step S52, the processor 81 determines whether or not the athlete character object having collided with the ball is the player character 201. As a result of the determination, when the collision is not a collision with the player character 201 (NO in step S52), this means that the ball has collided with an NPC. In this case, in step S53, the processor 81 sets the ball movement parameter 309 on the basis of a collision angle and a collision speed between the ball and the NPC, and the content of a kick action of the NPC. Thereafter, the processor 81 ends the ball collision determination process.