U.S. Pat. No. 11,911,693

INTER-VEHICLE ELECTRONIC GAMES

Assignee: WARNER BROS. ENTERTAINMENT INC.

Issue Date: February 8, 2022

Abstract

A computer-implemented method for providing electronic games for play by a group of users in two or more moving vehicles. The method includes maintaining data structures of media program data, user profile data and vehicle profile data, receiving user and vehicle state information, identifying a group of users based on contemporaneous presence in two or more vehicles or common participation in a game or other group experience for related trips at different times, and selecting, configuring or creating a media program for play at media players. An apparatus or system is configured to perform the method and related operations.

Description

DETAILED DESCRIPTION

Various aspects are now described with reference to the drawings. In the following description, for purposes of explanation, numerous specific details are set forth in order to provide a thorough understanding of one or more aspects. It may be evident, however, that the various aspects may be practiced without these specific details. In other instances, well-known structures and devices are shown in block diagram form to facilitate describing these aspects.

Referring to FIG. 1, methods for providing electronic games for play by a group of users in two or more moving vehicles 150, 160 may be implemented in a network 100. Other architectures may also be suitable. In a network architecture, sensor data can be collected and processed locally, and used to control streaming data from a network source. In alternative aspects, audio-video content may be controlled locally, and log data provided to a remote server. The audio-video content, also referred to as media content, includes digital audio, video, audio-video and mixed reality content, and includes interactive and non-interactive media programs or games. The media content may also be configured to support interactive features resembling video game features or may be devoid of interactive features except for responding to data indicative of a user's location, preferences, biometric states or affinities.

A suitable network environment 100 for practice of the methods summarized herein may include various computer servers and other network entities in communication with one another and with one or more networks, for example a Wide Area Network (WAN) 102 (e.g., the Internet) and/or a wireless communication network (WCN) 104, for example a cellular telephone network using any suitable high-bandwidth wireless technology or protocol, including, for example, cellular telephone technologies such as 3rd Generation Partnership Project (3GPP), Long Term Evolution (LTE), 5G fifth-generation cellular wireless, Global System for Mobile communications (GSM) or Universal Mobile Telecommunications System (UMTS), and/or a wireless local area network (WLAN) technology using a protocol such as Institute of Electrical and Electronics Engineers (IEEE) 802.11, and equivalents thereof. In an aspect, for example as in a mesh network, the servers and other network entities (collectively referred to as “nodes”) connect directly, dynamically and non-hierarchically to as many other nodes as possible and cooperate with one another to efficiently route data from/to client devices. This lack of dependency on one node allows for every node to participate in the relay of information. Mesh networks can dynamically self-organize and self-configure. In another aspect, the servers can connect to client devices in a server-client structure. In an aspect, some client devices can also act as servers.

Client devices may include, for example, portable passenger devices (PPDs) such as smartphones, smartwatches, notepad computers, laptop computers, and mixed reality headsets, and special purpose media players and servers, herein called vehicle media controllers (VMCs), installed as part of vehicular electronic systems. VMCs 152, 162 may be coupled to vehicle controllers (VCs) 154, 164 as a component of a vehicular control system. The VC may control various other functions with corresponding components, for example, engine control, interior climate control, anti-lock braking, navigation, or other functions, and may help coordinate media output of the VMC to other vehicular functions, especially navigation.

Computer servers may be implemented in various architectures. For example, the environment 100 may include one or more Web/application servers 124 containing documents and application code compatible with World Wide Web protocols, including but not limited to HTML, XML, PHP and JavaScript documents or executable scripts, for example. The environment 100 may include one or more content servers 126 for holding data, for example video, audio-video, audio, and graphical content components of media content, e.g., media programs or games, for consumption using a client device, software for execution on or in conjunction with client devices, and data collected from users or client devices. Data collected from client devices or users may include, for example, sensor data and application data. Sensor data may be collected by a background (not user-facing) application operating on the client device, and transmitted to a data sink, for example, a cloud-based content server 122 or discrete content server 126. Application data means application state data, including but not limited to records of user interactions with an application or other application inputs, outputs or internal states. Applications may include software for selection, delivery or control of media content and supporting functions. Applications and data may be served from other types of servers, for example, any server accessing a distributed blockchain data structure 128, or a peer-to-peer (P2P) server 116 such as may be provided by a set of client devices 118, 120, 152 operating contemporaneously as micro-servers or clients.

In an aspect, information held by one or more of the content server 126, cloud-based content server 122, distributed blockchain data structure 128, or a peer-to-peer (P2P) server 116 may include, for example, a data structure of media production data in an ordered arrangement of media components for route-configured media content, a game engine including components for configuring and rendering interactive content in response to user input, or a library of media segments.

As used herein, users (who can also be passengers) are consumers of media content. When actively participating in content via an avatar or other agency, users may also be referred to herein as player actors. Consumers are not always users. For example, a bystander may be a passive viewer who does not interact with the content or influence selection of content by a client device or server.

The network environment 100 may include various portable passenger devices (PPDs), for example a mobile smartphone client 106 of a user who has not yet entered either of the vehicles 150, 160. Other client devices may include, for example, a notepad client, a portable computer client device, a mixed reality (e.g., virtual reality or augmented reality) client device, or the VMCs 152, 162. PPDs may connect to one or more networks. For example, the PPDs 112, 114 in the vehicle 160 may connect to servers via a vehicle controller 164, a wireless access point 108, the wireless communications network 104 and the WAN 102, in which the VC 164 acts as a router/modem combination or a mobile wireless access point (WAP). For further example, in a mobile mesh network 116, PPD nodes 118, 120, 152 may include small radio transmitters that function as wireless routers. The nodes 118, 120, 152 may use the common Wi-Fi standards to communicate wirelessly with client devices, and with each other.

FIG. 2 shows a media content server 200 for controlling output of digital media content, which may operate in the environment 100, for example as a VMC 152, 162 or content server 122, 126, 128. The server 200 may include one or more hardware processors 202, 214 (two of one or more shown). Hardware may include firmware. Each of the one or more processors 202, 214 may be coupled to an input/output port 216 (for example, a Universal Serial Bus port or other serial or parallel port) to a source 220 for sensor data indicative of vehicle or travel conditions. Suitable sources may include, for example, Global Positioning System (GPS) or other geolocation sensors, one or more cameras configured for capturing road conditions and/or passenger configurations in the interior of the vehicle 150, one or more microphones for detecting exterior sound and interior sound, one or more temperature sensors for detecting interior and exterior temperatures, door sensors for detecting when doors are open or closed, and any other sensor useful for detecting a travel event or state of a passenger. Some types of servers, e.g., cloud servers, server farms, or P2P servers, may include multiple instances of discrete servers 200 that cooperate to perform functions of a single server.

The server 200 may include a network interface 218 for sending and receiving applications and data, including but not limited to sensor and application data used for controlling media content as described herein. The content may be served from the server 200 to a client device or stored locally by the client device. If stored local to the client device, the client and server 200 may cooperate to handle sensor data and other player actor functions. In some embodiments, the client device may handle all content control functions and the server 200 may be used for tracking only or may not perform any critical function of the methods herein. In other aspects, the server 200 performs content control functions.

Each processor 202, 214 of the server 200 may be operatively coupled to at least one memory 204 holding functional modules 206, 208, 210, 212 of an application or applications for performing a method as described herein. The modules may include, for example, a communication module 206 for communicating with client devices and servers. The communication module 206 may include instructions that when executed by the processor 202 and/or 214 cause the server to communicate control data, content data, and sensor data with a client device via a network or other connection. A tracking module 208 may include functions for tracking travel events using sensor data from the source(s) 220 and/or navigation and vehicle data received through the network interface 218 or other coupling to a vehicle controller. In some embodiments, the tracking module 208 or another module not shown may track emotional responses and other interactive data for one or more passengers, subject to user permissions and privacy settings.

The modules may further include a group integration (GI) module 210 that, when executed by the processor, causes the server to identify a group of users based on at least one of a contemporaneous presence by each user in the group in two or more vehicles (or an anticipated future contemporaneous presence) or an application-mediated commonality between asynchronous trips, and on an indication of interest by each user in a communication network-mediated interaction with another of the group of users during the contemporaneous presence, or by different users regarding asynchronous trips. For example, the GI module 210 may determine input parameters including a trip destination for one or more passengers, current road conditions, and estimated remaining travel duration based on data from the tracking module 208, and apply a rules-based algorithm, a heuristic machine learning algorithm (e.g., a deep neural network) or both, to create one or more media content identifiers consistent with the input parameters. The GI module 210 may also determine associations of media content with one or more parameters indicating user-perceivable characteristics of the media content, including at least an indicator of semantic meaning relevant to one or more travel events. The GI module 210 may perform other or more detailed operations for integrating trip information in media content selection as described in more detail herein below.
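By way of illustration only, the rules-based branch of the selection described above might be sketched as follows. This is a minimal sketch under stated assumptions, not the disclosed implementation: the names `TripParameters`, `MediaEntry`, `select_content_ids` and the sample `CATALOG` are hypothetical, and the particular rules (fit within remaining travel time, match destination and road condition) are chosen only to show how input parameters could yield media content identifiers.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TripParameters:
    """Hypothetical input parameters derived from tracking data."""
    destination: str
    road_condition: str        # e.g. "clear" or "congested" (assumed labels)
    remaining_minutes: float   # estimated remaining travel duration


@dataclass(frozen=True)
class MediaEntry:
    """Hypothetical catalog record associating content with trip-relevant tags."""
    content_id: str
    duration_minutes: float
    destination_tags: frozenset
    suitable_conditions: frozenset


def select_content_ids(params, catalog):
    """Return identifiers of entries consistent with the input parameters."""
    matches = [
        entry for entry in catalog
        if entry.duration_minutes <= params.remaining_minutes
        and params.road_condition in entry.suitable_conditions
        and params.destination in entry.destination_tags
    ]
    # Assumed preference: longer content that still fits the trip comes first.
    matches.sort(key=lambda entry: entry.duration_minutes, reverse=True)
    return [entry.content_id for entry in matches]


# Illustrative catalog; the identifiers and tags are invented for this sketch.
CATALOG = [
    MediaEntry("trivia-short", 10, frozenset({"stadium", "beach"}),
               frozenset({"clear", "congested"})),
    MediaEntry("quest-long", 45, frozenset({"stadium"}), frozenset({"clear"})),
    MediaEntry("race-medium", 25, frozenset({"stadium"}), frozenset({"clear"})),
]

selected = select_content_ids(TripParameters("stadium", "clear", 30), CATALOG)
```

In this sketch a machine learning model could replace or re-rank the filtered list; the rule filter is kept separate so that hard constraints such as remaining travel time are always respected.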

The modules may include, for example, a media selection and configuration process (MSC) module 212. The MSC module 212 may include instructions that, when executed by the processor 202 and/or 214, cause the server 200 to select and configure media content for output by a player device during the trip based on criteria as described in more detail herein below. The memory 204 may contain additional instructions, for example an operating system, and supporting modules.

FIG. 3 shows aspects of a content player device 300 for operating on and controlling output of digital media content. In some embodiments, the same computing device (e.g., device 300) may operate both as a content player device and as a content configuration server, for example, as a node of a mesh network. In such embodiments, the computing device may also include functional modules and interface devices as described above for the server 200.

For content playing, the apparatus 300 may include a processor 302, for example a central processing unit, a system-on-a-chip, or any other suitable microprocessor. The processor 302 may be communicatively coupled to auxiliary devices or modules of the apparatus 300, using a bus or other coupling. Optionally, the processor 302 and its coupled auxiliary devices or modules may be housed within or coupled to a housing 301, for example, a housing having a form factor of a television, active window screen, projector, smartphone, portable computing device, wearable goggles, glasses, visor, or other form factor.

A user interface device 324 may be coupled to the processor 302 for providing user control input to a process for converting input to game commands and for controlling output of digital media content. The process may include outputting video and audio for a conventional display screen or projection display device. In some aspects, the media control process may include outputting audio-video data for an immersive mixed reality content display process operated by a mixed reality immersive display engine executing on the processor 302. In some aspects, the process may include outputting haptic control data for a haptic glove, vest, or other wearable, motion simulation control data, or control data for an olfactory output device such as an Olorama™ or Sensoryco™ scent generator or equivalent device.

User control input may include, for example, selections from a graphical user interface or other input (e.g., textual or directional commands) generated via a touch screen, keyboard, pointing device (e.g., game controller), microphone, motion sensor, camera, or some combination of these or other input devices represented by block 324. Such user interface device 324 may be coupled to the processor 302 via an input/output port 326, for example, a Universal Serial Bus (USB) or equivalent port. Control input may also be provided via a sensor 328 coupled to the processor 302. A sensor may comprise, for example, a motion sensor (e.g., an accelerometer), a position sensor, a camera or camera array (e.g., stereoscopic array), a biometric temperature or pulse sensor, a touch (pressure) sensor, an altimeter, a location sensor (for example, a Global Positioning System (GPS) receiver and controller), a proximity sensor, a carbon dioxide sensor, a smoke or vapor detector, a gyroscopic position sensor, a radio receiver, a multi-camera tracking sensor/controller, an eye-tracking sensor, a microphone or a microphone array. The sensor or sensors 328 may detect biometric data used as an indicator of the user's emotional state, for example, facial expression, skin temperature, pupil dilation, respiration rate, muscle tension, nervous system activity, or pulse. In addition, the sensor(s) 328 may detect a user's context, for example an identity, position, size, orientation, and movement of the user or the user's physical environment and of objects in the environment, or motion or other state of a user interface display, for example, motion of a virtual-reality headset. The sensor or sensors 328 may generate orientation data for indicating an orientation of the apparatus 300 or a passenger using the apparatus.

For example, the sensors 328 may include a camera or image sensor positioned to detect an orientation of one or more of the user's eyes, or to capture video images of the user or the user's physical environment, or both. In some embodiments, a camera, image sensor, or other sensor configured to detect a user's eyes or eye movements may be integrated into the apparatus 300 or into ancillary equipment coupled to the apparatus 300. The one or more sensors 328 may further include, for example, an interferometer positioned in the support structure 301 or coupled ancillary equipment and configured to indicate a surface contour to the user's eyes. The one or more sensors 328 may further include, for example, a microphone, array of microphones, or other audio input transducer for detecting spoken user commands or verbal and non-verbal audible reactions to output of the media content.

The apparatus 300 or a connected server may track users' biometric states and media content play history. Play history may include a log-level record of control decisions made in response to player actor biometric states and other input. The server 200 may track user actions and biometric responses across multiple game titles for individuals or cohorts.

Sensor data from the one or more sensors may be processed locally by the processor 302 to control display output, and/or transmitted to a server 200 for processing by the server in real time, or for non-real-time processing. As used herein, “real time” refers to processing that is responsive to user input without any arbitrary delay between an input and an output; that is, that reacts as soon as technically feasible. “Non-real time” refers to batch processing or other use of sensor data that is not used to provide immediate control input for controlling the display, but that may control the display after some arbitrary amount of delay.
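The distinction between real-time and non-real-time handling of sensor data may be illustrated by the following minimal sketch. The class `SensorDispatcher`, its `batch_size` parameter and the `flushed_batches` list are hypothetical names assumed for illustration; an actual device or server might instead stream batches over a network interface.

```python
from collections import deque


class SensorDispatcher:
    """Route sensor readings to immediate control or to deferred batches."""

    def __init__(self, on_control, batch_size=3):
        self._on_control = on_control    # callback that drives display control
        self._batch_size = batch_size    # assumed threshold for batch release
        self._pending = deque()
        self.flushed_batches = []        # stands in for transmission to a server

    def submit(self, reading, real_time):
        if real_time:
            # Real time: react as soon as feasible, with no arbitrary delay.
            self._on_control(reading)
        else:
            # Non-real time: hold the reading for later batch processing.
            self._pending.append(reading)
            if len(self._pending) >= self._batch_size:
                self.flush()

    def flush(self):
        """Release any pending readings as one batch."""
        if self._pending:
            self.flushed_batches.append(list(self._pending))
            self._pending.clear()


controls = []
dispatcher = SensorDispatcher(controls.append, batch_size=2)
dispatcher.submit({"pulse": 72}, real_time=True)
dispatcher.submit({"cabin_temp": 21}, real_time=False)
dispatcher.submit({"cabin_temp": 22}, real_time=False)
```

In this sketch, the pulse reading reaches the control callback immediately, while the two temperature readings are held and released together once the assumed batch size is reached.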

To enable communication with another node of a computer network, for example the media content server 200, the client 300 may include a network interface 322, wired or wireless. Network communication may be used, for example, to enable multiplayer experiences, including immersive or non-immersive experiences of media content. The system may also be used for other multi-user applications, for example social networking, group entertainment experiences, instructional environments, and so forth. Network communication can also be used for data transfer between the client and other nodes of the network, for purposes including data processing, content delivery, content control, and tracking. The client may manage communications with other network nodes using a communications module 306 that handles application-level communication needs and lower-level communications protocols, preferably without requiring user management.

A display 320 may be coupled to the processor 302, for example via a graphics processing unit 318 integrated in the processor 302 or in a separate chip. The display 320 may include, for example, a color liquid crystal display (LCD) illuminated by light-emitting diodes (LEDs) or other lamps, a projector driven by an LCD or by a digital light processing (DLP) unit, a laser projector, or other digital display device. The display device 320 may be incorporated into a virtual reality headset or other immersive display system. Video output driven by a mixed reality display engine operating on the processor 302, or other application for coordinating user inputs with an immersive content display and/or generating the display, may be provided to the display device 320 and output as a video display to the user. Similarly, an amplifier/speaker or other audio output transducer 316 may be coupled to the processor 302 via an audio processor 312. Audio output correlated to the video output and generated by the media player module 308, media content control engine or other application may be provided to the audio transducer 316 and output as audible sound to the user. The audio processor 312 may receive an analog audio signal from a microphone 314 and convert it to a digital signal for processing by the processor 302. The microphone can be used as a sensor for detection of biometric state and as a device for user input of sound commands, verbal commands, or for social verbal responses to passengers. The audio transducer 316 may be, or may include, a speaker or piezoelectric transducer integrated into the apparatus 300. In an alternative or in addition, the apparatus 300 may include an audio output port for headphones or other audio output transducer mounted in ancillary equipment such as a smartphone, VMC, xR headgear, or equivalent equipment. The audio output device may provide surround sound, multichannel audio, so-called ‘object-oriented audio’, or other audio track output from the media content.

The apparatus 300 may further include a random-access memory (RAM) 304 holding program instructions and data for rapid execution or processing by the processor, coupled to the processor 302. When the device 300 is powered off or in an inactive state, program instructions and data may be stored in a long-term memory, for example, a non-volatile magnetic, optical, or electronic memory storage device (not shown). Either or both of the RAM 304 and the storage device may comprise a non-transitory computer-readable medium holding program instructions that, when executed by the processor 302, cause the device 300 to perform a method or operations as described herein. Program instructions may be written in any suitable high-level language, for example, C, C++, C#, JavaScript, PHP, or Java™, and compiled to produce machine-language code for execution by the processor. The memory 304 may also store data, for example, audio-video data or games data in a library or buffered during streaming from a network node.

Program instructions may be grouped into functional modules 306, 308 to facilitate coding efficiency and comprehensibility, for example, a communications module 306 and a media player module 308. The modules, even if discernable as divisions or grouping in source code, are not necessarily distinguishable as separate code blocks in machine-level coding. Code bundles directed toward a specific type of function may be considered to comprise a module, regardless of whether or not machine code in the bundle can be executed independently of other machine code. The modules may be high-level modules only. The media player module 308 may perform operations of any method described herein, and equivalent methods, in whole or in part. Operations may be performed independently or in cooperation with another network node or nodes, for example, the server 200.

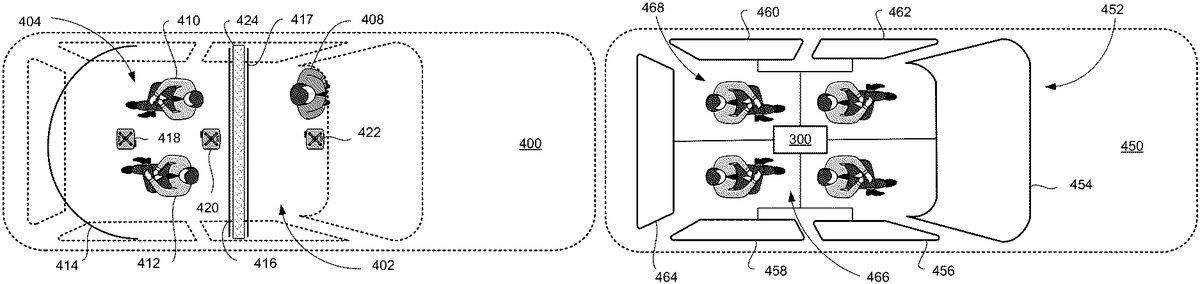

In a vehicle, the apparatus 300 may provide video content for projection onto an interior surface of the vehicle as the display 320. For example, in a vehicle 400 shown in FIG. 4A, one or more ceiling-mounted projectors 418, 420, 422 may project media content from the apparatus 300 onto corresponding screens 416, 414, 417. In the illustrated configuration, two passengers 410, 412 are sitting in a passenger space 404 while a driver 408 occupies a cockpit 402. The cockpit 402 may be isolated from the passenger space 404 by a sound wall 424. The projector 422 may project video from rear-facing cameras of the vehicle 400, showing the rear view for the driver. The projector 420 may project forward media content from the apparatus 300 onto a forward surround screen 414. The projector 418 may project rear media content coordinated in time to the forward media content onto the rear screen 416. “Forward” and “rear” are relative to the orientations of the passengers 410, 412, not the orientation of the vehicle 400. Passenger and vehicle orientations may be aligned or non-aligned as shown in FIG. 4A. The rear screen 416 and forward screen 414 may be mounted on tracks enabling separation of the screens 414, 416 to admit or release passengers, and closure to enable a fuller surround view, up to and including a full 360° surround view.

In driverless (e.g., autonomous vehicle) configurations, the sound wall 424, rear view screen 417, projector 422, driver 408 and cockpit 402 are omitted, and the entire cabin of the vehicle 400 may function as a passenger entertainment space 404. In driverless embodiments, the rear projection screen 416 may be a surround screen like the forward screen 414.

Instead of or in addition to projection screens, electronic display screens may be used for display 320, for example, LCD or OLED screens in various resolutions, color spaces and dynamic ranges. In driverless vehicles, the cabin is easily darkened so either projectors or electronic screens should work well. FIG. 4B shows a configuration of an autonomous vehicle 450 in which the window screens are replaced by LCD screens 452, including a front screen 454, right side screens 456, 458 (one on each door), left side screens 460, 462 (one on each door) and a rear screen 464. One or more of the screens 452 may be transparent or partially transparent LCD or OLED panels (also called see-through displays) for augmenting the natural view of the vehicle exterior, may have an opaque backing for virtual reality, or may include an electronic or mechanical shutter to transition between opaque and transparent or partially transparent states. One or multiple LCD layers may be used as an electronic shutter to transition between opaque and transparent states. An apparatus 300 (for example, a VMC 152) may be coupled to the screens 452 for controlling video output. One or more passengers 468 in the cabin 466 may view video content as if looking through the window, in see-through or opaque modes. In alternative embodiments, the vehicle windows are equipped with mechanical or electronic shutters to darken the cabin, and the apparatus 300 outputs the video content to traditional LCD or OLED screens in the interior of the vehicle, or to projectors as shown in FIG. 4A. In an alternative or in addition, each of the passengers 468 may play the media content on a personal computing device, for example a smartphone, notepad computer or mixed reality headset.

In addition to conventional 2D output or 3D output for display on two-dimensional (flat or curved) screens (e.g., by televisions, mobile screens, or projectors), the media content output and control methods disclosed herein may be used with virtual reality (VR), augmented reality (AR) or mixed reality output devices (collectively referred to herein as xR). Some immersive xR stereoscopic display devices include a tablet support structure made of an opaque lightweight structural material (e.g., a rigid polymer, aluminum or cardboard) configured for supporting and allowing for removable placement of a portable tablet computing or smartphone device including a high-resolution display screen, for example, an LCD or OLED display. Other immersive xR stereoscopic display devices use a built-in display screen in a similar frame. Either type may be designed to be worn close to the user's face, enabling a wide field of view using a small screen size such as that of a smartphone. The support structure may hold a pair of lenses in relation to the display screen. The lenses may be configured to enable the user to comfortably focus on the display screen, which may be held approximately one to three inches from the user's eyes. The device may further include a viewing shroud (not shown) coupled to the support structure and made of a soft, flexible or other suitable opaque material for form fitting to the user's face and blocking outside light. The immersive VR stereoscopic display device may be used to provide stereoscopic display output, providing a more immersive perception of 3D space for the user.

FIG. 5 shows an overview 500 of providing electronic games for play by a group of users in two or more vehicles. The method 500 may be performed by one or more computer processors of a server or cooperating servers in electronic communication with client devices in the two or more vehicles, optionally including servers at points of interest along travel routes. The server or cooperating servers may be in one or more of the vehicles, and/or may be in a remote location. At the process 502, the one or more processors, at a content server, maintain media program data in a media program structure (e.g., a database). In an aspect, the media program data may include audio and audio-video games. At the process 504, the one or more processors maintain user profile data in a user profile data structure.

As used herein, “user presence” means one or both of: a contemporaneous presence of two or more users in different vehicles, or an asynchronous presence by one or more users in one or more vehicles wherein each of the one or more users is playing or has played the same interactive media or application relating to their vehicular travel. Also as used herein, “user presence information” means information enabling a processor to make a reliable determination concerning a present, past or anticipated user presence, for example, data sets of user identity or vehicle identity associated with time, vehicle identity or geographic location. At the process 506, the one or more processors may receive or determine user presence information from user devices. For example, each of the users of the user devices may register a user account with the one or more processors before or at the beginning of a session. Once in a vehicle, each user may indicate via a user interface of their client device that they are ready to participate in a game with other users. In an aspect, the processors verify that the users are in two or more vehicles. At the process 508, the one or more processors may receive or determine vehicle state information from vehicles. In an aspect, each of the vehicles has registered with the system of the one or more processors.
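As a hedged illustration of the definitions above, user presence information might be held as records that tie a user identity and vehicle identity to a time interval, from which a processor could classify a pair of records as contemporaneous or asynchronous. The record layout `PresenceRecord`, the `game_id` field and the function `classify_presence` are assumptions made for this sketch and are not drawn from the disclosure.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class PresenceRecord:
    """Hypothetical record of one user's presence in one vehicle."""
    user_id: str
    vehicle_id: str
    start: float                    # trip start, seconds since an arbitrary epoch
    end: float                      # trip end, same time base
    game_id: Optional[str] = None   # interactive media played on the trip, if any


def intervals_overlap(a, b):
    """True when the two presence intervals share any span of time."""
    return a.start < b.end and b.start < a.end


def classify_presence(a, b):
    """Classify two records as 'contemporaneous', 'asynchronous', or None."""
    different_users = a.user_id != b.user_id
    different_vehicles = a.vehicle_id != b.vehicle_id
    if different_users and different_vehicles and intervals_overlap(a, b):
        return "contemporaneous"
    # Assumed rule: a shared game across non-overlapping trips counts as asynchronous.
    if a.game_id is not None and a.game_id == b.game_id and not intervals_overlap(a, b):
        return "asynchronous"
    return None


first = PresenceRecord("user-1", "vehicle-150", 0, 100, game_id="road-quest")
second = PresenceRecord("user-2", "vehicle-160", 50, 150)
later = PresenceRecord("user-3", "vehicle-160", 200, 300, game_id="road-quest")
unrelated = PresenceRecord("user-4", "vehicle-150", 500, 600)
```

Here `first` and `second` overlap in time in different vehicles, while `first` and `later` share only the game played on separate trips.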

At the process 510, the one or more processors may identify a group of users based on a contemporaneous presence by each user in the group in two or more vehicles, or in an alternative, based on an asynchronous presence in a similar trip environment participating in the same game or interactive experience. In an aspect, the one or more processors may identify the group by further identifying an indication of interest by each user in the group to interact, for example, to play games with one or more other users in the group during the user presence. In an aspect, the one or more processors may identify the group based on future co-presence for game play at a future contemporaneous presence. In some embodiments, the one or more processors may detect, based on vehicle, location or trip data and on user profile data, that two or more users traveling in different vehicles may have an interest in a mutual game. Based on the detection, the one or more processors may push an invitation to join a common game to each user and join the client devices that respond into a game session. The one or more processors may detect the user's travel plans by integration with a navigational application and may detect user presence in a vehicle using a network node placed therein.

At the process 512, the one or more processors may select, configure or create an interactive media program or interactive game for play by the group of users identified above. The interactive media program may be configured for output by respective interactive media players of each user of the group of users. At the process 514, the one or more processors may provide the interactive media program to the interactive media players of the identified users in the identified two or more vehicles.

In an aspect, one or more processors at the content server may perform one or more of the processes 502-514. In another aspect, one or more processors at the connected vehicles may perform one or more of the processes 502-514. Therefore, it should be noted that the following descriptions of these processes refer to either the content server or the vehicle, unless noted otherwise.

FIG. 6 summarizes a computer network 600 in which the novel methods and apparatuses of the application may find use. One or more content servers 620 (e.g., a server farm or cloud) interconnected through a local area network, wide area network 630, or other network may execute the processes and algorithms described herein, creating digital media programs that may be stored and distributed for contemporaneous use by a group traveling in different vehicles. In some cases, portions of media program data 602 may be in analog form (e.g., film) and converted to digital form using any suitable conversion process, for example, digital scanning. A content server may select and/or configure a media program using media program data 602, user profile data 604, and vehicle profile data 606 based on user presence information 608 and vehicle state information 610. One or more distribution servers may provide content for processing or delivery to servers at places (or points) of interest 650 along the route and to player devices 640-642, from the content server 620 through the network 630, e.g., the Internet or cellular telephone and data networks, and one or more routers/modems/hotspots. In some embodiments, points of interest may be marked by short range (e.g., about one hundred meters or less) radio beacons placed in the environment. In an alternative, or in addition, the processor may locate places of interest by computing geographic proximity relative to a table of geographic locations. In an aspect, beacons outside the vehicles and placed perhaps by some lead vehicle player(s) may be used to signal the following vehicle's or vehicle inhabitant's processors or sensors that they are near the beacon(s). Beacon placement by players or game administrators may be used for explicit, hidden, or bluffing purposes, e.g., beacon signals may be used to indicate arrival of players at a destination; to provide secret messaging known only to some, e.g., a person in the lead vehicle who wants to secretly know about a following vehicle's route progress; and/or to bluff or otherwise deceive players about where another vehicle or object of interest in the game is located.
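As a non-limiting sketch, locating places of interest by computing geographic proximity relative to a table of geographic locations might resemble the following. The table contents, the 100 meter default radius (chosen to echo the short-range beacon example), and the function names are assumptions for illustration; the haversine formula is one common way to compute great-circle distance and is not prescribed by the disclosure.

```python
import math

# Hypothetical table of places of interest: name -> (latitude, longitude) in degrees.
PLACES = {
    "beacon_a": (34.0195, -118.4912),
    "beacon_b": (34.0430, -118.2673),
}

def haversine_m(lat1, lon1, lat2, lon2):
    """Great-circle distance in meters between two points given in degrees."""
    r = 6371000.0  # mean Earth radius in meters
    p1, p2 = math.radians(lat1), math.radians(lat2)
    dp = p2 - p1
    dl = math.radians(lon2 - lon1)
    a = math.sin(dp / 2) ** 2 + math.cos(p1) * math.cos(p2) * math.sin(dl / 2) ** 2
    return 2 * r * math.asin(math.sqrt(a))

def nearby_places(lat, lon, radius_m=100.0, places=PLACES):
    """Return names of places within radius_m of the vehicle position, nearest first."""
    hits = [(haversine_m(lat, lon, plat, plon), name)
            for name, (plat, plon) in places.items()]
    return [name for dist, name in sorted(hits) if dist <= radius_m]

# A vehicle reporting a position at beacon_a is near that place only.
near = nearby_places(34.0195, -118.4912)
```

A processor could run such a lookup each time vehicle state information is updated and trigger a game event when the returned list is non-empty.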

The player devices 640-642 and places of interest 650 may be connected via a mobile mesh network. Each player device 640-642 may connect to remotely networked devices through a vehicle controller (VC) of the vehicle in which the media player is located. The media players 640-642 may include, for example, smartphones, personal computers, notepad devices, projectors, and wearable xR devices. The media program may be transcoded to a suitable format for the player device 640-642 prior to delivery.

FIG. 7A diagrams a useful automatic process 700 for providing electronic games for play by a group of users in two or more vehicles. In an aspect of the present disclosure, the process 700 may be performed by one or more processors at a content server. At 702, a processor accesses a data structure of media program data in a first database, a data structure of user profile data in a second database, and a data structure of vehicle profile data in a third database. The media program data may include, for example, discrete segments or components of digitally encoded audio, audio-video, virtual reality, augmented reality, game logic, or elements for multi-dimensional rendering for producing output for consumption in the vehicles. Output may include, for example, entertainment, instructional, advertisement, video gaming, social networking, or social gaming applications. In an aspect, the processor may maintain the first, second and third databases as separate databases, or in any combination.

At 704, the processor receives or determines present or anticipated future user presence of one or more users that have registered their client device with the system of the processor. The processor may determine that two or more users are traveling contemporaneously, for example. In an alternative, or in addition, the processor may link a current user session with past (asynchronous) sessions of the same game or user experience by the same user and/or different users, enabling multi-user participation in a game or experience at different times. In an aspect, the processor may receive or determine user presence from GPS-based applications located in a user or player device. Based on detecting the presence of a registered client device in the vehicle, the system may infer that the user to whom the device is registered is in the vehicle. In another aspect, the processor may receive or determine user presence from GPS-based applications and components that are part of, or connected to, a vehicle controller (VC). In another aspect, the processor may receive or determine user presence from non-GPS-based applications located in a vehicle. In this aspect, a user device may register with a VC when the user enters the vehicle, or the user may register manually with the VC when the user enters the vehicle or at any time while the user is still present in the vehicle. In an aspect, the one or more processors may update the user presence information for each user based on the most current user presence information, in response to changes in user presence, or periodically. Accordingly, the one or more processors may monitor a current state and past states of user presence during a multi-vehicle session. In some embodiments, beacons outside the vehicle may be used to trigger an in-the-vehicle sensor for determining user presence and/or features of the external environment.

At 706, the processor receives or determines vehicle state of one or more vehicles that have registered with the system of the processor. A vehicle state may include, for example, the location, velocity, and navigational plan for the vehicle; types of client devices in or connected to the vehicle; available media content for the vehicle based on bandwidth, data storage, or other technical factors or based on a subscription or license; available input devices such as cameras or microphones in or connected to the vehicle, and useful combinations of the foregoing. In an aspect, the processor may receive or determine a vehicle state or portion thereof from GPS-based applications or a tracking device located in the vehicle. In another aspect, the processor may receive or determine vehicle state or a portion thereof from non-GPS-based applications located in a vehicle, for example, a digital speedometer, compass, temperature sensor, vision system, and so forth. In an aspect, the processor may update the vehicle state data with the received or determined vehicle state information.

At 708, the processor identifies a group of users selected from users identified at 704 above, based at least in part on one of: a contemporaneous presence by each user in the group in two or more vehicles determined at 706 above, or common participation in a game or other group experience for related trips at different times. The processor may execute an algorithm to select the group of users from those sharing a contemporaneous presence, for example, based on similar user profiles, preferences or interests. In an alternative, or in addition, the processor may select the group of users based on express indications of user interest, for example by including participants who have transmitted an indication of interest to the processor. In an aspect, the processor may send invitations to join an interactive content session (e.g., a game) to users it identifies based on factors noted above as likely to be interested. The processor may update and track current and past membership and participation in any group event using an appropriate data structure.

Interactive multi-user media programs are made for contemporaneous use by nature. So, in selecting users, the algorithm may make use of input relating to each participant's purpose for being in vehicles at a shared time, or surrounding circumstances. For example, participants may be traveling to a common destination, or away from a common origin. They may be in vehicles specially equipped for a certain type of game; for example, vehicles equipped with lasers or laser sensors designed to interact with objects placed in the environment, or with surround screen systems. As noted above, beacons placed in the environment may be used to trigger in-the-vehicle sensors for triggering game play events, including interactions with outside objects. They may have planned trips estimated to require similar amounts of time or be traveling on the same route. They may be in a certain geographic area (e.g., Southern California, or Burbank) and may wish to play with others in the same area, or in a different area. Contemporaneous presence in vehicles is a threshold requirement but is usually not enough to identify a suitable group of users, depending on the media program.
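One possible selection algorithm, offered only as a sketch, treats contemporaneous presence as the threshold requirement and then filters on an express indication of interest and on profile similarity. The candidate record fields, the use of Jaccard similarity over interest sets, and the 0.25 threshold are all assumptions for this example; for simplicity the sketch only adds users riding in a vehicle other than that of the seed user.

```python
def interest_overlap(a, b):
    """Jaccard similarity of two interest sets (0.0 when both are empty)."""
    union = a | b
    return len(a & b) / len(union) if union else 0.0

def select_group(candidates, seed_user, min_similarity=0.25):
    """Select a group around seed_user from contemporaneously present candidates.

    candidates: dict of user_id -> {"vehicle": str, "interests": set, "opted_in": bool}
    """
    seed = candidates[seed_user]
    group = [seed_user]
    for uid, info in sorted(candidates.items()):
        if uid == seed_user or not info["opted_in"]:
            continue  # skip the seed and users who gave no indication of interest
        if info["vehicle"] == seed["vehicle"]:
            continue  # simplification: only add users in other vehicles
        if interest_overlap(seed["interests"], info["interests"]) >= min_similarity:
            group.append(uid)
    return group

candidates = {
    "adam": {"vehicle": "v1", "interests": {"racing", "trivia"}, "opted_in": True},
    "ben": {"vehicle": "v2", "interests": {"racing"}, "opted_in": True},
    "carl": {"vehicle": "v2", "interests": {"chess"}, "opted_in": True},
    "dave": {"vehicle": "v3", "interests": {"racing", "trivia"}, "opted_in": False},
}
group = select_group(candidates, "adam")
```

Other inputs named above, such as a common destination, similar trip duration, or vehicle equipment, could be added as further filters or as weighted terms in the similarity score.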

At 710, the processor selects, configures or creates a media program (e.g., a game) for use by the group of identified users at 708. In an aspect, the interactive media program is, or includes, an interactive, multiplayer game. In another aspect, the media program is a non-interactive, multiplayer game. In an aspect, the processor selects, configures or creates the media program based at least in part on data from the user profiles of the identified users and/or in part on data from the corresponding vehicle profiles. In an aspect, the processor selects, configures or creates the media program based in part on the specifications of the media player at each vehicle, for example whether the media player has three-dimensional (3D) or mixed reality (xR) capabilities.
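Selection based on media player specifications might, for example, be sketched as a capability match. The catalog entries, capability labels, and the rule of choosing the first program that every player in the group can render are assumptions for illustration only.

```python
def select_program(catalog, player_caps):
    """Pick the first program that every media player in the group can render.

    catalog: list of (title, required_capabilities) in order of preference.
    player_caps: list of capability sets, one per media player.
    Returns the chosen title, or None when no program fits all players.
    """
    common = set.intersection(*player_caps) if player_caps else set()
    for title, required in catalog:
        if required <= common:  # every required capability is shared by all players
            return title
    return None

# Hypothetical catalog, richest experience first.
catalog = [
    ("xr_race", {"xr", "audio"}),
    ("3d_quiz", {"3d", "audio"}),
    ("audio_trivia", {"audio"}),
]
choice = select_program(catalog, [{"xr", "3d", "audio"}, {"3d", "audio"}])
```

In this sketch the group falls back to the 3D program because only one of the two players supports xR; a fuller implementation might instead configure a per-player variant of the same program.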

The processor may configure the media program to enable players in different vehicles to play various games or share experiences. Use cases may include, for example, games having outcomes that depend on interaction between each vehicle and its environment and/or social interactions between players, sometimes referred to herein as “real-world” games. Examples of real-world games include driving games, observational games, and social games. Other use cases may include alternative reality (AR) games, wherein the players interact via a fantasy environment that reacts to user input and the real environment of the vehicle. AR games may include driving, observational, and role-play games all played in a fantasy environment, and any other video game in which the processor alters game play in response to location and movements of players in different vehicles. Each of the game types may be played in a real-time, synchronous mode in which the processor receives and processes data from all players contemporaneously, or in an asynchronous mode in which players participate at different times and the processor afterwards compares records (e.g., scores) of game play. The processor may provide each game type in one or more different styles of play, for example: cooperative play, in which players cooperate to reach a common goal; team play, in which teams compete against one another while players within a team cooperate; competitive games, in which each player competes individually; elimination games, in which players compete to eliminate others from the game; and puzzle games, in which each player works alone on a puzzle or exploratory map. The processor may reveal the players' identities to one another. In an alternative, the processor may conceal the players' identities from one another. Player entities may be individuals, teams, computer-controlled characters, or a combination of the foregoing.

In driving games, players compete in navigation (way finding), speed on off-road tracks, safe driving, tagging other objects or vehicles (e.g., with a harmless wireless beam or the like), registering, a la Square, their proximity to or arrival at some beacon(s) along the route, or other attributes of motor vehicle control. These games differ from social navigational applications in which users can earn participation points or tokens by using or providing navigational data (e.g., Waze™). Instead of open-ended applications run primarily as a navigational aid for individual users, real-world games may be limited to definite player groups and play periods, focusing on the entertainment value of friendly competition. At the implementation level, processes as described in connection with FIG. 7A distinguish real-world games from social navigation applications. Further differences are apparent in the description of more specific games below.

Driving games may include elements of way finding or route selection, speed or efficiency, and safety or legal compliance. Any specific game may combine these elements in different amounts depending on user preferences and constraints of the operating environment. For example, two or more drivers of different vehicles may agree to compete on finding the shortest route between two points, or the fastest route, subject to safe driving limits. Passengers can also compete, either as ‘navigators’ for the drivers, or otherwise having input to the driverless vehicle's navigation system. The processor may enable the selfsame passenger or driver to compete with her best prior shortest or fastest or most scenic or . . . route score. For asynchronous trip game play, the processor may manage and serve a competition wherein a player can compete and/or cooperate to achieve a goal via related asynchronous trips. For example, the processor may serve a scavenger hunt game wherein players visit route locations where other player(s) have already been to retrieve some item(s), log the visit, or expose otherwise hidden items that are only revealed if another player has already discovered, photographed, tagged, or otherwise exposed them. In an alternative, or in addition, players might compete for the title of safest or most considerate driver. In each of these games, the processor executing the media program may score behavior of the vehicle and report a leaderboard.
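The asynchronous scavenger hunt described above, in which hidden items are revealed only after another player has exposed them, might be sketched as follows. The class name, item names, and location identifiers are hypothetical.

```python
class ScavengerHunt:
    """Minimal asynchronous scavenger hunt state (illustrative assumption)."""

    def __init__(self, item_locations):
        self.item_locations = dict(item_locations)  # item -> location id
        self.discovered_by = {}                     # item -> first discoverer

    def discover(self, player, item):
        """Record the first player to expose an item; later calls do not overwrite."""
        self.discovered_by.setdefault(item, player)

    def visible_items(self, player, location):
        """Items at this location that a different player has already exposed."""
        return sorted(
            item for item, loc in self.item_locations.items()
            if loc == location
            and self.discovered_by.get(item, player) != player
        )

hunt = ScavengerHunt({"map": "pier", "key": "pier"})
before = hunt.visible_items("ben", "pier")  # nothing exposed yet
hunt.discover("adam", "map")                # Adam's earlier trip exposes the map
after = hunt.visible_items("ben", "pier")   # Ben's later trip can now see it
```

Because the state persists between trips, the same structure supports players who travel the route on different days.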

FIG. 7B is a flow chart showing an example of processor operations during a driving game 720. For example, in an aspect, the driving game may involve two drivers, Adam and Ben, driving Vehicle One and Vehicle Two, respectively. As shown in FIG. 7B, the example driving game involves Adam and Ben competing for the title of the safest driver, contemporaneously or asynchronously. Adam and Ben, who both live in the same apartment complex in Santa Monica and work at the same workplace in Downtown L.A., each start driving from their apartment complex to work at approximately 7:00 a.m. At 722, the processor initializes the driving game session, where the goal is to compete for the title of the safest driver. For example, the scores for Adam and Ben are initially set to zero. Or, in another example, the scores may be the cumulative scores. The scores may be recorded as part of the user profile data in a database. In an aspect, the processor awards points for driving behaviors that are legally compliant, and deducts points for traffic violations or unsafe driving behaviors. At 724, the processor determines that Vehicle One and Vehicle Two are initially parked at 1519 6th Street, Santa Monica, CA, based on the GPS geolocation information obtained from the vehicles. Adam and Ben start driving to work at 7:00 a.m., heading to the office in Downtown L.A., taking Olympic Blvd. At the process 726, at 7:03 a.m., Adam and Ben stop at the red light on Lincoln Blvd., waiting to make a left turn onto Olympic Blvd. among many other morning commute drivers. This is a waypoint, and the processor at 728 calculates the waypoint scores for Adam and Ben. While Adam activated his turn signal approximately 100 feet prior to entering the left turn lane, Ben failed to activate his turn signal at all. 
The processor awards one point to Adam, while the processor deducts one point from Ben, because California traffic law requires a turn signal in the last 100 feet before a turn if any other vehicle may be affected by the movement. The turning of Vehicle One and Vehicle Two would both affect other morning commute vehicles at the intersection. At 730, Adam's score is one, while Ben's score is negative one. At 7:05 a.m., the light turns green, and Adam proceeds to make a left turn safely. However, Ben enters the intersection to make a left turn after the light has already turned red. The process, again at 726, proceeds to calculate the waypoint scores for Adam and Ben. While no point is deducted from Adam, the processor at 728 deducts one point from Ben, because he has run a red light. At 730, the processor accumulates the scores for Adam and Ben: one and negative two, respectively. At 724, at 7:25 a.m., both Vehicle One and Vehicle Two approach Los Angeles High School, where a school bus is stopped at the curb to drop off students. At 726, Los Angeles High School is another waypoint. Adam, seeing that the school bus is stopped with flashing red lights, proceeds to stop. Ben, on the other hand, fails to stop and proceeds to drive past the school bus. At 728, the processor calculates the waypoint scores for Adam and Ben. The processor awards one point to Adam for properly stopping and remaining stopped while the red lights on the school bus are flashing. The processor deducts one point from Ben for failing to stop. At 730, the accumulated scores for Adam and Ben, as calculated by the processor, are two and negative three, respectively. At 7:45 a.m., both Adam and Ben approach Staples Center, their workplace. At 726, Adam proceeds to drive down Olympic Blvd. and waits for a right turn onto S. Figueroa St. At 726, Ben, on the other hand, decides to take a shortcut via a side street where doing so is illegal between 7:00 a.m. and 9:00 a.m. 
At 728, the processor calculates the waypoint scores for Adam and Ben, where no point is deducted from Adam, but the processor deducts one point from Ben for driving illegally on a restricted street. At 730, the accumulated scores for Adam and Ben are two and negative four, respectively. At 7:46 a.m., Ben arrives first at the workplace, at 732, reaching the destination. At 7:47 a.m., Adam also arrives at the workplace at 732. The processor at 734 calculates the game scores for Adam and Ben, where Adam has two points, and Ben has negative four points. At 736, the processor outputs the respective game scores to the displays in Vehicle One and Vehicle Two. The winner of the driving game in this example 720 is Adam. In some aspects, the processor may store the game scores in the game database, for example, as part of the user profile data for Adam and Ben.
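The waypoint loop of the driving game (detect a waypoint, calculate waypoint scores, accumulate) might be sketched as below. The rule table, behavior labels, and waypoint identifiers are assumptions for illustration; the sample events reproduce only the first two waypoints of the example, after which the running totals are one and negative two.

```python
from collections import defaultdict

# Hypothetical rule table: observed behavior -> point change.
RULES = {
    "signaled_turn": +1,
    "no_turn_signal": -1,
    "ran_red_light": -1,
    "stopped_for_school_bus": +1,
    "passed_school_bus": -1,
}

def score_waypoints(events):
    """Accumulate scores over (waypoint, driver, behavior) events in time order.

    Returns (history, totals), where history holds a snapshot of the running
    totals taken after each waypoint. Unlisted behaviors score zero.
    """
    totals = defaultdict(int)
    history = []
    current = None
    for waypoint, driver, behavior in events:
        if current is not None and waypoint != current:
            history.append((current, dict(totals)))  # close the previous waypoint
        current = waypoint
        totals[driver] += RULES.get(behavior, 0)
    if current is not None:
        history.append((current, dict(totals)))
    return history, dict(totals)

events = [
    ("wp1", "adam", "signaled_turn"),
    ("wp1", "ben", "no_turn_signal"),
    ("wp2", "adam", "safe_left_turn"),  # not in RULES, so no change
    ("wp2", "ben", "ran_red_light"),
]
history, totals = score_waypoints(events)
```

In a deployed system the behavior labels would be derived from vehicle state information rather than supplied by hand.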

Observational games entail scoring, by a processor, of players' observational interactions with roadside objects via a client device. For example, players may record their observations by taking a picture of each observed subject using their smart phone or similar device running a client application communicating with a game server. The processor of each client may time-stamp the image, recognize the object in it, assign a score based on the observation and optionally other factors, for example, time of observation, location of observation, or relative difficulty of the observation, and provide the score to the game server. Players can compete or cooperate to find objects during contemporaneous travel or asynchronously, depending on user preference.

FIG. 7C is a flow chart showing an example of processor operations during an observational game 740. For example, in an aspect, the observational game may involve two players, Adam and Ben again, this time riding in autonomous Vehicle Three and autonomous Vehicle Four, respectively, to commute to work from their apartment complex. As shown in FIG. 7C, the example observational game involves Adam and Ben competing for the title of highest observational score, whether during travel at the same time or at different times. For example, the observational game 740 may involve capturing images of blue objects. At 742, the processor initializes the observational game session, where Adam and Ben each start off with a score of zero. As in the driving game example above, the scores may instead be cumulative scores. At 744, Vehicle Three and Vehicle Four pick up Adam and Ben, respectively, from their apartment complex and start driving off. Immediately upon departure, Adam captures an image of a bicyclist riding a blue mountain bike, while Ben also attempts to capture the same image but unfortunately is too late in doing so, and his picture only captures another bicyclist riding a green bike. Both images are captured by smartphones communicably connected to the game server. At 746, the processor receives the photo images taken by Adam and Ben. At 748, the processor recognizes that Adam's photo includes a blue bike, while Ben's photo includes a green bike, not blue. At 750, the processor determines that Adam's photo contains a scorable object (blue bike), while Ben's photo does not. At 752, the processor calculates and awards one point to Adam, while the processor awards no points to Ben. Alternatively, the processor may deduct one point from Ben for submitting a photo of a wrong observational element (e.g., green bike instead of blue). The process returns to 744. While Vehicle Three and Vehicle Four drive near Roxbury Park on Olympic Blvd., Adam sees a bluebird and captures its image. 
Ben, on the other hand, sees a blue Ferrari. At 746, the processor receives the photos of the bluebird and the blue Ferrari. At 748, the processor recognizes the bluebird in Adam's photo, and the processor also recognizes the blue Ferrari in Ben's photo. At 750, the processor determines that both the bluebird and the blue Ferrari are scorable objects in game 740. At 752, the processor calculates and awards two points to Adam, because a bluebird is a rare sight. The processor calculates and awards only one point to Ben, because Ferraris are not a rare sight in Beverly Hills. At 754, Vehicle Three and Vehicle Four arrive at Staples Center, the destination for Adam and Ben. At 756, the processor calculates the game scores for Adam and Ben at this point: three for Adam, and one for Ben. Adam wins the observational game 740. The processor outputs the game scores on the displays of Vehicle Three and Vehicle Four. In some aspects, the processor stores the game scores in the database, for example, as part of the user profile data. The processor may also store current data for use in future asynchronous game sessions. Instead of, or in addition to, competing with one another, player groups may also cooperate to find the most qualified observations in team play or to achieve a common purpose.
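The scoring steps of the observational game might be sketched as follows, taking the labels recognized in each photo as given (image recognition itself is outside this sketch). The scoring table, label strings, and function names are assumptions; the point values mirror the example, with the rarer bluebird worth two points.

```python
# Hypothetical scoring table for a "blue objects" game: label -> points.
SCORABLE = {"blue bike": 1, "blue Ferrari": 1, "bluebird": 2}

def score_observation(labels, penalty_for_miss=0):
    """Score one photo from the object labels recognized in it.

    Awards the best-scoring scorable label, or deducts penalty_for_miss
    when the photo contains no scorable object.
    """
    points = [SCORABLE[label] for label in labels if label in SCORABLE]
    return max(points) if points else -penalty_for_miss

def play(submissions, penalty_for_miss=0):
    """Total the scores for (player, labels) submissions in time order."""
    totals = {}
    for player, labels in submissions:
        totals[player] = totals.get(player, 0) + score_observation(labels, penalty_for_miss)
    return totals

submissions = [
    ("adam", ["blue bike"]),
    ("ben", ["green bike"]),
    ("adam", ["bluebird"]),
    ("ben", ["blue Ferrari"]),
]
scores = play(submissions)  # {'adam': 3, 'ben': 1}
```

Passing `penalty_for_miss=1` models the alternative in which a wrong observation costs a point.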

Social games use vehicular travel as a catalyst for encouraging social interactions between people traveling in different vehicles. These games may have a variety of designs and objectives. For example, social games may be used for introducing people interested in meeting new people with shared interests; reconnecting people who happen to be sharing a travel experience in different vehicles; facilitating and prompting topical conversations on items of interest pertaining to travel; providing conversational games for guided social interaction or social puzzle solving; sharing electronic content; fundraising for social causes; or organizing group activities at shared destinations or otherwise.

FIG. 7D is a flow chart showing an example of processor operations during a social game 760. For example, in an aspect, the social game may involve multiple participants, Adam, Carl, David, and Ed, riding in (or driving) Vehicle Three, Vehicle Five, Vehicle Six and Vehicle Seven, respectively, where each vehicle may be autonomous or human-driven. As shown in FIG. 7D, the example social game involves Adam, Carl, David, and Ed participating in the social game of “Burger and Movie Meetup in West LA.” The objective of the game is to meet up and eat hamburgers before finally reaching the movie theaters in Century City, the final destination. In this example, Adam, Carl, David, and Ed are strangers, and they chose to participate in this social game when the meetup event was announced online by Adam, the event host. The social game may be planned in advance of the participants riding or driving their vehicles, or the game may be initiated impromptu as one participant invites random or known passengers or drivers in contemporaneous presence. The social game 760 may be membership-based (for fee or otherwise), or it may be open to any vehicle passengers or drivers of concurrent conveyances interested in attending the meet-up. The meet-up may be scheduled for a particular time, or for a range of times (e.g., every Tuesday from 6 to 8 pm), or may be determined by an ad hoc determination of a common interest (e.g., to meet friendly strangers over hamburgers). At 762, in an aspect, Adam has announced the meetup event at 5:30 p.m. using a social game app on his smartphone, as he leaves and drives away from work on a Friday evening in Vehicle Three. The meetup is scheduled to convene initially at a hamburger shop somewhere near the Century City theaters, around 6:30 p.m., followed by a movie at the Century City theaters thereafter. 
One of the features of the social game in this example is that the exact destination is not pre-determined, and instead, the destination may be fluidly determined by the aggregate interests of the game participants. In the present example, the participants discuss alternative meeting places, but the process of FIG. 7D may be applied to any topic of interest, including but not limited to news of the day, topics of shared special interest to the participants, interesting locations along the travelers' routes, events happening at a common destination, and so forth.

In the case of discussing meeting locations, for example, each game participant may suggest a hamburger shop, and the participants may vote on the favorite one to go to. Immediately, Carl and David, who are on their way home from their respective workplaces in Burbank and heading back to their home area in West L.A. in Vehicle Five and Vehicle Six, respectively, respond and announce their interest in participating in the meetup, using the social game app installed on their respective smartphones. The user interactions for the social game app may be performed directly on the smartphones via various user interface means including, for instance, touchscreen (e.g., finger tapping, swiping, typing, etc.), microphone (via voice input), camera (via gesture recognition input), etc. Alternatively, or in addition, the respective vehicles may be equipped with similar or other user interface means to detect user interactions, such as text, voice, and gesture inputs. Ed, who is still at work in Culver City but expects to be able to leave work at 6:00 p.m. to head home towards West L.A., also accepts the meetup invite from Adam. At 764, the processor thus determines that Adam, Carl, David, and Ed, who are riding in or driving their respective vehicles from their respective places of origin and heading generally toward West L.A., are participating in the meetup 760. At 766, the destination is tentatively set for Century City theaters as part of the social game. At 768, Ed announces to the game participants through the social game app that he has just taken off from work at 6:00 p.m., and at 770, Ed suggests Umami Burger in Santa Monica as a burger shop candidate. Ed's suggestion is outputted as a text, audio, or visual message to the other participants through the social game app, where the generated output may be outputted via smartphone, in-car entertainment system, or another output device communicably connected to the game server and processor. 
However, Adam, Carl and David reject this proposal at 772, because they all live near Umami Burger and frequent it, such that they want to eat at another place. At the process 768, at 6:15 p.m., Carl suggests LA Burger Bar on La Cienega and Pico, as he passes through Koreatown on Pico Blvd., where the processor at 770 generates and outputs Carl's suggestion to the rest of the game participants. At 772, Adam responds positively to Carl's suggestion as he, too, is heading westbound on Pico Blvd. from Downtown L.A., but both David and Ed respond in the negative. At 774, the participants continue the topical exchange, for example, via text, audio, or visual message. David states at 774 that he is not passing through Koreatown and would not want to make a detour there. Ed states at 774 that LA Burger Bar is out of his way and that he would not want to make a detour, either. As a result, the game participants decide not to go to LA Burger Bar. At 768, Adam approaches the Apple Pan on Pico Blvd., and at 770, Adam proposes eating at the Apple Pan for the meetup, where the processor generates and outputs the invite to meet at the Apple Pan. At 772, Carl, David, and Ed all respond positively to Adam's invite to meet at the Apple Pan via one or more of the messaging means described above. At 774, all the participants (and their autonomous vehicles, to the extent any of the vehicles are autonomously driving) head toward the Apple Pan. At 764, the processor for the social game app updates the destination by adding the Apple Pan, either as the final destination in lieu of Century City theaters (especially if the autonomous vehicle is a ride share, and the passenger would not be taking the same vehicle to the movie theaters) or as the intermediate destination before the movie theaters (e.g., if the vehicle will continue to be driven/ridden to the movie theaters after the burger shop). 
The destination may or may not be set separately for each participant based on certain criteria, such as whether the vehicle would continue to be driven/ridden to another destination, or whether the participant chooses to leave the game at any point. At 766, when the vehicles reach the Apple Pan, the destination, the social game session 760 terminates (either momentarily or entirely) at 776. For the vehicles or participants that would continue on to the Century City theaters, the social game session 760 is momentarily terminated (or paused), and resumed when the participants head to the movie theaters, where the similar process flow in 760 may or may not repeat to decide on, for example, which movie theater to go to (Century City theaters as originally proposed, or rather some other theaters), which movie to watch, at what showtime, etc. For example, the processor may output possible movie showings and showtimes at nearby movie theaters to the game participants at 762, or 768, either before or while the participants and their vehicles are heading toward the destination.
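The propose, respond, and update cycle of the social game might be sketched as follows. The unanimity rule (a proposal is accepted only if every other participant responds positively) is an assumption adopted for this example; the disclosure also contemplates voting on a favorite, which would replace `resolve_proposal` with a tally. Function names are hypothetical.

```python
def resolve_proposal(participants, proposer, responses):
    """Accept a proposal only if every other participant responds positively.

    responses: dict of participant -> bool; a missing response counts as negative.
    """
    others = [p for p in participants if p != proposer]
    return all(responses.get(p, False) for p in others)

def run_session(participants, proposals, destination):
    """Process (proposer, place, responses) proposals in time order.

    Returns the route: the first accepted place is added as an intermediate
    stop before the tentative destination; otherwise the route is unchanged.
    """
    for proposer, place, responses in proposals:
        if resolve_proposal(participants, proposer, responses):
            return [place, destination]
    return [destination]

participants = ["Adam", "Carl", "David", "Ed"]
proposals = [
    ("Ed", "Umami Burger", {"Adam": False, "Carl": False, "David": False}),
    ("Carl", "LA Burger Bar", {"Adam": True, "David": False, "Ed": False}),
    ("Adam", "Apple Pan", {"Carl": True, "David": True, "Ed": True}),
]
route = run_session(participants, proposals, "Century City theaters")
```

A per-participant route, as contemplated above for ride-share passengers, could be kept by storing one such list per participant.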

Alternative reality games place the player in a computer-generated environment using virtual reality or augmented reality gear, including both headsets and surround screens. Surround screens may in some embodiments replace vehicle windows. Game play may be of any type that can integrate vehicle state data to enhance the game, including any of the three types of travel games summarized above, and games such as, for example, role playing, fantasy, racing, battle, and strategy games enhanced by vehicle state data.

FIG. 7E is a flow chart showing an example of processor operations during an alternative reality game 780. For example, in an aspect, the alternative reality game may involve one or more participants riding (or driving) autonomous or human-driven vehicles. As shown in FIG. 7E, the example alternative reality game involves Ben and Ed participating in a fantasy racing game 780 of “Kill Evil Dragons on Mulholland Drive to Save the Princess.” The objective of the game is to drive on Mulholland Drive starting at Interstate 405 and ending at Highway 101, where the princess awaits in her castle in the alternative reality. For safety, the fantasy game can only be played by passengers in autonomous vehicles that cannot be influenced to take unsafe actions for the sake of game play. At 782, the processor initializes the alternative reality game session by welcoming Ben and Ed to the game (e.g., with an audio-visual welcome message output through virtual/augmented reality gear such as the headsets worn by the players and surround screens inside the vehicles) when Ben and Ed enter their respective vehicles parked at the start line prior to starting the game. At 784, the processor prompts Ben and Ed whether they wish to commence the game 780, to which Ben and Ed reply affirmatively. At 786, the processor generates, outputs and maintains alternative reality objects and environment, which simulate the scenery of the game players riding on flying unicorns along the twisted path of Mulholland Drive infested with evil dragons flying everywhere. For example, the surround screen in the vehicles may display a fantasy scenery of a magical world where the sky is purple and filled with monsters and dragons. At 788, the processor triggers the alternative reality routine when Ben and Ed take off from the start line. In an aspect, the vehicles ridden by Ben and Ed are autonomously driven. At 790, the processor generates and outputs the monsters and evil dragons flying around Mulholland Drive.
At 792, the processor applies the game rules and calculates scores. For example, black, red, green, and blue dragons are evil, and when they are shot down, they count as 5, 4, 3, 2, and 1 points each respectively. However, gold and white dragons are good, and when they are shot down, they count as negative 10 and 5 points each. Other monsters may be carrying game items (potions) such as “Heal Life,” “Extra Magic,” and “Extra Arrow,” where shooting down such monsters may yield such game items to be used by the game players during game play. Players may ‘shoot down’ the dragons and monsters using an input device communicably connected to the game server and processor. For example, the vehicle horn may activate the firing of a magic shot or arrow to shoot down the dragons and monsters in the alternative reality game 780. Other virtual or augmented reality gear may be used, such as the headset, microphone, gesture sensors, motion trackers, game controllers, etc., to receive game play input from the game players. In an example, returning to 784, the processor may receive vehicle state data, such as the velocity, direction, acceleration, road condition, weather, etc., during the game play as the vehicles travel, and at 786, the processor may generate, output, and maintain alternative reality objects and environment, such as the simulated motion and environment of the flying unicorns concomitant with the motion of the vehicles using equipment such as 4D chairs inside the vehicle, the fire breaths of the evil dragons as simulated by audio-visual output from virtual/augmented reality gear such as surround screens and speakers, and increased cabin temperature to simulate heat, etc. Once the destination is reached at 794 (e.g., a point on Mulholland Drive designated as the finish line), the processor proceeds to calculate the scores of the players and terminate the session.
For example, Ben is a seasoned “dragon slayer,” and during the game session with Ed, Ben has killed five black dragons, and one each of the red, green, and blue dragons. Ed, on the other hand, has not killed any dragons except one blue. In such a case, the processor awards 11 points to Ben, and 1 point to Ed. Ben is the winner, and Ben is awarded an alternative reality output of a princess thanking and kissing Ben for being a hero.
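As a rough illustration of the rule application at 792, the scoring can be sketched as a lookup table and a sum. The point table, the function name `score` and the sample tally below are hypothetical placeholders chosen only to show the mechanism (positive points for evil dragons, negative points for good dragons); they are not the values of the example above.

```python
# Hypothetical point table: evil dragons add points, good dragons subtract points.
POINTS = {
    "black": 4,
    "red": 3,
    "green": 2,
    "blue": 1,
    "gold": -10,
    "white": -5,
}


def score(kills):
    """Sum the points for a tally mapping dragon color -> number shot down."""
    return sum(POINTS[color] * count for color, count in kills.items())


# Hypothetical tally: one black and two blue dragons, plus one gold dragon by mistake.
sample_tally = {"black": 1, "blue": 2, "gold": 1}
print(score(sample_tally))
```

Keeping the rules in a table rather than in branching logic lets a game designer change point values without touching the scoring routine.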

Referring again to FIG. 7A at 712, after selecting or configuring the media program, the processor provides the media program to the media player of each user in the group of identified users. In an aspect, the processor may play the media program. For example, the processor may stream the media program or provide it as a digital download over one or more networks shown in FIG. 1. Or it may provide the media program from a local storage in each participating vehicle. If playing the media program, the processor may execute operations as described in connection with FIGS. 7B-D above. In another alternative, the processor may select each participating client device based in part on the device having local access to the media program, or remote access from an independent source, in which case the operation 712 may be omitted.

While operations 701-712 relate to initiating an inter-vehicular session of media play, further novel aspects of the present disclosure relate to aspects of inter-vehicular game play or game setup, for execution by a computer processor. FIGS. 8B-8G show additional operations 822-876 that may optionally be included in the method 800 and performed by one or more processors performing the method 800, in any operative order. Any one or more of the additional operations 822-876 may be omitted unless needed by a downstream operation, and one or more of the operations 822-876 may replace one or more others of the operations of the method 800. Any useful combination of operations selected from the group consisting of the operations 822-876 may be performed by one or more processors of an apparatus as described herein as an independent method distinct from other useful combinations selected from the same group.

Referring to FIG. 8B, the method 800 may further include at 822 identifying, by one or more processors, the group of users based on profile data of each user. The profile data may comprise at least one of present preference data, past preference data, and trip purpose for each of the users.

At 824, the method may further include identifying, by one or more processors, the group of users based on trip data for each of the vehicles carrying the group of users. In an aspect, the trip data may indicate that the users have substantially similar trip start times, durations, intermediate locations along a route or end times. The trip data may indicate that the users have substantially similar trip itineraries, or substantially similar trip purposes. Trips may be contemporaneous, asynchronous and overlapping in time or space, or asynchronous and nonoverlapping in time or space but related by another similarity parameter.
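One way to picture the grouping at 824 is a pairwise similarity test over trip records. The sketch below is only an assumption about how "substantially similar" might be evaluated: it treats two trips as similar when they overlap in time, start within a tolerance of each other, and share a purpose. The `Trip` record, the 15-minute tolerance and the function names are all hypothetical.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass
class Trip:
    user: str
    start: datetime
    end: datetime
    purpose: str


def overlaps_in_time(a, b):
    """True if the two trips share at least one moment in time."""
    return a.start < b.end and b.start < a.end


def substantially_similar(a, b, tolerance=timedelta(minutes=15)):
    """Assumed similarity test: close start times and the same trip purpose."""
    return abs(a.start - b.start) <= tolerance and a.purpose == b.purpose


def candidate_group(anchor, trips):
    """Users whose trips overlap with and resemble the anchor trip."""
    return [
        t.user
        for t in trips
        if t is anchor or (overlaps_in_time(anchor, t) and substantially_similar(anchor, t))
    ]


day = datetime(2024, 1, 1)
adam = Trip("Adam", day.replace(hour=18, minute=0), day.replace(hour=18, minute=45), "dinner")
carl = Trip("Carl", day.replace(hour=18, minute=10), day.replace(hour=18, minute=50), "dinner")
zoe = Trip("Zoe", day.replace(hour=20, minute=0), day.replace(hour=20, minute=30), "dinner")

print(candidate_group(adam, [adam, carl, zoe]))
```

Asynchronous, nonoverlapping trips related by another similarity parameter would need a different test than `overlaps_in_time`; the sketch covers only the contemporaneous case.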

At 826, the method may further include selecting, by one or more processors, the two or more vehicles based on profile data of each user in the group. In an aspect, the user profile may indicate a preference for a certain type of vehicle. In an aspect, a preference in the user profile may require a certain type of vehicle; for example, a preference for xR games may require vehicles with xR components. In an aspect, the two or more vehicles may include a similar theme configuration or decoration.

In an aspect, at 828, the method may divide the group of users into teams based on which of the two or more vehicles each of the users occupies. The teams may comprise users of the group in multiple vehicles.
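The team division at 828 can be sketched as a grouping of users by the vehicle each occupies, with an extra mapping from vehicle to team so that one team may span several vehicles. The vehicle identifiers, team labels and the function name `divide_into_teams` are hypothetical.

```python
from collections import defaultdict


def divide_into_teams(occupancy, vehicle_team):
    """Group users into teams according to the vehicle each user occupies.

    occupancy: user -> vehicle identifier
    vehicle_team: vehicle identifier -> team label (several vehicles may share a team)
    """
    teams = defaultdict(list)
    for user, vehicle in occupancy.items():
        teams[vehicle_team[vehicle]].append(user)
    return {team: sorted(members) for team, members in teams.items()}


occupancy = {"Adam": "car_a", "Carl": "car_a", "David": "car_b", "Ed": "car_c"}
vehicle_team = {"car_a": "red", "car_b": "blue", "car_c": "red"}

teams = divide_into_teams(occupancy, vehicle_team)
print(teams)
```

Here the "red" team draws its members from two vehicles, while the "blue" team consists of a single vehicle's occupant.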

In an aspect, the method may maintain time and space information of the vehicles carrying the group of users. The method may allow users to trade this information. For example, the information may indicate that a vehicle is a lead vehicle in the group or caravan. The method may allow users in different vehicles to see what is not presently visible. For example, users in non-leading vehicles may be able to see, hear or smell what is still around the corner but is already seen and heard at the lead vehicle. Another example may allow users of leading vehicles to see, hear or smell what is presently sensible in a vehicle at the tail of the group of vehicles. In an aspect, the method may dynamically use this information in a game in real-time. For example, a user in a lead vehicle may inform or bluff other users in other vehicles of real or non-real presence of an object ahead of the group of vehicles. In an aspect, the method may enable users to drop or pick up objects along the route in an xR-based game. Either a user, cameras or sensors can report time, space, fields of view and sensory information to identify objects exchanged. The vehicles may be geographically local to one another, or geographically distant from one another.

Referring to FIG. 8C, the method 800 may further include at 832 one of selecting, configuring or creating, by one or more processors, a media program which is indicated as ready for play. In an aspect, at 834, the one or more processors may select, configure or create the media program based in part on the user profile of each user. In an aspect, at 836, the one or more processors may select, configure or create the media program based in part on trip data of the two or more vehicles. In another aspect, at 838, the one or more processors may select, configure or create the media program based on one or more criteria, at least in part by an algorithm that evaluates relationships between user-facing elements of the media program and one or more of: the initial location, the terminal location and the one or more intermediate locations.

In an aspect (not shown), the one or more processors may select, configure or create the media program based in part on weather conditions at the two or more vehicles.

Referring to FIG. 8D, the method 800 may further include at 842 processing, by one or more processors, involuntary biometric sensor data indicating a transient neurological state of each user relating to each user's current or past preferences. In an aspect, at 844, the one or more processors may select, configure or create the media program based on at least one of input, past preference data, and involuntary biometric sensor data.

Referring to FIG. 8E, the method 800 may further include at 852 providing, by one or more processors, trip data for each of the two or more vehicles for use in play of the media program. In an aspect, at 854, the one or more processors may select routes of the two or more vehicles based in part on congestion management criteria, and in part on entertainment objectives of the media program. The congestion management criteria may be predetermined or updated dynamically. In an aspect, the routes may be preset. In another aspect, at 856, the one or more processors may control navigation of at least one of the two or more vehicles to facilitate use of the media program by the group of users. At 858, controlling the navigation of the vehicles may include parking the two or more vehicles for a stationary session.
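The route selection at 854 balances two considerations. A minimal sketch, assuming each candidate route has already been given a congestion estimate and an entertainment value on a 0-to-1 scale, is a weighted trade-off between the two. The scale, the linear weighting, the route names and the function name `pick_route` are hypothetical.

```python
def pick_route(routes, congestion_weight):
    """Pick the route with the best weighted trade-off.

    routes: name -> (congestion, entertainment), each assumed to lie in [0, 1]
    congestion_weight: share of the decision given to avoiding congestion, in [0, 1]
    """
    def trade_off(item):
        congestion, entertainment = item[1]
        return (1 - congestion_weight) * entertainment - congestion_weight * congestion

    return max(routes.items(), key=trade_off)[0]


routes = {
    "scenic": (0.7, 0.9),   # busy, but rich in game-relevant scenery
    "freeway": (0.3, 0.2),  # clear, but offers little for the game
}

print(pick_route(routes, congestion_weight=0.5))
print(pick_route(routes, congestion_weight=0.9))
```

Updating `congestion_weight` at run time is one way the congestion management criteria could be adjusted dynamically rather than fixed in advance.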

Referring to FIG. 8F, the method 800 may further include at 862 inviting, by one or more processors, at least one of the group of users into at least one of the two or more vehicles. The one or more processors may invite the users via any suitable method of messaging. At 864, the one or more processors may invite the users based on optimizing matches in preferences of the group of users from a pool of potential users by an algorithm based on one or more of: an aggregate measure of preference criteria weighted by defined weighting factors and a predictive machine learning algorithm trained over a set of preference criteria with an objective of maximizing a measure of user satisfaction with inclusion in the group of users. In an aspect, at 866, the one or more processors may loosen the preference criteria in response to intentional input from one or more of the group of users indicating an intention for applying less exclusive criteria.
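The aggregate measure at 864 and the loosening at 866 can be sketched as a weighted match score compared against a threshold. This is only one possible reading: it assumes each preference criterion either matches or does not, and that loosening the criteria means lowering the threshold. The criterion names, weights, candidate names and function names are hypothetical.

```python
def match_score(candidate, group_prefs, weights):
    """Weighted fraction of preference criteria on which the candidate agrees with the group."""
    total = sum(weights.values())
    agreed = sum(
        weight
        for key, weight in weights.items()
        if candidate.get(key) == group_prefs.get(key)
    )
    return agreed / total


def invite_list(pool, group_prefs, weights, threshold):
    """Names from the pool whose score meets the threshold, best match first."""
    scored = [(match_score(prefs, group_prefs, weights), name) for name, prefs in pool.items()]
    return [name for value, name in sorted(scored, reverse=True) if value >= threshold]


weights = {"genre": 2.0, "language": 1.0, "xr": 1.0}
group_prefs = {"genre": "trivia", "language": "en", "xr": True}
pool = {
    "Fay": {"genre": "trivia", "language": "en", "xr": True},
    "Gus": {"genre": "trivia", "language": "es", "xr": False},
    "Hal": {"genre": "racing", "language": "en", "xr": False},
}

strict = invite_list(pool, group_prefs, weights, threshold=0.75)
loosened = invite_list(pool, group_prefs, weights, threshold=0.5)
print(strict, loosened)
```

The predictive machine learning alternative named in the text would replace `match_score` with a trained model's output; the thresholding step could stay the same.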

Referring to FIG. 8G, the method 800 may further include at 872 tracking, by one or more processors, at least one of game progress or game rewards earned by each user during the contemporaneous presence and communicating the at least one of game progress or game rewards to a server for use after termination of the contemporaneous presence. In an aspect, the method may allow users to share game rewards or credits. At 874, the one or more processors may record one or more of the group of users for sharing over a computer network, for example, over a social media network. In an aspect, an artificial intelligence (AI) engine may receive the recorded data for training learning machines. In an aspect, the AI engine may assist in selecting, configuring or creating the media programs, or in assisting users in playing the media programs.

In an aspect, at 876, the one or more processors may determine at least one winner of the media program based on at least one of: which of the two or more vehicles scores highest in one or more predetermined trip-related criteria, which of the group of users most accurately predicts occurrence of one or more trip-related events, which of the group of users scores highest in answering trip-related trivia questions, and the like.
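For the prediction-based criterion at 876, a winner determination can be sketched as finding the smallest prediction error, with ties producing more than one winner. The sketch assumes the trip-related event is a numeric quantity such as travel time in minutes; the function name and the sample figures are hypothetical.

```python
def winners_by_prediction(predictions, actual):
    """Return the user or users whose prediction is closest to the actual value.

    predictions: user -> predicted value (e.g., travel time in minutes)
    actual: the observed value for the trip-related event
    """
    errors = {user: abs(guess - actual) for user, guess in predictions.items()}
    best = min(errors.values())
    return sorted(user for user, error in errors.items() if error == best)


single = winners_by_prediction({"Adam": 42, "Carl": 35, "David": 38}, actual=37)
tied = winners_by_prediction({"Adam": 36, "Carl": 38}, actual=37)
print(single, tied)
```

Returning a list rather than a single name accommodates the "at least one winner" wording when two users are equally accurate.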

FIG. 9 is a conceptual block diagram illustrating components of an apparatus or system 900 for providing electronics games for play by a group of users in two or more moving vehicles as described herein, according to one embodiment. As depicted, the apparatus or system 900 may include functional blocks that can represent functions implemented by a processor, software, or combination thereof (e.g., firmware).