U.S. Pat. No. 11,845,002

INTERACTIVE GAME SYSTEM AND METHOD OF OPERATION FOR SAME

Assignee: LÜ AIRE DE JEU INTERACTIVE INC.

Issue Date: February 1, 2019

Illustrative Figure

Abstract

An interactive game system for implementing an interactive game in a room. The interactive game system comprises: a motion sensing module, a control unit, a projection module and a lighting module. The motion sensing module senses movement of a real-world element and surface interaction data representative of a contact between the real-world element and an interactive surface is generated therefrom. The control unit generates graphic instructions and lighting instructions based on the surface interaction data, in accordance with an implementation of a gameplay of the interactive game. The projection module receives the graphic instructions from the control unit and projects graphics on the interactive surface in accordance therewith. The lighting module receives the lighting instructions from the control unit and performs illumination of a section of the room distinct from the interactive surface in accordance therewith.

Description

DETAILED DESCRIPTION

In the following description, the same numerical references refer to similar elements. The embodiments, geometrical configurations, materials mentioned and/or dimensions shown in the figures or described in the present description are embodiments only, given solely for exemplification purposes.

Although the embodiments of the interactive game system and corresponding parts thereof consist of certain geometrical configurations as explained and illustrated herein, not all of these components and geometries are essential and thus should not be taken in their restrictive sense. It is to be understood, as also apparent to a person skilled in the art, that other suitable components and cooperation thereinbetween, as well as other suitable geometrical configurations, may be used for the interactive game system, as will be briefly explained herein and as can be easily inferred herefrom by a person skilled in the art. Moreover, it will be appreciated that positional descriptions such as “above”, “below”, “left”, “right” and the like should, unless otherwise indicated, be taken in the context of the figures and should not be considered limiting.

Moreover, although the associated method of operation of the interactive game system includes steps as explained and illustrated herein, not all of these steps are essential and thus should not be taken in their restrictive sense. It will be appreciated that the steps of the method described herein may be performed in the described order, or in any suitable order.

Referring generally to FIGS. 1 and 2, in accordance with one embodiment, there is provided an interactive game system 10. The interactive game system 10 is designed and configured to provide an interactive game experience in at least a section of a large room 12, using an interactive surface 14 (or interactive wall section). In an embodiment, the interactive surface 14 extends along a plane P defined by at least a portion of a wall 13 of the room 12 in which the interactive game system 10 is installed (or substantially parallel thereto). For example, and without being limitative, in an embodiment, the interactive game system 10 can be installed in a gymnasium of a school, a sport complex, or the like, to provide an immersive and interactive playground for children positioned in the gymnasium, with at least a section of one wall 13 of the room 12 being used as the interactive surface 14 of the interactive game system 10. One skilled in the art will understand that, in alternative embodiments, the interactive game system 10 can also be installed in any other type of room having sufficient dimensions to provide the desired immersive experience such as, for example and without being limitative, a common room, a training room, a hall, etc. In an embodiment, the interactive surface 14 has dimensions ranging between about 12 inches by 7 inches and about 19 feet by 11 feet. In an alternative embodiment, the interactive surface 14 has dimensions ranging between about 8 feet by 4.5 feet and about 19 feet by 11 feet.

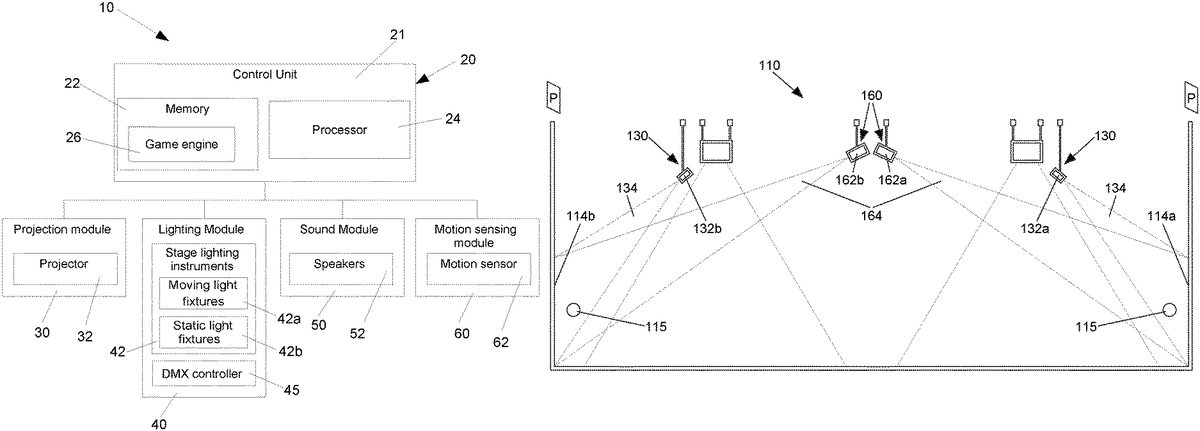

In the embodiment shown, the interactive game system 10 includes a control unit 20 (or controller), a projection module 30 (or projection source), a lighting module 40 (or light source), a sound module 50 (or acoustic source) and a motion sensing module 60 (or motion sensor). In an alternative embodiment, the interactive game system 10 could however be free of the sound module 50.

In an embodiment, the control unit 20, the projection module 30, the lighting module 40, the sound module 50 and the motion sensing module 60 are mounted to a ceiling of the room 12 in which they are installed (i.e. are mounted to a structure positioned above a floor of the room 12, to ensure that they do not impede the action of the users moving in the room 12). One skilled in the art will however understand that, in an alternative embodiment, at least one of the control unit 20, the projection module 30, the lighting module 40, the sound module 50 and the motion sensing module 60 could be positioned differently than the above-mentioned mounting to the ceiling of the room 12 in which they are installed. For example, and without being limitative, at least one of the control unit 20 and the sound module 50 could be close to the ground, for example in a periphery of the room 12, to minimize the interference of the components with the actions of the users moving in the room 12 to play the interactive game. In another alternative embodiment, at least one of the control unit 20, the projection module 30, the lighting module 40, the sound module 50 and the motion sensing module 60 could be included in a portable device temporarily installable (or positionable) in the room 12 to allow the interactive game system 10 to be temporarily used for providing the immersive game experience in the room 12.

The projection module 30, lighting module 40, sound module 50 and motion sensing module 60 are connected to the control unit 20 (i.e. are in data communication with the control unit 20) to allow the control unit 20 to manage the operation thereof and provide the desired interactive and immersive game experience to the users in the room 12 through the inputs provided by the motion sensing module 60 and the outputs provided by the projection module 30, lighting module 40 and/or sound module 50.

One skilled in the art will understand that the data connection between the control unit 20 and each one of the projection module 30, lighting module 40, sound module 50 and motion sensing module 60 can be any type of connection which allows data transfer therebetween. For example, and without being limitative, in an embodiment, the connection between the control unit 20 and the projection module 30, lighting module 40, sound module 50 and/or motion sensing module 60 is a wired connection. One skilled in the art will however understand that, in an alternative embodiment, the connection between the control unit 20 and the projection module 30, lighting module 40, sound module 50 and/or motion sensing module 60 can be a wireless connection, with the control unit 20 and the corresponding one of the projection module 30, lighting module 40, sound module 50 and/or motion sensing module 60 being provided with communication hardware (e.g. receiver/transceiver) to allow wireless connection therebetween. For example, and without being limitative, the control unit 20 and the projection module 30, lighting module 40, sound module 50 and/or motion sensing module 60 could communicate over a local area network (LAN), a wide area network (WAN), such as the Internet, a wireless personal area network (WPAN) or the like. One skilled in the art will also understand that other types of wireless communication could be used. One skilled in the art will also understand that different connections or connection types can be provided between the control unit 20 and each one of the projection module 30, lighting module 40, sound module 50 and motion sensing module 60.

In the embodiment shown, the control unit 20 is a single (standalone) computing device 21 in data communication with the projection module 30, lighting module 40, sound module 50 and/or motion sensing module 60. The computing device 21 can include a memory 22 for storing instructions, data and the like and at least one processor 24 for processing data. One skilled in the art will understand that, in an alternative embodiment (not shown), the control unit 20 could include a combination of a central computing device connected to remote computing device(s), such as server(s) or the like, communicating with one another over a network, such as, for example and without being limitative, a local area network (LAN), a wide area network (WAN), such as the Internet, or the like. In another alternative embodiment, the control unit 20 could be a remote computing device, communicating with the projection module 30, lighting module 40, sound module 50 and/or motion sensing module 60 over a network, such as, for example and without being limitative, a local area network (LAN), a wide area network (WAN), such as the Internet, a wireless personal area network (WPAN) or the like.

In the embodiment shown in FIGS. 1, 1a and 2, the motion sensing module 60 includes at least one motion sensor 62 having a motion sensor field of view 64 intersecting with the interactive surface 14. The at least one motion sensor 62 is configured to sense motion of real-world elements 15 within the motion sensor field of view 64 in order to detect the occurrence and the position of a contact between each one of the real-world elements 15 and the interactive surface 14 (i.e. detect when a real-world element 15 intersects with the plane P defining the interactive surface 14 and detect the position of the real-world element 15 within the interactive surface 14, when such contact occurs). In view of the above, in an embodiment, the data from the motion sensing module 60 can be used to generate surface interaction data representative of the contact between the real-world elements 15 and the plane P defining the interactive surface 14. For example, and without being limitative, the surface interaction data can include data relative to the occurrence of a contact, the position (i.e. the coordinates) of the contact of the real-world element 15 on the interactive surface 14, etc.
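For example, and without being limitative, the detection of a contact with the plane P can be sketched as follows. This is a minimal sketch only: it assumes that the motion sensor already yields successive 3D positions of a tracked real-world element, that coordinates are expressed in metres, and that a contact is reported when the element comes within a small tolerance of the plane. The names `Plane`, `SurfaceInteraction`, `detect_contact` and the `tolerance` value are hypothetical and are not taken from the present description.

```python
from dataclasses import dataclass
from typing import Optional, Tuple

Vec3 = Tuple[float, float, float]


@dataclass(frozen=True)
class Plane:
    """Plane P: a point on the plane and a unit normal pointing into the room."""
    point: Vec3
    normal: Vec3


@dataclass(frozen=True)
class SurfaceInteraction:
    """Hypothetical surface interaction record: where and when a contact occurred."""
    position: Vec3    # contact point projected onto plane P
    timestamp: float  # seconds


def signed_distance(plane: Plane, p: Vec3) -> float:
    """Distance from p to the plane, positive on the room side."""
    return sum((pi - qi) * ni for pi, qi, ni in zip(p, plane.point, plane.normal))


def detect_contact(plane: Plane, prev: Vec3, curr: Vec3, timestamp: float,
                   tolerance: float = 0.02) -> Optional[SurfaceInteraction]:
    """Report a contact when the element moves from outside the tolerance band
    to within it (or through the plane) between two successive samples."""
    d_prev = signed_distance(plane, prev)
    d_curr = signed_distance(plane, curr)
    if d_prev > tolerance and d_curr <= tolerance:
        # Project the current position onto the plane to get the contact position.
        position = tuple(c - d_curr * n for c, n in zip(curr, plane.normal))
        return SurfaceInteraction(position=position, timestamp=timestamp)
    return None
```

Because a contact is only reported on the transition into the tolerance band, an element resting against the surface produces a single record rather than one per sample.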

In the course of the present document, the term “real-world element” is understood to refer to any real-world object, person, or the like, which can be located within the field of view of the motion sensing module 60, or can temporarily enter the field of view of the motion sensing module 60, and can contact (or intersect with) the interactive surface 14.

For example, and without being limitative, with reference to FIG. 1a, in an embodiment, the motion sensing module 60 includes a Kinect™ motion sensor 62 having a camera 66, an infrared emitter 67 and an infrared depth sensor 68 cooperating to sense the movement of the real-world elements 15 within the motion sensor field of view 64. One skilled in the art will however understand that, in alternative embodiments (not shown), other forms, types and/or models of motion sensor 62 could be used, such as a sensor using time-of-flight (ToF) technology, a sensor using stereo technology, etc. Hence, the motion sensor 62 could include a different combination of components than the camera 66, infrared emitter 67 and infrared depth sensor 68 of the embodiment shown to acquire the data relative to the motion of the real-world elements 15 within the motion sensor field of view 64, which is required to generate the surface interaction data representative of the contact between the real-world elements 15 and the interactive surface 14.

In an embodiment, the motion sensing module 60 can also include any additional software, hardware (memory, processor, etc.), or combination thereof to generate the surface interaction data relative to a contact between real-world elements 15 and the interactive surface 14, from the data relative to real-world movement of the real-world elements 15 within the motion sensor field of view 64 thereof. One skilled in the art will however understand that, in an alternative embodiment, raw data relative to motion of the real-world element 15 within the field of view of the motion sensing module 60 could also be transferred to the control unit 20, with the control unit 20 including the software, hardware, or combination thereof to generate the surface interaction data relative to the contact between the real-world elements 15 and the interactive surface 14. In an embodiment, the motion sensing module 60 communicates the generated surface interaction data (or the raw data relative to motion of the real-world element 15 within the field of view of the motion sensing module 60) to the control unit 20 in real-time. For the sake of clarity, in the following description, only reference to the embodiment where the surface interaction data is generated at the motion sensing module 60 and transferred to the control unit 20 will be made, keeping in mind that alternatively, the surface interaction data can be generated at the control unit 20, based on the data relative to motion of the real-world element 15 within the field of view of the motion sensing module 60 received therefrom.

In an embodiment, the motion sensing module 60 allows the generation of surface interaction data, without requiring any sensors being mounted on the corresponding wall 13 of the room 12. In other words, the motion sensing module 60 is positioned away from the wall 13 of the room 12 being used as interactive surface 14, with the motion sensor field of view 64 of the motion sensing module 60 being directed towards the wall 13 of the room 12 being used as interactive surface 14. The wall 13 used to define the interactive surface 14 can therefore simply be a conventional wall 13 of the room 12 which is being used for the interactive game. One skilled in the art will understand that, in an alternative embodiment, at least a section of the floor, the ceiling or any other element located in the room 12 could also be used to define the interactive surface 14. In the course of the present description, the term “wall” will however be used when referring to the structure used to define the interactive surface 14, in order to ease description.

In order to allow the capture of precise data by the motion sensing module 60 (and consequently generation of precise corresponding surface interaction data), in an embodiment, the motion sensing module 60 is initially calibrated to define the spatial position of the surface defining the interactive surface 14. In an embodiment, the calibration defines the plane P (i.e. the flat surface corresponding to the desired surface) along which contact with an element of the physical world is to be detected. It will be understood that to perform the calibration of the motion sensing module 60, the dimensions of the interactive surface 14 must previously be defined. In other words, in an embodiment, the motion sensing module 60 is calibrated to define a plane P corresponding to the plane extending between the previously defined coordinates (i.e. x, y and z positions) of the corners of the interactive surface 14. In view of the above, it will be understood that in most cases, the interactive surface 14 will substantially match the surface of a corresponding section of a wall 13 of the room 12 that is used to define the interactive surface 14. However, in cases where, for example and without being limitative, the surface of the wall 13 is uneven, or where the coordinates (i.e. x, y and z positions) of the corners of the interactive surface 14 are not positioned along a common wall surface, the interactive surface 14 (i.e. the plane P defined between the corners of the interactive surface 14) could differ from the surface of the corresponding wall 13.
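For example, and without being limitative, a plane P can be derived from the predefined corner coordinates as sketched below. This is a minimal sketch, assuming that the corners are supplied in order around the perimeter of the interactive surface and that Newell's method (a standard technique for the normal of a nearly planar polygon) is an acceptable way to obtain a plane when the corners are not exactly coplanar; the description does not specify how the plane is computed. The function names are hypothetical.

```python
import math
from typing import Sequence, Tuple

Vec3 = Tuple[float, float, float]


def plane_from_corners(corners: Sequence[Vec3]) -> Tuple[Vec3, Vec3]:
    """Return (centroid, unit normal) of the plane through the given corners.

    Uses Newell's method, which also yields a reasonable plane when the
    corners are slightly non-coplanar (e.g. an uneven wall).
    """
    n = len(corners)
    if n < 3:
        raise ValueError("at least three corners are required")
    centroid = tuple(sum(c[i] for c in corners) / n for i in range(3))
    nx = ny = nz = 0.0
    for i in range(n):
        x0, y0, z0 = corners[i]
        x1, y1, z1 = corners[(i + 1) % n]
        nx += (y0 - y1) * (z0 + z1)
        ny += (z0 - z1) * (x0 + x1)
        nz += (x0 - x1) * (y0 + y1)
    length = math.sqrt(nx * nx + ny * ny + nz * nz)
    if length == 0.0:
        raise ValueError("corners are degenerate (collinear or coincident)")
    return centroid, (nx / length, ny / length, nz / length)


def max_corner_deviation(corners: Sequence[Vec3], centroid: Vec3, normal: Vec3) -> float:
    """Largest distance between any corner and the plane, in input units."""
    return max(
        abs(sum((c[i] - centroid[i]) * normal[i] for i in range(3)))
        for c in corners
    )
```

A non-zero value returned by `max_corner_deviation` corresponds to the case described above in which the plane P differs from the actual surface of the wall.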

In an alternative embodiment, the motion sensing module 60 could be calibrated to match the interactive surface 14 exactly with the surface of the corresponding wall (or section thereof), rather than by defining a plane common to the predefined corners of the interactive surface 14.

For example, and without being limitative, in an embodiment, the motion sensing module 60 can be configured to detect contacts between balls and the interactive surface 14 (i.e. the motion sensing module 60 is configured to capture the exact position of contact of each one of the balls with the interactive surface 14). One skilled in the art will understand that, in an alternative embodiment, interactions between the physical world and the interactive surface 14, different from the above-mentioned ball contact, could also be detected by the motion sensing module 60 (e.g. a user touching the interactive surface 14, a contact between an object different from a ball and the interactive surface 14, etc.), with the generated surface interaction data being subsequently transferred from the motion sensing module 60 to the control unit 20.

As will be described in more detail below, the control unit 20 can receive the surface interaction data as user input from the user within the interactive game and perform processing of the surface interaction data to subsequently control the projection module 30, lighting module 40 and sound module 50 to implement the gameplay of the interactive game in accordance with the surface interaction data received as user input from the motion sensing module 60 (i.e. to generate the data relative to the graphics, lighting and sound to be outputted to the user to allow subsequent user interaction and representing the evolution of the user in the game being played in accordance with the game plot). In the course of the present application, the term “gameplay” is used to refer to the way the game is played and includes the game plot, defined by the evolving sequence of actions which defines the interactive game and which the player goes through as the action of the game evolves. Hence, in the present application, the implementation of the “gameplay” encompasses the determination of the specific graphics, lighting and/or sound to be outputted in accordance with the game plot to provide the desired immersive and interactive experience to the players.

The control unit 20 is configured to control at least one of the projection module 30, the lighting module 40 and the sound module 50 in real-time, based on the surface interaction data (i.e. based on the input data from the motion sensing module 60 relative to real-world elements 15 contacting the interactive surface 14), in order to provide an interactive game experience.

In an embodiment, the control unit 20 runs a game engine 26 configured to implement a predetermined gameplay, based on user interactions (or user inputs) as defined by the surface interaction data, and to output the data relative to the corresponding graphics, lighting and/or sound. The game engine 26 is stored in the memory 22 of the control unit 20 and includes the necessary components to generate the required output instructions for implementing the gameplay (or game logic) (e.g. the graphic display, lighting, sound, etc. required to allow the user to interact and play the game). In an embodiment, the game engine 26 includes, for example and without being limitative, a main game engine implementing the gameplay instructions, a rendering engine generating the required graphic instructions, an audio engine generating the required audio instructions, a lighting engine generating the required lighting instructions, a physics engine, etc. In other words, the game engine 26 generates graphic instructions, lighting instructions and/or acoustic instructions, for the control unit 20 to control the output of the projection module 30, the lighting module 40 and/or the sound module 50 respectively, in accordance with the implementation of the gameplay of an interactive game. One skilled in the art will understand that, in an embodiment, several different interactive games could be played using the interactive game system 10, each interactive game having its own gameplay parameters. In an embodiment, the control unit 20 could access a database including gameplay information regarding the corresponding interactive game.
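For example, and without being limitative, the flow from surface interaction data to graphic, lighting and acoustic instructions can be sketched as follows. This is a minimal sketch of one hypothetical gameplay (hitting a circular target with a ball); the class names, the instruction strings and the target geometry are assumptions made for illustration and do not reflect any particular game engine.

```python
from dataclasses import dataclass, field
from typing import List, Tuple


@dataclass
class OutputInstructions:
    """Hypothetical container for the three kinds of output instructions."""
    graphic: List[str] = field(default_factory=list)
    lighting: List[str] = field(default_factory=list)
    acoustic: List[str] = field(default_factory=list)


@dataclass
class TargetGame:
    """Toy gameplay: score a point when a contact lands inside a round target."""
    target_center: Tuple[float, float]  # position on the interactive surface
    target_radius: float
    score: int = 0

    def on_contact(self, x: float, y: float) -> OutputInstructions:
        """Turn one contact position into coordinated output instructions."""
        out = OutputInstructions()
        dx = x - self.target_center[0]
        dy = y - self.target_center[1]
        if dx * dx + dy * dy <= self.target_radius ** 2:
            self.score += 1
            out.graphic.append(f"show_hit_animation score={self.score}")
            out.lighting.append("flash_floor color=green")
            out.acoustic.append("play hit")
        else:
            out.graphic.append("show_miss_marker")
            out.lighting.append("dim_floor")
            out.acoustic.append("play miss")
        return out
```

In this sketch the three instruction lists are produced together from the same contact, which is one simple way to keep graphics, lighting and sound coordinated.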

In an embodiment, the control unit 20 receives the surface interaction data from the motion sensing module 60 in real-time and generates and transmits output instructions to control at least one of the projection module 30, the lighting module 40 and the sound module 50 also in real-time. As mentioned above, in an alternative embodiment, the control unit 20 could also receive raw data relative to real-world movement within the motion sensor field of view 64, with the control unit 20 processing the data to generate the surface interaction data relative to contact between the real-world element 15 and the interactive surface 14 and subsequently generating and transmitting output instructions to control at least one of the projection module 30, the lighting module 40 and the sound module 50 in real-time in order to provide the desired interactive experience to the user.

In view of the above, in an embodiment, the control unit 20 generates graphic instructions as output to be transmitted to the projection module 30 to project graphics (i.e. 2D or 3D graphics) on the interactive surface 14. In an embodiment, the projection module 30 includes a projector 32 having a projector field of view 34 intersecting with the interactive surface 14, to display the desired graphics on the interactive surface 14. One skilled in the art will understand that, in an alternative embodiment, the projection module 30 could include more than one projector 32 or other display devices controlled by the control unit 20 and capable of displaying graphics on the interactive surface 14. As mentioned above, the graphic instructions relative to the graphics to be displayed by the projection module 30 result from the processing of the surface interaction data generated using the motion sensing module 60, thereby producing an interactive graphic display for the users. In other words, the graphic instructions are generated based on the surface interaction data, such that the graphic instructions are dependent on the real-world element 15 contacting the interactive surface 14, thereby providing the interactivity between the real-world actions and the graphics, and driving the evolution of the interactive game.

In an embodiment, the projection module 30 is also calibrated, according to the predetermined interactive surface 14, in order to provide a precise and clear graphic display. A calibration similar to the above-described calibration of the motion sensing module 60, to define the spatial position of the surface defining the interactive surface 14, can therefore be performed for the projection module 30. Hence, a method similar to the one described above for the motion sensing module 60 can be performed. For example, and without being limitative, in an embodiment, the calibration defines the plane P (i.e. the flat surface corresponding to the desired surface) onto which the graphics are to be displayed. It will be understood that to perform the calibration of the projection module 30, the dimensions of the interactive surface 14 must previously be defined such that the projection module 30 is calibrated to define a plane P corresponding to the plane extending between the previously defined coordinates (i.e. x, y and z positions) of the corners of the interactive surface 14. In view of the above, it will once again be understood that in most cases, the interactive surface 14 onto which the graphics are to be displayed will substantially match the surface of a corresponding section of a wall 13 of the room 12 that is used to define the interactive surface 14. In an embodiment, a color calibration of the projection module 30 can also be performed for the specific conditions of the room 12 in which the interactive game system 10 is installed.

In an embodiment, the control unit 20 also generates lighting instructions as output to be transmitted to the lighting module 40, to illuminate the room 12 in which the interactive game is played. The lighting module 40 has an illumination span 44 which can cover at least a portion of the room 12, but which does not overlap with the interactive surface 14, in order not to substantially degrade the quality and contrast of the graphics projected by the projection module 30 on the interactive surface 14. For example and without being limitative, in an embodiment where the interactive surface 14 extends along a surface of a wall 13 of the room 12, the illumination span 44 of the lighting module 40 is limited to illumination of the floor 16 of the room 12, in order to minimize the negative impact of the lighting provided by the lighting module 40 on the graphics projected by the projection module 30 on the interactive surface 14.

In an embodiment, the lighting module 40 includes a plurality of stage lighting instruments 42 (or stage lighting fixtures) operating simultaneously and used in combination to illuminate the desired section of the room 12 in which the interactive game is played, in accordance with the lighting instructions. For example, and without being limitative, in an embodiment, the lighting module 40 comprises between about six and twenty stage lighting instruments 42. In an embodiment, the lighting module 40 comprises about eight LED stage lighting instruments 42 of 180 W each to illuminate a section of about thirty feet by thirty feet and about sixteen LED stage lighting instruments 42 of 180 W each to illuminate a section of about sixty feet by thirty feet. In view of the above, it will be understood that the quantity of stage lighting instruments 42 depends on the size of the section of the room 12 to be illuminated by the lighting module 40 and that, therefore, in alternative embodiments, a quantity of stage lighting instruments 42 different from the above-mentioned embodiment could be provided, for example to light a larger section or smaller section of a room 12 than the above-described embodiments.

In an embodiment, the plurality of stage lighting instruments 42 includes a combination of moving light fixtures 42a and static light fixtures 42b cooperating to provide the lighting of the corresponding section of the room 12. For example, and without being limitative, in an embodiment where the lighting module 40 comprises about eight stage lighting instruments 42, the lighting module 40 can include two moving light fixtures 42a and six static light fixtures 42b, and in an embodiment where the lighting module 40 comprises about sixteen stage lighting instruments 42, the lighting module 40 can include four moving light fixtures 42a and twelve static light fixtures 42b.
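Both examples above work out to about one stage lighting instrument per 112.5 square feet (900 sq ft / 8 and 1,800 sq ft / 16), with one moving light fixture for every three static light fixtures. As a rough illustration only, the sketch below extrapolates these two data points linearly to other section sizes; the linear scaling, the rounding rules and the function name are assumptions and are not stated in the present description.

```python
import math
from typing import Tuple

# Derived from the two examples above: 900 sq ft / 8 = 1,800 sq ft / 16 = 112.5
AREA_PER_FIXTURE_SQFT = 112.5


def estimate_fixtures(width_ft: float, depth_ft: float) -> Tuple[int, int, int]:
    """Return (total, moving, static) fixture counts for a lit section.

    Assumes the count scales linearly with area and keeps roughly one
    moving fixture out of every four fixtures (hypothetical rule).
    """
    area = width_ft * depth_ft
    total = max(1, math.ceil(area / AREA_PER_FIXTURE_SQFT))
    moving = round(total / 4)
    static = total - moving
    return total, moving, static
```

For the two sections described above, `estimate_fixtures(30, 30)` and `estimate_fixtures(60, 30)` reproduce the eight-fixture and sixteen-fixture configurations respectively.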

In an embodiment, each one of the stage lighting instruments 42 of the lighting module 40 is independently controllable in accordance with the output lighting instructions produced by the control unit 20 (i.e. the type, color, intensity, direction etc. of a light beam produced by each stage lighting instrument 42 of the lighting module 40 can be controlled independently). In other words, the lighting instructions produced by the control unit 20 can relate to the lighting to be produced by each one of the stage lighting instruments 42 of the lighting module 40 independently. In the case of the moving light fixtures 42a, the movement of the light beam produced by the stage lighting instrument 42 can also be independently controlled, for example to provide moving light, follow-spots, additional light for a precise portion of the section of the room being lighted, etc. Such moving light fixtures 42a thereby contribute to an enhanced luminous atmosphere provided by the lighting module 40 for the interactive game.

The independent control of the plurality of stage lighting instruments 42 can therefore be used to vary the colors and/or intensity of the lighting and/or display animated lighting sequences including, for example and without being limitative, targeted color change, lighting of specific zones, punctual lighting effects, etc., which highly enhance the immersive experience of the interactive game in the room 12.

In an embodiment, to provide such individual control of the plurality of stage lighting instruments 42, the lighting module 40 uses DMX (e.g. DMX512) as the lighting control protocol. In such an embodiment, each one of the stage lighting instruments 42 has a DMX address, and the stage lighting instruments 42 are interlinked to each other and to the control unit 20 using DMX cables. In an embodiment, the lighting module 40 comprises a DMX controller 45 and the game engine 26 of the control unit 20 includes a software interface which supports communication with the DMX controller 45. For example, and without being limitative, in an embodiment, the DMX controller 45 is a DMX USB PRO controller from ENTTEC. In an embodiment, the DMX controller 45 is operative to receive the output lighting instructions from the control unit 20 (i.e. from the game engine 26 of the control unit 20) and translate the lighting instructions into DMX instructions, for independently controlling the plurality of stage lighting instruments 42 of the lighting module 40.
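For example, and without being limitative, the translation of per-fixture lighting instructions into a DMX512 frame can be sketched as follows. A DMX512 universe carries up to 512 channel values of 8 bits each, and each fixture reads consecutive channels starting at its DMX address. This is a minimal sketch under stated assumptions: the four-channel fixture layout (dimmer, red, green, blue) is hypothetical, and the serial message framing (start byte 0x7E, label 6, two-byte length, payload, end byte 0xE7) reflects the vendor's published API for the DMX USB PRO as understood here and should be checked against the vendor documentation. No serial port is opened; the code only builds the bytes.

```python
from typing import Dict, Sequence

DMX_CHANNELS = 512  # channels in one DMX512 universe


def build_universe(fixtures: Dict[int, Sequence[int]]) -> bytearray:
    """Place each fixture's channel values at its 1-based DMX address.

    `fixtures` maps a DMX start address to that fixture's channel values,
    e.g. (dimmer, red, green, blue) for a hypothetical 4-channel fixture.
    """
    universe = bytearray(DMX_CHANNELS)
    for address, values in fixtures.items():
        if not 1 <= address <= DMX_CHANNELS - len(values) + 1:
            raise ValueError(f"fixture at address {address} does not fit in the universe")
        for offset, value in enumerate(values):
            if not 0 <= value <= 255:
                raise ValueError("DMX channel values must be in 0..255")
            universe[address - 1 + offset] = value
    return universe


def frame_for_usb_pro(universe: bytes) -> bytes:
    """Wrap a universe in the assumed DMX USB PRO 'send DMX' message framing."""
    payload = bytes([0x00]) + bytes(universe)  # DMX start code 0, then channel data
    length = len(payload)
    header = bytes([0x7E, 6, length & 0xFF, (length >> 8) & 0xFF])
    return header + payload + bytes([0xE7])
```

Because every fixture has its own address range in the universe, changing the values for one address leaves all other fixtures untouched, which is what makes the independent control described above possible.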

One skilled in the art will however understand that, in an alternative embodiment, other lighting control protocols allowing control of each one of the stage lighting instruments 42 can be used.

In an embodiment, the illumination provided by the lighting module 40, according to the lighting instructions generated by the control unit 20, results from processing of the surface interaction data generated using the motion sensing module 60, thereby producing an interactive illumination for the users. In other words, the lighting instructions are generated based on processing of the surface interaction data, such that the lighting instructions are dependent on the real-world events related to the real-world elements 15 contacting the interactive surface 14, thereby providing the interactivity between the real-world actions and the illumination provided by the lighting module 40.

In view of the above, in an embodiment, the control unit 20 synchronizes the graphics projected by the projection module 30 and the illumination provided by the lighting module 40 to provide an immersive experience where the graphics and the illumination are complementary and coordinated in accordance with the implementation of the gameplay of the interactive game, as the game progresses. In an embodiment, the lighting module 40 could also include additional components (e.g. laser, fog machines, etc.) used to provide special effects which are performed according to the implementation of the gameplay and complement the above-mentioned graphics and/or illumination.

In an alternative embodiment, the illumination provided by the lighting module 40, according to the lighting instructions generated by the control unit 20, could be independent from the surface interaction data generated using the motion sensing module 60, i.e. the lighting instructions generated by the control unit 20 could be predetermined and be independent from the interactions of the real-world element 15 with the interactive surface 14. In such an embodiment, there is therefore no synchronization between the graphics projected by the projection module 30 (according to the graphic instructions generated as output by the control unit 20) and the illumination provided by the lighting module 40 (according to the lighting instructions generated as output by the control unit 20), while still providing an immersive experience where graphics and illumination are provided in an interactive game played by users in a large room 12.

As mentioned above, one skilled in the art will understand that the lighting instructions could relate to the type, color, intensity, etc. of the lighting to be emitted by the lighting module 40, as well as to a specific direction for each moving light fixture 42a (i.e. the lighting instructions could relate to a type, color, intensity, direction, etc. of a light beam for the illumination provided by each stage lighting instrument 42 of the lighting module 40).
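As a non-limitative sketch, a lighting instruction carrying a color, an intensity and a beam direction could be represented and converted to per-channel values as shown below. The `LightingInstruction` structure, the six-channel layout (pan, tilt, dimmer, red, green, blue) and the 540 degree and 270 degree travel ranges are assumptions made for illustration; actual channel layouts and ranges depend on each fixture and are not specified in the description.

```python
from dataclasses import dataclass
from typing import Dict, Tuple

PAN_RANGE_DEG = 540.0    # assumed pan travel of a moving light fixture
TILT_RANGE_DEG = 270.0   # assumed tilt travel of a moving light fixture


@dataclass(frozen=True)
class LightingInstruction:
    """Hypothetical instruction for one stage lighting instrument."""
    fixture_address: int            # first channel address of the fixture (1-based)
    color: Tuple[int, int, int]     # red, green, blue, each 0-255
    intensity: float                # 0.0 (off) to 1.0 (full)
    pan_deg: float = 0.0            # beam direction, ignored by static fixtures
    tilt_deg: float = 0.0


def _scale(value: float, maximum: float) -> int:
    """Clamp value to [0, maximum] and scale it to an 8-bit channel level."""
    clamped = min(max(value, 0.0), maximum)
    return round(clamped / maximum * 255)


def to_channel_values(instr: LightingInstruction) -> Dict[int, int]:
    """Map an instruction onto the assumed six-channel fixture layout."""
    base = instr.fixture_address
    red, green, blue = instr.color
    return {
        base: _scale(instr.pan_deg, PAN_RANGE_DEG),
        base + 1: _scale(instr.tilt_deg, TILT_RANGE_DEG),
        base + 2: _scale(instr.intensity, 1.0),
        base + 3: red,
        base + 4: green,
        base + 5: blue,
    }


# Example: full-intensity magenta beam at the end of the pan range.
channels = to_channel_values(
    LightingInstruction(fixture_address=10, color=(255, 0, 64), intensity=1.0, pan_deg=540.0)
)
```

Keeping the instruction in physical units (degrees, a 0 to 1 intensity) and converting to channel levels at the last moment would let the same instruction drive fixtures with different channel layouts.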

In an embodiment, the control unit 20 also generates acoustic instructions as output to be transmitted to the sound module 50, to play sounds. In an embodiment, the sound module 50 includes a plurality of speakers which are used to play the sounds. In the course of the present description, the term “sounds” is used to refer to any emitted vibrations (or propagated soundwave) that can be heard and interpreted when reaching a person's ear, such as, for example, individual noises, music, etc.

Once again, in an embodiment, the acoustic instructions relative to the sounds to be played by the sound module 50 result from processing of the surface interaction data generated using the motion sensing module 60, thereby producing an interactive auditive environment for the users. In other words, the acoustic instructions are generated based on the surface interaction data, such that the acoustic instructions are dependent on the real-world events related to the real-world elements 15 contacting the interactive surface 14, thereby providing the interactivity between the real-world actions and the sounds played by the interactive game system 10.

In view of the above, in an embodiment, the control unit 20 synchronizes the graphics projected by the projection module 30, the illumination provided by the lighting module 40 and the sounds played by the sound module 50, to provide an immersive experience where the graphics, the illumination and the sounds are complementary and coordinated to match the implementation of the gameplay of the interactive game, as the game progresses. As mentioned above, one skilled in the art will however understand that, in an embodiment, the interactive game system 10 could be free of a sound module 50, with the immersive experience being rather provided by the combination of the graphics and the illumination being complementary and coordinated to match the implementation of the gameplay of the interactive game.

In an alternative embodiment, the sounds played by the sound module 50, according to the acoustic instructions, could also be independent from the surface interaction data generated using the motion sensing module 60, i.e. the acoustic instructions generated by the control unit 20 could be predetermined and be independent from the interaction of the real-world element 15 with the interactive surface 14. In such an embodiment, there is therefore no synchronization between the sounds played by the sound module 50 and the graphics projected by the projection module 30 and/or the illumination provided by the lighting module 40, while still providing an immersive experience where sound, large scale graphics and illumination of the room are provided.

One skilled in the art will understand that the acoustic instructions could relate to the nature of the sounds to be played by the sound module 50 as well as to a specific acoustic direction in which the sounds are to be played, i.e. a direction of an acoustic beam for the sounds to be played by each one of the speakers 52 of the sound module 50.
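The description does not specify how an acoustic direction would be produced. Purely as an illustrative assumption, the sketch below uses constant-power panning between two speakers, a common audio technique which is not taken from the description, to place a sound according to the horizontal position of a contact on the interactive surface. The function name and the normalized position parameter are hypothetical.

```python
import math
from typing import Tuple


def constant_power_pan(x_norm: float) -> Tuple[float, float]:
    """Return (left_gain, right_gain) for a horizontal position in [0, 1].

    0.0 is the left edge of the interactive surface and 1.0 is the right edge.
    The gains satisfy left**2 + right**2 == 1, so perceived loudness stays
    roughly constant as the sound moves across the wall.
    """
    x = min(max(x_norm, 0.0), 1.0)   # clamp positions outside the surface
    angle = x * math.pi / 2
    return math.cos(angle), math.sin(angle)


# Example: a contact at the centre of the wall feeds both speakers equally.
left_gain, right_gain = constant_power_pan(0.5)
```

A system with more than two speakers 52 would need a different scheme; this two-speaker case is only meant to show how a contact position could be turned into per-speaker levels.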

In an alternative embodiment shown in FIG. 3, wherein the features are numbered with reference numerals in the 100 series which correspond to the reference numerals of the previous embodiments, the interactive game system 110 could include two interactive surfaces 114a, 114b. In such an embodiment, the two interactive surfaces 114a, 114b could be facing one another, but one skilled in the art will understand that they could also be at an angle relative to one another.

In such an embodiment, the motion sensing module 160 and the projection module 130 are configured to have, respectively, a motion sensor field of view 164 and a projector field of view 134 intersecting with each one of the interactive surfaces 114a, 114b, to generate surface interaction data representative of contact between the real-world elements 115 and the planes P defining the interactive surfaces 114a, 114b, and to project the graphics on each one of the interactive surfaces 114a, 114b. In other words, the motion sensing module 160 includes a first motion sensor 162a oriented towards a first interactive surface 114a and a second motion sensor 162b oriented towards a second interactive surface 114b, and the projection module 130 includes at least a first projector 132a oriented towards the first interactive surface 114a and at least a second projector 132b oriented towards the second interactive surface 114b. In an embodiment, the graphics projected on the interactive surfaces 114a, 114b are the same for each one of the interactive surfaces 114a, 114b. One skilled in the art will however understand that, in an alternative embodiment, the graphics projected on the interactive surfaces 114a, 114b could be different for each one of the interactive surfaces 114a, 114b. One skilled in the art will also understand that, in an alternative embodiment (not shown), more than two interactive surfaces 114a, 114b could be provided.

An interactive game system 10 in accordance with an embodiment having been described above, there will now be described a method for operation of such a system or, in other words, a method for providing an interactive game to users, using an interactive game system 10.

With reference to FIG. 4, in an embodiment, the method includes the initial step 280 of generating surface interaction data representative of a contact between a real-world element and at least one interactive surface. In an embodiment, the surface interaction data can be generated by a motion sensing module and be communicated in real-time to a control unit in data communication therewith. In an alternative embodiment, the surface interaction data can be generated by the control unit, based on raw data relative to motion of a real-world element within the field of view of the motion sensing module and communicated to the control unit in real-time.

In view of the above, in an embodiment, the step of generating surface interaction data representative of a contact between a real-world element and at least one interactive surface includes sensing movement of the real-world element within a motion sensor field of view intersecting with the interactive surface.
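One possible way of deriving surface interaction data from sensed three-dimensional positions, given here as a minimal sketch only, is to treat a sensed point as contacting the interactive surface when its distance to the plane of the surface falls below a threshold. The `Plane` and `SurfaceInteraction` structures, the 5 cm threshold and the function names are hypothetical; the description does not specify the contact detection algorithm.

```python
from dataclasses import dataclass
from typing import Optional, Tuple

Vec3 = Tuple[float, float, float]


@dataclass(frozen=True)
class Plane:
    """Plane of the interactive surface: a point on it and a unit normal."""
    point: Vec3
    normal: Vec3


@dataclass(frozen=True)
class SurfaceInteraction:
    """Hypothetical surface interaction record for one detected contact."""
    position: Vec3      # sensed point projected onto the plane
    timestamp: float    # acquisition time in seconds


def signed_distance(plane: Plane, p: Vec3) -> float:
    """Signed distance from p to the plane, along the plane normal."""
    return sum((pi - qi) * ni for pi, qi, ni in zip(p, plane.point, plane.normal))


def detect_contact(plane: Plane, p: Vec3, timestamp: float,
                   threshold_m: float = 0.05) -> Optional[SurfaceInteraction]:
    """Return a SurfaceInteraction if p is within threshold_m of the plane, else None."""
    d = signed_distance(plane, p)
    if abs(d) > threshold_m:
        return None
    projected = tuple(pi - d * ni for pi, ni in zip(p, plane.normal))
    return SurfaceInteraction(position=projected, timestamp=timestamp)


# Example: a wall lying in the z = 0 plane and a ball sensed 3 cm in front of it.
wall = Plane(point=(0.0, 0.0, 0.0), normal=(0.0, 0.0, 1.0))
hit = detect_contact(wall, (1.0, 2.0, 0.03), timestamp=0.0)
miss = detect_contact(wall, (1.0, 2.0, 0.5), timestamp=0.0)
```

Whether such a test runs in the motion sensing module or in the control unit corresponds to the two alternatives described above for where the surface interaction data is generated.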

As mentioned above, in an embodiment, each one of the at least one interactive surface is a large surface defined by at least a portion of a wall of a room in which the interactive game is provided. In an embodiment, each one of the at least one interactive surface is a plane surface defined by at least the portion of a wall of a room in which the interactive game is provided.

The method also includes the step 282 of processing (or interpreting) the surface interaction data according to a gameplay of an interactive game implemented using the interactive game system.

The method further includes the step 284 of generating graphic instructions to be projected on at least one interactive surface, based on the processed surface interaction data and according to the predetermined implementation of the gameplay of the interactive game. In other words, this step allows the display of graphics representing progress of the interactive game, in accordance with the predetermined implementation of the gameplay, in response to the detected contacts between the real-world element and the interactive surface. In an embodiment, the graphics are generated by a game engine of the control unit and are communicated in real-time to the projection module, for projection thereof on the at least one interactive surface.

In an embodiment, the method further includes the step 286 of generating lighting instructions based on the processed surface interaction data and according to the predetermined implementation of the gameplay of the interactive game and implementing the lighting instructions using the lighting module. In other words, this step allows generating lighting instructions and providing illumination of a section of the room, which is complementary to the graphics displaying progress of the interactive game, in accordance with the predetermined implementation of the gameplay, in response to the detected contact between the real-world element and the interactive surface. In an embodiment, the lighting instructions are generated by the game engine of the control unit and are communicated in real-time to the lighting module, such that the stage lighting instruments thereof can produce the desired illumination of the room (or specific section thereof).

In an embodiment, the step of generating and implementing the lighting includes projecting light according to an illumination span covering at least a portion of the room and which does not overlap with the interactive surface.

In an embodiment, the step of generating and implementing the lighting includes independently controlling each stage lighting instrument of the lighting module.

In an embodiment, the method further includes the step 288 of generating acoustic instructions based on the processed surface interaction data and according to the predetermined implementation of the gameplay of the interactive game and implementing the acoustic instructions using the sound module. In other words, this step allows generating acoustic instructions and playing sounds complementary to the graphics and/or illumination representing progress in the interactive game, in accordance with the predetermined implementation of the gameplay, in response to the detected contact between the real-world element and the interactive surface. In an embodiment, the acoustic instructions are generated by the game engine of the control unit and are communicated in real-time to the sound module, such that the speakers thereof can play the sounds in the room (or in a specific acoustic direction in the room).
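The processing and generating steps described above (steps 282 to 288) can be sketched, for illustration only, as a single update function of a game engine. The target-hitting gameplay, the `TargetGame` and `Instructions` names and the instruction strings are all hypothetical; they stand in for whatever gameplay and instruction formats a given implementation would use.

```python
from dataclasses import dataclass, field
from typing import List, Tuple

Contact = Tuple[float, float]   # (x, y) position of a contact on the surface, in metres


@dataclass
class Instructions:
    """Outputs produced for one update, one list per output module."""
    graphic: List[str] = field(default_factory=list)
    lighting: List[str] = field(default_factory=list)
    acoustic: List[str] = field(default_factory=list)


@dataclass
class TargetGame:
    """Hypothetical gameplay: hit a circular target projected on the wall."""
    target: Contact
    radius: float
    score: int = 0

    def process(self, contacts: List[Contact]) -> int:
        """Step 282: interpret the contacts according to the gameplay."""
        tx, ty = self.target
        hits = sum(1 for x, y in contacts
                   if (x - tx) ** 2 + (y - ty) ** 2 <= self.radius ** 2)
        self.score += hits
        return hits

    def step(self, contacts: List[Contact]) -> Instructions:
        """Steps 284 to 288: derive all outputs from the same processed data."""
        hits = self.process(contacts)
        out = Instructions()
        out.graphic.append(f"draw_score {self.score}")    # step 284
        if hits:
            out.graphic.append("animate_target_hit")
            out.lighting.append("flash_floor green")      # step 286
            out.acoustic.append("play hit_chime")         # step 288
        else:
            out.lighting.append("hold_floor blue")
        return out


# Example: one contact lands on the target, one lands elsewhere on the wall.
game = TargetGame(target=(1.0, 1.5), radius=0.2)
first = game.step([(1.05, 1.5), (3.0, 0.5)])
second = game.step([])
```

Deriving the graphic, lighting and acoustic instructions from the same processed contacts in one update is one simple way to keep the three outputs coordinated as the game progresses.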

Several alternative embodiments and examples have been described and illustrated herein. The embodiments of the invention described above are intended to be exemplary only. A person of ordinary skill in the art would appreciate the features of the individual embodiments, and the possible combinations and variations of the components. A person of ordinary skill in the art would further appreciate that any of the embodiments could be provided in any combination with the other embodiments disclosed herein. It is understood that the invention may be embodied in other specific forms without departing from the central characteristics thereof. The present examples and embodiments, therefore, are to be considered in all respects as illustrative and not restrictive, and the invention is not to be limited to the details given herein. Accordingly, while the specific embodiments have been illustrated and described, numerous modifications come to mind. The scope of the invention is therefore intended to be limited solely by the scope of the appended claims.

Claims

- An interactive game system for implementing an interactive game in a room of a building, the interactive game system comprising: a motion sensing module sensing movement of a real world element within a motion sensor field of view intersecting with an interactive surface having graphics relative to playing of the interactive game projected on the interactive surface and including a plane extending substantially along at least a portion of a wall of the room of the building, with surface interaction data representative of a contact between the real-world element and the interactive surface being generated therefrom, the motion sensing module including a three-dimensional (3D) motion sensor configured to sense movement of the real-world element and the contact between the real-world element and the interactive surface independently of lightings conditions of the room of the building; a control unit in data communication with the motion sensing module, the control unit being configured to process the surface interaction data and generate graphic instructions and lighting instructions, in accordance with an implementation of a gameplay of the interactive game; a projection module in data communication with the control unit and receiving the graphic instructions therefrom, the graphic instructions being generated based on the processed surface interaction data, the projection module projecting graphics relative to the playing of the interactive game on the interactive surface, in accordance with the graphic instructions received from the control unit; and a lighting module in data communication with the control unit and receiving the lighting instructions therefrom, the lighting module including a plurality of stage lighting instruments together defining an illumination span exclusively oriented towards a section of a floor of the room, without overlapping with the interactive surface in order to perform illumination of a section of the room distinct from the interactive surface, the illumination being performed according to the lighting instruction received from the control unit.

- The interactive game system of claim 1, wherein the lighting instructions are generated based on the surface interaction data, the graphics relative to playing of the interactive game projected by the projection module and the lighting performed by the lighting module being coordinated in accordance with the implementation of the gameplay of the interactive game.

- The interactive game system of claim 1, further comprising a sound module in data communication with the control unit and receiving acoustic instructions therefrom, the sound module playing sounds according to the acoustic instructions received from the control unit.

- The interactive game system of claim 3, wherein the acoustic instructions are generated based on the surface interaction data, the sounds played by the sound module and the graphics relative to playing of the interactive game projected by the projection module being coordinated in accordance with the implementation of the gameplay of the interactive game.

- The interactive game system of claim 1, wherein the plurality of stage lighting instruments comprises a combination of moving light fixtures and static light fixtures operating independently to illuminate the desired section of the room.

- The interactive game system of claim 5, wherein the lighting module comprises a DMX controller and wherein the lighting module controls the plurality of stage lighting instruments using a DMX lighting control protocol.

- The interactive game system of claim 5, wherein the plurality of stage lighting instruments includes between six and twenty light-emitting diode (LED) stage lighting instruments, each one of the LED stage lighting instruments being about 180 watt LED lighting instruments.

- The interactive game system of claim 1, wherein the control unit comprises a memory and a processor, the control unit having a game engine stored in the memory and configured to implement a predetermined gameplay, based on user interactions defined by the surface interaction data, the game engine generating the graphic instructions and the lighting instructions.

- An interactive game system for implementing an interactive game in a room having a wall and a floor, the interactive game system comprising: a motion sensing module including at least one three-dimensional (3D) motion sensor having an infrared depth sensor and a motion sensor field of view intersecting with an interactive surface extending substantially along a section of the wall of the room, the at least one 3D motion sensor acquiring data relative to the motion of a real-world element within the motion sensor field of view and being configured to sense movement of the real-world element and contact between the real-world element and the interactive surface; a control unit in data communication with the motion sensing module, the control unit comprising a memory and a processor and at least one of the motion sensing module and the control unit generating surface interaction data representative of the contact between the real-world element and the interactive surface from the acquired data relative to the motion of the real-world element within the motion sensor field of view, the control unit generating graphic instructions and lighting instructions based on the surface interaction data and in accordance with an implementation of the gameplay of the interactive game; a projection module in data communication with the control unit and receiving the graphic instructions therefrom, the graphic instructions representing graphics relative to playing of the interactive game to be displayed, the projection module including a projector projecting the graphics relative to playing of the interactive game on the interactive surface, in accordance with the graphic instructions received from the control unit; and a lighting module in data communication with the control unit and receiving lighting instructions therefrom, the lighting instructions representing lighting to be performed, the lighting module including a plurality of stage lighting instruments together defining an illumination span which does not overlap with the interactive surface, the stage lighting instruments exclusively performing illumination of a section of the floor of the room distinct from the interactive surface extending substantially along the section of the wall of the room, in accordance with the lighting instruction received from the control unit.

- The interactive game system of claim 9, wherein the graphics relative to playing of the interactive game projected by the projection module and the lighting performed by the lighting module are coordinated in accordance with the implementation of the gameplay of the interactive game.

- The interactive game system of claim 9, further comprising a sound module in data communication with the control unit, the control unit generating acoustic instructions and transmitting the acoustic instructions to the sound module, the sound module playing sounds according to the acoustic instructions received from the control unit.

- The interactive game system of claim 11, wherein the acoustic instructions are generated based on the surface interaction data, the sounds played by the sound module and the graphics relative to playing of the interactive game projected by the projection module being coordinated in accordance with the implementation of the gameplay of the interactive game.

- The interactive game system of claim 9, wherein the plurality of stage lighting instruments are independently controllable using the lighting instructions to illuminate the desired section of the room.

- The interactive game system of claim 13, wherein the lighting module comprises a DMX controller and wherein the lighting module controls the plurality of stage lighting instruments using a DMX lighting control protocol.

- The interactive game system of claim 9, wherein the plurality of stage lighting instruments includes a combination of moving light fixtures and static light fixtures.

- A method for providing an interactive game in a room of a building, using an interactive game system, the method comprising the steps of: sensing a contact between a real-world element and an interactive surface defined along at least a portion of a wall of the room of the building using a motion sensing module including at least one three-dimensional (3D) motion sensor configured to sense movement of the real-world element and the contact between the real-world element and the interactive surface independently of lightings conditions of the room of the building; generating surface interaction data representative of the contact between the real-world element and the interactive surface defined along at least the portion of the wall of the room of the building using the motion control module; processing the surface interaction data using a control unit in accordance with an implementation of a gameplay of the interactive game implemented using the interactive game system; generating graphic instructions relative to graphics relative to playing of the interactive game to be projected on the interactive surface, based on the processed surface interaction data using the control unit, and projecting the graphics relative to playing of the interactive game on the interactive surface according to the graphic instructions, using a projection module; and generating lighting instructions using the control unit based on the processed surface interaction data and performing lighting of a section of the room located away from the interactive surface extending at least along the section of the wall of the room and using a lighting module having a illumination span exclusively oriented toward a section of a floor of the room, without overlapping with the interactive surface, the lighting being performed according to the lighting instructions and simultaneously with the projection of the graphics on the interactive surface.

- The method of claim 16, further comprising generating acoustic instructions based on the processed surface interaction data using the control unit and playing sounds according to the acoustic instructions using a sound module.

- The method of claim 16, wherein the step of generating lighting instructions and performing the lighting includes projecting light according to an illumination span of the lighting module covering at least a portion of the room distinct from the interactive surface.

- The method of claim 16, wherein the step of generating lighting instructions and performing the lighting includes independently controlling each stage lighting instrument of the lighting module in accordance with the lighting instructions.

- The method of claim 16, wherein the step of generating surface interaction data representative of the contact between the real-world element and the interactive surface includes sensing movement of the real-world object within a motion sensor field of view of the at least one motion sensor of the motion sensing module intersecting with the interactive surface.
