U.S. Pat. No. 11,813,523

AUTOMATIC TRIGGERING OF A GAMEPLAY RECORDING USING VISUAL AND ACOUSTIC FINGERPRINTS

Assignee: ATI Technologies ULC

Issue Date: September 3, 2021

Abstract

Systems and methods are disclosed for automatically generating a gameplay recording from an application. Techniques are provided to extract data from a buffer, the extracted data being associated with the application; to detect, based on a signature associated with the extracted data, the occurrence of an event; and, upon detection of the occurrence of the event, to generate the gameplay recording from an output of the application.

Description

DETAILED DESCRIPTION

The present disclosure describes systems and methods for automatically triggering the recording of gameplay clips. Through these systems and methods, a player (or user) of a game can play without being interrupted by the need to press a hotkey each time the player wants to record a gameplay clip, typically following the occurrence of a game event. Moreover, the systems and methods described herein free game developers from the need to integrate recording software into their game engine software or, otherwise, to export an API that communicates game events to third-party developers of a recording system.

Visual and acoustic effects are rendered into a game's graphics and sound (i.e., the game's gameplay) to provide players with feedback on their actions as they play. The occurrence of a visual or acoustic effect in the gameplay constitutes a “game event” (or simply an “event”). Techniques disclosed herein use the detection of game events to automatically trigger the recording of gameplay when those events occur. To detect the occurrence of a game event automatically, fingerprints (referred to herein as “signatures”) that uniquely characterize visual or acoustic effects are used. Once an event associated with a visual or acoustic effect is detected, the generation of a gameplay clip can be triggered.

Furthermore, techniques disclosed herein detect events associated with visual, acoustic, and sensory cues. A visual cue can be extracted from video data captured by one or more cameras. Visual cues can be distinct image patterns—for example, a gesture made by a player can serve as a visual cue. An acoustic cue can be extracted from audio data captured by one or more microphones. Acoustic cues can be distinct audio patterns—for example, a command uttered by a player can serve as an acoustic cue. A sensory cue can be extracted from sensory measurements. Sensory cues can be pre-determined movements or poses—for example, a player's pose as measured by transceivers attached to the player's body can serve as a sensory cue. Hence, signatures that uniquely characterize these cues can also be used by techniques described herein to automatically trigger the generation of a gameplay clip.

The present disclosure describes a method for automatically generating a gameplay recording (e.g., a gameplay clip) from an application. In an alternative, the method comprises operations that: extract data from a buffer, the extracted data being associated with the application; detect, based on a signature associated with the extracted data, the occurrence of an event; and, upon detection of the occurrence of the event, generate the gameplay recording from an output of the application.

The present disclosure also describes a recording system for automatically generating a gameplay recording from an application. In an alternative, the system comprises a memory and a processor. The processor is configured to: extract data from a buffer in the memory, wherein the extracted data are associated with the application; detect, based on a signature associated with the extracted data, the occurrence of an event; and, upon detection of the occurrence of the event, generate the gameplay recording from an output of the application.

Furthermore, the present disclosure describes a non-transitory computer-readable medium comprising instructions executable by at least one processor to perform operations for automatically generating a gameplay recording from an application. In an alternative, the operations comprise: extracting data from a buffer, the extracted data being associated with the application; detecting, based on a signature associated with the extracted data, the occurrence of an event; and, upon detection of the occurrence of the event, generating the gameplay recording from an output of the application.

In one example, the occurrence of an event can be detected by computing a signature from the extracted data, comparing the signature to signatures stored in a database, and determining that an event occurred if a match is found between the computed signature and at least one signature in the database.
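The database comparison can be sketched as follows. This is a minimal illustration only, assuming signatures are fixed-length numeric vectors and that a "match" means cosine similarity at or above a threshold. The disclosure does not specify the comparison metric, and the names `SignatureDB`, `cosine_similarity`, and `match_event`, as well as the 0.95 threshold, are hypothetical.

```python
import math
from typing import Dict, List, Optional

# Hypothetical signature database: event name -> reference signature vector.
SignatureDB = Dict[str, List[float]]


def cosine_similarity(a: List[float], b: List[float]) -> float:
    """Cosine similarity of two equal-length vectors (0.0 if either is all zeros)."""
    if len(a) != len(b):
        raise ValueError("signatures must have the same length")
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0
    return dot / (norm_a * norm_b)


def match_event(signature: List[float], database: SignatureDB,
                threshold: float = 0.95) -> Optional[str]:
    """Return the event whose stored signature best matches, or None.

    A match requires cosine similarity >= threshold (an assumed criterion).
    """
    best_name: Optional[str] = None
    best_score = threshold
    for name, stored in database.items():
        score = cosine_similarity(signature, stored)
        if score >= best_score:
            best_name, best_score = name, score
    return best_name


if __name__ == "__main__":
    db = {"explosion": [1.0, 0.0, 0.0], "headshot": [0.0, 1.0, 0.0]}
    print(match_event([0.9, 0.05, 0.0], db))  # explosion
    print(match_event([0.5, 0.5, 0.0], db))   # None
```

Returning `None` when nothing clears the threshold corresponds to the "no match" outcome, in which case no clip is triggered.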

In another example, the detection of the occurrence of an event can be carried out by computing a signature from the extracted data, and, then, determining that an event occurred based on a machine learning model. The machine learning model can be trained based on exemplary pairs of signatures and corresponding events of interest.

The data on which detection is performed can be extracted from a buffer that contains the gameplay output. In such a case, for example, the signature used for detection can be characteristic of a visual effect or an acoustic effect rendered in the extracted data. Alternatively, or in addition, the data on which detection is performed can be extracted from a buffer that contains data captured from the scene of the game (e.g., video data, audio data, or sensory data). In such a case, for example, the signature used for detection can be characteristic of a visual, acoustic, or sensory cue present in the extracted data.

FIG. 1 is a block diagram of an example device 100 in which one or more features of the disclosure can be implemented. The device 100 can include, for example, a computer, a gaming device, a handheld device, a set-top box, a television, a mobile phone, a server, a tablet computer, or other types of computing devices. The device 100 includes a processor 102, a memory 104, a storage 106, one or more input devices 108, and one or more output devices 110. The device 100 can also optionally include an input driver 112 and an output driver 114. It is understood that the device 100 can include additional components not shown in FIG. 1.

In various alternatives, the processor 102 includes a central processing unit (CPU), a graphics processing unit (GPU), a CPU and GPU located on the same die, or one or more processor cores, wherein each processor core can be a CPU or a GPU. In various alternatives, the memory 104 is located on the same die as the processor 102 or is located separately from the processor 102. The memory 104 includes a volatile or non-volatile memory, for example, random access memory (RAM), dynamic RAM, or a cache.

The storage 106 includes a fixed or removable storage, for example, a hard disk drive, a solid-state drive, an optical disk, or a flash drive. The input devices 108 include, without limitation, a keyboard, a keypad, a touch screen, a touch pad, a detector, a microphone, an accelerometer, a gyroscope, a biometric scanner, or a network connection (e.g., a wireless local area network card for transmission and/or reception of wireless IEEE 802 signals). The output devices 110 include, without limitation, a display, a speaker, a printer, a haptic feedback device, one or more lights, an antenna, or a network connection (e.g., a wireless local area network card for transmission and/or reception of wireless IEEE 802 signals).

The input driver 112 communicates with the processor 102 and the input devices 108, and permits the processor 102 to receive input from the input devices 108. The output driver 114 communicates with the processor 102 and the output devices 110, and permits the processor 102 to send output to the output devices 110. It is noted that the input driver 112 and the output driver 114 are optional components, and that the device 100 will operate in the same manner if the input driver 112 and the output driver 114 are not present.

FIG. 2 is a block diagram of one example of a recording system 200, employable by the device of FIG. 1, in which one or more features of the disclosure can be implemented. The recording system 200 can include a game engine 210, a gameplay buffer 220, and a recorder 230. The recorder 230 can access the gameplay buffer 220, which stores a gameplay output 215 that is continuously generated by the game engine 210 while a user plays a game. The recorder 230 includes, for example, a detector 232, a clipper 234, a database 236, and a graphical user interface (GUI) 238. The recorder 230 can automatically generate and output gameplay clips 235 based on events that the detector 232 detects during the playing of a game, as explained in detail below. The gameplay clips 235 can be replayed to the user and/or shared with the user's community on social media; for example, the generated gameplay clips can be uploaded into the feeds of the user's contacts on social media.

Typically, while a user plays a game, the game engine 210 generates a live stream whose content is a function of the game's logic and the player's interactions with it. Such a live stream, i.e., the gameplay output 215, can include a video track containing 3D animated graphics and a corresponding audio track associated with the game being played. During play, the gameplay output 215 is buffered in the gameplay buffer 220 in a circular, first-in-first-out manner: the first frame (or data segment) to be buffered is also the first frame (or data segment) to be removed from the buffer. Buffering a live stream such as the gameplay output 215 allows real-time processing of the buffered data by system components that can access the buffer, such as the recorder 230.
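A minimal sketch of such a circular, first-in-first-out buffer, assuming each buffered item is a (timestamp, frame) pair; the class name `GameplayBuffer` and its methods are hypothetical. A `collections.deque` created with `maxlen` discards its oldest element when a new one is appended to a full deque, which gives the eviction order described above.

```python
from collections import deque
from typing import Any, Deque, List, Tuple


class GameplayBuffer:
    """Fixed-capacity FIFO ring buffer of (timestamp, frame) pairs (hypothetical).

    When full, appending a new frame evicts the oldest one, so the buffer
    always holds the most recent `capacity` frames.
    """

    def __init__(self, capacity: int) -> None:
        if capacity <= 0:
            raise ValueError("capacity must be positive")
        self._frames: Deque[Tuple[float, Any]] = deque(maxlen=capacity)

    def push(self, timestamp: float, frame: Any) -> None:
        """Append the newest frame; the oldest is dropped if the buffer is full."""
        self._frames.append((timestamp, frame))

    def snapshot(self) -> List[Tuple[float, Any]]:
        """Copy of the current contents, oldest first (e.g., for clipping)."""
        return list(self._frames)

    def __len__(self) -> int:
        return len(self._frames)
```

The capacity fixes how much history is available for a clip. For example, holding the last 30 seconds of 60 fps video would need a capacity of 60 × 30 = 1,800 frames.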

The detector 232 can detect the occurrence of an event associated with the game based on, for example, processing of the data currently stored in the gameplay buffer 220. To that end, the detector 232 can be set to extract the most recent data in the buffer, possibly video and audio data within a time window that precedes or overlaps the current time. The detector 232 can then process the extracted data to detect the occurrence of a game event. Once an event has been detected, the clipper 234 can be triggered to output a gameplay clip 235 generated from the content currently stored in the gameplay buffer 220.
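The extraction-and-trigger step can be sketched as below, assuming buffered items are (timestamp, payload) pairs ordered oldest first and that the detection logic is supplied as a callable. The names `extract_window`, `detect_and_clip`, and `is_event` are hypothetical. As described above, detection looks only at the recent window, while the clip is cut from the whole current buffer content.

```python
from typing import Any, Callable, List, Optional, Sequence, Tuple

Frame = Tuple[float, Any]  # (timestamp in seconds, payload), oldest first


def extract_window(frames: Sequence[Frame], now: float,
                   window_s: float) -> List[Frame]:
    """Return the frames whose timestamps fall in [now - window_s, now]."""
    start = now - window_s
    return [f for f in frames if start <= f[0] <= now]


def detect_and_clip(frames: Sequence[Frame], now: float, window_s: float,
                    is_event: Callable[[List[Frame]], bool]) -> Optional[List[Frame]]:
    """Run detection on the recent window; on a hit, clip the whole buffer.

    `is_event` is a hypothetical stand-in for signature computation and
    matching. Returns the clip (a copy of all buffered frames) or None.
    """
    window = extract_window(frames, now, window_s)
    if window and is_event(window):
        return list(frames)  # the clip is the buffer's current content
    return None
```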

The detector 232 can carry out the detection of events associated with visual or acoustic effects using information in the database 236. The database 236 can contain signatures that uniquely characterize visual or acoustic effects that may be rendered in the gameplay output 215 by the game engine 210. The database 236 can also contain operating parameters used by the recorder 230, for example, parameters and/or training data of a machine learning model used for detecting the visual or acoustic effects. The recorder 230 can also include a GUI 238 through which the user playing the game can set up recording parameters. For example, the user can select the visual or acoustic effects from which signatures will be created and then used by the recorder 230 to trigger the clipping of gameplay. The user can also define custom signatures. In another example, the user, via the GUI 238, can select which social communities to share gameplay clips with.

FIG. 3 is a block diagram of another example of a recording system 300, employable by the device of FIG. 1, in which one or more features of the disclosure can be implemented. The recording system 300 can include a game engine 310, a gameplay buffer 320, a recorder 330, a data buffer 340, and a data source 350. The recorder 330 can access the gameplay buffer 320 and the data buffer 340. The gameplay buffer 320 stores a gameplay output 315 that is generated by the game engine 310, and the data buffer 340 stores data that are received from the data source 350. For example, the data source 350 feeds the data buffer 340 with data streams, including a video stream captured by one or more cameras 352, an audio stream captured by one or more microphones 354, or sensory data captured by any other sensors 356. The recorder 330 can include, for example, a detector 332, a clipper 334, a database 336, and a GUI 338. The recorder 330 can automatically generate and output gameplay clips 335 based on events it detects during the playing of a game, as is explained in detail below. The gameplay clips 335 can be replayed to the user and/or can be shared with the user's community on social media, e.g., the generated gameplay clips can be uploaded into the feeds of the user's contacts on social media.

As mentioned above, while a user plays a game, the game engine 310 generates a live stream (i.e., the gameplay output 315) whose content is a function of the game's logic and the player's interactions with it. In addition, during play, the data source 350 can stream video captured by the one or more cameras 352. The captured video can cover the scene of the game, including the player and other participants, and can thus contain visual patterns such as gestures made by the player or the other participants. Certain visual patterns can be used as visual cues that, when detected by the detector 332, can trigger the clipper 334 to clip content from the gameplay buffer 320. The data source 350 can also stream audio captured by the one or more microphones 354. The captured audio can contain audio emitted from the device executing the game, speech uttered by the player or the other participants, or any other surrounding sound. Hence, acoustic effects present in the emitted audio, specific commands present in the uttered speech, or specific sound patterns present in the surrounding sound can be used as acoustic cues that, when detected by the detector 332, can trigger the clipper 334 to clip content from the gameplay buffer 320. The data source 350 can also stream sensory data captured by the other sensors 356. The captured sensory data can contain, for example, range measurements received from transceivers attached to the player, the other participants, or other objects at the scene of the game. Patterns of range measurements can be used as sensory cues that, when detected by the detector 332, can likewise trigger the clipper 334 to clip content from the gameplay buffer 320.

Just as the gameplay output 315 can be buffered in the gameplay buffer 320, the data source output 355 can be buffered in the data buffer 340 in a circular, first-in-first-out manner: the first frame (or data segment) to be buffered is also the first frame (or data segment) to be removed from the buffer. Buffering live streams such as those provided in the data source output 355 allows real-time processing of the buffered streams by system components that can access the data buffer 340, such as the recorder 330.

The detector 332 can detect the occurrence of an event associated with the game based on processing of the data currently stored in the gameplay buffer 320, as explained with respect to the recorder 230 of FIG. 2. Alternatively, or in addition, the detector 332 can detect the occurrence of an event associated with the game based on processing of the data currently stored in the data buffer 340. To facilitate event detection, the detector 332 can be set to extract the most recent data in the data buffer 340, possibly video, audio, or other sensory data within a time window that precedes or overlaps the current time. The detector 332 can then process the extracted data to detect the occurrence of an event. Once an event has been detected, the clipper 334 can be triggered to output a gameplay clip 335 generated from the content currently stored in the gameplay buffer 320. In an alternative, the clipper 334 can integrate content extracted from the data buffer 340 into content extracted from the gameplay buffer 320. For example, the clipper 334 can insert a video of the player (as captured by the one or more cameras 352) into the content extracted from the gameplay buffer 320.
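One possible way for the clipper 334 to insert the player's camera video into gameplay content is a picture-in-picture overlay. The sketch below is an illustration only, assuming single-channel (grayscale) frames represented as lists of pixel rows and a camera frame already scaled to its target size; `overlay_pip` is a hypothetical name, and a practical implementation would operate on GPU textures or image arrays and apply it to every frame of the clip.

```python
from typing import List

Image = List[List[int]]  # grayscale frame as rows of pixel values (assumption)


def overlay_pip(gameplay: Image, camera: Image, top: int, left: int) -> Image:
    """Return a copy of `gameplay` with `camera` pasted at (top, left).

    The input gameplay frame is not modified; camera pixels that would fall
    outside the gameplay frame are clipped away.
    """
    out = [row[:] for row in gameplay]
    for r, cam_row in enumerate(camera):
        y = top + r
        if y < 0 or y >= len(out):
            continue  # this camera row lies outside the gameplay frame
        for c, value in enumerate(cam_row):
            x = left + c
            if 0 <= x < len(out[y]):
                out[y][x] = value
    return out
```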

The detector 332 can carry out the detection of events associated with visual, acoustic, or sensory cues using information in the database 336. The database 336 can contain signatures that characterize visual, acoustic, or sensory cues that may be present in the data source output 355. The database 336 can also contain operating parameters used by the recorder 330, for example, parameters and/or training data of a machine learning model used for the detection of events associated with the visual, acoustic, or sensory cues. The recorder 330 can also include a GUI 338 through which the user playing the game can set up recording parameters. For example, the user can select the visual, acoustic, or sensory cues whose signatures will be used by the recorder 330 to trigger the clipping of gameplay. The user can also define custom signatures. In another example, the user, via the GUI 338, can select which social communities the user wishes to share gameplay clips with.

FIG. 4 is a flow chart of an example recording method 400, with which one or more features of the disclosure can be processed. The recording method 400 can be employed by the recorder 230 of FIG. 2 or by the recorder 330 of FIG. 3. The method starts, in step 410, with the extraction of data from a buffer that contains data associated with a game currently being played by a user. The data may be extracted from the gameplay buffer 220, 320, in which case they will contain gameplay output 215, 315 generated by the game engine 210, 310. Alternatively, or in addition, the data may be extracted from the data buffer 340, in which case they will contain data source output 355 captured by the data source 350, e.g., data captured by the one or more cameras 352, the one or more microphones 354, or the other sensors 356. For example, the data extracted in step 410 can be a data sequence that is extracted from the buffer 220, 320, 340 within a time window that precedes or overlaps the current time.

Once data associated with the game are extracted from the buffer, the occurrence of an event can be detected, in step 420, based on a signature associated with the extracted data. In an alternative, the user, when setting up the recorder 230, 330, can determine, possibly from a list presented via the GUI 238, 338, what constitutes an event to be detected. For example, when data are extracted in step 410 from the gameplay buffer 220, 320, the user can select visual effects and/or acoustic effects, so that an event occurs when these selected effects are detected as rendered in the extracted data. In another example, when data are extracted in step 410 from the data buffer 340, the user can select visual, acoustic, or sensory cues, so that an event occurs when these selected cues are detected as present in the extracted data.

Thus, in step 420, the detection of the occurrence of an event is based on a signature associated with the extracted data. A signature can be any metric that uniquely identifies a certain visual or acoustic effect or a certain visual, acoustic, or sensory cue associated with the game. A signature that uniquely identifies an effect or a cue associated with the game is indicative of the occurrence of an event and, therefore, facilitates a detection of that event. For example, a signature of an acoustic effect or an acoustic cue can be computed based on a spectrogram, while a signature of a visual effect or a visual cue can be computed based on histogram-based image descriptors. Upon the detection of an event, in step 430, a clip can be generated out of the gameplay content that is currently stored in the gameplay buffer 220, 320.
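As a rough illustration of the two signature types mentioned above, the sketch below computes a normalized intensity histogram for an image (a very simple histogram-based descriptor) and a magnitude spectrogram for audio (Hann-windowed short-time DFT magnitudes). It uses a naive O(N²) DFT to stay dependency-free. The function names, bin count, frame length, and hop size are assumptions; a practical system would likely use an FFT library and richer descriptors, such as color or gradient histograms.

```python
import cmath
import math
from typing import List, Sequence


def intensity_histogram(pixels: Sequence[int], bins: int = 8) -> List[float]:
    """Visual signature: normalized histogram of 8-bit intensities (0..255)."""
    counts = [0] * bins
    for p in pixels:
        if not 0 <= p <= 255:
            raise ValueError("pixel values must be in 0..255")
        counts[p * bins // 256] += 1
    total = len(pixels)
    if total == 0:
        return [0.0] * bins
    return [c / total for c in counts]


def magnitude_spectrum(frame: Sequence[float]) -> List[float]:
    """|DFT| for bins 0..N//2, computed naively in O(N^2) to avoid dependencies."""
    n = len(frame)
    return [
        abs(sum(frame[t] * cmath.exp(-2j * math.pi * k * t / n) for t in range(n)))
        for k in range(n // 2 + 1)
    ]


def spectrogram(samples: Sequence[float], frame_len: int = 64,
                hop: int = 32) -> List[List[float]]:
    """Acoustic signature: Hann-windowed short-time magnitude spectra."""
    window = [0.5 - 0.5 * math.cos(2 * math.pi * i / (frame_len - 1))
              for i in range(frame_len)]
    return [
        magnitude_spectrum([samples[s + i] * window[i] for i in range(frame_len)])
        for s in range(0, len(samples) - frame_len + 1, hop)
    ]
```

Either output can be flattened into a single vector and compared against stored signatures, or fed to a trained model.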

FIG. 5 is a flow chart of one detection method 500, with which one or more features of the disclosure can be processed. The method 500 can make use of signatures, characterizing visual or acoustic effects and/or visual, acoustic, or sensory cues, that were computed and stored in the database 236, 336 before the playing of the game began. Each signature in the database uniquely corresponds to a certain effect or cue. The effects and cues for which signatures are stored in the database can be determined by the developer of the recording system 200, 300; otherwise, the user can be provided, via the GUI 238, 338, with tools to select effects or cues for which signatures will be computed and stored in the database.

To detect the occurrence of an event, the method 500 starts, in step 510, by extracting data that are associated with the game from a buffer 220, 320, 340. In step 520, a signature is computed from the extracted data. The computed signature is then compared, in step 530, with the signatures stored in the database 236, 336. If, in step 540, a match is found with at least one of the signatures stored in the database, the match constitutes the detection of an event that can trigger the generation of a gameplay clip in step 550. Whether an event is detected (path 555, in which case a clip has been generated) or not (path 545), the method 500 can be repeated in a loop, continuing to detect upcoming events during the playing of the game. The frequency at which data are extracted from the buffer (step 510) and a signature is computed therefrom (step 520) can be an operating parameter of the recorder 230, 330 settable by the user. In an alternative, the frequency at which data are extracted can depend on the detection of an event. For example, when an event has been detected, and as a result the current content of the gameplay buffer has been clipped into a gameplay clip, the next data extraction can be delayed so that it is performed on data not included in the currently generated clip.
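The scheduling behavior of this loop, including the optional delay after a detection, can be simulated as follows. The sketch abstracts steps 510 through 540 into a hypothetical `detect_at(t)` callable that reports whether the window of data ending at time `t` matches a stored signature; `period_s` (the user-settable extraction period) and `window_s` (the extraction window length) are assumed parameters. After a detection at time `t`, the next extraction is pushed to at least `t + window_s`, so its window contains only data that arrived after the clip was cut.

```python
from typing import Callable, List


def run_detection_loop(duration_s: float, period_s: float, window_s: float,
                       detect_at: Callable[[float], bool]) -> List[float]:
    """Simulate the extract/compute/compare loop; return the clip times.

    detect_at(t) is a hypothetical stand-in for steps 510-540: it reports
    whether the window of data (t - window_s, t] matches a stored signature.
    """
    if period_s <= 0 or window_s <= 0:
        raise ValueError("period_s and window_s must be positive")
    clip_times: List[float] = []
    t = window_s  # first extraction once a full window has been buffered
    while t <= duration_s:
        if detect_at(t):
            clip_times.append(t)  # step 550: clip the current buffer content
            # Delay the next extraction so its window starts after this clip.
            t += max(period_s, window_s)
        else:
            t += period_s  # regular, user-settable extraction period
    return clip_times
```

With a 3-second window and a 1-second period, an effect visible in the windows ending at seconds 5 through 7 produces a single clip at second 5 rather than three overlapping clips.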

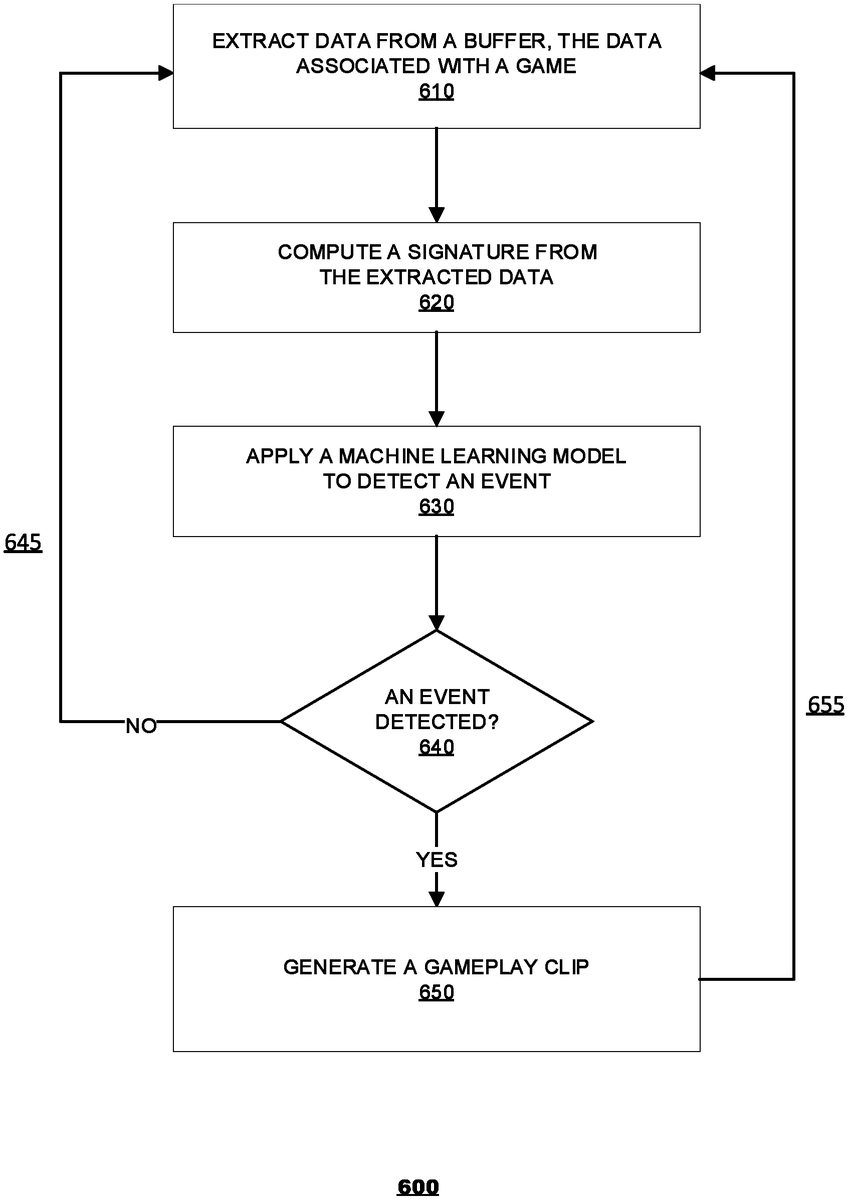

FIG. 6 is a flow chart of another detection method 600, with which one or more features of the disclosure can be processed. The method 600 applies a machine learning model to detect whether a signature, computed from data extracted in step 610, correlates with an event. The model may be based on one or more neural networks (NN), such as convolutional neural networks (CNN), recurrent neural networks (RNN), artificial neural networks (ANN), or a combination thereof. The model can be trained to find the correlation between signatures and respective visual or acoustic effects and/or the correlation between signatures and respective visual, acoustic, and sensory cues associated with the game. That way, a signature at the input of the model generates an output that is indicative of the occurrence of an event associated with the respective effect or cue. Training the machine learning model results in model parameters that can be stored in the database 236, 336 for use when the model is applied. The model can be trained by the developer of the recorder 230, 330. In an alternative, the model can be trained or updated by the user as new effects and cues, and their corresponding signatures, are added (possibly by the user) to the database 236, 336.
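The disclosure names neural networks for this model. As a much smaller stand-in, the sketch below trains a logistic-regression classifier with stochastic gradient descent on exemplary (signature, label) pairs, where label 1 marks an event of interest. It is not the model the disclosure describes, only an illustration of learning a signature-to-event mapping; the class name `EventClassifier`, the learning rate, and the epoch count are assumptions. The learned weights `w` and bias `b` play the role of the model parameters that could be stored in the database 236, 336.

```python
import math
from typing import List, Sequence, Tuple


def _sigmoid(z: float) -> float:
    """Numerically stable logistic function."""
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    ez = math.exp(z)
    return ez / (1.0 + ez)


class EventClassifier:
    """Logistic-regression stand-in for the neural-network model (hypothetical)."""

    def __init__(self, dim: int) -> None:
        self.w: List[float] = [0.0] * dim
        self.b = 0.0

    def prob(self, sig: Sequence[float]) -> float:
        """Estimated probability that `sig` indicates an event."""
        return _sigmoid(sum(wi * xi for wi, xi in zip(self.w, sig)) + self.b)

    def fit(self, pairs: Sequence[Tuple[Sequence[float], int]],
            lr: float = 0.5, epochs: int = 200) -> "EventClassifier":
        """Per-sample gradient descent on log-loss over (signature, label) pairs."""
        for _ in range(epochs):
            for sig, label in pairs:
                err = self.prob(sig) - label  # d(log-loss)/d(logit)
                self.w = [wi - lr * err * xi for wi, xi in zip(self.w, sig)]
                self.b -= lr * err
        return self

    def detect(self, sig: Sequence[float], threshold: float = 0.5) -> bool:
        """Detection decision (step 640): True if the event probability >= threshold."""
        return self.prob(sig) >= threshold
```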

To detect the occurrence of an event, the method 600 starts, in step 610, by extracting data that are associated with the game from a buffer 220, 320, 340. In step 620, a signature is computed from the extracted data. Then, in step 630, the signature is fed into the machine learning model. Based on that signature, the model can provide, in step 640, an output indicating either detection or no detection of the occurrence of an event. If an event has been detected, a clip is generated, in step 650, from the content of the gameplay buffer 220, 320. Whether an event has been detected (path 655, in which case a clip has been generated) or not (path 645), the method 600 can be repeated in a loop, continuing to detect upcoming events during the playing of the game. As explained above, the frequency at which data are extracted from the buffer (step 610) and a signature is computed therefrom can be an operating parameter of the recorder 230, 330 settable by the user. In an alternative, the frequency at which data are extracted can depend on the detection of an event. For example, when an event has been detected, and as a result the current content of the gameplay buffer has been clipped into a gameplay clip, the next data extraction can be delayed so that it is performed on data not included in the generated clip.

It should be understood that many variations are possible based on the disclosure herein. Although features and elements are described above in particular combinations, each feature or element can be used alone without the other features and elements or in various combinations with or without other features and elements.

The various functional units illustrated in the figures and/or described herein, including, but not limited to, the processor 102, the input driver 112, the input devices 108, the output driver 114, the output devices 110, the game engine 210, 310, and the recorder 230, 330 (including the detector 232, 332, the clipper 234, 334, or the GUI 238, 338), can be implemented as a general purpose computer, a processor, or a processor core, or as a program, software, or firmware, stored in a non-transitory computer readable medium or in another medium, executable by a general purpose computer, a processor, or a processor core. The methods provided can be implemented in a general purpose computer, a processor, or a processor core. Suitable processors include, by way of example, a general purpose processor, a special purpose processor, a conventional processor, a digital signal processor (DSP), a plurality of microprocessors, one or more microprocessors in association with a DSP core, a controller, a microcontroller, Application Specific Integrated Circuits (ASICs), Field Programmable Gate Array (FPGA) circuits, any other type of integrated circuit (IC), and/or a state machine. Such processors can be manufactured by configuring a manufacturing process using the results of processed hardware description language (HDL) instructions and other intermediary data including netlists (such instructions capable of being stored on a computer-readable medium). The results of such processing can be mask works that are then used in a semiconductor manufacturing process to manufacture a processor which implements features of the disclosure.

The methods or flow charts provided herein can be implemented in a computer program, software, or firmware incorporated in a non-transitory computer-readable storage medium for execution by a general purpose computer or a processor. Examples of non-transitory computer-readable storage mediums include a read only memory (ROM), a random access memory (RAM), a register, cache memory, semiconductor memory devices, magnetic media such as internal hard disks and removable disks, magneto-optical media, and optical media such as CD-ROM disks, and digital versatile disks (DVDs).

Claims

- A method for use by a recording system for automatically generating a recording from an application, comprising: detecting, based on a signature associated with data extracted in real-time from a buffer that stores an output of the application, an occurrence of a game event within the application using a machine learning model, wherein the signature is characteristic of an acoustic effect generated by the application and is computed based on audio data generated by the application and the signature uniquely characterizes the occurrence of the game event within the application; and upon detection of the occurrence of the game event, automatically generating the recording from the output of the application using the recording system, wherein the recording system is not integrated into the application.

- The method of claim 1, wherein the detecting of the occurrence of the game event comprises: computing the signature from the extracted data; comparing the signature to signatures stored in a database; and determining that the game event occurred, if a match is found between the signature and at least one matching signature of the signatures in the database.

- The method of claim 1, wherein the machine learning model is trained based on exemplary pairs of signatures and corresponding events of interest.

- The method of claim 1, wherein the buffer contains data captured from a scene of a game, the data captured comprise at least one of video data, audio data, or sensory data.

- The method of claim 1, wherein the buffer is a circular buffer.

- A recording system for automatically generating a recording from an application, comprising: a memory; and a processor, wherein the processor is configured to: detect, based on a signature associated with data extracted in real-time from a buffer in the memory that stores an output of the application, an occurrence of a game event within the application using a machine learning model, wherein the signature is characteristic of an acoustic effect generated by the application and is computed based on audio data generated by the application and the signature uniquely characterizes the occurrence of the game event within the application; and upon the detection of the occurrence of the game event, automatically generate the recording from the output of the application using the recording system, wherein the recording system is not integrated into the application.

- The recording system of claim 6, wherein the processor is further configured to: compute the signature from the extracted data; compare the signature to signatures stored in a database; and determine that the game event occurred, if a match is found between the signature and at least one matching signature of the signatures in the database.

- The recording system of claim 6, wherein the machine learning model is trained based on exemplary pairs of signatures and corresponding events of interest.

- The recording system of claim 6, wherein the buffer contains data captured from a scene of a game, the data captured comprise at least one of video data, audio data, or sensory data.

- The recording system of claim 6, wherein the buffer is a circular buffer.

- A non-transitory computer-readable medium comprising instructions executable by at least one processor of a recording system to perform operations for automatically generating a recording from an application, the operations comprising: detecting, based on a signature associated with data extracted in real-time from a buffer that stores an output of the application, an occurrence of a game event within the application using a machine learning model, wherein the signature is characteristic of an acoustic effect generated by the application and is computed based on audio data generated by the application and the signature uniquely characterizes the occurrence of the game event within the application; and upon detection of the occurrence of the game event, automatically generating the recording from the output of the application using the recording system, wherein the recording system is not integrated into the application.

- The non-transitory computer-readable medium of claim 11, wherein the detecting of the occurrence of the game event comprises: computing the signature from the extracted data; comparing the signature to signatures stored in a database; and determining that the game event occurred, if a match is found between the signature and at least one matching signature of the signatures in the database.

- The non-transitory computer-readable medium of claim 11, wherein the machine learning model is trained based on exemplary pairs of signatures and corresponding events of interest.

- The non-transitory computer-readable medium of claim 11, wherein the buffer contains data captured from a scene of a game, the data captured comprise at least one of video data, audio data, or sensory data.

- The non-transitory computer-readable medium of claim 11, wherein the buffer is a circular buffer.

Disclaimer: Data collected from the USPTO and may be malformed, incomplete, and/or otherwise inaccurate.