Abstract

A virtual space experience system capable of preventing unintentional contact between players without inhibiting a sense of immersion is provided. A virtual space image determination unit 35 of a VR system S causes an image of a virtual space to be perceived by a second player to include an image in which a first avatar corresponding to a first player moves so as to generate a gap in a correspondence relationship between coordinates of the first player and coordinates of the first avatar when a trigger event is recognized, and causes the image of the virtual space to be perceived by the second player to include an image of a second avatar corresponding to the second player and a third avatar corresponding to the first player after the recognition. An avatar coordinate determination unit 34 determines coordinates of the third avatar based on the coordinates of the first player.

Description

DESCRIPTION OF EMBODIMENTS

A VR system S which is a virtual space experience system according to an embodiment is described below with reference to the drawings.

The VR system S is a system for causing a player to perceive that the player himself/herself exists in a virtual space (so-called virtual reality (VR)) displayed as an image.

The VR system S causes a first player P1 and a second player P2 (hereinafter collectively referred to as a “player P”), who both exist in a predetermined region (for example, one room) in a real space RS, to perceive that both exist in one virtual space VS corresponding to the region via a first avatar A1 corresponding to the first player P1 and a second avatar A2 corresponding to the second player P2.

[Schematic Configuration of System]

First, the schematic configuration of the VR system S is described with reference to FIG. 1.

As illustrated in FIG. 1, the VR system S includes a plurality of markers 1 attached to the player P which exists in the real space RS, a camera 2 which captures the player P (to be exact, the markers 1 attached to the player P), a server 3 which determines an image and sound of the virtual space VS (see FIG. 4B and the like), and a head mounted display (hereinafter referred to as an “HMD 4”) which causes the player to perceive the determined image and sound.

In the VR system S, the camera 2, the server 3, and the HMD 4 are able to wirelessly transmit and receive information to and from each other. Any of the camera 2, the server 3, and the HMD 4 may be configured to be able to transmit and receive information to and from each other in a wired manner.

The plurality of markers 1 are attached to each of the head, both hands, and both feet of the player P via the HMD 4, gloves, and shoes worn by the player P. The plurality of markers 1 are used to recognize the coordinates and posture of the player P in the real space RS as described below. Therefore, the attachment positions of the markers 1 may be changed, as appropriate, in accordance with other equipment configuring the VR system S.

The camera 2 is disposed so as to be able to capture, from multiple directions, the range in which the player P can move in the real space RS in which the player P exists (in other words, the range in which the movement of the coordinates, the change of the posture, and the like may be performed).

The server 3 recognizes the markers 1 from the image taken by the camera 2 and recognizes the coordinates and the posture of the player P on the basis of the positions of the recognized markers 1 in the real space RS. The server 3 determines the image and sound to be perceived by the player P on the basis of the coordinates and the posture.

The HMD 4 is worn on the head of the player P. In the HMD 4, a monitor 41 (virtual space image displayer) for causing the player P to perceive the image of the virtual space VS determined by the server 3, and a speaker 42 (virtual space sound generator) for causing the player P to perceive the sound of the virtual space VS determined by the server 3 are provided (see FIG. 2).

When a game and the like are played with use of the VR system S, the player P perceives only the image and sound of the virtual space VS and is caused to perceive that the player P himself/herself exists in the virtual space. In other words, the VR system S is configured as a so-called immersive system.

In the VR system S, a so-called motion capture apparatus configured by the markers 1, the camera 2, and the server 3 is included as a system which recognizes the coordinates of the player P in the real space RS.

However, the virtual space experience system of the present invention is not limited to such a configuration. For example, when a motion capture apparatus is used, a motion capture apparatus whose number of markers and cameras differs from the abovementioned configuration (for example, one of each is provided) may be used instead.

An apparatus which only recognizes the coordinates of the player may be used instead of the motion capture apparatus. Specifically, for example, a sensor such as a GPS may be installed in the HMD, and the coordinates, the posture, and the like of the player may be recognized on the basis of an output from the sensor. The sensor as above and the motion capture apparatus as described above may be used together.

[Configuration of Processing Unit]

Next, the configuration of the server 3 is described in detail with reference to FIG. 2.

The server 3 is configured by one or a plurality of electronic circuit units including a CPU, a RAM, a ROM, an interface circuit, and the like. As illustrated in FIG. 2, the server 3 includes, as functions realized by a program or hardware configurations which are implemented, a display image generation unit 31, a player information recognition unit 32, a trigger event recognition unit 33, an avatar coordinate determination unit 34, a virtual space image determination unit 35, and a virtual space sound determination unit 36.

The display image generation unit 31 generates an image to be perceived by the player P via the monitor 41 of the HMD 4. The display image generation unit 31 has a virtual space generation unit 31a, an avatar generation unit 31b, and a mobile body generation unit 31c.

The virtual space generation unit 31a generates an image serving as a background of the virtual space VS and an image of an object existing in the virtual space VS.

The avatar generation unit 31b generates an avatar which performs a motion in correspondence with the motion of the player P in the virtual space VS. When there are a plurality of players P, the avatar generation unit 31b generates a plurality of avatars so as to correspond to each of the players P. The avatar performs a motion in the virtual space VS in correspondence with the motion (in other words, the movement of the coordinates and the change of the posture) of the corresponding player P in the real space RS.

The mobile body generation unit 31c generates, in the virtual space VS, a mobile body which is connectable to the avatar in the virtual space VS and which has no corresponding body in the real space RS.

The “mobile body” need only be something that, when the avatar is connected to it, causes the player P to predict (whether consciously or not) a movement of the avatar that differs from the actual movement of the player.

For example, as the mobile body, a log which is floating on a river and onto which the avatar can jump, a floor which is likely to collapse when the avatar stands thereon, a jump stand, and wings which assist jumping are applicable in addition to a mobile body used in the movement in the real space such as an elevator. As the mobile body, characters, patterns, and the like drawn on the ground or a wall surface of the virtual space are applicable.

The “connection” between the avatar and the mobile body refers to a state in which the player may predict that the movement of the mobile body, the change of the shape of the mobile body, and the like affect the coordinates of the avatar.

For example, a case where the avatar rides an elevator, a case where the avatar rides on a log floating on a river, a case where the avatar stands on a floor which is about to collapse, a case where the avatar stands on a jump stand, and a case where the avatar wears wings which assist jumping correspond to the connection. A case where the avatar comes into contact with or approaches characters, patterns, and the like drawn on the ground or a wall surface of the virtual space also corresponds to the connection.

In the player information recognition unit 32, image data of the player P including the markers 1 captured by the camera 2 is input. The player information recognition unit 32 has a player posture recognition unit 32a and a player coordinate recognition unit 32b.

The player posture recognition unit 32a extracts the markers 1 from the input image data of the player P and recognizes the posture of the player P on the basis of an extraction result thereof.

The player coordinate recognition unit 32b extracts the markers 1 from the input image data of the player P and recognizes the coordinates of the player P on the basis of an extraction result thereof.

The trigger event recognition unit 33 recognizes that a predetermined trigger event has occurred when a condition defined by a system designer in advance is satisfied.

The trigger event may be a trigger event whose occurrence is not perceived by the player. Therefore, as the trigger event, for example, an event due to the motion of the player, such as an event in which the player performs a predetermined motion in the real space (in other words, the avatar corresponding to the player performs a predetermined motion in the virtual space), is applicable, and an event which is not due to the motion of the player, such as the elapse of a predetermined time, is also applicable.
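The two kinds of trigger event described above can be sketched as predicates evaluated once per control cycle. This is a minimal illustration only: the `PlayerState` structure, the posture labels, and the function names are hypothetical and do not appear in the embodiment.

```python
from dataclasses import dataclass
from typing import Callable, Tuple


@dataclass
class PlayerState:
    """Recognized state of one player in the real space (hypothetical structure)."""
    x: float
    y: float
    posture: str  # assumed posture label, e.g. "arm_extended"


# A trigger condition receives the player state and the elapsed time in seconds.
TriggerCondition = Callable[[PlayerState, float], bool]


def make_motion_trigger(target: Tuple[float, float], radius: float,
                        posture: str) -> TriggerCondition:
    """Event due to the motion of the player: near `target` in a given posture."""
    def condition(player: PlayerState, elapsed_s: float) -> bool:
        dx, dy = player.x - target[0], player.y - target[1]
        return (dx * dx + dy * dy) ** 0.5 <= radius and player.posture == posture
    return condition


def make_time_trigger(after_s: float) -> TriggerCondition:
    """Event not due to the motion of the player: elapse of a predetermined time."""
    def condition(player: PlayerState, elapsed_s: float) -> bool:
        return elapsed_s >= after_s
    return condition
```

Both factories return a condition with the same signature, so a recognition unit written along these lines could evaluate either kind of condition without distinguishing between them.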

The avatar coordinate determination unit 34 determines the coordinates of the avatar corresponding to the player P in the virtual space VS on the basis of the coordinates of the player P in the real space RS recognized by the player coordinate recognition unit 32b.

When the trigger event recognition unit 33 recognizes a trigger event, the avatar coordinate determination unit 34 corrects the coordinates of the avatar so as to generate a gap in the correspondence relationship between the coordinates of the player and the coordinates of the avatar corresponding to the player in a predetermined period of time, in a predetermined range, or in both, in accordance with the type of the recognized trigger event.

The “gap” in the correspondence relationship between the coordinates of the player and the coordinates of the avatar includes not only a gap generated by moving only the coordinates of the avatar when there is no movement of the coordinates of the player, but also a gap in the movement amount of the coordinates of the avatar with respect to the movement amount of the coordinates of the player, a gap generated when the player and the avatar move after a change in the time it takes to reflect the movement of the coordinates of the player in the movement of the coordinates of the avatar, and the like.
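Two of the kinds of gap listed above (a pure offset and a difference in movement amount) can be written as a single mapping from player coordinates to avatar coordinates. The following is a sketch under stated assumptions: the `Gap` structure, the `origin` reference point, and the linear form of the mapping are all hypothetical, and the gap caused by a changed reflection time is not modeled.

```python
from dataclasses import dataclass
from typing import Tuple

Vec3 = Tuple[float, float, float]


@dataclass(frozen=True)
class Gap:
    """Hypothetical description of a gap in the correspondence relationship."""
    offset: Vec3 = (0.0, 0.0, 0.0)  # shift applied to the avatar only
    scale: float = 1.0              # avatar movement per unit of player movement


def avatar_coords(player: Vec3, origin: Vec3, gap: Gap) -> Vec3:
    """Map player coordinates to avatar coordinates under the given gap.

    With the default Gap() the avatar simply follows the player.
    """
    return tuple(
        o + gap.scale * (p - o) + d
        for p, o, d in zip(player, origin, gap.offset)
    )
```

For example, `Gap(offset=(0.0, 0.0, 3.0))` raises the avatar without any movement of the player, while `Gap(scale=2.0)` makes the avatar travel twice as far from `origin` as the player does.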

The virtual space image determination unit 35 determines an image of the virtual space to be perceived by the player P corresponding to the avatar via the monitor 41 of the HMD 4 on the basis of the coordinates of the avatar.

“The image of the virtual space” refers to an image from which the player may presume the coordinates of the avatar corresponding to the player himself/herself in the virtual space. For example, the image of the virtual space includes an image of another avatar, an image of an object existing only in the virtual space, and an image of an object existing in the virtual space in correspondence with the real space besides the image of the background of the virtual space.

The virtual space sound determination unit 36 determines sound to be perceived by the player P corresponding to the avatar via the speaker 42 of the HMD 4 on the basis of the coordinates of the avatar.

Processing units configuring the virtual space experience system of the present invention are not limited to the configurations as described above.

For example, some of the processing units provided in the server 3 in the abovementioned embodiment may be provided in the HMD 4. A plurality of servers may be used, or the server may be omitted and CPUs mounted on the HMDs may work together instead.

A speaker other than the speaker mounted on the HMD may be provided. In addition to a device which affects the sense of vision and the sense of hearing, a device which affects the sense of smell and the sense of touch such as a device which causes smell, wind, and the like in accordance with the virtual space may be included.

[Processing to be Executed]

Next, with reference to FIG. 2 to FIG. 11, processing to be executed by the VR system S when the player P is caused to experience the virtual space VS with use of the VR system S is described.

[Processing in Normal Times]

First, with reference to FIG. 2, FIG. 3, and FIG. 4, processing to be executed by the VR system S in normal times (in other words, a state in which a trigger event described below is not recognized) is described.

In the processing, first, the display image generation unit 31 of the server 3 generates the virtual space VS, the first avatar A1, the second avatar A2, and the mobile bodies (FIG. 3/STEP 101).

Specifically, the virtual space generation unit 31a of the display image generation unit 31 generates the virtual space VS and various objects existing in the virtual space VS. The avatar generation unit 31b of the display image generation unit 31 generates the first avatar A1 corresponding to the first player P1 and the second avatar A2 corresponding to the second player P2. The mobile body generation unit 31c of the display image generation unit 31 generates mobile bodies such as an elevator VS1 described below.

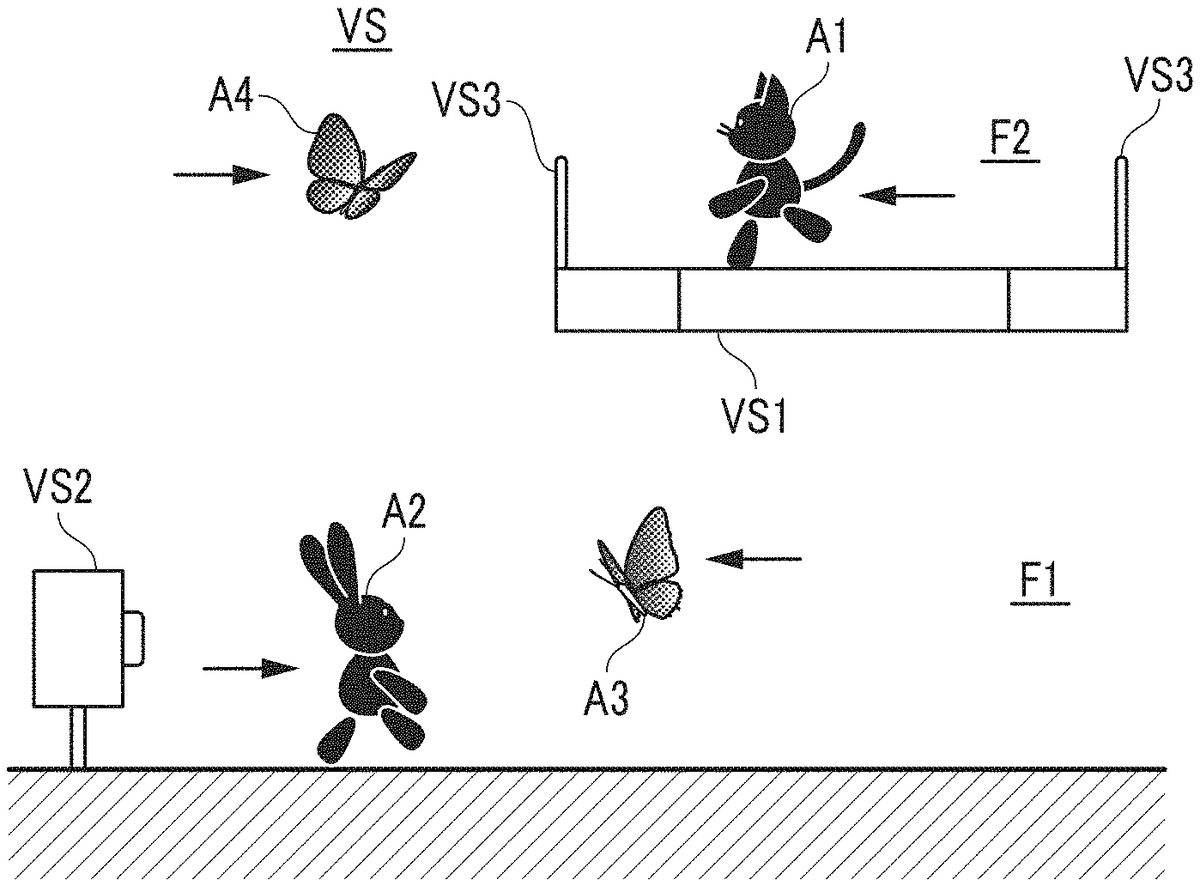

As illustrated in FIG. 4B, in the virtual space VS generated by the processing in STEP 101, besides the first avatar A1, the second avatar A2, and the elevator VS1 which is a mobile body, an object relating to a trigger event is installed, such as a switch VS2 generated in a position corresponding to a whiteboard RS1 (see FIG. 4A) installed in the real space RS.

Next, the player information recognition unit 32 of the server 3 acquires image data captured by the camera 2 and recognizes the coordinates and the postures of the first player P1 and the second player P2 in the real space RS on the basis of the image data (FIG. 3/STEP 102).

Next, the avatar coordinate determination unit 34 of the server 3 determines the coordinates and the posture of the first avatar A1 in the virtual space VS on the basis of the coordinates and the posture of the first player P1 in the real space RS recognized by the player information recognition unit 32, and determines the coordinates and the posture of the second avatar A2 in the virtual space VS on the basis of the coordinates and the posture of the second player P2 in the real space RS (FIG. 3/STEP 103).

Next, the virtual space image determination unit 35 and the virtual space sound determination unit 36 of the server 3 determine the image and sound to be perceived by the player P on the basis of the coordinates and the postures of the first avatar A1 and the second avatar A2 in the virtual space VS (FIG. 3/STEP 104).

Next, the HMD 4 worn by the player P causes the monitor 41 mounted on the HMD 4 to display the determined image and causes the speaker 42 mounted on the HMD 4 to generate the determined sound (FIG. 3/STEP 105).

Next, the player information recognition unit 32 of the server 3 determines whether the movement of the coordinates or the change of the posture of the first player P1 or the second player P2 in the real space RS is recognized (FIG. 3/STEP 106).

When the movement of the coordinates or the change of the posture of the first player P1 or the second player P2 in the real space RS is recognized (when it is YES in STEP 106), the processing returns to STEP 103 and the processing of STEP 103 and thereafter is executed again.

Meanwhile, when the movement of the coordinates or the change of the posture of the first player P1 or the second player P2 in the real space RS is not recognized (when it is NO in STEP 106), the server 3 determines whether a signal instructing the ending of the processing is recognized (FIG. 3/STEP 107).

When a signal instructing the ending is not recognized (when it is NO in STEP 107), the processing returns to STEP 106, and the processing in STEP 106 and thereafter is executed again.

Meanwhile, when a signal instructing the ending is recognized (when it is YES in STEP 107), the VR system S ends the processing for this time.
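The control flow of STEP 102 to STEP 107 can be summarized as a loop. The sketch below is an illustration only: the function name, the shape of `observations`, and the `render` callback are assumptions, the generation of STEP 101 is omitted, and the avatar pose is simply taken to equal the player pose because no gap exists in normal times.

```python
from typing import Callable, Dict, Iterable, Tuple

Poses = Dict[str, tuple]  # player id -> recognized coordinates/posture (assumed)


def run_normal_processing(
    observations: Iterable[Tuple[Poses, bool]],
    render: Callable[[Poses], None],
) -> None:
    """Sketch of FIG. 3: re-render only when some player's pose has changed.

    `observations` yields one (poses, end_requested) pair per control cycle.
    `render` stands in for STEP 104 and STEP 105 (determine and present output).
    """
    cycles = iter(observations)
    poses, _ = next(cycles)      # STEP 102: initial recognition
    render(dict(poses))          # STEP 103 to STEP 105
    for new_poses, end_requested in cycles:
        if new_poses != poses:   # STEP 106: YES, go back to STEP 103
            poses = new_poses
            render(dict(poses))
        elif end_requested:      # STEP 107: YES, end the processing
            return
        # STEP 107: NO, keep checking in the next control cycle
```

As in the flow described above, the end signal is examined only in cycles in which no movement or change of posture is recognized.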

By the processing above, in the virtual space VS, the first avatar A1 corresponding to the first player P1, the second avatar A2 corresponding to the second player P2, and the plurality of objects including the elevator VS1 which is a mobile body, the switch VS2 relating to the occurrence of a trigger event, and the like are installed.

By the image displayed and the sound generated in the HMD 4 worn by each of them, the first player P1 and the second player P2 become able to perceive that they exist in the virtual space VS and can freely perform motions via the first avatar A1 and the second avatar A2 respectively corresponding to them.

[Processing at Time of Trigger Event Recognition]

Next, with reference to FIG. 2 and FIG. 4 to FIG. 8, processing to be executed when the VR system S recognizes a trigger event is described.

In the VR system S, when a predetermined trigger event occurs, correction which generates a gap in the correspondence relationship between the coordinates of the first player P1 and the coordinates of the first avatar A1 or the correspondence relationship between the second player P2 and the second avatar A2 is performed. In the description below, correction which generates a gap in the correspondence relationship between the coordinates of the first player P1 and the coordinates of the first avatar A1 is described.

Specifically, in the VR system S, in a state in which the first avatar A1 is riding the elevator VS1 which is a mobile body (in other words, a state in which the first avatar A1 and the elevator VS1 are connected to each other) as illustrated in FIG. 4B, the pressing of the switch VS2 by the second avatar A2 as illustrated in FIG. 5B is set to be a key to the occurrence of a trigger event.

When the occurrence of the trigger event is recognized, as illustrated in FIG. 4 to FIG. 6, the first avatar A1 corresponding to the coordinates of the first player P1 moves upward regardless of the movement of the coordinates of the first player P1. Specifically, the first avatar A1 is moved from a first floor F1 which is a first-floor part defined in the virtual space VS to a second floor F2 which is a second-floor part by the elevator VS1.

By the above, in the VR system S, the first player P1, whose corresponding first avatar A1 is moved, and the second player P2, who perceives the first avatar A1, can be caused to perceive the virtual space VS as extending further in the up-down direction than the real space RS.

When such a gap is generated, there is a risk that the first player P1 and the second player P2 may not be able to properly grasp the positional relationship with each other in the real space RS. As a result, there is a risk that the first player P1 and the second player P2 may unintentionally come into contact with each other.

For example, in the state illustrated in FIG. 6, the second player P2 may misunderstand that the first player P1 is also moving upward in the real space RS as with the first avatar A1. The second player P2 may misunderstand that the second avatar A2 corresponding to the second player P2 in the virtual space VS is able to move to a place below the elevator VS1 and may try to move the second avatar A2 to the place below the elevator VS1 (see FIG. 7).

However, in the real space RS, the first player P1 corresponding to the first avatar A1 actually exists at the same height as the second player P2. Therefore, in this case, in the real space RS, the first player P1 and the second player P2 come into contact with each other. As a result, there is a fear that the sense of immersion of the first player P1 and the second player P2 into the virtual space VS is inhibited.
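The mismatch described above can be illustrated numerically. The following sketch assumes that horizontal coordinates map one-to-one between the real space and the virtual space and that only the first avatar is raised; the function name and the values used in the example are hypothetical.

```python
import math
from typing import Tuple

Vec3 = Tuple[float, float, float]


def separations(p1_real: Vec3, p2_real: Vec3, lift: float) -> Tuple[float, float]:
    """Return (real distance P1-P2, virtual distance A1-A2) when A1 is raised.

    A1 is the position of P1 shifted upward by `lift`; A2 coincides with P2.
    """
    a1 = (p1_real[0], p1_real[1], p1_real[2] + lift)
    a2 = p2_real
    return math.dist(p1_real, p2_real), math.dist(a1, a2)
```

With the players 0.4 apart on the same real floor and a lift of 3.0, the avatars appear more than 3.0 apart in the virtual space while the players remain within arm's reach of each other, which is the situation in which unintentional contact can occur.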

Thus, in the VR system S, by executing the processing described below when the occurrence of a predetermined trigger event is recognized, unintentional contact between the first player P1 and the second player P2 is prevented without inhibiting the sense of immersion.

In the processing, first, the avatar coordinate determination unit 34 of the server 3 determines whether the first avatar A1 is connected to the mobile body (FIG. 8/STEP 201).

Specifically, as illustrated in FIG. 4, the avatar coordinate determination unit 34 determines whether the coordinates of the first player P1 have moved in the real space RS such that the first avatar A1 corresponding to the coordinates of the first player P1 rides the elevator VS1 which is a mobile body in the virtual space VS.

When it is determined that the first avatar A1 is not connected to the mobile body (when it is NO in STEP 201), the processing returns to STEP 201 and the determination is executed again. This processing is repeated at a predetermined control cycle until the first avatar A1 is in a state of riding the elevator VS1.

Meanwhile, when it is determined that the first avatar A1 is connected to the mobile body (when it is YES in STEP 201), the trigger event recognition unit 33 of the server 3 determines whether the occurrence of the trigger event based on the motion of the second player P2 is recognized (FIG. 8/STEP 202).

Specifically, the trigger event recognition unit 33 determines whether the second player P2 has moved and is in a predetermined posture in the real space RS (see FIG. 5A) such that the second avatar A2 corresponding to the second player P2 is in the posture of touching the switch VS2 in a position near the switch VS2 (see FIG. 5B) in the virtual space VS.

When the coordinates of the second player P2 move to the predetermined position and the posture of the second player P2 is the predetermined posture, the trigger event recognition unit 33 recognizes that the trigger event based on the motion of the second player P2 has occurred.

When the occurrence of the trigger event is not recognized (when it is NO in STEP 202), the processing returns to STEP 202 and the determination is executed again. This processing is repeated at a predetermined control cycle while the first avatar A1 is in a state of riding the elevator VS1.
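The two determinations of STEP 201 and STEP 202 can be sketched as a gate evaluated once per control cycle. This is an illustration under an assumption that is not stated in the flow above: if the first avatar is no longer riding the mobile body in a given cycle, the sketch treats that cycle as NO in STEP 201. The function name and the input format are hypothetical.

```python
from typing import Iterable, Optional, Tuple


def first_trigger_cycle(cycles: Iterable[Tuple[bool, bool]]) -> Optional[int]:
    """Return the index of the control cycle at which STEP 203 would begin.

    Each element is (a1_connected, trigger_recognized) for one control cycle.
    Returns None if the trigger is never recognized while A1 is connected.
    """
    for index, (a1_connected, trigger_recognized) in enumerate(cycles):
        if not a1_connected:
            continue            # STEP 201: NO, determine again next cycle
        if trigger_recognized:
            return index        # STEP 202: YES, proceed to STEP 203
        # STEP 202: NO, determine again next cycle
    return None
```

Under this gate, a press of the switch by the second avatar has no effect unless the first avatar is connected to the mobile body in the same cycle.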

Meanwhile, when the occurrence of the trigger event is recognized (when it is YES in STEP 202), the display image generation unit 31 of the server 3 generates a third avatar A3 and a fourth avatar A4 (FIG. 8/STEP 203).

Specifically, the avatar generation unit 31b of the display image generation unit 31 generates the third avatar A3 corresponding to the first player P1 and the fourth avatar A4 corresponding to the second player P2 in the virtual space VS, using the occurrence of the trigger event as the trigger.

At this time, the coordinates at which the third avatar A3 and the fourth avatar A4 are generated are coordinates independent from the coordinates of the first player P1 and the coordinates of the second player P2. Alternatively, at this time point, the coordinates of the third avatar A3 and the coordinates of the fourth avatar A4 may be determined on the basis of the coordinates of the first player P1 and the coordinates of the second player P2 as described below, and the third avatar A3 and the fourth avatar A4 may be generated at the determined coordinates.

Next, the player information recognition unit 32 of the server 3 acquires image data captured by the camera 2 and recognizes the coordinates and the postures of the first player P1 and the second player P2 in the real space RS on the basis of the image data (FIG. 8/STEP 204).

Next, the avatar coordinate determination unit 34 of the server 3 determines the coordinates and the postures of the first avatar A1 and the third avatar A3 in the virtual space VS on the basis of the coordinates and the posture of the first player P1 in the real space RS recognized by the player information recognition unit 32 (FIG. 8/STEP 205).

Specifically, the avatar coordinate determination unit 34 determines the coordinates and the posture of the first avatar A1 by correcting the coordinates and the posture of the first avatar A1 based on the coordinates and the posture of the first player P1 in accordance with the content (in other words, the correction direction and the correction amount) of the gap defined in advance depending on the type of the trigger event.

More specifically, the avatar coordinate determination unit 34 determines, as the coordinates and the posture of the first avatar A1, the coordinates and the posture obtained by moving the coordinates and the posture of the first avatar A1 based on the coordinates and the posture of the first player P1 upward by the amount of the height of the second floor F2 with respect to the first floor F1.

The avatar coordinate determination unit 34 determines the coordinates and the posture of the third avatar A3 on the basis of the coordinates and the posture of the first avatar A1 on which correction has not been performed.

Next, the avatar coordinate determination unit 34 of the server 3 determines the coordinates and the posture of the fourth avatar A4 in the virtual space VS on the basis of the coordinates and the posture of the second player P2 in the real space RS recognized by the player information recognition unit 32 (FIG. 8/STEP 206).

Specifically, the avatar coordinate determination unit 34 determines the coordinates and the posture of the fourth avatar A4 on the basis of the coordinates and the posture obtained by correcting the coordinates and the posture of the second avatar A2 based on the coordinates and the posture of the second player P2 in accordance with the content (in other words, the correction direction and the correction amount) of the gap defined in advance depending on the type of the trigger event.

More specifically, the avatar coordinate determination unit 34 determines the coordinates and the posture of the fourth avatar A4 on the basis of the coordinates and the posture obtained by moving the coordinates and the posture of the second avatar A2 based on the coordinates and the posture of the second player P2 upward by the amount of the height of the second floor F2 with respect to the first floor F1.

Next, the avatar coordinate determination unit 34 of the server 3 moves the coordinates and changes the postures of the first avatar A1, the third avatar A3, and the fourth avatar A4 on the basis of the determined coordinates and postures (FIG. 8/STEP 207).

Specifically, as illustrated in FIG. 4A, FIG. 5A, and FIG. 6A, regardless of the change of the coordinates of the first player P1, the coordinates of the first avatar A1 are moved upward by the height of the second floor F2 with respect to the first floor F1 as illustrated in FIG. 4B, FIG. 5B, and FIG. 6B. At this time, the posture of the first avatar A1 changes in correspondence with the posture of the first player P1 in the coordinates of the first avatar A1 from time to time.

As illustrated in FIG. 4B, FIG. 5B, and FIG. 6B, the coordinates of the third avatar A3 move to the coordinates of the first avatar A1 before being moved. The coordinates of the fourth avatar A4 move to coordinates obtained by moving the coordinates of the second avatar A2 upward as with the first avatar A1. The postures of the third avatar A3 and the fourth avatar A4 change in correspondence with the postures of the first player P1 and the second player P2 on the basis of the correspondence relationship which is defined in advance.
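The coordinate determination of STEP 205 to STEP 207 can be sketched as follows. This is a minimal illustration that assumes the uncorrected avatar coordinates equal the player coordinates and that the correction is a pure upward shift; postures are omitted, and the function names and the `floor_height` parameter are hypothetical.

```python
from typing import Dict, Tuple

Vec3 = Tuple[float, float, float]


def lift(p: Vec3, dz: float) -> Vec3:
    """Move a point upward by dz."""
    return (p[0], p[1], p[2] + dz)


def avatar_coords_after_trigger(p1: Vec3, p2: Vec3,
                                floor_height: float) -> Dict[str, Vec3]:
    """Coordinates of the four avatars after the trigger event (sketch).

    p1, p2: recognized coordinates of the first and second players.
    floor_height: height of the second floor F2 relative to the first floor F1.
    """
    a1_uncorrected = p1  # assumed identity mapping in normal times
    a2 = p2
    return {
        "A1": lift(a1_uncorrected, floor_height),  # STEP 205: corrected A1
        "A2": a2,                                  # unchanged
        "A3": a1_uncorrected,                      # STEP 205: uncorrected A1
        "A4": lift(a2, floor_height),              # STEP 206: A2 moved upward
    }
```

In this sketch, the third avatar and the second avatar on the first floor, and the first avatar and the fourth avatar on the second floor, each reproduce the positional relationship between the two players in the real space.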

Next, the virtual space image determination unit 35 and the virtual space sound determination unit 36 of the server 3 determine the image and sound to be perceived by the player P on the basis of the coordinates and the postures of the first avatar A1, the second avatar A2, the third avatar A3, and the fourth avatar A4 in the virtual space VS (FIG. 8/STEP 208).

Specifically, when the trigger event is recognized, the image and sound to be perceived by the first player P1 are caused to include an image and sound in which the second avatar A2 relatively moves with respect to the first avatar A1 so as to correspond to the gap generated in the correspondence relationship between the coordinates of the first player P1 and the coordinates of the first avatar A1.

The image and sound to be perceived by the second player P2 are caused to include an image and sound in which the first avatar A1 moves so as to generate a gap in the correspondence relationship between the coordinates of the first player P1 and the coordinates of the first avatar A1.

After the trigger event is recognized, the image and sound to be perceived by the first player P1 and the second player P2 are caused to include an image and sound of the third avatar A3 and the fourth avatar A4 in addition to the image and sound of the first avatar A1 and the second avatar A2 which have been included up to that point.

Next, the HMD 4 worn by the player P displays the determined image on the monitor 41 and generates the determined sound from the speaker 42 (FIG. 8/STEP 209).

Next, the player information recognition unit 32 of the server 3 determines whether a movement of the coordinates or a change of the posture of the first player P1 or the second player P2 in the real space RS is recognized (FIG. 8/STEP 210).

When a movement of the coordinates or a change of the posture of the first player P1 or the second player P2 in the real space RS is recognized (YES in STEP 210), the processing returns to STEP 204, and the processing of STEP 204 and thereafter is executed again.

Meanwhile, when no movement of the coordinates or change of the posture of the first player P1 or the second player P2 in the real space RS is recognized (NO in STEP 210), the server 3 determines whether a signal instructing the ending of the processing is recognized (FIG. 8/STEP 211).

When a signal instructing the ending is not recognized (NO in STEP 211), the processing returns to STEP 210, and the processing of STEP 210 and thereafter is executed again.

Meanwhile, when a signal instructing the ending is recognized (YES in STEP 211), the VR system S ends the processing for this time.
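The branching of STEP 208 through STEP 211 can be summarized as a small transition function. The following is a minimal sketch and not the embodiment's implementation: the name `next_step` and its boolean parameters are hypothetical, and STEP 204 appears only as the return target named in the text.

```python
from typing import Optional


def next_step(step: int, moved: bool = False, end_signal: bool = False) -> Optional[int]:
    """Return the step that follows `step` in the FIG. 8 flow, or None when processing ends.

    moved      -- hypothetical flag: a movement or posture change was recognized (STEP 210)
    end_signal -- hypothetical flag: an end-of-processing signal was recognized (STEP 211)
    """
    if step == 208:      # image and sound determined
        return 209
    if step == 209:      # HMD displays the image and generates the sound
        return 210
    if step == 210:      # YES returns to STEP 204, NO proceeds to STEP 211
        return 204 if moved else 211
    if step == 211:      # YES ends the processing, NO returns to STEP 210
        return None if end_signal else 210
    raise ValueError(f"step {step} is outside the range sketched here")
```

With neither flag set, the flow idles between STEP 210 and STEP 211 until the player moves or an end signal arrives.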

As described above, in the VR system S, when a predetermined trigger event is recognized, an image and sound of the second avatar A2 moving relative to the first avatar A1 are included in the image and sound to be perceived by the first player P1. The image and sound to be perceived by the second player P2 are caused to include an image in which the first avatar A1 moves.

Therefore, the line of sight of the first player P1 when the predetermined trigger event is recognized naturally follows the second avatar A2. The line of sight of the second player P2 naturally follows the movement of the first avatar A1.

When the predetermined trigger event is recognized, the third avatar A3 corresponding to the first player P1 and the fourth avatar A4 corresponding to the second player P2 are generated in the virtual space VS in addition to the first avatar A1 corresponding to the first player P1 and the second avatar A2 corresponding to the second player P2.

In other words, the fourth avatar A4 is generated while the attention of the first player P1 is drawn to the movement of the second avatar A2. The third avatar A3 is generated while the attention of the second player P2 is drawn to the movement of the first avatar A1.

After the trigger event is recognized, the image and sound of the virtual space VS to be perceived by the first player P1 and the second player P2 are also caused to include the image and sound of the third avatar A3 and the fourth avatar A4.

By the above, according to the VR system S, a case where the first player P1 thinks that the fourth avatar A4 has suddenly appeared (and the feeling of strangeness this gives the first player P1) can be suppressed. As a result, the first player P1 can be caused to accept the existence of the fourth avatar A4 without inhibiting the sense of immersion of the first player P1.

Likewise, a case where the second player P2 thinks that the third avatar A3 has suddenly appeared (and the feeling of strangeness this gives the second player P2) can be suppressed. As a result, the second player P2 can be caused to accept the existence of the third avatar A3 without inhibiting the sense of immersion of the second player P2.

When another avatar exists in the virtual space VS, the player normally tries to avoid contact between the avatar corresponding to the player and the other avatar.

In the VR system S, when a trigger event occurs and a gap is generated between the coordinates of the first player P1 and the coordinates of the first avatar A1 corresponding to the first player P1, the third avatar A3 corresponding to the coordinates of the first player P1 is generated, and the fourth avatar A4 is generated in coordinates obtained by correcting the coordinates corresponding to the coordinates of the second player P2 so as to correspond to the gap.
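The coordinate relationships described here can be illustrated with a short sketch. It rests on two simplifying assumptions that the description does not state: real-space coordinates map to virtual-space coordinates one to one before any correction, and the gap is a constant offset vector. The names `Vec3` and `avatar_coordinates` are hypothetical.

```python
from dataclasses import dataclass
from typing import Dict


@dataclass(frozen=True)
class Vec3:
    x: float
    y: float
    z: float

    def __add__(self, other: "Vec3") -> "Vec3":
        return Vec3(self.x + other.x, self.y + other.y, self.z + other.z)


def avatar_coordinates(p1: Vec3, p2: Vec3, gap: Vec3) -> Dict[str, Vec3]:
    """Place the four avatars after the trigger event (assumed 1:1 real-to-virtual mapping)."""
    return {
        "A1": p1 + gap,  # first avatar: displaced from the first player by the gap
        "A2": p2,        # second avatar: still at the second player's coordinates
        "A3": p1,        # third avatar: at the first player's uncorrected coordinates
        "A4": p2 + gap,  # fourth avatar: second player's coordinates corrected by the gap
    }


# Example: the elevator raises the first avatar by 3 units along the vertical (y) axis.
coords = avatar_coordinates(Vec3(1.0, 0.0, 2.0), Vec3(3.0, 0.0, 2.0), Vec3(0.0, 3.0, 0.0))
```

In this example the first avatar A1 and the fourth avatar A4 both sit at height 3, while the second avatar A2 and the third avatar A3 remain at height 0.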

Then, the image and sound of the virtual space VS to be perceived by the first player P1 are caused to include the image and sound of the fourth avatar A4. The image and sound of the virtual space VS to be perceived by the second player P2 are caused to include the image and sound of the third avatar A3.

Therefore, after the trigger event is recognized, when the first player P1 performs some kind of motion, the first player P1 performs the motion while naturally avoiding contact between the first avatar A1 corresponding to the corrected coordinates of the first player P1 and the fourth avatar A4 corresponding to the corrected coordinates of the second player P2.

When the second player P2 performs some kind of motion, the second player P2 performs the motion while naturally avoiding contact between the second avatar A2 corresponding to the coordinates of the second player P2 and the third avatar A3 corresponding to the coordinates of the first player P1.

By the above, the contact between the first player P1 and the second player P2 in the real space RS can be prevented.

Specifically, as illustrated in FIG. 7, when the first avatar A1 corresponding to the first player P1 and the fourth avatar A4 approach each other in the virtual space VS, the first player P1 naturally performs a motion so as to avoid contact. Therefore, contact with the second player P2 corresponding to the fourth avatar A4 in the real space RS is avoided.

Similarly, when the second avatar A2 corresponding to the second player P2 and the third avatar A3 approach each other in the virtual space VS, the second player P2 naturally performs a motion so as to avoid contact. Therefore, contact with the first player P1 corresponding to the third avatar A3 in the real space RS is avoided.
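Why avoiding an avatar also avoids the corresponding player can be checked numerically. The sketch below assumes, as a simplification not stated in the description, that real-space coordinates map one to one to the virtual space and that the gap is a constant offset; the function names are hypothetical. Under those assumptions, each avatar pair that a player watches is separated by exactly the real distance between the two players.

```python
import math
from typing import Tuple

Point = Tuple[float, float, float]


def shifted(point: Point, gap: Point) -> Point:
    """Apply the gap offset to a coordinate triple."""
    return tuple(c + g for c, g in zip(point, gap))


def separations(p1: Point, p2: Point, gap: Point) -> Tuple[float, float, float]:
    """Return (real P1-P2 distance, A1-A4 distance, A2-A3 distance)."""
    a1, a4 = shifted(p1, gap), shifted(p2, gap)  # pair watched by the first player
    a2, a3 = p2, p1                              # pair watched by the second player
    return math.dist(p1, p2), math.dist(a1, a4), math.dist(a2, a3)


real, first_view, second_view = separations((1.0, 0.0, 2.0), (1.5, 0.0, 2.0), (0.0, 3.0, 0.0))
```

All three values coincide in this example, so a player who keeps clear of the displayed avatar keeps the same clearance from the other player in the real space RS.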

In the VR system S, the third avatar A3 corresponding to the first player P1 and the fourth avatar A4 corresponding to the second player P2 are generated with use of the trigger event as a key. The above is performed to cause both the first player P1 and the second player P2 to perform motions of avoiding contact in the real space RS.

However, the virtual space experience system of the present invention is not limited to such a configuration, and a configuration in which only one of the third avatar and the fourth avatar is generated may be employed.

Specifically, for example, in the abovementioned VR system S, a fence VS3 that limits the movement of the first avatar A1 exists on the second floor F2, to which the first avatar A1 moves when the trigger event occurs. For example, when the movement of the first player P1 to the coordinates of the second player P2 can be limited by the fence VS3, the generation of the fourth avatar A4 may be omitted.
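One way to picture this decision is a containment test. The sketch below is only an illustration under loud assumptions: the fence VS3 is taken to confine the first player P1 to an axis-aligned rectangle in floor coordinates, the first player is taken to respect the fence, and the names `FloorRange` and `fourth_avatar_needed` are hypothetical. The description does not specify how the limitation is evaluated.

```python
from typing import NamedTuple, Tuple


class FloorRange(NamedTuple):
    """Assumed rectangular floor area that the fence leaves open to the first player."""
    x_min: float
    x_max: float
    z_min: float
    z_max: float

    def contains(self, x: float, z: float) -> bool:
        return self.x_min <= x <= self.x_max and self.z_min <= z <= self.z_max


def fourth_avatar_needed(fenced_range: FloorRange, p2_xz: Tuple[float, float]) -> bool:
    """The first player can only reach the second player if the latter stands inside the range."""
    return fenced_range.contains(*p2_xz)


fenced = FloorRange(0.0, 2.0, 0.0, 2.0)
inside = fourth_avatar_needed(fenced, (1.0, 1.0))   # second player within reach
outside = fourth_avatar_needed(fenced, (3.0, 1.0))  # fence already keeps the players apart
```

In the second case the fence alone keeps the first player away from the second player, which is the situation in which the text allows the fourth avatar A4 to be omitted.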

In the VR system S, as shapes of the first avatar A1 and the second avatar A2, animal-type characters which are standing upright are employed. Meanwhile, as shapes of the third avatar A3 and the fourth avatar A4, butterfly-type characters are employed. The above is employed to cause the shapes of a plurality of avatars corresponding to one player to be different from each other, to thereby suppress a feeling of strangeness given to another player seeing the avatars.

However, in the virtual space experience system of the present invention, the shapes of the third avatar and the fourth avatar are not limited to such configuration and may be set, as appropriate, in accordance with the aspect of the virtual space. For example, the shapes of the first avatar and the third avatar may be similar, and the color of either may be translucent.

In the abovementioned description, as shapes of the third avatar A3 and the fourth avatar A4, butterfly-type characters are employed. However, the virtual space experience system of the present invention is not limited to such a configuration. For example, as illustrated in FIG. 9 to FIG. 11, a wall VS4 generated below the elevator VS1 along with the movement of the elevator VS1 may serve as the third avatar.

In the illustrated example, the second floor F2 does not exist. Therefore, as illustrated in FIG. 11, after the trigger event ends, the first player P1 perceives that the first avatar A1 can only move on the elevator VS1 (a range partitioned by the fence VS3). In other words, the motion range of the first player P1 in the real space RS is limited.

At this time, as illustrated in FIG. 10 and FIG. 11, when the wall VS4 generated below the elevator VS1 surrounds the range of the virtual space VS corresponding to that motion range, the impression that the avatars corresponding to the first player P1 have increased in number can be suppressed, and a feeling of strangeness given to the second player P2 can be further suppressed.

OTHER EMBODIMENTS

The illustrated embodiment has been described, but the present invention is not limited to such form.

For example, in the abovementioned embodiment, the image and sound to be perceived by the first player and the second player are determined in accordance with the generation of the third avatar and the fourth avatar. However, the virtual space experience system of the present invention is not limited to such configuration, and only the image to be perceived by the first player and the second player may be determined in accordance with the generation of the third avatar and the fourth avatar.

In the abovementioned embodiment, the trigger event occurs via the elevator VS1 which is a mobile body. As a result of the trigger event, the coordinates of the first avatar A1 are corrected so as to move upward, and a gap is generated. The abovementioned configuration is for simplifying the trigger event and the movement direction in order to facilitate understanding.

However, the trigger event in the virtual space experience system of the present invention is not limited to such a configuration. For example, the trigger event does not necessarily need to occur via a mobile body and may occur when a predetermined amount of time has elapsed after a game or the like starts. In addition to the up-down direction, the direction of the gap may be a depth direction, a left-right direction, or a direction obtained by combining the depth direction, the left-right direction, and/or the up-down direction. The gap may also be a gap in terms of time, for example, a delay of the motion of the avatar relative to the motion of the player.
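A gap in terms of time can be pictured as a fixed-length delay line between the player's motion and the avatar's motion. The following is a minimal sketch under assumptions the description does not make: poses are sampled once per frame, the delay is a whole number of frames, and the class name `DelayedAvatar` and its parameters are hypothetical.

```python
from collections import deque
from typing import Any


class DelayedAvatar:
    """Replays the player's poses a fixed number of frames late (assumed frame-based delay)."""

    def __init__(self, delay_frames: int, initial_pose: Any) -> None:
        if delay_frames < 0:
            raise ValueError("delay_frames must be non-negative")
        # Pre-fill the buffer so the avatar holds the initial pose during the delay.
        self._buffer = deque([initial_pose] * delay_frames)

    def update(self, player_pose: Any) -> Any:
        """Record the player's current pose and return the pose the avatar shows now."""
        self._buffer.append(player_pose)
        return self._buffer.popleft()


avatar = DelayedAvatar(delay_frames=2, initial_pose="rest")
shown = [avatar.update(pose) for pose in ["a", "b", "c", "d"]]
# shown == ["rest", "rest", "a", "b"]: the avatar trails the player by two frames
```

A delay of zero frames reproduces the ordinary case in which the avatar follows the player without any gap.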

In the abovementioned embodiment, the third avatar A3 and the fourth avatar A4 are generated at the same time as the occurrence of the trigger event. However, the virtual space experience system of the present invention is not limited to such a configuration. For example, the third avatar and the fourth avatar may be generated at the stage where the movement of the first player P1 and the second player P2 becomes possible after the trigger event ends.

In the abovementioned embodiment, the first avatar A1 and the second avatar A2 continuously exist even after the trigger event ends. However, the virtual space experience system of the present invention is not limited to such a configuration, and at least one of the first avatar and the second avatar may be erased after the trigger event ends. When such a configuration is employed, a feeling of strangeness given to the player due to the increase of the avatars in number can be suppressed.

REFERENCE SIGNS LIST

1 - Marker, 2 - Camera, 3 - Server, 4 - HMD, 31 - Display image generation unit, 31a - Virtual space generation unit, 31b - Avatar generation unit, 31c - Mobile body generation unit, 32 - Player information recognition unit, 32a - Player posture recognition unit, 32b - Player coordinate recognition unit, 33 - Trigger event recognition unit, 34 - Avatar coordinate determination unit, 35 - Virtual space image determination unit, 36 - Virtual space sound determination unit, 41 - Monitor (virtual space image displayer), 42 - Speaker (virtual space sound generator), A1 - First avatar, A2 - Second avatar, F1 - First floor, F2 - Second floor, P - Player, P1 - First player, P2 - Second player, RS - Real space, RS1 - Whiteboard, S - VR system (virtual space experience system), VS - Virtual space, VS1 - Elevator (mobile body), VS2 - Switch, VS3 - Fence, VS4 - Wall (third avatar).

Claims

- A virtual space experience system, comprising: a virtual space generation unit which generates a virtual space corresponding to a predetermined region in a real space in which both of a first player and a second player exist; an avatar generation unit which generates a first avatar which performs a motion in correspondence with a motion of the first player, and a second avatar which performs a motion in correspondence with a motion of the second player in the virtual space; a player coordinate recognition unit which recognizes coordinates of the first player and coordinates of the second player in the real space; an avatar coordinate determination unit which determines coordinates of the first avatar in the virtual space on basis of the coordinates of the first player, and determines coordinates of the second avatar in the virtual space on basis of the coordinates of the second player; a virtual space image determination unit which determines an image of the virtual space to be perceived by the first player and the second player on basis of the coordinates of the first avatar and the coordinates of the second avatar; a trigger event recognition unit which recognizes occurrence of a predetermined trigger event; and a virtual space image displayer which causes the first player and the second player to perceive the image of the virtual space, wherein: the avatar generation unit generates a third avatar corresponding to the motion of the first player in the virtual space when the trigger event is recognized; the virtual space image determination unit causes the image of the virtual space to be perceived by the second player to include an image in which the first avatar moves so as to generate a gap in a correspondence relationship between the coordinates of the first player and the coordinates of the first avatar when the trigger event is recognized, and causes the image of the virtual space to be perceived by the second player to include an image of the second avatar and the third avatar after the trigger event is recognized; and the avatar coordinate determination unit determines coordinates of the third avatar in the virtual space on basis of the coordinates of the first player.

- The virtual space experience system according to claim 1, wherein: the avatar generation unit generates a fourth avatar corresponding to the motion of the second player in the virtual space when the trigger event is recognized; the virtual space image determination unit causes the image of the virtual space to be perceived by the first player to include an image in which the second avatar relatively moves with respect to the first avatar so as to correspond to the gap generated in the correspondence relationship between the coordinates of the first player and the coordinates of the first avatar when the trigger event is recognized, and causes the image of the virtual space to be perceived by the first player to include an image of the first avatar and the fourth avatar after the trigger event is recognized; and the avatar coordinate determination unit determines coordinates of the fourth avatar in the virtual space on basis of the coordinates of the second player.

- The virtual space experience system according to claim 1 or 2, wherein the third avatar has a shape which is different from a shape of the first avatar.

- The virtual space experience system according to claim 3, wherein the third avatar is a wall which surrounds a range of the virtual space corresponding to a motion range of the first player in the real space.

- A virtual space experience system, comprising: a virtual space generation unit which generates a virtual space corresponding to a predetermined region in a real space in which both of a first player and a second player exist; an avatar generation unit which generates a first avatar which performs a motion in correspondence with a motion of the first player, and a second avatar which performs a motion in correspondence with a motion of the second player in the virtual space; a player coordinate recognition unit which recognizes coordinates of the first player and coordinates of the second player in the real space; an avatar coordinate determination unit which determines coordinates of the first avatar in the virtual space on basis of the coordinates of the first player, and determines coordinates of the second avatar in the virtual space on basis of the coordinates of the second player; a virtual space image determination unit which determines an image of the virtual space to be perceived by the first player and the second player on basis of the coordinates of the first avatar and the coordinates of the second avatar; a trigger event recognition unit which recognizes occurrence of a predetermined trigger event; and a virtual space image displayer which causes the first player and the second player to perceive the image of the virtual space, wherein: the avatar generation unit generates a fourth avatar corresponding to the motion of the second player when the trigger event is recognized; the virtual space image determination unit causes the image of the virtual space to be perceived by the first player to include an image in which the second avatar relatively moves with respect to the first avatar so as to correspond to a gap generated in a correspondence relationship between the coordinates of the first player and the coordinates of the first avatar when the trigger event is recognized, and causes the image of the virtual space to be perceived by the first player to include an image of the first avatar and the fourth avatar after the trigger event is recognized; and the avatar coordinate determination unit determines coordinates of the fourth avatar in the virtual space on basis of the coordinates of the second player.

- The virtual space experience system according to claim 2, wherein the third avatar has a shape which is different from a shape of the first avatar.

- The virtual space experience system according to claim 6, wherein the third avatar is a wall which surrounds a range of the virtual space corresponding to a motion range of the first player in the real space.

Disclaimer: Data collected from the USPTO and may be malformed, incomplete, and/or otherwise inaccurate.