U.S. Pat. No. 11,731,048

METHOD OF DETECTING IDLE GAME CONTROLLER

Assignee: Sony Interactive Entertainment LLC

Issue Date: May 3, 2021

Abstract

A technique detects when a computer simulation controller such as a computer game controller is idle and, thus, that the simulation (game) should be paused immediately without waiting for an “AwayFromKeyboard” timer to time out by detecting whether the user has laid the controller down and gone away or simply is not responding.

Description

DETAILED DESCRIPTION

This disclosure relates generally to computer ecosystems including aspects of consumer electronics (CE) device networks such as but not limited to computer game networks. A system herein may include server and client components which may be connected over a network such that data may be exchanged between the client and server components. The client components may include one or more computing devices including game consoles such as Sony PlayStation® or a game console made by Microsoft or Nintendo or other manufacturer, virtual reality (VR) headsets, augmented reality (AR) headsets, portable televisions (e.g., smart TVs, Internet-enabled TVs), portable computers such as laptops and tablet computers, and other mobile devices including smart phones and TV set top boxes, desktop computers, any computerized Internet-enabled implantable device, and additional examples discussed below. These client devices may operate with a variety of operating environments. For example, some of the client computers may employ, as examples, Linux operating systems, operating systems from Microsoft, or a Unix operating system, or operating systems produced by Apple, Inc., or Google. These operating environments may be used to execute one or more browsing programs, such as a browser made by Microsoft or Google or Mozilla or other browser program that can access websites hosted by the Internet servers discussed below. Also, an operating environment according to present principles may be used to execute one or more computer game programs.

Servers and/or gateways may include one or more processors executing instructions that configure the servers to receive and transmit data over a network such as the Internet. Or a client and server can be connected over a local intranet or a virtual private network. A server or controller may be instantiated by a game console such as a Sony PlayStation®, a personal computer, etc.

Information may be exchanged over a network between the clients and servers. To this end, servers and/or clients can include firewalls, load balancers, temporary storages, proxies, and other network infrastructure for reliability and security. One or more servers may form an apparatus that implements methods of providing a secure community such as an online social website to network members.

A processor may be a single- or multi-chip processor that can execute logic by means of various lines such as address lines, data lines, and control lines and registers and shift registers.

Components included in one embodiment can be used in other embodiments in any appropriate combination. For example, any of the various components described herein and/or depicted in the Figures may be combined, interchanged, or excluded from other embodiments.

“A system having at least one of A, B, and C” (likewise “a system having at least one of A, B, or C” and “a system having at least one of A, B, C”) includes systems that have A alone, B alone, C alone, A and B together, A and C together, B and C together, and/or A, B, and C together, etc.

Now specifically referring to FIG. 1, an example system 10 is shown, which may include one or more of the example devices mentioned above and described further below in accordance with present principles. The first of the example devices included in the system 10 is a consumer electronics (CE) device such as an audio video device (AVD) 12 such as but not limited to an Internet-enabled TV with a TV tuner (equivalently, set top box controlling a TV). The AVD 12 alternatively may also be a computerized Internet enabled (“smart”) telephone, a tablet computer, a notebook computer, a HMD, a wearable computerized device, a computerized Internet-enabled music player, computerized Internet-enabled headphones, a computerized Internet-enabled implantable device such as an implantable skin device, etc. Regardless, it is to be understood that the AVD 12 is configured to undertake present principles (e.g., communicate with other CE devices to undertake present principles, execute the logic described herein, and perform any other functions and/or operations described herein).

Accordingly, to undertake such principles the AVD 12 can be established by some or all of the components shown in FIG. 1. For example, the AVD 12 can include one or more displays 14 that may be implemented by a high definition or ultra-high definition “4K” or higher flat screen and that may be touch-enabled for receiving user input signals via touches on the display. The AVD 12 may include one or more speakers 16 for outputting audio in accordance with present principles, and at least one additional input device 18 such as an audio receiver/microphone for entering audible commands to the AVD 12 to control the AVD 12. The example AVD 12 may also include one or more network interfaces 20 for communication over at least one network 22 such as the Internet, a WAN, a LAN, etc. under control of one or more processors 24. A graphics processor may also be included. Thus, the interface 20 may be, without limitation, a Wi-Fi transceiver, which is an example of a wireless computer network interface, such as but not limited to a mesh network transceiver. It is to be understood that the processor 24 controls the AVD 12 to undertake present principles, including the other elements of the AVD 12 described herein such as controlling the display 14 to present images thereon and receiving input therefrom. Furthermore, note the network interface 20 may be a wired or wireless modem or router, or other appropriate interface such as a wireless telephony transceiver, or Wi-Fi transceiver as mentioned above, etc.

In addition to the foregoing, the AVD 12 may also include one or more input ports 26 such as a high-definition multimedia interface (HDMI) port or a USB port to physically connect to another CE device and/or a headphone port to connect headphones to the AVD 12 for presentation of audio from the AVD 12 to a user through the headphones. For example, the input port 26 may be connected via wire or wirelessly to a cable or satellite source 26a of audio video content. Thus, the source 26a may be a separate or integrated set top box, or a satellite receiver. Or the source 26a may be a game console or disk player containing content. The source 26a when implemented as a game console may include some or all of the components described below in relation to the CE device 44.

The AVD 12 may further include one or more computer memories 28 such as disk-based or solid-state storage that are not transitory signals, in some cases embodied in the chassis of the AVD as standalone devices or as a personal video recording device (PVR) or video disk player either internal or external to the chassis of the AVD for playing back AV programs or as removable memory media. Also, in some embodiments, the AVD 12 can include a position or location receiver such as but not limited to a cellphone receiver, GPS receiver and/or altimeter 30 that is configured to receive geographic position information from a satellite or cellphone base station and provide the information to the processor 24 and/or determine an altitude at which the AVD 12 is disposed in conjunction with the processor 24. The component 30 may also be implemented by an inertial measurement unit (IMU) that typically includes a combination of accelerometers, gyroscopes, and magnetometers to determine the location and orientation of the AVD 12 in three dimensions.

Continuing the description of the AVD 12, in some embodiments the AVD 12 may include one or more cameras 32 that may be a thermal imaging camera, a digital camera such as a webcam, and/or a camera integrated into the AVD 12 and controllable by the processor 24 to gather pictures/images and/or video in accordance with present principles. Also included on the AVD 12 may be a Bluetooth transceiver 34 and other Near Field Communication (NFC) element 36 for communication with other devices using Bluetooth and/or NFC technology, respectively. An example NFC element can be a radio frequency identification (RFID) element.

Further still, the AVD 12 may include one or more auxiliary sensors 38 (e.g., a motion sensor such as an accelerometer, gyroscope, cyclometer, or a magnetic sensor, an infrared (IR) sensor, an optical sensor, a speed and/or cadence sensor, a gesture sensor (e.g., for sensing gesture command)) providing input to the processor 24. The AVD 12 may include an over-the-air TV broadcast port 40 for receiving OTA TV broadcasts providing input to the processor 24. In addition to the foregoing, it is noted that the AVD 12 may also include an infrared (IR) transmitter and/or IR receiver and/or IR transceiver 42 such as an IR data association (IRDA) device. A battery (not shown) may be provided for powering the AVD 12, as may be a kinetic energy harvester that may turn kinetic energy into power to charge the battery and/or power the AVD 12. A graphics processing unit (GPU) 44 and field programmable gate array 46 also may be included.

Still referring to FIG. 1, in addition to the AVD 12, the system 10 may include one or more other CE device types. In one example, a first CE device 48 may be a computer game console that can be used to send computer game audio and video to the AVD 12 via commands sent directly to the AVD 12 and/or through the below-described server while a second CE device 50 may include similar components as the first CE device 48. In the example shown, the second CE device 50 may be configured as a computer game controller manipulated by a player or a head-mounted display (HMD) worn by a player. In the example shown, only two CE devices are shown, it being understood that fewer or greater devices may be used. A device herein may implement some or all of the components shown for the AVD 12. Any of the components shown in the following figures may incorporate some or all of the components shown in the case of the AVD 12.

Now in reference to the afore-mentioned at least one server 52, it includes at least one server processor 54, at least one tangible computer readable storage medium 56 such as disk-based or solid-state storage, and at least one network interface 58 that, under control of the server processor 54, allows for communication with the other devices of FIG. 1 over the network 22, and indeed may facilitate communication between servers and client devices in accordance with present principles. Note that the network interface 58 may be, e.g., a wired or wireless modem or router, Wi-Fi transceiver, or other appropriate interface such as, e.g., a wireless telephony transceiver.

Accordingly, in some embodiments the server 52 may be an Internet server or an entire server “farm” and may include and perform “cloud” functions such that the devices of the system 10 may access a “cloud” environment via the server 52 in example embodiments for, e.g., network gaming applications. Or the server 52 may be implemented by one or more game consoles or other computers in the same room as the other devices shown in FIG. 1 or nearby.

The components shown in the following figures may include some or all components shown in FIG. 1.

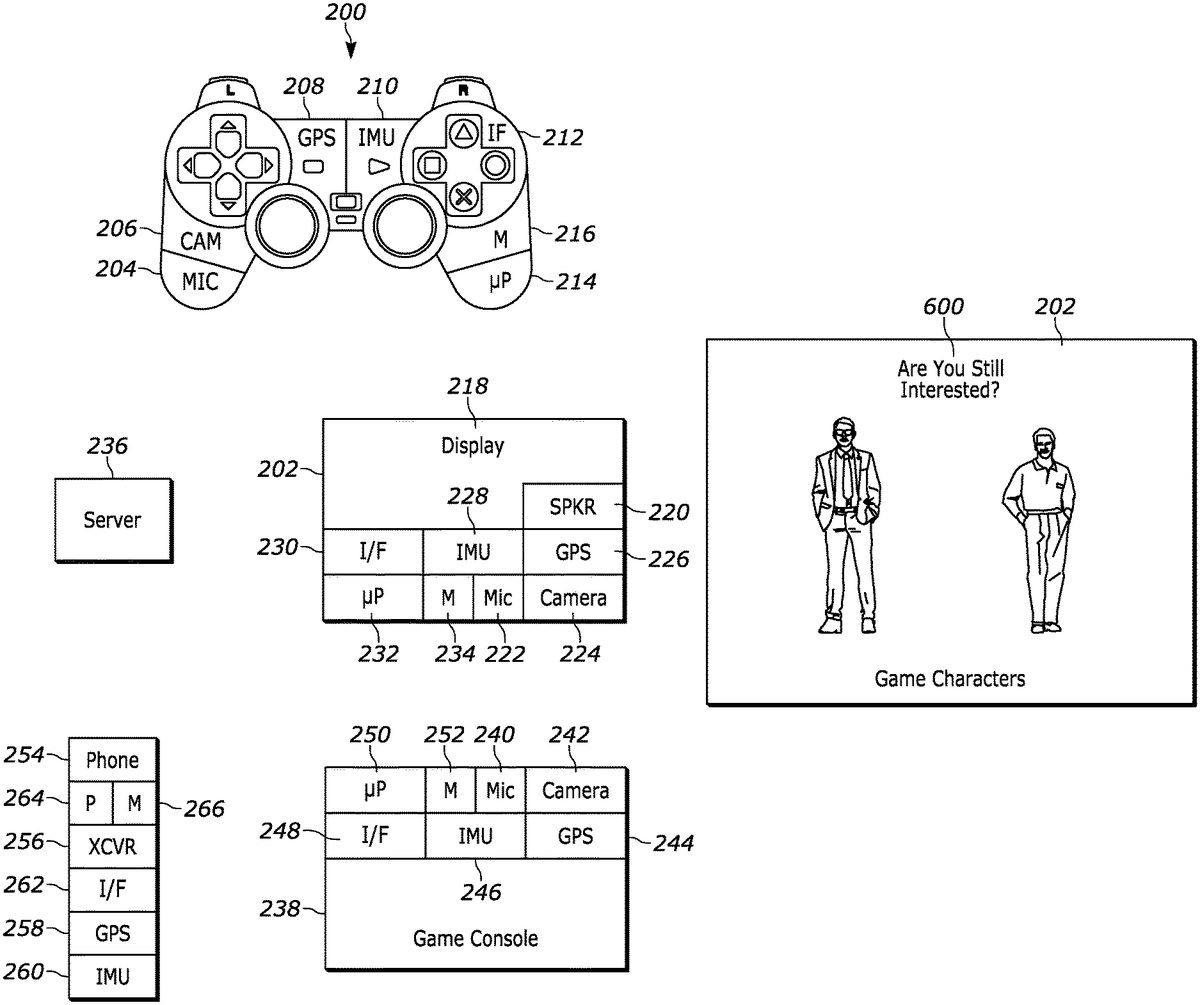

FIG. 2 illustrates a specific example system. A computer simulation controller (CSC) 200 that may be hand-held and include operating keys to control presentation of a computer simulation on a display device 202 is shown. The CSC 200 may be, for example, a PlayStation DualShock® controller.

As shown in FIG. 2, the controller 200 may include, in addition to operating keys, one or more microphones 204, one or more cameras 206, and one or more location sensors 208 such as global positioning satellite (GPS) sensors. The controller 200 may further include one or more inertial measurement units (IMU) 210 such as one or more of an accelerometer, gyroscope, magnetometer, and combinations thereof.

Moreover, the controller 200 may include one or more communication interfaces 212 such as wired or wireless transceivers including infrared (IR) transceivers, Bluetooth® transceivers, and Wi-Fi transceivers, and combinations thereof. One or more processors 214 accessing instructions on one or more computer storages 216 may be provided to control the components of the controller 200.

Similarly, if desired the display 202 may include, in addition to a video display 218 and one or more speakers 220, one or more microphones 222, one or more cameras 224, and one or more location sensors 226 such as GPS sensors. The display 202 may further include one or more IMU 228.

Moreover, the display 202 may include one or more communication interfaces 230 such as wired or wireless transceivers including IR transceivers, Bluetooth® transceivers, and Wi-Fi transceivers, and combinations thereof. One or more processors 232 accessing instructions on one or more computer storages 234 may be provided to control the components of the display 202.

The simulation presented on the display 202 under control of the controller 200 may be sent from one or more sources of computer simulations such as one or more servers 236 communicating with various components herein via a wide area computer network and one or more computer simulation consoles 238 communicating with various components herein via wired and/or wireless paths. In the example shown, the console 238 may include one or more microphones 240, one or more cameras 242, and one or more location sensors 244 such as GPS sensors. The console 238 may further include one or more IMU 246.

Moreover, the console 238 may include one or more communication interfaces 248 such as wired or wireless transceivers including IR transceivers, Bluetooth® transceivers, and Wi-Fi transceivers, and combinations thereof. One or more processors 250 accessing instructions on one or more computer storages 252 may be provided to control the components of the console 238.

In addition, one or more ancillary devices such as a wireless smart phone 254 may be provided. In addition to a keypad and wireless telephony transceiver 256, the phone 254 may include microphone(s), camera(s), one or more location sensors 258 such as GPS sensors, one or more IMU 260, one or more communication interfaces 262 such as wired or wireless transceivers including IR transceivers, Bluetooth® transceivers, and Wi-Fi transceivers, and combinations thereof, and one or more processors 264 accessing instructions on one or more computer storages 266 to control the components of the phone 254.

It is to be understood that logic herein may be implemented on any one or more of the storages shown in FIG. 1 or 2 and executed by any one or more processors described herein, and that motion/location signals and signals from sensors other than motion/location sensors may be implemented by any of the appropriate sensors shown and/or described herein.

As discussed herein, motion of the controller 200 can be used to infer whether a user has stopped paying attention to a simulation presented on the display device 202 or has simply stopped inputting signals but may still be watching the simulation, with the simulation being altered accordingly. For example, a motion state of the controller 200 can be identified using signals from motion sensors described herein and the simulation slowed down (played at a slower speed, but faster than a complete pause) and/or paused at least in part responsive to the motion state being stationary, if desired based on a confidence in the inference of a stationary state using signals from sensors other than the motion sensors. Also, whether the controller 200 is in the stationary state can depend on whether it is moving relative to a platform supporting the controller 200, to account for motion of a controller that may be located on a moving platform such as a ship, vehicle, or indeed a swaying high-rise building.

FIG. 3 illustrates that altering presentation of the simulation responsive to the motion state of the controller 200 may alternatively or further include changing the pose of a simulation character 300 associated with the user from an action pose shown on the left in FIG. 3 to an inactive pose 302 (such as a prone or supine pose) when it is determined that the user has lost interest in the simulation according to logic below. Other examples of altering game play based on principles herein include putting a racing game into auto-drive mode when the user is determined to have lost interest.

Accordingly, and turning now to FIG. 4, block 400 indicates that sensor signals are received from the controller 200 by any one or more of the processors described herein. By way of example, signals from the GPS 208 and/or IMU 210 of the controller 200 may be received, indicating motion (or no motion) of the controller 200.

Other motion indicia may be received at block 402. By way of example, signals from the GPS and/or IMU of any one or more of the display 202, game console 238, and phone 254 may be received, indicating motion (or no motion) of the component from which the signals originate. Note that triangulation of signals from various components also may be used to determine motion.

Proceeding to block 404, components in motion signals from the controller 200 that match components in background motion signals from any one or more of the display 202, game console 238, and phone 254 are removed, such that any remaining motion-indicating signals from the controller 200 represent motion of the controller relative to the platform supporting the controller. If these remaining motion-indicating signals from the controller 200 represent motion of the controller at decision diamond 406, the logic of FIG. 4 essentially continues to monitor for signals described herein at block 408.
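The background-motion removal at block 404 can be illustrated with a minimal sketch. It assumes, purely for illustration, that the controller and a platform reference (e.g., the console) report time-aligned acceleration samples along one axis; the function names and the threshold value are hypothetical and do not come from the disclosure.

```python
from typing import List, Sequence

# Hypothetical threshold: residual motion below this magnitude counts as "no motion".
STATIONARY_THRESHOLD = 0.05


def relative_motion(controller: Sequence[float], platform: Sequence[float]) -> List[float]:
    """Remove platform (background) motion from controller motion, sample by sample."""
    if len(controller) != len(platform):
        raise ValueError("signals must contain the same number of samples")
    return [c - p for c, p in zip(controller, platform)]


def is_stationary(controller: Sequence[float], platform: Sequence[float],
                  threshold: float = STATIONARY_THRESHOLD) -> bool:
    """True when the controller does not move relative to its supporting platform."""
    return all(abs(r) < threshold for r in relative_motion(controller, platform))
```

With this sketch, a controller resting on a table aboard a swaying ship would report the same motion as the console and would therefore be classified as stationary.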

On the other hand, if the motion-indicating signals from the controller 200 represent no motion of the controller 200 (i.e., the controller 200 is stationary as it would be if laid down on a surface by the user), the logic proceeds to block 410. At block 410, a confidence in the determination that the motion state of the controller 200 is stationary is determined as described further herein. Moving to block 412, based on the confidence determined at block 410, presentation of the computer simulation is altered.

For example, if a low confidence of no motion is determined, the simulation may proceed at current play back speed but with a pose of the character 300 (FIG. 3) altered to indicate that the user may have lost attention. If medium confidence of no motion is determined, the simulation may proceed but at a slower speed than normal play back speed, whereas if high confidence of no motion is determined, the simulation may be paused. When the user is binge watching a streaming video service, the service may keep playing while the user is holding the controller, as indicated by motion signals from the controller, and may be paused shortly after the user walks away, when the controller is detected to be stationary (e.g., on the floor or dropped).
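The three confidence tiers above map naturally onto a small lookup, sketched below. The enum, the action labels, and the 0.5 slow-down factor are illustrative assumptions; the disclosure states only that the slowed speed is below normal play back speed and above zero.

```python
from enum import Enum
from typing import Tuple


class Confidence(Enum):
    LOW = 1
    MEDIUM = 2
    HIGH = 3


# Hypothetical slow-down factor; any value between 0 and 1 would fit the description.
SLOW_SPEED = 0.5


def alter_presentation(confidence: Confidence) -> Tuple[str, float]:
    """Return an (action, play back speed) pair for a confidence of no motion."""
    if confidence is Confidence.LOW:
        # Keep normal speed but show the character in an inactive pose.
        return ("inactive_pose", 1.0)
    if confidence is Confidence.MEDIUM:
        return ("slow", SLOW_SPEED)
    return ("pause", 0.0)
```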

FIG. 5 illustrates further details. Commencing at block 500, motion of the controller 200 is identified as described above. If desired, wireless signal strength of wireless signals from the controller 200 is identified at block 502. Controller power also may be identified at block 504 by identifying whether the controller is in an on or off state, with an off state indicated by the absence of signals from the controller in response to, e.g., queries from other components.

Also, if desired the amplitude of acoustic signals such as voice signals sensed by any of the microphones herein may be identified at block 506. Voice recognition may be implemented on the acoustic signals at block 508 to identify terms in the voice signals. Furthermore, images in signals from any of the cameras herein may be recognized at block 510. Based on any one or more of the above, confidence may be determined at block 512.

For example, if wireless signal strength of the controller 200 or of a wireless headset worn by the user such as described in the case of the CE device 50 in FIG. 1 remains above a threshold at block 502, confidence that the user has lost attention in the simulation by virtue of laying the controller down may be low, on the basis that the user has not walked away from the display 202/console 238 (which may be used to detect the signal strength) although the user may have set the controller down. Similarly, if wireless signal strength of the controller 200 (or headset) drops below a threshold at block 502, confidence that the user has lost attention in the simulation by virtue of laying the controller down may be high, on the basis that the user has walked away from the display 202/console 238 and then laid the controller down.

If the controller remains energized as identified at block 504, confidence may be high that the user has not lost attention in the simulation by virtue of maintaining the controller energized, whereas if the controller is identified at block 504 as being deenergized, confidence may be high that the user has lost attention in the simulation by virtue of turning off the controller.

Turning to the determination at block 512 using the microphone signals at block 506, if amplitude of audible signals such as voice received from the microphone of the controller or of another microphone herein remains above a threshold, confidence that the user has lost attention in the simulation by virtue of laying the controller down may be low, on the basis that the user has remained near the monitoring microphone (e.g., the microphone on the display 202/console 238) although the user may have set the controller down. Similarly, if acoustic signal strength from the microphone used for monitoring at block 506 drops below a threshold, confidence that the user has lost attention in the simulation by virtue of laying the controller down may be high, on the basis that the user has walked away from the display 202/console 238.
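The wireless signal strength test (block 502) and the acoustic amplitude test (block 506) share one pattern: a level that stays above a threshold suggests the user is still nearby. A minimal sketch of that comparison follows; the threshold values, the units, and the choice to treat a level exactly at the threshold as "above" are assumptions made for illustration.

```python
# Hypothetical thresholds, chosen only for illustration.
RSSI_THRESHOLD_DBM = -70.0       # received signal strength of controller or headset
MIC_AMPLITUDE_THRESHOLD = 0.1    # normalized microphone amplitude


def lost_attention_confidence(level: float, threshold: float) -> str:
    """Confidence that the user has lost attention, given a stationary controller.

    A level at or above the threshold implies the user is still near the
    display/console (low confidence); a level below it implies the user has
    walked away (high confidence).
    """
    return "low" if level >= threshold else "high"
```

For instance, `lost_attention_confidence(-85.0, RSSI_THRESHOLD_DBM)` yields `"high"` because the signal is weaker than the assumed threshold.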

Turning to the determination at block 512 using the vocal term recognition at block 508, confidence that the user has lost attention in the simulation by virtue of speaking certain terms (e.g., “time for lunch”) may be high, on the basis that the terms indicate a loss of interest. Similarly, confidence that the user has lost attention in the simulation by virtue of speaking certain terms (e.g., “time for a kill shot”) may be low, on the basis that the terms indicate interest in the simulation.
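As a rough sketch of the term-based determination, recognized speech can be checked against lists of phrases. The two lists below hold only the examples given above; the function name and the substring-matching approach are assumptions, and the transcript is taken to come from a separate speech recognizer that is not shown.

```python
from typing import Optional

# Example phrases taken from the description; real lists would be larger.
DISENGAGED_TERMS = ("time for lunch",)
ENGAGED_TERMS = ("time for a kill shot",)


def confidence_from_speech(transcript: str) -> Optional[str]:
    """Map recognized speech to a confidence that the user has lost attention.

    Returns "high", "low", or None when no listed phrase is present.
    """
    text = transcript.lower()
    if any(term in text for term in DISENGAGED_TERMS):
        return "high"
    if any(term in text for term in ENGAGED_TERMS):
        return "low"
    return None
```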

Turning to the determination at block 512 using the image recognition at block 510, confidence may be high that the user has lost attention in the simulation by virtue of recognizing, using face recognition, that the user is staring into space with gaze diverted from the display device 202 or has walked away from the controller. On the other hand, confidence may be high that the user has not lost attention in the simulation by virtue of recognizing, using face recognition, that the user is looking at the display device 202.

When multiple blocks in FIG. 5 are used to determine confidence, each block may be accorded a respective weight, such that one determination of high confidence in lack of interest may outweigh another determination of low confidence of lack of interest. For example, the controller being deenergized as determined at block 504, indicating high confidence in lack of interest, may outweigh loud signals from a microphone as determined at block 506, otherwise indicating low confidence of lack of interest. Likewise, images from a camera at block 510 indicating that the user is staring intently at the display device, indicating high confidence of interest, may outweigh terms identified at block 508 that otherwise would indicate low confidence of interest.
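One simple way to realize such weighting is a weighted average, sketched below. This is an assumed combination rule, not one stated in the disclosure: each block contributes a score between 0 (user interested) and 1 (user has lost interest), and the block names and weight values are hypothetical.

```python
from typing import Dict


def combined_confidence(scores: Dict[str, float], weights: Dict[str, float]) -> float:
    """Weighted average of per-block lost-interest scores, each in [0, 1]."""
    total_weight = sum(weights[name] for name in scores)
    if total_weight <= 0:
        raise ValueError("the weights of the reporting blocks must sum to a positive value")
    return sum(scores[name] * weights[name] for name in scores) / total_weight


# Hypothetical weights: controller power (block 504) counts three times as much
# as microphone amplitude (block 506).
EXAMPLE_WEIGHTS = {"power": 3.0, "microphone": 1.0, "camera": 2.0}
```

With these weights, a deenergized controller (score 1.0) combined with a loud microphone signal (score 0.0) gives 0.75, so the power determination outweighs the microphone determination.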

The weights may be determined empirically and/or by machine learning (ML) models using, e.g., neural networks such as convolutional neural networks (CNN) and the like. An ML model may be trained on a training set of motion signals with accompanying sensor signals and ground truth of interest/no interest for each tuple in the training set (or at least for each of a percentage of the tuples). Training may be supervised, unsupervised, or semi-supervised.

Block 514 of FIG. 5 indicates that the confidence determined at block 512 may be used to establish one or more timer periods. A first time period may be the time period after which the simulation is slowed (but not paused), and a second time period may be the period after slowing the simulation that the simulation is paused, absent a change in motion signals from the controller 200.

For example, if it is determined that the controller is in a state of no motion, but confidence is low that the user has lost interest, a relatively long period or periods may be established. On the other hand, if it is determined that the controller is in a state of no motion and confidence is high that the user has lost interest, a relatively shorter period or periods may be established.
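The relationship between confidence and period length can be sketched as a linear interpolation. The linear form and the endpoint values in seconds are assumptions; the description requires only that higher confidence yield shorter periods.

```python
from typing import Tuple


def timer_periods(confidence: float,
                  shortest: Tuple[float, float] = (5.0, 10.0),
                  longest: Tuple[float, float] = (60.0, 120.0)) -> Tuple[float, float]:
    """Return (slow_after, pause_after) in seconds for a confidence in [0, 1].

    Low confidence that the user has lost interest gives long periods;
    high confidence gives short ones. All default values are hypothetical.
    """
    c = min(max(confidence, 0.0), 1.0)  # clamp to [0, 1]
    slow_after = longest[0] - c * (longest[0] - shortest[0])
    pause_after = longest[1] - c * (longest[1] - shortest[1])
    return slow_after, pause_after
```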

Proceeding to block 516, absent a change of motion signals and/or a change in confidence that the user has lost interest, at the elapse of the first (shorter) period the simulation is slowed. If desired, as indicated at 600 in FIG. 6, a visual or audible prompt may be presented on the display device 202 to the user to alert the user that the system believes the user may be losing interest in the simulation. Block 518 indicates that absent changed motion/confidence signals/determinations, at the elapse of a second period after the simulation was slowed, the simulation may be paused until such time as the controller 200 is manipulated again by the user or signals from sensors described herein indicate that the user has regained interest, with a high confidence.
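The slow-then-pause sequence of blocks 516 and 518 behaves like a small state machine. The sketch below is one hypothetical way to express it; the class name, the state labels, and the simplification that any controller motion immediately restores normal play back are assumptions.

```python
class IdleTimer:
    """Tracks how long the controller has been stationary and reports a state."""

    def __init__(self, slow_after: float, pause_after: float) -> None:
        self.slow_after = slow_after      # first period: stationary time before slowing
        self.pause_after = pause_after    # second period: slowed time before pausing
        self.stationary_time = 0.0

    def update(self, dt: float, moving: bool) -> str:
        """Advance by dt seconds and return "normal", "slowed", or "paused"."""
        if moving:
            # The user manipulated the controller again: restart the timers.
            self.stationary_time = 0.0
            return "normal"
        self.stationary_time += dt
        if self.stationary_time >= self.slow_after + self.pause_after:
            return "paused"
        if self.stationary_time >= self.slow_after:
            return "slowed"
        return "normal"
```

A caller might create `IdleTimer(10.0, 20.0)` and invoke `update` once per frame or per sensor reading; the prompt indicated at 600 could be shown when the state first becomes `"slowed"`.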

Present principles may be used to detect when the game system should be turned off/put in sleep mode. When the user is binge watching a streaming video service, an option, enabled by the system or by the user, may be provided to keep the video playing while the user holds the controller 200 or the phone 254 and to pause it shortly after the user walks away, as indicated by the controller being detected on the floor or dropped.

It will be appreciated that whilst present principles have been described with reference to some example embodiments, these are not intended to be limiting, and that various alternative arrangements may be used to implement the subject matter claimed herein.

Claims

- A device comprising: at least one computer memory that is not a transitory signal and that comprises instructions that, when executed by at least one processor, configure the device to: identify a motion state of a controller of a computer simulation based at least in part on at least one motion sensor in the controller; and at least in part responsive to the motion state being stationary and a confidence based at least in part on at least one signal from a first sensor, initially slow down presentation of the computer simulation and after an elapse of a period, pause presentation of the computer simulation, at least in part responsive to the motion state being stationary and responsive to the confidence satisfying a threshold, slow down or pause presentation of the computer simulation, the first sensor being other than the motion sensor in the controller and the first sensor being other than a timer.

- The device of claim 1, wherein the instructions are executable to: at least in part responsive to the motion state being stationary, slow down presentation of the computer simulation.

- The device of claim 1, wherein the instructions are executable to: at least in part responsive to the motion state being stationary, pause presentation of the computer simulation.

- The device of claim 1, wherein the instructions are executable to: establish at least one period based at least in part on the confidence, the period being associated with slowing down or pausing the computer simulation.

- The device of claim 1, comprising the at least one processor executing the instructions.

- A device comprising: at least one computer memory that is not a transitory signal and that comprises instructions that, when executed by at least one processor, configure the device to: identify a motion state of a controller of a computer simulation; and at least in part responsive to the motion state being stationary and responsive to a confidence satisfying a threshold, slow down or pause presentation of the computer simulation, the motion state being stationary being based at least in part on a motion sensor in the controller, the confidence being determined at least in part based on signals from a first sensor, the first sensor being other than the motion sensor in the controller and the first sensor being other than a timer, wherein the first sensor comprises at least one camera.

- A device comprising: at least one computer memory that is not a transitory signal and that comprises instructions that, when executed by at least one processor, configure the device to: identify a motion state of a controller of a computer simulation; and at least in part responsive to the motion state being stationary and responsive to a confidence satisfying a threshold, slow down or pause presentation of the computer simulation, the motion state being stationary being based at least in part on a motion sensor in the controller, the confidence being determined at least in part based on signals from a first sensor, the first sensor being other than the motion sensor in the controller and the first sensor being other than a timer, wherein the first sensor comprises at least one microphone.

- A device comprising: at least one computer memory that is not a transitory signal and that comprises instructions that, when executed by at least one processor, configure the device to: identify a motion state of a controller of a computer simulation; and at least in part responsive to the motion state being stationary and responsive to a confidence satisfying a threshold, slow down or pause presentation of the computer simulation, the motion state being stationary being based at least in part on a motion sensor in the controller, the confidence being determined at least in part based on signals from a first sensor, the first sensor being other than the motion sensor in the controller and the first sensor being other than a timer, wherein the first sensor comprises at least one wireless receiver.

- The device of claim 8, wherein the signals from the wireless receiver comprise signal strength indications.

- A device comprising: at least one computer memory that is not a transitory signal and that comprises instructions that, when executed by at least one processor, configure the device to: identify a motion state of a controller of a computer simulation; and at least in part responsive to the motion state being stationary and responsive to a confidence satisfying a threshold, slow down or pause presentation of the computer simulation, the motion state being stationary being based at least in part on a motion sensor in the controller, the confidence being determined at least in part based on signals from a first sensor, the first sensor being other than the motion sensor in the controller and the first sensor being other than a timer, wherein the instructions are executable to: identify the motion state being stationary at least in part by accounting for motion of a platform on which the controller is disposed.

- The device of claim 10, comprising accounting for motion of a platform on which the controller is disposed at least in part by removing components in motion signals from the controller that match components in motion signals representing motion of the platform.

- An apparatus comprising: at least one controller of a computer simulation, the controller being configured for controlling presentation of the computer simulation on at least one display, the computer simulation being received from at least one source of computer simulations; and at least one processor programmed with instructions that when executed by the processor configure the processor to: responsive to a first signal from at least one of: a camera, a microphone, a wireless transceiver, present the computer simulation at normal play back speed and alter a pose of at least one character in the computer simulation to indicate that a player of the computer simulation may have lost attention; responsive to a second signal from at least one of: the camera, the microphone, the wireless transceiver, play back the computer simulation at a slower speed than normal play back speed and higher than zero; and responsive to a third signal from at least one of: the camera, the microphone, the wireless transceiver, pause the computer simulation.

- The apparatus of claim 12, wherein the at least one processor is in the source and the source comprises at least one computer simulation console.

- The apparatus of claim 12, wherein the at least one processor is in the source and the source comprises at least one server communicating with the display over a wide area computer network.
