U.S. Pat. No. 11,724,204

IN-GAME LOCATION BASED GAME PLAY COMPANION APPLICATION

Assignee: Sony Interactive Entertainment LLC

Issue Date: June 1, 2021

Illustrative Figure

Abstract

A method for gaming. The method including receiving location based information of game play of a user playing a gaming application as displayed on a first computing device, wherein the location based information is made with reference to a location of a character in the game play of the user in a gaming world associated with the gaming application. The method including aggregating location based information of a plurality of game plays of a plurality of users playing the gaming application. The method including generating contextually relevant information for the location of the character based on the location based information of the plurality of game plays. The method including generating a companion interface including the contextually relevant information. The method including sending the companion interface to a second computing device associated with the user for display concurrent with the game play of the user.

Description

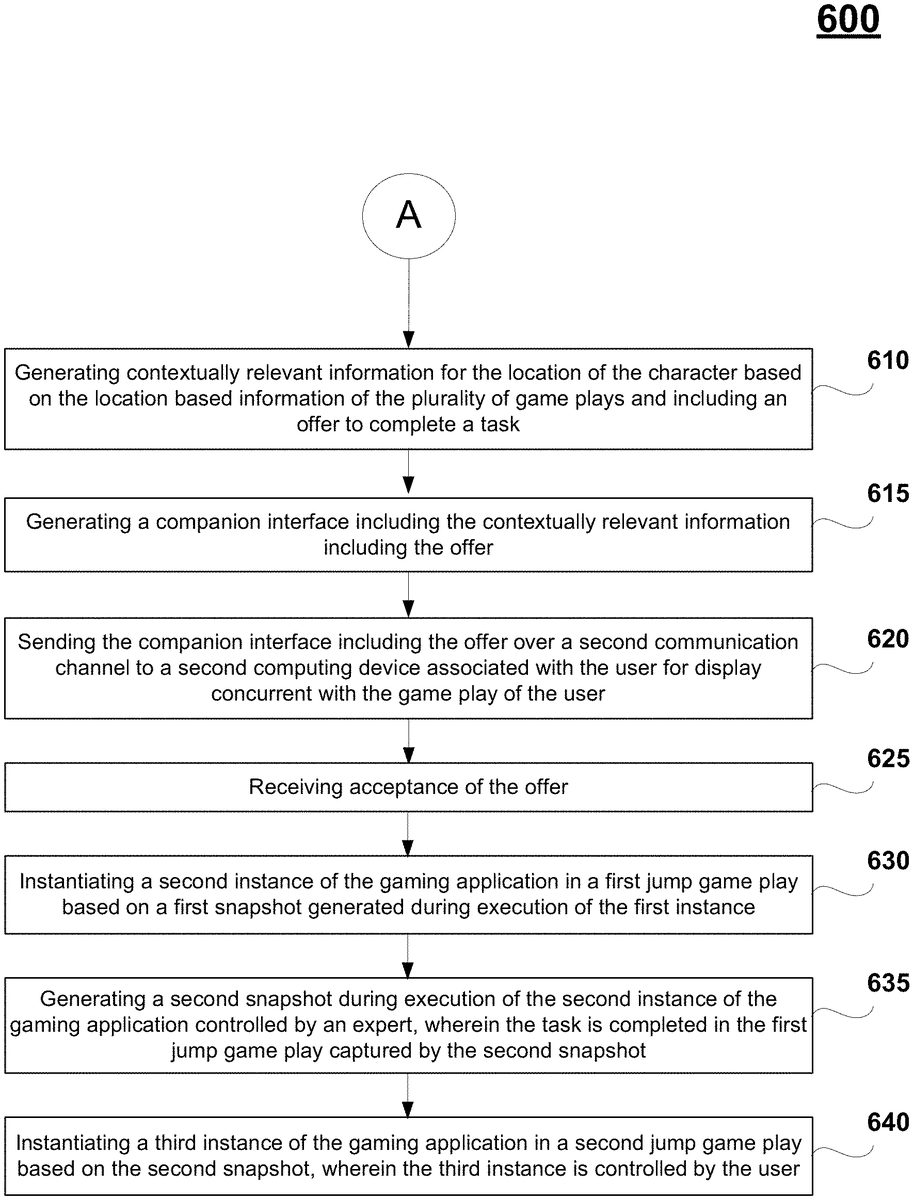

DETAILED DESCRIPTION

Although the following detailed description contains many specific details for the purposes of illustration, anyone of ordinary skill in the art will appreciate that many variations and alterations to the following details are within the scope of the present disclosure. Accordingly, the aspects of the present disclosure described below are set forth without any loss of generality to, and without imposing limitations upon, the claims that follow this description.

Generally speaking, the various embodiments of the present disclosure describe systems and methods implementing a location based companion interface that is configured to support game play of a user. Embodiments of the present disclosure provide for additional uses of a gaming application through a location based companion interface. The companion interface includes contextually relevant information (e.g., messaging, assistance information, etc.) that is generated based on a location of a character in the game play of the user. The location based information includes defining parameters generated for snapshots collected periodically during the game play of the user. In particular, a snapshot contains metadata and/or information about the game play of the user, and is configurable to enable another instance of a corresponding gaming application at a jump point in the gaming application corresponding to the snapshot. The contextually relevant information also includes information collected during the game plays of other users playing the same gaming application. In that manner, the user is able to receive contextually relevant information based on the current progress of the user (e.g., location in gaming world, etc.). For example, the contextually relevant information can provide assistance in the game play of the user, wherein the information may be based on game play location, past game play, and anticipated game play. Further, the companion interface can be used to create messages from the user. For instance, a message can be a request for help, wherein the message is delivered to a targeted user or broadcast to a defined group (e.g., friends of the user), and displayable within corresponding companion interfaces. The companion interface may include messages for the user that are created by other users playing the gaming application. In other examples, a message can be created and delivered to one or more targeted users to enhance game play. 
For instance, a message may be triggered for display in a companion interface of a targeted user upon occurrence of an event, such as reaching a geographic location in the gaming world, or beating a boss, etc. The message may also be personalized to allow for person-to-person gaming or communication between the users. That is, the gaming application may be used in a multi-player environment via corresponding companion interfaces, wherein real time communication is enabled between two or more users playing the gaming application and each companion interface provides information related to the game play of the other user. The contextually relevant information may include an offer of assistance from an expert to guide the user through the game play of the user, or for the expert to accomplish a task within the game play of the user.
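As an illustration only, the event-triggered message described above can be sketched as a small queue that holds a message until the targeted user's game play reports a matching event. The class names, the event key format (e.g., "boss_defeated:level_3"), and the in-memory storage are assumptions made for this sketch and are not part of the disclosure.

```python
from dataclasses import dataclass, field


@dataclass
class TriggeredMessage:
    """A message held until the target user's game play emits a matching event."""
    sender: str
    target: str
    trigger: str  # hypothetical event key, e.g. "boss_defeated:level_3"
    text: str


@dataclass
class CompanionInbox:
    """Hypothetical store of pending and already displayed messages."""
    pending: list = field(default_factory=list)
    displayed: list = field(default_factory=list)

    def queue(self, message: TriggeredMessage) -> None:
        """Hold a message until its trigger event occurs."""
        self.pending.append(message)

    def on_event(self, user: str, event: str) -> list:
        """Release every pending message whose target and trigger both match."""
        released = [m for m in self.pending
                    if m.target == user and m.trigger == event]
        self.pending = [m for m in self.pending if m not in released]
        self.displayed.extend(released)
        return released
```

In this sketch, a message queued for one user stays pending when a different user, or a different event, is reported, which mirrors the targeted delivery described above.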

With the above general understanding of the various embodiments, example details of the embodiments will now be described with reference to the various drawings.

Throughout the specification, the reference to “gaming application” is meant to represent any type of interactive application that is directed through execution of input commands. For illustration purposes only, an interactive application includes applications for gaming, word processing, video processing, video game processing, etc. Further, the terms video game and gaming application are interchangeable.

FIG. 1A illustrates a system 10 used for implementing a location based companion interface configured to support game play of a user playing a gaming application, wherein the gaming application can be executing on a local computing device or over a cloud game network, in accordance with one embodiment of the present disclosure. The companion interface may be used for creating content (e.g., assistance information, messages, etc.) for interaction by other users playing the gaming application, wherein the interaction may be through corresponding companion interfaces. The content is created based on location based information captured during game play of the user playing a gaming application, such as snapshot information. The companion interface may also be configured to provide assistance to the game play of the user by providing contextually relevant information to the user based on the past game play of the user, aggregated game plays of a plurality of users playing the same gaming application, location information of the game play of the user, and current progress of the game play of the user (including asset and skill accumulation, task completion, level completion, etc.).

As shown in FIG. 1A, the gaming application may be executing locally at a client device 100 of the user 5, or may be executing at a back-end game executing engine 211 operating at a back-end game server 205 of a cloud game network or game cloud system. The game executing engine 211 may be operating within one of many game processors 201 of game server 205. In either case, the cloud game network is configured to provide a location based companion interface supporting the game plays of one or more users playing a gaming application. Further, the gaming application may be executing in a single-player mode, or multi-player mode, wherein embodiments of the present invention provide for multi-player enhancements (e.g., assistance, communication, etc.) to both modes of operation.

In some embodiments, the cloud game network may include a plurality of virtual machines (VMs) running on a hypervisor of a host machine, with one or more virtual machines configured to execute a game processor module 201 utilizing the hardware resources available to the hypervisor of the host in support of single player or multi-player video games. In other embodiments, the cloud game network is configured to support a plurality of local computing devices supporting a plurality of users, wherein each local computing device may be executing an instance of a video game, such as in a single-player or multi-player video game. For example, in a multi-player mode, while the video game is executing locally, the cloud game network concurrently receives information (e.g., game state data) from each local computing device and distributes that information accordingly throughout one or more of the local computing devices so that each user is able to interact with other users (e.g., through corresponding characters in the video game) in the gaming environment of the multi-player video game. In that manner, the cloud game network coordinates and combines the game plays for each of the users within the multi-player gaming environment.

As shown, system 10 includes a game server 205 executing the game processor module 201 that provides access to a plurality of interactive gaming applications. Game server 205 may be any type of server computing device available in the cloud, and may be configured as one or more virtual machines executing on one or more hosts, as previously described. For example, game server 205 may manage a virtual machine supporting the game processor 201. Game server 205 is also configured to provide additional services and/or content to user 5. For example, game server 205 is configurable to provide a companion interface displayable to user 5 for purposes of generating and/or receiving contextually relevant information, as will be further described below.

Client device 100 is configured for requesting access to a gaming application over a network 150, such as the internet, and for rendering instances of video games or gaming applications executed by the game server 205 and delivered to the display device 12 associated with a user 5. For example, user 5 may be interacting through client device 100 with an instance of a gaming application executing on game processor 201. Client device 100 may also include a game executing engine 111 configured for local execution of the gaming application, as previously described. The client device 100 may receive input from various types of input devices, such as game controllers 6, tablet computers 11, keyboards, gestures captured by video cameras, mice, touch pads, etc. Client device 100 can be any type of computing device having at least a memory and a processor module that is capable of connecting to the game server 205 over network 150. Some examples of client device 100 include a personal computer (PC), a game console, a home theater device, a general purpose computer, a mobile computing device, a tablet, a phone, or any other type of computing device that can interact with the game server 205 to execute an instance of a video game.

Client device 100 is configured for receiving rendered images, and for displaying the rendered images on display 12. For example, through cloud based services the rendered images may be delivered by an instance of a gaming application executing on game executing engine 211 of game server 205 in association with user 5. In another example, through local game processing, the rendered images may be delivered by the local game executing engine 111. In either case, client device 100 is configured to interact with the executing engine 211 or 111 in association with the game play of user 5, such as through input commands that are used to drive game play.

Further, client device 100 is configured to interact with the game server 205 to capture and store snapshots of the game play of user 5 when playing a gaming application, wherein each snapshot includes information (e.g., game state, etc.) related to the game play. For example, the snapshot may include location based information corresponding to a location of a character within a gaming world of the game play of the user 5. Further, a snapshot enables a corresponding user to jump into a saved game play at a jump point in the gaming application corresponding to the capture of the snapshot. As such, user 5 can jump into his or her own saved game play at a jump point corresponding to a selected snapshot, another user may jump into the game play of the user 5, or user 5 may jump into the saved game play of another user at a jump point corresponding to a selected snapshot. Further, client device 100 is configured to interact with game server 205 to display a location based companion interface from the companion interface generator 213, wherein the companion interface is configured to receive and/or generate contextually relevant content, such as assistance information, messaging, interactive quests and challenges, etc. In particular, information contained in the snapshots captured during the game play of user 5, such as location based information relating to the game play, as well as information captured during game plays of other users, is used to generate the contextually relevant content.

More particularly, game processor 201 of game server 205 is configured to generate and/or receive snapshots of the game play of user 5 when playing the gaming application. For instance, snapshots may be generated by the local game execution engine 111 on client device 100, outputted and delivered over network 150 to game processor 201. In addition, snapshots may be generated by game executing engine 211 within the game processor 201, such as by an instance of the gaming application executing on engine 211. In addition, other game processors of game server 205 associated with other virtual machines are configured to execute instances of the gaming application associated with game plays of other users and to capture snapshots during those game plays, wherein this additional information may be used to create the contextually relevant information.

Snapshot generator 212 is configured to capture a plurality of snapshots generated from the game play of user 5. Each snapshot provides information that enables execution of an instance of the video game beginning from a point in the video game associated with a corresponding snapshot. The snapshots are automatically generated during game play of the gaming application by user 5. Portions of each of the snapshots are stored in relevant databases independently configured or configured under data store 140, in embodiments. In another embodiment, snapshots may be generated manually through instruction by user 5. In that manner, any user through selection of a corresponding snapshot may jump into the game play of user 5 at a point in the gaming application associated with the corresponding snapshot. In addition, snapshots of game plays of other users playing a plurality of gaming applications may also be captured. As such, game processor 201 is configured to access information in database 140 in order to enable the jumping into a saved game play of any user based on a corresponding snapshot. That is, the requesting user is able to begin playing the video game at a jump point corresponding to a selected snapshot using the game characters of the original user's game play that generated and saved the snapshot.

A full discussion on the creation and use of snapshots is provided within U.S. application Ser. No. 15/411,421, entitled “Method And System For Saving A Snapshot of Game Play And Used To Begin Later Execution Of The Game Play By Any User As Executed On A Game Cloud System,” which was previously incorporated by reference in its entirety. A brief description of the creation and implementation of snapshots follows below.

In particular, each snapshot includes metadata and/or information to enable execution of an instance of the gaming application beginning at a point in the gaming application corresponding to the snapshot. For example, in the game play of user 5, a snapshot may be generated at a particular point in the progression of the gaming application, such as in the middle of a level. The relevant snapshot information is stored in one or more databases of database 140. Pointers can be used to relate information in each database corresponding to a particular snapshot. In that manner, another user wishing to experience the game play of user 5, or the same user 5 wishing to re-experience his or her previous game play, may select a snapshot corresponding to a point in the gaming application of interest.

The metadata and information in each snapshot may provide and/or be analyzed to provide additional information related to the game play of the user. For example, snapshots may help determine where the user (e.g., character of the user) has been within the gaming application, where the user is in the gaming application, what the user has done, what assets and skills the user has accumulated, and where the user will be going within the gaming application. This additional information may be used to generate contextually relevant content for display to the user in a companion application interface (e.g., assistance information), or may be used to generate content configured for interaction with other users (e.g., quests, challenges, messages, etc.). In addition, the contextually relevant content may be generated based on other information (e.g., location based information) collected from other game plays of other users playing the gaming application.
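By way of a hedged example, the analysis of snapshots described above (where the character has been, where it currently is, and what has been accumulated) could be sketched as follows. The dictionary keys `captured_at`, `location`, and `assets` are hypothetical field names chosen for this sketch; the disclosure does not prescribe a snapshot schema.

```python
def summarize_progress(snapshots):
    """Derive visited locations, current location, and assets from snapshots.

    Each snapshot is assumed (for this sketch only) to be a dict with a
    sortable "captured_at" value, a "location" label, and an optional
    "assets" collection.
    """
    ordered = sorted(snapshots, key=lambda snap: snap["captured_at"])

    visited = []  # locations in first-visit order, without repeats
    assets = set()
    for snap in ordered:
        if snap["location"] not in visited:
            visited.append(snap["location"])
        assets.update(snap.get("assets", ()))

    return {
        "visited": visited,
        "current": ordered[-1]["location"] if ordered else None,
        "assets": sorted(assets),
    }
```

A summary of this kind is one possible input for selecting assistance information; predicting where the game play will go next would require additional information that this sketch does not model.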

The snapshot includes a snapshot image of the scene that is rendered at that point. The snapshot image is stored in snapshot image database 146. The snapshot image presented in the form of a thumbnail in a timeline provides a view into the game play of a user at a corresponding point in the progression by the user through a video game.

More particularly, the snapshot also includes game state data that defines the state of the game at that point. For example, game state data may include game characters, game objects, game object attributes, game attributes, game object state, graphic overlays, etc. In that manner, game state data allows for the generation of the gaming environment that existed at the corresponding point in the video game. Game state data may also include the state of every device used for rendering the game play, such as states of CPU, GPU, memory, register values, program counter value, programmable DMA state, buffered data for the DMA, audio chip state, CD-ROM state, etc. Game state data may also identify which parts of the executable code need to be loaded to execute the video game from that point. Not all the game state data need be captured and stored, just the data that is sufficient for the executable code to start the game at the point corresponding to the snapshot. The game state data is stored in game state database 145.

The snapshot also includes user saved data. Generally, user saved data includes information that personalizes the video game for the corresponding user. This includes information associated with the user's character, so that the video game is rendered with a character that may be unique to that user (e.g., shape, look, clothing, weaponry, etc.). In that manner, the user saved data enables generation of a character for the game play of a corresponding user, wherein the character has a state that corresponds to the point in the video game associated with the snapshot. For example, user saved data may include the game difficulty selected by the user 5 when playing the game, game level, character attributes, character location, number of lives left, the total possible number of lives available, armor, trophy, time counter values, and other asset information, etc. User saved data may also include user profile data that identifies user 5, for example. User saved data is stored in database 141.

In addition, the snapshot also includes random seed data that is generated by artificial intelligence (AI) module 215. The random seed data may not be part of the original game code, but may be added in an overlay to make the gaming environment seem more realistic and/or engaging to the user. That is, random seed data provides additional features for the gaming environment that exists at the corresponding point in the game play of the user. For example, AI characters may be randomly generated and provided in the overlay. The AI characters are not associated with any users playing the game, but are placed into the gaming environment to enhance the user's experience. As an illustration, these AI characters may randomly walk the streets in a city scene. In addition, other objects may be generated and presented in an overlay. For instance, clouds in the background and birds flying through space may be generated and presented in an overlay. The random seed data is stored in random seed database 143.

In that manner, another user wishing to experience the game play of user 5 may select a snapshot corresponding to a point in the video game of interest. For example, selection of a snapshot image presented in a timeline or node in a node graph by a user enables the jump executing engine 216 of game processor 201 to access the corresponding snapshot, instantiate another instance of the video game based on the snapshot, and execute the video game beginning at a point in the video game corresponding to the snapshot. In that manner, the snapshot enables the requesting user to jump into the game play of user 5 at the point corresponding to the snapshot. In addition, user 5 may access game plays of other users or even access his or her own prior game play in the same or another gaming application using corresponding snapshots. In particular, selection of the snapshot by user 5 (e.g., in a timeline, or through a message, etc.) enables executing engine 216 to collect the snapshot (e.g., metadata and/or information) from the various databases (e.g., from database 140) in order to begin executing the corresponding gaming application at a point where the corresponding snapshot was captured in a corresponding gaming application.
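For illustration, the collection of a snapshot from separate databases can be sketched with a record of pointers (keys) that relate the snapshot image, game state data, user saved data, and random seed data. The class names, the in-memory dictionaries, and the function `collect_snapshot` are assumptions for this sketch; they stand in for the databases and the jump executing engine described above and do not reflect an actual implementation.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SnapshotRecord:
    """Pointers relating one snapshot's parts across separate stores."""
    snapshot_id: str
    image_key: str
    game_state_key: str
    user_saved_key: str
    random_seed_key: str


class DataStore:
    """Hypothetical in-memory stand-in for the separate snapshot databases."""

    def __init__(self):
        self.images = {}
        self.game_states = {}
        self.user_saved = {}
        self.random_seeds = {}
        self.records = {}

    def save(self, snapshot_id, image, game_state, user_saved, random_seed):
        """Store each part in its own store and record pointers to all four."""
        self.images[snapshot_id] = image
        self.game_states[snapshot_id] = game_state
        self.user_saved[snapshot_id] = user_saved
        self.random_seeds[snapshot_id] = random_seed
        self.records[snapshot_id] = SnapshotRecord(
            snapshot_id, snapshot_id, snapshot_id, snapshot_id, snapshot_id)


def collect_snapshot(store, snapshot_id):
    """Follow the pointers and gather the data needed to start at the jump point."""
    record = store.records[snapshot_id]
    return {
        "game_state": store.game_states[record.game_state_key],
        "user_saved": store.user_saved[record.user_saved_key],
        "random_seed": store.random_seeds[record.random_seed_key],
    }
```

The snapshot image is left out of the collected bundle in this sketch because, as described above, it serves as a thumbnail for selection rather than as input for execution.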

Game processor 201 includes a location based companion application generator 213 configured to generate a companion interface supporting the game play of user 5 when playing a gaming application. The generator 213 can be used to create contextually relevant information (e.g., assistance information, messages, etc.) to be delivered to or received from user 5 that is based on the game play of the user 5, wherein the contextually relevant information is created using location based information (e.g., snapshots). The contextually relevant information may also be based on information collected from game plays of other users playing the gaming application. In particular, generator 213 is configurable to determine progress of the game play of user 5 for a particular gaming application (e.g., based on snapshots) for a particular context of the game play (e.g., current location of character, game state information, etc.), and determine contextually relevant information that may be delivered to a companion interface displayable on device 11 that is separate from a device displaying the game play of user 5. For example, the contextually relevant information may provide information providing assistance in progressing through the gaming application. The contextually relevant information may consider information provided by a prediction engine 214 that is configured to predict where the game play of user 5 will go, to include what areas a character will visit, what tasks are required to advance the game play, what assets are needed in order to advance the game play (e.g., assets needed to accomplish a required task), etc. The companion interface may also be used to create contextually relevant content by user 5 for interaction by other users. For example, location based information shown in the companion interface (e.g., radar mapping, waypoints, etc.) may facilitate the creation of interactive content (e.g., quests, challenges, messages, etc.). 
That is, the user 5 may use location based information (e.g., snapshots) to create the interactive contextually relevant content. In another example, the companion interface can be used to create messages from the user, wherein the messages may be targeted to friends of the user asking for help or facilitating interactive communication (e.g., for multi-player gaming, taunting, etc.). The companion interface may be used to display messages from other users. The companion interface may also be used to display offers for assistance.

For example, in embodiments the location based information may be based on current and/or past game plays of multiple users playing the same gaming application in a crowd sourcing environment, such that the information may be determined through observation and/or analysis of the multiple game plays. In that manner, crowdsourced content may be discovered during the game plays, wherein the content may be helpful for other players playing the same gaming application, or provide an enhanced user experience to these other players. In another embodiment, the information provided in the companion interface may be related to game plays of users simultaneously playing the same gaming application (e.g., information is related to game plays of friends of the user who are simultaneously playing the gaming application, wherein the information provides real-time interaction between the friends), wherein the information advances the user's game play or provides an enhanced user experience. That is, the respective companion interfaces provide real-time interaction between the users. In still another embodiment, a user is playing the gaming application in isolation (e.g., playing alone), and receiving information through the companion interface that is helpful in advancing the game play of the first user, or for providing an enhanced user experience. In the solo case, the information (e.g., help, coaching, etc.) could be delivered in the form of recorded video, image, and/or text content. This information may not be generated in real-time, and may be generated by someone associated with the game developer (e.g., employed by the game publisher, console maker, etc.) whose role is to generate information for the benefit of users as conveyed through respective companion interfaces. In addition, third parties (profit based, non-profit based, etc.) may take it upon themselves to generate the information for the benefit of the gaming public. 
Further, the information may be generated by friends of the first user solely for the benefit of that player or for friends in a group. Also, the information may be generated by other related and/or unrelated users playing the gaming application (e.g., crowdsourced). Further, the components used for implementation of the companion interface may be included within a game processor that is local to the user (e.g., located on device 11 or client device 100), or may be included within a back end server (e.g., game server 205).
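As one possible sketch of the crowd sourcing described above, observations from many game plays can be grouped by the location at which they occurred, so that the most frequently observed events at a character's current location can be surfaced in a companion interface. The (location, event) tuple format and both function names are assumptions for this example; the disclosure does not specify how aggregation is performed.

```python
from collections import Counter, defaultdict


def aggregate_by_location(observations):
    """Group events from many game plays by the location where they occurred.

    `observations` is assumed to be an iterable of (location, event) pairs
    drawn from the game plays of a plurality of users.
    """
    by_location = defaultdict(Counter)
    for location, event in observations:
        by_location[location][event] += 1
    return by_location


def contextual_hints(by_location, location, limit=2):
    """Return the most frequently observed events at the given location."""
    counts = by_location.get(location, Counter())
    return [event for event, _ in counts.most_common(limit)]
```

Looking up a location with `get` rather than indexing keeps an unknown location from adding an empty entry to the aggregate.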

As shown, the companion interface is delivered to a device 11 (e.g., tablet) for display and interaction, wherein device 11 may be separate from client device 100 that is configured to execute and/or support execution of the gaming application for user 5 interaction. For instance, a first communication channel may be established between the game server 205 and client device 100, and a separate, second communication channel may be established between game server 205 and device 11.

FIG. 1B illustrates a system 106B providing gaming control to one or more users playing one or more gaming applications that are executing locally to the corresponding user, and wherein back-end server support (e.g., accessible through game server 205) may implement a location based companion interface supporting game play of a corresponding user, in accordance with one embodiment of the present disclosure. In one embodiment, system 106B works in conjunction with system 10 of FIG. 1A and system 200 of FIG. 2 to implement the location based companion interface supporting game play of a corresponding user. Referring now to the drawings, like referenced numerals designate identical or corresponding parts.

As shown in FIG. 1B, a plurality of users 115 (e.g., user 5A, user 5B . . . user 5N) is playing a plurality of gaming applications, wherein each of the gaming applications is executed locally on a corresponding client device 100 (e.g., game console) of a corresponding user. In addition, each of the plurality of users 115 has access to a device 11, previously introduced, configured to receive and/or generate a companion interface for display on device 11 that provides contextually relevant information for a corresponding user playing a corresponding gaming application, as previously described. Each of the client devices 100 may be configured similarly in that local execution of a corresponding gaming application is performed. For example, user 5A may be playing a first gaming application on a corresponding client device 100, wherein an instance of the first gaming application is executed by a corresponding game title execution engine 111. Game logic 126A (e.g., executable code) implementing the first gaming application is stored on the corresponding client device 100, and is used to execute the first gaming application. For purposes of illustration, game logic may be delivered to the corresponding client device 100 through a portable medium (e.g., flash drive, compact disk, etc.) or through a network (e.g., downloaded through the internet 150 from a gaming provider). In addition, user 5B is playing a second gaming application on a corresponding client device 100, wherein an instance of the second gaming application is executed by a corresponding game title execution engine 111. The second gaming application may be identical to the first gaming application executing for user 5A or a different gaming application. Game logic 126B (e.g., executable code) implementing the second gaming application is stored on the corresponding client device 100 as previously described, and is used to execute the second gaming application. 
Further, user 5N is playing an Nth gaming application on a corresponding client device 100, wherein an instance of the Nth gaming application is executed by a corresponding game title execution engine 111. The Nth gaming application may be identical to the first or second gaming application, or may be a completely different gaming application. Game logic 126N (e.g., executable code) implementing the Nth gaming application is stored on the corresponding client device 100 as previously described, and is used to execute the Nth gaming application.

As previously described, client device 100 may receive input from various types of input devices, such as game controllers, tablet computers, keyboards, gestures captured by video cameras, mice, touch pads, etc. Client device 100 can be any type of computing device having at least a memory and a processor module that is capable of connecting to the game server 205 over network 150. Also, client device 100 of a corresponding user is configured for generating rendered images executed by the game title execution engine 111 executing locally or remotely, and for displaying the rendered images on a display. For example, the rendered images may be associated with an instance of the first gaming application executing on client device 100 of user 5A. For example, a corresponding client device 100 is configured to interact with an instance of a corresponding gaming application as executed locally or remotely to implement a game play of a corresponding user, such as through input commands that are used to drive game play.

In one embodiment, client device100is operating in a single-player mode for a corresponding user that is playing a gaming application. Back-end server support via the game server205may provide location based companion interface services supporting game play of a corresponding user, as will be described below, in accordance with one embodiment of the present disclosure.

In another embodiment, multiple client devices 100 are operating in a multi-player mode for corresponding users that are each playing a specific gaming application. In that case, back-end server support via the game server 205 may provide multi-player functionality, such as through the multi-player processing engine 119. In particular, multi-player processing engine 119 is configured for controlling a multi-player gaming session for a particular gaming application. For example, multi-player processing engine 119 communicates with the multi-player session controller 116, which is configured to establish and maintain communication sessions with each of the users and/or players participating in the multi-player gaming session. In that manner, users in the session can communicate with each other as controlled by the multi-player session controller 116.

Further, multi-player processing engine119communicates with multi-player logic118in order to enable interaction between users within corresponding gaming environments of each user. In particular, state sharing module117is configured to manage states for each of the users in the multi-player gaming session. For example, state data may include game state data that defines the state of the game play (of a gaming application) for a corresponding user at a particular point. For example, game state data may include game characters, game objects, game object attributes, game attributes, game object state, graphic overlays, etc. In that manner, game state data allows for the generation of the gaming environment that exists at the corresponding point in the gaming application. Game state data may also include the state of every device used for rendering the game play, such as states of CPU, GPU, memory, register values, program counter value, programmable DMA state, buffered data for the DMA, audio chip state, CD-ROM state, etc. Game state data may also identify which parts of the executable code need to be loaded to execute the video game from that point. Game state data may be stored in database140ofFIG.1CandFIG.2, and is accessible by state sharing module117.

Further, state data may include user saved data that includes information that personalizes the video game for the corresponding player. This includes information associated with the character played by the user, so that the video game is rendered with a character that may be unique to that user (e.g., location, shape, look, clothing, weaponry, etc.). In that manner, the user saved data enables generation of a character for the game play of a corresponding user, wherein the character has a state that corresponds to the point in the gaming application experienced currently by a corresponding user. For example, user saved data may include the game difficulty selected by a corresponding user115A when playing the game, game level, character attributes, character location, number of lives left, the total possible number of lives available, armor, trophy, time counter values, etc. User saved data may also include user profile data that identifies a corresponding user115A, for example. User saved data may be stored in database140.

In that manner, the multi-player processing engine119using the state sharing data117and multi-player logic118is able to overlay/insert objects and characters into each of the gaming environments of the users participating in the multi-player gaming session. For example, a character of a first user is overlaid/inserted into the gaming environment of a second user. This allows for interaction between users in the multi-player gaming session via each of their respective gaming environments (e.g., as displayed on a screen).
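The overlay step described above can be pictured with a minimal sketch. The class names, field names, and the `share_states` function below are hypothetical illustrations, not structures defined in the disclosure; the sketch only shows one plausible way a state sharing step could place each participant's character into every other participant's gaming environment.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class CharacterState:
    # Hypothetical subset of user saved data for one character.
    user_id: str
    location: tuple[float, float]
    look: str


@dataclass
class GamingEnvironment:
    owner_id: str
    # Characters of other users overlaid into this owner's environment.
    overlaid: dict[str, CharacterState] = field(default_factory=dict)


def share_states(states: list[CharacterState]) -> dict[str, GamingEnvironment]:
    """Overlay every other participant's character into each user's environment."""
    envs = {s.user_id: GamingEnvironment(owner_id=s.user_id) for s in states}
    for env in envs.values():
        for s in states:
            if s.user_id != env.owner_id:
                env.overlaid[s.user_id] = s
    return envs


envs = share_states([
    CharacterState("5A", (10.0, 4.0), "knight"),
    CharacterState("5B", (12.5, 4.0), "archer"),
])
```

Here the environment of user 5B ends up containing the character of user 5A, and vice versa, which is the interaction the paragraph describes.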

In addition, back-end server support via the game server 205 may provide location based companion application services provided through a companion interface generated by companion application generator 213. As previously introduced, generator 213 is configured to create contextually relevant information (e.g., assistance information, messages, etc.) to be delivered to or received from user 5. The information is generated based on the game play of user 5 for a particular application (e.g., based on information provided in snapshots). In that manner, generator 213 is able to determine the context of the game play of user 5 and provide contextually relevant information that is deliverable to a companion interface displayable on device 11 (e.g., separate from the device displaying game play of user 5).

FIG.1Cillustrates a system106C providing gaming control to a plurality of users115(e.g., users5L,5M . . .5Z) playing a gaming application as executed over a cloud game network, in accordance with one embodiment of the present disclosure. In some embodiments, the cloud game network may be a game cloud system210that includes a plurality of virtual machines (VMs) running on a hypervisor of a host machine, with one or more virtual machines configured to execute a game processor module utilizing the hardware resources available to the hypervisor of the host. In one embodiment, system106C works in conjunction with system10ofFIG.1Aand/or system200ofFIG.2to implement the location based companion interface supporting game play of a corresponding user. Referring now to the drawings, like referenced numerals designate identical or corresponding parts.

As shown, the game cloud system210includes a game server205that provides access to a plurality of interactive video games or gaming applications. Game server205may be any type of server computing device available in the cloud, and may be configured as one or more virtual machines executing on one or more hosts. For example, game server205may manage a virtual machine supporting a game processor that instantiates an instance of a gaming application for a user. As such, a plurality of game processors of game server205associated with a plurality of virtual machines is configured to execute multiple instances of the gaming application associated with game plays of the plurality of users115. In that manner, back-end server support provides streaming of media (e.g., video, audio, etc.) of game plays of a plurality of gaming applications to a plurality of corresponding users.

A plurality of users 115 accesses the game cloud system 210 via network 150, wherein users (e.g., users 5L, 5M . . . 5Z) access network 150 via corresponding client devices 100′, wherein client device 100′ may be configured similarly as client device 100 of FIGS. 1A-1B (e.g., including game executing engine 111, etc.), or may be configured as a thin client that interfaces with a back-end server providing computational functionality (e.g., including game executing engine 211). In addition, each of the plurality of users 115 has access to a device 11, previously introduced, configured to receive and/or generate a companion interface for display on device 11 that provides contextually relevant information for a corresponding user playing a corresponding gaming application, as previously described. In particular, a client device 100′ of a corresponding user 5L is configured for requesting access to gaming applications over a network 150, such as the internet, and for rendering instances of a gaming application (e.g., video game) executed by the game server 205 and delivered to a display device associated with the corresponding user 5L. For example, user 5L may be interacting through client device 100′ with an instance of a gaming application executing on a game processor of game server 205. More particularly, an instance of the gaming application is executed by the game title execution engine 211. Game logic (e.g., executable code) implementing the gaming application is stored and accessible through data store 140, previously described, and is used to execute the gaming application. Game title processing engine 211 is able to support a plurality of gaming applications using a plurality of game logics 177, as shown.

As previously described, client device 100′ may receive input from various types of input devices, such as game controllers, tablet computers, keyboards, gestures captured by video cameras, mice, touch pads, etc. Client device 100′ can be any type of computing device having at least a memory and a processor module that is capable of connecting to the game server 205 over network 150. Also, client device 100′ of a corresponding user is configured for generating rendered images executed by the game title execution engine 211 executing locally or remotely, and for displaying the rendered images on a display. For example, the rendered images may be associated with an instance of the first gaming application executing on client device 100′ of user 5L. Further, a corresponding client device 100′ is configured to interact with an instance of a corresponding gaming application as executed locally or remotely to implement a game play of a corresponding user, such as through input commands that are used to drive game play.

In another embodiment, multi-player processing engine119, previously described, provides for controlling a multi-player gaming session for a gaming application. In particular, when the multi-player processing engine119is managing the multi-player gaming session, the multi-player session controller116is configured to establish and maintain communication sessions with each of the users and/or players in the multi-player session. In that manner, users in the session can communicate with each other as controlled by the multi-player session controller116.

Further, multi-player processing engine119communicates with multi-player logic118in order to enable interaction between users within corresponding gaming environments of each user. In particular, state sharing module117is configured to manage states for each of the users in the multi-player gaming session. For example, state data may include game state data that defines the state of the game play (of a gaming application) for a corresponding user115A at a particular point, as previously described. Further, state data may include user saved data that includes information that personalizes the video game for the corresponding player, as previously described. For example, state data includes information associated with the user's character, so that the video game is rendered with a character that may be unique to that user (e.g., shape, look, clothing, weaponry, etc.). In that manner, the multi-player processing engine119using the state sharing data117and multi-player logic118is able to overlay/insert objects and characters into each of the gaming environments of the users participating in the multi-player gaming session. This allows for interaction between users in the multi-player gaming session via each of their respective gaming environments (e.g., as displayed on a screen).

In addition, back-end server support via the game server 205 may provide location based companion application services provided through a companion interface generated by companion application generator 213. As previously introduced, generator 213 is configured to create contextually relevant information (e.g., assistance information, messages, etc.) to be delivered to or received from a corresponding user (e.g., user 5L). The information is generated based on the game play of the user for a particular application (e.g., based on information provided in snapshots). In that manner, generator 213 is able to determine the context of the game play of the corresponding user and provide contextually relevant information that is deliverable to a companion interface displayable on device 11 (e.g., separate from the device displaying game play of user 5L).

FIG.2illustrates a system diagram200for enabling access and playing of gaming applications stored in a game cloud system (GCS)210, in accordance with an embodiment of the disclosure. Generally speaking, game cloud system GCS210may be a cloud computing system operating over a network220to support a plurality of users. Additionally, GCS210is configured to save snapshots generated during game plays of a gaming application of multiple users, wherein a snapshot can be used to initiate an instance of the gaming application for a requesting user beginning at a point in the gaming application corresponding to the snapshot. For example, snapshot generator212is configured for generating and/or capturing snapshots of game plays of one or more users playing the gaming application. The snapshot generator212may be executing external or internal to game server205. In addition, GCS210through the use of snapshots enables a user to navigate through a gaming application, and preview past and future scenes of a gaming application. Further, the snapshots enable a requesting user to jump to a selected point in the video game through a corresponding snapshot to experience the game play of another user. In particular, system200includes GCS210, one or more social media providers240, and a user device230, all of which are connected via a network220(e.g., internet). One or more user devices may be connected to network220to access services provided by GCS210and social media providers240.

In one embodiment, game cloud system 210 includes a game server 205, a video recorder 271, a tag processor 273, an account manager 274 that includes a user profile manager, a game selection engine 275, a game session manager 285, user access logic 280, a network interface 290, and a social media manager 295. GCS 210 may further include a plurality of gaming storage systems, such as a game state store, random seed store, user saved data store, and snapshot store, which may be stored generally in datastore 140. Other gaming storage systems may include a game code store 261, a recorded game store 262, a tag data store 263, a video game data store 264, and a game network user store 265. In one embodiment, GCS 210 is a system that can provide gaming applications, services, gaming related digital content, and interconnectivity among systems, applications, users, and social networks. GCS 210 may communicate with user device 230 and social media providers 240 through social media manager 295 via network interface 290. Social media manager 295 may be configured to relate one or more friends. In one embodiment, each social media provider 240 includes at least one social graph 245 that shows user social network connections.

User U0 is able to access services provided by GCS 210 via the game session manager 285, wherein user U0 may be representative of user 5 of FIG. 1. For example, account manager 274 enables authentication and access by user U0 to GCS 210. Account manager 274 stores information about member users. For instance, a user profile for each member user may be managed by account manager 274. In that manner, member information can be used by the account manager 274 for authentication purposes. For example, account manager 274 may be used to update and manage user information related to a member user. Additionally, game titles owned by a member user may be managed by account manager 274. In that manner, gaming applications stored in data store 264 are made available to any member user who owns those gaming applications.

In one embodiment, a user, e.g., user U0, can access the services provided by GCS210and social media providers240by way of user device230through connections over network220. User device230can include any type of device having a processor and memory, wired or wireless, portable or not portable. In one embodiment, user device230can be in the form of a smartphone, a tablet computer, or hybrids that provide touch screen capability in a portable form factor. One exemplary device can include a portable phone device that runs an operating system and is provided with access to various applications (apps) that may be obtained over network220, and executed on the local portable device (e.g., smartphone, tablet, laptop, desktop, etc.).

User device230includes a display232that acts as an interface for user U0to send input commands236and display data and/or information235received from GCS210and social media providers240. Display232can be configured as a touch-screen, or a display typically provided by a flat-panel display, a cathode ray tube (CRT), or other device capable of rendering a display. Alternatively, the user device230can have its display232separate from the device, similar to a desktop computer or a laptop computer. Additional devices231(e.g., device11ofFIG.1A) may be available to user U0for purposes of implementing a location based companion interface.

In one embodiment, user device 230 is configured to communicate with GCS 210 to enable user U0 to play a gaming application. In some embodiments, the GCS 210 may include a plurality of virtual machines (VMs) running on a hypervisor of a host machine, with one or more virtual machines configured to execute a game processor module utilizing the hardware resources available to the hypervisor of the host. For example, user U0 may select (e.g., by game title, etc.) a gaming application that is available in the video game data store 264 via the game selection engine 275. The gaming application may be played within a single player gaming environment or in a multi-player gaming environment. In that manner, the selected gaming application is enabled and loaded for execution by game server 205 on the GCS 210. In one embodiment, game play is primarily executed in the GCS 210, such that user device 230 will receive a stream of game video frames 235 from GCS 210, and user input commands 236 for driving the game play are transmitted back to the GCS 210. The received video frames 235 from the streaming game play are shown in display 232 of user device 230. In other embodiments, the GCS 210 is configured to support a plurality of local computing devices supporting a plurality of users, wherein each local computing device may be executing an instance of a gaming application, such as in a single-player gaming application or multi-player gaming application. For example, in a multi-player gaming environment, while the gaming application is executing locally, the cloud game network concurrently receives information (e.g., game state data) from each local computing device and distributes that information accordingly throughout one or more of the local computing devices so that each user is able to interact with other users (e.g., through corresponding characters in the video game) in the gaming environment of the multi-player gaming application.
In that manner, the cloud game network coordinates and combines the game plays for each of the users within the multi-player gaming environment.

In one embodiment, after user U0chooses an available game title to play, a game session for the chosen game title may be initiated by the user U0through game session manager285. Game session manager285first accesses game state store in data store140to retrieve the saved game state of the last session played by the user U0(for the selected game), if any, so that the user U0can restart game play from a previous game play stop point. Once the resume or start point is identified, the game session manager285may inform game execution engine in game processor201to execute the game code of the chosen game title from game code store261. After a game session is initiated, game session manager285may pass the game video frames235(i.e., streaming video data), via network interface290to a user device, e.g., user device230.
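As a rough illustration of the resume-or-start decision described above, the following sketch uses a hypothetical in-memory dictionary as a stand-in for the game state store; the key layout, the `start_point` function, and the returned fields are assumptions made for this example only.

```python
# Hypothetical stand-in for the game state store in data store 140,
# keyed by (user id, game title).
game_state_store = {
    ("U0", "title-1"): {"level": 3, "checkpoint": "bridge"},
}


def start_point(user_id: str, title: str) -> dict:
    """Return the saved state of the last session if one exists, else a fresh start."""
    saved = game_state_store.get((user_id, title))
    if saved is not None:
        return {"mode": "resume", "state": saved}
    return {"mode": "new", "state": {}}
```

Calling `start_point("U0", "title-1")` yields a resume point, while a title with no saved session yields a new game.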

During game play, game session manager 285 may communicate with game processor 201, recording engine 271, and tag processor 273 to generate or save a recording (e.g., video) of the game play or game play session. In one embodiment, the video recording of the game play can include tag content entered or provided during game play, and other game related metadata. Tag content may also be saved via snapshots. The video recording of game play, along with any game metrics corresponding to that game play, may be saved in recorded game store 262. Any tag content may be saved in tag data store 263.

During game play, game session manager285may communicate with game processor201of game server205to deliver and obtain user input commands236that are used to influence the outcome of a corresponding game play of a gaming application. Input commands236entered by user U0may be transmitted from user device230to game session manager285of GCS210. Input commands236, including input commands used to drive game play, may include user interactive input, such as including tag content (e.g., texts, images, video recording clips, etc.). Game input commands as well as any user play metrics (how long the user plays the game, etc.) may be stored in game network user store. Select information related to game play for a gaming application may be used to enable multiple features that may be available to the user.

Because game plays are executed on GCS 210 by multiple users, information generated and stored from those game plays enables any requesting user to experience the game play of other users, particularly when game plays are executed over GCS 210. In particular, snapshot generator 212 of GCS 210 is configured to save snapshots generated by the game play of users playing gaming applications through GCS 210. In the case of user U0, the user device provides an interface allowing user U0 to engage with the gaming application during the game play. Snapshots of the game play by user U0 are generated and saved on GCS 210. Snapshot generator 212 may be executing external to game server 205 as shown in FIG. 2, or may be executing internal to game server 205 as shown in FIG. 1A.

In addition, the information collected from those game plays may be used to generate contextually relevant information provided to user U0 in a corresponding companion application. For example, as previously introduced, companion application generator 213 is configured for implementing a location based companion interface that is configured to support game play of the user U0, wherein the companion interface includes contextually relevant information (e.g., messaging, assistance information, offers of assistance, etc.) that is generated based on a location of a character in the game play of user U0. Companion application generator 213 may be executing external to game server 205 as shown in FIG. 2, or may be executing internal to game server 205 as shown in FIG. 1A. In these implementations, the contextually relevant information may be delivered over a network 220 to the user device 231 for display of the companion application interface, including the contextually relevant information. In another embodiment, the companion application generator 213 may be local to the user (e.g., implemented within user device 231) and configured for both generating and displaying the contextually relevant information. In this implementation, the user device 231 may be directly communicating with user device 230 over a local network (or through an external network 220) to implement the companion application interface, wherein the user device 230 may deliver location based information to the user device 231, and wherein device 231 is configured for generating and displaying the companion application interface including the contextually relevant information.

Further, user device 230 is configured to provide an interface that enables jumping to a selected point in the gaming application using a snapshot generated in the game play of user U0 or another user. For example, jump game executing engine 216 is configured for accessing a corresponding snapshot, instantiating an instance of the gaming application based on the snapshot, and executing the gaming application beginning at a point in the gaming application corresponding to the snapshot. In that manner, the snapshot enables the requesting user to jump into the game play of the corresponding user at the point corresponding to the snapshot. For instance, user U0 is able to experience the game play of any other user, or go back and review and/or replay his or her own game play. That is, a requesting user, via a snapshot of a corresponding game play, plays the gaming application using the characters used in and corresponding to that game play. Jump game executing engine 216 may be executing external to game server 205 as shown in FIG. 2, or may be executing internal to game server 205 as shown in FIG. 1A.
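The access, instantiate, and execute sequence attributed to the jump game executing engine can be sketched as follows. The `Snapshot`, `GameInstance`, and `jump` names are hypothetical, and the snapshot store is modeled as a plain dictionary; this is a simplified illustration under those assumptions, not the engine's actual design.

```python
import copy
from dataclasses import dataclass


@dataclass(frozen=True)
class Snapshot:
    snapshot_id: str
    owner_id: str      # user whose game play produced the snapshot
    game_state: dict   # state of the game play at the capture point


@dataclass
class GameInstance:
    player_id: str
    state: dict


def jump(snapshot_store: dict, snapshot_id: str, requesting_user: str) -> GameInstance:
    """Access a snapshot and start a new instance from its captured state."""
    snap = snapshot_store[snapshot_id]
    # Deep copy so the requesting user's play never alters the stored snapshot.
    return GameInstance(player_id=requesting_user,
                        state=copy.deepcopy(snap.game_state))


store = {"s1": Snapshot("s1", "5B", {"character_location": [4, 7], "level": 2})}
instance = jump(store, "s1", "U0")
```

Copying the state is a design choice in this sketch: it lets many requesting users jump into the same snapshot independently.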

FIGS.3-8are described within the context of a user playing a gaming application. In general, the gaming application may be any interactive game that responds to user input. In particular,FIGS.3-8describe a location based companion interface that is configured to support game play of a user, wherein the companion interface includes contextually relevant information (e.g., messaging, assistance information, etc.) that is generated based on a location of a character in the game play of the user.

With the detailed description of the various modules of the gaming server and client device communicating over a network, a method for implementing a location based companion interface supporting game play of a corresponding user is now described in relation to flow diagram300ofFIG.3, in accordance with one embodiment of the present disclosure. Flow diagram300illustrates the process and data flow of operations involved at the game server side for purposes of generating location based information contained within a companion interface that is transmitted over a network for display at a client device of a user, wherein the client device may be separate from another device displaying the game play of the user playing a gaming application. In particular, the method of flow diagram300may be performed at least in part by the companion application generator213ofFIGS.1and2.

Although embodiments of the present invention as disclosed inFIG.3are described from the standpoint of the game server side, other embodiments of the present invention are well suited to implementing a location based companion interface within a local user system including a game processor configured for executing a gaming application in support of a game play of a user and configured for generating location based information of the game play, and a companion application generator of another device configured for receiving the location based information over a local network and for displaying contextually relevant information. For example, the companion interface is implemented within a local and isolated system, wherein information from game plays of other users may not necessarily be used for generating the contextually relevant information. In another implementation, information from game plays of other users may be received from a back-end game server over another network and used for generation of the contextually relevant information.

Flow diagram300includes operations305,310, and315for executing a gaming application and generating location based information of game play of a user playing the gaming application. In particular, at operation305the method includes instantiating a first instance of a gaming application in association with game play of a user. As previously described, in one embodiment, the instance of the gaming application can be executed locally at a client device of the user. In other embodiments, the instance of the gaming application may be executing at a back-end game executing engine of a back-end game server, wherein the server may be part of a cloud game network or game cloud system. At operation310, the method includes delivering data representative of the game play of the user to a first computing device over a first communication channel for interaction by the user. The communication channel may be implemented for example through a network, such as the internet. As such, rendered images may be delivered for display at the first computing device, wherein the rendered images are generated by the instance of the gaming application in response to input commands made in association with game play of the user.

At operation315, the method includes determining location based information for a character in the game play of the user. In particular, the location based information is made with reference to a location of a character in the game play of the user in a gaming world associated with the gaming application. The location based information may be included within snapshots that are generated, captured and/or stored during the game play of the user, as previously described. For example, each snapshot includes metadata and/or information generated with reference to the location of the character. In one embodiment, the metadata and/or information is configured to enable execution of an instance of the gaming application beginning at a point in the gaming application corresponding to the snapshot (e.g., beginning at a jump point corresponding to the state of the game play when the snapshot was captured, which reflects the location of the character in the game play). For instance, the snapshot includes location based information of the game play, and game state data that defines the state of the game play at the corresponding point (e.g., game state data includes game characters, game objects, object attributes, graphic overlays, assets of a character, skill set of the character, history of task accomplishments within the gaming application for the character, current geographic location of the character in the gaming world, progress through the gaming application in the game play of the user, current status of the game play of the character, etc.), such that the game state data allows for generation of the gaming environment that existed at the corresponding point in the game play. The snapshot may include user saved data used to personalize the gaming application for the user, wherein the data may include information to personalize the character (e.g., shape, look, clothing, weaponry, game difficulty, game level, character attributes, etc.) in the game play. 
The snapshot may also include random seed data that is relevant to the game state, as previously described.
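To make the snapshot contents listed above concrete, the following sketch groups them into one record and round-trips it through JSON. The field names and the `save`/`load` helpers are hypothetical choices for this example; the disclosure does not prescribe a serialization format.

```python
import json
import random
from dataclasses import asdict, dataclass


@dataclass
class Snapshot:
    user_id: str
    location: list          # [x, y] of the character in the gaming world
    game_state: dict        # objects, task history, progress, etc.
    user_saved_data: dict   # personalization such as difficulty or lives left
    random_seed: int        # seed relevant to the game state


def save(snapshot: Snapshot) -> str:
    return json.dumps(asdict(snapshot))


def load(payload: str) -> Snapshot:
    return Snapshot(**json.loads(payload))


snap = Snapshot(
    user_id="U0",
    location=[120.5, 33.0],
    game_state={"objects": ["gate", "lever"], "tasks_done": ["find_key"]},
    user_saved_data={"difficulty": "hard", "lives_left": 2},
    random_seed=1234,
)
restored = load(save(snap))
```

Storing the seed alongside the state means a generator seeded from the restored snapshot produces the same pseudo-random sequence as one seeded from the original.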

The remaining operations of flow diagram300may be performed by a companion application generator, which may be executing locally or a back-end server of a cloud game network, as previously described. In particular, at operation320, the method includes receiving location based information of game play of a user playing a gaming application. In the case where the gaming application is executed locally at the first computing device, the location based information generated during the game play may be received from the localized, first computing device over a network. For example, the location based information may be generated and stored locally by the first computing device. In the case where the gaming application is executed at a game executing engine operating at a back-end game server of a cloud game network or game cloud system, the location based information generated during the game play may be received from the back-end game server, either internally where the cloud game network is configured for executing the gaming application and generating the companion interface, or externally where the cloud game network is configured for executing the gaming application and another server is generating the companion interface.

At operation325, the method includes receiving and/or aggregating location based information of a plurality of game plays of a plurality of users playing the gaming application. For example, snapshots may be captured during the game plays of the other users, just as snapshots are captured during the game play of the user, wherein the snapshots include location based information relating to the game plays of the other users, as previously described. The snapshots and/or the location based information contained in those snapshots may be generated locally at user devices, and delivered to a back-end server of a cloud game network or game cloud system, or the snapshots may be generated at the back-end server of the cloud game network which is also executing instances of the gaming application in support of the other game plays. The location based information is also received from the back-end server, either through a local network (where the server acts to execute instances of the gaming application, and to generate the companion application), or through a remote network (where the back-end server of the cloud game network acts independent of the generation of the companion application).
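One simple way to picture the aggregation at operation 325 is to bucket location based information from many game plays by region of the gaming world. The grid cell size, the dictionary layout of each record, and the function names below are assumptions for illustration only.

```python
from collections import defaultdict

CELL = 10.0  # hypothetical grid cell size, in gaming-world units


def cell_of(location):
    """Map an (x, y) world location to a coarse grid cell."""
    x, y = location
    return (int(x // CELL), int(y // CELL))


def aggregate(snapshots):
    """Group snapshot records from many users by the cell they were captured in."""
    by_cell = defaultdict(list)
    for s in snapshots:
        by_cell[cell_of(s["location"])].append(s)
    return by_cell


by_cell = aggregate([
    {"user": "5A", "location": (3.0, 4.0)},
    {"user": "5B", "location": (7.0, 9.0)},
    {"user": "5C", "location": (25.0, 1.0)},
])
```

With the records grouped this way, information from other game plays near the character's current location can be looked up by a single cell key.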

These snapshots, including the metadata/information contained within the snapshots or inferred from the snapshots generated during the game play of other users, may be combined with the location based information (generated during game play of the user) to provide additional information relating to the game play of the user. For example, the location based information generated during game play of the user can be used to determine where the character has been in the game play, what tasks the character has accomplished in the game play, the current assets and skills of the character, where the character currently is located (e.g., in the gaming world), etc. By combining and analyzing the location based information generated during game play of the user with the metadata/information contained within the snapshots or inferred from the snapshots generated during the game play of other users, it may be statistically determined (e.g., as determined through a prediction engine) what actions/assets are necessary to advance the game play of the user, such as where the character in the game play will be going, what tasks are upcoming and/or required to advance the game play, and what assets or skills are needed by the character to accomplish those tasks, etc.
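As a minimal sketch of such a statistical determination, the example below predicts the next task of the user by counting which task most often followed the last completed task of the user in the game plays of other users. The frequency-count approach and the function name `predict_next_task` are assumptions chosen for illustration; the disclosure does not specify how the prediction engine operates.

```python
from collections import Counter
from typing import List, Optional, Sequence


def predict_next_task(
    user_completed: Sequence[str],
    other_histories: Sequence[Sequence[str]],
) -> Optional[str]:
    """Return the task that most often followed the user's last task.

    other_histories holds ordered task lists taken (hypothetically) from
    snapshots of other users' game plays.
    """
    if not user_completed:
        return None
    last = user_completed[-1]
    done = set(user_completed)
    followers: Counter = Counter()
    for history in other_histories:
        # Walk consecutive task pairs and tally successors of `last`.
        for current, nxt in zip(history, history[1:]):
            if current == last and nxt not in done:
                followers[nxt] += 1
    if not followers:
        return None
    return followers.most_common(1)[0][0]
```

A production prediction engine would likely weigh location, assets, and skills as well; this sketch considers task order only.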

At operation 330, the method includes generating contextually relevant information for the location of the character, wherein the contextually relevant information is generated in real-time based on the location based information of the plurality of game plays, including the game play of the user. Based on statistical predictions as described above, the contextually relevant information may, in some embodiments, provide assistance in progressing and/or advancing through the gaming application.

For example, the contextually relevant information may be provided in the form of messages, wherein the messages may be used to provide helpful information advancing game play (e.g., steps that guide the user to accomplish a required task, notification of what is coming up, or). In one implementation, a train of related messages is generated depending on location and/or movement of a character within the gaming world, such as generating step-by-step instructions providing chunk-sized instructions that are part of a larger instruction set, each chunk being delivered when the character reaches a corresponding location. In particular, a task may be statistically predicted, wherein the task is required to advance the game play of the user. One or more solutions to accomplishing the task may be determined. These solutions are determined from the previously collected information (e.g., snapshots) of the plurality of game plays of other users, which may provide insight on future game play of the user. For example, the collected information may show that the aforementioned task is typically addressed in other game plays by other users at the same point in the game play, and that those users found one or more solutions to complete that task. Those solutions may include assets that are required to accomplish those tasks. Further, the collected information that is analyzed may be further filtered by the user. For example, the user may be interested in solutions from all game plays (e.g., by default), from game plays of noted experts, from one or more selected game plays of friends of the user, etc.
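The user-selected filtering of collected solutions might look like the following sketch. The `Solution` record, the `mode` values, and the `experts` and `friends` sets are hypothetical names introduced for this example only.

```python
from dataclasses import dataclass, field
from typing import FrozenSet, Iterable, List


@dataclass
class Solution:
    """Hypothetical solution extracted from another user's game play."""
    author: str
    steps: List[str] = field(default_factory=list)


def filter_solutions(
    solutions: Iterable[Solution],
    mode: str = "all",  # assumed values: "all", "experts", "friends"
    experts: FrozenSet[str] = frozenset(),
    friends: FrozenSet[str] = frozenset(),
) -> List[Solution]:
    """Keep only solutions from the sources the user asked for."""
    if mode == "experts":
        return [s for s in solutions if s.author in experts]
    if mode == "friends":
        return [s for s in solutions if s.author in friends]
    return list(solutions)  # default: solutions from all game plays
```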

The message may include offers for assistance, such as tips from experts or friends or third parties, or a link that opens a 2-way interactive communication allowing a friend or expert to guide the user in his or her game play, etc. In some embodiments, the message includes an offer to play the gaming application for the user in a jump game to accomplish a task, as will be described further in relation to FIG. 6. In some embodiments, the message is generated in response to a trigger, such as recognizing the inability of the user to advance through a certain point in the gaming application. In other examples, the contextually relevant information may include offers to receive or purchase downloadable content (DLC) that is needed by the user to accomplish upcoming tasks to be completed in order to advance the game play.

In still other embodiments, the contextually relevant information may be originally generated by the user. For example, the message may be intended for broadcast to other users (e.g., help flag), and may include a request for assistance from other players of the gaming application. Further, the message including the request for assistance may be targeted to friends of the user, or delivered to known experts, or third parties that provide gaming assistance, wherein the friends, experts, and third parties may or may not be simultaneously playing the gaming application. In other implementations, the message may also include an offer to open 2-way communication with the user that is generated by the user, and may be targeted to friends of the user. In still other implementations, the message includes an offer to open 2-way communications with another user, wherein the message is generated by the other user.

For illustration, a broadcast message may be generated in response to receiving a request for help from the user, wherein the user may be at an impasse in advancing his or her game play. The message may be received by the back-end server (e.g., server executing the companion application generator 213). For example, a beacon and/or flag associated with the request for help may be broadcast across one or more companion interfaces of one or more friends of the user that are playing the gaming application. The beacons may be inserted into the one or more radar mappings showing the gaming world in the one or more user interfaces, each beacon located at a point corresponding to the location of the character of the user in the gaming world. The back-end server may receive an acceptance of the request, wherein the acceptance could be generated by a friend of the user. In one embodiment, data representative of the game play of the user is streamed to a third computing device associated with the friend. That is, the game play of the user may be streamed to the friend via a corresponding companion interface. Further, a two-way communication session (e.g., messaging via companion interfaces, voice, etc.) may be established so that the user and the friend may communicate. In that manner, the friend may help provide guidance in real time as the user is playing the gaming application.
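The help-beacon flow just described can be sketched, under stated assumptions, as two server-side helpers. The dictionary layouts and the names `broadcast_help_beacon` and `accept_help` are hypothetical; they model only the bookkeeping, not the actual streaming or messaging transport.

```python
from typing import Dict, Iterable, Optional, Set, Tuple

Location = Tuple[int, int]  # hypothetical world coordinates


def broadcast_help_beacon(
    user_id: str,
    character_location: Location,
    friends: Iterable[str],
    active_players: Set[str],
) -> Dict[str, dict]:
    """Place a beacon, at the user's character location, in the radar
    mapping of every friend currently playing the gaming application."""
    beacon = {"from": user_id, "location": character_location, "type": "help"}
    return {f: dict(beacon) for f in friends if f in active_players}


def accept_help(beacons: Dict[str, dict], friend_id: str) -> Optional[dict]:
    """Describe the session opened when a friend accepts the request."""
    beacon = beacons.get(friend_id)
    if beacon is None:
        return None  # this friend never received the beacon
    return {
        "participants": (beacon["from"], friend_id),
        "stream_game_play_to": friend_id,  # game play streamed to the friend
        "two_way": True,                   # messaging and/or voice channel
    }
```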

In other implementations, the contextually relevant information may also include other types of information that improve the user experience. The information may be related to the game play of the user, such as generally being helpful, supporting, interesting, or the like. For example, the information may provide locations of other users playing the gaming application (e.g., where characters of other users are located within a radar mapping of the gaming world), especially those that are in the vicinity of the character of the user. The contextually relevant information may include navigation pointers, interesting game features and their locations, icons or links to other features (e.g., snapshots, jump game instantiations, etc.).

In still other implementations, the contextually relevant information may include messages from other users (e.g., egg drops containing information or statements from friends of the user, to include taunting statements, words of encouragement, etc.). For illustration, a predefined event may be detected within the game play of the user. The predefined event may include a character reaching a location within a gaming world. Upon detection, a previously generated message from a friend of the user may be accessed. That message may be delivered to the user as contextually relevant information. For example, the message may include a taunt from the friend (e.g., “Ha Ha. I thought you would never get here!”). As another example, the message may include encouraging words from the friend (e.g., “Congratulations, you finally made it! It took me forever to get here. Get through the next section quick, and see you (in the game) on the flip side.”). For example, FIGS. 4A-4C illustrate messages generated by a friend and delivered in a companion interface of a user playing a gaming application, as will be described below.
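A minimal sketch of this event-triggered delivery follows, assuming the predefined event is the character reaching a stored trigger location. The `EggDrop` record and the `already_shown` bookkeeping set are assumptions added for the example.

```python
from dataclasses import dataclass
from typing import List, Set, Tuple

Location = Tuple[int, int]  # hypothetical world coordinates


@dataclass
class EggDrop:
    """Hypothetical message a friend left at a location in advance."""
    author: str
    trigger_location: Location
    text: str


def messages_for_location(
    location: Location,
    egg_drops: List[EggDrop],
    friends: Set[str],
    already_shown: Set[int],
) -> List[str]:
    """Return friends' messages triggered by reaching `location`.

    Indices of delivered drops are added to `already_shown` so that each
    message is delivered only once.
    """
    delivered = []
    for i, drop in enumerate(egg_drops):
        if i in already_shown:
            continue
        if drop.author in friends and drop.trigger_location == location:
            delivered.append(drop.text)
            already_shown.add(i)
    return delivered
```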

At 330, the method includes generating a companion interface including the contextually relevant information that is based on the location based information from the plurality of game plays (e.g., snapshot information) and from the game play of the user, and wherein the information is generated with reference to a location of a character in the game play of the user (e.g., location based contextually relevant information). That is, generally the companion interface provides features in support of the game play of the user, and enables the user, or any other viewer, to access information in real time that is generally helpful to the user while playing the gaming application.

For example, in one embodiment, the companion interface includes a radar mapping that shows at least a portion of the gaming world of the gaming application, wherein the radar mapping includes at least objects/features located within the gaming world, and locations of characters of the user and other players. Additional information may also be included within or adjacent to the radar mapping, such as messaging that provides general information to increase the experience of the user, or a solution to accomplish a predicted task necessary to advance the game play of the user. In the example previously introduced, wherein a solution is statistically predicted and selected for presentation to the user in the companion interface, at least one of the solutions may be selected for presentation to the user as contextually relevant information, wherein that solution may include a sequence of steps necessary to accomplish that task. The sequence of steps may be further associated with locations and/or actions in the game play of the user, such that a first step may be associated with a combination of a first location/first action, a second step may be associated with a combination of a second location/second action, etc. Each of the steps in the solution may further be associated with a corresponding message, wherein each of the messages may be presented in the companion application depending on the displayed location and/or actions of a character in the game play of the user. For example, a first step in the sequence may be presented in a first message in the companion interface. As an illustration, the first step may include assets required by a character to accomplish that task, and instructions for obtaining those assets. The method includes determining that the first step was completed within the game play of the user, wherein the completion may be further associated with a first location of the character, and/or a first action taken by the character. At this point, a second step in the sequence may be presented in a second message in the companion interface.
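The step-by-step presentation described above can be sketched as a small state machine that shows one message at a time and advances when the observed location and action of the character match the current step. The `Step` and `SolutionGuide` names, and the exact-match completion test, are assumptions for illustration.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Step:
    """Hypothetical step: a location/action pair plus its message."""
    location: str
    action: str
    message: str


class SolutionGuide:
    """Presents the messages of a solution one step at a time."""

    def __init__(self, steps: List[Step]) -> None:
        self.steps = list(steps)
        self.index = 0  # index of the step currently being presented

    def current_message(self) -> Optional[str]:
        if self.index < len(self.steps):
            return self.steps[self.index].message
        return None  # every step has been completed

    def observe(self, location: str, action: str) -> Optional[str]:
        """Advance if the observation completes the current step, then
        return the message that should now be shown."""
        if self.index < len(self.steps):
            step = self.steps[self.index]
            if (location, action) == (step.location, step.action):
                self.index += 1
        return self.current_message()
```

In use, the companion application generator would call `observe` each time new location based information arrives from the game play of the user.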

At 340, the method includes sending the companion interface to a second computing device associated with the user for display concurrent with the game play of the user. For example, in one embodiment there may be two communication channels delivering information, such as a first communication channel established to deliver data representative of game play of the user to the first computing device, and a second communication channel established to deliver data associated with the companion interface (e.g., providing for delivery of interface, and input commands controlling the interface). In another embodiment, the companion interface may be delivered along with the data representative of game play of the user, such as through a split screen including a first screen showing the game play and a second screen showing the companion interface. More particularly, the companion interface is generated in real time, and delivered concurrent with the game play of the user, such that the information provided through the interface supports the game play of the user. In that manner, the game play of the user may be augmented with the information provided by the companion interface.

FIGS. 4A-4C illustrate a message generated by a friend and delivered in a companion interface of a user playing a gaming application, as previously introduced. The message may be provided within a mapping 400 (e.g., radar mapping) showing the location of a character of the user within a gaming world of the gaming application. The message may be presented in response to an event trigger (e.g., character of user reaching a particular location in the gaming world). In each of the radar mappings 400 shown in FIGS. 4A-4C, a directional pointer 450 represents the location of the character within the gaming world, and is placed at the center of the radar mapping 400. Further, the directional pointer 450 also gives an orientation of the character (e.g., viewpoint of the character within the gaming world).
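Because the directional pointer is placed at the center of the radar mapping, other objects can be positioned relative to the character. The sketch below assumes a square, north-up radar whose pixel size and world radius are hypothetical parameters; the disclosure does not state the projection used.

```python
from typing import Optional, Tuple

Point = Tuple[float, float]


def world_to_radar(
    obj_xy: Point,
    char_xy: Point,
    radar_size: int = 200,        # assumed radar width/height in pixels
    world_radius: float = 100.0,  # assumed world distance shown per side
) -> Optional[Point]:
    """Map a world position to radar pixels, character at the center.

    Returns None when the object lies outside the displayed area.
    """
    dx = obj_xy[0] - char_xy[0]
    dy = obj_xy[1] - char_xy[1]
    if abs(dx) > world_radius or abs(dy) > world_radius:
        return None
    center = radar_size / 2
    scale = center / world_radius
    # Screen y grows downward, so world "north" (+y) maps to smaller y.
    return (center + dx * scale, center - dy * scale)
```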