U.S. Pat. No. 11,524,241

METHOD AND SYSTEM FOR DESIGNING GAME PLAY AND ECONOMIES

Assignee: Unity Technologies ApS

Issue Date: October 26, 2021

Abstract

A method of improving game development is disclosed. A game model graph of a video game is created or modified using visual scripting nodes. The game model graph represents one or more game systems and/or one or more economies. The nodes are linked to game resources from the video game. Player profiles describing a plurality of different player types are accessed to be used during a simulation of the game model graph. One or more additional simulations are performed. Each of the one or more additional simulations includes executing the game model graph using the player profiles and the game resources. Data is extracted from the one or more additional simulations to determine behavior of the one or more game systems, the one or more game economies, and/or the resources within the video game over time and across the player types.

Description

DETAILED DESCRIPTION

The description that follows describes example systems, methods, techniques, instruction sequences, and computing machine program products that comprise illustrative embodiments of the disclosure, individually or in combination. In the following description, for the purposes of explanation, numerous specific details are set forth in order to provide an understanding of various embodiments of the inventive subject matter. It will be evident, however, to those skilled in the art, that various embodiments of the inventive subject matter may be practiced without these specific details.

The term ‘content’ used throughout the description herein should be understood to include all forms of media content items, including images, videos, audio, text, 3D models (e.g., including textures, materials, meshes, and more), animations, vector graphics, and the like.

The term ‘game’ used throughout the description herein should be understood to include video games and applications that execute and present video games on a device, and applications that execute and present simulations on a device. The term ‘game’ should also be understood to include programming code (either source code or executable binary code) which is used to create and execute the game on a device.

The term ‘environment’ used throughout the description herein should be understood to include 2D digital environments (e.g., 2D video game environments, 2D simulation environments, 2D content creation environments, and the like), 3D digital environments (e.g., 3D game environments, 3D simulation environments, 3D content creation environments, virtual reality environments, and the like), and augmented reality environments that include both a digital (e.g., virtual) component and a real-world component.

The term ‘game object’, used throughout the description herein, is understood to include any object of digital nature, digital structure or digital element within an environment (e.g., a digital object). A game object can represent (e.g., in a corresponding data structure) almost anything within the environment, including 3D digital models (e.g., characters, weapons, scene elements (e.g., buildings, trees, cars, treasures, and the like)) with 3D model textures, backgrounds (e.g., terrain, sky, and the like), lights, cameras, effects (e.g., sound and visual), animation, and more. The term ‘game object’ may also be understood to include linked groups of individual game objects. A game object is associated with data that describes properties and behavior for the object.

The terms ‘asset’, ‘game asset’, and ‘digital asset’, used throughout the description herein are understood to include any data that can be used to describe a game object or can be used to describe an aspect of a digital project (e.g., including: a game, a film, a software application). For example, an asset can include data for an image, a 3D model (textures, rigging, and the like), a group of 3D models (e.g., an entire scene), an audio sound, a video, animation, a 3D mesh and the like. The data describing an asset may be stored within a file, or may be contained within a collection of files, or may be compressed and stored in one file (e.g., a compressed file), or may be stored within a memory. The data describing an asset can be used to instantiate one or more game objects within a game at runtime (e.g., during execution of the game).

The terms ‘client’ and ‘application client’ used throughout the description herein are understood to include a software client or software application that can access data and services on a server, including accessing over a network.

Throughout the description herein, the terms ‘agent’ and ‘AI agent’ should be understood to include entities such as a non-player character (NPC), a robot, and a game world which are controlled by an artificial intelligence system or model.

Throughout the description herein, the term ‘game economy’ should be understood to include a virtual economy within a game wherein game objects may be priced (e.g., with a virtual currency, game points, or the like). In addition, the term ‘game economy’ may include a configuration (e.g., a collection of connected nodes within a game model graph) of sources, sinks and associated control mechanisms for an availability of game objects (e.g., weapons, armor, extra speed, extra lives, and the like) within a game. For example, a game economy may refer to a game model graph configuration (or a part thereof) that determines a total availability of a game object within a game (e.g., a number and type of a weapon available throughout a game), a rate of availability of a game object within a game (e.g., a rate at which a number and type of a weapon becomes available throughout a game), and the like.
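The notion of sources and sinks controlling both the total availability and the rate of availability of a game object can be illustrated with a minimal sketch. The `Flow` class, the `run_economy` function, and the per-tick integer rates are hypothetical names and units chosen for illustration only; they are not taken from the disclosure.

```python
from dataclasses import dataclass


@dataclass
class Flow:
    """A source (positive rate) or sink (negative rate) for one resource."""
    resource: str
    rate_per_tick: int  # assumed unit: items added or removed per simulation tick


def run_economy(flows, ticks, start=None):
    """Apply every flow once per tick and return the final resource totals."""
    totals = dict(start or {})
    for _ in range(ticks):
        for flow in flows:
            current = totals.get(flow.resource, 0)
            # A sink can never drive availability below zero.
            totals[flow.resource] = max(0, current + flow.rate_per_tick)
    return totals


flows = [
    Flow("sword", +2),  # source, e.g., loot drops
    Flow("sword", -1),  # sink, e.g., swords breaking
    Flow("coin", +5),   # source, e.g., quest rewards
]
totals = run_economy(flows, ticks=10)
print(totals)  # {'sword': 10, 'coin': 50}
```

In this sketch the net rate for swords is one per tick, so changing either rate changes how quickly swords become available, while the number of ticks bounds the total amount.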

The present invention includes apparatuses which perform one or more operations or one or more combinations of operations described herein, including data processing systems which perform these methods and computer readable media which when executed on data processing systems cause the systems to perform these methods, the operations or combinations of operations including non-routine and unconventional operations or combinations of operations.

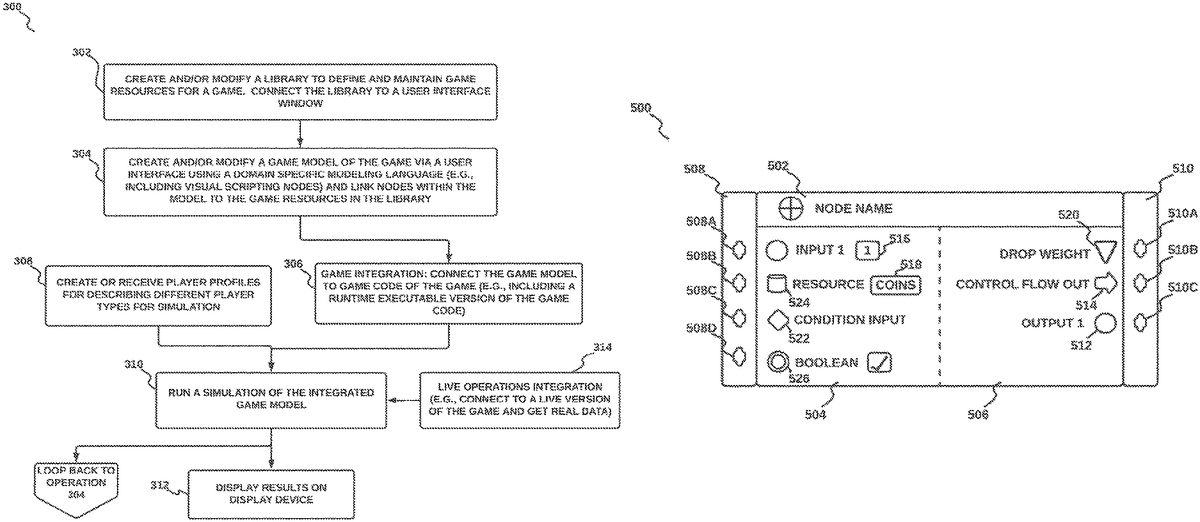

The systems and methods described herein include one or more components or operations that are non-routine or unconventional individually or when combined with one or more additional components or operations, because, for example, they provide a number of valuable benefits to software developers and video game creators. For example, the systems and methods described herein simplify game development by simulating game play in order to test a game economy design and a game play design. In accordance with an embodiment, the systems and methods may provide a tool with simple visual scripting nodes for creating a model (e.g., as described with respect to operation 304 of the method 300 shown in FIG. 3) of a game system and a game economy and connecting the model with game code (e.g., as described with respect to operation 306 of the method 300 described with respect to FIG. 3) from the game to be simulated. The model may be simple to visualize and understand due to the visual scripting nodes, and the model may be used within the systems and methods to simulate game play and generate information which may be used (e.g., by a game developer) to determine potential resource bottlenecks early in a game development process as well as to model a future success of the game design. Additionally, the systems and methods described herein provide continuous design and adjustment of a game economy and game play after release of a game (e.g., release to the public) by providing a connection to and simulation of live game data (e.g., via live game services) as described with respect to operation 314 of the method 300 described with respect to FIG. 3.

The systems and methods described herein, and as described with respect to the method 300 shown in FIG. 3, provide support for game designers to design and simulate behavior of game systems and economies within a game over time. The methods and systems can be used throughout a game design process, from a beginning of game development to a live operating phase after release to the public. A purpose of the simulations (e.g., as described in operation 310 of the method 300) is to enable testing of a design of a game early in a design process. The simulations may reveal potential imbalances in a game system and an economy design and can also predict a future success of the design (e.g., with respect to game players). The simulations help when used in an iterative process of game design and development of a game, as they can provide insights before a first release of the game.

A method of improving game development is disclosed. A game model graph of a video game is created or modified using visual scripting nodes. The game model graph represents game systems and/or an economy. The nodes are linked to one or more game resources from the video game. Player profiles describing a plurality of different player types are accessed to be used during a simulation of the game model graph. One or more additional simulations are performed. Each of the one or more additional simulations includes executing the game model graph using the player profiles and the one or more game resources. Data is extracted from the one or more additional simulations to determine behavior of the game systems, the economy, and the resources within the video game over time and across the player types.

A game model graph (e.g., as described with respect to operation 304 of the method 300 shown in FIG. 3) built within a Game Design Tool system 100 (e.g., as described with respect to FIG. 1, FIG. 2A, FIG. 2B, FIG. 3, FIG. 4, FIG. 5, FIG. 6A-6Q, FIG. 7, FIG. 8A, FIG. 8B, FIG. 9A, and FIG. 9B) can be integrated directly with game code (e.g., as described with respect to operation 306 of the method 300), providing a single source for system and economy design of a game. The direct integration described in operation 306 may include a direct connection to game code, whereby changes made in visual scripting nodes (e.g., as described with respect to operation 304 of the method 300 shown in FIG. 3) may be directly and automatically implemented in the game code. The direct integration within operation 306 avoids a manual copy and paste operation of game design values and economy values to the game code (e.g., manual copy/paste operations are inherently error-prone and can lead to mismatches between a game design and an actual player experience).

Turning now to the drawings, systems and methods, including non-routine or unconventional components or operations, or combinations of such components or operations, for a game design tool system in accordance with embodiments of the invention are illustrated. In accordance with many embodiments, and shown in FIG. 1 is a game design tool system 100. The game design tool system 100 includes a game design tool user device 102 and a game design tool server device 140 in networked communication over a network 150. In accordance with an embodiment, the game design tool system 100 includes a resource database 125 which may contain data describing game assets and data describing other resources (e.g., within a library) that may be required by a video game executing on the game design tool user device 102. In accordance with an embodiment, the game design tool system 100 may include a Game Data database 120 that stores data from additional game design tool systems that include additional game design tool user devices (the additional game design tool systems and additional game design tool user devices are not shown in FIG. 1 but may be incorporated within operation 314 of the method 300 described below).

In accordance with an embodiment, FIG. 1 shows a single user/player 130 and a single game design tool user device 102; however, it should be understood that during operation a plurality of users 130 on a plurality of game design tool user devices 102 may be in operation and in communication with the game design tool server 140, and store data on the Game Data database 120 over the network 150.

In accordance with an embodiment and shown in FIG. 2A is a schematic showing details of a game design tool user device 102 within a game design tool system 100. The game design tool user device 102 includes one or more central processing units 103 (CPUs), and graphics processing units 105 (GPUs). The CPU 103 (and the GPU 105) is any type of processor or processor assembly comprising multiple processing elements (not shown), having access to a memory 101 to retrieve instructions stored thereon, and execute such instructions. Upon execution of such instructions, the instructions cause the processing device 103 to perform a series of tasks as described below in reference to FIG. 3 and FIG. 4. The memory 101 can be any type of memory device, such as random-access memory, read only or rewritable memory, internal processor caches, and the like.

The game design tool user device 102 also includes one or more input/output devices 108 such as, for example, a keyboard or keypad, mouse, pointing device, touchscreen, microphone, camera, and the like, for inputting information in the form of a data signal readable by the processing device 103. The game design tool user device 102 further includes one or more display devices 109, such as a computer monitor, a touchscreen, and a head mounted display (HMD), which may be configured to display digital content including video, a video game environment, an integrated development environment and a virtual simulation environment to the user 130. The display device 109 is driven or controlled by the one or more GPUs 105 and optionally the CPU 103. The GPU 105 processes aspects of graphical output that assists in speeding up rendering of output through the display device 109. The game design tool user device 102 also includes one or more networking devices 107 (e.g., wired or wireless network adapters) for communicating across a network.

The memory 101 on the game design tool user device 102 also stores a game engine 104 (e.g., executed by the CPU 103 or GPU 105) that communicates with the display device 109 and also with other hardware such as the input/output device(s) 108 to present a 3D game environment and a game development environment (e.g., a video game) to the user 130 and to simulate a 3D game environment and game state. The game engine 104 would typically include one or more modules that provide the following: simulation of a virtual environment and game objects therein (e.g., including animation of game objects, animation physics for game objects, collision detection for game objects, and the like), rendering of the virtual environment and the game objects therein, networking, sound, and the like in order to provide the user with a complete or partial virtual environment (e.g., including video game environment or simulation environment) via the display device 109. In accordance with an embodiment, the simulation and rendering of the virtual environment may be de-coupled, each being performed independently and concurrently, such that the rendering always uses a recent state of the virtual environment and current settings of the virtual environment to generate a visual representation at an interactive frame rate and, independently thereof, the simulation step updates the state of at least some of the digital objects (e.g., at another rate). In accordance with an embodiment, the game engine 104 may be implemented within an application such as a video game.

In accordance with an embodiment, the memory 101 on the game design tool user device 102 also stores a game design tool client module 106 for implementing methods for game design and simulation as described herein and in particular with respect to the method shown in FIG. 3. The game design tool client module 106 may be implemented for example as a software development kit (SDK).

In accordance with an embodiment and shown in FIG. 2B is a game design tool server device 140. The game design tool server device 140 includes one or more central processing units 111 (CPUs). The CPU 111 is any type of processor or processor assembly comprising multiple processing elements (not shown), having access to a memory 113 to retrieve instructions stored thereon, and execute such instructions. Upon execution of such instructions, the instructions cause the processing device 111 to perform a series of tasks as described below in reference to FIG. 3 and FIG. 4. The memory 113 can be any type of memory device, such as random-access memory, read only or rewritable memory, internal processor caches, and the like. The game design tool server device 140 also includes one or more networking devices 115 (e.g., wired or wireless network adapters) for communicating across the network 150.

In accordance with an embodiment, the memory 113 on the game design tool server device 140 also stores a game design tool server module 112 for implementing methods as described herein and in particular with respect to the methods shown in FIG. 3 and the data flow diagram of FIG. 4. The game design tool server module 112 may be implemented for example as a software development kit (SDK).

In accordance with an embodiment, and shown in FIG. 3 is a flowchart of a method 300 for creating and simulating a game model graph for a game within a game design tool system 100. The method 300 may be used in conjunction with the Game Design Tool system 100 as described with respect to FIG. 1, FIG. 2A, FIG. 2B, FIG. 4, FIG. 5, FIG. 6A-6Q, FIG. 7, FIG. 8A, FIG. 8B, FIG. 9A, and FIG. 9B. In various embodiments, some of the method elements shown in FIG. 3 may be performed concurrently, in a different order than shown, or may be omitted. In accordance with an embodiment, the game model graph is a visual scripting (or visual programming) graph that includes user interface nodes (e.g., as described in FIG. 5 and FIG. 6A through FIG. 6Q) and which represents game logic for a game (e.g., a video game) or a part of a game. The game logic may represent logic underlying a game economy within a game, and logic underlying mini games (e.g., challenges, treasure hunts, and more) within a game, and more. For example, the game logic may control aspects of the game economy by controlling a frequency, an amount, an input and an output of a plurality of mini games within the game economy (e.g., the game logic may control how often a new armor may be made available to a character within a game, how often a character may find a hidden treasure within a game, how many rewards a character receives after successfully completing a challenge, what type of rewards a character receives after successfully completing a challenge, and the like). In accordance with an embodiment, the method 300 may be performed with a Game Design Tool User Interface (UI), wherein the Game Design Tool UI is described with respect to FIG. 8A, FIG. 8B, FIG. 9A, and FIG. 9B.

In accordance with an embodiment, in operation 302 of the method 300, a library is created (e.g., within the Game Design Tool UI), wherein the library includes descriptions of game resources for a game. In accordance with an embodiment, the library may be created manually by a developer (e.g., entering the descriptions), or may be created by uploading descriptions (e.g., importing resource descriptions). In accordance with an embodiment, the library enables the user to describe and maintain game resources that may be used within a game runtime execution of the game. In accordance with an embodiment, a game resource is an element that can be quantified (e.g., counted) and can be used as a means to control (e.g., manage) a flow (e.g., an economic flow) of a game. Accordingly, a game resource can include many different elements, including in-game elements such as weapons, cards, and coins, but also less tangible elements including progression meters (e.g., experience points), a list of completed quests, and the like. In accordance with an embodiment, data within the library may be stored locally (e.g., within the game design tool user device 102) or over the network 150 (e.g., within the resource database 125).

In accordance with an embodiment, as part of operation 302, the library may be connected to a graphical user interface window in order to display and manage (e.g., track and modify) values associated with game resources. In accordance with an embodiment, there may be two UI windows, with a first window (e.g., referred to herein as a ‘Library Window’) to declare and maintain game resource structures (e.g., interconnections between resources), and another window (e.g., referred to herein as a ‘Game State window’) to observe (e.g., via displayed graphs) and reason about game resource data over time.

In accordance with an embodiment, the library may be designed for managing large numbers of resources (e.g., hundreds or thousands of different game resources) with associated connections between resources (e.g., dependencies between resources and possible hierarchies of resources) that define details of a game system. An example resource (and associated connections) would be a chest resource in a game (e.g., a chest filled with bounty), wherein the chest in the game includes other resources such as cards (e.g., that are defined in the library). The cards in the chest may depend on many other resource items (and properties associated with the items) in a library (e.g., a player level, a rarity of the chest, additional cards a player owns, and the like). For example, when a designer is defining chest contents, the designer will reference other items in the library (cards) and calculate what cards are given based on properties or other items in the library (e.g., a level of the cards needs to be greater than a level of a player opening them). A weapon resource provides another example of interconnection between resources in the library, wherein damage caused by the weapon resource may be determined based on other resources (and associated properties) in the library. For example, the damage caused by the weapon at a time during a game may be determined (or modified) based on one or more of the following: a level of a player resource wielding the weapon at the time, a strength value of the player resource at the time, other gear resources the player resource owns at the time, and more.
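The interconnections between library resources described above can be sketched as follows. This is a minimal illustration under stated assumptions: the `Resource` and `Library` classes, the property names, and the damage formula are hypothetical and are not specified by the disclosure. The card rule follows the example in the text (a card level must be greater than the level of the player opening the chest).

```python
from dataclasses import dataclass, field


@dataclass
class Resource:
    """A library entry: a named resource with arbitrary properties."""
    name: str
    properties: dict = field(default_factory=dict)


class Library:
    """Hypothetical in-memory library of game resources keyed by name."""

    def __init__(self):
        self._resources = {}

    def add(self, resource):
        self._resources[resource.name] = resource

    def get(self, name):
        return self._resources[name]


def weapon_damage(library, weapon_name, player_name):
    """Derive damage from the weapon plus the wielding player's level, strength, and gear."""
    weapon = library.get(weapon_name)
    player = library.get(player_name)
    gear_bonus = sum(
        library.get(gear).properties.get("damage_bonus", 0)
        for gear in player.properties.get("gear", [])
    )
    # Assumed formula, for illustration only.
    return (weapon.properties["base_damage"]
            + 2 * player.properties["level"]
            + player.properties["strength"]
            + gear_bonus)


def eligible_cards(library, card_names, player_name):
    """Keep only cards whose level is greater than the opening player's level."""
    player_level = library.get(player_name).properties["level"]
    return [c for c in card_names if library.get(c).properties["level"] > player_level]


library = Library()
library.add(Resource("hero", {"level": 3, "strength": 4, "gear": ["ring"]}))
library.add(Resource("ring", {"damage_bonus": 1}))
library.add(Resource("sword", {"base_damage": 10}))
library.add(Resource("fireball", {"level": 5}))
library.add(Resource("spark", {"level": 2}))

print(weapon_damage(library, "sword", "hero"))                  # 21
print(eligible_cards(library, ["fireball", "spark"], "hero"))  # ['fireball']
```

Because both functions look values up by name at call time, a change to one resource (e.g., the player level) is reflected in every dependent calculation without copying values between resources.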

In accordance with an embodiment, during operation 304 of the method 300, a plurality of visual scripting nodes is provided via the Game Design Tool UI. The visual scripting nodes (or just ‘nodes’) include a domain-specific modeling language which can be used (e.g., by a game developer) to express game systems and economies. The domain-specific modeling language is included in the nodes and expressed by a connection of nodes into a graph structure described below with respect to FIG. 5, FIG. 6A-6Q, FIG. 7, FIG. 8A, FIG. 8B, FIG. 9A, and FIG. 9B. For example, the domain-specific modeling language provides a means by which a game designer (e.g., or a game developer) can express a game system and an economy design in a way that is intuitive to understand and communicate to others (e.g., via an interconnection of the visual scripting nodes into a graph structure). In accordance with an embodiment, the nodes provide a structural core of the Game Design Tool system 100. In accordance with an embodiment, as part of operation 304, the game resources are connected to a game system by means of the displayed nodes (e.g., by a game developer interacting with the nodes via the user interface). An example of a game model graph created via a user interface is described below with respect to FIG. 7, FIG. 8A, FIG. 8B, FIG. 9A, and FIG. 9B.
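A connection of nodes into a graph structure that acts on game resources might be represented in code along the following lines. This is only a sketch: the `Node` class, its `connect` and `trigger` methods, and the example node names are hypothetical and do not correspond to the node types shown in the figures.

```python
class Node:
    """Hypothetical graph node: applies an effect to the game state, then triggers its successors."""

    def __init__(self, name, effect=None):
        self.name = name
        self.effect = effect  # callable taking the state dict, or None for a pure trigger
        self.next_nodes = []

    def connect(self, other):
        """Link this node's output to another node and return that node for chaining."""
        self.next_nodes.append(other)
        return other

    def trigger(self, state, visited=None):
        """Run this node and everything downstream, visiting each node at most once."""
        visited = [] if visited is None else visited
        if self.name in visited:
            return visited
        visited.append(self.name)
        if self.effect is not None:
            self.effect(state)
        for node in self.next_nodes:
            node.trigger(state, visited)
        return visited


def spend_key(state):
    state["keys"] -= 1  # sink: opening a chest consumes a key


def grant_cards(state):
    state["cards"] += 3  # source: the chest yields cards


open_chest = Node("open_chest")
open_chest.connect(Node("spend_key", spend_key)).connect(Node("grant_cards", grant_cards))

state = {"keys": 2, "cards": 0}
order = open_chest.trigger(state)
print(order)  # ['open_chest', 'spend_key', 'grant_cards']
print(state)  # {'keys': 1, 'cards': 3}
```

Keeping each effect in its own node means the amounts (one key, three cards) can be changed by editing a single node without touching the rest of the graph.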

In accordance with an embodiment, during operation 306 of the method 300, the game design tool client module 106 may perform a game integration to connect a game model graph (e.g., a game model graph created during operation 304) to game code for the game. The game code includes any software code or programming code that can be compiled into an executable file of the game, and when compiled and executed on a computation device (e.g., such as the game design tool user device 102) causes the computation device to display the game on a display device and communicate with input/output devices in order to interact with a player of the game. The game code may be packaged (e.g., compiled and built) within an executable application referred to herein as the game runtime executable. This includes creating at least one executable file and any associated libraries, DLLs, or other necessary files for the application to function. The game integration includes connecting the game model graph with the game code, thus making the game model graph an efficient means to experiment and optimize aspects of the game (e.g., aspects including a game economy and game design). During operation, based on the game model graph being integrated into an executable version of the game (e.g., via compiled game code), developers and designers can work closely together and experiment with different game design scenarios (e.g., via a structure of the game model graph and properties of the nodes therein) and receive immediate feedback via a playing of the executable version of the game (e.g., which can be changed in real-time by the graph).

In accordance with an embodiment, as part of operation 306, the game integration may include creating connections between a runtime execution of a game and a game model graph (the connections are described in more detail with respect to FIG. 4). The runtime execution of the game (e.g., or ‘game runtime’) refers to an executing version of the game runtime executable application/file which is being executed on a computation device. The connections may be configured within an integration layer between the game runtime and the game model graph. In accordance with an embodiment, the integration layer may be configured such that during operation the game model graph updates a game state of a player (items, currencies, etc.) based on data received from the game runtime (e.g., a video game). The game runtime may handle user interaction (e.g., showing values on a display screen and reacting to signals from the UI) and signal the game model graph to update a game state (e.g., by updating values stored in a memory). The updating of the game state includes updating aspects of the game related to the player, including an updating of items owned by the player (e.g., weapons, points, currency, etc.), health of a player (number of lives, power level, etc.), position and progress of the player, and the like.

In accordance with an embodiment, the integration layer may also be configured such that during operation the game model graph updates aspects of the game runtime which can modify game logic, game resources, and a displayed game UI. For example, an amount, type, timing, and probability of resource distribution may be changed via nodes within the game model graph. In accordance with an embodiment, the updating of the game runtime may occur dynamically (e.g., during execution of the game runtime) with a loading of different models that include the game logic.

As an example, consider the following sequence of events: A player (e.g., a human or an AI agent) pushes a “Buy Tomato” button in a game UI (e.g., during a playing of the game). The game UI signals the game runtime which signals the game model graph (e.g., via the integration layer) with data describing a request for the player to buy a tomato. Based on the player meeting the requirements to purchase the tomato, a game state for the player is updated (e.g., via the game model graph) to reflect the purchase (e.g., reducing an amount of player money by the tomato price, and increasing an inventory for the player by one tomato). The game UI may then be updated with the updated game state of the player. Additionally, the game model graph may load new game logic (e.g., to modify the price of tomatoes) based on logic within nodes of the game model graph (e.g., nodes which react to the purchase of the tomato).
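The “Buy Tomato” sequence can be sketched in code as follows. The `GameModelGraph` class, the `on_ui_event` function, the event dictionary shape, and the rule that the price rises by a fixed step after each purchase are all assumptions made for illustration; the disclosure does not specify these interfaces.

```python
class GameModelGraph:
    """Hypothetical stand-in for the game model graph: owns prices and purchase logic."""

    def __init__(self, prices, price_step=1):
        self.prices = dict(prices)
        self.price_step = price_step  # assumed rule: price rises after each purchase

    def buy(self, state, item):
        """Update the player's game state if the purchase requirements are met."""
        price = self.prices[item]
        if state["money"] < price:
            return False  # requirements not met, game state unchanged
        state["money"] -= price
        state["inventory"][item] = state["inventory"].get(item, 0) + 1
        # Logic reacting to the purchase loads a new price.
        self.prices[item] = price + self.price_step
        return True


def on_ui_event(graph, state, event):
    """Integration layer: translate a signal from the game runtime into a graph request."""
    if event["type"] == "buy":
        return graph.buy(state, event["item"])
    raise ValueError(f"unsupported event type: {event['type']}")


graph = GameModelGraph({"tomato": 3})
state = {"money": 10, "inventory": {}}
outcomes = [on_ui_event(graph, state, {"type": "buy", "item": "tomato"}) for _ in range(3)]

print(outcomes)      # [True, True, False]
print(state)         # {'money': 3, 'inventory': {'tomato': 2}}
print(graph.prices)  # {'tomato': 5}
```

The third request fails because the price has risen to 5 while the player holds only 3, which shows how logic reacting to a purchase can change later outcomes without any change to the UI code.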

With game integration as described in operation 306, a game developer is not required to manually change (e.g., to copy and paste) design and economy values in the game code, which is inherently error-prone and can often lead to mismatches between a game design and an actual player experience.

In accordance with an embodiment, during operation 310 of the method 300, the game design tool client module 106 performs one or more simulations, wherein each of the one or more simulations includes a playing of the game runtime by a simulation engine (e.g., a simulation service, an AI agent, and the like) to determine behavior of game systems over time and across a multitude of player types (e.g., player profiles).

In accordance with an embodiment, the game model graph (e.g., described in operation 304) is connected with the simulation engine via the integration layer created in operation 306. In accordance with an embodiment, as part of operation 310, the simulation engine may interact with the game runtime, the game state, and the game UI during the running of the simulation. In accordance with an embodiment, a game designer may define values and parameters used for the simulation during operation 304 (e.g., using the nodes). In addition, the simulation engine may apply player profiles (defined below) during the simulation.

Having the game model graph connected to the simulation engine allows simulations to be run throughout a development process (e.g., early in a game design process wherein the game may change substantially, during later stages of development where changes are less significant, and even after release of the game publicly). In accordance with an embodiment, the simulations of operation 310 are run locally (e.g., on the game design tool user device 102). In accordance with another embodiment, the simulations of operation 310 may be run on the server device 140 (e.g., in a cloud service and run at a large scale).

In accordance with an embodiment, after a simulation is complete, or when a simulation is actively stopped, a user may loop back to operation 304 to modify a game model graph and run new simulations.

In accordance with an embodiment, in operation 312 of the method 300, results of simulation from operation 310 may be shown on a display device (e.g., within a user interface).

In accordance with an embodiment, as part of operation 308 of the method 300, the game design tool client module 106 creates or receives player profiles, wherein the player profiles describe a plurality of different player types to be used during a simulation of a game (e.g., as described in operation 310). The player types may modify the simulation of the video game via the game model graph. In accordance with an embodiment, the player profiles may be used for describing different player types to provide realism for simulations (e.g., in order to more accurately simulate a real game playing audience). The player types may include a description of a frequency of how often the player type interacts with one or more features in a game model (e.g., causing a triggering of a Player Action node during a simulation). For example, player types may include ‘casual’ which may have generally lower frequency interactions with the one or more features, ‘hardcore’ which may have generally higher frequency interactions with the one or more features, ‘heavy spender’ which may have higher frequency interactions with purchasing features, and any other type or combination. In accordance with an embodiment, a human game designer (e.g., game developer) can define a plurality of different types of player profiles (e.g., via a user interface), run a plurality of simulations for each type, and analyze results from the plurality of simulations (e.g., perform comparisons of results from different types of player profiles). In accordance with an embodiment, a player profile includes a description of choices available to a simulated player within a game. In accordance with an embodiment, the choices may include one or more actions the simulated player can perform in the game. 
For example, choices may include limitations on locations and means for a player to use resources, limitations on locations and means for a player to watch advertisements, limitations on when a player may purchase an item, limitations on how often a player may play a game, limitations on how long a player may play a game, and more.

In accordance with an embodiment, a player profile may include a skill parameter (e.g., a numeric value). In accordance with an embodiment, a player profile may include data that describes a frequency and amount of playing sessions (e.g., simulations) for the player profile, wherein the data may include values that describe a number of simulations per time period (e.g., simulations per day), a simulation length, and a cooldown period between simulations. The value for simulations per time period may describe how many times a player profile opens a game over the time period, while the simulation length may describe a length of time of a simulation, and the cooldown may describe a minimum amount of time between simulations. In accordance with an embodiment, a player profile may include data that describes actions associated with a player action node (described below), wherein the actions may describe a frequency of triggering of the player action node. In accordance with an embodiment, the triggering of the player action node may be associated with a category of node. For example, an action value in a player profile may be linked to a triggering of one or more of the following: a core game node (described below), a core game progression node (described below), an in-app purchase node (described below), or a combination thereof. As an example, a player profile described as ‘hardcore’ may include values such as 50 simulations per day, a simulation length of 5 minutes, a minimum cooldown of 5 minutes, with each simulation including 5 core game node actions (e.g., 5 different triggerings of a core game node via the player action node per simulation), and 2 in-app purchase node actions (e.g., 2 different triggerings of an in-app purchase node via the player action node per simulation).
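The ‘hardcore’ example above can be written down as a small data structure. The following is a hedged sketch: the class name `PlayerProfile`, its field names, and the two helper methods are assumptions introduced for illustration, and only the numeric values come from the example in the text.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PlayerProfile:
    """Hypothetical container for the profile values named in the text."""
    name: str
    sessions_per_day: int      # simulations per time period (here: per day)
    session_minutes: float     # simulation length
    cooldown_minutes: float    # minimum time between simulations
    core_game_actions: int     # core game node triggerings per simulation
    iap_actions: int           # in-app purchase node triggerings per simulation

    def daily_actions(self) -> dict:
        """Total player action node triggerings per day, by node category."""
        return {
            "core_game": self.sessions_per_day * self.core_game_actions,
            "in_app_purchase": self.sessions_per_day * self.iap_actions,
        }

    def fits_in_a_day(self) -> bool:
        """Check that all sessions plus the cooldowns between them fit in 24 hours."""
        gaps = max(self.sessions_per_day - 1, 0)
        total = self.sessions_per_day * self.session_minutes + gaps * self.cooldown_minutes
        return total <= 24 * 60


# Values taken from the 'hardcore' example in the text.
hardcore = PlayerProfile("hardcore", 50, 5, 5, 5, 2)
```

With these values the profile produces 250 core game actions and 100 in-app purchase actions per simulated day, and the 50 sessions with their cooldowns occupy 495 of the 1440 available minutes.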

In accordance with an embodiment, as part of operation 308, in addition to using predefined player profiles and creating player profiles (e.g., via a user interface), the game design tool client module 106 may use real player behavior data obtained from human players playing a game (e.g., from a game playing service or server that includes a plurality of player data from human players). Using profiles created from human player data may make the simulations in operation 310 more realistic.

In accordance with an embodiment, the simulations in operation 310 may include a Time Machine component that allows for manipulation of a flow of time during a simulation (e.g., by controlling a simulation speed). For example, a user may control time intervals for simulation (e.g., 1 hour, 1 day, 1 week, 1 month, 1 year), or time of day for simulations, or the like. In accordance with an embodiment, the Time Machine component may help optimize a simulation by jumping over large sections of time during which a game state may not change substantially (e.g., due to a lack of user interaction with the system).

In accordance with an embodiment, at operation 314 of the method 300, data from one or more live games (e.g., or benchmark data) may be gathered and input to the simulations. The use of real data from one or more live games enables a designer of a game (e.g., a game developer) to quantify an accuracy of design assumptions made for the game based on the live game data. In accordance with an embodiment, output from the simulations can be analyzed to determine the following: which systems in the game (e.g., nodes within the game model graph) are resource bottlenecks, which systems in the game are large sinks for award items (e.g., gems), and which levels are too hard/easy to complete.

In accordance with an embodiment, a game model graph (e.g., as created in operation 304) may be connected to a plurality of live game services. For example, a plurality of different game code configurations can be generated (e.g., during operation 306) from a plurality of game model graph configurations and delivered through a live operating game distribution or updating service (e.g., via Remote Config™ from Unity Technologies Inc.). The game model graph can also define and send analytic events with full information about the context of different game elements and events.

In accordance with an embodiment, the Game Design Tool UI may support an integration of different live operating tools and services that include the following: analytics on players and player behavior (e.g., via Unity Analytics™ from Unity Technologies Inc.), a monetization of a game through adding advertisements (e.g., via UnityAds™ from Unity Technologies Inc.), an optimization of in-app purchases (e.g., via Unity IAP™ from Unity Technologies Inc.) and a personalization of game experiences (e.g., via GameTune™ from Unity Technologies Inc.). The domain-specific modeling language can contain elements needed for these integrations as pre-integrations that later (e.g., when entering the LiveOps phase) can be taken into use. The integration of elements required for live operations (e.g., within operations 304 and 306 of the method 300) enables a game designer to consider game optimization early on during a game design process and thus may improve player experiences (e.g., when the game gets launched) since components that include ad placements and personalized content are in optimized locations/times in the game flow. In accordance with an embodiment, the live operations can be used to test different game model graphs created during operation 304. In this case the user (e.g., the game designer) may create different models and choose which versions of the game model graph to send out. In accordance with an embodiment, each of the different game model graphs has more than just different configuration values; for example, one set of players may receive a first game model graph wherein the players collect coins in the game and see an ad at the end of the game, while another set of players may receive a second game model graph wherein the player gains score based on how far they progress in the game and then sees an IAP offer at the end of the game. More details on the integration of live operating tools and services are shown with respect to FIG. 8A and FIG. 8B.

In various embodiments, some of the method elements shown in FIG. 3 may be performed concurrently, in a different order than shown, or may be omitted.

In accordance with an embodiment, and shown in FIG. 4 is a data flow diagram 400 of a Game Design tool system 100. In accordance with an embodiment, the data flow diagram 400 includes a game model graph user interface (UI) 404 (e.g., as used in operation 304) which may generate and modify a game model graph 402. The data flow diagram 400 also includes a simulation runtime process 408 which receives and executes (e.g., simulates) the game model graph 402 (e.g., as described with respect to operation 310 of the method). The simulation runtime process 408 may use player profiles as input (e.g., as described in operation 308), wherein the player profiles used in the simulation may come from one or more of a machine learning (ML) agent 424, a predefined scripted player behavior 428, and a perfect player 426. In accordance with an embodiment, simulation results 409 from the simulation runtime 408 are sent to the game model graph UI for display to the user (e.g., as described in operation 312). In accordance with an embodiment, a game runtime 410 (e.g., an executing version of a game) manages a display of a game user interface (UI) 412 (e.g., as part of operation 312) using at least configuration and state data 420 from a game, wherein the configuration and state data 420 is updated by the game runtime 410. The configuration and state data may also be modified by live operations 414 (e.g., as described with respect to operation 314). In accordance with an embodiment, the game runtime 410 may also have an integration layer 406 that allows aspects of the game model graph 402 to be integrated into a game runtime 410. In accordance with an embodiment, the integration layer 406 may send data to the game model graph UI 404. For example, the integration layer 406 may send data directly to a node within a game model graph 402 which is displayed in the game model graph UI 404.

In accordance with an embodiment, as part of operation 304 of the method 300, there is provided a graphical user interface for display on a display device, the graphical user interface (GUI) comprising a means to create a graph of interconnected nodes, wherein the graph of interconnected nodes represents a game model graph 402 as generated in operation 304 of the method 300. The GUI displays a plurality of nodes which may be customized for a game model graph 402. In accordance with an embodiment, and as shown in FIG. 5, is a possible structure of a displayed node 500 within a game model graph 402. In accordance with an embodiment, each node 500 within a game model graph 402 may include a title bar 502 which may include a node icon (e.g., customized for each node) and a node title. In addition, each node includes one or more inputs 504 and one or more outputs 506. For simplicity and visual logical flow of data from left to right on a display screen, the inputs 504 are located on the left of the node 500, while the outputs 506 are located on the right side of the node 500. In accordance with an embodiment, each input is associated with an input connector (e.g., 508A to 508D) which represents a potential connection between an input of the node 500 with an output from an additional node not shown in FIG. 5. Similarly, each output is associated with an output connector (e.g., 510A to 510C) which represents a potential connection between an output of the node 500 with an input from an additional node not shown in FIG. 5.

In accordance with an embodiment, as part of operation 304, during a building of the game model graph, the output connectors (510A to 510C or collectively 510) from a first node 500 are connected to input connectors (508A to 508D or collectively 508) of the same type (e.g., types are described below) from a second node 500 not shown in FIG. 5. For example, Control Flow outputs must be connected to Control Flow inputs, Condition Data type outputs must be connected to Condition Data type inputs, Resource Data type outputs must be connected to Resource Data type inputs, and the like (Control Flow input/outputs, Condition Data input/outputs, and Resource Data input/outputs are described below).

In accordance with an embodiment, an input (or an output) may be one of two different types: either a Control Flow input/output or a Data input/output. In accordance with an embodiment, a Control Flow input/output provides control of data flow and execution of nodes over time (e.g., triggering of node execution). For example, connections along Control Flow inputs and outputs (e.g., from one node to another) determine the order in which a game model graph may be executed (e.g., node execution order within a graph, and data flow from each node in the graph). In accordance with an embodiment, a Data type input includes a plurality of types of data, including the following: a Number Data type, used to pass numerical values; an Enumeration Data type, used to select/pass a value of a pre-defined limited set of values (e.g., Seconds, Minutes, Hours, Days); a Boolean Data type, used to pass either ‘true’ or ‘false’; a Condition Data type, which is an object that is evaluated on demand and can either ‘be met’ (the condition applies) or ‘not be met’ (the condition does not apply); a Resource Data type, which represents a resource item (e.g., from the Library Window) that is part of a modeled economy for a game (e.g., coins, cards, swords, live points, etc.); and a Drop Weight Data type, which is an object used in conjunction with a Weighted Drop node 610 (defined below) to build a lootbox-like system. In accordance with an embodiment, a Data Type input on a node can be associated with an explicit default value, which is used if no value is fed into the node via the input port. Otherwise, an input may have an implicit default value. In accordance with an embodiment, some nodes may provide a means for selecting resources and properties by means of a text field.

In accordance with an embodiment, and shown in FIG. 5 (e.g., and also in FIG. 6A-6Q, FIG. 7, FIG. 8A, FIG. 8B, FIG. 9A, and FIG. 9B), a displayed node 500 within a user interface may have different symbols for different types of inputs/outputs. For convenience of explanation, a Control Flow input/output within FIG. 5 (and also FIG. 6A-6Q, FIG. 7, FIG. 8A, FIG. 8B, FIG. 9A, and FIG. 9B) is depicted by an arrow icon 514. For convenience of explanation, a Data Type input/output within FIG. 5 (and also FIG. 6A-6Q, FIG. 7, FIG. 8A, FIG. 8B, FIG. 9A, and FIG. 9B) is depicted by a plurality of icons depending on a type of data. For example, a Drop Weight Data type is represented by an inverted triangle icon 520, a Resource Data type is represented by a database icon 524, a Condition Data type is represented by a diamond shaped icon 522, a Number Data type and an Enumeration Data type are both represented by a circle icon 512, and a Boolean Data type is represented by a double circle icon 526. In accordance with an embodiment, a Data type input may have a default value displayed 516 with the input. Similarly, a Resource Data type may have a display of a resource 518 which is associated with the input.

In accordance with an embodiment and shown in FIG. 6A through FIG. 6Q are a plurality of different types of nodes to be used in operation 304 when creating a game model graph. An example UI for the game design tool which incorporates the nodes is shown in FIG. 8A and FIG. 8B below.

Core Game Node

In accordance with an embodiment, and shown in FIG. 6A, is a GUI display of a Core Game node 600, wherein the Core Game node 600 is a visual representation of, and controlling UI for, a core game within a game model graph. The Core Game node 600 is a means to represent any event within a game (e.g., a video game) that can be modeled with a win rate (e.g., a rate of winning, or a rate of success) such that an output may be a ‘win’ outcome (e.g., a success at the event), a ‘loss’ outcome (e.g., a failure at the event) or even a tie outcome (e.g., a neutral outcome of the event) (e.g., the ‘tie’ is not shown in FIG. 6A). In accordance with an embodiment, the Core Game node 600 may represent events such as a mini game or challenge (e.g., a treasure hunt, a race, a fight, and more) nested within a larger game (e.g., a game within a game), wherein the larger game is represented by a large game model graph comprising a plurality of nodes and a plurality of interconnected game model graphs (e.g., as shown in FIG. 9A and FIG. 9B). In accordance with an embodiment, the Core Game node 600 may be used in a first mode wherein the Core Game node 600 does not include any details of a mini game or challenge which it represents; rather, it includes the win rate for the mini game or challenge regardless of any details associated with the event. As an example, consider a situation wherein a Core Game node 600 represents a mini treasure hunt within a larger first person shooter video game. The Core Game node 600 may be used to represent the mini treasure hunt regardless of any details of the treasure hunt such as a time duration of the hunt (e.g., a time limit), details of an environment in which the hunt takes place (e.g., layout of environment, style, lighting, etc.), rules for the hunt (e.g., number of treasures to find), and more. 
Rather, the details of the mini treasure hunt are abstracted away (e.g., removed) by representing the mini treasure hunt by the Core Game node 600 (e.g., within a game model graph) since only a win rate is used to determine an outcome of a player within the mini treasure hunt.

In accordance with an embodiment, the Core Game node 600 may be used in a second mode wherein the Core Game node 600 may be linked directly with game code (e.g., as described with respect to operation 306 of the method) wherein details of a mini game or challenge which it represents are included in the game code. In such a mode, the win rate associated with the Core Game node 600 may be linked to the game code to control an aspect of the treasure hunt (e.g., such as a difficulty level wherein the difficulty level is inversely related to the win rate so that a high win rate is related to a low difficulty level, and vice versa).

In accordance with an embodiment, inputs and outputs of the Core Game node 600 may be defined (e.g., by a user) during operation 304, including connecting other node outputs to the Core Game node 600 inputs and connecting outputs from the Core Game node 600 to other node inputs. In accordance with an embodiment, the Core Game node 600 may be linked directly to game code (e.g., during operation 306 of the method 300) and may be linked with an executable version of a game (e.g., an executable file). In accordance with an embodiment, during a designing of a game system (e.g., an economy game system) within operation 304, details within a Core Game node 600 (e.g., details related to gameplay of a core game associated with a Core Game node 600) may not be relevant, so the Core Game node 600 may be considered a “black box” and may be implemented as a random output generator which chooses from a plurality of outputs such as either a ‘Win’ Control Flow output 600D or a ‘Lose’ Control Flow output 600E based on a probability distribution controlled by a ‘Win Rate’ Number Data input 600B and a predefined probability distribution (not shown in FIG. 6A).

In accordance with an embodiment, the Core Game node 600 includes one or more inputs. In accordance with an embodiment, the Core Game node 600 may include a Control Flow input 600A for controlling a triggering of the Core Game node 600. In accordance with an embodiment, the triggering of the Core Game node 600 includes one of the following: an execution of a predetermined Core Game associated with the Core Game node 600 (e.g., an execution of code or an executable file that describes a game) that generates an output result which is either a ‘Win’ 600D or a ‘Lose’ 600E, and an execution of a predefined probability distribution function that generates an output result which is either a ‘Win’ 600D or a ‘Lose’ 600E.

In accordance with an embodiment, a Core Game node 600 may include an input for a Win Rate 600B (e.g., a value from 0 to 1) that represents a probability of a player winning the core game linked to the Core Game node 600. In accordance with an embodiment, there may be a Condition Result input 600C that provides an additional control (e.g., in addition to the Control Flow input 600A) of the triggering of the Core Game node 600. For example, based on the Condition Result 600C evaluating to ‘True’ along with the Control Flow input 600A being received, the Core Game node 600 will be triggered (e.g., “played”) and an output including either a ‘Win’ 600D or ‘Lose’ 600E will be triggered. Based on the Condition Result 600C evaluating to ‘False’, a Condition Not Met output 600F is triggered (e.g., rather than a ‘Win’ 600D or ‘Lose’ 600E output). In accordance with an embodiment, based on no valid connection existing on the Condition Result input 600C, the Condition Result is considered “met” and the Core Game node 600 will be played.

In accordance with an embodiment, the Core Game node 600 ‘Win’ output 600D continues a flow within a graph (e.g., to any other node connected to the ‘Win’ output 600D) based on whether the Core Game node 600 evaluated to a win. In accordance with an embodiment, the Core Game node 600 ‘Lose’ output 600E continues a flow within a graph (e.g., to any other node connected to the ‘Lose’ output 600E) based on whether the core game evaluates to a loss. In accordance with an embodiment, the Core Game node 600 may contain a ‘condition not met’ output 600F that continues a flow within the node system based on whether the core game evaluates to a Condition Result 600C not being met. Accordingly, any node connection connected to the ‘condition not met’ output 600F will be triggered based on a core game evaluating to a condition result not being met.
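The “black box” mode of the Core Game node can be sketched as a function that picks one of the three Control Flow outputs. This is an illustrative assumption rather than the described implementation: the function name `run_core_game` and the output labels are hypothetical, and a single Bernoulli draw against the win rate stands in for the predefined probability distribution, which the text does not specify.

```python
import random
from typing import Optional


def run_core_game(win_rate: float,
                  condition_met: Optional[bool] = None,
                  rng: Optional[random.Random] = None) -> str:
    """Return which Control Flow output fires: 'win', 'lose', or 'condition_not_met'.

    condition_met=None models an unconnected Condition Result input,
    which the text treats as "met".
    """
    rng = rng or random.Random()
    if condition_met is False:
        return "condition_not_met"  # neither 'win' nor 'lose' is triggered
    # Assumed distribution: one Bernoulli draw with success probability win_rate.
    return "win" if rng.random() < win_rate else "lose"


# Illustrative run: estimate the observed win frequency for a 30% win rate.
rng = random.Random(42)
outcomes = [run_core_game(0.3, rng=rng) for _ in range(10_000)]
observed_win_rate = outcomes.count("win") / len(outcomes)
```

Over many triggers the observed frequency should approach the configured win rate, which is the property a simulation relies on when the gameplay details are abstracted away.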

Core Game Progression Node

In accordance with an embodiment, and shown in FIG. 6B, there is provided a GUI display of a Core Game Progression node 602 wherein the Core Game Progression node 602 is a visual representation of, and GUI controller for, player progression within a game. The Core Game Progression node 602 provides a means for modeling (e.g., by an inclusion of a Core Game Progression node 602 within a game model graph) player progression during an execution of a core game (e.g., by connection to a Core Game node 600 within a game model graph). In accordance with an embodiment, inputs and outputs of the Core Game Progression node 602 may be defined (e.g., by a user) during operation 304, including connecting other node outputs to the Core Game Progression node 602 inputs and connecting outputs from the Core Game Progression node 602 to other node inputs.

In accordance with an embodiment, the progression may relate to a measure of advancement within a game level of a game (e.g., a tracking of a player progression within a game), which can include a measure of advancement within a level in a game (e.g., progression along a path, progression towards a level end point, and the like), progression between levels within a game, progression of collectible items within a game (e.g., a number of collected items), progression with respect to weapons in a game (e.g., from simple weapons to more elaborate and powerful weapons), and the like. In accordance with an embodiment, the Core Game Progression node 602 generates (e.g., when triggered by an input control flow 602A) an output progression value 602G that represents a progression value for the Core Game Progression, wherein the progression value is determined by a random numerical value between the two inputs (e.g., min 602B and max 602C) to the Core Game Progression node 602. The two inputs provide limitations for the output progression value 602G. In accordance with an embodiment, the Core Game Progression node 602 may have additional inputs, including a Control Flow input 602A which triggers (e.g., an execution of) the Core Game Progression node 602, and an input for a Condition Result 602D which provides a conditional control on the triggering. For example, based on the input condition result 602D evaluating to “true”, the Core Game Progression node 602 will be “played” (e.g., executed to generate an output progress value 602G) and trigger a Control Flow output 602E (e.g., to signify that the node had successfully activated). Based on the input condition result 602D evaluating to “false”, the ‘Condition Not Met’ Control Flow output 602F will be triggered and no output progress value 602G is generated. In accordance with an embodiment, based on no valid connection existing at the input, the input Condition Result 602D may be considered “met” (e.g., as a default).
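A Core Game Progression node can be sketched along the same lines. In the hedged example below, the function name `run_progression` and the output labels are hypothetical, and a uniform draw is an assumption: the text only states that the value is random and lies between the min and max inputs.

```python
import random
from typing import Optional, Tuple


def run_progression(min_value: float,
                    max_value: float,
                    condition_met: Optional[bool] = None,
                    rng: Optional[random.Random] = None) -> Tuple[str, Optional[float]]:
    """Return (control flow output, progression value).

    An unconnected condition (None) is treated as met. When the condition
    is not met, no progression value is generated.
    """
    rng = rng or random.Random()
    if condition_met is False:
        return ("condition_not_met", None)
    # Assumed distribution: uniform between the two limiting inputs.
    return ("out", rng.uniform(min_value, max_value))


flow, value = run_progression(1, 5, rng=random.Random(0))
blocked = run_progression(1, 5, condition_met=False)
```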

Faucet Node

In accordance with an embodiment, and shown in FIG. 6C, there is provided a Faucet node 604, wherein the Faucet node 604, when triggered by a Control Flow input 604A within a game model graph, performs an action of adding an amount of a resource to a game state of a player. In accordance with an embodiment, the amount of the resource to be added may be determined with a number data type input 604C (e.g., an input labeled ‘How Many’ with a default value of 10). The number data type input 604C includes a numerical value for an amount of items of the resource that is added to the game state of the player by the Faucet node 604 every time the Faucet node 604 is triggered. In accordance with an embodiment, the Faucet node 604 includes a resource data type input 604B that includes a reference to a resource (e.g., a link to a resource in a library created in operation 302) that is added to the game state of the player when the Faucet node 604 is triggered. In accordance with an embodiment, the Faucet node 604 may include a Control Flow input 604A to trigger the Faucet node 604 to add the resource to the game state. In accordance with an embodiment, the Faucet node 604 may include a number data type input 604D that represents an upper limit of a total amount (e.g., a maximum total amount) of the resource that a game state of a player may include (e.g., to stop the Faucet node 604 from adding resource items once the limit is reached). For example, consider a player currently holding 23 coins, and a Faucet node 604 that produces 10 coins per visit (e.g., each time the faucet is triggered) and with a limit of 30 coins. Due to the limit of 30 coins, the Faucet node 604 would only produce an additional 7 coins (e.g., instead of producing 10 coins) so that the limit of (23+7=) 30 coins is not exceeded. In accordance with an embodiment, the Faucet node 604 may include a Control Flow output to continue a flow after the faucet performs the action of adding the resource items.
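The limit arithmetic in the coin example can be checked with a short sketch. The function name `faucet` and its parameter names are hypothetical, and leaving the amount unchanged when the player already holds more than the limit is an assumption, since the text does not cover that case.

```python
from typing import Optional


def faucet(current: int, how_many: int = 10, limit: Optional[int] = None) -> int:
    """Return the resource amount after one trigger of a faucet.

    how_many mirrors the 'How Many' input (default 10 in the text);
    limit mirrors the optional upper-limit input.
    """
    if limit is None:
        return current + how_many
    # Add only as much as still fits under the limit, and never a negative amount.
    added = max(0, min(how_many, limit - current))
    return current + added


# The example from the text: 23 coins held, 10 per trigger, limit of 30.
coins = faucet(23, how_many=10, limit=30)
```

Here `coins` is 30, because only 7 of the 10 coins fit under the limit, matching the example in the text.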

In accordance with an embodiment, inputs and outputs of the Faucet node 604 may be defined (e.g., by a user) during operation 304, including connecting other node outputs to the Faucet node 604 inputs and connecting outputs from the Faucet node 604 to other node inputs.

Sink Node

In accordance with an embodiment, and shown in FIG. 6D, there is provided a Sink node 606, wherein the Sink node 606, when triggered by a Control Flow input 606A within a game model graph, performs an action of removing an amount of a resource from a game state of a player. In accordance with an embodiment, the amount of the resource may be determined with a number data type input 606C (e.g., an input labeled ‘How Many’ in FIG. 6D with a default value of 10). The number data type input 606C includes a value for an amount of resource items 606B that are removed from a game state of the player by the Sink node 606 every time the Sink node 606 is triggered (e.g., within a game model graph). In accordance with an embodiment, the Sink node 606 includes a resource data type input 606B that includes a reference to an existing resource (e.g., a link to a resource in a library created in operation 302) that is removed from the game state of the player when the Sink node 606 is triggered.

In accordance with an embodiment, the Sink node 606 may include a Control Flow input 606A that triggers the Sink node 606. In accordance with an embodiment, the Sink node 606 may include a Control Flow output 606E to continue a flow after the Sink node 606 removes the resource items. In accordance with an embodiment, the Sink node 606 may have a Boolean data type input 606D that allows a negative value of an amount of the resource, wherein the Sink node 606 can lead to a negative amount of the resource in the game state (e.g., if Allow Negative in FIG. 6D is “true”).
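The effect of the Allow Negative input can be sketched as follows. The function name `sink` is hypothetical, and clamping at zero when Allow Negative is false is an assumption: the text states only that a negative amount becomes possible when the input is “true”.

```python
def sink(current: int, how_many: int = 10, allow_negative: bool = False) -> int:
    """Return the resource amount after one trigger of a sink."""
    if allow_negative:
        return current - how_many  # the balance may drop below zero
    # Assumed behavior: remove at most what the player currently holds.
    removed = min(how_many, max(current, 0))
    return current - removed


clamped = sink(4, how_many=10)                       # stops at zero
overdrawn = sink(4, how_many=10, allow_negative=True)  # goes negative
```

With 4 items held and 10 requested, `clamped` is 0 and `overdrawn` is -6.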

In accordance with an embodiment, inputs and outputs of the Sink node 606 may be defined (e.g., by a user) during operation 304, including connecting other node outputs to the Sink node 606 inputs and connecting outputs from the Sink node 606 to other node inputs.

Upgrade Node

In accordance with an embodiment, and shown in FIG. 6E, there is provided an Upgrade node 608, wherein the Upgrade node 608 is used to build an upgrade system within a game model graph, by evaluating an input Condition Result. In accordance with an embodiment, the Upgrade node 608 may also include a Control Flow input 608A used to trigger the Upgrade node 608. In accordance with an embodiment, the Upgrade node 608 includes a ‘success’ Control Flow output 608C that is triggered when the input ‘Condition Result’ 608B is met, and the Upgrade node 608 includes a ‘fail’ Control Flow output 608D that is triggered when the ‘Condition Result’ 608B is not met.

In accordance with an embodiment, inputs and outputs of the Upgrade node 608 may be defined (e.g., by a user) during operation 304, including connecting other node outputs to the Upgrade node 608 inputs and connecting outputs from the Upgrade node 608 to other node inputs.

Weighted Drop Node

In accordance with an embodiment, and shown in FIG. 6F, there is provided a Weighted Drop node 610, wherein the Weighted Drop node 610 performs an action that includes selecting one out of a plurality of Drop Weight inputs (described below in the ‘Drop Weight node’ section) for each internal run (e.g., execution) of the Weighted Drop node 610 (e.g., wherein a number of runs is determined by an input to the Weighted Drop node 610). In accordance with an embodiment, each run of the number of runs generates an output Drop Weight. In accordance with an embodiment, a number of Drop Weight inputs for a Weighted Drop node 610 may be determined when the Weighted Drop node 610 is added to a game model graph (e.g., by a user during a graph creation). In accordance with an embodiment, there is a minimum of two Drop Weight inputs. In accordance with an embodiment, a number of internal runs to be performed by the Weighted Drop node 610 is determined by an input 610B (e.g., a “# of Runs” input as shown in FIG. 6F). In accordance with an embodiment, the selecting of an output is performed by applying a probability distribution to the number of Drop Weight inputs based on a weight of each Drop Weight (e.g., the weight of a drop weight is described below), and selecting one of the Drop Weight inputs as an output. Accordingly, the Weighted Drop node 610 may determine a probability weight for an input Drop Weight by using a value associated with the Drop Weight input as a weight. For example, consider the example Weighted Drop node 610 in FIG. 6F that includes two Drop Weight inputs (610C and 610D) and that would have two associated Drop Weight values (e.g., during execution when connected to two Drop Weight nodes 612 described below), wherein a first Drop Weight input 610C is associated with a first value ‘A’ and a second Drop Weight input 610D is associated with a second value ‘B’. 
A first probability may be determined for the first Drop Weight input 610C that is equal to a percentage of the first value ‘A’ compared to a total value of Drop Weight inputs (e.g., an addition of values ‘A’ and ‘B’), or the first probability = 100% × ‘A’/(‘A’+‘B’). Similarly, a second probability may be determined for the second Drop Weight input 610D that is equal to a percentage of the second value ‘B’ compared to a total value of Drop Weight inputs (e.g., an addition of values ‘A’ and ‘B’), or the second probability = 100% × ‘B’/(‘A’+‘B’). Continuing with the example, during operation, the first Drop Weight input 610C is selected during the number of runs 610B with a probability of the first probability, and the second Drop Weight input 610D is selected during the number of runs 610B with a probability of the second probability. Accordingly, a Weighted Drop node 610 allows for a modeling of quasi-random systems including Lootboxes, reward systems, and enemy encounters.
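By way of illustration only, the weight-to-probability conversion and the per-run selection described above may be sketched in Python as follows. The function names `drop_probabilities` and `weighted_drop` are hypothetical and do not appear in the disclosure; the sketch assumes that weights are non-negative numbers and that each internal run is an independent draw.

```python
import random
from typing import List, Optional, Sequence


def drop_probabilities(weights: Sequence[float]) -> List[float]:
    """Convert raw Drop Weight values into selection probabilities.

    For two weights A and B this yields A/(A+B) and B/(A+B).
    """
    total = sum(weights)
    if total <= 0:
        raise ValueError("weights must sum to a positive value")
    return [w / total for w in weights]


def weighted_drop(weights: Sequence[float], runs: int,
                  rng: Optional[random.Random] = None) -> List[int]:
    """Return, for each internal run, the index of the selected input.

    Assumption: runs are independent draws proportional to the weights.
    """
    rng = rng or random.Random()
    return rng.choices(range(len(weights)), weights=weights, k=runs)
```

For example, `drop_probabilities([1, 3])` gives 25% for the first input and 75% for the second, and `weighted_drop([1, 3], runs=10)` returns ten selected input indices.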

In accordance with an embodiment, the inputs to a Weighted Drop node 610 include the following: a Control Flow input 610A to trigger the Weighted Drop node 610 to select a Drop Weight, a number data type input (e.g., labeled ‘# of Runs’ 610B) to describe how many times the Weighted Drop node 610 runs and selects a Drop Weight internally, and a plurality of Drop Weight data type inputs (e.g., from which the Weighted Drop node 610 selects). In accordance with an embodiment, the Weighted Drop node 610 includes a Control Flow output 610E that is triggered when all internal runs of the Weighted Drop node 610 are finished.

In accordance with an embodiment, inputs and outputs of the Weighted Drop node 610 may be defined (e.g., by a user) during operation 304, including connecting other node outputs to the Weighted Drop node 610 inputs and connecting outputs from the Weighted Drop node 610 to other node inputs.

Drop Weight Node

In accordance with an embodiment, and shown in FIG. 6G, there is provided a Drop Weight node 612, wherein the Drop Weight node 612 provides a candidate Drop Weight used as an input to a Weighted Drop node 610. In accordance with an embodiment, when triggered during operation (e.g., during execution of a game model graph), the Drop Weight node 612 drops an amount of a resource (e.g., the amount determined by an input 612B to the Drop Weight node 612) into a game state of a player based on the Drop Weight node 612 being selected by the Weighted Drop node 610 (e.g., based on a probability determined by the Weighted Drop node 610, wherein the determination is based on a weight value 612C of the Drop Weight node 612). In accordance with an embodiment, the Drop Weight node 612 includes the following inputs: a Resource Data type input 612A including a reference (e.g., link) to a resource that is added to a game state of a player based on the Drop Weight node 612 being selected by the Weighted Drop node 610, a Number Data type input 612B representing the amount (e.g., number) of resource items that are to be added to the game state of the player, and a Number Data type input 612C (e.g., referred to as ‘weight’ in FIG. 6G) representing a probability weight that the Drop Weight node 612 (e.g., and the associated resource 612A) is selected by the Weighted Drop node 610. For example, the weight number data type input value may be relative to weights associated with other Drop Weights connected to a same Weighted Drop node 610 (e.g., as described with respect to the Weighted Drop node 610 above). In accordance with an embodiment, an output 612D of the Drop Weight node 612 is a Drop Weight object, wherein the Drop Weight object may include the resource 612A, the amount 612B, and the weight 612C.

In accordance with an embodiment, an example of a Weighted Drop node 610 and a Drop Weight node 612 is shown in FIG. 7. As part of the example shown in FIG. 7, consider a Weighted Drop node 720 (e.g., similar to the Weighted Drop node 610 in FIG. 6F) with the following three Drop Weight node inputs: an Archer Drop Weight node 700 (e.g., similar to Drop Weight node 612), a Goblin Drop Weight node 704 (e.g., similar to Drop Weight node 612), and a Knight Drop Weight node 708 (e.g., similar to Drop Weight node 612) for dropping resources associated with Archers, Goblins and Knights respectively (e.g., with Archers, Goblins and Knights being resources within a game). Furthermore, according to the example shown in FIG. 7, the three Drop Weight nodes (700, 704, and 708) have weights of 3, 6, and 9 for dropping Archers, Goblins, and Knights respectively. Accordingly, a probability (e.g., 3/18 or ~16.667%) of dropping an Archer is half of a probability (e.g., 6/18 or ~33.333%) of dropping a Goblin, whereas a probability (e.g., 9/18 or 50%) of dropping a Knight is three times as high as dropping an Archer.
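As a non-limiting illustration, the arithmetic of the FIG. 7 example may be reproduced with a short Python sketch. The `DropWeight` class and the `selection_probabilities` function are hypothetical names chosen for this sketch, and the amount of 1 per drop is an assumption, since the example above specifies only the weights.

```python
from dataclasses import dataclass
from fractions import Fraction
from typing import Dict, List


@dataclass(frozen=True)
class DropWeight:
    resource: str  # reference to the resource (input 612A)
    amount: int    # number of items added when selected (input 612B)
    weight: int    # relative probability weight (input 612C)


def selection_probabilities(drops: List[DropWeight]) -> Dict[str, Fraction]:
    """Exact probability of each Drop Weight being selected."""
    total = sum(d.weight for d in drops)
    return {d.resource: Fraction(d.weight, total) for d in drops}


# Weights from the FIG. 7 example; amounts of 1 are assumed for illustration.
drops = [
    DropWeight("Archer", 1, 3),
    DropWeight("Goblin", 1, 6),
    DropWeight("Knight", 1, 9),
]
probs = selection_probabilities(drops)
```

Here `probs` holds the exact fractions 1/6, 1/3, and 1/2 for Archers, Goblins, and Knights respectively, matching the percentages given above.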

In accordance with an embodiment, inputs and outputs of the Drop Weight node 612 may be defined (e.g., by a user) during operation 304, including connecting other node outputs to the Drop Weight node 612 inputs and connecting outputs from the Drop Weight node 612 to other node inputs.

Time Limited Amount Node

In accordance with an embodiment, and shown in FIG. 6H, there is provided a Time Limited Amount node 614, wherein the Time Limited Amount node 614 provides a timer as a means to control a functioning of a second node over time (e.g., to control an amount of production of a resource over time via a Faucet node 604, to control an amount of reduction of a resource over time via a Sink node 606, and the like). For example, the Time Limited Amount node 614 may be used in combination with a second node (e.g., with a Faucet node 604), to control how much the second node produces (e.g., of a resource) in a given time frame (e.g., wherein the time frame is described in the Time Limited Amount node 614).

In accordance with an embodiment, the Time Limited Amount node 614 includes the following inputs: a Control Flow input 614A to trigger (e.g., reset) the Time Limited Amount node 614 and output an amount, a number data type input 614B representing the amount that is produced by the Time Limited Amount node 614 every time it is triggered (e.g., unless a limit is reached), a number data type input 614C representing a limit for an aggregated amount that can be produced since a last reset (e.g., triggering), and a number data type input 614D representing a reset time (e.g., in seconds) after which the produced amount 614B is set back to 0 (e.g., to create a pause of production). In accordance with an embodiment, the Time Limited Amount node 614 includes a number data type output 614E equal to the amount 614B and passes it on (e.g., to a next node in a game model graph) if the limit 614C has not yet been reached.
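One possible reading of the Time Limited Amount behavior may be sketched in Python as follows. The class name, the `trigger` method, and the explicit `now` argument are hypothetical. The sketch further assumes that the reset window begins at time 0 and restarts at the first trigger on or after the reset time, and that an output of 0 is passed on once the limit has been reached; the disclosure does not fix these details.

```python
from dataclasses import dataclass


@dataclass
class TimeLimitedAmount:
    amount: float       # produced per trigger (input 614B)
    limit: float        # cap on the aggregate since the last reset (input 614C)
    reset_time: float   # seconds after which the aggregate returns to 0 (input 614D)
    produced: float = 0.0      # aggregate produced in the current window
    window_start: float = 0.0  # simulation time at which the window began

    def trigger(self, now: float) -> float:
        """Return the amount passed on (output 614E) at time `now` in seconds."""
        if now - self.window_start >= self.reset_time:
            # Reset time elapsed: start a new window with nothing produced.
            self.produced = 0.0
            self.window_start = now
        if self.produced >= self.limit:
            # Assumption: nothing is passed on once the limit is reached.
            return 0.0
        self.produced += self.amount
        return self.amount
```

For instance, with an amount of 10, a limit of 20, and a reset time of 60 seconds, the first two triggers each pass on 10, further triggers within the same 60 seconds pass on 0, and a trigger at 60 seconds passes on 10 again.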

In accordance with an embodiment, inputs and outputs of the Time Limited Amount node 614 may be defined (e.g., by a user) during operation 304, including connecting other node outputs to the Time Limited Amount node 614 inputs and connecting outputs from the Time Limited Amount node 614 to other node inputs.

Weighted Random Node

In accordance with an embodiment, and shown in FIG. 6I, there is provided a Weighted Random node 616, wherein the Weighted Random node 616 provides a means of producing a plurality of weighted Control Flow outputs (616E, 616F, and 616G), wherein only one of the outputs is triggered at a time (e.g., with each input triggering of the Weighted Random node 616). In accordance with an embodiment, the Weighted Random node 616 includes the following inputs: a Control Flow input 616A to randomly trigger one of the plurality of weighted Control Flow outputs (616E, 616F, and 616G), and a corresponding plurality of number data inputs (616B, 616C, and 616D) representing weights (e.g., which can be converted to probabilities) for each corresponding output to be triggered. While FIG. 6I shows 3 number data inputs (616B, 616C, and 616D) and 3 corresponding weighted Control Flow outputs (616E, 616F, and 616G), there can be any number of corresponding inputs and outputs. For example, in accordance with an embodiment, the number of inputs/outputs can be configured directly within the Weighted Random node 616 UI.
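For illustration only, the one-of-many triggering performed by a Weighted Random node may be sketched in Python as follows. The `WeightedRandom` class is a hypothetical name, and modeling each Control Flow output as a callable is an assumption made for this sketch rather than a detail of the disclosure.

```python
import random
from typing import Callable, Optional, Sequence


class WeightedRandom:
    """Fires exactly one of several outputs per trigger, chosen by weight."""

    def __init__(self, weights: Sequence[float],
                 outputs: Sequence[Callable[[], None]],
                 rng: Optional[random.Random] = None) -> None:
        if len(weights) != len(outputs):
            raise ValueError("one weight is required per output")
        self.weights = list(weights)
        self.outputs = list(outputs)
        self.rng = rng or random.Random()

    def trigger(self) -> int:
        """Handle one Control Flow input; return the index of the fired output."""
        index = self.rng.choices(range(len(self.outputs)),
                                 weights=self.weights, k=1)[0]
        self.outputs[index]()  # only the selected output is triggered
        return index
```

Because the weights are relative, weights of 1, 1, and 2 would fire the third output about half of the time over many triggers.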

In accordance with an embodiment, inputs and outputs of the Weighted Random node 616 may be defined (e.g., by a user) during operation 304, including connecting other node outputs to the Weighted Random node 616 inputs and connecting outputs from the Weighted Random node 616 to other node inputs.

Activate Node

In accordance with an embodiment, and shown in FIG. 6J, there is provided an Activate node 618, wherein the Activate node 618 provides a means of controlling execution flow within a game model graph. The Activate node 618 controls a time interval for how often a Control Flow output 618C is activated (e.g., triggered). The time interval may be provided in any time unit (e.g., seconds, minutes, hours, days or the like). In accordance with an embodiment, the Activate node 618 includes the following inputs: a number type input 618A representing a time interval value at which the Activate node 618 triggers (e.g., during operation when a game model graph is executed), and a number type input 618B representing a time unit for the time interval (e.g., seconds, minutes, hours, days). In accordance with an embodiment, the Activate node 618 includes a Control Flow output 618C that is triggered at the given time intervals (e.g., provided by the input 618A).
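As a minimal sketch of the interval-based triggering, the following Python function lists the simulation times at which the Control Flow output would fire. The function name `activation_times`, the `SECONDS_PER_UNIT` table, and the use of a string for the time unit are hypothetical choices for this sketch. It also assumes that the first trigger occurs after one full interval has elapsed, which the disclosure does not specify.

```python
from typing import List

# Assumed mapping from the time unit input (618B) to seconds.
SECONDS_PER_UNIT = {"seconds": 1, "minutes": 60, "hours": 3600, "days": 86400}


def activation_times(interval: float, unit: str,
                     duration_seconds: float) -> List[float]:
    """Times (in seconds) at which the output fires within the duration."""
    step = interval * SECONDS_PER_UNIT[unit]
    if step <= 0:
        raise ValueError("interval must be positive")
    count = int(duration_seconds // step)
    # Multiply rather than accumulate to avoid floating point drift.
    return [step * k for k in range(1, count + 1)]
```

For example, an interval of 2 with a unit of minutes over a 600 second simulation fires at 120, 240, 360, 480, and 600 seconds.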

In accordance with an embodiment, inputs and outputs of the Activate node 618 may be defined (e.g., by a user) during operation 304, including connecting other node outputs to the Activate node 618 inputs and connecting outputs from the Activate node 618 to other node inputs.

Condition Node

In accordance with an embodiment, and shown in FIG. 6K, there is provided a Condition node 620, wherein the Condition node 620 provides a means of specifying a resource-based condition to guard segments of a game economy flow. The Condition node 620 output 620D is triggered if the Condition node 620 receives a specified amount of a specified resource which are both input to the Condition node 620. Optionally, based on a ‘Consume’ number type Boolean input 620C being true, the resource can be consumed during a triggering of the Condition node 620.

In accordance with an embodiment, a Condition node 620 may include the following inputs: a resource data type input 620A that includes a reference to a resource (e.g., the specified resource), a number data type input 620B (‘Required’) that represents an amount of the resource 620A that is required for the condition to apply (e.g., the specified amount), and a number data Boolean type input 620C (‘Consume’) to signify whether the required amount of the resource should be consumed when the condition applies. In accordance with an embodiment, the Condition node 620 provides an output condition 620D (e.g., as a condition data type) which is a Condition object to be evaluated later in the context of the Control Flow.

In accordance with an embodiment, several Condition nodes 620 can be combined by a Condition Combiner node 622 (described below). Accordingly, a Condition node 620 is evaluated in a deferred way via a Condition Combiner node 622, whereby a resource is only consumed if a combined conditional construct within a Condition Combiner that includes the Condition node 620 applies. In accordance with an embodiment, a Condition may be used with the following nodes: a Condition Combiner node 622, an Upgrade node 608, a Core Game node 600, and a Core Game Progression node 602.
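The deferred evaluation of a single Condition may be illustrated with the following Python sketch, in which a Condition object is created first and only later checked against a player's game state. The `Condition` class, its `applies` and `commit` methods, the `evaluate` helper, and the representation of the game state as a dictionary of resource amounts are all assumptions made for this sketch.

```python
from dataclasses import dataclass
from typing import Dict


@dataclass(frozen=True)
class Condition:
    resource: str    # reference to the resource (input 620A)
    required: float  # amount required for the condition to apply (input 620B)
    consume: bool    # whether the amount is consumed when it applies (input 620C)

    def applies(self, state: Dict[str, float]) -> bool:
        """Check the condition without changing the game state."""
        return state.get(self.resource, 0) >= self.required

    def commit(self, state: Dict[str, float]) -> None:
        """Consume the required amount, if the Consume input is true."""
        if self.consume:
            state[self.resource] = state.get(self.resource, 0) - self.required


def evaluate(condition: Condition, state: Dict[str, float]) -> bool:
    """Deferred evaluation: the resource is consumed only if the condition applies."""
    if not condition.applies(state):
        return False
    condition.commit(state)
    return True
```

With a game state holding 30 coins, a Condition requiring 20 coins with Consume set to true applies once and leaves 10 coins; a second evaluation does not apply and leaves the game state unchanged.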

In accordance with an embodiment, inputs and outputs of the Condition node 620 may be defined (e.g., by a user) during operation 304, including connecting other node outputs to the Condition node 620 inputs and connecting outputs from the Condition node 620 to other node inputs.

Condition Combiner Node

In accordance with an embodiment, and shown in FIG. 6L, there is provided a Condition Combiner node 622 that provides a means to combine a plurality of Conditions using Boolean operators (e.g., including AND and OR operators), wherein the plurality of conditions originate in Condition nodes 620. An output is generated when the input conditions satisfy the operator function. For example, using the AND operator means that all input conditions must apply (e.g., all input conditions are met) in order to have a combined output result that applies (e.g., output condition that is met). Using the OR operator means that at least one of the input conditions has to apply (e.g., be met) in order to have a combined output result that applies (e.g., output condition that is met). The Condition Combiner 622 may use any Boolean operator and is not limited to AND and OR operators. In accordance with an embodiment, a type of operator and a number of inputs for a Condition Combiner 622 can be selected (e.g., via a UI).

In accordance with an embodiment, the Condition Combiner node 622 provides an output combined condition (e.g., as a condition data type) which is a Condition object to be evaluated later in the context of the Control Flow. The output combined condition is a condition statement that combines the input conditions with the operator function. For example, based on condition1 622A and condition2 622B being satisfied and the operator being AND (e.g., as in FIG. 6L), the output condition would be a combined condition of ‘condition1’ AND ‘condition2’.
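A simplified Python sketch of combining Conditions with AND and OR operators is given below. All names (`Condition`, `CombinedCondition`, `evaluate`) are hypothetical. The sketch covers only a flat list of input Conditions and does not model nested Condition Combiners. It also assumes that, when the combined condition applies, only those inputs that individually apply and have Consume set to true are consumed; the disclosure does not specify this detail for the OR operator.

```python
from dataclasses import dataclass
from typing import Callable, Dict, Iterable, Tuple

State = Dict[str, float]


@dataclass(frozen=True)
class Condition:
    resource: str
    required: float
    consume: bool = False

    def applies(self, state: State) -> bool:
        return state.get(self.resource, 0) >= self.required


@dataclass(frozen=True)
class CombinedCondition:
    # Boolean operator over the input results: `all` for AND, `any` for OR.
    operator: Callable[[Iterable[bool]], bool]
    inputs: Tuple[Condition, ...]

    def applies(self, state: State) -> bool:
        return self.operator(c.applies(state) for c in self.inputs)


def evaluate(combined: CombinedCondition, state: State) -> bool:
    """Consume resources only if the combined construct as a whole applies."""
    if not combined.applies(state):
        return False
    for c in combined.inputs:
        # Assumption: only inputs that individually apply are consumed.
        if c.consume and c.applies(state):
            state[c.resource] -= c.required
    return True
```

With 30 coins and 1 gem, combining "20 coins" AND "5 gems" does not apply and consumes nothing, whereas combining the same two Conditions with OR applies and consumes only the 20 coins.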

In accordance with an embodiment, a Condition Combiner node 622 may be used with the following nodes: a Condition Combiner node 622, an Upgrade node 608, a Core Game node 600, and a Core Game Progression node 602. In accordance with an embodiment, inputs and outputs of the Condition Combiner node 622 may be defined (e.g., by a user) during operation 304, including connecting other node outputs to the Condition Combiner node 622 inputs and connecting outputs from the Condition Combiner node 622 to other node inputs.

Get Amount Node

In accordance with an embodiment, and shown in FIG. 6M, there is provided a Get Amount node 624, wherein the Get Amount node 624 provides a means of accessing (e.g., retrieving) a value that represents an amount of a resource (e.g., specified as an input to the node 624). The Get Amount node 624 may query a library to retrieve the value. For example, if there were 26 coins in a library, the Get Amount node 624 would retrieve the value 26. In accordance with an embodiment, based on the resource being an inventory with several items, the amount may be a value representing an aggregated amount of all items. In accordance with an embodiment, the Get Amount node 624 may have a resource input 624A that includes a reference to a resource (e.g., from a library) of which an amount of the resource is going to be determined (e.g., looked up in a database). In accordance with an embodiment, the Get Amount node 624 may have a number output representing the determined amount of the resource.
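By way of example only, the lookup performed by a Get Amount node may be sketched in Python as follows. The `get_amount` function and the representation of the library as a dictionary (with an inventory stored as a nested dictionary of item amounts) are assumptions made for this sketch, as is returning 0 for a resource that is not present.

```python
from typing import Dict, Union

# Assumed library layout: a resource maps either to a single amount or,
# for an inventory, to a mapping of item names to amounts.
Library = Dict[str, Union[float, Dict[str, float]]]


def get_amount(library: Library, resource: str) -> float:
    """Return the amount of a resource; inventories are aggregated."""
    entry = library.get(resource, 0)
    if isinstance(entry, dict):
        # Inventory with several items: sum the amounts of all items.
        return sum(entry.values())
    return entry


library: Library = {"coins": 26, "backpack": {"sword": 1, "potion": 4}}
```

Here `get_amount(library, "coins")` retrieves 26, as in the example above, and `get_amount(library, "backpack")` retrieves the aggregated amount of 5.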

In accordance with an embodiment, inputs and outputs of the Get Amount node 624 may be defined (e.g., by a user) during operation 304, including connecting other node outputs to the Get Amount node 624 inputs and connecting outputs from the Get Amount node 624 to other node inputs.

Set Amount Node