U.S. Pat. No. 11,508,137

STORAGE MEDIUM STORING INFORMATION PROCESSING PROGRAM, INFORMATION PROCESSING APPARATUS, INFORMATION PROCESSING SYSTEM, AND INFORMATION PROCESSING METHOD FOR ENLARGING/REDUCING AN OBJECT IN A VIRTUAL SPACE

Assignee: NINTENDO CO., LTD.

Issue Date: March 6, 2020

Illustrative Figure

Abstract

A three-dimensional object for which three orthogonal object axes are set is disposed in a virtual space. One or two of the three object axes are determined as an enlargement/reduction axis, based on an orientation of the three-dimensional object in the virtual space. A user's operation input is associated with the enlargement/reduction axes. The three-dimensional object is at least enlarged or reduced based on the association of the user's operation input with the enlargement/reduction axes.

Description

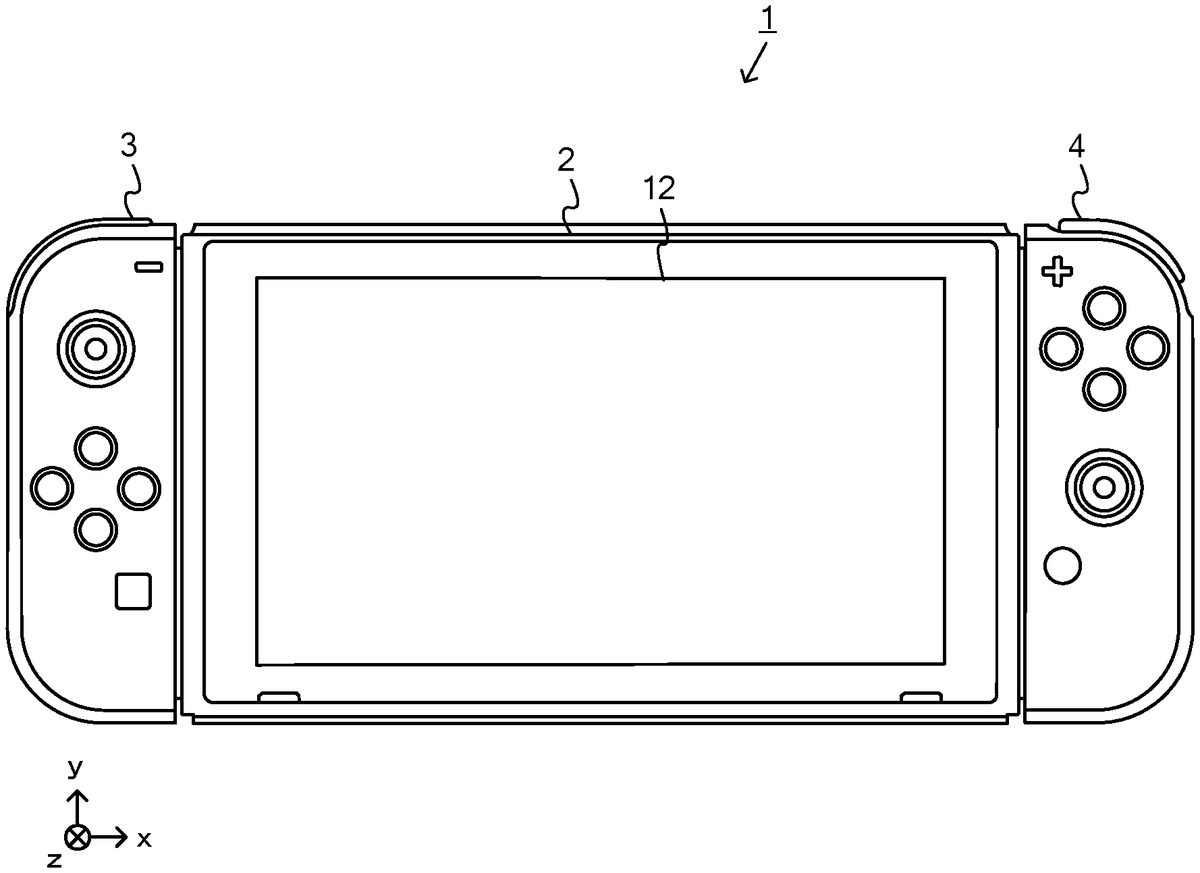

DETAILED DESCRIPTION OF NON-LIMITING EXAMPLE EMBODIMENTS

An information processing system according to this non-limiting example will now be described. The information processing system of this non-limiting example, which is, for example, a game system 1, includes a main body apparatus (information processing apparatus; in this non-limiting example, serving as a game device main body) 2, and a left controller 3 and a right controller 4. The left controller 3 and the right controller 4 are attachable to and detachable from the main body apparatus 2. That is, when the left controller 3 and the right controller 4 are attached to the main body apparatus 2, the game system 1 can be used as a unified apparatus. Also, in the game system 1, the main body apparatus 2 and the left controller 3 and the right controller 4 can be used separately from each other (see FIG. 2). A hardware configuration of the game system 1 of this non-limiting example will now be described before the control of the game system 1 of this non-limiting example is described.

FIG. 1 is a diagram showing a non-limiting example of the state that the left controller 3 and the right controller 4 are attached to the main body apparatus 2. As shown in FIG. 1, each of the left controller 3 and the right controller 4 is attached to and unified with the main body apparatus 2. The main body apparatus 2 is an apparatus for performing various processes (e.g., game processing) in the game system 1. The main body apparatus 2 includes a display 12. Each of the left controller 3 and the right controller 4 is an apparatus including operation sections with which a user provides inputs.

FIG. 2 is a diagram showing a non-limiting example of the state that each of the left controller 3 and the right controller 4 is detached from the main body apparatus 2. As shown in FIGS. 1 and 2, the left controller 3 and the right controller 4 are attachable to and detachable from the main body apparatus 2. It should be noted that hereinafter, the left controller 3 and the right controller 4 will occasionally be referred to collectively as a “controller”.

FIG. 3 is six orthogonal views showing a non-limiting example of the main body apparatus 2. As shown in FIG. 3, the main body apparatus 2 includes an approximately plate-shaped housing 11. In this non-limiting example, a main surface (in other words, a surface on a front side, i.e., a surface on which the display 12 is provided) of the housing 11 has a generally rectangular shape.

It should be noted that the shape and the size of the housing 11 are optional. As a non-limiting example, the housing 11 may be of a portable size. Further, the main body apparatus 2 alone or the unified apparatus obtained by attaching the left controller 3 and the right controller 4 to the main body apparatus 2 may function as a mobile apparatus. The main body apparatus 2 or the unified apparatus may function as a handheld apparatus or a portable apparatus.

As shown in FIG. 3, the main body apparatus 2 includes the display 12, which is provided on the main surface of the housing 11. The display 12 displays an image generated by the main body apparatus 2. In this non-limiting example, the display 12 is a liquid crystal display device (LCD). The display 12, however, may be a display device of any type.

In addition, the main body apparatus 2 includes a touch panel 13 on the screen of the display 12. In this non-limiting example, the touch panel 13 is of a type that allows multi-touch input (e.g., a capacitive touch panel). It should be noted that the touch panel 13 may be of any suitable type, e.g., a type that allows single-touch input (e.g., a resistive touch panel).

The main body apparatus 2 includes a speaker (i.e., a speaker 88 shown in FIG. 6) inside the housing 11. As shown in FIG. 3, speaker holes 11a and 11b are formed in the main surface of the housing 11. The speaker 88 outputs sounds through the speaker holes 11a and 11b.

The main body apparatus 2 also includes a left-side terminal 17 that enables wired communication between the main body apparatus 2 and the left controller 3, and a right-side terminal 21 that enables wired communication between the main body apparatus 2 and the right controller 4.

As shown in FIG. 3, the main body apparatus 2 includes a slot 23. The slot 23 is provided on an upper side surface of the housing 11. The slot 23 is so shaped as to allow a predetermined type of storage medium to be attached to the slot 23. The predetermined type of storage medium is, for example, a dedicated storage medium (e.g., a dedicated memory card) for the game system 1 and an information processing apparatus of the same type as the game system 1. The predetermined type of storage medium is used to store, for example, data (e.g., saved data of an application or the like) used by the main body apparatus 2 and/or a program (e.g., a program for an application or the like) executed by the main body apparatus 2. Further, the main body apparatus 2 includes a power button 28.

The main body apparatus 2 includes a lower-side terminal 27. The lower-side terminal 27 allows the main body apparatus 2 to communicate with a cradle. In this non-limiting example, the lower-side terminal 27 is a USB connector (more specifically, a female connector). When the unified apparatus or the main body apparatus 2 alone is placed on the cradle, the game system 1 can display, on a stationary monitor, an image that is generated and output by the main body apparatus 2. Also, in this non-limiting example, the cradle has the function of charging the unified apparatus or the main body apparatus 2 alone, being placed thereon. The cradle also functions as a hub device (specifically, a USB hub).

The main body apparatus 2 includes an illuminance sensor 29. In this non-limiting example, the illuminance sensor 29, which is provided under the main surface of the housing 11, detects the illuminance (brightness) of light entering from the main surface of the housing 11. Note that the display 12 can be adjusted to an appropriate brightness for displaying an image according to the illuminance of light detected by the illuminance sensor 29. In this non-limiting example, it can be determined whether or not the main body apparatus 2 is attached to a goggles device described below, based on the detected illuminance.

FIG. 4 is six orthogonal views showing a non-limiting example of the left controller 3. As shown in FIG. 4, the left controller 3 includes a housing 31. In this non-limiting example, the housing 31 has a vertically long shape, e.g., is shaped to be long in an up-down direction (i.e., a y-axis direction shown in FIGS. 1 and 4). In the state that the left controller 3 is detached from the main body apparatus 2, the left controller 3 can also be held in the orientation in which the left controller 3 is vertically long. The housing 31 has such a shape and a size that when held in the orientation in which the housing 31 is vertically long, the housing 31 can be held with one hand, particularly the left hand. Further, the left controller 3 can also be held in the orientation in which the left controller 3 is horizontally long. When held in the orientation in which the left controller 3 is horizontally long, the left controller 3 may be held with both hands.

The left controller 3 includes an analog stick 32. As shown in FIG. 4, the analog stick 32 is provided on a main surface of the housing 31. The analog stick 32 can be used as a direction input section with which a direction can be input. The user tilts the analog stick 32 and thereby can input a direction corresponding to the direction of the tilt (and input a magnitude corresponding to the angle of the tilt). It should be noted that the left controller 3 may include a directional pad, a slide stick that allows a slide input, or the like as the direction input section, instead of the analog stick. Further, in this non-limiting example, it is possible to provide an input by pressing the analog stick 32.
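
As a purely illustrative sketch, the conversion of a stick tilt into a direction and a magnitude might look as follows in Python. The function name `stick_input`, the normalized input range of -1 to 1 per axis, and the dead zone are assumptions made for illustration; the description above does not specify any of them.

```python
import math

def stick_input(x: float, y: float, dead_zone: float = 0.1):
    """Convert a raw stick tilt into (direction, magnitude).

    x and y are assumed to be normalized tilt components in [-1, 1].
    direction is an angle in radians, counterclockwise from the +x axis.
    magnitude is clamped to [0, 1]. The dead zone is a hypothetical
    parameter: tilts smaller than it are reported as no input.
    """
    magnitude = min(math.hypot(x, y), 1.0)
    if magnitude < dead_zone:
        return None, 0.0
    return math.atan2(y, x), magnitude
```

For example, a full upward tilt `stick_input(0.0, 1.0)` would yield a direction of π/2 radians with magnitude 1.0 under these assumptions.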

The left controller 3 includes various operation buttons. The left controller 3 includes four operation buttons 33 to 36 (specifically, a right direction button 33, a down direction button 34, an up direction button 35, and a left direction button 36) on the main surface of the housing 31. Further, the left controller 3 includes a record button 37 and a “−” (minus) button 47. The left controller 3 includes a first L-button 38 and a ZL-button 39 in an upper left portion of a side surface of the housing 31. Further, the left controller 3 includes a second L-button 43 and a second R-button 44, on the side surface of the housing 31 on which the left controller 3 is attached to the main body apparatus 2. These operation buttons are used to give instructions depending on various programs (e.g., an OS program and an application program) executed by the main body apparatus 2.

The left controller 3 also includes a terminal 42 that enables wired communication between the left controller 3 and the main body apparatus 2.

FIG. 5 is six orthogonal views showing a non-limiting example of the right controller 4. As shown in FIG. 5, the right controller 4 includes a housing 51. In this non-limiting example, the housing 51 has a vertically long shape, e.g., is shaped to be long in the up-down direction. In the state that the right controller 4 is detached from the main body apparatus 2, the right controller 4 can also be held in the orientation in which the right controller 4 is vertically long. The housing 51 has such a shape and a size that when held in the orientation in which the housing 51 is vertically long, the housing 51 can be held with one hand, particularly the right hand. Further, the right controller 4 can also be held in the orientation in which the right controller 4 is horizontally long. When held in the orientation in which the right controller 4 is horizontally long, the right controller 4 may be held with both hands.

Similarly to the left controller 3, the right controller 4 includes an analog stick 52 as a direction input section. In this non-limiting example, the analog stick 52 has the same configuration as that of the analog stick 32 of the left controller 3. Further, the right controller 4 may include a directional pad, a slide stick that allows a slide input, or the like, instead of the analog stick. Further, similarly to the left controller 3, the right controller 4 includes four operation buttons 53 to 56 (specifically, an A-button 53, a B-button 54, an X-button 55, and a Y-button 56) on a main surface of the housing 51. Further, the right controller 4 includes a “+” (plus) button 57 and a home button 58. Further, the right controller 4 includes a first R-button 60 and a ZR-button 61 in an upper right portion of a side surface of the housing 51. Further, similarly to the left controller 3, the right controller 4 includes a second L-button 65 and a second R-button 66.

Further, the right controller 4 includes a terminal 64 for allowing the right controller 4 to perform wired communication with the main body apparatus 2.

FIG. 6 is a block diagram showing a non-limiting example of an internal configuration of the main body apparatus 2. The main body apparatus 2 includes components 81 to 91, 97, and 98 shown in FIG. 6 in addition to the components shown in FIG. 3. Some of the components 81 to 91, 97, and 98 may be implemented as electronic parts on an electronic circuit board, which is contained in the housing 11.

The main body apparatus 2 includes a processor 81. The processor 81 is an information processor for executing various types of information processing to be executed by the main body apparatus 2. For example, the processor 81 may include only a central processing unit (CPU), or may be a system-on-a-chip (SoC) having a plurality of functions such as a CPU function and a graphics processing unit (GPU) function. The processor 81 executes an information processing program (e.g., a game program) stored in a storage section (specifically, an internal storage medium such as a flash memory 84, an external storage medium that is attached to the slot 23, or the like), thereby executing the various types of information processing.

The main body apparatus 2 includes a flash memory 84 and a dynamic random access memory (DRAM) 85 as examples of internal storage media built into it. The flash memory 84 and the DRAM 85 are coupled to the processor 81. The flash memory 84 is mainly used to store various data (or programs) to be saved in the main body apparatus 2. The DRAM 85 is used to temporarily store various data used in information processing.

The main body apparatus 2 includes a slot interface (hereinafter abbreviated to “I/F”) 91. The slot I/F 91 is coupled to the processor 81. The slot I/F 91 is coupled to the slot 23, and reads and writes data from and to a predetermined type of storage medium (e.g., a dedicated memory card) attached to the slot 23, in accordance with commands from the processor 81.

The processor 81 reads and writes, as appropriate, data from and to the flash memory 84, the DRAM 85, and each of the above storage media, thereby executing the above information processing.

The main body apparatus 2 includes a network communication section 82. The network communication section 82 is coupled to the processor 81. The network communication section 82 communicates (specifically, through wireless communication) with an external apparatus via a network. In this non-limiting example, as a first communication form, the network communication section 82 connects to a wireless LAN and communicates with an external apparatus, using a method compliant with the Wi-Fi standard. Further, as a second communication form, the network communication section 82 wirelessly communicates with another main body apparatus 2 of the same type, using a predetermined communication method (e.g., communication based on a particular protocol or infrared light communication). It should be noted that the wireless communication in the above second communication form achieves the function of allowing so-called “local communication”, in which the main body apparatus 2 can wirelessly communicate with another main body apparatus 2 located in a closed local network area, and the plurality of main body apparatuses 2 directly communicate with each other to exchange data.

The main body apparatus 2 includes a controller communication section 83. The controller communication section 83 is coupled to the processor 81. The controller communication section 83 wirelessly communicates with the left controller 3 and/or the right controller 4. The main body apparatus 2 may communicate with the left and right controllers 3 and 4 using any suitable communication method. In this non-limiting example, the controller communication section 83 performs communication with the left and right controllers 3 and 4 in accordance with the Bluetooth (registered trademark) standard.

The processor 81 is coupled to the left-side terminal 17, the right-side terminal 21, and the lower-side terminal 27. When performing wired communication with the left controller 3, the processor 81 transmits data to the left controller 3 via the left-side terminal 17 and also receives operation data from the left controller 3 via the left-side terminal 17. Further, when performing wired communication with the right controller 4, the processor 81 transmits data to the right controller 4 via the right-side terminal 21 and also receives operation data from the right controller 4 via the right-side terminal 21. Further, when communicating with the cradle, the processor 81 transmits data to the cradle via the lower-side terminal 27. As described above, in this non-limiting example, the main body apparatus 2 can perform both wired communication and wireless communication with each of the left and right controllers 3 and 4. Further, when the unified apparatus obtained by attaching the left and right controllers 3 and 4 to the main body apparatus 2 or the main body apparatus 2 alone is attached to the cradle, the main body apparatus 2 can output data (e.g., image data or sound data) to a stationary monitor or the like via the cradle.

Here, the main body apparatus 2 can communicate with a plurality of left controllers 3 simultaneously (or in parallel). Further, the main body apparatus 2 can communicate with a plurality of right controllers 4 simultaneously (or in parallel). Thus, a plurality of users can simultaneously provide inputs to the main body apparatus 2, each using a set of left and right controllers 3 and 4. In a non-limiting example, a first user can provide an input to the main body apparatus 2 using a first set of left and right controllers 3 and 4, and at the same time, a second user can provide an input to the main body apparatus 2 using a second set of left and right controllers 3 and 4.

The main body apparatus 2 includes a touch panel controller 86 that is a circuit for controlling the touch panel 13. The touch panel controller 86 is coupled between the touch panel 13 and the processor 81. Based on a signal from the touch panel 13, the touch panel controller 86 generates, for example, data indicating a position where a touch input has been performed. Then, the touch panel controller 86 outputs the data to the processor 81.

Further, the display 12 is coupled to the processor 81. The processor 81 displays, on the display 12, a generated image (e.g., an image generated by executing the above information processing) and/or an externally obtained image.

The main body apparatus 2 includes a codec circuit 87 and speakers (specifically, a left speaker and a right speaker) 88. The codec circuit 87 is coupled to the speakers 88 and an audio input/output terminal 25 and also coupled to the processor 81. The codec circuit 87 is for controlling the input and output of audio data to and from the speakers 88 and the audio input/output terminal 25.

Further, the main body apparatus 2 includes an acceleration sensor 89. In this non-limiting example, the acceleration sensor 89 detects the magnitudes of accelerations along predetermined three axial (e.g., x-, y-, and z-axes shown in FIG. 1) directions. It should be noted that the acceleration sensor 89 may detect an acceleration along one axial direction or accelerations along two axial directions.

Further, the main body apparatus 2 includes an angular velocity sensor 90. In this non-limiting example, the angular velocity sensor 90 detects angular velocities about predetermined three axes (e.g., the x-, y-, and z-axes shown in FIG. 1). It should be noted that the angular velocity sensor 90 may detect an angular velocity about one axis or angular velocities about two axes.

The acceleration sensor 89 and the angular velocity sensor 90 are coupled to the processor 81. The detection results of the acceleration sensor 89 and the angular velocity sensor 90 are output to the processor 81. Based on the detection results of the acceleration sensor 89 and the angular velocity sensor 90, the processor 81 can calculate information about a motion and/or orientation of the main body apparatus 2.
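
The description does not state how the orientation is calculated from the two sensors. One commonly used approach, shown here only as a hedged sketch and not as the method of this example, is a complementary filter that blends an angle integrated from the angular velocity with a tilt angle derived from the measured gravity direction. The function names, the axis convention, and the blend factor `alpha` are all assumptions.

```python
import math

def tilt_from_acceleration(ax: float, ay: float, az: float):
    """Estimate (pitch, roll) in radians from a measured acceleration.

    Assumes the device is nearly at rest so that the accelerometer
    reading is dominated by gravity. The axis convention is hypothetical.
    """
    pitch = math.atan2(-ax, math.hypot(ay, az))
    roll = math.atan2(ay, az)
    return pitch, roll

def complementary_step(angle: float, gyro_rate: float,
                       accel_angle: float, dt: float,
                       alpha: float = 0.98) -> float:
    """Advance one angle estimate by one time step.

    The angular velocity (rad/s) is integrated over dt, then blended
    with the accelerometer-derived angle to limit gyro drift.
    alpha is an assumed tuning constant in [0, 1].
    """
    integrated = angle + gyro_rate * dt
    return alpha * integrated + (1.0 - alpha) * accel_angle
```

With `alpha` close to 1 the estimate follows the angular velocity sensor over short intervals, while the accelerometer term slowly corrects accumulated drift.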

The illuminance sensor 29 is coupled to the processor 81. The illuminance sensor 29 outputs the result of detection to the processor 81. Based on the detection result of the illuminance sensor 29, the processor 81 can calculate information about brightness around the main body apparatus 2. As a non-limiting example, when the main body apparatus 2 is attached to a goggles device described below, brightness around the main body apparatus 2 is reduced. Therefore, based on the detection result of the illuminance sensor 29, the processor 81 can determine whether or not the main body apparatus 2 is attached to the goggles device.
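
A minimal sketch of such a determination, assuming a simple threshold comparison, is given below. The function name and the threshold value are hypothetical; the description states only that reduced brightness indicates attachment to the goggles device, not how the comparison is made.

```python
def is_attached_to_goggles(illuminance: float, threshold: float = 5.0) -> bool:
    """Return True when the detected illuminance suggests goggles attachment.

    illuminance is the sensor reading in an arbitrary unit (assumed lux).
    threshold is an assumed cutoff below which the surroundings are
    treated as dark enough to indicate that the device is enclosed.
    """
    return illuminance < threshold
```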

The main body apparatus 2 includes a power control section 97 and a battery 98. The power control section 97 is coupled to the battery 98 and the processor 81. Further, although not shown, the power control section 97 is coupled to components of the main body apparatus 2 (specifically, components that receive power supplied from the battery 98, the left-side terminal 17, and the right-side terminal 21). Based on a command from the processor 81, the power control section 97 controls the supply of power from the battery 98 to each of the above components.

Further, the battery 98 is coupled to the lower-side terminal 27. When an external charging device (e.g., the cradle) is coupled to the lower-side terminal 27, and power is supplied to the main body apparatus 2 via the lower-side terminal 27, the battery 98 is charged with the supplied power.

FIG. 7 is a block diagram showing non-limiting examples of the internal configurations of the main body apparatus 2, the left controller 3, and the right controller 4. It should be noted that the details of the internal configuration of the main body apparatus 2 are shown in FIG. 6 and therefore are omitted in FIG. 7.

The left controller 3 includes a communication control section 101, which communicates with the main body apparatus 2. As shown in FIG. 7, the communication control section 101 is coupled to components including the terminal 42. In this non-limiting example, the communication control section 101 can communicate with the main body apparatus 2 through both wired communication via the terminal 42 and wireless communication not via the terminal 42. The communication control section 101 controls the method for communication performed by the left controller 3 with the main body apparatus 2. That is, when the left controller 3 is attached to the main body apparatus 2, the communication control section 101 communicates with the main body apparatus 2 via the terminal 42. Further, when the left controller 3 is detached from the main body apparatus 2, the communication control section 101 wirelessly communicates with the main body apparatus 2 (specifically, the controller communication section 83). The wireless communication between the communication control section 101 and the controller communication section 83 is performed in accordance with the Bluetooth (registered trademark) standard, for example.

Further, the left controller 3 includes a memory 102 such as a flash memory. The communication control section 101 includes, for example, a microcomputer (or a microprocessor) and executes firmware stored in the memory 102, thereby performing various processes.

The left controller 3 includes buttons 103 (specifically, the buttons 33 to 39, 43, 44, and 47). Further, the left controller 3 includes the analog stick (“stick” in FIG. 7) 32. Each of the buttons 103 and the analog stick 32 outputs information regarding an operation performed on itself to the communication control section 101 repeatedly at appropriate timing.

The left controller 3 includes inertial sensors. Specifically, the left controller 3 includes an acceleration sensor 104. Further, the left controller 3 includes an angular velocity sensor 105. In this non-limiting example, the acceleration sensor 104 detects the magnitudes of accelerations along predetermined three axial (e.g., x-, y-, and z-axes shown in FIG. 4) directions. It should be noted that the acceleration sensor 104 may detect an acceleration along one axial direction or accelerations along two axial directions. In this non-limiting example, the angular velocity sensor 105 detects angular velocities about predetermined three axes (e.g., the x-, y-, and z-axes shown in FIG. 4). It should be noted that the angular velocity sensor 105 may detect an angular velocity about one axis or angular velocities about two axes. Each of the acceleration sensor 104 and the angular velocity sensor 105 is coupled to the communication control section 101. Then, the detection results of the acceleration sensor 104 and the angular velocity sensor 105 are output to the communication control section 101 repeatedly at appropriate timing.

The communication control section 101 acquires information regarding an input (specifically, information regarding an operation or the detection result of the sensor) from each of input sections (specifically, the buttons 103, the analog stick 32, and the sensors 104 and 105). The communication control section 101 transmits operation data including the acquired information (or information obtained by performing predetermined processing on the acquired information) to the main body apparatus 2. It should be noted that the operation data is transmitted repeatedly, once every predetermined time. It should be noted that the interval at which the information regarding an input is transmitted from each of the input sections to the main body apparatus 2 may or may not be the same.
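
The following Python sketch illustrates, under stated assumptions, how operation data of this kind might be assembled and how the communication path might be chosen according to whether the controller is attached. The type `OperationData`, its field names, and the functions `choose_transport` and `build_operation_data` are hypothetical; the actual data layout and firmware logic are not disclosed in this description.

```python
from dataclasses import dataclass
from typing import Dict, Tuple

Vec3 = Tuple[float, float, float]

@dataclass(frozen=True)
class OperationData:
    """One snapshot of controller inputs (hypothetical layout)."""
    buttons: Dict[str, bool]      # button name -> pressed state
    stick: Tuple[float, float]    # analog stick tilt (x, y)
    acceleration: Vec3            # acceleration sensor result
    angular_velocity: Vec3        # angular velocity sensor result

def choose_transport(attached: bool) -> str:
    """Wired via the terminal when attached, wireless when detached."""
    return "wired" if attached else "wireless"

def build_operation_data(buttons, stick, acceleration, angular_velocity) -> OperationData:
    """Collect the latest input-section readings into one record."""
    return OperationData(
        buttons=dict(buttons),
        stick=tuple(stick),
        acceleration=tuple(acceleration),
        angular_velocity=tuple(angular_velocity),
    )
```

In such a design, a record would be built and sent once every predetermined time over whichever path `choose_transport` selects.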

The above operation data is transmitted to the main body apparatus 2, whereby the main body apparatus 2 can obtain inputs provided to the left controller 3. That is, the main body apparatus 2 can determine operations on the buttons 103 and the analog stick 32 based on the operation data. Further, the main body apparatus 2 can calculate information regarding the motion and/or the orientation of the left controller 3 based on the operation data (specifically, the detection results of the acceleration sensor 104 and the angular velocity sensor 105).

The left controller 3 includes a power supply section 108. In this non-limiting example, the power supply section 108 includes a battery and a power control circuit. Although not shown in FIG. 7, the power control circuit is coupled to the battery and also coupled to components of the left controller 3 (specifically, components that receive power supplied from the battery).

As shown in FIG. 7, the right controller 4 includes a communication control section 111, which communicates with the main body apparatus 2. Further, the right controller 4 includes a memory 112, which is coupled to the communication control section 111. The communication control section 111 is coupled to components including the terminal 64. The communication control section 111 and the memory 112 have functions similar to those of the communication control section 101 and the memory 102, respectively, of the left controller 3. Thus, the communication control section 111 can communicate with the main body apparatus 2 through both wired communication via the terminal 64 and wireless communication not via the terminal 64 (specifically, communication compliant with the Bluetooth (registered trademark) standard). The communication control section 111 controls the method for communication performed by the right controller 4 with the main body apparatus 2.

The right controller 4 includes input sections similar to the input sections of the left controller 3. Specifically, the right controller 4 includes buttons 113, the analog stick 52, and inertial sensors (an acceleration sensor 114 and an angular velocity sensor 115). These input sections have functions similar to those of the input sections of the left controller 3 and operate similarly to the input sections of the left controller 3.

The right controller 4 includes a power supply section 118. The power supply section 118 has a function similar to that of the power supply section 108 of the left controller 3 and operates similarly to the power supply section 108.

As described above, in the game system 1 of this non-limiting example, the left controller 3 and the right controller 4 are removable from the main body apparatus 2. The game system 1 will now be described, assuming that the main body apparatus 2 outputs an image with the left controller 3 and the right controller 4 detached from the main body apparatus 2. In the case where the game system 1 is used to perform an operation on an application (e.g., an editor for generating and editing a virtual space, and a game application) with the left controller 3 and the right controller 4 detached from the main body apparatus 2, a single user may use the main body apparatus 2 and the left controller 3 or the right controller 4.

FIG. 8 is a diagram showing a non-limiting example of how a single user uses the game system 1 by operating the main body apparatus 2 and the right controller 4 with the left controller 3 and the right controller 4 detached from the main body apparatus 2. As shown in FIG. 8, the user can hold the main body apparatus 2 with their left hand, and the right controller 4 with their right hand, and perform an operation while viewing an image displayed on the main body apparatus 2.

For example, in this non-limiting example, the user holds the right controller 4 with a single hand (e.g., the right hand), pointing toward the display 12 of the main body apparatus 2 in the longitudinal direction (upward direction (the positive direction of the y-axis) shown in FIG. 1) of the elongated, generally board-shaped right controller 4, with the side surface (side surface on which a slider 62 is provided) of the right controller 4 which is in contact with the main body apparatus 2 when attached to the main body apparatus 2 facing leftward, and the main surface (surface on which the analog stick 52 and the like are provided) of the right controller 4 facing upward. In other words, the right controller 4 is held by a single hand of the user with the positive direction of the y-axis of the right controller 4 pointing ahead of the user, and the positive direction of the z-axis pointing upward.

In addition, in this non-limiting example, the user holds the main body apparatus 2 with the other hand (e.g., the left hand) with the longitudinal direction (the direction of the x-axis shown in FIG. 1) of the horizontally elongated, generally board-shaped main body apparatus 2 in a horizontal position, and the main surface (surface on which the display 12 and the like are provided) of the main body apparatus 2 facing the user. The main body apparatus 2 is held by the other hand of the user with the negative direction of the z-axis pointing toward the user, and the direction of the x-axis extending horizontally with respect to the ground.

Thus, generation/editing of a virtual space and game play using a virtual space are performed according to an operation of each operation button or a stick of the right controller 4, being held by one hand of the user, or an operation of moving the body of the right controller 4. For example, the acceleration sensor 114 of the right controller 4 can detect an acceleration in each of the x-, y-, and z-axis directions as an operation input. The angular velocity sensor 115 can detect an angular velocity around each of the x-, y-, and z-axes as an operation input. The accelerations and/or angular velocities thus detected can be used to calculate the motion and orientation of the body of the right controller 4. In this non-limiting example, an object disposed in a virtual space displayed on the display 12 can be deformed (enlarged/reduced) according to the user's operation using the right controller 4.

In addition, in this non-limiting example, a display range displayed on the display 12 can be changed according to an operation of moving the body of the main body apparatus 2, being held by the other hand of the user. For example, the acceleration sensor 89 of the main body apparatus 2 can detect an acceleration in each of the x-, y-, and z-axis directions as an operation input. The angular velocity sensor 90 can detect an angular velocity around each of the x-, y-, and z-axes as an operation input. Therefore, the motion and orientation of the body of the main body apparatus 2 can be calculated using the acceleration and/or the angular velocity. In this non-limiting example, the orientation of a virtual camera that is provided in a virtual space so that an image is displayed on the display 12 can be changed according to a change in the orientation of the main body apparatus 2 in the real space. Specifically, by changing the orientation of a virtual camera disposed at a fixed position in a virtual space in the same manner as that in which the orientation of the main body apparatus 2 is changed in the real space, an image of the virtual space can be produced which is taken as if the virtual space were viewed through the display 12 of the main body apparatus 2. Note that as another non-limiting example, in addition to the orientation change, the position of the virtual camera in the virtual space may be moved (position change) according to the movement (position change) of the main body apparatus 2 in the real space.

FIG. 8 shows a non-limiting example image that is displayed in a process of deforming (enlarging/reducing) an object in a virtual space by operating the main body apparatus 2 and the right controller 4. As shown in FIG. 8, in this non-limiting example process, an image in which a plurality of objects (a first object OBJ1 and a second object OBJ2 in the non-limiting example of FIG. 8) are disposed in a virtual space is displayed on the main body apparatus 2. Thereafter, the user can set any one of the plurality of objects disposed in the virtual space as an object to be operated, or deform (enlarge/reduce) the set object to be operated in the virtual space, by operating the analog stick 52 and the operation buttons 53-56 of the right controller 4, or moving the body of the right controller 4. The user can also change the orientation of the virtual camera set in the virtual space by changing the orientation of the main body apparatus 2, and thereby change the range of the virtual space that is displayed on the display 12.

For example, the display 12 displays a mark T indicating a position (pointed position) that is pointed by the body of the right controller 4. Therefore, the position of the mark T serves as the pointed position through which the user inputs a position on the display 12. As a non-limiting example, the mark T is displayed at an initial position (e.g., the center of the display 12) on the display 12 before the displayed position is changed according to a change in the orientation of the body of the right controller 4. When the user performs a predetermined holding operation (e.g., an operation of pressing down the operation button (A-button) 53 and an operation of pressing down the operation button (R-button) 60) using the right controller 4, an object OBJ displayed and overlaid by the pointed position indicated by the mark T at the time of that operation is set as an object to be operated, and a position overlaid by the pointed position indicated by the mark T at the time of that operation is set as a hold position for deforming the object OBJ. Thus, the position pointed by the user's operation, when the holding operation is performed thereon, comes to serve as a hold position for deforming an object OBJ. Thereafter, during a period of time that the holding operation is continued, the state that the object to be operated is set and the hold position is set for that object to be operated is continued. When the holding operation is ended, the state that the object to be operated is set is removed, and the hold position is changed to a normal pointed position. Thus, the hold position indicates a pointed position that is pointed by the user using the body of the right controller 4 with the holding operation being performed.

The object OBJ set as an object to be operated can be deformed (enlarged/reduced) in the virtual space according to a subsequent operation using the right controller 4. For example, in the state that the operation of specifying an object to be operated is continued, when the position indicated by the body of the right controller 4 is moved upward, downward, leftward, or rightward, the hold position set for the object to be operated in the virtual space is moved upward, downward, leftward, or rightward, so that the object OBJ is deformed. Thereafter, when the holding operation is ended, the deformation of the object OBJ is stopped, so that the shape of the object OBJ as an object to be operated at that final time point is determined as its final shape, and the process of deforming the object is ended.

Note that the operation method for deforming an object OBJ set as an object to be operated is not limited to the above operation method. For example, an object OBJ set as an object to be operated may be deformed according to an operation of deflecting the stick 52 of the right controller 4. Alternatively, in the case where an object OBJ is operated using a mouse, an object OBJ set as an object to be operated may be deformed according to a pointed position specified by operating the mouse. Alternatively, in the case where an object OBJ is operated using a touch pad, an object OBJ set as an object to be operated may be deformed according to a pointed position specified by operating the touch pad. Alternatively, in the case where an object OBJ is operated using an operation button (e.g., a directional button), an object OBJ may be deformed stepwise according to the number of times the operation button is pressed down.

In addition, the unified apparatus obtained by attaching the left controller 3 and the right controller 4 to the main body apparatus 2 can be operated. In that case, the left controller 3 and the right controller 4 are unified with the main body apparatus 2, and therefore, it is difficult to perform an operation based on a position pointed by the left controller 3 and/or the right controller 4. Therefore, in the operation method of deforming an object OBJ set as an object to be operated using the unified apparatus, the left and right sticks 32 and/or 52 may be used. For example, in the operation of using the unified apparatus, when an operation of pressing down the operation button (A-button) 53 or the operation button (R-button) 60 of the right controller 4, being attached to the main body apparatus 2, is performed, an object OBJ displayed and overlaid by the mark T at the time of the operation is set as an object to be operated, and a hold position is set for the object OBJ. Thereafter, the object OBJ set as an object to be operated is deformed according to an operation of deflecting the stick 32 of the left controller 3 and/or the stick 52 of the right controller 4, with the left controller 3 and the right controller 4 attached to the main body apparatus 2. When the unified apparatus is thus used to perform an operation, the direction of the virtual space displayed on the display 12 is changed according to the orientation of the unified apparatus in the real space. Therefore, the direction in which the stick is deflected may be changed according to the direction of the virtual space. For example, when the unified apparatus is oriented with the downward direction thereof (the negative direction of the y-axis shown in FIG. 1) pointing in the vertical direction of the real space, the downward direction of the display 12 (the negative direction of the y-axis shown in FIG. 1) is the vertical direction in the virtual space. Therefore, if an operation of deflecting the stick 32 of the left controller 3 and/or the stick 52 of the right controller 4 downward (the negative direction of the y-axis shown in FIG. 1) indicates a downward operation instruction in the virtual space displayed on the display 12, such an operation is intuitive. Meanwhile, when the unified apparatus is oriented with the right direction thereof (the positive direction of the x-axis shown in FIG. 1) pointing in the vertical direction of the real space, the right direction of the display 12 (the positive direction of the x-axis shown in FIG. 1) is the vertical direction in the virtual space. Therefore, if an operation of deflecting the stick 32 of the left controller 3 and/or the stick 52 of the right controller 4 rightward (the positive direction of the x-axis shown in FIG. 1) indicates a downward operation instruction in the virtual space displayed on the display 12, such an operation is intuitive.
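The stick remapping described above can be illustrated with a minimal Python sketch. It assumes, purely for illustration, that the direction of gravity within the x-y plane of the unified apparatus is available (e.g., derived from the acceleration sensor) and that a stick deflection is given as an (x, y) pair in the axes of FIG. 1. The function name stick_to_virtual and its parameters are hypothetical and are not taken from the embodiment.

```python
def stick_to_virtual(stick, gravity_xy):
    """Map a stick deflection given in device axes (x, y) to a
    (right, down) instruction in the displayed virtual space.

    gravity_xy is the real-space vertical (downward) direction expressed
    in the device's x-y plane. Assumption: it is supplied by the caller.
    """
    gx, gy = gravity_xy
    norm = (gx * gx + gy * gy) ** 0.5
    if norm == 0.0:
        raise ValueError("gravity has no component in the device x-y plane")
    gx, gy = gx / norm, gy / norm
    sx, sy = stick
    # Component of the deflection along the real-space downward direction.
    down = sx * gx + sy * gy
    # Component along the direction obtained by rotating "down" by 90 degrees
    # counterclockwise, which is the rightward direction on the screen.
    right = sx * (-gy) + sy * gx
    return right, down


# Device held upright: device "down" (negative y) is real-space down.
upright = stick_to_virtual((0.0, -1.0), (0.0, -1.0))
# Device rotated so that its right side (positive x) points down.
rotated = stick_to_virtual((1.0, 0.0), (1.0, 0.0))
```

In both cases the deflection toward the real-space downward direction yields a purely downward instruction, which matches the two orientations discussed above.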

In this non-limiting example, in the case where an object is to be deformed in a virtual space, one or two enlargement/reduction axes are selected from three object axes set for the object, and an enlargement direction and a reduction direction are specified for each enlargement/reduction axis. A process of deforming an object in a virtual space will now be described with reference to FIGS. 9-19. Note that FIG. 9 is a diagram showing a non-limiting example of object axes set for a rectangular parallelepiped object OBJ. FIG. 10 is a diagram showing a non-limiting example of an enlargement direction and reduction direction of each enlargement/reduction axis. FIG. 11 is a diagram showing a non-limiting example of enlargement/reduction axes De1 and De2 in the case where a hold position H is located in an upper left region of the object OBJ. FIG. 12 is a diagram showing a non-limiting example of enlargement/reduction axes De1 and De2 in the case where a hold position H is located in a lower left region of the object OBJ. FIG. 13 is a diagram showing a non-limiting example of enlargement/reduction axes De1 and De2 in the case where a hold position H is located in an upper right region of the object OBJ. FIG. 14 is a diagram showing a non-limiting example of enlargement/reduction axes De1 and De2 in the case where a hold position H is located in a lower right region of the object OBJ. FIG. 15 is a diagram showing a non-limiting example of a direction CP pointing from the position of a virtual camera toward the object OBJ, which is used when an enlargement/reduction axis is selected. FIG. 16 is a diagram showing a non-limiting example of movement of a hold position H set for the object OBJ. FIG. 17 is a diagram showing a non-limiting example of calculation of an enlargement/reduction amount based on a moved hold position H. FIG. 18 is a diagram showing a non-limiting example of enlargement/reduction axes De1 and De2 set for a cylindrical object OBJ. FIG. 19 is a diagram showing a non-limiting example of enlargement/reduction axes De1 and De2 set for a spherical object OBJ.

In FIG. 9, three orthogonal object axes are set for each of a plurality of objects disposed in a virtual space. For example, for a rectangular parallelepiped object OBJ, three object axes Xo, Yo, and Zo are set which pass through a central position (e.g., the center-of-gravity position) P (Ax, Ay, Az) of the object OBJ in the world coordinate system of the virtual space, orthogonally intersecting with each other at the central position P. Specifically, the object axis Xo points in the direction that passes through the centers of a pair of opposite surfaces (e.g., the left and right surfaces) of the rectangular parallelepiped object OBJ and the central position P of the object OBJ. The direction from one surface to the other surface (e.g., from the left surface to the right surface) of the pair of opposite surfaces is set as the positive direction of the object axis Xo. The object axis Yo points in the direction that passes through the centers of another pair of opposite surfaces (e.g., the top and bottom surfaces) of the rectangular parallelepiped object OBJ and the central position P of the object OBJ. The direction from one surface to the other surface (e.g., from the bottom surface to the top surface) of the pair of opposite surfaces is set as the positive direction of the object axis Yo. The object axis Zo points in the direction that passes through the centers of still another pair of opposite surfaces (e.g., the front and rear surfaces) of the rectangular parallelepiped object OBJ and the central position P of the object OBJ. The direction from one surface to the other surface (e.g., from the front surface to the rear surface) of the pair of opposite surfaces is set as the positive direction of the object axis Zo. Note that the central position of an object OBJ need not be the center-of-gravity position and may be any position that serves as a reference for the object OBJ. For example, the central position of an object OBJ may be another reference position of the object OBJ, such as a central position of a structure or an origin used in generation.

As will be clear from the description below, two of the three object axes Xo, Yo, and Zo thus set for the rectangular parallelepiped object OBJ are selected and set as enlargement/reduction axes for deforming (enlarging/reducing) the object OBJ, at least based on the orientation of the object OBJ. Thereafter, based on the position (hold position) where the user takes hold of the object OBJ for deformation, an enlargement direction and a reduction direction are set for the set enlargement/reduction axes. Specifically, based on a positional relationship between a projection position obtained by projecting the hold position onto each enlargement/reduction axis and the reference position of the object OBJ (e.g., the central position P of the object OBJ where the three object axes Xo, Yo, and Zo intersect with each other), the direction from the central position P of the object OBJ toward the projection position along the enlargement/reduction axis is set as an enlargement direction, and the direction from the projection position toward the central position P of the object OBJ along the enlargement/reduction axis is set as a reduction direction. Note that the position on the object OBJ displayed and overlaid by the position (pointed position) on the display 12 pointed by the user is set as a hold position for the object OBJ. The pointed position may be pointed by a controller (the left controller 3 and/or the right controller 4).

As a non-limiting example, as shown in FIG. 10, it is assumed that two of the three object axes Xo, Yo, and Zo, e.g., the object axes Xo and Yo, are set as enlargement/reduction axes, and a hold position is set at a position located in the negative direction of the axis Xo and in the positive direction of the axis Yo with respect to the central position P. In such a case, a positional relationship between an intersection point Xh obtained by projecting the hold position perpendicularly onto the axis Xo and the central position P is such that the intersection point Xh is located in the negative direction of the axis Xo with respect to the central position P. Therefore, in the case of the axis Xo, which is one of the enlargement/reduction axes, the direction pointing from the central position P toward the intersection point Xh, i.e., the negative direction of the axis Xo, is the enlargement direction of the enlargement/reduction axis Xo, and the opposite direction (i.e., the positive direction) of the axis Xo is the reduction direction of the enlargement/reduction axis Xo. When the hold position is moved in the enlargement direction of the enlargement/reduction axis Xo, the object OBJ is enlarged in the enlargement direction. When the hold position is moved in the reduction direction of the enlargement/reduction axis Xo, the object OBJ is reduced in the reduction direction. A positional relationship between an intersection point Yh obtained by projecting the hold position perpendicularly onto the axis Yo and the central position P is such that the intersection point Yh is located in the positive direction of the axis Yo with respect to the central position P. Therefore, in the case of the axis Yo, which is the other of the enlargement/reduction axes, the direction pointing from the central position P toward the intersection point Yh, i.e., the positive direction of the axis Yo, is the enlargement direction of the enlargement/reduction axis Yo, and the opposite direction (i.e., the negative direction) of the axis Yo is the reduction direction of the enlargement/reduction axis Yo. When the hold position is moved in the enlargement direction of the enlargement/reduction axis Yo, the object OBJ is enlarged in the enlargement direction. When the hold position is moved in the reduction direction of the enlargement/reduction axis Yo, the object OBJ is reduced in the reduction direction.
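The determination of an enlargement direction by perpendicular projection can be sketched as follows. This is a minimal Python illustration, assuming unit-length object axes given as 3-tuples in world coordinates; the function name enlargement_sign and the handling of a projection that falls exactly on the central position P are assumptions made only for this sketch.

```python
def dot(u, v):
    return sum(a * b for a, b in zip(u, v))


def sub(u, v):
    return tuple(a - b for a, b in zip(u, v))


def enlargement_sign(hold, center, axis):
    """Return +1 if the enlargement direction is the positive direction of
    the given enlargement/reduction axis, or -1 if it is the negative one.

    The signed distance s locates the perpendicular projection of the hold
    position on the axis, measured from the central position P.
    """
    s = dot(sub(hold, center), axis)
    # Assumption: a projection exactly at P is treated as positive.
    return 1 if s >= 0 else -1


P = (0.0, 0.0, 0.0)    # central position of the object
Xo = (1.0, 0.0, 0.0)   # enlargement/reduction axis Xo (unit vector)
Yo = (0.0, 1.0, 0.0)   # enlargement/reduction axis Yo (unit vector)
H = (-0.8, 0.5, 1.0)   # hold position on the negative-Xo, positive-Yo side

signs = (enlargement_sign(H, P, Xo), enlargement_sign(H, P, Yo))
```

For the hold position in this sketch, the enlargement directions are the negative direction of Xo and the positive direction of Yo, and the reduction directions are the respective opposites.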

Note that the three object axes Xo, Yo, and Zo may not intersect at a single point, or may not intersect at the reference point (central position P) of the object OBJ. The projection position may not be such that the hold position is projected exactly perpendicularly onto an enlargement/reduction axis, and may be a position obtained by projection onto an enlargement/reduction axis according to a predetermined relationship.

Based on the above process, as shown in, for example, FIGS. 11-14, surfaces (in the example of FIG. 11, the front surface, the right surface, and the top surface) of the rectangular parallelepiped object OBJ seen from the virtual camera disposed in the virtual space are virtually divided into four regions, and an enlargement direction and reduction direction for the object OBJ are set based on the region where the hold position provided by the user's operation is located. For example, the regions are four portions obtained by equally dividing the object OBJ with reference to the center thereof by two planes (a first plane and a second plane). Specifically, the first plane dividing the object OBJ includes one of the three object axes Xo, Yo, and Zo set as one of the enlargement/reduction axes, one of the three object axes Xo, Yo, and Zo not set as an enlargement/reduction axis, and the central position P of the object OBJ. The second plane dividing the object OBJ includes one of the three object axes Xo, Yo, and Zo set as the other enlargement/reduction axis, one of the three object axes Xo, Yo, and Zo not set as an enlargement/reduction axis, and the central position P of the object OBJ. The regions include an upper left region, lower left region, upper right region, and lower right region separated by the first and second planes.

As shown in FIG. 11, it is assumed that a hold position is set in the upper left region of the rectangular parallelepiped object OBJ with the object axes Xo and Yo of the object OBJ set as enlargement/reduction axes. In this case, for the two set enlargement/reduction axes, the direction thereof toward an upper left region as seen from the central position P of the object OBJ is the direction in which the object OBJ is to be enlarged. For example, in the non-limiting example of FIG. 11, in the case where a hold position is set in the upper left region of the object OBJ, for which the object axes Xo and Yo are set as enlargement/reduction axes, the positive direction of the object axis Yo is an enlargement direction, and the negative direction of the object axis Xo is an enlargement direction. In order to show the user the directions in which the object OBJ is to be enlarged, the positive direction of the object axis Yo is represented by a first enlargement direction image De1, and the negative direction of the object axis Xo is represented by a second enlargement direction image De2. As a non-limiting example, the first and second enlargement direction images De1 and De2 are each in the shape of an arrow extending outward from the intersection point between the corresponding enlargement/reduction axis and the corresponding surface of the object OBJ.

For example, if the hold position H is moved in the directions indicated by the first and second enlargement direction images De1 and De2, the object OBJ is enlarged in the directions indicated by the first and second enlargement direction images De1 and De2. In addition, if an operation of moving the hold position H in the directions opposite to the directions indicated by the first and second enlargement direction images De1 and De2 is performed, the object OBJ is reduced in the directions opposite to the directions indicated by the first and second enlargement direction images De1 and De2. Specifically, if an operation of moving the hold position H in the direction indicated by the first enlargement direction image De1 is performed, the object OBJ is enlarged both in the direction indicated by the first enlargement direction image De1 and in the direction opposite to the direction indicated by the first enlargement direction image De1, where the central position P of the object OBJ is the center.

As can be clearly seen from FIG. 11, in the case where the hold position H is set in the upper left region of the rectangular parallelepiped object OBJ, there can be both the state that the hold position H is set on the front surface of the object OBJ, which extends in the directions of the two set enlargement/reduction axes (i.e., the directions of the object axes Xo and Yo), and the state that the hold position H is set on the top surface of the object OBJ, which does not extend in one of the directions of the two enlargement/reduction axes (i.e., is perpendicular to the direction of the object axis Yo). Thus, no matter on which of the surfaces of the object OBJ having different directions the hold position H is set, the object OBJ is enlarged or reduced in the same two enlargement/reduction axis directions. That is, even in the case where the hold position H is set on a surface of the rectangular parallelepiped object OBJ which is adjacent to the surface of the rectangular parallelepiped object OBJ extending in the directions of the two determined enlargement/reduction axes, the object OBJ is enlarged or reduced in the directions of the two enlargement/reduction axes.

As shown in FIG. 12, it is assumed that a hold position is set in the lower left region of a rectangular parallelepiped object OBJ with the object axes Xo and Yo of the object OBJ set as enlargement/reduction axes. In this case, the direction of each of the two set enlargement/reduction axes which points toward a lower left region as seen from the central position P of the object OBJ is set as a direction in which the object OBJ is to be enlarged. For example, in the non-limiting example of FIG. 12, in the case where a hold position is set in the lower left region of the object OBJ, for which the object axes Xo and Yo are set as enlargement/reduction axes, the negative direction of the object axis Yo is an enlargement direction, and the negative direction of the object axis Xo is an enlargement direction. To show the user the directions in which the object OBJ is to be enlarged, the negative direction of the object axis Yo is represented by a first enlargement direction image De1, and the negative direction of the object axis Xo is represented by a second enlargement direction image De2.

As shown in FIG. 13, it is assumed that a hold position is set in the upper right region of a rectangular parallelepiped object OBJ with the object axes Xo and Yo of the object OBJ set as enlargement/reduction axes. In this case, the direction of each of the two set enlargement/reduction axes which points toward an upper right region as seen from the central position P of the object OBJ is set as a direction in which the object OBJ is to be enlarged. For example, in the non-limiting example of FIG. 13, in the case where a hold position is set in the upper right region of the object OBJ, for which the object axes Xo and Yo are set as enlargement/reduction axes, the positive direction of the object axis Yo is an enlargement direction, and the positive direction of the object axis Xo is an enlargement direction. To show the user the directions in which the object OBJ is to be enlarged, the positive direction of the object axis Yo is represented by a first enlargement direction image De1, and the positive direction of the object axis Xo is represented by a second enlargement direction image De2.

As shown in FIG. 14, it is assumed that a hold position is set in the lower right region of a rectangular parallelepiped object OBJ with the object axes Xo and Yo of the object OBJ set as enlargement/reduction axes. In this case, the direction of each of the two set enlargement/reduction axes which points toward a lower right region as seen from the central position P of the object OBJ is set as a direction in which the object OBJ is to be enlarged. For example, in the non-limiting example of FIG. 14, in the case where a hold position is set in the lower right region of the object OBJ, for which the object axes Xo and Yo are set as enlargement/reduction axes, the negative direction of the object axis Yo is an enlargement direction, and the positive direction of the object axis Xo is an enlargement direction. To show the user the directions in which the object OBJ is to be enlarged, the negative direction of the object axis Yo is represented by a first enlargement direction image De1, and the positive direction of the object axis Xo is represented by a second enlargement direction image De2.

Next, a non-limiting example process of selecting two of the three object axes as enlargement/reduction axes will be described with reference to FIG. 15. As the first stage of the process, based on the position of the virtual camera and the position of an object OBJ in the virtual space, two are selected from three orthogonal world coordinate axes that are an X-axis pointing in horizontal leftward and rightward directions in the virtual space, a Y-axis pointing in vertical upward and downward directions in the virtual space, and a Z-axis pointing in horizontal forward and rearward directions in the virtual space. For example, as shown in FIG. 15, a direction CP is calculated which points from the position (point-of-view position) of the virtual camera in the virtual space toward the central position P of the object OBJ as an object to be operated. In the case where the angle of elevation or angle of depression (an angle of θv in the vertical direction in the world coordinate system) of the direction CP in the virtual space is greater than or equal to a threshold (e.g., greater than or equal to 60°), the X- and Z-axes of the world coordinate axes are selected. In the case where the horizontal azimuthal angle (an angle of θw in the horizontal direction in the world coordinate system) of the direction CP in the virtual space is within a predetermined angular range (e.g., within 45°) about the Z-axis direction, the X- and Y-axes of the world coordinate axes are selected. In the case where the angles of the direction CP do not satisfy either of these conditions, the Y- and Z-axes of the world coordinate axes are selected.
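The first stage described above can be sketched in Python as follows. The sketch assumes that positions are (x, y, z) tuples in world coordinates with the Y-axis vertical, that the elevation condition is checked before the azimuth condition, and that the azimuthal angle is measured from the Z-axis regardless of whether the direction CP points forward or rearward. The function name select_world_axes and its parameter names are hypothetical; the default thresholds are the 60° and 45° values given as examples in the text.

```python
import math


def select_world_axes(camera, center,
                      elev_threshold_deg=60.0, azimuth_range_deg=45.0):
    """Select two world coordinate axes from the direction CP that points
    from the virtual camera position toward the central position P."""
    cx, cy, cz = (p - c for p, c in zip(center, camera))
    horizontal = math.hypot(cx, cz)
    # Angle of elevation or depression of CP (theta_v), in degrees.
    theta_v = math.degrees(math.atan2(abs(cy), horizontal))
    if theta_v >= elev_threshold_deg:
        return ("X", "Z")   # object viewed steeply from above or below
    # Horizontal azimuthal angle of CP about the Z-axis (theta_w), in degrees.
    theta_w = math.degrees(math.atan2(abs(cx), abs(cz)))
    if theta_w <= azimuth_range_deg:
        return ("X", "Y")   # object viewed roughly along the Z-axis
    return ("Y", "Z")       # object viewed roughly along the X-axis


front_view = select_world_axes((0.0, 0.0, -10.0), (0.0, 0.0, 0.0))
top_view = select_world_axes((0.0, 10.0, -1.0), (0.0, 0.0, 0.0))
side_view = select_world_axes((-10.0, 0.0, -1.0), (0.0, 0.0, 0.0))
```

In each case the two selected world coordinate axes are those most nearly perpendicular to the direction in which the object is viewed.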

As the second stage of the process, two object axes that are closer to the two selected world coordinate axes are selected from the three object axes of the object OBJ as an enlargement/reduction axis. For example, three object axes are indicated by Xo, Yo, and Zo, and the two selected world coordinate axes are indicated by A and B. One of the object axes Xo, Yo, and Zo that is closest to the direction of the world coordinate axis A is denoted as an axis “a”, and one of the object axes Xo, Yo, and Zo excluding the axis “a” that is closest to the world coordinate axis B is denoted as an axis “b”. The object axes “a” and “b” are set as an enlargement/reduction axis for the object OBJ.
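The second stage can be sketched as follows, assuming that "closest" is judged by the absolute value of the inner product between unit vectors, so that an object axis counts as close to a world coordinate axis whether it points the same way or the opposite way. That criterion, the function name pick_closest_axes, and the dictionary representation of the object axes are assumptions made for this illustration.

```python
import math


def dot(u, v):
    return sum(a * b for a, b in zip(u, v))


def pick_closest_axes(object_axes, axis_a, axis_b):
    """object_axes maps an axis name to a unit vector in world coordinates.

    Returns the names (a, b): the object axis closest to world axis A, and,
    among the remaining object axes, the one closest to world axis B.
    """
    a = max(object_axes, key=lambda n: abs(dot(object_axes[n], axis_a)))
    remaining = [n for n in object_axes if n != a]
    b = max(remaining, key=lambda n: abs(dot(object_axes[n], axis_b)))
    return a, b


def axes_rotated_about_y(deg):
    """Object axes of an object rotated by deg degrees about the world Y-axis."""
    c, s = math.cos(math.radians(deg)), math.sin(math.radians(deg))
    return {"Xo": (c, 0.0, -s), "Yo": (0.0, 1.0, 0.0), "Zo": (s, 0.0, c)}


WORLD_X = (1.0, 0.0, 0.0)
WORLD_Y = (0.0, 1.0, 0.0)

slightly_turned = pick_closest_axes(axes_rotated_about_y(30.0), WORLD_X, WORLD_Y)
strongly_turned = pick_closest_axes(axes_rotated_about_y(60.0), WORLD_X, WORLD_Y)
```

With a 30° turn the object axis Xo is still the closest to the world X-axis, whereas with a 60° turn the object axis Zo takes its place; the object axis Yo is selected for the world Y-axis in both cases.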

Note that in the above non-limiting example process, two are selected from the three world coordinate axes based on the position of an object OBJ with respect to the virtual camera, and two are selected from the three object axes based on the orientation of the object OBJ in the virtual space and the two world coordinate axes, and are set as an enlargement/reduction axis for the object OBJ. However, other methods may be used to set two of the three object axes as an enlargement/reduction axis.

As a first non-limiting example, a screen coordinate system is set in which three screen coordinate axes (e.g., a virtual camera line-of-sight direction axis, virtual camera leftward/rightward axis, and virtual camera upward/downward axis, which are orthogonal to each other) are specified with reference to the position and orientation of the virtual camera in the virtual space. Thereafter, from the three screen coordinate axes, two screen coordinate axes (i.e., the virtual camera leftward/rightward axis and the virtual camera upward/downward axis) excluding the virtual camera line-of-sight direction axis are selected, and two object axes which are closer to the two screen coordinate axes are selected as enlargement/reduction axes. For example, the three object axes are indicated by Xo, Yo, and Zo, and the two selected screen coordinate axes are indicated by C and D. One of the object axes Xo, Yo, and Zo that is closest to the direction of the screen coordinate axis C is denoted as an axis “c”, and one of the object axes Xo, Yo, and Zo excluding the axis “c” that is closest to the screen coordinate axis D is denoted as an axis “d”. The object axes “c” and “d” are set as enlargement/reduction axes for the object OBJ.

As a second non-limiting example, two object axes that have the smallest differential angle with respect to a screen plane (i.e., a plane parallel to both the virtual camera leftward/rightward axis and the virtual camera upward/downward axis) in the screen coordinate system are selected as an enlargement/reduction axis. For example, the three object axes are indicated by Xo, Yo, and Zo. One of the object axes Xo, Yo, and Zo that has the smallest differential angle with respect to the screen plane is denoted as an axis “e”, and one of the object axes Xo, Yo, and Zo excluding the axis “e” that has the smallest differential angle is denoted as an axis “f”. The object axes “e” and “f” are set as an enlargement/reduction axis for the object OBJ.

As a third non-limiting example, two object axes that have the greatest differential angle with respect to the line-of-sight direction of the virtual camera in the virtual space are selected as enlargement/reduction axes. For example, the three object axes are indicated by Xo, Yo, and Zo. One of the object axes Xo, Yo, and Zo that has the greatest differential angle with respect to the line-of-sight direction of the virtual camera is denoted as an axis “g”, and one of the object axes Xo, Yo, and Zo excluding the axis “g” that has the greatest differential angle with respect to the line-of-sight direction is denoted as an axis “h”. The object axes “g” and “h” are set as enlargement/reduction axes for the object OBJ.
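The third non-limiting example can be sketched as follows. The sketch assumes that each object axis is treated as an undirected line, so that its differential angle with respect to the line-of-sight direction lies between 0° and 90°; under that assumption, the greatest angle corresponds to the smallest absolute inner product with the line-of-sight direction. The function name and data layout are hypothetical.

```python
def dot(u, v):
    return sum(a * b for a, b in zip(u, v))


def axes_most_perpendicular_to_view(object_axes, view_dir):
    """Return the names (g, h) of the two object axes having the greatest
    differential angle with respect to the line-of-sight direction.

    object_axes maps an axis name to a unit vector; view_dir need not be
    normalized because only the ranking of the inner products matters.
    """
    ranked = sorted(object_axes,
                    key=lambda n: abs(dot(object_axes[n], view_dir)))
    return ranked[0], ranked[1]


axes = {"Xo": (1.0, 0.0, 0.0), "Yo": (0.0, 1.0, 0.0), "Zo": (0.0, 0.0, 1.0)}
# Camera looking mostly along +Z, slightly to the right and downward.
selected = axes_most_perpendicular_to_view(axes, (0.2, -0.3, 1.0))
```

Because the line-of-sight direction is normal to the screen plane, the same ranking also yields the two object axes having the smallest differential angle with respect to the screen plane, which is the criterion of the second non-limiting example.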

Next, a non-limiting example process of calculating an amount in which an object OBJ is to be enlarged or reduced according to the movement of a hold position H will be described with reference to FIGS. 16 and 17. For example, in the above process, the intersection point between the plane including the direction connecting the virtual camera and the hold position H and the direction pointing from the virtual camera along one (first one) of the two enlargement/reduction axes, and the direction pointing from the central position P of the object OBJ along the other (second) enlargement/reduction axis, is set as an enlargement/reduction intersection point. The amount of enlargement or reduction of the object OBJ along the second enlargement/reduction axis is calculated based on the amount of movement of the enlargement/reduction intersection point that is moved according to the movement of the hold position H in the virtual space. Note that as described above, the hold position H is located on the object OBJ that is displayed and overlaid by a position (pointed position) on the display 12 which is an aiming position pointed by the user. Therefore, the direction pointing from the reference position (virtual camera point-of-view position) toward the hold position H (direction CH shown in FIG. 17) is an aiming direction connecting the virtual camera in the virtual space and the position in the virtual space corresponding to the pointed position.

For example, as shown in FIG. 16, it is assumed that in the case where the object axes Xo and Yo of a rectangular parallelepiped object OBJ are set as the enlargement/reduction axes, a hold position is moved from a hold position H1 to a hold position H2 in the lower right region of the object OBJ. Here, the movement from the hold position H1 to the hold position H2 is performed in the negative direction of the object axis Yo, which is one (first one) of the enlargement/reduction axes, and in the positive direction of the object axis Xo, which is the other (second) enlargement/reduction axis. Therefore, this is an operation of enlarging the object OBJ in the negative direction of the object axis Yo and enlarging the object OBJ in the positive direction of the object axis Xo. Thus, in this non-limiting example, the enlargement/reduction operation directions of the user's operation are associated with the directions of two enlargement/reduction axes.

FIG. 17 shows a non-limiting example method for calculating the amount of enlargement of an object OBJ that is enlarged in the direction pointed by the object axis Xo, which is the second enlargement/reduction axis, when the hold position is moved from the hold position H1 to the hold position H2 as described above. For example, the amount of enlargement of the object OBJ in the direction pointed by the object axis Xo is calculated using the amount of movement of an enlargement/reduction intersection point that is the intersection point between the plane (Y-plane shown in FIG. 17) including the direction pointing from a reference position (virtual camera point-of-view position) toward the hold position H2 (direction CH of FIG. 17) and the direction pointing from the reference position along one (first one) of the enlargement/reduction axes (i.e., the object axis Yo), and the direction pointing from the central position P of the object OBJ along the other (second) enlargement/reduction axis (i.e., the object axis Xo). In addition, the amount of enlargement of the object OBJ in the direction pointed by the object axis Yo is calculated using the amount of movement of an enlargement/reduction intersection point that is the intersection point between the X-plane including the direction pointing from the reference position (virtual camera point-of-view position) toward the hold position H2 and the direction pointing from the reference position along the second enlargement/reduction axis (i.e., the object axis Xo), and the direction pointing from the central position P of the object OBJ along the first enlargement/reduction axis (i.e., the object axis Yo).
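The enlargement/reduction intersection point described above is a plane-line intersection, which can be sketched as follows. The sketch assumes that the plane passes through the reference position and is spanned by the aiming direction CH and one enlargement/reduction axis, and that the line starts at the central position P and runs along the other enlargement/reduction axis. The function name `intersection_offset` and the sample coordinates are hypothetical and are not taken from FIG. 17.

```python
def sub(a, b):
    return tuple(x - y for x, y in zip(a, b))

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def cross(a, b):
    return (a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0])

def intersection_offset(cam_pos, hold_pos, center, plane_axis, scale_axis, eps=1e-9):
    """Signed offset t such that center + t * scale_axis lies on the plane
    through cam_pos spanned by (hold_pos - cam_pos) and plane_axis.

    If scale_axis has unit length, t is the signed distance from the
    central position P to the intersection point. Returns None when the
    line is (nearly) parallel to the plane.
    """
    aim = sub(hold_pos, cam_pos)          # direction CH
    normal = cross(aim, plane_axis)       # normal of the Y-plane or X-plane
    denom = dot(normal, scale_axis)
    if abs(denom) < eps:
        return None
    return dot(normal, sub(cam_pos, center)) / denom

cam = (0.0, 0.0, 10.0)
p = (0.0, 0.0, 0.0)
xo, yo = (1.0, 0.0, 0.0), (0.0, 1.0, 0.0)
h2 = (2.0, -1.0, 0.0)
print(intersection_offset(cam, h2, p, yo, xo))  # offset along Xo (Y-plane)
print(intersection_offset(cam, h2, p, xo, yo))  # offset along Yo (X-plane)
```

With these sample values the hold position lies on the plane through P spanned by Xo and Yo, so the two offsets simply reproduce its coordinates along Xo and Yo (2 and −1). The construction matters when the hold position lies off that plane, e.g., on a face nearer the camera.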

For example, the amount of enlargement of the object OBJ is calculated based on the difference between an enlargement/reduction intersection point calculated based on the hold position H1set in the previous process, and an enlargement/reduction intersection point calculated based on the hold position H2set in the current process. As a non-limiting example, the amount of enlargement of the object OBJ is calculated by:

s(n)=s(n−1)+(d(n)−d(n−1))

where s(n) represents the size of the object OBJ, and d(n) represents the distance between the central position P and the enlargement/reduction intersection point. As another non-limiting example, the amount of enlargement of the object OBJ is calculated by:

s(n)=s(n−1)+(d(n)−d(n−1))*(s(n−1)/d(n−1))
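The two update rules can be compared with a short sketch. The function names are hypothetical, and the guard against d(n−1)=0 is an assumption added for the sketch, not something stated in the description. Note that the second rule is algebraically equal to s(n−1)·d(n)/d(n−1), i.e., the size scales in proportion to the distance of the intersection point from the central position P.

```python
def update_size_additive(s_prev, d_prev, d_curr):
    """s(n) = s(n-1) + (d(n) - d(n-1))"""
    return s_prev + (d_curr - d_prev)

def update_size_proportional(s_prev, d_prev, d_curr):
    """s(n) = s(n-1) + (d(n) - d(n-1)) * (s(n-1) / d(n-1))"""
    if d_prev == 0:
        # Assumed guard: the ratio s(n-1)/d(n-1) is undefined here.
        raise ValueError("d(n-1) must be non-zero")
    return s_prev + (d_curr - d_prev) * (s_prev / d_prev)

# Size 4, intersection point moves from distance 2 to distance 3.
print(update_size_additive(4.0, 2.0, 3.0))      # 5.0
print(update_size_proportional(4.0, 2.0, 3.0))  # 6.0
```

In the additive rule the size grows by exactly the distance moved, while in the proportional rule the same movement enlarges the object by the factor 3/2.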

Note that an upper limit value and a lower limit value may be set for the size s(n) of the object OBJ. As another non-limiting example, an upper limit value may be set for the amount of enlargement of the object OBJ, and a lower limit value may be set for the amount of reduction of the object OBJ. For example, in the case where an upper limit value is set for the size s(n) of the object OBJ, even when the calculated size s(n) exceeds the upper limit value, the size s(n) is set to the upper limit value (i.e., the amount of enlargement=0), and then the object OBJ is enlarged. After an operation of moving the hold position is thus performed so as to enlarge the object OBJ so that the size s(n) of the object OBJ exceeds the upper limit value, if the hold position is moved in the opposite direction, the object OBJ is reduced, i.e., an operation of reducing the object OBJ is performed. Here, the reduction of the object OBJ may be started in response to the reduction operation at the time point that the size s(n) becomes less than the upper limit value due to the above method, or immediately after the reduction operation is performed, i.e., a switching time point. Note that “reduction is started immediately after the switching time point” may include not only the case where reduction is started exactly immediately after the switching time point, but also the case where reduction is started after a predetermined non-response period (play period) has passed since the switching time point.

In addition, in the case where a lower limit value is set for the size s(n) of the object OBJ, even if the calculated size s(n) is smaller than the lower limit value, the size s(n) may be set to the lower limit value (i.e., the amount of reduction=0), and then the object OBJ may be reduced. In addition, in the case where the calculated size s(n) is smaller than the lower limit value, the operation may be switched to an operation of enlarging the object OBJ at the time point that the size s(n) smaller than the lower limit value is calculated. For example, in the case where the lower limit value of the object OBJ is set to the size s(n)=0, when the hold position H is moved in the reduction direction to a position beyond the central position P of the object OBJ (e.g., the hold position H is moved into another region of the four divided regions), the calculated size s(n) is smaller than the lower limit value. At the time point that the size s(n) smaller than the lower limit value is thus calculated, enlargement/reduction can be reversed by replacing the process of reduction by the amount of reduction with the process of enlargement by the amount of reduction.

More specifically, it is assumed that the object OBJ is reduced in a first reduction direction in response to the user's operation input in a first direction, and the object OBJ is enlarged in a first enlargement direction opposite to the first reduction direction in response to the user's operation input in a second direction. In that case, if the user's operation input in the first direction is continued after the object OBJ is reduced in the first reduction direction to the lower limit value in response to the user's operation input in the first direction, the object OBJ is enlarged in the first enlargement direction after the object OBJ reaches the lower limit value. When the user's operation input in the second direction is performed after the object OBJ reaches the lower limit value and then is enlarged in the first enlargement direction, the object OBJ is reduced in the first reduction direction.
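The limit handling described above can be sketched in two small functions. This is an illustrative interpretation only: `clamp_size` holds the size at the limit values, and `reflect_at_lower_limit` models the reversal at the lower limit by turning the amount by which the calculated size falls below the limit into enlargement. Both function names are hypothetical.

```python
def clamp_size(s, lower, upper):
    """Hold the calculated size within [lower, upper]."""
    return max(lower, min(upper, s))

def reflect_at_lower_limit(s, lower):
    """If the calculated size falls below the lower limit, enlarge by the
    amount of the shortfall instead of reducing further."""
    if s < lower:
        return lower + (lower - s)
    return s

print(clamp_size(12.0, 1.0, 10.0))        # 10.0
print(reflect_at_lower_limit(-0.5, 0.0))  # 0.5
```

With a lower limit of 0, the reflection is simply the absolute value of the calculated size, which corresponds to the hold position H passing beyond the central position P into another region.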

In addition, when the hold position H is moved outside the display range of the display 12 during the operation of deforming the object OBJ, the process of changing the amount of enlargement or the amount of reduction may be stopped at the time point that the hold position H is moved outside the display range (i.e., the object OBJ is maintained at the size as it is immediately before the hold position H is moved outside the display range). In that case, the calculation of the amount of enlargement or the amount of reduction may be resumed based on the hold position H located at the time point that the hold position H is returned into the display range of the display 12.
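One possible way to realize this stop-and-resume behavior is sketched below. The sketch assumes the additive update rule and assumes that "resumed based on the hold position H" means the distance measured at the time of return becomes the new baseline, so the size does not jump on re-entry. The description does not specify this, and the class name `ResizeTracker` is hypothetical.

```python
class ResizeTracker:
    """Tracks the object size while the hold position may leave the display."""

    def __init__(self, size):
        self.size = size
        self.d_prev = None  # distance d(n-1); None means no baseline yet

    def update(self, d_curr, on_screen):
        if not on_screen:
            # Stop changing the size and discard the baseline.
            self.d_prev = None
            return self.size
        if self.d_prev is not None:
            self.size += d_curr - self.d_prev
        # On the first frame, or on return to the display range,
        # only the baseline is (re)established.
        self.d_prev = d_curr
        return self.size

t = ResizeTracker(4.0)
t.update(2.0, True)          # baseline only, size stays 4.0
t.update(3.0, True)          # size becomes 5.0
t.update(9.0, False)         # off screen, size held at 5.0
t.update(6.0, True)          # returned, new baseline, size still 5.0
print(t.update(6.5, True))   # 5.5
```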

In addition, when an operation of deforming the object OBJ is performed, the object OBJ may overlap another object (may enter another object). At that time, even after a process of deforming the object OBJ is continued with the object OBJ maintained overlapping the second object, the overlapping state with the second object may be maintained. Alternatively, the second object overlapping the object OBJ may be moved out of the range of the object OBJ during deformation.

In addition, when an operation of deforming the object OBJ is performed, the object OBJ may overlap a field surface set in the virtual space (may enter below the field). At that time, the deformed object OBJ may be moved to above the field surface after the deforming process is continued with the overlapping state with the field surface maintained and then the deforming process is ended (i.e., after the holding operation is ended). Alternatively, further deformation of the object OBJ may be stopped at the time point that the object OBJ comes into contact with the field surface during deformation.

Note that even in the case where the maintenance of the overlapping state of the deformed object OBJ and the field surface is not permitted (i.e., after the process of deforming the object OBJ is ended, the deformed object OBJ is moved to above the field surface so that the object OBJ does not overlap the field surface), overlapping between the object OBJ and another object may be permitted. In other words, when the object OBJ and the field surface overlap at the end of the process of deforming the object OBJ, then even if the process of moving the object OBJ to above the field surface is performed, so that the moved object OBJ overlaps another object, that overlapping may be maintained. As a result, it is possible to eliminate or reduce the difficulty in predicting the final destination of the object OBJ that would arise if, after being moved so as to avoid overlapping the field surface, the object OBJ were moved again so as to avoid overlapping still another object.

Incidentally, the object OBJ can be not only deformed but also moved in a virtual space by the user's operation, and at that time, the object OBJ may be forbidden to be moved so as to overlap the field surface. Specifically, the object OBJ may be set so that the object OBJ cannot be moved to enter below the field surface. Likewise, the object OBJ can be set so that the object OBJ cannot be moved so as to overlap another object (enter another object). Note that when the object OBJ is maintained overlapping another object at the time point that the deformation process has been ended, the object OBJ may be permitted to overlap that second object in the process of moving the object OBJ. However, once overlapping between the object OBJ and the second object has been eliminated (after overlapping is eliminated during the movement process and/or after overlapping is eliminated at the time point that the movement process is ended), the object OBJ may be set so that the object OBJ cannot be moved so as to overlap the second object. Although the field surface is illustrated in the above description, the present technology is not limited to this, and a ceiling surface or a wall surface may be set similarly, for example. Alternatively, a boundary for defining a preferable specific space for disposing an object OBJ in a virtual space may be set similarly.

In addition, the object OBJ may be enlarged or reduced by calculating an intended enlarged or reduced size based on the enlargement/reduction intersection point. For example, the intended size of the object OBJ when enlarged in the direction pointed by the object axis Xo is calculated based on the distance on the enlargement/reduction axis Xo between the central position P of the object OBJ and the enlargement/reduction intersection point of the Y-plane. In addition, the intended size of the object OBJ when enlarged in the direction pointed by the object axis Yo is calculated based on the distance on the enlargement/reduction axis Yo between the central position P of the object OBJ and the enlargement/reduction intersection point of the X-plane.

In addition, as a non-limiting example, in the case where at least one of the position of the object OBJ, the orientation of the object OBJ, the position of the virtual camera, and the orientation of the virtual camera is changed during an operation of deforming the object OBJ, a parameter for enlarging or reducing the object OBJ may be calculated by changing any of the enlargement/reduction axes, enlargement direction, central position, etc., of the object OBJ, depending on the states of the object OBJ and/or the virtual camera after being changed. As another non-limiting example, even when at least one of the position of the object OBJ, the orientation of the object OBJ, the position of the virtual camera, and the orientation of the virtual camera is changed during an operation of deforming the object OBJ, the process of enlarging or reducing the object OBJ may be continued using the states of the object OBJ and/or the virtual camera before being changed.

In the foregoing, it is assumed that the object OBJ is deformed (enlarged/reduced) in the directions pointed by two enlargement/reduction axes selected from the three object axes. Alternatively, the way of deforming an object OBJ may vary depending on the shape thereof. For example, in the case where an object OBJ has a shape including a curved surface, the object OBJ may be deformed in a way different from that for a rectangular parallelepiped shape.