U.S. Pat. No. 11,504,632

DYNAMIC ADJUSTMENT OF SHARD COUNT FOR PLAYER MATCHMAKING

AssigneeAmazon Technologies Inc

Issue DateDecember 12, 2019

Illustrative Figure

Abstract

A matchmaker (e.g., matchmaking software) may be implemented as a number of matchmaker shards, where each shard can potentially run on a different host within a service provider network. Disclosed herein are techniques and systems for dynamically adjusting a shard count associated with a given matchmaker of a subscriber during runtime, the shard count dictating a number of matchmaker shards used for assigning players to matches of a game. Adjustment of the shard count may be based on metrics that are usable to determine whether the current number of matchmaker shards is/are “overloaded”, and, if so, the shard count can be increased, or whether the current number of matchmaker shards are “underloaded”, and, if so, the shard count can be decreased.

Description

DETAILED DESCRIPTION Service providers offer various network-based (or “cloud-based”) services to users to fulfill computing needs of the users. These service providers may operate service provider networks that include clusters of managed servers stored in data centers located across different geographic regions. In this way, users who have subscribed for use of the network-based services (or “subscribers”) need not invest in and maintain the computing infrastructure required to implement the various services that they may need. Additionally, subscribers and their clients are able to access these network-based services over different geographic regions. To offer these network-based services across geographic areas, service providers operate and maintain service provider networks (e.g., cloud-based computing environments, network-based service architectures, network-based service infrastructures, etc.). In this way, service provider networks may provide subscribers with scalable, on-demand, and network-accessible computing platforms over large geographic regions such that the subscribers have readily-available VM instances at their disposal. These service provider networks allow subscribers to immediately have computing infrastructure over large geographic regions to fulfill individual computing needs of the subscriber, and also to provide computing resources to support services provided to clients of the subscribers. For example, a subscriber to the service provider network may be a game developer (e.g., individual, company, and/or other organization) that has developed an online game that they would like to provide to clients who desire to play the online game. However, the game developer may desire to provide access to their online game to clients over large geographic regions, and for large amounts of players. The amount of computing infrastructure (e.g., compute power, memory, storage, networking, security, etc.) used to support and maintain an online gaming platform over different geographic regions that hosts game sessions for players may be large enough to be impractical for game developers, particularly new or emerging ...

DETAILED DESCRIPTION

Service providers offer various network-based (or “cloud-based”) services to users to fulfill computing needs of the users. These service providers may operate service provider networks that include clusters of managed servers stored in data centers located across different geographic regions. In this way, users who have subscribed for use of the network-based services (or “subscribers”) need not invest in and maintain the computing infrastructure required to implement the various services that they may need. Additionally, subscribers and their clients are able to access these network-based services over different geographic regions. To offer these network-based services across geographic areas, service providers operate and maintain service provider networks (e.g., cloud-based computing environments, network-based service architectures, network-based service infrastructures, etc.). In this way, service provider networks may provide subscribers with scalable, on-demand, and network-accessible computing platforms over large geographic regions such that the subscribers have readily-available VM instances at their disposal. These service provider networks allow subscribers to immediately have computing infrastructure over large geographic regions to fulfill individual computing needs of the subscriber, and also to provide computing resources to support services provided to clients of the subscribers.

For example, a subscriber to the service provider network may be a game developer (e.g., individual, company, and/or other organization) that has developed an online game that they would like to provide to clients who desire to play the online game. However, the game developer may desire to provide access to their online game to clients over large geographic regions, and for large amounts of players. The amount of computing infrastructure (e.g., compute power, memory, storage, networking, security, etc.) used to support and maintain an online gaming platform over different geographic regions that hosts game sessions for players may be large enough to be impractical for game developers, particularly new or emerging game developers, to purchase and maintain on their own.

Accordingly, service provider networks may provide a game-hosting service that is a fully, or at least partially, managed online gaming platform. The game-hosting service may deploy, operate, and scale session-based online game servers in the service provider network on behalf of game developers. The game-hosting service may provide groups, or “fleets,” of virtual machine instances (e.g., VM instances, instances, etc.) that execute on computing resources of the service provider network and host game sessions for clients of a subscribing game developer. Game software included in a game build can be provisioned to a fleet of instances, and the game-hosting service may spin up the fleet of instances, where each instance in the fleet executes at least one process that is to host a game session. To establish or join a game session, players may utilize their client devices (e.g., applications, software, or other programs executing thereon) to request (e.g., via an application programming interface (API) call) that the game-hosting service create a game session.

To create a game session, a match is generally formed or made as an initial step, followed by a step of placing the match. The game developer may create its own customized matchmaker (e.g., matchmaking software) by specifying custom matchmaking rules that are to be used by the matchmaker to assign players to matches of the game. This customized matchmaker (and the data it uses) may be hosted in the service provider network and used to assign players to matches of the developer's game. If matchmaking software (and the data it uses for matching players together) resides on a single host computer (sometimes referred to herein as a “host”) within the service provider network, the processing needs of the matchmaking software can quickly exceed the processing capacity of the single host on which it is executing. This may occur when matchmaking software (or “matchmaker”) created by a developer includes complicated matchmaking rules, and/or if incoming traffic (e.g., a rate of incoming matchmaking requests) spikes to a higher-than-expected level. When the processing needs of the matchmaker exceed the processing capacity of the host, the queue of incoming matchmaking requests may continue to grow if the incoming traffic does not subside, which results in players having to wait progressively longer wait times to be placed into a match.

This disclosure describes, among other things, techniques and systems implemented by a game-hosting service of a service provider network to allow a matchmaker (e.g., matchmaking software, sometimes called a “matchmaker configuration”) to be implemented as a number of matchmaker shards, where each shard can potentially run on a different host within the service provider network. In this manner, the matchmaker can, at times, be run in a distributed manner (via multiple shards) across multiple hosts. Also disclosed herein are techniques and systems for dynamically adjusting (i.e., increasing or decreasing) a shard count associated with a given matchmaker of a subscriber during runtime (e.g., adjusting the shard count while new players continue to request placement into matches of a game).

A “matchmaker shard” (or “shard”), as used herein, means executable software that is tasked with determining match assignments for a designated allotment of players associated with queued matchmaking requests. For example, if the shard count is set to one shard, a single matchmaker shard may execute on a VM instance of a host, and that single matchmaker shard works with the entire player population that has requested to be, but has not yet been, placed into matches of a game. When the shard count is increased to more than one shard, multiple matchmaker shards may execute on multiple VM instances that are potentially executing on a multiple different hosts. Distributing matchmaker shards across multiple hosts, on demand, can help ensure that there is sufficient processing capacity to accommodate a spike in the rate of incoming matchmaking requests and/or to accommodate running a matchmaker algorithm that is based on complicated, processing-intensive matchmaking rules, and the like.

In an illustrative example, if the shard count is set to two shards, a first matchmaker shard may execute on a first VM instance of a first host, and a second matchmaker shard may execute on a second VM instance of a second host, and each matchmaker shard may execute a common matchmaker algorithm for assigning its own designated allotment of players to matches of the game. For instance, the first matchmaker shard may execute the matchmaker algorithm on a first subset of players (e.g., roughly 50% of the entire player population that has requested to be placed into matches), and the second matchmaker shard may execute the matchmaker algorithm on a second subset of the players (e.g., roughly 50% of the entire player population).

Adjustment of the shard count may be based on metrics (e.g., measured parameters, statistics, etc.) that are reported by the individual matchmaker shard(s) as a result of executing a matchmaker algorithm to assign players to matches of a game. An example metric that is usable for determining whether to increase the shard count is a “maximum wait time metric.” To illustrate, an individual matchmaker shard may be allotted at least a portion of the queued matchmaking requests, and may be tasked with assigning players associated with those requests to matches of a game by executing a matchmaker algorithm. In this context, the matchmaker shard may compute, for an individual matchmaking request, a time period between a first time at which the matchmaking request was queued and a second time at which the matchmaker shard started executing the matchmaker algorithm to assign a player(s) associated with the matchmaking request to a match of the game. This computed time period is referred to herein as a “wait time” for that player. During a reporting period, the wait times computed for the individual matchmaking requests can be evaluated to determine a maximum wait time amongst the set of computed wait times, and this maximum wait time can be evaluated to determine whether or not to increase the shard count. In one example, a threshold wait time can be used. That is, the shard count may be increased if the maximum wait time violates (e.g., is greater than or equal to, or is strictly greater than) the threshold wait time. In some embodiments, the shard count may be increased if individual maximum wait times of multiple maximum wait times reported by a matchmaker shard over a predetermined period of time violates the threshold wait time. That is, if the reported maximum wait times violate the threshold wait time for a threshold amount of time, the shard count may be increased a bit more cautiously. Thus, the maximum wait time metric is usable to determine whether the current number of matchmaker shards is/are “overloaded”, and, if so, the shard count can be increased.

An example metric that is usable for determining whether to decrease the shard count is an “algorithm utilization metric.” To illustrate, an individual matchmaker shard with an allotment of at least a portion of the queued matchmaking requests may execute the matchmaker algorithm to assign the players associated with those requests to matches of the game, and then the shard may wait for a short period of time (e.g., 250 milliseconds, 200 milliseconds, etc.) while additional matchmaking requests are queued, and may repeat the execution of the matchmaker algorithm for this next batch of queued requests. At a suitable reporting interval, the matchmaker shard may sum multiple time periods measured from starting the execution of the matchmaker algorithm to stopping the execution of the matchmaker algorithm in order to compute a total run time of the matchmaker algorithm. This total run time can then be divided by the elapsed wall-clock time measured from the start of the first run of the matchmaker algorithm to the end of the last run of the matchmaker algorithm. This results in a percentage of a period of time that the matchmaker shard spent executing the matchmaker algorithm to assign its allotment(s) of players to matches of the game, and this percentage (called the “algorithm utilization metric”) can be evaluated to determine whether or not to decrease the shard count. In one example, a threshold percentage can be used. That is, the shard count may be decreased if the algorithm utilization metric (e.g., a percentage value) fails to violate (e.g., is less than or equal to, or is strictly less than) the threshold percentage. Thus, the algorithm utilization metric is usable to determine whether the current number of matchmaker shards are “underloaded”, and, if so, the shard count can be decreased.

Adjusting the shard count up or down in this manner allows for striking a balance between ensuring that adequate computing resources are available for placing players into matches within a reasonable amount of time, and also ensuring that the player population is not overly fragmented. That is, partitioning the player population amongst matchmaker shards provides benefits of reducing the wait time for players to be placed into matches because the matchmaking workload can be potentially distributed across multiple hosts, thereby providing adequate resources to meet or exceed the demand of the matchmaker algorithm. However, if too many shards are implemented for a given matchmaker, the player population may become overly-fragmented, and the match quality may not be as good as it could be with a less-fragmented player population. To illustrate, consider an example where a matchmaker is implemented across 10,000 matchmaker shards. This number of shards may ensure that players can be placed into matches quickly for many use cases, but this also means that the player population will be divided into 10,000 subsets of players, and each matchmaker shard works with its own subset of players to find matches exclusively for those players. In this example, if a first player is in one subset allocated to a first shard, and if the matchmaking algorithm would otherwise place the first player into a match with a second player who is in another subset allocated to a second shard, these players may not be provided with optimized match quality because players allocated to different matchmaker shards may not be matched together. Thus, it may be beneficial to set the shard count to a number that is as low as possible, while trying to set the shard count to a number that that is high enough to provide sufficient processing resources for placing players into matches within a reasonable (e.g., below threshold) amount of time. In some embodiments, the dynamic adjustment of the shard count may bias towards a preference of having more processing capacity at the cost of having more-fragmented player populations. Said another way, it may be better to have sufficient capacity for matchmaking so that wait times are reduced, possibly at the expense of having an overly-fragmented player population for a short time. This may translate into an implementation that more liberally increases the shard count, and more conservatively decreases the shard count.

An example process for increasing a shard count may include executing, by one or more computing devices of a service provider network, a first number of matchmaker shards on one or more VM instances allocated to a subscriber of the service provider network. This first number of matchmaker shards may include at least a first matchmaker shard executing on a first VM instance of a first host computer. The process may determine a metric associated with queued matchmaking requests associated with a plurality of players of a game associated with the subscriber, where the metric indicates whether one or more matchmaker shards of the first number of matchmaker shards are overloaded. Based at least in part on the metric, a shard count associated with the subscriber may be increased from the first number to a second number greater than the first number. With the shard count increased to the second number, the one or more computing devices may execute the second number of matchmaker shards on of the one or more VM instances allocated to the subscriber, wherein the second number of matchmaker shards includes at least the first matchmaker shard executing on the first VM instance of the first host computer and a second matchmaker shard executing on at least one of the first VM instance, a second VM instance of the first host computer, or a third VM instance of a second host computer.

An example process for decreasing a shard count may include executing, by one or more computing devices of a service provider network, a first number of matchmaker shards on one or more VM instances allocated to a subscriber of the service provider network. This first number of matchmaker shards may be configured to assign players to matches of a game associated with the subscriber, and may include at least a first matchmaker shard executing on a first VM instance of a first host computer and a second matchmaker shard executing on at least one of the first VM instance, a second VM instance of the first host computer, or a third VM instance of a second host computer. The process may determine a first metric that indicates whether the first matchmaker shard is underloaded, and may determine a second metric that indicates whether the second matchmaker shard is underloaded. Based at least in part on at least one of the first metric or the second metric, a shard count associated with the subscriber may be decreased from the first number to a second number less than the first number. With the shard count decreased to the second number, the one or more computing devices may execute the second number of matchmaker shards on the one or more VM instances allocated to the subscriber, which may include executing a first matchmaker shard on the first VM instance of the first host computer.

By dynamically adjusting the shard count—which dictates the number of matchmaker shards that are deployed at any given time, a matchmaking service can determine, based at least in part on observed metrics that indicate whether the matchmaker shards are presently overloaded or presently underloaded, when, and/or how fast, and/or by how much to adjust the shard count. This helps ensure that players are not waiting longer than a threshold amount of time to be placed into matches, while also mitigating the effects of fragmenting the player population amongst multiple shards.

While some of the techniques are described herein as being performed in a service provider network of a service provider, the techniques may similarly be applied in other computing networks, such as on-premise servers managed by the game developers themselves. Certain implementations and embodiments of the disclosure will now be described more fully below with reference to the accompanying figures, in which various aspects are shown. However, the various aspects may be implemented in many different forms and should not be construed as limited to the implementations set forth herein. The disclosure encompasses variations of the embodiments, as described herein. Like numbers refer to like elements throughout.

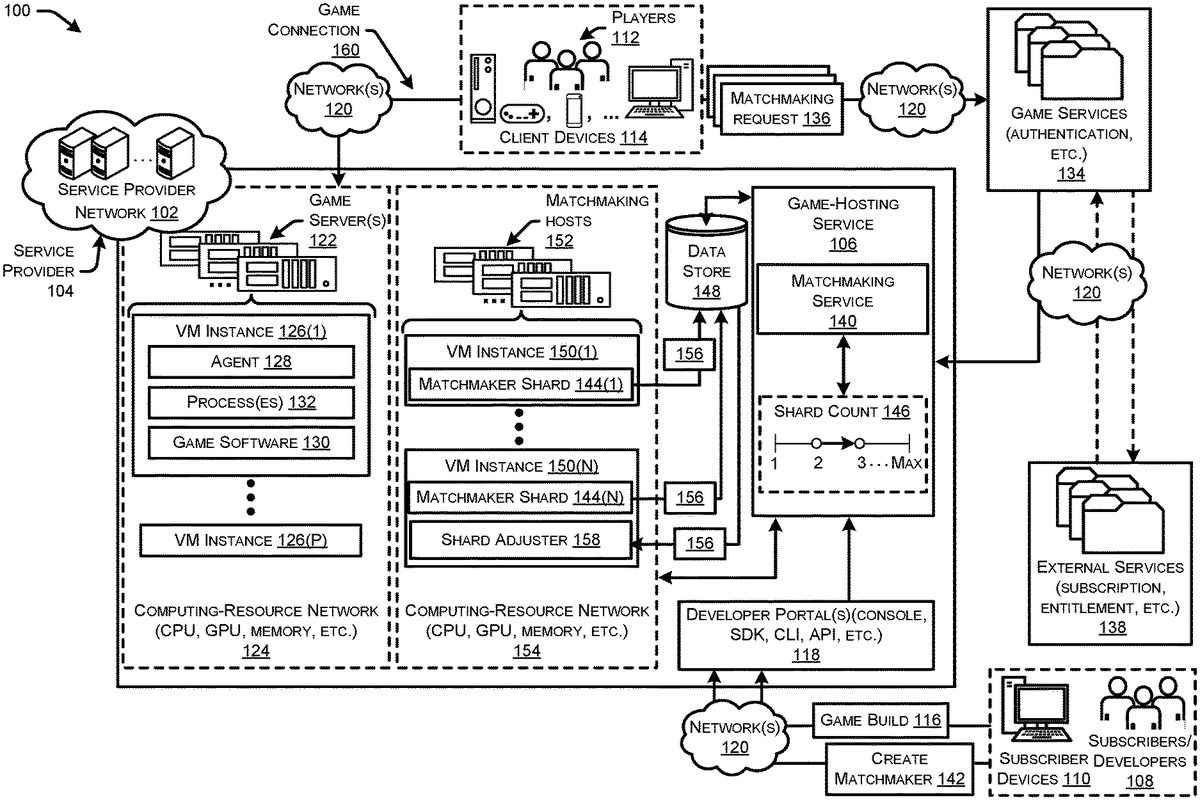

FIG. 1illustrates a system-architecture diagram of an example environment100in which a matchmaking service of a service provider network102can dynamically adjust a shard count, which dictates a number of matchmaker shards used for assigning players to matches of a game. Each matchmaker shard is potentially executable on a different host.

As illustrated, the service provider network102may be operated and/or managed by a service provider104. The service provider network102may provide various services to users to fulfill their computing resource needs, such as cloud-based computing resources. For example, the service provider network102may provide cloud-based, scalable, and network accessible compute power services, storage services, database services, and/or other services. As illustrated, the service provider network102may also provide a game-hosting service106that is a scalable, cloud-based runtime environment for online games, including session-based multiplayer games. The game-hosting service106may be fully managed by the service provider104and may deploy, operate, and scale the session-based multiplayer game servers in the cloud-based, or network-based, environment. For example, the game-hosting service106may not only provide the hardware to host the game sessions, but also manage ongoing activity, security, storage, and performance tracking. Additionally, the game-hosting service106may provide auto-scaling capabilities such that instances supporting game sessions can be spun up or spun down based on player demand.

To utilize the game-hosting service106, subscribers108(who may also be “game developers”, or “developers”108) may utilize subscriber devices110to register for an account (e.g., a user account, subscriber account, etc.) with the game-hosting service106. This may allow the subscribers108to subscribe to the game-hosting service106, provide a game build(s) for their online game(s), create a matchmaker(s) with customized matchmaking rules, and to provide their clients (denoted as “players112” inFIG. 1, because the clients may, at times, be engaged in playing a game) with access to the online game(s) via their client devices114without the subscribers108having to invest in the computing resources (e.g., on-premise resources) needed to host the online game sessions for their players112. In order to utilize the game-hosting service106, the subscribers108may provide a game build116to the game-hosting service106via one or more developer portals118. The developer portal(s)118may include one or more of a web-based console, a software-development kit (SDK), a command-line interface (CLI), an application programming interface(s) (API), and/or any other means by which a subscriber108may specify and/or provide a game build116to the game-hosting service106, and/or provide a request to create a customized matchmaker (described in more detail below).

The game build116may correspond to any type of online game that may host a game session for one or more players112. For instance, the game build116may correspond to a session-based single player online game, or a session-based multiplayer online game. In this disclosure, which describes techniques and systems relating to matchmaking, the game build116may correspond to a session-based multiplayer online game where players112are grouped together in matches to play the game. The game build116may represent any type of online game, such as real-time strategy (RTS) games, first person shooter (FPS) games, multiplayer online battle arena (MOBA) games, role playing (RPG) games, massively multiplayer online (MMO) games, massively multiplayer online role player games (MMORPG), virtual board games (e.g., chess, checkers, etc.), action-adventure games, simulation games, strategy games, sports games, virtual reality games, and/or any other game that may be played in an online environment.

Generally, the client devices114may comprise any type of computing device that may be utilized for online gaming. For instance, the client devices114may include laptop computing devices, desktop computing devices, mobile phones, tablets, gaming systems, controller-based devices, virtual and/or augmented reality devices (e.g., head-mounted displays (HMDs)), other wearable devices, biometric sensors, projectors, televisions, and/or any computing device usable on its own, or in conjunction with other devices, for online gaming. In some examples, at least part of the online game may execute and/or be stored locally on the client devices114. Furthermore, the subscriber devices110may comprise any type of computing device that may be utilized to access the service provider network102. For instance, the subscriber devices110may include, without limitation, laptop computers, desktop computers, tablet computers, server computers, mobile phones (e.g., smartphones), gaming systems (e.g., game consoles), televisions, and/or any computing device usable on its own, or in conjunction with other devices, for accessing the service provider network102.

The game build116may include the game software for the online game, and may further include server executables, supporting assets, libraries, and dependencies that are used to host and/or execute the game software on an instance. The subscribers108may provide the game build116through the developer portal(s)118, such as by uploading the game build116over one or more networks120(e.g., the Internet, wireless wide area networks (WANs), personal area networks (PANs), wired and/or wireless local area networks (LANs), etc.). The network(s)120may comprise any type of network or combination of network, including wired and/or wireless networks. Once a subscriber108has uploaded their game build116, the game-hosting service106may deploy the game software to one or more game servers122in a computing-resource network124. For instance, the game software corresponding to the game build116may be installed on one or more virtual machine (VM) instances126that are at least partially managed by a local agent128(e.g., script, program, application, etc.).

The computing-resource network124may include data centers that each include one or more computing resources, such as VM instances126(1)-(P), where “P” is any integer (referred to herein collectively as “VM instances126” “or just “instances126”). The data centers may house the game server(s)122and may be located across disparate geographical regions such that computing resources are available to support functionality for cloud-based services provided by the service provider network102. The computing resources may include various combinations of hardware-based components, such as central processing units (CPU), graphics processing units (GPU), memory, storage, network capacity, security, and/or any other type of hardware-based resource to support cloud-based services, such as the game-hosting service106. In some examples, the computing resource network124may further include respective memories that store various firmware-based and/or software-based resources that provide the functionality of the services, such as the instances126on which an agent128executes, the game software130executes, and one or more processes132execute to support a game session.

Generally, the agent128may be responsible for handling various processes on an instance126, such as spinning up an instance126, spinning down an instance126, handling lifetime processes of the instance126, retrieving game session assignments for processes132executing on the instance126, executing processes132on the instance126to host a game session, managing resources of the instance126, installing patches and/or other software on the instance126and/or various other actions for managing the instance126. A game session is an instance of the game software130running on a server that players112can connect to and interact with. The game defines the basic characteristics of the game session, such as the life span or the number of players112involved. The process(s)132may be binary processes132, executable processes132, etc., running on the VM instance126that consume or utilize the underlying hardware resources and/or other resources.

To play in a game session, the client devices114may interact directly with the game-hosting service106, and/or through various backend game services to retrieve information on current game sessions, to request new game sessions, and/or reserve slots in game sessions. For instance, the client devices114may interact, over one or more networks120, with game services134that may handle communication between client devices114and the game-hosting service106. Further, the game services134may handle additional tasks or provide additional services, such as player authentication and authorizations, team building, and inventory control. For example, when a player112wants to start a new game, the client device114may call the authentication service to first verify the player's112identity, and then send a matchmaking request136to the game-hosting service106. It is to be appreciated that, although the game services134may receive matchmaking requests136and route the requests136to the game hosting service106, and although the game services134may handle some matchmaking tasks itself, this disclosure contemplates that matchmaking tasks are handled by the game-hosting service106of the service provider network102, and, as such, matchmaking requests136can be submitted from the client devices114directly to the game hosting service106over the network(s)120, without being routed through the game services134, in some embodiments.

In further examples, the online game of a subscriber108may rely on or utilize one or more additional external services138, such as for validating a subscription membership and/or determining entitlements for a client's account. As shown, the information from the external services138may be passed to the game server(s)122via the game services134and the game-hosting service106without going through the client device(s)114.

To establish or join a game session, players112may utilize their client devices114(e.g., applications, software, or other programs executing thereon) to request136(e.g., via an API call) that the game-hosting service106place them into a match of a game session for a game. For example, to create a game session for a multiplayer game, a match is generally formed or made as an initial step, followed by a step of placing the match. For example, if a player(s)112connects to the game services134(or connects directly to the game hosting service106without connecting to the game services134) over the network120for playing a multiplayer game, a “StartMatchmaking” API call can be submitted, along with identifiers of the player(s)112who would like to be placed in a match for playing an online video game.

The game hosting service106may implement a matchmaking service140to handle the matchmaking requests136(sometimes referred to herein as “tickets”136) by assigning players associated with the tickets136into matches of a game using a matchmaker (e.g., matchmaking software) associated with the subscriber108who developed the game. For example, a subscriber108can creates a new matchmaker (or matchmaker configuration) via a request sent to the game hosting service106, which is shown inFIG. 1as a “create matchmaker” request142. As part of this create matchmaker request142, the subscriber108may configure customized matchmaking rules using a customizable rules engine that allows subscriber108to design how to match players112together based on player attributes, game modes, skill level, geographic regions, etc. These matchmaking rules are to be adhered to when matching players112together into matches. These matchmaking rules can be based on any suitable factors, such as, without limitation, skill level of the players112, amount of time the players112have been waiting to be placed into a match, geographic regions of the players112, social connections amongst the players112, player preferences for particular game attributes or features (e.g., maps, weapons, etc.), and/or other factors. In an illustrative example, the subscriber108may create a matchmaking rule that indicates the matchmaker algorithm should only consider matching players together whose client devices114have a latency less than 50 milliseconds. Another example matchmaking rule may specify that players112with skill levels that are different by more than a threshold difference (e.g., more than 15 points different) should not be grouped together in a match. As yet another example, the subscriber108may configure a matchmaker with a matchmaking rule that biases toward matching players112together if they have a preference for the same particular game map among a plurality of game maps that can be used in the game. These are merely example matchmaking rules, and other types of rules known to a person having ordinary skill in the art can be implemented.

As mentioned, a matchmaker (e.g., matchmaking software) may be deployed as a number of matchmaker shards144(1)-(N) (referred to herein collectively as “matchmaker shards144” “or just “shards144”). An adjustable shard count146is usable to set the number of matchmaker shards144at any number over a range of integers from a minimum number (e.g., one shard) to a maximum number. InFIG. 1, “N” represents the current number of shards144deployed, which is equal to the current shard count146. When a subscriber108creates a new matchmaker (via a create matchmaker request142) with customized matchmaking rules, the matchmaking service140may consult an override table maintained in a data store148to determine a maximum shard value, Max, from the override table for the given subscriber108, and the matchmaking service140may copy the maximum shard value, Max, to the subscriber's108settings for that newly-created matchmaker to indicate the maximum number, Max, of shards to which the shard count146is adjustable for that matchmaker. In addition, the current shard count146may be initialized at any suitable initial number from the minimum number (e.g., one shard) to the maximum number, Max. In some embodiments, the initial number of shards144may be set to the minimum number. For example, if the minimum number to which the shard count146is adjustable equals one shard, the shard count146may be initialized to one shard. With the shard count146set to an initial number, that initial number of matchmaker shards144may be assigned to a given VM instance(s)150executing on a matchmaking host(s)152(sometimes referred to as “host computer”150, or simply “host”150) in a computing-resource network154, which may be the same as, or similar to, the computing-resource network124described above. Each matchmaker shard144may include the matchmaking software for assigning players112to matches of a game in accordance with one or more matchmaking rules, and may further include server executables, supporting assets, libraries, and dependencies that are used to host and/or execute the matchmaker shard144on an instance150.

The computing-resource network154may include data centers that each include one or more computing resources, such as VM instances150(1)-(N) (referred to collectively as “VM instances150” “or just “instances150”). AlthoughFIG. 1shows a one-to-one correspondence of shards144to instances150, there may be a many-to-one correspondence of shards144to instances150, in some embodiments, such as when a single VM instance150is sufficient for executing multiple matchmaker shards144. The data centers of the computing-resource network154may house the host computers152and may be located across disparate geographical regions such that computing resources are available to support functionality for cloud-based services provided by the service provider network102. The computing resources may include various combinations of hardware-based components, such as CPU, GPU, memory, storage, network capacity, security, and/or any other type of hardware-based resource to support cloud-based services, such as the matchmaking service140. In some examples, the computing resource network154may further include respective memories that store various firmware-based and/or software-based resources that provide the functionality of the services, such as the instances150on which the matchmaker shards144execute.

The assignment of an individual matchmaker shard144to a VM instance150of a given host152may be carried out by a “load balancing node.” In some embodiments, one of the VM instances150may be designated as this load balancing node using a system of distributed locks. Consider an example where the VM instance150(N) is designated as the load balancing node. This designation may be made by the VM instance150(N) claiming ownership of (or acquiring) a particular lock. With this particular lock acquired, the VM instance150(N) may determine, or select, the most appropriate VM instance150and/or host152to which an individual matchmaker shard144should be assigned. The selection of the most appropriate VM instance150and/or host152to host the matchmaker shard144may be based on various factors including, without limitation, a current capacity of the host152and/or the current capacity of the VM instance150, whether the VM instance150is in charge of other tasks, such as reading metrics reported by other shards144for determining whether to adjust the shard count146, etc. The assigned VM instance150selected by the load balancing node (e.g., the instance150(N)) may then obtain or acquire a lock that is associated with (or specific to) the matchmaker shard144in order to secure ownership of the matchmaker shard144, and this information may be written to a lock table maintained in the data store148. The lock for a given matchmaker shard144may be keyed on a shard identifier (ID), which may be a function of the subscriber's108account ID and/or a matchmaking configuration name. The use of the load balancing node for assigning matchmaker shards144to VM instances150executing on particular hosts152prevents a single host152from “owning” (e.g., by its VM instance(s)150acquiring locks for) a large number of matchmaker shards144, and/or owning a number of computationally-expensive shards144, while other hosts152and their VM instances150remain relatively idle. The load balancing node may be configured to, at one or more different times (e.g., periodically, in response to the occurrence of an event, etc.) after an initial assignment, analyze the factors it uses for assigning shards144to VM instances150and/or hosts152, and determine whether any shards144can be redistributed/reassigned to other VM instances150and/or hosts152to provide an improved balance of shards across VM instances150and/or hosts152.

At runtime, the matchmaking service140may read the current shard count146for a given matchmaker from a record in the data store148associated with the given matchmaker. If the shard count146happens to be missing from the record in the data store148, the shard count146may be assumed to be equal a minimum number (e.g., one shard) of an adjustable range. The matchmaking service140may determine the lock to use, such as by computing a hash value based on the ticket identifier (ID) of an incoming ticket136and/or the current shard count146. The lock name for each shard144may include the matchmaker shard144or an identifier thereof. A shard count146of zero (“0”) may have logic which does not include the matchmaker shard144in the lock name for backward compatibility with systems that do not implement matchmakers as a number of matchmaker shards144. It is to be appreciated that a subscriber108may create multiple matchmakers, each implemented as a number of matchmaker shards144that can potentially be set to a number greater than one, and where each shard144can potentially execute on a different host152. In this scenario, each matchmaker associated with the subscriber108may have a shard count146associated therewith, which is adjustable up to the same maximum number, Max, and down to the same minimum number (e.g., one shard).

The shard ID of the matchmaker shard144for which the request136is to be queued may be stored with the request136to enable routing of the request136to the correct host152executing the VM instance150that acquired the lock for the matchmaker shard144. When a player112submits a matchmaking request136, a shard ID may be passed in with the request136to access the appropriate matchmaker shard144and the corresponding lock for the shard144. The matchmaking service140may consult (e.g., read) the lock table in the data store148to identify which VM instance150owns the matchmaker shard144associated with the request136. The matchmaking service140may then make a call to either the lock-owning VM instance150of a particular host152, or, if the lock is unowned, to the load balancing node (e.g., the instance150(N)) for real-time assignment of the matchmaker shard144to an appropriate VM instance150of a host152.

In general, a single request136may be associated with a single player112, or with multiple players112. The incoming requests136are received, and the requests136are distributed to the matchmaker shards144in order to distribute the players112amongst the matchmaker shards144. This can be done randomly, in some embodiments. For example, if the shard count146is set to two, two shards144(1) and144(2) may be allocated roughly half of the players112(randomly-selected) associated with a subset of the incoming requests136, and the subset of requests136may be queued (e.g., placed into a logical container) for each shard144. This may be accomplished by placing the respective subsets of requests136into respective queues (e.g., logical containers) associated with each matchmaker shard144. If the shard count146is currently set to one shard, all of the requests136may be queued for the single matchmaker shard144.

In some embodiments, distributing the incoming requests136amongst the shards144may not be random. For example, allocating requests136to shards144may be based on a time of receipt (e.g., a first received request136may be queued for a first shard144(1), a next received request136may be queued for a second shard144(2), and so on and so forth, much like dealing cards to players of a card game). In some embodiments, other logic for distributing requests136amongst shards144may be utilized, such as exposing a partitioning key to the subscriber108that is used to assign partitioning key values to its players112, and players112with the same value of the partitioning key may be queued together for one shard144(1), while other players112with a different common value of the partitioning key may be queued together for another shard144(2), and so on and so forth. As yet another example, a machine learning model(s) may receive the requests136as input and may output shard assignments based on various features, such as any suitable player attribute(s). In other words, the matchmaker service140may implement some pre-matchmaking logic to partition the player population amongst shards in a way that is perhaps better-than-random, but not as robustly as the matchmaking logic implemented by the individual matchmaking shards144for matching players112together in matches of the game.

The VM instance150running a matchmaker shard144may verify that it owns the correct matchmaker shard144for the requests136that have been queued for the matchmaker shard144it is hosting. This may be accomplished by checking the lock table in the data store148to verify ownership of the correct matchmaker shard144. Assuming the respective VM instances150owns the correct matchmaker shard144for which the requests136are being queued, each matchmaker shard144is tasked with “finding a match” for the players112associated with the requests136that have been allotted to the shard144. To do this, the matchmaker shard144may implement a matchmaker algorithm that is common across all shards144for a given matchmaker. Accordingly, an individual matchmaker shard144may periodically “work on” its own queued requests136by executing a matchmaker algorithm to assign the players112associated with the queued requests136to matches of a game. Each time the matchmaker algorithm is executed, the algorithm may start and stop for a given set of queued matchmaking requests136over a period of time (e.g., a period of roughly a minute). The process of running the algorithm may include determining the players112associated with the allotted requests136that have been queued for the shard144, starting execution of the matchmaker algorithm to assign the determined players112to matches of the game, stopping execution of the matchmaker algorithm, and then waiting a short period of time (e.g., 250 milliseconds, 200 milliseconds, etc.) to allow for some settling of incoming requests136as they are queued, and then repeating these steps for the next set of queued requests136that are allotted to the matchmaker shard144.

As this matchmaking process is going on, the individual matchmaker shards144may report metrics156at a reporting interval (e.g., every 15 seconds). These reported metrics156may be stored in (e.g., written to a table in) the data store148. The data store148(e.g., object storage) may represent one or more data stores that stores various data described herein at one or more locations in the service provider network102. The data store148may track matchmaker shards144. Records for these shards144may include additional data/metadata (e.g., locks) including, without limitation, an owner (e.g., whether the shard144is not assigned to an owner, or assigned to VM instance150and/or host152), identifiers of VM instance126/150, locks owned by the instances126/150, fleet, subscriber108, etc. The data store148may further include the game builds116for developers108that include game software130. The data store148may further store data for the shard count146that indicates minimum numbers, maximum numbers, current values to which the shard count146for a given matchmaker is, or can be, set. The data store148may further store the metrics156described herein, such as a metric indicating whether one or more shards144are overloaded (e.g., the maximum wait time metric), a metric indicating whether one or more shards144are underloaded (e.g., the algorithm utilization metric), and possibly additional metrics. The data store148may further store queues in which matchmaking requests136(or tickets136) are queued for assigning players112to matches. The data store148may further store user accounts for the players112and/or subscribers108, and subscriber parameter values, which, as explained in further detail below, may dictate the thresholds and other logic used by the shard adjuster158for a given matchmaker of a given subscriber108. Much of the data can be updated at any suitable time by the service provider104and/or the subscriber108.

A VM instance150(N) designated as a “leader” VM instance150may periodically read the metrics156from the data store148and determine, using a shard adjuster158, whether to adjust the shard count146for the matchmaker represented by the current number of matchmaker shards144. The metrics156may include any suitable metrics that generally indicate, or are usable to determine, whether the matchmaker shards144are presently overloaded or presently underloaded. An example metric156that is usable for determining whether to increase the shard count146is a “maximum wait time metric.” To illustrate, an individual matchmaker shard144, say shard144(1) inFIG. 1, may be allotted at least a portion of the queued matchmaking requests136, and may be tasked with assigning players112associated with those requests136to matches of a game by executing a matchmaker algorithm. In this context, the matchmaker shard144(1) may compute, for an individual matchmaking request136, a time period between a first time at which the matchmaking request136was queued (e.g., in a queue for the shard144(1)) and a second time at which the matchmaker shard144(1) started executing the matchmaker algorithm to assign a player(s)112associated with the matchmaking request136to a match of the game (i.e., a second time when the algorithm first considers the player for a potential match). This computed time period is referred to herein as a “wait time” for the player(s)112. At the end of a reporting interval, the wait times for individual ones of a plurality of requests136can be evaluated (e.g., compared against one another) to determine a maximum wait time among the set of computed wait times, and this maximum wait time can be evaluated by the shard adjuster158to determine whether or not to increase the shard count146. In one example, a threshold wait time can be used. That is, the shard count146may be increased if the maximum wait time violates (e.g., is greater than or equal to, or is strictly greater than) the threshold wait time. The threshold wait time may be set to any suitable value that is deemed acceptable for players112to wait to be placed into matches, and this threshold wait time may be adjustable by the subscriber108for the subscriber's108own players112. In some embodiments, the shard count146may be increased if individual maximum wait times of multiple maximum wait times reported by a matchmaker shard144over a predetermined period of time (e.g., a period that spans multiple reporting intervals) violates the threshold wait time. For example, if each of the multiple maximum wait times reported over a predetermined (or threshold) period of time violates the threshold wait time, the reported maximum wait times have violated the threshold wait time for a threshold amount of time (e.g., a minute), and the shard count146may be increased. Said differently, if the maximum wait time metric is elevated above the threshold wait time for a period of time, the current number of matchmaker shards144may be considered to be “overloaded”, and, in this case, the shard count146can be increased.

In some embodiments, the individual matchmaker shards144can be tagged for up-sharding (e.g., tagging the shard144with a designated tag) based on the maximum wait time(s) reported by the shard144, and then, if and when a threshold number or percentage of shards144are tagged for up-sharding, the shard adjuster158may determine to increase the shard count146. For example, if at least 50% of the shards144have been tagged for up-sharding, the shard count146may be increased. However, in some embodiments, if any shard144is overloaded (e.g., if the maximum wait time violates the threshold wait time for more than a predetermined period of time), the shard count146may be increased. This provides a more liberal approach to increasing the shard count146, as opposed to waiting for multiple shards144to be overloaded before increasing the shard count146.

An example metric156that is usable for determining whether to decrease the shard count146is an “algorithm utilization metric.” To illustrate, an individual matchmaker shard144, say the shard144(1), with an allotment of at least a portion of the queued matchmaking requests136may execute the matchmaker algorithm for its matchmaker to assign the players112associated with those allotted requests136to matches of the game, and then the shard144(1) may wait for a short period of time (e.g., 250 milliseconds, 200 milliseconds) while additional matchmaking requests136are queued, and the shard144(1) may repeat executing the matchmaker algorithm for this next batch of queued requests136. At the end of a reporting interval, the matchmaker shard144(1) may sum multiple time periods measured from starting the execution of the matchmaker algorithm to stopping the execution of the algorithm in order to compute a total run time of the matchmaker algorithm over a the reporting interval, and this total run time can be divided by the elapsed wall-clock time measured from the start of the first run of the matchmaker algorithm for the reporting interval to the end of the last run of the matchmaker algorithm for the reporting interval. This results in a percentage of a period of time that the matchmaker shard144(1) spent executing the matchmaker algorithm to assign its allotment(s) of players112to matches of the game during a reporting interval, and this percentage (called the “algorithm utilization metric”) can be reported to the data store148so that the shard adjuster158can read the metric156and evaluate the metric to determine whether or not to decrease the shard count146. In one example, a threshold percentage (e.g., 10%) can be used. That is, the shard count146may be decreased if the algorithm utilization metric (e.g., a percentage value) fails to violate (e.g., is less than or equal to, or is strictly less than) the threshold percentage. Thus, the algorithm utilization metric is usable to determine whether the current number of matchmaker shards144are “underloaded”, and, in this case, the shard count146can be decreased. In some embodiments, if all shards144are underloaded (e.g., if all shards144report an algorithm utilization metric that fails to violate the threshold percentage), the shard count146may be decreased. This provides a more conservative approach to decreasing the shard count146, as opposed decreasing the shard count146as soon as a single shard144is underloaded (assuming multiple shards144are executing).

Increasing and/or decreasing the shard count146may be done in increments (e.g., by adjusting the shard count146one integer at a time, such as by increasing the shard count146from1to2, from2to3, from3to4, and so on). Said another way, if the shard count146is increased, one additional matchmaker shard144may be deployed to a VM instance150of a host152. In the other direction, if the shard count146is decreased, one of the currently-active matchmaker shards144may be decommissioned (e.g., execution of the instance150may be stopped, the instance150may be spun down, etc.). In some embodiments, whenever the shard count146is to be adjusted, the shard adjuster158may determine a target number (from the minimum number to the maximum number) to which the shard count146is to be adjusted. For example, if the maximum wait time metric for one or more shards144is elevated above a threshold wait time by an above threshold amount (e.g., if the maximum wait time spikes way above the threshold wait time), this may indicate that the shards144are extremely overloaded, and the shard count146may be increased to a target number that increases the shard count146by more than just a single shard144. This may allow for quickly pushing wait times down to an acceptable amount. In the opposite direction, if the algorithm utilization metric for one or more shards144is below the threshold percentage by an above threshold amount, this may indicate that the shards144are extremely underloaded, and the shard count146may be decreased to a target number that decreases the shard count146may more than just a single shard144. Again, this may allow for quickly mitigating fragmentation of the player population.

The shard adjuster158may communicate the target number, and/or an instruction to increase the shard count146, to the matchmaking service140so that a global shard count146can be adjusted. For example, by notifying the matchmaking service140, an updated current value of the shard count146can be made accessible to all of the shards144, such as by storing (e.g., writing to a table) the new shard count146in the data store148.

In the case of increasing the shard count146, existing tickets136may continue to be allocated to the shards144to which they were assigned at a time of ingestion. No re-distribution of existing tickets136to a new shard(s)144is needed because tickets136are short-lived. New tickets136, however, may start flowing to newly-deployed shards144as a result of the increased shard count146, and the distribution of tickets136amongst the shards144is likely to balance out over a short period of time. In the case of decreasing the shard count146, new tickets136naturally get allocated to the active shards144, but there may be tickets136that were allocated to a decommissioned shard(s)144and no longer have a matchmaker shard144working to find matches for the players112associated with those tickets136. Accordingly, these tickets136may be reallocated to a new active shard144. In some embodiments, this is accomplished by re-ingesting the tickets136. All queued tickets136can be analyzed to determine the shards144assigned to the tickets136, and this may be compared to the currently active shards144, or the current shard count146, for the matchmaker in question, and if the assigned shard for a ticket136has been decommissioned (e.g., is no longer valid), the ticket136may be re-ingested, such that the ticket136is reassigned to an active shard144.

After forming a match, the game-hosting service106may be tasked with placing the match. It is to be appreciated that, in some embodiments, short of forming a new match, a matchmaker shard144may identify an existing match associated with an already running process132that is hosting a game session, and if the active game session has an open player slot(s) that the player(s)112associated with the request can be placed into, the matchmaker shard144may assign the player(s)112to the open player slot(s) in the active game session, without having to form a separate match for the player(s)112. Otherwise, the game-hosting service106may have already spun up VM instances126in fleets that are allocated for various subscribers of the service provider network102, and each of the spun up VM instances126may have server processes132executing thereon in idle mode until the processes132are assigned to a game session request for hosting a corresponding game session (associated with a match) until the game session ends. Other than loading maps and other data for hosting a particular game session, these idle processes may be ready to start hosting a game session as soon as they are assigned to a game session request for a match. The game-hosting service106may be tasked with identifying a server process132executing on a VM instance126that is executing on a common server122, which will serve multiple players112in a set (e.g., 2, 10, 100, etc.) of players112that have been matched together for playing a multi-player game. Accordingly, the game-hosting service106may resolve a fleet alias by calling a routing service, load fleet data to determine a fleet of VM instances126associated with the incoming request, and query the data store148to identify available (e.g., idle) processes132that are usable to host a game session corresponding to the incoming request. It is to be appreciated that, for efficiency reasons, the game-hosting service106may “pack” server processes132as tight as possible to avoid overutilization of resources. That is, the game-hosting service106may try to minimize the number of processes132that are concurrently executing in idle mode in order to have available a sufficient number of processes132while also avoiding unnecessary computing resource consumption that could be utilized for other purposes.

After assigning a process132to a game session request, a corresponding game session is created, and the assigned process132executing on a VM instance126of the subscriber's fleet may be instructed to host (or support) the created game session for players112of the subscriber108who have been assigned to the same match of the game session. The client application(s) running on the client device(s)114associated with the game session may receive connection information (e.g., a port of a server122, an IP address, etc.), and may create a game connection(s)160by connecting directly, over the network(s)120, to the open game server122using a player session ID(s). The server process132may then accept the player ID(s) as a valid ID(s) and accepts, or rejects, the game connection(s)160. If connected, the player session is set to active and the players112begin playing the game using their client device(s)114and the game connection160that is established between the game server122that has the instance126with the process132executing to host the selection game session for the given match. Once a game session has ended (e.g., players112quit, the game ends, time out, etc.), the client application on each of the involved client devices114may disconnect from the process132, and the game-hosting service106can change the game session to terminated, upload a game session log to storage, and update a fleet utilization to indicate that the game server122has one less process executing132.

As mentioned, the game-hosting service106may deploy a group of instances126, often referred to as a “fleet” of instances126, on game servers122. In various examples, a fleet of instances126may all support the same game build116, or the same online game. Each instance126in a fleet may run multiple processes132simultaneously, depending on the hardware capability, and each server process can host at least one game session. Since a game build116can have one or multiple executable files, a developer108and/or the service provider104may configure a fleet to run multiple server processes132of each executable on each instance126.

FIG. 2A-2Billustrate schematic diagrams200A and200B, respectively, showing an example technique for partitioning a player population associated with queued matchmaking requests136amongst a plurality of matchmaker shards144, and for adjusting a shard count146based on metrics156reported by the shards144.

InFIG. 2A, the shard count146for a given matchmaker (or matchmaker configuration) is initially set to two shards. This may be an initialized number or it may have been adjusted from a previous number (e.g., previously increased from one shard to two shards). In any case, two matchmaker shards144(1) and144(2) are shown as executing on respective VM instances150(1) and150(2). In this example, the two shards144(1) and144(2) represent shards of a common matmaker configuration for a subscriber108of the service provider network102. Each VM instance150may be executing on a host152. Accordingly, the first VM instance150(1) is shown as executing on a first host152(1) and the second VM instance150(2) is shown as executing on a second host152(2). However, both instances150(1) and150(2) may execute on a single host152, in some embodiments. In addition, the VM instance150(2) is shown as being designated as a leader instance who is responsible for reading metrics156periodically from the data store148, and for adjusting, via a shard adjuster158, the shard count146based on the metrics156. AlthoughFIGS. 2A and 2Bcan represent a one-to-many correspondence of a single matchmaker (or matchmaker configuration) to multiple shards144, there may, in some embodiments, be a many-to-many correspondence of multiple matchmakers to multiple shards144for a given subscriber108. With this in mind, in some embodiments, the first shard144(1) may represent a shard144of a first matchmaker of a subscriber108, and the second shard144(2) may represent a shard144of a second, different matchmaker of the subscriber108, and the shard count146may represent a count of the total number of shards144for the multiple matchmakers (or matchmaker configurations). The former example of a one-to-many correspondence of a single matchmaker (or matchmaker configuration) to multiple shards144will be predominantly described in the examples herein.

FIG. 2Ashows incoming matchmaking requests136that are ingested into the system as players112are requesting to be placed into matches. The incoming matchmaking requests136may vary in number based on whether it is a light traffic time or a heavy traffic time.FIG. 2Ashows requests136(1)-(P), “P” being any number, have been ingested into the system. The system, in addition to determining a matchmaker shard144for which the individual requests136are to be queued, may record a time, T, at which the request136is queued for a given shard144. Accordingly, the times T1-Tprepresent the respective times at which the requests136(1)-136(P) are queued.

FIG. 2Aalso shows that a first subset of the requests136are queued in a first queue202(1) for the first matchmaker shard144(1), and that a second subset of the requests136are queued in a second queue202(2) for the second matchmaker shard144(2). These queues202may be managed for each shard144and configured to hold matchmaking requests136for assigning players112to matches by a given shard144. As discussed herein, the allocation of requests/tickets136to shards144(or queues202for shards144) may be random, or it may not be random. For example, partition keys may be used to group together requests136associated with players112who have been assigned a common partition key value, and the requests136associated with players112having a common partition key value may be allocated to a given queue202of a given shard144. As another example, a machine learning model(s) may be utilized to allocate the requests136so that the player population is distributed amongst the queues202of the matchmaker shards144in a way that is designed to improve the quality of matches.

The first matchmaker shard144(1) may execute a matchmaker algorithm to assign players112in the first queue202(1) to matches of a game, and the second matchmaker shard144(2) may execute the same matchmaker algorithm to assign players112in the second queue202(2) to matches of the game. As this is done, start and stop times of running the matchmaker algorithm may be recorded, and as more incoming matchmaking requests136are received, the requests136may be distributed into the respective queues202of the respective shards144, and the shards144may iteratively assign players112to matches of a game, as described herein.

At a reporting interval (e.g., every 15 seconds), the shards144report metrics156that may be stored in the data store148. Periodically, the leader instance150(2) reads the metrics156reported from all shards144and provides the metrics156as input to the shard adjuster158. As described herein, the metrics156may include, among other metrics, a maximum wait time metric reported by each shard144, as well as an algorithm utilization metric reported by each shard144. These metrics156may indicate, or may be used to determine, whether the shards144are overloaded or underloaded at the present time. In the example ofFIG. 2A, the shard adjuster158determines that the shards144(1) and144(2) are presently overloaded based on the metrics156, and determines to adjust204the shard count146by increasing the shard count146from two shards to three shards. For example, the maximum wait time metric156reported by the first matchmaker shard144(1) may have violated a threshold wait time for a predetermined period of time. For example, the first shard144(1) may have reported a maximum wait time metric of 5 seconds at the end of a first reporting interval, followed by a maximum wait time metric of 6 seconds at the end of a second reporting interval, followed by a maximum wait time metric of 6 seconds at the end of a third reporting interval, followed by a maximum wait time metric of 7 seconds at the end of a fourth reporting interval. In this example, the multiple maximum wait time metrics reported may violate a threshold wait time of, say, 5 seconds. In other words, for the last minute, the longest waiting player in each batch of matchmaking requests136has waited too long, and the shard adjuster158determines to increase the shard count146to three shards.

FIG. 2Billustrates the effect of this increase of the shard count146to three shards144. For example, a new, third matchmaker shard144(3) may be deployed206for execution on a third VM instance150(3) of a third host152(3), and the incoming requests136received after the increase of the shard count146may be distributed amongst the three queues202(1),202(2), and202(3) so that the three matchmaker shards144(1)-(3) can each work on their respective player allotments.

FIG. 3illustrates an example graphical user interfaces300by which a subscriber108can adjust a parameter that controls the sensitivity of adjusting a shard count146up and/or down, allowing the subscriber108to customize how (e.g., when, how fast, and/or by how much) matchmaker shards144are to be increased and/or decreased. The subscriber108may have created a subscriber profile or account with the service provider network102and may access the user interface200via a developer portal118.

The subscriber user interface300may include a message portion302that explains that a game hosting service106has the ability to reduce the time the subscriber's108players112wait to be matched with others by fragmenting the subscriber's108player population into smaller groups (e.g., distributing the player population amongst the multiple matchmaker shards144, as described herein). The message portion302may further explain a parameter that is adjustable via a control element304(e.g., a slider bar) to control an aspect of the adjustment of the shard count146that dictates the number of matchmaker shards144deployed at a given time for the subscriber's108matchmaker. In the example user interface300ofFIG. 3, the control element304may be set to the farthest left position possible (e.g., to match the subscriber's108players112as fast as possible), and the subscriber108may adjust a parameter by manipulating the control element304, such as by moving a slider to the right to indicate that the subscriber108is willing to have its players112wait a little bit longer to be placed into higher-quality matches (e.g., to be placed into matches amongst as many of their peers as possible). This “wait time” parameter may be configurable on a per-game basis so that the subscriber108can control the wait times permissible for different games. For example, for certain types of games where players112play for long hours and get very involved with the gameplay, the subscriber108may set a longer permissible wait time (e.g., by moving the control element304farther to the right on the user interface300) because players112of the game may be willing to wait longer for a quality match. By contrast, for a game that is played for a short time (perhaps by players112that do not spend a lot of time playing online games), the permissible wait time may be set to a shorter amount of time by the subscriber108(or the subscriber108may leave the control element304at the far left of the slider bar) because players112of the game may be less willing to wait to be placed into a match if they care little about with whom they play the game.

The processes described herein are illustrated as a collection of blocks in a logical flow graph, which represent a sequence of operations that can be implemented in hardware, software, or a combination thereof. In the context of software, the blocks represent computer-executable instructions that, when executed by one or more processors, perform the recited operations. Generally, computer-executable instructions include routines, programs, objects, components, data structures, and the like that perform particular functions or implement particular abstract data types. The order in which the operations are described is not intended to be construed as a limitation, and any number of the described blocks can be combined in any order and/or in parallel to implement the processes.

FIG. 4illustrates a flow diagram of an example process400for setting up a matchmaker for a subscriber108of a service provider network102. For discussion purposes, the process400is described with reference to the previous figures.

At402, a computing device(s) of a service provider network102may receive a request142, from a subscriber device110of the subscriber108, to create a new matchmaker. This create matchmaker request142may be received over a network(s)120and/or via the developer portal(s)118ofFIG. 1. As part of this create matchmaker request142, the subscriber108may configure customized matchmaking rules using a customizable rules engine that allows subscriber108to design how to match players112together based on player attributes, game modes, skill level, geographic regions, etc. These matchmaking rules are to be adhered to when matching players112together into matches. These matchmaking rules can be based on any suitable factors, such as, without limitation, skill level of the players112, amount of time the players112have been waiting to be placed into a match, geographic regions of the players112, social connections amongst the players112, player preferences for particular game attributes or features (e.g., maps, weapons, etc.), and/or other factors.

At404, the computing device(s) of a service provider network102may determine a maximum number, Max, to which the shard count146is adjustable for the newly-created matchmaker. For example, as described herein, the matchmaking software corresponding to the newly-created matchmaker may be implemented as a number of matchmaker shards144, where each shard144can potentially run on a different host152within the service provider network102. In some embodiments, the determination at block404may include consulting an override table maintained in a data store148to determine a maximum shard value, Max, from the override table for the given subscriber108, and copying the maximum shard value, Max, to the subscriber's108settings for that newly-created matchmaker to indicate the maximum number, Max, of shards144to which the shard count146is adjustable for that matchmaker.

At406, the computing device(s) of a service provider network102may initialize the shard count146at the first (initial) number of matchmaker shards144. The shard count146may be initialized at any suitable initial number from a minimum number (e.g., one shard) to the maximum number, Max, determined at block404. In some embodiments, the initial number of shards144may be set to the minimum number. For example, if the minimum number to which the shard count146is adjustable equals one shard, the shard count146may be initialized to one shard at block406. In some embodiments, the initial number of shards144may be set to a number greater than the minimum number, and this determination of the initial number may be based at least in part on a matchmaking preference set by the subscriber108, such as the matchmaking preference described with respect toFIG. 3.

At408, the computing device(s) of a service provider network102may assign the first (initial) number of matchmaker shards144to one or more VM instances150executing on respective host computers152. If the initial number of matchmaker shards144equals one shard144, the assignment at block408may be to a single VM instance150executing on a single host computer152. However, if the initial number of matchmaker shards144equals a number greater than one, the assignment at block408may be to multiple VM instances150, possibly executing on multiple host computers152. As shown by sub-block410, this assignment at block408may include a load-balancing operation(s). For example, the assignment of an individual matchmaker shard144to a VM instance150of a given host152at block408may be carried out by a “load balancing node.” In some embodiments, one of the VM instances150may be designated as this load balancing node using a system of distributed locks. Consider an example where the VM instance150(N) shown inFIG. 1is designated as the load balancing node. This designation may be made by the VM instance150(N) claiming ownership of (or acquiring) a particular lock. With this particular lock acquired, the VM instance150(N) may determine, or select, the most appropriate VM instance150and/or host152to which an individual matchmaker shard144should be assigned at block408. The selection of the most appropriate VM instance150and/or host152to host the matchmaker shard144may be based on various factors including, without limitation, a current capacity of the host152and/or the current capacity of the VM instance150, whether the VM instance150is in charge of other tasks, such as reading metrics reported by other shards144for determining whether to adjust the shard count146, etc.

At412, the assigned VM instance(s)150may obtain or acquire a lock that is associated with (or specific to) the matchmaker shard144in order to secure ownership of the matchmaker shard144, and this information may be written to a lock table maintained in the data store148. After the initial assignment, the load balancing node may be configured to, at one or more different times (e.g., periodically, in response to the occurrence of an event, etc.), analyze the factors it uses for assigning shards144to VM instances150and/or hosts152at block408, and determine whether any shards144can be redistributed/reassigned to other VM instances150and/or hosts152to provide an improved balance of shards across VM instances150and/or hosts152.

FIG. 5illustrates a flow diagram of an example process500for assigning players112to matches of a game using one or more active matchmaker shards144deployed on VM instances150of host computers152. For discussion purposes, the process500is described with reference to the previous figures.

At502, a computing device(s) of a service provider network102may execute a first (current) number of matchmaker shards144on one or more VM instances150allocated to a subscriber108of the service provider network102. For example, the VM instance(s)150on which the first number of matchmaker shards144is executing may be the instance(s)150that acquired the lock(s) for the shard(s)144at412of the process400. The first number of matchmaker shards144executed at block502may be an initial number of shards (e.g., a minimum number, such as one shard), or a number that was adjusted from the previous number of shards144. In any case, the first number of matchmaker shards144executed at block502may be one shard or multiple shards, and may include at least a first matchmaker shard144(1) executing on a first VM instance150(1) of a first host computer152(1). Furthermore, as described herein, in a scenario where the current number of shards144is greater than one, the multiple shards144may be executing on the same VM instance150, on different VM instances150of the same host152, or on VM instances150across different hosts152.