U.S. Pat. No. 11,465,051

SYSTEMS AND METHODS FOR COACHING A USER FOR GAME PLAY

Assignee: Sony Interactive Entertainment Inc

Issue Date: June 26, 2020


Abstract

A method for processing a self-coaching interface is described. The method includes identifying a gameplay event during gameplay by a user. The gameplay event is tagged as falling below a skill threshold. The method further includes generating a recording for a window of time for the gameplay event and processing game telemetry for the recording of the gameplay event. The game telemetry is used to identify a progression of interactive actions before the gameplay event for the window of time. The method includes generating overlay content in the self-coaching interface. The overlay content is applied to one or more image frames of the recording when viewed via the self-coaching interface. The overlay content appears in the one or more image frames during a playback of the recording. The overlay content provides hints for increasing a skill of the user to be above the skill threshold.

Description

DETAILED DESCRIPTION

Systems and methods for coaching a user for game play are described. It should be noted that various embodiments of the present disclosure are practiced without some or all of these specific details. In other instances, well known process operations have not been described in detail in order not to unnecessarily obscure various embodiments of the present disclosure.

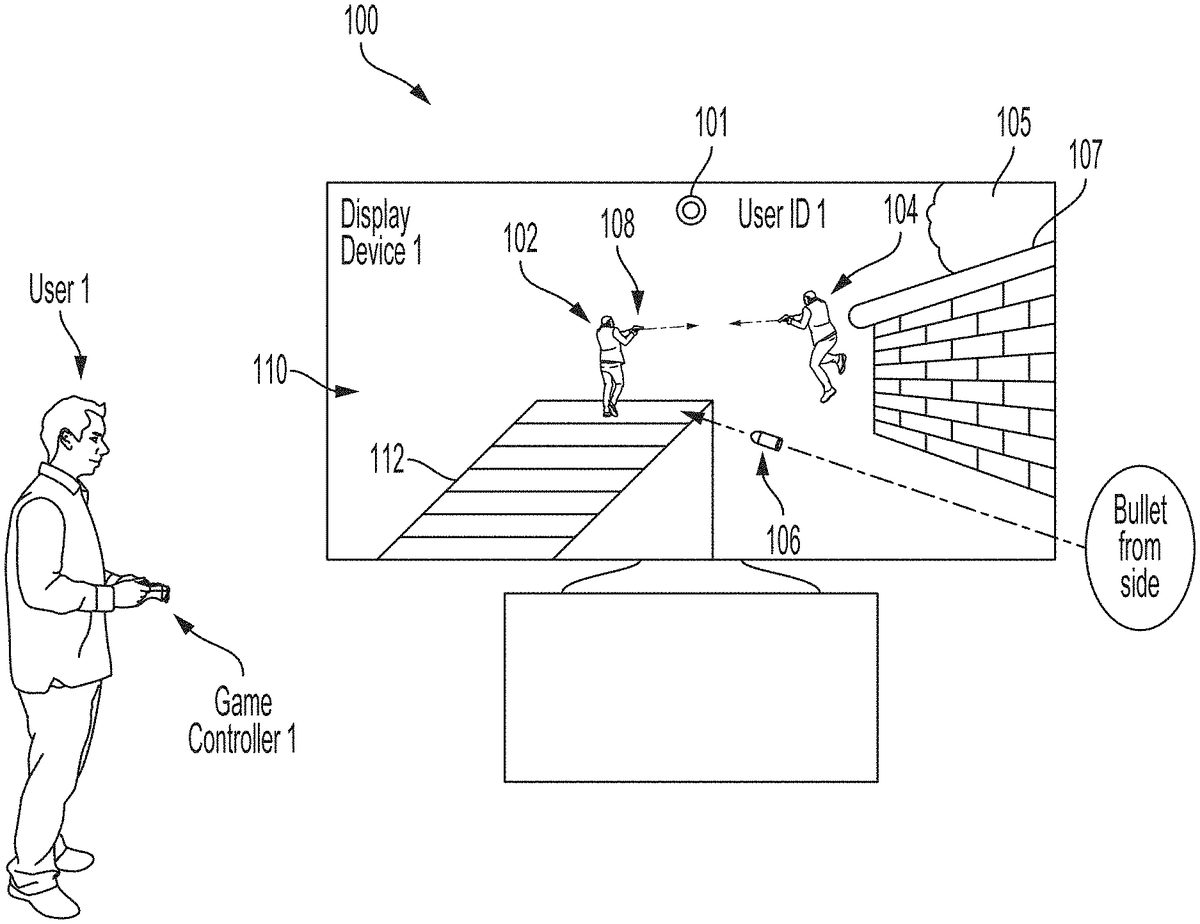

FIG. 1 is a diagram of an embodiment of a system 100 to illustrate a play of a game. The system 100 includes a display device 1 that displays a virtual scene 110 of the game. The display device 1 has a camera 101 that captures one or more images of a real-world environment in front of the camera 101. For example, the camera 101 captures one or more images within a field-of-view of the camera 101. As an example, each virtual scene is an image frame. In one embodiment, the terms image and image frame are used herein interchangeably. Examples of a display device, as used herein, include a smart television, a television, a plasma display device, a liquid crystal display (LCD) device, and a light emitting diode (LED) display device. A user 1 is playing the game, such as a video game, by using a game controller 1. The video game is, for example, a single-player game or a multi-player game. Examples of the game controller 1 include a PlayStation™ controller, an Xbox™ controller, and a Nintendo™ controller. As an example, a game controller includes multiple joysticks and multiple buttons.

The display device 1 displays multiple images of the game. The user 1 logs into a user account 1 that is assigned to the user 1 by a server system to access the game from the server system. For example, the server system authenticates a user identity (ID) and a password that the server system has assigned to a user to determine whether to allow the user to access the game.
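The authentication step can be illustrated with a minimal sketch. The source only states that the server system authenticates an assigned user ID and password; the salted PBKDF2 hashing, the `CredentialStore` class, its method names, and the iteration count below are assumptions for illustration, not the patented implementation.

```python
import hashlib
import hmac
import os
from typing import Dict, Tuple

ITERATIONS = 100_000  # illustrative PBKDF2 work factor (assumption, not from the source)


def hash_password(password: str, salt: bytes) -> bytes:
    """Derive a salted PBKDF2-SHA256 hash so plain passwords are never stored."""
    return hashlib.pbkdf2_hmac("sha256", password.encode("utf-8"), salt, ITERATIONS)


class CredentialStore:
    """Hypothetical server-side store mapping user IDs to salted password hashes."""

    def __init__(self) -> None:
        self._records: Dict[str, Tuple[bytes, bytes]] = {}

    def assign(self, user_id: str, password: str) -> None:
        """Assign a user ID and password to a user account."""
        salt = os.urandom(16)
        self._records[user_id] = (salt, hash_password(password, salt))

    def authenticate(self, user_id: str, password: str) -> bool:
        """Allow access only if the user ID exists and the password matches."""
        record = self._records.get(user_id)
        if record is None:
            return False
        salt, expected = record
        # Constant-time comparison avoids leaking how many bytes matched.
        return hmac.compare_digest(hash_password(password, salt), expected)
```

For example, after `store.assign("ID1", "secret")`, the call `store.authenticate("ID1", "secret")` grants access while any other password or an unknown user ID is refused.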

Upon accessing the game, the user 1 plays the game by using the game controller 1. During the play of the game, the virtual scene 110 is generated and a user ID 1 assigned to the user 1 is displayed in the virtual scene 110.

In the game, the user 1 uses the game controller 1 to build a virtual ramp 112 on which a virtual user 102 can climb to have a height advantage over another virtual user 104. The virtual user 102, the virtual ramp 112, and the virtual user 104 are within the virtual scene 110. Also, in the game, movement of the virtual user 102 is controlled by the user 1 with the game controller 1. The user 1 uses the game controller 1 to control a virtual gun 108 that is held by the virtual user 102. For example, the user 1 uses the game controller 1 to control the virtual gun 108 to shoot at the virtual user 104. The virtual gun 108 is a portion of the virtual scene 110. The virtual user 104 is controlled by another user, such as a user 2 or a user 3, in the multi-player game.

During the play of the game, in the virtual scene 110, a virtual bullet 106 is directed toward the virtual user 102 while the virtual user 102 is shooting at the virtual user 104 and the virtual user 104 is shooting at the virtual user 102. The virtual bullet 106 approaches the virtual user 102 from a side of the virtual user 102. The virtual scene 110 does not include the virtual user that shot the virtual bullet 106 at the virtual user 102. The virtual scene 110 includes a virtual tree 105 and a virtual wall 107. It should be noted that each of the virtual tree 105, the virtual wall 107, the virtual ramp 112, the virtual user 102, the virtual gun 108, the virtual bullet 106, and the virtual user 104 is an example of a virtual object.

In one embodiment, the server system authenticates a user ID and a password to allow a user to access multiple games for game play.

In an embodiment, instead of the display device1, a head-mounted display (HMD) is used. The HMD is worn by the user1on his/her head.

FIG. 2 is a diagram of an embodiment of the system 100 to illustrate a virtual scene 202 of the game. The virtual scene 202 is displayed on the display device 1 and follows the virtual scene 110 during the play of the game. For example, both the virtual scenes 110 and 202 are displayed during the same gaming session. The user 1 is not able to save the virtual user 102 from being hit by the virtual bullet 106 (FIG. 1). After the virtual bullet 106 hits the virtual user 102, the virtual user 102 dies in the game and is beamed by a virtual drone 204 of the virtual scene 202. Before the virtual user 102 dies, however, the user 1 manages to use the game controller 1 to control the virtual user 102 and the virtual gun 108 (FIG. 1) to kill the virtual user 104. When the virtual user 104 is killed, the virtual user 104 is beamed by another virtual drone 206 in the virtual scene 202. The user ID 1 is also displayed in the virtual scene 202.

FIG. 3 is a diagram of an embodiment of the system 100 to illustrate another virtual scene 302 of the game. The virtual scene 302 is displayed after the virtual scene 202 (FIG. 2) is displayed or before the virtual scene 110 (FIG. 1) is displayed. In the virtual scene 302, the virtual user 102 is controlled by the user 1 via the game controller 1. The virtual scene 302 is displayed on the display device 1. The virtual user 102 is controlled to use the virtual gun 108 to shoot at the virtual user 104. Also, the virtual scene 302 includes a virtual wall 304 built by the virtual user 102. For example, the user 1 uses the game controller 1 to control the virtual user 102 to build the virtual wall 304 to protect the virtual user 102 from virtual bullets being shot by the virtual user 104.

In the virtual scene 302, a virtual bullet 306 hits the virtual user 102 from behind. The user 1 cannot see, from the virtual scene 302, which virtual user shot the virtual bullet 306 at the virtual user 102. The virtual scene 302 does not include a virtual user that shot the virtual bullet 306 at the virtual user 102.

It should be noted that the virtual scene 302 is displayed on the display device 1 during a gaming session that is the same as or different from a gaming session in which the virtual scenes 110 and 202 of FIGS. 1 and 2 are displayed. For example, after the virtual user 102 dies in the virtual scene 202, a game program of the game is executed by a processor of the server system to provide a waiting time period in which the user 1 waits for the virtual user 102 to respawn, rejuvenate, or come alive. An example of a processor, as used herein, includes a microprocessor, a microcontroller, a central processing unit (CPU), an application specific integrated circuit (ASIC), or a programmable logic device (PLD). The waiting time period occurs before the game displays the virtual scene 302. In this example, the user 1 does not log out of the user account 1 between the display of the virtual scenes 202 and 302, and so the virtual scenes 110, 202, and 302 are displayed during the same gaming session. As another example, after the virtual user 102 dies in the virtual scene 202, the user 1 logs out of the user account 1. The user 1 later logs into the user account 1. After the user 1 logs into the user account 1, the virtual scene 302 is displayed. In this example, the virtual scene 302 is displayed during a gaming session that is different from the gaming session in which the virtual scenes 110 and 202 are displayed.
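A minimal sketch of the session rule in the preceding paragraph, assuming a chronological log in which each entry is a `(kind, value)` pair. The entry kinds `"scene"` and `"logout"` and the function name `group_into_sessions` are hypothetical; any other entry kind, such as a respawn waiting period, leaves the current session open.

```python
from typing import List, Tuple


def group_into_sessions(log: List[Tuple[str, str]]) -> List[List[str]]:
    """Split a chronological log into gaming sessions.

    Each entry is (kind, value). Kind "scene" carries a virtual scene label and
    kind "logout" marks the user logging out of the user account. Every other
    kind (for example a respawn waiting period) keeps the current session open.
    """
    sessions: List[List[str]] = []
    current: List[str] = []
    for kind, value in log:
        if kind == "scene":
            current.append(value)
        elif kind == "logout" and current:
            sessions.append(current)  # a logout closes the running session
            current = []
    if current:
        sessions.append(current)  # the last session may still be open
    return sessions
```

With a respawn wait between the scenes 202 and 302, all three scenes land in one session; with a logout in the same place, the scene 302 starts a second session.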

In one embodiment, a gaming session ends at the time the virtual user 102 that is controlled by the user 1 either dies or wins the gaming session. The virtual user 102 wins the gaming session when the virtual user 102 kills all other virtual users in the gaming session or survives beyond a prestored time period during the gaming session. Once the virtual user 102 dies, the virtual user 102 can be respawned and another gaming session begins, and the other gaming session ends either when the virtual user 102 dies or when it wins the other gaming session.
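The session-ending rule of this embodiment reduces to a small decision function. In the sketch below, `session_outcome` and its parameters are hypothetical, and checking for a death before checking for a win is an assumption the text does not address.

```python
from enum import Enum
from typing import Optional


class Outcome(Enum):
    DIED = "died"
    WON = "won"


def session_outcome(player_alive: bool, opponents_alive: int,
                    elapsed_s: float, survival_limit_s: float) -> Optional[Outcome]:
    """Return how the gaming session ended, or None if it is still running."""
    if not player_alive:
        return Outcome.DIED  # death ends the session (checked first by assumption)
    # A win: every other virtual user is dead, or the controlled virtual user
    # has survived beyond the prestored time period.
    if opponents_alive == 0 or elapsed_s > survival_limit_s:
        return Outcome.WON
    return None
```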

FIG. 4 is a diagram of an embodiment of the system 100 to illustrate yet another virtual scene 402 in which the virtual user 102 dies. The virtual scene 402 is displayed after the virtual scene 302 (FIG. 3) is displayed. For example, both the virtual scenes 302 and 402 are displayed during the same gaming session. In the virtual scene 402, the virtual users 102 and 104 are killed. The virtual user 102 is killed by the virtual bullet 306 (FIG. 3). The virtual scene 402 includes the virtual drones 204 and 206 used to beam in the virtual users 102 and 104.

FIG. 5A is a diagram of an embodiment of a system 500 to illustrate the recording of game event data 510 by a game recorder 502. The system 500 includes a processor system 505. An example of the processor system 505 is a server system, which includes one or more servers of a data center or of multiple data centers. Another example of the processor system 505 includes one or more processors of the server system. Yet another example of the processor system 505 includes one or more virtual machines. An example of the game recorder 502 is a digital video recorder that records both video and audio data of the game event data 510. The processor system 505 includes the game recorder 502, a game processor 506, and a coaching processor 508. The game processor 506 is coupled to the game recorder 502 and to the coaching processor 508. The coaching processor 508 is coupled to the game recorder 502.

The game processor 506 executes a game program 528 to facilitate the play of the game by the user 1 and by other users. As an example, the game program 528 includes a game engine and a rendering engine. The game engine is executed for determining positions and orientations of various virtual objects of a virtual scene. The virtual scene includes a background, which is an example of a virtual object. The rendering engine is executed for determining graphics parameters, such as color, intensity, shading, and lighting, of the virtual objects of the virtual scene. The game program 528 is stored in one or more memory devices of the processor system 505, and the one or more memory devices are coupled to the game processor 506. When the game program 528 is executed, the game event data 510 is generated and the virtual scenes 110, 202, 302, and 402 (FIGS. 1-4) are displayed on the display device 1.

The game event data 510 generated is for the user ID 1. For example, the virtual scenes 110 (FIG. 1), 202 (FIG. 2), 302 (FIG. 3), and 402 (FIG. 4) of the game event data 510 are generated upon execution of the game program 528. The game program 528 is executed when the user ID 1 and other information, such as a password or a phone number or a combination thereof, of the user 1 are authenticated by the processor system 505.

As an example, the game event data 510 includes multiple game events 1 through m leading up to a death 1 of the virtual user 102 illustrated in the virtual scene 202 (FIG. 2), where m is a positive integer. To illustrate, the game event data 510 includes data of the virtual scenes 110 (FIG. 1) and 202. To further illustrate, a game event 512 is data of the virtual scene 110 and a game event 514 is data of the virtual scene 202. As another example, the game event data 510 includes multiple game events 1 through n leading up to a death x of the virtual user 102 illustrated in the virtual scene 402 (FIG. 4), where n and x are positive integers. To illustrate, the game event data 510 includes data of the virtual scenes 302 (FIG. 3) and 402. To further illustrate, a game event 516 is data of the virtual scene 302 and a game event 518 is data of the virtual scene 402. As another example, the game event data 510 includes multiple game events 1 through o leading up to a decrease in a health level of the virtual user 102 to below a predetermined threshold, where o is a positive integer. To illustrate, a game event 520 is data of a virtual scene in which the virtual user 102 has full health and a game event 522 is data of a virtual scene in which the health of the virtual user 102 decreases to below the predetermined threshold. As yet another example, the game event data 510 includes multiple game events 1 through p leading up to a decrease in the health level of the virtual user 102 to below the predetermined threshold, where p is a positive integer. To illustrate, a game event 524 is data of a virtual scene in which the virtual user 102 has full health and a game event 526 is data of a virtual scene in which the health of the virtual user 102 decreases to below the predetermined threshold. It should be noted that the series of game events from the game event 520 to the game event 522 occurs during the same gaming session as, or a different gaming session than, the series of game events from the game event 524 to the game event 526.
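The structure of the game event data 510, in which runs of game events end in a death or in a drop in health level below the predetermined threshold, can be sketched as follows. The `GameEvent` fields and `split_sequences` are hypothetical; treating a health drop as terminal only when the health level crosses the threshold (rather than on every event spent below it) is an assumption of this sketch.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class GameEvent:
    scene_id: int   # virtual scene the event data describes, e.g. 110 or 202
    died: bool      # the controlled virtual user dies in this scene
    health: float   # health level of the controlled virtual user


def split_sequences(events: List[GameEvent],
                    health_threshold: float) -> List[List[GameEvent]]:
    """Cut chronologically ordered events into sequences, each ending with a
    death or with the health level dropping below the threshold."""
    sequences: List[List[GameEvent]] = []
    current: List[GameEvent] = []
    prev_health = float("inf")
    for event in events:
        current.append(event)
        # Terminal only on the crossing from at-or-above to below the threshold.
        dropped = event.health < health_threshold <= prev_health
        if event.died or dropped:
            sequences.append(current)
            current = []
        prev_health = event.health
    # Trailing events that have not (yet) led to a terminal event are left out.
    return sequences
```

Fed the scenes 110, 202, 302, and 402 with deaths in 202 and 402, the sketch yields two sequences, `[110, 202]` and `[302, 402]`, matching the game events 512 to 514 and 516 to 518.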

During execution of the game program 528, the game recorder 502 records the game event data 510. For example, a processor of the game recorder 502 stores or writes the game event data 510 to one or more memory devices of the game recorder 502. Examples of the memory device include a read-only memory, a random access memory, and a combination thereof. To illustrate, the memory device is a flash memory device or a redundant array of independent disks (RAID).

During or after execution of the game program 528, the game event data 510 that is recorded is provided from the game recorder 502 to the coaching processor 508. For example, the coaching processor 508 periodically sends a request to the game recorder 502 to obtain the game event data 510. In response to receiving a request from the coaching processor 508, the game recorder 502 accesses, such as reads, the game event data 510 from the one or more memory devices of the game recorder 502 and sends the game event data 510 to the coaching processor 508. As another example, the game recorder 502 periodically sends the game event data 510 to the coaching processor 508. To illustrate, without receiving any request from the coaching processor 508, the game recorder 502 accesses the game event data 510 from the one or more memory devices of the game recorder 502 and sends the game event data 510 to the coaching processor 508.
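The two delivery modes described above, in which the coaching processor 508 requests the data or the game recorder 502 sends it unprompted, correspond to a pull model and a push model. The toy `GameRecorder` class below is hypothetical; it keeps events in a list instead of memory devices and leaves out the periodic timer that would drive `handle_request` or `push`.

```python
from typing import Callable, List


class GameRecorder:
    """Toy stand-in for the game recorder 502 (hypothetical names throughout)."""

    def __init__(self) -> None:
        self._memory: List[str] = []  # stands in for the recorder's memory devices
        self._subscribers: List[Callable[[List[str]], None]] = []

    def record(self, event: str) -> None:
        """Write one recorded game event."""
        self._memory.append(event)

    def handle_request(self) -> List[str]:
        """Pull model: answer a request from the coaching processor."""
        return list(self._memory)

    def subscribe(self, callback: Callable[[List[str]], None]) -> None:
        """Register a receiver, such as the coaching processor, for pushes."""
        self._subscribers.append(callback)

    def push(self) -> None:
        """Push model: send the recorded data without any request."""
        for callback in self._subscribers:
            callback(list(self._memory))
```

Both paths hand out a copy of the recorded list, so the receiver cannot accidentally modify what the recorder has stored.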

During or after execution of the game program 528, a coaching program 530 is executed by the coaching processor 508. For example, the coaching program 530 is executed after the display of the virtual scene 202 (FIG. 2) and before the display of the virtual scene 302 (FIG. 3), or after the display of the virtual scene 402 (FIG. 4).

It should be noted that the coaching program 530 performs the functions described herein as being performed by the coaching program 530 when the coaching program 530 is executed by the processor system 505. It should further be noted that, in an embodiment, multiple game recorders are used instead of the game recorder 502. Similarly, in one embodiment, multiple game processors are used instead of the game processor 506. In one embodiment, multiple coaching processors are used instead of the coaching processor 508.

In one embodiment, the game recorder 502, the game processor 506, and the coaching processor 508 are coupled with each other via a bus.

In an embodiment, the coaching program 530 is a portion of the game program 528 and is integrated into the game program 528. For example, a computer program code of the coaching program 530 is interspersed with a computer program code of the game program 528.

In one embodiment, a game event is sometimes referred to herein as a gameplay event.

In an embodiment, each time a death of the virtual user 102 occurs, the coaching program 530 identifies, from the game event data 510, that the death has occurred. For example, the coaching program 530 determines that the virtual scene 202 includes the virtual drone 204 above the virtual user 102 to determine that the virtual user 102 has died. The coaching program 530 tags the virtual scene 202 in which the virtual user 102 dies, such as by assigning a keyword such as death or demise to it, and stores the keyword as metadata within one or more memory devices of the processor system 505. Each time the virtual user 102 dies, the coaching program 530 determines that a skill level of the user 1 is below a preset threshold, which is stored in one or more memory devices of the processor system 505. For example, each time the virtual user 102 dies in the game, the game program 528 reduces a skill level corresponding to the user ID 1 to be below the preset threshold.
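A minimal sketch of the death detection, tagging, and skill update described above, assuming each recorded scene exposes a flag telling whether a drone is above the controlled virtual user. The `Scene` type, `tag_deaths_and_update_skill`, and the fixed `death_penalty` are hypothetical. For brevity the sketch performs the skill reduction in the same function, whereas the text attributes it to the game program 528.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class Scene:
    scene_id: int
    drone_above_player: bool  # a virtual drone hovers over the controlled virtual user
    keywords: List[str] = field(default_factory=list)  # tags stored as metadata


def tag_deaths_and_update_skill(scenes: List[Scene], skill: Dict[str, float],
                                user_id: str, preset_threshold: float,
                                death_penalty: float = 1.0) -> List[int]:
    """Tag every death scene and keep the user's skill level below the threshold.

    Returns the IDs of the scenes that were tagged. death_penalty must be
    positive for the result to end up strictly below the threshold.
    """
    tagged: List[int] = []
    for scene in scenes:
        if not scene.drone_above_player:
            continue  # no death detected in this scene
        scene.keywords.append("death")
        tagged.append(scene.scene_id)
        # Clamp to the threshold first, then subtract, so that every death
        # leaves the skill level strictly below the preset threshold.
        current = skill.get(user_id, preset_threshold)
        skill[user_id] = min(current, preset_threshold) - death_penalty
    return tagged
```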

FIG. 5B is an embodiment of the game recorder 502 to illustrate recording of game event data 504 for user IDs 2 through N, where N is a positive integer. The user IDs 2 through N are assigned to other users 2 through N who are playing the game. For example, the user ID 2 is assigned to a user 2 and the user ID N is assigned to a user N.

The game event data 504 includes a series of game events 1 through q for the user ID X and another series of game events 1 through r recorded for the user ID N. For example, a game event 550 includes data for a virtual scene that is accessed upon execution of the game program 528 (FIG. 5A). The game event q includes a death q of the virtual user 102. The game program 528 is accessed after the user ID N is authenticated by the processor system 505 (FIG. 5A). As another example, a game event 552 includes data for a virtual scene that is accessed upon execution of the game program 528. The game event 552 includes a death 1 of a virtual user that is controlled by the user N via a game controller N. As another example, a game event 554 includes data for a virtual scene that is accessed upon execution of the game program 528 and a game event 556 includes data for a virtual scene that is accessed upon execution of the game program 528. The game event 556 includes a death r of the virtual user that is controlled by the user N via the game controller N, where r is a positive integer. During or after execution of the game program 528, the game event data 504 that is recorded is provided from the game recorder 502 to the coaching processor 508 (FIG. 5A) in the same manner in which the game event data 510 (FIG. 5A) is provided from the game recorder 502 to the coaching processor 508.

FIG. 6 is a diagram of an embodiment of a system 600 to illustrate a use of the coaching program 530 to generate a coaching session 608 for training the user 1. The system 600 includes the game event data 510 for the user ID 1. Also, the system 600 includes the coaching program 530, the game event data 504 for the user IDs 2-N, a client device 602, selected game event data 604, and metadata 606. Examples of a client device, as used herein, include a desktop computer, a laptop computer, a tablet, a smart phone, a combination of a hand-held remote controller and a smart television, a combination of a hand-held controller (HHC) and a game console, and a combination of a hand-held controller and a head-mounted display (HMD). A game controller is an example of the HHC. The display device 1 is an example of a display device of the client device 602.

The game event data 510 for the user ID 1 includes video data 612 and audio data 614. As an example, video data of game event data includes a position, an orientation, and the graphics parameters of multiple virtual objects displayed in a virtual scene. Also, as an example, audio data of game event data includes phonemes, vocabulary, lyrics, or phrases that are uttered by one or more virtual objects in a virtual scene. Similarly, the game event data 504 for the user IDs 2-N includes video data and audio data for each of the user IDs 2-N.

Examples of the metadata 606 include game telemetry, such as one or more game states for generating virtual objects in the game. For example, the metadata 606 includes a game state based on which a virtual object that is not displayed, output, or uttered in a virtual scene is displayed in a coaching scene. As another example, the metadata 606 is information associated with positions and orientations of one or more virtual objects in a virtual scene, such as the virtual scene 110 or 202 or 302 or 402 (FIGS. 1-4), that is displayed during execution of the game program 528. To illustrate, the metadata 606 is a position at which a virtual rectangular frame is displayed around a position of the virtual bullet 106 that is displayed in the virtual scene 110. The virtual rectangular frame is not displayed in the virtual scene 110. As another example, the metadata 606 is a position and orientation of a virtual user that is not displayed in the virtual scene 110 or 202 or 302 or 402 (FIGS. 1-4). The virtual user that is not displayed shoots the virtual bullet 106 (FIG. 1) or 306 (FIG. 3). The coaching program 530 generates the virtual user that shot the virtual user 102 at a position next to, e.g., to the right of, a position of the virtual bullet 106 to show the virtual user as shooting the virtual bullet 106 toward the virtual user 102. As yet another example, the metadata 606 is a username of the virtual user that is not displayed in the virtual scene 110 or 202 or 302 or 402 and that shoots a virtual bullet. As another example, the metadata 606 is not used to generate a camera view from the viewpoint of the virtual user that is not displayed in the virtual scene 110 or 202 or 302 or 402. For example, the metadata 606 is not used to generate an action replay of the virtual scene 110 or 202 or 302 or 402 from the viewpoint of the virtual user that is not displayed in the virtual scene 110 or 202 or 302 or 402.
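One way to picture overlay content derived from the metadata 606 is a frame around the bullet position plus a marker for the hidden shooter placed to the right of the bullet, as in the example above. The `Overlay` type, `build_overlay`, the screen coordinate convention, and the pixel sizes are assumptions for illustration only.

```python
from dataclasses import dataclass
from typing import Tuple


@dataclass
class Overlay:
    frame: Tuple[float, float, float, float]  # (left, top, right, bottom) around the bullet
    shooter_pos: Tuple[float, float]          # where the hidden shooter is drawn
    shooter_name: str                         # username taken from the metadata


def build_overlay(bullet_xy: Tuple[float, float], shooter_name: str,
                  half_size: float = 10.0, offset: float = 40.0) -> Overlay:
    """Build coaching-scene overlay content from game telemetry.

    Assumes screen coordinates with x growing to the right and y downward;
    half_size and offset are illustrative pixel values.
    """
    x, y = bullet_xy
    # Rectangular frame centred on the bullet, absent from the original scene.
    frame = (x - half_size, y - half_size, x + half_size, y + half_size)
    # Place the otherwise unseen shooter next to (here, right of) the bullet.
    shooter_pos = (x + offset, y)
    return Overlay(frame, shooter_pos, shooter_name)
```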

Examples of the coaching session 608 include a display of a coaching scene in which the user 1 cannot play the game but can learn from his/her previous play of the game, from previous play of the game by the users 2-N, or from a combination thereof. For example, the coaching program 530 is executed by the processor system 505 (FIG. 5A) to generate the coaching session 608 for the user ID 1. An example of the coaching scene is a virtual scene that is generated and rendered upon execution of the coaching program 530. When the coaching session 608 is generated, one or more coaching scenes are displayed on the client device 602. While the coaching scenes are displayed for a user ID, the game cannot be played under that user ID. For example, during a display of the coaching scenes for the user ID 1, the game program 528 (FIG. 5A) is not executed by the processor system 505 for the user ID 1. To illustrate, for the user ID 1, the processor system 505 does not render a virtual scene that is generated by executing the game program 528 during a display of one or more coaching scenes for the user ID 1. Instead, the processor system 505 executes the coaching program 530 for rendering the one or more coaching scenes for the user ID 1.

In an operation 610, the coaching program 530 receives an input signal 616 from the client device 602 to initiate the coaching session 608. For example, during a play of the game, the user 1 selects a button on the client device 602 to generate the input signal 616 for initiating the coaching session 608. To illustrate, after the virtual user 102 dies in the virtual scene 202 or 402 (FIG. 2 or 4), the user 1 selects a button of the game controller 1 (FIG. 1). Upon receiving the selection, the game controller 1 generates the input signal 616 for initiating the coaching session 608. The input signal 616 is sent from the client device 602 to the coaching program 530.

The coaching program 530 receives the input signal 616 and identifies from the input signal 616 that the coaching session 608 is to be initiated. Upon identifying that the coaching session 608 is to be initiated, the coaching program 530 generates an output signal 618 including a request 620 for one or more user IDs 622 for which the coaching program 530 is to be initiated. For example, the output signal 618 includes a query to the client device 602 for obtaining information regarding whether the coaching program 530 is to be initiated based on gameplay by the user ID 1, the user ID 2, the user ID N, or a combination of two or more of the user IDs ID1, ID2, and IDN. To illustrate, the output signal 618 includes an inquiry for obtaining the user ID 1, the user ID 2, the user ID N, or a combination of two or more thereof that is accessed for gameplay of the game.

The client device 602 receives the request 620 for the one or more user IDs 622 from the coaching program 530. For example, the client device 602 displays the request 620 for the one or more user IDs 622 on a display screen of the client device 602. To illustrate, the client device 602 displays a list of the user IDs 1-N on the display screen of the client device 602. As another example, the client device 602 outputs a sound via a headphone worn by the user 1 asking the user 1 for the one or more user IDs 622. The headphone is a part of the client device 602 or is coupled to the client device 602. As yet another example, the client device 602 outputs a sound via one or more speakers of the client device 602 asking the user 1 for the one or more user IDs 622.

The client device 602 receives the one or more user IDs 622 from the user 1 and generates an input signal 623 that includes the one or more user IDs 622. For example, the user 1 selects one or more buttons on the game controller 1 to provide the one or more user IDs 622. To illustrate, the user 1 selects one or more buttons on the game controller 1 to select one or more of the user IDs 1-N displayed on the display screen of the client device 602. The client device 602 sends the input signal 623 to the coaching program 530.

Upon receiving the one or more user IDs 622 within the input signal 623, the coaching program 530 generates an output signal 624 including a request 626 for identifying a game sequence type for which the coaching session 608 is to be generated for the one or more user IDs 622. For example, the request 626 is an inquiry regarding whether the game sequence type is of a type 1 or a type 2. An example of type 1 of a game sequence includes multiple game events that lead to a death of a virtual user and an example of type 2 of a game sequence includes multiple game events that lead to a decrease in health level of the virtual user. To illustrate, the type 1 includes the game events 512 through 514 and the game events 516 and 518, and the type 2 includes the game events 520 through 522 and 524 through 526 (FIG. 5A).
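The type 1 and type 2 distinction depends only on how a sequence ends, so it can be sketched as a classification of the final game event. Representing game events as dictionaries with `id` and `died` keys, and the names `SequenceType`, `classify`, and `sequences_of_type`, are assumptions of this sketch.

```python
from enum import Enum
from typing import Dict, List

GameEvent = Dict[str, object]  # hypothetical shape, e.g. {"id": 514, "died": True}


class SequenceType(Enum):
    TYPE_1 = 1  # game events that lead to a death of the virtual user
    TYPE_2 = 2  # game events that lead to a decrease in health level


def classify(sequence: List[GameEvent]) -> SequenceType:
    """Classify a game sequence by its final (terminal) game event."""
    return SequenceType.TYPE_1 if sequence[-1]["died"] else SequenceType.TYPE_2


def sequences_of_type(sequences: List[List[GameEvent]],
                      wanted: SequenceType) -> List[List[GameEvent]]:
    """Keep only the sequences of the requested type."""
    return [seq for seq in sequences if classify(seq) is wanted]
```

Applied to the sequences ending in the game events 514, 522, 518, and 526, type 1 selects the sequences ending in 514 and 518, and type 2 selects those ending in 522 and 526.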

The client device 602 receives the output signal 624 including the request 626 for identifying the game sequence type and outputs the request 626 to the user 1 via a user interface or another type of interface of the client device 602. For example, the client device 602 displays or renders the request 626 via a display screen of the client device 602. To illustrate, the client device 602 displays a list of game sequence types including a death of the virtual user 102 (FIGS. 1-4) and a reduction in a health level of the virtual user 102 below the predetermined threshold. As another example, the client device 602 outputs a sound via one or more speakers of the client device 602 and the sound includes the request 626 for the game sequence type. As yet another example, the client device 602 outputs a sound via the headphone to the user 1 for obtaining a response to the request 626.

In response to the request 626, the user 1 provides a game sequence type identifier 628 via a user interface or another type of interface of the client device 602. For example, the user 1 selects one or more buttons of the game controller 1 to provide the game sequence type identifier 628. To illustrate, the user 1 selects one or more buttons to select either the game sequence type of death of the virtual user 102 or the reduction in the health level of the virtual user 102. Upon receiving the selection of the game sequence type identifier 628, the client device 602 generates an input signal 630 including the game sequence type identifier 628 and sends the input signal 630 to the coaching program 530.

The coaching program 530 receives the input signal 630 and identifies the game sequence type identifier 628 from the input signal 630. Upon identifying the game sequence type identifier 628, the coaching program 530 generates an output signal 634 including a request 632 for identifying a game event of the sequence type identified by the game sequence type identifier 628. For example, to determine whether the coaching session 608 is to be generated for the game event 514 or 518 (FIG. 5A) of death of the virtual user 102, the coaching program 530 generates the request 632 for identifying the game event 514 or 518. The coaching program 530 sends the output signal 634 to the client device 602.

Upon receiving the output signal 634, the client device 602 identifies the request 632 from the output signal 634 and provides the request 632 via a user interface or another interface of the client device 602 to the user 1. For example, the client device 602 displays a list of the game events 514 and 518 on the display screen of the client device 602. To illustrate, the client device 602 displays the virtual scene 202 and the virtual scene 402 on the display screen of the client device 602 to allow the user 1 to select one of the virtual scenes 202 and 402.

The user 1 responds to the request 632 to identify the game event for which the coaching session 608 is to be generated. The game event is identified to provide a game event identifier 636. For example, the user 1 selects, via one or more buttons of the game controller 1, the virtual scene 202 to identify the game event 514 or the virtual scene 402 to identify the game event 518. In response to receiving the selection of the virtual scene 202 or 402, the client device 602 generates an input signal 638 having the game event identifier 636 and sends the input signal 638 to the coaching program 530.

Upon receiving the input signal 638, the coaching program 530 identifies the game event identifier 636 and selects the game event data 604 for which the coaching session 608 is to be generated for the one or more user IDs 622. For example, the coaching program 530 identifies the game event identifier 636 identifying the game event 514 (FIG. 5A) recorded based on the virtual scene 202 (FIG. 2) that is displayed when the game program 528 is executed for the user ID 1. An example of the selected game event data 604 includes the game event 514 or the game event 518 (FIG. 5A).
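The three request and response rounds described above (requests 620, 626, and 632) can be sketched as a single function that queries the client device through a callback. The `ask` callback, the nested dictionary of game events per user ID and sequence type, and the prompt and option strings are hypothetical stand-ins for the signals exchanged with the client device 602.

```python
from typing import Callable, Dict, List, Sequence

# Hypothetical stand-in for the client device 602: presents a request with
# options to the user and returns the option(s) the user selected.
Ask = Callable[[str, Sequence[str]], List[str]]


def select_game_event(ask: Ask,
                      events: Dict[str, Dict[str, List[str]]]) -> Dict[str, object]:
    """Run the three rounds that precede a coaching session.

    events[user_id][sequence_type] lists the terminal game event IDs recorded
    for that user ID and sequence type.
    """
    # Request 620: gameplay of which user ID(s) should the coaching use?
    user_ids = ask("Select user IDs", sorted(events))
    # Request 626: which game sequence type (death or health drop)?
    sequence_type = ask("Select game sequence type", ["death", "health_drop"])[0]
    # Request 632: which game event of that type, across the chosen user IDs?
    candidates = [event_id
                  for user_id in user_ids
                  for event_id in events[user_id][sequence_type]]
    event_id = ask("Select game event", candidates)[0]
    return {"user_ids": user_ids,
            "sequence_type": sequence_type,
            "game_event": event_id}
```

Passing the callback in keeps the selection logic independent of whether the client device asks on screen, through a headphone, or through speakers.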

Upon identifying the game event identifier 636 for which the coaching session 608 is to be generated, the processor system 505 executes the coaching program 530 to store, as the selected game event data 604, a portion of the game event data 510 that is recorded within a predetermined time interval from the time at which the game event identified by the game event identifier 636 is recorded. For example, upon identifying the game event 514 for which the coaching session 608 is to be generated, the coaching program 530 stores one or more game events that are recorded within a predetermined time interval before the time at which the game event 514 is recorded. The coaching program 530 also stores the game event 514. The one or more game events lead up to, or result in, an occurrence of the game event 514. To illustrate, the coaching program 530 deletes, from one or more memory devices of the processor system 505, one or more game events that are recorded outside the predetermined time interval before the time at which the game event 514 is recorded. The game event 514 and the game events within the predetermined time interval are stored by the coaching program 530 as the selected game event data 604. Also, in this illustration, the processor system 505 retains, within the one or more memory devices of the processor system 505, the one or more game events that are recorded within the predetermined time interval before the time at which the game event 514 is recorded, to retain the selected game event data 604. The one or more game events that are deleted lead to the one or more game events that are retained, and the one or more game events that are retained lead to the game event 514. The retained game events, together with the game event 514, are the selected game event data 604.
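
Selecting the lead-up window amounts to partitioning the recorded events by timestamp. The sketch below assumes each recorded event carries a single timestamp in seconds and that the predetermined interval is a parameter `p`; `GameEvent` and `split_lead_up` are hypothetical names. It returns the events that would be retained (the selected game event data 604) and those that would be deleted.

```python
from dataclasses import dataclass
from typing import List, Tuple

@dataclass(frozen=True)
class GameEvent:
    name: str
    t: float  # recording time in seconds (assumed unit)

def split_lead_up(
    events: List[GameEvent], target: GameEvent, p: float
) -> Tuple[List[GameEvent], List[GameEvent]]:
    """Partition events into (retained, deleted) using the window [target.t - p, target.t]."""
    start = target.t - p
    retained = [e for e in events if start <= e.t <= target.t]
    deleted = [e for e in events if not (start <= e.t <= target.t)]
    return retained, deleted


# Hypothetical timeline: the death (event 514) is recorded at t = 60 s, p = 10 s.
log = [GameEvent("collect_items", 20.0), GameEvent("fight", 45.0),
       GameEvent("event_512", 55.0), GameEvent("event_514", 60.0)]
kept, dropped = split_lead_up(log, log[-1], p=10.0)
print([e.name for e in kept])  # ['event_512', 'event_514']
```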

To explain further, during a gaming session of the game, a series of virtual scenes is generated and recorded upon execution of the game program 528. The series includes a first series, a second series, and a third series. The first series includes a first set of consecutive virtual scenes in which the virtual user 102 collects items, such as virtual weapons and virtual objects, such as bricks or metal bars, to defend the virtual user 102 from virtual bullets. The second series includes a second set of consecutive virtual scenes in which the virtual user 102 fights with other virtual users. The third series includes a third set of consecutive virtual scenes, such as the virtual scenes 110 and 202 (FIGS. 1 and 2), for which the game events 512 and 514 (FIG. 5A) are recorded. The second series is consecutive to the first series, and the third series is consecutive to the second series. The processor system 505 deletes the first and second series of virtual scenes and retains the third series of virtual scenes. The third series is the selected game event data 604.

As another illustration, the coaching program 530 stores the selected game event data 604, which includes a portion of the game event data 510, within one or more memory devices of the processor system 505. The one or more memory devices in which the selected game event data 604 is stored are different from the one or more memory devices in which the game event data 510 is recorded. In one embodiment, instead of being stored in one or more different memory devices, the selected game event data 604 is stored in the same memory device as the game event data 510, but at memory addresses different from the memory addresses at which the game event data 510 is recorded.

As yet another example, upon identifying the game event 514 for which the coaching session 608 is to be generated, the coaching program 530 stores one or more game events that are recorded within a predetermined time interval before the time at which the game event 514 is recorded, one or more game events that are recorded within a preset time interval after the time at which the game event 514 is recorded, and the game event 514 itself. Together, these game events are the selected game event data 604. For example, upon identifying the game event 514 for which the coaching session 608 is to be generated, the coaching program 530 stores one or more game events that are recorded within the preset time interval after the time at which the game event 514 is recorded. The game event 514 is followed by these one or more game events. To illustrate, the coaching program 530 deletes, from one or more memory devices of the processor system 505, one or more game events that are recorded outside the preset time interval after the time at which the game event 514 is recorded. The one or more game events that occur within the preset time interval after the time at which the game event 514 is recorded lead to the one or more game events that are deleted. Also, in this illustration, the processor system 505 retains, within the one or more memory devices of the processor system 505, the one or more game events that are recorded within the preset time interval after the time at which the game event 514 is recorded.
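
Extending the same idea to a two-sided window gives the selection used in this example: events from P time units before the game event 514 through M time units after it. A minimal sketch, assuming `(name, time)` pairs with times in seconds; the function name and the parameters `p` and `m` are hypothetical.

```python
from typing import List, Tuple

def select_two_sided(
    events: List[Tuple[str, float]], t_event: float, p: float, m: float
) -> List[Tuple[str, float]]:
    """Keep (name, time) pairs recorded in [t_event - p, t_event + m], in time order."""
    lo, hi = t_event - p, t_event + m
    return sorted((e for e in events if lo <= e[1] <= hi), key=lambda e: e[1])


log = [("fight", 45.0), ("event_512", 55.0), ("event_514", 60.0),
       ("collect_items", 63.0), ("dance", 80.0)]
print(select_two_sided(log, t_event=60.0, p=10.0, m=5.0))
# [('event_512', 55.0), ('event_514', 60.0), ('collect_items', 63.0)]
```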

To explain further, during a gaming session of the game, a series of virtual scenes is generated upon execution of the game program 528. The series includes a first series, a second series, and a third series. The first series includes a first set of consecutive virtual scenes in which the virtual user 102 collects items, such as virtual weapons and virtual objects, such as bricks or metal bars, to defend the virtual user 102 from virtual bullets after the virtual user 102 dies in the game event 514, as indicated in the virtual scene 202. The second series includes a second set of consecutive virtual scenes in which the virtual user 102 dances with other virtual users. The third series includes a third set of consecutive virtual scenes in which the virtual user 102 continues to dance with the other virtual users. The second series is consecutive to the first series, and the third series is consecutive to the second series. The processor system 505 deletes the second and third series of virtual scenes and retains the first series of virtual scenes. The first series is the selected game event data 604.

Based on the selected game event data 604, the coaching program 530 processes the metadata 606 to generate and render virtual objects, such as overlays, that can increase the game skills of the user 1 by enabling the user 1 to see one or more reasons for the occurrence of the game event identified by the game event identifier 636. For example, the coaching program 530 processes the metadata 606 to identify a position and orientation of a virtual user that shot the virtual user 102 and determines to include an overlay of that virtual user. Once the overlay is displayed, the user 1 can see a reason for the death of the virtual user 102, namely, the virtual user that shot the virtual user 102. The overlay is an example of a virtual object. Also, the coaching program 530 generates a virtual frame surrounding the shooting virtual user to highlight it. As another example, the coaching program 530 identifies, from the metadata 606, a position of the virtual bullet 106 and determines that the virtual user 102 would not have been killed by the virtual bullet 106 had the virtual user 102 jumped or been protected by a virtual wall. The coaching program 530 generates a coaching comment for display to the user 1, advising the user 1 to control the virtual user 102 to jump while shooting or to build a wall around the virtual user 102. The coaching comment enables the user 1 to protect the virtual user 102 from being hit by the virtual bullet 106. The user 1 can review the coaching comment. When the virtual user 102 is later about to be killed by another virtual bullet in a similar situation, the virtual user 102 can be protected from the other virtual bullet if the user 1 follows the coaching comment.
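
One way to recover the shooter behind a death from telemetry is to find the last recorded hit on the victim at or before the time of death. The record layout below (`ShotRecord` with shooter, target, hit flag, and time) is an assumption for illustration only; the disclosure states just that the metadata 606 identifies the position and orientation of the shooting virtual user. The identifier `user_other` is hypothetical.

```python
from dataclasses import dataclass
from typing import List, Optional

@dataclass(frozen=True)
class ShotRecord:
    shooter_id: str
    target_id: str
    hit: bool
    t: float  # time of the shot in seconds (assumed)

def find_shooter(shots: List[ShotRecord], victim_id: str, t_death: float) -> Optional[str]:
    """Return the shooter of the last hit on victim_id at or before t_death, if any."""
    hits = [s for s in shots if s.target_id == victim_id and s.hit and s.t <= t_death]
    if not hits:
        return None
    return max(hits, key=lambda s: s.t).shooter_id


shots = [
    ShotRecord("user_720", "user_102", hit=False, t=57.5),
    ShotRecord("user_other", "user_102", hit=True, t=58.0),
    ShotRecord("user_720", "user_102", hit=True, t=59.8),
]
print(find_shooter(shots, "user_102", t_death=60.0))  # user_720
```

The returned ID is what an overlay such as a highlighted shooter or a user ID label would be built from.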

The coaching program 530 generates overlays of virtual objects according to the metadata 606 for overlaying on the selected game event data 604 to generate the coaching session 608. For example, the coaching program 530 overlays a virtual frame around a virtual object in a virtual scene stored as the selected game event data 604. As another example, the coaching program 530 overlays a virtual object in the virtual scene 202; the overlaid virtual object is the one that shot the virtual bullet 106 (FIG. 1). As yet another example, the coaching program 530 adds, as an overlay, a username or a user ID of the virtual object that shot the virtual bullet 106.
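
The framing and labeling overlays can be modeled as a draw list computed from object bounding boxes. This sketch assumes each scene object has a pixel bounding box and an optional label taken from the metadata (such as a gun type or user ID); `SceneObject`, `Overlay`, `build_overlays`, the padding, and the 12-pixel label strip are all hypothetical choices.

```python
from dataclasses import dataclass
from typing import Iterable, List, Optional, Set, Tuple

Box = Tuple[int, int, int, int]  # x, y, width, height in pixels

@dataclass(frozen=True)
class SceneObject:
    obj_id: str
    box: Box
    label: Optional[str] = None  # e.g. a gun type or user ID taken from the metadata

@dataclass(frozen=True)
class Overlay:
    kind: str  # "frame" or "label"
    box: Box
    text: Optional[str] = None

def build_overlays(objects: Iterable[SceneObject], highlight: Set[str], pad: int = 4) -> List[Overlay]:
    """Emit a padded frame (and a label above it, if any) for each highlighted object."""
    draw_list: List[Overlay] = []
    for obj in objects:
        if obj.obj_id not in highlight:
            continue
        x, y, w, h = obj.box
        frame = (x - pad, y - pad, w + 2 * pad, h + 2 * pad)
        draw_list.append(Overlay("frame", frame))
        if obj.label:
            # Place the label in a 12-pixel strip directly above the frame.
            draw_list.append(Overlay("label", (frame[0], frame[1] - 12, frame[2], 12), obj.label))
    return draw_list


scene = [SceneObject("gun_108", (100, 200, 40, 10), "pistol"),
         SceneObject("tree_105", (300, 50, 60, 150))]
for ov in build_overlays(scene, {"gun_108"}):
    print(ov)
```

Keeping overlays as a separate draw list leaves the recorded scene untouched, so the same recording can be replayed with or without coaching content.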

The coaching program 530 provides the coaching session 608 to the client device 602. For example, the processor system 505 executes the coaching program 530 to generate one or more coaching scenes in which one or more virtual objects generated based on the metadata 606 are overlaid on the selected game event data 604. To illustrate, the one or more coaching scenes include an overlay of one or more virtual objects on the virtual scene 202. The one or more coaching scenes are displayed on the display screen of the client device 602.

The coaching session 608 continues until an input 640 is received from the client device 602. For example, the input 640 is generated when the user 1 selects one or more buttons on the game controller 1 to end the coaching session 608. The input 640 is sent within an input signal 642 to the coaching program 530. The coaching program 530 identifies, from the input signal 642, the input 640 to end the coaching session 608 and ends the coaching session 608. For example, the coaching program 530 ends the coaching session 608 for the user ID 1 in response to receiving the input 640. To illustrate, execution of the game program 528 for the user ID 1 continues after the coaching session 608 ends for the user ID 1.
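
The session lifetime described here, in which coaching scenes stay available until an end input arrives, can be sketched as a small input loop. The command strings `next`, `prev`, and `end` are hypothetical stand-ins for controller button presses, and the scene-index model is an assumption.

```python
from typing import Iterable, List

def run_coaching_session(num_scenes: int, commands: Iterable[str]) -> List[int]:
    """Return the coaching-scene indices displayed until an 'end' command is received."""
    if num_scenes < 1:
        raise ValueError("a coaching session needs at least one scene")
    index = 0
    displayed = [index]
    for cmd in commands:
        if cmd == "end":  # plays the role of the input 640 that ends the session
            break
        if cmd == "next":
            index = min(index + 1, num_scenes - 1)
        elif cmd == "prev":
            index = max(index - 1, 0)
        else:
            continue  # ignore inputs the session does not understand
        displayed.append(index)
    return displayed


print(run_coaching_session(3, ["next", "next", "next", "prev", "end", "next"]))
# [0, 1, 2, 2, 1]
```

Any input after `end` is not consumed by the session, mirroring gameplay resuming once the coaching session is over.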

In one embodiment, the coaching program 530 does not request the client device 602 to identify a game sequence type or a game event of the game sequence type. For example, the coaching program 530 does not send the signals 624 and 634 to the client device 602, so there is no need for the user 1 to identify a game sequence type and a game event of the game sequence type. Rather, the coaching program 530 determines that the input signal 616 for initiating the coaching session 608 is received immediately after the game event 514 or 518 of death of the virtual user 102. For example, the coaching program 530 determines that the input signal 616 is received at the end of the occurrence of the game event 514 or 518 and before the occurrence of a game event consecutive to the game event 514 or 518. Upon determining so, the coaching program 530 determines that the coaching session 608 is to be generated for the game event 514 or 518.
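
The automatic selection in this embodiment reduces to a timing check: the coaching request must arrive after a death event has ended and before the next game event begins. A minimal sketch, assuming each recorded event is an `(event_id, t_start, t_end, is_death)` tuple sorted by start time; this layout and the `later_event` label are hypothetical.

```python
from typing import List, Optional, Tuple

Event = Tuple[str, float, float, bool]  # (event_id, t_start, t_end, is_death)

def event_for_request(events: List[Event], t_request: float) -> Optional[str]:
    """Return the death event that ended before t_request with no event started since."""
    for i, (event_id, _t_start, t_end, is_death) in enumerate(events):
        next_start = events[i + 1][1] if i + 1 < len(events) else float("inf")
        if is_death and t_end <= t_request < next_start:
            return event_id
    return None


timeline = [("event_512", 0.0, 2.0, False), ("event_514", 3.0, 4.0, True),
            ("later_event", 10.0, 12.0, False)]
print(event_for_request(timeline, 5.0))   # event_514
print(event_for_request(timeline, 11.0))  # None
```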

In one embodiment, the coaching session 608 occurs during a play of the game. For example, both the coaching program 530 and the game program 528 are executed simultaneously by the processor system 505. Before the user 1 plays the game, the processor system 505 receives, from the game controller 1 of the client device 602, an input signal indicating a selection for simultaneous execution of the coaching program 530 with the game program 528. Upon receiving the input signal, the processor system 505 executes the coaching program 530. When the coaching program 530 is executed, one or more virtual objects are generated based on the metadata 606 and rendered by the coaching program 530 of the processor system 505 for display along with virtual objects of virtual scenes that are generated by execution of the game program 528. For example, one or more virtual objects generated based on the metadata 606 are displayed in the virtual scenes 110, 202, 302, and 402 during the coaching session 608.

In one embodiment, a coaching scene is referred to herein as a self-coaching interface, such as a self-coaching user interface.

FIG. 7A is a diagram of an embodiment of a coaching scene 700 for illustrating use of one or more virtual objects with the virtual scene 110 (FIG. 1). The one or more virtual objects are generated based on the metadata 606 (FIG. 6). The coaching scene 700 includes the virtual user 102, the virtual gun 108, the virtual bullet 106, and the virtual ramp 112. The coaching scene 700 is generated upon execution of the coaching program 530 (FIG. 6) and is displayed on the display device 1.

The coaching scene 700 includes a virtual comment 702, a virtual frame 704, another virtual frame 706, a gun ID 708, a virtual frame 710, a bullet ID 712, a gun ID 714, a virtual frame 716, a virtual gun 718, a virtual user 720, a virtual frame 722, a virtual user ID 724, a virtual game controller 726, and a virtual button ID 728. The coaching scene 700 excludes the virtual tree 105 and the virtual wall 107 so as to not clutter the coaching scene 700 with virtual objects unnecessary for coaching the user 1. The virtual comment 702, the virtual frame 704, the virtual frame 706, the gun ID 708, the virtual frame 710, the bullet ID 712, the gun ID 714, the virtual frame 716, the virtual gun 718, the virtual user 720, the virtual frame 722, the virtual user ID 724, the virtual game controller 726, and the virtual button ID 728 are examples of one or more virtual objects, such as overlays, generated based on the metadata 606. It should be noted that the virtual user 720 is not in the virtual scene 110 (FIG. 1A).
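
Leaving out scenery such as the virtual tree 105 and the virtual wall 107 can be expressed as a filter that keeps objects involved in the shots leading to the death, plus any objects the coach always wants shown. The shot tuple layout, the `always_keep` parameter, and the identifiers are hypothetical; the disclosure does not say how unnecessary objects are determined.

```python
from typing import Iterable, List, Set, Tuple

Shot = Tuple[str, str, str, str]  # (shooter_id, target_id, gun_id, bullet_id)

def involved_ids(shots: Iterable[Shot]) -> Set[str]:
    """Collect every object that takes part in at least one recorded shot."""
    ids: Set[str] = set()
    for shooter, target, gun, bullet in shots:
        ids.update((shooter, target, gun, bullet))
    return ids

def declutter(scene_object_ids: Iterable[str], shots: Iterable[Shot],
              always_keep: Iterable[str] = ()) -> List[str]:
    """Keep objects involved in the shots or listed in always_keep, preserving scene order."""
    keep = involved_ids(shots) | set(always_keep)
    return [obj for obj in scene_object_ids if obj in keep]


scene_ids = ["user_102", "gun_108", "bullet_106", "ramp_112", "tree_105", "wall_107"]
shots = [("user_720", "user_102", "gun_718", "bullet_106")]
print(declutter(scene_ids, shots, always_keep={"gun_108", "ramp_112"}))
# ['user_102', 'gun_108', 'bullet_106', 'ramp_112']
```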

The virtual comment 702 is a coaching comment, “Jump Now! OR Start building a wall now!”, for the user 1 to avoid being hit by the virtual bullet 106. The virtual frame 704 extends around the virtual user 102 to highlight the virtual user 102. Similarly, the virtual frame 706 extends around the virtual gun 108. The gun ID 708 identifies a type of the virtual gun 108 to highlight the virtual gun 108. For example, the gun ID 708 identifies whether the virtual gun 108 is a shotgun, a pistol, a semiautomatic gun, a double-barrel gun, or an AK-47. The virtual frame 710 extends around the virtual bullet 106 to highlight the virtual bullet 106. The bullet ID 712 identifies a type of the virtual bullet 106. Examples of types of a virtual bullet include a lead round nose bullet, a semi-jacketed bullet, and a full metal jacket bullet.

The virtual frame 716 extends around the virtual gun 718 that is held by the virtual user 720. The gun ID 714 identifies a type of the virtual gun 718. The virtual frame 722 extends around the virtual user 720 to highlight the virtual user 720. The virtual user ID 724 is a user ID that is assigned to another user who controls the virtual user 720 that shot the virtual user 102 with the virtual bullet 106. The user ID 724 is assigned by the processor system 505 (FIG. 5A). The virtual game controller 726 is an image of the game controller 1 that is used or held by the user 1 playing the game and controlling the virtual user 102. The virtual button ID 728 identifies a button that is selected by the user 1 during the virtual scene 110 (FIG. 1) leading to the death of the virtual user 102. By providing the metadata 606 in the coaching scene 700, the coaching program 530 facilitates self-coaching of the user 1 to increase the skill level of the user 1.

The coaching scene 700 is displayed along with, or simultaneously with, a timeline scrubber 730, which is a time bar, a time scale, a time axis, or a timeline. The coaching program 530 generates and renders the timeline scrubber 730, which is a portion of the coaching session 608. The user 1 uses the game controller 1 to select one of many segments, such as segments 732, 734, and 736, of the timeline scrubber 730 to access the coaching scene 700 from the processor system 505 and view the coaching scene 700. For example, when the segment 732 is selected, the coaching scene 700 is displayed on the display device 1.

The timeline scrubber 730 includes segments for displaying the selected game event data 604 on the display device 1. As an example, each segment of the timeline scrubber 730 can be selected by the user 1 via the game controller 1 to generate an input signal. When the input signals are received, the coaching program 530 plays back the virtual scenes leading up to a game event, the virtual scene of the game event, and the virtual scenes occurring after the game event, with overlay content superimposed on one or more of the virtual scenes. As another example, the timeline scrubber 730 includes a set 738 of segments that can be selected by the user 1 via the game controller 1 to display coaching scenes in which one or more virtual objects generated based on the metadata 606 are overlaid on portions of virtual scenes that are recorded during the predetermined time period before the time at which a virtual scene of death of the virtual user 102, such as the virtual scene 202, is recorded. The predetermined time period extends from −P time units to 0 time units, where the time units can be seconds or minutes. Moreover, the timeline scrubber 730 includes a segment 736 that can be selected by the user 1 via the game controller 1 to view a coaching scene in which one or more virtual objects generated based on the metadata 606 are overlaid on a portion of a virtual scene, such as the virtual scene 202, that is recorded at the time of death of the virtual user 102. When the segment 736 is selected by the user 1 via the game controller 1, the coaching program 530 renders, for display on the display device 1, the coaching scene in which one or more virtual objects generated based on the metadata 606 are overlaid on one or more of the virtual objects in the virtual scene 202 in which the virtual user 102 died. The death of the virtual user 102 is recorded at 0 time units.

The timeline scrubber 730 includes a set 740 of segments that can be selected by the user 1 via the game controller 1 to display coaching scenes in which one or more virtual objects generated based on the metadata 606 are overlaid on portions of virtual scenes that are recorded during the preset time period after the time at which a virtual scene of death of the virtual user 102, such as the virtual scene 202, is recorded. The preset time period extends from 0 time units to M time units, where the time units can be seconds or minutes. The window of time therefore extends from −P to M time units.
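
The timeline scrubber 730 maps its segments onto the window from −P to M, with the recorded death at 0. The sketch below assumes segments of equal duration; the class name, the `step` parameter, and the "before/event/after" labels (standing in for the set 738, the segment 736, and the set 740) are illustrative assumptions.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class TimelineScrubber:
    p: float     # window start is -p time units before the event
    m: float     # window end is +m time units after the event
    step: float  # time covered by one segment (hypothetical parameter)

    @property
    def num_segments(self) -> int:
        return int(round((self.p + self.m) / self.step)) + 1

    def offset(self, segment: int) -> float:
        """Time of a segment relative to the event, which is recorded at 0."""
        if not 0 <= segment < self.num_segments:
            raise IndexError(f"segment {segment} outside the scrubber")
        return -self.p + segment * self.step

    def region(self, segment: int) -> str:
        """Classify a segment as before the event, at the event, or after it."""
        t = self.offset(segment)
        if abs(t) < 1e-9:
            return "event"
        return "before" if t < 0 else "after"


scrubber = TimelineScrubber(p=3.0, m=2.0, step=1.0)
print([scrubber.region(i) for i in range(scrubber.num_segments)])
# ['before', 'before', 'before', 'event', 'after', 'after']
```

The small tolerance in `region` guards against floating-point error when `step` does not divide P exactly in binary.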

In one embodiment, the coaching scene 700 excludes one or more of the virtual frame 704, the virtual frame 706, and the gun ID 708. For example, the coaching program 530 determines that one or more of the virtual frame 704, the virtual frame 706, and the gun ID 708 are not needed to increase the skill level of the user 1, and therefore does not generate one or more of the virtual frame 704, the virtual frame 706, and the gun ID 708.

In an embodiment, instead of extending a frame around a virtual object in a coaching scene, the coaching program 530 highlights the virtual object by rendering it in a substantially different color, intensity, or shade compared to the colors, intensities, or shades of other virtual objects in the coaching scene. For example, instead of extending the frame 710 around the virtual bullet 106 and the frame 722 around the virtual user 720, the coaching program 530 renders the virtual bullet 106 and the virtual user 720 with substantially greater intensities than the intensities of the virtual user 102 and the virtual gun 108. Again, highlighting the virtual bullet 106 and the virtual user 720 in comparison with the virtual user 102 and the virtual gun 108 facilitates self-coaching of the user 1.
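
Intensity-based highlighting can be sketched as scaling each object's base intensity: brightening the highlighted objects and dimming the rest. The per-object 0-255 intensity model and the `gain`/`dim` factors are assumptions; a real renderer would apply this per pixel or per material.

```python
from typing import Dict, Set

def emphasize(intensity: Dict[str, int], highlight: Set[str],
              gain: float = 2.0, dim: float = 0.5) -> Dict[str, int]:
    """Brighten highlighted objects and dim the rest, clamping to the 0-255 range."""
    out: Dict[str, int] = {}
    for obj, value in intensity.items():
        factor = gain if obj in highlight else dim
        out[obj] = max(0, min(255, round(value * factor)))
    return out


base = {"bullet_106": 100, "user_720": 150, "user_102": 120, "gun_108": 90}
print(emphasize(base, {"bullet_106", "user_720"}))
# {'bullet_106': 200, 'user_720': 255, 'user_102': 60, 'gun_108': 45}
```

Dimming the non-highlighted objects as well as brightening the highlighted ones widens the contrast, which matters when a highlighted object is already near full intensity and gets clamped.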

In an embodiment, the coaching scene 700 includes the virtual tree 105 and the virtual wall 107.

In one embodiment, the coaching program 530 changes the time period between the time units −P and M based on a number of game events that lead up to an end game event, such as death or a decrease in health level. For example, the coaching program 530 determines that a first length of time for which the virtual user 102 engages in a first battle sequence that leads to a first death of the virtual user 102 is greater than a second length of time for which the virtual user 102 engages in a second battle sequence that leads to a second death of the virtual user 102. Each of the first and second battle sequences is fought with one or more virtual users. The coaching program 530 stores the selected game event data 604 that leads up to the first death for the first length of time, and stores the selected game event data 604 that leads up to the second death for the second length of time. In this manner, the entire first and second battle sequences are captured and stored by the coaching program 530.
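
The variable window can be sketched by starting each clip at the beginning of the battle sequence that ends in the death, so that a longer and a shorter battle are each captured whole. Detecting where a battle sequence starts is assumed to happen elsewhere; the function name and the fallback `default_p` are hypothetical.

```python
from typing import Iterable, Tuple

def battle_window(battle_starts: Iterable[float], t_death: float,
                  default_p: float) -> Tuple[float, float]:
    """Start the clip at the latest battle start at or before the death, else use default_p."""
    starts = [t for t in battle_starts if t <= t_death]
    start = max(starts) if starts else t_death - default_p
    return start, t_death


first = battle_window([10.0, 50.0], t_death=45.0, default_p=15.0)   # (10.0, 45.0)
second = battle_window([10.0, 50.0], t_death=70.0, default_p=15.0)  # (50.0, 70.0)
print(first[1] - first[0], second[1] - second[0])  # 35.0 20.0
```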

FIG. 7B is a diagram of an embodiment of a coaching scene 750 that is rendered and displayed on the display device 1. The coaching scene 750 is rendered by the coaching program 530 in response to an input signal indicating a selection of a segment 752 on the timeline scrubber 730. The segment 752 is selected by the user 1 using one or more buttons on the game controller 1.

The segment 752 is consecutive to the segment 734, and the segment 734 is consecutive to the segment 732. For example, the segment 752 includes one or more virtual objects from a virtual scene that is recorded between the time at which the virtual scene 110 (FIG. 1) is recorded and the time at which the virtual scene 202 (FIG. 2) is recorded. Each virtual scene corresponding to a segment of the timeline scrubber 730 is recorded after being displayed on the display device 1.

The coaching scene 750 includes a virtual comment 754, a virtual frame 756, a virtual bullet 758, and a bullet ID 760. The virtual comment 754 is generated by the coaching program 530 (FIG. 6) to coach the user 1 to protect the virtual user 102 from being shot by the virtual bullet 758. For example, the virtual comment 754 includes “Jump Now!”. The virtual frame 756 extends around, or surrounds, the virtual bullet 758 that is shot by the virtual user 720 after the virtual bullet 106 (FIG. 7A) is shot. The virtual frame 756 highlights the virtual bullet 758. The bullet ID 760 identifies a type of the virtual bullet 758. The coaching scene 750 excludes the virtual tree 105 and the virtual wall 107 so as to not clutter the coaching scene 750 with virtual objects unnecessary for coaching the user 1.

It should be noted that the coaching scene 750 illustrates a progression of virtual objects compared to the virtual objects in the coaching scene 700 of FIG. 7A. For example, the coaching scene 750 illustrates the virtual frame 756 that surrounds the virtual bullet 758 shot from the virtual gun 718. The virtual frame 756 is generated after the virtual frame 710 that surrounds the virtual bullet 106 is generated, and the virtual bullet 758 is shot after the virtual bullet 106 is shot. Also, in the coaching scene 750, the virtual comment 754 indicates to the user 1 to control the virtual user 102 to jump, without an option for building a virtual wall, because the coaching program 530 determines that, at the time the virtual bullet 758 is shot, it is too late for the user 1 to build the virtual wall.
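
The change from the comment 702 to the comment 754 can be sketched as a comparison between the bullet's time to impact and how long each defensive action takes. The action durations and the straight-line distance/speed model are assumptions for illustration; the disclosure does not say how the coaching program 530 decides that it is too late to build a wall.

```python
from typing import Optional

def coaching_comment(distance: float, bullet_speed: float,
                     wall_build_time: float, jump_time: float) -> Optional[str]:
    """Choose a hint from the time left before the bullet reaches the virtual user."""
    if bullet_speed <= 0:
        return None  # the bullet is not approaching
    time_to_impact = distance / bullet_speed
    if time_to_impact >= wall_build_time:
        return "Jump Now! OR Start building a wall now!"
    if time_to_impact >= jump_time:
        return "Jump Now!"
    return None  # too late for either action


print(coaching_comment(30.0, 10.0, wall_build_time=1.5, jump_time=0.3))
print(coaching_comment(10.0, 10.0, wall_build_time=1.5, jump_time=0.3))
```

With these assumed numbers, the first call (3 s to impact) offers both options and the second (1 s) offers only the jump, matching the progression from FIG. 7A to FIG. 7B.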

In one embodiment, when a segment 753 next to the segment 752 is selected by the user 1 via the game controller 1, the processor system 505 processes the metadata 606 to display a position of the virtual bullet 758 that is closer to the virtual user 102 than the position of the virtual bullet 758 illustrated in the coaching scene 750. The segment 753 corresponds to a coaching scene that includes one or more virtual objects from a first virtual scene, which is consecutive in time to a second virtual scene recorded by the game recorder 502. The positions of the virtual bullet 758 in the first and second virtual scenes define the movement of the virtual bullet 758. The coaching scene 750 includes virtual objects from a playback of the second virtual scene.
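
Because the bullet's position is recorded in consecutive virtual scenes, its movement can be estimated by finite differences. This sketch assumes 2-D positions and a known time between recordings; the constant-velocity extrapolation is an added simplification, not something the disclosure specifies.

```python
from typing import Sequence, Tuple

Vec = Tuple[float, ...]

def velocity(prev: Sequence[float], curr: Sequence[float], dt: float) -> Vec:
    """Per-axis velocity between two recorded positions taken dt seconds apart."""
    if dt <= 0:
        raise ValueError("dt must be positive")
    return tuple((c - p) / dt for p, c in zip(prev, curr))

def extrapolate(curr: Sequence[float], vel: Sequence[float], dt: float) -> Vec:
    """Predicted position dt seconds later, assuming constant velocity."""
    return tuple(c + v * dt for c, v in zip(curr, vel))


v = velocity((0.0, 0.0), (3.0, 4.0), dt=0.5)
print(v)                                   # (6.0, 8.0)
print(extrapolate((3.0, 4.0), v, dt=0.5))  # (6.0, 8.0)
```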

In an embodiment, the coaching scene 750 includes the virtual tree 105 and the virtual wall 107.

In one embodiment, the virtual comments 702 and 754 are examples of hints to the user 1 to increase the skill level to be above the preset threshold. For example, during a next gaming session, when the user 1 controls the virtual user 102 to jump or to build a virtual wall on at least one side of the virtual user 102, the chances of the virtual user 102 being hit by a virtual bullet from a side of, or from behind, the virtual user 102 are reduced. The virtual user 102 can stay alive longer during the gaming session, and the game program 528 increases the skill level of the user 1 to be above the preset threshold. Each hint provides a reason for a death of the virtual user 102. For example, the virtual user 102 died during a previous gaming session because the virtual user 102 did not build a virtual wall or did not jump during the previous gaming session.

FIG. 8A is a diagram of an embodiment to illustrate a simultaneous display of the coaching scene 700 and another coaching scene 800 on the display device 1. In addition to the coaching scene 700, the coaching scene 800 is rendered by the coaching program 530 (FIG. 6) on the display device 1. For example, the coaching session 608 (FIG. 6) includes the coaching scenes 700 and 800. As another example, the coaching program 530 receives an input signal from the client device 602. The input signal indicates a selection of the game event identifier 636 (FIG. 6) of game event data recorded from the virtual scene 110 (FIG. 1), based on which the coaching scene 700 is generated, and a selection of another game event identifier of game event data recorded from the virtual scene 302 (FIG. 3), based on which the coaching scene 800 is generated. In this example, when a selection of the segment 732 on the timeline scrubber 730 is received from the client device 602, both the coaching scenes 700 and 800 are generated and rendered by the coaching program 530. Also in this example, the timeline scrubber 730 is generated by the coaching program 530 and rendered along with the coaching scenes 700 and 800.

The coaching scene 800 is generated based on the virtual scene 302 (FIG. 3). For example, the virtual scene 302 is recorded, and one or more virtual objects from the virtual scene 302 are included in the coaching scene 800. To illustrate, the virtual user 102, the virtual bullet 306, and the virtual gun 108 are included in the coaching scene 800. As another example, the coaching scene 800 is generated based on the virtual scene 302 that is displayed and recorded at a time corresponding to the segment 732. To illustrate, the virtual scene 302 is recorded a number of time segments prior to a time of the game event q of the virtual user 102. The virtual scene 302 is recorded in the same manner as the virtual scene 110 from which the coaching scene 700 is generated.

The coaching scene 800 includes a user ID 802, a virtual frame 804, a virtual user 806, a virtual gun 808, a virtual frame 810, a gun ID 812, the virtual bullet 306, a bullet ID 816, and a virtual frame 818. The user ID 802, the virtual frame 804, the virtual user 806, the virtual gun 808, the virtual frame 810, the gun ID 812, the bullet ID 816, and the virtual frame 818 are examples of one or more overlays of virtual objects generated based on the metadata 606 (FIG. 6).

The virtual frame 804 extends around the virtual user 806 to highlight the virtual user 806 that is a reason for the death x of the virtual user 102. The virtual user 806 is controlled by another user via a game controller. The virtual frame 810 extends around the virtual gun 808 held by the virtual user 806 to highlight the virtual gun 808. The gun ID 812 identifies a type of the virtual gun 808. The user ID 802 is assigned to the other user that controls the virtual user 806. The user ID 802 is assigned by the processor system 505 (FIG. 5A).

The virtual frame 818 extends around the virtual bullet 306 to highlight the virtual bullet 306 that is directed towards the virtual user 102 by the virtual user 806. The bullet ID 816 identifies the virtual bullet 306. Also, the coaching scene 800 includes the virtual comment 702 to facilitate coaching of the user 1.

In one embodiment, a virtual comment displayed within the coaching scene 800 is different from the virtual comment 702. For example, the virtual comment displayed within the coaching scene 800 is “Jump!” or “Build a wall” instead of “Jump Now! OR Start building a wall now!”.

In an embodiment, one or more virtual objects in the coaching scene 800 are not highlighted by the coaching program 530. For example, the coaching program 530 determines that there is no need for highlighting the virtual user 102 and the virtual gun 108 to increase the skill level of the user 1, and determines not to generate the virtual frame 704 and the virtual frame 706.

FIG. 8B is a diagram of an embodiment of the display device 1 to illustrate a simultaneous rendering and display of the coaching scene 700 associated with the user ID 1 and another coaching scene 850 associated with the user ID N. The coaching scene 850 is generated by the coaching program 530 (FIG. 5A) for the user ID N. For example, when the input signal 623 (FIG. 6) includes the user ID 1 identifying the user 1 and the user ID N identifying another user who is assigned the user ID N, the coaching program 530 accesses the game event data 504 recorded for the user ID N from one or more memory devices of the processor system 505 and stores a portion of the game event data 504 as a portion of the selected game event data 604 in one or more memory devices of the processor system 505. To illustrate, the one or more memory devices in which the game event data 504 is recorded are different from the one or more memory devices in which the selected game event data 604 is recorded. The coaching program 530 overlays the one or more virtual objects generated based on the metadata 606 for the user ID N on the portion of the selected game event data 604 for the user ID N to generate the coaching scene 850 for the user ID N. The game event data 504 is generated during game play of the game by the other user having the user ID N.

The coaching scene 850 includes a virtual tree 852, a virtual user 854, a virtual gun 856, a gun ID 858, a user ID 860, a virtual frame 862, another virtual frame 864, a virtual user 866, a virtual gun 868, a virtual frame 870, another virtual frame 872, a gun ID 874, a virtual bullet 876, a virtual frame 878, a gun ID 880, a virtual user 882, a virtual frame 884, a virtual gun 886, a virtual frame 888, a virtual wall 890, another virtual wall 892, a virtual health 894, a user ID 897, and a virtual ramp 899. The coaching scene 850 further includes a virtual controller 896 and a virtual button 898. The user ID 860, the virtual frame 862, the virtual frame 864, the virtual user 854, the virtual gun 856, the gun ID 858, the virtual frame 872, the gun ID 874, the virtual frame 888, the virtual frame 884, the virtual frame 870, the virtual controller 896, and the virtual button 898 are examples of one or more virtual objects generated based on the metadata 606 for overlay.

The coaching scene 850 is generated for the user ID N based on a virtual scene that is generated upon execution of the game program 528 (FIG. 5A) and that leads up to a death of the virtual user 882. For example, the virtual scene from which the coaching scene 850 is generated includes one or more of the virtual objects displayed in the coaching scene 850, but excludes the one or more virtual objects generated based on the metadata 606 for display in the coaching scene 850. To illustrate, the virtual scene from which the coaching scene 850 is generated includes the virtual bullet 876, the virtual gun 886, the virtual user 882, the virtual ramp 899, the virtual walls 890 and 892, the virtual tree 852, the user ID 897, the virtual user 866, and the virtual gun 868. The virtual scene for the user ID N is generated after the other user accesses the game program 528. The game program 528 is accessed by the other user when the user ID N is authenticated by the processor system 505.

The virtual frame 862 surrounds the virtual user 854, and the virtual frame 864 surrounds the virtual gun 856. The gun ID 858 identifies a type of the virtual gun 856. The user ID 860 is assigned to a user that controls the virtual user 854 via a game controller or another type of controller. The virtual frame 878 extends around the virtual bullet 876 that is shot from the virtual gun 856 towards the virtual user 882.

The virtual health 894 is a health of the virtual user 882. The virtual frame 884 extends around the virtual user 882, and the virtual frame 888 extends around the virtual gun 886. The virtual user 882 is standing on the virtual ramp 899 and is surrounded on two sides by the virtual walls 890 and 892. The gun ID 880 identifies a type of the virtual gun 886. Also, the gun ID 874 identifies a type of the virtual gun 868. The virtual frame 872 surrounds the virtual gun 868, and the virtual frame 870 surrounds the virtual user 866. The user ID 897 identifies a user who is controlling the virtual user 866 via a game controller or another type of controller.
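The frames and ID labels described above are placed relative to objects already present in the recorded scene. A minimal sketch of that placement follows, assuming each recorded object has a screen-space bounding box; the `Box` and `Overlay` types, the padding and gap values, and the "label above the object" layout are all hypothetical and are not taken from the description.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Box:
    x: float  # left edge in screen pixels (hypothetical coordinate system)
    y: float  # top edge in screen pixels
    w: float
    h: float


@dataclass(frozen=True)
class Overlay:
    kind: str    # "frame" or "label"
    target: str  # name of the highlighted virtual object
    box: Box
    text: str = ""


def frame_around(target: str, box: Box, pad: float = 4.0) -> Overlay:
    """Return a virtual frame that surrounds `box` with `pad` pixels on every side."""
    return Overlay("frame", target, Box(box.x - pad, box.y - pad, box.w + 2 * pad, box.h + 2 * pad))


def label_above(target: str, box: Box, text: str, gap: float = 2.0, height: float = 12.0) -> Overlay:
    """Return an ID label (e.g. a gun ID or user ID) placed just above `box`."""
    return Overlay("label", target, Box(box.x, box.y - gap - height, box.w, height), text)


def build_overlays(scene: dict[str, Box], highlights: dict[str, str]) -> list[Overlay]:
    """Create a frame for each highlighted object, plus a label when an ID string is given.

    `scene` maps object names to boxes taken from the recorded image frame;
    `highlights` maps object names to an ID string ("" means frame only).
    """
    overlays = []
    for name, ident in highlights.items():
        box = scene[name]
        overlays.append(frame_around(name, box))
        if ident:
            overlays.append(label_above(name, box, ident))
    return overlays
```

For example, highlighting the virtual user 882 with a bare frame and the virtual gun 886 with a frame plus "gun ID 880" yields three overlays. Keeping overlays as separate objects, rather than drawing into the recorded pixels, mirrors the description's distinction between the recorded virtual scene and the content generated from the metadata 606.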

The virtual controller 896 is an image of a controller, such as a keyboard, that is used by the other user to control the virtual user 882. The virtual button 898 identifies a button on the controller represented by the virtual controller 896. The button is selected by the other user at the time corresponding to the segment 732 during the play of the game.

The timeline scrubber 730 is displayed alongside, and simultaneously with, the coaching scenes 700 and 850. For example, the coaching program 530 (FIG. 6) renders the timeline scrubber 730 for display together with the coaching scenes 700 and 850. When a selection of the segment 732 is received from the game controller 1 (FIG. 1) of the client device 602 (FIG. 6), the coaching program 530 renders the coaching scene 850, which is generated based on a virtual scene recorded at a time corresponding to the segment 732. The segment 736 indicates the time 0, at which the virtual user 882 dies during the play of the game by the other user who controls the virtual user 882.
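Because the segment 736 marks time 0 at the death, earlier segments can be treated as negative offsets from that moment. The sketch below maps a selected segment to a frame index in the recording; the frame rate, the one-second segment length, and the function name are assumptions for illustration only.

```python
def segment_to_frame(segment_offset: int, death_frame: int,
                     fps: int = 30, seconds_per_segment: float = 1.0) -> int:
    """Map a timeline-scrubber segment to a frame index in the recording.

    `segment_offset` is 0 for the segment at the death (time 0) and negative
    for earlier segments, e.g. -3 for three segments before the death.
    `fps` and `seconds_per_segment` are hypothetical defaults.
    """
    if segment_offset > 0:
        raise ValueError("the recording window ends at the death event")
    frame = death_frame + round(segment_offset * seconds_per_segment * fps)
    # Clamp to the first frame so far-back selections stay inside the window.
    return max(frame, 0)
```

With the death at frame 299, selecting the segment three seconds earlier returns frame 209, and any selection earlier than the start of the window returns frame 0.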

The virtual user 882 is shooting at the virtual user 866. During the shootout, the virtual user 854 is shooting at the virtual user 882, and the virtual wall 892 protects the virtual user 882 from being injured by the virtual bullet 876. From the coaching scene 850, the user 1 can learn to build a virtual wall, such as the virtual wall 890, on a side of the virtual user 102 to protect the virtual user 102 from the virtual bullet 106 shot by the virtual user 720.

In one embodiment, a coaching engine is used instead of the coaching program 530. As an example, an engine is a combination of software and hardware that executes the functions described herein as being performed by the engine. To illustrate, the engine is a PLD or an ASIC that is programmed to perform those functions.

In one embodiment, any virtual frame, described herein, surrounds a virtual object to highlight the virtual object.

FIG. 8C is a diagram of an embodiment of a system 801 to illustrate that, instead of or in addition to rendering a coaching scene on the display screen 1, the processor system 505 generates audio data 805 that is output as sound to the user 1 via a headphone 803. The headphone 803 is worn by the user 1 so that it is proximate to the ears of the user 1. During the coaching session 608, the coaching program 530 generates the audio data 805. For example, instead of or in addition to displaying the virtual comment 702, the coaching program 530 sends the audio data 805 to the headphone 803. The audio data 805 includes a message, such as “Jump while shooting”, which the headphone 803 outputs as sound to the user 1, either simultaneously with or instead of the display of the virtual comment 702 on the display screen 1.
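The choice between showing a hint, speaking it, or both can be expressed as a set of output channels. The sketch below is hypothetical: the `Channel` flags, the `deliver_hint` name, and the `"audio:"` prefix (a placeholder for real synthesized audio data 805) are illustrative assumptions, not part of the description.

```python
from enum import Flag, auto


class Channel(Flag):
    DISPLAY = auto()    # render the hint as a virtual comment on the display screen
    HEADPHONE = auto()  # send the hint as audio data to the headphone


def deliver_hint(message: str, channels: Channel) -> list[tuple[str, str]]:
    """Return (device, payload) pairs for one coaching hint.

    The "audio:" prefix stands in for synthesized audio data; a real system
    would produce an audio buffer here.
    """
    out = []
    if Channel.DISPLAY in channels:
        out.append(("display", message))
    if Channel.HEADPHONE in channels:
        out.append(("headphone", f"audio:{message}"))
    return out
```

Calling `deliver_hint("Jump while shooting", Channel.DISPLAY | Channel.HEADPHONE)` yields one payload per device, matching the "simultaneously with" case; passing only `Channel.HEADPHONE` matches the "instead of" case.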

In an embodiment, the virtual objects 702, 704, 706, 708, 710, 712, 714, 716, 718, 720, 722, 724, 726, 728 (FIG. 7A), 756, 758 (FIG. 7B), 802, 804, 806, 808, 810, 812, 816, 818 (FIG. 8A), 854, 856, 858, 860, 864, 878, 880, 884, 888, 870, 872, 874, and 897 are examples of overlay content that is generated by the coaching program 530 based on the metadata 606.

FIG. 9 is a diagram of an embodiment of a system 900 to illustrate use of an inferred training engine 902 for generating the coaching session 608 (FIG. 6). The system 900 includes an artificial intelligence (AI) processor 904, the game event data 510 for the user ID 1, and the game event data 504 for the user IDs 2-N. The AI processor 904 is a processor of the processor system 505 (FIG. 5A).

The inferred training engine 902 includes a feature extractor 906, a feature classifier 908, and a model 910 that is to be trained. An example of each of the feature extractor 906, the feature classifier 908, and the model 910 is an ASIC. Another example of each of the feature extractor 906, the feature classifier 908, and the model 910 is a PLD. An example of the model 910 is a network of circuit elements. Each circuit element has one or more inputs and one or more outputs. An input of a circuit element is coupled to one or more outputs of one or more circuit elements. To illustrate, the model 910 is a neural network or an artificial intelligence model. The feature classifier 908 is coupled to the feature extractor 906 and to the model 910.

The inferred training engine 902 accesses, such as reads, the game event data 510 and the game event data 504 from one or more memory devices of the game recorder 502. The feature extractor 906 extracts features from the game event data 510 and 504, and the feature classifier 908 then classifies the extracted features. The classified features are used to train the model 910 to determine a game event, such as a death or a decrease in health level, for which to initiate the coaching session 608, and to identify one or more virtual objects of a virtual scene that are to be associated with one or more overlays of virtual objects generated based on the metadata 606.

FIG. 10 is a flowchart to illustrate an embodiment of a method 1000 for training the model 910. In an operation 1002 of the method 1000, the feature extractor 906 identifies features from the game event data 510 and the game event data 504 (FIG. 9). For example, to determine a death of the virtual user 102 in the virtual scene 202 (FIG. 2), the feature extractor 906 determines that a skeleton of the virtual user 102 lies in a horizontal plane instead of a vertical plane, that the virtual scene 202 includes the virtual drone 204 above the skeleton of the virtual user 102 for beaming the virtual user 102, that words such as “I am going to die” or “I am dead” are uttered by the virtual user 102 in the virtual scene 202, or a combination thereof. As another example, the feature extractor 906 determines that the health level of the virtual user 102 in the virtual scene 202 has decreased to zero, and thereby that the health level has decreased below the predetermined threshold.
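Operation 1002 turns a recorded scene into raw features. A minimal sketch follows, assuming the recorder exposes head and feet positions of the skeleton, drone positions, the latest utterance, and a health value. The `SceneSnapshot` layout, the y-up convention, the "spans more ground than height" test for a horizontal skeleton, and the `drone_radius` value are all hypothetical choices, not the patent's method.

```python
import re
from dataclasses import dataclass, field

Vec3 = tuple[float, float, float]  # (x, y, z) with y pointing up (assumed convention)


@dataclass
class SceneSnapshot:
    head: Vec3
    feet: Vec3
    drones: list[Vec3] = field(default_factory=list)
    utterance: str = ""
    health: float = 100.0


def extract_features(s: SceneSnapshot, drone_radius: float = 1.5) -> dict:
    """Turn one recorded scene into raw features for later classification."""
    dx, dy, dz = (h - f for h, f in zip(s.head, s.feet))
    # Treat the skeleton as lying in a horizontal plane when it spans more
    # ground distance than height.
    horizontal = abs(dy) < (dx * dx + dz * dz) ** 0.5
    mid = [(h + f) / 2 for h, f in zip(s.head, s.feet)]
    # A drone counts as overhead if it is above the whole body and within
    # drone_radius of the body's midpoint on the ground plane.
    drone_overhead = any(
        d[1] > max(s.head[1], s.feet[1])
        and ((d[0] - mid[0]) ** 2 + (d[2] - mid[2]) ** 2) ** 0.5 <= drone_radius
        for d in s.drones
    )
    # Lower-cased word tokens of the utterance, punctuation dropped.
    words = re.findall(r"[a-z']+", s.utterance.lower())
    return {
        "skeleton_horizontal": horizontal,
        "drone_overhead": drone_overhead,
        "words": words,
        "health": s.health,
    }
```

A standing skeleton from (0, 0, 0) to (0, 1.8, 0) yields `skeleton_horizontal == False`, while one lying along the x axis under a drone yields `True` for both the skeleton and drone features.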

An operation 1004 of the method 1000 occurs after the operation 1002. In the operation 1004, the feature classifier 908 classifies the features extracted from the game event data 510 and 504. For example, the feature classifier 908 determines that the virtual user 102 has died because the skeleton of the virtual user 102 lies in the horizontal plane. As another example, the feature classifier 908 determines that the virtual user 102 has died because the virtual scene 202 includes the virtual drone 204 above the skeleton of the virtual user 102. As yet another example, the feature classifier 908 determines that the virtual user 102 has died because the virtual scene 202 includes the words “I” and “dead”, or “I” and “die”, in the same sentence uttered by the virtual user 102. As another example, the feature classifier 908 determines that the health of the virtual user 102 is low because the health level of the virtual user 102 in the virtual scene 202 has decreased below the predetermined threshold.
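The classification examples in operation 1004 amount to simple rules over the extracted features. The rule-based sketch below assumes the features arrive as a dictionary with the keys shown, that `words` holds the lower-cased words of a single sentence, and that the "predetermined threshold" is 20 health points; all of these are assumptions for illustration.

```python
def classify(features: dict, health_threshold: float = 20.0) -> set[str]:
    """Label one scene's features as 'death' and/or 'low_health'.

    Expected keys (assumed): skeleton_horizontal (bool), drone_overhead (bool),
    words (lower-cased words of one uttered sentence), health (float).
    """
    labels = set()
    words = set(features.get("words", []))
    if features.get("skeleton_horizontal"):
        labels.add("death")
    if features.get("drone_overhead"):
        labels.add("death")
    # "I" together with "dead" or "die" in the same sentence.
    if "i" in words and ({"dead", "die"} & words):
        labels.add("death")
    if features.get("health", 100.0) < health_threshold:
        labels.add("low_health")
    return labels
```

Rules like these only make the patent's examples concrete; the description leaves open how the feature classifier 908 is actually implemented.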

An operation 1006 of the method 1000 occurs after the operation 1004. In the operation 1006, the model 910 is trained based on a number of game events of a sequence type to determine a probability of occurrence of a game event of the sequence type during a next gaming session. For example, the model 910 determines a probability that the virtual user 102 will die during a next gaming session, or a probability that a health level of the virtual user 102 will decrease below the threshold during a next gaming session. To illustrate, upon determining that the virtual user 102 has died during at least 6 of the past 10 gaming sessions, the model 910 determines that the probability that the virtual user 102 will die during a next gaming session is high, e.g., above a preset threshold. On the other hand, upon determining that the virtual user 102 has survived during at least 6 of the past 10 gaming sessions, the model 910 determines that the probability that the virtual user 102 will die during a next gaming session is low, e.g., below the preset threshold.
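The 6-out-of-10 illustration can be reproduced with a plain frequency estimate over recent sessions. This is a stand-in for the trained model 910, not a neural network: the window of 10 comes from the illustration, while the preset threshold of 0.5 and the function names are assumptions.

```python
def death_probability(history: list[bool], window: int = 10) -> float:
    """Fraction of the most recent `window` sessions in which the user died.

    `history` holds one bool per past gaming session, oldest first.
    """
    recent = history[-window:]
    if not recent:
        return 0.0
    return sum(recent) / len(recent)


def is_high(history: list[bool], preset_threshold: float = 0.5, window: int = 10) -> bool:
    """True when the estimated probability is above the (assumed) preset threshold."""
    return death_probability(history, window) > preset_threshold
```

Dying in 6 of the last 10 sessions gives 0.6, which is above 0.5; surviving 6 of 10 gives 0.4, which is below it, matching both cases in the illustration. An exact 5-of-10 split falls on the threshold and is treated as low here, a tie-breaking choice the description does not address.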

An operation 1008 of the method 1000 follows the operation 1006 and is executed by the processor system 505. Upon determining that the probability of occurrence of a game event of a sequence type during the next gaming session is low, the processor system 505 does not recommend, in the operation 1008, that the coaching session 608 be initiated for the next gaming session. On the other hand, upon determining that the probability is high, the processor system 505 recommends, in the operation 1008, that the coaching session 608 be initiated for the next gaming session. For example, the processor system 505 generates a message asking the user 1 whether the user 1 wishes to initiate the coaching session 608, and renders the message for display on the display device 1. As another example, the processor system 505 generates audio data including the message and sends the audio data to the headphone 803 (FIG. 8C), which outputs the audio data as sound to the user 1.

Upon viewing the message displayed on the display screen 1, or listening to the message output as sound by the headphone 803, the user 1 selects one or more buttons on the game controller 1 to generate an input signal indicating that the coaching session 608 is to be initiated. Upon receiving that input signal, the processor system 505 initiates the coaching session 608. For example, the processor system 505 renders the coaching scene 700 (FIG. 7A), the coaching scene 750 (FIG. 7B), both the coaching scenes 700 and 800 (FIG. 8A), or both the coaching scenes 700 and 850 (FIG. 8B) for display on the display screen 1. On the other hand, upon receiving an input signal indicating that the coaching session 608 is not to be initiated, the processor system 505 does not initiate the coaching session 608.
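The recommend-then-confirm flow of operation 1008 can be sketched with two injected callbacks, one that asks the user and one that starts the session. The function name, the prompt text, and the treatment of a probability equal to the threshold as "low" are assumptions for illustration.

```python
from typing import Callable


def maybe_start_coaching(probability: float, preset_threshold: float,
                         ask_user: Callable[[str], bool],
                         start_session: Callable[[], None]) -> str:
    """Recommend a coaching session only when the probability is high,
    and start it only if the user accepts the recommendation."""
    if probability <= preset_threshold:
        return "not recommended"  # low probability: no prompt is shown
    # ask_user may render the message on screen or play it on the headphone.
    if ask_user("Would you like to start a coaching session?"):
        start_session()
        return "started"
    return "declined"
```

Injecting `ask_user` keeps the decision logic independent of whether the prompt is displayed or spoken, which mirrors the two delivery options in the description.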

FIG. 11A is a diagram of an embodiment of a system 1100 to illustrate communication, via a router and modem 1104 and a computer network 1102, between the processor system 505 and multiple devices, which include a display device 1106 and a hand-held controller 1108. Examples of the display device 1106 include the display device 1 (FIG. 1), an LCD display device, an LED display device, a plasma display device, and an HMD. Examples of the hand-held controller 1108 include a touch screen, a keypad, a mouse, the game controller 1, and a keyboard. Each of the display device 1106 and the hand-held controller 1108 is used by the user 1. Also, each of the users 2-N uses a hand-held controller having the same structure and function as the hand-held controller 1108 and a display device having the same structure and function as the display device 1106. The display device 1106 and the hand-held controller 1108 are examples of the client device 602 (FIG. 6).

The system 1100 further includes the router and modem 1104, the computer network 1102, and the processor system 505. The system 1100 also includes a headphone 1110 and the display device 1106. The display device 1106 includes a display screen 1112, such as an LCD display screen, an LED display screen, or a plasma display screen. The display device 1 (FIG. 1) is an example of the display device 1106. An example of the computer network 1102 includes the Internet, an intranet, or a combination thereof. An example of the router and modem 1104 includes a gateway device. Another example of the router and modem 1104 includes a router device and a modem device.

The display screen 1112 is coupled to the router and modem 1104 via a wired connection. Examples of a wired connection, as used herein, include a transfer cable that transfers data serially, in parallel, or by applying a universal serial bus (USB) protocol.

The hand-held controller 1108 includes controls 1118, a digital signal processor system (DSPS) 1120, and a communication device 1122. The controls 1118 are coupled to the DSPS 1120, which is coupled to the communication device 1122. Examples of the controls 1118 include buttons and joysticks. Examples of the communication device 1122 include a communication circuit that enables communication between the communication device 1122 and the router and modem 1104 using a wireless protocol, such as Wi-Fi™ or Bluetooth™.

The communication device 1122 is coupled to the headphone 1110 via a wired connection or a wireless connection. Examples of a wireless connection, as used herein, include a connection that applies a wireless protocol, such as a Wi-Fi™ or Bluetooth™ protocol. The communication device 1122 is also coupled to the router and modem 1104 via a wireless connection, such as a Wi-Fi™ connection or a Bluetooth™ connection. The router and modem 1104 is coupled to the computer network 1102, which is coupled to the processor system 505.

During the play of the game, the processor system 505 executes the game program 528 to generate image frame data from one or more game states of the game, applies a network communication protocol, such as transmission control protocol over Internet protocol (TCP/IP), to the image frame data to generate one or more packets, and sends the packets via the computer network 1102 to the router and modem 1104. The modem of the router and modem 1104 applies the network communication protocol to the one or more packets received from the computer network 1102 to extract the image frame data, and provides the image frame data to the router of the router and modem 1104. The router routes the image frame data, via the wired connection between the router and the display screen 1112, to the display screen 1112, which displays one or more images of the game based on the image frame data received within the one or more packets.
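TCP delivers an ordered byte stream rather than discrete messages, so an application that streams image frame data over TCP/IP typically adds its own framing so that the receiver can split the stream back into frames. The sketch below uses a 4-byte length prefix; this framing scheme is an assumption and is not described in the patent. `io.BytesIO` stands in for a socket's binary file object (for example, one obtained with `socket.makefile("rb")`).

```python
import io
import struct
from typing import BinaryIO, Optional

HEADER = struct.Struct("!I")  # 4-byte big-endian frame length (assumed framing)


def encode_frame(frame: bytes) -> bytes:
    """Prefix one frame of image data with its length."""
    return HEADER.pack(len(frame)) + frame


def read_exact(stream: BinaryIO, n: int) -> Optional[bytes]:
    """Read exactly n bytes; return None on a clean end of stream before any byte."""
    buf = bytearray()
    while len(buf) < n:
        chunk = stream.read(n - len(buf))
        if not chunk:
            if not buf:
                return None
            raise EOFError("stream closed in the middle of a frame")
        buf.extend(chunk)
    return bytes(buf)


def decode_frames(stream: BinaryIO) -> list[bytes]:
    """Split a byte stream back into the frames that were encoded into it."""
    frames = []
    while (header := read_exact(stream, HEADER.size)) is not None:
        (length,) = HEADER.unpack(header)
        payload = read_exact(stream, length)
        if payload is None:
            raise EOFError("stream closed before the frame payload")
        frames.append(payload)
    return frames


if __name__ == "__main__":
    wire = io.BytesIO(encode_frame(b"frame-1") + encode_frame(b"frame-2"))
    print(decode_frames(wire))
```

The reassembly loop tolerates short reads, which real sockets can return at any point, and raises `EOFError` instead of silently returning a truncated frame.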