U.S. Pat. No. 11,452,941

EMOJI-BASED COMMUNICATIONS DERIVED FROM FACIAL FEATURES DURING GAME PLAY

Assignee: Sony Interactive Entertainment Inc

Issue Date: November 30, 2020

Abstract

Techniques for emoji-based communications derived from facial features during game play provide a communication channel accessible by players associated with gameplay hosted by an online game platform and capture an image of a user using a controller that includes a camera. The techniques further determine facial features of the user based on the image, generate an emoji based on the facial features of the user, and transmit the emoji to the players associated with the online game platform over the communication channel.

Description

DETAILED DESCRIPTION

As used herein, the term “user” refers to a user of an electronic device where actions performed by the user in the context of computer software are considered to be actions to provide an input to the electronic device and cause the electronic device to perform steps or operations embodied by the computer software. As used herein, the term “emoji” refers to ideograms, smileys, pictographs, emoticons, and other graphic characters/representations that are used in place of textual words or phrases. As used herein, the terms “player” and “user” are synonymous and, when used in the context of gameplay, refer to persons who participate in, spectate, or otherwise access media content related to the gameplay.

As mentioned above, the entertainment and game industry continues to develop and improve user interactions related to online game play. While many technological advances have improved certain aspects of interactive and immersive experiences, modern gameplay communications continue to use traditional audio and/or text-based communication technology, which fails to appreciate the dynamic and evolving nature of modern inter-personal communications. Accordingly, as described in greater detail herein, this disclosure is directed to improved techniques for gameplay communications that particularly support emoji-based communications (e.g., ideograms, smileys, pictographs, emoticons, and other graphic characters/representations). Moreover, this disclosure describes hardware controllers that include integrated cameras as well as improved gameplay communication techniques, which operate in conjunction with the hardware controllers.

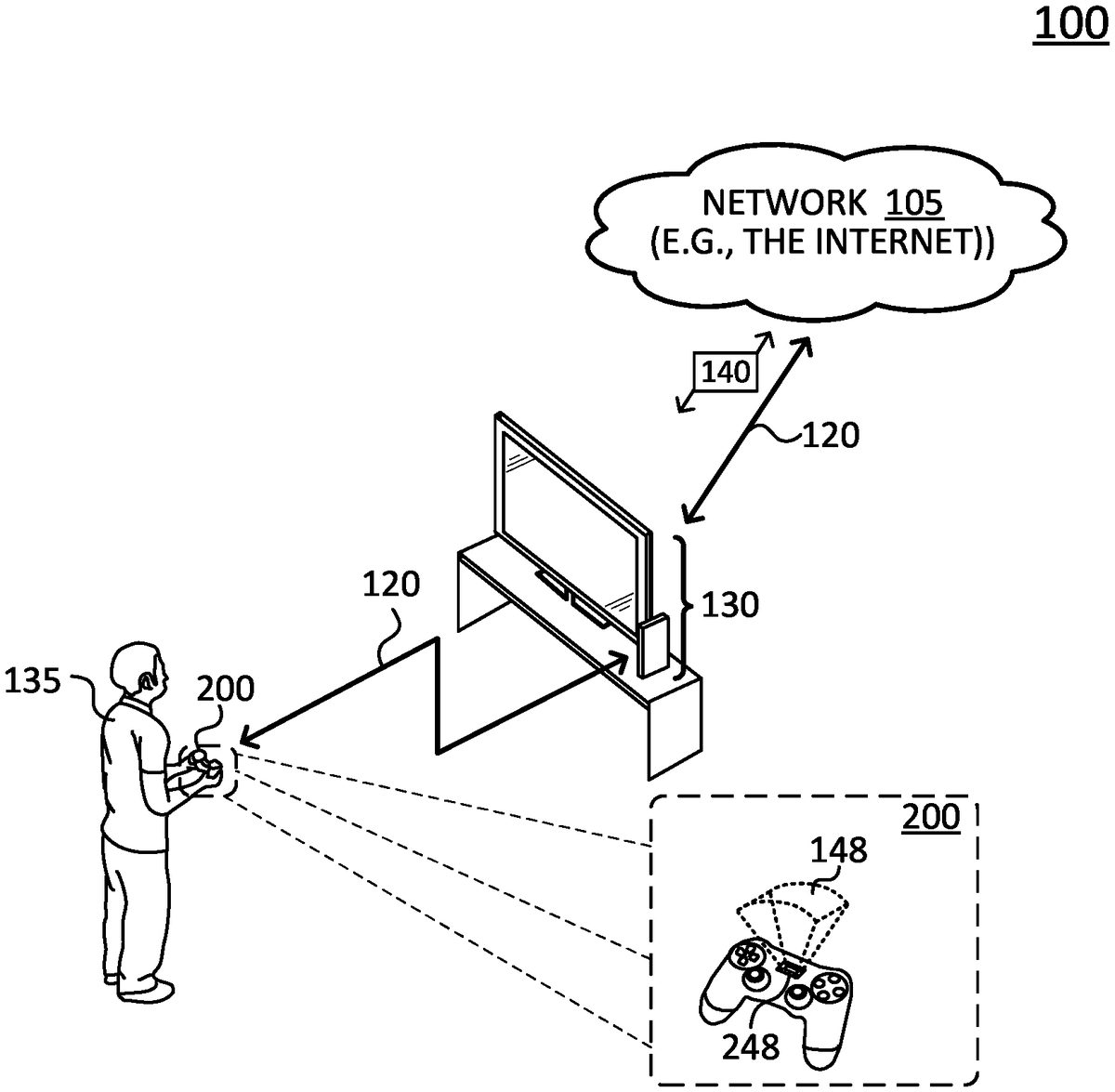

Referring now to the figures, FIG. 1 illustrates a schematic diagram of an example communication environment 100. Communication environment 100 includes a network 105 that represents a distributed collection of devices/nodes interconnected by communication links 120 that exchange data such as data packets 140 and transport data to/from end nodes or client devices such as game system 130. Game system 130 represents a computing device (e.g., personal computing devices, entertainment systems, game systems, laptops, tablets, mobile devices, and the like) that includes hardware and software capable of executing locally or remotely stored programs.

Communication links 120 represent wired links or shared media links (e.g., wireless links, PLC links, etc.) where certain devices (e.g., a controller 200) communicate with other devices (e.g., game system 130) based on distance, signal strength, operational status, location, etc. Those skilled in the art will understand that any number of nodes, devices, links, etc. may be included in network 105, and further that the view illustrated by FIG. 1 is provided for purposes of discussion, not limitation.

Data packets 140 represent network traffic/messages which are exchanged over communication links 120 and between network devices using predefined network communication protocols such as certain known wired protocols, wireless protocols (e.g., IEEE Std. 802.15.4, WiFi, Bluetooth®, etc.), PLC protocols, or other shared-media protocols where appropriate. In this context, a protocol is a set of rules defining how the devices or nodes interact with each other.

In general, game system 130 operates in conjunction with one or more controllers 200, which, for purposes of discussion, may be considered a component of game system 130. In operation, game system 130 and controller 200 cooperate to create immersive gameplay experiences for user 135 and provide graphics, sounds, physical feedback, and the like. For example, controller 200 is an interactive device that includes hardware and software to receive user input, communicate user inputs to game system 130, and provide user feedback (e.g., vibration, haptic, etc.). Moreover, controller 200 can accept a variety of user input such as, but not limited to, combinations of digital and/or analog buttons, triggers, joysticks, and touch pads, and further detects motion by user 135 using accelerometers, gyroscopes, and the like. As shown, controller 200 also includes an image capture component 248 such as one or more cameras that operatively capture images or frames of user 135 within a field of view 148. Notably, while controller 200 is shown as a hand-held device, the functions attributed to controller 200 are not limited to hand-held devices and readily apply to various types of devices such as wearable devices.

FIG. 2 illustrates a block diagram of controller 200. As shown, controller 200 includes one or more network interfaces 210 (e.g., transceivers, antennae, etc.), at least one processor 220, a memory 240, and image capture component 248 interconnected by a system bus 250.

Network interface(s) 210 contain the mechanical, electrical, and signaling circuitry for communicating data over communication links 120, shown in FIG. 1. Network interfaces 210 are configured to transmit and/or receive data using a variety of different communication protocols, as will be understood by those skilled in the art.

Memory 240 comprises a plurality of storage locations that are addressable by processor 220 and store software programs and data structures associated with the embodiments described herein. For example, memory 240 can include a tangible (non-transitory) computer-readable medium, as is appreciated by those skilled in the art.

Processor 220 represents components, elements, or logic adapted to execute the software programs and manipulate data structures 245, which are stored in memory 240. An operating system 242, portions of which are typically resident in memory 240, is executed by processor 220 to functionally organize the device by, inter alia, invoking operations in support of software processes and/or services executing on the device. These software processes and/or services may comprise an illustrative gameplay communication process/service 244, discussed in greater detail below. Note that while gameplay communication process/service 244 is shown in centralized memory 240, it may be configured to collectively operate in a distributed communication network of devices/nodes.

Notably, image capture component 248 is represented as a single component; however, it is appreciated that image capture component 248 may include any number of cameras, modules, components, and the like, in order to facilitate the accurate facial recognition techniques discussed in greater detail below.

It will be apparent to those skilled in the art that other processor and memory types, including various computer-readable media, may be used to store and execute program instructions pertaining to the techniques described herein. Also, while the description illustrates various processes, it is expressly contemplated that various processes may be embodied as modules configured to operate in accordance with the techniques herein (e.g., according to the functionality of a similar process). Further, while the processes have been shown separately, those skilled in the art will appreciate that processes may be routines or modules within other processes. For example, processor 220 can include one or more programmable processors, e.g., microprocessors or microcontrollers, or fixed-logic processors. In the case of a programmable processor, any associated memory, e.g., memory 240, may be any type of tangible processor readable memory, e.g., random access, read-only, etc., that is encoded with or stores instructions that can implement program modules, e.g., a module having gameplay communication process 244 encoded thereon. Processor 220 can also include a fixed-logic processing device, such as an application specific integrated circuit (ASIC) or a digital signal processor that is configured with firmware comprised of instructions or logic that can cause the processor to perform the functions described herein. Thus, program modules may be encoded in one or more tangible computer readable storage media for execution, such as with fixed logic or programmable logic, e.g., software/computer instructions executed by a processor, and any processor may be a programmable processor, programmable digital logic, e.g., field programmable gate array, or an ASIC that comprises fixed digital logic, or a combination thereof. In general, any process logic may be embodied in a processor or computer readable medium that is encoded with instructions for execution by the processor that, when executed by the processor, are operable to cause the processor to perform the functions described herein.

FIG. 3 illustrates a schematic diagram of a gameplay communication engine 305 that comprises modules for performing the above-mentioned gameplay communication process 244. Gameplay communication engine 305 represents operations of gameplay communication process 244 and is organized into a feature extraction module 310 and an emoji generation module 315. These operations and/or modules are executed or performed by processor 220 and, as mentioned, may be locally executed by controller 200, remotely executed by components of game system 130 (or other devices coupled to network 105), or combinations thereof.

In operation, controller 200 captures a frame or an image 320 of user 135 during gameplay using its integrated camera, i.e., image capture component 248 (not shown). For example, controller 200 may capture the image in response to an image capture command (e.g., a button press, a specified motion, an audio command, etc.), according to a scheduled time, and/or based on a gameplay status (e.g., a number of points achieved, a change in the number of points, gameplay milestones, etc.). In some embodiments, controller 200 captures multiple images in order to sample and track facial feature changes.
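The three capture conditions described above (a capture command, a scheduled time, and a gameplay status) can be sketched as a single decision function. This is only an illustration under stated assumptions: the names `GameplayStatus`, `should_capture`, and `point_jump_threshold` are hypothetical and do not appear in the disclosure, and the threshold value is arbitrary.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class GameplayStatus:
    """Hypothetical snapshot of the gameplay state used as a capture trigger."""
    points: int
    milestone_reached: bool = False


def should_capture(
    command_received: bool,
    now: float,
    next_scheduled: Optional[float],
    previous: GameplayStatus,
    current: GameplayStatus,
    point_jump_threshold: int = 100,  # assumed value, not from the disclosure
) -> bool:
    """Return True when any one of the capture conditions holds."""
    # Condition 1: explicit image capture command (button press, motion, audio).
    if command_received:
        return True
    # Condition 2: a scheduled capture time has arrived.
    if next_scheduled is not None and now >= next_scheduled:
        return True
    # Condition 3: gameplay status, here a milestone or a large change in points.
    if current.milestone_reached:
        return True
    return abs(current.points - previous.points) >= point_jump_threshold
```

A caller would evaluate `should_capture` once per polling interval and trigger the camera only when it returns `True`.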

After image capture, gameplay communication engine 305 analyzes image 320 (as well as images 321, 322, and so on) using feature extraction module 310, which extracts and determines facial features such as features 330 associated with image 320 and thus associated with user 135. In particular, feature extraction module 310 performs facial recognition techniques on image 320 to detect specific points or landmarks present in image 320. Collectively, these landmarks convey unique and fundamental information about user 135 such as emotions, thoughts, reactions, and other information. These landmarks include, for example, edges of eyes, nose, lips, chin, ears, and the like. It is appreciated that gameplay communication engine 305 may use any known facial recognition techniques (or combinations thereof) when detecting, extracting, and determining features 330 from an image.
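Where several images are analyzed, one simple way to quantify how landmarks change between them is to sum each landmark's movement across consecutive frames. The sketch below assumes landmarks have already been detected and are supplied as named 2-D points; the function name `landmark_displacements` and the landmark names are hypothetical, and no particular facial recognition technique is implied.

```python
import math
from typing import Dict, List, Tuple

Point = Tuple[float, float]
Landmarks = Dict[str, Point]


def landmark_displacements(frames: List[Landmarks]) -> Dict[str, float]:
    """Total distance each landmark moves across consecutive frames."""
    totals: Dict[str, float] = {}
    for before, after in zip(frames, frames[1:]):
        # Only compare landmarks that were detected in both frames.
        for name in before.keys() & after.keys():
            step = math.dist(before[name], after[name])
            totals[name] = totals.get(name, 0.0) + step
    return totals


frames = [
    {"lip_left": (0.0, 0.0), "nose_tip": (1.0, 1.0)},
    {"lip_left": (3.0, 4.0), "nose_tip": (1.0, 1.0)},
]
movement = landmark_displacements(frames)
```

In this example the lip corner moves while the nose tip stays fixed, which is the kind of change a downstream module could treat as a shift in expression.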

Feature extraction module 310 subsequently passes features 330 to emoji generation module 315 to generate an emoji 331. In operation, emoji generation module 315 processes features 330 and maps these features to respective portions of a model emoji to form emoji 331. Emoji generation module 315 further adjusts or modifies such respective portions of the model emoji to reflect specific attributes of features 330 (e.g., adjusting cheek distances, nose shape, color palette, eyebrow contour, and the like), which results in emoji 331. In this fashion, emoji 331 may be considered a modified model emoji, as is appreciated by those skilled in the art. Notably, the model emoji may be specific to a user or it may be generic for multiple users. In either case, emoji generation module 315 creates emoji 331 based on a model or template emoji. Gameplay communication engine 305 further transmits emoji 331 for further dissemination amongst players associated with current gameplay (e.g., transmitted over an online gameplay chat channel hosted by a multi-user game platform).
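The idea of a model emoji whose portions are adjusted to reflect extracted features can be sketched as a small template object plus an adjustment function. Everything here is an assumption made for illustration: the `ModelEmoji` fields, their value ranges, and the feature keys are hypothetical and are not defined by the disclosure.

```python
from dataclasses import dataclass, replace
from typing import Any, Dict


@dataclass(frozen=True)
class ModelEmoji:
    """Hypothetical template emoji with a few adjustable portions."""
    eye_spacing: float = 0.40   # fraction of face width
    mouth_curve: float = 0.0    # -1.0 (frown) .. 1.0 (smile)
    brow_tilt: float = 0.0      # -1.0 .. 1.0
    skin_tone: str = "#FFCC4D"


def _clamp(value: float, low: float, high: float) -> float:
    return max(low, min(high, value))


def adjust_model_emoji(model: ModelEmoji, features: Dict[str, Any]) -> ModelEmoji:
    """Return a copy of the model with portions adjusted to the given features."""
    updates: Dict[str, Any] = {}
    if "eye_spacing" in features:
        updates["eye_spacing"] = _clamp(features["eye_spacing"], 0.2, 0.6)
    if "mouth_curve" in features:
        updates["mouth_curve"] = _clamp(features["mouth_curve"], -1.0, 1.0)
    if "brow_tilt" in features:
        updates["brow_tilt"] = _clamp(features["brow_tilt"], -1.0, 1.0)
    if "skin_tone" in features:
        updates["skin_tone"] = features["skin_tone"]
    # The template itself is left untouched, so it can be reused per user or shared.
    return replace(model, **updates)


adjusted = adjust_model_emoji(ModelEmoji(), {"mouth_curve": 2.0, "eye_spacing": 0.5})
```

Keeping the template immutable mirrors the distinction between a generic model emoji and the modified emoji produced for a particular image.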

FIG. 4 illustrates a schematic diagram of a gameplay communication engine 405, according to another embodiment of this disclosure. Gameplay communication engine 405 includes the above-discussed feature extraction module 310 (which extracts features 330 from image 320) and additionally includes a vector mapping module 410, which maps images to position vectors in an N-dimension vector-space 412 based on respective features, and an emoji generation module 415, which generates an emoji 431 based on proximately located position vectors, which are assigned to expressions/emotions.

More specifically, N-dimension vector-space 412 includes one or more dimensions that correspond to respective features or landmarks, where images or frames are mapped to respective position vectors according to their features. For example, dimension 1 may correspond to a lip landmark, dimension 2 may correspond to a nose landmark, dimension 3 may correspond to an ear landmark, and so on.

In operation, vector mapping module 410 maps image 320 to a position vector based on features 330 and corresponding dimensions. As discussed in greater detail below, N-dimension vector-space 412 provides a framework to map images to vector positions based on extracted features and group or assign proximately positioned vectors to specific expressions and/or emotions. For example, the vector-position associated with image 320 in N-dimension vector-space 412 may be associated with a particular expression/emotion based on its proximity to other position vectors having pre-defined associations to the expression/emotion.
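As a minimal sketch of mapping an image's features to a position vector, each dimension can be given a fixed name and the vector built by reading the features in that order. The dimension names follow the lip/nose/ear example in the text; the scalar feature values, the default of 0.0 for a missing feature, and the name `to_position_vector` are assumptions for illustration only.

```python
from typing import Dict, Sequence, Tuple

# Dimension order follows the example in the text: lip, nose, ear.
DIMENSIONS: Tuple[str, ...] = ("lip", "nose", "ear")


def to_position_vector(
    features: Dict[str, float],
    dimensions: Sequence[str] = DIMENSIONS,
) -> Tuple[float, ...]:
    """Map named feature measurements to a position vector.

    A feature missing from the image is assumed to contribute 0.0.
    """
    return tuple(float(features.get(name, 0.0)) for name in dimensions)


vector = to_position_vector({"nose": 0.3, "lip": 0.8})
```

Fixing the dimension order matters because two vectors can only be compared meaningfully when the same index refers to the same landmark.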

Emoji generation module 415 evaluates the vector position mapped to image 320, determines that the vector position is proximate to other vector positions assigned to an emotion/expression (e.g., here “happy” or “smiling”), and subsequently generates emoji 431, which corresponds to the emotion/expression. Emoji 431, similar to emoji 331, is further disseminated amongst players associated with current gameplay (e.g., transmitted over an online gameplay chat channel hosted by a multi-user game platform).

FIG. 5 illustrates a vector-space 500 that includes multiple position vectors assigned or grouped according to specific emotions. As shown, groups of proximately located position vectors are grouped according to emotions such as “sad”, “happy”, “angry”, and “surprised”. It is appreciated that any number of emotions may be used, and the emotional groups shown are for purposes of discussion, not limitation. Further, it is also appreciated that position vectors may be organized according to particular expressions (e.g., smiling, frowning, and so on), and/or combinations of emotions and expressions.

Vector-space 500 also provides a query vector 510 that represents the position vector corresponding to image 320. As mentioned above, vector mapping module 410 generates a position vector for image 320 based on features 330 and, as shown, such position vector is formed as query vector 510. Once vector mapping module 410 generates query vector 510, emoji generation module 415 further identifies the closest or proximately located position vectors by analyzing relative distances and/or angles there-between. For example, emoji generation module 415 determines that query vector 510 is proximate or positioned closest to position vectors assigned to the “happy” emotion. Thereafter, emoji generation module 415 generates an emoji corresponding to the “happy” emotion, resulting in emoji 431. For example, as discussed in greater detail below, emoji generation module 415 determines the emotion (or expression) and uses such emotion as a key to look up emoji 431.
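One way to find the emotion whose position vectors lie closest to a query vector is a k-nearest-neighbor vote using Euclidean distance. The disclosure does not name a specific algorithm, so this is a swapped-in illustrative technique; the two-dimensional sample data, the labels' coordinates, and the name `classify_emotion` are all hypothetical.

```python
import math
from collections import Counter
from typing import List, Sequence, Tuple

LabeledVector = Tuple[Sequence[float], str]

# Hypothetical labeled position vectors in a 2-dimension vector space.
LABELED: List[LabeledVector] = [
    ((0.9, 0.8), "happy"), ((0.8, 0.9), "happy"), ((0.85, 0.7), "happy"),
    ((-0.8, -0.7), "sad"), ((-0.9, -0.6), "sad"),
    ((-0.7, 0.8), "angry"), ((-0.8, 0.9), "angry"),
    ((0.1, -0.9), "surprised"), ((0.2, -0.8), "surprised"),
]


def classify_emotion(
    query: Sequence[float],
    labeled: List[LabeledVector] = LABELED,
    k: int = 3,
) -> str:
    """Return the most common label among the k vectors nearest the query."""
    nearest = sorted(labeled, key=lambda item: math.dist(query, item[0]))[:k]
    votes = Counter(label for _, label in nearest)
    return votes.most_common(1)[0][0]


emotion = classify_emotion((0.8, 0.75))
```

Comparing angles instead of distances, as the text also allows, would amount to replacing `math.dist` with a cosine-similarity measure.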

It is appreciated that vector-space 500 may be initially created using training data, which can be used to map vector-positions and corresponding emotions/expressions as well as to establish baseline and threshold conditions or criteria between specific emotions/expressions. Further, vector-space 500 may include more nuanced expressions/emotions and defined sub-groupings of vector-positions as desired. Moreover, vector-space 500 may be updated over time to improve accuracy for specific users (e.g., incorporating weights for certain features, etc.).
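A simple way to build such a space from training data is to average the labeled vectors for each emotion into a centroid and to apply a distance threshold so that a query far from every group is left unassigned. This is a sketch of one possible approach, not the method of the disclosure; `build_centroids`, `nearest_emotion`, and the `max_distance` threshold are hypothetical.

```python
import math
from typing import Dict, List, Optional, Sequence, Tuple

Vector = Tuple[float, ...]


def build_centroids(samples: List[Tuple[Sequence[float], str]]) -> Dict[str, Vector]:
    """Average the training vectors of each emotion into one centroid."""
    sums: Dict[str, List[float]] = {}
    counts: Dict[str, int] = {}
    for vector, label in samples:
        running = sums.setdefault(label, [0.0] * len(vector))
        for i, value in enumerate(vector):
            running[i] += value
        counts[label] = counts.get(label, 0) + 1
    return {
        label: tuple(v / counts[label] for v in total)
        for label, total in sums.items()
    }


def nearest_emotion(
    query: Sequence[float],
    centroids: Dict[str, Vector],
    max_distance: float,
) -> Optional[str]:
    """Return the closest emotion, or None if it is beyond the threshold."""
    label, centroid = min(
        centroids.items(), key=lambda item: math.dist(query, item[1])
    )
    return label if math.dist(query, centroid) <= max_distance else None


centroids = build_centroids([
    ((1.0, 0.0), "happy"), ((3.0, 2.0), "happy"), ((-1.0, -1.0), "sad"),
])
```

Per-user refinement could then be modeled as adding that user's labeled vectors to the training samples and rebuilding the centroids.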

FIG. 6 illustrates a table 600 that indexes emoji according to respective emotions/expressions. As mentioned above, emoji generation module 415 determines that a query vector for an image (e.g., query vector 510) is proximate to a particular emotion (e.g., “happy”) and uses the particular emotion as a key to look up the corresponding emoji (e.g., emoji 431). In this fashion, emoji generation module 415 uses table 600 to look up a particular emoji for a given emotion/expression and selects the particular emoji for further dissemination. While table 600 is shown with a particular set of emotions/expressions/emoji, it is appreciated that any number of emotions/expressions may be used.
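The lookup described above maps naturally onto a dictionary keyed by emotion. The contents of table 600 are not reproduced in the text, so the entries below are placeholders chosen for illustration, as are the fallback emoji and the name `lookup_emoji`.

```python
from typing import Dict

# Placeholder table: emotion/expression key -> emoji character.
EMOJI_TABLE: Dict[str, str] = {
    "happy": "\U0001F600",      # grinning face
    "sad": "\U0001F622",        # crying face
    "angry": "\U0001F620",      # angry face
    "surprised": "\U0001F62E",  # face with open mouth
}

NEUTRAL = "\U0001F610"  # neutral face, assumed fallback for unknown keys


def lookup_emoji(
    emotion: str,
    table: Dict[str, str] = EMOJI_TABLE,
    default: str = NEUTRAL,
) -> str:
    """Use the emotion as a key to look up its emoji, ignoring case and padding."""
    return table.get(emotion.strip().lower(), default)


selected = lookup_emoji("Happy")
```

Returning a neutral default rather than raising keeps the chat flow uninterrupted when an emotion has no entry; that choice is a design assumption, not something the text specifies.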

FIG. 7 illustrates a third-person perspective view 700 of gameplay in a game environment. Third-person perspective view 700 includes a multi-player chat 702 that supports the traditional gameplay communications (e.g., text-based communications) as well as the improved gameplay communication techniques disclosed herein. In particular, multi-player chat 702 shows text-based communications from players 701, 702, and 703 as well as emoji-based communications for players 704 and 705.

In proper context, the gameplay represented by third-person perspective view 700 is hosted by an online game platform and multi-player chat 702 represents a live in-game communication channel. As shown, during the gameplay, players 701-705 communicate with each other over multi-player chat 702. While players 701-703 communicate with text-based communications, players 704 and 705 employ the improved gameplay communication techniques to disseminate emoji. For example, player 704 operates a controller that has an integrated camera (e.g., controller 200). At some point during the gameplay, controller 200 captures an image of player 704, determines an emoji corresponding to the features extracted from the image, and transmits the emoji over multi-player chat 702.

FIG. 8 illustrates a simplified procedure 800 for improved gameplay communications performed, for example, by a game system (e.g., game system 130) and/or a controller (e.g., controller 200) that operates in conjunction with the game system. For purposes of discussion below, procedure 800 is described with respect to the game system.

Procedure 800 begins at step 805 and continues to step 810 where, as discussed above, the game system captures an image of a user using, for example, a controller having an integrated camera. The game system can capture the image based on an image capture command, a scheduled time, and/or a gameplay status. For example, the image capture command may be triggered by a button press, an audio signal, a gesture, or the like; the scheduled time may include a specific gameplay time or a pre-defined time period; and the gameplay status can include a sudden change in a number of points, a total number of points, gameplay achievements, gameplay milestones, and the like.

Procedure 800 continues to step 815 where the game system determines facial features of the user based on the image using a facial recognition algorithm. As mentioned above, the game system may use any number of facial recognition techniques to identify landmarks that convey unique and fundamental information about the user. The game system further generates, in step 820, an emoji based on the facial features of the user. For example, the game system can map the facial features to respective portions of a model emoji (e.g., emoji 331) and/or it may generate the emoji based on an emotion/expression derived from a vector-space query and table lookup (e.g., emoji 431).

The game system further transmits or disseminates the emoji, in step 825, to players associated with gameplay over a communication channel. For example, the game system can transmit the emoji over a multi-player chat such as multi-player chat 702, or any other suitable channels, as is appreciated by those skilled in the art. Procedure 800 subsequently ends at step 830, but it may continue on to step 810 where the game system captures another image of the user.

It should be noted that some steps within procedure 800 may be optional, and further the steps shown in FIG. 8 are merely examples for illustration, and certain other steps may be included or excluded as desired. Further, while a particular order of the steps is shown, this ordering is merely illustrative, and any suitable arrangement of the steps may be utilized without departing from the scope of the embodiments herein.
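The flow of procedure 800 (capture, determine features, generate emoji, transmit, optionally repeat) can be sketched as a loop over four pluggable steps. The step callables are supplied by the caller and are stand-ins here; `run_procedure` and the `rounds` parameter are hypothetical names, and no real camera, recognition, or chat API is assumed.

```python
from typing import Any, Callable, Dict, List


def run_procedure(
    capture_image: Callable[[], Any],
    extract_features: Callable[[Any], Dict[str, float]],
    generate_emoji: Callable[[Dict[str, float]], str],
    transmit: Callable[[str], None],
    rounds: int = 1,
) -> List[str]:
    """Run the capture -> features -> emoji -> transmit sequence `rounds` times."""
    sent: List[str] = []
    for _ in range(rounds):
        image = capture_image()             # step 810: capture an image
        features = extract_features(image)  # step 815: determine facial features
        emoji = generate_emoji(features)    # step 820: generate an emoji
        transmit(emoji)                     # step 825: send over the channel
        sent.append(emoji)
    return sent


# Stand-in steps for demonstration only.
chat_log: List[str] = []
result = run_procedure(
    capture_image=lambda: "frame",
    extract_features=lambda image: {"mouth_curve": 0.9},
    generate_emoji=lambda f: ":)" if f["mouth_curve"] > 0 else ":(",
    transmit=chat_log.append,
    rounds=2,
)
```

Passing the steps in as callables reflects the point that they may run on the controller, the game system, or another networked device.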

The techniques described herein, therefore, provide improved gameplay communications that support emoji-based communications (e.g., ideograms, smileys, pictographs, emoticons, and other graphic characters/representations) for live gameplay hosted by online game platforms. In particular, these techniques generate emoji based on features extracted from images of a user, which images are captured by a controller having an integrated camera. While there have been shown and described illustrative embodiments for improved gameplay communications content and operations performed by specific systems, devices, components, and modules, it is to be understood that various other adaptations and modifications may be made within the spirit and scope of the embodiments herein. For example, the embodiments have been shown and described herein with relation to controllers, game systems, and platforms. However, the embodiments in their broader sense are not so limited and, in fact, such operations and similar functionality may be performed by any combination of the devices shown and described.

The foregoing description has been directed to specific embodiments. It will be apparent, however, that other variations and modifications may be made to the described embodiments, with the attainment of some or all of their advantages. For instance, it is expressly contemplated that the components and/or elements described herein can be implemented as software being stored on a tangible (non-transitory) computer-readable medium, devices, and memories such as disks, CDs, RAM, and EEPROM having program instructions executing on a computer, hardware, firmware, or a combination thereof.

Further, methods describing the various functions and techniques described herein can be implemented using computer-executable instructions that are stored or otherwise available from computer readable media. Such instructions can comprise, for example, instructions and data which cause or otherwise configure a general purpose computer, special purpose computer, or special purpose processing device to perform a certain function or group of functions. Portions of computer resources used can be accessible over a network. The computer executable instructions may be, for example, binaries, intermediate format instructions such as assembly language, firmware, or source code.

Examples of computer-readable media that may be used to store instructions, information used, and/or information created during methods according to described examples include magnetic or optical disks, flash memory, USB devices provided with non-volatile memory, networked storage devices, and so on. In addition, devices implementing methods according to these disclosures can comprise hardware, firmware and/or software, and can take any of a variety of form factors. Typical examples of such form factors include laptops, smart phones, small form factor personal computers, personal digital assistants, and so on.

Functionality described herein also can be embodied in peripherals or add-in cards. Such functionality can also be implemented on a circuit board among different chips or different processes executing in a single device, by way of further example. Instructions, media for conveying such instructions, computing resources for executing them, and other structures for supporting such computing resources are means for providing the functions described in these disclosures.

Accordingly, this description is to be taken only by way of example and not to otherwise limit the scope of the embodiments herein. Therefore, it is the object of the appended claims to cover all such variations and modifications as come within the true spirit and scope of the embodiments herein.

Claims

1. A method for generating emoji models based on user facial features, the method comprising: capturing a plurality of images of a user, the images captured by a camera at determined times during gameplay; extracting one or more facial feature landmarks detected within each captured image based on facial recognition of one or more facial features of the user; tracking a position of each of the facial feature landmarks in each captured image, wherein a set of positions of the facial feature landmarks correspond to a facial expression in the captured image; identifying a corresponding facial expression based on a change in the position of the tracked facial feature landmarks across the captured images; selecting at least one of the captured images to use in mapping the corresponding facial expression; mapping the tracked facial feature landmarks within the at least one selected image to a model emoji, wherein the model emoji is associated with the corresponding facial expression; adjusting one or more portions of the model emoji based on a weight for one or more of the facial features of the user; and distributing the adjusted model emoji over a communication channel to one or more recipients associated with a current session of the user.

2. The method of claim 1, further comprising determining the times based on a gameplay status of the user, wherein the gameplay status includes at least one of a change in number of points, a total number of points, gameplay achievements, or gameplay milestones.

3. The method of claim 1, further comprising determining the times based on detecting one or more triggers that include at least one of controller input, audio signal, and detected gesture.

4. The method of claim 1, wherein the facial feature landmarks are defined as one or more points of the facial features that correspond to assigned emotions associated with the corresponding facial expression.

5. The method of claim 4, wherein the points of the facial features include at least one of edges of eyes, nose, lips, chin, or ears.

6. The method of claim 1, further comprising identifying a set of weights for the facial features of the user, wherein the set of weights correspond to attributes of the facial features of the user.

7. The method of claim 6, wherein the set of weights correspond to at least one of shape, color, contour, dimension, and distance to another facial feature.

8. The method of claim 1, wherein distributing the adjusted model emoji includes inserting the adjusted model emoji within text-based communication.

9. The method of claim 1, further comprising mapping vectors of the facial features of the corresponding facial expression in a vector-space, and assigning the corresponding facial expression to an emotion based on a proximity of the mapped facial expression vectors to vectors associated with the emotion.

10. A system for generating emoji models based on user facial features, the system comprising: a memory; a camera that captures a plurality of images of a user, the images captured at determined times during gameplay; and a processor that executes instructions stored in the memory, wherein execution of the instructions by the processor: extracts one or more facial feature landmarks detected within each captured image based on facial recognition of one or more facial features of the user; tracks a position of each of the facial feature landmarks in each captured image, wherein a set of positions of the facial feature landmarks correspond to a facial expression in the captured image; identifies a corresponding facial expression based on a change in the position of the tracked facial feature landmarks across the captured images; selects at least one of the captured images to use in mapping the corresponding facial expression; maps the tracked facial feature landmarks within the at least one selected image to a model emoji, wherein the model emoji is associated with the corresponding facial expression; adjusts one or more portions of the model emoji based on a weight for one or more of the facial features of the user; and distributes the adjusted model emoji over a communication channel to one or more recipients associated with a current session of the user.

11. The system of claim 10, wherein the processor further executes instructions to determine the times based on a gameplay status of the user, wherein the gameplay status includes at least one of a sudden change in number of points, a total number of points, gameplay achievements, or gameplay milestones.

12. The system of claim 10, wherein the processor further executes instructions to determine the times based on detecting one or more triggers that include at least one of controller input, audio signal, and detected gesture.

13. The system of claim 10, wherein the facial feature landmarks are defined as one or more points of the facial features that correspond to assigned emotions associated with the corresponding facial expressions.

14. The system of claim 13, wherein the points of the facial feature landmarks include at least one of edges of eyes, nose, lips, chin, or ears.

15. The system of claim 10, wherein the processor further executes instructions to identify a set of weights for the facial features of the user, wherein the set of weights correspond to attributes of the facial features of the user.

16. The system of claim 15, wherein the set of weights correspond to at least one of a shape, a color, a contour, dimension, and distance to another facial feature.

17. The system of claim 15, wherein the processor further executes instructions to map vectors of the facial features of the corresponding facial expression in a vector-space, and assigning the corresponding facial expression to an emotion based on a proximity of the mapped facial expression vectors to vectors associated with the emotion.

18. The system of claim 10, wherein the adjusted model emoji is distributed by being inserted within text-based communication.

19. A non-transitory, computer-readable storage medium, having instructions encoded thereon, the instructions executable by a processor to perform a method for generating emoji models based on user facial features, the method comprising: capturing a plurality of images of a user, the images captured by a camera at determined times during gameplay; extracting one or more facial feature landmarks detected within each captured image based on facial recognition of one or more facial features of the user; tracking a position of each of the facial feature landmarks in each captured image, wherein a set of positions of the facial feature landmarks correspond to a facial expression in the captured image; identifying a corresponding facial expression based on a change in the position of the tracked facial feature landmarks across the captured images; selecting at least one of the captured images to use in mapping the corresponding facial expression; mapping the tracked facial features within the at least one selected image to a model emoji, wherein the model emoji is associated with the corresponding facial expression; adjusting one or more portions of the model emoji based on a weight for one or more of the facial features of the user; and distributing the adjusted model emoji over a communication channel to one or more recipients associated with a current session of the user.

Disclaimer: Data collected from the USPTO and may be malformed, incomplete, and/or otherwise inaccurate.