U.S. Pat. No. 11,439,901

APPARATUS AND METHOD OF OBTAINING DATA FROM A VIDEO GAME

Assignee: Sony Interactive Entertainment Inc.

Issue Date: December 31, 2019


Abstract

A method of obtaining data from a video game includes: inputting a video image of the video game comprising at least one avatar, detecting a pose of the avatar in the video image, assigning one or more avatar-status boxes to one or more portions of the avatar, determining, based on the pose of the avatar, a position of at least one avatar-status box with respect to the avatar using a correlation between avatar poses and avatar-status boxes, producing avatar-status data for the avatar indicative of the position of the at least one avatar-status box with respect to the avatar in the video image, generating one or more indicator images for the avatar in accordance with the avatar-status data, and augmenting the video image of the video game comprising at least the avatar with one or more of the indicator images in response to the pose of the avatar, in which the step of determining the position of the at least one avatar-status box with respect to the avatar includes providing, to a correlation unit trained to determine correlations between avatar poses and avatar-status boxes, an input comprising at least data indicative of the pose of the avatar, and obtaining from the correlation unit data indicative of a position of the at least one avatar-status box with respect to the avatar, responsive to that input, and each avatar-status box has a corresponding classification indicative of a type of in-game interaction associated with the avatar-status box.

Description


DESCRIPTION OF THE EMBODIMENTS

Referring now to the drawings, wherein like reference numerals designate identical or corresponding parts throughout the several views, FIG. 1 schematically illustrates the overall system architecture of a computer game processing apparatus such as the Sony® PlayStation 4® entertainment device. A system unit 10 is provided, with various peripheral devices connectable to the system unit.

The system unit 10 comprises an accelerated processing unit (APU) 20, being a single chip that in turn comprises a central processing unit (CPU) 20A and a graphics processing unit (GPU) 20B. The APU 20 has access to a random access memory (RAM) unit 22.

The APU 20 communicates with a bus 40, optionally via an I/O bridge 24, which may be a discrete component or part of the APU 20.

Connected to the bus 40 are data storage components such as a hard disk drive 37 and a Blu-Ray® drive 36 operable to access data on compatible optical discs 36A. Additionally, the RAM unit 22 may communicate with the bus 40.

Optionally, also connected to the bus 40 is an auxiliary processor 38. The auxiliary processor 38 may be provided to run or support the operating system.

The system unit 10 communicates with peripheral devices as appropriate via an audio/visual input port 31, an Ethernet® port 32, a Bluetooth® wireless link 33, a Wi-Fi® wireless link 34, or one or more universal serial bus (USB) ports 35. Audio and video may be output via an AV output 39, such as an HDMI port.

The peripheral devices may include a monoscopic or stereoscopic video camera 41 such as the PlayStation Eye®; wand-style videogame controllers 42 such as the PlayStation Move® and conventional handheld videogame controllers 43 such as the DualShock 4®; portable entertainment devices 44 such as the PlayStation Portable® and PlayStation Vita®; a keyboard 45 and/or a mouse 46; a media controller 47, for example in the form of a remote control; and a headset 48. Other peripheral devices may similarly be considered, such as a printer or a 3D printer (not shown).

The GPU 20B, optionally in conjunction with the CPU 20A, generates video images and audio for output via the AV output 39. Optionally, the audio may be generated in conjunction with, or instead by, an audio processor (not shown).

The video and optionally the audio may be presented to a television 51. Where supported by the television, the video may be stereoscopic. The audio may be presented to a home cinema system 52 in one of a number of formats such as stereo, 5.1 surround sound or 7.1 surround sound. Video and audio may likewise be presented to a head mounted display unit 53 worn by a user 60.

In operation, the entertainment device defaults to an operating system such as a variant of FreeBSD 9.0. The operating system may run on the CPU 20A, the auxiliary processor 38, or a mixture of the two. The operating system provides the user with a graphical user interface such as the PlayStation Dynamic Menu. The menu allows the user to access operating system features and to select games and optionally other content.

FIG. 1 therefore provides an example of a computer game processing apparatus configured to execute an executable program associated with a computer game and generate a sequence of video images for output in accordance with an execution state of the computer game.

Referring now to FIG. 2, in embodiments of the disclosure an apparatus 100 adapted to obtain data from a video game comprises: an input unit 105 configured to obtain a video image of the video game comprising at least one avatar; a detection unit 110 configured to detect a pose of the avatar in the video image; an assignment unit 115 configured to assign one or more avatar-status boxes to one or more portions of the avatar; a control unit 120 configured to determine, based on the pose of the avatar, a position of at least one avatar-status box with respect to the avatar using a correlation between avatar poses and avatar-status boxes, and to produce avatar-status data for the avatar indicative of the position of the at least one avatar-status box with respect to the avatar; and an image generator 125 configured to generate one or more indicator images for the avatar in accordance with the avatar-status data, and to augment the video image of the video game comprising the avatar with one or more of the indicator images in response to the pose of the avatar.

A games machine 90 is configured to execute an executable program associated with a computer game and generate a sequence of video images for output. The computer game processing apparatus illustrated in FIG. 1 provides an example configuration of the games machine 90. The games machine 90 outputs the video images and optionally audio signals to a display unit 91 via a wired or wireless communication link (such as a Wi-Fi® or Bluetooth® link) so that video images of a user's gameplay can be displayed. The sequence of video images output by the games machine 90 can be obtained by the apparatus 100, which operates under the control of computer software which, when executed by a computer or processing element, causes the apparatus 100 to carry out the methods discussed below.

In some examples, the apparatus 100 is provided as part of the games machine 90 which executes the executable program associated with the computer game. Alternatively, the games machine 90 may be provided as part of a remote computer games server and the apparatus 100 may be provided as part of a user's home computing device.

In embodiments of the disclosure, the input unit 105 of the apparatus 100 receives video images in accordance with the execution of the executable program by the games machine 90. Using one or more of the video images as an input, the detection unit 110 can detect a pose of an avatar included in a scene represented in a video image.

During gameplay the avatar can interact with various elements of the in-game environment, and the in-game interactions of the avatar may be controlled by the executable program on the basis of one or more avatar-status boxes defined by the executable program associated with the video game. The executable program may define a plurality of respective avatar-status boxes and associate one or more of the avatar-status boxes with the avatar in the video image. For example, the executable program may define one avatar-status box that is mapped to the avatar's torso and another avatar-status box that is mapped to the avatar's head, such that one avatar-status box has a position that is determined based on the position of the avatar's torso and the other avatar-status box has a position that is determined based on the position of the avatar's head. As such, a given avatar-status box associated with the avatar can move in correspondence with a movement of a given portion of the avatar's body. A position of an avatar-status box mapped to a portion of an avatar can thus vary in accordance with the position of the avatar in the in-game environment, and a position of the avatar-status box with respect to another interactive element in the in-game environment, such as another avatar-status box of another avatar, can be used by the executable program to determine whether the avatar is involved in an in-game interaction.
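The mapping just described, in which an avatar-status box follows the body portion it is mapped to, can be illustrated with a minimal Python sketch. It assumes, purely for illustration, that each box is an axis-aligned rectangle stored as a fixed offset from an anchor point on one body portion; the names `StatusBox` and `world_rect` are hypothetical and are not taken from any game's executable program.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class StatusBox:
    """Hypothetical avatar-status box anchored to one body portion."""
    portion: str   # body portion the box is mapped to, e.g. "head" or "torso"
    dx: float      # offset of the box's left edge from the portion's anchor point
    dy: float      # offset of the box's top edge from the portion's anchor point
    width: float
    height: float


def world_rect(box, portion_positions):
    """Return (x0, y0, x1, y1) for the box, given the current (x, y) of each portion."""
    px, py = portion_positions[box.portion]
    x0, y0 = px + box.dx, py + box.dy
    return (x0, y0, x0 + box.width, y0 + box.height)


head_box = StatusBox("head", dx=-10, dy=-10, width=20, height=20)
frame_a = {"head": (100, 50), "torso": (100, 90)}
frame_b = {"head": (130, 55), "torso": (128, 95)}  # the avatar has moved

print(world_rect(head_box, frame_a))  # (90, 40, 110, 60)
print(world_rect(head_box, frame_b))  # (120, 45, 140, 65)
```

Because only the anchor position changes between frames, the box moves in correspondence with the portion it is mapped to; an actual game might also vary box sizes or offsets per animation frame.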

For example, in a one-on-one combat game in which two avatars attack each other using portions of their bodies (such as a hand, arm, foot, leg and/or head), the executable program of the video game may define respective avatar-status boxes for respective portions of each avatar's body. In this case, the executable program may define one or more avatar-status boxes associated with the first avatar which move in correspondence with the movements of the first avatar. Similarly, the executable program may define one or more avatar-status boxes associated with the second avatar which move in correspondence with the movements of the second avatar. When the executable program determines that an avatar-status box associated with the first avatar overlaps or collides with an avatar-status box associated with the second avatar, this provides an indication of the occurrence of an in-game interaction (an in-game event) between the first avatar and the second avatar. Hence, for each avatar-status box the executable program defines a corresponding position and size, and when a portion of the in-game environment occupied by one avatar-status box coincides with a portion occupied by another avatar-status box, the result is an in-game interaction which depends upon the type (classification) of the two coincident avatar-status boxes.

In response to determining that two avatar-status boxes overlap with each other in the in-game environment, the execution state of the video game can be updated, and this update is reflected in the video images output as a result of the execution of the executable program. Specifically, the execution state of the video game can be updated in dependence upon the type (classification) of the overlapping avatar-status boxes and the time at which they overlap, and the in-game interaction is thus represented in the sequence of video images generated as a result of the execution of the computer program.

In a specific example, the avatar-status box associated with the first avatar may be classified as a “hit-box” and mapped, for example, to the first avatar's leg, foot, arm or hand. The avatar-status box associated with the second avatar may be classified as a “hurt-box” and mapped to the second avatar's head or torso, for example. A “hit-box” is a type of avatar-status box associated with an in-game interaction in which an avatar performs an attack operation to inflict damage upon another avatar or another in-game element, and a “hurt-box” is a type of avatar-status box associated with an in-game interaction in which an avatar is targeted by an attack operation. As such, a “hit-box” is typically mapped to a portion of the avatar capable of performing an attack operation (e.g. performing an in-game hit), such as a portion of a limb of the avatar, and a “hurt-box” is typically mapped to a portion of the avatar capable of being attacked (e.g. receiving a hit operation from an element in the video game), such as a portion of a torso of the avatar or a portion of a limb of the avatar. When an avatar-status box classified as a “hit-box” overlaps with an avatar-status box classified as a “hurt-box” in the in-game environment, the avatar associated with the “hit-box” can interact with the other avatar, or in-game element, associated with the “hurt-box” to inflict damage upon that avatar or in-game element.
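A hedged sketch of the hit-box/hurt-box interaction follows. It assumes axis-aligned rectangles in in-game coordinates and treats boxes that merely touch at an edge as not overlapping; both are illustrative choices, and `Box`, `overlaps` and `resolve` are hypothetical names rather than part of any particular game engine.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Box:
    """Axis-aligned avatar-status box in in-game coordinates (hypothetical)."""
    kind: str   # classification, e.g. "hit" or "hurt"
    x0: float
    y0: float
    x1: float
    y1: float


def overlaps(a, b):
    """True when the two rectangles share a region of positive area."""
    return a.x0 < b.x1 and b.x0 < a.x1 and a.y0 < b.y1 and b.y0 < a.y1


def resolve(attacker_boxes, defender_boxes):
    """Return the (hit, hurt) box pairs that would produce a damage interaction."""
    return [
        (a, d)
        for a in attacker_boxes if a.kind == "hit"
        for d in defender_boxes if d.kind == "hurt" and overlaps(a, d)
    ]


fist = Box("hit", 140, 60, 160, 75)
head = Box("hurt", 150, 40, 175, 70)
shin = Box("hurt", 150, 150, 165, 190)
print(len(resolve([fist], [head, shin])))  # 1: the fist reaches the head only
```

Only a hit box of the attacker paired with a hurt box of the defender counts, which reflects the description's point that the resulting interaction depends on the classifications of the coincident boxes, not just on their overlap.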

It will be appreciated that the movements of one or more portions of the first avatar can be controlled by a first user based on a user input provided via a user interface device such as the handheld videogame controller 43 connected to the games machine 90 by a wired or wireless communication link. Similarly, the movements of one or more portions of the second avatar may be controlled by a second user based on a user input provided via a user interface device such as the handheld videogame controller 43, or alternatively the movements of at least one of the first avatar and the second avatar may be automatically controlled by the executable program such that at least one of the avatars is a video game bot.

The executable program may define a range of different types of avatar-status boxes that may be mapped to a portion of the avatar, including:
i. a hit box indicative that a portion of an avatar pose can inflict an in-game hit-interaction on an element of the video game;
ii. a hurt box indicative that a portion of an avatar pose can receive an in-game hit-interaction from the element of the video game;
iii. an armour box indicative that a portion of an avatar pose cannot be hurt;
iv. a throw box indicative that at least part of an avatar pose can engage with a target to throw the target;
v. a collision box indicative that a portion of an avatar pose can collide with a target;
vi. a proximity box indicative that a portion of an avatar pose can be blocked by an opponent; and
vii. a projectile box indicative that a portion of an avatar pose comprises a projectile.

In embodiments of the disclosure, the assignment unit 115 is configured to assign one or more avatar-status boxes to one or more portions of the avatar included in the video image output by the games machine 90. The techniques of the present disclosure are capable of correlating avatar-status boxes having any of the above-mentioned classifications with avatar poses, and the assignment unit 115 can assign one or more avatar-status boxes to one or more portions of the avatar, in which each avatar-status box has a corresponding classification selected from the list consisting of: a hit box; a hurt box; an armour box; a throw box; a collision box; a proximity box; and a projectile box.

The assignment unit 115 may assign a plurality of avatar-status boxes to the avatar, such that a first avatar-status box is assigned to a first portion of the avatar and a second avatar-status box is assigned to a second portion of the avatar. In the case where the avatar is a humanoid avatar, the first or second portion may correspond to one of a hand, arm, foot, leg, head or torso of the avatar. In some cases, the assignment unit 115 may assign avatar-status boxes having different classifications, where a classification of an avatar-status box is indicative of a type of in-game interaction associated with the avatar-status box. The assignment unit 115 can be configured to assign a first avatar-status box having a first classification to the first portion of the avatar and a second avatar-status box having a second classification to the second portion of the avatar. In cases where a portion of the avatar is capable of participating in more than one type of in-game interaction, the assignment unit 115 may assign a first avatar-status box having a first classification and a second avatar-status box having a second classification to that same portion of the avatar, and may do so at the same time or at different times. An example of such a portion is the avatar's leg, which is capable both of performing an attack operation to inflict damage on an opponent and of being the victim of an attack by an opponent in which the avatar suffers damage. As such, the apparatus 100 can receive a video image of the video game, and one or more avatar-status boxes can be assigned to the avatar in the video image by the assignment unit 115. Each avatar in the video image may be assigned a plurality of avatar-status boxes, each avatar-status box having a corresponding classification selected from a plurality of classifications.
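The seven classifications and the possibility of giving one portion more than one classification can be modelled with a small data structure. This is a sketch only; `BoxClass` and `assign_boxes` are hypothetical names, and grouping assignments by portion name is an assumption made for illustration.

```python
from collections import defaultdict
from enum import Enum


class BoxClass(Enum):
    """The seven avatar-status box classifications listed in the description."""
    HIT = "hit box"
    HURT = "hurt box"
    ARMOUR = "armour box"
    THROW = "throw box"
    COLLISION = "collision box"
    PROXIMITY = "proximity box"
    PROJECTILE = "projectile box"


def assign_boxes(assignments):
    """Group (portion, classification) pairs by avatar portion.

    A portion may appear more than once, so that, for example, a leg can carry
    both a hit box and a hurt box.
    """
    by_portion = defaultdict(set)
    for portion, cls in assignments:
        if not isinstance(cls, BoxClass):
            raise ValueError(f"unknown classification: {cls!r}")
        by_portion[portion].add(cls)
    return dict(by_portion)


boxes = assign_boxes([
    ("torso", BoxClass.HURT),
    ("leg", BoxClass.HIT),
    ("leg", BoxClass.HURT),
])
print(sorted(c.name for c in boxes["leg"]))  # ['HIT', 'HURT']
```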

In embodiments of the disclosure, the detection unit 110 is configured to detect a pose of the avatar in the video image by analysing an input video image including the avatar using a feature point detection algorithm to detect features of the avatar. For example, the detection unit 110 may use a keypoint detection library, such as OpenPose, from which a pose of the avatar in the video image can be estimated, as discussed in detail below. In this way, the detection unit 110 can detect a pose of the avatar in a video image and can detect changes in the pose of the avatar by analysing each video image of a sequence of video images. Hence, more generally, the detection circuitry 110 is configured to detect the pose of the avatar in the video image and to generate information indicative of the pose of the avatar.

In embodiments of the disclosure, the control unit 120 is configured to determine, based on the pose of the avatar, a position of at least one avatar-status box with respect to the avatar using a correlation between avatar poses and avatar-status boxes, and to produce avatar-status data for the avatar indicative of the position of the at least one avatar-status box with respect to the avatar. Using the detected avatar pose and the one or more avatar-status boxes assigned to the avatar, the control unit 120 can be configured to determine a position of at least one of the avatar-status boxes with respect to the avatar using the correlation between avatar poses and avatar-status boxes, wherein the correlation is generated by a machine learning system having been trained using video images. The control unit 120 can access a model that has been trained using machine learning techniques to correlate avatar poses with avatar-status boxes. By providing information indicative of the pose of the avatar detected by the detection circuitry 110 as an input to this model, a position of at least one of the avatar-status boxes with respect to the avatar in the video image can be determined. For example, the correlation may indicate that, for a given avatar pose, a given avatar-status box is expected to have a given position with respect to the portion of the avatar to which it is assigned, according to the avatar poses and avatar-status boxes observed when training the model. Therefore, when the pose of the avatar detected by the detection unit 110 corresponds to the given avatar pose, the correlation defined by the model can be used to determine a position of at least one of the avatar-status boxes with respect to the avatar. In this way, the apparatus 100 can assign one or more avatar-status boxes to an avatar, and a position of at least one of the avatar-status boxes with respect to a pose of the avatar can be determined using the correlation. The avatar may be capable of adopting a plurality of respective avatar poses, and for a detected avatar pose the correlation can be used to determine a position of an avatar-status box with respect to the avatar pose by providing information indicative of the detected avatar pose as an input to the model.
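The description states that the correlation comes from a machine learning system trained on video images but does not specify the model. As a deliberately simple stand-in, the sketch below uses a 1-nearest-neighbour lookup: it returns the box layout recorded for the training pose closest to the detected pose. This is a swapped-in technique, not the disclosed model; the feature vectors, box layouts and the class name `NearestPoseCorrelator` are all hypothetical, and a practical system would more likely use a trained regressor or neural network.

```python
import math


class NearestPoseCorrelator:
    """Stand-in for a trained correlation unit: 1-nearest-neighbour lookup.

    Each training example pairs a pose feature vector (e.g. keypoint coordinates
    normalised to the avatar) with box positions relative to the avatar.
    """

    def __init__(self):
        self._examples = []  # list of (pose_vector, {box_name: (x0, y0, x1, y1)})

    def fit(self, poses, layouts):
        self._examples = list(zip(poses, layouts))
        return self

    def predict(self, pose):
        """Return the box layout of the training pose closest to `pose`."""
        if not self._examples:
            raise RuntimeError("correlator has not been trained")
        _, layout = min(self._examples, key=lambda ex: math.dist(ex[0], pose))
        return layout


training_poses = [
    (0.0, 0.0, 0.1, 0.5),  # hypothetical features: arm held close to the torso
    (0.0, 0.0, 0.9, 0.1),  # hypothetical features: arm extended
]
training_layouts = [
    {"torso": (-0.3, -0.5, 0.3, 0.5)},
    {"torso": (-0.3, -0.5, 0.3, 0.5), "fist": (0.8, 0.0, 1.0, 0.2)},
]
unit = NearestPoseCorrelator().fit(training_poses, training_layouts)
print(sorted(unit.predict((0.0, 0.0, 0.85, 0.15))))  # ['fist', 'torso']
```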

For example, at its simplest the model defining the correlation between avatar poses and avatar-status boxes may comprise information indicating a correspondence between a first avatar pose and a position of one or more avatar-status boxes associated with the first avatar pose, and a correspondence between a second avatar pose and a position of one or more avatar-status boxes associated with the second avatar pose. The first avatar pose may correspond to an upright defensive stance, and the second avatar pose may correspond to an upright attack stance in which at least one of the avatar's limbs protrudes in a direction away from the torso of the avatar, for example. For the first avatar pose, the model defines a plurality of avatar-status boxes, each having a size and a position with respect to the avatar. In some cases, the model may define a plurality of avatar-status boxes that all have the same classification (e.g. for the first avatar pose all of the avatar-status boxes may have a first classification corresponding to a “hurt-box” classification). Alternatively, some of the avatar-status boxes may have a first classification and some may have a second classification, wherein the first classification is different from the second classification and the classification is indicative of a type of in-game interaction associated with the avatar-status box. For the second avatar pose, the model may similarly define a plurality of avatar-status boxes, each having a defined position with respect to the avatar and a corresponding classification. As such, the model may comprise information defining avatar-status boxes each having a corresponding classification, and at its simplest each avatar-status box is classified as either a “hurt-box” or a “hit-box”. It will be appreciated that other classifications of avatar-status boxes can be defined by the model. In this way, for a given avatar pose, some of the avatar-status boxes may have a first classification corresponding to a “hurt-box” and some may have a second classification corresponding to a “hit-box”. When the pose of the avatar detected by the detection unit 110 corresponds to one of the avatar poses defined by the model, the correlation between avatar poses and avatar-status boxes defined by the model can be used to determine a position of at least one of the avatar-status boxes assigned to a portion of the avatar by the assignment unit 115. Hence, a position of an avatar-status box with respect to a portion of the avatar (such as a position of a “hit-box” or “hurt-box” with respect to a limb of the avatar) can be determined based on a detection of the pose of the avatar in the video image.
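At its simplest, the model is a table from a discrete avatar pose to a list of classified boxes. The sketch assumes boxes are stored relative to an avatar origin at the feet, with y increasing downwards as in image coordinates; the pose labels, box coordinates and the function name `boxes_for_pose` are invented for illustration.

```python
# Hypothetical table: pose label -> list of (classification, box relative to avatar origin).
POSE_BOX_TABLE = {
    "defensive_stance": [
        ("hurt", (-20, -90, 20, -50)),  # upper body
        ("hurt", (-20, -50, 20, 0)),    # legs
    ],
    "attack_stance": [
        ("hurt", (-20, -90, 20, -50)),
        ("hurt", (-20, -50, 20, 0)),
        ("hit", (20, -80, 55, -65)),    # extended arm
    ],
}


def boxes_for_pose(pose_label, avatar_origin):
    """Translate the table's relative boxes into image coordinates."""
    ox, oy = avatar_origin
    return [
        (cls, (x0 + ox, y0 + oy, x1 + ox, y1 + oy))
        for cls, (x0, y0, x1, y1) in POSE_BOX_TABLE[pose_label]
    ]


for cls, rect in boxes_for_pose("attack_stance", (200, 300)):
    print(cls, rect)
```

A learned model replaces this fixed table when poses vary continuously rather than falling into a few discrete labels.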

Consequently, using just a video image as an input to the apparatus 100, one or more avatar-status boxes can be assigned to the avatar in the video image, and a position of one or more of the avatar-status boxes with respect to the avatar can be determined using the model defining the above-mentioned correlation between avatar poses and avatar-status boxes. The position so determined at least approximates the position of the corresponding avatar-status box as defined by the executable program associated with the video game. This provides a technique whereby analysing the video images output as a result of the execution of the executable program allows the positions of avatar-status boxes associated with an avatar to be determined without requiring access to, or reverse engineering of, the source code.

In embodiments of the disclosure, the control unit 120 is configured to produce avatar-status data for the avatar indicative of the position of at least one avatar-status box with respect to the avatar. Upon determining a position of an avatar-status box with respect to the avatar for the detected pose of the avatar, the control unit 120 can produce avatar-status data for the avatar to record the determined position of the avatar-status box with respect to the avatar for the detected avatar pose. For example, the avatar-status data for the avatar may comprise data indicative of a first pose of the avatar and a position of each avatar-status box with respect to the avatar for the first pose of the avatar. Similarly, the avatar-status data for the avatar may comprise data indicative of a second pose of the avatar and a position of each avatar-status box with respect to the avatar when the avatar adopts the second pose. Positions of respective avatar-status boxes can be stored in association with a respective avatar pose, and avatar-status box position information can be stored for a plurality of respective avatar poses.
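One possible shape for the avatar-status data, assuming box positions are keyed by a pose label, is sketched below; `AvatarStatusData`, `record` and `boxes_for` are hypothetical names, and storing boxes as `(classification, rect)` tuples is an illustrative choice.

```python
from dataclasses import dataclass, field


@dataclass
class AvatarStatusData:
    """Hypothetical container: box positions recorded per detected avatar pose."""
    avatar_id: str
    boxes_by_pose: dict = field(default_factory=dict)

    def record(self, pose_label, boxes):
        """Store (or overwrite) the box positions determined for one pose."""
        self.boxes_by_pose[pose_label] = list(boxes)

    def boxes_for(self, pose_label):
        """Return the recorded boxes for a pose, or an empty list if none."""
        return self.boxes_by_pose.get(pose_label, [])


data = AvatarStatusData("player_1")
data.record("resting", [("hurt", (-20, -90, 20, 0))])
data.record("attack", [("hurt", (-20, -90, 20, 0)), ("hit", (20, -80, 55, -65))])
print(len(data.boxes_for("attack")))  # 2
```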

In embodiments of the disclosure, the image generator 125 is configured to generate one or more indicator images for the avatar in accordance with the avatar-status data, and to augment the video image of the video game comprising at least the avatar with one or more of the indicator images in response to the pose of the avatar. The avatar-status data comprises data indicative of the position of an avatar-status box with respect to the avatar for an avatar pose, which can be used by the image generator 125 to generate one or more indicator images for the avatar. As such, indicator images can be generated in dependence upon the avatar-status data produced by the control unit 120 and can be used to augment the video image of the video game comprising at least the avatar. The video image can be augmented using the indicator images in response to the detected pose of the avatar in the video image, so that movement of the avatar in the video image is accompanied by movement of the indicator images.
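Generating indicator images and augmenting the frame could be as simple as drawing a coloured outline for each box. The sketch assumes the frame is an H x W x 3 `uint8` NumPy array, that box coordinates are integer pixel positions taken from the avatar-status data for the detected pose, and an arbitrary colour per classification; none of these details come from the description, and `augment` is a hypothetical name.

```python
import numpy as np

# Hypothetical colour scheme (RGB) for indicator outlines.
COLOURS = {"hit": (255, 0, 0), "hurt": (0, 255, 0)}


def augment(frame, boxes, thickness=2):
    """Return a copy of an H x W x 3 uint8 frame with box outlines drawn on it.

    `boxes` is a list of (classification, (x0, y0, x1, y1)) in integer pixels.
    """
    out = frame.copy()
    h, w = out.shape[:2]
    t = thickness
    for cls, (x0, y0, x1, y1) in boxes:
        colour = COLOURS.get(cls, (255, 255, 0))  # yellow for other classes
        # Clip to the image so boxes partly off-screen do not raise errors.
        x0, x1 = max(0, x0), min(w, x1)
        y0, y1 = max(0, y0), min(h, y1)
        if x0 >= x1 or y0 >= y1:
            continue  # box lies entirely outside the frame
        out[y0:y0 + t, x0:x1] = colour  # top edge
        out[y1 - t:y1, x0:x1] = colour  # bottom edge
        out[y0:y1, x0:x0 + t] = colour  # left edge
        out[y0:y1, x1 - t:x1] = colour  # right edge
    return out


frame = np.zeros((120, 160, 3), dtype=np.uint8)
augmented = augment(frame, [("hit", (10, 10, 50, 40)), ("hurt", (60, 20, 100, 110))])
print(augmented[10, 20].tolist(), augmented[25, 30].tolist())  # [255, 0, 0] [0, 0, 0]
```

Calling this once per frame with the boxes for that frame's detected pose makes the outlines move with the avatar.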

Referring to FIG. 3, there is provided a schematic flowchart of a method of obtaining data from a video game, comprising:

inputting (at a step 310) a video image of the video game comprising at least one avatar;

detecting (at a step 320) a pose of the avatar in the video image;

assigning (at a step 330) one or more avatar-status boxes to one or more portions of the avatar;

determining (at a step 340), based on the pose of the avatar, a position of at least one avatar-status box with respect to the avatar using a correlation between avatar poses and avatar-status boxes;

producing (at a step 350) avatar-status data for the avatar indicative of the position of the at least one avatar-status box with respect to the avatar in the video image;

generating (at a step 360) one or more indicator images for the avatar in accordance with the avatar-status data; and

augmenting (at a step 370) the video image of the video game comprising at least the avatar with one or more of the indicator images in response to the pose of the avatar.
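Steps 310 to 370 can be sketched as a single function that wires together the units of the apparatus 100. Each unit is passed in as a callable, so the sketch says nothing about how any of them is implemented; the function name `obtain_data_from_frame`, its parameter names and the toy lambdas in the usage example are hypothetical.

```python
from typing import Any, Callable, Dict, List, Tuple

Rect = Tuple[int, int, int, int]


def obtain_data_from_frame(
    frame: Any,
    detect_pose: Callable[[Any], str],
    assign_boxes: Callable[[Any], List[str]],
    correlate: Callable[[str, List[str]], Dict[str, Rect]],
    draw: Callable[[Any, Dict[str, Rect]], Any],
) -> Tuple[Dict[str, Any], Any]:
    """Run steps 310 to 370 of FIG. 3 on a single video image.

    The four callables are hypothetical stand-ins for the detection unit 110,
    the assignment unit 115, the trained correlation model used by the control
    unit 120, and the image generator 125.
    """
    # Step 310: `frame` is the input video image.
    pose = detect_pose(frame)                         # step 320
    box_names = assign_boxes(frame)                   # step 330
    positions = correlate(pose, box_names)            # step 340
    status_data = {"pose": pose, "boxes": positions}  # step 350
    augmented = draw(frame, positions)                # steps 360 and 370
    return status_data, augmented


status, out = obtain_data_from_frame(
    "frame-0",
    detect_pose=lambda f: "attack",
    assign_boxes=lambda f: ["torso", "fist"],
    correlate=lambda pose, names: {n: (0, 0, 10, 10) for n in names},
    draw=lambda f, boxes: f"{f} + {len(boxes)} indicators",
)
print(status["pose"], "|", out)
```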

The method of obtaining data from a video game illustrated in FIG. 3 can be performed by an apparatus such as the apparatus 100 illustrated in FIG. 2 operating under the control of computer software.

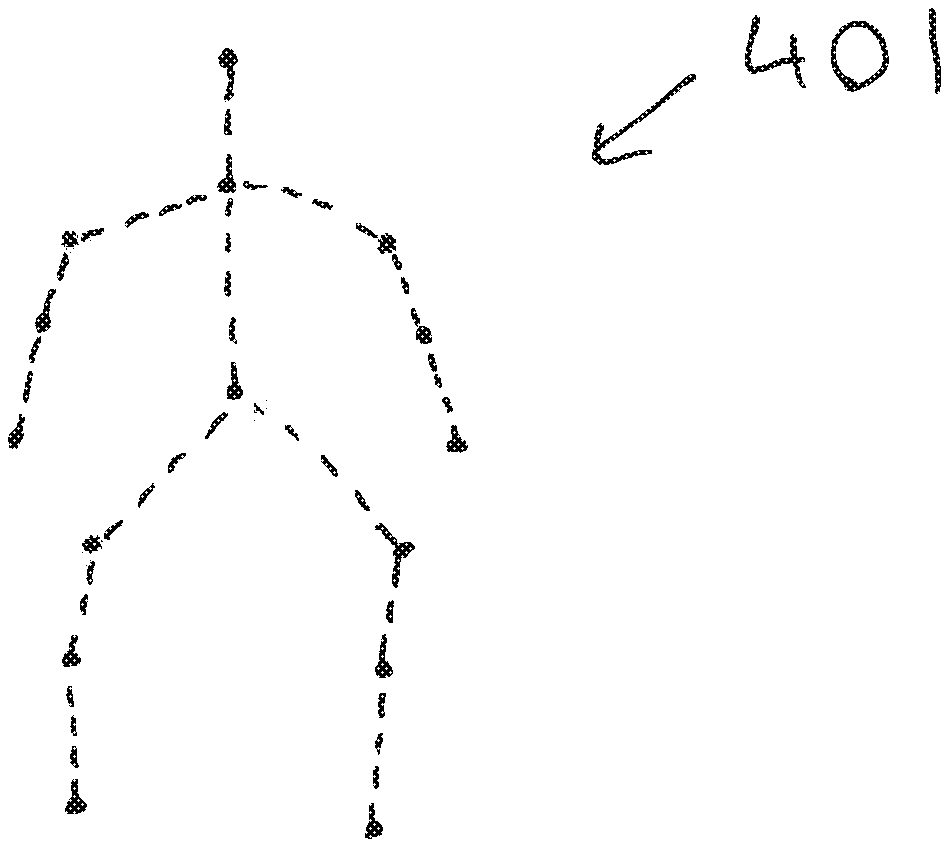

FIG. 4a schematically illustrates a plurality of keypoint features detected for an avatar in a video image. In embodiments of the disclosure, detecting the pose of the avatar in the video image comprises: analysing the video image of the video game using an algorithm trained on skeletal models; and selecting one avatar pose from a plurality of avatar poses based on one or more from the list consisting of: an angle of a limb of the avatar with respect to a torso of the avatar; a degree of extension of the limb of the avatar; and a distance between a position of a centre of gravity of the avatar and a position of a predetermined portion of the limb of the avatar. The video image can be analysed by the detection circuitry 110 using a model-based pose estimation algorithm so as to detect keypoint features 401a-o of the avatar 401, such as the torso, hand, face and foot of the avatar, from which the pose of the avatar 401 can be estimated. The detection circuitry 110 can analyse the video image using a keypoint detection library to detect keypoint features and, using an algorithm trained on skeletal models, can detect different poses adopted by the avatar. FIG. 4b schematically illustrates a pose of the avatar 401 that is estimated based on the keypoint features detected by the detection circuitry 110.
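The three properties listed above can be computed from 2-D keypoints such as those produced by a keypoint detection library. The sketch assumes keypoints are supplied as a dictionary of named `(x, y)` image coordinates (the names `neck`, `mid_hip`, `r_shoulder` and `r_wrist` are hypothetical, not a specific library's output format), measures extension as the distance from the wrist to the torso midpoint, and approximates the centre of gravity by the mean of the keypoints; all three are illustrative assumptions.

```python
import math


def angle_deg(v1, v2):
    """Unsigned angle in degrees between two 2-D vectors."""
    cos = (v1[0] * v2[0] + v1[1] * v2[1]) / (math.hypot(*v1) * math.hypot(*v2))
    return math.degrees(math.acos(max(-1.0, min(1.0, cos))))


def pose_features(kp):
    """Compute the three pose-selection properties from named (x, y) keypoints."""
    neck, hip = kp["neck"], kp["mid_hip"]
    shoulder, wrist = kp["r_shoulder"], kp["r_wrist"]
    torso_vec = (hip[0] - neck[0], hip[1] - neck[1])
    arm_vec = (wrist[0] - shoulder[0], wrist[1] - shoulder[1])
    torso_centre = ((neck[0] + hip[0]) / 2, (neck[1] + hip[1]) / 2)
    # Crude centre-of-gravity estimate: the mean of all detected keypoints.
    cog = (sum(p[0] for p in kp.values()) / len(kp),
           sum(p[1] for p in kp.values()) / len(kp))
    return {
        "limb_torso_angle": angle_deg(arm_vec, torso_vec),
        "limb_extension": math.dist(wrist, torso_centre),
        "cog_to_wrist": math.dist(cog, wrist),
    }


resting = {"neck": (0, 0), "mid_hip": (0, 100), "r_shoulder": (10, 5), "r_wrist": (10, 85)}
punching = dict(resting, r_wrist=(90, 5))
print(round(pose_features(resting)["limb_torso_angle"]))   # 0
print(round(pose_features(punching)["limb_torso_angle"]))  # 90
```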

Based on one or more properties detected by the detection unit110for the avatar pose included in the video image, the detection unit110can select one avatar pose from a plurality of predetermined avatar poses. An angle of a limb of the avatar400with respect to the torso of the avatar400may be used to select one avatar pose from a plurality of avatar poses. For example, the detection unit110may detect the pose of the avatar in the video image and select either a first avatar pose or a second avatar pose from the plurality of predetermined avatar poses based on whether the angle between the limb and the torso exceeds a predetermined threshold value. In this way, predetermine threshold value for an angle between a first portion of the avatar and a second portion of the avatar can be used to determine which avatar pose from the plurality of avatar poses corresponds to the avatar pose adopted by the avatar in the video image. In some examples, when an angle between an arm of the avatar and the torso of the avatar is less than or equal to the predetermined threshold angle (e.g. 45, 50, 55, 60, 65, 70, 75, 80, 85 or 90 degrees) this indicates that the avatar pose corresponds to a first avatar pose, and when the angle between the arm of the avatar and the torso of the avatar exceeds the predetermined threshold angle this indicates that the avatar pose corresponds to a second avatar pose. For the case where the angle between the arm of the avatar and the torso of the avatar is less than or equal to the predetermined threshold, the detection unit110may select the first avatar pose which corresponds to a upright resting pose which is adopted by the avatar by default in the absence of a user input. 
Similarly, for the case where the angle between the arm of the avatar and the torso of the avatar exceeds the predetermined threshold the detection circuitry may select the second avatar pose which corresponds to an upright attack pose which is adopted by the avatar in response to a user input instructing the avatar to perform an attack operation. Hence more generally, a plurality of predetermined threshold values may be used for comparison with the angle between the limb of the avatar and the torso of the avatar, such that one of the plurality of avatar poses can be selected according to whether or not the measured angle exceeds a threshold angle associated with a given avatar pose.
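The angle test above can be sketched in Python as follows. This is a minimal sketch, not the disclosed implementation. It assumes 2D image-space keypoints (x, y) for the shoulder, hand, neck and hip. It measures the arm/torso angle between the shoulder-to-hand and neck-to-hip vectors. It then uses `bisect` over an ascending list of threshold angles, so that one threshold or several can map the angle to one of several poses. The pose names and the 60-degree threshold are illustrative.

```python
import bisect
import math


def angle_between(v1, v2):
    """Return the angle in degrees between two 2D vectors."""
    norm = math.hypot(*v1) * math.hypot(*v2)
    if norm == 0:
        raise ValueError("zero-length vector")
    cos_theta = (v1[0] * v2[0] + v1[1] * v2[1]) / norm
    # Clamp to [-1, 1] so floating-point drift cannot push acos out of its domain.
    return math.degrees(math.acos(max(-1.0, min(1.0, cos_theta))))


def arm_torso_angle(shoulder, hand, neck, hip):
    """Angle between the shoulder->hand vector and the neck->hip (torso) vector."""
    arm = (hand[0] - shoulder[0], hand[1] - shoulder[1])
    torso = (hip[0] - neck[0], hip[1] - neck[1])
    return angle_between(arm, torso)


def select_pose(angle_deg, thresholds, poses):
    """Map an angle to a pose using ascending threshold angles.

    An angle <= thresholds[0] selects poses[0]; an angle above the last
    threshold selects poses[-1]. len(poses) must equal len(thresholds) + 1.
    """
    if len(poses) != len(thresholds) + 1:
        raise ValueError("need exactly one more pose than thresholds")
    return poses[bisect.bisect_left(thresholds, angle_deg)]
```

With a single threshold, for example `select_pose(angle, [60.0], ["upright_resting", "upright_attack"])`, this reduces to the two-pose case. Adding more thresholds gives the "plurality of predetermined threshold values" variant.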

Alternatively or in addition, a degree of extension of the limb of the avatar may be used to select one avatar pose from the plurality of avatar poses. A degree of extension of the limb with respect to the torso may be detected by detecting a position of a given portion of the limb (e.g. hand, elbow, foot or knee) with respect to a portion of the torso. When the distance between the given portion of the limb of the avatar and the portion of the torso of the avatar is less than or equal to a predetermined threshold distance, this may indicate that the avatar pose corresponds to a first avatar pose. Similarly, when the distance between the given portion and the portion of the torso of the avatar exceeds the predetermined threshold distance, this may indicate that the avatar pose corresponds to a second avatar pose. In this case, the first avatar pose may correspond to the upright resting pose adopted by the avatar by default, and the second avatar pose may correspond to the upright attack pose. A plurality of threshold distances can be used for comparison with the distance between the given portion of the limb of the avatar and the portion of the torso, as detected by the detection circuitry 110, and one of the plurality of avatar poses can be selected according to whether or not the distance exceeds a threshold distance associated with a given avatar pose. The degree of extension of the limb may also be assessed by measuring an angle associated with a joint of the avatar, such as the knee or elbow joint. In this way, a predetermined threshold angle may be associated with at least one joint of the avatar, and one avatar pose may be selected from the plurality of avatar poses in dependence upon whether the angle associated with the at least one joint exceeds the predetermined threshold angle associated with that joint.
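A sketch of the two extension measures follows. It makes the same kind of assumptions as above: hypothetical 2D keypoints and pixel thresholds. The first measure is the wrist-to-chest distance. The second is the interior elbow angle, where an angle near 180 degrees means a straight arm. Combining the two with a logical OR is this sketch's own choice; the text allows either measure alone or both together.

```python
import math


def joint_angle(a, b, c):
    """Interior angle in degrees at joint b for the chain a-b-c (e.g. shoulder-elbow-wrist)."""
    v1 = (a[0] - b[0], a[1] - b[1])
    v2 = (c[0] - b[0], c[1] - b[1])
    norm = math.hypot(*v1) * math.hypot(*v2)
    if norm == 0:
        raise ValueError("coincident keypoints")
    cos_t = (v1[0] * v2[0] + v1[1] * v2[1]) / norm
    return math.degrees(math.acos(max(-1.0, min(1.0, cos_t))))


def select_pose_by_extension(shoulder, elbow, wrist, chest,
                             reach_threshold=40.0, elbow_threshold=150.0):
    """Resting vs attack pose from limb extension.

    Extension is judged by wrist-to-chest distance and by elbow straightness;
    treating either as sufficient is an assumption of this sketch.
    """
    reach = math.dist(wrist, chest)
    elbow_deg = joint_angle(shoulder, elbow, wrist)
    if reach > reach_threshold or elbow_deg > elbow_threshold:
        return "upright_attack"
    return "upright_resting"
```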

Alternatively or in addition, a distance between a position of a centre of gravity of the avatar and a position of a predetermined portion of the limb of the avatar may be used to select one avatar pose from the plurality of avatar poses. In this case, when a distance between the centre of gravity of the avatar and the predetermined portion of the limb of the avatar is less than or equal to a predetermined threshold distance, this may indicate that the avatar pose corresponds to a first avatar pose. Similarly, when the distance between the centre of gravity of the avatar and the predetermined portion of the limb of the avatar exceeds the predetermined threshold distance, this may indicate that the avatar pose corresponds to a second avatar pose. As such, a predetermined threshold distance may be used for comparison with a distance between the position of the centre of gravity of the avatar and a portion of the avatar such as the hand, elbow, foot or knee. Therefore, one avatar pose can be selected from the plurality of avatar poses in dependence upon whether or not the predetermined portion of the avatar is located further from the centre of gravity of the avatar than the predetermined threshold distance. For example, the first avatar pose may correspond to the upright resting pose adopted by the avatar by default, and the second avatar pose may correspond to the upright attack pose in which a limb of the avatar is extended away from the torso of the avatar in order to attack another entity in the in-game environment.
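The centre-of-gravity variant might look like the sketch below. The text does not say how the centre of gravity is obtained. Here it is assumed to be the unweighted centroid of the detected keypoints, which is only a crude proxy for a mass-weighted estimate. The keypoint names and the 50-pixel threshold are hypothetical.

```python
import math
from statistics import fmean


def centre_of_gravity(keypoints):
    """Approximate the centre of gravity as the unweighted centroid of all keypoints."""
    pts = list(keypoints.values())
    return (fmean(p[0] for p in pts), fmean(p[1] for p in pts))


def select_pose_by_cog(keypoints, limb_part="right_hand", threshold=50.0):
    """Attack pose if the chosen limb part lies further than `threshold` from the centre of gravity."""
    d = math.dist(centre_of_gravity(keypoints), keypoints[limb_part])
    return "upright_attack" if d > threshold else "upright_resting"
```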

Hence more generally, the detection circuitry 110 can be configured to detect an extent to which a given limb of the avatar is outstretched, and an avatar pose can be selected from the plurality of predetermined avatar poses based on the magnitude of a parameter detected for the given limb. The predetermined threshold angles and/or predetermined threshold distances discussed above can be used by the detection unit 110 to select one avatar pose from the plurality of avatar poses, wherein the plurality of avatar poses comprise: a first avatar pose corresponding to an upright posture of the avatar in which the arms of the avatar are proximate to the torso and the avatar's legs are approximately parallel to the length of the avatar's torso; and a second avatar pose corresponding to an upright posture of the avatar in which at least one arm or leg of the avatar protrudes in a direction away from the torso of the avatar. In some examples, the second avatar pose may correspond to a posture of the avatar in which the left arm of the avatar protrudes in a direction away from the torso of the avatar, a third avatar pose may correspond to a posture in which the right arm of the avatar protrudes in a direction away from the torso, a fourth avatar pose may correspond to a posture in which the left leg of the avatar protrudes in a direction away from the torso, and a fifth avatar pose may correspond to a posture in which the right leg of the avatar protrudes in a direction away from the torso. It will be appreciated that these respective avatar poses may be detected by the detection circuitry 110 by detecting one or more angles or distances associated with one of the left arm, right arm, left leg or right leg. Similarly, the plurality of avatar poses may comprise one or more avatar poses in which more than one limb protrudes away from the torso of the avatar.
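The sketch below detects which limbs protrude, and hence which of the first to fifth poses (or a multi-limb pose) applies. It uses hypothetical keypoint names. A limb is treated as protruding when its root-to-tip vector deviates from the neck-to-pelvis torso axis by more than a threshold angle. The result is mapped onto the pose numbering used above.

```python
import math

# Hypothetical keypoint names: (root, tip) for each limb.
LIMBS = {
    "left_arm": ("left_shoulder", "left_wrist"),
    "right_arm": ("right_shoulder", "right_wrist"),
    "left_leg": ("left_hip", "left_ankle"),
    "right_leg": ("right_hip", "right_ankle"),
}
# Single-limb poses numbered as in the description above.
SINGLE_LIMB_POSE = {
    "left_arm": "second_pose",
    "right_arm": "third_pose",
    "left_leg": "fourth_pose",
    "right_leg": "fifth_pose",
}


def _angle(v1, v2):
    """Angle in degrees between two 2D vectors."""
    norm = math.hypot(*v1) * math.hypot(*v2)
    if norm == 0:
        raise ValueError("zero-length vector")
    cos_t = (v1[0] * v2[0] + v1[1] * v2[1]) / norm
    return math.degrees(math.acos(max(-1.0, min(1.0, cos_t))))


def protruding_limbs(kp, threshold_deg=45.0):
    """Limbs whose root->tip vector deviates from the neck->pelvis axis by more than the threshold."""
    torso = (kp["pelvis"][0] - kp["neck"][0], kp["pelvis"][1] - kp["neck"][1])
    out = set()
    for limb, (root, tip) in LIMBS.items():
        v = (kp[tip][0] - kp[root][0], kp[tip][1] - kp[root][1])
        if _angle(v, torso) > threshold_deg:
            out.add(limb)
    return out


def classify_pose(kp, threshold_deg=45.0):
    """First pose if nothing protrudes, a numbered pose for one limb, else a multi-limb pose."""
    limbs = protruding_limbs(kp, threshold_deg)
    if not limbs:
        return "first_pose"
    if len(limbs) == 1:
        return SINGLE_LIMB_POSE[limbs.pop()]
    return "multi_limb_pose"
```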

It will be appreciated that the magnitude of the predetermined threshold angles and/or predetermined threshold distances used for determining an avatar pose may vary depending on the properties of the avatar. For example, the magnitude of the predetermined threshold angles and/or predetermined threshold distances may vary in dependence upon the height of the avatar, and/or a length of the avatar's limb, and/or a ratio of torso length to limb length. In this way, a first set of threshold values may be used for the threshold angles and/or distances for an avatar having a first height and a second set of threshold values may be used for the threshold angles and/or distances for an avatar having a second height.
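One simple way to make the thresholds depend on avatar size is to scale the distance thresholds linearly with the avatar's on-screen height, as sketched below. The reference height and threshold values are hypothetical. Angle thresholds are left unchanged here, because angles are invariant under uniform scaling. Adjusting them for a different torso-to-limb ratio, which the text also allows, is not shown.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PoseThresholds:
    reach_px: float        # limb-tip to torso distance threshold
    cog_px: float          # limb-tip to centre-of-gravity distance threshold
    arm_angle_deg: float   # arm/torso angle threshold


# Hypothetical calibration: thresholds tuned for an avatar 200 px tall on screen.
REFERENCE_HEIGHT_PX = 200.0
REFERENCE = PoseThresholds(reach_px=40.0, cog_px=50.0, arm_angle_deg=60.0)


def thresholds_for(avatar_height_px):
    """Scale distance thresholds linearly with on-screen height; angles are scale-invariant."""
    if avatar_height_px <= 0:
        raise ValueError("height must be positive")
    s = avatar_height_px / REFERENCE_HEIGHT_PX
    return PoseThresholds(REFERENCE.reach_px * s, REFERENCE.cog_px * s, REFERENCE.arm_angle_deg)
```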

In some examples, the detection unit 110 can be configured to categorise the one or more avatar poses of the plurality of avatar poses according to three possible categories: a first categorisation referred to as “startup pose”; a second categorisation referred to as “active pose”; and a third categorisation referred to as “recovery pose”. The first categorisation corresponds to avatar poses in which the avatar adopts a default pose or a defensive pose (e.g. guard stance/boxing pose), for example in which the arms are proximate to the avatar's torso or head. The second categorisation corresponds to avatar poses in which at least one of the limbs protrudes away from the torso and the degree of extension of the at least one limb is greater than a predetermined percentage (e.g. 70% or 80%) of the maximum extension of the at least one limb. Alternatively or in addition to using the predetermined percentage of the maximum extension of the at least one limb, an angle of the at least one limb with respect to the torso or with respect to the horizontal may be used to determine whether an avatar pose is categorised as an “active pose”, based on whether the angle exceeds a predetermined threshold value. As such, a detected avatar pose can be categorised as an “active pose” based on whether an angle associated with the avatar pose exceeds a predetermined angle with respect to at least one of the horizontal (e.g. the surface on which the avatar is standing) or the torso of the avatar. The third categorisation corresponds to avatar poses in which the avatar recovers from a second-categorisation pose in order to return to a first-categorisation pose. In other words, the third categorisation corresponds to avatar poses following a second-categorisation avatar pose in which the degree of extension of the at least one limb is now less than the predetermined percentage (e.g. 70% or 80%) of the maximum extension of the at least one limb.
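The sketch below assigns the three categories frame by frame from the limb's extension ratio. Frames above `active_ratio` are labelled "active". Frames after an active frame are labelled "recovery" until the extension falls to `rest_ratio`. That value is a hypothetical level at which the avatar is treated as back in its startup or default pose. Both ratios are illustrative.

```python
def categorise_sequence(extensions, active_ratio=0.8, rest_ratio=0.3):
    """Label each frame 'startup', 'active' or 'recovery'.

    extensions: per-frame extension of the attacking limb as a fraction of its
    maximum extension (0.0-1.0). rest_ratio is a hypothetical level at or below
    which a recovering avatar is treated as back in its startup/default pose.
    """
    labels = []
    recovering = False
    for e in extensions:
        if e > active_ratio:
            labels.append("active")
            recovering = True  # any later drop below active_ratio counts as recovery
        elif recovering and e > rest_ratio:
            labels.append("recovery")
        else:
            recovering = False
            labels.append("startup")
    return labels
```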

It will be appreciated that the above categorisations may also be used for avatar poses that are not part of a predetermined set of poses (e.g. as predefined animation frames) but are generated or modified heuristically, e.g. according to a physics model.

For a typical in-game interaction in which the avatar attacks another avatar, the attack operation may be composed of at least a first avatar pose having the first categorisation (“startup pose”), followed by a second avatar pose having the second categorisation (“active pose”), then followed by a third avatar pose having the third categorisation (“recovery pose”). In this way an attack operation can be formed of a sequence of at least three avatar poses. For an avatar pose corresponding to the first categorisation, at least one of the avatar-status boxes assigned to the one or more portions of the avatar pose has a hurt-box classification indicating that a portion of the avatar pose can be hit as part of an in-game interaction. For an avatar pose corresponding to the second categorisation, at least one of the avatar-status boxes assigned to the one or more portions of the avatar pose has a hit-box classification indicating that a portion of the avatar pose can inflict a hit as part of an in-game interaction. For an avatar pose corresponding to the third categorisation, at least one of the avatar-status boxes assigned to the one or more portions of the avatar pose has a hurt-box classification indicating that a portion of the avatar pose can be hit as part of an in-game interaction. 
In a specific example, the avatar-status boxes corresponding to the avatar pose having the first categorisation each have a hurt-box classification, indicating that the respective portions of the avatar are capable of being damaged by an in-game hit interaction. For the avatar pose having the second categorisation, by contrast, at least one avatar-status box associated with the avatar's protruding limb has a hit-box classification. This means that the avatar pose having the second categorisation is capable of inflicting an in-game hit interaction via the hit-box when the region of space in the video game corresponding to the hit-box overlaps with a region of space in the video game corresponding to a hurt-box of another avatar or element in the video game. It will be appreciated that the predetermined percentage of the maximum extension of the at least one limb, which is used to determine whether a given avatar pose corresponds to an “active pose”, may vary depending on properties of the avatar such as limb length or height, but typically a value in the range of 60%-90% of the maximum extension of the limb may be used.
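The hit-box/hurt-box relationship above reduces to a rectangle-overlap test. The sketch below assumes axis-aligned rectangular boxes in a shared 2D space. It enables box classifications per pose category as described: hurt boxes in every category, hit boxes only in the "active" category. The names are illustrative.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class StatusBox:
    x: float
    y: float
    w: float
    h: float
    kind: str  # "hit" or "hurt"


# Box classifications enabled in each pose category, per the description above.
ACTIVE_KINDS = {
    "startup": {"hurt"},
    "active": {"hurt", "hit"},
    "recovery": {"hurt"},
}


def overlaps(a, b):
    """Axis-aligned rectangle intersection; boxes that only touch at an edge do not overlap."""
    return (a.x < b.x + b.w and b.x < a.x + a.w and
            a.y < b.y + b.h and b.y < a.y + a.h)


def hits_landed(attacker_category, attacker_boxes, defender_category, defender_boxes):
    """Pairs (hit box, hurt box) where an enabled attacker hit box overlaps an enabled defender hurt box."""
    hits = [b for b in attacker_boxes
            if b.kind == "hit" and "hit" in ACTIVE_KINDS[attacker_category]]
    hurts = [b for b in defender_boxes
             if b.kind == "hurt" and "hurt" in ACTIVE_KINDS[defender_category]]
    return [(h, d) for h in hits for d in hurts if overlaps(h, d)]
```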

In embodiments of the disclosure, augmenting the video image of the video game comprises: augmenting the video image with a first set of indicator images in response to a first avatar pose and augmenting the video image with a second set of indicator images in response to a second avatar pose, in which each indicator image corresponds to one avatar-status box. The image generator 125 can be configured to generate one or more indicator images for the first avatar in accordance with the avatar-status data, and one or more of the indicator images can be used to augment the video image of the video game. By augmenting the video image in accordance with the avatar-status data, the video image can be augmented with visual representations of the avatar-status boxes whose positions with respect to the avatar have been determined on the basis of the correlation between avatar poses and avatar-status boxes. An augmented image can thus be provided to visually inform a player, or another person viewing the user's game play, of the positions of the avatar-status boxes with respect to the avatar. For example, such augmented images may be provided for display to an audience viewing a game being played as part of an electronic sports (eSports) tournament. As discussed previously, an avatar in a video image can adopt a number of different poses in response to a user input that controls the configuration of the avatar. When the avatar pose has a “startup pose” categorisation, which corresponds to an upright defensive stance, the one or more avatar-status boxes classified as “hurt-boxes” can be used to augment the video image so as to illustrate the portions of the avatar which are capable of being hit as part of an in-game interaction. 
Similarly, other types of avatar-status boxes associated with in-game interactions in which the avatar is the victim of an attack, such as an “armour box” or a “collision box”, can be used to augment the video image as appropriate.

When the avatar pose has an “active pose” categorisation, which corresponds to an avatar stance adopted as part of an attack action by the avatar, the one or more avatar-status boxes classified as “hurt-boxes” can be used to augment the video image, and in addition one or more avatar-status boxes associated with the protruding limb and classified as “hit-boxes” can be used to augment the video image. As such, a portion of the video image corresponding to the outstretched limb of the avatar can be augmented with an indicator image corresponding to an avatar-status box having a “hit-box” classification. The portions of the avatar which are capable of being hit as part of an in-game interaction and the portions of the avatar which are capable of inflicting an in-game hit-interaction can thus both be visually represented in the augmented video image. Similarly, other types of avatar-status boxes associated with in-game interactions in which the avatar inflicts an in-game hit-interaction upon an element in the video game can be used to augment the video image as appropriate. Examples include a projectile box indicating that at least part of the avatar pose comprises a projectile, and a throw box indicating that at least part of the avatar pose can engage with an element to perform a throw operation.

When the avatar pose has a “recovery pose” categorisation, which corresponds to an avatar stance adopted after an attack operation has been performed by the avatar, the one or more avatar-status boxes classified as “hurt-boxes” can be used to augment the video image, and the one or more avatar-status boxes associated with the protruding limb and classified as “hit-boxes” are no longer used to augment the video image. In this way, the portions of the avatar which are capable of being hit as part of an in-game interaction can be visually represented in the augmented video image.

The image generator 125 can be configured to augment the video image of the video game with one or more indicator images in response to the pose of the avatar. A first set of indicator images can be used to augment the video image in response to a detection that the avatar pose has the first categorisation, and a second set of indicator images can be used to augment the video image in response to a detection that the avatar pose has the second categorisation. This allows respective sets of indicator images to be associated with respective avatar poses based on a categorisation of an avatar pose. In response to a detection that the avatar has an avatar pose having the first categorisation, the image generator 125 can select the one or more indicator images corresponding to the first set of indicator images, and the video image can be augmented in real-time to illustrate the location and the type of each avatar-status box for the avatar. In some examples, an indicator image corresponding to an avatar-status box having a first classification can be generated having a first colour, and an indicator image corresponding to an avatar-status box having a second classification can be generated having a second colour, so that when the video image is augmented with the respective indicator images the different types of avatar-status boxes can easily be distinguished. For example, the hurt-boxes may be rendered in red whereas the hit-boxes may be rendered in green. Other colours may be used to visually represent the different types of avatar-status boxes in the augmented video image.
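The sketch below selects indicator sets by pose category and colour-codes them. To keep it self-contained, the frame is a plain list of rows of RGB tuples and each indicator is a 1-pixel rectangle outline. A real implementation would more likely draw with a graphics library. The box records (`rect` in integer pixels, `kind`) and the category names are hypothetical.

```python
# RGB colours per classification: red hurt-boxes, green hit-boxes, as in the example above.
COLOURS = {"hurt": (255, 0, 0), "hit": (0, 255, 0)}
# Indicator set shown for each pose category.
INDICATOR_SETS = {
    "startup": {"hurt"},
    "active": {"hurt", "hit"},
    "recovery": {"hurt"},
}


def select_indicators(category, boxes):
    """Return (rect, colour) overlays for boxes whose classification is in the category's set."""
    allowed = INDICATOR_SETS[category]
    return [(b["rect"], COLOURS[b["kind"]]) for b in boxes if b["kind"] in allowed]


def draw_outline(frame, rect, colour):
    """Draw a 1-pixel rectangle outline in place; frame is a list of rows of RGB tuples."""
    x, y, w, h = rect
    rows, cols = len(frame), len(frame[0])
    edge = {(cx, cy) for cx in range(x, x + w) for cy in (y, y + h - 1)}
    edge |= {(cx, cy) for cy in range(y, y + h) for cx in (x, x + w - 1)}
    for cx, cy in edge:
        if 0 <= cx < cols and 0 <= cy < rows:  # clip to the frame
            frame[cy][cx] = colour
    return frame


def augment(frame, category, boxes):
    """Overlay every indicator enabled for the avatar's current pose category."""
    for rect, colour in select_indicators(category, boxes):
        draw_outline(frame, rect, colour)
    return frame
```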

In embodiments of the disclosure, each avatar-status box is assigned to a portion of the avatar and each avatar-status box has a corresponding classification indicative of a type of in-game interaction associated with the avatar-status box. A plurality of avatar-status boxes may be assigned to the avatar, where one or more avatar-status boxes may be assigned to a given portion of the avatar. For example, an avatar-status box may be assigned to each portion of the avatar that is capable of inflicting an in-game hit interaction on an opponent or capable of receiving an in-game hit interaction from an opponent. As such, for portions of the avatar's body that have dual capability, a first avatar-status box having a first classification and a second avatar-status box having a second classification may be assigned to the portion of the avatar's body, the first classification being different to the second classification. In this way, the portion of the avatar's body having dual capability can participate in two different types of in-game interaction via the two avatar-status boxes. Similarly, any number of avatar-status boxes may be assigned to a given portion of the avatar in dependence upon the number of types of interaction that portion is capable of participating in.

In embodiments of the disclosure, the classification of an avatar-status box is selected from the list consisting of:
i. a hit box indicative that a portion of an avatar pose can inflict an in-game hit-interaction on an element of the video game;
ii. a hurt box indicative that a portion of an avatar pose can receive an in-game hit-interaction from the element of the video game;
iii. an armour box indicative that a portion of an avatar pose cannot be hurt;
iv. a throw box indicative that at least part of an avatar pose can engage with a target to throw the target;
v. a collision box indicative that a portion of an avatar pose can collide with a target;
vi. a proximity box indicative that a portion of an avatar pose can be blocked by an opponent; and
vii. a projectile box indicative that a portion of an avatar pose comprises a projectile.

In embodiments of the disclosure, assigning one or more avatar-status boxes to the first avatar comprises assigning at least a first avatar-status box and a second avatar-status box to a limb of the first avatar, in which the first avatar-status box has a first classification and the second avatar-status box has a second classification, the first classification being different to the second classification, in which the classification of the first avatar-status box indicates that the first avatar-status box is a hit box and the classification of the second avatar-status box indicates that the second avatar-status box is a hurt box.
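The sketch below shows one possible data representation for the classifications and for multiple boxes per body portion. The enum mirrors the seven classifications listed above. The portion names and the relative-rectangle field are assumptions of this sketch.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class BoxClass(Enum):
    HIT = "hit"                # can inflict an in-game hit-interaction
    HURT = "hurt"              # can receive an in-game hit-interaction
    ARMOUR = "armour"          # cannot be hurt
    THROW = "throw"            # can engage a target to throw it
    COLLISION = "collision"    # can collide with a target
    PROXIMITY = "proximity"    # can be blocked by an opponent
    PROJECTILE = "projectile"  # comprises a projectile


@dataclass
class AvatarStatusBox:
    portion: str                       # e.g. "right_arm" (hypothetical naming)
    classification: BoxClass
    rel_rect: Optional[tuple] = None   # (dx, dy, w, h) relative to the portion, filled in later


def assign_boxes(capabilities):
    """One box per (portion, classification) pair, so dual-capability portions get several boxes."""
    return [AvatarStatusBox(portion, cls)
            for portion, classes in capabilities.items()
            for cls in dict.fromkeys(classes)]  # drop duplicate classes, keep order
```

Under this representation, a limb with both a hit box and a hurt box is simply `{"right_arm": [BoxClass.HIT, BoxClass.HURT]}`, which yields two independent boxes whose positions can be determined separately.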

In embodiments of the disclosure, determining the position of the at least one avatar-status box with respect to the first avatar comprises: providing, to a correlation unit trained to determine correlations between avatar poses and avatar-status boxes, an input comprising at least data indicative of the pose of the avatar; and obtaining from the correlation unit data indicative of a position of the at least one avatar-status box with respect to the avatar, responsive to that input. The correlation unit may be provided separately from the apparatus 100, such that the apparatus 100 communicates with the correlation unit via a wired or wireless communication link (such as a Wi-Fi® or Bluetooth® link). Alternatively, the correlation unit may be provided as part of the apparatus 100, as illustrated in FIG. 5. Referring to FIG. 5, the detection circuitry 110 can detect a pose of the avatar according to the principles outlined above, and the control unit 120 can be configured to provide data indicative of the pose of the avatar to the correlation unit 130. The correlation unit 130 is trained to correlate avatar poses with avatar-status boxes. By providing an input to the correlation unit 130 comprising data indicative of the pose of the avatar, the correlation unit 130 can generate data indicative of a position of at least one avatar-status box with respect to the avatar. For example, the correlation unit 130 may store a model trained to correlate avatar poses with avatar-status boxes, such that the correlation unit 130 can output avatar-status box position information in response to receiving information indicative of an avatar pose. The avatar-status box position information is determined based on relationships between avatar poses and avatar-status boxes observed in one or more video games when training the model.
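The text leaves the correlation unit's model open. As a stand-in, the sketch below uses k-nearest-neighbour regression, which is a swapped-in technique and not the disclosed one. It stores (pose vector, box vector) examples and predicts a box position by averaging the boxes of the closest stored poses. The vector layouts are assumptions.

```python
import math


class KNNCorrelationUnit:
    """Hypothetical stand-in for a trained correlation unit, using k-nearest-neighbour regression.

    pose vectors: flattened keypoint coordinates relative to the avatar's root joint.
    box vectors: (dx, dy, w, h) of an avatar-status box relative to the same root.
    """

    def __init__(self, k=1):
        if k < 1:
            raise ValueError("k must be >= 1")
        self.k = k
        self._samples = []

    def add_example(self, pose_vec, box_vec):
        self._samples.append((tuple(pose_vec), tuple(box_vec)))

    def predict(self, pose_vec):
        if not self._samples:
            raise ValueError("correlation unit has no training examples")
        nearest = sorted(self._samples, key=lambda s: math.dist(s[0], pose_vec))[: self.k]
        dims = len(nearest[0][1])
        # Average the box vectors of the k closest stored poses.
        return tuple(sum(box[i] for _, box in nearest) / len(nearest) for i in range(dims))
```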

In embodiments of the disclosure, determining the position of the at least one avatar-status box with respect to the first avatar comprises: providing, to the correlation unit 130 trained to determine correlations between avatar poses and avatar-status boxes, supplementary data relating to an in-game interaction of the avatar with an element of the video game, the supplementary data indicative of one or more selected from the list consisting of:
i. the pose of the first avatar corresponding to the in-game interaction;
ii. a pose of a second avatar corresponding to the in-game interaction;
iii. a score indicator included in a region of the video image; and
iv. a level of brightness associated with a region of the video image.

During gameplay, avatars can interact with various elements of the in-game environment on the basis of avatar-status boxes defined by the executable program. Specifically, an avatar can interact with an element of the video game in dependence upon whether an avatar-status box associated with the avatar intersects an avatar-status box of another avatar or a status box of another interactive element in the video game. The number of ways in which each avatar may interact with the in-game environment is determined by the number of avatar-status boxes associated with the avatar and the classification of those avatar-status boxes. Whilst the types of avatar interaction may vary widely, in-game interactions are typically characterised by an update to the video image or to an audio signal associated with the video image. An in-game interaction may thus be characterised by an on-screen update such as a flash of light, a change in a score indicator (e.g. status bar) or a recoil of an avatar's body. Similarly, audio signals output as a result of the execution of the video game may also provide an indication of an in-game interaction. For example, sounds such as a crash or a bang may accompany an in-game interaction and can thus be used to determine its occurrence. The supplementary data relating to the in-game interaction can therefore provide an indication of a property of the in-game interaction, and can be provided to the correlation unit 130. The correlation unit 130 can then determine a position of at least one avatar-status box with respect to the avatar based on the detected pose of the avatar and the supplementary data.
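The sketch below detects an interaction from the on-screen cues mentioned above. It assumes greyscale frames stored as lists of rows. It also assumes a known screen region where hit flashes appear, and a horizontal health or score bar whose lit pixels shrink on damage. All regions and deltas are hypothetical, and the audio cue is not shown.

```python
def mean_brightness(frame, region):
    """Mean pixel value of a greyscale frame (list of rows) inside region (x, y, w, h)."""
    x, y, w, h = region
    pixels = [frame[r][c] for r in range(y, y + h) for c in range(x, x + w)]
    return sum(pixels) / len(pixels)


def bar_fill(frame, region, lit=128):
    """Fraction of lit pixels along the middle row of a score/health-bar region."""
    x, y, w, h = region
    row = frame[y + h // 2][x:x + w]
    return sum(1 for p in row if p >= lit) / w


def detect_interaction(prev, curr, flash_region, bar_region,
                       flash_delta=60.0, bar_delta=0.02):
    """Compare consecutive frames for a brightness flash or a drop in a score bar."""
    flash = mean_brightness(curr, flash_region) - mean_brightness(prev, flash_region) > flash_delta
    damage = bar_fill(prev, bar_region) - bar_fill(curr, bar_region) > bar_delta
    return {"flash": flash, "damage": damage, "interaction": flash or damage}
```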

Referring to FIG. 6, there is provided a schematic flowchart in respect of a method of obtaining data from a video game, comprising:

inputting (at a step 610) a video image of the video game comprising at least one avatar;

detecting (at a step 620) an in-game interaction of the avatar with an element of the video game and detecting a pose of the avatar corresponding to the in-game interaction;

assigning (at a step 630) one or more avatar-status boxes to one or more portions of the avatar;

selecting (at a step 640) at least one of the avatar-status boxes as being associated with the in-game interaction using the correlation between avatar poses and avatar-status boxes;

determining (at a step 650), based on the pose of the avatar, a position of the at least one avatar-status box with respect to the avatar using a correlation between avatar poses and avatar-status boxes;

producing (at a step 660) avatar-status data for the avatar indicative of the position of the at least one avatar-status box with respect to the avatar in the video image;

generating (at a step 670) one or more indicator images for the avatar in accordance with the avatar-status data; and

augmenting (at a step 680) the video image of the video game comprising at least the avatar with one or more of the indicator images in response to the pose of the avatar.
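Producing avatar-status data for the video image requires the box positions to be expressed in image coordinates. The minimal sketch below assumes boxes are stored relative to a reference keypoint of a right-facing avatar. It also assumes that a scale factor and the avatar's facing direction are known. The mirroring for a left-facing avatar is this sketch's own assumption, common in fighting games but not stated in the text.

```python
def to_image_coords(anchor, scale, rel_box, facing_left=False):
    """Convert a box relative to the avatar into image pixel coordinates.

    anchor: (x, y) pixel position of the avatar's reference point (e.g. pelvis keypoint).
    scale: pixels per avatar-relative unit (e.g. on-screen height / reference height).
    rel_box: (dx, dy, w, h) relative to the anchor, for a right-facing avatar.
    """
    ax, ay = anchor
    dx, dy, w, h = rel_box
    if facing_left:
        dx = -dx - w  # mirror the box horizontally about the anchor
    return (ax + dx * scale, ay + dy * scale, w * scale, h * scale)


def avatar_status_data(anchor, scale, boxes, facing_left=False):
    """Classification plus image-space rectangle for each (kind, rel_box) pair."""
    return [{"kind": kind, "rect": to_image_coords(anchor, scale, rel, facing_left)}
            for kind, rel in boxes]
```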

In embodiments of the disclosure, the detection unit 110 can be configured to detect an in-game interaction based on a score indicator included in a region of the video image, and/or a level of brightness associated with a region of the video image, and/or a property of an audio signal associated with the video image. As well as detecting the in-game interaction, the detection unit 110 can also detect the avatar pose corresponding to the in-game interaction. Information indicative of a property of the in-game interaction and of the avatar pose corresponding to the in-game interaction can be provided to the correlation unit 130. Using this information, the correlation unit 130 can select one of the avatar-status boxes assigned to the avatar and determine a position for that avatar-status box.

For example, at the time of the in-game interaction the avatar pose may indicate that the avatar's right (or left) arm is protruding away from the avatar's body. This provides an indication that the in-game interaction involves an avatar-status box assigned to the avatar's right arm. The information relating to the avatar pose and the in-game interaction can thus be used to select the avatar-status box assigned to the avatar's right arm, and information indicative of a position of the avatar-status box with respect to the avatar's right arm can be obtained from the correlation unit 130. Using the information indicative of the in-game interaction, the correlation unit 130 can also determine a classification of the avatar-status box associated with the in-game interaction. For example, when the information related to the in-game interaction indicates that the avatar suffers damage as a consequence of the in-game interaction, the correlation unit 130 may determine that the avatar-status box has a hurt-box classification. Alternatively, when the information related to the in-game interaction indicates that the avatar inflicts damage as a consequence of the in-game interaction, the correlation unit 130 may determine that the avatar-status box has a hit-box classification. By providing supplementary data relating to the in-game interaction of the avatar to the correlation unit 130, which is trained to determine correlations between avatar poses and avatar-status boxes, the correlation unit 130 may determine both a position and a classification of the avatar-status box assigned to a portion of an avatar.
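The selection logic in this example can be expressed as a small rule. The sketch below assumes that protruding limbs and damage cues have already been detected, for instance by comparing score bars. Returning `None` for ambiguous evidence is this sketch's own choice; a trained correlation unit would instead weigh such cases statistically.

```python
def infer_interaction_box(protruding_limbs, took_damage, dealt_damage):
    """Guess which box took part in an interaction and its classification.

    protruding_limbs: limbs protruding at the interaction frame (e.g. ["right_arm"]).
    took_damage / dealt_damage: cues such as this avatar's or the opponent's score bar dropping.
    Returns (limb, classification), or None when the evidence is ambiguous.
    """
    if len(protruding_limbs) != 1 or took_damage == dealt_damage:
        return None
    limb = protruding_limbs[0]
    return (limb, "hit") if dealt_damage else (limb, "hurt")
```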

FIG. 7 illustrates an apparatus adapted to train a correlation unit to correlate avatar poses and avatar-status boxes. In embodiments of the disclosure, an apparatus 700 adapted to correlate avatar poses and avatar-status boxes comprises: an input unit 710 configured to obtain a sequence of video images of a video game comprising at least a first avatar and a second avatar; a detection unit 720 configured to detect an in-game interaction between the first avatar and the second avatar indicative of an intersection between a first avatar-status box associated with the first avatar and a second avatar-status box associated with the second avatar, and to detect an avatar pose for the first avatar and the second avatar corresponding to the in-game interaction; an avatar-status box calculation unit 730 configured to determine, for the in-game interaction, a position of the first avatar-status box with respect to the first avatar and a position of the second avatar-status box with respect to the second avatar; and a correlation unit 740 configured to store a model defining a correlation between avatar poses and avatar-status boxes and to correlate at least one of the position of the first avatar-status box with the pose of the first avatar and the position of the second avatar-status box with the pose of the second avatar, in which the correlation unit is configured to adapt the model in response to the correlation for at least one of the first avatar and the second avatar.

The input unit 710 receives the sequence of video images from a games machine, such as the games machine 90 illustrated in FIG. 2. The video images including at least the first avatar and the second avatar can be used by the apparatus 700 to observe various in-game interactions between the first avatar and the second avatar, where an in-game interaction occurs when an avatar-status box of the first avatar intersects or collides with an avatar-status box of the second avatar.

The detection unit 720 can detect in-game interactions as they occur by detecting changes in the properties of the video image (e.g. a change in a score indicator, a change in brightness of a given region of the video image or a recoil of an avatar's body). In addition, the detection unit 720 can detect an avatar pose of at least one of the avatars at the time of the in-game interaction so as to generate avatar pose information associated with the in-game interaction.

By analysing the sequence of video images and observing the various in-game interactions between the first avatar and the second avatar, the apparatus 700 can detect the different avatar poses corresponding to the in-game interactions and determine which avatar poses correspond to which types of in-game interaction. By observing the various in-game interactions, the apparatus 700 can determine when two respective avatar-status boxes intersect or collide, and can thus determine a position of each avatar-status box at the time of the in-game interaction. By detecting the avatar pose corresponding to the in-game interaction, the apparatus 700 can then associate the avatar pose at the time of the in-game interaction with the position of the avatar-status box. Therefore, for a given in-game interaction, the apparatus 700 can determine a position of the two or more avatar-status boxes involved, such that a position of one avatar-status box with respect to the avatar pose of the first avatar and a position of the other avatar-status box with respect to the avatar pose of the second avatar can both be determined. In this way, the apparatus 700 can train a machine learning system to correlate avatar-status boxes with avatar poses, using the observed in-game interactions and the detected avatar poses corresponding to them. The machine learning system may be a neural network such as a ‘deep learning’ network, or a Bayesian expert system. It may also be any other suitable scheme operable to learn a correlation between a first set of data points and a second set of data points, such as a genetic algorithm, a decision tree learning algorithm, an associative rule learning scheme, or the like.

The avatar-status box calculation unit 730 can determine, for an in-game interaction, a position of a first avatar-status box with respect to the first avatar and a position of a second avatar-status box with respect to the second avatar. Calculations performed for multiple in-game interactions can then be used to train a machine learning system to correlate avatar poses with positions of avatar-status boxes. The correlation unit 740 can store a model defining a correlation between avatar poses and avatar-status boxes. In response to detecting an in-game interaction, two kinds of information can be provided as an input to the model: information indicative of the properties of the in-game interaction (e.g. which types of avatar-status box are involved), and information indicative of at least one avatar pose corresponding to the interaction. The correlation unit 740 can thus adapt the model in response to the correlation between the avatar pose and the avatar-status box for that in-game interaction, so that the model reflects the properties of the in-game interactions observed by the apparatus 700. The model stored by the correlation unit 740 can then be used to correlate a given avatar pose with one or more avatar-status boxes. Data indicative of an avatar pose may be provided as an input to the correlation unit 740 and, based on the correlations between avatar poses and avatar-status boxes defined by the model, the model can output a position of one or more avatar-status boxes associated with that avatar pose. This provides an example of a model-based approach, in which a model defining a correlation between avatar poses and avatar-status boxes is inferred from video images of a computer game without having to manually access and reverse engineer the source code of an executable program associated with the computer game.
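The form of the model is left open above (a neural network, a Bayesian expert system, and so on). Purely as a stand-in, the sketch below uses a nearest-neighbour lookup. The hypothetical `NearestPoseCorrelationUnit` memorises pose descriptors together with their avatar-relative boxes, and answers a query pose with the boxes of the closest stored pose. The pose descriptor (here a tuple of joint angles in degrees) and the box encoding are assumptions, not details from the disclosure.

```python
import math
from typing import Dict, List, Sequence, Tuple

Box = Tuple[float, float, float, float]  # assumed: (x0, y0, x1, y1) relative to the avatar


class NearestPoseCorrelationUnit:
    """Toy correlation unit: stores (pose descriptor -> boxes) examples and
    answers a query with the boxes of the closest stored pose."""

    def __init__(self) -> None:
        self._examples: List[Tuple[Tuple[float, ...], Dict[str, Box]]] = []

    def adapt(self, pose: Sequence[float], boxes: Dict[str, Box]) -> None:
        """Add one observed pose together with its avatar-status boxes, keyed by classification."""
        self._examples.append((tuple(pose), dict(boxes)))

    def predict(self, pose: Sequence[float]) -> Dict[str, Box]:
        """Return the boxes stored for the nearest pose (Euclidean distance), or {} if untrained."""
        if not self._examples:
            return {}
        _, boxes = min(self._examples, key=lambda ex: math.dist(ex[0], pose))
        return dict(boxes)
```

A nearest-neighbour lookup needs no training step beyond storing examples, but query cost grows with the number of stored poses and its output changes abruptly between poses. A learned regressor of the kind listed earlier would likely generalise more smoothly.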
Model-based reinforcement learning techniques allow the information relevant for inferring a model to be extracted, so that the model can be inferred for machine learning purposes without having to reverse engineer the source code of an executable program. Using model-based reinforcement learning techniques, the correlation unit 740 can be trained to correlate avatar poses and avatar-status boxes by inferring and adapting a model that defines this correlation. The amount of time required to train the correlation unit can also be reduced compared with a model-free reinforcement learning approach.

Referring to FIG. 8, there is provided a schematic flowchart in respect of a method of training a correlation unit to determine correlations between avatar poses and avatar-status boxes, comprising:

inputting (at a step 810) a sequence of video images of a video game comprising at least a first avatar and a second avatar;

detecting (at a step 820) an in-game interaction between the first avatar and the second avatar indicative of an intersection between a first avatar-status box associated with the first avatar and a second avatar-status box associated with the second avatar;

determining (at a step 830), for the in-game interaction, a position of the first avatar-status box with respect to the first avatar and a position of the second avatar-status box with respect to the second avatar;

detecting (at a step 840), for the in-game interaction, a pose for the first avatar and a pose for the second avatar;

correlating (at a step 850) at least one of the position of the first avatar-status box with the pose of the first avatar and the position of the second avatar-status box with the pose of the second avatar; and

adapting (at a step 860) a model defining a correlation between avatar poses and avatar-status boxes in response to the correlation for at least one of the first avatar and the second avatar.
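The steps above can be sketched as a single training loop. This minimal Python illustration assumes that upstream components supply the per-frame detector outputs: whether an interaction was detected, each avatar's pose label, a reference point on the avatar, and an estimated box in image coordinates. The model adapted at step 860 is assumed to be a running mean of the avatar-relative box for each pose label. All type and function names are hypothetical.

```python
from dataclasses import dataclass
from typing import Dict, List, Tuple

Box = Tuple[float, float, float, float]  # (x0, y0, x1, y1)
Point = Tuple[float, float]


@dataclass
class AvatarState:
    """Hypothetical per-frame detector output for one avatar."""
    pose: str      # pose label from a pose detector
    anchor: Point  # reference point on the avatar (e.g. centre of gravity)
    box: Box       # estimated avatar-status box in image coordinates


@dataclass
class Frame:
    interaction: bool  # True if an in-game interaction was detected in this frame
    first: AvatarState
    second: AvatarState


class PoseBoxModel:
    """Model adapted at step 860: running mean of the avatar-relative box per pose."""

    def __init__(self) -> None:
        self.mean: Dict[str, List[float]] = {}
        self.count: Dict[str, int] = {}

    def adapt(self, pose: str, rel_box: Box) -> None:
        n = self.count.get(pose, 0) + 1
        mean = self.mean.setdefault(pose, [0.0, 0.0, 0.0, 0.0])
        for i, value in enumerate(rel_box):
            mean[i] += (value - mean[i]) / n  # incremental mean update
        self.count[pose] = n


def relative_box(state: AvatarState) -> Box:
    ax, ay = state.anchor
    x0, y0, x1, y1 = state.box
    return (x0 - ax, y0 - ay, x1 - ax, y1 - ay)


def train(frames: List[Frame], model: PoseBoxModel) -> PoseBoxModel:
    for frame in frames:                           # step 810: sequence of video images
        if not frame.interaction:                  # step 820: keep interaction frames only
            continue
        for state in (frame.first, frame.second):
            rel = relative_box(state)              # step 830: box relative to the avatar
            pose = state.pose                      # step 840: detected pose
            model.adapt(pose, rel)                 # steps 850-860: correlate and adapt
    return model
```

The incremental mean avoids storing every observation, at the cost of collapsing all observations of one pose label into a single box estimate.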

The method of training a correlation unit to determine correlations between avatar poses and avatar-status boxes illustrated in FIG. 8 can be performed by an apparatus such as the apparatus 700 illustrated in FIG. 7 operating under the control of computer software.

In so far as embodiments of the disclosure have been described as being implemented, at least in part, by software-controlled data processing apparatus, it will be appreciated that a non-transitory machine-readable medium carrying such software, such as an optical disk, a magnetic disk, semiconductor memory or the like, is also considered to represent an embodiment of the present disclosure.

It will be appreciated that references herein to ‘obtaining data’ from a video game do not necessarily mean obtaining or extracting data from the video game itself, or data used by the video game; as noted previously herein, games may not permit, or readily allow, useful access to desired data such as status box positions. Furthermore, the techniques described herein are applicable to video recordings of a video game, for example hosted on a website, for which the specific runtime of the game no longer exists and from which no data can therefore be obtained directly. Hence ‘obtaining data’ from a video game may be more generally understood as inferring or deriving information about a video game from image (and optionally audio) data output by the video game, for example using the techniques described herein.

It will be apparent that numerous modifications and variations of the present disclosure are possible in light of the above teachings. It is therefore to be understood that within the scope of the appended claims, the technology may be practised otherwise than as specifically described herein.

Thus, the foregoing discussion discloses and describes merely exemplary embodiments of the present invention. As will be understood by those skilled in the art, the present invention may be embodied in other specific forms without departing from the spirit or essential characteristics thereof. Accordingly, the disclosure of the present invention is intended to be illustrative, but not limiting of the scope of the invention, as well as other claims. The disclosure, including any readily discernible variants of the teachings herein, defines, in part, the scope of the foregoing claim terminology such that no inventive subject matter is dedicated to the public.

Claims

- A method of obtaining data from a video game, comprising: inputting a video image of the video game comprising at least one avatar; detecting a pose of the avatar in the video image; assigning one or more avatar-status boxes to one or more portions of the avatar; determining, based on the pose of the avatar, a position of at least one avatar-status box with respect to the avatar using a correlation between avatar poses and avatar-status boxes; producing avatar-status data for the avatar indicative of the position of the at least one avatar-status box with respect to the avatar in the video image; generating one or more indicator images for the avatar in accordance with the avatar-status data; and augmenting the video image of the video game comprising at least the avatar with one or more of the indicator images in response to the pose of the avatar; and in which the step of determining the position of the at least one avatar-status box with respect to the avatar comprises: providing, to a correlation unit trained to determine correlations between avatar poses and avatar-status boxes, an input comprising at least data indicative of the pose of the avatar; and obtaining from the correlation unit data indicative of a position of the at least one avatar-status box with respect to the avatar, responsive to that input, and each avatar-status box has a corresponding classification indicative of a type of in-game interaction associated with the avatar-status box.

- A method according to claim 1, in which the step of detecting the pose of the avatar comprises: analysing the video image of the video game using an algorithm trained on skeletal models; and selecting one avatar pose from a plurality of avatar poses based on one or more of: an angle of a limb of the avatar with respect to a torso of the avatar; a degree of extension of the limb of the avatar; and a distance between a position of a centre of gravity of the avatar and a position of a predetermined portion of the limb of the avatar.

- A method according to claim 1, comprising: augmenting the video image with a first set of indicator images in response to a first avatar pose and augmenting the video image with a second set of indicator images in response to a second avatar pose, in which each indicator image corresponds to one avatar-status box.

- A method according to claim 1, comprising: detecting an in-game interaction of the avatar with an element of the video game and detecting the pose of the avatar corresponding to the in-game interaction; selecting at least one of the avatar-status boxes as being associated with the in-game interaction using the correlation between avatar poses and avatar-status boxes; and determining, based on the pose of the avatar, a position of the at least one avatar-status box with respect to the avatar using the correlation between avatar poses and avatar-status boxes.

- A method according to claim 1, in which the step of determining the position of the at least one avatar-status box with respect to the avatar comprises: providing, to a correlation unit trained to determine correlations between avatar poses and avatar-status boxes, supplementary data relating to an in-game interaction of the avatar with an element of the video game, the supplementary data indicative of one or more of: i. the pose of the avatar corresponding to the in-game interaction; ii. a pose of another avatar corresponding to the in-game interaction; iii. a score indicator included in a region of the video image; and iv. a level of brightness associated with a region of the video image.

- A method according to claim 1, in which each avatar-status box is assigned to a portion of the avatar.

- A method according to claim 1, in which the classification of an avatar-status box is one or more of: i. a hit box indicative that a portion of an avatar pose can inflict an in-game hit-interaction on the element of the video game; ii. a hurt box indicative that a portion of an avatar pose can receive an in-game hit-interaction from the element of the video game; iii. an armour box indicative that a portion of an avatar pose can not be hurt; iv. a throw box indicative that a portion of an avatar pose can engage with a target to throw the target; v. a collision box indicative that a portion of an avatar pose can collide with a target; vi. a proximity box indicative that a portion of an avatar pose can be blocked by an opponent; and vii. a projectile box indicative that a portion of an avatar pose comprises a projectile.

- A method according to claim 1, in which the step of assigning one or more avatar-status boxes to the avatar comprises assigning at least a first avatar-status box and a second avatar-status box to a limb of the avatar, in which the first avatar-status box has a first classification and the second avatar-status box has a second classification, the first classification being different to the second classification.

- A method according to claim 8, in which a classification of the first avatar-status box indicates that the first avatar-status box is a hit box and a classification of the second avatar status box indicates that the second avatar-status box is a hurt box.

- A method according to claim 1, wherein the correlation unit is trained in accordance with actions, comprising: inputting a sequence of video images of a video game comprising at least a first avatar and a second avatar; detecting an in-game interaction between the first avatar and the second avatar indicative of an intersection between a first avatar-status box associated with the first avatar and a second avatar-status box associated with the second avatar; determining, for the in-game interaction, a position of the first avatar-status box with respect to the first avatar and a position of a second avatar-status box with respect to the second avatar; detecting, for the in-game interaction, a pose for the first avatar and a pose for the second avatar; correlating at least one of the position of the first avatar-status box with the pose of the first avatar and the position of the second avatar-status box with the pose of the second avatar; and adapting a model defining a correlation between avatar poses and avatar-status boxes in response to the correlation for at least one of the first avatar and the second avatar.

- An apparatus adapted to obtain data from a video game, comprising: an input unit configured to obtain a video image of the video game comprising at least one avatar; a detection unit configured to detect a pose of the avatar in the video image; an assignment unit configured to assign one or more avatar-status boxes to one or more portions of the avatar; a control unit configured to determine, based on the pose of the avatar, a position of at least one avatar-status box with respect to the avatar using a correlation between avatar poses and avatar-status boxes, and to produce avatar-status data for the avatar indicative of the position of the at least one avatar-status box with respect to the avatar; and an image generator configured to generate one or more indicator images for the avatar in accordance with the avatar-status data, and to augment the video image of the video game comprising at least the avatar with one or more of the indicator images in response to the pose of the avatar; and in which the control unit is configured to: provide, to a correlation unit trained to determine correlations between avatar poses and avatar-status boxes, an input comprising at least data indicative of the pose of the avatar; and obtain from the correlation unit data indicative of a position of the at least one avatar-status box with respect to the avatar, responsive to that input, and wherein each avatar-status box has a corresponding classification indicative of a type of in-game interaction associated with the avatar-status box.