U.S. Pat. No. 11,413,531

GAME CONSOLE APPLICATION WITH ACTION CARD STRAND

Assignee: Sony Interactive Entertainment Inc.

Issue Date: March 3, 2020

Abstract

A computer system and method for presenting windows in a dynamic menu are provided. The dynamic menu presents windows corresponding to an active application according to a first order, whereby windows are arranged based on recent interaction. The dynamic menu also presents windows corresponding to one or more inactive applications according to a second order, whereby windows are arranged based on relevance to a user of the computer system. The windows are presented in a first presentation state, and are expanded to a second presentation state or a third presentation state in response to user interaction. The third presentation state is reached via the second presentation state, thereby reducing latency and complexity of menu navigation.

Description

DETAILED DESCRIPTION OF THE INVENTION

Generally, systems and methods for better information sharing and control switching in a graphical user interface (GUI) are described. In an example, a computer system presents a GUI on a display. Upon user input requesting a menu, the menu is presented in the GUI based on an execution of a menu application. The menu includes a dynamic area that presents a plurality of windows. Each of the windows corresponds to a target application and can be included in the dynamic menu area based on whether the target application is active or inactive, and depending on a context if the target application is inactive. The dynamic menu area shows the windows in a first presentation state (e.g., glanced state), where each window presents content in this presentation state. Upon user interactions with the dynamic menu area, the presentation of the windows can change to a second presentation state (e.g., focused state), where a window in the second state and its content are resized and where an action performable on the content can be selected. Upon a user selection of the window in the second state, the presentation of the window changes to a third presentation state (e.g., selected state), where the window and its content are resized again and where an action performable on the window can be further selected.

In a further example, the above UI functions are implemented via the GUI by a computer system executing one or more applications for generating and/or presenting windows in one of the presentation states in the menu (referred to herein as a dynamic menu as it includes a dynamic menu area). For instance, the computer system executes the menu application to, upon a user request for the dynamic menu, present windows in the first or second states and populate windows in the dynamic menu with content associated with either active or inactive applications of the computer system. In particular, the menu application determines a first order of windows and a second order of windows, associated with active applications and inactive applications, respectively. Each order may include multiple sub-groupings of windows, based on criteria including, but not limited to, relevance, timing, and context, to improve user accessibility and promote interaction with applications of the computer system. The menu application may generate and/or present the dynamic menu to include the first order of windows arranged horizontally adjacent to the second order of windows in a navigable UI. User interaction with the dynamic menu may permit the user to view one or more windows in the first order and/or the second order in the focused state.

To illustrate, consider an example of a video game system. The video game system can host a menu application, a video game application, a music streaming application, a video streaming application, a social media application, a chat application (e.g., a “party chat” application), and multiple other applications. A video game player can log in to the video game system and a home user interface is presented thereto on a display. From this interface, the video game player can launch the video game application and video game content can be presented on the display. Upon a user button push on a video game controller, a menu can be presented in a layer at the bottom of the display based on an execution of the menu application. The menu includes a dynamic area, presenting multiple windows, each associated with an application of the video game system (e.g., the video game application, the music streaming application, etc.). The windows within the dynamic area of the menu are shown in a glanced state, providing sufficient information to the video game player about the applications (e.g., to perceive the car race invitation and to see a cover of the music album). By default, only one of the windows is shown in a focused state at a time and remaining windows are shown in the glanced state. Focus is shifted within the dynamic menu by navigating between windows, for example, by using a video game controller to scroll through the dynamic menu. For instance, upon the user focus (e.g., the user scroll) being on the music window, that window is expanded to show a partial list of music files and to present a play key. By default, windows associated with the video game application are arranged to the left of the dynamic menu in a horizontal arrangement, where each window presents content from a different service of the video game application.
For example, the first window, presented in the focused state, could present content related to the video game player's recent accomplishments in the video game application (e.g., trophies, replays, friend or fan reactions, etc.). Similarly, another window to the right in the dynamic menu could include additional and/or newly available levels for the video game player to choose from. A third window could present content related to online gaming communities and/or sessions to join. In this way, the dynamic menu can provide multiple points of access to different services available in the video game platform in a single organized menu of horizontal windows. Additionally, the dynamic menu may present windows from inactive applications, for example, to the right of those associated with active applications. Inactive applications could be related to the game, for example a chat that took place in the game, or could be unrelated, for example, a music player application. The menu application may organize the two sets of windows differently. For example, the windows to the left, for active application services, may be organized by timing, such that the most recently accessed service is at the far left and by default in the focused state. The windows to the right, associated with inactive application services, may be organized by relevance to the video game application and/or by context of the user and the system.
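The focus behavior in this illustration can be sketched in code. The following is a minimal sketch only, assuming hypothetical names (`Window`, `DynamicMenu`, `scroll`) that do not appear in the disclosure: windows for active application services sit to the left of windows for inactive application services, exactly one window is in the focused state at a time, and scrolling moves the focus along the horizontal arrangement.

```python
from dataclasses import dataclass
from typing import List

GLANCED = "glanced"
FOCUSED = "focused"


@dataclass
class Window:
    title: str
    state: str = GLANCED


@dataclass
class DynamicMenu:
    active: List[Window]     # windows for active application services (left side)
    inactive: List[Window]   # windows for inactive application services (right side)
    focus_index: int = 0     # the leftmost window is focused by default

    def __post_init__(self) -> None:
        if not self.windows:
            raise ValueError("a dynamic menu needs at least one window")
        self._apply_focus()

    @property
    def windows(self) -> List[Window]:
        # Single horizontal arrangement: active windows first, then inactive ones.
        return self.active + self.inactive

    def _apply_focus(self) -> None:
        # Only the window under the focus is focused; all others are glanced.
        for i, window in enumerate(self.windows):
            window.state = FOCUSED if i == self.focus_index else GLANCED

    def scroll(self, step: int) -> Window:
        # Move the focus left (negative step) or right (positive step),
        # clamping at the ends (the clamping is an assumption of this sketch).
        last = len(self.windows) - 1
        self.focus_index = max(0, min(last, self.focus_index + step))
        self._apply_focus()
        return self.windows[self.focus_index]


menu = DynamicMenu(
    active=[Window("Accomplishments"), Window("New levels")],
    inactive=[Window("Music")],
)
```

Calling `menu.scroll(2)` in this sketch moves the focus from the accomplishments window to the music window, which becomes the only window in the focused state.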

Embodiments of the present disclosure provide several advantages over existing GUIs and their underlying computer systems. For example, by selecting windows for presentation in a dynamic menu, relevant application information can be surfaced to a user. In this way, the user may access multiple application services for an active application and may be presented with services from multiple inactive application services in the same UI element. By presenting the dynamic window in a horizontal linear arrangement of windows including both active application services and inactive application services, based on the user focus, navigation of UI menus and menu trees is reduced to a single linear menu that determines content dynamically for presentation to the user. Such techniques reduce system computational demands, arising from repeatedly rendering menus, and improve user experience by reducing frustration and fatigue caused by inefficient menu navigation.

In the interest of clarity of explanation, the embodiments may be described in connection with a video game system. However, the embodiments are not limited as such and similarly apply to any other type of a computer system. Generally, a computer system presents a GUI on a display. The GUI may include a home user interface from which different applications of the computer system can be launched. Upon a launch of an application, a window that corresponds to the application can be presented in the GUI.

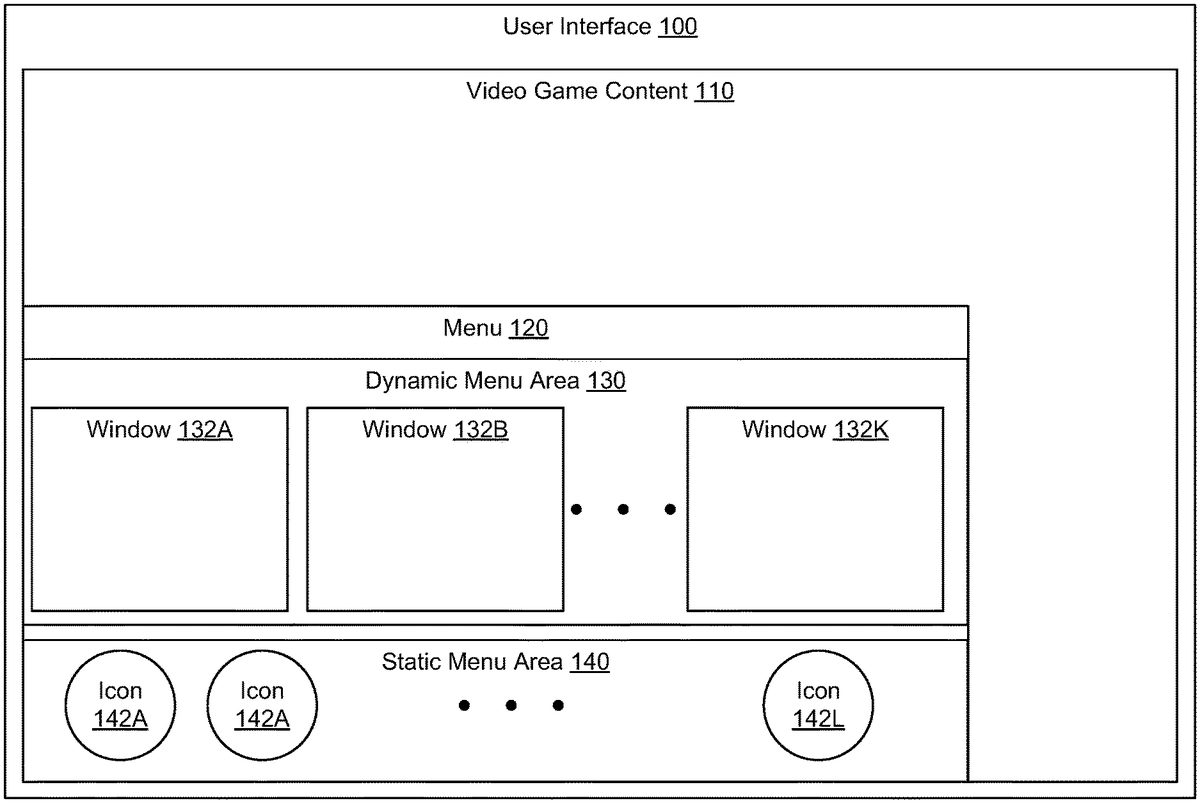

FIG. 1 illustrates an example of a menu with selectable actions, according to an embodiment of the present disclosure. As illustrated, a graphical user interface 100 of a computer system (e.g., a video game system) is presented on a display. The GUI 100 presents video game content 110 of a video game application of the computer system (e.g., one executed by the video game system) and a menu 120 of a menu application of the computer system (e.g., one executed by the video game system). The menu 120 can be presented over at least a portion of the video game content 110 so as to appear in the foreground of the GUI 100 while the video game content 110 appears in the background of the GUI 100. For instance, the menu 120 and the video game content 110 are displayed within a menu window and a content window, respectively, where the menu window is shown in a layer that is over the content window and that overlaps with only a portion of the content window.

In an example, the menu 120 can occlude the portion of the video game content 110 behind it or can have some degree of transparency. Additionally or alternatively, the texturing and/or brightness of the menu 120 and the video game content 110 can be set such that the menu 120 appears in the foreground and the video game content 110 appears in the background.

As illustrated, the menu 120 includes a dynamic menu area 130 and a static menu area 140. The dynamic menu area 130 presents a plurality of windows 132A, 132B, . . . , 132K, each of which corresponds to an application of the computer system. The static menu area 140 presents icons 142A, 142B, . . . , 142L, each of which corresponds to a system function (e.g., power on, volume control, mute and unmute, etc.) or an application of the computer system. For brevity, each of the windows 132A, 132B, . . . , 132K is referred to herein as a window 132 and each of the icons 142A, 142B, . . . , 142L is referred to as an icon 142. By containing the two areas 130 and 140, the menu 120 represents a dashboard that shows contextually relevant features and relevant system functions without requiring the user to exit their game play.

Generally, a window 132 is added to the dynamic menu area 130 based on a determination of potential relevance to the user of the GUI 100, as described in more detail in reference to the following figures, below. A window 132 is added to the dynamic menu area 130 according to an order, where windows within the dynamic menu area 130 can be arranged based on the potential relevance to the user. In comparison, the static menu area 140 may not offer the dynamicity of the dynamic menu area 130. Instead, the icons 142 can be preset in the static menu area 140 based on system settings and/or user settings. Upon a selection of an icon 142, a corresponding window (e.g., for a system control or for a particular background application) can be presented. The menu 120 can be dismissed while the window is presented, or alternatively, the presentation of the menu 120 persists.

The content, interactivity, and states of the windows 132 are further described in connection with the next figures. Generally, upon the presentation of the menu 120, the execution of the video game application and the presentation of the video game content 110 continue. Meanwhile, user input from an input device (e.g., from a video game controller) can be received and used to interact with the menu 120 in the dynamic area 130 and/or the static area 140. The dynamic area interactions allow the user to view windows 132 in different states, and select and perform actions on the content of the windows 132 or the windows 132 themselves. The static area interactions allow the user to select any of the icons 142 to update the system functions (e.g., change the volume) or launch a preset window for a specific application (e.g., launch a window for a music streaming application). Once the interactions end, the menu 120 is dismissed and the user control automatically switches to the video game application (e.g., without input of the user explicitly and/or solely requesting the switch). Alternatively, the switch may not be automatic and may necessitate the relevant user input to change the user control back to the video game application. In both cases, user input received from the input device can be used to interact with the video game content 110 and/or the video game application.

Although FIG. 1 describes a window as being presented in a dynamic menu area or launched from an icon in a static menu area, other presentations of the window are possible. For instance, user input from the input device (e.g., a particular key push) can be associated with the window. Upon receiving the user input, the window can be presented in a layer over the video game content 110, without the need to present the menu 120.

FIG. 2 illustrates a computer system that presents a menu, according to an embodiment of the present disclosure. As illustrated, the computer system includes a video game console 210, a video game controller 220, and a display 230. Although not shown, the computer system may also include a backend system, such as a set of cloud servers, that is communicatively coupled with the video game console 210. The video game console 210 is communicatively coupled with the video game controller 220 (e.g., over a wireless network) and with the display 230 (e.g., over a communications bus). A video game player 222 operates the video game controller 220 to interact with the video game console 210. These interactions may include playing a video game presented on the display 230, interacting with a menu 212 presented on the display 230, and interacting with other applications of the video game console 210.

The video game console 210 includes a processor and a memory (e.g., a non-transitory computer-readable storage medium) storing computer-readable instructions that can be executed by the processor and that, upon execution by the processor, cause the video game console 210 to perform operations related to various applications. In particular, the computer-readable instructions can correspond to the various applications of the video game console 210 including a video game application 240, a music application 242, a video application 244, a social media application 246, a chat application 248, a menu application 250, among other applications of the video game console 210 (e.g., a home user interface (UI) application that presents a home page on the display 230).

The video game controller 220 is an example of an input device. Other types of input device are possible, including a keyboard, a touchscreen, a touchpad, a mouse, an optical system, or other user devices suitable for receiving input of a user.

In an example, the menu 212 is similar to the menu 120 of FIG. 1. Upon an execution of the video game application 240, a rendering process of the video game console 210 presents video game content (e.g., illustrated as a car race video game content) on the display 230. Upon user input from the video game controller 220 (e.g., a user push of a particular key or button), the rendering process also presents the menu 212 based on an execution of the menu application 250. The menu 212 is presented in a layer over the video game content and includes a dynamic area and a static area. Windows in the dynamic area correspond to a subset of the applications of the video game console.

Upon the presentation of the menu 212, the user control changes from the video game application 240 to the menu application 250. Upon receiving user input from the video game controller 220 requesting interactions with the menu 212, the menu application 250 supports such interactions by updating the menu 212 and launching any relevant application in the background or foreground. The video game player 222 can exit the menu 212, or the menu 212 can be dismissed automatically upon the launching of an application in the background or foreground. Upon the exiting of the menu 212 or the dismissal based on a background application launch, the user control changes from the menu application 250 to the video game application 240. If a foreground application is launched, the user control changes from the menu application 250 to this application instead. In both cases, further user input that is received from the video game controller 220 is used for controlling the relevant application and/or for requesting the menu 212 again.
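The control-switching rule described above can be illustrated with a short sketch. The function and enum names below (`Launch`, `control_after_menu`) are hypothetical and chosen only for illustration; the sketch assumes that applications are identified by simple string names.

```python
from enum import Enum
from typing import Optional


class Launch(Enum):
    NONE = "none"              # the player exited the menu without launching anything
    BACKGROUND = "background"  # an application was launched in the background
    FOREGROUND = "foreground"  # an application was launched in the foreground


def control_after_menu(previous_app: str,
                       launch: Launch,
                       launched_app: Optional[str] = None) -> str:
    """Return the application that receives user control once the menu is gone."""
    if launch is Launch.FOREGROUND:
        if launched_app is None:
            raise ValueError("a foreground launch must name the launched application")
        # A foreground launch takes the user control instead of the game.
        return launched_app
    # Exiting the menu, or a background launch, returns control to the
    # application that had it before the menu was presented.
    return previous_app
```

For example, `control_after_menu("video game", Launch.BACKGROUND, "music")` returns `"video game"` in this sketch, while a foreground launch of a video application would return that application instead.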

Although FIG. 2 illustrates that the different applications are executed on the video game console 210, the embodiments of the present disclosure are not limited as such. Instead, the applications can be executed on the backend system (e.g., the cloud servers) and/or their execution can be distributed between the video game console 210 and the backend system.

FIG. 3 illustrates an example of a context-based selection of windows, according to an embodiment of the present disclosure. As illustrated, based on timing data 320 and a context 330, a menu application 310 includes windows 340 for a dynamic area of a menu. The menu application 310 determines a first order 360 for arranging one or more of the windows 340 in the dynamic menu associated with active computing services 370. Similarly, the menu application 310 determines a second order 362 for arranging one or more of the windows 340 in the dynamic menu associated with inactive computing services 380. The first order 360 may arrange windows 340 based on the timing data 320 as a measure of potential relevance to the user. Additionally, the menu application 310 may use the context 330 and the user setting 350 to determine an arrangement of windows 340 for inclusion in the second order 362 such that the second order 362 is of relevance to the user.

In an example, the context 330 generally includes a context of a user of the computer system (e.g., a video game player) and/or a context of an application being executed on the computer system, as described in more detail, below. The context 330 may be received over an application programming interface (API) of a context service, where this context service may collect contexts of the user from different applications of a computer system or a network environment, or from an application (e.g., a video game context can be received from a corresponding video game application). The user setting 350 may be received from a user account or from a configuration file.

FIG. 4 illustrates an example of a window in different states, according to embodiments of the present disclosure. Here and in subsequent figures, an action card is described as an example of a window and corresponds to an application. Generally, a window represents a GUI object that can show content and that can support an action performable on the content and/or window. In an example, the action card is a specific type of the window, where the action card includes a container object for MicroUX services, and where the action card contains content and actions for a singular concept. Action cards included in a menu facilitate immediate and relevant actions based on contexts of what the users are engaged with and the relationships of people, content, and services within a computer environment. Action cards included in the menu may be arranged horizontally and/or vertically in the GUI, and may be interactive. The menu may be dynamic and may include an arrangement of action cards that is responsive to the user interaction with the menu and a context.

As illustrated in FIG. 4, the action card can be presented in one of multiple states. Which state is presented depends on the user input, as further described in the next figures. One of the states can be a glanced state 410, where the action card provides a glance to the user about the application. The glance includes relevant information about the action card, where this information should help the user decide whether or not to take an action. For example, in the glanced state 410, the action card has a first size, and presents content 412 and a title 414 of the content 412 or the action card based on the first size. To illustrate, an action card for a music application can be presented in the first state as a rectangle having particular dimensions, showing a cover and a title of a music album.

Another state can be a focused state 420, where the action card provides relevant information to the user and one or more options for one or more actions to be performed (e.g., for one or more selectable actions on content of the application or the action card itself). In other words, the action card can surface quick actions for the user to select in response to the user's focus being on the action card. For example, in the focused state 420, the action card has a second size (which can be larger than the first size), resizes the presentation of the content 412 and the title 414 based on the second size, and presents one or more selectable content actions 422 (e.g., play content, skip content, etc.) and one or more selectable card actions (e.g., move the action card to a position on the display, resize the action card, pin the action card, present the action card as a picture-in-picture, etc.). Referring back to the music action card illustration, in the focused state 420, the music cover and album title are enlarged and a play button to play music files of the music album is further presented.

Yet another state can be a selected state 430, where the action card continues to provide relevant information to the user in a further enlarged presentation format, and provides one or more options for one or more actions to be performed on the content and/or the action card itself (e.g., for one or more selectable actions on content of the application or the action card itself). In other words, the action card becomes the primary modality for interacting with the MicroUX and displays the relevant visual interface. For example, in the selected state 430, the action card has a third size (which can be larger than the second size), resizes the presentation of the content 412 and the title 414 based on the third size, continues the presentation of the content action 422, presents additional content 432 of the application, and presents one or more options 434 for one or more content actions or card actions that can be performed on the action card. Upon a user selection of the option 434, the card actions are presented and can be selected. Referring back to the music action card illustration, in the selected state 430, the music cover and album title are further enlarged and the presentation of the play button continues. Additional music files of the music album are also identified. In the above states, the content 412, title 414, content action 422, and additional content 432 can be identified from metadata received from the application.
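The three presentation states can be viewed as a small state machine in which the selected state is reachable only through the focused state. The following is a minimal sketch under stated assumptions: the event names (`"focus"`, `"select"`, `"blur"`, `"back"`) are hypothetical, and the two reverse transitions are an assumption of this sketch rather than something the description above spells out.

```python
from enum import Enum


class CardState(Enum):
    GLANCED = 410   # values mirror the reference numerals of FIG. 4 for readability
    FOCUSED = 420
    SELECTED = 430


# (current state, event) -> next state. There is deliberately no direct
# GLANCED -> SELECTED entry: a card must be focused before it can be selected.
TRANSITIONS = {
    (CardState.GLANCED, "focus"): CardState.FOCUSED,
    (CardState.FOCUSED, "select"): CardState.SELECTED,
    (CardState.FOCUSED, "blur"): CardState.GLANCED,    # assumed reverse transition
    (CardState.SELECTED, "back"): CardState.FOCUSED,   # assumed reverse transition
}


def next_state(state: CardState, event: str) -> CardState:
    """Return the state after an event; events with no transition are ignored."""
    return TRANSITIONS.get((state, event), state)
```

In this sketch, `next_state(CardState.GLANCED, "select")` leaves the card in the glanced state, whereas a `"focus"` event followed by a `"select"` event brings it to the selected state.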

As illustrated in FIG. 4, the action card can include a static presentation area and a dynamic presentation area, each of which can be resized depending on the state. For instance, the title 414 is presented in the static area, identifies the underlying application associated with the action card and does not change with the state. In comparison, the content 412 can be presented in the dynamic area and can change within each state and between the states. In particular, the content itself may be interactive (e.g., a video) and its presentation can by its interactive nature change over time. Additionally or alternatively, the content 412 can also be changed over time depending on the user context and/or the application context.

As illustrated in FIG. 4, the action card in the glanced state 410, the action card in the focused state 420, and the action card in the selected state 430 each include an icon 416. The icon 416 may be an image representative of one or more things including, but not limited to, the user (e.g., a profile picture), the computer system (e.g., a system logo or image), a target application (e.g., a badge or icon from the application), and a system icon representing the type of target application (e.g., a general symbol for the type of content being presented, for example, a music note for an audio player, a camera for an image gallery, a microphone for a chat application, etc.).

As illustrated in FIG. 4, in an action card in the focused state (e.g., focused state 420) one or more command options 440 are included. While FIG. 4 shows the command options 440 in a panel positioned lower than the content, the command options 440 could be positioned as an overlay in front of the content, in a side panel, and/or positioned above the content near the title 414. The command options 440 may include textual or image-based instructions or may include one or more interactive elements 442 such as buttons or other virtual interactive elements. For example, the interactive elements 442 may include a button configured to receive a user-click. In some embodiments, the command options 440 and the interactive elements 442 facilitate interaction with content 412 and/or additional content 432. For example, the command options may provide additional and/or alternative function control over content beyond what is provided by content action 422. To illustrate, the content action 422 may provide a play/pause function for an audio or video player, while one of the command options 440 may include a mute/unmute function for a party chat application. As a further example, the command options 440 may be context-specific, based on the current state of the content in the action card. For example, when an action card is playing audiovisual content, the command options 440 may include only a mute button, rather than a mute/unmute button, and a stop button, rather than a play button.
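The context-specific command options in the last example can be sketched as a function of the content's playback state. This is an illustrative sketch only: the function name `command_options` and the string labels are hypothetical, and the behavior for content that is muted or not playing is an assumption that extends the one case the description gives.

```python
from typing import List


def command_options(is_playing: bool, is_muted: bool) -> List[str]:
    """Return only the commands that make sense for the current content state."""
    options: List[str] = []
    # Offer a single mute or unmute button instead of a combined toggle.
    options.append("unmute" if is_muted else "mute")
    # Offer stop while content plays, and play otherwise (the latter is assumed).
    options.append("stop" if is_playing else "play")
    return options
```

For an action card that is playing unmuted audiovisual content, `command_options(True, False)` yields a mute button and a stop button, matching the case described above.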

In reference to the figures, below, the term action card is used to describe a window in one or more of the presentation states. For example, a window presenting content in the dynamic menu that is associated with an application on the computer system that is different from the menu application is referred to as an “action card.” Further, the action cards are illustrated as being presented sequentially next to each other along a horizontal line. However, the embodiments are not limited as such and can include other arrangements based on different orders of inactive and active services. For instance, the action cards can be presented sequentially along a vertical line.

FIG. 5 illustrates an example of a computer system 500 that presents a dynamic menu 550 populated with action cards, according to an embodiment of the present disclosure. As illustrated in FIG. 5, the computer system 500 includes a menu application 510 (e.g., menu application 310 of FIG. 3), which includes a service determining unit 512, a context determining unit 514, and an order determining unit 516. These are described as sub-elements of the menu application 510, but may also be different applications of the computer system 500, providing data to the menu application 510 for the menu application 510 to perform operations. For example, the menu application 510 may determine active computing services 520 and inactive computing services 522. Services may refer to functionality available to a user 506 and/or the computer system 500, from different applications. For example, a song, playable using a media player application, may be available to the user 506 as an inactive computing service 522. In this way, multiple inactive computing services 522 may be available from multiple applications 526a-n. As illustrated in FIG. 5, the active computing services 520 may be provided from the applications 524a-n that are already running the respective active computing services 520. For example, an active computing service 520 may be a video game service available from a video game application (e.g., game navigation or mission selection). As another example, a chat application may be running a chat service as an active computing service 520.

As illustrated in FIG. 5, the menu application 510 determines a first order 560 and a second order 562 for presenting action cards in a dynamic menu 550 via a display 540. As described in more detail in reference to FIGS. 1-2, the dynamic menu 550 may form a part of a larger menu (e.g., menu 120 of FIG. 1) overlaid on other content, such as a user application 502, in response to input received by computer system 500 from user 506. The first order 560 includes one or more action cards 552a-n (also referred to as action cards 552 for simplicity), where “a,” “b,” and “n” represent integer numbers greater than zero and “n” represents the total number of action cards 552. As illustrated, by default an action card 552a of the action cards 552 is presented in the focused state, as described in more detail in reference to FIG. 4. In the focused state (e.g., focused state 420 of FIG. 4) the action card 552a includes content 574 (e.g., content 412 of FIG. 4) received by the computer system 500 from one or more content sources 530 and a content action 576 (e.g., content action 422 of FIG. 4) associated with content 574. Content sources 530 may include a content network 532, including, but not limited to, a cloud-based content storage and/or distribution system. The content sources 530 may also include system content 534 provided by a data store communicatively coupled with the computer system (e.g., a hard drive, flash drive, local memory, external drive, optical drive, etc.). The first order 560 may be determined based on at least one timing factor for each active computing service of the active computing services 520. For example, the menu application 510 may determine the first order 560 based on arranging the action cards 552 in order of most recent interaction with the user 506 (e.g., last-played). Similarly, the first order 560 may be based on how frequently the user 506 interacts with applications 524 from which active computing services 520 are available (e.g., most-played, favorites, etc.).
Alternatively, the first order 560 may be based on timing of activation of the active computing services 520, for example, which active computing service 520 was most recently activated by the user 506. Activating a computing service may include initiating, executing, and/or running an application on the computer system 500 based on a request by the user 506 and/or by a background process operating on the computer system 500. As such, the first order may arrange the action cards 552 according to a “most recently opened” arrangement.
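The alternative timing factors for the first order can be sketched as interchangeable sort keys. This is a minimal sketch, not the disclosed implementation: the record fields, the strategy names, and the use of numeric timestamps are all assumptions made for illustration.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass(frozen=True)
class ActiveService:
    name: str
    last_interaction: float   # hypothetical timestamp of the latest user interaction
    interaction_count: int    # hypothetical count of user interactions
    activated_at: float       # hypothetical timestamp of when the service was activated


# Each strategy maps a service to the value it is ranked by (larger comes first).
STRATEGIES: Dict[str, Callable[[ActiveService], float]] = {
    "last_played": lambda s: s.last_interaction,
    "most_played": lambda s: s.interaction_count,
    "most_recently_opened": lambda s: s.activated_at,
}


def first_order(services: List[ActiveService],
                strategy: str = "last_played") -> List[ActiveService]:
    """Arrange action cards for active services by the chosen timing factor."""
    return sorted(services, key=STRATEGIES[strategy], reverse=True)


services = [
    ActiveService("game", last_interaction=100.0, interaction_count=50, activated_at=10.0),
    ActiveService("chat", last_interaction=300.0, interaction_count=5, activated_at=20.0),
    ActiveService("replays", last_interaction=200.0, interaction_count=20, activated_at=30.0),
]
```

With this sample data, the default strategy places the chat service first, while `first_order(services, "most_recently_opened")` places the replays service first. The first element of the result would be the card shown in the focused state by default.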

As illustrated in FIG. 5, the second order 562 describes an arrangement of the action cards 554a-n on the dynamic menu 550 presented on the display 540. The action cards 554a-n (also referred to as action cards 554 for simplicity) may be presented in the glanced state by default. In some embodiments, at least one action card of the action cards 554 may be presented in the focused state by default. The arrangement of action cards 554 in the second order 562 may be based on a context, determined by the menu application 510 (e.g., using order determining unit 516). The context may be based on a user application 502 of the computer system 500, a system application 504 of the computer system 500, and/or the user 506. A context of the user 506 (user context) generally includes any of information about the user 506, an account of the user, active background applications and/or services, and/or applications and/or services available to the user 506 from the computer system 500 or from another network environment (e.g., from a social media platform). A context of the application (user application 502 and/or system application 504 context) generally includes any information about the application, specific content shown by the application, and/or a specific state of the application. For instance, the context of a video game player (user context) can include video game applications, music streaming applications, video streaming applications, and social media feeds that the video game player has subscribed to, as well as similar contexts of friends of the video game player. The context of a video game application includes the game title, the game level, a current game frame, an available level, an available game tournament, an available new version of the video game application, and/or a sequel of the video game application.
In some embodiments, context may be determined by the menu application 510 from data describing features, aspects, and/or identifiers of the user application 502, the system application 504, and the user 506 for which context is being determined. For example, the computer system 500 may maintain a profile of the user 506 in system content 534 that includes data describing biographic information, geographic location, as well as usage patterns. From that profile, the menu application 510 may derive one or more elements of user context (e.g., user context 320 of FIG. 3). To illustrate the example, biographical information of the user 506 may indicate that the user 506 is a legal minor. This information may be used in determining a user context based upon which the second order 562 may exclude any inactive computing services 522 restricted to users over the age of legal majority. In this way, the second order 562 is defined for a given context and the order of action cards 554 is also pre-defined based on the context. For other user, system application, or user application contexts, however, the second order 562 may differ. Furthermore, the second order 562 may include action cards 554 for inactive computing services 522 from multiple application types, for which the second order 562 indicates an arrangement of the action cards 554 according to application type. For example, second order 562 may include multiple action cards 554 for media-player services, two for video game services, and one for a party-chat service. The menu application 510 may, in some embodiments, determine the second order 562 such that the media player action cards are grouped together and the video game action cards are grouped together.
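
The context-based exclusion and grouping described above can be sketched as follows. This is an illustration under stated assumptions rather than the disclosed implementation: the `InactiveService` record, the `adults_only` flag, and the `type_priority` list are hypothetical names introduced here.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class InactiveService:
    # Hypothetical record for one inactive computing service.
    name: str
    app_type: str
    adults_only: bool = False


def second_order(services, user_is_minor, type_priority):
    """Drop age-restricted services for minors, then group by application type."""
    allowed = [s for s in services if not (user_is_minor and s.adults_only)]
    rank = {app_type: i for i, app_type in enumerate(type_priority)}
    # sorted() is stable, so cards keep their relative order inside each group.
    return sorted(allowed, key=lambda s: rank.get(s.app_type, len(rank)))


candidates = [
    InactiveService("trailer", "media player"),
    InactiveService("mature game", "video game", adults_only=True),
    InactiveService("playlist", "media player"),
    InactiveService("new level", "video game"),
    InactiveService("party invite", "party chat"),
]
priority = ["media player", "video game", "party chat"]
print([s.name for s in second_order(candidates, True, priority)])
```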

In some embodiments, the dynamic menu may present windows that include active windows and suggested windows. Such windows can be presented dependently or independently of presentation orders related to recency and contexts. An active window corresponds to an active computing service. A suggested window corresponds to an inactive computing service, where the inactive computing service has not been previously used by a user of the computer system, such as one that has not been previously accessed, activated, inactivated, closed, removed, deleted, and/or requested by the user. Such an inactive computing service is referred to herein as a new inactive computing service. For instance, a new inactive computing service of a video game application may include a newly unlocked level in the video game application or a new downloadable level content (DLC) available from a content server. In an example, the menu application may determine a number of active windows corresponding to active computing services and a number of suggested windows corresponding to new inactive computing services for presentation within the dynamic menu. In determining that a suggested window is to be presented within the dynamic menu, new inactive computing services may additionally and/or alternatively be ranked or otherwise compared based on relevance to the user and/or a computing service(s) (whether active or inactive). In some cases, relevance may be determined based on a context of the user or the computing service(s). As described above, context includes system context, application context, and/or user context. For example, a newly unlocked video game level may be included in the dynamic menu as a suggested window based on the relevance to an active video game application, determined by user profile information indicating that the user typically launches unlocked video game levels.

As illustrated in FIG. 5, the first order 560 and the second order 562 indicate a horizontal arrangement of the action cards 552 and the action cards 554 in the dynamic menu 550. For example, the action cards 552 may be arranged in the dynamic menu 550 to the left of the action cards 554 in a linear horizontal arrangement. In this example, the action cards may be arranged in the dynamic menu 550 such that the right-most action card of the first order 560 is the action card 552n, which is positioned adjacent and to the left of the action card 554a, forming a linear arrangement of action cards making up the first order 560 and the second order 562 in the dynamic menu 550. In some embodiments, the arrangement of action cards in the dynamic menu may differ from the linear and horizontal arrangement illustrated in FIG. 5. For example, the arrangement may be circular, vertical, curved, etc. In some embodiments, the dynamic menu may swap the action cards 552 and the action cards 554 by exchanging their positions on the display, putting the action cards 554 in, for example, the left-most position in the arrangement.

FIG. 6 illustrates an example of a display that presents a dynamic menu including interactive action cards, according to an embodiment of the present disclosure. As illustrated in FIG. 6, a first order 610 and a second order 620 define the arrangement of action cards in a dynamic menu 650 presented via a display 640. Multiple action cards 652a-n are arranged horizontally according to the first order 610, further organized in sets of action cards. For example, FIG. 6 includes a first set 612, a second set 614, and a third set 616. The sets may divide the action cards 652 by application type, for example, video game, social media application, web browser, etc. As an example, the action cards 652 arranged in the first set 612 may all present content of video games, while the action cards 652 arranged in the second set 614 may all present content of social media applications. Furthermore, the first order 610 may define a limit on a number of the action cards 652 in each set. For example, the first set 612 may be limited to three action cards 652, while the second set 614 may be limited to only two action cards 652, as illustrated in FIG. 6. The first order 610 may define more than three sets, and the number of sets and the maximum number of action cards for each set may be pre-defined and/or dynamic, to reflect context and usage of the computer system (e.g., computer system 500 of FIG. 5). When the number of possible action cards exceeds a predefined limit, particular action cards may be selected to meet this limit. Various selection techniques are possible. In one example technique, the selection is random. In another technique, the selection is based on a relevance to a user or an application. For instance, a user context and/or an application context can be used to rank the possible action cards and the highest ranked action cards are selected to meet the predefined limit.
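
The relevance-based selection against a per-set limit can be sketched as below. The numeric relevance scores, the `build_sets` helper, and the sample titles are assumptions made for illustration; the disclosure does not specify how relevance is scored.

```python
def build_sets(cards, limits, relevance):
    """Group cards by application type and keep the top-ranked cards per set.

    cards:     list of (title, app_type) tuples (hypothetical shape)
    limits:    dict mapping app_type to the maximum number of cards in its set
    relevance: dict mapping title to a score derived from user/application context
    """
    sets = {}
    for app_type, limit in limits.items():
        candidates = [title for title, card_type in cards if card_type == app_type]
        # Highest relevance first; unknown titles default to a score of 0.
        candidates.sort(key=lambda title: relevance.get(title, 0.0), reverse=True)
        sets[app_type] = candidates[:limit]
    return sets


cards = [
    ("racing", "video game"), ("puzzle", "video game"),
    ("shooter", "video game"), ("platformer", "video game"),
    ("feed", "social media"), ("messages", "social media"), ("stories", "social media"),
]
limits = {"video game": 3, "social media": 2}
relevance = {"racing": 0.9, "puzzle": 0.2, "shooter": 0.7, "platformer": 0.5,
             "feed": 0.4, "messages": 0.8, "stories": 0.1}
print(build_sets(cards, limits, relevance))
```

A random selection, the other technique mentioned above, could be obtained by replacing the sort with `random.sample(candidates, min(limit, len(candidates)))`.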

FIG. 6 further illustrates a second order 620 defining multiple subsets. For example, a first subset 622, a second subset 624, and a third subset 626 divide the action cards 654 making up the second order 620. In some embodiments, the second order 620 may include additional or fewer subsets. The second order 620 may define subsets based on relevance to the first order 610. For example, the first subset 622 may include action cards 654 presenting content for inactive services of active applications (e.g., applications for which an active service is presented in an action card 652). In another example, the second subset 624 may include action cards 654 presenting content for inactive services for applications from the same application type as those presented in the first subset 622. In another example, the third subset 626 may include action cards 654 presenting content for inactive services of a different application type from those presented in the first subset 622 and/or the second subset 624. As with the sets of the first order 610, each subset of the second order 620 may include action cards 654 of a single application type, and the second order 620 may define a maximum number of action cards 654 of each application type to be included in the second order 620. For example, as illustrated in FIG. 6, the first subset 622 includes three action cards 654, while the second subset 624 includes two action cards 654. As with the first order 610, the second order 620 may be pre-defined and/or dynamic, changing relative to the context.
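
One way to read the three subsets is as a partition of the inactive services by their relationship to the active applications. The sketch below assumes each inactive service is described by a hypothetical `(service, application, application type)` tuple; the function name and the sample data are illustrative only.

```python
def partition_second_order(inactive_services, active_apps):
    """Split inactive services into three subsets by relevance to the first order.

    inactive_services: list of (service, app, app_type) tuples (hypothetical shape)
    active_apps:       set of application names that have an active service
    """
    # First subset: inactive services of applications that are currently active.
    first = [s for s in inactive_services if s[1] in active_apps]
    first_types = {s[2] for s in first}
    # Second subset: other applications of the same type as the first subset.
    second = [s for s in inactive_services
              if s[1] not in active_apps and s[2] in first_types]
    # Third subset: everything else, i.e. different application types.
    third = [s for s in inactive_services
             if s[1] not in active_apps and s[2] not in first_types]
    return first, second, third


inactive = [
    ("new level", "Game A", "video game"),
    ("demo", "Game B", "video game"),
    ("trailer", "Video App", "media player"),
]
print(partition_second_order(inactive, {"Game A"}))
```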

FIG. 7 illustrates an example of a display presenting a dynamic menu 750 in response to user interaction, according to an embodiment of the present disclosure. As illustrated in FIG. 7, the dynamic menu 750, presented via display 740, includes a horizontal arrangement of multiple action cards 752a-n from active computing services and multiple action cards 754a-n from inactive computing services. By default, the dynamic menu 750 presents only action card 752a in the focused state 770, and all other action cards in the glanced state 772. In response to a user interaction 710 (e.g., by a user input via input device 220 of FIG. 2), the action card states may be adjusted in the dynamic menu 750 to shift the action card 752a from the focused state 770 to the glanced state 772, while adjusting the action card 754a from the glanced state 772 to the focused state 770. In this way, the dynamic menu 750 facilitates interactions with the content present in the action cards, and provides a user (e.g., user 506 of FIG. 5) with access to applications and content.

FIG. 8 illustrates another example of an action card in a dynamic menu 850, according to an embodiment of the present disclosure. As illustrated in FIG. 8, by default, the dynamic menu 850 presented via a display 840 includes multiple action cards in a glanced state 872 and only one action card 852a in a focused state 870, as described in more detail in reference to FIG. 7. In response to a user interaction 810, for example via a user input device (e.g., user input device 220 of FIG. 2), to select the action card 852a in the focused state 870, the computer system (e.g., computer system 500 of FIG. 5) may generate and/or present via display 840 an action card 820 in the selected state 874 (e.g., selected state 430 of FIG. 4). As illustrated in FIG. 8, the selected state includes a selectable action 822 that permits a user to control dynamic content presented in the selected state 874, as described in more detail in reference to FIG. 4.
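
The state changes described in reference to FIGS. 7-8 can be modeled as a small state machine in which the selected state is reachable only from the focused card. The sketch below is a simplification under stated assumptions: it tracks the selected state on the focused card itself rather than generating a separate action card, and the `State` and `DynamicMenu` names are hypothetical.

```python
from enum import Enum


class State(Enum):
    GLANCED = "glanced"
    FOCUSED = "focused"
    SELECTED = "selected"


class DynamicMenu:
    """Tracks which card is focused and whether it has been expanded."""

    def __init__(self, titles):
        self.titles = list(titles)
        self.focus = 0          # by default the first card is focused
        self.selected = False

    def state(self, index):
        if index != self.focus:
            return State.GLANCED
        return State.SELECTED if self.selected else State.FOCUSED

    def move_focus(self, step):
        # Moving focus collapses any selected card and clamps to the card range.
        self.selected = False
        self.focus = max(0, min(len(self.titles) - 1, self.focus + step))

    def select(self):
        # Only the focused card can be selected, so the selected state is
        # always reached via the focused state.
        self.selected = True


menu = DynamicMenu(["video game", "party chat", "trailer"])
menu.move_focus(1)
menu.select()
print([menu.state(i).value for i in range(3)])
```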

FIG. 9 illustrates an example of interaction with a dynamic menu 950, according to an embodiment of the present disclosure. As illustrated in FIG. 9, the dynamic menu 950 presented via a display 940 may be entered and exited through user interaction using an input device (e.g., input device 220 of FIG. 2). When the dynamic menu 950 is active, at least one action card is in the focused state. As illustrated in FIG. 9, action card 952a is in the focused state, while all remaining action cards are in the glanced state. In response to a user interaction 910, for example, a user request to exit, hide, minimize, or collapse the dynamic menu 950, the computer system (e.g., computer system 500 of FIG. 5) may adjust the action card 952a from the focused state to the glanced state via the action of a menu application (e.g., menu application 510 of FIG. 5). Similarly, in response to a user interaction 920 to enter, show, maximize, or erect the dynamic menu 950, the computer system, via the menu application, may return the same action card 952a to the focused state from the glanced state. In some embodiments, the user interaction 910 and the user interaction 920 are the same action on the input device (e.g., pressing a single button successively to both hide and show the dynamic menu 950). Alternatively, the user interaction 910 and the user interaction 920 may be different actions, and reentering the dynamic menu 950 may not return action card 952a to the focused state, depending on a change in context between exiting and reentering the dynamic menu 950. For example, if the user exited the dynamic menu 950 to make some changes to active applications and/or processes via a different avenue of user interaction (e.g., static menu area 140 of FIG. 1), the ordering of action cards in the dynamic menu 950 may change, such that the action card 952 in the focused state may be different upon reentry, as described in more detail in reference to FIGS. 11-12, below.

FIG. 10 illustrates an example of a computer system 1000 that adjusts a dynamic menu 1050 in response to activation of an application 1026a, according to an embodiment of the present disclosure. As illustrated in FIG. 10, while the dynamic menu 1050 is being presented via a display 1040, multiple applications 1024 on the computer system may be running active computing services 1020, while other applications 1026 may be inactive. For example, a video game may be running, along with a party chat application, a web browser application, and a live-stream video application. As illustrated in FIG. 10, the application 1026a initializes while the dynamic menu 1050 is presented. In response, a menu application 1010 running on the computer system 1000 determines when the application 1026a activates, and generates and/or presents an additional action card 1056 as part of a first order 1060 in the dynamic menu 1050. As such, the additional action card 1056 appears in the dynamic menu 1050 in a horizontal arrangement of action cards 1052 as described in more detail in reference to FIG. 5. While FIG. 10 shows the additional action card 1056 in the left-most position, it may appear in another position in the first order 1060. The process of adding the additional action card 1056 to the dynamic menu may occur in real time and without any user interaction as a default process of the menu application 1010. Alternatively, the menu application may generate and/or present a notification of a new active computing service 1020 corresponding to the application 1026a, for which the user (e.g., user 506 of FIG. 5) may select to add the additional action card 1056 to the dynamic menu 1050. In some embodiments, the additional action card 1056 may be presented in the focused state initially. Alternatively, the additional action card 1056 may be presented in the glanced state initially, as illustrated in FIG. 10.

FIG. 11 illustrates an example of a dynamic menu 1150 adjusting in response to a deactivated application 1124n, according to an embodiment of the present disclosure. As illustrated in FIG. 11, an active application 1124 running on a computer system 1100 deactivates, as determined by a menu application 1110 running on the computer system 1100, while the computer system 1100 is presenting the dynamic menu 1150 via a display 1140. The deactivated application 1124n is different from the menu application 1110. As illustrated in FIG. 11, the dynamic menu presents action cards 1152 for each active application 1124, and action cards 1154 for inactive applications 1126, as described in more detail in reference to FIG. 5. As described in more detail in reference to FIG. 9, at least one action card is presented in the focused state, while the remaining action cards are shown in the glanced state. As illustrated in FIG. 11, an action card 1152a is in the focused state, while a different action card 1156 is associated with the deactivated application 1124n. Unlike what was described in reference to FIG. 10, the menu application 1110 does not reorganize and/or adjust the dynamic menu 1150 after determining that the deactivated application 1124n is no longer active. Instead, the menu application 1110 continues to present the different action card 1156 in the dynamic menu 1150, until the computer system receives a user interaction 1130, including, but not limited to, a user request to remove the dynamic menu from the display. For example, as described in more detail in reference to FIG. 1, the dynamic menu 1150 may be included in a user interface (e.g., user interface 100 of FIG. 1) presented on the display 1140. In this way, as described in reference to FIG. 9, the user interaction 1130 may prompt the menu application 1110 to remove or minimize the dynamic menu 1150.
Upon receiving a second user interaction 1132, for example, by repeating the same command to reverse the change, menu application 1110 repopulates the dynamic menu 1150 without the different action card 1156 and with the same action card 1152a in the focused state.

FIG. 12 illustrates an example of a menu application 1210 determining an inactive application 1228 and adding a corresponding action card 1256 to a dynamic menu 1250, according to an embodiment of the present disclosure. As illustrated in FIG. 12, a computer system 1200 runs a menu application 1210 that has determined one or more applications 1226a-n are inactive, and has generated and/or presented action cards 1254a-n corresponding to the one or more applications 1226 in the dynamic menu 1250 via a display 1240. The action cards 1254 are presented according to a second order (e.g., second order 562 of FIG. 5), as described in more detail in reference to FIG. 5. The inactive application 1228 may have become inactive while the dynamic menu was presented, as described in more detail in reference to FIG. 11. While the dynamic menu 1250 is presented, the menu application 1210 does not adjust the dynamic menu 1250, nor does it populate additional action cards for inactive computing services 1222 for which action cards are not already presented, until the computer system 1200 receives user input 1230 requesting to remove the dynamic menu 1250 from the display 1240. When the computer system 1200 receives a second user input 1232 prompting the menu application 1210 to generate and/or present the dynamic menu 1250, the menu application 1210 populates the dynamic menu 1250 including the corresponding action card 1256 for the inactive application 1228.
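
The contrast between FIG. 10 (an activation updates the visible menu immediately) and FIGS. 11-12 (a deactivation is reflected only after the menu is closed and reopened) can be sketched as follows. The `DynamicMenuModel` class and its method names are hypothetical, and placing a deactivated application at the end of the second order on reopening is an assumption of this sketch.

```python
class DynamicMenuModel:
    """Keeps bookkeeping lists up to date but defers some visible changes."""

    def __init__(self, active, inactive):
        self.active = list(active)      # applications behind first-order cards
        self.inactive = list(inactive)  # applications behind second-order cards
        self.visible = False
        self.cards = []                 # what is currently shown, left to right

    def open(self):
        # Repopulate from the current bookkeeping every time the menu is shown.
        self.cards = self.active + self.inactive
        self.visible = True

    def close(self):
        self.visible = False

    def on_activated(self, app):
        if app in self.inactive:
            self.inactive.remove(app)
        if app not in self.active:
            self.active.insert(0, app)
        # Activation is reflected right away while the menu is shown.
        if self.visible and app not in self.cards:
            self.cards.insert(0, app)

    def on_deactivated(self, app):
        if app in self.active:
            self.active.remove(app)
            self.inactive.append(app)
        # self.cards is intentionally left untouched until the next open().


menu = DynamicMenuModel(["video game", "party chat"], ["trailer"])
menu.open()
menu.on_deactivated("party chat")
print(menu.cards)   # card for the deactivated application is still shown
menu.close()
menu.open()
print(menu.cards)   # now reflects the deactivation
```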

FIG. 13 illustrates an example of a computer system 1300 for presentation of content in an interactive menu and an action card, according to an embodiment of the present disclosure. As described in more detail in reference to FIGS. 1-2, the computer system 1300 may be a videogame system, a backend system, or any other system configured to store and present content on a display. As illustrated, the computer system 1300 includes multiple target applications 1302a-n (hereinafter also referred to as target application 1302, target applications 1302, target app 1302, or target apps 1302, for simplicity), where “a,” “b,” and “n” are positive integers and “n” refers to the total number of target applications. As illustrated, the computer system 1300 further includes a menu application 1310, a cache 1318, and an action card application 1360. Each of the target apps 1302 may correspond to a different application running on the computer system (e.g., video game console 210 of FIG. 2) or on a backend system, as described in more detail in reference to FIGS. 1-2. The target application 1302 may be a system application or a user application, and is different from the menu application 1310. Generally, a system application is an application that does not interact with a user of the computer system 1300, while a user application is an application configured to interact with a user of the computer system 1300.

The cache 1318 may include a local memory on the computer system (e.g., a hard disk, flash drive, RAM, etc.) configured for rapid storage and retrieval of data to minimize latency. As illustrated, the menu application 1310 includes a determining unit 1312, one or more data templates 1314, and a computing service 1316. The determining unit 1312 may be implemented as software and/or hardware, such that the menu application 1310 may determine a data template 1314 that is defined for a specific target application 1302. The data templates 1314, at least in part, identify the types of content for the computer system 1300 to store in the cache 1318 for the target application 1302 based on the association of a data template 1314 with a target application 1302. In some cases, each data template 1314 further associates a type of content (e.g., audio, video, video game content, etc.) with one or more presentation states.

The menu application may store data 1320 in the cache 1318, where the data 1320 may include multiple types of content including, but not limited to, first content 1322 and second content 1324. For example, as described in more detail in reference to FIGS. 1-2, the content may include video content, audio content, video game content, party chat information, etc. The data 1320 may also include a first uniform resource identifier (URI) of the first content 1322, where a URI typically is characterized by a string of characters identifying a resource following a predefined set of syntactical rules. For example, a URI may identify a resource to facilitate interaction involving that resource between networked systems. Similarly, the data 1320 may include URI information for each type of content, for example, a second URI of the second content 1324. The first content 1322 and the second content 1324 may be identified in one or more data templates 1314 and associated in the data templates 1314 with a glanced state, a focused state, and/or a selected state, as described in more detail in reference to FIGS. 1-2. For example, the first content 1322 may be associated with a glanced state and with a focused state, and the second content 1324 may be associated only with the focused state, as defined in a given data template 1314. In this way, the cache 1318 may store multiple types of content associated with multiple data templates 1314, making up data for different target applications 1302.
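
The association between content, URIs, and presentation states can be sketched with plain dictionaries. The template layout, the cache layout, the `example://` URIs, and the `content_for_state` helper are all hypothetical; the sketch mirrors only the example above, in which the first content is tied to the glanced and focused states and the second content to the focused state alone.

```python
from copy import deepcopy

# Hypothetical data template for one target application: content name -> states.
DATA_TEMPLATE = {
    "first_content": {"glanced", "focused"},
    "second_content": {"focused"},
}

# Hypothetical cache entry; the URIs are placeholders, not real resources.
cache = {
    "video_game_app": {
        "first_content": {"uri": "example://content/first", "value": "cover art"},
        "second_content": {"uri": "example://content/second", "value": "progress"},
    }
}


def content_for_state(app_id, state, cache, template):
    """Return a copy of the cached content that the template ties to `state`."""
    entry = cache[app_id]
    return {
        name: deepcopy(item)
        for name, item in entry.items()
        if state in template.get(name, set())
    }


print(sorted(content_for_state("video_game_app", "glanced", cache, DATA_TEMPLATE)))
print(sorted(content_for_state("video_game_app", "focused", cache, DATA_TEMPLATE)))
```

Returning copies rather than references keeps the cached data unchanged if a consumer modifies what it receives.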

As illustrated, the computer system 1300 is communicatively coupled with one or more content sources 1330 from which the computer system may fetch and/or receive data 1320. For example, the content sources 1330 may include a content network 1332, including, but not limited to, a cloud-based content storage and/or distribution system. The content sources 1330 may also include system content 1334 provided by a data store communicatively coupled with the computer system (e.g., a hard drive, flash drive, local memory, external drive, optical drive, etc.).

As illustrated, the computer system 1300 is communicatively coupled to an input device 1340, which may include, but is not limited to, a user input device as described in more detail in reference to FIG. 2 (e.g., video game controller 220 of FIG. 2). The input device 1340 may provide user input to the computer system 1300 to facilitate user interaction with data 1320 stored in the cache 1318. As described in more detail below, the interaction may take place via one or more menus and/or windows in a UI. For example, the computer system 1300 may generate user interface data to configure a user interface including a static menu and a dynamic menu, as described in more detail in reference to FIG. 1 (e.g., static menu area 140 and dynamic menu area 130 of menu 120 of FIG. 1).

As illustrated, the computer system 1300 is communicatively coupled with display 1350. Display 1350 may include any general form of display compatible with interactive user interfaces (e.g., display 230 of FIG. 2). Display 1350 may include an augmented reality and/or virtual reality interface produced by a wearable and/or portable display system, including but not limited to a headset, mobile device, smartphone, etc. In response to input provided by the input device 1340, received as user input by the computer system 1300, the computer system 1300 may present a dynamic menu 1352 via display 1350 (e.g., dynamic menu area 130 of FIG. 1). As described in more detail in reference to FIGS. 1-2, the display 1350 may present a static menu and the dynamic menu 1352, where the dynamic menu 1352 includes one or more action cards 1354. As illustrated, the action cards 1354 are presented in the glanced state, and each may correspond to different target applications 1302, and each may be populated with different data 1320 according to a different data template 1314 associated with the different target applications 1302. For example, the action cards 1354 may include an action card that corresponds to a first target application 1302, such that the action card presents first content 1322 based on data from the cache 1318 and according to a data template 1314 defined for the first target application 1302. As described in more detail in reference to FIG. 5, user interaction with the computer system 1300 via the dynamic menu 1352 may switch one or more of the action cards 1354 from the focused state to the glanced state, and vice versa. As illustrated in FIG. 13, action card 1354b is in the focused state, but interaction with the dynamic menu 1352 permits a user (e.g., user 506 of FIG. 5) to switch the focused state presentation, for example, from the action card 1354b to the action card 1354a.

As described in more detail in reference to FIGS. 1-2, the computer system 1300 may receive one or more types of user interaction via the input device 1340 taking the form of a request for the selected state associated with the target application of the action card 1354, as described in more detail in reference to FIG. 4. This may include a user button press on the action card 1354 and/or another form of user interaction via the input device 1340 that constitutes a command option (e.g., command option 440 of FIG. 4). The computer system 1300 may respond to the user input by generating a copy of the data associated with the first target application 1302 from the cache and sending the copy of the data associated with the first target application 1302 to the action card application 1360. As illustrated, the action card application 1360 is different from the menu application and the first target application 1302. The action card application 1360 may be configured to generate user interface data, such that a user interface generated using the user interface data may present, via display 1350, an action card 1370 that corresponds to the first target application 1302 presented in the selected state. The action card 1370, in the selected state, may include both first content 1372 and second content 1374 presented based on the data template 1314 associated with the target application 1302. The first content 1372 and the second content 1374 may be populated in the action card 1370 by the action card application 1360 using the copied data sent to the action card application 1360 by the menu application 1310, in response to user input received by the computer system 1300 via the input device 1340.

FIG. 14 illustrates an example flow for presenting content in an interactive menu, according to embodiments of the present disclosure. The operations of the flow can be implemented as hardware circuitry and/or stored as computer-readable instructions on a non-transitory computer-readable medium of a computer system, such as a video game system. As implemented, the instructions represent modules that include circuitry or code executable by a processor(s) of the computer system. The execution of such instructions configures the computer system to perform the specific operations described herein. Each circuitry or code in combination with the processor represents a means for performing a respective operation(s). While the operations are illustrated in a particular order, it should be understood that no particular order is necessary and that one or more operations may be omitted, skipped, and/or reordered.

In an example, the flow includes an operation 1402, where the computer system determines active applications and inactive applications. As described in more detail in reference to FIG. 5, applications on a computer system (e.g., computer system 500 of FIG. 5) may be active or inactive, and the computer system may run a menu application (e.g., menu application 510 of FIG. 5) to determine which applications are active and inactive.

In an example, the flow includes operation 1404, where the computer system determines a first order of presentation of action cards corresponding to the active applications (e.g., active computing services 520 of FIG. 5). As described in more detail in reference to FIG. 5, the menu application determines a first order (e.g., first order 560 of FIG. 5) describing the number, order, and arrangement of action cards (e.g., action cards 552 of FIG. 5) to present in a dynamic menu (e.g., dynamic menu 550 of FIG. 5). The action cards may be associated with the active applications and each may be associated with a different application. As described in more detail in reference to FIG. 5, the first order may be divided into sets, and the action cards may be arranged in order of most recent interaction and/or most frequent interaction. As described in more detail in reference to FIG. 6, the sets may include only action cards for a single application type, and may be limited in the number of action cards in each set, depending on the application type.

In an example, the flow includes operation 1406, where the computer system determines a context of at least one of a user application, a system application, or a user. As described in more detail in reference to FIG. 5, context includes a system application context, a user application context, and/or a user context. User context generally includes any of information about the user, an account of the user, active background applications and/or services, and/or applications and/or services available to the user from the computer system or from another network environment (e.g., from a social media platform). User application and/or system application context generally includes any information about the application, specific content shown by the application, and/or a specific state of the application. For instance, the context of a video game player (user context) can include video game applications, music streaming applications, video streaming applications, and social media feeds that the video game player has subscribed to, as well as similar contexts of friends of the video game player. The context of a video game application includes the game title, the game level, a current game frame, an available level, an available game tournament, an available new version of the video game application, and/or a sequel of the video game application.

In an example, the flow includes operation 1408, where the computer system determines a second order of presentation of action cards corresponding to the inactive computing services. As described in more detail in reference to FIGS. 5-6, the second order (e.g., second order 562 of FIG. 5) may include multiple action cards (e.g., action cards 554 of FIG. 5) arranged horizontally by relevance to the action cards included in the first order. For example, relevance may be determined by dividing the second order into multiple subsets (e.g., subset 622 of FIG. 6) corresponding, for example, to action cards presenting content for inactive services of active applications (e.g., applications for which an active service is presented in an action card). Alternatively, a subset may be presented including action cards presenting content for inactive services for applications from the same application type as those in the first order. Alternatively, a subset may be presented including action cards presenting content for inactive services of a different application type from those presented in the first order.

In an example, the flow includes operation 1410, where the computer system presents, in a dynamic menu, the action cards according to the first order and the action cards according to the second order, with only one of the action cards in a focused state and the remaining action cards in a glanced state. As described in more detail in reference to FIGS. 5-13, the menu application (e.g., menu application 510 of FIG. 5) may generate and/or present by default a dynamic menu (e.g., dynamic menu 550 of FIG. 5) with a single action card (e.g., action card 554a of FIG. 5) in the focused state, with the remaining action cards in the glanced state. In some embodiments, multiple action cards may be presented in the focused state. As described in more detail in reference to FIG. 7, the focused state may be shifted from one action card to another by user interaction with the computer system. As described in more detail in reference to FIG. 8, an action card may be expanded into the selected state by a different user interaction and/or by repeating the same interaction with the computer system. As described in more detail in reference to FIG. 9, navigating away from the dynamic menu within a user interface may switch all action cards to the glanced state, whereupon the action card having been in the focused state is returned to the focused state when the user returns to the dynamic menu. As described in more detail in reference to FIG. 10, the menu application (e.g., menu application 1010 of FIG. 10) may add additional action cards (e.g., additional action card 1056 of FIG. 10) to the first order (e.g., first order 1060 of FIG. 10) when an application activates (e.g., application 1026a of FIG. 10). As described in more detail in reference to FIG. 11, the menu application may determine that an application has deactivated (e.g., application 1124n of FIG. 11), and may continue to present the corresponding action card (e.g., action card 1156 of FIG. 11) in the dynamic menu until the user interacts with the computer system to remove the dynamic menu from the user interface and/or display. For example, the user may interact with the static menu (e.g., static menu area 140 of FIG. 1) to close the dynamic menu. Upon reopening the dynamic menu, the menu application may remove the action card associated with the deactivated application from the dynamic menu. As described in more detail in reference to FIG. 12, the dynamic menu similarly may add an action card (e.g., action card 1256 of FIG. 12) to the second order only upon reopening the dynamic menu after the user navigates away from and/or closes the dynamic menu.
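The behavior described above (a single focused card by default, a selected state reached only from the focused state, all cards glanced while the user is away, and removal of a deactivated application's card deferred until the menu is reopened) can be sketched as a small state holder. The `DynamicMenu` class and its method names are hypothetical and only illustrate the default single-focus embodiment. The sketch also assumes a non-empty menu with unique card identifiers.

```python
from enum import Enum


class State(Enum):
    GLANCED = "glanced"
    FOCUSED = "focused"
    SELECTED = "selected"


class DynamicMenu:
    def __init__(self, first_order, second_order):
        self.cards = list(first_order) + list(second_order)
        self.focus_index = 0      # one card is focused by default
        self.selected = False
        self.in_menu = True       # False after navigating away
        self.deactivated = set()  # removals deferred until reopen

    def state_of(self, card):
        if not self.in_menu or self.cards.index(card) != self.focus_index:
            return State.GLANCED
        return State.SELECTED if self.selected else State.FOCUSED

    def shift_focus(self, step):
        self.selected = False
        last = len(self.cards) - 1
        self.focus_index = max(0, min(last, self.focus_index + step))

    def select(self):
        # The selected state is reached only via the focused state.
        if not self.in_menu:
            raise RuntimeError("no focused card to select")
        self.selected = True

    def navigate_away(self):
        self.in_menu = False  # every card drops to the glanced state
        self.selected = False

    def return_to_menu(self):
        self.in_menu = True   # the remembered card is focused again

    def mark_deactivated(self, card):
        self.deactivated.add(card)  # the card stays visible for now

    def reopen(self):
        focused = self.cards[self.focus_index]
        self.cards = [c for c in self.cards if c not in self.deactivated]
        self.deactivated.clear()
        self.focus_index = self.cards.index(focused) if focused in self.cards else 0
        self.selected = False
        self.in_menu = True


menu = DynamicMenu(["chat", "music"], ["store", "news"])
menu.shift_focus(1)            # focus moves from "chat" to "music"
menu.mark_deactivated("chat")  # "chat" is still listed in menu.cards
menu.reopen()                  # "chat" is removed only now
```

Keeping the deactivated card until `reopen()` mirrors the description: the menu contents do not change underneath the user while the menu is on screen.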

FIG. 15 illustrates an example of launching an application module and terminating a menu application, according to embodiments of the present disclosure. In particular, the menu application is used to present a menu that includes a plurality of windows. As illustrated, a menu application 1510 supports the presentation of a window 1520 in a glanced state 1522, a focused state 1524, and a selected state 1526 depending on user input from an input device as explained herein above. The window 1520 corresponds to an application (referred to herein as an “underlying application” in the interest of clarity). Metadata of the underlying application may be received by the menu application to populate, by the menu application 1510, the content and selectable actions on the content in the window 1520 as relevant to each state (the content and selectable actions are shown as “action card component 1512”).

In an example, when the window 1520 is added (along with other windows corresponding to different underlying applications) to the menu, the menu application 1510 also instantiates an application module 1530. The application module 1530 can be a logical container for coordinated objects related to a task (e.g., to present an interfacing window) with optional programming window. The application module 1530 can have parameters common to the different underlying applications (e.g., common objects), whereby it represents a shell from which any of these applications can be quickly launched. When the window 1520 is in the glanced state 1522 or the focused state 1524, the menu application 1510 does not pass content or application-related information to the application module 1530 (this is illustrated in FIG. 15 with a blank area of the application module 1530).

When the window 1520 starts transitioning from the focused state 1524 to the selected state 1526 in response to a user selection of the window 1520, the size, content, and selectable actions of the window 1520 start changing. The menu application passes information about this change, along with parameters specific to the underlying application that corresponds to the window 1520 (e.g., state information, programming logic, etc.), to the application module 1530. Accordingly, the application module 1530 would have the same action card component 1532 as the action card component 1512 presented in the window 1520 during the transition to and in the selected state 1526. In addition, the application module 1530 corresponds to an instantiation of the underlying application given the specific parameters of this application.

During the transition and in the selected state 1526, the application module 1530 supports an overlay window 1540 that has the same size and includes the same content and actions as the window 1520. A rendering process presents the overlay window 1540 over the window 1520, such that both windows completely overlap during the transition and in the selected state 1526. Hence, from a user perspective, the user would only perceive one window (e.g., the overlay window 1540), while in fact two windows are presented on top of each other.

Upon the end of the transition or upon user input requesting action, the window 1520 may be dismissed (e.g., closed) and the overlay window 1540 may be used instead. From that point, the overlay window 1540 becomes the interface to the underlying application and the menu application 1510 can be terminated (or run in the background).
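The hand-off from the menu window to the overlay window can be sketched as below. This is an illustration under stated assumptions rather than the disclosed implementation: the `Window` and `ApplicationModule` classes, the `launch` and `select_window` names, and the example parameters are all hypothetical. The sketch shows only the data flow: the module starts as an empty shell holding common parameters, receives application-specific parameters when a focused window is selected, and produces an overlay with the same size, content, and actions as the window.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Window:
    app_id: str
    size: tuple
    content: str
    actions: list
    state: str = "glanced"


@dataclass
class ApplicationModule:
    common_params: dict                # shared by all underlying applications
    app_params: Optional[dict] = None  # stays empty until a window is selected
    overlay: Optional[Window] = None

    def launch(self, window, app_params):
        """Receive app-specific parameters and mirror the window as an overlay."""
        self.app_params = dict(app_params)
        self.overlay = Window(
            window.app_id, window.size, window.content,
            list(window.actions), window.state,
        )
        return self.overlay


def select_window(window, module, app_params, selected_size):
    """Move a focused window to the selected state and hand off to the module."""
    if window.state != "focused":
        raise ValueError("only a focused window can be selected")
    window.state = "selected"
    window.size = selected_size
    return module.launch(window, app_params)


module = ApplicationModule(common_params={"renderer": "default"})
window = Window("music_app", (320, 180), "Now playing", ["pause", "skip"], "focused")
overlay = select_window(window, module, {"track": 7}, (640, 360))
# At this point the menu window could be dismissed and the overlay used instead.
```

Instantiating the shell before any selection is what lets the later launch be quick: only the application-specific parameters remain to be passed at selection time.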

FIG. 12 illustrates an example flow for launching an application module and terminating a menu application, according to embodiments of the present disclosure. The operations of the flow can be implemented as hardware circuitry and/or stored as computer-readable instructions on a non-transitory computer-readable medium of a computer system, such as a video game system. As implemented, the instructions represent modules that include circuitry or code executable by a processor(s) of the computer system. The execution of such instructions configures the computer system to perform the specific operations described herein. Each circuitry or code in combination with the processor represents a means for performing a respective operation(s). While the operations are illustrated in a particular order, it should be understood that no particular order is necessary and that one or more operations may be omitted, skipped, and/or reordered. The example flow in FIG. 12 can be performed in conjunction with or separately from the example flow in FIG. 10.

In an example, the flow includes an operation 1202, where the computer system presents video content of a video game application (e.g., first content of a first application) on a display. The video game application can be executed on the computer system and the video game content can be presented based on the game play of a user of the computer system (e.g., a video game player).

In an example, the flow includes an operation 1204, where the computer system receives user input requesting a menu. For instance, the user input is received from an input device (e.g., a video game controller) and corresponds to a user push of a key or button on the input device (e.g., a particular video game controller button) or any other type of input (e.g., a mouse click). An event may be generated from the user input indicating a command. The command can be for the presentation of the menu. Otherwise, the command can be for other controls (e.g., the display of a home user interface, an exit from the video game application, etc.) depending on the type of the user input.

In an example, the flow includes an operation 1206, where the computer system presents the menu, where this menu includes a plurality of windows (e.g., action cards) displayed in a dynamic area of the menu and a plurality of icons displayed in a static area of the menu. For instance, the menu is presented in response to the command for the presentation of the menu. In addition, a user context and an application context can be determined and used to select particular applications or remote computing services that are likely of interest to the user. Each window within the dynamic menu area corresponds to one of these applications. The windows can also be presented in a glanced state. In one illustration, the window likely of most interest to the user given the user context and application context can be shown in another state (e.g., the focused state). In another illustration, if one of the windows was selected or was in a focused state upon the most recent dismissal of the menu, that window can be presented in the focused state.

In an example, the flow includes an operation 1208, where the computer system instantiates an application module. The application module can have parameters common to the different applications that correspond to the windows of the menu.

In an example, the flow includes an operation 1210, where the computer system receives a user scroll through the windows within the dynamic menu area (or any other types of interactions within the dynamic menu area indicating a focus of the user). The user scroll can be received based on user input from the input device and a relevant event can be generated based on this input.

In an example, the flow includes an operation 1212, where the computer system presents a window (e.g., an application window corresponding to the application that currently has the user focus) in the other state (e.g., the focused state). For instance, if the user scroll is over the window, that window is presented in the focused state, while the presentation of the remaining windows is in the glanced state.

In an example, the flow includes an operation 1214, where the computer system receives a user selection of the window. The user selection can be received based on user input from the input device and while the window is presented in the focused state. A relevant event can be generated based on this input.

In an example, the flow includes an operation 1216, where the computer system presents the window in a different state (e.g., a selected state). For instance, the window's size is changed from the focused state to the selected state, while the presentation of the remaining windows remains in the glanced state.

In an example, the flow includes an operation 1218, where the computer system updates the application module to include parameters specific to the corresponding application of the selected window and to present an overlay window. For instance, the size, content, and actions of the window and the state information and programming logic of the application are passed to the application module, thereby launching an instance of the application from the application module, where this instance can use the information about the size, content, and actions of the window for the presentation of the overlay window.

In an example, the flow includes an operation 1220, where the computer system presents the overlay window. For instance, as the window transitions from the focused state to the selected state or once in the selected state, a rendering process also presents the overlay window over the window.

In an example, the flow includes an operation 1222, where the computer system dismisses the presentation of the window. For instance, upon the presentation of the overlay window or upon the transition to the selected state, the window is closed. In addition, the menu application can be terminated or can be moved to the background.

In an example, the flow includes an operation 1224, where the computer system presents an option to perform an action (e.g., a selectable action) on the overlay window (e.g., to pin to side or to show as a picture in picture). For instance, the option is presented as an icon within the overlay window upon the transition to the selected state.

In an example, the flow includes an operation 1226, where the computer system receives a user selection of the option. The user selection can be received based on user input from the input device and a relevant event can be generated based on this input.

In an example, the flow includes an operation 1228, where the computer system performs the action. For instance, a pin-to-side operation or a picture-in-picture operation is performed on the overlay window, resulting in a pinned window or a picture-in-picture window.

In an example, the flow includes an operation 1230, where the computer system changes user control from the menu to the video game content. For instance, the presentation of the menu is dismissed, while the presentation of the content continues in the pinned window or the picture-in-picture window. User input received subsequently can be used to control the video game application or to request the menu again. In an illustration, a subset of the buttons, keys, or gestures on the input device is mapped to and usable for controlling the video game application, while another subset of the buttons, keys, or gestures is mapped to and usable for controlling the pinned window or picture-in-picture window. In this way, the user can have simultaneous control over both the video game application and the other application (that interfaces with the user through the pinned window or picture-in-picture window) depending on the buttons on the input device.
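The split control described above can be pictured as a routing table from inputs to targets. The following is a minimal sketch; the button names, the two subsets, and the `route_input` function are invented for illustration, since the description does not name specific buttons or a routing mechanism.

```python
# Hypothetical button names and subsets; the description names no specific buttons.
GAME_BUTTONS = {"cross", "circle", "left_stick"}
PINNED_WINDOW_BUTTONS = {"touchpad", "r3"}
MENU_BUTTON = "home"


def route_input(button):
    """Return the target that should handle a button press."""
    if button == MENU_BUTTON:
        return "menu"           # request the menu again
    if button in GAME_BUTTONS:
        return "video_game"     # control stays with the video game application
    if button in PINNED_WINDOW_BUTTONS:
        return "pinned_window"  # control the pinned or picture-in-picture window
    return "ignored"


for pressed in ["cross", "touchpad", "home"]:
    print(pressed, "->", route_input(pressed))
```

Keeping the two subsets disjoint is what allows both applications to be controlled at the same time without an explicit mode switch.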