U.S. Pat. No. 11,344,805

GAMEPLAY EVENT DETECTION AND GAMEPLAY ENHANCEMENT OPERATIONS

Assignee: Dell Products L.P.

Issue Date: January 30, 2020

Abstract

Information handling systems, methods, and computer-readable media enable gameplay event detection and gameplay enhancement operations. One or more in-game events may be detected by monitoring audio content, by monitoring video content, by monitoring user input, by monitoring other information, or a combination thereof. The one or more in-game events (and information related to the one or more in-game events) may be stored at a database. Information of the database may be accessed to trigger one or more gameplay enhancement operations. The one or more gameplay enhancement operations may include an automatic highlight capture operation, a dynamic screen aggregation operation, a dynamic position switch operation, a dynamic player switch operation, a gameplay enhancement operation using one or more peripheral devices (e.g., a lighting effect or a haptic feedback event), one or more other gameplay enhancement operations, or a combination thereof.

Description

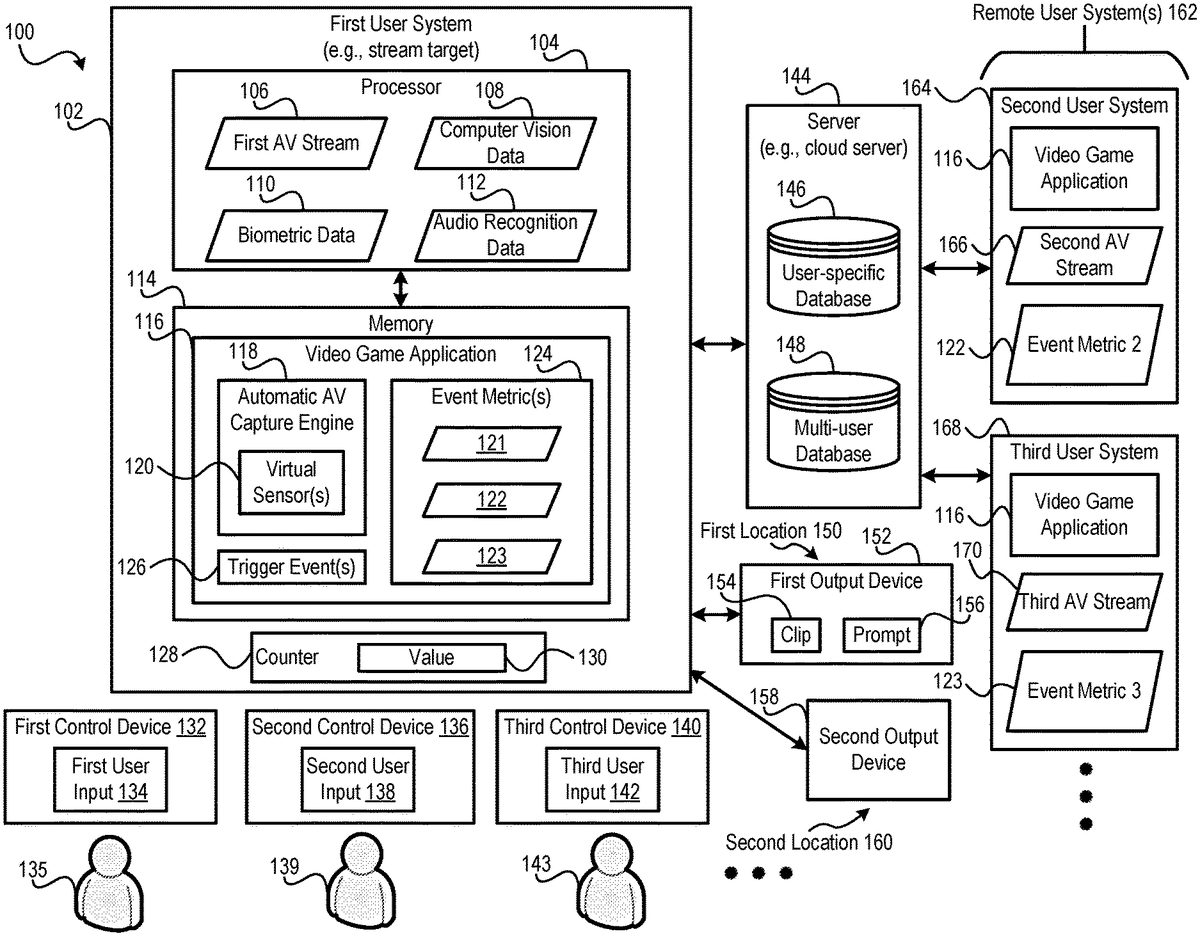

DETAILED DESCRIPTION

For purposes of this disclosure, an information handling system may include any instrumentality or aggregate of instrumentalities operable to compute, calculate, determine, classify, process, transmit, receive, retrieve, originate, switch, store, display, communicate, manifest, detect, record, reproduce, handle, or utilize any form of information, intelligence, or data for business, scientific, control, or other purposes. For example, an information handling system may be a personal computer (e.g., desktop or laptop), tablet computer, mobile device (e.g., personal digital assistant (PDA) or smart phone), server (e.g., blade server or rack server), a network storage device, or any other suitable device and may vary in size, shape, performance, functionality, and price. The information handling system may include random access memory (RAM), one or more processing resources such as a central processing unit (CPU) or hardware or software control logic, ROM, and/or other types of nonvolatile memory. Additional components of the information handling system may include one or more disk drives, one or more network ports for communicating with external devices as well as various input and output (I/O) devices, such as a keyboard, a mouse, touchscreen and/or a video display. The information handling system may also include one or more buses operable to transmit communications between the various hardware components. Certain examples of information handling systems are described further below, such as with reference to FIG. 1.

FIG. 1 depicts an example of a system 100. The system 100 includes multiple user systems, such as a first user system 102 and one or more remote user systems 162. In the example of FIG. 1, the one or more remote user systems 162 include a second user system 164 and a third user system 168. The user systems 102, 164, and 168 may each include a desktop computer, a laptop computer, a tablet, a mobile device, a server, or a gaming console, as illustrative examples. In one example, the first user system 102 is remotely coupled to the one or more remote user systems 162 via a network. The network may include a local area network (LAN), a wide area network (WAN), a wireless network (e.g., a cellular network), a wired network, the Internet, one or more other networks, or a combination thereof.

The system 100 may further include a server 144 (e.g., a cloud server). Although the server 144 is described as a single device, in some implementations, functionalities of the server 144 may be implemented using multiple servers. The server 144 may be coupled to the first user system 102 and to the one or more remote user systems 162 via one or more networks, such as a local area network (LAN), a wide area network (WAN), a wireless network (e.g., a cellular network), a wired network, the Internet, one or more other networks, or a combination thereof.

The system 100 may further include one or more control devices, such as a first control device 132, a second control device 136, and a third control device 140. As one example, the first control device 132 includes a first video game controller, the second control device 136 includes a second video game controller, and the third control device 140 includes a third video game controller. The first control device 132, the second control device 136, and the third control device 140 may be configured to communicate with one or more other devices of the system 100 via a wireless interface or a wired interface.

Each device of FIG. 1 may include one or more memories configured to store instructions and may further include one or more processors configured to execute instructions to carry out operations described herein. To illustrate, in the example of FIG. 1, the first user system 102 includes a processor 104 and a memory 114.

The system 100 may further include one or more output devices, such as a first output device 152 and a second output device 158. In one example, the first output device 152 includes a first display device (e.g., a first television display or a first computer monitor), and the second output device 158 includes a second display device (e.g., a second television display or a second computer monitor). In one possible configuration, the first output device 152 is in a first location 150 (e.g., a first room), and the second output device 158 is in a second location 160 (e.g., a second room different than the first room). The first output device 152 and the second output device 158 may each be coupled to the first user system 102 via a wired connection or via a wireless connection.

It will be appreciated that the examples described with reference to FIG. 1 are illustrative and that other examples are also within the scope of the disclosure. For example, although three control devices are illustrated, in other examples, more than or fewer than three control devices may be used. As another example, although FIG. 1 depicts two output devices, in other examples, the system 100 may include more than or fewer than two output devices. As an additional example, although two remote user systems are illustrated, in other examples, more than or fewer than two remote user systems may be used. As a further example, one or more instances of the video game application 116 may execute on the server 144 to generate AV streams that are transmitted to one or more of the user systems 102, 164, and 168.

During operation, one or more devices of FIG. 1 may execute a video game application 116 or another application generating an audio-visual (AV) stream. For example, the processor 104 of the first user system 102 may retrieve the video game application 116 from the memory 114 and may execute the video game application 116 to generate a first AV stream 106. The second user system 164 may execute the video game application 116 to generate a second AV stream 166, and the third user system 168 may execute the video game application 116 to generate a third AV stream 170.

As used herein, an “AV stream” may refer to a signal transmitted wirelessly or using a wired connection. In addition, as used herein, an “AV stream” may refer to a digital signal or an analog signal. Further, as used herein, an “AV stream” may refer to a representation of content for an audio/video display. Examples of “AV streams” include a display signal, such as a component video signal or an HDMI signal. Other examples of “AV streams” include a data signal, such as a data stream defining shapes or objects that can be modified (e.g., rendered) to generate a signal to be input to a display device (or other output device). Further, although certain examples are provided with reference to three AV streams, it is noted that aspects of the disclosure are applicable to more than three AV streams (e.g., four or five AV streams) or fewer than three AV streams, such as two AV streams (or one AV stream in some cases).

In some examples, the video game application 116 is a multi-user video game application that enables gameplay by multiple users, such as a first user 135 associated with the first control device 132, a second user 139 associated with the second control device 136, and a third user 143 associated with the third control device 140. In this example, the first control device 132 may receive first user input 134 from the first user 135 and may provide the first user input 134 (or other input) to the first user system 102 to control one or more aspects of the first AV stream 106 (e.g., to control a first character of the video game application 116 that is depicted in the first AV stream 106). The second control device 136 may receive second user input 138 from the second user 139 and may provide the second user input 138 (or other input) to the second user system 164 to control one or more aspects of the second AV stream 166 (e.g., to control a second character of the video game application 116 that is depicted in the second AV stream 166). The third control device 140 may receive third user input 142 from the third user 143 and may provide the third user input 142 (or other input) to the third user system 168 to control one or more aspects of the third AV stream 170 (e.g., to control a third character of the video game application 116 that is depicted in the third AV stream 170).

In some gameplay scenarios, players are located at a common site (e.g., a game party), and one or more players provide user input (e.g., “cast”) to a remote (or off-site) user system (e.g., due to particular capabilities of the remote user system or due to the particular preferences of the player). An on-site user system may be referred to herein as a “stream target.” To further illustrate, in FIG. 1, the first user system 102 may correspond to a stream target. The second user system 164 may be remotely connected to the first user system 102 and to the second control device 136, and the third user system 168 may be remotely connected to the first user system 102 and to the third control device 140. In this example, the first user system 102 may receive the first user input 134 via a local connection with the first control device 132, and the second user system 164 and the third user system 168 may receive the second user input 138 and the third user input 142 via a remote connection with the second control device 136 and the third control device 140, respectively. The local connection may include a wired connection or a LAN, and the remote connection may include the Internet, as non-limiting illustrative examples.

In some examples, the second AV stream 166 and the third AV stream 170 are provided to a device of the system 100, such as the first user system 102 or the first output device 152. In some examples, the second AV stream 166 and the third AV stream 170 are provided from the second user system 164 and the third user system 168, respectively, to the device using a streaming protocol, such as a real-time transport protocol (RTP). In some examples, the first user system 102 is configured to receive the AV streams 166 and 170 and to combine the AV streams 106, 166, and 170 to generate a composite AV stream that is presented concurrently on a display of the first output device 152 (e.g., in connection with a game party).

In accordance with certain aspects of the disclosure, one or more devices of the system 100 are configured to automatically capture one or more clips (e.g., highlights) during gameplay of the video game application 116. To illustrate, in some aspects of the disclosure, the video game application 116 includes an automatic AV capture engine 118 that is executable to monitor data for a plurality of trigger events, to detect one or more of the plurality of trigger events (e.g., by detecting one or more trigger events 126), and to capture one or more clips (e.g., highlights) based on the one or more detected trigger events. The monitored data may include one or more of computer vision data 108, biometric data 110, audio recognition data 112, or other data, as illustrative examples.

The automatic AV capture engine 118 may be configured to monitor one or more aspects of an AV stream to detect the one or more trigger events 126. To illustrate, in some examples, the automatic AV capture engine 118 is configured to monitor the first AV stream 106 for one or more video-based indicators. The one or more video-based indicators may include a change in contrast of the first AV stream 106 that satisfies a contrast threshold, a velocity of a character or vehicle depicted in the first AV stream 106 that satisfies a velocity threshold, a magnitude of motion vectors used for encoding the first AV stream 106, or another type of movement associated with the first AV stream 106, as illustrative examples. In some examples, the automatic AV capture engine 118 is configured to generate the computer vision data 108 based on the first AV stream 106 (e.g., using a computer vision image recognition technique) and to analyze the computer vision data 108 for the one or more video-based indicators.
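As a rough illustration of video-based indicators, the sketch below flags a trigger when frame contrast changes by at least a threshold or when the mean motion-vector magnitude is large. It assumes that frames arrive as flat lists of luminance values in [0, 1]. It measures contrast as RMS contrast, the population standard deviation of luminance. The disclosure does not specify a metric, so the function names and default thresholds are hypothetical.

```python
import math
from statistics import pstdev

def rms_contrast(frame):
    """RMS contrast of a frame given as a flat list of luminance values in [0, 1]."""
    return pstdev(frame)

def contrast_trigger(prev_frame, frame, contrast_threshold=0.2):
    """Video-based indicator: contrast changed by at least the (hypothetical) threshold."""
    return abs(rms_contrast(frame) - rms_contrast(prev_frame)) >= contrast_threshold

def mean_motion_magnitude(motion_vectors):
    """Average length of (dx, dy) motion vectors taken from the encoder."""
    if not motion_vectors:
        return 0.0
    return sum(math.hypot(dx, dy) for dx, dy in motion_vectors) / len(motion_vectors)

def motion_trigger(motion_vectors, magnitude_threshold=2.0):
    """Video-based indicator: unusually large on-screen movement."""
    return mean_motion_magnitude(motion_vectors) >= magnitude_threshold
```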

The automatic AV capture engine 118 may be configured to monitor an AV stream for one or more audio-based indicators to detect the one or more trigger events 126. The one or more audio-based indicators may correspond to certain noises indicated by the first AV stream 106, such as explosions, gunfire, or sound effects associated with scoring, as illustrative examples. In some examples, the automatic AV capture engine 118 is configured to generate the audio recognition data 112 based on the first AV stream 106 (e.g., using an audio recognition technique) and to analyze the audio recognition data 112 for the one or more audio-based indicators.

The automatic AV capture engine 118 may be configured to monitor gameplay telemetry associated with an AV stream for one or more gameplay telemetry indicators to detect the one or more trigger events 126. For example, the automatic AV capture engine 118 may be configured to monitor the first AV stream 106 for an indication of a game stage having a difficulty that satisfies (e.g., is greater than, or is greater than or equal to) a difficulty threshold (e.g., a boss level), for an indication of a number of kills that satisfies a kill threshold, for an indication of a number of level-ups that satisfies a level-up threshold, or for an indication of a bonus, as illustrative examples.

The automatic AV capture engine 118 may be configured to monitor for one or more object-based indicators included in the first AV stream 106 to detect the one or more trigger events 126. The one or more object-based indicators may include an object or item of interest depicted in the first AV stream 106, such as an indication that a character health-level fails to satisfy (e.g., is less than, or is less than or equal to) a health-level threshold, text indicating that a character is victorious during gameplay, a kill count, or an indication of a boss, as illustrative examples.
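For thresholds, the wording “satisfies” and “fails to satisfy” allows either a strict comparison or an inclusive one. The sketch below shows one way telemetry indicators and object-based indicators could share a single threshold type, with the comparison mode stated explicitly. The `Threshold` class, the indicator names, and the values are all hypothetical.

```python
from dataclasses import dataclass

@dataclass
class Threshold:
    value: float
    inclusive: bool = True  # True: ">=" satisfies; False: only ">" satisfies

    def satisfied_by(self, x: float) -> bool:
        return x >= self.value if self.inclusive else x > self.value

def telemetry_triggers(telemetry: dict, thresholds: dict) -> list:
    """Names of gameplay telemetry indicators (e.g. kills, level-ups) that satisfy their threshold."""
    return sorted(
        name for name, t in thresholds.items()
        if name in telemetry and t.satisfied_by(telemetry[name])
    )

def low_health(health: float, threshold: Threshold) -> bool:
    """Object-based indicator: health fails to satisfy the threshold (< if inclusive, else <=)."""
    return not threshold.satisfied_by(health)
```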

Alternatively or in addition to monitoring an AV stream, the automatic AV capture engine 118 may be configured to monitor one or more other data sources to identify the one or more trigger events 126. To illustrate, in some examples, the automatic AV capture engine 118 is configured to monitor input/output telemetry data associated with the first user input 134 to detect the one or more trigger events 126. In some examples, the one or more trigger events 126 indicate a threshold I/O rate, such as a threshold rate of keystrokes of the first user input 134, a threshold rate of mouse movements of the first user input 134, a threshold rate of mouse clicks of the first user input 134, or a threshold rate of button presses of the first user input 134, as illustrative examples. In this example, in response to detecting that the I/O telemetry data satisfies the threshold I/O rate, the first user system 102 may detect the one or more trigger events 126.
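One way to detect a threshold I/O rate is to slide a time window over input-event timestamps. The sketch below assumes that timestamps are in seconds and that the threshold is expressed in events per second. `IoRateMonitor` is a hypothetical name and is not part of the disclosure.

```python
from collections import deque

class IoRateMonitor:
    """Counts input events (keystrokes, clicks, button presses) in a sliding time window."""

    def __init__(self, window_s: float, threshold_rate: float):
        self.window_s = window_s
        self.threshold_rate = threshold_rate  # events per second
        self.timestamps = deque()

    def record(self, t: float) -> bool:
        """Record an input event at time t; return True if the I/O rate satisfies the threshold."""
        self.timestamps.append(t)
        # Drop events that have fallen out of the window (t - window_s, t].
        while self.timestamps and self.timestamps[0] <= t - self.window_s:
            self.timestamps.popleft()
        return len(self.timestamps) / self.window_s >= self.threshold_rate
```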

The automatic AV capture engine 118 may be configured to monitor ambient sound for sound-based indicators to detect the one or more trigger events 126. The ambient sound may include speech from the first user 135 (e.g., speech detected using a microphone of a headset worn by the first user 135 during gameplay) or sound recorded at a location of the first user 135 (e.g., sound detected using a room microphone included in or coupled to the first user system 102). The sound-based indicators may include one or more utterances (e.g., a gasp, an exclamation, or a certain word), cheering, clapping, or other sounds, as illustrative examples.
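The disclosure does not say how cheering or clapping would be recognized. As a crude stand-in, the sketch below flags ambient sound whose RMS level meets a threshold. It assumes microphone samples normalized to [-1.0, 1.0]. A real system would likely need an audio classifier to tell utterances, cheering, and clapping apart from general noise, and the threshold value is hypothetical.

```python
import math

def rms_level(samples):
    """Root-mean-square level of a block of microphone samples in [-1.0, 1.0]."""
    if not samples:
        return 0.0
    return math.sqrt(sum(s * s for s in samples) / len(samples))

def ambient_sound_trigger(samples, level_threshold=0.3):
    """Crude sound-based indicator: the room got loud (e.g. cheering or clapping)."""
    return rms_level(samples) >= level_threshold
```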

The automatic AV capture engine 118 may be configured to monitor for one or more commentary-based indicators to detect the one or more trigger events 126. To illustrate, in some examples, the users 135, 139, and 143 may exchange commentary during gameplay, such as social media messages, chat messages, or text messages. In some examples, the first user system 102 is configured to receive commentary indicated by the first user input 134 and to send a message indicating the commentary to the server 144 (e.g., to post the commentary to a social media site or to send the commentary to the users 139, 143 via one or more chat messages or via one or more text messages). The automatic AV capture engine 118 may be configured to monitor the commentary for one or more keywords or phrases indicative of excitement or challenge, as illustrative examples.
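Keyword monitoring of commentary could be as simple as a case-insensitive match on whole words. The keyword set below is purely hypothetical.

```python
import re

# Hypothetical keywords and phrases indicative of excitement or challenge.
EXCITEMENT_KEYWORDS = {"wow", "insane", "clutch", "no way"}

def commentary_trigger(message, keywords=EXCITEMENT_KEYWORDS):
    """True if the message contains any keyword as a whole word or phrase (case-insensitive)."""
    text = message.lower()
    return any(re.search(r"\b" + re.escape(k) + r"\b", text) for k in keywords)
```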

The automatic AV capture engine 118 may be configured to monitor the biometric data 110 for one or more biometric indicators to detect the one or more trigger events 126. In some examples, the biometric data 110 is generated by a wearable device (e.g., a smart watch or other device) that is worn by the first user 135 and that sends the biometric data 110 to the first user system 102. The one or more biometric indicators may include a heart rate of the first user 135 that satisfies a heart rate threshold, a respiratory rate of the first user 135 that satisfies a respiratory rate threshold, a change in pupil size (e.g., pupil dilation) of the first user 135 that satisfies a pupil size change threshold, or an eye movement of the first user 135 that satisfies an eye movement threshold, as illustrative examples. In some implementations, the biometric data 110 includes electrocardiogram (ECG) data, electroencephalogram (EEG) data, other data, or a combination thereof.

The automatic AV capture engine 118 may be configured to monitor room events for one or more room event indicators to detect the one or more trigger events 126. The one or more room event indicators may include a change in ambient lighting, for example. In some examples, the first user system 102 includes or is coupled to a camera having a lighting sensor configured to detect an amount of ambient lighting.

The automatic AV capture engine 118 may include one or more virtual sensors 120 configured to detect the one or more trigger events 126. In some implementations, the one or more virtual sensors 120 include instructions (e.g., “plug-ins”) executable by the processor 104 to compare samples of data (e.g., the computer vision data 108, the biometric data 110, the audio recognition data 112, other data, or a combination thereof) to reference samples (e.g., signatures of trigger events) indicated by the virtual sensors 120. The automatic AV capture engine 118 may be configured to detect a trigger event of the one or more trigger events 126 in response to detecting that a sample of the data matches a reference sample indicated by the one or more virtual sensors 120. The automatic AV capture engine 118 may be configured to check for conditions specified by the one or more virtual sensors 120 based on a configurable poll rate.
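Below is a minimal sketch of virtual sensors implemented as plug-ins. Each sensor is assumed to hold a numeric reference sample (a signature). A live sample counts as a match when its Euclidean distance to that reference is within a tolerance. The matching rule, the `VirtualSensor` fields, and the polling helpers are illustrative assumptions. The disclosure states only that samples are compared to reference samples at a configurable poll rate.

```python
import math
import time
from dataclasses import dataclass
from typing import Callable, List, Sequence

@dataclass
class VirtualSensor:
    name: str
    source: str                 # data stream to sample, e.g. "audio" or "vision"
    reference: Sequence[float]  # reference sample (signature of a trigger event)
    tolerance: float            # max Euclidean distance still treated as a match

    def matches(self, sample: Sequence[float]) -> bool:
        return math.dist(sample, self.reference) <= self.tolerance

def poll_once(sensors: List[VirtualSensor], read: Callable[[str], Sequence[float]]) -> List[str]:
    """One poll cycle: names of sensors whose reference sample matches the current data."""
    return [s.name for s in sensors if s.matches(read(s.source))]

def run_polling(sensors, read, poll_rate_hz: float, cycles: int) -> List[str]:
    """Check all sensors `cycles` times, sleeping between checks to honor the poll rate."""
    detected = []
    for _ in range(cycles):
        detected.extend(poll_once(sensors, read))
        time.sleep(1.0 / poll_rate_hz)
    return detected
```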

The automatic AV capture engine 118 may be configured to automatically capture a portion of an AV stream based on detection of the one or more trigger events 126. As an example, in response to detection of the one or more trigger events 126, the automatic AV capture engine 118 may capture a clip 154 (e.g., a “greatest hit”) of the first AV stream 106. In one example, the first AV stream 106 is buffered at a memory (e.g., an output buffer) prior to being provided to the first output device 152, and the capture of the clip 154 is initiated at a certain portion of the memory in response to detection of the one or more trigger events 126. Capture of the clip 154 may be terminated in response to one or more conditions. For example, capture of the clip 154 may be terminated after a certain duration of recording. In another example, capture of the clip 154 may be terminated in response to another event, such as a threshold time period during which no other trigger events 126 are detected. The clip 154 may be stored to the memory 114 and/or sent to another device, such as to the server 144.
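The buffered capture described above can be sketched with a fixed-length ring buffer of recent frames. On a trigger, the clip starts from the frames already in the buffer. It ends either after a maximum length or after a run of frames with no further trigger. Frame counts stand in for durations here, and `ClipRecorder` and its parameters are hypothetical.

```python
from collections import deque

class ClipRecorder:
    """Ring-buffers recent frames and captures a clip when a trigger event is detected."""

    def __init__(self, pre_roll, max_len, quiet_limit):
        self.buffer = deque(maxlen=pre_roll)  # most recent frames before any trigger
        self.max_len = max_len                # stop after this many frames in the clip...
        self.quiet_limit = quiet_limit        # ...or after this many frames with no trigger
        self.clip = None                      # frames of the clip being captured, if any
        self.quiet = 0
        self.finished = []                    # completed clips

    def push(self, frame, triggered=False):
        if self.clip is None:
            if not triggered:
                self.buffer.append(frame)
                return
            self.clip = list(self.buffer)     # start capture at the buffered frames
        self.clip.append(frame)
        self.quiet = 0 if triggered else self.quiet + 1
        if len(self.clip) >= self.max_len or self.quiet >= self.quiet_limit:
            self.finished.append(self.clip)   # a real system would store or upload it here
            self.clip = None
            self.quiet = 0
            self.buffer.clear()
```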

The automatic AV capture engine 118 may be configured to automatically capture the clip 154 based on the one or more trigger events 126 satisfying a threshold number of events, based on a time interval, or a combination thereof. To illustrate, the automatic AV capture engine 118 may be configured to monitor for the one or more trigger events 126 during the time interval (e.g., 5 seconds, as an illustrative example). The automatic AV capture engine 118 may be configured to increment, during the time interval, a value 130 of a counter 128 (e.g., a software counter or a hardware counter) in response to each detected trigger event of the one or more trigger events 126. If the value 130 of the counter 128 satisfies the threshold number of events during the time interval, the automatic AV capture engine 118 may automatically capture the clip 154. Alternatively, if the value 130 of the counter 128 fails to reach the threshold number of events during the time interval, the automatic AV capture engine 118 may reset the value 130 of the counter 128 (e.g., upon expiration of the time interval).
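Below is a sketch of the counter-and-interval logic, assuming trigger timestamps in seconds. An interval starts at the first trigger. If the interval expires before the counter reaches the threshold, the counter is reset. The sketch also resets after each capture, which is an assumption the text does not address. `TriggerCounter` is a hypothetical name.

```python
class TriggerCounter:
    """Counts trigger events within a time interval; signals a capture at the threshold."""

    def __init__(self, interval_s=5.0, threshold=3):
        self.interval_s = interval_s
        self.threshold = threshold
        self.value = 0             # plays the role of the counter value
        self.window_start = None   # start time of the current interval

    def on_trigger(self, t):
        """Record a trigger event at time t (seconds); return True if a clip should be captured."""
        if self.window_start is None or t - self.window_start >= self.interval_s:
            # First event, or the interval expired below the threshold: reset and restart.
            self.window_start = t
            self.value = 0
        self.value += 1
        if self.value >= self.threshold:
            self.value = 0          # assumption: start fresh after each capture
            self.window_start = None
            return True
        return False
```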

The clip 154 may be presented (e.g., via the first output device 152) with a prompt 156 to save (or delete) the clip 154. For example, upon termination of gameplay or upon completion of a game stage, the clip 154 may be presented to the first user 135 with the prompt 156. In another example, the clip 154 can be presented at the first output device 152 during gameplay (e.g., using a split-screen technique) so that the first user 135 can review the clip 154 soon after capture of the clip 154. In some examples, the clip 154 is stored to (or retained at) the memory 114 or the server 144 in response to user input via the prompt 156. Alternatively, the clip 154 may be deleted from, invalidated at, or overwritten at the memory 114 or the server 144 in response to user input via the prompt 156.

Alternatively or in addition, the prompt 156 may enable a user to edit or share the clip 154. For example, the prompt 156 may enable the first user 135 to trim the clip 154 or to apply a filter to the clip 154. Alternatively or in addition, the prompt 156 may enable the first user 135 to upload the clip 154 to a social media platform or to send the clip 154 via a text message or email, as illustrative examples.

In accordance with certain aspects of the disclosure, one or more devices of the system 100 are configured to determine one or more gameplay metrics 124 associated with gameplay of the video game application 116. In the example of FIG. 1, the one or more gameplay metrics 124 include a first gameplay metric 121 associated with the first user 135, a second gameplay metric 122 associated with the second user 139, and a third gameplay metric 123 associated with the third user 143. The one or more gameplay metrics 124 may be determined based on data used to detect the one or more trigger events 126, such as based on the computer vision data 108, the biometric data 110, the audio recognition data 112, other data, or a combination thereof.

To illustrate, in some examples, the computer vision data 108 indicates one or more achievements (e.g., a score) of the first user 135 in connection with the video game application 116. The one or more achievements may be detected using one or more techniques described herein, such as by analyzing the first AV stream 106 or by analyzing external events, such as ambient sound or room lighting. In some implementations, the first user system 102 is configured to execute the video game application 116 to determine the first gameplay metric 121, the second user system 164 is configured to execute the video game application 116 to determine the second gameplay metric 122, and the third user system 168 is configured to execute the video game application 116 to determine the third gameplay metric 123.

To further illustrate, in some implementations, each event or indicator described with reference to the one or more trigger events 126 is associated with a value, and the automatic AV capture engine 118 is configured to add values associated with each trigger event detected during a certain time interval to determine a gameplay metric. In an illustrative example, the first gameplay metric 121 may correspond to (or may be based at least in part on) the value 130 of the counter 128.
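Below is a minimal sketch that sums per-event values over a time interval to obtain a gameplay metric. The event names and their values are hypothetical. The disclosure says only that each event or indicator is associated with a value.

```python
# Hypothetical per-event values; unknown event names contribute 0.
EVENT_VALUES = {"kill": 10, "level_up": 25, "boss": 50, "cheer": 5}

def gameplay_metric(events, start, end, values=EVENT_VALUES):
    """Sum the values of trigger events, given as (name, timestamp) pairs, detected in [start, end)."""
    return sum(values.get(name, 0) for name, t in events if start <= t < end)
```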

In accordance with some aspects of the disclosure, the one or more gameplay metrics 124 are used to determine an arrangement of the AV streams 106, 166, and 170 at an output device, such as the first output device 152. In one illustrative example, the second user system 164 and the third user system 168 send the second gameplay metric 122 and the third gameplay metric 123, respectively, to the first user system 102, and the video game application 116 is executable by the processor 104 to resize one or more of the AV streams 106, 166, and 170 based on the gameplay metrics 121, 122, and 123.

To further illustrate, FIG. 2 depicts an illustrative example of a dynamic screen aggregation operation to modify concurrent display of the AV streams 106, 166, and 170 from a first graphical arrangement 172 to a second graphical arrangement 174. The first graphical arrangement 172 includes the AV streams 106, 166, and 170 and additional content 184. In some examples, the additional content 184 includes one or more of an advertisement or a watermark, such as a brand logo associated with the video game application 116. Alternatively or in addition, recent highlights (e.g., the clip 154) can be displayed as the additional content 184.

In the first graphical arrangement 172, the first AV stream 106 has a first size 176, and the second AV stream 166 has a second size 178. In one example, the first size 176 corresponds to the second size 178. In one example, upon starting gameplay, sizes of each of the AV streams 106, 166, and 170 and the additional content 184 may be set to a default size. The AV streams 106, 166, and 170 may correspond to different video games or a common video game. Further, the AV streams 106, 166, and 170 may be generated by different devices (e.g., by the user systems 102, 164, and 168 of FIG. 1) or by a common device (e.g., by the first user system 102).

During gameplay, the first graphical arrangement 172 may be changed to the second graphical arrangement 174 based on one or more criteria. In one example, the first graphical arrangement 172 is changed to the second graphical arrangement 174 based on the one or more gameplay metrics 124 (e.g., where a better-performing user is allocated more screen area than a worse-performing user). For example, if the gameplay metrics 121, 122 indicate that the second user 139 is performing better than the first user 135, then the first size 176 may be decreased to an adjusted first size 180, and the second size 178 may be increased to an adjusted second size 182.

To further illustrate, in one example, the first user system 102 is configured to determine screen sizes on a pro rata basis. For example, the first user system 102 may be configured to add the gameplay metrics 121, 122, and 123 to determine a composite metric and to divide the first gameplay metric 121 by the composite metric to determine the adjusted first size 180 (e.g., as a fraction of the available screen area). The first user system 102 may be configured to divide the second gameplay metric 122 by the composite metric to determine the adjusted second size 182.
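Below is a sketch of a pro rata allocation. Each stream's share of the display is its gameplay metric divided by the composite (summed) metric. Falling back to equal shares when every metric is zero is an assumption, and so are the stream keys.

```python
def pro_rata_shares(metrics):
    """Map each stream to (its metric) / (composite metric), i.e. its share of screen area."""
    composite = sum(metrics.values())
    if composite == 0:
        # Assumption: fall back to equal (default) sizes when no one has scored yet.
        return {stream: 1 / len(metrics) for stream in metrics}
    return {stream: m / composite for stream, m in metrics.items()}

def allocate_area(metrics, screen_area):
    """Convert shares into areas, e.g. in pixels."""
    return {stream: share * screen_area for stream, share in pro_rata_shares(metrics).items()}
```

For example, with metrics of 20, 60, and 20 the composite metric is 100. The streams therefore receive 20%, 60%, and 20% of the display area.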

In some implementations, the gameplay metrics 121, 122, and 123 can be recomputed periodically, and the dynamic screen aggregation operation of FIG. 2 can be performed on a periodic basis (e.g., with each computation of the gameplay metrics 121, 122, and 123). Alternatively or in addition, the dynamic screen aggregation operation of FIG. 2 can be performed based on one or more other conditions. As an example, the dynamic screen aggregation operation of FIG. 2 can be performed in response to a change in one of the gameplay metrics 121, 122, and 123 that satisfies a threshold.
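The threshold-based re-layout condition can be written as a comparison between the previous metrics and the current ones. `needs_relayout` and the threshold value are hypothetical.

```python
def needs_relayout(previous, current, change_threshold):
    """True if any stream's gameplay metric changed by at least the threshold."""
    return any(abs(current[s] - previous.get(s, 0)) >= change_threshold for s in current)
```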

Alternatively or in addition to performing a dynamic screen aggregation operation, in some implementations, a dynamic position switch operation may be performed to change positions of any of the AV streams 106, 166, and 170 and the additional content 184. For example, in FIG. 2, a first position of the first AV stream 106, a second position of the second AV stream 166, a third position of the third AV stream 170, and a fourth position of the additional content 184 have been modified in the second graphical arrangement 174 relative to the first graphical arrangement 172.

FIG. 3 depicts an example of a dynamic position switch operation. In FIG. 3, positions of the AV streams 106, 166, and 170 and the additional content 184 are modified based on one or more criteria. For example, the positions of the AV streams 106, 166, and 170 and the additional content 184 may be modified according to a timer (e.g., where the positions are randomly selected according to the timer, such as every 30 seconds). In another example, each position may be associated with a ranking (e.g., where the top-left position is associated with a top performer), and the AV streams 106, 166, and 170 may be assigned to the positions according to the gameplay metrics 121, 122, and 123.
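The sketch below shows two possible assignment policies for the dynamic position switch, using hypothetical position names. The first ranks streams by gameplay metric, with the best performer at the top-left and the additional content in the remaining slot. The second applies a random permutation, which a caller could re-run on a timer (for example, every 30 seconds).

```python
import random

# Hypothetical screen slots, ordered from highest-ranked to lowest-ranked.
POSITIONS = ["top_left", "top_right", "bottom_left", "bottom_right"]

def assign_by_rank(metrics, positions=POSITIONS, extra="additional_content"):
    """Best performer gets the first slot; the additional content takes the next free slot."""
    ranked = sorted(metrics, key=metrics.get, reverse=True)
    return dict(zip(ranked + [extra], positions))

def assign_randomly(items, positions=POSITIONS, rng=random):
    """Random permutation of slots for the given items."""
    return dict(zip(items, rng.sample(positions, len(items))))
```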

In some implementations, assignment of the control devices 132, 136, and 140 to the AV streams 106, 166, and 170 can be dynamically modified. FIG. 4 depicts one example of a dynamic player switch operation. Control of the first AV stream 106 may be reassigned from the first control device 132 to the second control device 136. In this case, the second user 139 assumes control of gameplay from the first user 135. The example of FIG. 4 also illustrates that control of the second AV stream 166 is reassigned from the second control device 136 to the third control device 140 and that control of the third AV stream 170 is reassigned from the third control device 140 to the first control device 132. In this example, the third user 143 assumes control of gameplay from the second user 139, and the first user 135 assumes control of gameplay from the third user 143.

In some examples, the first user system 102 of FIG. 1 is configured to perform the dynamic player switch operation of FIG. 4 based on one or more criteria. In one example, the first user system 102 is configured to perform the dynamic player switch operation randomly or pseudo-randomly. Alternatively or in addition, the first user system 102 may be configured to perform the dynamic player switch operation based on a schedule, such as every 30 seconds or every minute. When the dynamic player switch operation of FIG. 4 is performed, a user may need to identify which AV stream the user controls, which may add challenge to a multiplayer video game.
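The reassignment described for FIG. 4 amounts to rotating the list of control devices by one place relative to the AV streams. The sketch below assumes string IDs taken from the reference numerals and a hypothetical `SwitchScheduler` that fires on a fixed period; the pseudo-random variant uses Python's `random.Random`, so a seed makes the result reproducible. None of these names come from the disclosure.

```python
import random


def rotate_controllers(assignment: dict[str, str]) -> dict[str, str]:
    """Give each AV stream the control device that follows its current one.

    With streams 106, 166, 170 held by devices 132, 136, 140, this yields
    106 -> 136, 166 -> 140, 170 -> 132, matching the FIG. 4 description.
    """
    streams = list(assignment)
    devices = [assignment[s] for s in streams]
    return dict(zip(streams, devices[1:] + devices[:1]))


def shuffle_controllers(assignment: dict[str, str], rng: random.Random) -> dict[str, str]:
    """Pseudo-random variant: permute the control devices across AV streams."""
    streams = list(assignment)
    devices = [assignment[s] for s in streams]
    rng.shuffle(devices)
    return dict(zip(streams, devices))


class SwitchScheduler:
    """Reports when a switch is due, once every `period_s` seconds."""

    def __init__(self, period_s: float, start_s: float = 0.0):
        self.period_s = period_s
        self.next_switch_s = start_s + period_s

    def due(self, now_s: float) -> bool:
        if now_s < self.next_switch_s:
            return False
        self.next_switch_s = now_s + self.period_s
        return True
```

A game loop could call `due()` on each update and apply either function when it returns `True`. Note that a plain shuffle may leave some users on their own stream; whether that is acceptable is a design choice the text leaves open.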

FIG. 5 illustrates another aspect of the disclosure. In FIG. 5, the first AV stream 106, the second AV stream 166, and the third AV stream 170 are presented at the first output device 152 using borders having a first color, a second color, and a third color, respectively.

In one example, the first user system 102 of FIG. 1 is configured to trigger lighting events at the control devices 132, 136, and 140. For example, the first user system 102 may be configured to indicate the first color to the first control device 132, the second color to the second control device 136, and the third color to the third control device 140. The indications may trigger lighting events at the control devices 132, 136, and 140. For example, the first control device 132 may include a first set of lighting devices (e.g., light-emitting diodes (LEDs)) that generate a certain color based on an indication provided by the first user system 102.

In some implementations, lighting events triggered at the control devices 132, 136, and 140 match colors presented at the first output device 152. For example, in connection with the dynamic position switch operation of FIG. 3, the first user system 102 may indicate that the first control device 132 is to change from generating the first color to generating the second color (e.g., to indicate that the first AV stream 106 has moved from the upper-left of the first output device 152 to the upper-right of the first output device 152). In some cases, triggering lighting events in accordance with one or more aspects of FIG. 5 may assist players (or viewers) in understanding which player is controlling which character.
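One way to keep controller lighting consistent with on-screen borders, assuming each screen position has a fixed border color, is to derive every control device's color from the position of the AV stream it controls and then send indications only for devices whose color changed. The `POSITION_COLORS` table and function names are hypothetical; this is an illustrative sketch, not the disclosed implementation.

```python
# Hypothetical border color (RGB) for each screen position.
POSITION_COLORS = {
    "upper_left": (255, 0, 0),
    "upper_right": (0, 255, 0),
    "lower_left": (0, 0, 255),
}


def lighting_indications(stream_positions: dict[str, str],
                         stream_controllers: dict[str, str]) -> dict[str, tuple]:
    """Color each control device should show, given where its stream is drawn.

    stream_positions: AV stream ID -> screen position.
    stream_controllers: AV stream ID -> control device ID.
    """
    return {
        stream_controllers[stream]: POSITION_COLORS[position]
        for stream, position in stream_positions.items()
    }


def changed_indications(before: dict[str, tuple], after: dict[str, tuple]) -> dict[str, tuple]:
    """Only the devices whose color must change (e.g., after a position switch)."""
    return {device: color for device, color in after.items() if before.get(device) != color}
```

Swapping streams 106 and 166 between the upper-left and upper-right positions would then produce new indications only for control devices 132 and 136.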

Referring again to FIG. 1, in some cases, one or more users may leave a location and enter another location (e.g., while carrying a control device having a wireless configuration). For example, the first user 135 may leave the first location 150 and enter the second location 160 while carrying the first control device 132. The first user system 102 may be configured to detect movement of the first control device 132 from the first location 150 to the second location 160 (e.g., using location data reported by the first control device 132, such as global positioning system (GPS) data, or by detecting that a change in a signal-to-noise ratio (SNR) of wireless signals transmitted by the first control device 132 matches a signature SNR change associated with the movement).

In response to detecting movement of the first control device 132 from the first location 150 to the second location 160, the first user system 102 may be configured to send the first AV stream 106 to the second output device 158 (e.g., instead of to the first output device 152). In some implementations, the first user system 102 may continue sending the second AV stream 166 and the third AV stream 170 to the first output device 152 until detecting movement of the second control device 136 and the third control device 140, respectively, from the first location 150 to the second location 160.
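The sketch below illustrates both steps under stated assumptions. Movement is inferred when the most recent sample-to-sample SNR changes stay within a tolerance of a stored signature (the mean-absolute-error test and the 2 dB default are assumptions). Routing is a plain mapping from AV stream to output device that changes only for the stream whose control device moved. Function names and ID strings are hypothetical.

```python
def matches_signature(snr_db: list[float], signature_db: list[float],
                      tolerance_db: float = 2.0) -> bool:
    """True if the latest SNR changes track a stored movement signature.

    snr_db: recent SNR samples (dB) for one control device, oldest first.
    signature_db: expected sample-to-sample SNR changes (dB) during a move.
    """
    n = len(signature_db)
    if n == 0 or len(snr_db) < n + 1:
        return False
    recent = snr_db[-(n + 1):]
    deltas = [b - a for a, b in zip(recent, recent[1:])]
    mean_abs_err = sum(abs(d - s) for d, s in zip(deltas, signature_db)) / n
    return mean_abs_err <= tolerance_db


def reroute(routes: dict[str, str], stream_id: str, new_location: str,
            location_outputs: dict[str, str]) -> dict[str, str]:
    """Send one AV stream to the output device at its controller's new location.

    Other streams keep their current output device until their own
    control devices are detected moving.
    """
    updated = dict(routes)
    updated[stream_id] = location_outputs[new_location]
    return updated
```

For example, with location 150 served by output device 152 and location 160 by output device 158, rerouting stream 106 to location 160 changes only that stream's entry.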

In some implementations, certain data described with reference to FIG. 1 may be stored at a user-specific database 146 of the server 144, at a multi-user database 148 of the server 144, or both. For example, the first gameplay metric 121 may be stored at the user-specific database 146, which may include performance data associated with the first user 135. In another example, the one or more gameplay metrics 124 are stored at the multi-user database 148, which may store data associated with the video game application 116.

In some examples, one or both of the user-specific database 146 or the multi-user database 148 are accessible to one or more devices. For example, a peripheral device may perform lighting events or haptic feedback operations based on information of one or both of the user-specific database 146 or the multi-user database 148. Alternatively or in addition, a game developer may access one or both of the user-specific database 146 or the multi-user database 148 while adjusting a difficulty of a version of the video game application 116 or while designing a walk-through of the video game application 116. Other illustrative examples are described further below.

FIG. 6 depicts class diagrams in accordance with some aspects of the disclosure. In the example of FIG. 6, the clip 154 may correspond to a “greatest hit” detected during gameplay of the video game application 116. In FIG. 6, the greatest hit includes a time stamp, video data, audio data, and object metadata. The audio data and video data may be recorded during an event, and the time stamp may indicate a time of the recording. The metadata may indicate information associated with the recording, such as a number of actions per minute (APM), as an illustrative example.

One or more virtual sensors 120 may each be associated with a condition. A virtual sensor may be inserted (e.g., by a game developer) into instructions of the video game application 116. During execution of the video game application 116 by the processor 104, the virtual sensor may cause the processor 104 to check whether the condition is satisfied, such as by monitoring a value of a register or a state machine to detect whether a boss character is present in the first AV stream 106. Metadata can be generated in response to detecting that the condition is satisfied. A trigger event of the one or more trigger events 126 may be associated with one or more operators. Each operator may be a grouping (e.g., a permutation or subset) of the one or more virtual sensors 120. Event recording may be initiated in response to the trigger event at a certain time (“recordingStart”) and for a certain duration (“recordingDuration”). A trigger event may be detected upon satisfaction of each condition specified by an operator corresponding to the trigger event.

FIG. 7 depicts an example of a method in accordance with certain aspects of the disclosure. In FIG. 7, a greatest hits engine is executable (e.g., by the processor 104) to generate a “greatest hit,” which may correspond to the clip 154. An operator of FIG. 6 may be associated with a disjunctive grouping of one or more virtual sensors or a conjunctive grouping of one or more virtual sensors. For example, detection of a first trigger event may be conditioned on a certain biometric input or a certain game data input. As another example, detection of a second trigger event may be conditioned on a certain computer vision input and a certain audio recognition input. In some implementations, a greatest hit is captured in response to detection of any of multiple trigger events (e.g., upon detection of the first trigger event or the second trigger event) or in response to detection of all of multiple trigger events (e.g., upon detection of the first trigger event and the second trigger event).
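The virtual sensor, operator, and trigger event relationships described for FIG. 6 and FIG. 7 can be sketched with Python dataclasses. In this sketch a sensor wraps a predicate over a hypothetical game-state snapshot, an operator combines sensors conjunctively (`all`) or disjunctively (`any`), and a trigger event yields a recording window from `recording_start` and `recording_duration` (modeled on “recordingStart” and “recordingDuration”). Treating a negative start as pre-roll from a buffer is an assumption, and the state keys and thresholds are illustrative only.

```python
from dataclasses import dataclass
from typing import Callable, Mapping, Optional

# Snapshot of whatever a virtual sensor inspects (register values,
# state-machine flags, recognizer outputs). Keys used below are hypothetical.
State = Mapping[str, object]


@dataclass
class VirtualSensor:
    name: str
    condition: Callable[[State], bool]


@dataclass
class Operator:
    sensors: list
    conjunctive: bool = True  # True: every condition must hold; False: any one

    def satisfied(self, state: State) -> bool:
        results = (sensor.condition(state) for sensor in self.sensors)
        return all(results) if self.conjunctive else any(results)


@dataclass
class TriggerEvent:
    operator: Operator
    recording_start: float     # seconds relative to detection; negative = pre-roll
    recording_duration: float  # seconds

    def recording_window(self, state: State, now_s: float) -> Optional[tuple]:
        """(start, end) of the clip to capture, or None if not triggered."""
        if not self.operator.satisfied(state):
            return None
        start = now_s + self.recording_start
        return (start, start + self.recording_duration)


def should_capture(triggers: list, state: State, require_all: bool = False) -> bool:
    """Capture on any trigger event, or only when all of them are detected."""
    hits = [t.operator.satisfied(state) for t in triggers]
    return all(hits) if require_all else any(hits)


# First trigger: biometric OR game data. Second: computer vision AND audio.
heart = VirtualSensor("heart_rate", lambda s: s.get("heart_rate", 0) > 120)
boss = VirtualSensor("boss_present", lambda s: s.get("boss_present", False))
flash = VirtualSensor("cv_flash", lambda s: s.get("flash", False))
boom = VirtualSensor("audio_boom", lambda s: s.get("boom", False))
first = TriggerEvent(Operator([heart, boss], conjunctive=False), -5.0, 15.0)
second = TriggerEvent(Operator([flash, boom]), -2.0, 10.0)
```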

FIG. 8 depicts another example of a method in accordance with certain aspects of the disclosure. The method of FIG. 8 uses a packing process to aggregate AV streams, such as to perform the dynamic screen aggregation operation of FIG. 2. The AV streams can be sized according to player performance, which may be indicated by the one or more gameplay metrics 124. A first-place AV stream may be associated with a largest size and may be placed first during the packing process. One or more additional streams may be reduced in size and combined with the first-place stream for concurrent display.
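The packing process of FIG. 8 is not specified in detail, so the following is only a simple stand-in: a two-column layout in which the first-place stream takes a fixed fraction (`main_frac`, an assumed parameter) of the screen width and the remaining streams share the other column in proportion to their gameplay metrics. Aspect ratios are ignored for brevity.

```python
def pack_streams(metrics: dict[str, float], width: float, height: float,
                 main_frac: float = 0.7) -> dict[str, tuple]:
    """Place the best-performing AV stream first and largest.

    metrics: AV stream ID -> gameplay metric (higher is better).
    Returns AV stream ID -> (x, y, w, h) in screen units.
    """
    ranked = sorted(metrics, key=metrics.get, reverse=True)
    if not ranked:
        return {}
    if len(ranked) == 1:
        return {ranked[0]: (0.0, 0.0, float(width), float(height))}
    main_w = width * main_frac
    layout = {ranked[0]: (0.0, 0.0, main_w, float(height))}
    # Remaining streams are reduced and stacked in the right-hand column,
    # each getting a height proportional to its metric.
    rest = ranked[1:]
    weights = [max(metrics[s], 0.0) + 1e-9 for s in rest]  # avoid zero height
    total = sum(weights)
    y = 0.0
    for stream, weight in zip(rest, weights):
        h = height * weight / total
        layout[stream] = (main_w, y, width - main_w, h)
        y += h
    return layout
```

A real packer might instead preserve each stream's aspect ratio and search for the tightest fit; the fixed two-column split keeps the "first place is largest and placed first" property easy to see.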

FIG. 9 depicts another example of a method in accordance with certain aspects of the disclosure. The method of FIG. 9 illustrates operations that may be performed to dynamically adjust positions of AV streams. For example, positions of AV streams may be adjusted based on a change in player performance, based on a change in a number of the AV streams, or based on a change in player positions, as illustrative examples.

FIG. 10 depicts another example of a method in accordance with certain aspects of the disclosure. The method of FIG. 10 may be performed by the first user system 102 or the server 144. The method includes receiving, by a device, a plurality of AV streams including a first AV stream and at least a second AV stream. The method also includes outputting the plurality of AV streams from the device to an output device for concurrent display using a first graphical arrangement of the plurality of AV streams. The first graphical arrangement depicts the first AV stream using a first size and further depicts the second AV stream using a second size. The method further includes adjusting, by the device, the concurrent display of the plurality of AV streams from the first graphical arrangement to a second graphical arrangement that depicts the first AV stream using an adjusted first size different than the first size, that depicts the second AV stream using an adjusted second size different than the second size, or a combination thereof. The adjusted first size is based, at least in part, on a gameplay metric associated with the first AV stream. The gameplay metric may be based on a count of in-game events detected during gameplay by analyzing an AV stream of the video game. The in-game events may be detected based on input/output (I/O) telemetry (e.g., rapid keystrokes), voice-based indicators (e.g., a comment made by a player of the video game), video-based indicators in the AV stream, audio-based indicators in the AV stream, biometric indicators associated with a player of the video game, other information, or a combination thereof. By analyzing an AV stream to detect in-game events, gameplay footage may be automatically identified and captured, reducing the need for users to capture and edit footage (e.g., using complicated video editing software).
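As a rough illustration of a gameplay metric based on a count of in-game events, the sketch below tallies detected events per AV stream with `collections.Counter` and converts each stream's share of all events into a display scale factor. The `(stream_id, event_type)` event format and the `boost` parameter are assumptions, not details from the disclosure.

```python
from collections import Counter


def gameplay_metrics(events) -> Counter:
    """Count detected in-game events per AV stream.

    events: iterable of (stream_id, event_type) pairs, e.g. ("106", "explosion").
    """
    return Counter(stream for stream, _event_type in events)


def adjusted_scales(metrics: Counter, stream_ids: list, boost: float = 0.5) -> dict:
    """Scale factor per stream: 1.0 plus a boost proportional to its event share."""
    total = sum(metrics.values())
    if total == 0:
        return {s: 1.0 for s in stream_ids}
    # Counter returns 0 for streams with no detected events.
    return {s: 1.0 + boost * metrics[s] / total for s in stream_ids}
```

Multiplying each stream's size in the first graphical arrangement by its scale factor would give one possible second graphical arrangement.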

Another illustrative example of a system 200 is depicted in FIG. 11. The system 200 may include the first user system 102 and the second user system 164. The first user system 102 includes the processor 104 and the memory 114. The processor 104 is configured to retrieve the video game application 116 from the memory 114 and to execute the video game application 116 to generate an AV stream, such as the first AV stream 106. Depending on the implementation, the video game application 116 may correspond to a single-user video game or a multi-user video game (e.g., as described with reference to certain aspects of FIG. 1). The system 200 further includes peripheral devices 230, which may be coupled to a user system, such as the second user system 164. The peripheral devices 230 may include a lighting device 240 including a plurality of light sources (e.g., light-emitting diodes (LEDs)), such as a light source 242, a light source 244, and a light source 246. The peripheral devices 230 may also include a plurality of speakers (e.g., speakers arranged in a spatially localized configuration), in which case each light source of the lighting device 240 is disposed on a speaker enclosure of a respective speaker of the plurality of speakers. As an illustrative example, each light source of the lighting device 240 may correspond to a status light or power-on light of a speaker of the plurality of speakers. In one example, one or more of the light sources 242, 244, and 246 are included in the control devices 132, 136, and 140, and the light sources 242, 244, and 246 are configured to perform lighting operations described with reference to FIG. 5. In another example, one or more of the light sources 242, 244, and 246 are included in another device, such as in backlights of a keyboard. The peripheral devices 230 may include a haptic feedback device 250. The haptic feedback device 250 may include a plurality of actuators, such as an actuator 252, an actuator 254, and an actuator 256. In an illustrative example, the haptic feedback device 250 includes a haptic chair. In another example, the haptic feedback device 250 is included within a game controller, such as the second control device 136.

During operation, the first AV stream 106 may be generated during execution of the video game application 116 by the processor 104. In the example of FIG. 11, the first AV stream 106 includes one or more channels of audio data 202. To illustrate, the one or more channels of audio data 202 may include a monaural signal. In another example, the one or more channels of audio data 202 include stereo signals. In another example, the one or more channels of audio data 202 include three or more channels of signals. Depending on the implementation, the one or more channels of audio data 202 may include one or more analog signals or one or more digital signals. The one or more channels of audio data 202 may include a title audio stream (e.g., a game audio stream generated by executing the video game application 116) or a system audio stream (e.g., a combination of the game audio stream and other audio, such as notification audio generated at the first user system 102 or control audio associated with user input to the first user system 102), as illustrative examples.

In some implementations, the processor 104 is configured to execute an audio driver 280. The audio driver 280 may be included in or specified by an operating system or a program of the first user system 102. The processor 104 may execute the audio driver 280 to enable the first user system 102 to interface with one or more audio devices, such as a receiver or one or more speakers included in the first output device 152.

In accordance with an aspect of the disclosure, a device of the system 200 may analyze the one or more channels of audio data 202 to detect one or more in-game events 204 of the video game application 116. In one example, the first user system 102 is configured to analyze the one or more channels of audio data 202 of the first AV stream 106 to detect the one or more in-game events 204. In another example, the server 144 may be configured to perform one or more operations described with reference to FIG. 11, such as analyzing the one or more channels of audio data 202 of the first AV stream 106 to detect the one or more in-game events 204.

In accordance with some aspects of the disclosure, the one or more in-game events 204 may be detected without direct analysis of instructions of the video game application 116. For example, because the one or more in-game events 204 are detected by monitoring the first AV stream 106, use of software “hooks” to trigger certain operations (e.g., gameplay enhancement operations) may be avoided. In one example, the video game application 116 does not include software “hooks” that are executable to trigger gameplay enhancement operations.

In some examples, analyzing the one or more channels of audio data 202 includes generating one or more spectrograms 216 associated with the one or more channels of audio data 202. The one or more spectrograms 216 may include a spectrogram for each channel of the one or more channels of audio data 202, a composite spectrogram for a composite (e.g., “mix-down”) of the one or more channels of audio data 202, one or more other spectrograms, or a combination thereof.
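A minimal NumPy sketch of per-channel and composite spectrograms, assuming a Hann-windowed short-time Fourier transform with a 1024-sample frame and 50% overlap. These parameters, and averaging the channels to form the mix-down, are assumptions rather than details from the disclosure.

```python
import numpy as np


def spectrogram(signal, frame_len: int = 1024, hop: int = 512) -> np.ndarray:
    """Magnitude spectrogram via a Hann-windowed short-time Fourier transform.

    Returns an array of shape (num_frames, frame_len // 2 + 1).
    """
    signal = np.asarray(signal, dtype=float)
    if len(signal) < frame_len:
        raise ValueError("signal is shorter than one frame")
    window = np.hanning(frame_len)
    num_frames = 1 + (len(signal) - frame_len) // hop
    frames = np.stack([signal[i * hop: i * hop + frame_len] * window
                       for i in range(num_frames)])
    return np.abs(np.fft.rfft(frames, axis=1))


def channel_spectrograms(audio: np.ndarray):
    """audio: array of shape (num_channels, num_samples).

    Returns one spectrogram per channel plus one for the mix-down
    (here, the mean of the channels).
    """
    per_channel = [spectrogram(channel) for channel in audio]
    composite = spectrogram(audio.mean(axis=0))
    return per_channel, composite
```

At an 8 kHz sample rate, each frequency bin spans 8000 / 1024 = 7.8125 Hz, so a 1 kHz tone peaks in bin 128.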

The one or more spectrograms 216 may be compared to reference data 220 associated with a plurality of sounds. As an illustrative example, the reference data 220 may include, for each sound of the plurality of sounds (e.g., a vehicle sound or an explosion sound), a sound signature indicating one or more characteristics (e.g., a frequency spectrum) of the sound. The one or more channels of audio data 202 may be compared to the reference data 220 to determine whether sound indicated by the one or more channels of audio data 202 matches any of the plurality of sounds. In response to detecting that sound indicated by the one or more channels of audio data 202 matches one or more sounds indicated by the reference data 220, one or more in-game events 204 may be detected. As an illustrative example, in response to detecting that a sound indicated by the one or more channels of audio data 202 matches an explosion sound indicated by the reference data 220, an explosion event of the one or more in-game events 204 may be detected.
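The comparison step could be sketched as follows, assuming each reference sound is summarized as a unit-length average magnitude spectrum and a match is declared when cosine similarity exceeds a threshold. Both the signature form and the 0.9 threshold are assumptions; the text only says the spectrograms may be compared with reference data such as a frequency spectrum.

```python
import numpy as np


def spectral_signature(spec: np.ndarray) -> np.ndarray:
    """Average magnitude spectrum over frames, scaled to unit length."""
    profile = spec.mean(axis=0)
    norm = np.linalg.norm(profile)
    return profile / norm if norm > 0 else profile


def match_sounds(spec: np.ndarray, reference: dict, threshold: float = 0.9) -> list:
    """Names of reference sounds whose signature resembles `spec`.

    spec: spectrogram of shape (num_frames, num_bins).
    reference: sound name -> unit-length signature with num_bins entries.
    Cosine similarity is an assumed choice of comparison metric.
    """
    sig = spectral_signature(spec)
    return [name for name, ref in reference.items()
            if float(np.dot(sig, ref)) >= threshold]
```

Each returned name could then be recorded as a detected in-game event (e.g., an explosion event).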

Alternatively or in addition, analyzing the one or more channels of audio data 202 may include inputting the one or more channels of audio data 202 to a machine learning program 218. The machine learning program 218 may be executable by the processor 104 to identify the one or more in-game events 204 based on the one or more channels of audio data 202. In some examples, the machine learning program 218 is trained using training data, which may include the reference data 220, as an illustrative example. To further illustrate, the machine learning program 218 may include a plurality of weights including first weights associated with a first sound (e.g., an explosion sound) and second weights associated with a second sound (e.g., a vehicle sound). The first weights and the second weights may be determined (e.g., “learned”) by the machine learning program 218 during a training process that uses the training data. An output of the machine learning program 218 may be generated by applying the plurality of weights to the one or more channels of audio data 202. The output may be compared to a plurality of reference outputs to detect the one or more in-game events 204. For example, if the one or more channels of audio data 202 indicate an explosion sound, then applying the first weights to the one or more channels of audio data 202 may cause the output to have a signature indicating the explosion sound. As another example, if the one or more channels of audio data 202 indicate a vehicle sound, then applying the second weights to the one or more channels of audio data 202 may cause the output to have a signature indicating the vehicle sound.
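To make the "weights per sound" idea concrete, the sketch below uses the simplest model that fits the description: one linear weight vector per sound class, fit by least squares (`numpy.linalg.lstsq`) against one-hot reference outputs. At inference time, the class with the highest output score is chosen if that score clears a threshold. This is a substitute for illustration only; the disclosure does not specify the model type, the input features, or the training method.

```python
import numpy as np


class LinearSoundClassifier:
    """One weight vector (plus bias) per sound class, fit by least squares."""

    def __init__(self, labels):
        self.labels = list(labels)
        self.weights = None  # shape: (num_labels, num_features + 1)

    @staticmethod
    def _with_bias(x) -> np.ndarray:
        x = np.atleast_2d(np.asarray(x, dtype=float))
        return np.hstack([x, np.ones((x.shape[0], 1))])

    def fit(self, features, label_names):
        # One-hot reference outputs: 1.0 for the true class, 0.0 otherwise.
        targets = np.array([[1.0 if name == lab else 0.0 for lab in self.labels]
                            for name in label_names])
        solution, *_ = np.linalg.lstsq(self._with_bias(features), targets, rcond=None)
        self.weights = solution.T
        return self

    def predict(self, feature, min_score: float = 0.5):
        scores = self.weights @ self._with_bias(feature)[0]
        best = int(np.argmax(scores))
        return self.labels[best] if scores[best] >= min_score else None
```

The features here might be spectral signatures such as averaged spectrogram rows; a production system would more likely use a trained neural network on richer features.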

In accordance with an aspect of the disclosure, a device of the system 100 is configured to generate sound metadata 208 (e.g., gameplay telemetry data) based on the one or more in-game events 204. In one example, the first user system 102 is configured to generate the sound metadata 208. In another example, the server 144 is configured to generate the sound metadata 208.

In some implementations, the sound metadata 208 includes, for an in-game event of the one or more in-game events 204, an indication of a sound 210 associated with the in-game event. For example, if the sound 210 corresponds to a vehicle sound, then the indication of the sound 210 may include a first sequence of bits indicating a vehicle sound. As another example, if the sound 210 corresponds to an explosion sound, then the indication of the sound 210 may include a second sequence of bits indicating an explosion sound.

In some examples, the sound metadata 208 further includes an indication of a virtual direction 212 associated with the sound 210. To illustrate, if an amplitude of the sound 210 decreases as a function of time, then the indication of the virtual direction 212 may specify that the sound 210 appears to attenuate relative to a reference object 206 (e.g., a character) in the video game application 116. As another example, if the sound 210 appears to move across channels in a sequence (e.g., left to right), then the indication of the virtual direction 212 may specify that a source of the sound 210 appears to move to the right relative to the reference object 206.

Alternatively or in addition, the sound metadata 208 may include an indication of a virtual distance 214 from a source of the sound 210 to the reference object 206. To illustrate, if an amplitude of the sound 210 is relatively small, then the indication of the virtual distance 214 may specify a relatively large distance.
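A simplified stereo sketch of both indications, under assumptions not stated in the text. Apparent left-to-right motion is read from the change in a per-frame pan value computed from channel RMS levels, attenuation from a drop in overall level, and virtual distance from an assumed inverse-distance law relative to a reference amplitude. The 0.2 pan change and 0.5 level ratio are arbitrary illustrative thresholds.

```python
import numpy as np


def frame_rms(x, frame_len: int = 1024) -> np.ndarray:
    """Root-mean-square level of each non-overlapping frame."""
    n = len(x) // frame_len
    frames = np.asarray(x[: n * frame_len], dtype=float).reshape(n, frame_len)
    return np.sqrt((frames ** 2).mean(axis=1))


def virtual_direction(left, right, frame_len: int = 1024) -> str:
    """Classify the apparent motion of a sound from a stereo pair."""
    l, r = frame_rms(left, frame_len), frame_rms(right, frame_len)
    pan = (r - l) / np.maximum(r + l, 1e-12)  # -1 = hard left, +1 = hard right
    level = (l + r) / 2
    if pan[-1] - pan[0] > 0.2:
        return "moving_right"
    if pan[0] - pan[-1] > 0.2:
        return "moving_left"
    if level[-1] < 0.5 * level[0]:
        return "attenuating"
    return "static"


def virtual_distance(amplitude: float, ref_amplitude: float = 1.0,
                     ref_distance_m: float = 1.0) -> float:
    """Assumed 1/distance law: a smaller amplitude implies a larger distance."""
    return ref_distance_m * ref_amplitude / max(amplitude, 1e-12)
```

With more channels (e.g., an eight-channel layout), the same idea generalizes to estimating an azimuth from the relative levels of the speaker feeds.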

In some examples, the sound metadata 208 includes an indication of a point of gameplay associated with the sound 210 (e.g., a time or place in the gameplay at which the one or more in-game events 204 occur). In some implementations, upon detecting an event of the one or more in-game events 204, a value of a program counter associated with the video game application 116 is identified and included in the sound metadata 208. Alternatively or in addition, the sound metadata 208 may indicate a timestamp or a frame number associated with the event.
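A possible record layout for the sound metadata 208, sketched as a dataclass with JSON serialization so that it can be written to a database. The field names and the `SOUND_CODES` bit patterns are hypothetical; they mirror the indications listed above (sound, virtual direction, virtual distance, and point of gameplay).

```python
import json
from dataclasses import asdict, dataclass
from typing import Optional

# Hypothetical bit patterns identifying sound types.
SOUND_CODES = {"vehicle": 0b0001, "explosion": 0b0010}


@dataclass
class SoundMetadata:
    sound_code: int                        # indication of the sound
    direction: Optional[str] = None        # e.g., "moving_right", "attenuating"
    distance_m: Optional[float] = None     # virtual distance to reference object
    timestamp_s: Optional[float] = None    # point of gameplay: time
    frame_number: Optional[int] = None     # point of gameplay: frame
    program_counter: Optional[int] = None  # point of gameplay: program counter

    def to_json(self) -> str:
        return json.dumps(asdict(self))

    @classmethod
    def from_json(cls, text: str) -> "SoundMetadata":
        return cls(**json.loads(text))
```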

In some examples, a device of the system 200 (e.g., the first user system 102 or the server 144) is configured to initiate storage of the sound metadata 208 to a database. In some examples, the sound metadata 208 is stored to the user-specific database 146, the multi-user database 148, or both. Alternatively or in addition, the first user system 102 may include a database, and the sound metadata 208 may be stored to the database of the first user system 102.

In accordance with an aspect of the disclosure, storing the sound metadata 208 to a database enables one or more gameplay enhancement operations at one or more peripheral devices during the execution of the video game application 116. As an illustrative example, a user system (e.g., the first user system 102, the second user system 164, or the third user system 168) may access the database (e.g., upon loading of the video game application 116) to identify one or more gameplay enhancement operations to be initiated during gameplay.

To further illustrate, in one example, the second user system 164 is configured to request the sound metadata 208 from the server 144 in response to executing the video game application 116. For example, the second user system 164 may send a message to the server 144 identifying the video game application 116 and requesting sound metadata associated with the video game application 116. The second user system 164 may receive, from the server 144, a reply including the sound metadata 208 in response to the message.
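The request/reply exchange can be sketched with JSON messages and an in-memory dictionary standing in for the server's database. The message types (`get_sound_metadata`, `sound_metadata`), the class and function names, and the application ID string are invented for illustration; the disclosure does not define a message format.

```python
import json


class MetadataServer:
    """In-memory stand-in for the server's multi-user database."""

    def __init__(self):
        self._by_app = {}

    def store(self, app_id: str, metadata: dict) -> None:
        self._by_app.setdefault(app_id, []).append(metadata)

    def handle(self, request_text: str) -> str:
        request = json.loads(request_text)
        if request.get("type") != "get_sound_metadata":
            return json.dumps({"type": "error", "reason": "unsupported request"})
        app_id = request["app_id"]
        return json.dumps({"type": "sound_metadata", "app_id": app_id,
                           "metadata": self._by_app.get(app_id, [])})


def request_sound_metadata(server: MetadataServer, app_id: str) -> list:
    """Client side: what a user system might send when the game launches."""
    message = json.dumps({"type": "get_sound_metadata", "app_id": app_id})
    reply = json.loads(server.handle(message))
    return reply["metadata"]
```

In practice the message would travel over a network transport (e.g., HTTP), which this sketch omits.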

During gameplay, the second user system 164 may trigger one or more gameplay enhancement operations at one or more of the peripheral devices 230. In one example, the second user system 164 is configured to send a peripheral control signal 222 to one or more of the peripheral devices 230 to initiate a gameplay enhancement operation.

Alternatively or in addition, one or more of the peripheral devices 230 may be configured to send a request 224 (e.g., a polling message) to obtain one or more parameters of the sound metadata 208. To illustrate, in some examples, the peripheral devices 230 may include a smart device (e.g., a robot, a smart appliance, or a smart home automation device) that is configured to detect gameplay of the video game application 116 (e.g., using a computer vision technique, an audio recognition technique, or a combination thereof) and to send the request 224 in response to detecting gameplay of the video game application 116. In some examples, the request 224 is sent to the server 144 or to the second user system 164.

In one example, the sound metadata 208 enables the lighting device 240 to perform a directional lighting feedback event during an in-game event of the one or more in-game events 204. The directional lighting feedback event may include selectively activating one or more of the light sources 242, 244, and 246 based on an identification of the one or more in-game events 204 indicated by the sound metadata 208. In one example, the directional lighting feedback event is based on the virtual direction 212 indicated by the sound metadata 208. For example, if the in-game event includes movement of a vehicle, the light sources 242, 244, and 246 may be selectively activated based on the virtual direction 212 of the movement of the vehicle, such as by activating the light source 242 before the light source 244 and activating the light source 244 before the light source 246 (e.g., to simulate movement of the vehicle from left to right). As another example, if the in-game event includes an explosion, the light sources 242, 244, and 246 may be activated and then gradually dimmed to simulate attenuation of the noise of the explosion.

Alternatively or in addition, the directional lighting feedback event may be based on the virtual distance 214. For example, an intensity of light generated by the light sources 242, 244, and 246 may be increased for a smaller virtual distance 214 (e.g., to indicate that the sound 210 appears to be close to the reference object 206) or decreased for a greater virtual distance 214 (e.g., to indicate that the sound 210 appears to be far from the reference object 206).

Alternatively or in addition, the directional lighting feedback event may include modifying a color of light generated by one or more of the light sources 242, 244, and 246 of the lighting device 240. For example, an LED color may be selected based on a type of the event, such as by selecting a red or orange color for an explosion event.
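The lighting behavior described above (ordering by virtual direction, intensity by virtual distance, color by event type, and a flash-then-fade for explosions) might be planned as a list of timed commands. The color table, the 20 m distance ceiling, the 0.15 s step, and the `(start_time_s, source_id, intensity, rgb)` command format are all assumptions for this sketch.

```python
# Hypothetical color per event type (RGB); orange-red for explosions.
EVENT_COLORS = {"explosion": (255, 80, 0), "vehicle": (255, 255, 255)}


def intensity_for(distance_m: float, max_distance_m: float = 20.0) -> float:
    """Brighter for nearby sounds, dimmer for distant ones (floor of 0.1)."""
    return max(0.1, 1.0 - min(distance_m, max_distance_m) / max_distance_m)


def lighting_plan(event_type: str, direction: str, distance_m: float,
                  sources: list, step_s: float = 0.15) -> list:
    """Return (start_time_s, source_id, intensity, rgb) commands.

    sources: light source IDs ordered left to right, e.g. [242, 244, 246].
    """
    color = EVENT_COLORS.get(event_type, (255, 255, 255))
    level = intensity_for(distance_m)
    if direction == "moving_right":
        order = list(sources)
    elif direction == "moving_left":
        order = list(reversed(sources))
    else:
        # Non-directional (e.g., an explosion): light everything, then fade out.
        commands = [(0.0, s, level, color) for s in sources]
        for k in range(1, 4):
            commands += [(k * step_s, s, level * (1 - k / 3), color) for s in sources]
        return commands
    # Directional: activate sources one after another in the direction of motion.
    return [(i * step_s, s, level, color) for i, s in enumerate(order)]
```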

Alternatively or in addition, the sound metadata 208 may enable the haptic feedback device 250 to perform a directional haptic feedback event during an in-game event of the one or more in-game events 204. In some examples, the haptic feedback device 250 may be configured to selectively activate one or more of the actuators 252, 254, and 256 based on an identification of the one or more in-game events 204 indicated by the sound metadata 208. In one example, the directional haptic feedback event is performed based on the virtual direction 212, such as by activating the actuator 252 before the actuator 254 and activating the actuator 254 before the actuator 256 (e.g., to simulate movement of a vehicle from left to right). Alternatively or in addition, the haptic feedback device 250 may be configured to modify an intensity and/or pattern of haptic feedback generated by the actuators 252, 254, and 256 based on the virtual distance 214 (e.g., by reducing the intensity of the haptic feedback if the virtual distance 214 indicates the sound 210 is far away from the reference object 206). Alternatively or in addition, the haptic feedback device 250 may be configured to modify the pattern of haptic feedback generated by the actuators 252, 254, and 256 based on a type of event, such as by selecting a long vibration for an explosion event.
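Analogously, a haptic plan can order actuators by virtual direction, scale intensity with virtual distance, and pick a pulse pattern by event type. The pattern table, the timing step, the 20 m ceiling, and the `(start_ms, actuator_id, intensity, pattern)` tuple format are hypothetical values chosen for illustration.

```python
# Hypothetical vibration patterns as (on_ms, off_ms) pulses per event type;
# a single long pulse is used for explosions.
EVENT_PATTERNS = {"explosion": [(800, 0)], "vehicle": [(120, 80)] * 4}


def haptic_plan(event_type: str, direction: str, distance_m: float,
                actuators: list, max_distance_m: float = 20.0,
                step_ms: int = 150) -> list:
    """Return (start_ms, actuator_id, intensity, pattern) commands.

    actuators: actuator IDs ordered left to right, e.g. [252, 254, 256].
    """
    pattern = EVENT_PATTERNS.get(event_type, [(200, 0)])
    # Weaker feedback the farther the sound appears from the reference object.
    intensity = max(0.0, 1.0 - min(distance_m, max_distance_m) / max_distance_m)
    if direction == "moving_right":
        order = list(actuators)
    elif direction == "moving_left":
        order = list(reversed(actuators))
    else:
        return [(0, a, intensity, pattern) for a in actuators]
    return [(i * step_ms, a, intensity, pattern) for i, a in enumerate(order)]
```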

In some implementations, the audio driver 280 is executable by the processor 104 to generate an indication of a first number of audio channels. The audio driver 280 may provide the indication of the first number to the video game application 116, and the processor 104 may be configured to execute the video game application 116 to generate a plurality of channels of audio data 202 based on the indication of the first number. As a non-limiting example, the audio driver 280 may specify an eight-channel audio format to the processor 104 to indicate that eight channels of audio should be included in the first AV stream 106. In this example, the processor 104 may generate, based on the indication, eight channels of audio data 202.

In one example, the processor 104 is configured to provide a subset 203 of the plurality of channels of audio data 202 to the first output device 152, and a second number of audio channels associated with the subset 203 is less than the first number. As an example, the first number may correspond to an eight-channel format, and the second number may correspond to a two-channel stereo format. One or more operations described with reference to FIG. 11 may be performed based on the plurality of channels of audio data 202 instead of the subset 203. For example, the sound metadata 208 may be determined based on the plurality of channels of audio data 202 instead of the subset 203. In some examples, after determining the sound metadata 208 based on the plurality of channels of audio data 202, at least one channel of the plurality of channels of audio data 202 may be discarded (e.g., by erasing, invalidating, or overwriting the at least one channel in a buffer) to generate the subset 203, or the plurality of channels of audio data 202 may be “down-converted” (e.g., from eight channels to two channels) to generate the subset 203.
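A sketch of analyzing all eight channels before reducing them to stereo. It assumes the channel order FL, FR, FC, LFE, BL, BR, SL, SR (one common 7.1 ordering) and a conventional downmix in which the center and surround channels are mixed in at -3 dB and LFE is dropped. The coefficients and the peak normalization are assumptions; discarding channels instead would simply keep `audio_8ch[:2]`.

```python
import numpy as np

# Assumed 7.1 channel order: FL, FR, FC, LFE, BL, BR, SL, SR.
# Assumed coefficients: center and surrounds at -3 dB, LFE dropped.
_G = 1 / np.sqrt(2)
DOWNMIX_8_TO_2 = np.array([
    # FL   FR   FC   LFE  BL   BR   SL   SR
    [1.0, 0.0, _G, 0.0, _G, 0.0, _G, 0.0],  # left output
    [0.0, 1.0, _G, 0.0, 0.0, _G, 0.0, _G],  # right output
])


def analyze_then_downmix(audio_8ch: np.ndarray, analyze):
    """Run analysis on all eight channels, then return a stereo subset.

    audio_8ch: array of shape (8, num_samples).
    analyze: callable producing sound metadata from the full multichannel audio.
    """
    metadata = analyze(audio_8ch)      # uses every channel's spatial detail
    stereo = DOWNMIX_8_TO_2 @ audio_8ch
    peak = np.max(np.abs(stereo))
    if peak > 1.0:                     # avoid clipping after summing channels
        stereo = stereo / peak
    return metadata, stereo
```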

Use of the audio driver 280 may “trick” the video game application 116 into generating more channels of audio data 202 than are compatible with the first output device 152. Because the first number of channels of audio data 202 may include more information than the second number of channels of audio data 202, “tricking” the video game application 116 into generating a greater number of channels of audio data 202 may increase the amount of audio information available for generating the sound metadata 208, improving the accuracy or level of detail of the sound metadata 208 (e.g., so that locations or directions associated with sounds are estimated more accurately).

In some examples, one or more aspects described with reference to FIG. 11 are used in connection with one or more other aspects described herein. As an example, the one or more in-game events 204 may be included in or may correspond to the one or more trigger events 126 of FIG. 1. In this example, one or more operations described with reference to FIG. 1 can be performed in response to detection of the one or more in-game events 204, such as incrementing the value 130 of the counter 128 or determining the first gameplay metric 121. Alternatively or in addition, one or more operations described with reference to FIGS. 2, 3, 4, and 5 may be performed in response to detection of the one or more in-game events 204 (e.g., by performing a dynamic screen aggregation operation to increase the first size 176 of the first AV stream 106 in response to detecting the one or more in-game events 204 in the first AV stream 106, as an illustrative example).

FIG. 12 illustrates an example of a method that may be performed in accordance with certain aspects of the disclosure. In some examples, operations of the method of FIG. 12 are performed to generate a plurality of gameplay enhancement operations, such as a lighting gameplay enhancement operation, a visual gameplay enhancement operation, a haptic gameplay enhancement operation, an analytic gameplay enhancement operation, and a historical gameplay enhancement operation.

FIG. 13 illustrates another example of a method that may be performed in accordance with certain aspects of the disclosure. In FIG. 13, sounds of a plurality of objects (e.g., a tank, a smoker, a zombie, and a witch) are detected in the first AV stream 106. As illustrated in FIG. 13, a virtual distance (e.g., the virtual distance 214) and a virtual direction (e.g., the virtual direction 212) can be determined for each of the plurality of objects. In the non-limiting example of FIG. 13, the virtual directions correspond to 2 degrees, 84 degrees, 242 degrees, and 103 degrees. The non-limiting example of FIG. 13 also shows that the virtual distances correspond to 5 meters (m), 1 m, 20 m, and 0.5 m.

FIG. 14 illustrates another example of a method that may be performed in accordance with certain aspects of the disclosure. In one example, operations of the method of FIG. 14 are performed by the first user system 102, the second user system 164, or the third user system 168. Alternatively or in addition, one or more operations of the method of FIG. 14 can be performed by one or more other devices, such as by the server 144.

The method of FIG. 14 includes, during execution of a video game application to generate an AV stream, analyzing one or more channels of audio data of the AV stream to identify one or more in-game events associated with the video game application. The method further includes storing, based on the one or more in-game events, sound metadata to a database to enable one or more gameplay enhancement operations at one or more peripheral devices during the execution of the video game application. The method further includes identifying, based on information of the database, a gameplay enhancement operation of the one or more gameplay enhancement operations and a peripheral device of the one or more peripheral devices to perform the gameplay enhancement operation. The method also includes providing, to the peripheral device in response to identifying the gameplay enhancement operation, a peripheral control signal to initiate the gameplay enhancement operation at the peripheral device.
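The four steps of the method of FIG. 14 can be strung together in a short sketch. The event detector is passed in as a callable, a Python list stands in for the database, and the `RULES` table mapping event types to peripheral operations is a hypothetical example of the "information of the database" used to choose an operation and a peripheral. All names here are illustrative assumptions.

```python
from dataclasses import dataclass, field


@dataclass
class PeripheralControlSignal:
    peripheral_id: str
    operation: str
    params: dict = field(default_factory=dict)


# Hypothetical rules: event type -> (peripheral kind, operation) pairs.
RULES = {
    "explosion": [("lighting", "flash_fade"), ("haptic", "long_rumble")],
    "vehicle": [("lighting", "sweep"), ("haptic", "sweep")],
}


def run_pipeline(audio_chunk, detect_events, database, peripherals, send):
    """One pass of the FIG. 14 flow over a chunk of audio.

    detect_events: callable returning sound-metadata dicts with a "sound" key.
    database: list standing in for the metadata database.
    peripherals: peripheral kind -> peripheral ID, e.g. {"lighting": "240"}.
    send: callable that delivers a PeripheralControlSignal.
    """
    events = detect_events(audio_chunk)       # 1. analyze audio channels
    start = len(database)
    database.extend(events)                   # 2. store sound metadata
    for record in database[start:]:           # 3. identify operation + device
        for kind, operation in RULES.get(record["sound"], []):
            if kind in peripherals:           # 4. send peripheral control signal
                send(PeripheralControlSignal(peripherals[kind], operation, dict(record)))
    return events
```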

Referring to FIG. 15, another illustrative example of a system is depicted and generally designated 300. In the example of FIG. 15, the system 300 includes one or more user systems, such as the first user system 102 and the second user system 164. The first user system 102 includes the processor 104 and the memory 114. The processor 104 is configured to retrieve the video game application 116 from the memory 114 and to execute the video game application 116 to generate an AV stream, such as the first AV stream 106. Depending on the implementation, the video game application 116 may correspond to a single-user video game or a multi-user video game (e.g., as described with reference to certain aspects of FIG. 1). In the example of FIG. 15, the first user system 102 is coupled to one or more peripheral devices, such as the peripheral devices 230.

In the example of FIG. 15, the first AV stream 106 includes a video portion 302. The video portion 302 includes a heads-up display (HUD) 304. The first AV stream 106 may further include an audio portion, such as the one or more channels of audio data 202 of FIG. 11. In one example, the HUD 304 presents game information during gameplay of the video game application 116, such as character health, game status information, weapon information, or other information.

During operation, the first user system 102 is configured to analyze the first AV stream 106 to detect one or more elements 306 (also referred to herein as widgets) of the HUD 304 and to identify one or more in-game events associated with the video game application 116, such as the one or more in-game events 204. In one example, the one or more elements 306 of the HUD 304 indicate character health, game status information, weapon information, or other information.

In one example, the first user system 102 is configured to receive user selection 322 of an element of the one or more elements 306. For example, the user selection 322 may identify a region of interest (ROI) 324 of the HUD 304 including the element. In some examples, the first user 135 may click, mouse over, or perform another operation indicating the ROI 324 via the user selection 322 (e.g., to enlarge or inspect the element). The processor 104 may be configured to identify the one or more elements 306 of the HUD 304 based on the user selection 322.
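When the user selection 322 is a click-and-drag rather than a single click, the ROI 324 can be normalized into a rectangle before the element inside it is analyzed. This Python sketch assumes that the selection arrives as two pixel corners in frame coordinates; `roi_from_drag` is a hypothetical helper, not part of the disclosure.

```python
def roi_from_drag(start: tuple[int, int], end: tuple[int, int],
                  frame_w: int, frame_h: int) -> tuple[int, int, int, int]:
    """Turn two drag corners into an (x, y, width, height) ROI clamped to the frame."""
    x0, x1 = sorted((start[0], end[0]))  # the drag may go right-to-left
    y0, y1 = sorted((start[1], end[1]))  # or bottom-to-top
    x0, y0 = max(0, x0), max(0, y0)
    x1, y1 = min(frame_w, x1), min(frame_h, y1)
    return (x0, y0, max(0, x1 - x0), max(0, y1 - y0))


print(roi_from_drag((120, 40), (20, 10), 1920, 1080))  # (20, 10, 100, 30)
```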

In another example, an element of the one or more elements 306 is identified by detecting that the element maintains a common position in at least a threshold number of frames of the video portion 302. For example, the processor 104 may be configured to monitor frames of the video portion 302 and to determine which regions of the video portion 302 remain fixed during gameplay. The processor 104 may be configured to detect that regions of the video portion 302 that change during gameplay correspond to gameplay elements and that regions of the video portion 302 that remain fixed (or substantially fixed) correspond to the one or more elements 306 of the HUD 304.
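The fixed-region test can be sketched as a per-pixel vote across frames. This Python example assumes grayscale frames given as nested lists and compares each frame against the first frame, which is only one simple choice (a rolling frame-to-frame comparison would also work); the `threshold` and `tolerance` parameters are hypothetical, with `tolerance` standing in for "substantially fixed".

```python
def fixed_region_mask(frames: list[list[list[int]]], threshold: int,
                      tolerance: int = 0) -> list[list[bool]]:
    """Mark pixels that stay within `tolerance` of their first-frame value in at
    least `threshold` frames; True marks candidate HUD pixels."""
    reference = frames[0]
    height, width = len(reference), len(reference[0])
    counts = [[0] * width for _ in range(height)]
    for frame in frames:
        for r in range(height):
            for c in range(width):
                if abs(frame[r][c] - reference[r][c]) <= tolerance:
                    counts[r][c] += 1
    return [[counts[r][c] >= threshold for c in range(width)] for r in range(height)]


# Column 0 never changes (a HUD widget); column 1 changes every frame (gameplay).
frames = [[[5, 1], [7, 2]], [[5, 3], [7, 4]], [[5, 6], [7, 8]]]
print(fixed_region_mask(frames, threshold=3))  # [[True, False], [True, False]]
```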

The processor 104 may be configured to generate first video metadata 318 (e.g., gameplay telemetry data) based on the one or more elements 306 of the HUD 304. The first video metadata 318 may correspond to a game state of the video game application 116 and may be associated with the first user 135. The processor 104 may be configured to generate the first video metadata 318 using a computer vision technique (e.g., by generating computer vision data 312), an optical character recognition (OCR) technique (e.g., by generating OCR data 314), a graphical approximation technique (e.g., by generating graphical approximation data 316), one or more other techniques, or a combination thereof.

In some examples, the first video metadata 318 indicates one or more regions of the HUD 304 including the one or more elements 306. For example, the first video metadata 318 may indicate pixel coordinates of an element of the one or more elements 306 or an object identifier specifying an element of the one or more elements 306. The first video metadata 318 may further indicate a game state of the video game application at the time of detection of the one or more elements 306. The game state may include a program counter value associated with execution of the video game application 116 or a level or stage of the video game application 116, as illustrative examples.

In one implementation, the first video metadata 318 indicates, for an element of the one or more elements 306, a count of the element. For example, the element may correspond to a health bar region of the HUD 304, and the count may be the number of health bars displayed in the health bar region.

In one example, the first video metadata 318 indicates a category of each of the one or more elements 306. In some implementations, a category is assigned to an element that is not associated with a count in the HUD 304. For example, an element may correspond to a status or other indication (e.g., “high scorer”) that is not associated with a count.

In some implementations, the first video metadata 318 indicates a range of values for an element of the one or more elements 306. The range of values may indicate a minimum possible value of the element and a maximum possible value of the element. As an example, if the element indicates a score of zero out of five stars, then the range of values for the element may be zero to five stars.
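The fields described above (pixel coordinates or an object identifier, game state, count, category, and range of values) can be gathered into one record per element. This Python sketch assumes an (x, y, width, height) bounding-box layout and string game-state labels; the field names and the `fraction` helper, which normalizes a count against its range, are illustrative additions rather than part of the disclosure.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class ElementMetadata:
    """One HUD element entry in the video metadata (field names are illustrative)."""
    object_id: str
    bbox: tuple[int, int, int, int]                # pixel coordinates: x, y, width, height
    game_state: str                                # e.g. a level or stage identifier
    category: str                                  # e.g. "health", "score", "status"
    count: Optional[int] = None                    # e.g. number of health bars shown
    value_range: Optional[tuple[int, int]] = None  # (minimum, maximum) possible values

    def fraction(self) -> Optional[float]:
        """Normalize the count into [0, 1] using the range, when both are known."""
        if self.count is None or self.value_range is None:
            return None
        low, high = self.value_range
        return (self.count - low) / (high - low) if high > low else None


health = ElementMetadata("health_bar", (40, 1000, 300, 30), "level-3", "health",
                         count=3, value_range=(0, 5))
stars = ElementMetadata("score_stars", (1700, 20, 180, 40), "level-3", "score",
                        count=0, value_range=(0, 5))
status = ElementMetadata("status_badge", (900, 20, 120, 40), "level-3", "status")
print(health.fraction(), stars.fraction(), status.fraction())  # 0.6 0.0 None
```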

The processor 104 may be configured to track the one or more elements 306 during gameplay of the video game application 116. For example, the processor 104 may be configured to detect changes in the one or more elements 306, such as a change in an amount of health of a character, a change in status, or a change in score. The processor 104 may be configured to update the first video metadata 318 to indicate the changes to the one or more elements 306 (e.g., where the first video metadata 318 indicates a history of each element of the one or more elements 306).
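A history of each element can be kept by appending an entry only when the sampled value changes. This Python sketch assumes that sampled element values arrive once per frame as a name-to-value dictionary; `ElementTracker` is a hypothetical class.

```python
from collections import defaultdict


class ElementTracker:
    """Keep a per-element history, appending only when the sampled value changes."""

    def __init__(self) -> None:
        # element name -> list of (frame_index, value) change points
        self.history: dict[str, list[tuple[int, object]]] = defaultdict(list)

    def update(self, frame_index: int, samples: dict[str, object]) -> list[str]:
        """Record new values and return the names of elements that changed."""
        changed = []
        for name, value in samples.items():
            past = self.history[name]
            if not past or past[-1][1] != value:
                past.append((frame_index, value))
                changed.append(name)
        return changed


tracker = ElementTracker()
tracker.update(0, {"health": 5, "status": "normal"})
tracker.update(1, {"health": 5, "status": "normal"})
print(tracker.update(2, {"health": 4, "status": "normal"}))  # ['health']
```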

In some examples, tracking the one or more elements 306 includes generating a mask 320 based on locations of the one or more elements 306 within the HUD 304. The processor 104 may be configured to apply the mask 320 to the video portion 302 to generate a masked version of the video portion 302. For example, the processor 104 may be configured to apply the mask 320 to the video portion 302 (e.g., by overlaying the mask 320 over the video portion 302) so that regions of the video portion 302 other than the one or more elements 306 are covered, obscured, or “blacked out.” The processor 104 may be configured to sample the one or more elements 306 from the masked version (e.g., by performing a computer vision operation, an OCR operation, or a graphical approximation operation based on the masked version). Use of the mask 320 to generate a masked version of the video portion 302 may reduce an amount of video processing performed by the processor 104 (e.g., by obscuring one or more regions of the video portion 302 so the one or more regions are excluded from a computer vision operation, an OCR operation, a graphical approximation operation, or another operation).
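The masking step can be sketched as building a keep-mask from element locations and then blacking out every other pixel. This Python example assumes grayscale frames as nested lists and element locations as (x, y, width, height) boxes; `mask_from_boxes` and `apply_mask` are hypothetical helpers.

```python
def mask_from_boxes(width: int, height: int,
                    boxes: list[tuple[int, int, int, int]]) -> list[list[bool]]:
    """Build a keep-mask that is True only inside the (x, y, w, h) element boxes."""
    mask = [[False] * width for _ in range(height)]
    for x, y, w, h in boxes:
        for r in range(max(0, y), min(height, y + h)):
            for c in range(max(0, x), min(width, x + w)):
                mask[r][c] = True
    return mask


def apply_mask(frame: list[list[int]], mask: list[list[bool]],
               fill: int = 0) -> list[list[int]]:
    """Black out (set to `fill`) every pixel the mask does not keep."""
    return [[px if keep else fill for px, keep in zip(row, mask_row)]
            for row, mask_row in zip(frame, mask)]


frame = [[1, 2, 3, 4], [5, 6, 7, 8], [9, 10, 11, 12]]
mask = mask_from_boxes(4, 3, [(0, 0, 2, 1)])  # one widget in the top-left corner
print(apply_mask(frame, mask))  # [[1, 2, 0, 0], [0, 0, 0, 0], [0, 0, 0, 0]]
```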

The processor 104 may be configured to store the first video metadata 318 to a database, such as the user-specific database 146, the multi-user database 148, or both. In another example, the first user system 102 includes a database, and the processor 104 may be configured to store the first video metadata 318 to the database of the first user system 102.

The processor 104 may be configured to retrieve, from a database, second video metadata 360 corresponding to the game state of the video game application 116 and associated with the second user 139. For example, the multi-user database 148 may receive and store the second video metadata 360 (e.g., prior to generation of the first video metadata 318). The second user system 164 may generate the second video metadata 360 using any technique described herein, such as one or more techniques described with reference to the first video metadata 318.
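Storing metadata per user and retrieving another user's metadata for the same game state can be sketched with Python's standard `sqlite3` module standing in for the user-specific database 146 or the multi-user database 148. The table name, the columns, the JSON payload encoding, and the user and game-state identifiers are all assumptions made for illustration.

```python
import json
import sqlite3


def open_db(path: str = ":memory:") -> sqlite3.Connection:
    """Open (or create) a metadata store with one row per user and game state sample."""
    conn = sqlite3.connect(path)
    conn.execute(
        "CREATE TABLE IF NOT EXISTS video_metadata ("
        " user_id TEXT, game_state TEXT, payload TEXT)"
    )
    return conn


def store(conn: sqlite3.Connection, user_id: str, game_state: str, metadata: dict) -> None:
    """Store one metadata sample as a JSON payload."""
    conn.execute("INSERT INTO video_metadata VALUES (?, ?, ?)",
                 (user_id, game_state, json.dumps(metadata)))
    conn.commit()


def retrieve(conn: sqlite3.Connection, user_id: str, game_state: str) -> list[dict]:
    """Return every stored sample for a user at a given game state."""
    rows = conn.execute(
        "SELECT payload FROM video_metadata WHERE user_id = ? AND game_state = ?",
        (user_id, game_state),
    ).fetchall()
    return [json.loads(payload) for (payload,) in rows]


conn = open_db()
store(conn, "user-139", "level-3", {"grenades_used": 6})  # second user, stored earlier
store(conn, "user-135", "level-3", {"grenades_used": 1})  # first user
print(retrieve(conn, "user-139", "level-3"))  # [{'grenades_used': 6}]
```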

The processor 104 may be configured to perform a comparison of the first video metadata 318 and the second video metadata 360 to determine one or more gameplay enhancement operations, such as a gameplay assistance message 308. The gameplay assistance message 308 may offer one or more tips based on video metadata collected from gameplay of better-performing players. In one example, the gameplay assistance message 308 indicates one or more tips, based on gameplay of the second user 139 indicated by the second video metadata 360, to improve gameplay performance of the first user 135 relative to the second user 139 (e.g., “use more grenades”). The gameplay assistance message 308 may offer “coaching” to a user (e.g., for a particularly challenging portion of the video game application 116).
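One way such a comparison could produce a tip like “use more grenades” is sketched below in Python. It assumes that both sets of metadata for the same game state have already been reduced to per-resource usage counts and that the second user has already been identified as the better-performing player. The rule (suggest any resource the second user used more of, largest gap first) is a hypothetical heuristic, not the disclosed comparison.

```python
def assistance_messages(first: dict[str, float], second: dict[str, float],
                        min_gap: float = 0.0) -> list[str]:
    """Suggest using more of each resource the reference player used more of,
    largest gap first."""
    gaps = sorted(((theirs - first.get(key, 0.0), key) for key, theirs in second.items()),
                  reverse=True)
    return [f"use more {key}" for gap, key in gaps if gap > min_gap]


first_user = {"grenades": 1, "medkits": 2}   # first video metadata, same game state
second_user = {"grenades": 6, "medkits": 2}  # second (better-performing) user
print(assistance_messages(first_user, second_user))  # ['use more grenades']
```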

In some examples, the gameplay assistance message 308 includes or is presented via a second HUD 310 of the first AV stream 106. The second HUD 310 may augment the video portion 302 and offer tips or feedback to a user (e.g., in real time or near-real time during gameplay or in aggregations after gameplay). In some examples, the gameplay assistance message 308 includes haptic feedback (e.g., via the haptic feedback device 250), visual feedback (e.g., via the lighting device 240), audio feedback, other feedback, or a combination thereof. It is noted that the gameplay assistance message 308 can be provided based on the sound metadata 208 of FIG. 11 (alternatively or in addition to the first video metadata 318 of FIG. 15).

In some examples, video metadata for multiple users is collected and compared to enable game state tracking or visualization within a gaming session of multiple users. The video metadata for the multiple users may be synchronized (e.g., by grouping metadata associated with a common game state). In some implementations, a collection device (or “agent”) is configured to perform multi-source event detection and capture of game state and user response. The user response may be captured in game (e.g., by detecting the in-game events 204), based on external communications (e.g., text messages, emails, or social media messages), or a combination thereof. The collection device may be configured to capture and synthesize differential activity and to characterize the intensity and priority of the response as a complete sample (e.g., using a full enumeration of event sources, game state, and user response). The samples may be captured, recorded locally, and used to enable a variety of other solutions, such as coaching of gamers based on comparative sample response analysis, tagging in-game highlights (e.g., as described with reference to the clip 154 in FIG. 1), tracking user performance across titles using stimulus/response scoring, or providing a multi-user tactics and prioritization engine. Thus, in some aspects, a buffered stream taken from a main continuous capture stream is analyzed to capture and record events for later analysis. In some aspects, a system is configured to detect and classify in-game event stimuli and to capture subsequent user response and sentiment across game and communications platforms.
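Synchronizing metadata by common game state can be sketched as a two-level grouping: first by game state, then by user. This Python example assumes that each metadata sample is a dictionary carrying `"game_state"` and `"user"` keys; those keys and the `synchronize` function are hypothetical.

```python
from collections import defaultdict


def synchronize(samples: list[dict]) -> dict[str, dict[str, list[dict]]]:
    """Group metadata samples from several users by shared game state, then by user."""
    grouped = defaultdict(lambda: defaultdict(list))
    for sample in samples:
        grouped[sample["game_state"]][sample["user"]].append(sample)
    # Convert to plain dicts so later lookups cannot create empty groups by accident.
    return {state: dict(users) for state, users in grouped.items()}


samples = [
    {"user": "u135", "game_state": "level-3", "health": 2},
    {"user": "u139", "game_state": "level-3", "health": 5},
    {"user": "u135", "game_state": "level-4", "health": 4},
]
synced = synchronize(samples)
print(sorted(synced["level-3"]))  # ['u135', 'u139']
```

Once grouped this way, each game state holds every user's samples side by side, which is the shape a comparative analysis such as coaching would consume.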

In some examples, one or more aspects described with reference to FIG. 15 are used in connection with one or more other aspects described herein. For example, the one or more in-game events 204 may be included in or may correspond to the one or more trigger events 126 of FIG. 1. In this example, one or more operations described with reference to FIG. 1 can be performed in response to detection of the one or more in-game events 204, such as by incrementing the value 130 of the counter 128 in response to detection of the one or more in-game events 204 or by determining the first gameplay metric 121 in response to detection of the one or more in-game events 204. Alternatively or in addition, one or more operations described with reference to FIGS. 2, 3, 4, and 5 may be performed in response to detection of the one or more in-game events 204 (e.g., by performing a dynamic screen aggregation operation to increase the first size 176 of the first AV stream 106 in response to detecting the one or more in-game events 204 in the first AV stream 106, as an illustrative example).
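As one illustration of tying event detection to a dynamic screen aggregation operation, the Python sketch below keeps an event counter per AV stream and gives each stream a share of the screen width proportional to one plus its event count. The sizing rule, the stream identifiers, and the `ScreenAggregator` class are hypothetical; the disclosure does not specify how much the first size 176 grows.

```python
from collections import Counter


class ScreenAggregator:
    """Hypothetical layout rule: a stream's share of the screen width grows with
    the number of in-game events detected in it."""

    def __init__(self, stream_ids: list[str], total_width: int) -> None:
        self.counter = Counter({s: 0 for s in stream_ids})  # one event counter per stream
        self.total_width = total_width

    def on_in_game_event(self, stream_id: str) -> None:
        """Increment the stream's counter when an in-game event is detected in it."""
        self.counter[stream_id] += 1

    def widths(self) -> dict[str, int]:
        weights = {s: 1 + n for s, n in self.counter.items()}  # every stream keeps a base share
        total = sum(weights.values())
        # Integer pixel widths; rounding may leave a few pixels unassigned.
        return {s: self.total_width * w // total for s, w in weights.items()}


agg = ScreenAggregator(["stream-106", "stream-2"], total_width=1200)
agg.on_in_game_event("stream-106")
agg.on_in_game_event("stream-106")
print(agg.widths())  # {'stream-106': 900, 'stream-2': 300}
```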

FIGS. 16, 17, 18, and 19 illustrate examples of operations that may be performed by the first user system 102. In FIGS. 16, 17, 18, and 19, the operations include discovery of the one or more elements 306 (e.g., widgets) of the HUD 304, tracking of the one or more elements 306 of the HUD 304, and recording, to a database, of the one or more elements 306 of the HUD 304. The one or more elements 306 of the HUD 304 may be used in solution development, such as to generate the gameplay assistance message 308 according to an in-game event 204 captured from the first video metadata 318. The in-game event 204 may be captured in response to detecting that the in-game event 204 corresponds to an “outlier” that differs from gameplay observed from other player performances (e.g., in response to detecting approach of an extremum according to an observed schema from the user-specific database 146, as an illustrative example).
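The disclosure describes outlier detection in terms of approaching an extremum according to an observed schema. The Python sketch below swaps in a plain z-score test against other players' values for the same HUD element, which is one common way to flag an outlier but is not necessarily the disclosed method; the threshold of 2.0 standard deviations is an assumption.

```python
import statistics


def is_outlier(value: float, peer_values: list[float], z_threshold: float = 2.0) -> bool:
    """Flag `value` when it lies more than `z_threshold` sample standard deviations
    from the mean of other players' values."""
    if len(peer_values) < 2:
        return False  # not enough peer data to judge
    mean = statistics.mean(peer_values)
    stdev = statistics.stdev(peer_values)
    if stdev == 0:
        return value != mean  # every peer agrees, so any difference stands out
    return abs(value - mean) / stdev > z_threshold


peers = [10, 12, 11, 9, 13]  # e.g. other players' scores at the same game state
print(is_outlier(30, peers), is_outlier(12, peers))  # True False
```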

FIG. 20 illustrates an example of a method in accordance with an aspect of the disclosure. Operations of the method of FIG. 20 may be performed by a device, such as by the first user system 102. In other examples, operations of the method of FIG. 20 may be performed by another device, such as the server 144. The method of FIG. 20 includes, during execution of a video game application to generate an AV stream, analyzing one or more elements of a heads-up display (HUD) of a video portion of the AV stream to identify one or more in-game events associated with the video game application. The method of FIG. 20 further includes, based on the one or more in-game events, storing, to a database, first video metadata corresponding to a game state of the video game application. The first video metadata is associated with a first user. The method further includes retrieving, from the database, second video metadata corresponding to the game state, the second video metadata associated with a second user. The method also includes initiating, based on a comparison of the first video metadata and the second video metadata, one or more gameplay enhancement operations during the execution of the video game application.