U.S. Pat. No. 11,244,510

INFORMATION PROCESSING APPARATUS AND METHOD CAPABLE OF FLEXIBILITY SETTING VIRTUAL OBJECTS IN A VIRTUAL SPACE

Assignee: SONY CORPORATION

Issue Date: July 31, 2019

Abstract

To achieve flexible setting of virtual objects in virtual space. An information processing apparatus includes: an information acquisition unit that obtains first state information regarding a state of a first user; and a setting unit that sets, on the basis of the first state information, a display mode of a second virtual object in a virtual space in which a first virtual object corresponding to the first user and the second virtual object corresponding to a second user are arranged.

Description

MODE FOR CARRYING OUT THE INVENTION

Preferred embodiments of the present disclosure will be described in detail below with reference to the accompanying drawings. Note that same reference numerals are assigned to constituent elements having substantially the same functional configuration, and thus redundant description is omitted in the description herein and the drawings.

Note that description will be presented in the following order.

1. Configuration of information processing system

2. Configuration of devices constituting information processing system

3. Virtual object setting method using static parameter

4. Virtual object setting method using dynamic parameter

5. Grouping virtual objects using dynamic parameter

6. Hardware configuration of device

7. Supplementary matter

8. Conclusion

1. CONFIGURATION OF INFORMATION PROCESSING SYSTEM

Hereinafter, an overview of an information processing system according to an embodiment of the present disclosure will be described. FIG. 1 is a view illustrating a configuration of an information processing system according to the present embodiment. The information processing system according to the present embodiment includes a display device 100, a state detection device 200, a network 300, and a server 400. Note that the display device 100, the state detection device 200, and the server 400 are examples of an information processing apparatus that executes information processing of the present embodiment. Furthermore, the display device 100 and the state detection device 200 may be configured as one information processing apparatus.

In the information processing system according to the present embodiment, a service using a virtual space is provided. The server 400 performs control of the virtual space and generates image information regarding the virtual space. Subsequently, the server 400 transmits the generated image information to the display device 100 of each user via the network 300. The display device 100 of each user presents the user with a picture regarding the virtual space on the basis of the received image information.

Furthermore, the information processing system according to the present embodiment sets the position of the virtual object corresponding to each user on the basis of a static parameter of that user. A static parameter includes a user's attribute or a user's role in the virtual space. Furthermore, a plurality of scenes is set in the virtual space. For example, the plurality of scenes in the virtual space includes scenes such as a conference, a class, a concert, a play, a movie, an attraction, or a game.

For example, in a case where the scene is a conference and the user's role is a presenter, the virtual object is arranged at the position of a user giving a presentation in the virtual space. By arranging virtual objects in this manner, for example, the user can easily receive a service using a virtual space without a need to set the positions of the virtual objects in the virtual space. This enables the user to further concentrate on the user's purpose. The method of arranging virtual objects using static parameters will be described later with reference to FIGS. 4 to 7.

Furthermore, the information processing system according to the present embodiment sets the position of the virtual object corresponding to each user on the basis of a dynamic parameter of that user. The dynamic parameter includes information regarding the user's behavior or the user's biometric information indicating the user's state. Accordingly, dynamic parameters may also be referred to as user state information. Note that the information regarding the user's behavior includes any one of pieces of information regarding the user's facial expression, blinks, posture, vocalization, and line-of-sight direction. Furthermore, the user's biometric information includes any one of pieces of information regarding the user's heart rate, body temperature, and perspiration.

The user's dynamic parameters are detected by the display device 100 and/or the state detection device 200. Note that the dynamic parameters may be detected by a wearable device worn by the user. The detected dynamic parameters are transmitted to the server 400. The server 400 controls the virtual object on the basis of the received dynamic parameters.

For example, the server 400 estimates the user's emotion on the basis of the user's dynamic parameter. The estimated user's emotion may be categorized into a plurality of categories. For example, estimated emotions may be categorized into three categories, namely, positive, neutral (normal), and negative. Subsequently, the server 400 controls the virtual object on the basis of the estimated user's emotion.

For example, the server 400 may set a distance between the plurality of virtual objects on the basis of the estimated user's emotion. At this time, in a case where the estimated emotion is positive, the server 400 may reduce the distance between the plurality of virtual objects. Furthermore, in a case where the estimated emotion is negative, the server 400 may increase the distance between the plurality of virtual objects. By setting the distance between virtual objects in this manner, the user can, for example, stay away from the virtual object of a user the user finds unpleasant and approach the virtual object of a user with whom the user feels comfortable. Therefore, the information processing system according to the present embodiment can automatically set a distance comfortable for each user from the user's unconscious actions, such as the user's posture or facial expression during communication, and automatically adjust the distance to the other party.

Furthermore, the server 400 may present a mutually different virtual space picture to each user. For example, in a case where the user A is estimated to have a negative emotion toward the user B, the server 400 may display the virtual object of the user B at a more distant position in the picture of the virtual space provided to the display device 100 of the user A. In contrast, the server 400 need not alter the distance to the virtual object of the user A in the picture of the virtual space provided to the display device 100 of the user B. By setting the distance between the virtual objects in this manner, for example, the user A can stay away from the virtual object of an unpleasant user while preventing the unpleasant feeling of the user A about the user B from being recognized by the user B. Accordingly, the information processing system of the present embodiment can adjust the position of the virtual object to a position enabling the user to easily perform communication without giving an unpleasant feeling to the other party. The setting of virtual objects using dynamic parameters will be described later with reference to FIGS. 8 to 20.

2. CONFIGURATION OF DEVICES CONSTITUTING INFORMATION PROCESSING SYSTEM

Hereinabove, the overview of the information processing system according to the embodiment of the present disclosure has been described. Hereinafter, the configuration of devices constituting the information processing system according to an embodiment of the present disclosure will be described.

2-1. Configuration of Display Device and State Detection Device

FIG. 2 is a diagram illustrating an example of a configuration of the display device 100 and the state detection device 200 of the present embodiment. First, a configuration of the display device 100 will be described. The display device 100 according to the present embodiment includes, for example, a processing unit 102, a communication unit 104, a display unit 106, an imaging unit 108, and a sensor 110. Furthermore, the processing unit 102 includes a facial expression detection unit 112 and a line-of-sight detection unit 114. Alternatively, the display device 100 according to the present embodiment may be a head mounted display mounted on the head of the user.

The processing unit 102 processes a signal from each component of the display device 100. For example, the processing unit 102 performs decode processing on a signal transmitted from the communication unit 104 and extracts data. Furthermore, the processing unit 102 processes image information to be transmitted to the display unit 106. Furthermore, the processing unit 102 may also process data obtained from the imaging unit 108 or the sensor 110.

The communication unit 104 is a communication unit that communicates with an external device (the state detection device 200 in FIG. 2) by near field communication, and may perform communication using, for example, a communication scheme (for example, Bluetooth (registered trademark)) defined by the IEEE 802 committee. Alternatively, the communication unit 104 may perform communication using a communication scheme such as Wi-Fi. Note that the above-described communication scheme is an example, and the communication scheme of the communication unit 104 is not limited to it.

The display unit 106 is used to display an image. For example, the display unit 106 displays a virtual space image based on data received from the server 400. The imaging unit 108 is used to capture the user's face. In the present embodiment, the imaging unit 108 is used particularly for imaging the eyes of the user.

The sensor 110 senses the movement of the display device 100. For example, the sensor 110 includes an acceleration sensor, a gyro sensor, a geomagnetic sensor, or the like. The acceleration sensor senses acceleration on the display device 100. The gyro sensor senses angular acceleration and angular velocity with respect to the display device 100. The geomagnetic sensor senses geomagnetism. The direction of the display device 100 is calculated on the basis of the sensed geomagnetism.
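The text does not state how the direction is derived from the sensed geomagnetism. As a minimal sketch only, one common approach computes a heading angle from the two horizontal components of the magnetic field. The function name `heading_degrees`, the axis convention (x pointing forward, device held level), and the omission of tilt compensation and magnetic declination are all assumptions for illustration, not part of the disclosure.

```python
import math

def heading_degrees(mag_x, mag_y):
    """Angle of the horizontal field vector in degrees, in [0, 360).

    Assumes the device is held level; mag_x and mag_y are the horizontal
    components reported by a (hypothetical) geomagnetic sensor.
    """
    return math.degrees(math.atan2(mag_y, mag_x)) % 360.0
```

A practical implementation would typically combine this with the acceleration sensor to correct for tilt before computing the angle.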

The facial expression detection unit 112 detects the user's facial expression on the basis of the image information obtained from the imaging unit 108. For example, the facial expression detection unit 112 may detect the user's facial expression by pattern matching. Specifically, the facial expression detection unit 112 may compare the shape or movement of human eyes in statistically classified predetermined facial expressions with the shape or movement of the user's eyes obtained from the imaging unit 108 to detect the user's facial expression.

The line-of-sight detection unit 114 detects the user's line-of-sight on the basis of the image information obtained from the imaging unit 108 and the data obtained from the sensor 110. Specifically, the line-of-sight detection unit 114 may detect the direction of the user's head on the basis of the data obtained from the sensor 110, may detect the movement of the user's eyeballs on the basis of the image information obtained from the imaging unit 108, and may thereby detect the user's line-of-sight. Furthermore, the line-of-sight detection unit 114 may detect a blink on the basis of the image information obtained from the imaging unit 108.

Hereinabove, the configuration of the display device 100 according to the embodiment of the present disclosure has been described. Next, a configuration of the state detection device 200 according to an embodiment of the present disclosure will be described.

The state detection device 200 of the present embodiment is used to obtain state information regarding the state of the user. State information includes information regarding the user's behavior and the user's biometric information. The state detection device 200 includes a processing unit 202, a first communication unit 204, a second communication unit 206, and an imaging unit 208, for example. Furthermore, the processing unit 202 further includes a physical condition detection unit 212.

The processing unit 202 processes a signal from each component of the state detection device 200. For example, the processing unit 202 may process the signal transmitted from the first communication unit 204. The processing unit 202 may also process data obtained from the imaging unit 208.

The first communication unit 204 is a communication unit that communicates with an external device (the display device 100 in FIG. 2) by near field communication, and may perform communication using, for example, a communication scheme (for example, Bluetooth (registered trademark)) defined by the IEEE 802 committee. Furthermore, the first communication unit 204 may perform communication using a communication scheme such as Wi-Fi. Note that the above-described communication scheme is an example, and the communication scheme of the first communication unit 204 is not limited to it.

The second communication unit 206 is a communication unit that communicates with an external device (the server 400 in the present embodiment) by wired or wireless communication, and may perform communication using a communication scheme compliant with Ethernet (registered trademark), for example.

The imaging unit 208 is used to capture the entire body of the user. Furthermore, the imaging unit 208 may sense infrared light. The microphone 210 obtains audio data from sounds around the state detection device 200.

The physical condition detection unit 212 determines the user's behavior and biometric information on the basis of the image information obtained from the imaging unit 208. For example, the physical condition detection unit 212 may detect the user's motion or posture by performing known image processing such as edge detection. For example, the physical condition detection unit 212 may detect states where the user is leaning forward, the user is crossing own arms, or the user is sweating. Furthermore, the physical condition detection unit 212 may detect the body temperature of the user on the basis of the infrared light data obtained from the imaging unit 208. Furthermore, the physical condition detection unit 212 may detect a state where the user is projecting voice on the basis of audio data obtained from the microphone 210. Furthermore, the physical condition detection unit 212 may obtain information regarding the user's heartbeat from a wearable terminal worn by the user.

Note that in FIG. 2, the display device 100 and the state detection device 200 are configured as two separate devices. Alternatively, the display device 100 and the state detection device 200 may be configured as one device. For example, the display device 100 and the state detection device 200 as illustrated in FIG. 2 may be configured by using a display device 100 installed apart from the user, such as a television having an imaging device and a microphone. In this case, the line-of-sight, facial expression, behavior, or biometric information of the user may be detected on the basis of data from the imaging device. Furthermore, the state where the user is projecting voice may be detected on the basis of the data from the microphone.

2-2. Configuration of Server

Hereinabove, configurations of the display device 100 and the state detection device 200 according to the embodiment of the present disclosure have been described. Hereinafter, a configuration of the server 400 according to an embodiment of the present disclosure will be described.

FIG. 3 is a diagram illustrating an example of a configuration of the server 400 capable of performing processing according to the information processing method of the present embodiment. The server 400 includes a processing unit 402, a communication unit 404, and a storage unit 406, for example. The processing unit 402 further includes an information acquisition unit 408, a setting unit 410, and an information generation unit 412.

The processing unit 402 processes a signal from each component of the server 400. For example, the processing unit 402 performs decode processing on a signal transmitted from the communication unit 404 and extracts data. The processing unit 402 also reads data from the storage unit 406 and processes the read-out data.

Furthermore, the processing unit 402 performs various types of processing on the virtual space. Note that the processing unit 402 may set a separate virtual space for the display device 100 of each user, and may present a mutually different virtual space picture on the display device 100 of each user on the basis of the arrangement of the virtual objects in the plurality of virtual spaces, or the like. That is, the position of the virtual object in the virtual space for the display device 100 of each user differs from one virtual space to another.

Furthermore, the processing unit 402 may perform processing on one virtual space, and may present a mutually different virtual space picture on the display device 100 of each user on the basis of the arrangement of virtual objects in the one virtual space, or the like. That is, the processing unit 402 may correct the arrangement of virtual objects in the one virtual space, and may generate image information for the display device 100 of each user. By performing processing on one virtual space in this manner, it is possible to reduce the processing load on the processing unit 402.

The communication unit 404 is a communication unit that communicates with an external device by wired or wireless communication, and may perform communication using a communication scheme compliant with Ethernet (registered trademark), for example. The storage unit 406 stores various types of data used by the processing unit 402.

The information acquisition unit 408 obtains dynamic parameters of the user, which will be described later, from the display device 100 or the state detection device 200. Furthermore, the information acquisition unit 408 obtains static parameters of the user, which will be described later, from the storage unit 406 or an application.

The setting unit 410 performs setting or alteration for the virtual space on the basis of the static parameter or the dynamic parameter obtained by the information acquisition unit 408. For example, the setting unit 410 may perform setting for the virtual object that corresponds to the user in the virtual space. Specifically, the setting unit 410 sets the arrangement of virtual objects. Furthermore, the setting unit 410 sets the distance between virtual objects.

The information generation unit 412 generates image information to be displayed on the display device 100 on the basis of the setting made by the setting unit 410. Note that the information generation unit 412 may generate image information of mutually different virtual spaces for the display device 100 of each user, as described above.

3. VIRTUAL OBJECT SETTING METHOD USING STATIC PARAMETER

Hereinabove, the configuration of devices constituting the information processing system according to an embodiment of the present disclosure has been described. Hereinafter, a virtual object setting method using a static parameter according to an embodiment of the present disclosure will be described.

In the present embodiment, setting for a virtual object in the virtual space is performed using a static parameter of the user. For example, in the information processing system according to the present embodiment, the position of the virtual object is set on the basis of the static parameter of the user. Note that the static parameter may be information preliminarily stored in the storage unit 406 or the like of the server 400, that is, information that is not altered during execution of the information processing of the present embodiment on the basis of information detected by the sensor 110, the imaging units 108 and 208, or the like.

Furthermore, the information processing system according to the present embodiment uses preliminarily set scenes in the virtual space, in a case where the setting for the virtual object described above is performed. For example, the scenes in the virtual space include a scene of a conference, a class, a concert, a play, a movie, an attraction, a game, or the like.

FIG. 4 is a diagram illustrating a relationship between the scenes in the virtual space and static parameters used to set the virtual object. For example, in a case where the scene is a conference, information regarding user attributes, such as basic information of the user, the department to which the user belongs, and the title of the user, is used to set the virtual object. Note that the basic information of the user may include the age, gender, nationality, language, and physical information of the user. Here, the physical information may include information regarding the height and weight of the user. Furthermore, information regarding the user's role in the conference (for example, a presenter, a chairperson, or a listener) and information regarding the frequency of participation in the conference may be used for setting of the virtual object.

Note that the role of the user may be set by the user or may be obtained from an application for schedule management. For example, in a case where the user is registered as a presenter in the conference on a schedule management application, information regarding the role may be obtained from the application. Furthermore, information regarding a scene (in this example, a conference) may also be obtained from the application in a similar manner. In this manner, with information obtained from the application, the user can more easily receive service using the virtual space without performing setting in the virtual space. This enables the user to further concentrate on the user's purpose.

Furthermore, in a case where the scene is a concert, user's basic information, information regarding user's role in the concert (for example, performer or audience) or regarding frequency of participation in the concert may be used for the setting of the virtual object. Furthermore, in a case where the scene is a class, user's basic information and information regarding the user's role in the class (for example, teacher or student) may be used for the setting of the virtual object.

FIG. 5 is a view illustrating a layout of preliminarily set virtual objects in a case where a conference is set as a scene. The positions represented by open circles in FIG. 5 indicate positions at which users' virtual objects can be arranged.

For example, a virtual object of a user being a presenter or a chairperson may be arranged at a position indicated by “A”. Furthermore, a virtual object of a user being a listener may be arranged at a position other than the position indicated by “A”.

Furthermore, among the users being listeners, a virtual object of the user having a high-rank title may be arranged at a position near the position indicated by “A”. Furthermore, among users being listeners, a virtual object of the user having high frequency of participation in a conference regarding a predetermined purpose (for example, a conference related to a predetermined project) may be arranged near a position indicated by “A”. Furthermore, virtual objects of users of the same gender may be arranged adjacent to each other. Furthermore, virtual objects of users belonging to a same department may be arranged adjacent to each other.
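The placement rules above can be sketched as a simple sort. This is an illustration under stated assumptions rather than the disclosed implementation: the `Participant` fields, the numeric `title_rank` scale, the tie-break order (title first, then participation frequency), and the seat labels are all hypothetical. The sketch assumes at most one presenter or chairperson, and it omits the adjacency rules for gender and department.

```python
from dataclasses import dataclass

@dataclass
class Participant:
    name: str
    role: str            # "presenter", "chairperson", or "listener"
    title_rank: int = 0  # assumed scale: higher means a more senior title
    attendance: int = 0  # assumed count of past conferences attended

def assign_seats(participants, listener_seats):
    """Return {name: seat}; listener_seats is ordered nearest-to-"A" first."""
    seats = {}
    listeners = []
    for p in participants:
        if p.role in ("presenter", "chairperson"):
            seats[p.name] = "A"  # the lead role takes position "A"
        else:
            listeners.append(p)
    # Senior titles first; frequent participants break ties.
    listeners.sort(key=lambda p: (-p.title_rank, -p.attendance))
    for p, seat in zip(listeners, listener_seats):
        seats[p.name] = seat
    return seats

people = [
    Participant("Sato", "listener", title_rank=1, attendance=5),
    Participant("Tanaka", "presenter"),
    Participant("Suzuki", "listener", title_rank=3, attendance=1),
    Participant("Ito", "listener", title_rank=1, attendance=9),
]
layout = assign_seats(people, ["near", "middle", "far"])
```

Here `layout` places Tanaka at "A", Suzuki nearest to "A" because of the higher title rank, and Ito ahead of Sato because of the higher participation count.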

FIG. 6 is a view illustrating a layout of virtual objects in a case where a concert is set as a scene. In FIG. 6, for example, the virtual object of the user being a performer may be arranged at the position indicated by “A”. Furthermore, the virtual object of a user being in the audience may be arranged at a position other than the position indicated by “A”.

Furthermore, among users who are audience, virtual objects of users having high frequency of participation in a particular performer's concert may be arranged at a position near the position indicated by “A”. Furthermore, virtual objects of users of the same gender may be arranged adjacent to each other.

As described above, the information processing system of the present disclosure sets the position of the virtual object corresponding to the user on the basis of the static parameter of the user. By arranging the virtual objects in this manner, the user can easily receive a service using a virtual space without a need to set the position of the virtual objects in the virtual space.

FIG. 7 is a flowchart illustrating information processing for setting virtual objects using the above-described static parameters. In S102, the information acquisition unit 408 obtains static parameters of the user from the storage unit 406 or an application.

Next, in S104, the setting unit 410 determines a scene in a virtual space. For example, the setting unit 410 may set a scene on the basis of registration information from the user, or may set a scene on the basis of information from an application.

In S106, the setting unit 410 arranges virtual objects on the basis of the static parameters obtained in S102 and the scene determined in S104. Subsequently, the information generation unit 412 generates, in S108, a display image for the display device 100 of each user on the basis of the arrangement of the virtual objects set in S106, or the like.
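The flow of S102 to S108 can be sketched as four small functions. All function names, the dictionary-based storage, and the seat labels are assumptions made for illustration; the lead roles per scene are taken from the examples in the text (presenter or chairperson, performer, teacher). The "display image" is reduced here to a per-viewer table of object positions.

```python
# Lead roles per scene, following the examples given in the description.
LEAD_ROLES = {
    "conference": {"presenter", "chairperson"},
    "concert": {"performer"},
    "class": {"teacher"},
}

def obtain_static_parameters(storage, user_ids):
    """S102: read each user's static parameters (here, from a dict)."""
    return {uid: storage[uid] for uid in user_ids}

def determine_scene(registration=None, app_info=None):
    """S104: use the user's registration, else information from an application."""
    scene = registration or app_info
    if scene is None:
        raise ValueError("no scene information available")
    return scene

def arrange_objects(static_params, scene):
    """S106: the lead role goes to "A"; the others get numbered positions."""
    arrangement = {}
    next_seat = 1
    for uid, params in static_params.items():
        if params["role"] in LEAD_ROLES.get(scene, set()):
            arrangement[uid] = "A"
        else:
            arrangement[uid] = f"seat-{next_seat}"
            next_seat += 1
    return arrangement

def generate_display_info(arrangement):
    """S108: for each viewer, list where the other users' objects appear."""
    return {
        viewer: {uid: pos for uid, pos in arrangement.items() if uid != viewer}
        for viewer in arrangement
    }

storage = {
    "alice": {"role": "presenter"},
    "bob": {"role": "listener"},
    "carol": {"role": "listener"},
}
params = obtain_static_parameters(storage, ["alice", "bob", "carol"])
scene = determine_scene(app_info="conference")
arrangement = arrange_objects(params, scene)
views = generate_display_info(arrangement)
```

Keeping the four steps as separate functions mirrors the division of work between the information acquisition unit 408, the setting unit 410, and the information generation unit 412.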

4. VIRTUAL OBJECT SETTING METHOD USING DYNAMIC PARAMETER

Hereinabove, a virtual object setting method using a static parameter according to an embodiment of the present disclosure has been described. Hereinafter, a virtual object setting method using a dynamic parameter according to an embodiment of the present disclosure will be described.

In the present embodiment, setting for a virtual object in the virtual space is performed using a dynamic parameter of the user. For example, in the information processing system according to the present embodiment, the position of the virtual object is set on the basis of the dynamic parameter of the user. Specifically, in the information processing system according to the present embodiment, the distance between a plurality of virtual objects is set on the basis of the dynamic parameter of the user. Note that the dynamic parameter represents information that is sequentially updated during execution of the information processing of the present embodiment on the basis of information detected by the sensor 110, the imaging units 108 and 208, or the like.

FIG. 8 is a diagram illustrating relationships among dynamic parameters used for virtual object setting, user's emotions estimated using the dynamic parameters, and distances between the virtual objects to be set. As illustrated in FIG. 8, the dynamic parameters are categorized in association with the distances between virtual objects to be set or the estimated user's emotions.

In FIG. 8, dynamic parameters include information regarding the user's behavior and the user's biometric information. For example, the user's behavior may include behaviors of the user such as “straining eyes”, “leaning forward”, “projecting a loud voice”, “touching the hair”, “blinking”, “crossing own arms”, or “sweating”. Furthermore, the user's biometric information may include information regarding the user's “body temperature” and “heartbeat”.

In addition, dynamic parameters are used to estimate the user's emotions. According to FIG. 8, from user states such as straining eyes, leaning forward, or projecting a loud voice, the user is estimated to have a positive emotion. Furthermore, from user states such as touching the hair, blinking frequently, crossing own arms, or sweating, the user is estimated to have a negative emotion. Furthermore, in a case where there is an increase in the body temperature or the heart rate of the user, the user is estimated to have a negative emotion. Furthermore, in a case where none of the above user states is detected, the user's emotion is estimated to be neutral.
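The FIG. 8 relationships described above can be sketched as a lookup over detected cues. The cue identifiers and the function name `estimate_emotion` are hypothetical. The text does not say what happens when positive and negative cues are detected together, so the rule used here (the larger count wins, a tie gives neutral) is purely an assumption.

```python
# Cue names are illustrative labels for the states listed in the description.
POSITIVE_CUES = {"straining_eyes", "leaning_forward", "loud_voice"}
NEGATIVE_CUES = {
    "touching_hair", "frequent_blinking", "crossing_arms", "sweating",
    "body_temperature_rise", "heart_rate_rise",
}

def estimate_emotion(detected_cues):
    """Return "positive", "negative", or "neutral" for a collection of cues."""
    cues = set(detected_cues)
    positive = len(cues & POSITIVE_CUES)
    negative = len(cues & NEGATIVE_CUES)
    if positive > negative:
        return "positive"
    if negative > positive:
        return "negative"
    # No cue detected, or an even mix (assumed tie rule): treat as neutral.
    return "neutral"
```

For example, `estimate_emotion(["leaning_forward"])` gives `"positive"`, and an empty collection gives `"neutral"`, matching the case where none of the listed states is detected.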

Note that the emotion estimated from the dynamic parameter described above is a non-limiting example. Furthermore, the relationship between the dynamic parameter described above and the estimated emotion is a non-limiting example. For example, the user's emotion may be estimated to be positive in a case where the user's body temperature has increased.

Additionally, in a case where the estimated user's emotion is positive, the distance between virtual objects is to be reduced. Furthermore, in a case where the estimated user's emotion is negative, the distance between virtual objects is to be increased. Furthermore, in a case where the estimated user's emotion is neutral, the distance between virtual objects is not to be altered. In addition, the degree of changing the distance may be uniform, or may be variable in accordance with a detected dynamic parameter. For example, the degree of changing the distance may be varied in accordance with the degree of increase in the user's heart rate.
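The distance rule above can be sketched in a few lines. The function name, the default `step`, the `intensity` multiplier (standing in for a variable degree of change, for example one scaled by the increase in heart rate), and the `min_distance` floor are assumptions for illustration; the text specifies only the direction of the change for each emotion.

```python
def adjust_distance(distance, emotion, step=0.5, intensity=1.0, min_distance=0.5):
    """Return the new distance between two virtual objects.

    positive -> closer, negative -> farther, neutral -> unchanged.
    The floor min_distance is an assumed safeguard, not from the text.
    """
    if emotion == "positive":
        return max(min_distance, distance - step * intensity)
    if emotion == "negative":
        return distance + step * intensity
    return distance
```

With the defaults, `adjust_distance(2.0, "positive")` returns `1.5`, `adjust_distance(2.0, "negative")` returns `2.5`, and a neutral estimate leaves the distance at `2.0`.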

FIGS. 9 to 15 are views illustrating changes in distance between virtual objects in the information processing system of the present embodiment. Note that in the present embodiment, a mutually different virtual space picture is presented on the display device 100 of each user. As described above, an individual virtual space may be set for the display device 100 of each user, and a mutually different virtual space picture may be presented on the display device 100 of each user on the basis of the arrangement of the virtual objects in the plurality of virtual spaces, or the like. Furthermore, a mutually different virtual space picture may be presented on the display device 100 of each user on the basis of the arrangement of virtual objects in one virtual space shared by the display devices 100 of a plurality of users, or the like.

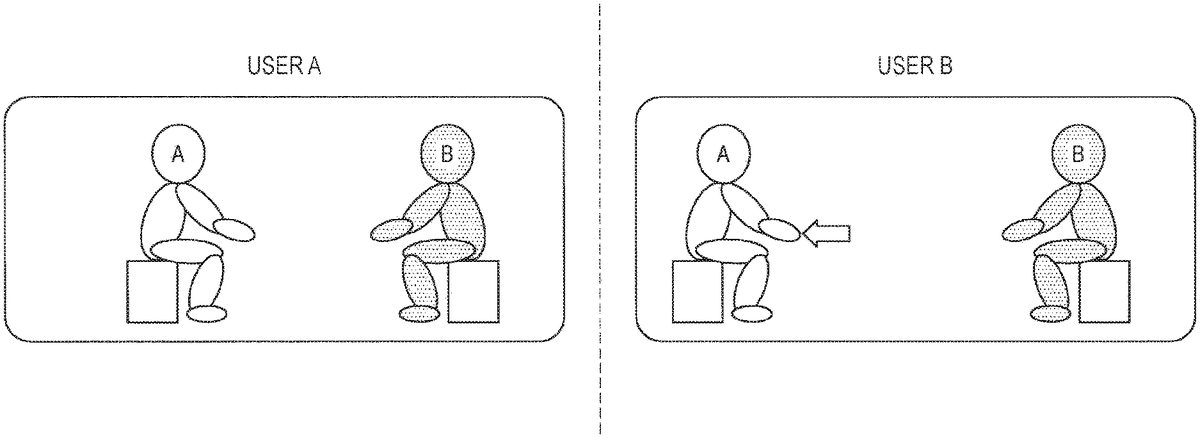

Hereinafter, for the sake of simplicity, an example of setting a virtual space for the display device 100 of each user will be described. Therefore, in FIGS. 9 to 15, the view labeled “User A” represents a virtual space for the display device 100 of a user A, while the view labeled “User B” represents a virtual space for the display device 100 of a user B.

FIG. 9 is a view illustrating an initial position of a virtual object set on the basis of the above-described static parameter. As illustrated in FIG. 9, initially, the distance between virtual objects in the virtual space for the display device 100 of the user A is equal to the distance between virtual objects in the virtual space for the display device 100 of the user B.

FIG. 10 is a view illustrating alteration in distance between virtual objects in a case where a positive emotion of the user is estimated. For example, in a case where the user B leans forward, the user B is estimated to have a positive emotion toward the user A. Accordingly, the distance between virtual objects in the virtual space for the display device 100 of the user B is reduced. That is, in the virtual space for the display device 100 of the user B, the virtual object corresponding to the user A approaches the virtual object corresponding to the user B. Note that in this case, the display device 100 of the user B sets a display mode of the virtual object of the user A such that the virtual object of the user A is arranged at a position close to the user B. That is, the display device 100 of the user B sets a display mode of the virtual object of the user A such that the virtual object of the user A is displayed in a large size.

In contrast, even in a case where the user B is estimated to have a positive emotion toward the user A, the distance between virtual objects in the virtual space for the display device 100 of the user A would not be altered.

FIG. 11 is a view illustrating alteration in distance between virtual objects in a case where a negative emotion of the user is estimated. For example, in a case where the user B crosses his or her arms, the user B is estimated to have a negative emotion toward the user A. Accordingly, the distance between virtual objects in the virtual space for the display device 100 of the user B is increased. That is, in the virtual space for the display device 100 of the user B, the virtual object corresponding to the user A moves away from the virtual object corresponding to the user B. Note that in this case, the display device 100 of the user B sets a display mode of the virtual object of the user A such that the virtual object of the user A is arranged at a position distant from the user B. That is, the display device 100 of the user B sets a display mode of the virtual object of the user A such that the virtual object of the user A is displayed in a small size.

In contrast, even in a case where the user B is estimated to have a negative emotion toward the user A, the distance between virtual objects in the virtual space for the display device 100 of the user A would not be altered. As described above, since mutually different processing is performed in the virtual space for the display device 100 of each user, the user A cannot recognize the emotion the user B has toward the user A. In particular, in a case where the user B has a negative emotion toward the user A, the above-described processing would be effective because the fact that the user B has a negative emotion toward the user A would not be recognized by the user A.

Hereinabove, the basic setting of virtual objects in the present embodiment has been described. Hereinafter, an example in which a personal space set for the virtual object prohibits entrance of another virtual object will be described. Note that the personal space indicates a region prohibiting entrance of other virtual objects and thus may be referred to as a non-interference region. In FIGS. 12 to 15, the personal space is illustrated using dotted lines.

FIG. 12 is a view illustrating an example in which a personal space is set for a virtual object corresponding to the user B. Furthermore, FIG. 12 is a view illustrating alteration in distance between virtual objects in a case where a positive emotion of the user is estimated. As illustrated in FIG. 12, in the virtual space for the display device 100 of the user B, the virtual object corresponding to the user A approaches the virtual object corresponding to the user B.

As the virtual object corresponding to the user A approaches the virtual object corresponding to the user B, the virtual object corresponding to the user A comes in contact with a part of the personal space as indicated by a point P. At this time, the virtual object corresponding to the user A cannot come closer to the virtual object corresponding to the user B.

In this manner, setting the non-interference region would make it possible to prevent the virtual object corresponding to the user A from coming too close to the virtual object corresponding to the user B. This would enable the user B to receive the service in the virtual space without feeling a sense of oppression.

FIG. 13 is a view illustrating an example in which a personal space is set also for a virtual object corresponding to the user A. Furthermore, FIG. 13 is a view illustrating alteration in distance between virtual objects in a case where a positive emotion of the user is estimated. As illustrated in FIG. 13, in the virtual space for the display device 100 of the user B, the virtual object corresponding to the user A approaches the virtual object corresponding to the user B.

As the virtual object corresponding to the user A approaches the virtual object corresponding to the user B, the personal space set for the virtual object corresponding to the user A comes in contact with a part of the personal space set for the virtual object corresponding to the user B, as indicated by a point P. At this time, the virtual object corresponding to the user A would not come closer to the virtual object corresponding to the user B.

In this manner, setting the non-interference region would make it possible to prevent the virtual object corresponding to the user A from coming too close to the virtual object corresponding to the user B. This would enable the user A to receive the service in the virtual space without causing the virtual object corresponding to the user A to inadvertently approach the virtual object corresponding to the user B.

Note that the processing for the personal space described above is an example. Accordingly, in a case where the personal space is set for both virtual objects of the user A and the user B as illustrated in FIG. 14, the personal space set for the virtual object of the user B may be prioritized.

That is, as indicated by a point P in FIG. 14, the distance between virtual objects can be set small until the virtual object corresponding to the user A comes in contact with the personal space set for the virtual object of the user B.

Furthermore, in a case where the personal space is set for both virtual objects of the user A and the user B as illustrated in FIG. 15, the personal space set for the virtual object of the user A may be prioritized.

That is, as indicated by a point P in FIG. 15, the distance between virtual objects can be set small until the virtual object corresponding to the user B comes in contact with the personal space set for the virtual object of the user A.
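The personal-space handling of FIGS. 13 to 15 can be illustrated with a minimal sketch. It assumes, purely for illustration, that each virtual object is a point and that each personal space is a circle of a given radius centered on its object; the policy names and function names are hypothetical and do not appear in the present disclosure.

```python
def minimum_distance(radius_a, radius_b, policy="no_overlap"):
    """Smallest allowed distance between the virtual objects of users A and B.

    Assumed policies:
      "no_overlap"   - the two personal spaces may touch but not overlap
                       (corresponds to the behavior described for FIG. 13)
      "prioritize_b" - only the personal space of B limits the approach (FIG. 14)
      "prioritize_a" - only the personal space of A limits the approach (FIG. 15)
    """
    if policy == "no_overlap":
        return radius_a + radius_b
    if policy == "prioritize_b":
        return radius_b
    if policy == "prioritize_a":
        return radius_a
    raise ValueError(f"unknown policy: {policy}")


def clamp_distance(requested, radius_a, radius_b, policy="no_overlap"):
    """Return the requested distance, enlarged if it would cause interference."""
    return max(requested, minimum_distance(radius_a, radius_b, policy))
```

A case in which a personal space is set only for the user B, as in FIG. 12, can be expressed in this sketch by passing `radius_a=0.0`.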

Furthermore, the size of the personal space may be set on the basis of the static parameters described above. For example, the size of the personal space may be set in accordance with the height of the user. Specifically, in a case where the height of the user is large, the size of the personal space of the user may be set large. Furthermore, the size of the personal space may be set in accordance with the title of the user. Specifically, in a case of a user having a high-rank title, the size of the personal space of the user may be set large.

FIG. 16 is a flowchart illustrating information processing for setting virtual objects using the above-described dynamic parameters. In S202, the information acquisition unit 408 obtains a dynamic parameter of a user from the display device 100 or the state detection device 200.

Next, in S204, the setting unit 410 estimates the user's emotion on the basis of the obtained dynamic parameter. Subsequently, the setting unit 410 sets, in S206, a distance between virtual objects on the basis of the emotion estimated in S204.

In S208, the setting unit 410 determines whether or not there is interference in the personal space set in the virtual object at the distance set in S206. In a case where there is interference in S208, the setting unit 410 re-sets, in S210, the distance between virtual objects so as to cause no interference. In a case where there is no interference in S208, processing proceeds to S212.

In S212, the information generation unit 412 generates a display image for the display device 100 of each user on the basis of the distance between virtual objects set in S206 or S210.
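The flow of S202 to S212 can be summarized in a short sketch. The function names, the `toy_estimator` stand-in, the `step` value, and the representation of the personal space as a single minimum distance are all assumptions made for illustration; generation of the display image in S212 is represented only by the returned values.

```python
def toy_estimator(states):
    """Hypothetical stand-in for S204; any function returning a label works."""
    if "leaning_forward" in states:
        return "positive"
    if "crossing_arms" in states:
        return "negative"
    return "neutral"


def run_setting_flow(states, distance, min_distance, step=0.5,
                     estimator=toy_estimator):
    # S202: `states` stands for the dynamic parameters already obtained.
    emotion = estimator(states)              # S204: estimate the emotion
    if emotion == "positive":                # S206: set the distance
        distance -= step
    elif emotion == "negative":
        distance += step
    interferes = distance < min_distance     # S208: check for interference
    if interferes:
        distance = min_distance              # S210: re-set the distance
    # S212: the returned distance would be used to generate the display image.
    return {"emotion": emotion, "distance": distance, "reset": interferes}
```

In this sketch, a positive emotion that would bring the objects closer than `min_distance` results in the distance being re-set to `min_distance`, mirroring the branch from S208 to S210.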

Note that in the example of the information processing described above, the setting unit 410 estimates the user's emotion on the basis of the category of the dynamic parameter. However, the setting unit 410 need not estimate the user's emotion. That is, the category of the dynamic parameter and the setting of the distance between virtual objects may be directly associated with each other. Specifically, in a case where the user strains his or her eyes, the distance between virtual objects may be reduced. Furthermore, in a case where the body temperature of the user has risen, the distance between virtual objects may be increased.

Note that information processing of the present embodiment is also applied to setting of virtual objects for a plurality of persons. FIG. 17 is a diagram illustrating a status of the virtual space for the display device 100 of each user in a case where three people use the system of the present embodiment.

In FIG. 17, virtual objects corresponding to user A, user B, and user C are arranged on a triangle. Note that arrows in FIG. 17 indicate directions of virtual objects corresponding to user A, user B, and user C.

FIG. 18 is a view illustrating a status of the virtual space for the display device 100 of each user in a case where the distance between the virtual objects illustrated in FIG. 17 has been re-set on the basis of the dynamic parameter. According to FIG. 18, user A has a positive emotion toward user B, user B has a positive emotion toward user C, and user C has a positive emotion toward user A. In this case, the virtual objects may move along sides of an equilateral triangle (or a regular polygon in the case of three or more users) so that the virtual objects corresponding to individual users do not overlap with each other.

Note that, as illustrated in FIG. 18, in a case where the user performs a motion such as turning the head after the distance between virtual objects has been altered, the face of the virtual object might not be oriented in the correct direction in some cases. Therefore, the present embodiment performs picture correction for user motions such as turning the head.

For example, in a case where the user B turns his or her face toward the user A in the virtual space for the display device 100 of the user B illustrated in FIG. 18, the virtual object of the user B turns its head by 60°. However, in the virtual space for the display device 100 of the user A illustrated in FIG. 18, the virtual object of the user B would not face the user A even if the user B turns the head by 60°. This is because the positional relationship between the virtual object of the user A and the virtual object of the user B is different between the virtual space for the display device 100 of the user A and the virtual space for the display device 100 of the user B.

Therefore, in the present embodiment, in a case where the user B turns the head by 60°, the setting unit 410 estimates that the user B wishes to direct his or her face toward the user A, and then the information generation unit 412 performs, in the virtual space for the display device 100 of the user A, picture processing to make the head of the virtual object of the user B appear to be turned by 90°. This processing enables natural display of the virtual space on the display device 100 of each user.
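One way to picture this correction is the following sketch, which is an assumption-laden illustration rather than the method of the embodiment. It assumes 2D positions, head angles measured counterclockwise in degrees from the forward direction of the virtual object, and a hypothetical `tolerance` for deciding that the user intends to face a particular target; the dictionary layout and function names are likewise hypothetical.

```python
import math


def yaw_to_target(position, forward_deg, target):
    """Signed head angle (degrees) needed to face `target` from `position`."""
    dx, dy = target[0] - position[0], target[1] - position[1]
    bearing = math.degrees(math.atan2(dy, dx))
    # Normalize the difference into the range [-180, 180).
    return (bearing - forward_deg + 180.0) % 360.0 - 180.0


def corrected_yaw(measured_yaw, own_space, viewer_space, tolerance=10.0):
    """Map a measured head angle into another user's virtual space.

    Each space is a dict with "position", "forward_deg", and "targets"
    (a mapping from user name to position). If the measured angle points at
    a target in the user's own space, return the angle that points at the
    same target in the viewer's space; otherwise return it unchanged.
    """
    for name, target in own_space["targets"].items():
        expected = yaw_to_target(own_space["position"],
                                 own_space["forward_deg"], target)
        if abs(measured_yaw - expected) <= tolerance:
            return yaw_to_target(viewer_space["position"],
                                 viewer_space["forward_deg"],
                                 viewer_space["targets"][name])
    return measured_yaw
```

With a layout in which the user A lies 60° off the forward direction of the user B in the space of the user B, and 90° off in the space of the user A, a measured head turn of 60° is rendered as roughly 90° in the space of the user A.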

5. GROUPING VIRTUAL OBJECTS USING DYNAMIC PARAMETER

Hereinabove, a virtual object setting method using a dynamic parameter according to an embodiment of the present disclosure has been described. Hereinafter, virtual object grouping using a dynamic parameter according to an embodiment of the present disclosure will be described.

FIG. 19 is a diagram illustrating an example of grouping virtual objects using dynamic parameters. In FIG. 19, positions where virtual objects corresponding to audience users are arranged are grouped into three groups in a concert scene. In the present embodiment, the virtual object of each user is classified into one of three groups on the basis of the dynamic parameter. For example, the three groups may be a group of users who silently listen to the concert, a group of users who wish to sing, and a group of users who wish to dance.

Additionally, the virtual objects of the group of users who silently listen to the concert may be arranged at a position indicated by “3” in FIG. 19. Furthermore, the virtual objects of the group of users who wish to sing may be arranged at a position indicated by “1” in FIG. 19. Furthermore, the virtual objects of the group of users who wish to dance may be arranged at a position indicated by “2” in FIG. 19.

FIG. 20 is a diagram illustrating a relationship between classification groups and dynamic parameters. For example, in a case where the user is sitting or not projecting voice, the user may be classified into the group of users who silently listen to the concert. Furthermore, in a case where the user is projecting voice, the user may be classified into the group of users who wish to sing. Furthermore, in a case where the user is standing or moving the body, the user may be classified into the group of users who wish to dance.
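The classification can be sketched as a simple rule set. The field names, the group labels, and in particular the order in which the rules are checked (dancing first, then singing, then listening) are assumptions introduced here, since the description does not state how a user who, for example, is standing without projecting voice should be resolved.

```python
from dataclasses import dataclass


@dataclass
class DynamicState:
    """Hypothetical summary of the dynamic parameters relevant to grouping."""
    standing: bool = False
    moving: bool = False
    vocalizing: bool = False


# Positions follow the labels "1", "2", and "3" mentioned for FIG. 19.
GROUP_POSITION = {"sing": "1", "dance": "2", "listen": "3"}


def classify(state):
    """Classify a user into one of the three concert groups.

    Assumed precedence: dance > sing > listen.
    """
    if state.standing or state.moving:
        return "dance"
    if state.vocalizing:
        return "sing"
    return "listen"


def position_for(state):
    """Return the position label for the group the user is classified into."""
    return GROUP_POSITION[classify(state)]
```

Because `classify` depends only on the current state, calling it again whenever the detected dynamic parameters change naturally moves a user between groups.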

Note that the classification of the group described above is an example of a concert scene, and the classification of a group is not limited to it. For example, in the case of a conference scene, the groups may be classified into a group of speaking users and a group of users who take notes.

Note that the above-described group may be altered in accordance with a change of the dynamic parameter detected. For example, in a case where the sitting user stands up, the group may be altered from a silently listening group to a dancing group.

By grouping users in accordance with dynamic parameters in this manner, it is possible to more accurately achieve communication among users having greater similarity. In particular, in the virtual space of a concert, it is possible to avoid disturbance by a user having a different disposition, such as a case where a silently listening user is disturbed by a dancing user.

6. HARDWARE CONFIGURATION OF DEVICE

Hereinafter, a hardware configuration of the server 400 according to an embodiment of the present disclosure will be described in detail with reference to FIG. 21. FIG. 21 is a block diagram illustrating a hardware configuration of the server 400 according to an embodiment of the present disclosure.

The server 400 mainly includes a CPU 901, a ROM 903, and a RAM 905. The server 400 further includes a host bus 907, a bridge 909, an external bus 911, an interface 913, an input device 915, an output device 917, a storage device 919, a drive 921, a connection port 923, and a communication device 925.

The CPU 901 functions as a central processing unit and control unit, and controls all or part of operation in the server 400 in accordance with various programs recorded in the ROM 903, the RAM 905, the storage device 919, or a removable recording medium 927. Note that the CPU 901 may include the function of the processing unit 402. The ROM 903 stores programs, calculation parameters, or the like, used by the CPU 901. The RAM 905 temporarily stores programs used by the CPU 901 or parameters appropriately changing in execution of programs, or the like. These are mutually connected by the host bus 907 including an internal bus such as a CPU bus.

The input device 915 is an operation means operated by a user, such as a mouse, a keyboard, a touch panel, buttons, a switch, or a lever. Furthermore, the input device 915 includes, for example, an input control circuit that generates an input signal on the basis of information input by the user using the above-described operation means and that outputs the generated input signal to the CPU 901. By operating the input device 915, the user can input various types of data to the server 400 or instruct the server 400 to perform processing operations.

The output device 917 includes a device that can visually or audibly notify the user of obtained information. Examples of such devices include display devices such as CRT display devices, liquid crystal display devices, plasma display devices, EL display devices and lamps, audio output devices such as speakers and headphones, printer devices, mobile phones, facsimiles, or the like. The output device 917 outputs results obtained by various types of processing performed by the server 400, for example. Specifically, the display device displays the result obtained by the various types of processing performed by the server 400 as text or an image. Meanwhile, the audio output device converts an audio signal including reproduced audio data, sound data or the like into an analog signal and outputs the converted signal.

The storage device 919 is a data storage device configured as an example of the storage unit 406 of the server 400. The storage device 919 includes a magnetic storage device such as a hard disk drive (HDD), a semiconductor storage device, an optical storage device, a magneto-optical storage device, or the like. The storage device 919 stores programs to be executed by the CPU 901, various types of data, various types of data obtained from the outside, or the like.

The drive 921 is a reader/writer for a recording medium, built in or externally attached to the server 400. The drive 921 reads out information recorded on a removable recording medium 927 such as a mounted magnetic disk, optical disk, magneto-optical disk, semiconductor memory, or the like, and outputs the read-out information to the RAM 905. Furthermore, the drive 921 can also write a recording onto a removable recording medium 927 such as a mounted magnetic disk, optical disk, magneto-optical disk, semiconductor memory, or the like. Examples of the removable recording medium 927 include a DVD medium, an HD-DVD medium, and a Blu-ray (registered trademark) medium. Furthermore, examples of the removable recording medium 927 may be a compact flash (CF) (registered trademark), a flash memory, a secure digital (SD) memory card, or the like. Furthermore, the removable recording medium 927 may be, for example, an integrated circuit card (IC card) on which a non-contact IC chip is mounted, an electronic device, or the like.

The connection port 923 is a port for directly connecting a device to the server 400. Examples of the connection port 923 can be a universal serial bus (USB) port, an IEEE 1394 port, a small computer system interface (SCSI) port, or the like. Other examples of the connection port 923 may be an RS-232C port, an optical audio terminal, a high-definition multimedia interface (HDMI) (registered trademark) port, or the like. By connecting an external connection device 929 to the connection port 923, the server 400 obtains various types of data directly from the external connection device 929, and provides various types of data to the external connection device 929.

An example of the communication device 925 is a communication interface including communication devices, or the like for connecting to a communication network 931. Examples of the communication device 925 include a communication card for a wired or wireless local area network (LAN) or wireless USB (WUSB), or the like. Furthermore, the communication device 925 may be a router for optical communication, a router for asymmetric digital subscriber line (ADSL), a modem for various types of communication, or the like. The communication device 925 can transfer signals or the like through the Internet or with other communication devices in accordance with a predetermined protocol such as TCP/IP, for example. Furthermore, the communication network 931 connected to the communication device 925 may include a wired or wireless network, or the like and may be, for example, the Internet, home LAN, infrared communication, radio wave communication, satellite communication, or the like.

7. SUPPLEMENTARY MATTER

Hereinabove, the preferred embodiments of the present disclosure have been described with reference to the accompanying drawings, while the technical scope of the present disclosure is not limited to the above examples. A person skilled in the art in the technical field of the present disclosure may conceive of various alterations and modifications within the technical scope of the appended claims, and it should be understood that they will naturally come within the technical scope of the present disclosure.

For example, in the above-described example, the server 400 performs control or processing of the virtual space and virtual objects. However, the information processing system of the present embodiment may be configured without including the server 400. For example, the information processing performed by the information processing system of the present embodiment may be performed by the plurality of display devices 100 and the state detection device 200 operating in cooperation. At this time, one of the plurality of display devices 100 and the state detection device 200 may perform control or processing performed by the server 400 in the present embodiment, instead of the server 400. Furthermore, the plurality of display devices 100 and the state detection devices 200 may perform, in a distributed manner, the control or processing performed by the server 400 in the present embodiment.

Furthermore, the above-described example is an exemplary case in which the distance between virtual objects is altered. However, examples of altering the display mode of the virtual object are not limited to it. For example, in a case where the user B is determined to have a negative emotion, the virtual object corresponding to the user A may be replaced with a virtual object of an animal, for example. Furthermore, the virtual object corresponding to user A may be partially deformed. For example, the deformation may be performed to enlarge the eyes of the virtual object corresponding to the user A.

Furthermore, in the example using FIG. 10, in a case where the user B is estimated to have a positive emotion toward the user A, the virtual object corresponding to the user A approaches the virtual object corresponding to the user B in the virtual space for the display device 100 of the user B. That is, the position of the virtual object of the user A has been altered in the virtual space corresponding to the display device 100 of the user B on the basis of the change of the dynamic parameter of the user B. Alternatively, however, the position of the virtual object of the user A may be altered in the virtual space corresponding to the display device 100 of the user B on the basis of the change of the dynamic parameter of the user A. That is, in a case where the user A is estimated to have a positive emotion toward the user B, the virtual object corresponding to the user A may approach the virtual object corresponding to the user B in the virtual space for the display device 100 of the user B. Note that such control may be performed in a case where the user A has a positive emotion toward the user B, and need not be performed in a case where the user A has a negative emotion toward the user B.

Furthermore, a computer program may be provided for causing the processing unit 102 of the display device 100, the processing unit 202 of the state detection device 200, and the processing unit 402 of the server 400 to perform the operations as described above. Furthermore, a storage medium that stores these programs may be provided.

8. CONCLUSION

As described above, the information processing system of the present disclosure sets the position of the virtual object corresponding to each of users on the basis of the static parameter of each of the users. By arranging the virtual objects in this manner, the user can easily receive a service using a virtual space without a need to set the position of the virtual objects in the virtual space. This enables the user to further concentrate on the user's purpose.

Furthermore, the information processing system according to the present disclosure sets the position of the virtual object corresponding to each user on the basis of a user's dynamic parameter. In this manner, by setting the distance between virtual objects, the user can stay away from a virtual object of an unpleasant user and can approach a virtual object of a user with whom the user feels comfortable. Therefore, the information processing system according to the present embodiment can automatically set a distance comfortable for each user from the user's unconscious action, such as the user's posture or facial expression during communication, and automatically adjust a distance to the other party.

Furthermore, a virtual space picture mutually different for each user may be presented in the information processing system of the present disclosure. In this manner, by controlling the virtual space, the user A can, for example, stay away from a virtual object of an unpleasant user while preventing the unpleasant feeling the user A has about the user B from being recognized by the user B. Accordingly, the information processing system of the present embodiment can adjust the position of the virtual object to a position enabling the user to easily perform communication without giving an unpleasant feeling to the other party.

Note that the following configuration should also be within the technical scope of the present disclosure.

(1)

An information processing apparatus including:

an information acquisition unit that obtains first state information regarding a state of a first user; and

a setting unit that sets, on the basis of the first state information, a display mode of a second virtual object in a virtual space in which a first virtual object corresponding to the first user and the second virtual object corresponding to a second user are arranged.

(2)

The information processing apparatus according to (1), in which the setting of the display mode includes setting of a distance between the first virtual object and the second virtual object.

(3)

The information processing apparatus according to (2), in which the first state information is classified into a plurality of categories,

the setting unit determines the category of the first state information,

reduces the distance between the first virtual object and the second virtual object in a case where the first user is determined to be positive as a result of the determination, and

increases the distance between the first virtual object and the second virtual object in a case where the first user is determined to be negative as a result of the determination.

(4)

The information processing apparatus according to any one of (1) to (3), in which the setting unit sets a display mode of the second virtual object in the virtual space for a first device of the first user and a display mode of the first virtual object in the virtual space for a second device of the second user such that the display modes differ from each other.

(5)

The information processing apparatus according to (4),

in which the setting unit alters the display mode of the second virtual object in the virtual space for the first device on the basis of the first state information, and

performs no alteration of the display mode of the first virtual object in the virtual space for the second device based on the first state information.

(6)

The information processing apparatus according to (4) or (5), in which the virtual space for the first device and the virtual space for the second device are a same virtual space.

(7)

The information processing apparatus according to any one of (2) to (6), in which the setting unit sets the distance between the first virtual object and the second virtual object on the basis of a non-interference region for the first virtual object or a non-interference region for the second virtual object.

(8)

The information processing apparatus according to (7),

in which the non-interference region for the first virtual object is set on the basis of information regarding an attribute of the first user, and

the non-interference region for the second virtual object is set on the basis of information regarding an attribute of the second user.

(9)

The information processing apparatus according to (7), in which the setting unit sets the distance between the first virtual object and the second virtual object so as not to allow entrance of the second virtual object into the non-interference region for the first virtual object.

(10)

The information processing apparatus according to (7), in which the setting unit sets the distance between the first virtual object and the second virtual object so as not to allow entrance of the first virtual object into the non-interference region for the second virtual object.

(11)

The information processing apparatus according to (7), in which the setting unit sets the distance between the first virtual object and the second virtual object so as not to allow overlapping of the non-interference region for the first virtual object with the non-interference region for the second virtual object.

(12)

The information processing apparatus according to any one of (1) to (11),

in which the information acquisition unit obtains second state information regarding a state of the second user, and

the setting unit sets the display mode of the second virtual object on the basis of the second state information.

(13)

The information processing apparatus according to (12),

in which the first state information is information regarding behavior or biometric information of the first user, and

the second state information is information regarding behavior or biometric information of the second user.

(14)

The information processing apparatus according to (13),

in which the information regarding the behavior includes any one of pieces of information regarding user's facial expression, blinks, posture, vocalization, and a line-of-sight direction, and

the biometric information includes any one of pieces of information regarding user's heart rate, body temperature, and perspiration.

(15)

The information processing apparatus according to any one of (1) to (14), in which the setting unit sets the first virtual object to one of a plurality of groups in the virtual space on the basis of the first state information.

(16)

The information processing apparatus according to any one of (1) to (15), in which the setting unit sets a position of the first virtual object in the virtual space on the basis of a static parameter of the first user.

(17)

The information processing apparatus according to (16), in which the static parameter includes a user attribute of the first user or a role of the first user in the virtual space.

(18)

The information processing apparatus according to (16) or (17),

in which the virtual space includes a plurality of scenes, and

the setting unit sets the position of the first virtual object in accordance with one of the plurality of scenes set as the virtual space.

(19)

An information processing method including:

obtaining first state information regarding a state of a first user; and

setting, by a processor, on the basis of the first state information, a display mode of a second virtual object in a virtual space in which a first virtual object corresponding to the first user and the second virtual object corresponding to a second user are arranged.

(20)

A program for causing a processor to execute:

obtaining first state information regarding a state of a first user; and

setting, on the basis of the first state information, a display mode of a second virtual object in a virtual space in which a first virtual object corresponding to the first user and the second virtual object corresponding to a second user are arranged.
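As a non-authoritative illustration of configurations (15) to (18), the sketch below sets an initial position from a static parameter (here, a role) and the selected scene, then assigns the virtual object to a group from a state label. The scene names, roles, coordinates, group names, and the helper names `set_position` and `assign_group` are all assumptions made for this example; the disclosure does not prescribe them.

```python
from dataclasses import dataclass

# Hypothetical layout table: (scene, role) -> (x, y) position in the virtual space.
SCENE_LAYOUT = {
    ("conference", "presenter"): (0.0, 5.0),
    ("conference", "audience"): (0.0, 0.0),
    ("concert", "performer"): (0.0, 10.0),
    ("concert", "audience"): (0.0, -2.0),
}


@dataclass
class VirtualObject:
    user_id: str
    role: str                      # static parameter (assumed: role in the virtual space)
    position: tuple = (0.0, 0.0)
    group: str = "ungrouped"


def set_position(obj: VirtualObject, scene: str) -> VirtualObject:
    """Set the position from the static parameter and the selected scene."""
    obj.position = SCENE_LAYOUT[(scene, obj.role)]
    return obj


def assign_group(obj: VirtualObject, state: str) -> VirtualObject:
    """Assign the object to one of several groups from a state label (assumed labels)."""
    obj.group = "engaged" if state == "positive" else "quiet"
    return obj


avatar = set_position(VirtualObject("user-1", "presenter"), "conference")
assign_group(avatar, "positive")
print(avatar.position, avatar.group)
```

A lookup table keyed by scene and role keeps the static placement separate from the state-driven grouping, which mirrors the split between static and dynamic parameters in the description.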

REFERENCE SIGNS LIST

100 Display device
102 Processing unit
104 Communication unit
106 Display unit
108 Imaging unit
110 Sensor
112 Facial expression detection unit
114 Line-of-sight detection unit
200 State detection device
202 Processing unit
204 First communication unit
206 Second communication unit
208 Imaging unit
210 Microphone
212 Physical condition detection unit
300 Network
400 Server
402 Processing unit
404 Communication unit
406 Storage unit
408 Information acquisition unit
410 Setting unit
412 Information generation unit

Claims

- An information processing apparatus, comprising: a processor configured to: obtain first state information regarding a state of a first user based on a sensor input, wherein the first user corresponds to a first virtual object in a virtual space; and the first state information indicates one of a positive state of the first user or a negative state of the first user; set a display mode of a second virtual object corresponding to a second user in the virtual space for a first device of the first user, wherein the virtual space comprises an arrangement of the first virtual object corresponding to the first user and the second virtual object corresponding to the second user; and change a position of the second virtual object corresponding to the second user with respect to the first virtual object corresponding to the first user in the virtual space for the first device, wherein the change in the position of the second virtual object with respect to the first virtual object in the virtual space for the first device is based on the set display mode and the first state information of the first user that indicates one of the positive state of the first user or the negative state of the first user.

- The information processing apparatus according to claim 1, wherein the first state information is classified into a plurality of categories, the processor is further configured to: determine a category of the first state information among the plurality of categories; reduce a distance between the first virtual object and the second virtual object in a case where the determined category indicates that the first user is in the positive state; and increase the distance between the first virtual object and the second virtual object in a case where the determined category indicates that the first user is in the negative state.

- The information processing apparatus according to claim 1, wherein the processor is further configured to set a display mode of the first virtual object in the virtual space for a second device of the second user, such that the display mode of the second virtual object is different from the display mode of the first virtual object.

- The information processing apparatus according to claim 3, wherein the processor is further configured to perform no alteration of the display mode of the first virtual object in the virtual space for the second device based on the first state information.

- The information processing apparatus according to claim 3, wherein the virtual space for the first device and the virtual space for the second device are a same virtual space.

- The information processing apparatus according to claim 1, wherein the processor is further configured to set a distance between the first virtual object and the second virtual object based on at least one of a non-interference region for the first virtual object or a non-interference region for the second virtual object.

- The information processing apparatus according to claim 6, wherein the processor is further configured to: set the non-interference region for the first virtual object based on information regarding an attribute of the first user; and set the non-interference region for the second virtual object based on information regarding an attribute of the second user.

- The information processing apparatus according to claim 6, wherein the processor is further configured to set the distance between the first virtual object and the second virtual object so as not to allow entrance of the second virtual object into the non-interference region for the first virtual object.

- The information processing apparatus according to claim 6, wherein the processor is further configured to set the distance between the first virtual object and the second virtual object so as not to allow entrance of the first virtual object into the non-interference region for the second virtual object.

- The information processing apparatus according to claim 6, wherein the processor is further configured to set the distance between the first virtual object and the second virtual object so as not to allow overlapping of the non-interference region for the first virtual object with the non-interference region for the second virtual object.

- The information processing apparatus according to claim 1, wherein the processor is further configured to: obtain second state information regarding a specific state of the second user; and set the display mode of the second virtual object based on the second state information.

- The information processing apparatus according to claim 11, wherein the first state information is one of information regarding a behavior of the first user or biometric information of the first user, and the second state information is one of information regarding a behavior of the second user or biometric information of the second user.

- The information processing apparatus according to claim 12, wherein the information regarding the behavior includes at least one of user's facial expression, blinks, posture, vocalization, or a line-of-sight direction, and the biometric information includes at least one of user's heart rate, body temperature, or perspiration.

- The information processing apparatus according to claim 1, wherein the processor is further configured to set the first virtual object to one of a plurality of groups in the virtual space based on the first state information.

- The information processing apparatus according to claim 1, wherein the processor is further configured to set a position of the first virtual object in the virtual space based on a static parameter of the first user.

- The information processing apparatus according to claim 15, wherein the static parameter includes one of a user attribute of the first user or a role of the first user in the virtual space.

- The information processing apparatus according to claim 15, wherein the virtual space includes a plurality of scenes, and the processor is further configured to set the position of the first virtual object based on one scene of the plurality of scenes set as the virtual space.

- An information processing method, comprising: obtaining first state information regarding a state of a first user based on a sensor input, wherein the first user corresponds to a first virtual object in a virtual space, and the first state information indicates one of a positive state of the first user or a negative state of the first user; setting, by a processor, a display mode of a second virtual object corresponding to a second user in the virtual space for a first device of the first user, wherein the virtual space comprises an arrangement of the first virtual object corresponding to the first user and the second virtual object corresponding to the second user; and changing a position of the second virtual object corresponding to the second user with respect to the first virtual object corresponding to the first user in the virtual space for the first device, wherein the change in the position of the second virtual object with respect to the first virtual object in the virtual space for the first device is based on the set display mode and the first state information of the first user that indicates one of the positive state of the first user or the negative state of the first user.

- A non-transitory computer-readable medium having stored thereon, computer-executable instructions which, when executed by a computer, cause the computer to execute operations, the operations comprising: obtaining first state information regarding a state of a first user based on a sensor input, wherein the first user corresponds to a first virtual object in a virtual space, and the first state information indicates one of a positive state of the first user or a negative state of the first user; setting a display mode of a second virtual object corresponding to a second user in the virtual space for a first device of the first user, wherein the virtual space comprises an arrangement of the first virtual object corresponding to the first user and the second virtual object corresponding to the second user; and changing a position of the second virtual object corresponding to the second user with respect to the first virtual object corresponding to the first user in the virtual space for the first device, wherein the change in the position of the second virtual object with respect to the first virtual object in the virtual space for the first device is based on the set display mode and the first state information of the first user that indicates one of the positive state of the first user or the negative state of the first user.
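The distance behavior recited in the claims above (reducing the distance for a positive state, increasing it for a negative state, and respecting non-interference regions) can be sketched as follows. This is a minimal illustration under stated assumptions, not the claimed implementation: the circular non-interference regions, the fixed `step` size, the rule names, and the helpers `min_distance` and `reposition_second` are all hypothetical. The returned position is meant to apply only to the view rendered for the first user's device.

```python
import math
from dataclasses import dataclass


@dataclass
class Avatar:
    x: float
    y: float
    radius: float  # assumed: non-interference region is a circle of this radius


def min_distance(first: Avatar, second: Avatar, rule: str) -> float:
    """Lower bound on the avatar distance for each non-interference rule."""
    if rule == "no_entry_into_first":
        return first.radius            # second stays outside the first's region
    if rule == "no_entry_into_second":
        return second.radius           # first stays outside the second's region
    if rule == "no_overlap":
        return first.radius + second.radius  # the two regions never overlap
    raise ValueError(f"unknown rule: {rule}")


def reposition_second(first: Avatar, second: Avatar, state: str,
                      step: float = 0.5, rule: str = "no_overlap") -> Avatar:
    """Return the second avatar's new position in the first user's view."""
    dx, dy = second.x - first.x, second.y - first.y
    dist = math.hypot(dx, dy)
    if dist == 0.0:
        dx, dy, dist = 1.0, 0.0, 1.0   # arbitrary direction when positions coincide
    if state == "positive":
        target = dist - step           # positive state: bring the avatars closer
    elif state == "negative":
        target = dist + step           # negative state: move the avatars apart
    else:
        raise ValueError(f"unknown state: {state}")
    target = max(target, min_distance(first, second, rule))
    scale = target / dist
    return Avatar(first.x + dx * scale, first.y + dy * scale, second.radius)


me = Avatar(0.0, 0.0, 1.0)
other = Avatar(4.0, 0.0, 1.0)
closer = reposition_second(me, other, "positive")
farther = reposition_second(me, other, "negative")
print(closer.x, farther.x)
```

Clamping the target distance after applying the state-based step keeps the two concerns separate: the state decides the direction of movement, and the non-interference rule decides how close the avatars may get.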
