U.S. Pat. No. 11,110,350

MULTIPLAYER TELEPORTATION AND SUMMONING

Assignee: Intuitive Research and Technology Corp

Issue Date: December 3, 2019

Abstract

In a method according to the present disclosure a user in a virtual reality space identifies a desired location for moving another user within the space. The desired location is tested for suitability for teleportation or summoning. A list of clients available for moving to the desired location is identified and stored. The user selects one or more clients to move to the desired location, and the selected clients are summoned to the desired location.

Description

DETAILED DESCRIPTION

In some embodiments of the present disclosure, the operator may use a virtual controller to manipulate a three-dimensional mesh. As used herein, the term “XR” is used to describe Virtual Reality, Augmented Reality, or Mixed Reality displays and associated software-based environments. As used herein, “mesh” is used to describe a three-dimensional object in a virtual world, including, but not limited to, systems, assemblies, subassemblies, cabling, piping, landscapes, avatars, molecules, proteins, ligands, or chemical compounds.

FIG. 1 depicts a system 100 for allowing a virtual reality user to “host” another virtual reality user, according to an exemplary embodiment of the present disclosure. The system 100 comprises an input device 110 communicating across a network 120 to a processor 130. The input device 110 may comprise, for example, a keyboard, a switch, a mouse, a joystick, a touch pad, and/or another type of interface, which can be used to input data from a user of the system 100. The network 120 may be any type of network or networks known in the art or future-developed, such as the internet backbone, Ethernet, Wifi, WiMax, and the like. The network 120 may be any combination of hardware, software, or both. XR hardware 140 comprises virtual or mixed reality hardware that can be used to visualize a three-dimensional world. A video monitor 150 is used to display a VR space to a user. The input device 110 receives input from the processor 130 and translates that input into an XR event or function call. The input device 110 allows a user to input data to the system 100 by translating user commands into computer commands.

FIG. 2 illustrates the relationship between three-dimensional assets 210, the data representing those assets 220, and the communication between that data and the software, which leads to the representation on the XR platform. The three-dimensional assets 210 may be any three-dimensional assets, which are any set of points that define geometry in three-dimensional space.

The data representing a three-dimensional world 220 is a procedural mesh that may be generated by importing three-dimensional models, images representing two-dimensional data, or other data converted into a three-dimensional format. The software for visualization 230 of the data representing a three-dimensional world 220 allows the processor 130 (FIG. 1) to depict the data representing a three-dimensional world 220 as three-dimensional assets 210 in the XR display 240.

FIG. 3 depicts a method 300 to graphically create multiplayer actors. In step 310, the client logs in to the host session, which facilitates future communication between a client and a pawn by creating a player controller. The player controller (not shown) is an intermediary between the user's input and the user's avatar (pawn). In step 320, the host session creates a pawn object, for example, an avatar, and assigns control of the object to the connecting client. In step 330, the host stores references to internally connect the created pawn to the connected client, which allows summoning clients to find all other clients.
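The bookkeeping of steps 310 through 330 can be sketched as a small registry. This is a minimal illustration only, not the disclosed implementation: the names `HostSession`, `PlayerController`, `Pawn`, `login`, and `all_clients` are hypothetical, and a dictionary keyed by client identifier is an assumed way of storing the references.

```python
from dataclasses import dataclass
from itertools import count
from typing import Dict, List, Optional, Tuple


@dataclass
class Pawn:
    """Hypothetical avatar object controlled by one client."""
    pawn_id: int
    position: Tuple[float, float] = (0.0, 0.0)


@dataclass
class PlayerController:
    """Hypothetical intermediary between a client's input and its pawn."""
    client_id: str
    pawn: Optional[Pawn] = None


class HostSession:
    """Assumed host-side registry linking connected clients to pawns."""

    def __init__(self) -> None:
        self._controllers: Dict[str, PlayerController] = {}
        self._pawn_ids = count(1)

    def login(self, client_id: str) -> PlayerController:
        # Step 310: logging in creates a player controller for the client.
        controller = PlayerController(client_id)
        # Step 320: the host creates a pawn and assigns control of it.
        controller.pawn = Pawn(next(self._pawn_ids))
        # Step 330: the host stores the reference so that a summoning
        # client can later find all other clients.
        self._controllers[client_id] = controller
        return controller

    def all_clients(self) -> List[str]:
        # Connected client identifiers, in login order.
        return list(self._controllers)
```

Storing the controller rather than the pawn alone keeps both ends of the client-to-pawn link reachable from a single lookup, which is one plausible reading of "references to internally connect the created pawn to the connected client."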

FIG. 4 describes a summoning method 400 according to the present disclosure. In step 410, the user provides the input to the input device to start the line trace cycle. The user provides input via a controller, which in one embodiment has a button that the user actuates to start the teleporting process and a button that the user actuates to start the summoning process.

In the line trace cycle, the engine automatically tests the location where the user is pointing to determine if teleportation to that location is possible. In this regard, a line is projected from the controller in the direction the user is pointing. The line trace will sample the environment to ensure that an avatar is not teleported into a wall, for example. If a user points at a location where it would not be possible for an avatar to move, the user will get a visual indication that this is a bad location. The visual indication may be a cursor not appearing, or turning a different color, or the like.
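The suitability test can be illustrated with a simplified line trace. The sketch below assumes a flat floor at height zero, a third axis pointing up, and obstacles given as rectangular footprints on the floor; none of these details come from the disclosure, and the names `trace_to_floor` and `is_valid_target` are hypothetical.

```python
from typing import Optional, Sequence, Tuple

Vec3 = Tuple[float, float, float]
# Obstacle footprint on the floor: (min_x, min_y, max_x, max_y).
Rect = Tuple[float, float, float, float]


def trace_to_floor(origin: Vec3, direction: Vec3,
                   max_range: float = 20.0) -> Optional[Tuple[float, float]]:
    """Project a line from the controller and return where it meets the floor.

    Returns None if the line never reaches the floor within max_range.
    """
    ox, oy, oz = origin
    dx, dy, dz = direction
    if dz >= 0 or oz <= 0:
        return None  # pointing level or upward, or controller not above floor
    t = -oz / dz  # line parameter at which the height reaches zero
    length = t * (dx * dx + dy * dy + dz * dz) ** 0.5
    if length > max_range:
        return None
    return (ox + t * dx, oy + t * dy)


def is_valid_target(hit: Optional[Tuple[float, float]],
                    obstacles: Sequence[Rect]) -> bool:
    """Reject missing hits and hits that fall inside an obstacle footprint."""
    if hit is None:
        return False
    x, y = hit
    return not any(min_x <= x <= max_x and min_y <= y <= max_y
                   for (min_x, min_y, max_x, max_y) in obstacles)
```

A caller could use the boolean result to drive the visual indication, for example by hiding the cursor or changing its color when `is_valid_target` returns `False`.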

In step 420, the user points the input device at the spot to which the avatar is to be teleported or summoned. In step 430, the user signals the input device to teleport or summon the summoned client. In one embodiment, the user signals the input device by clicking a button. In step 440, the avatar is teleported or summoned to the location specified.

FIG. 5 describes a method 500 for moving client actors other than the summoning client during a summoning process. In step 510, the system uses the references described in step 330 above to find all clients. In step 520, the system removes the summoning client from the list. In step 530, the summoned clients are moved to the location specified by the summoning client.
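Steps 510 through 530 amount to a filter followed by an update. The following sketch assumes pawn positions are kept in a dictionary keyed by client identifier; the function name `summon_others` and that data layout are hypothetical and chosen only for illustration.

```python
from typing import Dict, List, Tuple

Position = Tuple[float, float]


def summon_others(pawn_positions: Dict[str, Position],
                  summoner: str,
                  target: Position) -> List[str]:
    """Move every client except the summoner to the target location."""
    # Step 510: find all clients from the stored references.
    clients = list(pawn_positions)
    # Step 520: remove the summoning client from the list.
    summoned = [client for client in clients if client != summoner]
    # Step 530: move the remaining clients to the specified location.
    for client in summoned:
        pawn_positions[client] = target
    return summoned
```

For example, with clients `alice`, `bob`, and `carol` and `alice` as the summoner, only the positions of `bob` and `carol` change.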

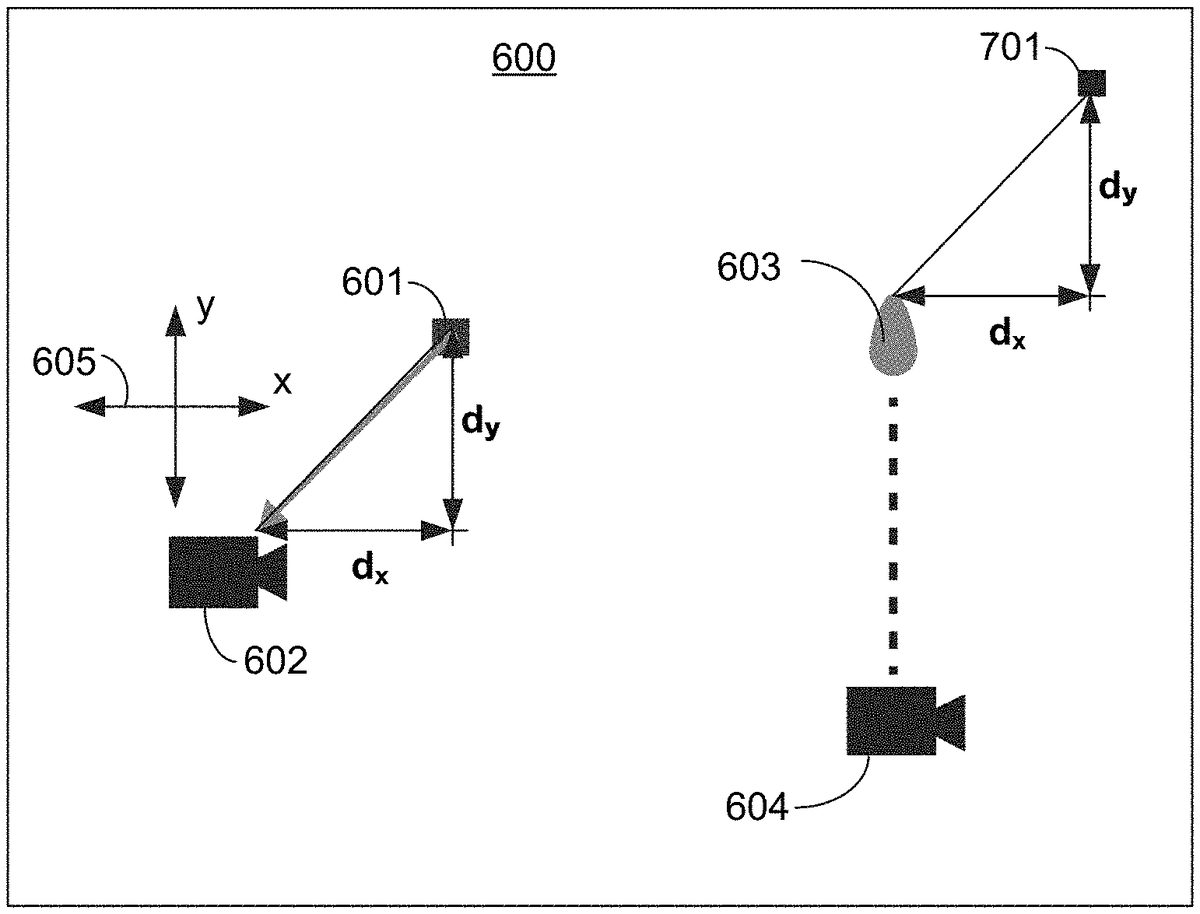

FIG. 6 is a graphical representation of a virtual space 600 showing an original location 601 of a “summonee” (i.e., a participant actor to be summoned by a summoner) in the virtual space, and an original camera location 602 associated with the summonee. The original camera location 602 represents where a camera would be in relation to the summonee's original location 601, and is generally offset from the original location 601 in the “x” and “y” directions, as indicated by directional axis 605. Specifically, the original location 601 is offset from the camera location 602 in the x direction by a distance “−dx” and in the y direction by a distance “−dy.”

A cursor 603 indicates a desired camera location that a summoner (not shown) would like to summon the summonee to. A summoner camera position 604 indicates the location of the field of view of the summoner. The summoner uses an input device to set the cursor 603 at a position where the summoner wishes the summonee to move. However, due to the offset between the summonee and the camera location discussed above, the summonee's actual position when summoned will also be offset, as discussed below with respect to FIG. 7.

FIG. 7 is a graphical representation of the virtual space 600 showing the same original location 601, original camera location 602, cursor location 603, and summoner camera position 604 as in FIG. 6, and also shows a new summonee location 701 where the summonee would be summoned, based on the position of the cursor 603, which represents the desired camera location for the summonee. The new summonee location 701 will be offset from the cursor 603 by the same distances dx and dy as the original location 601 is offset from the original camera location 602.

FIG. 8 is a graphical representation of the virtual space 600 of FIG. 7, showing a new camera location 801 where the cursor 603 was in FIG. 7. The new camera location 801 takes the place of the original camera location 602, and the field of view of the summonee has now changed to that of the new camera location 801.
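The offset compensation shown in FIGS. 6 through 8 reduces to simple vector arithmetic: the actor-to-camera offset measured before the move is reapplied at the cursor. The sketch below is one way to express it, assuming two-dimensional (x, y) coordinates; the function name `summon_target` is hypothetical.

```python
from typing import Tuple

Point = Tuple[float, float]


def summon_target(actor: Point, camera: Point, cursor: Point) -> Tuple[Point, Point]:
    """Return (new actor location, new camera location) for a summon.

    The cursor marks the desired camera location, so the actor is placed
    at the cursor plus the original actor-minus-camera offset.
    """
    # Offset of the actor from its camera (the text's -dx and -dy).
    offset_x = actor[0] - camera[0]
    offset_y = actor[1] - camera[1]
    # The new actor location keeps the same offset relative to the cursor.
    new_actor = (cursor[0] + offset_x, cursor[1] + offset_y)
    # The new camera location takes the place of the cursor.
    new_camera = cursor
    return new_actor, new_camera
```

With an actor at (1.0, 2.0), a camera at (1.5, 3.0), and a cursor at (10.0, 10.0), the offset is (−0.5, −1.0), so the actor lands at (9.5, 9.0) and the camera at (10.0, 10.0).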

FIG. 9 is a graphical representation of a virtual space 900 showing an original location 901 of an actor, i.e., a participant in the virtual space desired to be teleported. As was discussed above with respect to FIGS. 6-8, there is an offset between the original location 901 and an original camera location 902. A cursor 903 represents a desired location of the camera.

FIG. 10 is a graphical representation of the virtual space 900 of FIG. 9, showing a new location 904 of the actor after teleportation. FIG. 11 is a graphical representation of the virtual space 900 of FIG. 10, showing a new camera location 905 where the cursor 903 was in FIG. 10.

Claims

- A method of moving multiplayer actors in virtual reality, comprising: identifying, by a first user using a user input device, a desired location in a virtual space for summoning a client; testing the desired location for suitability for summoning; identifying and storing a list of clients for possible summoning to the desired location; selecting one or more clients to summon to the desired location; and moving the selected clients to the desired location by calculating the offset in an x direction and a y direction from an original camera position and an original actor position, and compensating the desired location by the offset.

- The method of claim 1, wherein the step of moving the selected clients to the desired location requires no action by the selected clients.

- The method of claim 1, wherein the first user controls a first avatar via the user input device in the virtual space and causes the first avatar to perform actions in the virtual space, the virtual space comprising a second avatar, the second avatar associated with a second user, and wherein a processor moves the first avatar in the virtual space according to the actions of the first user and moves the second avatar in the virtual space according to the actions of the first user.

- The method of claim 3, wherein the second avatar moves to the desired location upon command by the first user.

- The method of claim 1, wherein the first user controls a first avatar via the user input device in the virtual space and causes the first avatar to perform actions in the virtual space, the virtual space comprising a plurality of other avatars, each of the other avatars associated with one of a plurality of other users, and wherein a processor moves the first avatar in the virtual space according to the actions of the first user and moves the plurality of other avatars in the virtual space according to the actions of the first user.

- The method of claim 1, wherein the step of selecting one or more clients to move to the desired location further comprises removing a summoning client from the list.

- A method of summoning multiplayer actors in a virtual space, comprising: identifying, by a user using a user input device, a desired location within the virtual space for summoning one or more clients to appear; testing the desired location for suitability; identifying and storing a list of clients for possible summoning to the desired location; selecting, by the user using the user input device, one or more clients to summon to the desired location; and without input from the selected clients, moving the selected clients to the desired location by calculating the offset in an x direction and a y direction from an original camera position and an original actor position, and compensating the desired location by the offset.

- The method of claim 7, wherein a first user controls a first avatar via the user input device in the virtual space and causes the first avatar to perform actions in the virtual space, the virtual space further comprising a second avatar, the second avatar associated with a second user, and wherein a processor moves the first avatar in the virtual space according to the actions of the first user and moves the second avatar in the virtual space according to the actions of the first user.

- The method of claim 7, wherein the step of selecting one or more clients to move to the desired location further comprises removing a summoning client from the list.
