U.S. Pat. No. 11,052,317

PERFORMING SIMULATION OF STRETCHABLE CHARACTER IN COMPUTER GAME

Assignee: SQUARE ENIX LTD.

Issue Date: June 29, 2020

Abstract

Embodiments relate to generating a character with a stretchable body part in a computer game. A pose of a body part of the character is received. The body part includes at least one base joint, bones connected via the at least one base joint, and an end effector coupled to one of the bones. An end effector position is received. The end effector position is where an end effector of the body part is to be placed in an updated pose. Inverse kinematics operations are performed to determine the updated pose of the body part by at least changing a length of one of the bones to place the end effector at the end effector position responsive to receiving the pose of the body part and the end effector position.

Description

The figures depict various embodiments of the present disclosure for purposes of illustration only. One skilled in the art will readily recognize from the following description that alternative embodiments of the structures and methods illustrated herein may be employed without departing from the principles, or benefits touted, of the disclosure described herein.

DETAILED DESCRIPTION

In the following description of embodiments, numerous specific details are set forth in order to provide more thorough understanding. However, note that the embodiments may be practiced without one or more of these specific details. In other instances, well-known features have not been described in detail to avoid unnecessarily complicating the description.

Embodiments are described herein with reference to the figures where like reference numbers indicate identical or functionally similar elements. Also in the figures, the left most digit or digits of each reference number correspond to the figure in which the reference number is first used.

Embodiments relate to generating graphical representations of a character with one or more stretchable body parts in a computer game that involves performing inverse kinematics operations where one or more bones in the body parts are stretched or contracted according to the pose of the character. The inverse kinematics operations are performed based on an end effector position of a stretchable body part and the current pose of the character to determine properties (e.g., translational movements of joints and rotational movements of joints) of base joints coupled to one or more bones. After the properties of the base joints are determined, properties of first virtual joints are determined by interpolating the properties of the base joints. Then, forward kinematics operations are performed on the first virtual joints to obtain second virtual joints that represent an updated pose of the character. Positions and configurations of polygons representing the surface of the stretchable body parts are determined so that the polygons are bound to the second virtual joints.

Among other advantages, embodiments enable efficient generation of moving graphical representations of a character with one or more stretchable body parts that move in a manner as desired by a game developer. Further, embodiments may reuse most of the algorithm or code for performing forward kinematics operations and inverse kinematics operations on characters with fixed length bones, reducing the time associated with developing or updating the algorithm or code to produce characters with stretchable body parts as well as reducing the complexity of code for determining the pose of the character.

FIG. 1 is a block diagram of a system 100 in which the techniques described herein may be practiced, according to an embodiment. The system 100 includes, among other components, a content creator 110, a server 120, client devices 140, and a network 144. In other embodiments, the system 100 may include additional content creators 110 or servers 120, or may include a single client device 140.

The content creator 110, the server 120, and the client devices 140 are configured to communicate via the network 144. The network 144 includes any combination of local area and/or wide area networks, using both wired and/or wireless communication systems. In one embodiment, the network 144 uses standard communications technologies and/or protocols. For example, the network 144 includes communication links using technologies such as Ethernet, 802.11, worldwide interoperability for microwave access (WiMAX), 3G, 4G, code division multiple access (CDMA), digital subscriber line (DSL), etc. Examples of networking protocols used for communicating via the network 144 include multiprotocol label switching (MPLS), transmission control protocol/Internet protocol (TCP/IP), hypertext transfer protocol (HTTP), simple mail transfer protocol (SMTP), and file transfer protocol (FTP). Data exchanged over the network 144 may be represented using any suitable format, such as hypertext markup language (HTML) or extensible markup language (XML). In some embodiments, all or some of the communication links of the network 144 may be encrypted using any suitable technique or techniques.

The content creator 110 is a computing device, such as a personal computer, a mobile phone, a tablet, or so on, which enables a game developer to create content items (e.g., characters and environment information) for a computer game. For this purpose, the content creator 110 includes a processor and a memory (not shown) that stores various software modules for creating content items. The created content items are sent to the server 120 for storing on its memory 130.

The server 120 is a computing device that includes a processor 128 and a memory 130 connected by a bus 127. The memory 130 includes various executable code modules or non-executable content items 122. The server 120 may receive and route messages between the content creator 110 and the client devices 140. The non-executable content items 122 may include information on characters with stretchable body parts. Such content items may be sent to the client devices 140 via the network 144.

The processor 128 is capable of executing instructions, sequential or otherwise, that specify operations to be taken, such as performance of some or all of the techniques described herein. The bus 127 connects the processor 128 to the memory 130, enabling data transfer from the one to the other and vice versa. Depending upon the embodiments, the server 120 may include additional elements conventional to computing devices.

Each client device 140 is a computing device that includes a game or other software. The client device 140 receives data objects from the server 120 and uses the data objects to render graphical representations of characters and the environment in which the characters take actions in the game. Different client devices 140 can request different data objects from the server 120.

Although the embodiment of FIG. 1 is described as operating in a networked environment, in other embodiments, the client devices 140 are not connected via a network and the computer game is executed without exchanging messages or content items over any network. In such cases, any content items associated with the computer game may be received and installed on the client devices 140 using a non-transitory computer readable medium such as a DVD ROM, a CD ROM or a flash drive.

FIG. 2 is a block diagram of the client device 140 of FIG. 1, according to an embodiment. Depending upon the embodiment, the content creator 110 and/or server 120 may be embodied as a computing device that includes some or all of the hardware and/or software elements of the client device 140 described herein. The client device 140, content creator 110, and/or server 120 are any machine capable of executing instructions, and may each be a standalone device or a connected (e.g., networked) set of devices.

The client device 140 may include, among other components, a central processing unit (“CPU”) 202, a graphics processing unit (“GPU”) 204, a primary memory 206, a secondary memory 214, a display controller 208, a user interface 210, and a sound controller 212 that are connected by a bus 216. While only a single client device 140 is illustrated, other embodiments may include any collection of client devices 140 that individually or jointly execute instructions to perform any one or more of the methodologies discussed herein.

The primary memory 206 is a machine-readable medium that stores instructions (e.g., software) embodying any one or more of the methodologies or functions described herein. For example, the primary memory 206 may store instructions that, when executed by the CPU 202, configure the CPU 202 to perform a process 700, described below in detail with reference to FIG. 7. Instructions may also reside, partially or completely, within the CPU 202 and/or GPU 204, e.g., within cache memory, during execution of the instructions.

The term “machine-readable medium” should be taken to include a single medium or multiple media (e.g., a centralized or distributed database, or associated caches and servers) able to store instructions. The term “machine-readable medium” shall also be taken to include any medium that is capable of storing instructions for execution by the device and that cause the device to perform any one or more of the methodologies disclosed herein. The term “machine-readable medium” includes, but is not limited to, data repositories in the form of solid-state memories, optical media, and magnetic media.

The secondary memory 214 is a memory separate from the primary memory 206. Similar to the primary memory 206, the secondary memory 214 is a machine-readable medium that stores instructions (e.g., software) embodying any one or more of the methodologies or functions described herein. For example, the primary memory 206 may be a hard drive of the client device 140, and the secondary memory 214 may be a game disc.

The CPU 202 is processing circuitry configured to carry out the instructions stored in the primary memory 206 and/or secondary memory 214. The CPU 202 may be a general-purpose or embedded processor using any of a variety of instruction set architectures (ISAs). Although a single CPU is illustrated in FIG. 2, the client device 140 may include multiple CPUs 202. In multiprocessor systems, each of the CPUs 202 may commonly, but not necessarily, implement the same ISA.

The GPU 204 is a processing circuit specifically designed for efficient processing of graphical images. The GPU 204 may render objects to be displayed into a frame buffer (e.g., one that includes pixel data for an entire frame) based on instructions from the CPU 202. The GPU 204 may include one or more graphics processors that may execute graphics software to perform a part or all of the graphics operations.

The display controller 208 is a circuit that generates a video signal using graphical data from the GPU 204. For example, the display controller 208 drives a display device (e.g., a liquid crystal display (LCD) or a projector). As such, a game, including one or more characters with stretchable body parts, can be displayed as images or a sequence of image frames through the display controller 208.

The sound controller 212 is a circuit that provides input and output of audio signals to and from the client device 140. For purposes of a character, the sound controller 212 can provide audio signals that align with actions and objects in the computer game.

The user interface 210 is hardware, software, firmware, or a combination thereof that enables a user to interact with the client device 140. The user interface 210 can include an alphanumeric input device (e.g., a keyboard) and a cursor control device (e.g., a mouse, a trackball, a joystick, a motion sensor, or other pointing instrument). For example, a user uses a keyboard and mouse to control a character's action within a game environment that includes an electronic map rendered by the client device 140.

The client device 140 executes computer program modules for providing functionality described herein. As used herein, the term “module” refers to computer program instructions and/or other logic used to provide the specified functionality. Thus, a module can be implemented in hardware, firmware, and/or software. In some embodiments, program modules formed of executable computer program instructions are loaded into the primary memory 206, and executed by the CPU 202 or the GPU 204. For example, program instructions for the process 700 described herein can be loaded into the primary memory 206 and/or secondary memory 214, and executed by the CPU 202 and GPU 204. In some embodiments, some or all of the functionality of the modules described herein may be performed by dedicated circuitry.

FIG. 3 is a block diagram of software modules in a primary memory of the client device 140 of FIG. 1, according to an embodiment. In particular, FIG. 3 illustrates software modules in the primary memory 206 of the client device 140. The primary memory 206 may store, among other modules, a game system 300 and an operating system (“OS”) 380. The primary memory 206 may include other modules not illustrated in FIG. 3. Furthermore, in other embodiments, at least part of the modules in FIG. 3 is stored in secondary memory 214.

The game system 300 includes a level manager 320, a physics system 330, a sound module 340, a terrain generator 350, an animation module 360, and a graphics rendering module 370. These modules collectively form a “game engine” of the game system 300.

The game system 300 performs operations 312A through 312N (collectively referred to as “operations 312”) to generate actions of characters within the game environment. Specifically, the game system 300 performs these operations 312, which involve changes in poses of characters, to realize actions. The operations 312 refer to computing operations that result in changes in various parameters (e.g., poses or positions of a character) when certain events (e.g., user interactions, expirations of time, and triggers occurring in the game) occur.

Some operations 312 are associated with actions taken by a character with stretchable body parts. Such a character may have one or more body parts that are stretchable or contractable (e.g., elastic). The character may appear to have flexible bones that stretch or contract as the character takes actions (e.g., stretching an arm to punch an opponent) or as the character becomes a subject of actions by other characters (e.g., receiving a punch from an opponent).

The level manager 320 receives data objects from the server 120 and stores the level data in the primary memory 206. Other modules of the game system 300 can request one or more levels from the level manager 320, which responds to the requests by sending the level data corresponding to the requested level to the module of the game system 300 that sent the request.

The terrain generator 350 generates a complete electronic map based on electronic map data and game data. The terrain generator 350 receives electronic map data from the level manager 320, and game and object data from the content source stored, for example, in secondary memory 214.

The physics system 330 models and simulates the dynamics of objects in the game environment. After an operation 312 is initiated in the game system 300, the physics system 330 models how an action or event of the operation 312 affects the object associated with the operation 312. For example, the physics system 330 models a rock as it rolls down a hill. Depending on the action and object, other objects and actions may become associated with the action or object.

The animation module 360 performs kinematic animation of objects based on the operations 312 from the game system 300. For example, if an operation 312 specifies that a character's arm is to punch another character, the animation module 360 generates a series of poses that collectively form the movement of the character's body that results in the punching action. For this purpose, the animation module 360 is capable of performing forward kinematics operations, inverse kinematics operations, and generation of transformation matrices. Some of these characters may have stretchable body parts with one or more bones that can be stretched or contracted during such operations. Such stretching or contracting of the bones can be represented in terms of translational movements of the joints. The details of the animation module 360 are described below with reference to FIG. 4.

The sound module 340 generates sounds corresponding to events and/or actions occurring in the game environment. For example, a corresponding sound may be generated when a character punches an opponent. Animation data from the animation module 360 or terrain information from the terrain generator 350 may be sent to the sound module 340 to enable the sound module 340 to produce appropriate sound data that is sent to the sound controller 212.

The graphics rendering module 370 renders graphics using information from the animation module 360 to generate an image frame. For this purpose, the graphics rendering module 370 may receive transformation matrices that indicate changes in vertices of polygons that collectively form a surface of the characters, and terrain information from the terrain generator 350. The graphics rendering module 370 processes the transformation matrices and the terrain information to generate graphical data that is sent to the GPU 204 for rendering images on a display screen, e.g., a display screen of the client device 140 or a display connected to the client device 140, via the display controller 208.

The OS 380 manages computer hardware and software resources. Specifically, the OS 380 acts as an intermediary between programs and the computer hardware. For example, the OS 380 can perform basic tasks, such as recognizing input from the user interface 210 and sending output to the display controller 208.

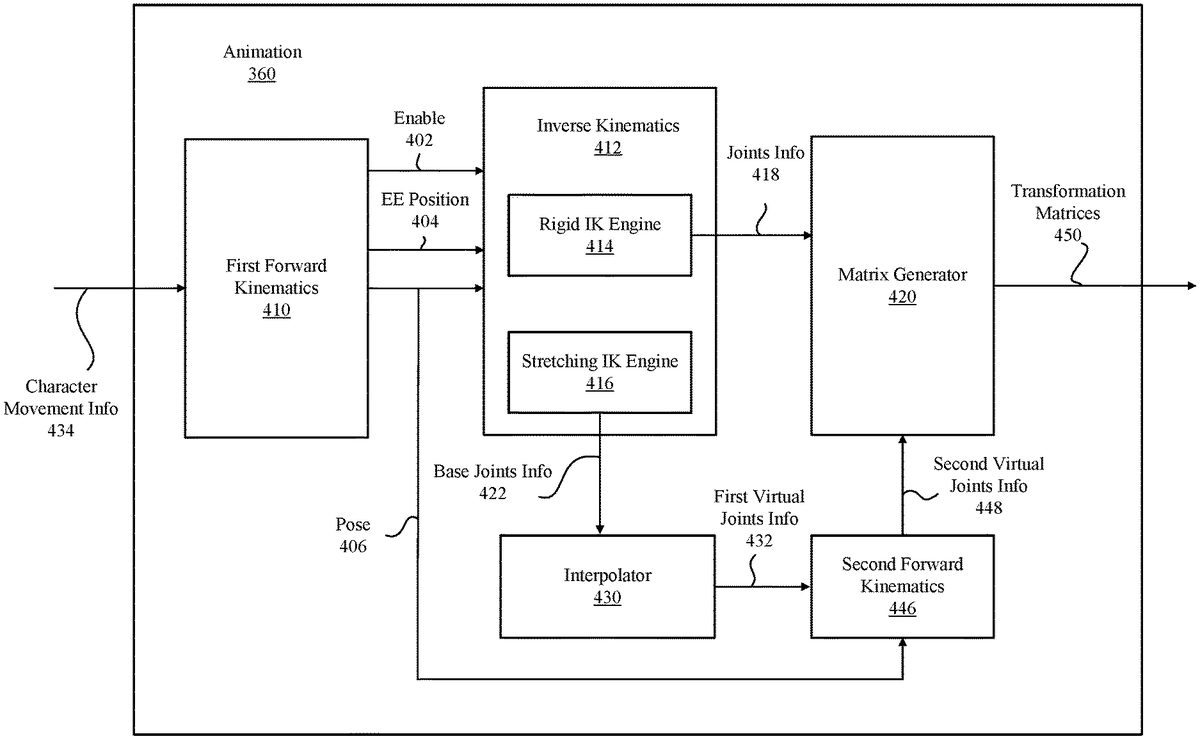

FIG. 4 is a block diagram of the animation module 360 according to one embodiment. The animation module 360 receives character movement information 434, processes the character movement information 434 using forward kinematics and inverse kinematics, and generates transformation matrices 450 sent to the graphics rendering module 370. For this purpose, the animation module 360 may include, among other components, a first forward kinematics module 410, an inverse kinematics module 412, an interpolator 430, a second forward kinematics module 446, and a matrix generator 420.

The first forward kinematics module 410 performs forward kinematics operations of a character as constrained by character movement information 434 associated with the character. As a result, the first forward kinematics module 410 generates end effector positions 404 (e.g., positions of a character's hand or foot), and pose information 406 using, for example, conventional techniques well known in the art. The pose information 406 may indicate properties of the joints (e.g., relative translations and rotations of the joints) as well as the properties of the bones (e.g., the change in the lengths of bones, which may be represented as translational movements of the joints). The first forward kinematics module 410 also generates an enable signal 402 indicating whether any body part of the character is stretchable. Depending on the enable signal 402, different methods may be used to perform inverse kinematics at the inverse kinematics module 412. The enable signal 402, the pose information 406 and the end effector positions 404 are sent to the inverse kinematics module 412.

Character movement information refers to information indicating constraints or features of poses and movements of a character. Character movement information may be obtained from an animation graph of the character, which functions as a blueprint for actions and poses that can be taken by the character. The character movement information 434 constrains forward kinematics operations performed by the first forward kinematics module 410 so that generated poses are in line with the animation graph.

The inverse kinematics module 412 performs inverse kinematics operations on a character based on information received from the first forward kinematics module 410. The inverse kinematics module 412 includes two engines: a rigid inverse kinematics (IK) engine 414 and a stretching IK engine 416. The rigid IK engine 414 determines properties of joints in the character (which, collectively, form an updated pose of the character) without any changes in the lengths of bones, using conventional techniques well known in the art. The properties of the joints determined by the rigid IK engine 414 are sent to the matrix generator 420 as joints information 418.

On the other hand, the stretching IK engine 416 determines base joints information 422 of the character by changing the lengths of bones in the character's body parts, as described below in detail with reference to FIGS. 6A through 6D. In one or more embodiments, the stretching IK engine 416 may change the lengths of the bones only when an end effector (e.g., hand) of the character cannot reach a desired target by merely rotating the base joints. The base joints information 422 becomes the basis for generating first and second virtual joints information 432, 448. The selection between the use of the rigid IK engine 414 and the stretching IK engine 416 is made based on the enable signal 402.
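The reachability condition described above can be illustrated with a minimal Python sketch. The function name `stretch_to_reach` and the choice to scale every bone by the same factor are assumptions made for illustration only; the description does not prescribe how the additional length is distributed among the bones, and the sketch covers only the case where the target is too far away.

```python
import math


def stretch_to_reach(root, bone_lengths, target):
    """Return bone lengths that let a chain rooted at `root` reach `target`.

    If the target is within the combined length of the bones, rotation of
    the joints alone suffices and the lengths are returned unchanged.
    Otherwise every bone is scaled by the same factor (an assumption of
    this sketch) so that the fully extended chain just reaches the target.
    """
    distance = math.dist(root, target)
    total = sum(bone_lengths)
    if distance <= total:
        return list(bone_lengths)
    scale = distance / total
    return [length * scale for length in bone_lengths]


# Two bones of unit length cannot reach a target three units away,
# so both bones are stretched.
stretched = stretch_to_reach((0.0, 0.0, 0.0), [1.0, 1.0], (3.0, 0.0, 0.0))
```

A non-uniform distribution of the stretch (e.g., lengthening only the lower arm bone) would fit the same condition and is equally compatible with the description.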

In one or more embodiments, one or more body parts of the character may be processed by the rigid IK engine 414 while the remaining body parts of the same character are processed by the stretching IK engine 416. The conditions or body parts to be processed by either of the IK engines 414, 416 may be defined by the character movement information.

When the stretching IK engine 416 is used, the interpolator 430 receives the base joints information 422 from the stretching IK engine 416. The base joints information 422 indicates the properties (e.g., translational movements, rotational movements and scales) of the base joints in a character. The base joints refer to virtual joints that define the overall shape and orientation of body parts that are stretchable or contractable. Vertices of polygons representing the surface of the body part may not be bound to (e.g., retain certain spatial relationships to) the base joints but instead may be bound to second virtual joints. Alternatively or in addition, a subset of polygons may be bound to various combinations of the base joints, the first virtual joints and the second virtual joints.

The interpolator 430 determines the properties of the first virtual joints between two base joints through interpolation of properties of the two base joints (e.g., positions and orientations of the base joints), as described below with reference to FIG. 6C.

The number of first virtual joints between two given base joints may be fixed or may be varied. The first virtual joints are children joints of associated base joints. By using the first virtual joints, more diverse movements of a stretchable or contractable body part can be implemented in the computer game. Increasing the number of first virtual joints enables the number of second virtual joints to be increased, which enables the movements of the body part during stretching or contracting to be more tightly controlled as desired by the designer of the computer game.
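As a rough illustration of the interpolation performed by the interpolator 430, the following Python sketch places a configurable number of first virtual joints between two base joints. Linear interpolation with even spacing, positions as the only interpolated property, and the function name `interpolate_first_virtual_joints` are all assumptions of this sketch; the description also allows other properties, such as orientations, to be interpolated.

```python
def interpolate_first_virtual_joints(parent_pos, child_pos, count):
    """Place `count` evenly spaced joints strictly between two base joints.

    Positions are tuples of coordinates. Each virtual joint position is a
    linear blend of the two base joint positions.
    """
    joints = []
    for i in range(1, count + 1):
        t = i / (count + 1)  # interpolation parameter in the open range (0, 1)
        joints.append(
            tuple(a + (b - a) * t for a, b in zip(parent_pos, child_pos))
        )
    return joints


# Two first virtual joints between a shoulder at the origin and an elbow
# three units along the x axis.
virtual_joints = interpolate_first_virtual_joints(
    (0.0, 0.0, 0.0), (3.0, 0.0, 0.0), count=2
)
```

Because the joints are recomputed from the base joints every frame, a stretched bone automatically spreads its virtual joints over the new length.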

The second forward kinematics module 446 receives the first virtual joints information 432 and generates second virtual joints information 448 by performing forward kinematics operations. The second virtual joints information 448 indicates the properties (e.g., relative translational movements, rotational movements and scales) of the second virtual joints that are offset from the corresponding first virtual joints according to corresponding offset vectors. The offset vectors are configured so that the stretching or contracting of a body part is performed in a way that is desired by the designer of the computer game. The offset vectors may indicate translations, rotations and scales of the second virtual joints relative to the first virtual joints. The offset vectors may be derived from fragments of animation (also known as an animation clip or simply as “animation”), often generated from information provided by an animator. The second virtual joints may function as binding points for vertices of polygons that form the surface of the corresponding body part.

The joints information 418 or the base joints information 422, depending on the IK engine used, is sent to the matrix generator 420 that generates transformation matrices 450. The transformation matrices may be skinning matrices that indicate translational and rotational movement of base joints (when the rigid IK engine 414 is used) or second virtual joints (when the stretching IK engine 416 is used) of the current image frame relative to those of the prior image frame. The matrix generator 420 generates the transformation matrix, for example, by using a technique described in Jason Gregory et al., “Poses” in Game Engine Architecture (3rd ed.), A K Peters/CRC Press, § 12.3 (2018), which is incorporated by reference herein.

FIG. 5 is a block diagram of the graphics rendering module 370, according to an embodiment. The graphics rendering module 370 performs operations to determine properties of vertices or polygons constituting surfaces of various objects to be displayed in the computer game. To render the surfaces of the character, the graphics rendering module 370 includes, among other components, a matrix processor 510 and a buffer 516.

The graphics rendering module 370 stores properties of surfaces of the characters, including but not limited to, lists of vertices that form polygons (e.g., triangular meshes) of a surface, a list of normal vectors of the vertices, and a list of polygons in the buffer 516. A subset of such properties 518 is sent to the matrix processor 510 for generating updated properties 514.

The matrix processor 510 receives the transformation matrices 450 and performs matrix operations, among others, on the properties 518 stored in the buffer 516 to generate updated properties 514. The updated properties 514 are added to the buffer 516 or replace corresponding properties in the buffer 516. Graphics data 520 including the properties of the polygons is sent to the GPU 204 to display the character on a screen using techniques that are well known in the art.

An example of inverse kinematics operations performed by the stretching IK engine 416 on a character's arm is described herein with reference to FIGS. 6A through 6D. Although the example is described with respect to the character's arm, the same principle can be applied to other parts of the character's body.

FIG. 6A illustrates a skeleton of the character's arm including a shoulder joint J1, an elbow joint J2, an upper arm bone B1, a lower arm bone B2, and a hand EE as an end effector. When an action is to be taken by the arm so that the hand EE reaches target T, the arm is assumed to make a rotational movement with respect to the shoulder joint J1 as shown by the arrow in FIG. 6A. By doing so, the hand EE is placed on a line extending between the center of joint J1 and the target T. After the rotation, the joint J2 is separated from the line by distance DA. The elbow joint J2 is a child joint of the shoulder joint J1 for the purpose of forward or inverse kinematics.

FIG. 6B is a conceptual diagram illustrating adjustment of the lengths of the bones so that the hand EE reaches the target T, according to one embodiment. For example, bone B1 of the arm is extended to B1E and bone B2 is extended to B2E as shown in FIG. 6B, with or without rotations at joints J1, J2. After the bones are extended and the hand EE reaches the target T, the distance of joint J2 from the line between the center of the joint J1 and the target T becomes DB. Although the lengths of the bones in the example of FIG. 6B are adjusted so that distance DB is larger than original distance DA, the distance DB may be maintained or decreased, and the degree of the change in the lengths may be adjusted to effect different stretching or contracting motions. For example, the distance DB may be a linear function, a bilinear function, an exponential function, an inverse function or any combination of functions of the length adjustment of one or more bones. Alternatively or in addition, the distance DB may be defined as a function that causes a joint (e.g., J2) to follow a certain path with the movement of the body part. The path may include straight line portions and/or curved line portions.
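The relationship between the bone lengths and the distance of joint J2 from the J1-to-T line follows from ordinary triangle geometry, which the following Python sketch works through. The function names and the `split` parameter (the fraction of the J1-to-T line at which J2 is projected) are hypothetical and introduced only for illustration; how an embodiment actually chooses DB is left open by the description.

```python
import math


def elbow_distance(len_b1, len_b2, reach):
    """Distance of the elbow from the shoulder-to-target line.

    The shoulder, elbow and target form a triangle with sides `len_b1`,
    `len_b2` and `reach`; the result is the height of that triangle
    measured from the elbow.
    """
    # Projection of the elbow onto the line, measured from the shoulder.
    x = (reach**2 + len_b1**2 - len_b2**2) / (2 * reach)
    return math.sqrt(max(len_b1**2 - x**2, 0.0))


def bone_lengths_for_elbow_distance(reach, elbow_dist, split=0.5):
    """Bone lengths that put the elbow `elbow_dist` away from the line.

    `split` is the fraction of the line at which the elbow is projected
    (a hypothetical parameter of this sketch).
    """
    x = reach * split
    return math.hypot(x, elbow_dist), math.hypot(reach - x, elbow_dist)


# With the target six units away and a desired elbow distance of four
# units, both bones come out with the same length.
b1e, b2e = bone_lengths_for_elbow_distance(reach=6.0, elbow_dist=4.0)
```

Feeding the desired distance from a linear, exponential or path-based function of the stretch amount, as the paragraph above suggests, only changes how `elbow_dist` is chosen before the lengths are solved.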

Although FIGS. 6A and 6B illustrate the rotation about the base joint J1 occurring first followed by the stretching of the bones, the sequence is merely for the purpose of explanation. In practice, the operation of FIG. 6B may occur before the operation of FIG. 6A, or both operations may occur simultaneously.

FIG. 6C is a conceptual diagram illustrating first virtual joints K11, K12, K21, K22 associated with base joints J1, J2, according to one embodiment. The first virtual joints K11, K12 are children joints of the base joint J1, and first virtual joints K21, K22 are children joints of the base joint J2, as shown by the dashed lines of FIG. 6C. The interpolator 430 computes the properties (e.g., positions) of the first virtual joints K11, K12 by interpolating corresponding properties of the base joints J1, J2. Similarly, the properties of the first virtual joints K21, K22 are determined by interpolating corresponding properties of the base joint J2 and the hand EE.

FIG. 6D is a conceptual diagram illustrating second virtual joints S11, S12A, S12B, S21A, S21B, S22 offset from the first virtual joints K11, K12, K21, K22, according to one embodiment. The second virtual joints S11, S12A, S12B, S21A, S21B, S22 are offset from corresponding first virtual joints K11, K12, K21, K22 by offset vectors OV11, OV12A, OV12B, OV21A, OV21B, OV22, respectively. As illustrated in FIG. 6D, more than one second virtual joint (e.g., S12A, S12B, S21A, S21B) may be associated with a single first virtual joint (e.g., K12, K21), while some first virtual joints (e.g., K11, K22) may be associated with only a single second virtual joint (e.g., S11, S22). The second forward kinematics module 446 computes the second virtual joints information 448 that includes, for example, properties such as positions and orientations of the second virtual joints S11, S12A, S12B, S21A, S21B, S22 by performing forward kinematics on the first virtual joints K11, K12, K21, K22 using the offset vectors OV11, OV12A, OV12B, OV21A, OV21B, OV22.
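The forward kinematics step that derives second virtual joints from a first virtual joint can be sketched in Python as follows. The sketch is simplified to two dimensions and treats each offset vector as a pure translation expressed in the local frame of the first virtual joint; both simplifications, along with the function name `second_virtual_joints`, are assumptions, since the offset vectors in the description may also carry rotations and scales.

```python
import math


def second_virtual_joints(first_pos, first_angle, offsets):
    """Positions of second virtual joints attached to one first virtual joint.

    `first_pos` is the (x, y) position of the first virtual joint and
    `first_angle` its orientation in radians. Each offset is an (x, y)
    translation in the joint's local frame, rotated into world space and
    added to the joint position.
    """
    c, s = math.cos(first_angle), math.sin(first_angle)
    return [
        (first_pos[0] + c * ox - s * oy, first_pos[1] + s * ox + c * oy)
        for ox, oy in offsets
    ]


# One first virtual joint (like K12) carrying two second virtual joints
# (like S12A and S12B) on opposite sides of the bone.
pair = second_virtual_joints((1.0, 0.0), math.pi / 2, [(0.0, 1.0), (0.0, -1.0)])
```

Passing a list of offsets per first virtual joint mirrors the one-to-many association shown in FIG. 6D.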

After the second virtual joints S11, S12A, S12B, S21A, S21B, S22 are determined, a surface of the arm SA may be defined by polygons whose locations and other properties are determined from properties of one or more of the second virtual joints S11, S12A, S12B, S21A, S21B, S22. The vertices of a polygon may be bound to one or more of base joints, first virtual joints and second virtual joints. In one or more embodiments, locations and other properties of the polygons (e.g., triangular meshes) and their vertices are determined and updated by the matrix processor 510 so that the polygons and/or vertices maintain predefined spatial relationships with the second virtual joints S11, S12A, S12B, S21A, S21B, S22. For example, the translational movements of a polygon or its vertices are determined so that the distance from a corresponding second virtual joint is maintained. The operations to determine the properties of the polygons or vertices may be performed using, for example, a linear blend skinning method well known in the art.
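For reference, linear blend skinning computes each deformed vertex as a weighted sum of that vertex transformed by every joint that influences it. The sketch below shows the standard formula in two dimensions with 2x3 affine matrices; the matrix layout and function names are assumptions chosen for brevity and are not taken from the description.

```python
def transform_point(m, p):
    """Apply a 2x3 affine matrix ((a, b, tx), (c, d, ty)) to point p."""
    return (m[0][0] * p[0] + m[0][1] * p[1] + m[0][2],
            m[1][0] * p[0] + m[1][1] * p[1] + m[1][2])

def linear_blend_skin(vertex, influences):
    """Deform one vertex by linear blend skinning.

    influences: list of (weight, matrix) pairs, one per joint the vertex is
    bound to; each matrix maps the bind pose to the current pose, and the
    weights are expected to sum to 1.
    """
    x = y = 0.0
    for weight, matrix in influences:
        px, py = transform_point(matrix, vertex)
        x += weight * px
        y += weight * py
    return (x, y)
```

A vertex bound equally to a joint that stays put and a joint that moves two units to the right would, under this formula, move one unit to the right.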

Although the example of FIGS. 6A through 6D uses an arm with a single base joint J2 between a parent joint J1 and the end effector EE, the same principle of operations may be applied to other body parts of a character with multiple children base joints between an end effector and a parent joint.

FIG. 7 is a flowchart illustrating a process of simulating a stretchable body part of a character, according to one embodiment. A pose of a body part including at least one base joint, bones connected via the base joint, and an end effector coupled to one of the bones is received 702 at the stretching IK engine 416. The pose may be represented as a collection of properties (e.g., relative translations, rotations and scales of the joints) of base joints, and the lengths of bones connected to the joints. In one or more embodiments, the pose is generated by performing forward kinematics operations as constrained by the character movement information 434.

An end effector position is also received 704 by the stretching IK engine 416. The end effector position is where an end effector connected to one or more bones is to be located as the result of the action. The end effector position may be determined as a result of operations 312 of the game system 300 that are based on user interactions or other events.

After receiving the pose of the character, the stretching IK engine 416 performs 706, among other operations, inverse kinematics operations to determine second virtual joints information as an updated pose by at least changing a length of a bone in the character. For example, the base joints information may be obtained by performing the inverse kinematics. Then, the first virtual joints information is generated by performing interpolation on the base joints information, followed by performing forward kinematics on the first virtual joints to compute the second virtual joints information as the updated pose.

Based on the updated pose as represented by the second virtual joints information, transformation matrices 450 for moving the prior second virtual joints to the updated second virtual joints are determined.
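A common way to obtain a matrix that moves a joint from its prior pose to its updated pose is to compose the updated transform with the inverse of the prior transform. The sketch below does this for two-dimensional rigid transforms (rotation plus translation) stored as 2x3 matrices. It is an illustrative assumption about how such matrices could be formed; the helper names are hypothetical and scaling is ignored.

```python
import math

def rigid(angle, tx, ty):
    """Build a 2x3 rigid transform: rotate by angle, then translate."""
    c, s = math.cos(angle), math.sin(angle)
    return ((c, -s, tx), (s, c, ty))

def invert_rigid(m):
    """Invert a rigid transform: the inverse rotation is the transpose."""
    (a, b, tx), (c, d, ty) = m
    return ((a, c, -(a * tx + c * ty)),
            (b, d, -(b * tx + d * ty)))

def compose(m, n):
    """Return the transform that applies n first and then m."""
    (a, b, tx), (c, d, ty) = m
    (e, f, ux), (g, h, uy) = n
    return ((a * e + b * g, a * f + b * h, a * ux + b * uy + tx),
            (c * e + d * g, c * f + d * h, c * ux + d * uy + ty))

def apply(m, p):
    """Apply a 2x3 transform to point p."""
    return (m[0][0] * p[0] + m[0][1] * p[1] + m[0][2],
            m[1][0] * p[0] + m[1][1] * p[1] + m[1][2])

def delta_transform(prior, updated):
    """Matrix that carries points attached to the prior pose to the updated pose."""
    return compose(updated, invert_rigid(prior))
```

Applying `delta_transform(prior, updated)` to the prior joint position gives the updated joint position, and the same matrix can be applied to vertices bound to that joint.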

After performing the inverse kinematics operation, polygon information of the body part for rendering the surface of the stretchable/contractable body part for displaying an image frame is generated 708. That is, from the transformation matrices 450, properties of polygons constituting the surface of the body part in an updated pose are computed from those of the polygons of the prior pose.

The process of FIG. 7 is merely illustrative. The steps and sequences of FIG. 7 may be modified. For example, the sequence of receiving 702 the pose of the body part and receiving 704 the end effector position may be reversed or may be performed simultaneously. Also, further processes may be performed between the steps illustrated in FIG. 7.

Although the above embodiments are described with respect to simulating a pose of a character in a computer game, the same principle can be applied to other fields such as creating scenes in movies, rendering avatars for communication, and emulating real-world interactions of objects with stretchable members such as robots. Such scenes may be stored in a non-transitory computer readable storage medium such as a DVD, a CD-ROM or a flash memory drive. Such scenes may also be transmitted over a network for access.

In one or more embodiments, joints information 418 generated from the rigid IK engine 414 is blended with base joints information 422 generated by the stretching IK engine 416 to generate blended joints information using respective weights. The weights may be adjusted for different frames so that smooth transitions are made between the poses generated using joints information 418 and base joints information 422.
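The weighted blending can be sketched as a per-joint linear mix of the two results, with the weight ramped over successive frames. The example below blends joint positions only; the function name, the dictionary layout, and the use of a single scalar weight are assumptions for illustration, and blending of rotations would need a separate treatment.

```python
def blend_joints(rigid_joints, stretch_joints, stretch_weight):
    """Blend joint positions from the rigid IK and stretching IK results.

    rigid_joints, stretch_joints: name -> (x, y) position, same keys in both.
    stretch_weight: 0.0 uses only the rigid result, 1.0 only the stretched
                    result; values outside [0, 1] are clamped.
    """
    w = min(1.0, max(0.0, stretch_weight))
    return {name: tuple((1.0 - w) * r + w * s
                        for r, s in zip(rigid_joints[name], stretch_joints[name]))
            for name in rigid_joints}
```

Increasing `stretch_weight` a little each frame (for example, from 0.0 to 1.0 over ten frames) would move the pose gradually from the rigid result to the stretched result.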

While particular embodiments and applications have been illustrated and described, it is to be understood that the invention is not limited to the precise construction and components disclosed herein and that various modifications, changes and variations which will be apparent to those skilled in the art may be made in the arrangement, operation and details of the method and apparatus disclosed herein without departing from the spirit and scope of the present disclosure.

Claims

- A method for generating a character in a computer game, comprising: receiving a pose of a body part, the body part including at least one base joint, bones connected via the at least one base joint, and an end effector coupled to one of the bones; receiving an end effector position where the end effector of the body part is to be placed in an updated pose; performing inverse kinematics operation to determine the updated pose of the body part by at least changing a length of one of the bones to place the end effector at the end effector position responsive to receiving the pose of the body part and the end effector position; and generating polygon information of the body part based on the updated pose for displaying a display frame including a character with the body part, responsive to performing the inverse kinematics.

- The method of claim 1, further comprising determining properties of a plurality of first virtual joints that are children joints of the at least one base joint by performing interpolation joint information of two or more base joints.

- The method of claim 2, further comprising determining properties of a plurality of second virtual joints that are children joints of the first virtual joints by performing forward kinematic operations on the first virtual joints using the pose of the body part, wherein polygons corresponding at least a portion of a surface of the body part are bound to the second virtual joints.

- The method of claim 3, wherein relationships between a subset of the second joints and an associated first virtual joints are defined by offset vectors.

- The method of claim 1, further comprising: receiving character movement information of the character; and performing forward kinematics operations as constrained by the character movement information.

- The method of claim 1, further comprising: receiving an enablement signal indicating enabling of the changing of the length of one of the bones.

- The method of claim 1, wherein the updated pose indicates at least a translation movement and a rotational movement each of the bones in a matrix form.

- The method of claim 1, wherein translational movements of two or more of the bones are changed by the inverse kinematics operation.

- The method of claim 1, performing the inverse kinematics operation comprises: rotating a first bone relative to a first joint to move a second bone and a second joint between the first bone and the second bone so that the end effector is placed on a straight line between the first joint and the end effector position; and extending or contracting the first bone and extending or contracting the second bone so that a distance between the second joint and the straight line satisfies a predetermined condition and the end effector is placed at the end effector position.

- A non-transitory computer-readable storage medium storing instructions thereon, the instructions when executed by one or more processors cause the one or more processors to: receive a pose of a body part, the body part including at least one base joint, bones connected via the at least one base joint, and an end effector coupled to one of the bones; receive an end effector position where the end effector of the body part is to be placed in an updated pose; perform inverse kinematics operation to determine the updated pose of the body part by at least changing a length of one of the bones to place the end effector at the end effector position responsive to receiving the pose of the body part and the end effector position; and generate polygon information of the body part based on the updated pose for displaying a display frame including a character with the body part, responsive to performing the inverse kinematics.

- The computer-readable storage medium of claim 10, further comprising instructions that cause the one or more processors to: determine properties of a plurality of first virtual joints that are children joints of the at least one base joint by performing interpolation joint information of two or more base joints.

- The computer-readable storage medium of claim 11, further comprising instructions that cause the one or more processors to: determine properties of a plurality of second virtual joints that are children joints of the first virtual joints by performing forward kinematic operations on the first virtual joints using the pose of the body part, wherein polygons corresponding at least a portion of a surface of the body part are bound to the second virtual joints.

- The computer-readable storage medium of claim 12, wherein relationships between a subset of the second joints and an associated first virtual joints are defined by offset vectors.

- The computer-readable storage medium of claim 11, further comprising instructions that cause the one or more processors to: receive character movement information of the character; and perform forward kinematics operations as constrained by the character movement information.

- The computer-readable storage medium of claim 10, further comprising instructions that cause the one or more processors to: receive an enablement signal indicating enabling of the changing of the length of one of the bones.

- The computer-readable storage medium of claim 10, wherein the updated pose indicates at least a translational movement and a rotational movement of each of the bones in a matrix form.

- The computer-readable storage medium of claim 10, wherein translational movements of two or more of the bones are changed by the inverse kinematics operation.

- The computer-readable storage medium of claim 10, wherein instructions to perform the inverse kinematics operation comprises instructions to: rotate a first bone relative to a first joint to move a second bone and a second joint between the first bone and the second bone so that the end effector is placed on a straight line between the first joint and the end effector position; and extend or contracting the first bone and extending or contracting the second bone so that a distance between the second joint and the straight line satisfies a predetermined condition and the end effector is placed at the end effector position.

- A non-transitory computer-readable storage medium storing a series of image frames generated by: receiving a pose of a body part, the body part including at least one base joint, bones connected via the at least one base joint, and an end effector coupled to one of the bones; receiving an end effector position where the end effector of the body part is to be placed in an updated pose; performing inverse kinematics operation to determine the updated pose of the body part by at least changing a length of one of the bones to place the end effector at the end effector position responsive to receiving the pose of the body part and the end effector position; and generating polygon information of the body part based on the updated pose for displaying a display frame including a character with the body part, responsive to performing the inverse kinematics.

- The computer-readable storage medium of claim 19, wherein the image frames are generated further by: determining properties of a plurality of first virtual joints that are children joints of the at least one base joint by performing interpolation joint information of two or more base joints, and determining properties of a plurality of second virtual joints that are children joints of the first virtual joints by performing forward kinematic operations on the first virtual joints using the pose of the body part, wherein polygons corresponding at least a portion of a surface of the body part are bound to the second virtual joints.
