U.S. Pat. No. 11,013,984

GAME SYSTEM, GAME PROCESSING METHOD, STORAGE MEDIUM HAVING STORED THEREIN GAME PROGRAM, GAME APPARATUS, AND GAME CONTROLLER

Assignee: NINTENDO CO., LTD.; THE POKÉMON COMPANY

Issue Date: September 30, 2019

Illustrative Figure

Abstract

Based on first data transmitted from a game controller, a game apparatus executes game processing for catching a predetermined game character and transmits, to the game controller, second data corresponding to the game character as a target to be caught. Based on the transmitted second data, the game controller outputs a sound corresponding to the caught game character.

Description

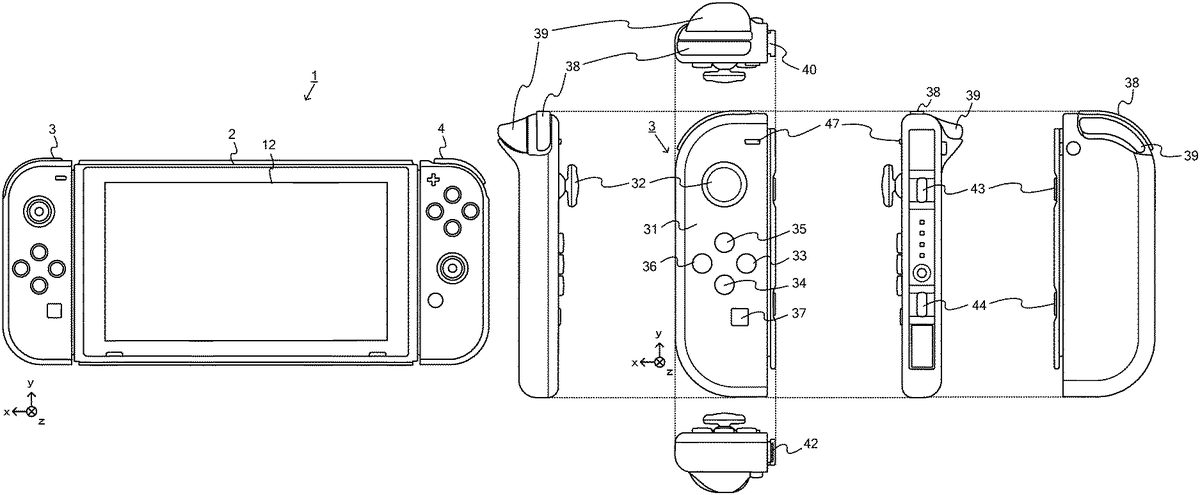

DETAILED DESCRIPTION OF NON-LIMITING EXAMPLE EMBODIMENTS

Before a spherical controller according to an exemplary embodiment is described, a description is given of a game system where the spherical controller is used. An example of a game system 1 according to the exemplary embodiment includes a main body apparatus (an information processing apparatus; which functions as a game apparatus main body in the exemplary embodiment) 2, a left controller 3, and a right controller 4. Each of the left controller 3 and the right controller 4 is attachable to and detachable from the main body apparatus 2. That is, the game system 1 can be used as a unified apparatus obtained by attaching each of the left controller 3 and the right controller 4 to the main body apparatus 2. Further, in the game system 1, the main body apparatus 2, the left controller 3, and the right controller 4 can also be used as separate bodies (see FIG. 2). Hereinafter, first, the hardware configuration of the game system 1 according to the exemplary embodiment is described, and then, the control of the game system 1 according to the exemplary embodiment is described.

FIG. 1 is a diagram showing an example of the state where the left controller 3 and the right controller 4 are attached to the main body apparatus 2. As shown in FIG. 1, each of the left controller 3 and the right controller 4 is attached to and unified with the main body apparatus 2. The main body apparatus 2 is an apparatus for performing various processes (e.g., game processing) in the game system 1. The main body apparatus 2 includes a display 12. Each of the left controller 3 and the right controller 4 is an apparatus including operation sections with which a user provides inputs.

FIG. 2 is a diagram showing an example of the state where each of the left controller 3 and the right controller 4 is detached from the main body apparatus 2. As shown in FIGS. 1 and 2, the left controller 3 and the right controller 4 are attachable to and detachable from the main body apparatus 2. It should be noted that hereinafter, the left controller 3 and the right controller 4 will occasionally be referred to collectively as a “controller”.

FIG. 3 is six orthogonal views showing an example of the main body apparatus 2. As shown in FIG. 3, the main body apparatus 2 includes an approximately plate-shaped housing 11. In the exemplary embodiment, a main surface (in other words, a surface on a front side, i.e., a surface on which the display 12 is provided) of the housing 11 has a generally rectangular shape.

It should be noted that the shape and the size of the housing 11 are optional. As an example, the housing 11 may be of a portable size. Further, the main body apparatus 2 alone or the unified apparatus obtained by attaching the left controller 3 and the right controller 4 to the main body apparatus 2 may function as a mobile apparatus. The main body apparatus 2 or the unified apparatus may function as a handheld apparatus or a portable apparatus.

As shown in FIG. 3, the main body apparatus 2 includes the display 12, which is provided on the main surface of the housing 11. The display 12 displays an image generated by the main body apparatus 2. In the exemplary embodiment, the display 12 is a liquid crystal display device (LCD). The display 12, however, may be a display device of any type.

Further, the main body apparatus 2 includes a touch panel 13 on a screen of the display 12. In the exemplary embodiment, the touch panel 13 is of a type that allows a multi-touch input (e.g., a capacitive type). The touch panel 13, however, may be of any type. For example, the touch panel 13 may be of a type that allows a single-touch input (e.g., a resistive type).

The main body apparatus 2 includes speakers (i.e., speakers 88 shown in FIG. 6) within the housing 11. As shown in FIG. 3, speaker holes 11a and 11b are formed on the main surface of the housing 11. Then, sounds output from the speakers 88 are output through the speaker holes 11a and 11b.

Further, the main body apparatus 2 includes a left terminal 17, which is a terminal for the main body apparatus 2 to perform wired communication with the left controller 3, and a right terminal 21, which is a terminal for the main body apparatus 2 to perform wired communication with the right controller 4.

As shown in FIG. 3, the main body apparatus 2 includes a slot 23. The slot 23 is provided on an upper side surface of the housing 11. The slot 23 is so shaped as to allow a predetermined type of storage medium to be attached to the slot 23. The predetermined type of storage medium is, for example, a dedicated storage medium (e.g., a dedicated memory card) for the game system 1 and an information processing apparatus of the same type as the game system 1. The predetermined type of storage medium is used to store, for example, data (e.g., saved data of an application or the like) used by the main body apparatus 2 and/or a program (e.g., a program for an application or the like) executed by the main body apparatus 2. Further, the main body apparatus 2 includes a power button 28.

The main body apparatus 2 includes a lower terminal 27. The lower terminal 27 is a terminal for the main body apparatus 2 to communicate with a cradle. In the exemplary embodiment, the lower terminal 27 is a USB connector (more specifically, a female connector). Further, when the unified apparatus or the main body apparatus 2 alone is mounted on the cradle, the game system 1 can display on a stationary monitor an image generated by and output from the main body apparatus 2. Further, in the exemplary embodiment, the cradle has the function of charging the unified apparatus or the main body apparatus 2 alone mounted on the cradle. Further, the cradle has the function of a hub device (specifically, a USB hub).

FIG. 4 is six orthogonal views showing an example of the left controller 3. As shown in FIG. 4, the left controller 3 includes a housing 31. In the exemplary embodiment, the housing 31 has a vertically long shape, i.e., is shaped to be long in an up-down direction (i.e., a y-axis direction shown in FIGS. 1 and 4). In the state where the left controller 3 is detached from the main body apparatus 2, the left controller 3 can also be held in the orientation in which the left controller 3 is vertically long. The housing 31 has such a shape and a size that when held in the orientation in which the housing 31 is vertically long, the housing 31 can be held with one hand, particularly the left hand. Further, the left controller 3 can also be held in the orientation in which the left controller 3 is horizontally long. When held in the orientation in which the left controller 3 is horizontally long, the left controller 3 may be held with both hands.

The left controller 3 includes an analog stick 32. As shown in FIG. 4, the analog stick 32 is provided on a main surface of the housing 31. The analog stick 32 can be used as a direction input section with which a direction can be input. The user tilts the analog stick 32 and thereby can input a direction corresponding to the direction of the tilt (and input a magnitude corresponding to the angle of the tilt). It should be noted that the left controller 3 may include a directional pad, a slide stick that allows a slide input, or the like as the direction input section, instead of the analog stick. Further, in the exemplary embodiment, it is possible to provide an input by pressing the analog stick 32.
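As an illustration only, the mapping from a tilt of the analog stick 32 to a direction and a magnitude might be sketched as follows. The normalized tilt components `x` and `y`, the function name `stick_input`, and the clamping of the magnitude to 1.0 are assumptions made for this sketch and are not taken from the exemplary embodiment.

```python
import math
from typing import Tuple


def stick_input(x: float, y: float) -> Tuple[float, float]:
    """Return (direction in degrees, magnitude in 0..1) for a stick tilt.

    x and y are hypothetical normalized tilt components in [-1, 1],
    with +x to the right and +y upward on the main surface.
    """
    # Magnitude grows with the tilt angle; clamp so diagonal tilts stay <= 1.
    magnitude = min(1.0, math.hypot(x, y))
    # Direction measured counterclockwise from +x, wrapped into [0, 360).
    direction = math.degrees(math.atan2(y, x)) % 360.0
    return direction, magnitude
```

A full upward tilt, `stick_input(0.0, 1.0)`, gives a direction of about 90 degrees with magnitude 1.0, and a centered stick gives magnitude 0.0.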

The left controller 3 includes various operation buttons. The left controller 3 includes four operation buttons 33 to 36 (specifically, a right direction button 33, a down direction button 34, an up direction button 35, and a left direction button 36) on the main surface of the housing 31. Further, the left controller 3 includes a record button 37 and a “−” (minus) button 47. The left controller 3 includes a first L-button 38 and a ZL-button 39 in an upper left portion of a side surface of the housing 31. Further, the left controller 3 includes a second L-button 43 and a second R-button 44, on the side surface of the housing 31 on which the left controller 3 is attached to the main body apparatus 2. These operation buttons are used to give instructions depending on various programs (e.g., an OS program and an application program) executed by the main body apparatus 2.

Further, the left controller 3 includes a terminal 42 for the left controller 3 to perform wired communication with the main body apparatus 2.

FIG. 5 is six orthogonal views showing an example of the right controller 4. As shown in FIG. 5, the right controller 4 includes a housing 51. In the exemplary embodiment, the housing 51 has a vertically long shape, i.e., is shaped to be long in the up-down direction. In the state where the right controller 4 is detached from the main body apparatus 2, the right controller 4 can also be held in the orientation in which the right controller 4 is vertically long. The housing 51 has such a shape and a size that when held in the orientation in which the housing 51 is vertically long, the housing 51 can be held with one hand, particularly the right hand. Further, the right controller 4 can also be held in the orientation in which the right controller 4 is horizontally long. When held in the orientation in which the right controller 4 is horizontally long, the right controller 4 may be held with both hands.

Similarly to the left controller 3, the right controller 4 includes an analog stick 52 as a direction input section. In the exemplary embodiment, the analog stick 52 has the same configuration as that of the analog stick 32 of the left controller 3. Further, the right controller 4 may include a directional pad, a slide stick that allows a slide input, or the like, instead of the analog stick. Further, similarly to the left controller 3, the right controller 4 includes four operation buttons 53 to 56 (specifically, an A-button 53, a B-button 54, an X-button 55, and a Y-button 56) on a main surface of the housing 51. Further, the right controller 4 includes a “+” (plus) button 57 and a home button 58. Further, the right controller 4 includes a first R-button 60 and a ZR-button 61 in an upper right portion of a side surface of the housing 51. Further, similarly to the left controller 3, the right controller 4 includes a second L-button 65 and a second R-button 66.

Further, the right controller 4 includes a terminal 64 for the right controller 4 to perform wired communication with the main body apparatus 2.

FIG. 6 is a block diagram showing an example of the internal configuration of the main body apparatus 2. The main body apparatus 2 includes components 81 to 91, 97, and 98 shown in FIG. 6 in addition to the components shown in FIG. 3. Some of the components 81 to 91, 97, and 98 may be mounted as electronic components on an electronic circuit board and accommodated in the housing 11.

The main body apparatus 2 includes a processor 81. The processor 81 is an information processing section for executing various types of information processing to be executed by the main body apparatus 2. For example, the processor 81 may be composed only of a CPU (Central Processing Unit), or may be composed of a SoC (System-on-a-chip) having a plurality of functions such as a CPU function and a GPU (Graphics Processing Unit) function. The processor 81 executes an information processing program (e.g., a game program) stored in a storage section (specifically, an internal storage medium such as a flash memory 84, an external storage medium attached to the slot 23, or the like), thereby performing the various types of information processing.

The main body apparatus 2 includes a flash memory 84 and a DRAM (Dynamic Random Access Memory) 85 as examples of internal storage media built into the main body apparatus 2. The flash memory 84 and the DRAM 85 are connected to the processor 81. The flash memory 84 is a memory mainly used to store various data (or programs) to be saved in the main body apparatus 2. The DRAM 85 is a memory used to temporarily store various data used for information processing.

The main body apparatus 2 includes a slot interface (hereinafter abbreviated as “I/F”) 91. The slot I/F 91 is connected to the processor 81. The slot I/F 91 is connected to the slot 23, and in accordance with an instruction from the processor 81, reads and writes data from and to the predetermined type of storage medium (e.g., a dedicated memory card) attached to the slot 23.

The processor 81 appropriately reads and writes data from and to the flash memory 84, the DRAM 85, and each of the above storage media, thereby performing the above information processing.

The main body apparatus 2 includes a network communication section 82. The network communication section 82 is connected to the processor 81. The network communication section 82 communicates (specifically, through wireless communication) with an external apparatus via a network. In the exemplary embodiment, as a first communication form, the network communication section 82 connects to a wireless LAN and communicates with an external apparatus, using a method compliant with the Wi-Fi standard. Further, as a second communication form, the network communication section 82 wirelessly communicates with another main body apparatus 2 of the same type, using a predetermined communication method (e.g., communication based on a unique protocol or infrared light communication). It should be noted that the wireless communication in the above second communication form achieves the function of enabling so-called “local communication” in which the main body apparatus 2 can wirelessly communicate with another main body apparatus 2 placed in a closed local network area, and the plurality of main body apparatuses 2 directly communicate with each other to transmit and receive data.

The main body apparatus 2 includes a controller communication section 83. The controller communication section 83 is connected to the processor 81. The controller communication section 83 wirelessly communicates with the left controller 3 and/or the right controller 4. The communication method between the main body apparatus 2 and the left controller 3 and the right controller 4 is optional. In the exemplary embodiment, the controller communication section 83 performs communication compliant with the Bluetooth (registered trademark) standard with the left controller 3 and with the right controller 4.

The processor 81 is connected to the left terminal 17, the right terminal 21, and the lower terminal 27. When performing wired communication with the left controller 3, the processor 81 transmits data to the left controller 3 via the left terminal 17 and also receives operation data from the left controller 3 via the left terminal 17. Further, when performing wired communication with the right controller 4, the processor 81 transmits data to the right controller 4 via the right terminal 21 and also receives operation data from the right controller 4 via the right terminal 21. Further, when communicating with the cradle, the processor 81 transmits data to the cradle via the lower terminal 27. As described above, in the exemplary embodiment, the main body apparatus 2 can perform both wired communication and wireless communication with each of the left controller 3 and the right controller 4. Further, when the unified apparatus obtained by attaching the left controller 3 and the right controller 4 to the main body apparatus 2 or the main body apparatus 2 alone is attached to the cradle, the main body apparatus 2 can output data (e.g., image data or sound data) to the stationary monitor or the like via the cradle.

Here, the main body apparatus 2 can communicate with a plurality of left controllers 3 simultaneously (in other words, in parallel). Further, the main body apparatus 2 can communicate with a plurality of right controllers 4 simultaneously (in other words, in parallel). Thus, a plurality of users can simultaneously provide inputs to the main body apparatus 2, each using a set of the left controller 3 and the right controller 4. As an example, a first user can provide an input to the main body apparatus 2 using a first set of the left controller 3 and the right controller 4, and simultaneously, a second user can provide an input to the main body apparatus 2 using a second set of the left controller 3 and the right controller 4.

The main body apparatus 2 includes a touch panel controller 86, which is a circuit for controlling the touch panel 13. The touch panel controller 86 is connected between the touch panel 13 and the processor 81. Based on a signal from the touch panel 13, the touch panel controller 86 generates, for example, data indicating the position where a touch input is provided. Then, the touch panel controller 86 outputs the data to the processor 81.

Further, the display 12 is connected to the processor 81. The processor 81 displays a generated image (e.g., an image generated by executing the above information processing) and/or an externally acquired image on the display 12.

The main body apparatus 2 includes a codec circuit 87 and speakers (specifically, a left speaker and a right speaker) 88. The codec circuit 87 is connected to the speakers 88 and a sound input/output terminal 25 and also connected to the processor 81. The codec circuit 87 is a circuit for controlling the input and output of sound data to and from the speakers 88 and the sound input/output terminal 25.

Further, the main body apparatus 2 includes an acceleration sensor 89. In the exemplary embodiment, the acceleration sensor 89 detects the magnitudes of accelerations along predetermined three axial (e.g., xyz axes shown in FIG. 1) directions. It should be noted that the acceleration sensor 89 may detect an acceleration along one axial direction or accelerations along two axial directions.

Further, the main body apparatus 2 includes an angular velocity sensor 90. In the exemplary embodiment, the angular velocity sensor 90 detects angular velocities about predetermined three axes (e.g., the xyz axes shown in FIG. 1). It should be noted that the angular velocity sensor 90 may detect an angular velocity about one axis or angular velocities about two axes.

The acceleration sensor 89 and the angular velocity sensor 90 are connected to the processor 81, and the detection results of the acceleration sensor 89 and the angular velocity sensor 90 are output to the processor 81. Based on the detection results of the acceleration sensor 89 and the angular velocity sensor 90, the processor 81 can calculate information regarding the motion and/or the orientation of the main body apparatus 2.
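One common way to obtain such information, shown here only as a sketch and not as the method of the exemplary embodiment, is to estimate tilt from the measured gravity vector and to integrate an angular velocity over time. The axis convention, the units, and the function names below are all assumptions.

```python
import math
from typing import Tuple


def tilt_from_acceleration(ax: float, ay: float, az: float) -> Tuple[float, float]:
    """Estimate (roll, pitch) in degrees from one acceleration reading.

    Assumes the device is at rest, so the only measured acceleration is
    gravity, and that the z axis reads +1 g when the device lies flat
    (a hypothetical convention chosen for this sketch).
    """
    roll = math.degrees(math.atan2(ay, az))
    pitch = math.degrees(math.atan2(-ax, math.hypot(ay, az)))
    return roll, pitch


def integrate_angular_velocity(angle_deg: float, rate_deg_per_s: float, dt_s: float) -> float:
    """Advance an angle about one axis by one angular velocity sample."""
    return angle_deg + rate_deg_per_s * dt_s
```

Integration alone accumulates drift, which is why practical systems usually combine both sensor types rather than rely on either one.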

The main body apparatus 2 includes a power control section 97 and a battery 98. The power control section 97 is connected to the battery 98 and the processor 81. Further, although not shown in FIG. 6, the power control section 97 is connected to components of the main body apparatus 2 (specifically, components that receive power supplied from the battery 98, the left terminal 17, and the right terminal 21). Based on a command from the processor 81, the power control section 97 controls the supply of power from the battery 98 to the above components.

Further, the battery 98 is connected to the lower terminal 27. When an external charging device (e.g., the cradle) is connected to the lower terminal 27, and power is supplied to the main body apparatus 2 via the lower terminal 27, the battery 98 is charged with the supplied power.

FIG. 7 is a block diagram showing examples of the internal configurations of the main body apparatus 2, the left controller 3, and the right controller 4. It should be noted that the details of the internal configuration of the main body apparatus 2 are shown in FIG. 6 and therefore are omitted in FIG. 7.

The left controller 3 includes a communication control section 101, which communicates with the main body apparatus 2. As shown in FIG. 7, the communication control section 101 is connected to components including the terminal 42. In the exemplary embodiment, the communication control section 101 can communicate with the main body apparatus 2 through both wired communication via the terminal 42 and wireless communication not via the terminal 42. The communication control section 101 controls the method for communication performed by the left controller 3 with the main body apparatus 2. That is, when the left controller 3 is attached to the main body apparatus 2, the communication control section 101 communicates with the main body apparatus 2 via the terminal 42. Further, when the left controller 3 is detached from the main body apparatus 2, the communication control section 101 wirelessly communicates with the main body apparatus 2 (specifically, the controller communication section 83). The wireless communication between the communication control section 101 and the controller communication section 83 is performed in accordance with the Bluetooth (registered trademark) standard, for example.

Further, the left controller 3 includes a memory 102 such as a flash memory. The communication control section 101 includes, for example, a microcomputer (or a microprocessor) and executes firmware stored in the memory 102, thereby performing various processes.

The left controller 3 includes buttons 103 (specifically, the buttons 33 to 39, 43, 44, and 47). Further, the left controller 3 includes the analog stick (“stick” in FIG. 7) 32. Each of the buttons 103 and the analog stick 32 outputs information regarding an operation performed on itself to the communication control section 101 repeatedly at appropriate timing.

The left controller 3 includes inertial sensors. Specifically, the left controller 3 includes an acceleration sensor 104. Further, the left controller 3 includes an angular velocity sensor 105. In the exemplary embodiment, the acceleration sensor 104 detects the magnitudes of accelerations along predetermined three axial (e.g., xyz axes shown in FIG. 4) directions. It should be noted that the acceleration sensor 104 may detect an acceleration along one axial direction or accelerations along two axial directions. In the exemplary embodiment, the angular velocity sensor 105 detects angular velocities about predetermined three axes (e.g., the xyz axes shown in FIG. 4). It should be noted that the angular velocity sensor 105 may detect an angular velocity about one axis or angular velocities about two axes. Each of the acceleration sensor 104 and the angular velocity sensor 105 is connected to the communication control section 101. Then, the detection results of the acceleration sensor 104 and the angular velocity sensor 105 are output to the communication control section 101 repeatedly at appropriate timing.

The communication control section 101 acquires information regarding an input (specifically, information regarding an operation or the detection result of the sensor) from each of input sections (specifically, the buttons 103, the analog stick 32, and the sensors 104 and 105). The communication control section 101 transmits operation data including the acquired information (or information obtained by performing predetermined processing on the acquired information) to the main body apparatus 2. It should be noted that the operation data is transmitted repeatedly, once every predetermined time. It should be noted that the interval at which the information regarding an input is transmitted from each of the input sections to the main body apparatus 2 may or may not be the same.
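The gathering of inputs into operation data can be pictured with the following minimal sketch. The field names, the integer widths, and the byte layout are hypothetical choices made for illustration; the specification does not define a format for the operation data.

```python
import struct
from dataclasses import dataclass
from typing import Tuple

# Hypothetical wire layout (little-endian, no padding): a 16-bit button
# bit field, two signed 16-bit stick axes, three acceleration axes, and
# three angular velocity axes.
_LAYOUT = struct.Struct("<H2h3h3h")


@dataclass(frozen=True)
class OperationData:
    buttons: int                           # one bit per button (assumed)
    stick: Tuple[int, int]                 # stick tilt (x, y)
    acceleration: Tuple[int, int, int]     # raw acceleration sensor counts
    angular_velocity: Tuple[int, int, int] # raw angular velocity counts

    def pack(self) -> bytes:
        """Serialize one sample for transmission."""
        return _LAYOUT.pack(self.buttons, *self.stick,
                            *self.acceleration, *self.angular_velocity)

    @classmethod
    def unpack(cls, payload: bytes) -> "OperationData":
        """Rebuild a sample on the receiving side."""
        v = _LAYOUT.unpack(payload)
        return cls(v[0], v[1:3], v[3:6], v[6:9])
```

In this sketch the controller side would call `pack()` once per transmission interval, and the receiving side would call `OperationData.unpack()` on each payload.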

The above operation data is transmitted to the main body apparatus 2, whereby the main body apparatus 2 can obtain inputs provided to the left controller 3. That is, the main body apparatus 2 can determine operations on the buttons 103 and the analog stick 32 based on the operation data. Further, the main body apparatus 2 can calculate information regarding the motion and/or the orientation of the left controller 3 based on the operation data (specifically, the detection results of the acceleration sensor 104 and the angular velocity sensor 105).

The left controller 3 includes a vibrator 107 for giving notification to the user by a vibration. In the exemplary embodiment, the vibrator 107 is controlled by a command from the main body apparatus 2. That is, if receiving the above command from the main body apparatus 2, the communication control section 101 drives the vibrator 107 in accordance with the received command. Here, the left controller 3 includes a codec section 106. If receiving the above command, the communication control section 101 outputs a control signal corresponding to the command to the codec section 106. The codec section 106 generates a driving signal for driving the vibrator 107 from the control signal from the communication control section 101 and outputs the driving signal to the vibrator 107. Consequently, the vibrator 107 operates.

More specifically, the vibrator 107 is a linear vibration motor. Unlike a regular motor that rotationally moves, the linear vibration motor is driven in a predetermined direction in accordance with an input voltage and therefore can be vibrated at an amplitude and a frequency corresponding to the waveform of the input voltage. In the exemplary embodiment, a vibration control signal transmitted from the main body apparatus 2 to the left controller 3 may be a digital signal representing the frequency and the amplitude every unit of time. In another exemplary embodiment, the main body apparatus 2 may transmit information indicating the waveform itself. The transmission of only the amplitude and the frequency, however, enables a reduction in the amount of communication data. Additionally, to further reduce the amount of data, only the differences between the numerical values of the amplitude and the frequency at that time and the previous values may be transmitted, instead of the numerical values. In this case, the codec section 106 converts a digital signal indicating the values of the amplitude and the frequency acquired from the communication control section 101 into the waveform of an analog voltage and inputs a voltage in accordance with the resulting waveform, thereby driving the vibrator 107. Thus, the main body apparatus 2 changes the amplitude and the frequency to be transmitted every unit of time and thereby can control the amplitude and the frequency at which the vibrator 107 is to be vibrated at that time. It should be noted that not only a single amplitude and a single frequency, but also two or more amplitudes and two or more frequencies may be transmitted from the main body apparatus 2 to the left controller 3. In this case, the codec section 106 combines waveforms indicated by the plurality of received amplitudes and frequencies and thereby can generate the waveform of a voltage for controlling the vibrator 107.
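The difference-based transmission and the combining of waveforms described above can be sketched as follows. The integer units, the initial previous value of (0, 0), the function names, and the use of summed sine waves to combine the components are assumptions made for this sketch; the specification does not state how the codec section 106 combines waveforms.

```python
import math
from typing import List, Sequence, Tuple

Sample = Tuple[int, int]  # (amplitude, frequency) in hypothetical integer units


def delta_encode(samples: Sequence[Sample]) -> List[Sample]:
    """Replace each sample with its difference from the previous sample."""
    deltas = []
    prev_amp, prev_freq = 0, 0  # assumed starting values shared by both sides
    for amp, freq in samples:
        deltas.append((amp - prev_amp, freq - prev_freq))
        prev_amp, prev_freq = amp, freq
    return deltas


def delta_decode(deltas: Sequence[Sample]) -> List[Sample]:
    """Recover absolute values by accumulating the received differences."""
    samples = []
    amp, freq = 0, 0
    for d_amp, d_freq in deltas:
        amp += d_amp
        freq += d_freq
        samples.append((amp, freq))
    return samples


def combined_waveform(components: Sequence[Tuple[float, float]], t: float) -> float:
    """Drive value at time t (seconds) as a sum of sine waves.

    Each component is (amplitude, frequency in Hz). Summation is only one
    plausible way to combine waveforms.
    """
    return sum(a * math.sin(2.0 * math.pi * f * t) for a, f in components)
```

When consecutive values change slowly, most differences are small or zero, which is what makes the difference form cheaper to transmit than the absolute values.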

The left controller 3 includes a power supply section 108. In the exemplary embodiment, the power supply section 108 includes a battery and a power control circuit. Although not shown in FIG. 7, the power control circuit is connected to the battery and also connected to components of the left controller 3 (specifically, components that receive power supplied from the battery).

As shown in FIG. 7, the right controller 4 includes a communication control section 111, which communicates with the main body apparatus 2. Further, the right controller 4 includes a memory 112, which is connected to the communication control section 111. The communication control section 111 is connected to components including the terminal 64. The communication control section 111 and the memory 112 have functions similar to those of the communication control section 101 and the memory 102, respectively, of the left controller 3. Thus, the communication control section 111 can communicate with the main body apparatus 2 through both wired communication via the terminal 64 and wireless communication not via the terminal 64 (specifically, communication compliant with the Bluetooth (registered trademark) standard). The communication control section 111 controls the method for communication performed by the right controller 4 with the main body apparatus 2.

The right controller 4 includes input sections similar to the input sections of the left controller 3. Specifically, the right controller 4 includes buttons 113, the analog stick 52, and inertial sensors (an acceleration sensor 114 and an angular velocity sensor 115). These input sections have functions similar to those of the input sections of the left controller 3 and operate similarly to the input sections of the left controller 3.

Further, the right controller 4 includes a vibrator 117 and a codec section 116. The vibrator 117 and the codec section 116 operate similarly to the vibrator 107 and the codec section 106, respectively, of the left controller 3. That is, in accordance with a command from the main body apparatus 2, the communication control section 111 causes the vibrator 117 to operate, using the codec section 116.

The right controller 4 includes a power supply section 118. The power supply section 118 has a function similar to that of the power supply section 108 of the left controller 3 and operates similarly to the power supply section 108.

As described above, in the game system 1 according to the exemplary embodiment, the left controller 3 and the right controller 4 are attachable to and detachable from the main body apparatus 2. Further, the unified apparatus obtained by attaching the left controller 3 and the right controller 4 to the main body apparatus 2 or the main body apparatus 2 alone is attached to the cradle and thereby can output an image (and a sound) to the stationary monitor 6.

Next, the spherical controller according to an example of the exemplary embodiment is described. In the exemplary embodiment, the spherical controller can be used, instead of the controllers 3 and 4, as an operation device for giving an instruction to the main body apparatus 2, and can also be used together with the controllers 3 and/or 4. The details of the spherical controller are described below.

FIG. 8 is a perspective view showing an example of the spherical controller. FIG. 8 is a top front perspective view of a spherical controller 200. As shown in FIG. 8, the spherical controller 200 includes a spherical controller main body portion 201 and a strap portion 202. For example, the user uses the spherical controller 200 in the state where the user holds the controller main body portion 201 while hanging the strap portion 202 from their arm.

Here, in the following description of the spherical controller200(specifically, the controller main body portion201), an up-down direction, a left-right direction, and a front-back direction are defined as follows (seeFIG. 8). That is, the direction from the center of the spherical controller main body portion201to a joystick212is a front direction (i.e., a negative z-axis direction shown inFIG. 8), and a direction opposite to the front direction is a back direction (i.e., a positive z-axis direction shown inFIG. 8). Further, a direction that matches the direction from the center of the controller main body portion201to the center of an operation surface213when viewed from the front-back direction is an up direction (i.e., a positive y-axis direction shown inFIG. 8), and a direction opposite to the up direction is a down direction (i.e., a negative y-axis direction shown inFIG. 8). Further, the direction from the center of the controller main body portion201to a position at the right end of the controller main body portion201as viewed from the front side is a right direction (i.e., a positive x-axis direction shown inFIG. 8), and a direction opposite to the right direction is a left direction (i.e., a negative x-axis direction shown inFIG. 8). It should be noted that the up-down direction, the left-right direction, and the front-back direction are orthogonal to each other.
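As a minimal illustration of the direction definitions above, the six directions can be written as unit vectors in the xyz coordinate system of FIG. 8. The table name `DIRECTIONS` and the helpers `dot` and `opposite` are hypothetical names introduced only for this sketch; they do not appear in the embodiment.

```python
# Direction names mapped to unit vectors in the controller's xyz frame,
# following the axis assignments described for FIG. 8.
DIRECTIONS = {
    "front": (0, 0, -1),   # negative z-axis direction
    "back": (0, 0, 1),     # positive z-axis direction
    "up": (0, 1, 0),       # positive y-axis direction
    "down": (0, -1, 0),    # negative y-axis direction
    "right": (1, 0, 0),    # positive x-axis direction
    "left": (-1, 0, 0),    # negative x-axis direction
}


def dot(a, b):
    """Dot product of two 3-component vectors."""
    return sum(x * y for x, y in zip(a, b))


def opposite(name):
    """Return the name of the direction opposite to `name` (hypothetical helper)."""
    target = tuple(-c for c in DIRECTIONS[name])
    for other, vec in DIRECTIONS.items():
        if vec == target:
            return other
    raise KeyError(name)
```

A zero dot product between, for example, the up and right vectors reflects the statement that the three direction pairs are orthogonal to each other.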

FIG. 9 shows six orthogonal views of an example of the controller main body portion. In FIG. 9, (a) is a front view, (b) is a right side view, (c) is a left side view, (d) is a plan view, (e) is a bottom view, and (f) is a rear view.

As shown inFIG. 9, the controller main body portion201has a spherical shape. Here, the “spherical shape” means a shape of which the external appearance looks roughly like a sphere. The spherical shape may be a true spherical shape, or may be a shape having a true spherical surface with a missing portion and/or a shape having a true spherical surface with a protruding portion. The spherical shape may be so shaped that a part of the surface of the spherical shape is not a spherical surface. Alternatively, the spherical shape may be a shape obtained by slightly distorting a true sphere.

As shown inFIG. 9, the controller main body portion201includes a spherical casing211. In the exemplary embodiment, the controller main body portion201(in other words, the casing211) is of such a size that the user can hold the controller main body portion201with one hand (seeFIG. 10). The diameter of the casing211is set in the range of 4 cm to 10 cm, for example.

In the exemplary embodiment, the casing211is so shaped that a part of a sphere is notched, and a part of the sphere has a hole. To provide an operation section (e.g., the joystick212and a restart button214) on the casing211or attach another component (e.g., the strap portion202) to the casing211, a hole is provided in the casing211.

Specifically, in the exemplary embodiment, a front end portion of the casing 211 is a flat surface (a front end surface) (see (b) to (e) of FIG. 9). It can be said that the casing 211 has a shape obtained by cutting a sphere along a flat surface including the front end surface, thereby cutting off a front end portion of the sphere. As shown in FIG. 10, an opening 211a is provided on the front end surface of the casing 211, and the joystick 212, which is an example of a direction input section, is provided, exposed through the opening 211a. In the exemplary embodiment, the shape of the opening 211a is a circle. In another exemplary embodiment, the opening 211a may have any shape. For example, the opening 211a may be polygonal (specifically, triangular, rectangular, pentagonal, or the like), elliptical, or star-shaped.

The joystick212includes a shaft portion that can be tilted in any direction by the user. Further, the joystick212is a joystick of a type that allows the operation of pushing down the shaft portion, in addition to the operation of tilting the shaft portion. It should be noted that in another exemplary embodiment, the joystick212may be an input device of another type. It should be noted that in the exemplary embodiment, the joystick212is used as an example of a direction input section provided in a game controller.

The joystick212is provided in the front end portion of the casing211. As shown inFIG. 9, the joystick212is provided such that a part of the joystick212(specifically, the shaft portion) is exposed through the opening211aof the casing211. Thus, the user can easily perform the operation of tilting the shaft portion. It should be noted that in another exemplary embodiment, the joystick212may be exposed through the opening211aprovided in the flat surface, and may not be provided protruding from the flat surface. The position of the joystick212is the center of the spherical controller main body portion201in the up-down direction and the left-right direction (see (a) ofFIG. 9). As described above, the user can perform a direction input operation for tilting the shaft portion using a game controller of which the outer shape is spherical. That is, according to the exemplary embodiment, it is possible to perform a more detailed operation using a game controller of which the outer shape is spherical.

Further, as shown in (d) of FIG. 9, the operation surface 213 is provided in an upper end portion of the casing 211. The position of the operation surface 213 is the center of the spherical controller main body portion 201 in the left-right direction and the front-back direction (see (d) of FIG. 9). In the exemplary embodiment, the operation surface 213 (in other words, the outer circumference of the operation surface 213) has a circular shape formed on the spherical surface of the casing 211. In another exemplary embodiment, however, the operation surface 213 may have any shape, and may be a rectangle or a triangle, for example. Although the details will be described later, the operation surface 213 is configured to be pressed from above.

In the exemplary embodiment, the operation surface213is formed in a unified manner with the surface of the casing211. The operation surface213is a part of an operation section (also referred to as an “operation button”) that allows a push-down operation. The operation surface213, however, can also be said to be a part of the casing211because the operation surface213is formed in a unified manner with a portion other than the operation surface213of the casing211. It should be noted that in the exemplary embodiment, the operation surface213can be deformed by being pushed down. An operation section including the operation surface213is input (i.e., an input is provided to the operation section) by pushing down the operation surface213.

With reference toFIG. 10, the positional relationship between the joystick212and the operation surface213is described below.FIG. 10is a diagram showing an example of the state where the user holds the controller main body portion. As shown inFIG. 10, the user can operate the joystick212with their thumb and operate the operation surface213with their index finger in the state where the user holds the controller main body portion201with one hand. It should be noted thatFIG. 10shows as an example a case where the user holds the controller main body portion201with their left hand. However, also in a case where the user holds the controller main body portion201with their right hand, similarly to the case where the user holds the controller main body portion201with their left hand, the user can operate the joystick212with their right thumb and operate the operation surface213with their right index finger.

As described above, in the exemplary embodiment, the operation surface213that allows a push-down operation is provided. Consequently, using a game controller of which the outer shape is spherical, the user can perform both a direction input operation using the joystick and a push-down operation on the operation surface213. Consequently, it is possible to perform various operations using a game controller of which the outer shape is spherical.

Further, the controller main body portion 201 includes the restart button 214. The restart button 214 is a button for giving an instruction to restart the spherical controller 200. As shown in (c) and (f) of FIG. 9, the restart button 214 is provided at a position on the left side of the back end of the casing 211. The position of the restart button 214 in the up-down direction is the center of the spherical controller main body portion 201. The position of the restart button 214 in the front-back direction is a position behind the center of the spherical controller main body portion 201. It should be noted that in another exemplary embodiment, the restart button 214 may be provided at any position. For example, the restart button 214 may be provided at any position on the back side of the casing 211.

Further, in the exemplary embodiment, a light-emitting section (i.e., a light-emitting section248shown inFIG. 11) is provided inside the casing211, and light is emitted from the opening211aof the casing211to outside the casing211. For example, if the light-emitting section248within the casing211emits light, light having passed through a light-guiding portion (not shown) is emitted from the opening211ato outside the casing211, and a portion around the joystick212appears to shine. As an example, the light-emitting section248includes three light-emitting elements (e.g., LEDs). The light-emitting elements emit beams of light of colors different from each other. Specifically, a first light-emitting element emits red light, a second light-emitting element emits green light, and a third light-emitting element emits blue light. Beams of light from the respective light-emitting elements of the light-emitting section248travel in the light-guiding portion and are emitted from the opening211a. At this time, the beams of light of the respective colors from the respective light-emitting elements are emitted in a mixed manner from the opening211a. Thus, light obtained by mixing the colors is emitted from the opening211a. This enables the spherical controller200to emit beams of light of various colors. It should be noted that in the exemplary embodiment, the light-emitting section248includes three light-emitting elements. In another exemplary embodiment, the light-emitting section248may include two or more light-emitting elements, or may include only one light-emitting element.
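The color mixing described above can be sketched as follows. This is only an illustration under stated assumptions: the drive levels are assumed to be normalized to the range 0.0 to 1.0, the result is expressed as an 8-bit RGB triple, and the names `LedDrive` and `mix_color` are hypothetical and not taken from the embodiment.

```python
from dataclasses import dataclass
from typing import Tuple


@dataclass(frozen=True)
class LedDrive:
    """Assumed normalized drive levels (0.0 to 1.0) for the three light-emitting elements."""
    red: float
    green: float
    blue: float


def mix_color(drive: LedDrive) -> Tuple[int, int, int]:
    """Return the mixed color seen at the opening as an 8-bit (R, G, B) triple."""
    def to_byte(level: float) -> int:
        # Clamp out-of-range drive levels before scaling to 0..255.
        clamped = min(1.0, max(0.0, level))
        return round(clamped * 255)

    return (to_byte(drive.red), to_byte(drive.green), to_byte(drive.blue))
```

For example, driving only the red and green elements at full level yields a yellow appearance in this model, while driving a single element corresponds to the single-element variation mentioned above.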

Further, in the exemplary embodiment, a vibration section271is provided within the casing211. The vibration section271is a vibrator that generates a vibration, thereby vibrating the casing211. For example, the vibration section271is a voice coil motor. That is, the vibration section271can generate a vibration in accordance with a signal input to the vibration section271itself and can also generate a sound in accordance with the signal. For example, when a signal having a frequency in the audible range is input to the vibration section271, the vibration section271generates a vibration and also generates a sound (i.e., an audible sound). For example, when a sound signal indicating the voice (or the cry) of a character that appears in a game is input to the vibration section271, the vibration section271outputs the voice (or the cry) of the character. Further, when a signal having a frequency outside the audible range is input to the vibration section271, the vibration section271generates a vibration. It should be noted that a signal to be input to the vibration section271can be said to be a signal indicating the waveform of a vibration that should be performed by the vibration section271, or can also be said to be a sound signal indicating the waveform of a sound that should be output from the vibration section271. A signal to be input to the vibration section271may be a vibration signal intended to cause the vibration section271to perform a vibration having a desired waveform, or may be a sound signal intended to cause the vibration section271to output a desired sound, or may be a signal intended to both cause the vibration section271to output a desired sound and cause the vibration section271to perform a vibration having a desired waveform. In the exemplary embodiment, sound data (catch target reproduction data and common reproduction data) for causing the vibration section271to output a sound is stored within the casing211. 
The sound data, however, includes at least a sound signal having a frequency in the audible range for causing the vibration section271to output a desired sound, and may include a vibration signal having a frequency outside the audible range for causing the vibration section271to perform a vibration having a desired waveform.
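The relationship between the frequency of the input signal and the resulting output can be sketched as below. The audible range limits of roughly 20 Hz to 20 kHz are a commonly cited approximation and are an assumption of this sketch, not values stated in the embodiment; the function name `outputs_for_frequency` is likewise hypothetical.

```python
# Commonly cited approximate limits of human hearing (assumed values).
AUDIBLE_MIN_HZ = 20.0
AUDIBLE_MAX_HZ = 20_000.0


def outputs_for_frequency(freq_hz: float) -> set:
    """Return which outputs the vibration section produces for a signal frequency.

    Any signal produces a vibration; a signal in the audible range
    additionally produces an audible sound.
    """
    if freq_hz <= 0:
        raise ValueError("frequency must be positive")
    outputs = {"vibration"}
    if AUDIBLE_MIN_HZ <= freq_hz <= AUDIBLE_MAX_HZ:
        outputs.add("sound")
    return outputs
```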

As described above, in the exemplary embodiment, the vibration section271can output a vibration and a sound. Thus, it is possible to output a vibration and a sound from the spherical controller200and also simplify the internal configuration of the controller main body portion201. If such effects are not desired, a speaker (a sound output section) for outputting a sound and a vibrator (a vibration output section) for performing a vibration may be provided separately from each other in the spherical controller200. It should be noted that in the exemplary embodiment, the vibration section271is used as an example of a sound output section. The sound output section may double as a vibration section, or the sound output section and the vibration section may be provided separately.

Further, in the exemplary embodiment, the spherical controller200includes an inertial sensor247(e.g., an acceleration sensor and/or an angular velocity sensor) provided near the center of the casing211. Based on this, the inertial sensor247can detect accelerations in three axial directions, namely the up-down direction, the left-right direction, and the front-back direction under equal conditions and/or angular velocities about the three axial directions under equal conditions. This can improve the acceleration detection accuracy and/or the angular velocity detection accuracy of the inertial sensor247.

FIG. 11is a block diagram showing an example of the electrical connection relationship of the spherical controller200. As shown inFIG. 11, the spherical controller200includes a control section321and a memory324. The control section321includes a processor. In the exemplary embodiment, the control section321controls a communication process with the main body apparatus2, controls a vibration and a sound to be output from the vibration section271, controls light to be emitted from the light-emitting section248, or controls the supply of power to electrical components shown inFIG. 11. The memory324is composed of a flash memory or the like, and the control section321executes firmware stored in the memory324, thereby executing various processes. Further, in the memory324, sound data for outputting a sound from the vibration section271(a voice coil motor) and light emission data for emitting beams of light of various colors from the light-emitting section248may be stored. It should be noted that in the memory324, data used in a control operation may be stored, or data used in an application (e.g., a game application) using the spherical controller200that is executed by the main body apparatus2may be stored.

The control section 321 is electrically connected to input means included in the spherical controller 200. In the exemplary embodiment, the spherical controller 200 includes as the input means the joystick 212, a sensing circuit 322, the inertial sensor 247, and a button sensing section 258. The sensing circuit 322 senses that an operation on the operation surface 213 is performed. The button sensing section 258 includes a contact that senses an operation on the restart button 214, and a sensing circuit that senses that the restart button 214 comes into contact with the contact. The control section 321 acquires, from the input means, information (in other words, data) regarding an operation performed on the input means.

The control section321is electrically connected to a communication section323. The communication section323includes an antenna and wirelessly communicates with the main body apparatus2. That is, the control section321transmits information (in other words, data) to the main body apparatus2using the communication section323(in other words, via the communication section323) and receives information (in other words, data) from the main body apparatus2using the communication section323. For example, the control section321transmits information acquired from the joystick212, the sensing circuit322, and the inertial sensor247to the main body apparatus2via the communication section323. It should be noted that in the exemplary embodiment, the communication section323(and/or the control section321) functions as a transmission section that transmits information regarding an operation on the joystick212to the main body apparatus2. Further, the communication section323(and/or the control section321) functions as a transmission section that transmits information regarding an operation on the operation surface213to the main body apparatus2. Further, the communication section323(and/or the control section321) functions as a transmission section that transmits, to the main body apparatus2, information output from the inertial sensor247. In the exemplary embodiment, the communication section323performs communication compliant with the Bluetooth (registered trademark) standard with the main body apparatus2. Further, in the exemplary embodiment, as an example of reception means of a game controller, the communication section323(and/or the control section321) is used. The communication section323(and/or the control section321) receives, from the main body apparatus2, sound/vibration data indicating a waveform for causing the vibration section271to vibrate or output a sound, and the like.
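One way to picture the operation information transmitted to the main body apparatus is as a small fixed-size report. The layout below is purely hypothetical: the embodiment does not specify a packet format, and the field widths, the byte order, and the names `InputReport`, `pack_report`, and `unpack_report` are assumptions made for illustration.

```python
import struct
from dataclasses import dataclass
from typing import Tuple

# Assumed layout: stick x/y (int8), button bits (uint8),
# three acceleration values and three angular velocity values (int16 each).
_FORMAT = "<bbB3h3h"


@dataclass(frozen=True)
class InputReport:
    stick_x: int                  # joystick tilt, left-right
    stick_y: int                  # joystick tilt, up-down
    stick_pressed: bool           # push-in operation on the joystick
    surface_pressed: bool         # push-down operation on the operation surface
    accel: Tuple[int, int, int]   # raw inertial sensor accelerations
    gyro: Tuple[int, int, int]    # raw inertial sensor angular velocities


def pack_report(report: InputReport) -> bytes:
    """Serialize a report into bytes for transmission."""
    buttons = int(report.stick_pressed) | (int(report.surface_pressed) << 1)
    return struct.pack(_FORMAT, report.stick_x, report.stick_y, buttons,
                       *report.accel, *report.gyro)


def unpack_report(data: bytes) -> InputReport:
    """Deserialize bytes received by the main body apparatus side."""
    sx, sy, buttons, ax, ay, az, gx, gy, gz = struct.unpack(_FORMAT, data)
    return InputReport(sx, sy, bool(buttons & 1), bool(buttons & 2),
                       (ax, ay, az), (gx, gy, gz))
```

Packing and then unpacking a report returns an equal `InputReport`, which makes the assumed layout easy to check in isolation.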

It should be noted that in another exemplary embodiment, the communication section323may perform wired communication, instead of wireless communication, with the main body apparatus2. Further, the communication section323may have both the function of wirelessly communicating with the main body apparatus2and the function of performing wired communication with the main body apparatus2.

The control section321is electrically connected to output means included in the spherical controller200. In the exemplary embodiment, the spherical controller200includes the vibration section271and the light-emitting section248as the output means. The control section321controls the operation of the output means. For example, the control section321may reference information acquired from the input means, thereby controlling the operation of the output means in accordance with an operation on the input means. For example, in accordance with the fact that the operation surface213is pressed, the control section321may cause the vibration section271to vibrate or cause the light-emitting section248to emit light. Further, based on information received from the main body apparatus2via the communication section323, the control section321may control the operation of the output means. That is, in accordance with a control command from the main body apparatus2, the control section321may cause the vibration section271to vibrate or cause the light-emitting section248to emit light. Further, the main body apparatus2may transmit to the spherical controller200a signal indicating a waveform for causing the vibration section271to vibrate or output a sound, and the control section321may cause the vibration section271to vibrate or output a sound in accordance with the waveform. That is, the antenna of the communication section323may receive from outside (i.e., the main body apparatus2) a signal for causing the vibration section271to vibrate, and the vibration section271may vibrate based on the signal received by the antenna. It should be noted that in the exemplary embodiment, since the vibration section271is a voice coil motor capable of outputting a vibration and a sound, the control section321can output a vibration and a sound from the vibration section271in accordance with the above waveform.

The control section321is electrically connected to a rechargeable battery244provided in the spherical controller200. The control section321controls the supply of power from the rechargeable battery244to each piece of the input means, each piece of the output means, and the communication section. It should be noted that the rechargeable battery244may be directly connected to each piece of the input means, each piece of the output means, and the communication section. In the exemplary embodiment, based on information acquired from the button sensing section258(i.e., information indicating whether or not the restart button214is pressed), the control section321controls the above supply of power. Specifically, when the restart button214is pressed (in other words, while the restart button214is pressed), the control section321stops the supply of power from the rechargeable battery244to each piece of the input means, each piece of the output means, and the communication section. Further, when the restart button214is not pressed (in other words, while the restart button214is not pressed), the control section321supplies power from the rechargeable battery244to each piece of the input means, each piece of the output means, and the communication section. As described above, in the exemplary embodiment, the restart button214is a button for giving an instruction to restart (in other words, reset) the spherical controller200. The restart button214can also be said to be a button for giving an instruction to control the on state and the off state of the power supply of the spherical controller200.

Further, the rechargeable battery244is electrically connected to a charging terminal249provided on the outer peripheral surface of the spherical controller200. The charging terminal249is a terminal for connecting to a charging device (e.g., an AC adapter or the like) (not shown). In the exemplary embodiment, the charging terminal249is a USB connector (more specifically, a female connector). In the exemplary embodiment, when a charging device to which mains electricity is supplied is electrically connected to the charging terminal249, power is supplied to the rechargeable battery244via the charging terminal249, thereby charging the rechargeable battery244.

A description is given below using a game system where an operation is performed using the spherical controller200in a use form in which an image (and a sound) is output to the stationary monitor6by attaching the main body apparatus2alone to the cradle in the state where the left controller3and the right controller4are detached from the main body apparatus2.

As described above, in the exemplary embodiment, the game system 1 can also be used in the state where the left controller 3 and the right controller 4 are detached from the main body apparatus 2 (referred to as a "separate state"). As a form in a case where an operation is performed on an application (e.g., a game application) using the game system 1 in the separate state, a form is possible in which one or more users each use the left controller 3 and/or the right controller 4, and a form is also possible in which one or more users each use one or more spherical controllers 200. Further, when a plurality of users perform operations using the same application, play is also possible in which a user performing an operation using the left controller 3 and/or the right controller 4 and a user performing an operation using the spherical controller 200 participate together.

With reference to FIGS. 12 to 16, a description is given of a game where the game system 1 is used. It should be noted that FIGS. 12 to 16 are diagrams showing examples of the state where a single user plays a game on the game system 1 by operating the spherical controller 200.

For example, as shown inFIGS. 12 to 16, the user can view an image displayed on the stationary monitor6while performing an operation by holding the spherical controller200with one hand. Then, in this exemplary game, the user can perform a tilt operation or a push-in operation on the joystick212with their thumb and perform a push-down operation on the operation surface213with their index finger in the state where the user holds the controller main body portion201of the spherical controller200with one hand. That is, the user can perform both a direction input operation and a push-in operation using the joystick, and a push-down operation on the operation surface213, using a game controller of which the outer shape is spherical. Further, in the state where the spherical controller200is held with one hand, the spherical controller200is moved in up, down, left, right, front, and back directions, rotated, or swung, whereby game play is performed in accordance with the motion or the orientation of the spherical controller200. Then, in the above game play, the inertial sensor247of the spherical controller200can detect accelerations in the xyz-axis directions and/or angular velocities about the xyz-axis directions as operation inputs.

Further, when game play is performed by the user holding the spherical controller200, a sound is output and a vibration is imparted from the spherical controller200in accordance with the situation of the game. As described above, the spherical controller200includes the vibration section271(a voice coil motor) capable of outputting a sound. The processor81of the main body apparatus2transmits sound data and/or vibration data to the spherical controller200in accordance with the situation of the game that is being executed by the processor81, and thereby can output a sound and a vibration from the vibration section271at an amplitude and a frequency corresponding to the sound data and/or the vibration data.

FIGS. 12 to 16show examples of game images displayed in a game played by operating the spherical controller200. In this exemplary game, a game is performed where the actions of a player character PC and a ball object B are controlled by operating the spherical controller200, and characters placed in a virtual space are caught. Then, an image of the virtual space indicating the game situation is displayed on the stationary monitor6.

For example, as shown inFIG. 12, in this exemplary game, by performing a tilt operation on the joystick212of the spherical controller200, it is possible to move the player character PC in the virtual space and search for a character placed in the virtual space. Then, when the player character PC encounters a character placed in the virtual space, the character is set as a catch target character HC, and a catch game where the player character PC catches the catch target character HC is started. It should be noted that a plurality of types of characters that the player character PC can encounter are set, and one of the plurality of types of characters is selected as the catch target character HC. It should be noted that the catch target character HC, the player character PC, and the like that appear in this game can also be said to be virtual objects placed in the virtual space. Further, the ball object B and the like that appear in this game function as game characters that appear in the virtual space. Further, in the exemplary embodiment, as an example of a game character as a target to be caught, the catch target character HC is used, and as an example of an object that resembles the external appearance of the game controller, the ball object B is used.

In this exemplary game, when the catch target character HC to be caught by the player character PC is set, the game shifts to a catch game mode. In the catch game mode, an image of the virtual space in which the catch target character HC is placed near the center of the virtual space is displayed on the stationary monitor 6, and when the operation of throwing the spherical controller 200 is performed, a game image in which the ball object B flies off toward the catch target character HC is displayed.

As shown in FIG. 13, in this exemplary game, as a preparation operation for performing the operation of throwing the spherical controller 200, the operation of holding up the spherical controller 200 is performed. For example, in this exemplary game, the hold-up operation is performed by pushing in the joystick 212 of the spherical controller 200. When the hold-up operation is performed, a catch timing image TM is displayed in the periphery of the catch target character HC displayed near the center. Here, the catch timing image TM is an image indicating to the user an appropriate catch operation timing for the catch target character HC. As an example, the size of a ring is sequentially changed, and the timing when the ring reaches a predetermined size (e.g., a minimum size) indicates that performing the operation of throwing the spherical controller 200 at that timing is highly likely to result in a successful catch of the catch target character HC.
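A ring whose size changes sequentially could be modeled, for illustration only, as a repeating linear shrink from a maximum size to a minimum size. The period, the size limits, and the function name `ring_size` are hypothetical; the embodiment states only that the size is sequentially changed.

```python
def ring_size(elapsed_s: float, period_s: float = 2.0,
              min_size: float = 0.2, max_size: float = 1.0) -> float:
    """Return the ring size at `elapsed_s` seconds after the hold-up operation.

    The ring starts at `max_size`, shrinks linearly toward `min_size`
    over one period, then restarts (all values are assumed).
    """
    phase = (elapsed_s % period_s) / period_s  # 0.0 at start of each cycle
    return max_size - (max_size - min_size) * phase
```

Under this model, a throw performed just before the end of each period coincides with the ring being close to its minimum size.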

Further, in this exemplary game, when the operation of holding up the spherical controller200is performed, then in accordance with a reproduction instruction from the main body apparatus2, the sound of holding up the ball (e.g., the sound of gripping the ball, “creak”) is emitted from the spherical controller200. It should be noted that sound data indicating the sound of holding up the ball is written in advance in storage means (e.g., the memory324) in the spherical controller200, and in accordance with a reproduction instruction from the main body apparatus2, the sound data is reproduced by the vibration section271.
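The arrangement in which sound data is written in advance and merely triggered by a reproduction instruction can be sketched as follows. The class and function names, and the use of a string identifier to select the clip, are assumptions of this sketch; the embodiment does not state how a reproduction instruction identifies the data.

```python
class ControllerMemory:
    """Stand-in for the storage means holding sound data written in advance."""

    def __init__(self):
        self._clips = {}

    def write(self, clip_id: str, data: bytes) -> None:
        self._clips[clip_id] = data

    def read(self, clip_id: str) -> bytes:
        # Raises KeyError when the clip was never written.
        return self._clips[clip_id]


class FakeVibrationSection:
    """Records what it is asked to reproduce instead of driving hardware."""

    def __init__(self):
        self.played = []

    def play(self, data: bytes) -> None:
        self.played.append(data)


def handle_reproduction_instruction(memory: ControllerMemory,
                                    vibration_section: FakeVibrationSection,
                                    clip_id: str) -> None:
    """Reproduce pre-stored sound data named by an instruction from the main body."""
    vibration_section.play(memory.read(clip_id))
```

Because only a short identifier travels with the instruction in this model, the waveform itself does not need to be transmitted at the time of reproduction.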

Further, in this exemplary game, the operation of holding up the spherical controller 200 is performed, whereby the ball object B representing the external appearance of the spherical controller 200 is displayed in the virtual space. In accordance with the fact that the operation of holding up the spherical controller 200 is performed, the ball object B is displayed, initially placed at a position determined in advance and in an orientation determined in advance. Then, to correspond to changes in the position and/or the orientation of the spherical controller 200 in real space after the operation of holding up the spherical controller 200 is performed, the ball object B is displayed by changing the position and/or the orientation of the ball object B in the virtual space. It should be noted that the motion of the displayed ball object B does not need to completely match the position and/or the orientation of the spherical controller 200 in real space. For example, it is sufficient that the motion of the displayed ball object B roughly resembles the change in the position and/or the orientation of the spherical controller 200 in real space.

As shown inFIG. 14, in this exemplary game, the controller main body portion201of the spherical controller200is moved by swinging the controller main body portion201(e.g., swinging down the controller main body portion201from top to bottom), whereby the above throw operation is performed. As an example, when the magnitudes of accelerations detected by the inertial sensor247of the spherical controller200exceed a predetermined threshold, it is determined that the operation of throwing the spherical controller200is performed. When the throw operation is performed, the catch timing image TM is erased, and the state where the ball object B flies off toward the catch target character HC is displayed. It should be noted that the trajectory of the ball object B moving in the virtual space may be a trajectory determined in advance from the position of the ball object B displayed at the time when the throw operation is performed to the placement position of the catch target character HC, or the trajectory may change in accordance with the content of the throw operation (e.g., the magnitudes of accelerations generated in the spherical controller200).
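The threshold-based determination of the throw operation can be sketched as below. The threshold value, the unit (assumed here to be g), and the function name `is_throw` are hypothetical; the embodiment says only that the magnitudes of the detected accelerations are compared with a predetermined threshold.

```python
import math


def is_throw(accel, threshold: float = 2.5) -> bool:
    """Return True when the acceleration magnitude exceeds the threshold.

    `accel` is an (x, y, z) triple from the inertial sensor; the
    threshold of 2.5 (in g) is an assumed value for illustration.
    """
    magnitude = math.sqrt(sum(a * a for a in accel))
    return magnitude > threshold
```

A controller held still reports roughly 1 g due to gravity, so in this model it does not register as a throw, whereas a sharp downward swing does.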

Further, in this exemplary game, in response to the operation of throwing the spherical controller 200, the main body apparatus 2 determines the success or failure of the catch of the catch target character HC. For example, the main body apparatus 2 makes this determination based on the timing when the operation of throwing the spherical controller 200 is performed (e.g., the size of the catch timing image TM at the time when the throw operation is performed), the content of the throw operation (e.g., the magnitudes of accelerations generated in the spherical controller 200), the level of difficulty of the catch of the catch target character HC, the experience value of the player character PC, the number of catch tries, and the like.

Further, in this exemplary game, when the operation of throwing the spherical controller 200 is performed, then in accordance with a reproduction instruction from the main body apparatus 2, the sound of the ball flying off (e.g., “whiz”) is emitted from the spherical controller 200. It should be noted that sound data indicating the sound of the ball flying off is written in advance in the storage means (e.g., the memory 324) in the spherical controller 200, and in accordance with a reproduction instruction from the main body apparatus 2, the sound data is reproduced by the vibration section 271.

Further, in this exemplary game, when the operation of throwing the spherical controller 200 is performed, the spherical controller 200 vibrates in accordance with a reproduction instruction from the main body apparatus 2. It should be noted that vibration data for causing the spherical controller 200 to vibrate is written in advance in the storage means (e.g., the memory 324) in the spherical controller 200 together with the sound data indicating the sound of the ball flying off, and in accordance with a reproduction instruction from the main body apparatus 2, the vibration data is reproduced by the vibration section 271. Here, the sound data written in the storage means in the spherical controller 200 together with the vibration data includes a sound signal having a frequency in the audible range for causing the vibration section 271 to output the sound of the ball flying off, and also includes a vibration signal having a frequency outside the audible range for causing the vibration section 271 to perform a vibration corresponding to the throw operation. A sound/vibration signal including both the sound signal and the vibration signal is input to the vibration section 271, whereby the above sound and the above vibration are simultaneously emitted from the vibration section 271.
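
As a non-limiting illustration, a sound/vibration signal that includes both a sound signal and a vibration signal could be sketched in Python as a sample-by-sample sum of two waveforms. The sample rate, the frequencies (1000 Hz as an audible tone, 15 Hz as a component assumed to lie below the audible range), the amplitudes, and the function names are all assumptions made for this sketch and are not taken from the description.

```python
import math

SAMPLE_RATE_HZ = 8000  # assumed sample rate for this sketch


def sine(freq_hz, amplitude, n_samples, sample_rate=SAMPLE_RATE_HZ):
    """Generate n_samples of a sine wave with the given frequency and amplitude."""
    return [
        amplitude * math.sin(2.0 * math.pi * freq_hz * n / sample_rate)
        for n in range(n_samples)
    ]


def mix_sound_and_vibration(sound_signal, vibration_signal):
    """Sum the two waveforms sample by sample into one sound/vibration signal."""
    if len(sound_signal) != len(vibration_signal):
        raise ValueError("signals must have the same number of samples")
    return [s + v for s, v in zip(sound_signal, vibration_signal)]


# One second of a hypothetical audible component and a hypothetical
# component outside the audible range, mixed into a single waveform.
sound = sine(1000.0, 0.6, SAMPLE_RATE_HZ)
vibration = sine(15.0, 0.4, SAMPLE_RATE_HZ)
mixed = mix_sound_and_vibration(sound, vibration)
```

The amplitudes are chosen so that their sum does not exceed 1.0, which keeps the mixed waveform within a normalized range when it is input to a single actuator.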

It should be noted that in the exemplary embodiment, even when a signal (a waveform) to be reproduced by the vibration section 271 is intended to output a sound and has a frequency in the audible range, the vibration section 271 can output the sound in accordance with the signal and thereby impart a weak vibration to the controller main body portion 201 of the spherical controller 200. Likewise, in the exemplary embodiment, even when a signal (a waveform) to be reproduced by the vibration section 271 is intended to produce a vibration and has a frequency outside the audible range, the vibration section 271 can vibrate in accordance with the signal, whereby a small sound may be emitted from the spherical controller 200. That is, even when a signal including only one of the above sound signal and the above vibration signal is input to the vibration section 271, a sound and a vibration can be simultaneously emitted from the vibration section 271.

As shown in FIG. 15, in this exemplary game, after the state where the ball object B flies off toward the catch target character HC is displayed on the stationary monitor 6, a game image I indicating the state where the ball object B hits the catch target character HC is displayed. For example, in the example of FIG. 15, “Nice!” indicating that the ball object B hits the catch target character HC in a favorable state is displayed as a game image I. It should be noted that the state where the ball object B hits the catch target character HC may be set by the main body apparatus 2 based on the success or failure of the catch of the catch target character HC, or may be set by the main body apparatus 2 based on the content of the operation of throwing the spherical controller 200. Further, the state where the ball object B does not hit the catch target character HC may be displayed. For example, in accordance with the strength of the operation of throwing the spherical controller 200 (e.g., the relative magnitudes of accelerations generated in the spherical controller 200), an image may be displayed in which the ball object B stops moving on the near side of the catch target character HC, or the ball object B flies off beyond the catch target character HC.
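
As a non-limiting illustration, selecting the displayed state in accordance with the strength of the throw operation could be sketched in Python as follows. The two boundary values, the use of a single peak acceleration as the measure of strength, and the returned labels are assumptions made for this sketch; the description does not specify how the strength is mapped to each state.

```python
def throw_outcome(peak_accel_g, weak_below=3.0, strong_above=8.0):
    """Classify a throw by its peak acceleration (hypothetical boundaries, in g).

    Returns "short" when the ball object stops on the near side of the target,
    "beyond" when it flies off beyond the target, and "hit" otherwise.
    """
    if peak_accel_g < weak_below:
        return "short"
    if peak_accel_g > strong_above:
        return "beyond"
    return "hit"
```

A caller could use the returned label to choose which image to display, for example the state where the ball object B hits the catch target character HC when the label is `"hit"`.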

Further, in this exemplary game, when the ball object B hits the catch target character HC, then in accordance with a reproduction instruction from the main body apparatus 2, the sound of the ball hitting the catch target character HC (e.g., “crash!”) is emitted from the spherical controller 200. It should be noted that sound data indicating the sound of the ball hitting the character is also written in advance in the storage means (e.g., the memory 324) in the spherical controller 200, and in accordance with a reproduction instruction from the main body apparatus 2, the sound data is reproduced by the vibration section 271.

Further, in this exemplary game, various representations may be performed during the period until the user is notified of the success or failure of the catch of the catch target character HC. For example, in this exemplary game, the following representations may be performed: a representation that after the ball object B hits the catch target character HC, the catch target character HC enters the ball object B; a representation that after the catch target character HC enters the ball object B, the ball object B closes; a representation that the ball object B that the catch target character HC has entered falls to the ground in the virtual space; a representation that the ball object B that the catch target character HC has entered intermittently shakes multiple times on the ground in the virtual space; and the like. Further, in this exemplary game, when each of the above representations is performed, then in accordance with a reproduction instruction from the main body apparatus 2, a sound corresponding to the representation may be emitted from the spherical controller 200. It should be noted that sound data indicating the sounds corresponding to these representations is also written in advance in the storage means (e.g., the memory 324) in the spherical controller 200, and in accordance with a reproduction instruction from the main body apparatus 2, the sound data is reproduced by the vibration section 271. Further, in this exemplary game, when each of the above representations is performed, the state where a light-emitting part C as a part of the ball object B lights up or blinks in a predetermined color may be displayed on the stationary monitor 6, and in accordance with a reproduction instruction from the main body apparatus 2, light corresponding to the representation may also be output from the spherical controller 200. It should be noted that light emission color data indicating the beams of light corresponding to these representations is written in advance in the storage means (e.g., the memory 324) in the spherical controller 200, and in accordance with a reproduction instruction from the main body apparatus 2, the light-emitting section 248 emits light in a color indicated by the light emission color data.

It should be noted that in the representation that the ball object B that the catch target character HC has entered intermittently shakes multiple times on the ground in the virtual space, a reproduction instruction is intermittently given multiple times by the main body apparatus 2, and in accordance with each reproduction instruction, the sound of the ball shaking is emitted from the spherical controller 200, and the spherical controller 200 also vibrates. Sound data indicating the sound of the ball shaking and the vibration of the ball shaking is also written in advance in the storage means (e.g., the memory 324) in the spherical controller 200, and in accordance with a reproduction instruction from the main body apparatus 2, the sound data is reproduced by the vibration section 271. Here, the sound data written in the storage means in the spherical controller 200 includes a sound signal having a frequency in the audible range for causing the vibration section 271 to output the sound of the ball shaking, and also includes a vibration signal having a frequency outside the audible range for causing the vibration section 271 to perform a vibration for shaking. The sound signal and the vibration signal are simultaneously input to the vibration section 271, whereby the above sound and the above vibration are simultaneously emitted from the vibration section 271.

As shown in FIG. 16, in this exemplary game, following the above representations, an image notifying the user of the success or failure of the catch of the catch target character HC is displayed on the stationary monitor 6. At a first stage where the user is notified of the success of the catch of the catch target character HC, a game image indicating that the catch is successful, and the state where the light-emitting part C as a part of the ball object B lights up or blinks in a color indicating that the catch is successful (e.g., green) are displayed on the stationary monitor 6. Further, at the first stage, in accordance with a reproduction instruction from the main body apparatus 2, a sound indicating that the catch is successful is emitted from the spherical controller 200, and the spherical controller 200 also emits light in a color indicating that the catch is successful (e.g., green). As an example, at the first stage, in accordance with a reproduction instruction from the main body apparatus 2, a sound indicating that the catch is successful (e.g., “click!”) is output from the vibration section 271 of the spherical controller 200. Further, at the first stage, in accordance with a reproduction instruction from the main body apparatus 2, the light-emitting section 248 lights up or blinks in a color indicating that the catch is successful (e.g., green), whereby light in this color is emitted from the opening 211a of the spherical controller 200. It should be noted that sound data indicating the sound to be output at the first stage, and light emission color data indicating the light to be emitted at the first stage, are also written in advance in the storage means (e.g., the memory 324) in the spherical controller 200. In accordance with a reproduction instruction from the main body apparatus 2, the sound data is reproduced by the vibration section 271, and in accordance with a reproduction instruction from the main body apparatus 2, the light-emitting section 248 emits light in a color indicated by the light emission color data.

At a second stage where the user is notified of the success of the catch of the catch target character HC, which occurs after a predetermined time elapses from the first stage, the state where the light-emitting part C of the ball object B lights up or blinks in a color corresponding to the catch target character HC of which the catch is successful is displayed on the stationary monitor 6. For example, the color corresponding to the catch target character HC may be a color related to the base color of the catch target character HC. For example, in the case of a character of which the whole body has a yellow base color, the color corresponding to the catch target character HC may be yellow. Further, at the second stage, in accordance with a reproduction instruction from the main body apparatus 2, the cry of the catch target character HC of which the catch is successful is emitted from the spherical controller 200, and the spherical controller 200 also emits light in the color corresponding to the caught catch target character HC. As an example, at the second stage, in accordance with a reproduction instruction from the main body apparatus 2, the cry of the catch target character HC of which the catch is successful (e.g., “gar”) is output from the vibration section 271 of the spherical controller 200. Further, at the second stage, in accordance with a reproduction instruction from the main body apparatus 2, the light-emitting section 248 lights up or blinks in the color corresponding to the catch target character HC of which the catch is successful, whereby light in this color is emitted from the opening 211a of the spherical controller 200. It should be noted that, as will be described later as catch target reproduction data, sound data indicating the sound to be output at the second stage and light emission color data indicating the light to be emitted at the second stage are transmitted from the main body apparatus 2 to the spherical controller 200 and written in the storage means (e.g., the memory 324) in the spherical controller 200 when the catch target character HC is set. In accordance with a reproduction instruction from the main body apparatus 2, the sound data is reproduced by the vibration section 271, and in accordance with a reproduction instruction from the main body apparatus 2, the light-emitting section 248 emits light in a color indicated by the light emission color data.
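
As a non-limiting illustration, the difference between data written in advance in the spherical controller 200 and the catch target reproduction data written when the catch target character HC is set could be modeled in Python as follows. The class name, the instruction identifiers, and the representation of sound data and light emission color data as plain strings are assumptions made for this sketch and do not reflect an actual data format.

```python
from dataclasses import dataclass, field
from typing import Dict, Optional, Tuple

# Hypothetical entries standing in for data written in advance:
# instruction identifier -> (sound data, light emission color data or None).
PRELOADED = {
    "fly": ("whiz", None),
    "hit": ("crash!", None),
    "success_stage1": ("click!", "green"),
}


@dataclass
class SphericalControllerModel:
    """Toy model of the storage means and of reproduction instructions."""

    preloaded: Dict[str, Tuple[str, Optional[str]]] = field(
        default_factory=lambda: dict(PRELOADED)
    )
    catch_target: Optional[Tuple[str, str]] = None

    def write_catch_target_data(self, cry: str, color: str) -> None:
        """Store catch target reproduction data received when the target is set."""
        self.catch_target = (cry, color)

    def reproduce(self, instruction: str) -> Tuple[str, Optional[str]]:
        """Return the (sound, color) pair to output for a reproduction instruction."""
        if instruction == "success_stage2":
            if self.catch_target is None:
                raise LookupError("catch target reproduction data not written yet")
            return self.catch_target
        return self.preloaded[instruction]
```

In this sketch, the first-stage notification reads from the entries written in advance, whereas the second-stage notification reads the cry and the color that were written when the catch target character was set, so the reproduction instruction itself only needs to identify which stored data to reproduce.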