U.S. Pat. No. 11,000,768

USER INTERFACE FOR A VIDEO GAME

Issue Date: February 28, 2019

Abstract

Implementations include objects displayed on a computing device's display screen arranged in a pattern such as a grid. Upon selection of object(s) having at least one common property (e.g., color) with other adjacent object(s), the adjacent object(s) having one or more common properties may be removed, opening up spaces into which other displayed objects, and objects off of the screen display, are moved in order to fill the spaces. When the movement of the other objects creates one or more collisions of objects having the common property or properties, then those colliding and adjacent objects may be, in turn, removed from the display. The process of removing objects, filling the space with new objects, and checking for a condition (e.g., a common property) may be repeated in a “chain reaction” without user intervention until the condition ends.

Description

DETAILED DESCRIPTION OF EMBODIMENTS

Embodiments include a method and system used to display objects, e.g., game objects, on a computing device's display screen. The objects may be arranged in a pattern such as a grid of columns and rows. In one configuration, after a user selects an object that has at least one common property such as color, size, shape, animation, etc., with other adjacent objects, the adjacent objects having that at least one common property are visually removed from the display. Removing the objects may open up one or more voids, such as spaces or gaps, into which other displayed objects, and objects off of the screen display, are moved in order to fill the one or more voids. The movement of the other objects into the one or more voids may generate one or more collisions between objects. The movement of the other objects may also include moving objects that are adjacent to the colliding objects. When two or more objects collide, those colliding and adjacent objects which have the at least one common property are, in turn, removed from the display. The process of removing objects, filling the space with new objects, and checking for a condition (e.g., a common property) can be repeated in a “collision chain reaction” until the condition is no longer met. When the condition is no longer met, the chain reaction ends and the user can be presented with another turn for selecting and removing another object.

FIG. 1 is a high-level block diagram of an exemplary computing system 100 for providing electronic games, video games, and variations thereof. Computing system 100 may be any computing system, such as a network computing environment, client-server system, and the like. Computing system 100 includes game system 110 configured to process data received from a user interface 114, such as a keyboard, mouse, etc., with regard to game mechanics or characteristics that may be employed in a computer game such as generating game objects, generating collision simulation, keeping score, logging scores, providing power-ups, awarding extra points for special patterns or moves, etc. as described herein.

Note that the computing system 100 presents a particular example implementation, where computer code for implementing embodiments may be implemented, at least in part, on a server. However, embodiments are not limited thereto. For example, a client-side software application may implement game system 110, or portions thereof, in accordance with the present teachings without requiring communications between the client-side software application and a server.

In one exemplary implementation, game system 110 may be connected to display 130 (e.g., game display) configured to display data 140 (e.g., game objects), for example, to a user thereof. Display 130 may be a passive or an active display, adapted to allow a user to view and interact with data 140 displayed thereon, via user interface 114. In other configurations, display 130 may be a touch screen display responsive to touches, gestures, swipes, and the like for use in interacting with and manipulating data 140 by a user thereof. Gestures may include single gestures, multi-touch gestures, and other combinations of gestures and user inputs adapted to allow a user to introspect, process, convert, model, generate, deploy, maintain, and update data 140.

In other implementations, computing system 100 may include a data source such as database 120. Database 120 may be connected to game system 110 directly or indirectly, for example via a network connection, and may be implemented as a non-transitory data structure stored on a local memory device, such as a hard drive, non-transitory Solid State Drive (SSD), flash memory, and the like, or may be stored as a part of a Cloud network as further described herein.

Database 120 may contain data sets 122. Data sets 122 may include data as described herein. Data sets 122 may also include data pertaining to game action flow, game objects (e.g., objects), special effect simulation (e.g., explosions), sounds, scoring, values, tracking data, data attributes, data hierarchy, nodal positions, values, summations, algorithms, code (e.g., C++, JavaScript, JSON, etc.), security protocols, hashes, and the like.

In addition, data sets 122 may also contain other data, data elements, and information such as game models, special effect simulators, physics models, fluid flow simulators, Integration Archives (IAR) files, Uniform Resource Locators (URLs), eXtensible Markup Language (XML), schemas, definitions, files, resources, dependencies, metadata, labels, development-time information, run-time information, configuration information, API, interface component information, library information, pointers, and the like.

Game system 110 may include user interface module 112, game engine 116, and rendering engine 118. User interface module 112 may be configured to receive and process data signals and information received from user interface 114. For example, user interface module 112 may be adapted to receive and process data from user input associated with data sets 122 for processing via game system 110.

In an exemplary implementation, game engine 116 may be adapted to receive data from user interface 114 and/or database 120 for processing thereof. In one configuration, game engine 116 is a software engine configured to receive and process input data from a user thereof pertaining to data 140 from user interface module 112, database 120, external databases, the Internet, ICS, and the like in order to play electronic games.

Game engine 116 may receive existing data sets 122 from database 120 for processing thereof. Such data sets 122 may include and represent a composite of separate data sets 122 and data elements pertaining to, for example, object interactions, object patterns, and the like. In addition, data sets 122 may include other types of data, data elements, and information such as object data, scoring data, explosion data, fluid dynamics data, collision simulation data, scientific data, financial data, and the like.

Game engine 116 in other implementations may be configured as a data analysis and processing tool to perform functions associated with data processing and analysis, on received data, such as data sets 122. Such analysis and processing functions may include collision emulation (e.g., emulating collisions between objects), fluid flow, reflection (including introspection, meta-data, properties and/or functions of an object during gameplay), recursion, traversing nodes of data hierarchies, determining the attributes associated with the data, determining the type of data, determining the values of the data, determining the relationships to other data, interpreting metadata associated with the data, checking for exceptions, and the like.

For example, game engine 116 may be configured to receive and analyze gameplay flows to determine whether such game flows contain errors and/or anomalies, and to determine whether such errors and/or anomalies are within an acceptable error threshold. Moreover, game engine 116 may be configured to determine whether to bypass, report, or repair such errors and anomalies as needed in order to continue gameplay.

Rendering engine 118 may be configured to receive configuration data pertaining to data 140, associated data sets 122, and other data associated with data sets 122 such as user interface components, icons, user pointing device signals, and the like, used to render data 140 on display 130. In one exemplary implementation, rendering engine 118 may be configured to render 2D and 3D graphical models and simulations to allow a user to obtain more information about data sets 122. In one implementation, upon receiving instruction from a user, for example, through user interface 114, rendering engine 118 may be configured to generate a real-time display of interactive changes being made to data 140 by a user thereof.

FIG. 2 is a flow diagram of an example method 200 adapted for use with implementations, and variations thereof, for example, as illustrated in FIGS. 1 and 3-15. As illustrated, method 200 performs steps used to initiate and execute gameplay.

At 201, method 200 receives one or more gameplay instances. At 204, method 200 determines if a game engine, such as game engine 116, is initiated. For example, as discussed supra, game engine 116 receives a trigger to initiate gameplay, which then may be provided to a first game environment, such as a mobile device, TV, etc., for presenting the game to a user.

At 206, method 200 sets the initial game configuration. For example, method 200 may set the gameplay area to an initial configuration of objects setting, gameplay time, gameplay sound, game colors, play area reconfigurations, reconfiguration interval, cascading actions, object types, simulation effects, one or multiplayer modes, hidden object modes, contests, and the like.

At 208, method 200 determines which simulation models to use for this instance. For example, method 200 may determine and load simulation algorithms such as simulated explosions, simulated fluid models, simulated physics models used to simulate contact between objects, and the like, used to enhance and implement instances of gameplay.

At 210, method 200 determines the configuration of the play area based on the point in the game instance. For example, if the game is being started, then method 200 may set the initial gameplay area configuration and display a plurality of objects in a particular or random starting configuration. However, at each gameplay interval, method 200 may set a new play area configuration, which may appear to be sequential, random, or pseudo-random, etc.

For example, in one implementation, each subsequent reconfiguration after a gameplay interval may be based on emulating sequential motion of beads along a direction, such as a horizontal or vertical direction, and adding new objects that appear to come from entry points, and removing objects as they appear to exit via exit points of the gameplay area. As such, in this gameplay scenario, at each turn interval of the game each subsequent gameplay area configuration is different from the previous gameplay area configuration.

At 212, method 200 detects user selection of one or more objects presented within the gameplay area. For example, as described herein, a user selection of a bead or beads may be detected by method 200 for removal thereof from the play area.

At 214, in response to user selection of one or more objects, method 200 removes the objects from the play area. In addition, method 200 may determine adjacent objects having at least one common property (e.g., color, shape, attribute, animation, etc.) to the one or more objects being selected and remove those objects from the play area. In other implementations, method 200 may determine if there is a condition met by the orientation of the moved objects with respect to stationary objects within the play area. If so, then method 200 may also remove one or more of the moved or stationary objects according to the condition.
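The adjacency determination at 214 can be sketched as a flood fill over the grid. The following is a minimal illustration only, not the claimed method: it assumes color is the common property, that adjacency means sharing an edge (up, down, left, right), and that empty cells are marked `None`. The names `find_group`, `remove_group`, and `min_size` are hypothetical.

```python
from collections import deque


def find_group(grid, start):
    """Return the set of (row, col) cells connected to `start` that share its color.

    Assumption: adjacency is edge-sharing (4-neighbor) and the common
    property is the cell's color value.
    """
    rows, cols = len(grid), len(grid[0])
    r0, c0 = start
    color = grid[r0][c0]
    if color is None:  # nothing to select in an empty cell
        return set()
    group = {start}
    queue = deque([start])
    while queue:
        r, c = queue.popleft()
        for dr, dc in ((1, 0), (-1, 0), (0, 1), (0, -1)):
            nr, nc = r + dr, c + dc
            in_bounds = 0 <= nr < rows and 0 <= nc < cols
            if in_bounds and (nr, nc) not in group and grid[nr][nc] == color:
                group.add((nr, nc))
                queue.append((nr, nc))
    return group


def remove_group(grid, start, min_size=2):
    """Clear the selected group in place and return the removed cells.

    `min_size` is an assumed rule: groups smaller than it are left alone.
    """
    group = find_group(grid, start)
    if len(group) < min_size:
        return set()
    for r, c in group:
        grid[r][c] = None
    return group
```

Tapping any cell of a group yields the same result, because the flood fill reaches every connected cell of the same color regardless of where it starts.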

At 216, in response to a removal signal, method 200 may initiate a special effect such as an explosion, presented to the user to show the removal of the selected objects and any adjacent objects having the at least one common property. In implementations, special effects may be generated from algorithms, such as special effect algorithms, physical model simulations, fluid dynamic simulations, collision simulations, and the like.

At 218, in response to object removal, method 200 may initiate one or more special effects to emulate moving objects from within the play area and from entry points of the play area into a space formerly occupied by the removed objects. For example, filling-in simulations may include using sliding motion emulation to emulate objects sliding into the gap areas, using fluid dynamics to emulate objects flowing into the gaps, or using other types of emulations, such as running or hopping, to show objects filling in gaps.

At 220, upon the removal of objects at 218, method 200 may continue to remove objects that are adjacent and have the at least one common property. For example, such object removal may be part of a cascading removal process as discussed herein where the removal of objects may leave gaps that are automatically filled in by other objects, which then may be subsequently removed as some objects filling in the gaps become adjacent to other objects that have the at least one common property with the objects filling in the gaps.

At 222, method 200 determines whether a specified amount or all adjacent objects having at least one common property have been removed. If there are more objects to remove, then method 200 returns to 218. If all, or the specified amount, of objects have been removed, then method 200 proceeds to 224.

At 224, if the gameplay is finished, method 200 proceeds to 226 and ends. However, if the gameplay is not done, method 200 proceeds to 210.

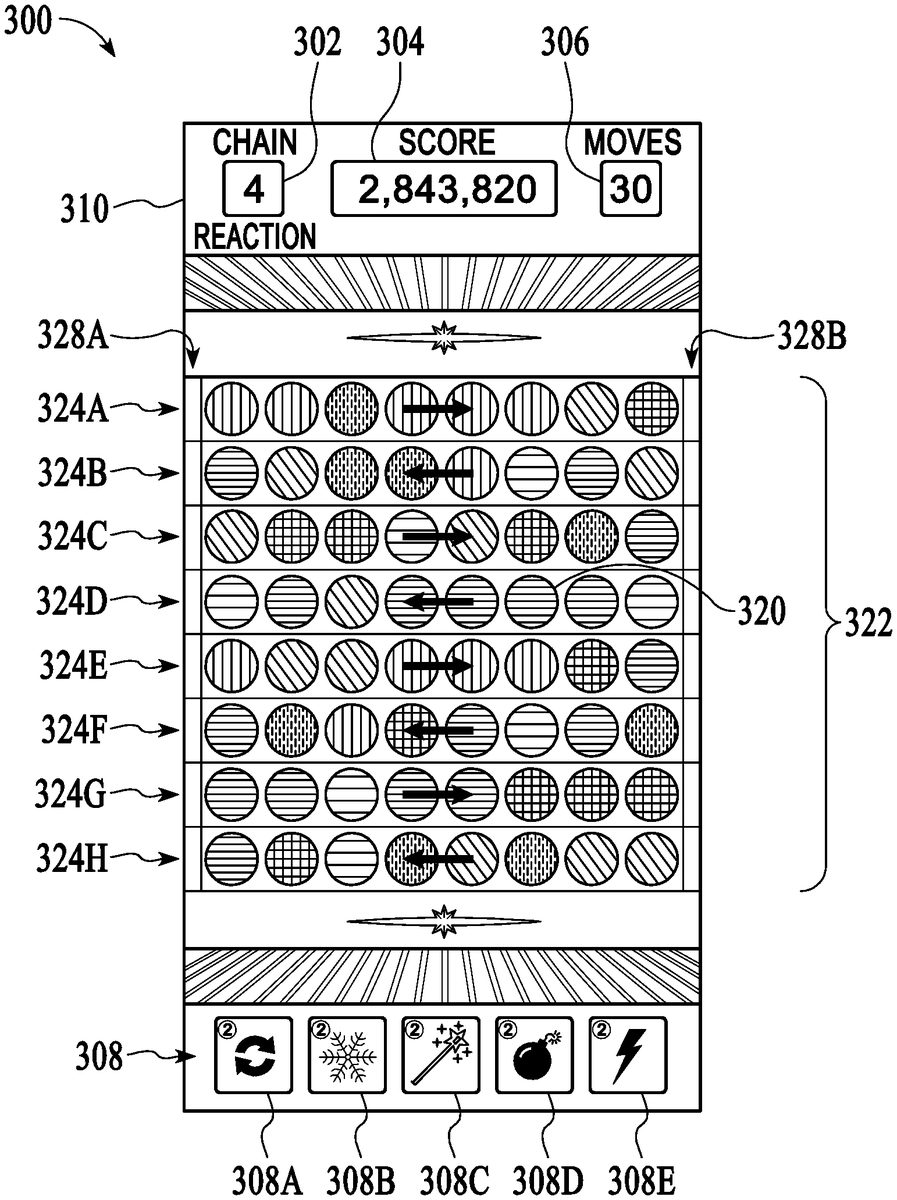

FIG. 3 illustrates a screen shot of a particular implementation. Although specific details are provided for this example video game on an example display, it should be apparent that many variations are possible. In general, many different types of games can be created using features of the user interface described herein. Also, a game may be presented or played on many different types of hardware such as TVs, Smart TVs, mobile phones, tablets, laptops, desktop computers, virtual reality headsets, augmented reality displays, etc. As discussed herein, denoted white lines and grey lines showing motion or groupings are not part of the gameplay or game display and are merely for ease of discussion.

In FIG. 3, screen display 300 includes play area 310, chain reaction counter/timer 302, score 304, moves 306, and power ups 308, including game refresh 308A, freeze 308B, magic wand 308C, bomb 308D, and lightning 308E, as described herein. Counter 302 counts, for example, a time interval, number of game intervals played, etc. Score 304 may show various scores related to gameplay. Moves 306 shows the number of moves a player or players have made during a game. Power ups 308, when selected, provide the user with options to, for example, acquire a new set of beads, freeze the game, delete certain objects, buy additional time, reduce or increase the number of game objects being displayed, etc.

For example, in one implementation power ups 308 may include: refresh 308A, which may allow a user to receive a new or partial set of objects; freeze 308B, which may allow a user to freeze play for a non-specified or specified duration and which may be configured to freeze timer 302 until the player selects objects to tap on and/or selects another power up to use; magic wand 308C, where a player may delete one or more objects having at least one common property, such as color, off play area 310 (for example, a player can tap on one of the red beads and all the red beads are deleted); bomb 308D, which may be used to remove one or more objects in groups off the play area 310; and lightning 308E, which may be used to delete a number of objects situated in a particular group, such as a column of objects, row of objects, etc.

Main play area 310 shows many objects 320 which, in this embodiment, are moveable colored bead shaped objects 320 (e.g., beads, balls, marbles, etc.) arranged in an array or grid 322. Each row 324A-H of the grid 322 allows movement of the balls 320 in an alternating direction as shown by white arrows. The white arrows are not part of the gameplay or display but are shown for ease of discussion.

Thus, in this scenario, each row 324A-H alternates direction as shown. For example, in rows 324A, 324C, 324E, and 324G the beads 320 move from left to right while in rows 324B, 324D, 324F, and 324H the beads 320 move from right to left. Note that in other variations objects 320 need not be arranged in a grid 322 but may have different organizations, or even no organization at all (i.e., random placement). Rather than moving from side-to-side the beads 320 or other objects 320 can move in other directions such as vertically. Directions need not alternate and can be set in any desired pattern. Directions may change during gameplay, etc. Many variations are possible.

In this example game mechanic, beads 320 may be shifted after a period of time or game interval, e.g., every 3 seconds, in the direction of their respective row 324A, 324B, etc., into a new location by one or more beads 320. The shifting may be periodic, e.g., on a time limit, encouraging users to make moves more quickly. Also, it can be used strategically by a user to allow the board to change to a more favorable configuration. For example, as beads 320 shift positions, a user may see a pattern emerging over one or more shifts. Other game variations need not use a timer or can implement automatic shifting or movement of the game objects by other rules, as desired.

When a row 324 is shifted it typically means a bead 320 disappears and another bead 320 appears from the respective exit and entry edges 328A-B of a particular row 324. In this example, a new bead 320 entering a row 324 may be hidden from the user (e.g., inside an entry edge 328) and may be randomly, pseudo-randomly, or semi-randomly generated. In some gameplay scenarios there may be a higher or lower probability that the beads 320 being generated will be of a matching attribute (e.g., color) to adjacent beads. In other variations, other rules can be used to determine the color or other properties of objects 320 entering the play area 310.
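The alternating row shift described above can be sketched as follows. This is an illustrative simplification under stated assumptions: every row shifts by exactly one bead per interval, even-indexed rows (standing in for rows 324A, 324C, and so on) move left to right, and each entering bead is drawn uniformly at random from a hypothetical `colors` list. The function name `shift_rows` is not from the source.

```python
import random


def shift_rows(grid, colors, rng=random):
    """Return a new grid with every row shifted by one bead.

    Even-indexed rows move left to right (a new bead enters on the left and
    the rightmost bead exits); odd-indexed rows move right to left.
    Assumption: the entering bead's color is chosen uniformly at random.
    """
    shifted = []
    for index, row in enumerate(grid):
        new_bead = rng.choice(colors)
        if index % 2 == 0:
            shifted.append([new_bead] + row[:-1])
        else:
            shifted.append(row[1:] + [new_bead])
    return shifted
```

Passing a seeded `random.Random` instance as `rng` makes the sequence of entering beads repeatable. A weighted choice could stand in for the higher or lower matching probability mentioned in the text.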

In one embodiment, respective entry edges 328A and/or exit edges 328B may be configured to reveal or provide clues as to which one or more objects 320 are coming out of entry edges 328A or what just left the exit edges 328B. In some cases, entry/exit edges 328 may change in appearance to reflect which objects are emerging. For example, one or more attributes, such as color, dimension, surface pattern, and the like of entry edge 328A may change to signal which one or more objects 320 are coming out of the entry edge 328A into the play area 310. The amount and types of hints may vary according to, for example, a player's level, number of cascading events, time lapse, score, etc. For example, entry edges 328A or exit edges 328B may change color, shape, or surface attribute to reflect a next object 320 coming from an entry edge 328A or a leaving object 320 leaving via an exit edge 328B.

FIGS. 4A and 4B illustrate time-out row shifting. FIG. 4B is the game display 310 after the shifts have occurred from the display in FIG. 4A. In this example, the shifts in all rows happen simultaneously. As shown, top row 324A has been shifted one bead 320 to the right and second row 324B has been shifted one bead 320 to the left. Third row 324C has been shifted one bead 320 to the right . . . and so on. Illustratively, on a game shift, to fill in row 324A, a red bead 320 has entered the top row 324A; an orange bead 320 has entered the second row 324B; and an orange bead 320 has entered the third row 324C.

FIGS. 5A-5B are screenshots to illustrate object collisions and removal in an embodiment. In FIGS. 5A-B, removing beads 320 from the display 310 causes other beads 320 to move into a vacated space 336. In some implementations, beads 320 that move into a vacated space 336 have the ability to cause a “collision” with remaining beads 320 that have the common property and this, in turn, can cause a “chain reaction” of another removal of beads 320 that causes yet other beads 320 to move into the vacated space 336, etc. as described herein.

For example, as shown in FIG. 5A, to remove a set of beads 320, a set of beads 320 having at least one common property are aligned within rows 324B and row 324C to form a group 332 of adjacent beads 320. An electronic gaming system, such as game system 110, identifies group 332 of beads 320 as being adjacent and having at least one common property, attribute, etc. As illustrated in FIG. 5A, a grey line 334 is used to denote the boundary of the grey-circled group 332 of adjacent beads 320 having the common property. In this example, the common property is color.

To remove grey-circled group of beads 332, a user taps or otherwise selects any beads 320 in the grey-circled group 332, for example, using a touch gesture such as tapping. For example, as shown in FIG. 5B, since beads 320 in grey-circled group 332 are all of the same color and are adjacent to each other, tapping on any one of these beads 320 in the grey-circled group 332 causes all of the beads 320 in grey-circled group 332 to be removed, leaving a vacated space 336 illustrated by grey lines 338. The removal of the beads 320 leaves a space or vacancy 336 into which other beads 320 adjacent to the grey-circled group 332 may be moved. In one implementation, the vacancy is extremely short-lived as other beads 320 adjacent to the grey-circled group 332 are moved into, or flow into, the vacated space 336 almost immediately.

In a particular embodiment, the removal of beads 320 may be accompanied by an effect, such as a visual and/or audio effect, e.g., explosion effect, burning effect, vaporizing effect, and the like, such that the impression is that the beads 320 have been eliminated (e.g., blown up, destroyed, dissolved, disintegrated, etc.). In other embodiments, any other effect (or no effect at all) can be used to enhance the experience of removing objects 320 from the screen.

FIGS. 6A-6B are screenshots to illustrate object removal and replacement in an embodiment. For example, FIG. 6A illustrates beads 320 being moved into the vacated space 336 with the final result shown in FIG. 6B. The white arrows show the direction of bead movement, which is in accordance with the movement discussed herein. In this example, row 324C has four beads moved into vacated space 336 from the left. All of these beads 320 have come from off-screen or from beyond the edge of the playing area 310.

In other variations, different methods can be used to cause beads 320 to move into the playing area 310 or to otherwise appear. In lower row 324D, beads 320 are moved in from the right. Note that the first two beads 320 were already on the playing area 310 and the next four beads 320 have been moved from beyond the edge of the playing area 310, e.g., passed through entry edges 328.

FIGS. 7A-7B are screenshots to illustrate object movement in an embodiment creating a chain reaction of collisions. In implementations, shown in FIGS. 6A-6B, as beads 320 move into and fill the vacant space 336 they can be said to “hit” or “collide” with beads 320 that remain in the playing area 310. To create a chain reaction, a user removes objects 320 in a manner that allows beads 320 with common properties to fill in vacant space 336. If a collision is between two beads 320 of the same attribute, e.g., color, then those two beads 320 may initiate a check by the gaming system to see whether there are other adjacent beads 320 having the common attribute. If there are adjacent beads 320 having at least a common attribute, a new adjacency group may be formed, typically in real-time.

In one implementation, most if not all beads 320 in the new adjacency group formed by the collision will, in turn, be removed in a similar fashion described above for FIGS. 5A-5B and 6A-6B. This removal will cause new beads 320 to be moved, which can also result in a collision of same-attribute beads 320 and so on to create a “chain reaction” of removals due to collisions. In an embodiment, these collisions are tracked and may create changes in the scoring as discussed herein.

For example, as illustrated in FIG. 7A, a user may remove a bead (e.g., green bead) 320 in order to create a chain reaction. In this illustration, a group 340 of three adjacent green beads 320 having a common property is presented to a user via game area 310. One green bead 320 in row 324D of group 340 separates a group 342 of blue beads 320 positioned on either side of one green bead 320. In addition, two green beads 320 in row 324E of group 340 separate another group 344 of red beads 320 in row 324E positioned on either side of two green beads 320.

As illustrated in FIG. 7B, when group 340 is removed by a user, for example, by tapping on one or more beads 320 of group 340, a vacant space 336 is formed. Subsequently, beads 320 from group 342 and group 344 move inward to fill in the vacant space 336 and collide to form a new group of beads 320. Since beads 320 of group 342, and similarly group 344, all have the same respective property, e.g., color, and are now adjacent after they move in their respective rows 324D and 324E and collide, bead group 342 (e.g., blue beads) and bead group 344 (e.g., red beads) are removed. In implementations, beads 320 may fill in vacant space 336 (e.g., gap, void, etc.) from any direction. Here, beads 320 from group 342 and group 344 may move from the left, right, or from both sides of vacant space 336 in order to fill in vacant space 336 and collide.

As discussed herein, once all of the groups of two or more objects 320 (e.g., balls, beads, marbles, figures, etc.) having at least one common property that are formed by moving objects 320 during the cascade have been removed, and the game system finds no other adjacent objects 320 having the at least one common property, the collision cascade ends. For example, in the case of FIGS. 7A-7B, the collision process ends when there is no secondary collision effect. For example, when the two beads 320 that collide with remaining beads 320 do not collide with other beads 320 having the same property or attribute, the chain reaction ends. The final result of the movement of objects 320 after the collision process has ended is shown in FIG. 8.
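The collision chain reaction within a single row can be sketched as below. This is a deliberately reduced model, not the patented implementation: it assumes one row, color as the common property, beads on both sides closing toward the gap, and no new beads entering from the edges. The name `collapse_row` and the returned chain count are hypothetical.

```python
def collapse_row(row, start, end):
    """Remove row[start:end], close the gap, and resolve collisions at the seam.

    Returns (remaining_beads, chain), where `chain` counts how many
    collision-triggered removals followed the initial removal.
    Assumption: a collision removes the whole same-color run around the seam.
    """
    beads = row[:start] + row[end:]
    seam = start  # index of the first bead to the right of the closed gap
    chain = 0
    while 0 < seam < len(beads) and beads[seam - 1] == beads[seam]:
        color = beads[seam]
        left = seam - 1
        while left > 0 and beads[left - 1] == color:
            left -= 1
        right = seam
        while right + 1 < len(beads) and beads[right + 1] == color:
            right += 1
        # Remove the colliding run; its neighbors now meet at a new seam.
        beads = beads[:left] + beads[right + 1:]
        seam = left
        chain += 1
    return beads, chain
```

For a row of blue, green, blue beads, removing the green bead brings the blue beads together and removes them, which mirrors the row 324D example. The loop stops as soon as the two beads meeting at the seam differ, matching the end of the cascade described above.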

FIGS. 9A-9B are screenshots to illustrate bead position and movement after one or more chain reactions. After the final result of the chain reaction as shown in FIG. 8, as illustrated in FIG. 9A, beads 320 in rows 324A-H continue to move horizontally either from left to right, or right to left, with respect to each row 324 until the end of the game is reached. In other implementations, as shown in FIG. 9B, beads 320 may move vertically. In some implementations, beads 320 may move in a combination of directions, such as horizontal, vertical, angular, or randomly or pseudo randomly, etc.

FIGS. 10A-10B are screenshots to illustrate scoring. In one implementation, scoring may be achieved by removing at least two objects 320 from the play area 310. For example, as illustrated in FIG. 10A, in one configuration beads 320 are arranged in a pattern 350 at one time period before changing to a new pattern at the next time period, as described herein. In this particular arrangement, there are a number of bead groupings 352, where two or more beads 320, having a common property, such as color, are adjacent one another.

As illustrated in FIG. 10B, at this moment in the gameplay, a user may select (e.g., tap on) any beads 320 within one of the bead groupings 352 to remove the bead grouping 352 from the play area 310 and score points. As illustrated, tapping on beads within bead grouping 352A will remove two beads 320 from row 324C. If the user selects any beads 320 from bead grouping 352B, one bead from each row 324G and 324H will be removed. Once the selected bead grouping 352 is removed, e.g., 352A, other beads 320 around the removed grouping move to fill in the gap left by the removal of grouping 352 as discussed above.
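Enumerating the bead groupings available at a given moment can be sketched as a connected-component scan. As an illustration only, the code assumes color is the common property, adjacency is edge-sharing, and empty cells are `None`. The name `find_groupings` and the `min_size` parameter are hypothetical.

```python
def find_groupings(grid, min_size=2):
    """Return every group of at least `min_size` same-colored adjacent cells.

    Each grouping is a sorted list of (row, col) cells; groupings appear in
    the order their first cell is met when scanning row by row.
    """
    rows, cols = len(grid), len(grid[0])
    seen = set()
    groupings = []
    for r in range(rows):
        for c in range(cols):
            if (r, c) in seen or grid[r][c] is None:
                continue
            color = grid[r][c]
            stack = [(r, c)]
            seen.add((r, c))
            group = []
            while stack:
                cr, cc = stack.pop()
                group.append((cr, cc))
                for dr, dc in ((1, 0), (-1, 0), (0, 1), (0, -1)):
                    nr, nc = cr + dr, cc + dc
                    in_bounds = 0 <= nr < rows and 0 <= nc < cols
                    if in_bounds and (nr, nc) not in seen and grid[nr][nc] == color:
                        seen.add((nr, nc))
                        stack.append((nr, nc))
            if len(group) >= min_size:
                groupings.append(sorted(group))
    return groupings
```

A grouping that spans two rows, like 352B, is found the same way as one within a single row, since the scan follows vertical as well as horizontal neighbors.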

For example, given a user removes bead grouping 352A, beads 320 from row 324C would move horizontally to fill in the gap left by the removed bead grouping 352A. Beads 320 may move from right to left, left to right, or from both sides to fill in the gap. In one implementation, a physical fluid dynamics model may be used to determine how the beads 320 in row 324C will flow. For example, if beads 320 in row 324C are under a simulated fluid pressure, beads 320 may be moved using a fluid simulation configured to simulate fluid motion with respect to that pressure to fill in the gap, similar to water flowing into an open drain.

FIG. 11 is a screenshot to illustrate capturing an object 320 to incur points. In an implementation, scoring may be enhanced relative to a particular configuration of beads 320. As illustrated in FIG. 11, a user may remove beads 320 to form a capturing formation of beads 356. Since capturing a bead 320 using other beads 320 may take additional user skill and strategy, such capturing formation of beads 356 may incur an additional game perk such as additional bonus number of points scored, additional play time, variations in game time, additional power ups, fewer types of beads in the play area 310, etc. Other variations are possible. For example, removing groups of beads 320 to allow a particular bead 320 to move from one side of the game area 310 to another side without being removed may incur additional points, tokens, play bonus, life, etc.

FIG. 12 is a screenshot to illustrate two-player gameplay. In an implementation, two players may share a common play area 310 and take turns playing a game. For example, as illustrated, player 360A (e.g., Patrick D) may be playing another player 360B (e.g., Edward C). In this scenario, game mechanics may be similar to those described herein but player 360A and player 360B take turns. In one gameplay scenario, each player 360 makes a fixed number of moves, e.g., 3 moves, at each inning. Whoever scores the highest at the end of a number of innings, e.g., 7 innings, wins the game. Here, inning counter 364 shows it is the 2nd inning and player 360A's turn, where with 6,332,980 points player 360A is trailing player 360B, who has 12,760,550 points.
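The turn structure of this two-player scenario can be sketched as a simple schedule. This is an illustration under assumptions: the defaults of 3 moves per inning and 7 innings come from the examples in the text, while the ordering of players within an inning and the handling of ties are not specified and are chosen here arbitrarily. The names `turn_order` and `winner` are hypothetical.

```python
def turn_order(players, moves_per_inning=3, innings=7):
    """Yield (inning, player, move) tuples in playing order.

    Assumption: within an inning, each player takes all of their moves
    before the next player begins.
    """
    for inning in range(1, innings + 1):
        for player in players:
            for move in range(1, moves_per_inning + 1):
                yield inning, player, move


def winner(totals):
    """Return the player with the highest total, or None on a tie (assumed rule)."""
    best = max(totals.values())
    leaders = [player for player, score in totals.items() if score == best]
    return leaders[0] if len(leaders) == 1 else None
```

With two players and the default settings, the schedule contains 42 turns in total.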

In other game scenarios, to enhance gameplay excitement, game mechanics may vary relative to the skill of the player 360. For example, gameplay may be different for player 360A than for player 360B. Here, since player 360A has achieved a level of “twenty-three” and player 360B has achieved a level of “twenty-four,” player 360A may be given a handicap such as an easier game mechanic, extra scoring, etc. in order to increase the competitive challenge to player 360B.

FIG. 13 is a screenshot to illustrate gameplay involving a fixed number of moves. In implementations, gameplay mechanics are varied such that a user must complete the game within a set time, a set number of moves, or a combination of both. Here, to provide more user interaction with the game, a bead 320 may be configured as a special bead 366. For example, a special bead 366 may be a “sparkling bead” configured with a “spark” 368 that randomly alternates between directional planes, e.g., the vertical and horizontal planes, and that, when activated, may clear a whole row or column in the direction of spark 368. These special beads 366 can also be used in conjunction with power ups 308 as described herein.
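One possible way to model the spark behavior, offered only as a sketch, is shown below. The grid is assumed to be a list of rows with `None` marking an empty cell; the function names `toggle_spark` and `activate_special_bead` and the direction labels "horizontal" and "vertical" are hypothetical and are not taken from the description.

```python
import random
from typing import List, Optional

Grid = List[List[Optional[str]]]

DIRECTIONS = ("horizontal", "vertical")


def toggle_spark(rng: random.Random) -> str:
    """Pick the spark's current plane at random (hypothetical helper)."""
    return rng.choice(DIRECTIONS)


def activate_special_bead(grid: Grid, row: int, col: int, direction: str) -> Grid:
    """Return a copy of the grid with the special bead's row or column cleared.

    A horizontal spark clears the whole row containing the bead; a vertical
    spark clears the whole column containing the bead.
    """
    cleared = [r[:] for r in grid]           # leave the caller's grid intact
    if direction == "horizontal":
        cleared[row] = [None] * len(cleared[row])
    elif direction == "vertical":
        for r in cleared:
            r[col] = None
    else:
        raise ValueError(f"unknown spark direction: {direction!r}")
    return cleared


if __name__ == "__main__":
    play_area = [["R", "G", "B"],
                 ["G", "R", "*"],             # "*" marks the special bead
                 ["B", "B", "R"]]
    spark = toggle_spark(random.Random())
    print(spark, activate_special_bead(play_area, 1, 2, spark))
```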

Here, the game mechanic requires that a user remove all skulls 370 within a fixed number of time periods (e.g., sequential reconfigurations of play area 310) by removing associated columns 372 using a combination of removing beads 320 and using special bead 366 when activated. For example, in this scenario, as a user removes beads 320 under skulls 370 and thereby removes all of the beads in a column 372, the associated skull 370 may fall down the associated column 372.
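The falling of a skull down its column might be sketched, under stated assumptions, as follows. A column is assumed to be a list ordered top to bottom with `None` marking an empty cell, and the skull is assumed to fall through any empty cells directly beneath it until it rests on a bead or on the bottom of the column. The names `SKULL` and `drop_skull` are hypothetical.

```python
from typing import List, Optional

SKULL = "skull"  # hypothetical marker for a skull object


def drop_skull(column: List[Optional[str]]) -> List[Optional[str]]:
    """Return a copy of the column with the skull fallen as far as it can go."""
    col = list(column)
    if SKULL not in col:
        return col
    i = col.index(SKULL)
    # Move the skull down one cell at a time while the cell below is empty.
    while i + 1 < len(col) and col[i + 1] is None:
        col[i], col[i + 1] = None, SKULL
        i += 1
    return col


if __name__ == "__main__":
    print(drop_skull([SKULL, None, None]))    # all beads removed
    print(drop_skull([SKULL, None, "red"]))   # one bead still in the way
```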

FIG. 14 is a screenshot to illustrate gameplay involving uncovering design patterns and/or opening more space for objects 320 to occupy. In one game implementation, as a user removes beads 320, adjacent blocks 384 are removed, exposing more play area 310 to the user and allowing more beads 320 to enter the play area 310. For example, as illustrated in FIG. 14, a group 382 of adjacent beads 320 may be removed, which then removes adjacent blocks 384. As adjacent blocks 384 are removed, more gaps may be created where blocks 384 were, which allows beads 320 entering play area 310 from, for example, entry edges 328, to fill in the gaps. A user may then use cascading removal of beads 320 as described herein to remove more blocks 384 at each play.
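A minimal sketch of removing blocks adjacent to a removed group of beads is given below. It assumes a rectangular grid stored as a list of rows, treats "adjacent" as sharing an edge (up, down, left, right), and uses the hypothetical marker `BLOCK` and function name `remove_group_and_adjacent_blocks`; none of these details are specified in the description.

```python
from typing import Iterable, List, Optional, Tuple

Grid = List[List[Optional[str]]]
Cell = Tuple[int, int]

BLOCK = "#"  # hypothetical marker for a removable block


def remove_group_and_adjacent_blocks(grid: Grid, group: Iterable[Cell]) -> Grid:
    """Return a copy of the grid with the group removed, plus any blocks
    that share an edge with a removed bead. Emptied cells become None."""
    out = [row[:] for row in grid]
    for r, c in group:
        out[r][c] = None                      # remove the bead itself
        for dr, dc in ((1, 0), (-1, 0), (0, 1), (0, -1)):
            nr, nc = r + dr, c + dc
            in_bounds = 0 <= nr < len(out) and 0 <= nc < len(out[nr])
            if in_bounds and out[nr][nc] == BLOCK:
                out[nr][nc] = None            # adjacent block is removed too
    return out


if __name__ == "__main__":
    play_area = [["#", "#", "#"],
                 ["R", "R", "#"],
                 ["G", "B", "#"]]
    print(remove_group_and_adjacent_blocks(play_area, [(1, 0), (1, 1)]))
```

The cells set to `None` correspond to the gaps into which entering beads may then flow.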

In some configurations, non-removable objects 388 may be used to create more challenges by placing obstructions in the way of beads 320. In this particular example, removing blocks 384 adjacent to non-removable objects 388 may allow the non-removable objects 388 to act as entry points for beads to enter a protected area, such as protected areas 390, as shown.

FIG. 15 is a screenshot to illustrate game badges, trophies, and achievement awards. In some implementations, various badges 392, trophies 394, and awards 396 may be earned by a user. Such badges 392, trophies 394, and awards 396 may be designed to emulate a change in player status. For example, larger trophies 394 and larger awards 396 may be designed to show an increase in player status, player winning, player longevity, and the like.

Although the description has been described with respect to particular embodiments thereof, these particular embodiments are merely illustrative, and not restrictive. For example, although the common characteristic for bead adjacency grouping and collision chain reactions has been described as being the color of the beads 320, any other characteristic may be used. In other games, beads 320 may have different patterns or designs, animations, or other effects. Objects can be any desired shape besides beads, and the shapes may change. Many other variations are possible.
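To illustrate how adjacency grouping might be made independent of the particular characteristic used, the following sketch finds the group of connected objects sharing a characteristic with a selected object by means of a breadth-first flood fill. The flood fill is a technique chosen for this sketch, not one named in the description. The function name `find_group`, the dictionary representation of a bead, the `key` parameter, and the use of edge-sharing (four-way) adjacency are likewise assumptions.

```python
from collections import deque
from typing import Any, Callable, List, Optional, Set, Tuple

Cell = Tuple[int, int]


def find_group(grid: List[List[Optional[Any]]],
               start: Cell,
               key: Callable[[Any], Any] = lambda bead: bead) -> Set[Cell]:
    """Return the cells connected to `start` whose beads share key(bead).

    `key` extracts the characteristic to compare (color, shape, value, ...).
    """
    r0, c0 = start
    if grid[r0][c0] is None:
        return set()
    target = key(grid[r0][c0])
    seen = {start}
    queue = deque([start])
    while queue:
        r, c = queue.popleft()
        for dr, dc in ((1, 0), (-1, 0), (0, 1), (0, -1)):
            nr, nc = r + dr, c + dc
            if not (0 <= nr < len(grid) and 0 <= nc < len(grid[nr])):
                continue                      # off the play area
            if (nr, nc) in seen or grid[nr][nc] is None:
                continue                      # already visited or empty
            if key(grid[nr][nc]) == target:
                seen.add((nr, nc))
                queue.append((nr, nc))
    return seen


if __name__ == "__main__":
    beads = [[{"color": "red", "shape": "round"}, {"color": "red", "shape": "square"}],
             [{"color": "blue", "shape": "round"}, {"color": "red", "shape": "square"}]]
    print(sorted(find_group(beads, (0, 0), key=lambda b: b["color"])))
    print(sorted(find_group(beads, (0, 0), key=lambda b: b["shape"])))
```

Passing a different `key` function changes the grouping rule without changing the search itself, which is one way the same routine could serve color, shape, animation, or value matching.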

FIG. 16 is a block diagram of an exemplary computer system 1600 for use with implementations described in FIGS. 1-15. Computer system 1600 is merely illustrative and not intended to limit the scope of the claims. One of ordinary skill in the art would recognize other variations, modifications, and alternatives. For example, computer system 1600 may be implemented in a distributed client-server configuration having one or more client devices in communication with one or more server systems.

In one exemplary implementation, computer system 1600 includes a display device such as a monitor 1610, computer 1620, a data entry device 1630 such as a keyboard, touch device, and the like, a user input device 1640, a network communication interface 1650, and the like. User input device 1640 is typically embodied as a computer mouse, a trackball, a track pad, wireless remote, tablet, touch screen, and the like. Moreover, user input device 1640 typically allows a user to select and operate objects, icons, text, characters, and the like that appear, for example, on the monitor 1610.

Network interface 1650 typically includes an Ethernet card, a modem (telephone, satellite, cable, ISDN), (asymmetric) digital subscriber line (DSL) unit, and the like. Further, network interface 1650 may be physically integrated on the motherboard of computer 1620, may be a software program, such as soft DSL, or the like.

Computer system 1600 may also include software that enables communications over communication network 1652, such as the HTTP, TCP/IP, and RTP/RTSP protocols, wireless application protocol (WAP), IEEE 802.11 protocols, and the like. Additionally or alternatively, other communications software and transfer protocols may also be used, for example IPX, UDP, or the like.

Communication network 1652 may include a local area network, a wide area network, a wireless network, an Intranet, the Internet, a private network, a public network, a switched network, or any other suitable communication network, such as, for example, Cloud networks. Communication network 1652 may include many interconnected computer systems and any suitable communication links such as hardwire links, optical links, satellite or other wireless communications links such as BLUETOOTH, WIFI, wave propagation links, or any other suitable mechanisms for communication of information. For example, communication network 1652 may communicate to one or more mobile wireless devices 1656A-N, such as mobile phones, tablets, and the like, via a base station such as wireless transceiver 1654.

Computer 1620 typically includes familiar computer components such as one or more processors 1660, and memory storage devices, such as memory 1670, e.g., random access memory (RAM), storage media 1680, and system bus 1690 interconnecting the above components. In one embodiment, computer 1620 is a PC compatible computer having multiple microprocessors, graphics processing units (GPU), and the like. While a computer is shown, it will be readily apparent to one of ordinary skill in the art that many other hardware and software configurations are suitable for use with the present invention.

Memory 1670 and storage media 1680 are examples of non-transitory tangible media for storage of data, audio/video files, computer programs, and the like. Other types of tangible media include disk drives, solid-state drives, floppy disks, optical storage media such as CD-ROMS and bar codes, semiconductor memories such as flash drives, flash memories, read-only-memories (ROMS), battery-backed volatile memories, networked storage devices, Cloud storage, and the like.

Any suitable programming language can be used to implement the routines of particular embodiments including C, C++, Java, assembly language, etc. Different programming techniques can be employed such as procedural or object oriented. The routines can execute on a single processing device or multiple processors. Although the steps, operations, or computations may be presented in a specific order, this order may be changed in different particular embodiments. In some particular embodiments, multiple steps shown as sequential in this specification can be performed at the same time.

Particular embodiments may be implemented in a computer-readable storage medium for use by or in connection with the instruction execution system, apparatus, system, or device. Particular embodiments can be implemented in the form of control logic in software or hardware or a combination of both. The control logic, when executed by one or more processors, may be operable to perform that which is described in particular embodiments. For example, a tangible medium such as a hardware storage device can be used to store the control logic, which can include executable instructions.

Particular embodiments may be implemented by using a programmed general purpose digital computer, by using application specific integrated circuits, programmable logic devices, field programmable gate arrays, optical, chemical, biological, quantum or nanoengineered systems, etc. Other components and mechanisms may be used. In general, the functions of particular embodiments can be achieved by any means as is known in the art. Distributed, networked systems, components, and/or circuits can be used. Cloud computing or cloud services can be employed. Communication, or transfer, of data may be wired, wireless, or by any other means.

It will also be appreciated that one or more of the elements depicted in the drawings/figures can also be implemented in a more separated or integrated manner, or even removed or rendered as inoperable in certain cases, as is useful in accordance with a particular application. It is also within the spirit and scope to implement a program or code that can be stored in a machine-readable medium to permit a computer to perform any of the methods described above.

A “processor” includes any suitable hardware and/or software system, mechanism or component that processes data, signals or other information. A processor can include a system with a general-purpose central processing unit, multiple processing units, dedicated circuitry for achieving functionality, or other systems. Processing need not be limited to a geographic location, or have temporal limitations. For example, a processor can perform its functions in “real time,” “offline,” in a “batch mode,” etc. Portions of processing can be performed at different times and at different locations, by different (or the same) processing systems. Examples of processing systems can include servers, clients, end user devices, routers, switches, networked storage, etc. A computer may be any processor in communication with a memory. The memory may be any suitable processor-readable storage medium, such as random-access memory (RAM), read-only memory (ROM), magnetic or optical disk, or other tangible media suitable for storing instructions for execution by the processor.

As used in the description herein and throughout the claims that follow, “a”, “an”, and “the” include plural references unless the context clearly dictates otherwise. Also, as used in the description herein and throughout the claims that follow, the meaning of “in” includes “in” and “on” unless the context clearly dictates otherwise.

Thus, while particular embodiments have been described herein, latitudes of modification, various changes, and substitutions are intended in the foregoing disclosures, and it will be appreciated that in some instances some features of particular embodiments will be employed without a corresponding use of other features without departing from the scope and spirit as set forth. Therefore, many modifications may be made to adapt a particular situation or material to the essential scope and spirit.

Claims

1. A method for controlling a computer game, the method comprising: displaying a plurality of objects on a gameplay area at a first game interval generating a first game display configuration; accepting a user input to cause selecting of one or more of the plurality of objects; in response to the selecting, performing the following: removing the selected objects from the gameplay area; and moving other objects into a space formerly occupied by the removed objects by employing a fluid dynamics model to simulate a fluid motion of the other objects.

2. The method of claim 1, wherein the other objects are moved into the space from alternating directions including left-to-right and right-to-left.

3. The method of claim 1, wherein the other objects are moved into the space from alternating directions including top-to-bottom and bottom-to-top.

4. The method of claim 1, wherein an object is moved into the space formerly occupied by the removed objects from a position off of the display.

5. The method of claim 1, wherein objects are slid along a predetermined direction in order to fill the space formerly occupied by the removed objects.

6. The method of claim 5, wherein when an object is slid into the space it becomes adjacent to one or more stationary objects.

7. The method of claim 1, wherein the removing further comprises: removing moved objects and stationary objects having a common characteristic that are adjacent to one another.

8. The method of claim 7, wherein the common characteristic includes color.

9. The method of claim 7, wherein the common characteristic includes shape.

10. The method of claim 7, wherein the common characteristic includes animation.

11. The method of claim 7, wherein the common characteristic includes a value.

12. The method of claim 11, wherein the value is indicated by a number.

13. The method of claim 11, wherein the value is indicated by one or more symbols.

14. The method of claim 1, further comprising: automatically repeating the removing if the moving of one or more of the other objects causes a condition to be met.

15. The method of claim 14, further comprising: employing a physics model to simulate collisions between objects moving into the at least a portion of the space.

16. A computer implemented method for a user interface for a video game played on a computing device, the method comprising: displaying a plurality of objects on a gameplay area at a first game interval generating a first game display configuration; accepting a user input to cause selecting of one or more of the plurality of objects; in response to the selecting, performing the following: removing adjacent objects that match at least one characteristic of the selected objects from the gameplay area; and moving other objects in a fluid simulation to simulate the other objects moving as flowing into a space formerly occupied by the removed objects, wherein the objects are moved into the space from at least two alternating directions.

17. The computer implemented method of claim 16, wherein the at least two alternate directions include left-to-right and right-to-left.

18. The computer implemented method of claim 16, wherein the at least two alternating directions include top-to-bottom and bottom-to-top.
