U.S. Pat. No. 10,953,330

REALITY VS VIRTUAL REALITY RACING

Assignee: BUXTON GLOBAL ENTERPRISES, INC.

Filing Date: July 22, 2019

U.S. Patent No. 10,953,330: Reality vs Virtual Reality Racing

Issued March 23, 2021, to Buxton Global Enterprises, Inc.

Filed: July 22, 2019 (claiming priority to July 7, 2017)

Overview:

U.S. Patent No. 10,953,330 (the ’330 patent) relates to tracking the locations of a physical object and a virtual vehicle on a racetrack and displaying them so that physical and virtual racing merge. The ’330 patent describes a method for displaying a virtual vehicle in which the positions of several points of view at a racecourse, sometimes including one inside a physical vehicle, are provided to a simulation system that computes the virtual vehicle. The system mirrors the real-world points of view in the virtual world so that virtual and real drivers can compete in the “same” space. The physical object is likewise represented in the virtual world. As the virtual vehicle moves, the simulation system uses the virtual points of view to calculate which parts of the virtual vehicle are visible from each real-world point of view, including when the vehicle is obscured by physical objects. The visible parts are then provided to a display system and shown, in some versions, to a real-life driver or an audience.

The ’330 patent aims to let racers from the physical and virtual worlds compete in the same space. In some versions, the ’330 patent provides predictive information to audience members, such as trajectory information or the likelihood of a virtual vehicle overtaking a physical one. This could be an interesting fusion of e-sports and real-life sports for players, drivers, and audiences alike.

Abstract:

A method for displaying a virtual vehicle includes identifying a position of a physical vehicle at a racecourse, identifying a position of a point of view at the racecourse, providing a portion of the virtual vehicle visible from a virtual position of the point of view. The method operates by calculating the virtual position within a virtual world based on the position of the point of view. A system for displaying virtual vehicles includes a first sensor detecting a position of a physical vehicle at a racecourse, a second sensor detecting a position of a point of view at the racecourse, and a simulation system providing a portion of the virtual vehicle visible from a virtual position of the point of view. The simulation system is configured to calculate the virtual position of the point of view within a virtual world based on the position of the point of view.

Illustrative Claim:

The invention claimed is:

- A method for displaying a virtual vehicle comprising:
  identifying respective positions of multiple points of view at a racecourse;
  providing the respective positions of the points of view at the racecourse to a simulation system;
  providing a position of a physical object at the racecourse to a simulation system;
  calculating, by the simulation system, a virtual world comprising the virtual vehicle;
  calculating, by the simulation system, respective virtual positions of the points of view within the virtual world based on the respective positions of the points of view at the racecourse;
  calculating, by the simulation system, a representation of the physical object in the virtual world between the respective virtual positions of the points of view and the virtual vehicle within the virtual world;
  calculating, by the simulation system, respective portions of the virtual vehicle within the virtual world that are visible from the corresponding virtual positions of the points of view, wherein the respective portions of the virtual vehicle within the virtual world that are visible from the corresponding virtual positions of the points of view comprise respective portions of the virtual vehicle that are unobscured, from the respective virtual position, by the representation of the physical object;
  outputting, by the simulation system, the respective portions of the virtual vehicle visible from the virtual positions of the points of view;
  providing, to a display system, the respective portions of the virtual vehicle visible from the virtual positions of the points of view;
  generating, at the display system, representations of the respective portions of the virtual vehicle visible from the virtual positions of the points of view; and
  displaying a series of representations of the virtual vehicle over a period of time to simulate a trajectory of the virtual vehicle on the racecourse, wherein the series of representations comprises the generated representations.

Description

DETAILED DESCRIPTION

Embodiments described herein merge real world and virtual world racing competitions. For example, real world racing champions and virtual world racing champions can compete to determine an overall champion. Advantageously, each champion can stay within their respective “world” and still compete with a champion from another “world.” In effect, embodiments described herein enable live participants to compete against virtual participants.

The terms “physical” and “real-world” are used interchangeably herein and to contrast with “virtual world.” For example, a “physical vehicle” or “real-world vehicle” can be physically present on or at a racecourse. A “virtual vehicle” cannot be physically present on the same racecourse. For example, a “virtual vehicle” may be a graphically generated vehicle that is shown on a display. In some embodiments, a “virtual vehicle” is a representation in a software-based environment.

In some embodiments, a method for displaying a virtual vehicle includes identifying a position of a physical vehicle at a racecourse, identifying a position of a point of view at the racecourse, and providing, to a display system, a portion of the virtual vehicle visible from a virtual position of the point of view. Problems solved by embodiments disclosed herein can include overcoming the lack of realism experienced by users of prior solutions. In some embodiments herein, providing visible portions of the virtual vehicle to the user increases the realism experienced by the user. The increased realism provides a reliable and re-producible user experience by providing a real-world race that includes a virtual vehicle.

In some embodiments, the visible portion of the virtual vehicle is calculated based on a virtual position of the physical vehicle in a virtual world, a virtual position of the point of view in the virtual world, and a virtual position of the virtual vehicle in the virtual world. Problems solved by embodiments disclosed herein can include how to provide a visible portion of a virtual vehicle. In some embodiments herein, providing visible portions of the virtual vehicle through a virtual calculation of the visible portion increases the accuracy of the visible portion determination. The increased accuracy provides a reliable and re-producible user experience by providing a real-world race that includes a virtual vehicle. In some embodiments herein, providing visible portions through a virtual calculation increases the efficiency of the calculation. The increased efficiency reduces power usage and improves representation speed for a more seamless user experience.

In some embodiments, the portion of the virtual vehicle visible from the virtual position of the point of view includes a portion of the virtual vehicle that is unobscured, from the virtual position of the point of view, by a representation of the physical vehicle at a virtual position of the physical vehicle in the virtual world.

In some embodiments, the method further includes simulating, by the simulation system, an interaction between the virtual vehicle and the representation of the physical vehicle in the virtual world, and the portion of the virtual vehicle visible from the virtual position of the point of view is calculated based on the interaction.

In some embodiments, the position of the point of view at the racecourse includes a point of view of an operator of the physical vehicle, and identifying a position of a point of view at the racecourse includes detecting, at a sensor, the point of view of the operator of the physical vehicle, the method further including: identifying a position of a physical object; receiving kinematics information of the virtual vehicle; generating, at a display system, a representation of the virtual vehicle based on the position of the physical object, the position of the point of view at the racecourse, and the kinematics information; and displaying the representation of the virtual vehicle such that the virtual vehicle is aligned with the physical object from the perspective of the position of the point of view at the racecourse.

In some embodiments, the method further includes generating, at a display system, the representation of the portion of the virtual vehicle visible from the virtual position of the point of view.

In some embodiments, the method further includes displaying, by the display system, a series of representations of the virtual vehicle over a period of time to simulate a trajectory of the virtual vehicle on the racecourse, wherein the series of representations includes the representation of the portion of the virtual vehicle visible from the virtual position of the point of view. In some embodiments, a predicted trajectory of the virtual vehicle is displayed. The prediction may be based on current trajectory, acceleration, current vehicle parameters, etc. This may allow an audience member to anticipate if a virtual vehicle is likely to overtake a physical vehicle. The predicted trajectory may be presented as a line, such as a yellow line. Other displays may also be included, such as “GOING TO PASS!” or “GOING TO CRASH!”
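As a rough illustration of how such a prediction could be computed, the sketch below extrapolates both vehicles along the track under constant acceleration and reports whether the virtual vehicle is predicted to pass. The patent does not specify a prediction model; the one-dimensional track coordinate, the `TrackState` class, and the `will_overtake` helper are assumptions introduced here for illustration only.

```python
from dataclasses import dataclass


@dataclass
class TrackState:
    """Hypothetical 1-D vehicle state measured along the racing line."""
    s: float  # distance travelled along the track, in metres
    v: float  # speed, in metres per second
    a: float  # acceleration, in metres per second squared


def predict(state: TrackState, t: float) -> float:
    """Distance along the track after t seconds, assuming constant acceleration."""
    return state.s + state.v * t + 0.5 * state.a * t * t


def will_overtake(virtual: TrackState, physical: TrackState,
                  horizon: float, dt: float = 0.1) -> bool:
    """True if the virtual vehicle, currently behind, is predicted to be
    ahead of the physical vehicle at some sampled time within the horizon."""
    if virtual.s >= physical.s:
        return False  # already level or ahead, so there is nothing to overtake
    steps = int(round(horizon / dt))
    for i in range(1, steps + 1):
        t = i * dt
        if predict(virtual, t) > predict(physical, t):
            return True
    return False
```

A display layer could use a result like this to decide whether to show a message such as “GOING TO PASS!”; a real system would likely account for track geometry and vehicle parameters as well.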

In some embodiments, the method further includes storing, by the display system, a digital 3-D model of the virtual vehicle used to generate each representation from the series of representations, wherein each representation is generated by the display system based on the digital 3-D model.

In some embodiments, the method further includes receiving a digital 3-D model of the virtual vehicle used to generate each representation from the series of representations, wherein each representation is generated by the display system based on the digital 3-D model.

In some embodiments, the kinematics information includes one or more vectors of motion, one or more scalars of motion, a position vector, a GPS location, a velocity, an acceleration, an orientation, or a combination thereof of the virtual vehicle.

In some embodiments, identifying the position of the physical vehicle includes detecting one or more vectors of motion, one or more scalars of motion, a position vector, a GPS location, a velocity, an acceleration, an orientation, or a combination thereof of the physical vehicle.

In some embodiments, identifying the position of the point of view at the racecourse includes detecting a spatial position of a head of an operator of the physical vehicle. In some embodiments, the method further includes transmitting, by a telemetry system coupled to the physical vehicle, the spatial position to a simulator system; receiving, at the telemetry system, information related to the portion of the virtual vehicle visible from the virtual position of the point of view; and displaying, to the operator of the physical vehicle, the representation of the portion of the virtual vehicle based on the information.

In some embodiments, displaying the representation of the portion of the virtual vehicle includes translating the information into a set of graphical elements, and displaying the representation of the portion includes displaying the set of graphical elements. In some embodiments, the method further includes computing, at the simulation system, the information related to the portion visible from the virtual position of the point of view.

In some embodiments, displaying the series of representations of the virtual vehicle includes displaying the series of representations on a display of the physical vehicle, and the display is a transparent organic light-emitting diode (T-OLED) display that allows light to pass through the T-OLED to display the field of view to the operator.

In some embodiments, displaying the series of representations of the virtual vehicle includes displaying the series of representations on a display of the physical vehicle, and the display is an LCD display, the method further including: capturing, by a camera coupled to the physical vehicle, an image representing the field of view of the physical world as seen by the operator on the display in the physical vehicle; and outputting the image on a side of the LCD display to display the field of view to the operator, the series of representations are overlaid on the image displayed by the LCD display.
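The overlay step described above can be pictured as conventional alpha compositing of the rendered representation over the camera frame. The following is a minimal sketch, assuming 8-bit RGB camera pixels and 8-bit RGBA overlay pixels stored as nested lists of tuples; the function name `composite_over` and the data layout are illustrative assumptions and are not taken from the patent.

```python
def composite_over(camera_rgb, overlay_rgba):
    """Blend an RGBA overlay onto an RGB camera frame of the same size.

    Both images are lists of rows; each row is a list of pixel tuples with
    channel values in the range 0..255. Returns a new RGB image.
    """
    out = []
    for cam_row, ov_row in zip(camera_rgb, overlay_rgba):
        row = []
        for (cr, cg, cb), (r, g, b, a) in zip(cam_row, ov_row):
            alpha = a / 255.0  # 0.0 is fully transparent, 1.0 is fully opaque
            row.append((
                round(alpha * r + (1.0 - alpha) * cr),
                round(alpha * g + (1.0 - alpha) * cg),
                round(alpha * b + (1.0 - alpha) * cb),
            ))
        out.append(row)
    return out
```

With this layout, overlay pixels whose alpha is zero leave the camera image untouched, so only the visible portions of the virtual vehicle appear over the captured field of view.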

In some embodiments, displaying the series of representations of the virtual vehicle includes displaying the series of representations on a display of the physical vehicle, and the display includes a front windshield of the physical vehicle, one or more side windows of the physical vehicle, a rear windshield of the physical vehicle, one or more side mirrors, a rearview mirror, or a combination thereof.

In some embodiments, displaying the series of representations of the virtual vehicle includes displaying the series of representations on a display of a headset worn by the operator. In some embodiments, the headset is a helmet.

In some embodiments, identifying the position of the point of view at the racecourse includes detecting one or more of a spatial position of a user's eyes, a gaze direction of the user's eyes, or a focus point of the user's eyes.

In some embodiments, the method further includes: providing the position of the physical vehicle and the position of the point of view at the racecourse to a simulation system; calculating, by the simulation system, a virtual world including the virtual vehicle and a representation of the physical vehicle; calculating, by the simulation system, a virtual position of the point of view within the virtual world based on the position of the point of view at the racecourse; and calculating, by the simulation system, the portion of the virtual vehicle visible from the virtual position of the point of view, and providing, to a display system, the portion of the virtual vehicle visible from the virtual position of the point of view includes outputting, by the simulation system, the portion of the virtual vehicle visible from the virtual position of the point of view. Problems solved by embodiments disclosed herein can include how to calculate a visible portion of a virtual vehicle. In some embodiments herein, calculating the visible portion of the virtual vehicle in a virtual world increases the accuracy of the visible portion determination. The increased accuracy provides a reliable and re-producible user experience by providing a real-world race that includes a virtual vehicle. In some embodiments herein, providing visible portions through a virtual calculation increases the efficiency of the calculation. The increased efficiency reduces power usage and improves representation speed for a more seamless user experience.

In some embodiments, identifying the position of the physical vehicle includes receiving a location of each of two portions of the vehicle. In some embodiments, identifying the position of the physical vehicle includes receiving a location of one portion of the vehicle and an orientation of the vehicle. In some embodiments, receiving the orientation of the vehicle includes receiving gyroscope data. Problems solved by embodiments disclosed herein can include how to correctly position a physical vehicle in a virtual world for determining a visible portion of a virtual vehicle. In some embodiments herein, using a measure of orientation provides for accurate placement of the physical vehicle in the virtual world. The increased accuracy provides for a more faithful display of the visible portions of the vehicle, thereby improving the user experience.
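The two alternatives above (two tracked points, or one tracked point plus an orientation) both yield the same kind of pose. The sketch below shows one way this could work in a flat 2-D track frame; the function names, the choice of front and rear reference points, and the `offset` parameter are hypothetical and only serve to illustrate the idea.

```python
import math


def pose_from_two_points(front, rear):
    """Return (centre_x, centre_y, heading) from two tracked points on the vehicle.

    `front` and `rear` are (x, y) tuples in a common 2-D track frame;
    heading is in radians, measured counter-clockwise from the x axis.
    """
    fx, fy = front
    rx, ry = rear
    heading = math.atan2(fy - ry, fx - rx)
    return (fx + rx) / 2.0, (fy + ry) / 2.0, heading


def pose_from_point_and_heading(point, heading, offset):
    """Return the same pose from one tracked point plus an orientation,
    for example a heading derived from gyroscope data.

    `offset` is the assumed distance from the tracked point back to the
    vehicle centre along the heading direction.
    """
    px, py = point
    return (px - offset * math.cos(heading),
            py - offset * math.sin(heading),
            heading)
```

Either function gives the simulation system enough information to place and orient a representation of the physical vehicle in the virtual world.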

In some embodiments, the position of the point of view at the racecourse includes a position of a point of view of an operator of the physical vehicle at the racecourse. In some embodiments, the position of the point of view at the racecourse includes a position of a point of view of an audience member present at a racecourse and observing the physical vehicle on the racecourse. In some embodiments, the position of the point of view at the racecourse includes a position of a camera present at a racecourse and imaging the physical vehicle on the racecourse. In some embodiments, the camera images a portion of the racecourse on which the physical vehicle is racing. When the physical vehicle is travelling across the portion of the racecourse being captured by the camera, the camera may capture the physical vehicle in its video feed. When the physical vehicle is not travelling across the portion of the racecourse being captured by the camera, the camera may still capture the portion of the racecourse.

In some embodiments, identifying the position of the point of view at the racecourse includes at least one of measuring a point of gaze of eyes, tracking eye movement, tracking head position, identifying a vector from one or both eyes to a fixed point on the physical vehicle, identifying a vector from a point on the head to a fixed point on the physical vehicle, identifying a vector from a point on eye-wear to a fixed point on the physical vehicle, identifying a vector from a point on a head gear to a fixed point on the physical vehicle, identifying a vector from one or both eyes to a fixed point in a venue, identifying a vector from a point on the head to a fixed point in the venue, identifying a vector from a point on eye-wear to a fixed point in the venue, or identifying a vector from a point on a head gear to a fixed point in the venue. In some embodiments, identifying the position of the point of view at the racecourse includes measuring the point of gaze of the eyes and the measuring includes measuring light reflection or refraction from the eyes.
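Several of the listed options reduce to simple vector arithmetic once both points are known in a common coordinate frame. As a hedged example, the sketch below computes the direction from a tracked point (such as a point on the head or on head gear) to a fixed reference point; the function name `unit_vector` and the use of 3-D tuples are assumptions made for illustration.

```python
import math


def unit_vector(origin, target):
    """Return the unit direction vector pointing from `origin` to `target`.

    Both arguments are (x, y, z) tuples in the same coordinate frame.
    """
    delta = [t - o for o, t in zip(origin, target)]
    length = math.sqrt(sum(c * c for c in delta))
    if length == 0.0:
        raise ValueError("origin and target coincide; direction is undefined")
    return tuple(c / length for c in delta)
```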

In some embodiments, providing the position of the physical vehicle and the position of the point of view at the racecourse includes wirelessly transmitting at least one position.

In some embodiments, calculating a virtual world includes transforming physical coordinates of the physical vehicle to coordinates in the virtual world and the virtual position of the physical vehicle includes the virtual coordinates.
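The description does not state what form the coordinate transformation takes. One simple possibility, sketched below under that assumption, is a 2-D similarity transform (uniform scale, rotation, translation) between a local track frame and the virtual world; the `WorldTransform` class and its field names are hypothetical.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class WorldTransform:
    """Hypothetical similarity transform from physical to virtual coordinates."""
    scale: float     # virtual units per physical metre
    rotation: float  # radians, counter-clockwise
    tx: float        # translation applied after scaling and rotation
    ty: float

    def to_virtual(self, x, y):
        """Map a physical (x, y) position to virtual-world coordinates."""
        c, s = math.cos(self.rotation), math.sin(self.rotation)
        return (self.scale * (c * x - s * y) + self.tx,
                self.scale * (s * x + c * y) + self.ty)

    def to_physical(self, vx, vy):
        """Inverse mapping, from virtual-world coordinates back to physical."""
        c, s = math.cos(self.rotation), math.sin(self.rotation)
        dx, dy = (vx - self.tx) / self.scale, (vy - self.ty) / self.scale
        return (c * dx + s * dy, -s * dx + c * dy)
```

Applying the same transform to the physical vehicle and to each point of view keeps their relative geometry consistent in the virtual world, which is what the visibility calculation relies on.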

In some embodiments, calculating the portion of the virtual vehicle visible from the virtual position of the point of view includes: calculating a representation of the physical vehicle in the virtual world, calculating a representation of a physical object in the virtual world between the point of view and the virtual vehicle within the virtual world, and extracting a portion of the virtual vehicle that is unobscured, from the virtual position of the point of view, by the representation of the physical vehicle and the representation of the physical object. In some embodiments, the portion of the virtual vehicle within the virtual world that is visible from the virtual position of the point of view includes the unobscured portion. Problems solved by embodiments disclosed herein can include how to calculate a visible portion of a virtual vehicle, including more than just the portion that is not obscured by the physical vehicle. In some embodiments herein, calculating the visible portion in a virtual world that includes physical objects in the real world increases the accuracy of the visible portion determination. The increased accuracy provides a reliable and re-producible user experience by providing a real-world race that includes a virtual vehicle. In some embodiments herein, providing visible portions through a virtual calculation increases the efficiency of the calculation. The increased efficiency reduces power usage and improves representation speed for a more seamless user experience.
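To make the extraction step concrete, the sketch below tests sample points on the virtual vehicle against obstacles lying between them and the point of view. It is a simplification made for illustration: obstacles (the physical vehicle or other physical objects) are approximated as bounding spheres, and the helper names `segment_hits_sphere` and `visible_points` are not from the patent.

```python
def _dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def segment_hits_sphere(p0, p1, centre, radius):
    """True if the line segment from p0 to p1 passes through the sphere."""
    d = [b - a for a, b in zip(p0, p1)]      # segment direction
    f = [a - c for a, c in zip(p0, centre)]  # sphere centre to segment start
    dd = _dot(d, d)
    if dd == 0.0:
        return _dot(f, f) <= radius * radius
    # Parameter of the point on the segment closest to the sphere centre.
    t = max(0.0, min(1.0, -_dot(f, d) / dd))
    closest = [fi + t * di for fi, di in zip(f, d)]
    return _dot(closest, closest) <= radius * radius


def visible_points(pov, vehicle_points, obstacles):
    """Return the vehicle sample points that no obstacle hides from `pov`.

    `obstacles` is a list of (centre, radius) pairs in virtual coordinates.
    """
    return [
        p for p in vehicle_points
        if not any(segment_hits_sphere(pov, p, c, r) for c, r in obstacles)
    ]
```

A production renderer would more likely rely on a depth buffer or ray casting against full 3-D models, but the principle of keeping only what is unobscured from the virtual position of the point of view is the same.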

In some embodiments, extracting the portions of the virtual vehicle may include determining which pixels are obstructed by other representations, and only displaying pixels that are not obstructed by other representations. In some embodiments, extracting the portions of the virtual vehicle may include setting a pixel alpha value of zero percent (in RGBA space) for all pixels obstructed by other representations. For example, portions of the virtual vehicle may be obstructed by other virtual representations, e.g., another virtual vehicle, or representations of physical objects, e.g., objects within a physical vehicle or the physical vehicle itself. Any observed (from the virtual position of the point of view) pixel values can be used to provide the portions of the virtual vehicle that are visible from the virtual position of the point of view. In some embodiments, the pixels of unobscured and observed portions of the virtual vehicle can each be set to include an alpha value greater than zero percent (in RGBA space) to indicate that those unobscured pixels can be seen and should be displayed. In contrast, pixels set to an alpha value of zero percent indicate that those pixels are fully transparent, i.e., invisible, and would not be displayed.
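The alpha-based approach can be sketched as follows. This example assumes that a per-pixel depth is available both for the virtual vehicle and for the other representations in the scene, which is one common way to decide obstruction but is an assumption here rather than something the description specifies; the function name `mask_obscured` is likewise illustrative.

```python
def mask_obscured(vehicle_rgba, vehicle_depth, scene_depth):
    """Set alpha to zero for vehicle pixels hidden behind other representations.

    vehicle_rgba:  rows of (r, g, b, a) tuples for the rendered virtual vehicle
    vehicle_depth: rows of distances from the point of view to the vehicle
    scene_depth:   rows of distances to the nearest other representation
    Returns a new image; pixels that remain visible keep their original alpha.
    """
    out = []
    for px_row, vd_row, sd_row in zip(vehicle_rgba, vehicle_depth, scene_depth):
        row = []
        for (r, g, b, a), vd, sd in zip(px_row, vd_row, sd_row):
            # Something closer to the viewer than the vehicle obstructs this pixel.
            row.append((r, g, b, 0) if sd < vd else (r, g, b, a))
        out.append(row)
    return out
```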

In some embodiments, calculating the representation of the physical object between the virtual position of the point of view and the representation of the physical vehicle includes accessing a database of representations to obtain a virtual position of the physical object.

In some embodiments, the portion of the virtual vehicle visible from the virtual position of the point of view consists of portions of the virtual vehicle that are unobscured by other representations in the virtual world.

In some embodiments, the portion of the virtual vehicle visible from the virtual position of the point of view includes a virtual shadow in the virtual world. In some embodiments, the virtual shadow is at least one of a shadow projected by the virtual vehicle and a shadow projected onto the virtual vehicle. In some embodiments, the portion of the virtual vehicle visible from the virtual position of the point of view includes a virtual reflection. In some embodiments, the virtual reflection is at least one of a reflection of the virtual vehicle and a reflection on the virtual vehicle.

In some embodiments, calculating, by the simulation system, a portion of the virtual vehicle within the virtual world that is visible from the virtual position of the point of view includes calculating a field of view from the virtual position of the point of view and providing, to the display system, the portion of the virtual vehicle visible from the virtual position of the point of view includes displaying the portion of the virtual vehicle within the field of view.

In some embodiments, calculating, by the simulation system, a portion of the virtual vehicle within the virtual world that is visible from the position of the virtual point of view includes calculating a field of view from the virtual position of the point of view and providing, to the display system, the portion of the virtual vehicle visible from the virtual position of the point of view consists of displaying the portion of the virtual vehicle visible within the field of view.
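As an illustration of a field-of-view calculation, the sketch below models the field of view as a cone around a gaze direction and tests whether a point on the virtual vehicle falls inside it. The cone model, the `half_angle_deg` parameter, and the function name `in_field_of_view` are assumptions made for this example; the description does not prescribe a particular field-of-view shape.

```python
import math


def in_field_of_view(pov, gaze, target, half_angle_deg):
    """True if `target` lies within a cone of the given half-angle around `gaze`.

    pov:    (x, y, z) virtual position of the point of view
    gaze:   (x, y, z) gaze direction; it need not be normalised
    target: (x, y, z) point on the virtual vehicle
    """
    to_target = [t - p for p, t in zip(pov, target)]
    dist = math.sqrt(sum(c * c for c in to_target))
    gaze_len = math.sqrt(sum(c * c for c in gaze))
    if gaze_len == 0.0:
        raise ValueError("gaze direction must be non-zero")
    if dist == 0.0:
        return True  # target coincides with the point of view
    cos_angle = sum(g * c for g, c in zip(gaze, to_target)) / (gaze_len * dist)
    return cos_angle >= math.cos(math.radians(half_angle_deg))
```

Points that fail this test can be skipped before any occlusion work is done, since they would not be displayed in either case.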

In some embodiments, the method may facilitate a competition between two virtual vehicles on a physical racecourse. In a scenario where two virtual vehicles compete on a physical racecourse without any physical vehicles, the step of “identifying a position of a physical vehicle” would be unnecessary. The method could include identifying a position of a point of view at the racecourse and providing, to a display system, a portion of the virtual vehicle visible from the position of the point of view at the racecourse. All aspects of the foregoing methods not concerning the position of the physical vehicle could be applied in such an embodiment. In some embodiments, the virtual vehicles are given special properties and a video game appearance. In some embodiments, video game attributes (i.e., virtual objects) can be similarly applied to physical vehicles by overlaying those video game attributes on top of the physical vehicles. For example, cars can be given boosts, machine guns, missiles (other graphical virtual objects put into the real world view), virtual jumps, etc. Viewers at the racecourse and at home could view the virtual competitors on the physical racecourse as if competing in the real world.

In some embodiments, a method for displaying a virtual vehicle includes means for identifying a position of a physical vehicle at a racecourse, means for identifying a position of a point of view at the racecourse, and means for providing, to a display system, a portion of the virtual vehicle visible from a virtual position of the point of view calculated within a virtual world based on the position of the point of view at the racecourse. Problems solved by embodiments disclosed herein can include overcoming the lack of realism experienced by users of prior solutions. In some embodiments herein, providing visible portions of the virtual vehicle to the user increases the realism experienced by the user. The increased realism provides a reliable and re-producible user experience by providing a real-world race that includes a virtual vehicle.

In some embodiments, the portion of the virtual vehicle visible from the virtual position of the point of view includes a portion of the virtual vehicle that is unobscured, from the virtual position of the point of view, by a representation of the physical vehicle at a virtual position of the physical vehicle in the virtual world. Problems solved by embodiments disclosed herein can include how to provide a visible portion of a virtual vehicle. In some embodiments herein, providing visible portions of the virtual vehicle through a virtual calculation of the visible portion increases the accuracy of the visible portion determination. The increased accuracy provides a reliable and re-producible user experience by providing a real-world race that includes a virtual vehicle. In some embodiments herein, providing visible portions through a virtual calculation increases the efficiency of the calculation. The increased efficiency reduces power usage and improves representation speed for a more seamless user experience.

In some embodiments, the method further includes means for simulating, by the simulation system, an interaction between the virtual vehicle and the representation of the physical vehicle in the virtual world, and the portion of the virtual vehicle visible from the virtual position of the point of view is calculated based on the interaction.

In some embodiments, the position of the point of view at the racecourse includes a point of view of an operator of the physical vehicle, and means for identifying a position of a point of view at the racecourse includes means for detecting, at a sensor, the point of view of the operator of the physical vehicle, the method further including: means for identifying a position of a physical object; means for receiving kinematics information of the virtual vehicle; means for generating, at a display system, a representation of the virtual vehicle based on the position of the physical object, the position of the point of view at the racecourse, and the kinematics information; and means for displaying the representation of the virtual vehicle such that the virtual vehicle is aligned with the physical object from the perspective of the position of the point of view at the racecourse. Problems solved by embodiments disclosed herein can include how to calculate a visible portion of a virtual vehicle. In some embodiments herein, calculating the visible portion of the virtual vehicle in a virtual world increases the accuracy of the visible portion determination. The increased accuracy provides a reliable and re-producible user experience by providing a real-world race that includes a virtual vehicle. In some embodiments herein, providing visible portions through a virtual calculation increases the efficiency of the calculation. The increased efficiency reduces power usage and improves representation speed for a more seamless user experience.

In some embodiments, the method further includes means for generating, at a display system, the representation of the portion of the virtual vehicle visible from the virtual position of the point of view.

In some embodiments, the method further includes means for displaying, by the display system, a series of representations of the virtual vehicle over a period of time to simulate a trajectory of the virtual vehicle on the racecourse, wherein the series of representations includes the representation of the portion of the virtual vehicle visible from the virtual position of the point of view. In some embodiments, a predicted trajectory of the virtual vehicle is displayed. The prediction may be based on current trajectory, acceleration, current vehicle parameters, etc. This may allow an audience member to anticipate if a virtual vehicle is likely to overtake a physical vehicle. The predicted trajectory may be presented as a line, such as a yellow line. Other displays may also be included, such as “GOING TO PASS!” or “GOING TO CRASH!”

In some embodiments, the method further includes means for storing, by the display system, a digital 3-D model of the virtual vehicle used to generate each representation from the series of representations, each representation is generated by the display system based on the digital 3-D model.

In some embodiments, the method further includes means for receiving a digital 3-D model of the virtual vehicle used to generate each representation from the series of representations, each representation is generated by the display system based on the digital 3-D model.

In some embodiments, the kinematics information includes one or more vectors of motion, one or more scalars of motion, a position vector, a GPS location, a velocity, an acceleration, an orientation, or a combination thereof of the virtual vehicle.

In some embodiments, means for identifying the position of the physical vehicle includes means for detecting one or more vectors of motion, one or more scalars of motion, a position vector, a GPS location, a velocity, an acceleration, an orientation, or a combination thereof of the physical vehicle.

In some embodiments, means for identifying the position of the point of view at the racecourse includes means for detecting a spatial position of a head of an operator of the physical vehicle. In some embodiments, the method further includes means for transmitting, by a telemetry system coupled to the physical vehicle, the spatial position to a simulator system; means for receiving, at the telemetry system, information related to the portion of the virtual vehicle visible from the virtual position of the point of view; and means for displaying, to the operator of the physical vehicle, the representation of the portion of the virtual vehicle based on the information.

In some embodiments, means for displaying the representation of the portion of the virtual vehicle includes means for translating the information into a set of graphical elements, and means for displaying the representation of the portion includes means for displaying the set of graphical elements. In some embodiments, the method further includes means for computing, at the simulation system, the information related to the portion visible from the virtual position of the point of view.

In some embodiments, means for displaying the series of representations of the virtual vehicle includes means for displaying the series of representations on a display of the physical vehicle, and the display is a transparent organic light-emitting diode (T-OLED) display that allows light to pass through the T-OLED to display the field of view to the operator.

In some embodiments, means for displaying the series of representations of the virtual vehicle includes means for displaying the series of representations on a display of the physical vehicle, and the display is an LCD display, the method further including: means for capturing, by a camera coupled to the physical vehicle, an image representing the field of view of the physical world as seen by the operator on the display in the physical vehicle; and means for outputting the image on a side of the LCD display to display the field of view to the operator, the series of representations are overlaid on the image displayed by the LCD display.
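One way to picture the overlay on a non-transparent display is standard alpha ("over") compositing of the rendered virtual-vehicle pixels onto the camera image. The sketch below assumes the overlay carries an alpha value in the range 0.0 to 1.0 per pixel; the function names and the nested-list image layout are hypothetical and chosen only to keep the example self-contained.

```python
def composite_over(overlay_rgba, camera_rgb):
    """Blend one overlay pixel over one camera pixel.

    overlay_rgba is (r, g, b, alpha) with alpha in [0.0, 1.0];
    camera_rgb is (r, g, b). Alpha 0.0 leaves the camera pixel unchanged.
    """
    r, g, b, alpha = overlay_rgba
    cr, cg, cb = camera_rgb
    return (
        alpha * r + (1.0 - alpha) * cr,
        alpha * g + (1.0 - alpha) * cg,
        alpha * b + (1.0 - alpha) * cb,
    )


def overlay_frame(overlay, camera):
    """Composite a whole overlay frame onto a camera frame of the same size."""
    return [
        [composite_over(o, c) for o, c in zip(overlay_row, camera_row)]
        for overlay_row, camera_row in zip(overlay, camera)
    ]


# Illustrative 1x2 frame: the first pixel shows the virtual vehicle (opaque red),
# the second is fully transparent, so the camera image (blue) shows through.
overlay = [[(255, 0, 0, 1.0), (255, 0, 0, 0.0)]]
camera = [[(0, 0, 255), (0, 0, 255)]]
frame = overlay_frame(overlay, camera)
```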

In some embodiments, means for displaying the series of representations of the virtual vehicle includes means for displaying the series of representations on a display of the physical vehicle, and the display includes a front windshield of the physical vehicle, one or more side windows of the physical vehicle, a rear windshield of the physical vehicle, one or more side mirrors, a rearview mirror, or a combination thereof.

In some embodiments, means for displaying the series of representations of the virtual vehicle includes means for displaying the series of representations on a display of a headset worn by the operator. In some embodiments, the headset is a helmet.

In some embodiments, means for identifying the position of the point of view at the racecourse includes means for detecting one or more of a spatial position of a user's eyes, a gaze direction of the user's eyes, or a focus point of the user's eyes.

In some embodiments, the method further includes: means for providing the position of the physical vehicle and the position of the point of view at the racecourse to a simulation system; means for calculating, by the simulation system, a virtual world including the virtual vehicle and a representation of the physical vehicle; means for calculating, by the simulation system, a virtual position of the point of view within the virtual world based on the position of the point of view at the racecourse; and means for calculating, by the simulation system, the portion of the virtual vehicle visible from the virtual position of the point of view, and means for providing, to a display system, the portion of the virtual vehicle visible from the virtual position of the point of view includes means for outputting, by the simulation system, the portion of the virtual vehicle visible from the virtual position of the point of view.

In some embodiments, means for identifying the position of the physical vehicle includes means for receiving a location of each of two portions of the vehicle.

In some embodiments, means for identifying the position of the physical vehicle includes means for receiving a location of one portion of the vehicle and an orientation of the vehicle. In some embodiments, means for receiving the orientation of the vehicle includes means for receiving gyroscope data. Problems solved by embodiments disclosed herein can include how to correctly position a physical vehicle in a virtual world for determining a visible portion of a virtual vehicle. In some embodiments herein, using a measure of orientation provides for accurate placement of the physical vehicle in the virtual world. The increased accuracy provides for a more faithful display of the visible portions of the vehicle, thereby improving the user experience.
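The two alternatives above (two located portions of the vehicle, or one located portion plus an orientation) both yield a position and a heading. The following sketch shows this in a flat 2-D track frame; the function names, the choice of front and rear reference points, and the planar simplification are assumptions made for illustration.

```python
import math


def pose_from_two_points(front, rear):
    """Derive (x, y, heading) from the locations of two portions of the vehicle.

    front and rear are (x, y) positions in a shared track frame. The heading is
    the angle of the rear-to-front vector, in radians, measured from the x axis.
    """
    x = (front[0] + rear[0]) / 2.0
    y = (front[1] + rear[1]) / 2.0
    heading = math.atan2(front[1] - rear[1], front[0] - rear[0])
    return x, y, heading


def pose_from_point_and_yaw(point, yaw_rad):
    """Derive (x, y, heading) from one located portion plus an orientation,
    for example a yaw angle integrated from gyroscope data."""
    return point[0], point[1], yaw_rad


pose_a = pose_from_two_points((2.0, 2.0), (0.0, 0.0))
pose_b = pose_from_point_and_yaw((1.0, 1.0), math.pi / 4)
```

Either pose is enough to place and orient a representation of the physical vehicle in the virtual world.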

In some embodiments, the position of the point of view at the racecourse includes a position of a point of view of an operator of the physical vehicle at the racecourse. In some embodiments, the position of the point of view at the racecourse includes a position of a point of view of an audience member present at a racecourse and observing the physical vehicle on the racecourse. In some embodiments, the position of the point of view at the racecourse includes a position of a camera present at a racecourse and the method further includes means for imaging the physical vehicle on the racecourse. In some embodiments, the camera images a portion of the racecourse on which the physical vehicle is racing. When the physical vehicle is travelling across the portion of the racecourse being captured by the camera, the camera may capture the physical vehicle in its video feed. When the physical vehicle is not travelling across the portion of the racecourse being captured by the camera, the camera may still capture the portion of the racecourse.

In some embodiments, means for identifying the position of the point of view at the racecourse includes at least one of means for measuring a point of gaze of eyes, means for tracking eye movement, means for tracking head position, means for identifying a vector from one or both eyes to a fixed point on the physical vehicle, means for identifying a vector from a point on the head to a fixed point on the physical vehicle, means for identifying a vector from a point on eye-wear to a fixed point on the physical vehicle, means for identifying a vector from a point on a head gear to a fixed point on the physical vehicle, means for identifying a vector from one or both eyes to a fixed point in a venue, means for identifying a vector from a point on the head to a fixed point in the venue, means for identifying a vector from a point on eye-wear to a fixed point in the venue, or means for identifying a vector from a point on a head gear to a fixed point in the venue. In some embodiments, means for identifying the position of the point of view at the racecourse includes means for measuring the point of gaze of the eyes and the means for measuring includes means for measuring light reflection or refraction from the eyes.

In some embodiments, means for providing the position of the physical vehicle and the position of the point of view at the racecourse includes means for wirelessly transmitting at least one position.

In some embodiments, means for calculating a virtual world includes means for transforming physical coordinates of the physical vehicle to coordinates in the virtual world and the virtual position of the physical vehicle includes the virtual coordinates.
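A minimal sketch of such a coordinate transformation, assuming a planar similarity transform (translation, rotation, uniform scale) between a local physical track frame and the virtual world. The `make_transform` helper and its parameters are hypothetical; a real system could equally use a full 3-D or geodetic transform.

```python
import math


def make_transform(origin, rotation_rad, scale=1.0):
    """Build a function mapping physical (x, y) coordinates to virtual ones.

    origin is the physical point that maps to the virtual origin, rotation_rad
    rotates the physical axes onto the virtual axes, and scale converts
    physical units to virtual units.
    """
    c = math.cos(rotation_rad)
    s = math.sin(rotation_rad)

    def to_virtual(point):
        dx = point[0] - origin[0]
        dy = point[1] - origin[1]
        return (scale * (c * dx - s * dy), scale * (s * dx + c * dy))

    return to_virtual


# Illustrative values: the virtual world is rotated 90 degrees relative to the track frame.
to_virtual = make_transform(origin=(100.0, 200.0), rotation_rad=math.pi / 2)
virtual_xy = to_virtual((101.0, 200.0))
```

The same transform would be applied to the position of the point of view so that both end up in one virtual frame.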

In some embodiments, means for calculating the portion of the virtual vehicle visible from the virtual position of the point of view includes: means for calculating a representation of the physical vehicle in the virtual world, means for calculating a representation of a physical object in the virtual world between the point of view and the virtual vehicle within the virtual world, and means for extracting a portion of the virtual vehicle that is unobscured, from the virtual position of the point of view, by the representation of the physical vehicle and the representation of the physical object. In some embodiments, the portion of the virtual vehicle within the virtual world that is visible from the virtual position of the point of view includes the unobscured portion. Problems solved by embodiments disclosed herein can include how to calculate a visible portion of a virtual vehicle, including more than just the portion that is not obscured by the physical vehicle. In some embodiments herein, calculating the visible portion in a virtual world that includes physical objects in the real world increases the accuracy of the visible portion determination. The increased accuracy provides a reliable and re-producible user experience by providing a real-world race that includes a virtual vehicle. In some embodiments herein, providing visible portions through a virtual calculation increases the efficiency of the calculation. The increased efficiency reduces power usage and improves representation speed for a more seamless user experience.

In some embodiments, means for extracting the portions of the virtual vehicle may include means for determining which pixels are obstructed by other representations, and only displaying pixels that are not obstructed by other representations. In some embodiments, means for extracting the portions of the virtual vehicle may include means for setting a pixel alpha value of zero percent (in RGBA space) for all pixels obstructed by other representations. For example, portions of the virtual vehicle may be obstructed by other virtual representations, e.g., another virtual vehicle, or representations of physical objects, e.g., objects within a physical vehicle or the physical vehicle itself. Any observed (from the virtual position of the point of view) pixel values can be used to provide the portions of the virtual vehicle that are visible from the virtual position of the point of view. In some embodiments, the pixels of unobscured and observed portions of the virtual vehicle can each be set to include an alpha value greater than zero percent (in RGBA space) to indicate that those unobscured pixels can be seen and should be displayed. In contrast, pixels set to an alpha value of zero percent indicate that those pixels are fully transparent, i.e., invisible, and would not be displayed.
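The alpha-based extraction can be sketched as a per-pixel depth comparison: a virtual-vehicle pixel is treated as obstructed when some other representation lies closer to the point of view along the same line of sight. The depth-buffer approach, the function name, and the nested-list layout are assumptions for illustration; the text above only requires that obstructed pixels be identified.

```python
def mask_obscured(vehicle_rgba, vehicle_depth, occluder_depth):
    """Set alpha to 0 for virtual-vehicle pixels hidden behind other representations.

    vehicle_rgba:   rows of (r, g, b, alpha) pixels, alpha as a percentage (0-100)
    vehicle_depth:  rows of distances from the point of view to the vehicle surface
    occluder_depth: rows of distances to the nearest other representation,
                    or None where nothing else lies along that line of sight
    """
    masked = []
    for px_row, vd_row, od_row in zip(vehicle_rgba, vehicle_depth, occluder_depth):
        out_row = []
        for (r, g, b, alpha), vd, od in zip(px_row, vd_row, od_row):
            hidden = od is not None and od < vd
            out_row.append((r, g, b, 0 if hidden else alpha))
        masked.append(out_row)
    return masked


# Illustrative 1x3 image: the middle pixel sits behind an occluder 5 m away.
pixels = [[(200, 0, 0, 100), (200, 0, 0, 100), (200, 0, 0, 100)]]
vehicle_d = [[12.0, 12.0, 12.0]]
occluder_d = [[None, 5.0, 30.0]]
visible = mask_obscured(pixels, vehicle_d, occluder_d)
```

Pixels left with a nonzero alpha form the portion provided to the display system.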

In some embodiments, means for calculating the representation of the physical object between the virtual position of the point of view and the representation of the physical vehicle includes means for accessing a database of representations to obtain a virtual position of the physical object.

In some embodiments, the portion of the virtual vehicle visible from the virtual position of the point of view consists of portions of the virtual vehicle that are unobscured by other representations in the virtual world.

In some embodiments, the portion of the virtual vehicle visible from the virtual position of the point of view includes a virtual shadow in the virtual world. In some embodiments, the virtual shadow is at least one of a shadow projected by the virtual vehicle and a shadow projected onto the virtual vehicle. In some embodiments, the portion of the virtual vehicle visible from the position of the point of view at the racecourse includes a virtual reflection. In some embodiments, the virtual reflection is at least one of a reflection of the virtual vehicle and a reflection on the virtual vehicle.

In some embodiments, means for calculating, by the simulation system, a portion of the virtual vehicle within the virtual world that is visible from the virtual position of the point of view includes means for calculating a field of view from the virtual position of the point of view and means for providing, to the display system, the portion of the virtual vehicle visible from the virtual position of the point of view includes displaying the portion of the virtual vehicle within the field of view.

In some embodiments, means for calculating, by the simulation system, a portion of the virtual vehicle within the virtual world that is visible from the position of the virtual point of view includes means for calculating a field of view from the virtual position of the point of view and means for providing, to the display system, the portion of the virtual vehicle visible from the virtual position of the point of view consists of means for displaying the portion of the virtual vehicle within the field of view.
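A field-of-view test can be sketched as an angular check between the gaze direction and the direction to a point on the virtual vehicle. The 2-D simplification, the symmetric cone, and the `in_field_of_view` name are assumptions for this example only.

```python
import math


def in_field_of_view(eye, gaze_dir, point, fov_deg):
    """Return True if point lies within fov_deg (total angle) centred on gaze_dir.

    eye and point are (x, y) positions in the virtual world; gaze_dir is an
    (x, y) direction vector that need not be normalized.
    """
    vx = point[0] - eye[0]
    vy = point[1] - eye[1]
    dist = math.hypot(vx, vy)
    gaze_len = math.hypot(gaze_dir[0], gaze_dir[1])
    if dist == 0.0 or gaze_len == 0.0:
        return False  # direction undefined; treat as not visible
    cos_angle = (vx * gaze_dir[0] + vy * gaze_dir[1]) / (dist * gaze_len)
    return cos_angle >= math.cos(math.radians(fov_deg) / 2.0)


# Illustrative values: looking along +x with a 90-degree field of view.
ahead = in_field_of_view((0.0, 0.0), (1.0, 0.0), (10.0, 1.0), 90.0)
beside = in_field_of_view((0.0, 0.0), (1.0, 0.0), (0.0, 5.0), 90.0)
```

Only vehicle points that pass this test, and that are also unobscured, would be displayed.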

In some embodiments, a system for displaying virtual vehicles includes a first sensor detecting a position of a physical vehicle at a racecourse, a second sensor detecting a position of a point of view at the racecourse, and a simulation system outputting a portion of the virtual vehicle visible from a virtual position of the point of view. Problems solved by embodiments disclosed herein can include overcoming the lack of realism experienced by users of prior solutions. In some embodiments herein, providing visible portions of the virtual vehicle to the user increases the realism experienced by the user. The increased realism provides a reliable and re-producible user experience by providing a real-world race that includes a virtual vehicle.

In some embodiments, the simulation system determines the visible portion of the virtual vehicle based on a virtual position of the physical vehicle in a virtual world, a virtual position of the point of view in the virtual world, and a virtual position of the virtual vehicle in the virtual world. Problems solved by embodiments disclosed herein can include how to provide a visible portion of a virtual vehicle. In some embodiments herein, providing visible portions of the virtual vehicle through a virtual calculation of the visible portion increases the accuracy of the visible portion determination. The increased accuracy provides a reliable and re-producible user experience by providing a real-world race that includes a virtual vehicle. In some embodiments herein, providing visible portions through a virtual calculation increases the efficiency of the calculation. The increased efficiency reduces power usage and improves representation speed for a more seamless user experience.

In some embodiments, the portion of the virtual vehicle visible from the virtual position of the point of view includes a portion of the virtual vehicle that is unobscured, from the virtual position of the point of view, by a representation of the physical vehicle at the virtual position of the physical vehicle.

In some embodiments, the system further includes the simulation system configured to simulate an interaction between the virtual vehicle and the representation of the physical vehicle in the virtual world, the portion of the virtual vehicle visible from the virtual position of the point of view is calculated based on the interaction.

In some embodiments, the system includes a simulation system configured to: receive the position of the physical vehicle and the position of the point of view at the racecourse; calculate a virtual world including the virtual vehicle and a representation of the physical vehicle; calculate a virtual position of the point of view within the virtual world based on the position of the point of view at the racecourse; calculate the portion of the virtual vehicle visible from the virtual position of the point of view; and output, to the display system, the portion of the virtual vehicle visible from the virtual position of the point of view. Problems solved by embodiments disclosed herein can include how to calculate a visible portion of a virtual vehicle. In some embodiments herein, calculating the visible portion of the virtual vehicle in a virtual world increases the accuracy of the visible portion determination. The increased accuracy provides a reliable and re-producible user experience by providing a real-world race that includes a virtual vehicle. In some embodiments herein, providing visible portions through a virtual calculation increases the efficiency of the calculation. The increased efficiency reduces power usage and improves representation speed for a more seamless user experience.

In some embodiments, the first sensor receives a location of each of two portions of the vehicle. In some embodiments, the first sensor receives a location of one portion of the vehicle and an orientation of the vehicle. In some embodiments, receiving the orientation of the vehicle includes receiving gyroscope data. Problems solved by embodiments disclosed herein can include how to correctly position a physical vehicle in a virtual world for determining a visible portion of a virtual vehicle. In some embodiments herein, using a measure of orientation provides for accurate placement of the physical vehicle in the virtual world. The increased accuracy provides for a more faithful display of the visible portions of the vehicle, thereby improving the user experience.

In some embodiments, the position of the point of view at the racecourse includes a position of a point of view of an operator of the physical vehicle at the racecourse. In some embodiments, the position of the point of view at the racecourse includes a position of a point of view of an audience member present at a racecourse and observing the physical vehicle on the racecourse.

In some embodiments, the position of the point of view at the racecourse includes a position of a camera present at a racecourse and imaging the physical vehicle on the racecourse. In some embodiments, the camera images a portion of the racecourse on which the physical vehicle is racing. When the physical vehicle is travelling across the portion of the racecourse being captured by the camera, the camera may capture the physical vehicle in its video feed. When the physical vehicle is not travelling across the portion of the racecourse being captured by the camera, the camera may still capture the portion of the racecourse.

In some embodiments, the second sensor is configured to detect the position of the point of view at the racecourse by at least one of measuring the point of gaze of eyes, tracking eye movement, tracking head position, identifying a vector from one or both eyes to a fixed point on the physical vehicle, identifying a vector from a point on the head to a fixed point on the physical vehicle, identifying a vector from a point on eye-wear to a fixed point on the physical vehicle, identifying a vector from a point on a head gear to a fixed point on the physical vehicle, identifying a vector from one or both eyes to a fixed point in a venue, identifying a vector from a point on the head to a fixed point in the venue, identifying a vector from a point on eye-wear to a fixed point in the venue, or identifying a vector from a point on a head gear to a fixed point in the venue. In some embodiments, the second sensor is configured to detect the position of the point of view at the racecourse by measuring light reflection or refraction from the eyes.

In some embodiments, receiving the position of the physical vehicle and the position of the point of view at the racecourse includes wirelessly receiving at least one position.

In some embodiments, calculating a virtual world includes transforming physical coordinates of the physical vehicle to coordinates in the virtual world and the virtual position of the physical vehicle includes the virtual coordinates.

In some embodiments, calculating a portion of the virtual vehicle visible from the position of the point of view at the racecourse includes: calculating a representation of the physical vehicle in the virtual world, calculating a representation of a physical object in the virtual world between the point of view and the virtual vehicle within the virtual world, and extracting a portion of the virtual vehicle that is unobscured, from the virtual position of the point of view, by the representation of the physical vehicle and the representation of the physical object. In some embodiments, the portion of the virtual vehicle within the virtual world that is visible from the virtual position of the point of view includes the unobscured portion. Problems solved by embodiments disclosed herein can include how to calculate a visible portion of a virtual vehicle, including more than just the portion that is not obscured by the physical vehicle. In some embodiments herein, calculating the visible portion in a virtual world that includes physical objects in the real world increases the accuracy of the visible portion determination. The increased accuracy provides a reliable and re-producible user experience by providing a real-world race that includes a virtual vehicle. In some embodiments herein, providing visible portions through a virtual calculation increases the efficiency of the calculation. The increased efficiency reduces power usage and improves representation speed for a more seamless user experience.

In some embodiments, extracting the portions of the virtual vehicle may include determining which pixels are obstructed by other representations, and only displaying pixels that are not obstructed by other representations. In some embodiments, extracting the portions of the virtual vehicle may include setting a pixel alpha value of zero percent (in RGBA space) for all pixels obstructed by other representations. For example, portions of the virtual vehicle may be obstructed by other virtual representations, e.g., another virtual vehicle, or representations of physical objects, e.g., objects within a physical vehicle or the physical vehicle itself. Any observed (from the virtual position of the point of view) pixel values can be used to provide the portions of the virtual vehicle that are visible from the virtual position of the point of view. In some embodiments, the pixels of unobscured and observed portions of the virtual vehicle can each be set to include an alpha value greater than zero percent (in RGBA space) to indicate that those unobscured pixels can be seen and should be displayed. In contrast, pixels set to an alpha value of zero percent indicate that those pixels are fully transparent, i.e., invisible, and would not be displayed.

In some embodiments, calculating the representation of the physical object between the virtual position of the point of view and the representation of the physical vehicle includes accessing a database of representations to obtain a virtual position of the physical object.

In some embodiments, the portion of the virtual vehicle visible from the virtual position of the point of view consists of portions of the virtual vehicle that are unobscured by other representations in the virtual world.

In some embodiments, the portion of the virtual vehicle visible from the virtual position of the point of view includes a virtual shadow in the virtual world. In some embodiments, the virtual shadow is at least one of a shadow projected by the virtual vehicle and a shadow projected onto the virtual vehicle. In some embodiments, the portion of the virtual vehicle visible from the virtual position of the point of view includes a virtual reflection. In some embodiments, the virtual reflection is at least one of a reflection of the virtual vehicle and a reflection on the virtual vehicle.

In some embodiments, calculating a portion of the virtual vehicle within the virtual world that is visible from the virtual position of the point of view includes calculating a field of view from the virtual position of the point of view and providing, to the display system, the portion of the virtual vehicle visible from the virtual position of the point of view includes displaying the portion of the virtual vehicle within the field of view.

In some embodiments, calculating a portion of the virtual vehicle within the virtual world that is visible from the position of the point of view at the racecourse includes calculating a field of view from the virtual position of the point of view and providing, to the display system, the portion of the virtual vehicle visible from the virtual position of the point of view consists of displaying the portion of the virtual vehicle visible within the field of view.

In some embodiments, the system may facilitate a competition between two virtual vehicles on a physical racecourse. In a scenario where two virtual vehicles compete on a physical racecourse without any physical vehicles, the first sensor detecting a position of a physical vehicle would be unnecessary. The system in such an embodiment could include a sensor detecting a position of a point of view at the racecourse and a display system providing a portion of the virtual vehicle visible from the position of the point of view at the racecourse. All aspects of the foregoing systems not concerning the position of the physical vehicle could be applied in such an embodiment. In some embodiments, the virtual vehicles are given special properties and a video game appearance. For example, cars can be given boosts, machine guns, missiles (other graphical virtual objects put into the real world view), virtual jumps, etc. In some embodiments, the physical vehicles can be given similar video game attributes. For example, graphic virtual objects such as machine guns or missiles etc. may be rendered and overlaid on the physical vehicles as observed on a display. Viewers at the racecourse and at home could view the virtual competitors on the physical racecourse as if competing in the real world.

As used herein, “point of view” can be understood to be a real world position from which the virtual vehicle will be viewed. For example, the point of view may be that of an operator of a physical vehicle (e.g., a driver in the physical vehicle) viewing his surroundings. A display may be used to augment the operator's view by introducing the virtual vehicle into the view. Because the virtual vehicle is added to the real world point of view, if the display system were not provided or were discontinued, the virtual vehicle would not be seen from the real world point of view.

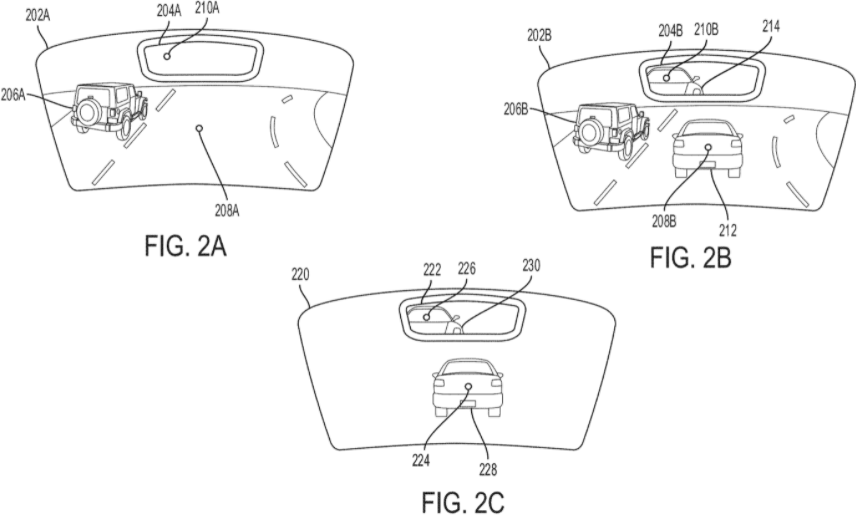

FIG. 1 is a diagram 100 of a physical vehicle 101, according to some embodiments. FIG. 1 provides an example of a point of view of an operator (e.g., driver) of physical vehicle 101. Thus, the position of the point of view of the operator is the position and direction (gaze) of the operator's eyes (or some approximation of the operator's eye position and direction). Although FIG. 1 is provided with reference to the point of view of the operator of a physical vehicle, the teachings apply equally to other points of view, such as an audience member at a racecourse on which the physical vehicle is driving or a camera at the racecourse.

Physical vehicle 101 includes a display system 102 (including rendering component 107), a simulation component 106, a telemetry system 104 (including sensors 108), RF circuitry 105, and a force controller 112. Physical vehicle 101 also includes eye-position detector 110, front windshield 120, rear-view mirror 122, rear windshield 124, side windows 126A and 126B, side mirrors 128A and 128B, seat and head brace 130, speakers 132, and brakes 134. FIG. 1 also includes a vehicle operator 114. In FIG. 1, vehicle operator 114 is illustrated wearing a helmet 116, a visor over eyes 117, and a haptic suit 118. In some embodiments, the visor worn over eyes 117 is a component of helmet 116.

As shown in FIG. 1, physical vehicle 101 is an automobile. In some embodiments, devices within physical vehicle 101 communicate with a simulation system 140 to simulate one or more virtual vehicles within a field of view of vehicle operator 114. Simulation system 140 may be on-board physical vehicle 101. In some embodiments, as illustrated in diagram 100, simulation system 140 may be remote from physical vehicle 101, also described elsewhere herein. In some embodiments, the functionality performed by simulation system 140 may be distributed across both systems that are on-board physical vehicle 101 and remote from physical vehicle 101. In some embodiments, simulation system 140 generates and maintains a racing simulation 141 between one or more live participants (i.e., vehicle operator 114 operating physical vehicle 101) and one or more remote participants (not shown).

Simulating virtual vehicles in real-time enhances the racing experience of vehicle operator 114. Implementing simulation capabilities within physical vehicle 101 allows vehicle operator 114, who is a live participant, to compete against a remote participant operating a virtual vehicle within racing simulation 141. The field of view of vehicle operator 114 is the observable world seen by vehicle operator 114 augmented with a virtual vehicle. In some embodiments, the augmentation can be provided by a display, e.g., displays housed in or combined with one or more of front windshield 120, rear-view mirror 122, rear windshield 124, side windows 126A and 126B, and side mirrors 128A and 128B.

In some embodiments, the augmentation can be provided by a hologram device or 3-D display system. In these embodiments, front windshield 120, rear-view mirror 122, rear windshield 124, side windows 126A and 126B, or side mirrors 128A and 128B can be T-OLED displays that enable 3-D images to be displayed, utilizing cameras to capture the surroundings displayed with 3-D images overlaid on non-transparent displays.

In some embodiments, the augmentation can be provided by a head-mounted display (HMD) worn by vehicle operator 114 over eyes 117. The HMD may be worn as part of helmet 116. In some embodiments, the HMD is embedded in visors, glasses, goggles, or other devices worn in front of the eyes of vehicle operator 114. Like the display described above, the HMD may operate to augment the field of view of vehicle operator 114 by rendering one or more virtual vehicles on one or more displays in the HMD.

In other embodiments, the HMD implements retinal projection techniques to simulate one or more virtual vehicles. For example, the HMD may include a virtual retinal display (VRD) that projects images onto the left and right eyes of vehicle operator 114 to create a three-dimensional (3D) image of one or more virtual vehicles in the field of view of vehicle operator 114.

In some embodiments, the augmentation can be provided by one or more displays housed in physical vehicle 101 as described above (e.g., front windshield 120 and rear-view mirror 122), a display worn by vehicle operator 114 as described above (e.g., an HMD), a hologram device as described above, or a combination thereof. An advantage of simulating virtual vehicles on multiple types of displays (e.g., on a display housed in physical vehicle 101 and an HMD worn by vehicle operator 114) is that an augmented-reality experience can be maintained when vehicle operator 114 takes off his HMD. Additionally, multiple participants in physical vehicle 101 can share the augmented-reality experience regardless of whether each participant is wearing an HMD.

In some embodiments, multiple virtual vehicles are simulated for vehicle operator 114. For example, multiple virtual vehicles are displayed in front of, behind, and/or beside the operator. For example, one or more virtual vehicles may be displayed on front windshield 120 (an example display) and one or more virtual vehicles may be displayed on rear-view mirror 122 (an example display). In addition, one or more virtual vehicles may be displayed on the HMD. Similarly, physical vehicle 101 may be one of a plurality of physical vehicles in proximity to each other. In some embodiments, a virtual vehicle being simulated for vehicle operator 114 can be another physical vehicle running on a physical racecourse at a different physical location than that being run by vehicle operator 114. For example, one driver could operate a vehicle on a racecourse in Monaco and another driver could operate a vehicle on a replica racecourse in Los Angeles. Embodiments herein contemplate presenting one or both of the Monaco and Los Angeles drivers with a virtual vehicle representing the other driver.

Returning to simulation system 140 and as described above, simulation system 140 may include racing simulation 141, which simulates a competition between physical vehicle 101 and one or more virtual vehicles on a virtual racecourse. In some embodiments, the virtual racecourse is generated and stored by simulation system 140 to correspond to the physical racecourse in which vehicle operator 114 is operating, e.g., driving, physical vehicle 101. In some embodiments, the virtual racecourse is generated using 360-degree laser scan video recording or similar technology. Therefore, as vehicle operator 114 controls physical vehicle 101 on the physical racecourse in real-time, the virtual trajectory of physical vehicle 101 within racing simulation 141 is simulated by simulation system 140 to emulate the physical, real-world trajectory of physical vehicle 101 on the physical racecourse.

In some embodiments, to enable simulation system 140 to simulate physical vehicle 101 on the virtual racecourse in racing simulation 141, physical vehicle 101 includes telemetry system 104. Telemetry system 104 includes sensors 108 that detect data associated with physical vehicle 101. Sensors 108 include one or more devices that detect kinematics information of physical vehicle 101. In some embodiments, kinematics information includes one or more vectors of motion, one or more scalars of motion, an orientation, a Global Positioning System (GPS) location, or a combination thereof. For example, a vector of motion may include a velocity, a position vector, or an acceleration. For example, a scalar of motion may include a speed. Accordingly, sensors 108 may include one or more accelerometers to detect acceleration, one or more GPS (or GLONASS or other global navigation system) receivers to detect the GPS location, one or more motion sensors, one or more orientation sensors, or a combination thereof. In some embodiments, the real-time data collected by sensors 108 are transmitted to simulation system 140. Other real-time data may include measurements of the car, heat, tire temperature, etc. In some embodiments, one or more of the kinematics information and car measurements are used for simulation predictability. For example, some embodiments may include predictive simulation engines that prebuild scenes based on these other measurements and the velocity and acceleration information.
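As an illustration of the kinematics information described above, the following minimal Python sketch models one telemetry reading and a simple look-ahead of the kind a predictive engine might use. The names `KinematicsSample` and `predict_position`, the local track coordinate frame, and the constant-acceleration model are assumptions made for this example; the disclosure does not specify a data format or a prediction method.

```python
from dataclasses import dataclass
from typing import Tuple

Vec3 = Tuple[float, float, float]


@dataclass(frozen=True)
class KinematicsSample:
    """One hypothetical telemetry reading (field names are illustrative)."""
    timestamp_s: float
    position_m: Vec3          # e.g., a GPS fix converted to local track coordinates
    velocity_mps: Vec3        # vector of motion
    acceleration_mps2: Vec3   # vector of motion

    @property
    def speed_mps(self) -> float:
        """Scalar of motion: the magnitude of the velocity vector."""
        vx, vy, vz = self.velocity_mps
        return (vx * vx + vy * vy + vz * vz) ** 0.5


def predict_position(sample: KinematicsSample, dt_s: float) -> Vec3:
    """Assumed constant-acceleration look-ahead: p + v*dt + 0.5*a*dt^2."""
    return tuple(
        p + v * dt_s + 0.5 * a * dt_s * dt_s
        for p, v, a in zip(
            sample.position_m, sample.velocity_mps, sample.acceleration_mps2
        )
    )
```

A look-ahead of this kind would let a scene be prepared for where the vehicle is expected to be a short time later, rather than where it was when the reading was taken.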

In some embodiments, physical vehicle 101 includes radio frequency (RF) circuitry 105 for transmitting data, e.g., telemetric information generated by telemetry system 104, to simulation system 140. RF circuitry 105 receives and sends RF signals, also called electromagnetic signals. RF circuitry 105 converts electrical signals to/from electromagnetic signals and communicates with communications networks and other communications devices via the electromagnetic signals. RF circuitry 105 may include well-known circuitry for performing these functions, including but not limited to an antenna system, an RF transceiver, one or more amplifiers, a tuner, one or more oscillators, a digital signal processor, a CODEC chipset, a subscriber identity module (SIM) card, memory, and so forth. RF circuitry 105 may communicate with networks, such as the Internet, also referred to as the World Wide Web (WWW), an intranet and/or a wireless network, such as a cellular telephone network, a wireless local area network (LAN) and/or a metropolitan area network (MAN), and other devices by wireless communication. The wireless communication optionally uses any of a plurality of communications standards, protocols and technologies, including but not limited to Global System for Mobile Communications (GSM), Enhanced Data GSM Environment (EDGE), high-speed downlink packet access (HSDPA), high-speed uplink packet access (HSUPA), Evolution, Data-Only (EV-DO), HSPA, HSPA+, Dual-Cell HSPA (DC-HSPA), long term evolution (LTE), near field communication (NFC), wideband code division multiple access (W-CDMA), code division multiple access (CDMA), time division multiple access (TDMA), Bluetooth, Bluetooth Low Energy (BTLE), Wireless Fidelity (Wi-Fi) (e.g., IEEE 802.11a, IEEE 802.11b, IEEE 802.11g and/or IEEE 802.11n), Wi-MAX, a protocol for e-mail (e.g., Internet message access protocol (IMAP) and/or post office protocol (POP)), instant messaging (e.g., extensible messaging and presence protocol (XMPP), Session Initiation Protocol for Instant Messaging and Presence Leveraging Extensions (SIMPLE), Instant Messaging and Presence Service (IMPS)), and/or Short Message Service (SMS), or any other suitable communication protocol.

In some embodiments, simulation system 140 includes RF circuitry, similar to RF circuitry 105, for receiving data from physical vehicle 101. Based on the telemetric information received from physical vehicle 101, simulation system 140 simulates physical vehicle 101 as an avatar within racing simulation 141. In some embodiments, as will be further described with respect to FIG. 5, simulation system 140 receives inputs for controlling and simulating one or more virtual vehicles within racing simulation 141. In some embodiments, simulation system 140 calculates kinematics information of the virtual vehicle based on the received inputs and a current state of the virtual vehicle on the virtual racecourse in racing simulation 141. For example, the current state may refer to a coordinate, a position, a speed, a velocity, an acceleration, an orientation, etc., of the virtual vehicle being simulated on the virtual racecourse. To replicate the virtual race between a live participant, i.e., vehicle operator 114, and a virtual participant operating a virtual vehicle for vehicle operator 114, simulation system 140 transmits kinematics information of the virtual vehicle to components (e.g., display system 102 or simulation component 106) in physical vehicle 101 via RF circuitry. As described elsewhere in this disclosure, it is to be understood that depending on the type of context being simulated, the display system 102 and other components shown in physical vehicle 101 may be housed in other types of devices.
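The calculation of new kinematics information from received inputs and a current state can be sketched as a single time step of a simple planar vehicle model. This is only an illustrative assumption: the disclosure does not prescribe a vehicle model, and the names `VehicleState`, `ControlInput`, and `step` are hypothetical.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class VehicleState:
    """Hypothetical current state of the virtual vehicle on the virtual racecourse."""
    x_m: float
    y_m: float
    heading_rad: float
    speed_mps: float


@dataclass(frozen=True)
class ControlInput:
    """Hypothetical received inputs (e.g., derived from throttle and steering)."""
    accel_mps2: float
    yaw_rate_rps: float


def step(state: VehicleState, control: ControlInput, dt_s: float) -> VehicleState:
    """Advance the state by dt_s: update speed and heading, then position."""
    speed = max(0.0, state.speed_mps + control.accel_mps2 * dt_s)  # no reversing
    heading = state.heading_rad + control.yaw_rate_rps * dt_s
    x = state.x_m + speed * math.cos(heading) * dt_s
    y = state.y_m + speed * math.sin(heading) * dt_s
    return VehicleState(x, y, heading, speed)
```

Calling `step` once per simulation tick would yield the position, speed, and orientation values that could then be transmitted to the components in the physical vehicle.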

In some embodiments, to enable two-way interactive racing where interactions simulated in racing simulation 141 can be reproduced for vehicle operator 114 driving physical vehicle 101, simulation system 140 determines whether the avatar of physical vehicle 101 within racing simulation 141 is in contact with obstacles, such as the virtual vehicle, being simulated within racing simulation 141. Upon determining a contact, simulation system 140 calculates force information, audio information, or a combination thereof associated with the contact. In some embodiments, simulation system 140 transmits the force or audio information to physical vehicle 101 where the force or audio information is reproduced at physical vehicle 101 to enhance the virtual reality racing experience for vehicle operator 114.
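One way to picture the contact determination and force calculation is the sketch below, which approximates the avatar and the virtual vehicle as circles on the track plane and derives a force from how deeply they overlap. The circular footprints, the spring-like force law, the stiffness value, and the function name `contact_force` are all assumptions for illustration; the disclosure does not state how contact or force is computed.

```python
import math
from typing import Optional, Tuple

Vec2 = Tuple[float, float]


def contact_force(
    avatar_xy: Vec2,
    virtual_xy: Vec2,
    avatar_radius_m: float,
    virtual_radius_m: float,
    stiffness_n_per_m: float = 50_000.0,  # assumed value, for illustration only
) -> Optional[Vec2]:
    """Return the force on the avatar, or None when there is no contact."""
    dx = avatar_xy[0] - virtual_xy[0]
    dy = avatar_xy[1] - virtual_xy[1]
    distance = math.hypot(dx, dy)
    penetration = avatar_radius_m + virtual_radius_m - distance
    if penetration <= 0.0:
        return None  # footprints do not overlap
    if distance == 0.0:
        nx, ny = 1.0, 0.0  # centers coincide: pick an arbitrary direction
    else:
        nx, ny = dx / distance, dy / distance  # unit vector pushing the avatar away
    magnitude = stiffness_n_per_m * penetration
    return (magnitude * nx, magnitude * ny)
```

A non-`None` result could then be sent to the physical vehicle as the force information to reproduce, for example through seat or steering feedback.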

Returning to physical vehicle 101, physical vehicle 101 includes a display system 102 for generating a representation of a virtual vehicle based on information, e.g., kinematics information of the virtual vehicle, received from simulation system 140 via RF circuitry 105. In some embodiments, display system 102 is coupled to a simulation component 106 that generates a virtual representation of the virtual vehicle based on the kinematics information of the virtual vehicle received from simulation system 140. In some embodiments, simulation component 106 generates the virtual representation based on the kinematics information and eye measurements (e.g., a spatial position) of eyes 117 of vehicle operator 114. To further enhance the realism of the virtual representation, i.e., a graphically generated vehicle, simulation component 106 generates the virtual representation based on the kinematics information, a spatial position of eyes 117 of vehicle operator 114, a gaze direction of eyes 117, and a focus point of eyes 117, according to some embodiments. As described herein, eye measurements may include a spatial position of eyes 117, a gaze direction of eyes 117, a focus point of eyes 117, or a combination thereof of the left eye, the right eye, or both left and right eyes.

In some embodiments, physical vehicle 101 includes eye-position detector 110, e.g., a camera or light (e.g., infrared) reflection detector, to detect eye measurements (e.g., spatial position, gaze direction, focus point, or a combination thereof) of eyes 117 of vehicle operator 114. In some embodiments, eye-position detector 110 detects a spatial position of the head of vehicle operator 114 to estimate the measurements of eyes 117. For example, eye-position detector 110 may detect helmet 116 or a visor on helmet 116 to estimate the measurements of eyes 117. Detecting eye measurements and/or head position may also include detecting at least one of a position and an orientation of helmet 116 worn by vehicle operator 114.

The position of the eyes may be calculated directly (e.g., from a fixed sensor, such as a track-side sensor) or based on a combination of the sensor in the car and a position of the car.
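The second option, combining an in-car sensor reading with the position of the car, amounts to a change of coordinate frame. The sketch below assumes the in-car sensor reports the eye position relative to the car (x forward, y left, z up) and that only the car's yaw matters. Both are simplifications chosen for illustration, and `eye_position_world` is a hypothetical name.

```python
import math
from typing import Tuple

Vec3 = Tuple[float, float, float]


def eye_position_world(car_position: Vec3, car_yaw_rad: float, eye_in_car: Vec3) -> Vec3:
    """Rotate the in-car eye offset by the car's yaw, then add the car's position."""
    cx, cy, cz = car_position
    ex, ey, ez = eye_in_car
    c, s = math.cos(car_yaw_rad), math.sin(car_yaw_rad)
    return (cx + c * ex - s * ey, cy + s * ex + c * ey, cz + ez)
```

A full treatment would also account for pitch and roll of the car, which this sketch deliberately ignores.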

In some embodiments, eye-position detector 110 includes a camera that can record a real-time video sequence or capture a series of images of the face of vehicle operator 114. Then, eye-position detector 110 may track and detect the measurements of eyes 117 by analyzing the real-time video sequence or the series of images. In some embodiments, eye-position detector 110 implements one or more algorithms for tracking a head movement or orientation of vehicle operator 114 to aid in eye tracking, detection, and measurement. As shown in diagram 100, eye-position detector 110 may be coupled to rear-view mirror 122. However, as long as eye-position detector 110 is implemented in proximity to physical vehicle 101, eye-position detector 110 can be placed in other locations, e.g., on the dashboard, inside or outside physical vehicle 101. In some embodiments, to increase accuracy in detection, eye-position detector 110 can be implemented within helmet 116 or other head-mounted displays (HMDs), e.g., visors or goggles, worn by vehicle operator 114 over eyes 117.

In some embodiments, eye-position detector 110 implemented within an HMD further includes one or more focus-tunable lenses, one or more mechanically actuated displays, and mobile gaze-tracking technology. With these components, reproduced scenes can be drawn that correct common refractive errors in the VR world, as the eyes are continually monitored based on where a user looks in a virtual scene. An advantage of the above techniques is that vehicle operator 114 would not need to wear contact lenses or corrective glasses while wearing an HMD implementing eye-position detector 110.

In some embodiments, simulation component 106 generates the virtual representation of the virtual vehicle based on a 3-D model of the virtual vehicle. For example, the virtual representation may represent a perspective of the 3-D model as viewed from eyes 117. In some embodiments, by generating the virtual representation from the perspective of eyes 117, whose measurements are detected or estimated by eye-position detector 110, the virtual representation can be simulated in accurate dimensions and scaling for vehicle operator 114 to increase the realism of racing against the virtual vehicle. In some embodiments, the 3-D model may be pre-stored on simulation component 106 or received from simulation system 140.
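To see why the eye position determines dimensions and scaling, consider the following sketch, which finds where a vertex of the 3-D model should be drawn on a flat display (such as a windshield) so that it lines up with the operator's line of sight. Treating the display as a plane and the function name `project_to_display` are assumptions for illustration, not details taken from the disclosure.

```python
from typing import Optional, Tuple

Vec3 = Tuple[float, float, float]


def _sub(a: Vec3, b: Vec3) -> Vec3:
    return (a[0] - b[0], a[1] - b[1], a[2] - b[2])


def _dot(a: Vec3, b: Vec3) -> float:
    return a[0] * b[0] + a[1] * b[1] + a[2] * b[2]


def project_to_display(
    eye: Vec3, vertex: Vec3, plane_point: Vec3, plane_normal: Vec3
) -> Optional[Vec3]:
    """Intersect the eye-to-vertex line of sight with the display plane.

    Returns None if the line of sight is parallel to the display or the
    vertex does not lie beyond the display as seen from the eye.
    """
    ray = _sub(vertex, eye)
    denom = _dot(plane_normal, ray)
    if abs(denom) < 1e-12:
        return None  # line of sight is parallel to the display plane
    t = _dot(plane_normal, _sub(plane_point, eye)) / denom
    if not 0.0 < t <= 1.0:
        return None  # display is not between the eye and the vertex
    return (eye[0] + t * ray[0], eye[1] + t * ray[1], eye[2] + t * ray[2])
```

With the eye at the origin and the display one meter ahead, a vertex ten meters away at a lateral offset of two meters lands 0.2 m off-center on the display, while the same offset at twenty meters lands 0.1 m off-center, so more distant vehicles are drawn smaller. Moving the eye changes every intersection point, which is why the eye measurements feed into the rendering.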

In some embodiments, rendering component 107 within display system 102 displays the generated virtual representation on one or more displays of physical vehicle 101. As discussed above, the virtual representation may be generated by simulation component 106 in some embodiments or simulation system 140 in some embodiments. The one or more displays may include windows of physical vehicle 101, e.g., front windshield 120 or side windows 126A-B, or mirrors of physical vehicle 101, e.g., rear-view mirror 122 or side mirrors 128A-B. In some embodiments, the one or more displays may be components in helmet 116. Helmet 116 may include a helmet, visors, glasses, or a goggle system worn by vehicle operator 114.

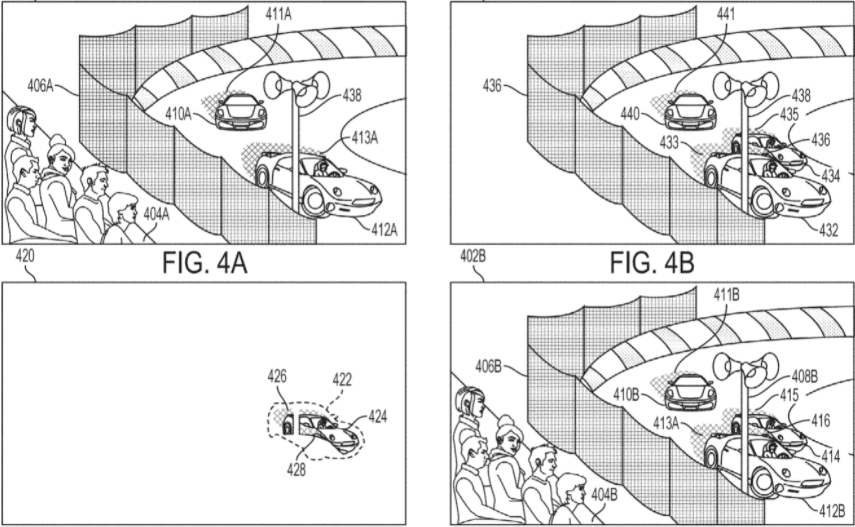

In some embodiments, one or more displays (e.g., front windshield 120) can be transparent organic light-emitting diode (T-OLED) displays that allow light to pass through the T-OLED to display the field of view to vehicle operator 114. In these embodiments, rendering component 107 renders the virtual representation of the virtual vehicle as a layer of pixels on the one or more displays. The T-OLED displays may allow vehicle operator 114 to see both the simulated virtual vehicle and the physical, un-simulated world in his field of view.