Abstract

A portable gaming device includes a game console housing including a handle, a trigger, a screen holder, and a display device coupled to the screen holder. The display device includes a display. The portable gaming device also comprises a processing circuit internally coupled to the game console housing including a processor, a memory, and a network interface, wherein the processing circuit is in communication with the display device. The processing circuit generates a user interface to be displayed on the display, determines a position of the game console housing, and updates the user interface based on the determined position of the game console housing.

Description

The foregoing and other features of the present disclosure will become apparent from the following description and appended claims, taken in conjunction with the accompanying drawings. Understanding that these drawings depict only several embodiments in accordance with the disclosure and are, therefore, not to be considered limiting of its scope, the disclosure will be described with additional specificity and detail through use of the accompanying drawings.

DETAILED DESCRIPTION

In the following detailed description, reference is made to the accompanying drawings, which form a part hereof. In the drawings, similar symbols typically identify similar components, unless context dictates otherwise. The illustrative embodiments described in the detailed description, drawings, and claims are not meant to be limiting. Other embodiments may be utilized, and other changes may be made, without departing from the spirit or scope of the subject matter presented here. It will be readily understood that the aspects of the present disclosure, as generally described herein, and illustrated in the figures, can be arranged, substituted, combined, and designed in a wide variety of different configurations, all of which are explicitly contemplated and make part of this disclosure.

The present disclosure is generally directed to a game console including a processing circuit, a game console housing, and a screen display. The processing circuit may be internally coupled to the game console housing. The screen display may be coupled to the game console housing via an external component (e.g., a screen holder). The processing circuit can communicate with the screen display to display a user interface on the screen display. The processing circuit can process various applications and/or games to display and update the user interface. The processing circuit can receive inputs from various buttons and/or detected movements of the game console housing. The processing circuit can update the user interface based on the inputs.

Exemplary embodiments described herein provide a game console with a game console housing that may be shaped to resemble a blaster device (e.g., a rifle, a shotgun, a machine gun, a grenade launcher, a rocket launcher, etc.). The game console housing may include a barrel, a handle, a trigger, sensors (e.g., accelerometers, gyroscopes, magnetometers, etc.), and various buttons on the exterior of the game console housing. The game console may also include a processing circuit internally coupled to the game console housing that processes applications and/or games that are associated with the game console. The game console may include a screen display that is coupled to the barrel of the game console housing. The screen display may display a user interface that is generated by the processing circuit and that is associated with the applications and/or games that the processing circuit processes. The screen display may be coupled to the game console so an operator using (e.g., playing or operating) the game console may view the user interface that is generated by the processing circuit on the screen display.

The processing circuit may receive inputs associated with the trigger, sensors, and the various buttons. The processing circuit may process the inputs to update the user interface being displayed on the screen display. The processing circuit may receive the inputs as a result of an operator using the game console and pressing one of the various buttons, maneuvering (e.g., moving) the game console so the sensors detect the movement, or pulling the trigger. The processing circuit may associate the inputs of each of the various buttons, sensors, and/or the trigger with a different action or signal to adjust the user interface on the screen display. For example, the processing circuit may identify a signal associated with an operator pulling the trigger as an indication to shoot a gun in a video game. While processing the same video game, the processing circuit may associate a signal from the various sensors indicating that the game console is being maneuvered as an indication to change the user interface from a first view to a second view. The processing circuit may process games or applications to create a virtual environment (e.g., an environment with multiple views) that operators can view by maneuvering the game console (e.g., spinning the game console around 360 degrees).
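By way of a non-limiting illustration, the association between inputs and actions described above can be sketched as a simple lookup. The input names, the action names, and the function action_for are hypothetical and are not taken from the disclosure; they only show one way a processing circuit might associate each input source with a different action.

```python
from enum import Enum, auto


class InputSource(Enum):
    """Hypothetical input sources of the game console."""
    TRIGGER = auto()
    BUTTON_A = auto()
    MOTION = auto()
    POWER = auto()


# Assumed mapping from an input source to an action in the current game.
ACTION_MAP = {
    InputSource.TRIGGER: "shoot",
    InputSource.BUTTON_A: "jump",
    InputSource.MOTION: "change_view",
}


def action_for(source: InputSource) -> str:
    """Return the action associated with an input, or 'ignore' if unmapped."""
    return ACTION_MAP.get(source, "ignore")
```

A different game or application could supply a different table without changing the lookup itself.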

The screen display may also receive a data stream from an external device (e.g., another video game console). The external device may process an application or video game on a processing circuit of the external device to generate the data stream. The data stream may include audiovisual data. In some embodiments, the external device may transmit the data stream to the processing circuit of the game console to process and display on the screen display. An operator using the game console and/or viewing the display may press a button, pull the trigger, or maneuver the game console upon viewing the screen display. The game console may send the inputs generated by any of these interactions to the external device. The external device may process the inputs with the processing circuit of the external device and transmit an updated data stream to the game console for the screen display to display. The processing circuit of the external device may generate the updated data stream based on the inputs. Accordingly, the processing circuit of the game console may not need to perform any processing steps for the screen display to display the user interface.

Advantageously, the present disclosure describes a game console that allows an operator to be immersed in a gaming experience without an external display (e.g., a television). Because the screen display of the game console is coupled to an external component of the game console housing and can display a user interface that changes views corresponding to movement of the game console, an operator may play games on the game console while feeling that they are a part of various gaming environments. Further, the game console may interface with other game consoles to provide virtual reality functionality to games of the game consoles that would otherwise only be playable on a stationary screen. Consequently, the game console provides operators with a virtual reality experience for games and/or applications that are being processed on either an external device or the game console itself.

Another advantage of the present disclosure is that the game console provides a handheld virtual reality system that does not necessarily need to be connected to a head or other body part of a user. Instead, a user may easily grab the game console and immediately be immersed in the gaming environment. Further, the user can be immersed in the gaming environment while still viewing the user's surroundings in the real world. This allows the user to avoid hitting real-world objects while playing immersive games or applications in a three-dimensional virtual world.

Referring now to FIG. 1, a block diagram of a gaming environment 100 is shown, in accordance with some embodiments of the present disclosure. Gaming environment 100 is shown to include a game console 102, a display device 106, a network 108, and a gaming device 110, in some embodiments. As described herein, gaming environment 100 may be a portable gaming system. Game console 102 may communicate with gaming device 110 over network 108. Display device 106 may be a part of (e.g., coupled to an exterior component of) game console 102. A processing circuit 104 of game console 102 may communicate with display device 106 to display a user interface at display device 106. Game console 102 may process and display an application or game via processing circuit 104 and display device 106, respectively. As described herein, game console 102 may be a portable gaming device. In brief overview, game console 102 may display a list of games or applications to be played or downloaded on display device 106. An operator may select a game or application from the list by moving game console 102 and pressing an input button of game console 102. Game console 102 may display the selected game or application at display device 106. The operator may control movements and actions of the game or application by maneuvering and/or pressing input buttons of game console 102. Because display device 106 is a part of game console 102, display device 106 may move with the movements of game console 102. In some embodiments, game console 102 may display movements and/or actions that are associated with applications and/or games that gaming device 110 is processing.

Network 108 may include any element or system that enables communication between game console 102 and gaming device 110 and their components therein. Network 108 may connect the components through a network interface and/or through various methods of communication, such as, but not limited to, a cellular network, Internet, Wi-Fi, telephone, LAN connections, Bluetooth, HDMI, or any other network or device that allows devices to communicate with each other. In some instances, network 108 may include servers or processors that facilitate communications between the components of gaming environment 100.

Game console 102 may be a game console including processing circuit 104, which can process various applications and/or games. Game console 102 may include display device 106, which is coupled to an exterior component (e.g., a screen holder) of a housing of game console 102. Processing circuit 104 may display an output of the applications and/or games that game console 102 processes at display device 106 for an operator to view. Game console 102 may also include various push buttons that an operator can press to send a signal to processing circuit 104. The signal may indicate that one of the push buttons was pressed. Each push button may be associated with an action or movement of the application or game that processing circuit 104 is processing. Further, processing circuit 104 may receive inputs from sensors of game console 102 that detect various aspects of movement (e.g., velocity, position, acceleration, etc.) associated with game console 102. Processing circuit 104 may use the inputs from the sensors to affect movements and/or actions associated with the games or applications that processing circuit 104 is processing. Processing circuit 104 may generate and/or update user interfaces associated with the games and/or applications being displayed on display device 106 based on the inputs.

Display device 106 may include a screen (e.g., a display) that displays user interfaces associated with games and/or applications being processed by processing circuit 104. Display device 106 may be coupled to game console 102 via a screen holder (not shown) attached to a game console housing (also not shown) of game console 102. Display device 106 may include a screen display which displays the user interfaces. In some embodiments, display device 106 may include a touchscreen.

In some embodiments, display device 106 may display games and/or applications being processed by another device outside of game console 102 (e.g., gaming device 110). Gaming device 110 may be a device designed to process applications and/or games. Gaming device 110 is shown to include a processing circuit 112. Processing circuit 112 may process the games and/or applications and send a user interface to display device 106 to be displayed. Processing circuit 112 may receive and process signals sent from processing circuit 104. Processing circuit 112 may update the user interface being displayed at display device 106 of game console 102 based on the signals from processing circuit 104. Processing circuit 112 may communicate with processing circuit 104 over network 108 via Bluetooth. Further, processing circuit 112 may communicate with display device 106 via an HDMI connection such as a wireless HDMI connection (e.g., HDbitT®, Wi-Fi, HDMI wireless, etc.) or a wired HDMI connection (e.g., HDBaseT®).

Referring now to FIG. 2, a block diagram of a gaming environment 200 is shown, in some embodiments. Gaming environment 200 may be an exemplary embodiment of gaming environment 100, shown and described with reference to FIG. 1, in some embodiments. Gaming environment 200 is shown to include a game console 201 and a gaming device 228. Game console 201 may be similar to game console 102, shown and described with reference to FIG. 1. Gaming device 228 may be similar to gaming device 110, shown and described with reference to FIG. 1. Game console 201 is shown to include a processing circuit 202, a battery 204, a charging interface 206, sensors 208, light element(s) 210, command buttons 212, a pedal system 214, and a display device 222. As described above, display device 222 may be coupled to an exterior component (e.g., via a display holder on a barrel) of game console 201. Processing circuit 202 may receive inputs from each of components 204-214, process the inputs, and display and update an output at display device 222 based on the inputs. In some embodiments, processing circuit 202 may additionally or alternatively be a part of display device 222.

Game console 201 may include a battery 204. Battery 204 may represent any power source that can provide power to game console 201. Examples of power sources include, but are not limited to, lithium batteries, rechargeable batteries, wall plug-ins to a circuit, etc. Battery 204 may be charged via charging interface 206. For example, battery 204 may be a rechargeable battery. To charge battery 204, an operator may connect game console 201 to a wall outlet via charging interface 206. Once game console 201 has finished charging, the operator may disconnect game console 201 from the wall outlet and operate game console 201 with the power stored in the rechargeable battery. The operator may disconnect game console 201 from the wall outlet at any time to operate game console 201. In some cases, the operator may operate game console 201 while it is connected to the wall outlet. Battery 204 may provide power to processing circuit 202 so processing circuit 202 may operate to process applications and/or games.

As shown in FIG. 2, processing circuit 202 includes a processor 216, memory 218, and a communication device (e.g., a receiver, a transmitter, a transceiver, etc.), shown as network interface 220, in some embodiments. Processing circuit 202 may be implemented as a general-purpose processor, an application specific integrated circuit (“ASIC”), one or more field programmable gate arrays (“FPGAs”), a digital-signal-processor (“DSP”), circuits containing one or more processing components, circuitry for supporting a microprocessor, a group of processing components, or other suitable electronic processing components. Processor 216 may include an ASIC, one or more FPGAs, a DSP, circuits containing one or more processing components, circuitry for supporting a microprocessor, a group of processing components, or other suitable electronic processing components. In some embodiments, processor 216 may execute computer code stored in the memory 218 to facilitate the activities described herein. Memory 218 may be any volatile or non-volatile computer-readable storage medium capable of storing data or computer code relating to the activities. According to an exemplary embodiment, memory 218 may include computer code modules (e.g., executable code, object code, source code, script code, machine code, etc.) for execution by processor 216.

In some embodiments, processing circuit 202 may selectively engage, selectively disengage, control, and/or otherwise communicate with the other components of game console 201. As shown in FIG. 2, network interface 220 may couple processing circuit 202 to display device 222 and/or an external device (e.g., gaming device 110). In other embodiments, processing circuit 202 may be coupled to more or fewer components. For example, processing circuit 202 may send and/or receive signals from the components of game console 201 such as light elements 210; charging interface 206; battery 204; command buttons 212 (e.g., a power button, input buttons, a trigger, etc.); one or more sensors, shown as sensors 208; and/or pedal system 214 (e.g., position detectors indicating a position of pedals of pedal system 214) via network interface 220.

Processing circuit 202 may send signals to display device 222 to display a user interface on a display 224 of display device 222 via network interface 220. Network interface 220 may utilize various wired communication protocols and/or short-range wireless communication protocols (e.g., Bluetooth, near field communication (“NFC”), HDMI, RFID, ZigBee, Wi-Fi, etc.) to facilitate communication with the various components of game console 201, including display device 222, and/or gaming device 228. In some embodiments, processing circuit 202 may be internally coupled to display device 222 via a tethered HDMI cable located inside a housing of game console 201 that runs between processing circuit 202 and display device 222. In some embodiments, processing circuit 202 may be connected to display device 222 via HDbitT®, which is described below. In some embodiments, processing circuit 202 may be connected to display device 222 via an HDMI low latency wireless transmission technology. Advantageously, by using an HDMI low latency wireless transmission technology, display device 222 may connect to both external devices and to processing circuit 202.

According to an exemplary embodiment, processing circuit 202 may receive inputs from various command buttons 212 and/or pedal system 214 that are located on an exterior surface of game console 201. Examples of command buttons 212 include, but are not limited to, a power button, input buttons, a trigger, etc. Pedal system 214 may include multiple pedals (input buttons) that are coupled to each other so only one pedal may be pressed at a time. In some embodiments, the pedals may be pressed to varying degrees. Processing circuit 202 may receive an input when an operator presses a pedal of pedal system 214 and may identify how far the operator pressed the pedal based on data detected by sensors coupled to the pedal. Processing circuit 202 may receive the inputs and interpret and implement them to perform an action associated with a game or application that processing circuit 202 is currently processing.
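As a minimal sketch of how pedal inputs pressed to varying degrees might be interpreted, the following example reports the single active pedal and how far it is pressed. The pedal names, the normalized 0.0 to 1.0 reading range, and the noise threshold are assumptions made for illustration. The disclosure describes the pedals as coupled so only one can be pressed at a time; the software selection of the most-pressed pedal below is only an assumed safeguard.

```python
from typing import Dict, Optional, Tuple

# Assumed threshold below which a sensor reading is treated as noise.
NOISE_FLOOR = 0.05


def read_pedals(raw_levels: Dict[str, float]) -> Optional[Tuple[str, float]]:
    """Return (pedal_name, pressure) for the active pedal, or None.

    raw_levels maps hypothetical pedal names to sensor readings,
    nominally in the range 0.0 (released) to 1.0 (fully pressed).
    """
    pressed = {
        name: min(max(level, 0.0), 1.0)  # clamp out-of-range readings
        for name, level in raw_levels.items()
        if level > NOISE_FLOOR
    }
    if not pressed:
        return None
    # If more than one reading is above the floor, keep the strongest.
    name = max(pressed, key=pressed.get)
    return name, pressed[name]
```

For example, a reading of 0.8 on a "forward" pedal could be interpreted by a game as running rather than walking.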

Processing circuit 202 may change a display (e.g., a user interface) of display 224 based on the inputs. The inputs from the input buttons, the trigger, and/or the pedal system may be an indication that the operator of game console 201 desires that their character in a game jumps, crouches, dives/slides, throws a knife, throws a grenade, switches weapons, shoots a weapon, moves backward, moves forward, walks, jogs, runs, sprints, etc. The inputs from the input buttons, the trigger, and/or the pedal system may additionally or alternatively be an indication that the operator of game console 201 desires to perform various different actions while in menus of the game such as scroll up, scroll down, and/or make a menu selection (e.g., select a map, select a character, select a weapon or weapons package, select a game type, etc.). In this way, an operator may utilize game console 201 as a mouse or roller ball that can move a cursor on display 224 to select an option that display 224 displays. Inputs from the power button may be an indication that the operator of game console 201 desires that game console 201 be turned on or off.

In some embodiments, an operator may calibrate game console 201 to adjust a rate of change of views of display 224 relative to the movement that sensors 208 detect. For example, game console 201 may be configured to change views of display 224 at the same rate that an operator moves game console 201. The operator may wish for the views to change at a slower rate. Accordingly, the operator may adjust the settings of game console 201 so display 224 changes views more slowly than the operator moves game console 201.

In some embodiments, an operator may calibrate game console 201 based on the actions being performed on a user interface. For example, an operator may calibrate game console 201 so the user interface changes at the same rate that the operator moves game console 201 when playing a game. Within the same configuration, the operator may also calibrate game console 201 so the operator can maneuver a cursor at a faster rate than the operator moves game console 201 when selecting from a drop down menu or from other types of menus or option interfaces.
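The calibration described above can be sketched, under stated assumptions, as a per-context scale factor applied to the detected movement. The context names ("gameplay", "menu") and the numeric factors are hypothetical; a factor of 1.0 changes the view at the same rate as the movement, a factor below 1.0 changes it more slowly, and a factor above 1.0 changes it more quickly.

```python
# Assumed operator settings: one sensitivity factor per user-interface context.
DEFAULT_SENSITIVITY = {
    "gameplay": 1.0,  # view changes at the same rate as the console moves
    "menu": 1.5,      # cursor moves faster than the console moves
}


def scaled_delta(device_delta_deg: float, context: str,
                 settings: dict = DEFAULT_SENSITIVITY) -> float:
    """Scale a detected rotation (degrees) by the calibrated rate for a context."""
    return device_delta_deg * settings[context]
```

An operator who prefers slower view changes could, in this sketch, set the "gameplay" factor to 0.5.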

In some embodiments, processing circuit 202 may receive an indication regarding a characteristic within a game (e.g., a health status of a character of the game) operated by game console 201 and control light elements 210 of a light bar (not shown) to provide a visual indication of the characteristic within the game operated by game console 201. For example, processing circuit 202 may receive an indication regarding a health status of a character within the game that is associated with the operator of game console 201 and control light elements 210 (e.g., selectively illuminate one or more of light elements 210, etc.) to provide a visual indication of the character's health via the light bar. By way of another example, processing circuit 202 may receive an indication regarding a number of lives remaining for a character within the game that is associated with the operator of game console 201 and control light elements 210 (e.g., selectively illuminate one or more of light elements 210, etc.) to provide a visual indication of the number of remaining lives via the light bar. In another example, processing circuit 202 may receive an indication regarding a hazard or event within the game (e.g., radiation, warning, danger, boss level, level up, etc.) and control light elements 210 to change to a designated color (e.g., green, red, blue, etc.) or to flash to provide a visual indication of the type of hazard or event within the game via the light bar.
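One possible way to selectively illuminate light elements in proportion to a character's health is sketched below. The function name, the rounding-up rule (so that any remaining health keeps at least one element lit), and the element count are assumptions for illustration and are not specified by the disclosure.

```python
import math


def lit_elements(health: float, max_health: float, num_elements: int) -> int:
    """Return how many light elements to illuminate for a given health value."""
    if max_health <= 0 or num_elements <= 0:
        return 0
    clamped = min(max(health, 0.0), max_health)
    # Round up so a nearly depleted character still shows one lit element.
    return math.ceil(clamped * num_elements / max_health)
```

The same calculation could be applied to a count of remaining lives by passing the number of lives as the health value.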

In some embodiments, processing circuit 202 may receive inputs from sensors 208 of game console 201. Sensors 208 may include an accelerometer, a gyroscope, and/or other suitable motion sensors or position sensors that detect the spatial orientation and/or movement of game console 201 (e.g., a magnetometer). The accelerometer and gyroscope may be a component of an inertial measurement unit (not shown). The inertial measurement unit may include any number of accelerometers, gyroscopes, and/or magnetometers to detect the spatial orientation and/or movement of game console 201. The inertial measurement unit (IMU) may detect the spatial orientation and/or movement of game console 201 by detecting the spatial orientation and/or movement of a game console housing (not shown) of game console 201. The IMU may transmit data identifying the spatial orientation and/or movement of game console 201 to processing circuit 202. Based on signals received from sensors 208, processing circuit 202 may adjust the display provided by display 224 of display device 222. For example, sensors 208 may detect when game console 201 is pointed up, pointed down, turned to the left, turned to the right, etc. Processing circuit 202 may adaptively adjust the display on display 224 to correspond with the movement of game console 201.

In some embodiments, game console 201 may include speakers (not shown). Processing circuit 202 may transmit audio signals to the speakers corresponding to inputs that processing circuit 202 processes while processing a game and/or application. The audio signals may be associated with the game or application. For example, an operator may be playing a shooting game that processing circuit 202 processes. The operator may pull the trigger of game console 201 to fire a gun of the shooting game. Processing circuit 202 may transmit a signal to the speakers to emit a sound associated with a gun firing.

In some embodiments, game console 201 may be used as a mouse and/or keyboard to interact with various dropdown menus and selection lists being displayed via display device 222. In some cases, the various dropdown menus and selection lists may be related to an online exchange that allows operators to select a game or application to download into memory 218 of processing circuit 202. The operators may select the game or application by reorienting game console 201 to move a cursor that display device 222 is displaying on a user interface including dropdown menus and/or selection lists. Sensors 208 of game console 201 may detect the movements of game console 201 and send signals to processing circuit 202 that are indicative of the movements including at least movement direction and/or speed. Processing circuit 202 may receive and process the signals to determine how to move the cursor that display device 222 is displaying. Processing circuit 202 may move the cursor corresponding to the movement of the game console 201. For example, in some instances, if an operator points game console 201 upward, processing circuit 202 may move the cursor upwards. If an operator points game console 201 downward, processing circuit 202 may move the cursor downwards. The operator may configure game console 201 to invert the movements or change the movements in any manner to affect the movement of the cursor. In some embodiments, processing circuit 202 may receive inputs that correspond to the movement and the speed of the movement of game console 201 based on the movement of an end of the barrel of game console 201.

In some embodiments, game console 201 may include a calibrated floor position against which movements of game console 201 may be detected. An administrator may calibrate the floor position by setting a base position of the gyroscopes of game console 201 that are associated with a pitch of game console 201. The pitch of game console 201 may be determined based on the position of game console 201 relative to the calibrated floor position. For example, an administrator may calibrate a floor position of game console 201 to be when game console 201 is positioned parallel with the ground. The administrator may position and/or determine when game console 201 is parallel with the ground and set the gyroscope readings associated with the pitch of the position as a calibrated floor position. If the gyroscopes detect that game console 201 is pointing at a position above the calibrated floor position, the gyroscopes may send data indicating the position of game console 201 as pointing up and/or a degree of how far up game console 201 is pointing relative to the calibrated floor position to processing circuit 202.

In some embodiments, the gyroscopes may send position data to processing circuit 202 and processing circuit 202 may determine the position of game console 201 compared to the calibrated floor position. Consequently, processing circuit 202 may determine a pitch position of game console 201 relative to a consistent fixed pitch position instead of relative to a starting pitch position of game console 201 upon start-up of a game or application. Advantageously, an administrator may only set a calibrated floor position for the pitch of game console 201. Processing circuit 202 may determine and set a calibrated yaw position of game console 201 upon boot up or upon starting processing of a game or application. A user playing the game or application may adjust the calibrated floor position and/or calibrated yaw position by accessing the settings associated with the game, application, or game console 201.
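A minimal sketch of measuring pitch against a fixed calibrated floor position and yaw against a reference captured at start-up is given below. The class name Calibration, its field names, the use of degrees, and the wrap of yaw into the range from -180 (inclusive) to 180 (exclusive) are assumptions made for illustration.

```python
from dataclasses import dataclass


@dataclass
class Calibration:
    """Hypothetical calibration record for the game console."""
    floor_pitch_deg: float = 0.0  # reading taken with the console parallel to the ground
    yaw_zero_deg: float = 0.0     # reading captured at boot up or game start


def relative_pitch(raw_pitch_deg: float, cal: Calibration) -> float:
    """Pitch relative to the calibrated floor position; positive means pointing up."""
    return raw_pitch_deg - cal.floor_pitch_deg


def relative_yaw(raw_yaw_deg: float, cal: Calibration) -> float:
    """Yaw relative to the start-up reference, wrapped to [-180, 180)."""
    return (raw_yaw_deg - cal.yaw_zero_deg + 180.0) % 360.0 - 180.0
```

Because the floor pitch is stored rather than sampled at start-up, the pitch result in this sketch does not depend on how the console was held when the game began.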

The gyroscopic data may include pitch, yaw, and/or roll of game console 201. The pitch may correspond to the barrel of game console 201 moving up or down and/or a position on a y-axis (e.g., a y-axis tilt of game console 201). The yaw may correspond to the barrel of game console 201 moving side-to-side and/or a position on an x-axis (e.g., an x-axis tilt of game console 201). The roll may correspond to the barrel rotating clockwise or counterclockwise around a z-axis. The y-axis may point from the ground to the sky, the x-axis may be perpendicular to the y-axis, and the z-axis may be an axis extending from an end (e.g., the barrel or the handle) of game console 201. Gyroscopes of the IMU may be positioned to detect the pitch, yaw, and/or roll of game console 201 as gyroscopic data along with a direction of each of these components. The gyroscopes may transmit the gyroscopic data to processing circuit 202 to indicate how game console 201 is moving and/or rotating.

Processing circuit 202 may move a cursor on a user interface by analyzing gyroscopic data that gyroscopes of game console 201 send to processing circuit 202 along with other positional data (e.g., acceleration data from accelerometers). Processing circuit 202 may process the gyroscopic data to convert it into mouse data. Based on the mouse data, processing circuit 202 can move a cursor on the user interface accordingly. For example, if a detected pitch indicates game console 201 is pointing up, processing circuit 202 may move a cursor up. If a detected yaw indicates game console 201 is pointing left, processing circuit 202 may move the cursor left. Consequently, the combination of processing circuit 202 and the gyroscopes can act as an HID compliant mouse that can detect a movement of game console 201 and move a cursor of a user interface corresponding to the movement. Further, processing circuit 202 may send such gyroscopic data to another device (e.g., gaming device 228) for similar processing. Advantageously, by acting as an HID compliant mouse, game console 201 can move cursors associated with various games and applications according to a set standard. Game and application manufacturers may not need to create their games or applications to specifically operate on processing circuit 202.
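The conversion of gyroscopic data into mouse data may be sketched as follows, under several assumptions that are not part of the disclosure: angular rates are given in degrees per second, positive yaw means turning right, positive pitch means pointing up, screen y increases downward, and each relative delta is clamped to the range -127 to 127, as in a mouse report with signed 8-bit axes. The counts_per_degree factor and the invert_y option are hypothetical settings.

```python
from typing import Tuple


def _clamp_int8(value: float) -> int:
    """Round to an integer and clamp to the assumed report range."""
    return max(-127, min(127, int(round(value))))


def gyro_to_mouse(pitch_rate_dps: float, yaw_rate_dps: float, dt_s: float,
                  counts_per_degree: float = 10.0,
                  invert_y: bool = False) -> Tuple[int, int]:
    """Convert angular rates over one sample interval into relative (dx, dy)."""
    dx = yaw_rate_dps * dt_s * counts_per_degree
    # Pointing up moves the cursor up, which is negative y on the screen.
    dy = -pitch_rate_dps * dt_s * counts_per_degree
    if invert_y:
        dy = -dy
    return _clamp_int8(dx), _clamp_int8(dy)
```

In this sketch, pointing the console up and to the left over one interval yields negative dx and negative dy, so the cursor moves up and left.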

Processing circuit 202 may also use gyroscopic data (in combination with other data such as data from one or more accelerometers) of game console 201 to act as an HID compliant joystick. When processing a game with a user interface emulating a view of a person in a three dimensional environment, processing circuit 202 may receive gyroscopic data associated with the pitch, yaw, and/or roll of game console 201 compared to a calibrated floor position as described above. Processing circuit 202 may convert the gyroscopic data and accelerometer data to HID compliant joystick data associated with a direction and acceleration of movement of game console 201. Based on the direction and acceleration, processing circuit 202 may update the user interface.

For example, processing circuit 202 may process a three dimensional environment of a game or application. Processing circuit 202 may receive gyroscopic data indicating that game console 201 is pointing up relative to a calibrated pitch position and convert the data to HID compliant joystick data. Based on the HID compliant joystick data, processing circuit 202 may update the user interface to cause a character of the game or application to look up in the three dimensional environment (e.g., reorient the screen to look up in the three dimensional environment). Processing circuit 202 may also receive gyroscopic data indicating a roll of game console 201. A roll may correspond to an action in a game or application. For example, an operator may roll game console 201 to peek around a corner in a game.

Various positions of game console 201 may correspond to actions in a game. For example, gyroscopic data may indicate that game console 201 is pointing down. Such a movement may be associated with reloading in a game. Processing circuit 202 may receive this data, convert the data to HID compliant joystick data, and update the user interface so a reload animation is performed. Game console 201 may associate various motion gestures with various actions depending on the game or application that game console 201 is processing.
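One way to associate motion gestures with game-specific actions is sketched below. The angle thresholds, gesture names, and the `GESTURE_ACTIONS` table are illustrative assumptions only; the disclosure does not specify particular values or data structures.

```python
from typing import Optional

# Hypothetical per-game table mapping a detected gesture to an action.
GESTURE_ACTIONS = {
    "war_game": {
        "point_down": "reload",
        "roll_left": "peek_left",
        "roll_right": "peek_right",
    },
}


def detect_gesture(pitch_deg: float, roll_deg: float,
                   pitch_down_threshold: float = -45.0,
                   roll_threshold: float = 30.0) -> Optional[str]:
    """Classify an orientation, relative to the calibrated position, as a gesture."""
    if pitch_deg <= pitch_down_threshold:
        return "point_down"
    if roll_deg >= roll_threshold:
        return "roll_right"
    if roll_deg <= -roll_threshold:
        return "roll_left"
    return None


def action_for(game: str, pitch_deg: float, roll_deg: float) -> Optional[str]:
    """Look up the action that the current game associates with the detected gesture."""
    gesture = detect_gesture(pitch_deg, roll_deg)
    return GESTURE_ACTIONS.get(game, {}).get(gesture)


print(action_for("war_game", pitch_deg=-60.0, roll_deg=0.0))
```

Keeping the table keyed by game reflects the statement above that the association between gestures and actions may depend on the game or application being processed.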

In some embodiments, display device 222 may operate as a digital keyboard. Display device 222 may present a digital keyboard at display 224 and an operator may interact with the digital keyboard to select various keys. The operator may select various keys by moving a cursor as discussed above and pressing a button or pulling the trigger of game console 201. In some embodiments, display device 222 may include a touchscreen. In these embodiments, an operator may physically touch an electronic representation of each key on display 224 of display device 222 to select keys of the digital keyboard.

In some embodiments, game console 201 may generate a virtual environment for an operator to view. Game console 201 may generate the virtual environment based on a game or application that processing circuit 202 processes. Processing circuit 202 may generate the virtual environment by processing software associated with the game or application. The virtual environment may be a three dimensional environment that an operator can interact with by providing various inputs into game console 201. A portion of the virtual environment may be displayed by display device 222. An operator that is operating game console 201 may change the portion of the virtual environment being displayed by display device 222 by providing an input into game console 201. Processing circuit 202 may receive the input and update the display of display device 222 based on the input. In some embodiments, processing circuit 202 can continue to generate and update views of the virtual environment that are not currently being displayed by display device 222. Advantageously, by continuing to generate and update portions of the environment that are not currently being shown by display device 222, if the operator provides an input to cause the display to change to another view of the environment, processing circuit 202 may not need to generate a new view responsive to the input. Instead, processing circuit 202 can display a view that processing circuit 202 has already generated. Consequently, processing circuit 202 may process the virtual environment faster after initially generating the virtual environment.

For example, an operator may be playing a war game simulator on game console 201. Processing circuit 202 of game console 201 may generate a three dimensional battlefield associated with the war game. The three dimensional battlefield may include terrain (e.g., bunkers, hills, tunnels, etc.) and various characters that interact with each other on the battlefield. The operator playing the war game can view a portion of the battlefield through display device 222. The portion of the battlefield may be representative of what a person would see if the battlefield was real and the person was on the battlefield. The operator may change views (e.g., change from a first view to a second view) of the battlefield by reorienting game console 201. For instance, if the operator spins 360 degrees, processing circuit 202 could display a 360 degree view of the battlefield on display device 222 as the operator spins. If the operator pulls the trigger of game console 201, a sequence associated with firing a gun may be displayed at display device 222. Further, if the operator presses on a pedal of pedal system 214, a character representation of the operator may move forward or backwards in the battlefield corresponding to the pedal that was pressed. Other examples of interactions may include the operator pressing an input button to select an item (e.g., a medical pack) on the battlefield, the operator pressing a second input button to reload, the operator pressing a third input button to bring up an inventory screen, etc. As described, by pressing an input button, the operator may change the state of the input button from an unpressed state to a pressed state. Similarly, by pulling the trigger, an operator may change the state of the trigger from an unpulled state to a pulled state. Further, a detected change in position of game console 201 may be described as a change in state of the position of game console 201.

Various buttons (e.g., command buttons 212) may map to different actions or interactions of games that processing circuit 202 processes. Different games may be associated with different button mappings. Further, the mappings may be customizable so an operator can control actions that each button is associated with. For example, a button in one game may be associated with a “jump” movement in the game. In another game, the same button may be associated with a “sprint” movement while another button of the various buttons may be associated with the jump movement.
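The per-game and operator-customizable button mappings described above could be represented as in the following sketch. The game names, button identifiers, and the `resolve_action` helper are hypothetical and serve only to illustrate the idea.

```python
from typing import Dict, Optional

# Hypothetical default mappings: the same button maps to different actions in different games.
DEFAULT_MAPPINGS: Dict[str, Dict[str, str]] = {
    "game_a": {"button_1": "jump", "button_2": "sprint"},
    "game_b": {"button_1": "sprint", "button_2": "jump"},
}


def resolve_action(game: str, button: str,
                   overrides: Optional[Dict[str, str]] = None) -> Optional[str]:
    """Return the action for a button, applying operator overrides on top of the defaults."""
    mapping = dict(DEFAULT_MAPPINGS.get(game, {}))  # copy so the defaults stay untouched
    if overrides:
        mapping.update(overrides)
    return mapping.get(button)


print(resolve_action("game_a", "button_1"))                          # default mapping
print(resolve_action("game_a", "button_1", {"button_1": "crouch"}))  # operator customization
```

Applying overrides to a copy keeps each game's default mapping intact, so an operator's customization for one session does not alter the defaults.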

In some embodiments, the various buttons may each be associated with a letter or button on a keyboard displayed on a user interface. An operator may customize the buttons to be associated with different letters based on the operator's preferences. A keyboard may be displayed on display 224 and the operator may select keys of the keyboard using the various push buttons.

In some embodiments, game console 201 may operate as an input and display device for an external gaming device (e.g., gaming device 228). For example, gaming device 228 of gaming environment 200 may be in communication with processing circuit 202 of game console 201. Gaming device 228 may include a processing circuit 230 having a processor 232, memory 234, and a network interface 236. Processing circuit 230, processor 232, memory 234, and network interface 236 may be similar to processing circuit 202, processor 216, memory 218, and network interface 220 of game console 201, respectively. In some cases, gaming device 228 may include a plug-in that facilitates transmission of an HDMI output (e.g., an audiovisual data stream generated by processing circuit 230) to display device 222 or to processing circuit 202 to forward to display device 222. In some cases, gaming device 228 may transmit audio data that corresponds to visual data that gaming device 228 transmits. Consequently, gaming device 228 may transmit audiovisual data streams to game console 201 to display at display device 222.

In some embodiments, processing circuit 202 of game console 201 may be or may include a Bluetooth processor including various Bluetooth chipsets. The Bluetooth processor may receive inputs from various components of game console 201, and send the inputs to processing circuit 230 for processing. Advantageously, Bluetooth processors may be physically smaller and require less processing power and/or memory than other processors because the Bluetooth processors may not need to process applications or games or produce an audiovisual data stream to display at display device 222. Instead, the Bluetooth processor may only receive and package inputs based on operator interactions with game console 201 and transmit the inputs to gaming device 228 for processing. In addition to Bluetooth, other wireless protocols may be utilized to transmit inputs to gaming device 228. For example, processing circuit 202 may transmit the inputs to gaming device 228 through infrared signals. The inputs may be transmitted through any type of wireless transmission technology.
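The following sketch illustrates what receiving and packaging inputs for transmission might look like. The 8-byte layout (a button bitmask, a trigger flag, and three orientation angles in hundredths of a degree) is purely an assumption made for illustration; the disclosure does not define a packet format, and the wireless transport itself is not shown.

```python
import struct

# Hypothetical little-endian layout: buttons (uint8), trigger (uint8),
# then pitch, yaw, and roll as int16 values in hundredths of a degree.
REPORT_FORMAT = "<BBhhh"


def pack_inputs(buttons: int, trigger_pulled: bool,
                pitch_deg: float, yaw_deg: float, roll_deg: float) -> bytes:
    """Package operator inputs into a compact payload for the gaming device."""
    return struct.pack(
        REPORT_FORMAT,
        buttons & 0xFF,
        1 if trigger_pulled else 0,
        round(pitch_deg * 100),
        round(yaw_deg * 100),
        round(roll_deg * 100),
    )


def unpack_inputs(payload: bytes):
    """Recover the inputs on the receiving side (e.g., at the gaming device)."""
    buttons, trigger, pitch, yaw, roll = struct.unpack(REPORT_FORMAT, payload)
    return buttons, bool(trigger), pitch / 100, yaw / 100, roll / 100


payload = pack_inputs(0b101, True, 12.5, -30.0, 0.0)
print(len(payload), unpack_inputs(payload))
```

Because the console-side processor only packs and forwards such a payload, it does not need to run the game itself, which is consistent with the reduced processing requirements noted above.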

In some embodiments, gaming device 228 may transmit an HDMI output to display device 222 using HDbitT® technology. HDbitT® may enable transmission of 4K Ultra HD video, audio, Ethernet, power over Ethernet, various controls (e.g., RS232, IR, etc.) and various other digital signals over wired and/or wireless communication mediums. For example, HDbitT® may enable data transmission via UTP/STP Cat5/6/7, optical fiber cables, power lines, coaxial cables, and/or wireless transmission. Gaming device 228 may receive inputs from game console 201 (e.g., via Bluetooth), process the inputs based on a game or application that gaming device 228 is currently processing, and transmit an updated HDMI output (e.g., an updated audiovisual data stream) to display device 222 using HDbitT® technology. Advantageously, by using HDbitT® to transmit data streams, gaming device 228 may wirelessly transmit high definition audiovisual data signals to display device 222 for users that are using game console 201 to view. HDbitT® may allow for gaming device 228 to transmit high definition audiovisual data signals over large distances so users can use game console 201 far away from gaming device 228 (e.g., 200 meters) or between rooms in a house. For example, operators may use game console 201 in various rooms of a house without moving gaming device 228 between the rooms.

Gaming device 228 may process games and/or applications. Gaming device 228 may generate a user interface associated with the games or applications and send the user interface to processing circuit 202 or directly to display device 222. If gaming device 228 sends the user interface to display device 222, display device 222 may display the user interface at display 224 and play any audio that gaming device 228 sent to display device 222 via an audio component (e.g., one or more speakers) of game console 201. In some embodiments, gaming device 228 may play the audio via speakers connected to gaming device 228 (e.g., speakers on a television connected to gaming device 228).

In some instances, an operator operating game console 201 may view the user interface that gaming device 228 generated and sent to display device 222. The operator may interact with the user interface by pressing input buttons (e.g., command buttons 212, pedal system 214, a trigger, etc.) of game console 201 or reorienting game console 201. Processing circuit 202 can receive the input signals generated from either action and transmit (e.g., forward) the inputs to processing circuit 230 of gaming device 228. Processing circuit 202 may transmit the input signals to processing circuit 230 via Bluetooth, as described above. Gaming device 228 may receive and process the input signals. Gaming device 228 can consequently provide an updated user interface (e.g., an updated data stream that adjusts the user interface currently being displayed at display device 222) to game console 201 (e.g., via HDbitT®). Gaming device 228 may either send the updated user interface directly to display device 222 to update the display of display 224 or to processing circuit 202 for processing circuit 202 to update the display. Advantageously, as described, game console 201 can connect to gaming device 228 to display games and/or applications designed for gaming device 228. Game console 201 can display the game or application on display device 222. An operator accessing game console 201 may play the games or applications by providing inputs via game console 201. Processing circuit 230 of gaming device 228 may perform the processing functions of the games or applications so processing circuit 202 of game console 201 may not need to perform such functions or have the capability (e.g., processing power and/or memory requirements) to do so.

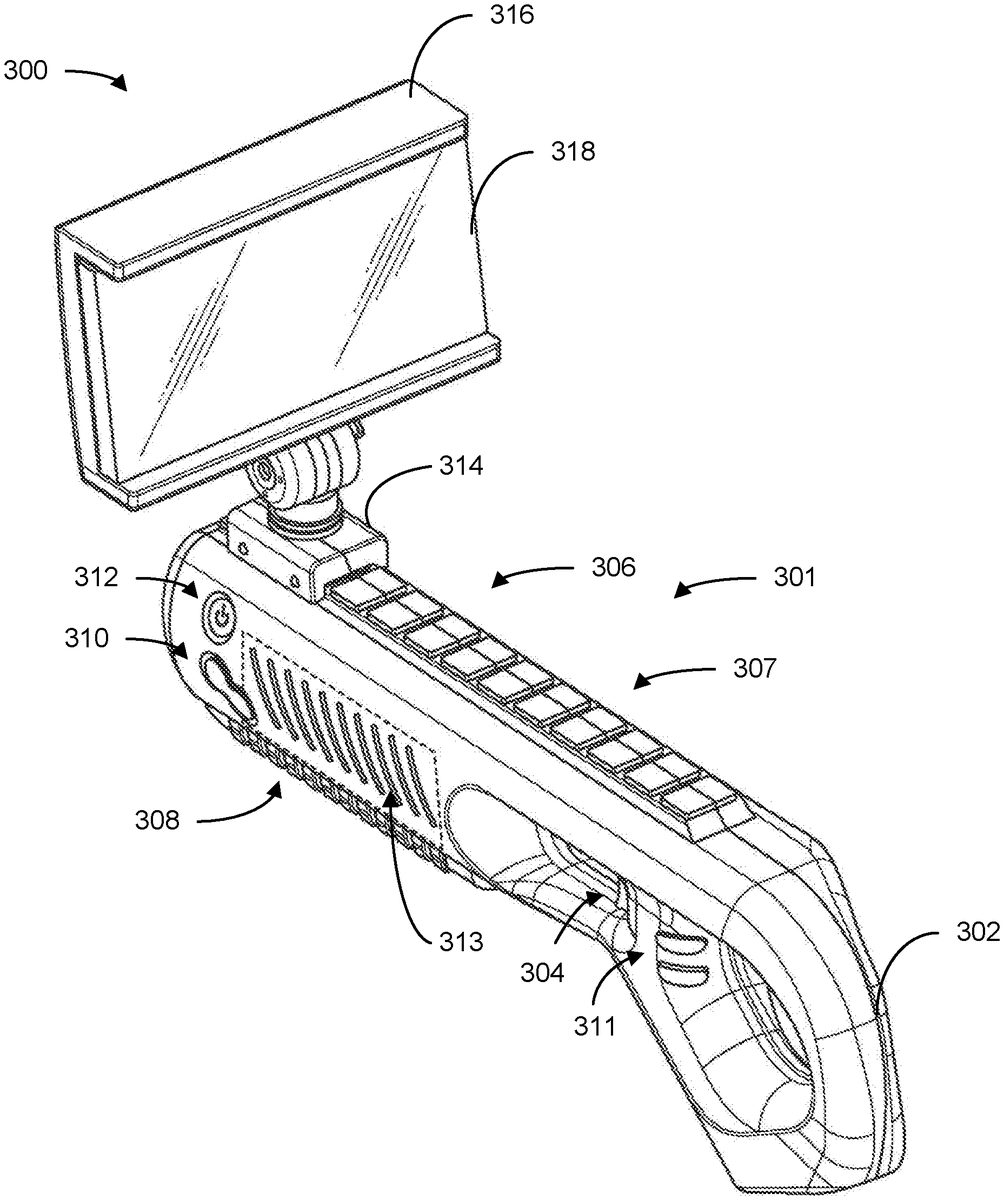

Referring now to FIG. 3, a perspective view of a game console 300 including a display device 316 coupled to a game console housing 301 via a screen holder 314 is shown, in accordance with some embodiments of the present disclosure. Game console 300 may be similar to and include similar components to game console 201, shown and described with reference to FIG. 2. Display device 316 may be similar to display device 222, shown and described with reference to FIG. 2. Game console housing 301 is shown to include a handle 302, a trigger 304, a barrel 306, latches 307, a grip 308, command buttons 310 and 311, a power button 312, and light elements 313. Game console housing 301 may be shaped to resemble a blaster device (e.g., a rifle, a shotgun, a machine gun, a grenade launcher, a rocket launcher, etc.). Barrel 306 may be shaped to resemble a barrel of such a blaster device (e.g., barrel 306 may be shaped like a tube and extend away from handle 302 and trigger 304). Screen holder 314 may be coupled to barrel 306 such that an operator holding game console 300 may view display device 316, which is attached to screen holder 314, when the operator is holding game console 300. Screen holder 314 may be coupled to a top of barrel 306. It should be understood that the positions and number of handles, triggers, barrels, latches, grips, command buttons, and light elements as shown and described with reference to FIG. 3 are meant to be exemplary. Game console housing 301 may include any number of handles, triggers, barrels, latches, grips, command buttons, and light elements and they can be in any position and in any size or orientation on game console housing 301. Further, screen holder 314 and display device 316 may be any shape or size and coupled to any component of game console housing 301 and are not meant to be limited by this disclosure.

Game console 300 may also include a processing circuit (e.g., processing circuit 202) (not shown). The processing circuit may be internally coupled to game console housing 301. In some embodiments, the processing circuit may be insulated by protective components (e.g., components that prevent the processing circuit from moving relative to game console housing 301 or from being contacted by other components of game console 300) so the processing circuit may continue operating while operators are moving game console 300 to play a game and/or application. Further, in some embodiments, the processing circuit may be coupled to display device 316. The processing circuit may be coupled to display device 316 via any communication interface such as, but not limited to, an HDMI cable, an HDMI low latency wireless transmission technology, HDbitT®, etc. The processing circuit may generate and update a user interface being shown on display device 316 based on a game or application that the processing circuit is processing and/or inputs that the processing circuit processes, as described above with reference to FIG. 2.

Display device 316 may be removably coupled to game console housing 301 via latches 307 and screen holder 314. Display device 316 may be coupled to screen holder 314. Latches 307 may include multiple latches that are elevated so a screen holder (e.g., screen holder 314) may be attached to any of the latches. An operator operating game console 300 may remove screen holder 314 from any of the latches and reattach screen holder 314 to a different latch to adjust a position of screen holder 314 and consequently a position of display device 316. In some embodiments, latches 307 and screen holder 314 may be configured so an operator may slide screen holder 314 between latches and lock screen holder 314 into place to set a position of screen holder 314. In some embodiments, screen holder 314 and/or display device 316 may be a part of game console housing 301.

Display device 316 may be coupled to screen holder 314. Display device 316 may be coupled to screen holder 314 with a fastener such as a snap fit, magnets, screws, nails, loop and hooks, etc. In some embodiments, display device 316 and screen holder 314 may be the same component. In these embodiments, display device 316 may be coupled to a latch of latches 307. In some embodiments, display device 316 may be rotatably coupled to screen holder 314 so display device 316 can be rotated about screen holder 314 to a position that an operator desires.

Display device 316 may be a displaying device that can communicate with the processing circuit of game console 300 to display a user interface of a game or application being processed by the processing circuit. In some embodiments, display device 316 may include an internal processing circuit including at least a processor and memory (not shown) that facilitates the transmission of data between display device 316 and the processing circuit of game console 300 and/or a processing circuit of an external device. Display device 316 is shown to include display 318. Display 318 may be similar to display 224, shown and described with reference to FIG. 2. Display device 316 may receive a data stream including a user interface from the processing circuit of game console 300 and display the user interface at display 318.

An operator may press command buttons 310 or 311 and/or power button 312 and/or pull trigger 304 to provide an input to the processing circuit of game console 300. By providing an input to the processing circuit, the operator may adjust the user interface that the processing circuit of game console 300 is providing via display device 316. For example, an operator may perform an action in a game by pressing one of command buttons 310 or 311. In another example, an operator may turn game console 300 on or off by pressing power button 312. Further, the operator may move (e.g., reorient) game console 300 to provide an input to the processing circuit and adjust the user interface of display device 316, as described above.

In some embodiments, game console 300 may include sensors that are internally coupled to game console housing 301. The sensors may be a part of an inertial measurement unit that includes sensors such as, but not limited to, accelerometers, gyroscopes, magnetometers, etc. The sensors may provide position data identifying a position of game console housing 301 to the processing circuit of game console 300. The processing circuit may receive the position data and update a user interface of display 318 based on the position data.

To update the user interface, the processing circuit may receive current position data and compare the current position data to previous position data corresponding to a previous position of the game console housing. The processing circuit may identify a difference between the current position data and the previous position data and update the user interface based on the difference. For example, the processing circuit may receive current position data of game console housing 301. Previous position data may indicate that game console housing 301 was pointing straight. The processing circuit may compare the current position data with the previous position data and determine that game console housing 301 is pointing left compared to the position of the previous position data. Consequently, the processing circuit may change a view of the user interface on display 318 to move to the left of a virtual environment or move a cursor on the user interface left. In some embodiments, the previous position data may be a calibrated position of game console housing 301 set by an operator.
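The comparison of current position data against previous (or calibrated) position data may be sketched as follows. Representing position data as a (yaw, pitch) pair in degrees and applying a small dead zone to ignore sensor jitter are assumptions made for this illustration, as is the function name `view_update`.

```python
from typing import Tuple


def view_update(previous: Tuple[float, float], current: Tuple[float, float],
                dead_zone: float = 1.0) -> Tuple[str, str]:
    """Compare (yaw, pitch) readings in degrees and return the direction of change.

    Negative yaw is taken to mean "left" and positive pitch to mean "up"
    (assumed sign conventions). Changes within the dead zone are ignored.
    """
    d_yaw = current[0] - previous[0]
    d_pitch = current[1] - previous[1]

    if d_yaw < -dead_zone:
        horizontal = "left"
    elif d_yaw > dead_zone:
        horizontal = "right"
    else:
        horizontal = "none"

    if d_pitch > dead_zone:
        vertical = "up"
    elif d_pitch < -dead_zone:
        vertical = "down"
    else:
        vertical = "none"

    return horizontal, vertical


# Housing was pointing straight and is now pointing 15 degrees to the left.
print(view_update(previous=(0.0, 0.0), current=(-15.0, 0.0)))
```

The returned directions could then drive either a change of view in the virtual environment or a cursor movement, as described above. Passing an operator-set calibrated position as `previous` corresponds to the calibrated embodiment.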

Characteristics of games or applications being processed by the processing circuit of game console 201 may be shown via light elements 313. Light elements 313 may include slits in game console housing 301 with light bulbs that can provide different colors of light. The processing circuit of game console 300 may communicate with light elements 313 to provide light based on the game or application that the processing circuit is currently processing. Light elements 313 may provide light based on characteristics of the game or application that the processing circuit is processing. For example, light elements 313 may indicate an amount of health a character of a game has left based on the number of light elements 313 that are lit. In another example, light elements 313 may provide a number of lives that a character has left by lighting up a number of light elements 313 that corresponds to the number of lives the character has left. Light elements 313 may display any sort of characteristics of games or applications.
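As a minimal sketch of the health example above, the number of light elements to illuminate could be derived from the remaining fraction of health. Rounding up so that at least one element stays lit while any health remains is a design assumption of this sketch, and `lit_elements` is a hypothetical name.

```python
import math


def lit_elements(health: float, max_health: float, num_elements: int) -> int:
    """Return how many light elements to light for the given remaining health."""
    if max_health <= 0 or num_elements <= 0:
        return 0
    fraction = max(0.0, min(1.0, health / max_health))  # clamp to the range [0, 1]
    return math.ceil(fraction * num_elements)


print(lit_elements(health=50, max_health=100, num_elements=4))
```

For the lives example, the count could be used directly (one lit element per remaining life), capped at the number of available light elements.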

In an exemplary embodiment, an operator operating game console 300 may hold game console 300 with one or two hands. In some instances, an operator may hold game console 300 with one hand grabbing handle 302 and another hand holding onto a portion of game console housing 301 with a finger on trigger 304. In other instances, the operator may use one hand to pull trigger 304 while another hand grabs grip 308. Grip 308 may include a substance that an operator can grip without sliding a hand along barrel 306 (e.g., rubber). In some embodiments, portions of grip 308 may be elevated from other portions of grip 308 to provide operators with further protections from sliding their hands. Grip 308 may be positioned on barrel 306 so hands that grab grip 308 can also push any of command buttons 310 and/or power button 312 without adjusting their position. While holding game console 300, the operator may view display device 316.

Referring now to FIG. 4, a perspective view of a game console 400 including a display device 408 coupled to a game console housing 402 via a screen holder 406 is shown, in accordance with some embodiments of the present disclosure. Game console 400 may be similar to game console 300, shown and described with reference to FIG. 3. Game console 400 is shown to include a game console housing 402, latches 404, a screen holder 406, and a display device 408. Display device 408 is shown to include a display 410. Each of components 402-410 may be similar to corresponding components of game console 300. However, screen holder 406 of game console 400 may be coupled to a latch of latches 404 that is different from the latch that screen holder 314 of game console 300 is coupled to. Screen holder 406 may be coupled to a latch that is closer to an operator of game console 400 so the operator may more easily view display 410 when playing games or applications of game console 400. Screen holder 406 may be adjustable to be positioned at different locations on a barrel (e.g., on a latch of latches 404) of game console housing 402. Advantageously, screen holder 406 may be coupled to any latch of latches 404 so an operator may operate game console 400 with display 410 at any distance from the operator.

Referring now to FIG. 5, a side view of game console 400, shown and described with reference to FIG. 4, is shown, in accordance with some embodiments of the present disclosure. Game console 400 is shown to include a handle 501, a trigger 502, a grip 504, command buttons 506, a pedal 508, screen holder 406, and display device 408. An operator may interact with any of trigger 502, command buttons 506, and/or pedal 508 to provide inputs to a processing circuit of game console 400 as described above. Further, the operator may hold game console 400 by grabbing grip 504 and handle 501.

In some embodiments, display device 408 may be adjustably (e.g., rotatably) coupled to screen holder 406. Display device 408 may be coupled so an operator may reorient a position of display device 408 in relation to screen holder 406. For example, an operator may rotate display device 408 around screen holder 406 to face upwards or away from the operator. The operator may rotate display device 408 up to 180 degrees depending on how the operator desires display device 408 to be positioned.

Referring now to FIG. 6, a screen display 600 displaying various levels of difficulty for an operator to select with a game console (e.g., game console 201) is shown, in accordance with some embodiments of the present disclosure. Screen display 600 is shown to include a user interface 602. User interface 602 may be displayed by a display of the game console and be associated with a game or application that a processing circuit of the game console is processing. As shown, user interface 602 may include a selection list 604 and a cursor 606. Selection list 604 may include various options of levels of difficulty associated with a game that the game console is currently processing. For example, as shown, selection list 604 may include options such as an easy option, a medium option, a hard option, and an impossible option. An operator may view selection list 604 and select one of the options to begin playing the game with a difficulty associated with the selected option.

In some embodiments, to select an option from selection list 604, an operator may change an orientation of the game console to move cursor 606 towards a desired option. The change in orientation may be represented by a change in a position of an end of a barrel of the game console. For example, if cursor 606 is at a top left corner of user interface 602 and an operator wishes to select a medium option of selection list 604, the operator may direct the position of the end of the barrel downwards. The processing circuit of the game console may receive signals from sensors of the game console indicating that the end of the barrel is moving or has moved downwards and move cursor 606 corresponding to the detected movement. Once cursor 606 is over the desired option (e.g., medium), the operator may select the option by pressing an input button or pulling a trigger of the game console. The operator may move cursor 606 in any direction on user interface 602 based on the movement of the game console.
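The cursor-based selection described above can be sketched as a cursor position that is moved by detected motion and then tested against the rows of the selection list when the trigger is pulled. The screen size, row coordinates, and the names `Option`, `move_cursor`, and `select` are illustrative assumptions.

```python
from dataclasses import dataclass
from typing import List, Optional, Tuple


@dataclass
class Option:
    label: str
    top: int     # y coordinate of the top edge of the row, in pixels
    bottom: int  # y coordinate just past the bottom edge of the row

    def contains(self, y: int) -> bool:
        return self.top <= y < self.bottom


# Hypothetical layout of the difficulty list as stacked rows.
OPTIONS = [
    Option("easy", 100, 200),
    Option("medium", 200, 300),
    Option("hard", 300, 400),
    Option("impossible", 400, 500),
]


def move_cursor(cursor: Tuple[int, int], delta: Tuple[int, int],
                width: int, height: int) -> Tuple[int, int]:
    """Move the cursor by a detected delta, keeping it inside the user interface."""
    x = max(0, min(width - 1, cursor[0] + delta[0]))
    y = max(0, min(height - 1, cursor[1] + delta[1]))
    return x, y


def select(options: List[Option], cursor: Tuple[int, int],
           trigger_pulled: bool) -> Optional[str]:
    """Return the label under the cursor if the trigger (or an input button) is activated."""
    if not trigger_pulled:
        return None
    for option in options:
        if option.contains(cursor[1]):
            return option.label
    return None


# Cursor starts at the top left corner; the barrel is directed downwards.
cursor = move_cursor((0, 0), (50, 250), width=640, height=600)
print(select(OPTIONS, cursor, trigger_pulled=True))
```

Here the delta would come from the sensor signals indicating that the end of the barrel has moved, and the selection is only committed once the operator activates the trigger or an input button.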

Referring now to FIG. 7, a screen display 700 including a user interface 702 of a game being played via a game console (e.g., game console 201) is shown, in accordance with some embodiments of the present disclosure. User interface 702 may be generated and updated by a processing circuit of the game console. User interface 702 may be associated with a game being processed by the processing circuit. The processing circuit may update user interface 702 in response to receiving an input associated with an operator pressing on input buttons on an exterior surface of the game console, pulling a trigger of the game console, or moving or reorienting the game console. User interface 702 is shown to include an environment 704 represented by clouds, a line of cans on a table 706, and crosshairs 708. An operator operating the game console may interact with user interface 702 by moving and pressing push buttons on the game console.

For example, an operator may control a position of crosshairs 708 by moving the game console in a similar manner to how the operator controlled the position of cursor 606, shown and described above with reference to FIG. 6. The operator may position crosshairs 708 over one of the cans and shoot at the cans by pulling on the trigger of the game console. The processing circuit of the game console may receive an indication that the operator pulled the trigger and update user interface 702 to play a sequence of a gun firing at a position represented by a middle of crosshairs 708. If the operator was successful at firing at a can, the processing circuit may update user interface 702 to play a sequence of the can falling off of the table.

Referring now to FIG. 8, an example flowchart outlining operation of a game console as described with reference to FIG. 2 is shown, in accordance with some embodiments of the present disclosure. Additional, fewer, or different operations may be performed in the method depending on the implementation and arrangement. A method 800 conducted by a data processing system (e.g., game console 201, shown and described with reference to FIG. 2) includes receive a game or application selection (802), process the game or application (804), generate and display a user interface of the game or application (806), “receive input?” (808), identify the input (810), and update the user interface based on the input (812).

At operation 802, the data processing system may receive a game or application selection. The data processing system may display a list of games retrieved over a network via an online exchange. The data processing system may display the list of games on a screen of a display device that is coupled to a housing of the data processing system. The list of games may be displayed on a user interface that the data processing system generates. An operator accessing the data processing system may select one of the games by rotating the housing and pressing a push button or pulling on a trigger of the housing. The data processing system may receive a signal indicating the change in position (e.g., the movement) of the housing and move a cursor being displayed on the user interface. The data processing system may move the cursor in the same direction on the user interface as the operator moves the housing.

Once the operator selects the game or application, at operation 804, the data processing system may process the game or application. The data processing system may process the game or application by downloading files associated with the selected game or application from an external server or processor that stores the selected game or application. The data processing system may download the game or application over a network. Once the data processing system downloads the game or application, at operation 806, the data processing system may generate a user interface associated with the game or application and display the user interface on the screen. In some instances, the game or application may already be downloaded onto the data processing system. In these instances, the data processing system may process the selected game or application to generate the user interface on the screen without downloading the selected game or application from the external server or processor.

Once the data processing system displays the user interface, at operation 808, the data processing system can determine if it has received an input. The data processing system may receive an input when the operator that is operating the data processing system presses on a push button of the housing of the data processing system or changes a position of the housing. If the data processing system does not receive an input, method 800 may return to operation 804 to continue processing the game or application and display the user interface at operation 806 until the data processing system receives an input.

If the data processing system receives the input, at operation 810, the data processing system may identify the input. The data processing system may determine an action associated with the game or application that the data processing system is processing based on the input. For example, the data processing system may receive an input based on the operator pressing a push button. The data processing system may match the push button to a jump action in a look-up table stored by the data processing system. Consequently, the data processing system may determine jumping to be the action.

Once the data processing system determines the action, at operation 812, the data processing system may update the user interface based on the input. The data processing system may display a sequence at the user interface on the screen based on the determined action. Continuing with the example above associated with the input associated with the jump action, upon determining the jumping action, the data processing system may update the user interface to show a jumping sequence.

In another instance, at operation 810, the data processing system may display a cursor at the user interface. The input may be a detected movement of the housing of the data processing system that sensors coupled to the housing provide to the data processing system. The data processing system may identify the detected movement as movement of the housing. At operation 812, the data processing system may move the cursor on the user interface in correspondence with the detected movement of the housing. To move the cursor, the data processing system may detect a current position of the cursor and move the cursor in the same direction as the detected movement of the housing.
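
The cursor update at operation 812 may be sketched as below. This is an illustrative sketch only: the names `Cursor` and `move_cursor`, the `gain` scaling factor, and the clamping of the cursor to the screen bounds are assumptions that are not stated in the disclosure.

```python
from dataclasses import dataclass


@dataclass
class Cursor:
    x: float
    y: float


def move_cursor(cursor: Cursor, dx: float, dy: float,
                width: int, height: int, gain: float = 1.0) -> Cursor:
    """Move the cursor from its current position in the direction of the
    detected housing movement (dx, dy), scaled by a hypothetical gain."""
    # Clamping to the screen is an assumption of this sketch.
    new_x = min(max(cursor.x + gain * dx, 0), width - 1)
    new_y = min(max(cursor.y + gain * dy, 0), height - 1)
    return Cursor(new_x, new_y)


moved = move_cursor(Cursor(10, 10), dx=5, dy=-3, width=100, height=100)
clamped = move_cursor(Cursor(95, 0), dx=10, dy=-10, width=100, height=100)
```

Here the detected movement is assumed to have already been converted from sensor data into a displacement in screen coordinates.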

Referring now to FIG. 9, an example flowchart outlining operation of a game console in communication with a gaming device as described with reference to FIG. 2 is shown, in accordance with some embodiments of the present disclosure. Additional, fewer, or different operations may be performed in the method depending on the implementation and arrangement. A method 900 may be conducted by a first data processing system (e.g., a processing circuit of gaming device 228, shown and described with reference to FIG. 2) in communication with a second data processing system (e.g., a processing circuit of game console 201) and a display device coupled to a housing of the second data processing system (e.g., display device 222). Method 900 includes generating an audiovisual data stream (902), transmitting the audiovisual data stream to the game console (904), receiving an input from the game console (906), generating an updated audiovisual data stream (908), and transmitting the updated audiovisual data stream (910).

At operation 902, the first data processing system may generate an audiovisual data stream. The first data processing system may generate the audiovisual data stream while processing a game or application associated with the first data processing system. The audiovisual data stream may include visual data associated with a user interface associated with the game or application. The audiovisual data stream may also include audio data that corresponds to the user interface. The user interface may be an interface of a game or application including graphics, menus, or any other component that an operator may view while playing the game or application.

At operation 904, the first data processing system may transmit the audiovisual data stream to the display device of the housing of the second data processing system. The first data processing system may communicate and transmit the audiovisual data stream to the display device over a network. The display device may display the user interface of the audiovisual data stream at a screen of the display device. In some embodiments, the first data processing system may communicate with the display using HDbitT® data transmission. In some embodiments, an external device may plug in to the first data processing system to provide a wireless HDMI connection from the first data processing system to the display device.

At operation 906, the first data processing system may receive an input from the second data processing system. The first data processing system may communicate with the second data processing system over a network. In some embodiments, the first data processing system may communicate with the second data processing system via Bluetooth. The input may correspond to a signal generated by the second data processing system responsive to an operator pressing a push button on the housing of the second data processing system or sensors coupled to the housing detecting that the housing moved. The second data processing system may process the signals generated by the sensors in response to detected movement, or by the push buttons in response to being pressed, and send the signals to the first data processing system to be processed.

At operation 908, the first data processing system may receive the signals from the second data processing system and generate an updated audiovisual data stream based on the signals. The first data processing system may receive the inputs and determine actions that are associated with the inputs. The first data processing system may determine the actions by comparing the inputs to an internal look-up table that is specific to the game or application that the first data processing system is currently processing. The first data processing system may identify a match and generate an updated audiovisual data stream based on the match in the look-up table. At operation 910, the first data processing system may transmit the updated audiovisual data stream to the display device to update the user interface being shown on the display.
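
For illustration only, the sequence of operations 902 through 910 may be sketched as a simple loop executed by the first data processing system. In this sketch, plain strings stand in for the audiovisual data stream, and `run_stream`, `send`, and the table contents are hypothetical names; no actual network, Bluetooth, or video transport is modeled.

```python
from typing import Callable, Dict, Iterable, List


def run_stream(inputs: Iterable[str],
               action_table: Dict[str, str],
               send: Callable[[str], None]) -> None:
    """Generate and transmit a stream, then update it for each received input."""
    # Operations 902 and 904: generate and transmit the initial stream.
    send("frame:idle")
    for input_id in inputs:                      # Operation 906: receive an input.
        action = action_table.get(input_id)      # Compare against the look-up table.
        if action is None:
            continue                             # Assumption: unmatched inputs are ignored.
        # Operations 908 and 910: generate and transmit the updated stream.
        send(f"frame:{action}")


sent: List[str] = []
run_stream(["button_a", "unknown", "trigger"],
           {"button_a": "jump", "trigger": "fire"},
           sent.append)
```

The `send` callable represents transmission to the display device; collecting the transmitted items in a list, as done here, is merely a convenient way to observe the order of operations.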

The various illustrative logical blocks, modules, circuits, and algorithm operations described in connection with the examples disclosed herein may be implemented as electronic hardware, computer software, or combinations of both. To clearly illustrate this interchangeability of hardware and software, various illustrative components, blocks, modules, circuits, and operations have been described above generally in terms of their functionality. Whether such functionality is implemented as hardware or software depends upon the particular application and design constraints imposed on the overall system. Skilled artisans may implement the described functionality in varying ways for each particular application, but such implementation decisions should not be interpreted as causing a departure from the scope of the present disclosure.

The hardware used to implement the various illustrative logics, logical blocks, modules, and circuits described in connection with the examples disclosed herein may be implemented or performed with a general purpose processor, a DSP, an ASIC, an FPGA or other programmable logic device, discrete gate or transistor logic, discrete hardware components, or any combination thereof designed to perform the functions described herein. A general-purpose processor may be a microprocessor, but, in the alternative, the processor may be any conventional processor, controller, microcontroller, or state machine. A processor may also be implemented as a combination of computing devices, e.g., a combination of a DSP and a microprocessor, a plurality of microprocessors, one or more microprocessors in conjunction with a DSP core, or any other such configuration. Alternatively, some operations or methods may be performed by circuitry that is specific to a given function.

In some examples, the functions described may be implemented in hardware, software, firmware, or any combination thereof. If implemented in software, the functions may be stored as one or more instructions or code on a non-transitory computer-readable storage medium or non-transitory processor-readable storage medium. The operations of a method or algorithm disclosed herein may be embodied in a processor-executable software module which may reside on a non-transitory computer-readable or processor-readable storage medium. Non-transitory computer-readable or processor-readable storage media may be any storage media that may be accessed by a computer or a processor. By way of example and not limitation, such non-transitory computer-readable or processor-readable storage media may include RAM, ROM, EEPROM, FLASH memory, CD-ROM or other optical disk storage, magnetic disk storage or other magnetic storage devices, or any other medium that may be used to store desired program code in the form of instructions or data structures and that may be accessed by a computer. Disk and disc, as used herein, include compact disc (CD), laser disc, optical disc, digital versatile disc (DVD), floppy disk, and Blu-ray disc, where disks usually reproduce data magnetically, while discs reproduce data optically with lasers. Combinations of the above are also included within the scope of non-transitory computer-readable and processor-readable media. Additionally, the operations of a method or algorithm may reside as one or any combination or set of codes and/or instructions on a non-transitory processor-readable storage medium and/or computer-readable storage medium, which may be incorporated into a computer program product.

The herein described subject matter sometimes illustrates different components contained within, or connected with, different other components. It is to be understood that such depicted architectures are merely exemplary, and that in fact many other architectures can be implemented which achieve the same functionality. In a conceptual sense, any arrangement of components to achieve the same functionality is effectively “associated” such that the desired functionality is achieved. Hence, any two components herein combined to achieve a particular functionality can be seen as “associated with” each other such that the desired functionality is achieved, irrespective of architectures or intermedial components. Likewise, any two components so associated can also be viewed as being “operably connected,” or “operably coupled,” to each other to achieve the desired functionality, and any two components capable of being so associated can also be viewed as being “operably couplable,” to each other to achieve the desired functionality. Specific examples of operably couplable include but are not limited to physically mateable and/or physically interacting components and/or wirelessly interactable and/or wirelessly interacting components and/or logically interacting and/or logically interactable components.

With respect to the use of substantially any plural and/or singular terms herein, those having skill in the art can translate from the plural to the singular and/or from the singular to the plural as is appropriate to the context and/or application. The various singular/plural permutations may be expressly set forth herein for sake of clarity.

It will be understood by those within the art that, in general, terms used herein, and especially in the appended claims (e.g., bodies of the appended claims) are generally intended as “open” terms (e.g., the term “including” should be interpreted as “including but not limited to,” the term “having” should be interpreted as “having at least,” the term “includes” should be interpreted as “includes but is not limited to,” etc.). It will be further understood by those within the art that if a specific number of an introduced claim recitation is intended, such an intent will be explicitly recited in the claim, and in the absence of such recitation no such intent is present. For example, as an aid to understanding, the following appended claims may contain usage of the introductory phrases “at least one” and “one or more” to introduce claim recitations. However, the use of such phrases should not be construed to imply that the introduction of a claim recitation by the indefinite articles “a” or “an” limits any particular claim containing such introduced claim recitation to inventions containing only one such recitation, even when the same claim includes the introductory phrases “one or more” or “at least one” and indefinite articles such as “a” or “an” (e.g., “a” and/or “an” should typically be interpreted to mean “at least one” or “one or more”); the same holds true for the use of definite articles used to introduce claim recitations. In addition, even if a specific number of an introduced claim recitation is explicitly recited, those skilled in the art will recognize that such recitation should typically be interpreted to mean at least the recited number (e.g., the bare recitation of “two recitations,” without other modifiers, typically means at least two recitations, or two or more recitations). 
Furthermore, in those instances where a convention analogous to “at least one of A, B, and C, etc.” is used, in general such a construction is intended in the sense one having skill in the art would understand the convention (e.g., “a system having at least one of A, B, and C” would include but not be limited to systems that have A alone, B alone, C alone, A and B together, A and C together, B and C together, and/or A, B, and C together, etc.). In those instances, where a convention analogous to “at least one of A, B, or C, etc.” is used, in general such a construction is intended in the sense one having skill in the art would understand the convention (e.g., “a system having at least one of A, B, or C” would include but not be limited to systems that have A alone, B alone, C alone, A and B together, A and C together, B and C together, and/or A, B, and C together, etc.). It will be further understood by those within the art that virtually any disjunctive word and/or phrase presenting two or more alternative terms, whether in the description, claims, or drawings, should be understood to contemplate the possibilities of including one of the terms, either of the terms, or both terms. For example, the phrase “A or B” will be understood to include the possibilities of “A” or “B” or “A and B.” Further, unless otherwise noted, the use of the words “approximate,” “about,” “around,” “substantially,” etc., mean plus or minus ten percent.

The foregoing description of illustrative embodiments has been presented for purposes of illustration and of description. It is not intended to be exhaustive or limiting with respect to the precise form disclosed, and modifications and variations are possible in light of the above teachings or may be acquired from practice of the disclosed embodiments. It is intended that the scope of the invention be defined by the claims appended hereto and their equivalents.

Claims

- A portable gaming device, the portable gaming device comprising: a game console housing including a handle, a trigger, and a screen holder; a position sensor within the game console housing; and a processing circuit within the game console housing, the processing circuit including a processor, a memory, and a first network interface, wherein the processing circuit is in communication with a display device coupled to the screen holder, the display device comprising a display and a second network interface, and the position sensor, and wherein the processing circuit: generates a user interface to be displayed on the display; transmits, via the first network interface, the user interface to the second network interface of the display device; determines a position of the game console housing based on data generated by the position sensor; generates an updated user interface based on the determined position of the game console housing; and transmits, via the first network interface, the updated user interface to the second network interface of the display device, causing the display device to update the display with the updated user interface.

- The portable gaming device of claim 1, wherein the game console housing further includes a barrel, and wherein the screen holder is coupled to the barrel.