U.S. Pat. No. 10,940,387

SYNCHRONIZED AUGMENTED REALITY GAMEPLAY ACROSS MULTIPLE GAMING ENVIRONMENTS

Assignee: Disney Enterprises Inc

Issue Date: March 15, 2019

Abstract

Various embodiments of the invention disclosed herein provide techniques for implementing augmented reality (AR) gameplay across multiple AR gaming environments. A synchronized AR gaming application executing on an AR gaming console detects that a first gaming console that is executing an AR gaming application has exited a first AR gaming environment and entered a second AR gaming environment. The synchronized AR gaming application connects to a communications network associated with the second AR gaming environment. The synchronized AR gaming application detects, via the communications network, a sensor associated with the second AR gaming environment. The synchronized AR gaming application alters execution of the AR gaming application based at least in part on sensor data received via the sensor to enable the AR gaming application to continue executing as the first gaming console exits the first AR gaming environment and enters the second AR gaming environment.

Description

DETAILED DESCRIPTION

In the following description, numerous specific details are set forth to provide a more thorough understanding of the present invention. However, it will be apparent to one of skill in the art that embodiments of the present invention may be practiced without one or more of these specific details.

System Overview

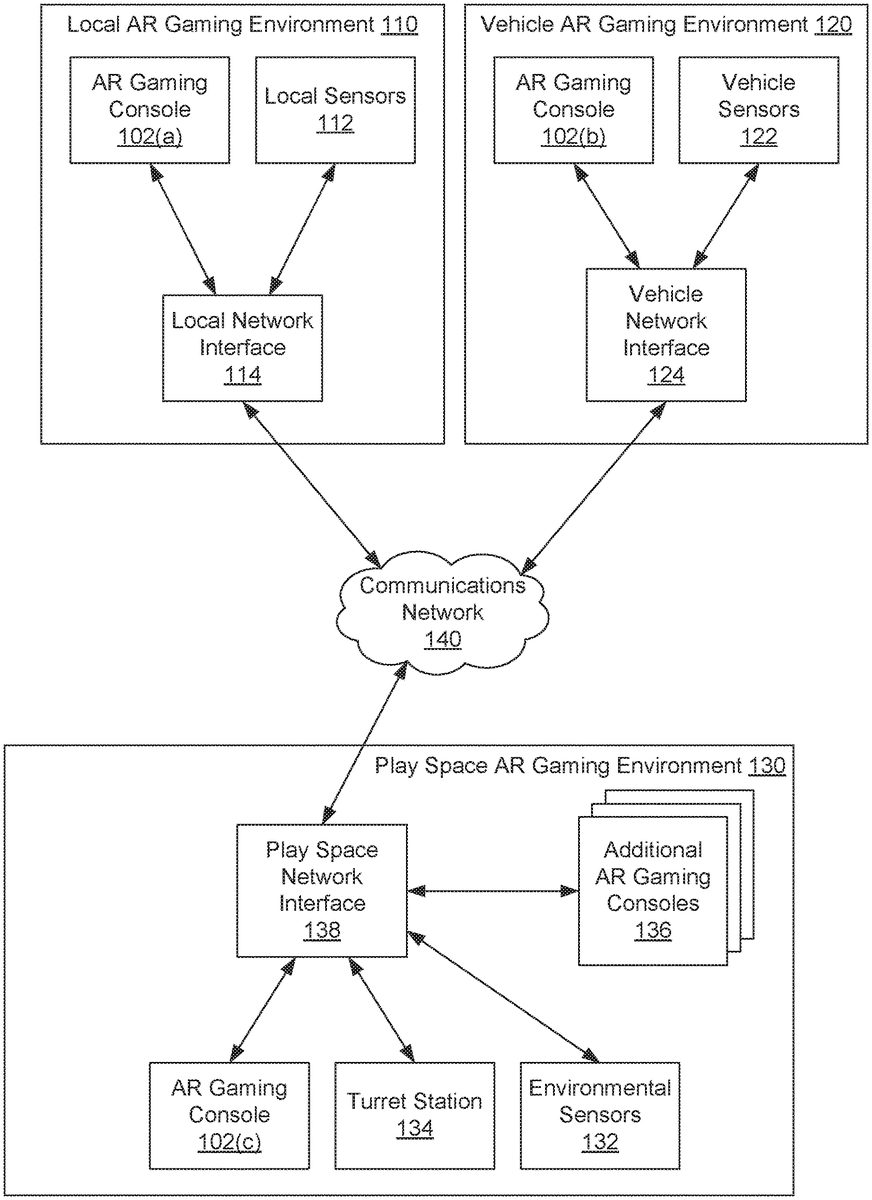

FIG. 1 illustrates a system 100 configured to implement one or more aspects of the present invention. As shown, the system includes, without limitation, a local AR gaming environment 110, a vehicle AR gaming environment 120, and a play space AR gaming environment 130 in communication with each other via a communications network 140. Communications network 140 may be any suitable environment to enable communications among remote or local computer systems and computing devices, including, without limitation, point-to-point communications channels, Bluetooth, WiFi, infrared communications, wireless and wired LANs (Local Area Networks), one or more internet-based WANs (Wide Area Networks), and cellular data networks.

Local AR gaming environment 110 includes, without limitation, an AR gaming console 102(a), local sensors 112, and a local network interface 114. AR gaming console 102(a), local sensors 112, and local network interface 114 communicate with each other over one or more communications channels. The communications channels may be associated with any suitable environment to enable communications among remote or local computer systems and computing devices, including, without limitation, point-to-point communications channels, Bluetooth, WiFi, infrared communications, wireless and wired LANs (Local Area Networks), one or more internet-based WANs (Wide Area Networks), and cellular data networks.

As further discussed herein, AR gaming console 102(a), 102(b), and 102(c) represent the same AR gaming console in different environments. The user may seamlessly transition across different gaming environments by moving the AR gaming console from one gaming environment to another gaming environment. When in local AR gaming environment 110, the AR gaming console is referred to as AR gaming console 102(a). Similarly, when in vehicle AR gaming environment 120, the AR gaming console is referred to as AR gaming console 102(b). Further, when in play space AR gaming environment 130, the AR gaming console is referred to as AR gaming console 102(c). The AR gaming console may be flexibly and seamlessly moved across any of local AR gaming environment 110, vehicle AR gaming environment 120, and play space AR gaming environment 130, in any technically feasible combination.

AR gaming console 102(a) includes, without limitation, a computing device that may be a personal computer, personal digital assistant, mobile phone, mobile device, or any other device suitable for implementing one or more aspects of the present invention. In some embodiments, AR gaming console 102(a) may include an embedded computing system that is integrated into augmented reality goggles, augmented reality glasses, a heads-up display (HUD), a handheld device, or any other technically feasible AR viewing device.

In operation, AR gaming console 102(a) executes a synchronized AR gaming application to control various aspects of gameplay. AR gaming console 102(a) receives control inputs from internal sensors, including tracking data. Additionally or alternatively, AR gaming console 102(a) receives control inputs from local sensors 112, including tracking data. The tracking data includes the position and/or orientation of AR gaming console 102(a). From this tracking data, AR gaming console 102(a) determines the location of the user and the direction that the user is looking. Additionally or alternatively, the tracking data includes the position and/or orientation of one or more walls, ceilings, and other objects detected by AR gaming console 102(a) and/or local sensors 112. Based on the tracking data, AR gaming console 102(a) may alter the execution of one or more aspects of the computer-generated AR game. As one example, AR gaming console 102(a) could include one or more cameras and a mechanism for tracking objects visible in images captured by the camera. AR gaming console 102(a) could detect when the hands of the user are included in the image captured by the camera. AR gaming console 102(a) could then determine the location and orientation of the hands of the user.

Similarly, AR gaming console 102(a) receives control inputs from one or more game controllers (not shown). The control inputs include, without limitation, button presses, trigger activations, and tracking data associated with the game controllers. The tracking data may include the location and/or orientation of the game controllers. The game controllers transmit control inputs to AR gaming console 102(a). In this manner, AR gaming console 102(a) determines which controls of the game controllers are active. Further, AR gaming console 102(a) tracks the location and orientation of the game controllers. Each game controller may be in any technically feasible configuration, including, without limitation, a button panel, a joystick, a wand, a handheld weapon, a steering mechanism, a data glove, or a controller sleeve worn over the user's arm.

Local sensors 112 transmit tracking data and other information to AR gaming console 102(a) via local network interface 114. Local sensors 112 supplement the tracking data that is detected directly via sensors integrated into AR gaming console 102(a). Local sensors 112 may include, without limitation, simultaneous localization and mapping (SLAM) tracking systems, beacon tracking systems, security cameras, lighthouse tracking and other laser-based tracking systems, and smart home sensing systems, in any technically feasible combination.

Local network interface 114 provides an interface between communications network 140 and the local network over which AR gaming console 102(a) and local sensors 112 communicate. Additionally or alternatively, any one or more of AR gaming console 102(a) and local sensors 112 may communicate directly with communications network 140.

Vehicle AR gaming environment 120 includes, without limitation, an AR gaming console 102(b), vehicle sensors 122, and a vehicle network interface 124. AR gaming console 102(b), vehicle sensors 122, and vehicle network interface 124 communicate with each other over one or more communications channels. The communications channels may be associated with any suitable environment to enable communications among remote or local computer systems and computing devices, including, without limitation, point-to-point communications channels, Bluetooth, WiFi, infrared communications, wireless and wired LANs (Local Area Networks), one or more internet-based WANs (Wide Area Networks), and cellular data networks.

AR gaming console 102(b) functions substantially the same as AR gaming console 102(a) except as further described below.

Vehicle sensors 122 transmit tracking data and other information to AR gaming console 102(b) via vehicle network interface 124. Vehicle sensors 122 supplement the tracking data that is detected directly via sensors integrated into AR gaming console 102(b). Vehicle sensors 122 may include, without limitation, one or more cameras, vehicle tracking sensors, inertial sensors, seat sensors, or real-time sensors for an autonomous driving system, in any technically feasible combination. Based on data from sensors integrated into AR gaming console 102(b) along with data from vehicle sensors 122, AR gaming console 102(b) computes the real-time direction and location of the vehicle, rate of travel, acceleration, braking, and linear and torque forces exerted on the vehicle. In addition, based on data from sensors integrated into AR gaming console 102(b) along with data from vehicle sensors 122, AR gaming console 102(b) computes the location of the user and the direction that the user is looking. In the case of a moving vehicle, AR gaming console 102(b) analyzes vehicle AR gaming environment 120 to select a suitable stable reference point within the moving vehicle, and not a reference point that is external to the moving vehicle. By selecting a reference point within the moving vehicle, AR gaming console 102(b) may accurately identify the location and orientation of the user, game controllers, and other objects within the moving vehicle.
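By way of non-limiting illustration only, the effect of choosing a reference point inside the moving vehicle can be sketched as a change of coordinate frame. The sketch below is not taken from the disclosure: the `Pose2D` type, the `to_vehicle_frame` function, and the simplification to a planar (two-dimensional) pose are all assumptions made for the example. It shows that a device that stays put relative to the vehicle keeps the same vehicle-relative pose even as the vehicle travels.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class Pose2D:
    """Hypothetical planar pose: position in meters, heading in radians."""
    x: float
    y: float
    heading: float


def to_vehicle_frame(vehicle_in_world: Pose2D, device_in_world: Pose2D) -> Pose2D:
    """Express a device pose relative to a reference point fixed to the vehicle."""
    dx = device_in_world.x - vehicle_in_world.x
    dy = device_in_world.y - vehicle_in_world.y
    cos_h = math.cos(vehicle_in_world.heading)
    sin_h = math.sin(vehicle_in_world.heading)
    # Rotate the world-frame offset by the inverse of the vehicle heading.
    local_x = cos_h * dx + sin_h * dy
    local_y = -sin_h * dx + cos_h * dy
    # Wrap the relative heading into the range [-pi, pi).
    relative = device_in_world.heading - vehicle_in_world.heading
    relative = (relative + math.pi) % (2.0 * math.pi) - math.pi
    return Pose2D(local_x, local_y, relative)


# The vehicle drives 10 m along the x axis; the device rides along with it.
before = to_vehicle_frame(Pose2D(10.0, 5.0, math.pi / 2), Pose2D(10.0, 6.0, math.pi / 2))
after = to_vehicle_frame(Pose2D(20.0, 5.0, math.pi / 2), Pose2D(20.0, 6.0, math.pi / 2))
```

In this example `before` and `after` describe the same vehicle-relative pose, whereas the world-frame position of the device changed by 10 m. A full implementation would use three-dimensional poses and real sensor data.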

Vehicle network interface 124 provides an interface between communications network 140 and the local network over which AR gaming console 102(b) and vehicle sensors 122 communicate. Additionally or alternatively, any one or more of AR gaming console 102(b) and vehicle sensors 122 may communicate directly with communications network 140.

Play space AR gaming environment 130 includes, without limitation, an AR gaming console 102(c), environmental sensors 132, a turret station 134, and a play space network interface 138. AR gaming console 102(c), environmental sensors 132, turret station 134, and play space network interface 138 communicate with each other over one or more communications channels. The communications channels may be associated with any suitable environment to enable communications among remote or local computer systems and computing devices, including, without limitation, point-to-point communications channels, Bluetooth, WiFi, infrared communications, wireless and wired LANs (Local Area Networks), one or more internet-based WANs (Wide Area Networks), and cellular data networks.

AR gaming console 102(c) functions substantially the same as AR gaming console 102(a) and AR gaming console 102(b), except as further described below.

Environmental sensors 132 transmit tracking data and other information to AR gaming console 102(c) via play space network interface 138. Environmental sensors 132 supplement the tracking data that is detected directly via sensors integrated into AR gaming console 102(c). Environmental sensors 132 may include, without limitation, security cameras, image recognition systems, global positioning system (GPS) tracking systems, landmark positioning systems, user and skeleton-based tracking systems, geo-fencing systems, and geotagging systems, in any technically feasible combination.

Certain play space environments 130 may include a turret station 134 in addition to environmental sensors 132, where a turret station 134 is a structure, such as a tower or outbuilding, that is fitted with one or more sensors. The sensors included in the turret station 134 transmit tracking data and other information to AR gaming console 102(c) via play space network interface 138. The sensors included in the turret station 134 supplement the tracking data that is detected directly via sensors integrated into AR gaming console 102(c) and the tracking data from environmental sensors 132. The sensors included in the turret station 134 may include, without limitation, security cameras, image recognition systems, GPS tracking systems, landmark positioning systems, user and skeleton-based tracking systems, geo-fencing systems, and geotagging systems, in any technically feasible combination.

Certain play space environments 130 are equipped to facilitate multiuser computer-based AR games. In such play space environments 130, AR gaming console 102(c) interacts with one or more additional AR gaming consoles 136 operated by other users. The additional AR gaming consoles 136 function substantially the same as AR gaming console 102(c). As such, each of the additional AR gaming consoles 136 executes additional instances of the computer-based AR game that is executed by AR gaming console 102(c). Further, the additional AR gaming consoles 136 may receive tracking data and other information from sensors integrated into the additional AR gaming consoles 136, environmental sensors 132, and sensors included in the turret station 134, in any technically feasible combination.

Play space network interface 138 provides an interface between communications network 140 and the local network over which AR gaming console 102(c), environmental sensors 132, turret station 134, and additional AR gaming consoles 136 communicate. Additionally or alternatively, any one or more of AR gaming console 102(c), environmental sensors 132, turret station 134, and additional AR gaming consoles 136 may communicate directly with communications network 140.

It will be appreciated that the system shown herein is illustrative and that variations and modifications are possible. In one example, the system 100 of FIG. 1 is illustrated with a certain number and configuration of AR gaming consoles 102, local sensors 112, vehicle sensors 122, environmental sensors 132, turret stations 134, additional AR gaming consoles 136, and network interfaces. However, the system 100 could include any technically feasible number and configuration of AR gaming consoles 102, local sensors 112, vehicle sensors 122, environmental sensors 132, turret stations 134, additional AR gaming consoles 136, and network interfaces within the scope of the present disclosure.

In another example, the techniques are disclosed as being executed on an AR gaming console 102 that is integrated into an AR headset. However, the disclosed techniques could be performed by the AR gaming console 102 of FIG. 1, an AR gaming console embedded into a standalone computing device, a computer integrated into a vehicle, or a virtual AR gaming console executing on a server connected to communications network 140, in any technically feasible combination. Further, any one or more of the disclosed techniques may be performed by,

In yet another example, the techniques are disclosed herein in the context of computer gaming AR environments. However, the disclosed techniques could be employed in any technically feasible environment within the scope of the present disclosure. The disclosed techniques could be employed in teleconferencing applications where individual or multi-person groups, such as a group convened in a corporate conference room, communicate and/or collaboratively work with each other. Additionally or alternatively, the disclosed techniques could be employed in scenarios where a user engages in an interactive meeting with a physician or other professional. Additionally or alternatively, the disclosed techniques could be employed in collaborative work scenarios where a single user, multiple users, and/or groups of users review and edit various documents that appear on a display monitor 106 and/or in 3D space as AR objects rendered and displayed by AR headset system 104. Any or all of these embodiments fall within the scope of the present disclosure, in any technically feasible combination. More generally, one skilled in the art would recognize that these examples are non-limiting and that any technically feasible implementation falls within the scope of the invention.

Techniques for transitioning across multiple gaming environments as part of an immersive computer gaming experience are now described in greater detail below in conjunction with FIGS. 2-3B.

Augmented Reality Gameplay Across Multiple Gaming Environments

FIG. 2 is a more detailed illustration of the AR gaming console 102 of FIG. 1, according to various embodiments of the present invention. As shown, AR headset system 104 includes, without limitation, a processor 202, storage 204, an input/output (I/O) devices interface 206, a network interface 208, an interconnect 210, a system memory 212, a vision capture device 214, a display 216, and sensors 218.

The processor 202 retrieves and executes programming instructions stored in the system memory 212. Similarly, the processor 202 stores and retrieves application data residing in the system memory 212. The interconnect 210 facilitates transmission, such as of programming instructions and application data, between the processor 202, input/output (I/O) devices interface 206, storage 204, network interface 208, system memory 212, vision capture device 214, display 216, and sensors 218. The I/O devices interface 206 is configured to receive input data from user I/O devices 222. Examples of user I/O devices 222 may include one or more buttons, a keyboard, and a mouse or other pointing device. The I/O devices interface 206 may also include an audio output unit configured to generate an electrical audio output signal, and user I/O devices 222 may further include one or more speakers configured to generate an acoustic output in response to the electrical audio output signal. The speakers may be integrated into a monaural, stereo, or multi-speaker headset system. The I/O devices interface 206 may also include an audio input unit that includes one or more microphones. In some embodiments, the audio input unit may be employed for performing noise cancellation, where one or more noise cancellation signals are transmitted to one or more speakers included in the audio output unit.

The vision capture device 214 includes one or more cameras to capture images from the physical environment for analysis, processing, and display. In operation, the vision capture device 214 captures and transmits vision information to any one or more other elements included in the AR headset system 104. The one or more cameras may include infrared cameras, monochrome cameras, color cameras, time-of-flight cameras, stereo cameras, and multi-camera imagers, in any technically feasible combination. In some embodiments, the vision capture device 214 provides support for various vision-related functions, including, without limitation, image recognition, visual inertial odometry, and simultaneous localization and mapping (SLAM) tracking.

The display 216 generally represents any technically feasible means for generating an image for display. The display 216 includes one or more display devices for displaying AR objects and other AR content. The display may be embedded into a head-mounted display (HMD) system that is integrated into the AR headset system 104. The display 216 reflects, overlays, and/or generates an image including one or more AR objects into or onto the physical environment via a liquid crystal display (LCD), light emitting diode (LED) display, organic light emitting diode (OLED) display, digital light processing (DLP) display, waveguide-based display, projection display, reflective display, or any other technically feasible display technology. The display 216 may employ any technically feasible approach to integrate AR objects into the physical environment, including, without limitation, pass-thru, waveguide, and screen-mirror optics approaches. The display device may be a TV that includes a broadcast or cable tuner for receiving digital or analog television signals.

The sensors 218 include one or more devices to acquire location and orientation data associated with the AR headset system 104. The sensors 218 may employ any technically feasible approach to acquire location and orientation data, including, without limitation, GPS tracking, radio frequency identification (RFID) tracking, gravity-sensing approaches, and magnetic-field-sensing approaches. In that regard, the sensors 218 may include any one or more accelerometers, gyroscopes, magnetometers, and/or any other technically feasible devices for acquiring location and orientation data. The location and orientation data acquired by sensors 218 may supplement, or serve as an alternative to, camera orientation data, e.g., yaw, pitch, and roll data, generated by the vision capture device 214.

Processor 202 is included to be representative of a single CPU, multiple CPUs, a single CPU having multiple processing cores, a graphics processing unit (GPU), a digital signal processor (DSP), and the like, in any technically feasible combination. The system memory 212 is generally included to be representative of a random access memory. The storage 204 may be a disk drive storage device. Although shown as a single unit, the storage 204 may be a combination of fixed and/or removable storage devices, such as fixed disc drives, floppy disc drives, tape drives, removable memory cards, optical storage, network attached storage (NAS), or a storage area network (SAN). Processor 202 communicates with other computing devices and systems via network interface 208, where network interface 208 is configured to transmit and receive data via a communications network, such as communications network 140. Network interface 208 may support various communications channels, including, without limitation, point-to-point communications channels, Bluetooth, WiFi, infrared communications, wireless and wired LANs (Local Area Networks), one or more internet-based WANs (Wide Area Networks), and cellular data networks, in any technically feasible combination.

The system memory 212 includes, without limitation, a synchronized AR gaming application 232 and a data store 242. Synchronized AR gaming application 232, when executed by the processor 202, performs one or more operations associated with AR gaming console 102 of FIG. 1, as further described herein. Data store 242 provides memory storage for various items of gaming content, as further described herein. Synchronized AR gaming application 232 stores data in and retrieves data from data store 242, as further described herein.

In operation, synchronized AR gaming application 232 controls various aspects of gameplay. Synchronized AR gaming application 232 receives control inputs from internal sensors, including tracking data. Additionally or alternatively, synchronized AR gaming application 232 receives control inputs, including tracking data, from one or more sensors external to AR gaming console 102. The tracking data includes the position and/or orientation of the AR gaming console 102. From this tracking data, synchronized AR gaming application 232 determines the location of the user and the direction that the user is looking. Additionally or alternatively, the tracking data includes the position and/or orientation of one or more users and other objects detected by synchronized AR gaming application 232 and/or external sensors. Based on the tracking data, synchronized AR gaming application 232 may alter the execution of one or more aspects of the computer-generated AR game.

Similarly, synchronized AR gaming application 232 receives control inputs from one or more game controllers (not shown). The control inputs include, without limitation, button presses, trigger activations, and tracking data associated with the game controllers. The tracking data may include the location and/or orientation of the game controllers. The game controllers transmit control inputs to synchronized AR gaming application 232 via AR gaming console 102. In this manner, synchronized AR gaming application 232 determines which controls of the game controllers are active. Further, synchronized AR gaming application 232 tracks the location and orientation of the game controllers. Each game controller may be in any technically feasible configuration, including, without limitation, a button panel, a joystick, a wand, a handheld weapon, a steering mechanism, a data glove, or a controller sleeve worn over the user's arm.

In addition, synchronized AR gaming application 232 detects when AR gaming console 102 is moved from one gaming environment to a different gaming environment. In response, synchronized AR gaming application 232 transitions across the gaming environments. More specifically, synchronized AR gaming application 232 automatically disconnects from the current gaming environment and connects to the new gaming environment without interrupting gameplay of the computer-based AR game. An example case of transitioning across multiple gaming environments is now described.

A user in a local AR gaming environment 110, such as the user's home, begins executing synchronized AR gaming application 232 in order to play a computer-based AR game. Synchronized AR gaming application 232 identifies one or more wireless or wired networks that are active within local AR gaming environment 110. Synchronized AR gaming application 232 identifies a network that AR gaming console 102 is authorized to access. In some embodiments, synchronized AR gaming application 232 may detect a network signal from a network that AR gaming console 102 is authorized to access. Synchronized AR gaming application 232 connects to the network and identifies one or more local sensors 112 that are within local AR gaming environment 110. Synchronized AR gaming application 232 receives sensor data from sensors that are integrated into AR gaming console 102 as well as sensor data from local sensors 112. Via the sensor data, synchronized AR gaming application 232 tracks the location and orientation of the user and other objects within local AR gaming environment 110. Based on the tracking data, synchronized AR gaming application 232 may alter the execution of one or more aspects of the computer-generated AR game.
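By way of non-limiting illustration only, the step of identifying a network that the AR gaming console is authorized to access could be sketched as a filter over the networks currently visible. The names `VisibleNetwork` and `pick_authorized_network` are hypothetical, and the tie-breaking rule of preferring the strongest signal is an assumption of this sketch rather than something the description specifies.

```python
from dataclasses import dataclass
from typing import Iterable, Optional, Set


@dataclass(frozen=True)
class VisibleNetwork:
    """Hypothetical record for a network detected in the current environment."""
    network_id: str
    rssi_dbm: int


def pick_authorized_network(
    visible: Iterable[VisibleNetwork], authorized_ids: Set[str]
) -> Optional[VisibleNetwork]:
    """Return an authorized network from those visible, or None if there is none.

    Assumption: when several authorized networks are visible, prefer the one
    with the strongest signal.
    """
    candidates = [n for n in visible if n.network_id in authorized_ids]
    return max(candidates, key=lambda n: n.rssi_dbm, default=None)


visible_at_home = [
    VisibleNetwork("home-lan", -60),
    VisibleNetwork("neighbor-lan", -40),
    VisibleNetwork("home-guest", -70),
]
chosen = pick_authorized_network(visible_at_home, {"home-lan", "home-guest"})
```

Here the strongest network overall is not authorized, so the sketch selects `home-lan`. Once connected, the application would proceed to discover the sensors reachable over that network.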

In some embodiments, synchronized AR gaming application 232 may detect a computing device within local AR gaming environment 110 that is in communication with a local communications network and is capable of performing one or more of the techniques performed by synchronized AR gaming application 232. If such a computing device is detected, synchronized AR gaming application 232 may offload all or a portion of the tasks of the computer-based AR game to the computing device. In this manner, synchronized AR gaming application 232 may reduce the power consumed by AR gaming console 102 and improve performance of the computer-based AR game. Subsequently, synchronized AR gaming application 232 may detect that AR gaming console 102 is about to exit local AR gaming environment 110. In response, synchronized AR gaming application 232 may transfer all or a portion of the tasks previously offloaded to the computing device back to AR gaming console 102.
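By way of non-limiting illustration only, the offloading of tasks to a nearby computing device, and their return to the AR gaming console before the console exits the environment, could be sketched as simple bookkeeping over task placement. The `TaskPlacement` type, the function names, and the task names are all hypothetical; the description does not state how tasks are partitioned or which tasks are eligible.

```python
from dataclasses import dataclass, field
from typing import List, Set


@dataclass
class TaskPlacement:
    """Hypothetical record of where each game task currently runs."""
    on_console: List[str]
    on_helper: List[str] = field(default_factory=list)


def offload_tasks(placement: TaskPlacement, offloadable: Set[str]) -> TaskPlacement:
    """Move every console task that the helper device can run onto the helper."""
    stay = [t for t in placement.on_console if t not in offloadable]
    move = [t for t in placement.on_console if t in offloadable]
    return TaskPlacement(on_console=stay, on_helper=placement.on_helper + move)


def reclaim_tasks(placement: TaskPlacement) -> TaskPlacement:
    """Bring all offloaded tasks back to the console, e.g. just before exit."""
    return TaskPlacement(on_console=placement.on_console + placement.on_helper)


start = TaskPlacement(on_console=["render", "physics", "input"])
offloaded = offload_tasks(start, {"render", "physics"})
restored = reclaim_tasks(offloaded)
```

In this sketch `offloaded` leaves only `input` on the console, and `restored` returns every task to the console so that gameplay can continue without the helper device.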

The user then decides to leave home and travel by vehicle to a play space. As the user leaves home, synchronized AR gaming application 232 detects that AR gaming console 102 is no longer within local AR gaming environment 110. In so doing, synchronized AR gaming application 232 may detect that AR gaming console 102 has disconnected from the network within local AR gaming environment 110. Additionally or alternatively, synchronized AR gaming application 232 may detect that a received signal strength indicator (RSSI) level of a signal received from one or more local sensors 112 or from local network interface 114 has fallen below a threshold level. Additionally or alternatively, synchronized AR gaming application 232 may detect, via one or more local sensors 112, that AR gaming console 102 is no longer in a bounded area of a residence associated with the local AR gaming environment 110. Upon detecting that AR gaming console 102 is no longer within local AR gaming environment 110, synchronized AR gaming application 232 continues gameplay, but without relying on sensor data from local sensors 112. Instead, synchronized AR gaming application 232 continues gameplay based only on sensor data from the sensors integrated into AR gaming console 102.
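By way of non-limiting illustration only, the three exit conditions described above (network disconnection, RSSI below a threshold, and leaving a bounded area) and the resulting fallback to integrated sensors could be sketched as follows. The `EnvironmentStatus` type, the function names, and the default threshold of -85 dBm are assumptions of this sketch; the description does not give a threshold value.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class EnvironmentStatus:
    """Hypothetical snapshot of the console's link to its current environment."""
    network_connected: bool
    rssi_dbm: Optional[float]            # None when no signal reading is available
    inside_bounded_area: Optional[bool]  # None when no external sensor reports it


def has_exited(status: EnvironmentStatus, rssi_threshold_dbm: float = -85.0) -> bool:
    """Return True if any one of the described exit conditions holds."""
    if not status.network_connected:
        return True
    if status.rssi_dbm is not None and status.rssi_dbm < rssi_threshold_dbm:
        return True
    if status.inside_bounded_area is False:
        return True
    return False


def active_sensor_sources(status: EnvironmentStatus) -> List[str]:
    """After an exit, rely only on the sensors integrated into the console."""
    if has_exited(status):
        return ["integrated"]
    return ["integrated", "environment"]


at_home = EnvironmentStatus(network_connected=True, rssi_dbm=-60.0, inside_bounded_area=True)
walking_away = EnvironmentStatus(network_connected=True, rssi_dbm=-92.0, inside_bounded_area=True)
```

With these inputs, `active_sensor_sources(at_home)` includes the environment sensors, while `active_sensor_sources(walking_away)` falls back to the integrated sensors alone, so gameplay continues uninterrupted.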

Subsequently, the user enters the vehicle. Synchronized AR gaming application 232 identifies one or more wireless or wired networks that are active within vehicle AR gaming environment 120. Synchronized AR gaming application 232 identifies a network that AR gaming console 102 is authorized to access. In some embodiments, synchronized AR gaming application 232 may detect a network signal from a network that AR gaming console 102 is authorized to access. Synchronized AR gaming application 232 connects to the network and identifies one or more vehicle sensors 122 that are within vehicle AR gaming environment 120. Synchronized AR gaming application 232 receives sensor data from sensors that are integrated into AR gaming console 102 as well as sensor data from vehicle sensors 122. Via the sensor data, synchronized AR gaming application 232 tracks the location and orientation of the user and other objects within vehicle AR gaming environment 120. Based on the tracking data, synchronized AR gaming application 232 may alter the execution of one or more aspects of the computer-generated AR game. Further, synchronized AR gaming application 232 may alter the execution of one or more aspects of the computer-generated AR game based on the operation of AR gaming console 102 within vehicle AR gaming environment 120. For example, synchronized AR gaming application 232 could generate a simulated spaceship via the AR headset to provide the experience of flying the spaceship within the computer-generated AR game. Further, synchronized AR gaming application 232 could enable the user to fire weapons from the spaceship towards other users who are playing the game as pedestrians or as occupants of one or more other vehicles.

In some embodiments, synchronized AR gaming application 232 may identify a computing device within vehicle AR gaming environment 120, such as the vehicle's computer, that is capable of performing one or more of the techniques performed by synchronized AR gaming application 232. If such a computing device is identified, synchronized AR gaming application 232 may offload all or a portion of the tasks of the computer-based AR game to the computing device. In this manner, synchronized AR gaming application 232 may reduce the power consumed by AR gaming console 102 and improve performance of the computer-based AR game. Subsequently, synchronized AR gaming application 232 may detect that AR gaming console 102 is about to exit vehicle AR gaming environment 120. In response, synchronized AR gaming application 232 may transfer all or a portion of the tasks previously offloaded to the computing device back to AR gaming console 102.
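The offload-and-return behavior described above can be sketched as simple bookkeeping of where each task currently runs. The class `OffloadManager`, its method names, and the task names used in the usage note are hypothetical; the description does not specify which tasks are offloaded or how capability is determined.

```python
from dataclasses import dataclass, field
from typing import Dict, Set

CONSOLE = "console"
HELPER = "helper"  # hypothetical label for the computing device found in the environment


@dataclass
class OffloadManager:
    """Tracks whether each game task runs on the console or on the helper device."""
    placement: Dict[str, str] = field(default_factory=dict)

    def add_task(self, name: str) -> None:
        # Every task starts on the console.
        self.placement[name] = CONSOLE

    def offload(self, helper_capabilities: Set[str]) -> Set[str]:
        """Move every console task the helper can perform; return the moved tasks."""
        moved = {task for task, where in self.placement.items()
                 if where == CONSOLE and task in helper_capabilities}
        for task in moved:
            self.placement[task] = HELPER
        return moved

    def reclaim(self) -> Set[str]:
        """Bring all offloaded tasks back before the console exits the environment."""
        moved = {task for task, where in self.placement.items() if where == HELPER}
        for task in moved:
            self.placement[task] = CONSOLE
        return moved
```

For example, a console running hypothetical tasks `render`, `slam`, and `audio` next to a helper that supports `render` and `slam` would offload those two, keep `audio` local, and call `reclaim()` once an imminent exit is detected.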

The user then arrives at the play space and leaves the vehicle. As the user leaves the vehicle, synchronized AR gaming application 232 detects that AR gaming console 102 is no longer within vehicle AR gaming environment 120. In so doing, synchronized AR gaming application 232 may detect that AR gaming console 102 has disconnected from the network within vehicle AR gaming environment 120. Additionally or alternatively, synchronized AR gaming application 232 may detect that an RSSI level of a signal received from one or more vehicle sensors 122 or from vehicle network interface 124 has fallen below a threshold level. Additionally or alternatively, synchronized AR gaming application 232 may detect, via one or more vehicle sensors 122, that AR gaming console 102 is no longer in the passenger compartment and/or is outside of the vehicle. Upon detecting that AR gaming console 102 is no longer within vehicle AR gaming environment 120, synchronized AR gaming application 232 continues gameplay, but without relying on sensor data from vehicle sensors 122. Instead, synchronized AR gaming application 232 continues gameplay based only on sensor data from the sensors integrated into AR gaming console 102.

Subsequently, the user enters the play space. Synchronized AR gaming application 232 identifies one or more wireless or wired networks that are active within play space AR gaming environment 130. Synchronized AR gaming application 232 identifies a network that AR gaming console 102 is authorized to access. In some embodiments, synchronized AR gaming application 232 may detect a network signal from a network that AR gaming console 102 is authorized to access. Synchronized AR gaming application 232 connects to the network and identifies one or more environmental sensors 132 that are within play space AR gaming environment 130. Additionally or alternatively, synchronized AR gaming application 232 identifies one or more sensors mounted on a turret station 134. Synchronized AR gaming application 232 receives sensor data from sensors that are integrated into AR gaming console 102 as well as sensor data from one or both of environmental sensors 132 and sensors mounted on turret station 134. Via the sensor data, synchronized AR gaming application 232 tracks the location and orientation of the user and other objects within play space AR gaming environment 130. Based on the tracking data, synchronized AR gaming application 232 may alter the execution of one or more aspects of the computer-generated AR game. Further, synchronized AR gaming application 232 detects additional AR gaming consoles 136 within play space AR gaming environment 130. In response, synchronized AR gaming application 232 interacts with the additional AR gaming consoles 136 to coordinate gameplay with other users within play space AR gaming environment 130.

In some embodiments, synchronized AR gaming application 232 may detect a computing device within play space AR gaming environment 130 that is in communication with a local communications network and is capable of performing one or more of the techniques performed by synchronized AR gaming application 232. If such a computing device is detected, synchronized AR gaming application 232 may offload all or a portion of the tasks of the computer-based AR game to the computing device. In this manner, synchronized AR gaming application 232 may reduce the power consumed by AR gaming console 102 and improve performance of the computer-based AR game. Subsequently, synchronized AR gaming application 232 may detect that AR gaming console 102 is about to exit play space AR gaming environment 130. In response, synchronized AR gaming application 232 may transfer all or a portion of the tasks previously offloaded to the computing device back to AR gaming console 102.

Subsequently, the user leaves the play space. As the user leaves the play space, synchronized AR gaming application 232 detects that AR gaming console 102 is no longer within play space AR gaming environment 130. In so doing, synchronized AR gaming application 232 may detect that AR gaming console 102 has disconnected from the network within play space AR gaming environment 130. Additionally or alternatively, synchronized AR gaming application 232 may detect that a received signal strength indicator (RSSI) level of a signal received from one or more environmental sensors 132, from one or more sensors mounted on turret station 134, or from play space network interface 138 has fallen below a threshold level. Additionally or alternatively, synchronized AR gaming application 232 may detect that AR gaming console 102 has exited play space AR gaming environment 130 based on geotagging and/or geo-fencing data. Additionally or alternatively, synchronized AR gaming application 232 may detect, via one or more environmental sensors 132, that AR gaming console 102 is no longer in a defined area within the open play space associated with the play space AR gaming environment 130. Upon detecting that AR gaming console 102 is no longer within play space AR gaming environment 130, synchronized AR gaming application 232 continues gameplay, but without relying on sensor data from environmental sensors 132 or from the sensors mounted on turret station 134. Instead, synchronized AR gaming application 232 continues gameplay based only on sensor data from the sensors integrated into AR gaming console 102.

In this manner, synchronized AR gaming application 232 generates seamless gameplay of a computer-generated AR game as the user transitions across multiple gaming environments.

In some embodiments, each AR gaming console for a set of users may possess a common game network identifier (ID) and a unique instance ID for multiuser peer-to-peer gameplay. In such embodiments, users with an AR gaming console that possesses the correct game network ID have permission to join the corresponding computer-generated AR game. Upon joining, the AR gaming console 102 may publish the unique instance ID. As a result, the other AR gaming consoles may identify that the corresponding user has joined the computer-generated AR game. Further, AR gaming consoles 102 may be geographically restricted. In this manner, an AR gaming console 102 that possesses the correct game network ID may nevertheless be prohibited from joining the computer-generated AR game unless the AR gaming console 102 is within a certain distance from a specified geographical location. More specifically, AR gaming console 102 may detect that a second AR gaming console is executing the AR gaming application and is residing within a different AR gaming environment. AR gaming console 102 may determine that the second gaming console is within a threshold distance from the first gaming console. AR gaming console 102 may then alter execution of the AR gaming application to enable an interaction between the AR gaming consoles.
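The join and interaction rules above combine an identifier match with a distance test. The sketch below assumes that console positions are available as latitude and longitude and uses the haversine great-circle formula for distance; neither the coordinate representation nor the formula is specified in the description, and `Console`, `may_join`, and `can_interact` are hypothetical names.

```python
import math
from dataclasses import dataclass

EARTH_RADIUS_M = 6_371_000.0  # mean Earth radius in meters


def haversine_m(lat1: float, lon1: float, lat2: float, lon2: float) -> float:
    """Great-circle distance in meters between two latitude/longitude points."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = phi2 - phi1
    dlmb = math.radians(lon2 - lon1)
    a = math.sin(dphi / 2) ** 2 + math.cos(phi1) * math.cos(phi2) * math.sin(dlmb / 2) ** 2
    return 2 * EARTH_RADIUS_M * math.asin(math.sqrt(a))


@dataclass(frozen=True)
class Console:
    game_network_id: str  # shared by all consoles allowed into the same game
    instance_id: str      # unique per console, published upon joining
    lat: float
    lon: float


def may_join(console: Console, game_network_id: str,
             site_lat: float, site_lon: float, max_distance_m: float) -> bool:
    """A matching game network ID is necessary but not sufficient: the console
    must also be within max_distance_m of the specified location."""
    if console.game_network_id != game_network_id:
        return False
    return haversine_m(console.lat, console.lon, site_lat, site_lon) <= max_distance_m


def can_interact(first: Console, second: Console, threshold_m: float) -> bool:
    """Two distinct consoles in the same game may interact when within the threshold."""
    return (first.game_network_id == second.game_network_id
            and first.instance_id != second.instance_id
            and haversine_m(first.lat, first.lon, second.lat, second.lon) <= threshold_m)
```

Note that `can_interact` does not check which AR gaming environment each console is in, which matches the description: a console in a vehicle and a console in a play space may interact as long as they are close enough.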

AR gaming consoles 102 may be connected to a network within a corresponding play space AR gaming environment 130. Additionally or alternatively, AR gaming consoles 102 may register onto the network from a remotely located network, such as a network within local AR gaming environment 110 or vehicle AR gaming environment 120. Additionally or alternatively, AR gaming consoles 102 that are not currently connected to a wireless network in a particular gaming environment may register onto the network via a cellular data network.

In some embodiments, a computer-based AR game may be controlled from one or more central servers connected to communications network 140. Additionally or alternatively, the computer-based AR game may be controlled via multiple peer-to-peer AR gaming consoles 102 that connect to generate a mesh network. In some embodiments, the computer-based AR game may be controlled via a hybrid system of one or more central servers connected to communications network 140 as well as a mesh network generated by multiple peer-to-peer AR gaming consoles 102. In such embodiments, AR gaming consoles 102 may execute the instances of the computer-based AR game while the one or more central servers provide common services for the peer-to-peer AR gaming consoles 102. These central services may include, without limitation, voice commands, geo-fencing, geotagging, SLAM tracking, and control of autonomous computer-generated players.

In some embodiments, an AR gaming console 102 may employ one or more external cameras for remote viewing. In such embodiments, the AR gaming console 102 may display images from a remote camera on all or part of the display 216 in the AR gaming console 102. Additionally or alternatively, the AR gaming console 102 may generate sound effects, such as footsteps, or visual effects, such as shadows or warning messages, when an image from an external camera indicates that other users are nearby but are not currently in the field of view of the AR gaming console 102.
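The "nearby but not in the field of view" condition above can be expressed geometrically once positions are known. The sketch below assumes that the external camera has already produced 2D positions for the user and the other player, which the description does not detail; the function names, the 90-degree field of view, and the 10-meter radius are all hypothetical.

```python
import math
from typing import Tuple

Point = Tuple[float, float]


def bearing_deg(origin: Point, target: Point) -> float:
    """Direction from origin to target in degrees, measured from the +x axis."""
    return math.degrees(math.atan2(target[1] - origin[1], target[0] - origin[0])) % 360.0


def in_field_of_view(heading_deg: float, target_bearing_deg: float, fov_deg: float) -> bool:
    """True if the target direction lies within half the field of view of the heading."""
    # Wrap the angular difference into [-180, 180) before comparing.
    diff = (target_bearing_deg - heading_deg + 180.0) % 360.0 - 180.0
    return abs(diff) <= fov_deg / 2.0


def needs_cue(user: Point, heading_deg: float, other: Point,
              fov_deg: float = 90.0, nearby_m: float = 10.0) -> bool:
    """Trigger a cue (footsteps, shadow, warning) for a nearby, unseen player."""
    if math.dist(user, other) > nearby_m:
        return False
    return not in_field_of_view(heading_deg, bearing_deg(user, other), fov_deg)
```

A user at the origin facing along the +x axis would receive a cue for a player three meters directly behind, but not for a player three meters directly ahead or thirty meters away.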

FIGS. 3A-3B set forth a flow diagram of method steps for enabling augmented reality gameplay across multiple gaming environments, according to various embodiments of the present invention. Although the method steps are described in conjunction with the systems of FIGS. 1-2, persons of ordinary skill in the art will understand that any system configured to perform the method steps, in any order, is within the scope of the present invention.

As shown, a method 300 begins at step 302, where synchronized AR gaming application 232 executes a computer-based AR game on an AR gaming console 102. The computer-based AR game may be an augmented reality game that the user plays with one or more other users via additional game consoles. At step 304, synchronized AR gaming application 232 tracks the user and/or other objects via one or more sensors integrated into AR gaming console 102. These sensors may include, without limitation, cameras, GPS trackers, RFID trackers, accelerometers, gyroscopes, and magnetometers. Based on the tracking data, synchronized AR gaming application 232 may alter the execution of one or more aspects of the computer-generated AR game. More specifically, synchronized AR gaming application 232 alters execution of the computer-generated AR game to alter the view of the user and display one or more AR objects to the user based on the tracking data. At step 306, synchronized AR gaming application 232 detects that AR gaming console 102 resides within a first AR gaming environment. More specifically, synchronized AR gaming application 232 detects one or more networks that are associated with a particular gaming environment. In some embodiments, synchronized AR gaming application 232 may detect a network signal from a network that AR gaming console 102 is authorized to access. The gaming environment may be a local AR gaming environment 110, a vehicle AR gaming environment 120, or a play space AR gaming environment 130. At step 308, synchronized AR gaming application 232 connects to a network associated with the first AR gaming environment. The network may include, without limitation, point-to-point communications channels, Bluetooth, WiFi, infrared communications, wireless and wired LANs (Local Area Networks), one or more internet-based WANs (Wide Area Networks), and cellular data networks. At step 310, synchronized AR gaming application 232 detects one or more external sensors within the first AR gaming environment.
The external sensors may be any one or more of the sensors described herein. At step 312, synchronized AR gaming application 232 tracks the user and/or other objects via the detected external sensors within the first AR gaming environment as well as the one or more sensors integrated into AR gaming console 102. Based on the tracking data, synchronized AR gaming application 232 may alter the execution of one or more aspects of the computer-generated AR game. More specifically, synchronized AR gaming application 232 alters execution of the computer-generated AR game to alter the view of the user and display one or more AR objects to the user based on the tracking data. Further, synchronized AR gaming application 232 alters execution of the computer-generated AR game based on received sensor data in order to enable the synchronized AR gaming application 232 to continue executing as the first gaming console exits the first AR gaming environment and enters the second AR gaming environment.

Subsequently, at step 314, synchronized AR gaming application 232 detects that AR gaming console 102 is no longer within the first AR gaming environment. In so doing, synchronized AR gaming application 232 may detect that AR gaming console 102 has disconnected from the network within the first AR gaming environment. Additionally or alternatively, synchronized AR gaming application 232 may detect that an RSSI level of a signal received from one or more external sensors or from a network interface has fallen below a threshold level. The external sensors may be any one or more of the sensors described herein. Additionally or alternatively, synchronized AR gaming application 232 may detect that AR gaming console 102 has exited the first AR gaming environment based on geotagging and/or geo-fencing data. At step 316, synchronized AR gaming application 232 disconnects from the network associated with the first AR gaming environment. As a result, synchronized AR gaming application 232 ceases tracking the user and/or other objects based on external sensor data. Instead, at step 318, synchronized AR gaming application 232 tracks the user and/or other objects via only the one or more sensors integrated into AR gaming console 102. Based on the tracking data, synchronized AR gaming application 232 may alter the execution of one or more aspects of the computer-generated AR game. More specifically, synchronized AR gaming application 232 alters execution of the computer-generated AR game to alter the view of the user and display one or more AR objects to the user based on the tracking data. Further, synchronized AR gaming application 232 alters execution of the computer-generated AR game based on received sensor data in order to enable the synchronized AR gaming application 232 to continue executing as the first gaming console exits the first AR gaming environment and enters the second AR gaming environment.

At step 320, synchronized AR gaming application 232 detects that AR gaming console 102 resides within a second AR gaming environment. More specifically, synchronized AR gaming application 232 detects one or more networks that are associated with a particular gaming environment. In some embodiments, synchronized AR gaming application 232 may detect a network signal from a network that AR gaming console 102 is authorized to access. Additionally or alternatively, synchronized AR gaming application 232 may detect that an RSSI level of a signal received from one or more external sensors or from a network interface associated with the second AR gaming environment is above a threshold level. The external sensors may be any one or more of the sensors described herein. Additionally or alternatively, synchronized AR gaming application 232 may detect that AR gaming console 102 has entered the second AR gaming environment based on geotagging and/or geo-fencing data. The gaming environment may be a local AR gaming environment 110, a vehicle AR gaming environment 120, or a play space AR gaming environment 130. At step 322, synchronized AR gaming application 232 connects to a network associated with the second AR gaming environment. The network may include, without limitation, point-to-point communications channels, Bluetooth, WiFi, infrared communications, wireless and wired LANs (Local Area Networks), one or more internet-based WANs (Wide Area Networks), and cellular data networks. At step 324, synchronized AR gaming application 232 detects one or more external sensors within the second AR gaming environment. The external sensors may be any one or more of the sensors described herein. At step 326, synchronized AR gaming application 232 tracks the user and/or other objects via the detected external sensors within the second AR gaming environment as well as the one or more sensors integrated into AR gaming console 102.
Based on the tracking data, synchronized AR gaming application 232 may alter the execution of one or more aspects of the computer-generated AR game. More specifically, synchronized AR gaming application 232 alters execution of the computer-generated AR game to alter the view of the user and display one or more AR objects to the user based on the tracking data. Further, synchronized AR gaming application 232 alters execution of the computer-generated AR game based on received sensor data in order to enable the synchronized AR gaming application 232 to continue executing as the first gaming console exits the first AR gaming environment and enters the second AR gaming environment.

The method 300 then terminates. Additionally or alternatively, synchronized AR gaming application 232 may continue to execute any or all of steps 304 through 326 as the user continues gameplay while entering and exiting various gaming environments.
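The flow of method 300 can be summarized as a session that alternates between two tracking modes: integrated sensors only (steps 304 and 318) and integrated plus external sensors (steps 312 and 326). The following sketch models only that bookkeeping, under the assumption that environment and sensor detection are performed elsewhere; `ConsoleSession`, its method names, and the string labels are hypothetical.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class ConsoleSession:
    """Minimal model of which sensors feed tracking at each point in method 300."""
    environment: Optional[str] = None                      # environment currently joined, if any
    external_sensors: List[str] = field(default_factory=list)
    log: List[str] = field(default_factory=list)

    def tracking_sources(self) -> List[str]:
        # Integrated sensors are always used; external ones only while connected.
        return ["integrated"] + list(self.external_sensors)

    def enter(self, environment: str, sensors: List[str]) -> None:
        """Steps 306-312 and 320-326: detect the environment, connect to its
        network, detect its external sensors, and track with both sources."""
        self.environment = environment
        self.external_sensors = list(sensors)
        self.log.append(f"connected:{environment}")

    def exit(self) -> None:
        """Steps 314-318: detect the exit, disconnect, and fall back to
        the sensors integrated into the console."""
        if self.environment is not None:
            self.log.append(f"disconnected:{self.environment}")
        self.environment = None
        self.external_sensors = []
```

Because `tracking_sources()` never returns an empty list, the game always has some tracking input, which is the property that lets gameplay continue across the gap between two environments.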

In sum, techniques are disclosed for seamlessly transitioning across multiple gaming environments when executing a computer-based AR game. A user may begin executing the computer-based AR game on an AR gaming console within a local gaming environment, such as the user's home. The user may leave the local gaming environment and enter a vehicle in order to travel to a play space. The AR gaming console automatically disconnects from the local gaming environment and connects to the vehicle gaming environment. When the user arrives at the play space, the user exits the vehicle and enters the play space. The AR gaming console automatically disconnects from the vehicle gaming environment and connects to the play space gaming environment. As a result, the AR gaming console fluidly and seamlessly transitions across the local gaming environment, vehicle gaming environment, and play space gaming environment.

In addition, users executing the computer-based AR game in one gaming environment may interact with other users in the same or different gaming environments. For example, a user executing the computer-based AR game within a vehicle gaming environment may encounter other users who are executing the computer-based AR game as pedestrians within a play space gaming environment. The AR gaming console of the user within the vehicle gaming environment communicates with the AR gaming consoles of the users within the play space gaming environment. As a result, the user within the vehicle gaming environment and the users within the play space gaming environment may interact with one another in the context of the computer-based AR game.

At least one technical advantage of the disclosed techniques relative to the prior art is that a user experiences a more seamless gaming experience when transitioning from one augmented reality gaming environment to another augmented reality gaming environment. In that regard, the disclosed techniques enable a user's AR headset to automatically disconnect from one augmented reality gaming environment and reconnect to the other augmented reality gaming environment without interrupting gameplay. In so doing, the user's AR headset automatically switches from receiving sensor data from sensors in the previous augmented reality gaming environment to receiving sensor data from sensors in the new augmented reality gaming environment. As a result, the user can have a more immersive and uninterrupted experience when playing a computer-based augmented reality game. These technical advantages represent one or more technological improvements over prior art approaches.

1. In some embodiments, a computer-implemented method for implementing augmented reality (AR) gameplay across multiple gaming environments comprises: detecting that a first gaming console that is executing an AR gaming application has exited a first AR gaming environment and entered a second AR gaming environment; connecting to a communications network associated with the second AR gaming environment; detecting, via the communications network, a sensor associated with the second AR gaming environment; and altering execution of the AR gaming application based at least in part on sensor data received via the sensor to enable the AR gaming application to continue executing as the first gaming console exits the first AR gaming environment and enters the second AR gaming environment.

2. The computer-implemented method according to clause 1, further comprising: detecting that the first gaming console has exited the second AR gaming environment and entered a third AR gaming environment; disconnecting from the communications network; and altering execution of the AR gaming application based at least in part on second sensor data received via a second sensor integrated into the first gaming console to enable the AR gaming application to continue executing as the first gaming console exits the second AR gaming environment and enters the third AR gaming environment.

3. The computer-implemented method according to clause 1 or clause 2, wherein detecting that the first gaming console has exited the first AR gaming environment comprises detecting that a received signal strength indicator (RSSI) level associated with second sensor data received via a second sensor associated with the first AR gaming environment is below a threshold level.

4. The computer-implemented method according to any of clauses 1-3, wherein the first AR gaming environment is associated with a vehicle, and wherein detecting that the first gaming console has exited the first AR gaming environment comprises detecting, via a second sensor associated with the first AR gaming environment, that a user associated with the first gaming console has exited a passenger compartment of the vehicle.

5. The computer-implemented method according to any of clauses 1-4, wherein the first AR gaming environment is associated with a residence, and wherein detecting that the first gaming console has exited the first AR gaming environment comprises detecting, via a second sensor associated with the first AR gaming environment, that a user associated with the first gaming console has exited a bounded area within the residence.

6. The computer-implemented method according to any of clauses 1-5, wherein detecting that the first gaming console has entered the second AR gaming environment comprises detecting a network signal associated with the communications network.

7. The computer-implemented method according to any of clauses 1-6, further comprising: detecting that a second gaming console that is executing the AR gaming application resides within a third AR gaming environment; determining that the second gaming console is within a threshold distance from the first gaming console; and altering execution of the AR gaming application to enable an interaction between the first gaming console and the second gaming console.

8. The computer-implemented method according to any of clauses 1-7, further comprising: detecting a computing device that is in communication with the communications network; determining that the computing device is configured to execute at least a portion of the AR gaming application; and offloading a task associated with the at least a portion of the AR gaming application to the computing device.

9. The computer-implemented method according to any of clauses 1-8, further comprising: detecting that the first gaming console is about to exit the second AR gaming environment; and offloading the task from the computing device to the first gaming console.

10. The computer-implemented method according to any of clauses 1-9, further comprising: detecting that a second gaming console that is executing the AR gaming application resides within the second AR gaming environment; and generating a mesh network that includes the first gaming console and the second gaming console.

11. In some embodiments, one or more non-transitory computer-readable media include instructions that, when executed by one or more processors, cause the one or more processors to perform the steps of: detecting that a first gaming console that is executing an AR gaming application has exited a first AR gaming environment and entered a second AR gaming environment; connecting to a communications network associated with the second AR gaming environment; detecting, via the communications network, a sensor associated with the second AR gaming environment; and altering execution of the AR gaming application based at least in part on sensor data received via the sensor to enable the AR gaming application to continue executing as the first gaming console exits the first AR gaming environment and enters the second AR gaming environment.

12. The one or more non-transitory computer-readable media according to clause 11, further comprising: detecting that the first gaming console has exited the second AR gaming environment and entered a third AR gaming environment; disconnecting from the communications network; and altering execution of the AR gaming application based at least in part on second sensor data received via a second sensor integrated into the first gaming console to enable the AR gaming application to continue executing as the first gaming console exits the second AR gaming environment and enters the third AR gaming environment.

13. The one or more non-transitory computer-readable media according to clause 11 or clause 12, wherein detecting that the first gaming console has exited the first AR gaming environment comprises detecting that a received signal strength indicator (RSSI) level associated with second sensor data received via a second sensor associated with the first AR gaming environment is below a threshold level.

14. The one or more non-transitory computer-readable media according to any of clauses 11-13, wherein the first AR gaming environment is associated with a vehicle, and wherein detecting that the first gaming console has exited the first AR gaming environment comprises detecting, via a second sensor associated with the first AR gaming environment, that a user associated with the first gaming console has exited a passenger compartment of the vehicle.

15. The one or more non-transitory computer-readable media according to any of clauses 11-14, wherein the first AR gaming environment is associated with an open play space, and wherein detecting that the first gaming console has exited the first AR gaming environment comprises detecting, via a second sensor associated with the first AR gaming environment, that a user associated with the first gaming console has exited a defined area within the open play space.

16. The one or more non-transitory computer-readable media according to any of clauses 11-15, wherein detecting that the first gaming console has entered the second AR gaming environment comprises detecting a network signal associated with the communications network.

17. The one or more non-transitory computer-readable media according to any of clauses 11-16, further comprising: detecting that a second gaming console that is executing the AR gaming application resides within a third AR gaming environment; determining that the second gaming console is within a threshold distance from the first gaming console; and altering execution of the AR gaming application to enable an interaction between the first gaming console and the second gaming console.

18. The one or more non-transitory computer-readable media according to any of clauses 11-17, wherein at least a portion of the AR gaming application is executing on a remote server in communication with the communications network, and further comprising receiving data associated with the AR gaming application via the remote server.

19. The one or more non-transitory computer-readable media according to any of clauses 11-18, further comprising disconnecting from a second communications network associated with the first AR gaming environment.

20. In some embodiments, a computing device comprises: a memory that includes instructions, and a processor that is coupled to the memory and, when executing the instructions, is configured to: detect that a first gaming console that is executing an AR gaming application has exited a first AR gaming environment and entered a second AR gaming environment; connect to a communications network associated with the second AR gaming environment; detect, via the communications network, a sensor associated with the second AR gaming environment; and alter execution of the AR gaming application based at least in part on sensor data received via the sensor to enable the AR gaming application to continue executing as the first gaming console exits the first AR gaming environment and enters the second AR gaming environment.

Any and all combinations of any of the claim elements recited in any of the claims and/or any elements described in this application, in any fashion, fall within the contemplated scope of the present invention and protection.

The descriptions of the various embodiments have been presented for purposes of illustration, but are not intended to be exhaustive or limited to the embodiments disclosed. Many modifications and variations will be apparent to those of ordinary skill in the art without departing from the scope and spirit of the described embodiments.

Aspects of the present embodiments may be embodied as a system, method or computer program product. Accordingly, aspects of the present disclosure may take the form of an entirely hardware embodiment, an entirely software embodiment (including firmware, resident software, micro-code, etc.) or an embodiment combining software and hardware aspects that may all generally be referred to herein as a “module” or “system.” Furthermore, aspects of the present disclosure may take the form of a computer program product embodied in one or more computer readable medium(s) having computer readable program code embodied thereon.

Any combination of one or more computer readable medium(s) may be utilized. The computer readable medium may be a computer readable signal medium or a computer readable storage medium. A computer readable storage medium may be, for example, but not limited to, an electronic, magnetic, optical, electromagnetic, infrared, or semiconductor system, apparatus, or device, or any suitable combination of the foregoing. More specific examples (a non-exhaustive list) of the computer readable storage medium would include the following: an electrical connection having one or more wires, a portable computer diskette, a hard disk, a random access memory (RAM), a read-only memory (ROM), an erasable programmable read-only memory (EPROM or Flash memory), an optical fiber, a portable compact disc read-only memory (CD-ROM), an optical storage device, a magnetic storage device, or any suitable combination of the foregoing. In the context of this document, a computer readable storage medium may be any tangible medium that can contain, or store a program for use by or in connection with an instruction execution system, apparatus, or device.

Aspects of the present disclosure are described above with reference to flowchart illustrations and/or block diagrams of methods, apparatus (systems) and computer program products according to embodiments of the disclosure. It will be understood that each block of the flowchart illustrations and/or block diagrams, and combinations of blocks in the flowchart illustrations and/or block diagrams, can be implemented by computer program instructions. These computer program instructions may be provided to a processor of a general purpose computer, special purpose computer, or other programmable data processing apparatus to produce a machine, such that the instructions, which execute via the processor of the computer or other programmable data processing apparatus, enable the implementation of the functions/acts specified in the flowchart and/or block diagram block or blocks. Such processors may be, without limitation, general purpose processors, special-purpose processors, application-specific processors, or field-programmable gate arrays.

The flowchart and block diagrams in the figures illustrate the architecture, functionality, and operation of possible implementations of systems, methods and computer program products according to various embodiments of the present disclosure. In this regard, each block in the flowchart or block diagrams may represent a module, segment, or portion of code, which comprises one or more executable instructions for implementing the specified logical function(s). It should also be noted that, in some alternative implementations, the functions noted in the block may occur out of the order noted in the figures. For example, two blocks shown in succession may, in fact, be executed substantially concurrently, or the blocks may sometimes be executed in the reverse order, depending upon the functionality involved. It will also be noted that each block of the block diagrams and/or flowchart illustration, and combinations of blocks in the block diagrams and/or flowchart illustration, can be implemented by special purpose hardware-based systems that perform the specified functions or acts, or combinations of special purpose hardware and computer instructions.

While the preceding is directed to embodiments of the present disclosure, other and further embodiments of the disclosure may be devised without departing from the basic scope thereof, and the scope thereof is determined by the claims that follow.

Claims

- A computer-implemented method for implementing augmented reality (AR) gameplay across multiple gaming environments, the method comprising: detecting that a first gaming console that is executing an AR gaming application has exited a first AR gaming environment and entered a second AR gaming environment; connecting to a communications network associated with the second AR gaming environment; detecting, via the communications network, a sensor associated with the second AR gaming environment; and altering execution of the AR gaming application based at least in part on sensor data received via the sensor to enable the AR gaming application to continue executing as the first gaming console exits the first AR gaming environment and enters the second AR gaming environment.

- The computer-implemented method of claim 1, further comprising: detecting that the first gaming console has exited the second AR gaming environment and entered a third AR gaming environment; disconnecting from the communications network; and altering execution of the AR gaming application based at least in part on second sensor data received via a second sensor integrated into the first gaming console to enable the AR gaming application to continue executing as the first gaming console exits the second AR gaming environment and enters the third AR gaming environment.

- The computer-implemented method of claim 1, wherein detecting that the first gaming console has exited the first AR gaming environment comprises detecting that a received signal strength indicator (RSSI) level associated with second sensor data received via a second sensor associated with the first AR gaming environment is below a threshold level.

- The computer-implemented method of claim 1, wherein the first AR gaming environment is associated with a vehicle, and wherein detecting that the first gaming console has exited the first AR gaming environment comprises detecting, via a second sensor associated with the first AR gaming environment, that a user associated with the first gaming console has exited a passenger compartment of the vehicle.

- The computer-implemented method of claim 1, wherein the first AR gaming environment is associated with a residence, and wherein detecting that the first gaming console has exited the first AR gaming environment comprises detecting, via a second sensor associated with the first AR gaming environment, that a user associated with the first gaming console has exited a bounded area within the residence.

- The computer-implemented method of claim 1, wherein detecting that the first gaming console has entered the second AR gaming environment comprises detecting a network signal associated with the communications network.

- The computer-implemented method of claim 1, further comprising: detecting that a second gaming console that is executing the AR gaming application resides within a third AR gaming environment; determining that the second gaming console is within a threshold distance from the first gaming console; and altering execution of the AR gaming application to enable an interaction between the first gaming console and the second gaming console.

- The computer-implemented method of claim 1, further comprising: detecting a computing device that is in communication with the communications network; determining that the computing device is configured to execute a portion of the AR gaming application; and offloading a task associated with the portion of the AR gaming application to the computing device.

- The computer-implemented method of claim 8, further comprising: detecting that the first gaming console is about to exit the second AR gaming environment; and offloading the task from the computing device to the first gaming console.

- The computer-implemented method of claim 1, further comprising: detecting that a second gaming console that is executing the AR gaming application resides within the second AR gaming environment; and generating a mesh network that includes the first gaming console and the second gaming console.

- One or more non-transitory computer-readable media including instructions that, when executed by one or more processors, cause the one or more processors to perform the steps of: detecting that a first gaming console that is executing an AR gaming application has exited a first AR gaming environment and entered a second AR gaming environment; connecting to a communications network associated with the second AR gaming environment; detecting, via the communications network, a sensor associated with the second AR gaming environment; and altering execution of the AR gaming application based at least in part on sensor data received via the sensor to enable the AR gaming application to continue executing as the first gaming console exits the first AR gaming environment and enters the second AR gaming environment.

- The one or more non-transitory computer-readable media of claim 11, further comprising: detecting that the first gaming console has exited the second AR gaming environment and entered a third AR gaming environment; disconnecting from the communications network; and altering execution of the AR gaming application based at least in part on second sensor data received via a second sensor integrated into the first gaming console to enable the AR gaming application to continue executing as the first gaming console exits the second AR gaming environment and enters the third AR gaming environment.

- The one or more non-transitory computer-readable media of claim 11, wherein detecting that the first gaming console has exited the first AR gaming environment comprises detecting that a received signal strength indicator (RSSI) level associated with second sensor data received via a second sensor associated with the first AR gaming environment is below a threshold level.

- The one or more non-transitory computer-readable media of claim 11, wherein the first AR gaming environment is associated with a vehicle, and wherein detecting that the first gaming console has exited the first AR gaming environment comprises detecting, via a second sensor associated with the first AR gaming environment, that a user associated with the first gaming console has exited a passenger compartment of the vehicle.

- The one or more non-transitory computer-readable media of claim 11, wherein the first AR gaming environment is associated with an open play space, and wherein detecting that the first gaming console has exited the first AR gaming environment comprises detecting, via a second sensor associated with the first AR gaming environment, that a user associated with the first gaming console has exited a defined area within the open play space.

- The one or more non-transitory computer-readable media of claim 11, wherein detecting that the first gaming console has entered the second AR gaming environment comprises detecting a network signal associated with the communications network.

- The one or more non-transitory computer-readable media of claim 11, further comprising: detecting that a second gaming console that is executing the AR gaming application resides within a third AR gaming environment; determining that the second gaming console is within a threshold distance from the first gaming console; and altering execution of the AR gaming application to enable an interaction between the first gaming console and the second gaming console.

- The one or more non-transitory computer-readable media of claim 11, wherein a portion of the AR gaming application is executing on a remote server in communication with the communications network, and further comprising receiving data associated with the AR gaming application via the remote server.

- The one or more non-transitory computer-readable media of claim 11, further comprising disconnecting from a second communications network associated with the first AR gaming environment.

- A computing device, comprising: a memory that includes instructions, and a processor that is coupled to the memory and, when executing the instructions, is configured to: detect that a first gaming console that is executing an AR gaming application has exited a first AR gaming environment and entered a second AR gaming environment; connect to a communications network associated with the second AR gaming environment; detect, via the communications network, a sensor associated with the second AR gaming environment; and alter execution of the AR gaming application based at least in part on sensor data received via the sensor to enable the AR gaming application to continue executing as the first gaming console exits the first AR gaming environment and enters the second AR gaming environment.
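
The following Python sketch is an illustrative, non-limiting example of how the claimed steps might be arranged in software. It is not drawn from the disclosure. The names `Environment`, `Console`, `has_exited`, and `hand_off`, the value of `RSSI_EXIT_THRESHOLD_DBM`, and the `"integrated"` sensor label are all hypothetical. The sketch assumes that exit from the first AR gaming environment is inferred from an RSSI level falling below a threshold, and that an environment without a communications network is handled by falling back to a sensor integrated into the gaming console.

```python
from dataclasses import dataclass, field
from typing import List, Optional

# Hypothetical threshold; the claims only require "a threshold level".
RSSI_EXIT_THRESHOLD_DBM = -80.0


@dataclass
class Environment:
    """An AR gaming environment (names and fields are illustrative)."""
    name: str
    network: Optional[str]  # None models an environment with no network
    sensors: List[str] = field(default_factory=list)


@dataclass
class Console:
    """State of the gaming console that the sketch updates on hand-off."""
    environment: Environment
    connected_network: Optional[str] = None
    active_sensors: List[str] = field(default_factory=list)


def has_exited(rssi_dbm: float,
               threshold_dbm: float = RSSI_EXIT_THRESHOLD_DBM) -> bool:
    """Treat an RSSI level below the threshold as exit from the environment."""
    return rssi_dbm < threshold_dbm


def hand_off(console: Console, rssi_dbm: float, candidate: Environment) -> bool:
    """Move the console to `candidate` if it has left its current environment.

    Returns True when a hand-off happened, False otherwise.
    """
    if not has_exited(rssi_dbm):
        return False
    # Connect to the network of the entered environment (or disconnect if none).
    console.connected_network = candidate.network
    if candidate.network is not None:
        # Sensors detected via the network of the entered environment.
        console.active_sensors = list(candidate.sensors)
    else:
        # No network available: fall back to a sensor integrated into the console.
        console.active_sensors = ["integrated"]
    # "Altering execution" is modeled here simply as switching the active
    # environment and sensor set so the application can keep running.
    console.environment = candidate
    return True
```

In such a sketch, a console in a residence that reports a strong signal stays where it is, while a weak reading triggers a hand-off to, for example, a vehicle environment and its sensors. A real implementation would likely also smooth the RSSI readings to avoid switching back and forth near the threshold.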
