U.S. Pat. No. 10,864,433

USING A PORTABLE DEVICE TO INTERACT WITH A VIRTUAL SPACE

Assignee: Sony Interactive Entertainment Inc.

Issue Date: April 7, 2017


Abstract

In one implementation, a portable device is provided, including: a sensor configured to generate sensor data for determining and tracking a position and orientation of the portable device during an interactive session of a virtual reality program presented on a main display, the interactive session being defined for interactivity between a user and the virtual reality program; a communications module configured to send the sensor data to a computing device, the communications module being further configured to receive from the computing device an ancillary video stream of the interactive session that is generated based on a state of the virtual reality program and the tracked position and orientation of the portable device.

Description


DETAILED DESCRIPTION

The following embodiments describe methods and apparatus for a system that enables an interactive application to utilize the resources of a handheld device. In one embodiment of the invention, a primary processing interface is provided for rendering a primary video stream of the interactive application to a display. A first user views the rendered primary video stream on the display and interacts by operating a controller device which communicates with the primary processing interface. Simultaneously, a second user operates a handheld device in the same interactive environment. The handheld device renders an ancillary video stream of the interactive application on a display of the handheld device, separate from the display showing the primary video stream. Accordingly, methods and apparatus in accordance with embodiments of the invention will now be described.

It will be obvious, however, to one skilled in the art, that the present invention may be practiced without some or all of these specific details. In other instances, well known process operations have not been described in detail in order not to unnecessarily obscure the present invention.

With reference to FIG. 1, a system for interfacing with an interactive application is shown, in accordance with an embodiment of the invention. An interactive application 10 is executed by a primary processor 12. A primary processing interface 14 enables a state of the interactive application 10 to be rendered to a display 18. This is accomplished by sending a primary video stream 16 of the interactive application 10 from the primary processing interface 14 to the display 18. In some embodiments of the invention, the primary processing interface 14 and the primary processor 12 may be part of the same device, such as a computer or a console system. In other embodiments, the primary processing interface 14 and the primary processor 12 may be parts of separate devices (such as separate computers or console systems) which are connected either directly or via a network. The display 18 may be any of various types of displays, such as a television, monitor, projector, or any other kind of display which may be utilized to visually display a video stream.

A controller 20 is provided for interfacing with the interactive application 10. The controller includes an input mechanism 22 for receiving input from a user 24. The input mechanism 22 may include any of various kinds of input mechanisms, such as a button, joystick, touchpad, trackball, motion sensor, or any other type of input mechanism which may receive input from the user 24 that is useful for interacting with the interactive application 10. The controller 20 communicates with the primary processing interface 14. In one embodiment, the communication is wireless; in another embodiment, the communication occurs over a wired connection. The controller 20 transmits input data to the primary processing interface 14. The primary processing interface 14 may in turn process the input data and transmit the resulting data to the interactive application 10, or it may simply relay the input data directly to the interactive application 10. The input data is applied to directly affect the state of the interactive application.

A data feed 26 of the interactive application 10 is provided to a handheld device 28. The handheld device 28 provides an interface through which another user 30 interfaces with the interactive application 10. In one embodiment, the handheld device 28 communicates wirelessly with the primary processing interface 14. In another embodiment, the handheld device 28 communicates with the primary processing interface 14 over a wired connection. The handheld device 28 receives the data feed 26, and a processor 32 of the handheld device 28 processes the data feed 26 to generate an ancillary video stream 34 of the interactive application 10. The ancillary video stream 34 is rendered on a display 36 which is included in the handheld device 28.

In various embodiments, the ancillary video stream 34 may provide the same image as the primary video stream 16. Alternatively, it may vary from the primary video stream 16 to different degrees, up to being entirely different from the rendered primary video stream 16. For example, in one embodiment, the ancillary video stream 34 provides the same image as the primary video stream 16 for a period of time, and then transitions to a different image. The transition can be triggered by input received from the handheld device 28. Additional exemplary embodiments of the rendered ancillary video stream 34 on the display 36 are explained in further detail below. The user 30 views the ancillary video stream 34 on the display 36 and interacts with it by providing input through an input mechanism 38 included in the handheld device 28. The input mechanism 38 may include any of various input mechanisms, such as buttons, a touchscreen, joystick, trackball, keyboard, stylus or any other type of input mechanism which may be included in a handheld device. The user 30 thus interacts with the rendered ancillary video stream 34 so as to provide interactive input 40 via the input mechanism 38. The interactive input 40 is processed by the processor 32 so as to determine virtual tag data 42. The virtual tag data 42 is transmitted to the interactive application 10 via the primary processing interface 14. The virtual tag data 42 may be stored as a virtual tag. It includes information which defines an event to be rendered by the interactive application 10 when the state of the interactive application 10 reaches a certain predetermined configuration, which triggers execution of the virtual tag by the interactive application 10. The contents of the virtual tag may vary in various embodiments, and may pertain to objects, items, characters, actions, and other types of events rendered by the interactive application 10 to the display 18.
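The virtual tag data 42 can be pictured as a small record that the handheld device 28 serializes and sends to the interactive application 10. The specification does not define a wire format, so the following Python sketch is illustrative only. The `VirtualTag` class, its field names, and the use of JSON are assumptions.

```python
import json
from dataclasses import asdict, dataclass, field
from typing import Optional, Tuple


@dataclass
class VirtualTag:
    """Hypothetical container for virtual tag data 42 (field names are illustrative)."""
    event: str                                             # e.g. "spawn_object"
    payload: dict = field(default_factory=dict)            # object, item, or character details
    location: Optional[Tuple[float, float, float]] = None  # virtual-space coordinates
    time: Optional[float] = None                           # virtual-timeline stamp


def encode_tag(tag: VirtualTag) -> bytes:
    """Serialize a tag for transmission from the handheld device to the console."""
    return json.dumps(asdict(tag)).encode("utf-8")


def decode_tag(data: bytes) -> VirtualTag:
    """Rebuild a tag on the receiving side (for example, in a tag module)."""
    raw = json.loads(data.decode("utf-8"))
    if raw["location"] is not None:
        raw["location"] = tuple(raw["location"])  # JSON arrays decode as lists
    return VirtualTag(**raw)
```

For example, `decode_tag(encode_tag(VirtualTag("spawn_object", {"kind": "crate"}, location=(10.0, 0.0, -4.0))))` returns a tag equal to the original.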

While the embodiments described in the present specification include a user 24 who utilizes a controller 20 and a user 30 who utilizes a handheld device 28, it is contemplated that there may be various configurations of users. For example, in other embodiments, there may be one or more users who utilize controllers, and one or more users who utilize handheld devices. In still other embodiments, there may be no users who utilize controllers, but at least one user who utilizes a handheld device.

With reference to FIG. 2, a system for storing and retrieving virtual tags is shown, in accordance with an embodiment of the invention. The handheld device user 30 utilizes the handheld device 28 to generate tag data 42. In one embodiment, a tag graphical user interface (GUI) module 50 is included in the handheld device. It provides a GUI to the user 30 that facilitates receipt of the interactive input provided by the user 30 for generation of the tag data 42. In various embodiments, the tag GUI 50 may include any of various features, including selectable menu options, tracing of touchscreen input drawn on the display, movement based on touchscreen gestures or selectable inputs, or any other type of GUI elements or features which may be useful for enabling the user 30 to provide interactive input so as to generate tag data 42.

In one embodiment, the tag data 42 is transmitted to the interactive application 10. The tag data 42 is received by a tag module 60 included in the interactive application. In some embodiments, the tag data is immediately applied by the interactive application 10 to render an event defined by the tag data. For example, the tag data might define an object that is to be rendered, or might affect an existing object that is already being rendered by the interactive application. The event defined by the tag data is thus applied by the interactive application, resulting in an updated state of the interactive application which includes the event. This updated state is rendered in the primary video stream 16 which is shown on the display 18, as shown in FIG. 1.

In some embodiments, the tag data 42 defines an event that is not immediately applicable, but becomes applicable by the interactive application 10 when the state of the interactive application 10 reaches a certain configuration. For example, in one embodiment, the event defined by the tag data 42 may specify that an object is to be rendered at a specific location within a virtual space of the interactive application 10. Thus, when the state of the interactive application 10 reaches a configuration in which the interactive application 10 renders a region including the location defined by the tag data, the interactive application 10 applies the tag data so as to render the object. In another example, the tag data 42 could include a temporal stamp which specifies a time at which an event should occur within a virtual timeline of the interactive application 10. Thus, when the state of the interactive application 10 reaches the specified time, execution of the event defined by the tag data 42 is triggered, resulting in an updated state of the interactive application 10 which includes the rendered event.
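The deferred triggering described above can be sketched as a check that runs each time the application state changes. In this hypothetical Python sketch, `PendingTag`, `AppState`, and the rectangular rendered region are assumptions made for illustration. A tag with neither a location nor a temporal stamp fires at once, which matches the immediate-application case.

```python
from dataclasses import dataclass
from typing import List, Optional, Tuple


@dataclass
class PendingTag:
    """Hypothetical tag awaiting a triggering configuration of the application state."""
    event: str
    location: Optional[Tuple[float, float]] = None  # 2-D virtual-space position
    time: Optional[float] = None                    # virtual-timeline stamp


@dataclass
class AppState:
    """Simplified state: the rendered region (an axis-aligned box) and the virtual clock."""
    view_min: Tuple[float, float]
    view_max: Tuple[float, float]
    clock: float


def is_triggered(tag: PendingTag, state: AppState) -> bool:
    if tag.location is not None:
        x, y = tag.location
        (x0, y0), (x1, y1) = state.view_min, state.view_max
        if not (x0 <= x <= x1 and y0 <= y <= y1):
            return False  # tagged location is not inside the rendered region yet
    if tag.time is not None and state.clock < tag.time:
        return False      # virtual timeline has not reached the temporal stamp
    return True


def apply_ready_tags(pending: List[PendingTag], state: AppState) -> List[str]:
    """Return the events of all tags that fire now, and keep the rest pending."""
    fired = [t for t in pending if is_triggered(t, state)]
    pending[:] = [t for t in pending if not is_triggered(t, state)]
    return [t.event for t in fired]
```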

The tag module 60 may store the tag data as a tag locally. The tag module 60 may also store the tag in a tag repository 52. The tag repository 52 may be local to the interactive application 10, or may be connected to the interactive application 10 by way of a network 54, such as the Internet. The tag repository 52 stores the tags 56 for later retrieval. Each of the tags 56 may include various data defining events to be rendered by the interactive application 10. The data may include coordinate data which defines a location within a virtual space of the interactive application, time data (or a temporal stamp) which defines a time within a virtual timeline of the interactive application, text, graphical data, object or item data, character data, and other types of data which may define or affect an event or objects within the interactive application 10.

The tag repository 52 may be configured to receive tag data from multiple users, thereby aggregating tags from users who are interacting with the same interactive application. The users could interact with the same session or instance of the interactive application 10, or with different sessions or instances of the same interactive application 10. In one embodiment, the tag module 60 of the interactive application 10 retrieves tag data from the tag repository 52. This may be performed based on a current location 58 of the state of the interactive application. In some embodiments, the current location 58 may be a geographical location within a virtual space of the interactive application or a temporal location within a virtual timeline of the interactive application. The current location 58 changes as the state of the interactive application changes (e.g. based on input to the interactive application 10). As it does, the tag module 60 continues to retrieve tag data from the tag repository 52 which is relevant to the current location 58. In this manner, a user of the interactive application 10 enjoys an interactive experience with the interactive application 10 that is affected by the tag data generated by multiple other users.
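Location-based retrieval from the tag repository 52 could be organized as a spatial index. The sketch below shows one possible approach; the specification does not prescribe it. `TagRepository` is a hypothetical in-memory stand-in that buckets tags from many users into grid cells and returns those within a radius of the current location 58. A temporal location could be handled the same way with one-dimensional buckets.

```python
import math
from collections import defaultdict
from dataclasses import dataclass
from typing import Dict, List, Tuple


@dataclass(frozen=True)
class StoredTag:
    author: str                    # user who created the tag
    event: str
    location: Tuple[float, float]  # virtual-space coordinates


class TagRepository:
    """Hypothetical in-memory stand-in for tag repository 52, bucketed on a coarse grid."""

    def __init__(self, cell_size: float = 10.0) -> None:
        self.cell_size = cell_size
        self.cells: Dict[Tuple[int, int], List[StoredTag]] = defaultdict(list)

    def _cell(self, p: Tuple[float, float]) -> Tuple[int, int]:
        return (math.floor(p[0] / self.cell_size), math.floor(p[1] / self.cell_size))

    def store(self, tag: StoredTag) -> None:
        """Accept a tag from any user, aggregating all users in one index."""
        self.cells[self._cell(tag.location)].append(tag)

    def near(self, current: Tuple[float, float], radius: float) -> List[StoredTag]:
        """Return tags within `radius` of the current location, whoever created them."""
        cx, cy = self._cell(current)
        reach = math.ceil(radius / self.cell_size)  # how many neighbouring cells to scan
        found = []
        for i in range(cx - reach, cx + reach + 1):
            for j in range(cy - reach, cy + reach + 1):
                for tag in self.cells.get((i, j), []):
                    if math.dist(tag.location, current) <= radius:
                        found.append(tag)
        return found
```

As the current location changes, the tag module would simply call `near` again with the new position.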

In an alternative embodiment, the tag data 42 is transmitted directly from the handheld device 28 to the tag repository 52. This may occur in addition to, or in place of, transmission of the tag data 42 to the interactive application 10.

With reference to FIG. 3, a system for providing interactivity with an interactive application is shown, in accordance with an embodiment of the invention. As shown, an interactive application 10 runs on a computer 70. The computer 70 may be any of various types of computing devices, such as a server, a personal computer, a gaming console system, or any other type of computing device capable of executing an interactive application. The computer 70 provides output of the interactive application 10 as a primary video stream to a display 18, so as to visually render the interactive application 10 for interactivity. It also provides audio output from the interactive application 10 as a primary audio stream to speakers 19 to provide audio for interactivity.

The computer 70 further includes a wireless transceiver 76 for facilitating communication with external components. In the embodiment shown, the wireless transceiver 76 facilitates wireless communication with a controller 20 operated by a user 24, and a portable device 28 operated by a user 30. The users 24 and 30 provide input to the interactive application 10 by operating the controller 20 and the portable device 28, respectively. The user 24 views the primary video stream shown on the display 18, and thereby interacts with the interactive application, operating input mechanisms 22 of the controller 20 so as to provide direct input which affects the state of the interactive application 10.

Simultaneously, a data feed of the interactive application 10 is generated by a game output module 72 of the interactive application 10, and transmitted to a slave application 80 which runs on the portable device 28. More specifically, the data feed is communicated from the wireless transceiver 76 of the computer 70 to a wireless transceiver 78 of the portable device, and received by a slave input module 82 of the slave application 80. This data feed is processed by the slave application 80 so as to generate an ancillary video stream which is rendered on a display 36 of the portable device 28. The user 30 views the display 36, and thereby interacts with the ancillary video stream by operating input mechanisms 38 of the portable device 28 so as to provide input. This input is processed by the slave application 80 to generate data. A slave output module 84 of the slave application 80 communicates that data to a game input module 74 of the interactive application 10, via the transceivers 78 and 76 of the portable device 28 and the computer 70, respectively.

In various embodiments of the invention, the slave application 80 may be configured to provide various types of interactive interfaces for the user 30 to interact with. For example, in one embodiment, the ancillary video stream displayed on the display 36 of the portable device 28 may provide the same image as that of the primary video stream displayed on the display 18. In other embodiments, the image displayed on the display 36 of the portable device 28 may be a modified version of that shown on the display 18. In still other embodiments, the image displayed on the portable device 28 may be entirely different from that shown on the display 18.

With reference to FIG. 4, a system for providing interactivity with an interactive game is shown, in accordance with an embodiment of the invention. A game engine 90 continually executes to determine a current state of the interactive game. The game engine 90 provides a primary video stream 91 to a video renderer 92, which renders the primary video stream 91 on a display 18. The primary video stream 91 contains video data which represents a current state of the interactive game. When rendered on the display 18, it provides a visual representation of the interactive game for interactivity.

The game engine 90 also provides data to a game output module 72. In one embodiment, the game output module 72 includes an audio/video (AV) feed generator 94. Based on data received from the game engine, the AV feed generator 94 generates an AV data feed 95 that is sent to a handheld device 28. The AV data feed 95 may include data which can be utilized by the handheld device 28 to generate an image which is the same as or substantially similar to that shown on the display 18. For example, in one embodiment, the AV data feed 95 contains a compressed, lower resolution, lower frame rate or otherwise lower bandwidth version of the primary video stream rendered on the display 18. By utilizing a lower bandwidth, the AV data feed 95 may be more easily transmitted, especially via wireless transmission technologies, which typically have lower bandwidth capacities than wired transmission technologies. The reduced bandwidth also suits the handheld device: its smaller display 36 typically has a lower resolution than the display 18, and therefore does not require the full amount of data provided in the primary video stream 91.
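The bandwidth saving from a lower-resolution, lower-frame-rate feed is easy to quantify before any compression is applied. This Python sketch assumes uncompressed 24-bit frames. It also relies on a hypothetical helper, `ancillary_format`, that fits the primary frame to the handheld screen. The resolutions in the usage note are illustrative and are not values from the specification.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class StreamFormat:
    width: int
    height: int
    fps: int
    bits_per_pixel: int = 24  # assumed uncompressed RGB

    def raw_bitrate(self) -> int:
        """Uncompressed bits per second for this format."""
        return self.width * self.height * self.bits_per_pixel * self.fps


def ancillary_format(primary: StreamFormat, screen_w: int, screen_h: int, fps: int) -> StreamFormat:
    """Fit the primary frame inside the handheld screen, preserving aspect ratio.

    Never upscales and never exceeds the primary frame rate.
    """
    scale = min(screen_w / primary.width, screen_h / primary.height, 1.0)
    # Round down to even dimensions, which many video encoders expect.
    w = int(primary.width * scale) // 2 * 2
    h = int(primary.height * scale) // 2 * 2
    return StreamFormat(w, h, min(fps, primary.fps), primary.bits_per_pixel)
```

Take a 1920×1080 primary stream at 60 fps and a 960×544 handheld screen at 30 fps. The helper picks 960×540 at 30 fps, an eightfold reduction in raw bitrate. Compressing the AV data feed 95 would reduce it further.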

The game output module 72 may also include a game data generator 96, which, based on data received from the game engine, generates a game data feed 97 that is sent to the handheld device 28. The game data feed 97 may include various types of data regarding the state of the interactive game. At the handheld device 28, the AV data feed 95 and the game data feed 97 are received by a slave input handler 82, which initially processes the data feeds. A slave application engine 98 executes on the handheld device 28 so as to provide an interactive interface to the user 30. The slave application engine 98 generates an ancillary video stream 99 based on the data feeds. The ancillary video stream 99 is rendered by a slave video renderer 100 on a display 36 of the handheld device 28.

The user 30 views the rendered ancillary video stream on the display 36, and interacts with the displayed image by providing direct input 102 through various input mechanisms of the handheld device 28. Examples of direct input 102 include button input 104, touchscreen input 106, and joystick input 108, though other types of input may be included in the direct input 102. The direct input 102 is processed by a direct input processor 110 for use by the slave application engine 98. Based on the processed direct input, as well as the data feeds provided from the computer 70, the slave application engine 98 updates its state of execution, which is then reflected in the ancillary video stream 99 that is rendered on the display 36. A slave output generator 84 generates slave data 85 based on the state of the slave application engine 98, and provides the slave data 85 to a game input module 74 of the interactive game. The slave data 85 may include various types of data. Examples include data which may be utilized by the game engine 90 to affect its state of execution, and tag data which affects the state of the game engine when that state reaches a particular configuration.
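One iteration of the slave application engine 98 can be sketched in four steps: apply the processed direct input, update the local state, describe the next ancillary frame, and queue slave data 85. The Python sketch below is a toy model. Its names (`DirectInput`, `SlaveEngine`, the `"objects"` key in the game data feed) are assumptions. In this model the joystick pans a view over the game world, and a touch produces tag data at the touched world position.

```python
from dataclasses import dataclass, field
from typing import List, Tuple


@dataclass
class DirectInput:
    """Hypothetical processed direct input 102."""
    kind: str                                # "button", "touch", or "joystick"
    value: Tuple[float, float] = (0.0, 0.0)  # joystick deflection or touch position


@dataclass
class SlaveEngine:
    """Toy stand-in for slave application engine 98: a camera panning over the game world."""
    camera: Tuple[float, float] = (0.0, 0.0)
    pan_speed: float = 5.0
    outbox: List[dict] = field(default_factory=list)  # slave data 85 awaiting transmission

    def step(self, game_data: dict, inputs: List[DirectInput], dt: float) -> dict:
        for inp in inputs:
            if inp.kind == "joystick":
                dx, dy = inp.value
                x, y = self.camera
                self.camera = (x + dx * self.pan_speed * dt, y + dy * self.pan_speed * dt)
            elif inp.kind == "touch":
                # A tap becomes tag data at the touched world position (screen offset by camera).
                tx, ty = inp.value
                self.outbox.append({"type": "tag",
                                    "location": (self.camera[0] + tx, self.camera[1] + ty)})
        # Frame description for the slave video renderer: game objects relative to the camera.
        visible = [(name, px - self.camera[0], py - self.camera[1])
                   for name, (px, py) in game_data.get("objects", {}).items()]
        return {"camera": self.camera, "visible": visible}
```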

With reference to FIG. 5, a system for enabling an interactive application 90 to utilize resources of a handheld device 28 is shown, in accordance with an embodiment of the invention. As shown, the interactive application or game engine 90 generates a request 120 to utilize a resource of a handheld device 28. The request 120 is sent to a slave application 80 which runs on the handheld device 28. The slave application 80 processes the request 120 to determine what resource of the handheld device 28 to utilize. A resource of the handheld device may be any device or function included in the handheld device 28. For example, resources of the handheld device 28 may include the handheld device's processing power, including its processors and memory. Resources of the handheld device 28 may also include devices, sensors or hardware such as a camera, motion sensor, microphone, bio-signal sensor, touchscreen, or other hardware included in the handheld device.

Based on the request 120, the slave application 80 initiates operation of, or detection from, the hardware or sensor 124. A user 30 who operates the handheld device 28 may, depending upon the nature of the hardware 124, exercise control of the hardware to various degrees. For example, in the case where the hardware 124 is a camera, the user 30 might control the camera's direction and orientation. By contrast, in the case where the hardware is a processor or memory of the handheld device 28, the user 30 might exercise very little or no direct control over the hardware's operation. In one embodiment, the operation of the hardware 124 generates raw data which is processed by a raw data processor 126 of the slave application 80. The processing of the raw data produces processed data 122 which is sent to the interactive application 90. Thus, the interactive application 90 receives the processed data 122 in response to its initial request 120.
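The request/response exchange of FIG. 5 maps naturally onto a dispatch table in the slave application 80. In this hypothetical Python sketch, each resource name is registered with two functions. An acquisition function stands in for operating the hardware or sensor 124, and a processing function stands in for the raw data processor 126. The names and the use of `LookupError` for unavailable resources are assumptions.

```python
from dataclasses import dataclass
from typing import Callable, Dict, Tuple


@dataclass
class ResourceRequest:
    """Hypothetical form of request 120."""
    resource: str  # e.g. "microphone", "compute"
    params: dict


class SlaveResources:
    """Toy slave application 80: maps resource names to (acquire, process) pairs."""

    def __init__(self) -> None:
        self.handlers: Dict[str, Tuple[Callable[[dict], object], Callable[[object], object]]] = {}

    def register(self, name: str, acquire: Callable[[dict], object],
                 process: Callable[[object], object]) -> None:
        self.handlers[name] = (acquire, process)

    def handle(self, request: ResourceRequest) -> object:
        """Operate the requested resource and return processed data 122."""
        if request.resource not in self.handlers:
            raise LookupError(f"resource not available: {request.resource}")
        acquire, process = self.handlers[request.resource]
        raw = acquire(request.params)  # operate the hardware or sensor 124
        return process(raw)            # raw data processor 126 -> processed data 122
```

In the test, a fake microphone returns four samples that are reduced to a peak level. A compute resource sums squares as a stand-in for an offloaded task.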

It will be appreciated by those skilled in the art that numerous examples may be provided wherein an interactive application 90 utilizes resources of a handheld device 28, as presently described. In one embodiment, the interactive application 90 utilizes the processing resources of the handheld device, such as its processor and memory, to offload processing of one or more tasks of the interactive application 90. In another embodiment, the interactive application 90 utilizes a camera of the handheld device 28 to capture video or still images. In one embodiment, the interactive application 90 utilizes a microphone of the handheld device 28 to capture audio from an interactive environment. In another embodiment, the interactive application 90 utilizes motion sensors of the handheld device 28 to receive motion-based input from a user. In other embodiments, the interactive application 90 may utilize any other resources included in the handheld device 28.

With reference to FIG. 6, a controller for interfacing with an interactive program is shown, in accordance with an embodiment of the invention. The controller 20 is of a type utilized to interface with a computer or primary processing interface, such as a personal computer, gaming console, or other type of computing device which executes or otherwise renders or presents an interactive application. The controller 20 may communicate with the computer via a wired or wireless connection. In other embodiments, the interactive application may be executed by a computing device which is accessible via a network, such as a LAN, WAN, the Internet, and other types of networks. In such embodiments, input detected by the controller is communicated over the network to the interactive application. The input from the controller may first be received by a local device, which may process the input and transmit data containing the input, or data based on the input, to the networked device executing the interactive application. A user provides input to the interactive application via the controller 20, utilizing hardware of the controller 20, such as a directional pad 130, joysticks 132, buttons 134, and triggers 136. The controller 20 also includes electrodes 138a and 138b for detecting bio-electric signals from the user. The bio-electric signals may be processed to determine biometric data that is used as an input for the interactive program.

With reference to FIG. 7, a front view of an exemplary portable handheld device 28 is shown, in accordance with an embodiment of the invention. The handheld device 28 includes a display 140 for displaying graphics. In embodiments of the invention, the display 140 is utilized to show interactive content in real time. In various embodiments of the invention, the display 140 may incorporate any of various display technologies, such as touch-sensitivity. The handheld device 28 includes speakers 142 for facilitating audio output. The audio output from the speakers 142 may include any sounds relating to the interactive content, such as sounds of a character, background sounds, soundtrack audio, sounds from a remote user, or any other type of sound.

The handheld device 28 includes buttons 144 and a directional pad 146, which function as input mechanisms for receiving input from a user of the portable device. In embodiments of the invention, it is contemplated that any of various other types of input mechanisms may be included in the handheld device 28. Other examples of input mechanisms may include a stylus, touchscreen, keyboard, keypad, touchpad, trackball, joystick, trigger, or any other type of input mechanism which may be useful for receiving user input.

A front-facing camera 148 is provided for capturing images and video of a user of the portable handheld device 28, or of other objects or scenery which are in front of the portable device 28. Though not shown, a rear-facing camera may also be included for capturing images or video of a scene behind the handheld device 28. Additionally, a microphone 150 is included for capturing audio from the surrounding area, such as sounds or speech made by a user of the portable device 28 or other sounds in an interactive area in which the portable device 28 is being used.

A left electrode 152a and a right electrode 152b are provided for detecting bio-electric signals from the left and right hands of a user holding the handheld device. The left and right electrodes 152a and 152b contact the left and right hands, respectively, of the user when the user holds the handheld device 28. In various other embodiments of the invention, electrodes included in a handheld device for detecting biometric data from a user may have any of various other configurations.

With reference to FIG. 8, a diagram illustrating components of a portable device 28 is shown, in accordance with an embodiment of the invention. The portable device 28 includes a processor 160 for executing program instructions. A memory 162 is provided for storage purposes, and may include both volatile and non-volatile memory. A display 164 is included which provides a visual interface that a user may view. A battery 166 is provided as a power source for the portable device 28. A motion detection module 168 may include any of various kinds of motion-sensitive hardware, such as a magnetometer 170, an accelerometer 172, and a gyroscope 174.

An accelerometer is a device for measuring acceleration and gravity-induced reaction forces. Single-axis and multiple-axis models are available to detect the magnitude and direction of acceleration in different directions. The accelerometer is used to sense inclination, vibration, and shock. In one embodiment, three accelerometers 172 are used to provide the direction of gravity, which gives an absolute reference for two angles (world-space pitch and world-space roll).
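The two angles that gravity provides can be computed directly from a static three-axis reading. The Python sketch below shows the standard tilt calculation. The axis and sign conventions are assumptions, since real devices differ: the z axis is taken as perpendicular to the screen, reading +g when the device lies flat. The result is only valid when the device is not otherwise accelerating. Yaw cannot be recovered this way, because rotating about the gravity vector does not change the reading.

```python
import math
from typing import Tuple


def pitch_roll_from_gravity(ax: float, ay: float, az: float) -> Tuple[float, float]:
    """Return (pitch, roll) in degrees from a static accelerometer reading.

    Assumes the device is at rest, so the reading is dominated by the reaction to
    gravity, and that z is perpendicular to the screen, reading +g when the device
    lies flat. Sign conventions vary between devices.
    """
    roll = math.atan2(ay, az)
    pitch = math.atan2(-ax, math.hypot(ay, az))
    return math.degrees(pitch), math.degrees(roll)
```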

A magnetometer measures the strength and direction of the magnetic field in the vicinity of the portable device. In one embodiment, three magnetometers 170 are used within the portable device, ensuring an absolute reference for the world-space yaw angle. In one embodiment, the magnetometer is designed with a range of ±80 microtesla, which spans the Earth's magnetic field. Magnetometers are affected by metal, and provide a yaw measurement that is monotonic with actual yaw. The magnetic field may be warped due to metal in the environment, which causes a warp in the yaw measurement. If necessary, this warp can be calibrated using information from other sensors such as the gyroscope or the camera. In one embodiment, the accelerometer 172 is used together with the magnetometer 170 to obtain the inclination and azimuth of the portable device 28.
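Using the accelerometer 172 together with the magnetometer 170 usually means tilt compensation. The pitch and roll derived from gravity are used to rotate the magnetometer vector back into the horizontal plane before the azimuth is taken. The Python sketch below uses a common form of that calculation. Its axis convention is an assumption: x forward, y right, z down, with +1 g on z when the device is flat. It does not correct the metal-induced warp discussed above, and it gives magnetic rather than true north.

```python
import math
from typing import Tuple


def tilt_compensated_heading(accel: Tuple[float, float, float],
                             mag: Tuple[float, float, float]) -> float:
    """Heading (yaw) in degrees clockwise from magnetic north, in [0, 360).

    Assumed convention: device axes x forward, y right, z down; the accelerometer
    reads +1 g on z when the device lies flat. Other conventions need sign changes.
    """
    ax, ay, az = accel
    mx, my, mz = mag
    roll = math.atan2(ay, az)
    pitch = math.atan2(-ax, math.hypot(ay, az))
    # De-rotate the magnetometer vector by roll and pitch into the horizontal plane.
    bx = (mx * math.cos(pitch)
          + my * math.sin(pitch) * math.sin(roll)
          + mz * math.sin(pitch) * math.cos(roll))
    by = mz * math.sin(roll) - my * math.cos(roll)
    return math.degrees(math.atan2(by, bx)) % 360.0
```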

A gyroscope is a device for measuring or maintaining orientation, based on the principles of angular momentum. In one embodiment, three gyroscopes 174 provide information about rotation about the respective axes (x, y, and z) based on inertial sensing. The gyroscopes help in detecting fast rotations. However, the gyroscopes can drift over time in the absence of an absolute reference. This requires resetting the gyroscopes periodically. The reset can use other available information, such as position/orientation determination based on visual tracking of an object, the accelerometer, the magnetometer, etc.
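Periodic resetting of the gyroscopes 174 is often implemented continuously as a complementary filter. The filter integrates the gyro rate for fast response and blends in a small share of an absolute reference, from the accelerometer, magnetometer, or visual tracking, to cancel drift. The specification does not name this technique. The single-axis Python sketch below and its `alpha` weight of 0.98 are illustrative assumptions.

```python
from typing import Iterable, Tuple


def complementary_filter(samples: Iterable[Tuple[float, float]], dt: float,
                         alpha: float = 0.98, angle0: float = 0.0) -> float:
    """Fuse gyro rate (deg/s) with an absolute angle reference (deg) on one axis.

    Each sample is (gyro_rate, reference_angle). The alpha-weighted gyro term
    tracks fast motion; the (1 - alpha) pull toward the reference bounds the drift
    that pure integration of a biased gyro would accumulate.
    """
    angle = angle0
    for rate, reference in samples:
        angle = alpha * (angle + rate * dt) + (1.0 - alpha) * reference
    return angle
```

Consider a stationary device with a constant gyro bias of 0.5°/s and dt = 0.01 s. Plain integration drifts 5° in 10 s, whereas the filtered estimate settles near 0.25°.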

A camera 176 is provided for capturing images and image streams of a real environment. More than one camera may be included in the portable device 28. These may include a rear-facing camera (directed away from the user when the user is viewing the display of the portable device) and a front-facing camera (directed towards the user when the user is viewing the display of the portable device). Additionally, a depth camera 178 may be included in the portable device for sensing depth information of objects in a real environment.

The portable device 28 includes speakers 180 for providing audio output. Also, a microphone 182 may be included for capturing audio from the real environment, including sounds from the ambient environment, speech made by the user, etc. The portable device 28 includes a tactile feedback module 184 for providing tactile feedback to the user. In one embodiment, the tactile feedback module 184 is capable of causing movement and/or vibration of the portable device 28 so as to provide tactile feedback to the user.

LEDs 186 are provided as visual indicators of statuses of the portable device 28. For example, an LED may indicate battery level, power on, etc. A card reader 188 is provided to enable the portable device 28 to read and write information to and from a memory card. A USB interface 190 is included as one example of an interface for enabling connection of peripheral devices, or connection to other devices, such as other portable devices, computers, etc. In various embodiments of the portable device 28, any of various kinds of interfaces may be included to enable greater connectivity of the portable device 28.

A WiFi module 192 is included for enabling connection to the Internet via wireless networking technologies. Also, the portable device 28 includes a Bluetooth module 194 for enabling wireless connection to other devices. A communications link 196 may also be included for connection to other devices. In one embodiment, the communications link 196 utilizes infrared transmission for wireless communication. In other embodiments, the communications link 196 may utilize any of various wireless or wired transmission protocols for communication with other devices.

Input buttons/sensors 198 are included to provide an input interface for the user. Any of various kinds of input interfaces may be included, such as buttons, touchpad, joystick, trackball, etc. An ultrasonic communication module 200 may be included in portable device 28 for facilitating communication with other devices via ultrasonic technologies.

Bio-sensors 202 are included to enable detection of physiological data from a user. In one embodiment, the bio-sensors 202 include one or more dry electrodes for detecting bio-electric signals of the user through the user's skin.

The foregoing components of portable device 28 have been described as merely exemplary components that may be included in portable device 28. In various embodiments of the invention, the portable device 28 may or may not include some of the various aforementioned components. Embodiments of the portable device 28 may additionally include other components not presently described, but known in the art, for purposes of facilitating aspects of the present invention as herein described.

It will be appreciated by those skilled in the art that in various embodiments of the invention, the aforementioned handheld device may be utilized in conjunction with an interactive application displayed on a display to provide various interactive functions. The following exemplary embodiments are provided by way of example only, and not by way of limitation.

With reference to FIG. 9, an interactive environment is shown, in accordance with an embodiment of the invention. A console or computer 70 executes an interactive application which generates a primary video stream that is rendered on a display 18. As shown, the rendered primary video stream depicts a scene 210, which may include a character 212, an object 214, or any other item depicted by the interactive application. A user 24 views the scene 210 on the display 18, and interacts with the interactive application by operating a controller 20. The controller 20 enables the user 24 to directly affect the state of the interactive application, which is then updated and reflected in the primary video stream that is rendered on the display 18.

Simultaneously, another user 30 views and operates a handheld device 28 in the interactive environment. The handheld device 28 receives an auxiliary or ancillary video stream from the computer 70 that is then rendered on the display 36 of the handheld device 28. As shown in the presently described embodiment by the magnified view 216 of the handheld device 28, the rendered ancillary video stream depicts a scene 218 that is substantially similar to or the same as that rendered by the primary video stream on the display 18. The user 30 views this scene 218 and is able to interact with the scene 218 by various input mechanisms of the handheld device, such as providing input through a touchscreen or activating other input mechanisms such as buttons, a joystick, or motion sensors.

In various embodiments of the invention, the particular interactive functionality enabled on the handheld device 28 may vary. For example, a user may record the ancillary video stream on the handheld device 28. The recorded ancillary video stream may be uploaded to a website for sharing with others. Alternatively, in one embodiment, a user can select an object, such as object 214, by tapping on the object 214 when it is displayed on the display 36. Selection of the object 214 may then enable the user 30 to perform some function related to the object 214, such as modifying the object, moving it, adding a tag containing descriptive or other kinds of information, etc.

In one embodiment, the scene 218 shown on the display 36 of the handheld device 28 will be the same as the scene 210 shown on the display 18 until the user 30 provides some type of input, such as by touching the touchscreen or pushing a button of the handheld device 28. At this point, the ancillary video stream rendered on the handheld device 28 no longer depicts the same scene as the primary video stream rendered on the display 18, but instead diverges from it, as the user 30 provides interactive input independently of the interactivity occurring between the user 24 and the scene 210.

For example, in one embodiment, touching or tapping the display 36 of the handheld device causes the scene 218 to freeze or pause, thus enabling the user 30 to perform interactive operations with the scene 218. Meanwhile, the scene 210 shown on the display 18 continues to progress as the user 24 operates the controller 20 or otherwise interacts with the scene 210. In another embodiment, the scene 218 does not freeze; rather, the perspective or point of view represented by the scene 218 may be altered based on input provided by the user 30. In one embodiment, the input provided by the user 30 includes gesture input which is detected as the user 30 moves a finger across the display 36. In still other embodiments, the divergence of the scene 218 shown on the display 36 of the handheld device 28 from the scene 210 shown on the display 18 may include any of various other types of changes. For example, the scene 218 might be altered in appearance, color, lighting, or other visual aspects from the scene 210. In one embodiment, the appearance of scene 218 differs from that of scene 210 in such a manner as to highlight certain features within the scene. For example, in the context of an interactive game such as a first-person shooter type game, the scene 218 might portray a view based on infrared lighting, UV lighting, night-vision, or some other type of altered visual mode. In other embodiments, the scene 218 might be slowed down or sped up relative to the scene 210. In still other embodiments, information might be visible in the scene 218 which is not visible in the scene 210. Such information may include textual information, markers or indicators, color schemes, highlighting, or other depictions which provide information to the user 30 viewing the scene 218.
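The mirror, freeze, and diverge behavior described above can be modeled as a small state holder on the ancillary side. The following Python sketch is illustrative only: the class and method names (`AncillaryStream`, `on_tap`, `on_swipe`, `resume`) and the use of a single yaw angle to stand in for the point of view are assumptions, not part of the described system.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Frame:
    tick: int        # position of the frame in the primary video stream
    yaw_deg: float   # hypothetical stand-in for the rendered point of view


class AncillaryStream:
    """Hypothetical model of the handheld's ancillary view of the scene."""

    def __init__(self) -> None:
        self.frozen_frame: Optional[Frame] = None
        self.yaw_offset = 0.0

    def on_tap(self, current: Frame) -> None:
        # Tapping the handheld display pauses the ancillary scene at this frame.
        self.frozen_frame = current

    def on_swipe(self, delta_deg: float) -> None:
        # A finger gesture alters the point of view instead of freezing.
        self.yaw_offset += delta_deg

    def resume(self) -> None:
        # Return to mirroring the primary stream.
        self.frozen_frame = None
        self.yaw_offset = 0.0

    def frame_for(self, primary: Frame) -> Frame:
        # Mirror the primary frame unless handheld input has caused divergence.
        if self.frozen_frame is not None:
            return self.frozen_frame
        return Frame(primary.tick, primary.yaw_deg + self.yaw_offset)
```

Because `frame_for` always receives the current primary frame, the ancillary view matches the primary stream by default and diverges only while handheld input is in effect.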

With reference to FIG. 10, an interactive environment is shown, in accordance with an embodiment of the invention. As shown, a computer 70 is configured to render a primary video stream of an interactive application to a display 18. The result of rendering the primary video stream is the depiction of a scene 220 on the display 18. A user 24 views the scene 220 and operates a controller 20 to provide input to the interactive application. In one embodiment, the controller communicates wirelessly with the computer 70. Simultaneously, a second user 30 views a related scene 222 on a display 36 of a handheld device 28. As shown in the illustrated embodiment, the scene 222 comprises a menu including selectable icons 224. In various embodiments of the invention, a menu as included in scene 222 may enable various functions related to the interactive application. In this manner, the user 30 is able to affect the interactive application through a menu interface, independently of the interactivity between the user 24 and the scene 220.

With reference to FIG. 11, an interactive environment is shown, in accordance with an embodiment of the invention. A computer 70 renders a primary video stream of an interactive application on a display 18, so as to depict a scene 230. Simultaneously, an ancillary video stream is rendered on a display 36 of a handheld device 28, which depicts a scene 232 that is substantially similar to or the same as scene 230 shown on the display 18. A user operating the handheld device 28 is able to select an area 234 of the scene 232, and zoom in on the area 234, as shown by the updated scene 236.

Selection of the area 234 may occur by various mechanisms, such as by touch or gesture input detected on the display 36 through touchscreen technology. In one embodiment, a user can draw or otherwise designate a box to determine the area 234 that is to be magnified. In this manner, a user is able to zoom in on an area of interest within a scene 230 shown on a separate display 18. In some embodiments, operation of such a selection feature causes the scene 232 shown on the handheld device 28 to freeze or pause, whereas in other embodiments, the scene 232 does not freeze. In some embodiments, operation of such a selection feature causes both the scene 230 shown on the display 18 and the scene 232 shown on the handheld device 28 to pause, whereas in other embodiments, only the scene 232 shown on the handheld device freezes. In one embodiment, the primary and ancillary video streams are synchronized so that when a user selects or zooms in on an area in the scene 232 shown on the handheld device 28, a substantially similar or same effect occurs in the scene 230 shown on the display 18.
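As one illustration of the box-selection zoom, the sketch below converts two touch points into a source region of the scene whose aspect ratio matches the display, together with the resulting magnification. It assumes touch coordinates have already been mapped into the scene's pixel space; the function name `zoom_viewport` and the aspect-ratio-preserving policy are assumptions for illustration.

```python
def zoom_viewport(p0, p1, scene_w, scene_h):
    """Return (x, y, w, h, zoom) for the region selected by a drawn box.

    p0 and p1 are opposite corners of the box in scene pixel coordinates.
    The region is grown to the scene's aspect ratio so the zoomed view is
    not distorted, then clamped to stay inside the scene.
    """
    x0, x1 = sorted((p0[0], p1[0]))
    y0, y1 = sorted((p0[1], p1[1]))
    w, h = x1 - x0, y1 - y0
    if w <= 0 or h <= 0:
        raise ValueError("selection box must have a nonzero area")

    aspect = scene_w / scene_h
    # Grow the shorter side so the region matches the display aspect ratio.
    if w / h < aspect:
        w = h * aspect
    else:
        h = w / aspect

    # A region larger than the scene is shrunk back to the full scene.
    shrink = max(1.0, w / scene_w)
    w, h = w / shrink, h / shrink

    # Center on the drawn box, then clamp so the region stays inside the scene.
    cx, cy = (x0 + x1) / 2, (y0 + y1) / 2
    x = min(max(cx - w / 2, 0.0), scene_w - w)
    y = min(max(cy - h / 2, 0.0), scene_h - h)
    return x, y, w, h, scene_w / w
```

For example, a 200 by 100 pixel box drawn on a 1600 by 900 scene yields a 200 by 112.5 pixel region and an 8x magnification.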

While the illustrated embodiment has been described with reference to zoom or magnification functionality, it will be appreciated that in other embodiments of the invention, a selection feature as presently described may enable other types of functions. For example, after selection of an area or region of a scene, it may be possible to perform various functions on the selected area, such as adjusting visual properties, setting a virtual tag, adding items, editing the selected area, etc. In other embodiments of the invention, any of various other types of functions may be performed after selection of an area of a scene.

With reference to FIG. 12, a conceptual diagram illustrating scenes within an interactive game is shown, in accordance with an embodiment of the invention. As shown, the interactive game includes a series of scenes 240, 242, and 244, through scene 246. As a player progresses through the interactive game, the player interacts with the scenes 240, 242, and 244 through scene 246 in a sequential fashion. As shown, the player first interacts with scene 240, followed by scenes 242, 244, and eventually scene 246. The player interacts with the scenes by viewing them on a display that is connected to a computer or console system, the computer being configured to render the scenes on the display. In the illustrated embodiment, the scene 240 is the currently active scene, which the player is playing.

Simultaneously, a second player is able to select one of the scenes for display on a portable device. The scene selected by the second player may be a different scene from the active scene 240 which the first player is currently playing. As shown by way of example, the second player has selected the scene 242 for display and interaction on the portable device. The scene 248 shown on the portable device corresponds to scene 242. The second player is able to interact with the scene 248 in various ways, such as navigating temporally or spatially within the scene, or performing modifications to the scene. By way of example, in one embodiment, the second player using the portable device is able to select from options presented on a menu 252 as shown at scene 250. Options may include various choices for altering or modifying the scene, such as adding an object, moving an object, adding a virtual tag, etc. As shown at scene 254, the second player has altered the scene by adding an object 256.

Changes to a scene made by the second player can be seen by the first player, as these changes will be shown in the scene rendered on the display when the first player reaches the same spatial or temporal location in the scene. Thus in the illustrated embodiment, when the first player reaches the scene 242, the first player will see the object 256 which has been placed by the second player. According to the presently described embodiment, it is possible for a second player to look ahead within the gameplay sequence of an interactive game, so as to alter scenes which the first player will encounter when the first player reaches the same spatial or temporal location. In various embodiments, the interactive game may be designed so as to establish cooperative gameplay, wherein the second player, by looking ahead within the gameplay sequence, aids the first player in playing the game. For example, the second player may tag particular items or locations with descriptive information or hints that will be useful to the first player. Or the second player might alter a scene by adding objects, or performing other modifications to a scene that would be helpful to the first player when the first player reaches the same location in the scene. In other embodiments of the invention, the interactive game may be designed so that the first and second players compete against each other. For example, the second player might look ahead within the gameplay sequence and set obstacles for the first player to encounter when the first player reaches a certain location.
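One way to realize this look-ahead behavior is to hold modifications keyed by scene and release them when the first player enters that scene. The sketch below assumes a linear scene order as in FIG. 12 and uses hypothetical names (`LookAheadStore`, `Modification`, the `kind` labels); the scene identifiers reuse the reference numerals only as labels.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Modification:
    kind: str     # hypothetical labels, e.g. "tag", "add_object", "obstacle"
    payload: str


class LookAheadStore:
    """Holds changes made by the second player until the first player arrives."""

    def __init__(self, scene_order):
        self.scene_order = list(scene_order)
        self.pending = defaultdict(list)

    def can_modify(self, active_scene, target_scene):
        # The second player may only alter scenes the first player has yet to reach.
        order = self.scene_order
        return order.index(target_scene) > order.index(active_scene)

    def add(self, active_scene, target_scene, mod):
        if not self.can_modify(active_scene, target_scene):
            raise ValueError(f"scene {target_scene} is not ahead of {active_scene}")
        self.pending[target_scene].append(mod)

    def enter_scene(self, scene):
        # Called when the first player reaches a scene; returns the changes to render.
        return self.pending.pop(scene, [])
```

The same store serves cooperative and competitive designs; only the kinds of modifications the game permits (hints versus obstacles) differ.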

In various embodiments, the principles of the present invention may be applied to various styles of gameplay. For example, with reference to FIGS. 13A, 13B, and 13C, various styles of gameplay are shown. FIG. 13A illustrates a linear style of gameplay, in accordance with an embodiment of the invention. As shown, the gameplay comprises a plurality of scenes or nodes 260, 262, 264 and 266. The scenes may be spatial or temporal locations of an interactive game, and are encountered in a predetermined order by the user as the user progresses through the interactive game. As shown, after completion of scene 260, the user encounters scene 262, followed by scenes 264 and 266 in order. According to principles of the invention herein described, a second user utilizing a handheld device may skip ahead to one of the scenes which the first user has yet to encounter, and perform modifications of the scene or set virtual tags associated with the scene. By way of example, in the illustrated embodiment, virtual tags 270 are associated with the scene 262.

FIG. 13B illustrates a non-linear style of gameplay, in accordance with an embodiment of the invention. Non-linear styles of gameplay may include several variations. For example, there may be branching storylines wherein the particular scene a user encounters depends on the user's actions. As shown by way of example, the scene 272 is followed by alternative scenes 274. Branching storylines may converge on a same scene. For example, the alternative scenes 274 ultimately converge on scene 276. In other embodiments, branching storylines may not converge. For example, the scenes 282 and 284 which branch from scene 280 do not converge. In other embodiments, gameplay may have different endings depending upon the user's actions. For example, gameplay may end at a scene 278 based on the user's actions, whereas if the user had taken a different set of actions, then gameplay would have continued to scene 280 and beyond. According to principles of the invention herein described, a second user utilizing a handheld device may skip ahead to one of the scenes which the first user has yet to encounter, and perform modifications of the scene or set virtual tags associated with the scene. By way of example, in the illustrated embodiment, virtual tags 286 are associated with the scene 278.

FIG. 13C illustrates an open-world style of gameplay, in accordance with an embodiment of the invention. As shown, a plurality of scenes 288 are accessible to the user, and each may be visited in an order of the user's choosing. In the illustrated embodiment, the scenes are linked to one another such that not every scene is accessible from every other scene. However, in another embodiment, every scene may be accessible from every other scene. According to principles of the invention herein described, a second user utilizing a handheld device may jump to any one of the scenes, and perform modifications of the scene or set virtual tags associated with the scene. By way of example, in the illustrated embodiment, virtual tags 286 are associated with the scene 278.

The foregoing styles of gameplay have been described by way of example only, as in other embodiments there may be other styles of gameplay. The principles of the present invention may be applied to these other styles of gameplay, such that a user is able to perform modifications or set virtual tags associated with a scene of an interactive game.
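The three styles differ mainly in the shape of the scene graph, so a single reachability query can decide which scenes a second user may still skip ahead to from the first user's current scene. The sketch below is a breadth-first search over an adjacency mapping; the graph is a hypothetical encoding loosely modeled on FIG. 13B, and the visited set also makes the search safe for the cyclic links of an open-world layout such as FIG. 13C.

```python
from collections import deque


def scenes_ahead(graph, current):
    """Return every scene reachable from `current`, excluding `current` itself."""
    seen = {current}
    queue = deque([current])
    while queue:
        scene = queue.popleft()
        for nxt in graph.get(scene, ()):
            if nxt not in seen:  # the visited set tolerates cycles
                seen.add(nxt)
                queue.append(nxt)
    seen.discard(current)
    return seen


# Hypothetical branching layout: 272 branches into two alternatives that
# converge on 276; 276 leads either to an ending (278) or onward to 280,
# which branches into 282 and 284 without converging.
branching = {
    "272": ["274a", "274b"],
    "274a": ["276"],
    "274b": ["276"],
    "276": ["278", "280"],
    "280": ["282", "284"],
}
```

In a linear layout the result is simply every later scene in the sequence; in a fully connected open world it is every other scene.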

With reference to FIG. 14, an interactive environment is shown, in accordance with an embodiment of the invention. As shown, a computer 70 renders a primary video stream of an interactive application on a display 18. The rendered primary video stream depicts a scene 300. Simultaneously, a user holding a handheld device 28 orients the handheld device 28 towards the display 18. In one embodiment, orienting the handheld device 28 towards the display may comprise aiming the rear-facing side of the handheld device 28 at a portion of the display 18. The orientation of the handheld device 28 in relation to the display 18 may be detected according to various technologies. For example, in one embodiment, a rear-facing camera of the handheld device (not shown) captures images of the display which are processed to determine the orientation of the handheld device 28 relative to the display 18. In other embodiments, the orientation of the handheld device 28 may be detected based on motion sensor data captured at the handheld device. In still other embodiments, any of various other technologies may be utilized to determine the orientation of the handheld device 28 relative to the display 18.

In the illustrated embodiment, the handheld device 28 acts as a magnifier, providing the user with a magnified view of an area 302 of the scene 300 towards which the handheld device is aimed. In this manner, the user is able to view the magnified area 302 as a scene 304 on the handheld device 28. This may be accomplished through generation of an ancillary video feed that is transmitted from the computer 70 to the handheld device 28, and rendered on the handheld device 28 in real time so as to be synchronized with the primary video stream which is being rendered on the display 18. In a similar embodiment, wherein the interactive application is a first-person shooter style game, the handheld device 28 could function as a sighting scope for targeting, as might occur when the user is using a sniper rifle or a long-range artillery weapon. In such an embodiment, the user could view the game on the display 18, but hold up the handheld device 28 and aim it at a particular area of the display 18 so as to see a magnified view of that area for targeting purposes.
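The magnifier can be sketched in two steps: intersect the handheld device's rear-facing direction with the plane of the display to find the aim point, then take a region around that point that is smaller than the display by the zoom factor. The sketch assumes the device pose has already been estimated (for example by the camera-based or motion-sensor approaches above) in a frame where the display lies in the plane z = 0, spans x from 0 to its width and y from 0 to its height, and the user stands at positive z. The function names and the fixed zoom factor are hypothetical.

```python
def aim_point_on_display(position, forward):
    """Intersect the device's rear-facing ray with the display plane z = 0.

    Returns (x, y) on the display plane, or None if the device points away.
    """
    px, py, pz = position
    dx, dy, dz = forward
    if dz >= 0:
        return None  # parallel to, or pointing away from, the display plane
    t = -pz / dz
    return (px + t * dx, py + t * dy)


def magnified_region(aim, display_w, display_h, zoom):
    """Return (x, y, w, h) of the display area shown magnified on the handheld."""
    w, h = display_w / zoom, display_h / zoom
    # Center the region on the aim point, clamped to stay on the display.
    x = min(max(aim[0] - w / 2, 0.0), display_w - w)
    y = min(max(aim[1] - h / 2, 0.0), display_h - h)
    return (x, y, w, h)
```

Re-running both steps every frame, as the device pose updates, is what keeps the magnified ancillary view synchronized with where the user is aiming.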

With reference to FIG. 15, an interactive environment is shown, in accordance with an embodiment of the invention. As shown, a computer 70 renders a primary video stream of an interactive application on a display 18. The rendered primary video stream depicts a scene 310 on the display 18. A user 24 views the scene 310 and operates a controller 20 so as to provide input to the interactive application. In the illustrated embodiment, the user 24 provides input so as to steer a vehicle, as illustrated on the left side of the scene 310. However, in other embodiments, the user 24 may provide input for any type of action related to the interactive application. Simultaneously, a user 30 operates a handheld device 28 while also viewing the scene 310. In the illustrated embodiment, the user 30 provides input so as to control the targeting and firing of a weapon, as shown on the right side of the scene 310. However, in other embodiments, the user 30 may provide input for any type of action related to the interactive application.

In one embodiment, the user 30 turns the handheld device 28 away from the display 18, to a position 312, so as to orient the rear side of the handheld device away from the display 18. This causes activation of a viewing mode wherein the handheld device acts as a viewer of a virtual environment in which the scene 310 takes place. As shown at view 316, by turning the handheld device 28 away from the display 18, the handheld device 28 now displays a scene 314 which depicts a view of the virtual environment as it would be seen when the weapon controlled by the user 30 is turned in the same manner as the handheld device 28. In other embodiments of the invention, activation of the viewing mode of the handheld device 28 may be selectable by the user 30, or may be configured to occur automatically based on the location and orientation of the handheld device 28.
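A simple way to trigger the viewing mode automatically is to compare the device's facing direction with the direction from the device to the display, and to turn the weapon by the same signed angle the device has turned. The sketch below works on the floor plane only; the 60 degree threshold, the vector convention, and the function names are assumptions for illustration, not values from the description.

```python
import math


def signed_turn_deg(to_display, facing):
    """Signed angle in degrees from the direction toward the display to the device facing.

    Both arguments are 2D vectors on the floor plane; positive values are
    counterclockwise turns.
    """
    cross = to_display[0] * facing[1] - to_display[1] * facing[0]
    dot = to_display[0] * facing[0] + to_display[1] * facing[1]
    return math.degrees(math.atan2(cross, dot))


def update_handheld(to_display, facing, weapon_base_yaw_deg, threshold_deg=60.0):
    """Return (viewing_mode_active, weapon_yaw_deg)."""
    turn = signed_turn_deg(to_display, facing)
    # Viewing mode activates once the rear of the device is turned well away from the display.
    viewing_mode = abs(turn) > threshold_deg
    # The weapon is turned in the same manner as the handheld device.
    return viewing_mode, weapon_base_yaw_deg + turn
```

Using `atan2` rather than a plain dot product keeps the direction of the turn, so the weapon swings left or right to match the device.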

With reference to FIG. 16, an interactive environment is shown, in accordance with an embodiment of the invention. As shown, a computer 70 renders a primary video stream of an interactive application on a display 18. The rendered primary video stream depicts a scene 320 on the display 18. A user 24 views the scene 320 and provides interactive input by operating a motion controller 322. In one embodiment, the position of the motion controller is determined based on captured images of the motion controller 322 captured by a camera 324. In one embodiment, the camera 324 includes a depth camera that is capable of capturing depth information. As shown, a second user 30 holds a handheld device 28 oriented towards the first user 24. In one embodiment, the handheld device 28 includes a rearward-facing camera which the user 30 orients so as to enable capture of images of the first user 24 by the camera of the handheld device 28. In one embodiment, the camera of the handheld device 28 may be capable of capturing depth information. By capturing images of the user 24 and the motion controller 322 from two different viewpoints which are based on the location of the camera 324 and the location of the handheld device 28, it is possible to determine a more accurate three-dimensional representation of the first user 24 as well as the position and orientation of the motion controller 322.
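The benefit of the second viewpoint can be illustrated with ray triangulation: each camera contributes a ray toward the tracked motion controller, and the point closest to both rays estimates its 3D position. The description does not specify a method; the sketch below swaps in the standard closest-points-between-two-lines (midpoint) construction and assumes both camera positions and ray directions are already expressed in one shared coordinate frame.

```python
def triangulate_midpoint(p1, d1, p2, d2, eps=1e-12):
    """Estimate a 3D point from two rays p1 + s*d1 and p2 + t*d2.

    Returns the midpoint of the shortest segment joining the two rays.
    """
    def dot(u, v):
        return sum(x * y for x, y in zip(u, v))

    w0 = [x - y for x, y in zip(p1, p2)]
    a, b, c = dot(d1, d1), dot(d1, d2), dot(d2, d2)
    d, e = dot(d1, w0), dot(d2, w0)
    denom = a * c - b * b
    if abs(denom) < eps:
        raise ValueError("rays are parallel; the two viewpoints give no depth")
    # Parameters of the closest points on each ray.
    s = (b * e - c * d) / denom
    t = (a * e - b * d) / denom
    q1 = [x + s * y for x, y in zip(p1, d1)]
    q2 = [x + t * y for x, y in zip(p2, d2)]
    return tuple((u + v) / 2 for u, v in zip(q1, q2))
```

With noisy measurements the two rays rarely intersect exactly, which is why the midpoint of their closest approach is used rather than a true intersection.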

In another embodiment, the user 24 does not require a motion controller, but is able to provide interactive input to the interactive application through motion which is detected by the camera 324. For example, the camera 324 may capture images and depth information of the user 24 which are processed to determine the position, orientation, and movements of the user 24. These are then utilized as input for the interactive application. Further, the handheld device 28 may be operated by the second user 30 as described above to enhance the detection of the first user 24.

With reference to FIG. 17, a coordinate system of a virtual space is shown, in accordance with an embodiment of the invention. As shown, the virtual space includes objects 330 and 332. As previously described, it is possible for a user using a handheld device to set virtual tags within a virtual space. In the illustrated embodiment, a virtual tag 334 has been set at coordinates (4, 2, 0), a virtual tag 336 has been set on the object 330 at coordinates (6, 6, 3), and a virtual tag 338 has been set on the object 332 at coordinates (10, 7, 1). The virtual tags may be viewable by another user when that user navigates to a location proximate to the virtual tags. As previously described, the virtual tags may highlight a location or an object to the other user, may contain information related to the virtual space as determined by the user, such as hints or messages, or may define modifications that are rendered or actions which are executed when the other user encounters them.
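Deciding when a tag becomes viewable reduces to a proximity query in the virtual space's coordinate system. The sketch below reuses the tag coordinates from FIG. 17; the visibility radius of 3 units and the use of straight-line (Euclidean) distance are assumptions.

```python
import math

# Tag positions from FIG. 17 (reference numeral -> coordinates).
TAGS = {334: (4, 2, 0), 336: (6, 6, 3), 338: (10, 7, 1)}


def visible_tags(user_pos, tags=TAGS, radius=3.0):
    """Return the tags within `radius` of the user's position, nearest first."""
    nearby = [(math.dist(user_pos, pos), tag) for tag, pos in tags.items()]
    return [tag for dist, tag in sorted(nearby) if dist <= radius]
```

For a user at (5, 3, 1), only tag 334 (about 1.73 units away) falls inside the radius; tag 336 is about 3.74 units away and tag 338 about 6.40.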

With reference to FIG. 18, a sequence of actions illustrating a user interface for setting a virtual tag is shown, in accordance with an embodiment of the invention. As shown, a user of the handheld device 28 can select an object 340 (for example, by tapping on the object), so as to bring up a menu 342 containing various options. An option for setting a tag may be selected from the menu 342, thereby bringing up a keyboard interface 344 to enable entry of text information. The entered text information is shown in the virtual tag 346. The virtual tag 346 may be rendered to other users when they navigate to a position proximate to the location of the virtual tag 346.

With reference to FIG. 19, an interactive environment is shown, in accordance with an embodiment of the invention. As shown, a computer 70 renders a primary video stream of an interactive application on a display 18. The rendered primary video stream depicts a scene 350 on the display 18. A user 24 views the scene 350 and operates a controller 20 to provide input to the interactive application. In the illustrated embodiment, the user 24 controls a character in a first-person shooter type game. Simultaneously, a second user operates a handheld device 28, which displays a map 352 of the virtual environment in which the first user's character is located. The location of the first user's character is shown by the marker 354. In one embodiment, the second user is able to navigate the map 352 so as to view regions which the user 24 is unable to view while engaged in controlling the character in the scene 350. As such, in one embodiment, the second user is able to cooperatively aid the user 24 by viewing the map 352 and providing information to the user 24, such as locations of interest, objects, enemies, or any other information which may be displayed on the map 352.

With reference to FIG. 20, an interactive environment is shown, in accordance with an embodiment of the invention. In the illustrated embodiment, a user 24 operating a controller 20 and a second user (not shown, except for the second user's hands) operating a handheld device 28 are playing a football video game. The second user views a scene 360 in which the second user is able to diagram plays by, for example, drawing them on a touchscreen of the handheld device 28. As shown, the second user has drawn a specified route for a player indicated by marker 362. The user 24 controls a player 366 that corresponds to the marker 362, shown in a scene 364 that is displayed on the display 18. The user 24 is able to view the route which the second user has diagrammed, and operates the controller 20 to control the movement of the player 366 so as to run the route.

In a related embodiment, shown at FIG. 21, the second user operates the handheld device 28 so as to diagram a play, such as by drawing the play on a touchscreen of the handheld device 28. Meanwhile, on the display 18, the teams are shown huddled between plays. The diagrammed play will be utilized to determine the movements of the players on one of the teams in the game. In this manner, the second user is able to directly control the movements of players in an intuitive manner.
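A diagrammed route, such as the one drawn for marker 362, can be treated as a polyline of waypoints, with a player advanced along it by distance traveled. This is one possible interpretation, sketched below; the waypoint representation and the function name are assumptions, and the description leaves open how the drawn play is converted into player movement.

```python
import math


def point_along_route(route, distance):
    """Return the (x, y) point `distance` units along a polyline of waypoints.

    Distances past the end of the route stop at the final waypoint.
    """
    if not route:
        raise ValueError("route needs at least one waypoint")
    remaining = max(0.0, distance)
    for start, end in zip(route, route[1:]):
        seg = math.dist(start, end)
        if seg > 0 and remaining <= seg:
            # Interpolate within the segment that contains the target distance.
            f = remaining / seg
            return (start[0] + f * (end[0] - start[0]),
                    start[1] + f * (end[1] - start[1]))
        remaining -= seg
    return tuple(route[-1])
```

Advancing `distance` by the player's speed each frame moves the player along the drawn route; zero-length segments from duplicate touch samples are skipped.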

The aforementioned concept of utilizing the handheld device to diagram the movements of characters may be extended to other scenarios. For example, in a battlefield scenario, it may be possible to utilize the handheld device to diagram where certain characters will move, and what actions they will take, such as moving towards an enemy and attacking the enemy. In other embodiments, the handheld device may be utilized to diagram the movements or activities of characters or objects in a virtual environment.

In still other embodiments of the invention, the utilization of a portable handheld device for interfacing with an application displayed on a main display may be extended to various other interface concepts, as well as other types of programs and applications. For example, in some embodiments, the touchscreen of a handheld device could be utilized as a control or input surface to provide input to an application. In one embodiment, a user provides input by drawing on the touchscreen of the handheld device. In one embodiment, the touchscreen surface may function as a cursor control surface, wherein movements of a cursor on a main display are controlled according to movements of a user's finger on the touchscreen of the handheld device. The movement of the cursor on the main display may track the detected movement of the user's finger on the touchscreen. In another embodiment, a virtual keyboard is displayed on the touchscreen of the handheld device, and the user can enter text input by touching the displayed keys of the virtual keyboard on the touchscreen of the handheld device. These types of input mechanisms which are facilitated by leveraging the functionality of the handheld device may be applied to various kinds of applications, such as a web browser, word processor, spreadsheet application, presentation software, video game, etc.
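The cursor-control embodiment can be sketched as a relative mapping: each finger displacement on the touchscreen moves the main-display cursor by the same displacement scaled by a gain, clamped to the display bounds. The class name, the gain value, and the choice of relative (rather than absolute) mapping are assumptions for illustration.

```python
class TouchCursor:
    """Maps finger movement on a handheld touchscreen to a main-display cursor."""

    def __init__(self, display_w, display_h, gain=2.0):
        self.w, self.h = display_w, display_h
        self.gain = gain  # hypothetical scale from touchscreen pixels to display pixels
        # Start the cursor at the center of the main display.
        self.x, self.y = display_w / 2, display_h / 2

    def on_finger_move(self, dx, dy):
        """Apply a finger displacement (touchscreen pixels) and return the cursor."""
        self.x = min(max(self.x + dx * self.gain, 0), self.w - 1)
        self.y = min(max(self.y + dy * self.gain, 0), self.h - 1)
        return self.x, self.y
```

A relative mapping lets a small touchscreen drive a much larger display, much like a laptop touchpad, whereas an absolute mapping would tie each touch point to one fixed display location.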

In other embodiments, the handheld device may be utilized to provide input for visual editing applications, such as photo or video editing applications. For example, a user could edit a photo by drawing on the touchscreen of the handheld device. In this manner, input for editing a photo or video can be provided in an intuitive manner. In one embodiment, the photo to be edited, or a portion of the photo, is shown on the touchscreen of the handheld device, so as to facilitate accurate input for editing of the photo by drawing on the touchscreen.

In other embodiments of the invention, the various resources of the handheld device may be utilized to support the functionality of programs and applications. For example, in one embodiment, wherein the application is a video game, the handheld device may be utilized to save game data related to the video game, such as a particular user's profile or progress data. Game data is typically stored on a console gaming system, and is therefore tied to that particular console system. However, by storing game data on a handheld device, a user can easily transfer their game data, and thus use a different console system to play the same game without forgoing any of their saved game data.

For ease of description, some of the aforementioned embodiments of the invention have been generally described with reference to one player operating a controller (or providing input through a motion detection system) and/or one user operating a handheld device. It will be understood by those skilled in the art that in other embodiments of the invention, there may be multiple users operating controllers (or providing input through motion detection) and/or multiple users operating handheld devices.

With reference to FIG. 22, a method for utilizing a portable device to provide interactivity with an interactive application is shown, in accordance with an embodiment of the invention. At method operation 380, an interactive application is initiated on a console device or computer. At method operation 390, a subsidiary application is initiated on a portable device. At method operation 382, a request is generated by the interactive application, and request data 388 is sent from the interactive application to the subsidiary application running on the portable device. At method operation 392, the request data 388 is received and processed by the subsidiary application on the portable device. At method operation 384, the computer awaits processed data after sending the request. At method operation 394, a hardware component or sensor of the portable device is activated. At method operation 396, raw data from the activated hardware is captured. At method operation 398, the captured raw data is processed. At method operation 400, the processed raw data is sent as processed data 402 to the interactive application. At method operation 386, the processed data 402 is received by the interactive application.
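The exchange in FIG. 22 follows a request/response pattern. The sketch below simulates it in one process, with a thread standing in for the portable device and two queues standing in for the link between the devices; the message fields, the averaging step, and the fake sensor readings are all assumptions.

```python
import queue
import statistics
import threading


def subsidiary_app(requests, responses, read_sensor):
    """Runs on the portable device (operations 390 through 400)."""
    request = requests.get()  # operation 392: receive request data 388
    # Operations 394 and 396: activate the hardware and capture raw data.
    # (This stub ignores request["sensor"]; a real device would select hardware by it.)
    raw = [read_sensor() for _ in range(request["samples"])]
    # Operation 398: process the raw data (here, a simple average).
    processed = {"id": request["id"], "value": statistics.fmean(raw)}
    responses.put(processed)  # operation 400: send processed data 402


def interactive_app(requests, responses, timeout=2.0):
    """Runs on the console or computer (operations 382 through 386)."""
    requests.put({"id": 1, "sensor": "accelerometer", "samples": 4})  # operation 382
    # Operations 384 and 386: await and then receive the processed data.
    return responses.get(timeout=timeout)


def run_demo():
    requests, responses = queue.Queue(), queue.Queue()
    readings = iter([1.0, 2.0, 3.0, 4.0])  # stand-in for real sensor samples
    device = threading.Thread(
        target=subsidiary_app, args=(requests, responses, lambda: next(readings))
    )
    device.start()  # operation 390: the subsidiary application is running
    result = interactive_app(requests, responses)
    device.join()
    return result
```

Processing on the portable device before replying means only the compact processed data 402, not the raw samples, crosses the link.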

Embodiments of the disclosure describe methods and apparatus for a system that enables an interactive application to utilize the resources of a handheld device. In one embodiment of the invention, a primary processing interface is provided for rendering a primary video stream of the interactive application to a display. A first user views the rendered primary video stream on the display and interacts by operating a controller device which communicates with the primary processing interface. Simultaneously, a second user operates a handheld device in the same interactive environment. The handheld device renders an ancillary video stream of the interactive application on a display of the handheld device, separate from the display showing the primary video stream. Accordingly, methods and apparatus in accordance with embodiments of the invention will now be described.

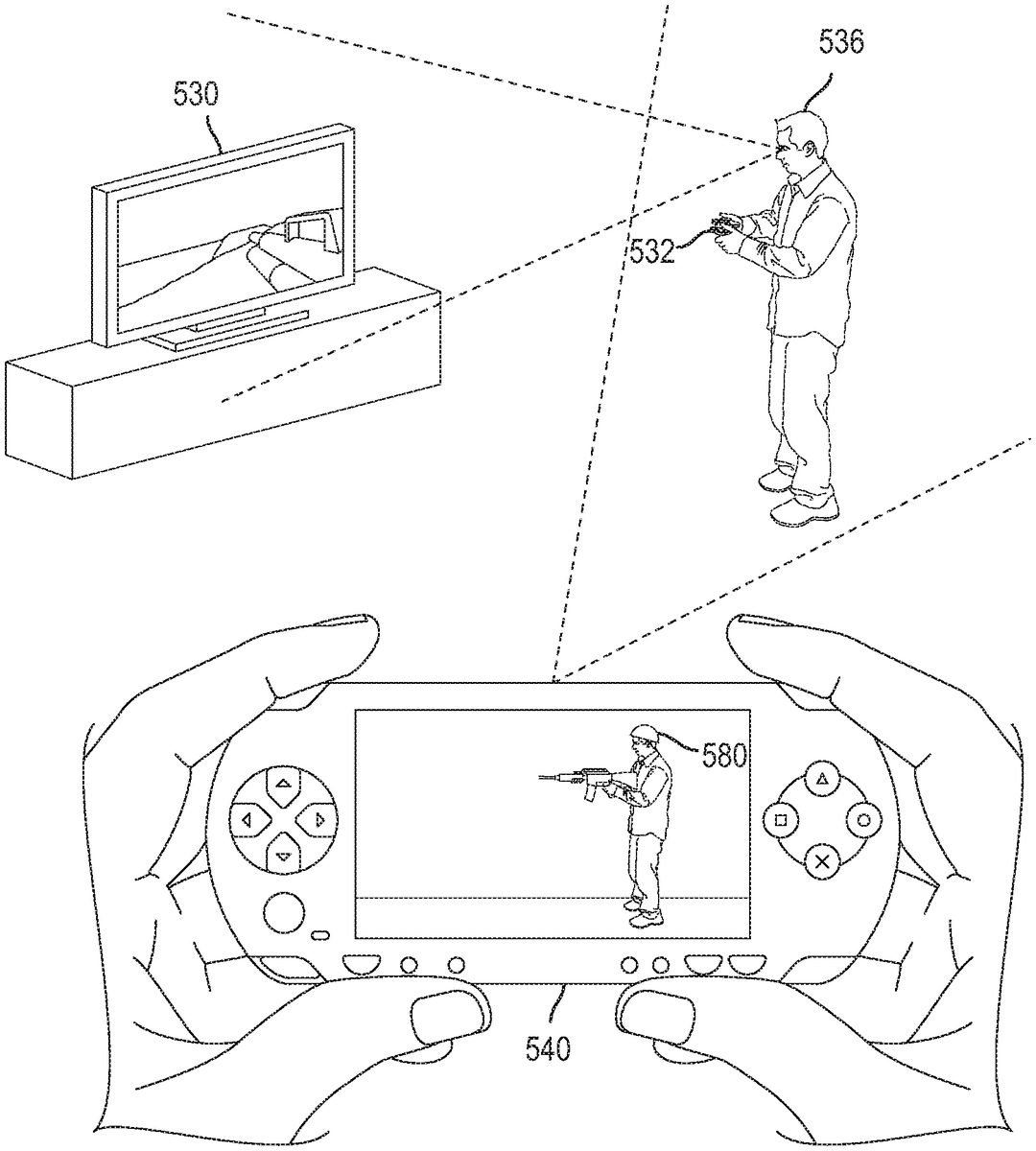

With reference to FIG. 23, a system for enabling a handheld device to capture video of an interactive session of an interactive application is shown, in accordance with an embodiment of the invention. The system exists in an interactive environment in which a user interacts with the interactive application. The system includes a console device 510, which executes an interactive application 512. The console device 510 may be a computer, console gaming system, set-top box, media player, or any other type of computing device that is capable of executing an interactive application. Examples of console gaming systems include the Sony Playstation 3® and the like. The interactive application 512 may be any of various kinds of applications which facilitate interactivity between one or more users and the application itself. Examples of interactive applications include video games, simulators, virtual reality programs, utilities, or any other type of program or application which facilitates interactivity between a user and the application.

The interactive application 512 produces video data based on its current state. This video data is processed by an audio/video processor 526 to generate an application video stream 528. The application video stream 528 is communicated to a main display 530 and rendered on the main display 530. The term “video,” as used herein, shall generally refer to a combination of image data and audio data which are synchronized, though in various embodiments of the invention, the term “video” may refer to image data alone. Thus, by way of example, the application video stream 528 may include both image data and audio data, or image data alone. The main display may be a television, monitor, LCD display, projector, or any other kind of display device capable of visually rendering a video data stream.

The interactive application 512 also includes a controller input module 524 for communicating with a controller device 532. The controller device 532 may be any of various controllers which may be operated by a user to provide interactive input to an interactive application. Examples of controller devices include the Sony Dualshock 3® wireless controller. The controller device 532 may communicate with the console device 510 via a wireless or wired connection. The controller device 532 includes at least one input mechanism 534 for receiving interactive input from a user 536. Examples of input mechanisms may include a button, joystick, touchpad, touchscreen, directional pad, stylus input, or any other type of input mechanism which may be included in a controller device for receiving interactive input from a user. As the user 536 operates the input mechanism 534, the controller device 532 generates interactive input data 538, which is communicated to the controller input module 524 of the interactive application 512.

The interactive application 512 includes a session module 514, which initiates a session of the interactive application, the session defining interactivity between the user 536 and the interactive application 512. For example, the initiated session of the interactive application may be a new session or a continuation of a previously saved session of the interactive application 512. As the interactive application 512 is executed and rendered on the main display 530 via the application video stream 528, the user 536 views the rendered application video stream on the main display 530 and provides interactive input data 538 to the interactive application by operating the controller device 532. The interactive input data 538 is processed by the interactive application 512 to affect the state of the interactive application 512. The state of the interactive application 512 is updated, and this updated state is reflected in the application video stream 528 rendered to the main display 530. Thus, the current state of the interactive application 512 is determined based on the interactivity between the user 536 and the interactive application 512 in the interactive environment.
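The input-state-render cycle described above can be pictured as a simple loop. The sketch below is hypothetical: `AppState`, `Session`, the single `character_x` field, and the text "frames" are illustrative stand-ins for the session module 514, the application state, and the application video stream 528.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class AppState:
    """Hypothetical application state: one character's horizontal position."""
    character_x: int = 0


@dataclass
class Session:
    """Illustrative stand-in for a session started by session module 514.

    Passing an existing AppState models continuing a previously saved session;
    the default models a new session."""
    state: AppState = field(default_factory=AppState)
    frames: List[str] = field(default_factory=list)

    def apply_input(self, button: str) -> None:
        # Interactive input data 538 updates the application state.
        if button == "right":
            self.state.character_x += 1
        elif button == "left":
            self.state.character_x -= 1

    def render(self) -> str:
        # The updated state is reflected in the next frame of the video stream.
        frame = f"character at x={self.state.character_x}"
        self.frames.append(frame)
        return frame


session = Session()
for button in ["right", "right", "left"]:
    session.apply_input(button)
    session.render()
print(session.frames[-1])  # character at x=1
```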

The interactive application 512 also includes a handheld device module 516 for communicating with a handheld device 540. The handheld device 540 may be any of various handheld devices, such as a cellular phone, personal digital assistant (PDA), tablet computer, electronic reader, pocket computer, portable gaming device, or any other type of handheld device capable of displaying video. One example of a handheld device is the Sony Playstation Portable®. The handheld device 540 is operated by a spectator 548, and includes a display 542 for rendering a spectator video stream 552. An input mechanism 544 is provided for enabling the spectator 548 to provide interactive input to the interactive application 512. Also, the handheld device 540 includes position and orientation sensors 546. The sensors 546 detect data which may be utilized to determine the position and orientation of the handheld device 540 within the interactive environment. Examples of position and orientation sensors include an accelerometer, magnetometer, gyroscope, camera, optical sensor, or any other type of sensor or device which may be included in a handheld device to generate data that may be utilized to determine the position or orientation of the handheld device. As the spectator 548 operates the handheld device 540, the position and orientation sensors 546 generate sensor data 550, which is transmitted to the handheld device module 516. The handheld device module 516 includes a position module 518 for determining the position of the handheld device 540 within the interactive environment, and an orientation module 520 for determining the orientation of the handheld device 540 within the interactive environment. The position and orientation of the handheld device 540 are tracked during the interactive session of the interactive application 512.
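As one concrete example of what an orientation module such as 520 might compute from accelerometer data, roll and pitch can be recovered from the direction of gravity while the device is held still. This is a minimal sketch under that at-rest assumption; the `AccelSample` layout is hypothetical, and yaw cannot be recovered from gravity alone, which is one reason a magnetometer, gyroscope, or camera is also listed among the sensors 546.

```python
import math
from dataclasses import dataclass
from typing import Tuple


@dataclass
class AccelSample:
    """One accelerometer reading from sensor data 550 (hypothetical layout), in m/s^2."""
    ax: float
    ay: float
    az: float


def roll_pitch_from_gravity(s: AccelSample) -> Tuple[float, float]:
    """Estimate (roll, pitch) in degrees from a single accelerometer sample.

    Assumes the device is at rest, so the accelerometer measures only gravity.
    Yaw (heading) is unobservable from gravity and is not returned."""
    roll = math.atan2(s.ay, s.az)
    pitch = math.atan2(-s.ax, math.hypot(s.ay, s.az))
    return math.degrees(roll), math.degrees(pitch)


roll, pitch = roll_pitch_from_gravity(AccelSample(0.5, 4.9, 8.5))
print(f"roll={roll:.1f} deg, pitch={pitch:.1f} deg")
```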

It will be understood by those skilled in the art that any of various technologies for determining the position and orientation of the handheld device 540 may be applied without departing from the scope of the present invention. For example, in one embodiment, the handheld device includes an image capture device for capturing an image stream of the interactive environment. The position and orientation of the handheld device 540 are tracked based on analyzing the captured image stream. For example, one or more tags may be placed in the interactive environment and utilized as fiducial markers for determining the position and orientation of the handheld device based on their perspective distortion when captured by the image capture device of the handheld device 540. The tags may be objects or figures, or part of the image displayed on the main display, that are recognized when present in the captured image stream of the interactive environment. The tags serve as fiducial markers which enable determination of a location within the interactive environment. Additionally, the perspective distortion of the tag in the captured image stream indicates the position and orientation of the handheld device.
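A full position-and-orientation estimate from a tag's perspective distortion is usually computed with a homography or a Perspective-n-Point solver. The sketch below covers only the simpler range-and-bearing part under an ideal pinhole camera model, and assumes the tag faces the camera roughly head-on. `TagObservation`, the tag size, the focal length, and the principal point (`cx`, `cy`) are hypothetical inputs.

```python
from dataclasses import dataclass
from typing import Tuple


@dataclass
class TagObservation:
    """A detected tag in one captured frame (hypothetical detector output)."""
    center_u: float  # tag center, image x coordinate (pixels)
    center_v: float  # tag center, image y coordinate (pixels)
    width_px: float  # apparent tag width (pixels)


def tag_position_in_camera(obs: TagObservation, tag_size_m: float,
                           focal_px: float, cx: float, cy: float
                           ) -> Tuple[float, float, float]:
    """Tag position in the camera frame (meters) under a pinhole model.

    Depth follows from similar triangles: width_px / focal_px = tag_size_m / z.
    The lateral offsets back-project the tag center through that depth.
    When the tag and camera axes are aligned, the device's position relative
    to the tag is the negation of this vector."""
    z = focal_px * tag_size_m / obs.width_px
    x = (obs.center_u - cx) * z / focal_px
    y = (obs.center_v - cy) * z / focal_px
    return x, y, z


obs = TagObservation(center_u=400.0, center_v=240.0, width_px=40.0)
print(tag_position_in_camera(obs, tag_size_m=0.1, focal_px=800.0, cx=320.0, cy=240.0))
```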

In other embodiments, any of various methods may be applied for purposes of tracking the location and orientation of the handheld device 540. For example, natural feature tracking methods or simultaneous localization and mapping (SLAM) methods may be applied to determine the position and orientation of the handheld device 540. Natural feature tracking methods generally entail the detection and tracking of “natural” features within a real environment (as opposed to artificially introduced fiducials), such as textures, edges, corners, etc. In other embodiments of the invention, any one or more image analysis methods may be applied in order to track the handheld device 540. For example, a combination of tags and natural feature tracking or SLAM methods might be employed in order to track the position and orientation of the handheld device 540.
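One simple way to combine a tag-based fix with a natural-feature or SLAM fix is an inverse-variance weighted average, which trusts whichever estimate is currently less noisy. This is an illustrative technique chosen for the sketch, not one the source specifies; it assumes the two estimates are independent and expressed in the same room frame, and the variances in the example are made-up values.

```python
from typing import Sequence, Tuple

# (position, variance) pairs; the variance expresses how much each source is trusted.
Estimate = Tuple[Tuple[float, ...], float]


def fuse_estimates(estimates: Sequence[Estimate]) -> Tuple[float, ...]:
    """Inverse-variance weighted mean of independent position estimates."""
    if not estimates:
        raise ValueError("need at least one estimate")
    weights = [1.0 / variance for _, variance in estimates]
    total = sum(weights)
    dims = len(estimates[0][0])
    return tuple(
        sum(w * pos[i] for (pos, _), w in zip(estimates, weights)) / total
        for i in range(dims)
    )


tag_fix = ((1.00, 0.50, 2.00), 0.01)   # hypothetical tag-based estimate
slam_fix = ((1.10, 0.40, 2.20), 0.04)  # hypothetical SLAM estimate
print(fuse_estimates([tag_fix, slam_fix]))
```

The fused point lands closer to the lower-variance tag fix; if a tag drops out of view, its entry can simply be omitted and the SLAM estimate carries through.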

Additionally, the movement of the handheld device 540 may be tracked based on information from motion sensitive hardware within the handheld device 540, such as an accelerometer, magnetometer, or gyroscope. In one embodiment, an initial position of the handheld device 540 is determined, and movements of the handheld device 540 in relation to the initial position are determined based on information from an accelerometer, magnetometer, or gyroscope. In other embodiments, information from motion sensitive hardware of the handheld device 540, such as an accelerometer, magnetometer, or gyroscope, may be used in combination with the aforementioned technologies, such as tags or natural feature tracking technologies, so as to ascertain the position, orientation, and movement of the handheld device 540.
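The two approaches in the preceding paragraph (tracking movement relative to an initial position from inertial data, and blending that with absolute fixes such as tags) can be sketched together. The `DeadReckoner` class is hypothetical. It assumes accelerations have already been rotated into the room frame with gravity removed, uses simple Euler integration, and blends heading with a basic complementary filter, which is one common choice rather than the method of the source.

```python
from dataclasses import dataclass
from typing import Optional, Tuple

Vec3 = Tuple[float, float, float]


@dataclass
class DeadReckoner:
    """Tracks the device relative to an initial pose (hypothetical helper).

    Inertial integration drifts quickly, which is why absolute fixes from
    tags or natural feature tracking are blended in when available."""
    position: Vec3                           # initial position, room frame
    velocity: Vec3 = (0.0, 0.0, 0.0)
    yaw: float = 0.0                         # heading in radians
    alpha: float = 0.98                      # weight given to the gyroscope prediction

    def step(self, accel: Vec3, yaw_rate: float, dt: float,
             tag_yaw: Optional[float] = None) -> None:
        # Semi-implicit Euler: update velocity first, then position.
        self.velocity = tuple(v + a * dt for v, a in zip(self.velocity, accel))
        self.position = tuple(p + v * dt for p, v in zip(self.position, self.velocity))
        predicted = self.yaw + yaw_rate * dt
        if tag_yaw is None:
            self.yaw = predicted
        else:
            # Complementary filter: gyroscope short-term, tag fix long-term.
            # Blending raw angles ignores wrap-around at +/-pi; a production
            # filter would blend on the circle.
            self.yaw = self.alpha * predicted + (1 - self.alpha) * tag_yaw


tracker = DeadReckoner(position=(0.0, 0.0, 0.0))
tracker.step(accel=(1.0, 0.0, 0.0), yaw_rate=0.1, dt=0.5)
tracker.step(accel=(0.0, 0.0, 0.0), yaw_rate=0.0, dt=0.5, tag_yaw=0.0)
print(tracker.position, tracker.yaw)
```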

The interactive application includes a spectator video module 522, which generates the spectator video stream 552 based on the current state of the interactive application 512 and the tracked position and orientation of the handheld device 540 in the interactive environment. The spectator video stream 552 is transmitted to the handheld device 540 and rendered on the display 542 of the handheld device 540.

In various embodiments of the invention, the foregoing system may be applied to enable the spectator 548 to capture video of an interactive session of the interactive application 512. For example, in one embodiment, the interactive application 512 may define a virtual space in which activity of the interactive session takes place. Accordingly, the tracked position and orientation of the handheld device 540 may be utilized to define a position and orientation of a virtual viewpoint within the virtual space that is defined by the interactive application. The spectator video stream 552 will thus include images of the virtual space captured from the perspective of the virtual viewpoint. As the spectator 548 maneuvers the handheld device 540 to different positions and orientations in the interactive environment, the position and orientation of the virtual viewpoint change accordingly, thereby changing the perspective in the virtual space from which the images of the spectator video stream 552 are captured and ultimately displayed on the display 542 of the handheld device. In this manner, the spectator 548 can intuitively function as the operator of a virtual camera in the virtual space, maneuvering the handheld device 540 so as to maneuver the virtual camera in the virtual space in a similar manner.
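A minimal sketch of mapping the tracked pose of the handheld device to a virtual camera follows. The conventions are assumptions, not details from the source: y is up, yaw rotates about y starting from +z, angles are in radians, and the virtual space is treated as a uniformly scaled, translated copy of the room. `VirtualCamera` and `virtual_viewpoint` are hypothetical names.

```python
import math
from dataclasses import dataclass
from typing import Tuple

Vec3 = Tuple[float, float, float]


@dataclass
class VirtualCamera:
    position: Vec3  # viewpoint location in the virtual space
    forward: Vec3   # unit viewing direction in the virtual space


def virtual_viewpoint(device_pos: Vec3, yaw: float, pitch: float,
                      scale: float = 1.0,
                      origin: Vec3 = (0.0, 0.0, 0.0)) -> VirtualCamera:
    """Map the handheld's tracked room pose to a virtual camera pose.

    Moving the device by d meters moves the camera by scale * d units, and
    turning the device turns the camera by the same angle."""
    pos = tuple(o + scale * p for o, p in zip(origin, device_pos))
    forward = (math.cos(pitch) * math.sin(yaw),
               math.sin(pitch),
               math.cos(pitch) * math.cos(yaw))
    return VirtualCamera(pos, forward)


cam = virtual_viewpoint((0.5, 1.2, -1.0), yaw=0.0, pitch=0.0, scale=10.0)
print(cam.position, cam.forward)
```

A renderer would then draw each frame of the spectator video stream from `cam.position` looking along `cam.forward`.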

In an alternative embodiment, the spectator video stream is generated at the handheld device 540, rather than at the console device 510. In such an embodiment, application state data is first determined based on the current state of the interactive application 512. This application state data is transmitted to the handheld device 540. The handheld device 540 processes the application state data and generates the spectator video stream based on the application state data and the tracked position and orientation of the handheld device.
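In this alternative, the console sends compact state data and the handheld does the view-dependent work. The sketch below is hypothetical throughout: the JSON schema, the top-down 2D room frame (yaw 0 facing +y), and the 60 degree field of view are assumptions, and "rendering" is reduced to deciding which entities are visible, nearest first.

```python
import json
import math
from typing import Dict, List, Tuple


def serialize_state(entities: List[Dict]) -> str:
    """Console side: package application state data (hypothetical schema)."""
    return json.dumps({"entities": entities})


def render_spectator_view(state_json: str, device_xy: Tuple[float, float],
                          device_yaw: float, fov_deg: float = 60.0) -> List[str]:
    """Handheld side: names of entities inside the horizontal field of view,
    nearest first, given the device's tracked position and heading."""
    state = json.loads(state_json)
    half_fov = math.radians(fov_deg) / 2
    visible = []
    for e in state["entities"]:
        dx = e["x"] - device_xy[0]
        dy = e["y"] - device_xy[1]
        bearing = math.atan2(dx, dy)  # angle from +y toward +x
        # Wrap the bearing difference into [-pi, pi) before comparing.
        diff = (bearing - device_yaw + math.pi) % (2 * math.pi) - math.pi
        if abs(diff) <= half_fov:
            visible.append((math.hypot(dx, dy), e["name"]))
    return [name for _, name in sorted(visible)]


payload = serialize_state([
    {"name": "hero", "x": 0, "y": 5},
    {"name": "enemy", "x": 0, "y": -5},
    {"name": "tree", "x": 1, "y": 2},
])
print(render_spectator_view(payload, (0.0, 0.0), 0.0))
```

Sending state rather than video reduces bandwidth, at the cost of requiring the handheld to hold enough of the scene to render it.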

In another embodiment, an environmental video stream of the interactive environment is captured, and the spectator video stream 552 is generated based on the environmental video stream. The environmental video stream may be captured at a camera included in the handheld device 540. In one embodiment, the spectator video stream 552 is generated by augmenting the environmental video stream with a virtual element. In such an embodiment, where the environmental video stream is captured at the handheld device 540, the spectator 548 is able to view an augmented reality scene on the display 542 of the handheld device 540 that is based on the current state of the interactive application 512 as it is affected by the interactivity between the user 536 and the interactive application 512. For example, in one embodiment, the image of the user 536 may be detected within the environmental video stream, and a portion or the entirety of the user's image in the environmental video stream may then be replaced with a virtual element. In one embodiment, the detected user's image in the environmental video stream could be replaced with a character of the interactive application 512 controlled by the user 536. In this manner, when the spectator 548 aims the camera of the handheld device 540 at the user 536, the spectator 548 sees on the display 542 the character of the interactive application 512 which the user 536 is controlling, instead of the user 536.
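The replacement step can be illustrated on a toy frame. This sketch assumes person detection has already produced a bounding box (detection itself is out of scope here), represents pixels as single characters, and uses nearest-neighbour scaling to fit a character sprite into the box; `replace_region` and the box layout are hypothetical.

```python
from typing import List, Tuple

Frame = List[List[str]]          # rows of pixels (characters, for illustration)
Box = Tuple[int, int, int, int]  # (top, left, height, width), assumed inside the frame


def replace_region(frame: Frame, box: Box, sprite: Frame) -> Frame:
    """Return a copy of frame with the detected user's box overwritten by the
    character sprite, scaled to the box with nearest-neighbour sampling."""
    top, left, h, w = box
    out = [row[:] for row in frame]  # leave the captured frame untouched
    sh, sw = len(sprite), len(sprite[0])
    for r in range(h):
        for c in range(w):
            out[top + r][left + c] = sprite[r * sh // h][c * sw // w]
    return out


camera_frame = [["." for _ in range(6)] for _ in range(4)]
character = [["@", "@"], ["/", "\\"]]
for row in replace_region(camera_frame, (1, 2, 2, 2), character):
    print("".join(row))
```

A real pipeline would use a segmentation mask instead of a rectangle and would render the character from the handheld's tracked viewpoint before compositing.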

In another embodiment, the position and orientation of the controller 532 as it is operated by the user 536 may also be determined and tracked. The position and orientation of the controller 532, as well as the position and orientation of the handheld device 540 relative to the location of the controller 532, may be utilized to determine the spectator video stream. Thus, the location of the controller may be utilized as a reference point according to which the spectator video stream is determined, based on the position and orientation of the handheld device 540 relative to the location of the controller 532. For example, the location of the controller 532 may correspond to an arbitrary location in a virtual space of the interactive application 512, such as the present location of a character controlled by the user 536 in the virtual space. Thus, as the spectator maneuvers the handheld device 540 in the interactive environment, its position and orientation relative to the location of the controller 532 determine the position and orientation of a virtual viewpoint in the virtual space relative to the location of the character. Images of the virtual space from the perspective of the virtual viewpoint may be utilized to form the spectator video stream. In one embodiment, the correspondence between the relative location of the handheld device 540 to the controller 532 and the relative location of the virtual viewpoint to the character is such that changes in the position or orientation of the handheld device 540 relative to the location of the controller 532 cause similar changes in the position or orientation of the virtual viewpoint relative to the location of the character. As such, the spectator 548 is able to intuitively maneuver the handheld device about the controller 532 to view and capture a video stream of the virtual space from various perspectives that are linked to the location of the character controlled by the user 536.
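The correspondence described above can be sketched for the translational part: the handheld's offset from the controller, optionally scaled, becomes the viewpoint's offset from the character, and the viewpoint looks back at the character. This handles position only (orientation coupling is omitted), and `viewpoint_from_controller` and `look_direction` are hypothetical helpers.

```python
import math
from typing import Tuple

Vec3 = Tuple[float, float, float]


def viewpoint_from_controller(handheld: Vec3, controller: Vec3,
                              character: Vec3, scale: float = 1.0) -> Vec3:
    """Place the virtual viewpoint relative to the character the same way the
    handheld sits relative to the controller (translation only, uniform scale)."""
    offset = tuple(h - c for h, c in zip(handheld, controller))
    return tuple(ch + scale * o for ch, o in zip(character, offset))


def look_direction(viewpoint: Vec3, target: Vec3) -> Vec3:
    """Unit vector from the viewpoint toward the target; assumes they differ."""
    d = tuple(t - v for t, v in zip(target, viewpoint))
    n = math.sqrt(sum(x * x for x in d))
    return tuple(x / n for x in d)


character_pos = (10.0, 0.0, 10.0)
vp = viewpoint_from_controller((1.0, 0.0, 2.0), (0.0, 0.0, 0.0), character_pos, scale=2.0)
print(vp, look_direction(vp, character_pos))
```

Because the mapping is linear in the offset, moving the handheld by some amount around the controller moves the viewpoint by a proportional amount around the character, which is the "similar changes" property described above.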

The foregoing embodiment has been described with reference to a character controlled by the user 536 in the virtual space of the interactive application 512. However, in other embodiments, the location of the controller 532 may correspond to any location, stationary or mobile, within the virtual space of the interactive application. For example, in some embodiments, the location of the controller may correspond to a character, a vehicle, a weapon, an object, or some other thing which is controlled by the user 536. Or in some embodiments, the location of the controller may correspond to moving characters or objects which are not controlled by the user 536, such as an artificial intelligence character or a character being controlled by a remote user of the interactive application during the interactive session. Or in still other embodiments, the location of the controller 532 may correspond to a specific geographic location, the location of an object, or some other stationary article within the virtual space of the interactive application 512.
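Since the controller's location may stand for many kinds of targets, one way to structure this is a small anchor abstraction that the viewpoint logic queries each frame. The sketch is hypothetical: `Anchor`, `FixedAnchor`, `EntityAnchor`, and the `world` dictionary of entity positions are illustrative names, not part of the source.

```python
from dataclasses import dataclass
from typing import Dict, Protocol, Tuple

Vec3 = Tuple[float, float, float]


class Anchor(Protocol):
    """Anything in the virtual space the controller's location can stand for."""

    def position(self) -> Vec3: ...


@dataclass
class FixedAnchor:
    """A stationary location, such as a landmark or a placed object."""
    location: Vec3

    def position(self) -> Vec3:
        return self.location


@dataclass
class EntityAnchor:
    """A mobile entity: user-controlled, AI-controlled, or a remote player's."""
    world: Dict[str, Vec3]  # entity name -> current position (hypothetical)
    entity: str

    def position(self) -> Vec3:
        return self.world[self.entity]


def viewpoint(anchor: Anchor, offset: Vec3) -> Vec3:
    """Viewpoint = anchor position + offset derived from the handheld's pose."""
    return tuple(a + o for a, o in zip(anchor.position(), offset))


world_state = {"ai_drone": (5.0, 0.0, 5.0)}
drone = EntityAnchor(world_state, "ai_drone")
print(viewpoint(drone, (1.0, 0.0, 0.0)))
world_state["ai_drone"] = (7.0, 0.0, 5.0)  # the AI character moves
print(viewpoint(drone, (1.0, 0.0, 0.0)))
```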