U.S. Pat. No. 10,853,966

VIRTUAL SPACE MOVING APPARATUS AND METHOD

Assignee: Samsung Electronics Co., Ltd

Issue Date: February 21, 2018

Abstract

Provided are a virtual space moving apparatus and method. The virtual space moving apparatus includes a 3D camera to capture a real space; a display; and a processor to: display a virtual space; implement a virtual object corresponding to an actual object in an actual space and display the virtual object at a specific position in the virtual space; set a first detection area adjacent to an initial position of the actual object and a second detection area spaced from the initial position of the actual object and surrounding the first detection area; when the actual object is moved by a first distance in the first detection area outwards from the initial position, move the virtual object by a first virtual movement distance; and when the actual object is moved by the first distance in the second detection area outwards from a boundary between the first detection area and the second detection area, move the virtual object by a second virtual movement distance greater than the first virtual movement distance.

Description

DETAILED DESCRIPTION OF EMBODIMENTS OF THE INVENTION

Hereinafter, embodiments of the present invention will be described in detail with reference to the accompanying drawings. In the following description and the accompanying drawings, well-known functions and structures will not be described for the sake of clarity and conciseness.

In the present invention, when a user moves in a state where a sensor-recognizable region of a 3D camera and a user motion region of a real space are identical, an object in a virtual space is moved based on accelerated-movement information of an accelerated-movement region corresponding to a user's position among a plurality of accelerated-movement regions which are previously set in the sensor-recognizable region, such that the user can freely move in the virtual space without being limited by a real space.

FIG. 1 is a structural diagram of a virtual space moving apparatus according to an embodiment of the present invention.

Referring to FIG. 1, the virtual space moving apparatus includes a 3D camera unit 10, a skeletonization unit 20, a skeletal data processor 30, and a Graphic User Interface (GUI) 40.

The 3D camera unit 10 converts a 3D image signal including 3D position information of x-axis, y-axis, and z-axis of a subject into a 3D image, and senses motion of the subject. The 3D image corresponds to the subject. The 3D camera unit 10 includes a depth camera, a multi-view camera, and a stereo camera. The subject (i.e., a user) is photographed and its motion is sensed using a 3D camera, but a plurality of 2D cameras may be used or the subject motion may be determined by further including and using a motion sensor. While the virtual space moving apparatus includes the 3D camera unit 10 in an embodiment of the present invention, the 3D image may be received from an external server via a wired or wireless communication unit or may have been stored in a memory embedded in or inserted into the virtual space moving apparatus.

The sensor-recognizable region of the 3D camera unit 10 refers to a region that can be recognized and photographed by the 3D camera unit 10, and this region is the same as a user motion region in a real space.

The skeletonization unit 20 recognizes an outline of the subject, separates a subject region and a background region from the 3D image based on the recognized outline, and skeletonizes the subject region to generate skeletal data. The skeletonization unit 20 outputs a plurality of optical signals to the sensor-recognizable region of the 3D camera unit 10 and recognizes the outline of the subject by recognizing the optical signals received after those signals are reflected from the user. The skeletonization unit 20 may also recognize the outline of the subject by using a pattern.

After recognizing the outline of the subject, the skeletonization unit 20 separates the subject region and the background region from the 3D image based on the recognized outline, and skeletonizes the separated subject region to generate skeletal data (or image). In the present invention, skeletonization involves expressing an object with a fully compressed skeletal line for recognition of the object.

The skeletal data processor 30 generates object data corresponding to the generated skeletal image and outputs the generated object data to the GUI 40.

The skeletal data processor 30 determines whether an accelerated-movement mode for accelerated movement of mapped object data in the virtual space is selected. If the accelerated-movement mode is selected, the skeletal data processor 30 maps a plurality of previously set accelerated-movement regions around a position of the subject. If the subject's position is moved, the skeletal data processor 30 identifies an accelerated-movement region including the moved position of the subject among the plurality of previously set accelerated-movement regions.

More specifically, the skeletal data processor 30 determines which one of the plurality of accelerated-movement regions includes the position information of the skeletal data, such as x-axis, y-axis, and z-axis coordinates. Herein, the respective accelerated-movement regions are set such that object motion per subject motion is made at different movement ratios. For example, if a particular accelerated-movement region is set so that object motion per subject motion has a movement ratio of 1:2, then the object is moved in the virtual space by twice the subject motion.
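By way of non-limiting illustration, the 1:2 example above may be sketched as follows. The function name `scale_motion` and the tuple representation of a displacement are assumptions made for this sketch and do not appear in the embodiments.

```python
from typing import Tuple

Vec3 = Tuple[float, float, float]  # (x, y, z) displacement; representation is an assumption


def scale_motion(subject_delta: Vec3, ratio: float) -> Vec3:
    """Return the object displacement for a subject displacement at a 1:ratio movement ratio."""
    dx, dy, dz = subject_delta
    return (dx * ratio, dy * ratio, dz * ratio)


# A subject step of 0.5 along x, in a region set to a movement ratio of 1:2,
# moves the object by 1.0 along x in the virtual space.
object_delta = scale_motion((0.5, 0.0, 0.0), ratio=2.0)
```

The sketch scales every axis by the same ratio; the embodiments do not state whether a ratio could differ per axis.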

The skeletal data processor 30 moves the object in the virtual space at a movement ratio previously set corresponding to the identified accelerated-movement region, and displays the moved object in the virtual space through the GUI 40, which maps and displays the object generated by the skeletal data processor 30 in the virtual space. The GUI 40 also displays the object moved in the virtual space.

As such, the object is moved in the virtual space at a movement ratio of object motion in the virtual space corresponding to subject motion, allowing the user to move as desired in the virtual space without being limited by the real space.

FIG. 2 is a detailed structural diagram of the skeletal data processor 30 according to an embodiment of the present invention.

Referring to FIG. 2, the skeletal data processor 30 includes a motion recognizer 31, a 3D image processor 32, and an accelerated-movement mode executer 33.

The motion recognizer 31 recognizes motion of a skeletal image corresponding to subject motion, which is input through the skeletonization unit 20. For example, the motion recognizer 31 recognizes position movement of the skeletal image or a gesture such as a hand motion. More specifically, the motion recognizer 31 extracts depth information of the moving subject through the 3D camera unit 10 such as a 3D camera, and segments the depth information. Thereafter, the motion recognizer 31 recognizes a 3D space position of a head, a 3D space position of a hand, and 3D space positions of torso and legs by using a structure of skeletal data regarding a human body, thus implementing interaction with 3D contents. Although user motion is recognized based on motion of the skeletal image in the embodiment of FIG. 2, user motion may also be recognized by a separate motion sensor.
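As one possible sketch of recognizing position movement from skeletal data, the position of the subject may be approximated by the centroid of the joint positions and compared between two frames. The joint names, the dictionary layout, and the 0.05 threshold are hypothetical; the embodiments do not specify how position movement is computed.

```python
from math import dist
from typing import Dict, Tuple

Vec3 = Tuple[float, float, float]
Skeleton = Dict[str, Vec3]  # hypothetical layout: joint name -> (x, y, z)


def centroid(skeleton: Skeleton) -> Vec3:
    """Mean position of all joints of a non-empty skeleton."""
    n = len(skeleton)
    xs, ys, zs = zip(*skeleton.values())
    return (sum(xs) / n, sum(ys) / n, sum(zs) / n)


def has_moved(prev: Skeleton, curr: Skeleton, threshold: float = 0.05) -> bool:
    """True if the skeleton centroid moved farther than the (assumed) threshold."""
    return dist(centroid(prev), centroid(curr)) > threshold


# Two frames of a two-joint skeleton, shifted 0.3 along x between frames.
frame_a = {"head": (0.0, 1.7, 2.0), "torso": (0.0, 1.0, 2.0)}
frame_b = {"head": (0.3, 1.7, 2.0), "torso": (0.3, 1.0, 2.0)}
moved = has_moved(frame_a, frame_b)
```

A centroid is only one choice of reference point; a single joint such as the torso could serve equally well under the same assumptions.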

The 3D image processor 32 generates object data corresponding to the skeletal data generated by the skeletonization unit 20, and maps the generated object data to a particular position in the virtual space. For example, the 3D image processor 32 generates object data, such as a user's avatar, in the virtual space, and maps the generated avatar to a position in the virtual space corresponding to the user's position in the real space.

Thereafter, when the accelerated-movement mode is executed, the 3D image processor 32 moves the position of the object data in the virtual space at the movement ratio identified by the accelerated-movement mode executer 33 corresponding to the subject motion.

The accelerated-movement mode executer 33 determines whether the motion recognized by the motion recognizer 31 is motion previously set for selection of the accelerated-movement mode, and executes the accelerated-movement mode or a normal mode according to a result of the determination. The normal mode is a default operation mode in the virtual space moving apparatus, in which the real space and the virtual space are one-to-one mapped and thus subject motion and object motion one-to-one correspond to each other.

More specifically, if the recognized motion is for selecting the accelerated-movement mode, the accelerated-movement mode executer 33 maps a plurality of previously set accelerated-movement regions around the position of the skeletal data. Thereafter, if movement of the position of the skeletal data is recognized by the motion recognizer 31, the accelerated-movement mode executer 33 identifies an accelerated-movement region including the moved position information of the skeletal data among the plurality of mapped accelerated-movement regions. In this state, the accelerated-movement regions are mapped around the position of the skeletal data in the sensor-recognizable region of the 3D camera unit 10.

The accelerated-movement mode executer 33 outputs a movement ratio of object motion per user motion, which is set corresponding to the identified accelerated-movement region, to the 3D image processor 32.

If the recognized motion is not intended for selecting the accelerated-movement mode, i.e., is motion for movement over a distance, then the accelerated-movement mode executer 33 executes the normal mode, in which object motion per subject motion is made at a movement ratio of 1:1.
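The choice between the two modes may be sketched, under stated assumptions, as follows. The gesture label `"raise_both_hands"` is a hypothetical stand-in for the motion previously set for selecting the accelerated-movement mode, which the embodiments do not name.

```python
from enum import Enum


class Mode(Enum):
    NORMAL = "normal"
    ACCELERATED = "accelerated"


# Hypothetical label for the motion previously set to select the accelerated-movement mode.
SELECT_GESTURE = "raise_both_hands"


def select_mode(recognized_motion: str) -> Mode:
    """Accelerated-movement mode for the preset selection motion, normal mode otherwise."""
    return Mode.ACCELERATED if recognized_motion == SELECT_GESTURE else Mode.NORMAL


def movement_ratio(mode: Mode, region_ratio: float) -> float:
    """Normal mode is always 1:1; accelerated mode uses the ratio of the identified region."""
    return region_ratio if mode is Mode.ACCELERATED else 1.0


# An ordinary walking motion keeps the apparatus in the normal mode at a ratio of 1:1.
ratio = movement_ratio(select_mode("walk_forward"), region_ratio=7.0)
```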

As such, the present invention moves the object in the virtual space at a movement ratio of object motion in the virtual space, which is previously set corresponding to user motion, allowing the user to freely move as desired without being limited by the real space.

FIG. 3 illustrates a process for moving in the virtual space without being limited by the real space in the virtual space moving apparatus according to an embodiment of the present invention.

Upon input of a 3D image including x-axis, y-axis, and z-axis coordinates information of the subject through the 3D camera unit 10 in step 300, the skeletonization unit 20 recognizes the outline of the subject and separates a subject region and a background region from the 3D image based on the recognized outline in step 301. The skeletonization unit 20 outputs a plurality of optical signals to the sensor-recognizable region of the 3D camera unit 10 and recognizes the optical signals received after being reflected from the subject, thus recognizing the outline of the user.

The skeletonization unit 20 skeletonizes the separated subject region to generate skeletal data in step 302. In other words, the skeletonization unit 20, which has recognized the user's outline, separates the subject region and the background region from the 3D image based on the recognized outline, and generates the skeletal data by skeletonizing the separated subject region.

In step 303, the skeletal data processor 30 generates object data corresponding to the generated skeletal data.

In step 304, the skeletal data processor 30 maps the generated object data to a particular position in the virtual space and displays the generated object through the GUI 40.

In step 305, the skeletal data processor 30 determines whether the accelerated-movement mode is selected. If the accelerated-movement mode is selected, the skeletal data processor 30 proceeds to step 306; otherwise, the skeletal data processor 30 returns to step 300 to continue receiving a 3D image and performing steps 301 through 305. More specifically, the skeletal data processor 30 determines whether the accelerated-movement mode for accelerated movement of the mapped object data in the virtual space is selected.

If the accelerated-movement mode is selected, the skeletal data processor 30 calculates position information of the skeletal data in step 306. More specifically, the skeletal data processor 30 maps the plurality of previously set accelerated-movement regions around the position of the subject, and if the position of the skeletal data is moved, the skeletal data processor 30 calculates the moved position information of the skeletal data.

In step 307, the skeletal data processor 30 detects an accelerated-movement region including the moved position information of the subject from the plurality of previously set accelerated-movement regions. Specifically, the skeletal data processor 30 determines which one of the plurality of accelerated-movement regions includes the position information of the skeletal data, such as x-axis, y-axis, and z-axis coordinates, in correspondence to the motion of the subject.

In step 308, the skeletal data processor 30 moves the object in the virtual space at a movement ratio previously set corresponding to the detected accelerated-movement region, and displays the moved object in the virtual space through the GUI 40.

In step 309, the skeletal data processor 30 determines whether the accelerated-movement mode is terminated. If the accelerated-movement mode is terminated, the skeletal data processor 30 ends the process; otherwise, the skeletal data processor 30 returns to step 306 to calculate the position information of the skeletal data and performs steps 307 through 309.

As such, the object in the embodiment of FIG. 3 is moved in the virtual space at a previously set movement ratio of object motion in the virtual space corresponding to user motion, allowing the user to move as desired in the virtual space without being limited by the real space.
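The loop of steps 306 through 309 may be sketched as follows, as a minimal illustration rather than the claimed implementation. The function name `run_accelerated_mode` and the callable `ratio_at` (which stands in for the region lookup of step 307) are assumptions, and exhaustion of the input positions stands in for termination of the accelerated-movement mode in step 309.

```python
from typing import Callable, Iterable, List, Tuple

Vec3 = Tuple[float, float, float]


def run_accelerated_mode(
    positions: Iterable[Vec3],
    start_object: Vec3,
    ratio_at: Callable[[Vec3], float],
) -> List[Vec3]:
    """Return the object positions produced by successive skeletal positions."""
    it = iter(positions)
    prev = next(it, None)  # skeletal position when the mode was selected
    if prev is None:
        return [start_object]
    obj = start_object
    trail = [obj]
    for pos in it:                      # step 306: moved position of the skeletal data
        ratio = ratio_at(pos)           # step 307: ratio of the region containing it
        obj = tuple(o + (c - p) * ratio for o, c, p in zip(obj, pos, prev))
        trail.append(obj)               # step 308: object moved at that ratio
        prev = pos
    return trail                        # step 309: input exhausted, mode terminated


# Hypothetical lookup: ratio 1:1 within 1.0 of the origin along x, 1:2 beyond it.
trail = run_accelerated_mode(
    [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0), (2.0, 0.0, 0.0)],
    (0.0, 0.0, 0.0),
    lambda p: 2.0 if p[0] > 1.0 else 1.0,
)
```

In this sketch the whole step is scaled by the ratio of the region containing the moved position, following the wording of steps 307 and 308.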

FIGS. 4 through 8 are diagrams for describing a process for movement in the virtual space without being limited by the real space by the virtual space moving apparatus according to an embodiment of the present invention.

FIG. 4 illustrates a process for mapping the plurality of accelerated-movement regions in the sensor-recognizable region in the accelerated-movement mode according to an embodiment of the present invention.

As shown in FIG. 4, assuming that a sensor-recognizable region 400 of the 3D camera unit 10 is identical to a subject motion space, the skeletal data processor 30 maps a plurality of accelerated-movement regions 401 around a position of the subject in the accelerated-movement mode.

FIG. 5 illustrates the plurality of accelerated-movement regions according to an embodiment of the present invention.

As shown in FIG. 5, there are five accelerated-movement regions: A1, A2, A3, A4, and A5. Although five regions are shown, the plurality of accelerated-movement regions may include any number n of regions, where n > 0.
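One way to determine which region contains a position, assuming concentric circular regions centered on the initial position of the subject, is to compare the radial distance against a sorted list of boundary radii. The radii below and the use of a two-coordinate floor position are hypothetical values chosen for the sketch; the embodiments do not give region sizes.

```python
from bisect import bisect_right
from math import hypot
from typing import Tuple

# Hypothetical outer radii of A1 through A4; A5 is everything beyond the last radius.
OUTER_RADII = [0.5, 1.0, 1.5, 2.0]
REGION_NAMES = ["A1", "A2", "A3", "A4", "A5"]

Point = Tuple[float, float]  # position on the floor plane (an assumption)


def region_of(position: Point, center: Point) -> str:
    """Name of the concentric region containing `position`, measured from `center`."""
    r = hypot(position[0] - center[0], position[1] - center[1])
    # A position exactly on a boundary is assigned to the outer region.
    return REGION_NAMES[bisect_right(OUTER_RADII, r)]


# A position 1.2 from the center falls between the radii 1.0 and 1.5.
region = region_of((0.0, 1.2), (0.0, 0.0))
```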

The plurality of accelerated-movement regions may be set as shown below in Table 1.

TABLE 1

| Accelerated-Movement Region | Set Value |
| --- | --- |
| A1 | Object motion per subject motion has a movement ratio of 1:n |
| A2 | Object motion per subject motion has a movement ratio of 1:2n |
| A3 | Object motion per subject motion has a movement ratio of 1:7n |
| A4 | Object motion per subject motion has a movement ratio of 1:30n |
| A5 | Object motion per subject motion has a movement ratio of 1:100n |

Referring to Table 1 and FIGS. 6 and 7, the accelerated-movement mode will be described in detail.

FIG. 6 illustrates a process in which the object moves in the virtual space corresponding to user motion according to an embodiment of the present invention.

For example, when a user 600 is situated in the accelerated-movement region A1 and moves to a position 601, the skeletal data processor 30 may identify position information of the user 601, that is, position information of the skeletal data, and determine in which one of the plurality of accelerated-movement regions the position information is included. In other words, the skeletal data processor 30 determines which one of A1 through A5 includes the x-axis, y-axis, and z-axis coordinates of the skeletal data.

Upon determining that the position of the user 601 is included in the accelerated-movement region A3, the skeletal data processor 30 moves an object in the virtual space at a movement ratio of 1:7n for object motion per user motion as set in Table 1.
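The set values of Table 1 may be held in a simple lookup, sketched below. The multipliers are taken from Table 1; the factor n is left as a parameter, and its default of 1.0 is an assumption, since the embodiments do not fix a value for n.

```python
# Multipliers from Table 1: a region with multiplier m has a movement ratio of 1:(m * n).
RATIO_MULTIPLIER = {"A1": 1, "A2": 2, "A3": 7, "A4": 30, "A5": 100}


def object_distance(region: str, subject_distance: float, n: float = 1.0) -> float:
    """Distance the object moves when the subject moves `subject_distance` in `region`."""
    return subject_distance * RATIO_MULTIPLIER[region] * n


# With n assumed to be 1, a subject movement of 0.5 in region A3 moves the object by 3.5.
moved = object_distance("A3", 0.5)
```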

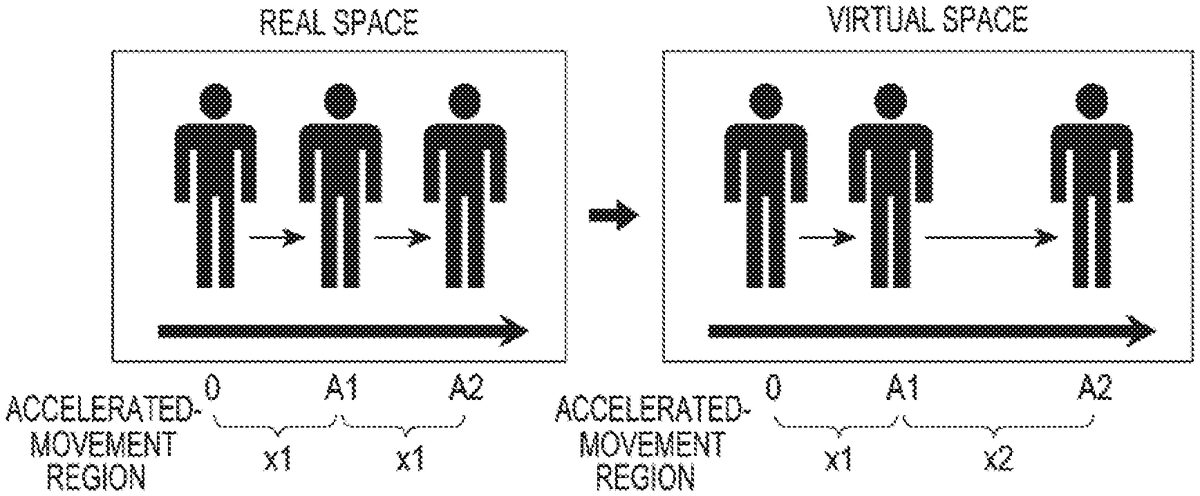

FIG. 7 illustrates object motion in the virtual space corresponding to user motion in the real space according to an embodiment of the present invention.

For example, if the user moves at a movement ratio of 1:1 from the accelerated-movement region A1 to the accelerated-movement region A2 in the real space, the object may move at a previously set movement ratio of 1:2 in the virtual space.
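When a single outward movement crosses from one region into the next, one possible treatment is to split the movement at the boundary and apply each region's ratio to the part travelled inside it. This splitting is an assumption of the sketch and is not stated in the embodiments; the boundary radius of 1.0 and the ratios 1:1 and 1:2 are illustrative values only.

```python
from typing import Sequence


def virtual_distance(
    r_start: float,
    r_end: float,
    outer_radii: Sequence[float],
    ratios: Sequence[float],
) -> float:
    """Virtual distance for an outward radial move from r_start to r_end.

    `outer_radii` lists the outer radius of every region except the last,
    and `ratios` lists one movement ratio per region, innermost first.
    """
    if r_end < r_start:
        raise ValueError("only outward movement is handled in this sketch")
    total = 0.0
    inner = 0.0
    bounds = list(outer_radii) + [float("inf")]  # the last region is unbounded
    for outer, ratio in zip(bounds, ratios):
        lo = max(r_start, inner)   # part of the move that lies in this region
        hi = min(r_end, outer)
        if hi > lo:
            total += (hi - lo) * ratio
        inner = outer
    return total


# Moving from 0.5 to 1.5 spends 0.5 in the inner region (1:1) and 0.5 in the outer (1:2).
crossing = virtual_distance(0.5, 1.5, [1.0], [1.0, 2.0])
```

Under these assumptions, the same real distance of 0.5 yields 0.5 in the inner region and 1.0 in the outer region, so the virtual movement grows as the subject moves away from the initial position.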

FIG. 8 illustrates movement in the virtual space according to an embodiment of the present invention.

As the user moves a particular distance in the real space, the object in the virtual space moves from a position A to a position B, as shown in FIG. 8, regardless of a size or a form of the real space.

FIGS. 9 and 10 illustrate forms of the plurality of accelerated-movement regions according to an embodiment of the present invention.

While the virtual space is implemented in a circular form in an embodiment of the present invention, it may also be configured in a form shown in FIG. 9 and may be configured in circular, rectangular, or triangular forms as shown in FIG. 10.

Therefore, the present invention moves the object in the virtual space at a previously set movement ratio of object motion in the virtual space corresponding to user motion, allowing the user to move as desired in the virtual space without being limited by the real space.

Moreover, according to the present invention, an object moves an accelerated-movement distance corresponding to an accelerated-movement region previously set corresponding to user motion in a virtual space, such that a user can move anywhere as desired in the virtual space, regardless of a size of a real space.

It can be seen that the embodiment of the present invention can be implemented with hardware, software, or a combination of hardware and software. Such arbitrary software may be stored, whether or not erasable or re-recordable, in a volatile or non-volatile storage such as a Read-Only Memory (ROM), a memory such as a Random Access Memory (RAM), a memory chip, a device, or an integrated circuit, and an optically or magnetically recordable and machine (e.g., computer)-readable storage medium such as a Compact Disc (CD), a Digital Versatile Disk (DVD), a magnetic disk, or a magnetic tape. The virtual space moving method according to the present invention can be implemented by a computer or a portable terminal which includes a controller and a memory, and it can be seen that a storing unit may be an example of a non-transitory machine-readable storage medium which is suitable for storing a program or programs including instructions for implementing the embodiment of the present invention. Therefore, the present invention includes a program including codes for implementing an apparatus or method claimed in an arbitrary claim and a machine-readable storage medium for storing such a program. The program may be electronically transferred through an arbitrary medium such as a communication signal delivered through wired or wireless connection, and the present invention properly includes equivalents thereof.

The present invention is not limited by the foregoing embodiments and the accompanying drawings because various substitutions, modifications, and changes can be made by those of ordinary skill in the art without departing from the technical spirit of the present invention.

Claims

1. A virtual space moving apparatus, comprising: a three-dimensional (3D) camera configured to capture a real space; a display; and a processor; wherein the processor is configured to: control the display to display a virtual space on the display, identify an entire user using the 3D camera, implement a virtual object corresponding to the entire user, the virtual object being configured to be moved in the virtual space when the entire user moves in an actual space, display the virtual object at a specific position in the virtual space, set a plurality of detection areas based on an initial position of the entire user, wherein the plurality of detection areas comprises a first circular detection area comprising a center point corresponding to the initial position of the entire user and a second detection area comprising an outline spaced a predetermined distance from the center point of the first circular detection area, when the entire user moves by a first distance in the first circular detection area outwards from the initial position, move the virtual object by a first virtual movement distance in the virtual space, and when the entire user moves by the first distance in the second detection area outwards from an outline comprised in the first circular detection area, move the virtual object by a second virtual movement distance greater than the first virtual movement distance in the virtual space, wherein the plurality of detection areas are set to have different movement ratios, and as a distance away from the center point increases, a movement ratio set in each of the plurality of detection areas increases.

2. The virtual space moving apparatus of claim 1, wherein a maximum movement distance of the virtual object in the virtual space when a first mode is selected is greater than a maximum movement distance of the virtual object in the virtual space when a second mode is selected.

3. The virtual space moving apparatus of claim 1, wherein a movement distance of the virtual object per unit time in the display is greater than a movement distance of the entire user per unit time when a first mode is selected.

4. The virtual space moving apparatus of claim 1, wherein the processor is further configured to: generate skeletal data by skeletonizing the entire user from the captured actual space, and generate the virtual object based on the skeletal data.

5. The virtual space moving apparatus of claim 4, wherein the processor is further configured to: detect that the entire user moves by detecting a change of the skeletal data.

6. The virtual space moving apparatus of claim 1, wherein the processor is further configured to: when a first mode is selected, move the virtual object in the virtual space by a movement distance of the entire user in the real space.

7. A virtual space moving method in a virtual space moving apparatus, the virtual space moving method comprising: displaying a virtual space on a display; identifying an entire user using a 3D camera; implementing a virtual object corresponding to the entire user, the virtual object being configured to be moved in the virtual space when the entire user moves in an actual space; displaying the virtual object at a specific position in the virtual space; setting a plurality of detection areas based on an initial position of the entire user, wherein the plurality of detection areas comprises a first circular detection area comprising a center point corresponding to the initial position of the entire user and a second detection area comprising an outline spaced a predetermined distance from the center point; when the entire user moves by a first distance in the first circular detection area outwards from the initial position, moving the virtual object by a first virtual movement distance in the virtual space; and when the entire user moves by the first distance in the second detection area outwards from an outline comprised in the first circular detection area, moving the virtual object by a second virtual movement distance greater than the first virtual movement distance in the virtual space, wherein the plurality of detection areas are set to have different movement ratios, and as a distance away from the center point increases, a movement ratio set in each of the plurality of detection areas increases.

8. The virtual space moving method of claim 7, wherein a maximum movement distance of the virtual object in the virtual space when a first mode is selected is greater than a maximum movement distance of the virtual object in the virtual space when a second mode is selected.

9. The virtual space moving method of claim 7, wherein a movement distance of the virtual object per unit time in the display is greater than a movement distance of the entire user per unit time when a first mode is selected.

10. The virtual space moving method of claim 7, further comprising: generating skeletal data by skeletonizing the entire user from the captured actual space, and generating the virtual object based on the skeletal data.

11. The virtual space moving method of claim 10, further comprising: detecting that the entire user moves by detecting a change of the skeletal data.

12. The virtual space moving method of claim 7, further comprising: when a first mode is selected, moving the virtual object in the virtual space by a movement distance of the entire user in the real space.

Disclaimer: Data collected from the USPTO and may be malformed, incomplete, and/or otherwise inaccurate.