U.S. Pat. No. 10,694,990

BIMANUAL COMPUTER GAMES SYSTEM FOR DEMENTIA SCREENING

Assignee: Bright Cloud International Corporation

Issue Date: August 31, 2015


Abstract

The majority of cognitive virtual reality (VR) applications have been developed for therapy rather than for cognitive screening/scoring. Provided herein are BrightScreener™ and its first pilot feasibility study, which evaluated elderly subjects with various degrees of cognitive impairment. BrightScreener is a portable (laptop-based) serious-gaming system that incorporates a bimanual game interface for more ecological 3D interaction with virtual worlds. A pilot study determined that BrightScreener is able to differentiate levels of cognitive impairment based solely on game performance. The study also evaluated technology acceptance by the target population. Group analysis showed a high Pearson correlation between the subjects' MMSE scores and their Composite Game Scores (r = 0.90, p < 0.01). The sample size was small (n = 11). Even so, the results suggest that serious-gaming strategies can serve as a digital technique to stratify levels of cognitive impairment. This may offer an alternative to conventional standardized scoring for Mild Cognitive Impairment and Dementia, especially for patients with hearing and speech deficits.

Description

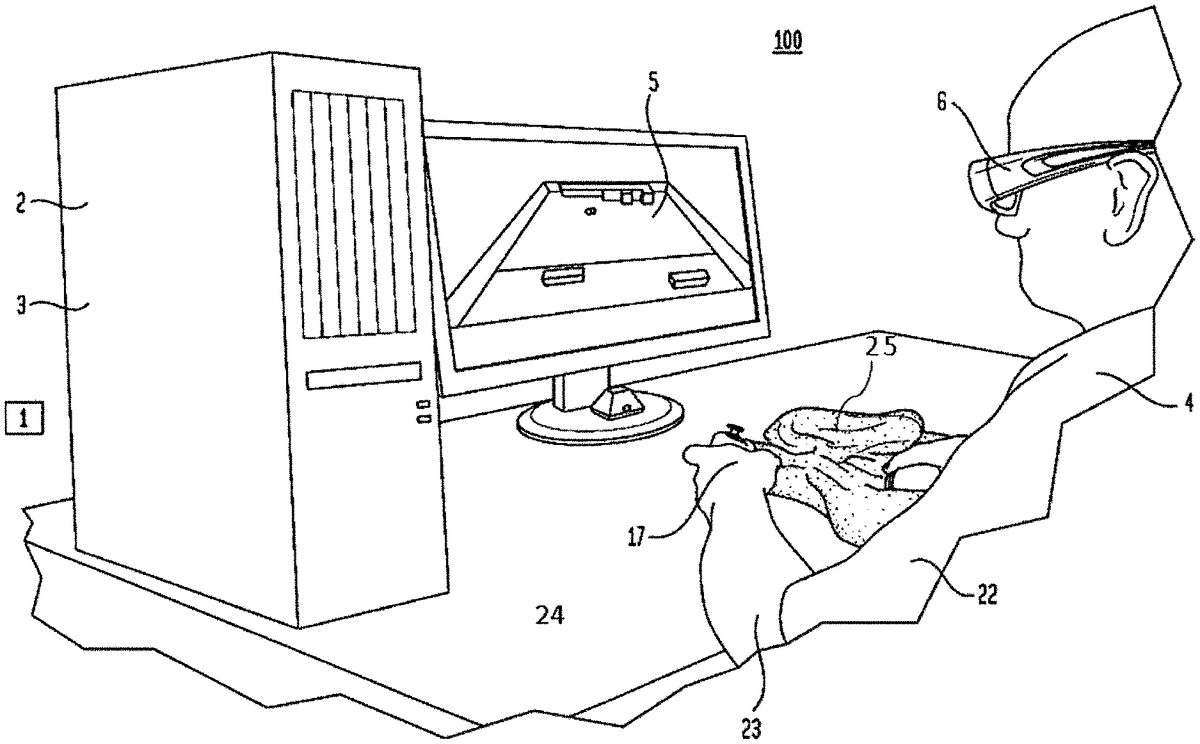

DESCRIPTION Referring toFIG. 1, the bimanual therapy system100consists of off-the shelf gaming hardware and a library of custom therapeutic games1written in Unity 3D Pro. See Reference 11. The games are rendered on a computer2, such as those available in commerce. For example, the games1can be rendered by an HP Z600 graphics workstation with an nVidia “Quadro 2000” graphics accelerator3, or subsequent models (FIG. 1). The graphics are in 3D, so to facilitate immersion and help the patient4in his manual tasks. Therefore the workstation2is connected to an Assus VG236H 3D monitor5, and the patient4wears a pair of nVidia “3D Vision” active stereo glasses6. A towel25is used to allow patient4to rest his arm23on table24. Alternately the games1may be rendered on a 2D “gamer” laptop computer7such as the HP Envy with 17 inch diameter screen and nVidia GeForce GT 750M graphics accelerator8or subsequent models. The same bimanual game controller9may be used (FIG. 2), and the laptop7may be placed on a cooling tray10, such as those available commercially. It is envisioned that other computers such as those “all-in-one” PCs available commercially, may be used as part of the bimanual integrative therapeutic system100. In one embodiment, the interaction with the games is mediated by a Razer Hydra bimanual interface (Reference 12) shown inFIG. 3a. It consists of two hand-held pendants11, each with a number of buttons12and a trigger13, and a stationary source14connected to the workstation2over an USB port15. The source14generates the magnetic field16which allows the workstation2to track the 3D position and orientation of each hand17in real time. Of the many buttons on the pendants11, the system100uses an analog trigger13so to detect the degree of flexion/extension of the patient's index fingers18. 
The pressing of these analog triggers13controls the closing/opening of hand avatars19, while the position/orientation of the hand avatars19is determined by the position/orientation of the corresponding ...

DESCRIPTION

Referring to FIG. 1, the bimanual therapy system 100 consists of off-the-shelf gaming hardware and a library of custom therapeutic games 1 written in Unity 3D Pro (see Reference 11). The games are rendered on a commercially available computer 2. For example, the games 1 can be rendered by an HP Z600 graphics workstation with an nVidia “Quadro 2000” graphics accelerator 3, or subsequent models (FIG. 1). The graphics are in 3D so as to facilitate immersion and help the patient 4 in his manual tasks. Therefore, the workstation 2 is connected to an Asus VG236H 3D monitor 5, and the patient 4 wears a pair of nVidia “3D Vision” active stereo glasses 6. A towel 25 allows the patient 4 to rest his arm 23 on the table 24.

Alternatively, the games 1 may be rendered on a 2D “gamer” laptop computer 7, or subsequent models. One example is the HP Envy with a 17-inch (diagonal) screen and an nVidia GeForce GT 750M graphics accelerator 8. The same bimanual game controller 9 may be used (FIG. 2), and the laptop 7 may be placed on a commercially available cooling tray 10. It is envisioned that other computers, such as commercially available “all-in-one” PCs, may be used as part of the bimanual integrative therapeutic system 100.

In one embodiment, interaction with the games is mediated by a Razer Hydra bimanual interface (Reference 12), shown in FIG. 3a. The interface has two parts:

- two hand-held pendants 11, each with a number of buttons 12 and a trigger 13;
- a stationary source 14 connected to the workstation 2 over a USB port 15.

The source 14 generates a magnetic field 16 that allows the workstation 2 to track the 3D position and orientation of each hand 17 in real time. Of the many buttons on the pendants 11, the system 100 uses the analog trigger 13 to detect the degree of flexion/extension of the patient's index fingers 18. Pressing these analog triggers 13 controls the closing/opening of the hand avatars 19. The position/orientation of each hand avatar 19 is determined by the position/orientation of the corresponding Hydra pendant 11. The Hydra is calibrated at the start of each session by placing the two pendants 11 next to the source 14. Its work envelope is sufficient to detect hand 17 position for a patient 4 exercising while seated.

Weights 11A that slip over the pendants 11 can be provided to increase the difficulty for the patient. The weights can take a variety of forms and can be attached to the pendants 11 (on both sides) by snaps, Velcro, or other mechanical attachments. Alternatively, weights 28 can be worn at the wrist.

Alternatively, the system 100 can use a Leap Motion hand controller 20, as shown in FIG. 3b (see Reference 13). In this case, interaction is through hand 17 gestures, without the need for pendants 11. The controller 20 emits infrared beams 21 that reflect off the hands 17 of the patient 4, and these reflections are used to detect hand 17 and finger 18 movement. It is appreciated that the patient must be able to fully flex/extend the fingers 18 for the controller 20 to measure them properly through its infrared beams 21.

Stroke patients 4 in the acute stage (just after the neural infarct) have weak arms 22. Similarly, chronic post-stroke patients may have a limited ability to support the arm against gravity. Some of them may also have spasticity (difficulty flexing/extending the elbows 23 or fingers 18). Using the Hydra 9 with this population is therefore different from normal play by healthy individuals. The adaptation in the present application is to place the weak arm 22 on a low-friction table 24, with a small towel 25 under the forearm 26 to minimize friction and facilitate forearm 26 movement (see FIG. 3c). Some spastic patients may have difficulty holding the Hydra pendant 11 in their spastic hand 17. For them, Velcro strips 27 are used to position the index finger 18 properly over the analog trigger 13.

Stronger patients 4, or those without motor impairment of the arms 22 or hands 17, can play the games 1 while wearing wrist weights 28. The added physical exertion is proportional to the size of the weights 28 and to the duration of the session played while wearing them. It is appreciated that elderly users 4 will feel more comfortable wearing smaller weights 28 (0.5 lb, 1 lb, 2 lb). FIG. 4 shows a patient 4 playing a game 1 with the Hydra pendants 11 while wearing wrist weights 28. It is envisioned that the size of the wrist weights 28 may be increased over the weeks of training, with larger weights worn in later weeks. It is also appreciated that the weights need not be the same for each arm; the weaker arm may have smaller weights.

The system 100 now has the commercial name BrightBrainer™. It is further envisioned that, while playing the cognitive games 1 on the system 100, the user 4 can also have an oxygen tube 29 to the nose 30. The oxygen tube 29 is of the type known in the art (transparent plastic): small, flexible, and unencumbering to the user 4. Providing extra oxygen to the blood brings extra oxygenation to the brain. This boosts brain activity, as it facilitates energy generation, which in turn helps neuronal activity. The oxygen is provided from a tank 30A.

In addition to (or instead of) wearing an oxygen tube 29, the user 4 may choose to take food supplements 31. Examples are dark chocolate, fatty fish, spinach, blueberries, walnuts, avocado, or increased water intake. Such food supplements 31 need to be taken some time before play on the system 100, so that they are metabolized and facilitate increased cognitive activity.

Therapeutic Games

Several games 1 were developed to be played either uni-manually or bimanually. This gives flexibility whether the therapy focus is motor retraining (using uni-manual mode) or integrative cognitive retraining (using bimanual mode). A multi-game therapy system 100 is needed for two reasons. Several cognitive areas must be addressed (through targeted games 1), and alternating games 1 during a session minimizes boredom.

In a sequence of sessions, the first sessions can be played uni-manually so that users 4 learn the games 1. Users then progress to using both arms 22. Finally, they wear weights 28 for increased exercise demands and blood flow to the brain. It is also envisioned that play will be shorter in the first sessions and progressively longer over the course of therapy.

Baselines

Each patient 4 is different, and each patient's condition varies from day to day. Baselines 40 are therefore needed to determine the patient's 4 motor capabilities and adapt the games 1 accordingly. The system 100 uses three baselines:

- two for arm range, 41 and 42;
- one for index finger flexion/extension 43, using the analog trigger 13 on the Hydra pendant 11.

As seen in FIG. 5a, the vertical baseline 41 asks the patient 4 to draw a circle 44 on a virtual blackboard 45. The software then fits a rectangle to the “circle” 44. This range is used to map the limited vertical range 46 of the arm 22 to the full vertical space of the game 1 scene. The horizontal baseline 42 (FIG. 5b) is similar, except that the patient 4 draws the circle 44 on a virtual table 47 covered by a large sheet of paper 48.

During bimanual play sessions, each arm 22 performs the baselines 41 and 42 in sequence. Each arm 22 therefore has its own gains 49 mapping real movement to avatar 19 movement in the virtual scene. As a result, the movements of the two hand avatars 19 appear equal (and normal) in the virtual world, which is designed to motivate the patient 4. A further reason to exaggerate the movement of the paretic arm 22 in VR is the positive role that image therapy has traditionally played. The patient 4 is looking at the display 5, not at the hand 17, and believes what he or she sees on the display. This technique is similar to that developed by Burdea et al. in U.S. application Ser. No. 12/422,254, “Method for treating and exercising patients having limited range of body motion,” which is incorporated herein by reference (see Reference 14).
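The range baselines and per-arm gains described above can be illustrated with a short Python sketch. It rests on three assumptions not stated in the patent:

- the tracker reports each hand position as an (x, y) pair on the baseline plane;
- the fitted rectangle is the axis-aligned bounding box of the traced points;
- the mapping to the scene is linear.

The class and method names are hypothetical and are not taken from the BrightBrainer software.

```python
from dataclasses import dataclass


@dataclass
class RangeBaseline:
    """Hypothetical per-arm range baseline: a rectangle fitted to the traced 'circle'."""
    x_min: float
    x_max: float
    y_min: float
    y_max: float

    @classmethod
    def fit(cls, points):
        """Fit the axis-aligned rectangle enclosing the points traced by one arm."""
        xs = [x for x, _ in points]
        ys = [y for _, y in points]
        if max(xs) == min(xs) or max(ys) == min(ys):
            raise ValueError("baseline trace has zero extent on one axis")
        return cls(min(xs), max(xs), min(ys), max(ys))

    def to_scene(self, x, y, scene_w, scene_h):
        """Map a real hand position to scene coordinates using this arm's gains.

        gain = scene extent / measured extent, so a small real range is
        stretched to cover the whole scene.
        """
        gain_x = scene_w / (self.x_max - self.x_min)
        gain_y = scene_h / (self.y_max - self.y_min)
        sx = (x - self.x_min) * gain_x
        sy = (y - self.y_min) * gain_y
        # Clamp so movements beyond the baseline keep the avatar inside the scene.
        return min(max(sx, 0.0), scene_w), min(max(sy, 0.0), scene_h)


# Each arm gets its own baseline, hence its own gains (illustrative traces).
weak_arm = RangeBaseline.fit([(0.0, 0.0), (0.5, 0.25), (0.25, 0.5)])
strong_arm = RangeBaseline.fit([(0.0, 0.0), (2.0, 1.0), (1.0, 2.0)])
```

In this example the weak arm's trace spans only a quarter of the strong arm's range. Even so, reaching the edge of its own rectangle moves its avatar to the same scene position as the strong arm's full reach.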

The third baseline 43 measures the range of movement of the index finger 18 of each hand 17. The range baselines 41 and 42 are done in sequence, but the index baseline 43 is done simultaneously for both hands 17. As seen in FIG. 5c, the patient sees two spheres 50 that move vertically between target blocks 51, in proportion to the index 18 movement on each pendant trigger 13. The index baseline proceeds in steps:

1. The patient 4 is instructed to flex, and the two balls 50 move up a certain percentage of full range. The baseline displays the finger-specific percentage 52 of full motion.
2. The patient 4 is asked to extend the index finger 18 of each hand 17. The balls 50 move down, again a certain percentage 52 of full range (FIG. 5d).

For spastic patients 4, the paretic index finger 18 will have little difficulty flexing but substantial difficulty extending. The limited range of the paretic index finger 18 and the full range of the non-paretic one are then both mapped to the hand avatars 19. The two hand avatars 19 will thus show full flexion and full extension during the games 1.
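The per-hand index mapping can be sketched as a linear rescaling of the trigger reading between the two baseline readings. The sketch assumes trigger values normalized so that 0.0 is released and 1.0 is fully pressed. The linear form and the name `finger_closure` are hypothetical.

```python
def finger_closure(trigger, extended, flexed):
    """Map a raw trigger reading to avatar hand closure in [0, 1].

    trigger:  current reading (assumed 0.0 = released, 1.0 = fully pressed)
    extended: reading recorded when the patient extended the index (baseline)
    flexed:   reading recorded when the patient flexed the index (baseline)
    """
    span = flexed - extended
    if span <= 0:
        raise ValueError("flexion reading must exceed extension reading")
    closure = (trigger - extended) / span
    # Clamp: readings outside the baseline range saturate at fully open/closed.
    return min(max(closure, 0.0), 1.0)


# A spastic (paretic) index that cannot extend past 0.5, and a healthy one.
paretic = dict(extended=0.5, flexed=1.0)
healthy = dict(extended=0.0, flexed=1.0)
```

With these baselines, the paretic index at its best extension (0.5) opens its avatar hand fully. The healthy index does the same at 0.0.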

Games to Train Focusing

Two games were developed to train the patient's 4 ability to focus. The Kites game 60 presents two kites 61, 62 flying over water 63 while the sound of wind is heard (FIG. 6a). One kite is green and one is red, and each has to be piloted through like-colored target circles 64, 65. The circles 64, 65 change color and screen position at random. The difficulty of the game 60 is modulated by:

- the speed of the circles 64, 65;
- the duration of the game 60;
- the visibility 66 (a foggy sky gives less time to react);
- the presence of air turbulence (acting as a disturbance 67).

The game 60 calculates the percentage 68 of targets entered vs. those available. It displays this percentage at the end of the game 60 as summative feedback on performance (FIG. 6b).

The Kites game 60 uses the following score to objectively measure the patient's 4 performance:

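$$\text{Score} = \text{Success\%} \times s_{kite} \times f_r \times \left(\frac{100}{100 - d_f}\right) \times (1.2 \text{ if bimanual}) \qquad (1)$$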

In this game, the success rate is the percentage of rings caught 68. It is multiplied by two predefined parameters, kite 61 speed ($s_{kite}$) and ring 64 frequency ($f_r$, the number of rings per unit time), because each of them increases the difficulty of the game 60. The term in parentheses accounts for the fog density ($d_f$) and gives a higher multiplier for denser fog 66. All parameters other than the success rate are predefined at the start of the game 60, so the final score is directly proportional to the number of rings 64 hit. Finally, bimanual mode earns a 20% bonus. Bimanual play is harder because it introduces new sources of error, such as hitting the ring 64 with the wrong kite 61.
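The Kites score above translates directly into code. The sketch makes two assumptions:

- fog density is expressed on a 0 to 100 scale, as implied by the 100/(100 - d_f) term;
- uni-manual play uses a multiplier of 1.

The function name is hypothetical.

```python
def kites_score(success_pct, kite_speed, ring_freq, fog_density, bimanual):
    """Kites score: success % x kite speed x ring frequency x fog term x bimanual bonus.

    fog_density is assumed to lie in [0, 100); denser fog raises the multiplier.
    """
    if not 0 <= fog_density < 100:
        raise ValueError("fog density must be in [0, 100)")
    score = success_pct * kite_speed * ring_freq * (100 / (100 - fog_density))
    # 20% bonus for bimanual mode, no bonus otherwise.
    return score * 1.2 if bimanual else score
```

For the same 80% success rate, raising the fog density from 0 to 50 raises the fog term from 1 to 2 and so doubles the score. Playing bimanually then multiplies that result by 1.2.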

The Breakout 3D game 70 is a bimanual adaptation of the game developed earlier by Burdea's group for uni-manual training on the Rutgers Arm system (see Reference 15). The scene (FIG. 7a) depicts an island 71 with an array of crates 72 placed in a forest clearing. Two paddle avatars 73, 74 of different colors are located on each side of the crates 72, each controlled by one of the patient's hands 17. The patient 4 needs to bounce a ball 75 with either paddle 73, 74 to keep it in play and attempt to destroy all the crates 72. The ball 75 is allowed to bounce off several crates, destroying one crate 72 at each bounce. This is the preferred implementation when cognitive training is the primary focus of the game 70. Motor retraining may instead be the primary focus (such as for patients 4 post-stroke). In that case the ball 75 may destroy only one crate 72 after each bounce off the paddle avatar 73 or 74. This ensures increased arm 22 movement demands for a given number of crates 72 to be destroyed. The sound of waves is added to help the patient 4 relax. The difficulty of the game 70 is modulated by the speed of the ball 75, the size of the paddles 73, 74, and the number of crates 72 to be destroyed in the allowed time.

In a different version of the Breakout 3D game 70, the paddle avatars 73, 74 are close to the patient 4 and the crates 72 are farther away. In this version of the game 70, the predominant arm 22 movement is left-right (FIG. 7b). A fence 76 is located at the middle of the court 77 to prevent one paddle avatar 73, 74 from entering the other avatar's space. This feature ensures that the patient 4 uses both arms 22 to play the game 70. The score for Breakout 3D is given by:

$$\text{Score} = \text{crates}_{hit} \times \frac{v_{ball}}{l_{paddle}} \times \frac{1}{\log(\text{lost\_balls} + 2)} \qquad (2)$$

The number of points awarded for each destroyed crate 72 depends on the preset parameters Ball_speed ($v_{ball}$) and Paddle_length ($l_{paddle}$). It also depends on the number of balls 75 lost. Because the logarithm is an increasing function, losing balls 75 always carries a penalty. Yet, as more balls 75 are lost, the penalty grows at a progressively slower rate, which lets players 4 of lesser skill achieve better scores. The number 2 is added to prevent division by zero when no balls 75 were lost, since adding only 1 would give log(1) = 0.
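A sketch of the Breakout 3D score follows. The source does not state the logarithm base, so natural logarithms are assumed here. Changing the base only rescales every score by the same constant factor. The function name is hypothetical.

```python
import math


def breakout_score(crates_hit, ball_speed, paddle_length, lost_balls):
    """Breakout 3D score: faster balls and shorter paddles earn more per crate.

    Dividing by log(lost_balls + 2) penalizes lost balls, with a penalty that
    grows ever more slowly; the +2 keeps the logarithm positive (log(1) = 0).
    """
    return crates_hit * (ball_speed / paddle_length) / math.log(lost_balls + 2)


# Same crates, speed and paddle; 0 to 6 balls lost.
scores = [breakout_score(10, 2.0, 1.0, lost) for lost in range(7)]
```

Each extra lost ball lowers the score, but losing the sixth ball costs far less than losing the first.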

Games to Train Memory

The first memory game is Card Island 80 (FIG. 8), again a bimanual version of a game previously used in uni-manual training on the Rutgers Arm system. The patient 4 is presented with an island 81 and an array of cards 82 placed face down on the sand 83. A central barrier 84 divides the array of cards 82 symmetrically, so each hand avatar 19 has to stay on its half of the island 81. When a hand avatar 19 overlaps a card 82, the patient 4 can turn it face up by squeezing the Hydra pendant trigger 13. Once a card is turned, a voice recites the name of the card image. The task is to take turns turning cards 82 face up so as to find matching pairs. Non-matching cards 82 turn face down again, so the patient 4 has to remember where a given card 82 was seen before. This trains short-term visual and auditory memory. Once a card 82 has been seen, its back changes color, which serves as a cognitive aid to the patient 4. The difficulty of the game 80 depends on the number of cards 82 in the array and on the time allowed to find all card pairs.

Card Island is scored by:

$$\text{Score} = \left(\text{Correct\_matches} - \frac{\text{Errors}}{2}\right) \times \frac{\text{Deck\_size}}{\log(\text{Play\_time})} \qquad (3)$$

An incorrect match deducts points equal to half of a correctly matched pair. This gives the player 4 a second chance to correct his or her mistake. Slower players 4 are treated leniently, because play time (measured in seconds) enters the score only through its logarithm. The amount of leniency also depends on the starting deck 82 size. Lastly, bimanual play mode earns no performance bonus, because the difficulty of this game lies in the player's 4 short-term visual and auditory memory.
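The Card Island score can be sketched as follows. The sketch assumes a natural logarithm. It also adds a guard for play times of 1 s or less, where log(play time) would be zero or negative; the patent does not address that case. The function name is hypothetical.

```python
import math


def card_island_score(correct_matches, errors, deck_size, play_time_s):
    """Card Island score: each error costs half a matched pair.

    Play time (seconds) enters through its logarithm, so slower players are
    penalized only mildly; times of 1 s or less would make log(play_time) <= 0.
    """
    if play_time_s <= 1:
        raise ValueError("play time must exceed 1 second")
    return (correct_matches - errors / 2) * (deck_size / math.log(play_time_s))
```

Two errors cost exactly as much as one missing correct match. Doubling the play time lowers the score only modestly.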

Remember this card 90 (FIG. 9a) is a game that trains long-term visual and auditory memory. It is a delayed-recall game, played in two parts 91, 92 separated by other games:

1. In the first part 91, the patient 4 is presented with a number of cards 93 placed face down. Each card needs to be turned face up, at which time a sound associated with the image on the card is played. For example, if the card 93 depicts a phone booth 94, a ring tone 95 is played.
2. After all cards 93 have been explored, the patient 4 selects one by flexing the hand avatar 19 over the card. The patient is then prompted with the text “Remember this card”.
3. After a number of other games are played, the second part 92 of the game appears. Its scene shows the previously explored cards 93, this time lined up face up.
4. The patient 4 is asked to select the card he or she had been asked to remember. If the attempt is unsuccessful, the text “Oups, nice try!” 95 appears (FIG. 9b); otherwise the patient 4 is congratulated for remembering correctly.

The difficulty of the game 90 is modulated by the number of card choices 93. It also depends on the number of other games interposed between the two parts 91, 92.

The score is:

$$\text{Score} = \frac{50 \times \text{Number\_of\_cards}}{\log(\text{Recall\_time} + 2)} \qquad (4)$$

The score scales linearly with the number of cards 93 and is lenient on the time taken to recall and choose the correct card. The recall time, measured in seconds, is the time the patient 4 takes to pick the previously selected card from among those shown. For any given number of cards 93, a player 4 who chooses the correct card faster will always score higher than a slower player. However, recall time enters through a logarithm, so the score falls ever more slowly as recall time grows. The gap between slower players therefore stays small, however long they take to remember the original card. Again, 2 (seconds) is added to the recall time to prevent division by zero.
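A minimal sketch of the Remember this card score, assuming a natural logarithm. The function name is hypothetical. The three recall times in the example are arbitrary values chosen to show the diminishing time penalty.

```python
import math


def recall_score(number_of_cards, recall_time_s):
    """Remember-this-card score: 50 points per card, softened by log recall time.

    Adding 2 s keeps the logarithm positive even for an instantaneous recall.
    """
    return 50 * number_of_cards / math.log(recall_time_s + 2)


# Same six cards, recalled after 0, 10 and 20 seconds.
fast, medium, slow = (recall_score(6, t) for t in (0, 10, 20))
```

Slowing down from 10 s to 20 s costs much less than slowing down from 0 s to 10 s. Doubling the number of cards doubles the score.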

Game to Train Executive Function

The Tower of Hanoi 3D game 110 is similar to the versions of the game played online with a mouse. The patient 4 has to restack a pile of disks 111 of different diameters from one pole 112 to another pole 113, using a third pole 114 as a way-point. The game 110 trains decision making/problem solving through one rule: no larger disk 111 may be placed on top of a smaller one.

In the version of the game 110 for bimanual therapy, the scene shows two hand avatars 19, one green and one red, and similarly colored red and green disks 111 (FIG. 10). Each hand avatar 19 may manipulate only disks 111 of its own color. The game 110 randomly chooses green or red for the smallest disk and gives the other color to the remaining disks. In this configuration, both hands 17 make approximately the same number of moves. In the optimal solution the smallest disk moves on every other move, that is, $2^{n-1}$ of the $2^n - 1$ moves for $n$ disks. The difficulty of the game 110 depends on the number of disks 111 (2 for easy, 3 for medium, 4 for difficult). The game 110 counts the moves and compares them to the ideal (smallest) number of moves needed to complete the task, which is $2^n - 1$ for $n$ disks. Cognitively, solving the problem in an economical (minimal) number of moves indicates good problem-solving skills.
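The claim that both hands make about the same number of moves can be checked with a standard recursive Tower of Hanoi solver. In this sketch the color of the smallest disk is passed in rather than chosen randomly. The function names are hypothetical.

```python
def hanoi_moves(n, src="A", dst="C", via="B"):
    """Optimal move list as (disk, from_pole, to_pole); disk 1 is the smallest."""
    if n == 0:
        return []
    return (hanoi_moves(n - 1, src, via, dst)
            + [(n, src, dst)]
            + hanoi_moves(n - 1, via, dst, src))


def moves_per_hand(n, smallest_color="green"):
    """Count optimal moves per hand when only the smallest disk has its own color."""
    other_color = "red" if smallest_color == "green" else "green"
    counts = {smallest_color: 0, other_color: 0}
    for disk, _, _ in hanoi_moves(n):
        counts[smallest_color if disk == 1 else other_color] += 1
    return counts
```

For 3 disks the optimal 7 moves split 4 to 3 between the hands. For 4 disks the 15 moves split 8 to 7.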

The score is:

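$$\text{Score} = \frac{150 \times \text{disks} \times (1.2 \text{ if bimanual})}{\log(\text{moves} - 2^{\text{disks}} + 3) \times \log(\text{Play\_time})} \qquad (5)$$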

If a patient 4 is unable to complete the game 110, we assign a flat score of 100 to maintain patient 4 motivation. In this game, each disk 111 is worth 150 points, with a 20% increase in bimanual play mode for its greater difficulty and new sources of error. This number is divided by a product of two logarithms, for leniency:

- the first compares the number of moves made by the patient 4 against the optimal solution;
- the second factors in the time taken to solve the task.

The optimal solution takes $2^{\text{disks}} - 1$ moves. The argument $\text{moves} - 2^{\text{disks}} + 3$ therefore equals the number of excess moves plus 2, so it is never smaller than 2.
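A sketch of the Tower of Hanoi score follows. It assumes natural logarithms and adds two guards not in the patent: a move count below the optimum is rejected, and play times of 1 s or less are rejected so that log(play time) stays positive. The function name is hypothetical.

```python
import math


def hanoi_score(disks, moves, play_time_s, bimanual, completed=True):
    """Tower of Hanoi score: 150 points per disk, softened by two logarithms."""
    if not completed:
        return 100.0  # flat score to maintain motivation
    min_moves = 2 ** disks - 1
    if moves < min_moves:
        raise ValueError("fewer moves than the optimal solution is impossible")
    if play_time_s <= 1:
        raise ValueError("play time must exceed 1 second")
    points = 150 * disks * (1.2 if bimanual else 1.0)
    # moves - 2**disks + 3 == (moves - min_moves) + 2, so this log is >= log 2.
    return points / (math.log(moves - 2 ** disks + 3) * math.log(play_time_s))
```

Solving 3 disks in the optimal 7 moves scores higher than solving them in 12 moves in the same time. Bimanual play scales the score by exactly 1.2.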

Dual Tasking and Therapy Gradation

As stated before, dual tasking is typically problematic for older populations (whether stroke survivors or not). Some of the games therefore have embedded dual-tasking features, notably Breakout 3D 70. When the dual-tasking parameter is set, the behavior of the paddle avatars 73, 74 depends on whether the trigger 13 is squeezed during movement:

- When a momentary squeeze is required, the patient 4 has to squeeze the trigger 13 at the moment of the bounce. Otherwise the ball 75 passes through the paddle 73 or 74 and is lost.
- When a sustained grasp is required, the paddle 73, 74 is decoupled from the pendant 11 whenever the trigger 13 is not squeezed. The patient 4 has to remember to keep squeezing in order to move the paddle 73, 74 and bounce the ball 75.

Sustained squeezing may be fatiguing and may cause discomfort for some patients 4. The game 70 therefore counts an index 18 flexion as a squeeze once it exceeds a threshold set as a percentage of range. This threshold is based on the finger 18 flexion baseline 40 previously described (FIG. 5).
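The squeeze classification and the sustained-grasp decoupling can be sketched together. The sketch assumes two things not specified in the patent:

- trigger readings are normalized to [0, 1];
- the threshold is a linear percentage of the patient's baseline range.

The function names and the 30% threshold in the example are hypothetical.

```python
def is_squeeze(trigger, extended, flexed, threshold_pct):
    """True if the flexion exceeds threshold_pct of this patient's baseline range."""
    span = flexed - extended
    if span <= 0:
        raise ValueError("flexion reading must exceed extension reading")
    return (trigger - extended) / span * 100 >= threshold_pct


def paddle_follows_pendant(trigger, extended, flexed, threshold_pct, sustained_grasp):
    """In sustained-grasp mode the paddle moves only while the trigger is squeezed."""
    if not sustained_grasp:
        return True
    return is_squeeze(trigger, extended, flexed, threshold_pct)
```

Take a patient whose baseline spans 0.5 to 1.0 with a 30% threshold. A reading of 0.75 counts as a squeeze, because it is 50% of that patient's range; a reading of 0.55 does not.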

Naturally, the squeezing requirement further increases the difficulty of the game 70. The weeks of therapy are therefore graded in session duration and game difficulty:

- Sessions last 30 minutes in week 1, 40 minutes in week 2, and 50 minutes in the remaining weeks.
- The games in week 1 are uni-manual, to familiarize the patient 4 with the system 100 and its games 1.
- The difficulty of the games 1 is then gradually increased, switching to bimanual mode in week 2 or later.
- The dual-tasking condition is introduced in the last 3 weeks.

The aim is to always challenge the patient 4 and offer variety, while keeping the games 1 winnable so that motivation stays high.

Arm 22 and Index Finger 18 Repetitions 120

It is known in the art that the number of movement repetitions 120 within a task is crucial to induce brain plasticity. In the system 100 described here, the tasks are dictated by the different games 1, and the system 100 measures the number of repetitions 120 during play. Repetitions 120 are counted separately for each arm and each index finger 18 (right, left). They are summed for the session by totaling the repetitions of each game 1. The number of repetitions 120 indicates endurance, while the intensity of play is expressed as repetitions per unit time. Arm endurance and therapy intensity are useful tools for the therapist.
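Per-session totals and intensity can be computed as below. The limb keys (`right_arm`, `left_index`, and so on) are hypothetical labels. The use of minutes is an assumption, since the text says only "per unit time". The counts are illustrative, not study data.

```python
from collections import Counter


def session_totals(per_game_reps):
    """Sum per-limb repetition counts over all games played in one session."""
    totals = Counter()
    for game in per_game_reps:
        totals.update(game)  # Counter.update adds counts key by key
    return dict(totals)


def intensity(repetitions, session_minutes):
    """Intensity of play as repetitions per minute."""
    return repetitions / session_minutes


session = session_totals([
    {"right_arm": 40, "right_index": 12},                # uni-manual game
    {"right_arm": 25, "left_arm": 30, "left_index": 9},  # bimanual game
])
```

For a 30-minute session, the right arm's 65 repetitions give an intensity of about 2.2 repetitions per minute.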

In group therapy, the repetitions 120 may be averaged over the group of patients 4 for a given session. FIG. 11 depicts a graph of the left and right arm 22 repetitions 121, 122 over a sequence of sessions 123. Over the first 7 sessions, the right arm repetitions 121 grow while the left arm 22 is motionless. During these 7 sessions the games 1 were played in uni-manual mode, so only one arm was used. The right arm 22 repetitions 121 increase because sessions grew longer, meaning more games were played. Once the games 1 started being played with both arms 22, the left arm 22 repetitions 122 rose steeply, while the right arm repetitions 121 fell somewhat. Eventually, both arms share game play about equally.

At each session, the group's average arm repetitions value is associated with a standard deviation. The standard deviation reflects the spread of the group members' total repetitions in a given session 123. Typically, the standard deviations are larger in the initial sessions and smaller in later sessions. This indicates more uniform effort among group members in later sessions.
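The per-session group statistics can be computed with Python's `statistics` module. The patent does not say whether the sample or the population standard deviation is meant; the sample version (`stdev`) is assumed here. Session labels and counts are illustrative, not study data.

```python
from statistics import mean, stdev


def group_summary(reps_by_session):
    """Group mean and sample standard deviation of per-patient totals, per session."""
    return {session: (mean(totals), stdev(totals))
            for session, totals in reps_by_session.items()}


summary = group_summary({
    1: [100, 120, 140],   # early session: wide spread between patients
    12: [200, 210, 220],  # later session: more uniform effort
})
```

In this example the spread falls from 20 to 10 repetitions while the mean rises. This matches the pattern described above, where effort becomes more uniform in later sessions.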

Discussion

A pilot feasibility study took place with two elderly participants who were in the chronic phase of stroke and had arm/hand spasticity (See Reference 16).

The pilot feasibility study aimed to determine technology acceptance and any clinical benefits of training on BrightBrainer™ in the cognitive and emotive domains. A blinded neuropsychologist consultant measured these using standardized tests. Results showed excellent technology acceptance and benefits to the two patients 4 in various cognitive domains. One patient had reduced depression following the therapy.

Subsequently, a larger study with 10 elderly nursing home residents took place in summer 2013 (see Reference 17). Eight of the participants 4 had dementia and one had severe traumatic brain injury. They played the games described above plus three new games we developed: Pick-and-Place bimanual 130, Xylophone bimanual 140, and Musical Drums bimanual 150.

The Pick-and-Place bimanual game 130 (FIG. 12) depicts two hand avatars 19. Each avatar needs to pick a ball 136 from three possible choices 131, 132 and move it to target areas 133, 134 along prescribed (ideal) paths 135. Each time a ball 136 is correctly moved to a target 133, 134, a different sound is played. The patient 4 can move one arm 22 at a time, controlling one hand avatar 19 holding a ball 136. Alternatively, the patient can move both arms 22 at the same time (a more difficult task). The difficulty of the game 130 depends on the number of required repetitions. An error is counted whenever the wrong ball is picked up. The Pick-and-Place game trains hand-eye coordination. Dual tasking may be introduced by requiring the patient to squeeze the Hydra trigger 13 to keep the ball 136 grasped by the hand avatar 19.

In the Xylophone game 140 (FIG. 13), the patient 4 controls two hammer avatars 141 and needs to hit keys 142 to create a sound (play a note). The patient 4 is tasked with reproducing sequences of notes by playing the instrument keys 142 in the correct order. The difficulty of the game depends on the length of the note sequences to be reproduced and on the total time available to complete a series of note sequences.

Another game is Musical Drums 150 (FIG. 14). Notes 151 scroll across the screen, and the patient 4 scores points by hitting each note with a hammer avatar 141 when it overlaps a drum 152. The difficulty of the game 150 increases with the tempo of the song 153 being played, which makes the notes 151 scroll faster across the screen. Difficulty increases further when notes scroll across more drums 152.

Patients played the games twice per week for 8 weeks. To measure clinical benefit, a blinded neuropsychologist administered standardized tests before and after the 8 weeks of therapy. These tests showed a statistically significant group improvement in decision-making capacity and a borderline significant reduction in depression.

In summary, one aspect of the present invention is a method of providing therapy to a patient having a first arm, a first hand, a second arm, and a second hand. The method includes:

- executing a video game on a computer and portraying action from the video game on a display, the action being viewable by the patient;
- the patient holding a first component of a game controller in the first hand, manipulating an interface on the first component with the first hand, and moving the first component with the first hand and the first arm to control the video game;
- the patient holding a second component of the game controller in the second hand, manipulating an interface on the second component with the second hand, and moving the second component with the second hand and the second arm to control the video game.

The first component of the game controller is separate from the second component and can be moved independently of it. The game controller reports one or more signals to the computer. These signals represent a position of the interface on the first component, a position of the interface on the second component, a motion of the first component, and a motion of the second component. The computer analyzes the one or more signals and controls the video game to control the action portrayed on the display.

The video game can also control the computer to cause a displayed object to include one of two codes. A first code indicates that the displayed object can be moved with the first component of the controller. A second code indicates that it can be moved with the second component of the controller. Preferably, the two codes are different colors.

While the game is played, the computer monitors and stores a set of information from the first component and the second component of the controller. The set of information includes:

- activation of the interface (button and trigger) on the first component of the controller;
- movement of the first component of the controller;
- activation of the interface on the second component of the controller;
- movement of the second component of the controller.

The computer controls the video game and the resulting action on the display in accordance with the set of information. The computer also analyzes the set of information to determine the progress of the patient. In one embodiment of the present invention, the computer makes the action caused by the first component of the controller the same as the action caused by the second component. This holds even if one of the arms is impaired and does not perform as well. As explained before, extra oxygen can be fed to the patient from an oxygen tank while the patient manipulates the first component and the second component. Also as explained before, the patient can wear wrist weights on the first arm, on the second arm, or on both arms while manipulating the two components. Alternatively, weights can be added to the first component of the game controller, to the second component, or to both. The handheld components can be modified to have the weights attached to them. The two weights need not have the same value for the two arms.

In accordance with an aspect of the present invention, the computer controls a video game avatar object in response to activation of the interface (button and/or trigger) on each of the handheld components of the controller. The avatar object can be controlled by movement of each handheld component of the controller. Alternatively, one avatar object can be controlled by the movement of the first (say, the left) handheld component, and another avatar object by the movement of the second (say, the right) handheld component. Thus, the computer can make one video game avatar object respond to movement of the first component of the controller if the button or the trigger on the first component is pressed. It can likewise make another video game avatar object respond to movement of the second component if the button or the trigger on the second component is pressed.

A system of providing therapy to a patient having a first arm, a first hand, a second arm and a second hand, is also provided as explained above.

In accordance with another aspect of the present invention, screening software and a method of evaluation/triage may be used with the hardware described above for cognitive screening; this software has the commercial name BrightScreener™. In one embodiment of the invention, BrightScreener is a portable (laptop-based) serious-gaming system which incorporates a bimanual game interface for more ecological interaction with virtual worlds. It is envisioned that BrightScreener may use wireless bimanual interfaces 780 such as the STEM system developed by Sixense Entertainment (Reference No. 25) (FIG. 15) or similar interfaces.

In another embodiment of this invention, BrightScreener software may run on an all-in-one touch-sensitive PC 781, and sound may be played on headphones 3003 so as to better insulate the person being screened from distracting ambient sounds.

The games used by BrightScreener may be cognitive-domain specific: some games train short-term visual and auditory memory, others focused attention, others divided attention, and others problem solving/executive function. A combination of these games targeting different domains should cover a breadth of cognitive areas, analogous to how paper-and-pencil cognitive tests contain groups of questions that target different mental indicators. A sample of select games used to develop a comprehensive cognitive screening that does not rely on hearing or speech is shown in FIG. 16 (Reference No. 29).

Breakout 3D (FIG. 16a) asks subjects to bounce balls 800, alternating between the right paddle 805 and left paddle 806 avatars, so as to destroy rows of crates 810 placed on an island. The game tests executive function through reaction time (processing speed) and task sequencing, as well as attention.

Card Island (FIG. 16b) asks subjects to pair cards 820 arrayed face down on the sand 825. Hand avatars 830 are used to select and turn cards 820 face up (two at a time) when a pendant trigger button is pressed. Placing the game on an island integrates the sound of waves, so as to further relax the subjects. The Card Island game tests short-term visual and auditory memory and attention.

Tower of Hanoi 3D (FIG. 16c) is a Bright Cloud International version of a well-known cognitive game, normally played online with a mouse. The subject is asked to restack disks 840 of varying diameters from one pole 842 to another pole 842, using the third pole 842 as a way-point. The complexity of the task stems from the requirement that a larger disk may never be placed on top of a smaller one. A disk is picked up by overlapping it with a hand avatar 830 and squeezing the trigger to flex the hand fingers. In bimanual mode there are two hand avatars, one colored green and one red, and the disks are similarly colored. Each hand avatar can only manipulate like-colored disks. The game tests executive function (task sequencing and problem solving).
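The move rules above can be sketched as a legality check. This is a minimal illustration, not Bright Cloud International's implementation: the `HanoiState` class, the `is_legal_move` and `apply_move` helpers, and the assignment of colors to individual disks are all hypothetical, since the description states only that the disks are colored like the two hand avatars.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional


@dataclass
class HanoiState:
    """Hypothetical game state: three poles, each a list of disk sizes, bottom to top."""
    poles: List[List[int]] = field(default_factory=lambda: [[], [], []])
    # Hypothetical mapping from disk size to color ("green" or "red") for bimanual mode.
    disk_colors: Dict[int, str] = field(default_factory=dict)


def is_legal_move(state: HanoiState, src: int, dst: int,
                  hand_color: Optional[str] = None) -> bool:
    """Check whether the top disk of pole `src` may be placed on pole `dst`.

    `hand_color` is None in uni-manual mode; in bimanual mode it is the color
    of the hand avatar attempting the move.
    """
    if src == dst or not state.poles[src]:
        return False
    disk = state.poles[src][-1]
    # Bimanual rule: each hand avatar may only manipulate like-colored disks.
    if hand_color is not None and state.disk_colors.get(disk) != hand_color:
        return False
    # Classic rule: a larger disk may never be placed on top of a smaller one.
    target = state.poles[dst]
    return not target or target[-1] > disk


def apply_move(state: HanoiState, src: int, dst: int,
               hand_color: Optional[str] = None) -> bool:
    """Perform the move if it is legal; return whether it was performed."""
    if not is_legal_move(state, src, dst, hand_color):
        return False
    state.poles[dst].append(state.poles[src].pop())
    return True


# Three disks on the first pole (largest at the bottom); colors chosen arbitrarily.
state = HanoiState(poles=[[3, 2, 1], [], []],
                   disk_colors={1: "green", 2: "red", 3: "green"})
print(apply_move(state, 0, 2, "green"))  # True: disk 1 moves to the third pole
print(apply_move(state, 0, 2, "red"))    # False: disk 2 may not sit on disk 1
print(apply_move(state, 0, 1, "green"))  # False: disk 2 is red
```

Keeping the color check separate from the size check mirrors the description: uni-manual play uses only the classic rule, and bimanual play adds the like-color constraint on top of it.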

Finally, in Pick and Place (FIG. 16d) the subject has to pick up a ball 800, from several available, and place it on a like-colored target square 850. While the ball is en route to the target, the movement of the hand avatar is traced 855. The game tests working memory and divided attention when played in bimanual mode. Other games have been envisioned as part of the BrightScreener system.
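The description states only that the hand-avatar path is traced (855), and later that movement precision may be recorded; it does not define a precision measure. One common kinematic choice, assumed here purely for illustration, is path efficiency: the straight-line distance from the first to the last tracked sample divided by the distance actually travelled. The function names and the (x, y, z) sample format are hypothetical.

```python
import math
from typing import Sequence, Tuple

Point = Tuple[float, float, float]


def path_length(trace: Sequence[Point]) -> float:
    """Total distance travelled along consecutive tracked samples."""
    return sum(math.dist(a, b) for a, b in zip(trace, trace[1:]))


def path_efficiency(trace: Sequence[Point]) -> float:
    """Straight-line distance divided by travelled distance (1.0 = perfectly direct)."""
    if len(trace) < 2:
        raise ValueError("at least two tracked samples are required")
    travelled = path_length(trace)
    if travelled == 0.0:
        return 1.0  # the hand did not move; treat as trivially direct
    return math.dist(trace[0], trace[-1]) / travelled


direct = [(0.0, 0.0, 0.0), (3.0, 4.0, 0.0)]
detour = [(0.0, 0.0, 0.0), (3.0, 0.0, 0.0), (3.0, 4.0, 0.0)]
print(path_efficiency(direct))  # 1.0
print(path_efficiency(detour))  # 5 / 7, about 0.714
```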

Each game used by BrightScreener may have several levels of difficulty, with the most basic setting using one hand controller (uni-manual mode). The remaining levels may require both hand controllers (bimanual mode). At successive levels, Breakout 3D, for example, may become more difficult through an increase in ball speed and a decrease in the size of the paddle avatars used to bounce the ball. Card Island may become more complex by increasing the number of cards to be paired. Similarly, the number of disks in Tower of Hanoi 3D increases with successive difficulty levels (two disks, then three, then four, and so on). Pick and Place increases difficulty by increasing the number of targets and removing the visual cues that subjects use to match ball and target colors and complete the task.

When subjects are new to BrightScreener, it may be beneficial to have a tutorial session before the actual cognitive evaluation session. During a tutorial session subjects play the games (such as those previously described), and each game may be played several times at progressively harder levels of difficulty. The subjects may cycle through all games at the most basic level of difficulty before progressing to the next level of each game type, and so on. In alternate configurations, a subject may play the same game multiple times in succession before progressing to a different game. Additionally, the level of difficulty in successive instances of playing a game may be based on performance in previous instances of playing that game. Furthermore, the level of difficulty may progress or regress depending on performance during the course of continuous play of a game.
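The two session orderings and the progress-or-regress rule above can be sketched as follows. This is illustrative only: the function names, the four-level default and the 70/40 score thresholds are hypothetical, because the description does not specify how performance maps to level changes.

```python
from typing import List, Tuple


def cycle_through_games(games: List[str], levels: int) -> List[Tuple[str, int]]:
    """All games at level 1, then all games at level 2, and so on."""
    return [(game, level) for level in range(1, levels + 1) for game in games]


def game_by_game(games: List[str], levels: int) -> List[Tuple[str, int]]:
    """Every level of one game before moving on to the next game."""
    return [(game, level) for game in games for level in range(1, levels + 1)]


def next_level(current: int, score: float, max_level: int = 4,
               advance_at: float = 70.0, regress_below: float = 40.0) -> int:
    """Hypothetical staircase rule: advance on a high score, regress on a low one."""
    if score >= advance_at:
        return min(current + 1, max_level)
    if score < regress_below:
        return max(current - 1, 1)
    return current


games = ["Breakout 3D", "Card Island", "Tower of Hanoi 3D", "Pick and Place"]
print(cycle_through_games(games, 2)[:5])
print(next_level(2, 85.0), next_level(2, 55.0), next_level(2, 20.0))  # 3 2 1
```

In the pilot study described below, the tutorial session followed the first ordering and the testing session the second.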

For the cognitive testing session, similar to a tutorial session, a subject may play the same game multiple times in succession before progressing to a different game; the level of difficulty in successive instances of playing a game may be based on the performance of previous instances of playing that game; and the level of difficulty may progress or regress depending on performance during the course of continuous play of a game.

During game play for testing, BrightScreener may capture actions or events based on the subject's interaction with the game. For example, one such metric may be the time taken to complete a goal within the game, or the number of times a game objective (such as hitting a virtual ball with a virtual paddle) was missed. In another embodiment, the precision of the movement of the hand controllers may be recorded over time. As seen in FIG. 17, this may correspond to moving a virtual object 900, such as a ball, to a target location 905 (Pick and Place above). Each game may be scored using game-specific formulas that combine the captured metrics with game difficulty parameters (such as the speed of the ball in Breakout 3D). For each game played, the score assigned may be the average of the scores for multiple difficulty levels of that game. A composite testing score may be formulated from a weighted combination of the scores captured for different types of games.
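The two-stage aggregation above (an average over difficulty levels, then a weighted combination across games) might look like the sketch below. Normalizing by the sum of the weights is an assumption; with equal weights it reduces to the plain average used for the Composite Score in the pilot study. The example values are Subject 1's per-game scores from Table 3.

```python
from statistics import mean
from typing import Dict, Optional, Sequence


def game_score(level_scores: Sequence[float]) -> float:
    """Score for one game: the average of the scores at each difficulty level played."""
    return mean(level_scores)


def composite_score(game_scores: Dict[str, float],
                    weights: Optional[Dict[str, float]] = None) -> float:
    """Weighted average of per-game scores; equal weights give the plain mean."""
    if weights is None:
        weights = {name: 1.0 for name in game_scores}
    total_weight = sum(weights[name] for name in game_scores)
    return sum(weights[name] * s for name, s in game_scores.items()) / total_weight


subject_1 = {"Breakout 3D": 78.8, "Card Island": 43.3,
             "Pick and Place": 37.1, "Tower of Hanoi 3D": 74.3}
print(round(composite_score(subject_1), 1))  # 58.4, matching Table 3
```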

The overall testing session score may then be used to place the individual in one of several cognitive “bands”. These may be “normal”, “mild cognitive impairment (MCI),” “moderate cognitive impairment”, and “severe cognitive impairment.”
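Placing a score in a band amounts to comparing it against ordered cut-offs. In the sketch below the cut-off values are hypothetical: they were chosen only so that the example reproduces the bands listed in Table 3, and they are not the calibrated thresholds used in the pilot study, which the description does not disclose.

```python
from typing import Tuple

BANDS = (
    "normal",
    "mild cognitive impairment (MCI)",
    "moderate cognitive impairment",
    "severe cognitive impairment",
)

# Hypothetical lower bounds (composite score) for the normal, MCI and moderate bands.
DEFAULT_CUTOFFS: Tuple[float, float, float] = (60.0, 30.0, 15.0)


def cognitive_band(score: float,
                   cutoffs: Tuple[float, float, float] = DEFAULT_CUTOFFS) -> str:
    """Map an overall testing score to a band; higher scores mean less impairment."""
    for band, lower_bound in zip(BANDS, cutoffs):
        if score >= lower_bound:
            return band
    return BANDS[-1]  # below every cut-off


for composite in (61.3, 58.4, 39.3, 5.9):  # Subjects 5, 1, 11 and 4 in Table 3
    print(composite, cognitive_band(composite))
```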

In one or more embodiments, BrightScreener is laptop-based and portable. As seen in FIG. 18, the system hardware may incorporate a Razer Hydra bimanual game interface (Reference No. 41), allowing the subject to interact with the custom simulations. The Hydra pendants 980 are light, intuitive to use, and track full arm movements in real time. In addition, they measure the degree of index finger flexion-extension, which, combined with the tracking feature, allows the creation of dual-tasking scenarios.

In one or more embodiments, BrightScreener may comprise several games that were ported from BrightArm (see Reference No. 38) and rewritten in Unity 3D (Reference No. 41). Furthermore, all games may have uni-manual and bimanual modes, with game avatars controlled through the Hydra pendants. As described below, four of the games were selected for the BrightScreener cognitive evaluation feature: Breakout 3D, Card Island, Tower of Hanoi 3D and Pick and Place.

Data

In accordance with an aspect of the present invention, BrightScreener was tested as a screening system for individuals with dementia, including a person with Alzheimer's disease (see Reference No. 23). BrightScreener was used with elderly subjects having various degrees of cognitive impairment, both to evaluate technology acceptance by the target population and to determine whether BrightScreener is able to differentiate levels of cognitive impairment based on game performance.

Eleven subjects were recruited by the study Clinical Coordinator from the pool of potential participants at MECA. Of these, five were women and six were men. Subjects 1-5 were tested on the first Saturday and Subjects 6-11 a week later. The group had an average age of 73.6 years (range 61 to 90 years, standard deviation 8.6 years). The mean education level was 14.5 years in school, with a standard deviation of 4.2 years.

Since subjects were new to BrightScreener, it was necessary to have a tutorial session before the actual cognitive evaluation session. During the tutorial session subjects played the four games previously described, and each game was played at progressively harder levels of difficulty. The subjects cycled through all four games at the most basic level of difficulty before progressing to the next level of each game type, and so on. The tutorial session lasted about 35 minutes per subject.

For testing, the subjects completed the four levels of difficulty of a given game before proceeding to the next game, and so on. The evaluation session lasted about 30 minutes per subject.

The sequence of steps used in this study consisted of (1) subject consenting, (2) a tutorial session on the BrightScreener, followed by (3) standardized cognitive testing, then (4) the game-based testing session and (5) an exit questionnaire. Each subject completed the study in the span of a few hours (depending on their cognitive functioning level). The study was approved by the Western Institutional Review Board and took place during two days at the Memory Enhancement Center of America—MECA (Eatontown, N.J.) in February 2014.

Subjects first underwent clinical scoring with the standardized MMSE test. During the same visit they underwent a BrightScreener familiarization session and then an evaluation session on the device. At the end of their visit, each subject filled out a subjective evaluation exit form. Technologists were blinded to the MMSE scores, which were known only to the clinical coordinator who had administered the MMSE. Subsequent group analysis of BrightScreener data using the Pearson correlation coefficient showed a high degree of correlation between the subjects' MMSE scores and their BrightScreener Composite Game Scores (0.90, |P|<0.01).

The scoring equation for each game incorporated both difficulty and performance metrics. For example, a 25% bonus was given for the increased difficulty of playing bimanually rather than playing the uni-manual version of the same game. Play time was typically used to rate game performance. For example, the score formula for Tower of Hanoi 3D was:

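$$\text{Score} = \frac{B \times N_{\min} \times 100}{\log(T) \times \left(N_{\text{actual}} + N_{\text{dropped}}\right)}, \qquad B = \begin{cases} 1.25 & \text{if played bimanually} \\ 1.0 & \text{otherwise} \end{cases} \tag{6}$$

where $N_{\min}$ is the minimum number of moves, $T$ is the completion time in seconds, $N_{\text{actual}}$ is the actual number of moves, and $N_{\text{dropped}}$ is the number of dropped disks.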

In the numerator, level of difficulty is quantified by whether the game is played in bimanual mode (25% bonus) and by the minimum number of moves needed to complete the game. Restacking 2 disks required a minimum of 3 moves, 3 disks required 7 moves, and 4 disks required 15 moves. In the denominator, game performance was quantified by the logarithm of game completion time and by the actual number of moves taken to complete the game combined with the number of disks dropped en route to the poles. Longer play (measured by time and number of moves) corresponds to lower performance and hence yields a lower game score.
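Equation (6) translates directly into code. Two points are assumptions not stated in the description: the logarithm base (base 10 is used here) and the handling of completion times of one second or less, which would make the denominator zero or negative and are therefore rejected. The minimum move count uses the standard Tower of Hanoi result of 2^n - 1 moves for n disks, which gives the 3, 7 and 15 moves quoted above.

```python
import math
from typing import Callable


def min_moves(n_disks: int) -> int:
    """Minimum moves to restack n disks: 2**n - 1 (3, 7 and 15 for 2, 3 and 4 disks)."""
    return 2 ** n_disks - 1


def tower_score(n_disks: int, seconds: float, actual_moves: int,
                dropped_disks: int, bimanual: bool,
                log: Callable[[float], float] = math.log10) -> float:
    """Tower of Hanoi 3D score per equation (6); the log base is an assumption."""
    if seconds <= 1.0:
        raise ValueError("completion time must exceed 1 s for log(time) to be positive")
    bonus = 1.25 if bimanual else 1.0  # 25% bonus for bimanual play
    numerator = bonus * min_moves(n_disks) * 100
    denominator = log(seconds) * (actual_moves + dropped_disks)
    return numerator / denominator


# A perfect 2-disk game (3 moves, no drops) finished in 100 s:
print(tower_score(2, 100.0, 3, 0, bimanual=False))  # 50.0
print(tower_score(2, 100.0, 3, 0, bimanual=True))   # 62.5
# One dropped disk lowers the score:
print(tower_score(2, 100.0, 3, 1, bimanual=False))  # 37.5
```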

Table 1, below, shows the subjects' characteristics by gender, age, and education level.

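TABLE 1

| Subject # | Gender | Age | Years in School |
|---|---|---|---|
| 1 | M | 64 | 16 |
| 2 | M | 73 | 19 |
| 3 | M | 82 | 22 |
| 4 | F | 90 | 14 |
| 5 | M | 73 | 18 |
| 6 | F | 77 | 12 |
| 7 | M | 78 | 14 |
| 8 | F | 70 | 14 |
| 9 | F | 61 | 12 |
| 10 | F | 64 | 13 |
| 11 | M | 78 | 6 |

Average age = 73.6 (8.6); average years in school = 14.5 (4.2). Values in parentheses are standard deviations.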

Subject data were collected during the study using the MMSE, game scores, and an exit interview. The Mini-Mental State Examination (MMSE) was used to evaluate each subject's cognitive function. The MMSE was administered by the Clinical Coordinator in a quiet room, and the BCI researchers were blinded to the scores.

Subsequent to the MMSE testing, subjects were given the BrightScreener evaluation session, and game scores were stored transparently for each subject. Finally, the subjects filled out a subjective evaluation exit form in the Clinical Coordinator's room. The form had 8 questions scored on a Likert scale from 1 (least desirable outcome) to 5 (most desirable one). The questions were: “Were instructions easy to understand?,” “Were the games easy to play?,” “Were the game handles difficult to use?,” “How easy was playing with one hand?,” “How easy was playing with both hands?,” “Were you tired after playing the games?,” “Did you have headaches after playing the games?,” and “Did you like the system overall?”

Table 2, below, lists the MMSE scores and cognitive impairment level of the 11 study subjects.

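TABLE 2

| Subject # | MMSE Score | MMSE Rank | Cognitive Impairment Level |
|---|---|---|---|
| 1 | 29 | 1 | Normal |
| 2 | 24 | 7 | Mild |
| 3 | 25 | 5 | Mild |
| 4 | 9 | 11 | Severe |
| 5 | 27 | 3 | Normal |
| 6 | 29 | 1 | Normal |
| 7 | 26 | 4 | Mild |
| 8 | 24 | 7 | Mild |
| 9 | 23 | 9 | Mild |
| 10 | 25 | 5 | Mild |
| 11 | 22 | 10 | Mild |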

These scores ranged from a low of 9 to a high of 29 (out of a maximum of 30). The average MMSE score was 23.9, with a standard deviation of 5.4. Based on their MMSE scores, participants were ranked by degree of cognitive impairment from 1 (the least) to 11 (the most). Subjects with identical scores (1 and 6; 3 and 10; 2 and 8) were given the same rank (1, 5 and 7, respectively).
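The tie handling above is standard competition ranking: tied subjects share the best rank, and the following rank numbers are skipped. The sketch below recomputes the MMSE ranks, mean and standard deviation from Table 2. Using the sample standard deviation (n - 1 denominator) is an assumption, chosen because it reproduces the reported 5.4; the `competition_ranks` helper is illustrative.

```python
from statistics import mean, stdev
from typing import Dict

# MMSE scores from Table 2, keyed by subject number.
mmse = {1: 29, 2: 24, 3: 25, 4: 9, 5: 27, 6: 29, 7: 26, 8: 24, 9: 23, 10: 25, 11: 22}


def competition_ranks(scores: Dict[int, float]) -> Dict[int, int]:
    """Rank 1 = highest score; ties share the best rank ("1, 1, 3" style)."""
    ordered = sorted(scores.values(), reverse=True)
    # The first position of a score in the sorted list is its competition rank minus one.
    return {subject: ordered.index(score) + 1 for subject, score in scores.items()}


values = list(mmse.values())
print(competition_ranks(mmse))
print(round(mean(values), 1), round(stdev(values), 1))  # 23.9 5.4
```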

The MMSE scores may be used to determine the degree of cognitive impairment (Reference No. 31). Three of the subjects were classified as having normal cognitive function, seven of the subjects were diagnosed with Mild Cognitive Impairment (MCI) and one with Severe Cognitive Impairment (Alzheimer's). For control, at least one participant who was expected to have a normal diagnosis was included on each day of the study. However, the identities of these individuals were not known to the technologists conducting the game training and game-based cognitive screening sessions.

Table 3, below, summarizes the game scores and corresponding ranking during the testing session.

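TABLE 3

| Subject | Breakout Score | Card Island Score | Pick & Place Score | Tower of Hanoi Score | Composite Score | Composite Rank | Cognitive Impairment Level |
|---|---|---|---|---|---|---|---|
| 1 | 78.8 | 43.3 | 37.1 | 74.3 | 58.4 | 3 | MCI |
| 2 | 51.9 | 49.9 | 52.0 | 86.9 | 60.2 | 2 | Normal |
| 3 | 64.7 | 53.5 | 36.9 | 70.5 | 56.4 | 5 | MCI |
| 4 | 18.3 | 5.4 | 0.0 | 0.0 | 5.9 | 11 | Severe |
| 5 | 69.9 | 53.6 | 46.1 | 75.5 | 61.3 | 1 | Normal |
| 6 | 39.7 | 41.0 | 36.1 | 76.8 | 48.4 | 8 | MCI |
| 7 | 56.1 | 56.8 | 38.9 | 77.6 | 57.3 | 4 | MCI |
| 8 | 46.7 | 49.6 | 32.5 | 55.6 | 46.1 | 9 | MCI |
| 9 | 58.6 | 57.6 | 42.4 | 57.9 | 54.1 | 7 | MCI |
| 10 | 61.2 | 60.8 | 23.5 | 71.6 | 54.3 | 6 | MCI |
| 11 | 83.4 | 26.8 | 26.6 | 20.4 | 39.3 | 10 | MCI |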

For each of the 4 games played, the score assigned was the average of the scores for the four difficulty levels of that game. Participant 4 consistently scored the lowest across games; however, the participant with the highest score varied between games. To obtain an overall ranking, each participant's scores were averaged into a Composite Score. Subject 4 had the lowest composite score (5.9) and Subject 5 the highest (61.3).

Subsequently, the 11 subjects were ranked from 1 to 11 using the composite game scores. The scores were also categorized into four basic bands by degree of cognitive impairment: normal, mild, moderate, and severe. The thresholds quantizing composite scores into cognitive impairment levels were calibrated using the Clinical Coordinator's indication, given prior to the study, that two undisclosed participants were expected to have a normal cognitive state. As seen in Table 3, two participants were categorized as normal, eight as having MCI, none as having moderate cognitive impairment, and one as having severe cognitive impairment. This is consistent with the distribution of the MMSE-based levels, although individual classifications do vary (participants 1, 2 and 6).

Table 4, below, shows the Pearson correlation between individual game scores and the MMSE test scores; rank-based Spearman correlations (Reference No. 33) are discussed further below.

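TABLE 4

| | Breakout 3D | Card Island | Pick & Place | Tower of Hanoi | Composite Score |
|---|---|---|---|---|---|
| Correlation coefficient | 0.60 | 0.75 | 0.78 | 0.85 | 0.90 |
| P | 0.045 | 0.006 | 0.0037 | 0.00048 | 0.000008 |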

The correlation values ranged from a low of 0.60 for Breakout 3D to a high of 0.85 for Tower of Hanoi 3D, all with |P|<0.05. The correlation for Breakout 3D was lower than for the other games because Subject 11, whose MMSE score of 22 ranked 10th, achieved the highest Breakout 3D score (83.4). When Subject 11 was removed from the correlation computation, the Breakout 3D correlation increased to 0.75, in line with the Card Island and Pick and Place correlation values.

The Composite Score correlated with MMSE outcomes better than any individual game score, with a correlation value of 0.90 and |P|<0.01. This reflects the nature of the individual games, each targeting a different group of cognitive domains. The rationale for using the Composite Score is that a broader spectrum of domains may be captured in a single value, similar to the methodology of the MMSE instrument.
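The Pearson coefficients in Table 4 can be approximately recomputed from the rounded scores in Tables 2 and 3. The sketch below implements the textbook formula directly, with no statistics package assumed. It reproduces the composite-score value, the Breakout 3D value, and the effect of removing Subject 11 to within rounding; P values are not computed here.

```python
import math
from typing import Sequence

# Subjects 1-11, from Table 2 (MMSE) and Table 3 (game scores).
mmse = [29, 24, 25, 9, 27, 29, 26, 24, 23, 25, 22]
composite = [58.4, 60.2, 56.4, 5.9, 61.3, 48.4, 57.3, 46.1, 54.1, 54.3, 39.3]
breakout = [78.8, 51.9, 64.7, 18.3, 69.9, 39.7, 56.1, 46.7, 58.6, 61.2, 83.4]


def pearson(x: Sequence[float], y: Sequence[float]) -> float:
    """Pearson's r: covariance divided by the product of standard deviations."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)


r_composite = pearson(mmse, composite)                # about 0.90
r_breakout = pearson(mmse, breakout)                  # about 0.60
r_breakout_wo_11 = pearson(mmse[:10], breakout[:10])  # about 0.75, Subject 11 removed
print(round(r_composite, 2), round(r_breakout, 2), round(r_breakout_wo_11, 2))
```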

The Spearman correlation was subsequently computed between the ranking of subjects by MMSE score and the ranking of the same subjects by game score. The ranking by composite score had a Spearman correlation of 0.6 (|P|<0.05). The Spearman value was higher when ranking by Tower of Hanoi 3D alone, at 0.69 (|P|<0.05). A still better Spearman correlation, 0.71 (|P|<0.05), was achieved by limiting the contribution of Pick and Place.
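Using the rank columns of Tables 2 and 3, the rank correlation can be sketched with the classic shortcut formula rho = 1 - 6 * sum(d^2) / (n(n^2 - 1)). That formula is exact only when there are no ties, and the MMSE ranks contain ties, so this is an approximation; the description does not say which variant or tie correction was used. It nevertheless yields about 0.59, consistent with the reported 0.6.

```python
from typing import Sequence

# Ranks for Subjects 1-11: MMSE rank (Table 2) and composite-score rank (Table 3).
mmse_rank = [1, 7, 5, 11, 3, 1, 4, 7, 9, 5, 10]
composite_rank = [3, 2, 5, 11, 1, 8, 4, 9, 7, 6, 10]


def spearman_shortcut(rx: Sequence[int], ry: Sequence[int]) -> float:
    """Spearman's rho via 1 - 6*sum(d^2) / (n*(n^2 - 1)); exact only without ties."""
    n = len(rx)
    d_squared = sum((a - b) ** 2 for a, b in zip(rx, ry))
    return 1 - 6 * d_squared / (n * (n ** 2 - 1))


rho = spearman_shortcut(mmse_rank, composite_rank)
print(round(rho, 3))  # 0.586
```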

Spearman rank correlation is sensitive to noise in the ordering, since small differences in score can swap the ranks of subjects. This is seen in the fact that the rank correlation of 0.6 is much lower than the 0.9 found by correlating composite game scores with MMSE scores. If BrightScreener were used as a cognitive screening tool, it may be sufficient to categorize subjects into 4 general categories (normal, mild, moderate and severe cognitive impairment) rather than to obtain an exact ordering within particular categories. To this end, the cognitive impairment levels derived from game scores were correlated with the MMSE-based diagnoses. Here, a much higher correlation value of 0.8 was measured, with |P|<0.01.
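The band-level comparison can be sketched by coding each level as an ordinal number and correlating the codes. The 0 to 3 coding (normal, MCI, moderate, severe) and the use of Pearson's formula on those codes are assumptions; with the levels in Tables 2 and 3 they give about 0.78, consistent with the reported 0.8.

```python
import math
from typing import Sequence

# Hypothetical ordinal coding of the impairment levels.
LEVEL_CODE = {"Normal": 0, "Mild": 1, "MCI": 1, "Moderate": 2, "Severe": 3}

# Subjects 1-11: MMSE-based level (Table 2) and game-based level (Table 3).
mmse_levels = ["Normal", "Mild", "Mild", "Severe", "Normal", "Normal",
               "Mild", "Mild", "Mild", "Mild", "Mild"]
game_levels = ["MCI", "Normal", "MCI", "Severe", "Normal", "MCI",
               "MCI", "MCI", "MCI", "MCI", "MCI"]


def pearson(x: Sequence[float], y: Sequence[float]) -> float:
    """Pearson's r: covariance divided by the product of standard deviations."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)


r = pearson([LEVEL_CODE[lvl] for lvl in mmse_levels],
            [LEVEL_CODE[lvl] for lvl in game_levels])
print(round(r, 2))  # 0.78
```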

The subjects were asked to fill out a subjective questionnaire after completing the game-based testing. Although anecdotal evidence suggested that the subjects rarely played video games, their subjective evaluations were consistently positive. For example, subjects gave an average rating of 4.8 out of 5 to the question “Were the instructions easy to understand?”

The challenge of playing bimanually was measured through the question “How easy was playing with both hands?” This question received the lowest rating of all, 4.1 out of 5. Finally, the subjects were asked “Did you like the system overall?” Each of the 11 participants gave the system a perfect rating of 5. All the other questions received ratings of 4.3 to 4.8.

The aims of this pilot study were: 1) to determine whether the BrightScreener system was able to differentiate levels of cognitive impairment based on game performance, and 2) to evaluate technology acceptance by the target population. Virtual reality has been used successfully in emotive therapy for a number of years, beginning in the 1990s (Reference No. 40). Within the cognitive domain, many studies have used virtual supermarkets to train executive function (Reference No. 34), or as a more ecological method for MCI diagnosis (Werner et al., 2009). In the latter study, researchers tested a group of 30 MCI patients and 30 healthy elderly adults and observed significant differences in the performance of the two groups in the virtual supermarket simulation. A key difference from prior systems is that BrightScreener uses serious games as a scoring method for cognitive impairment. The results were shown to be consistent with the determinations of a popular paper-and-pencil screening method (MMSE).

BrightScreener interactions were mediated by a bimanual game controller, and the technology was well accepted by the subjects. Another usability study involving an off-the-shelf game interface used the Wii controller with dementia patients (Reference No. 27). Similar to the present study, researchers found that subjects were able to use the Wii and liked the technology very much.

Despite the small sample size, results suggest that serious-gaming strategies can be used as a digital technique to stratify levels of Cognitive Impairment. The above results are supportive of the idea that a computerized system using bimanual game interfaces may be an alternative to conventional standardized scoring for Mild Cognitive Impairment and Dementia.

The following is a list of references referred to herein, each of which is incorporated by reference:

Reference No. 1—Roger V L, Go A S, Lloyd-Jones D M, Benjamin E J, Berry J D, Borden W B, et al. Heart disease and stroke statistics—2012 update: a report from the American Heart Association. Circulation. 2012; 125(1):e2-220.

Reference No. 2—C. Y. Wu, L. L. Chuang, K. C. Lin, H. C. Chen and P. K. Tsay, Randomized trial of distributed constraint-induced therapy versus bilateral arm training for the rehabilitation of upper-limb motor control and function after stroke. Neurorehab Neural Re, Vol. 25, 2, pp. 130-139, 2011.

Reference No. 3—J. H. Cauraugh, N. Lodha, S. K. Naik and J. J. Summers, Bilateral movement training and stroke motor recovery progress: a structured review and meta-analysis. Hum Movement Sci, Vol 29, 5, pp. 853-870, 2010.

Reference No. 4—C. Ausenda and M. Carnovali, Transfer of motor skill learning from the healthy hand to the paretic hand in stroke patients: a randomized controlled trial. Eur J Phys Rehabil Med, Vol. 47, 3, pp. 417-425, 2011.

Reference No. 5—G. Burdea, Virtual rehabilitation-benefits and challenges. J Meth Inform Med, pp. 519-523, 2003.

Reference No. 6—C. Brooks, B. Gabella, R. Hoffman, D. Sosin, and G. Whiteneck, Traumatic brain injury: designing and implementing a population-based follow-up system. Arch Phys Med Rehab, 78, pp. S26-S30, 1997.

Reference No. 7—M. Wang, N. J. Gamo, Y. Yang, L. E. Jin, X. J. Wang, et al., Neuronal basis of age-related working memory decline, Nature, Vol 476, pp. 210-213, July, 2011.

Reference No. 8—K. Lin, Y. Chen, C. Chen, C. Y. Wu and Y. F. Chang, The effects of bilateral arm training on motor control and functional performance in chronic stroke: a randomized controlled study, Neurorehab Neural Re, Vol 24, pp. 42-51, 2010.

Reference No. 9—P. W. Duncan, M. Probst, and S. G. Nelson, Reliability of the Fugl-Meyer assessment of sensorimotor recovery following cerebrovascular accident. Phys Ther, Vol 63, pp. 1606-1610, 1983.

Reference No. 10—G. Optale, C. Urgesi, V. Busato, S. Marin, L. Piron et al., Controlling memory impairment in elderly adults using virtual reality memory training: a randomized controlled pilot study. Neurorehab Neural Re, Vol 24, 4, pp. 348-357, 2010.

Reference No. 11—Unity Technologies, Reference Manual. San Francisco, Calif., 2010.

Reference No. 12—Sixense Entertainment, Razer Hydra Master Guide, 11 pp., 2011.

Reference No. 13—CNet Leap Motion controller review: Virtual reality for your hands. Jul. 22, 2013. http://reviews.cnet.com/input-devices/leap-motion-controller/4505-3133_7-35823002.html.

Reference No. 14—G. Burdea and M. Golomb, U.S. patent application Ser. No. 12/422,254, “Method for treating and exercising patients having limited range of body motion,” Apr. 11, 2009.

Reference No. 15—G. Burdea, D. Cioi, J. Martin, D. Fensterheim and M. Holenski, The Rutgers Arm II rehabilitation system—a feasibility study, IEEE Trans Neural Sys Rehab Eng, Vol 18, 5, pp. 505-514, 2010.

Reference No. 16—G. Burdea, C. Defais, K. Wong, J. Bartos and J. Hundal, “Feasibility study of a new game-based bimanual integrative therapy,” Proceedings 10th Int. Conference on Virtual Rehabilitation, Philadelphia, Pa., August 2013, pp. 101-108.

Reference No. 17—G. Burdea, K. Polistico, A. Krishnamoorthy, J. Hundal, F. Damiani, S. Pollack, “A Feasibility study of BrightBrainer™ cognitive therapy for elderly nursing home residents with dementia,” Disability and Rehabilitation—Assistive Technology.

Reference No. 18—Alzheimer's Association (2013) Alzheimer's Disease Facts and Figures. http://www.alz.org/downloads/factsfigures2013.pdf

Reference No. 19—Burdea G, (2013) Bimanual Integrative Virtual Rehabilitation Systems and Methods. US Patent Application US 2014/0121018 A1, Sep. 20, 2013.

Reference No. 20—Burdea G, C. Defais, K. Wong, et al. (2013). “Feasibility study of a new game-based bimanual integrative therapy,” Proceedings 10th Int. Conference on Virtual Rehabilitation, Philadelphia, Pa., August, pp. 101-108.

Reference No. 21—Burdea G, K Polistico, A Krishnamoorthy, G House, D Rethage, J Hundal, F Damiani, and S Pollack (2014) “A feasibility study of the BrightBrainer™ cognitive therapy system for elderly nursing home residents with dementia,” Disability and Rehabilitation—Assistive Technology, Vol. 9, 12 pp. Mar. 29 2014 early online.

Reference No. 22—Hardy J, D Drescher, K Sarkar et al. (2011). Enhancing visual attention and working memory with a Web-based cognitive training program. Mensa Research Journal. 42(2):13-20.

Reference No. 23—House G, G Burdea, K. Polistico, J. Ross, and M. Leibick. (2014) A serious-gaming alternative to pen-and-paper cognitive scoring—a pilot study. Int. Conference on Disability and Virtual Reality Technology, Sweden, 2014.

Reference No. 24—Rosenzweig A (2010) The Mini-Mental State Exam and Its Use as an Alzheimer's Screening Test. Online at http://alzheimers.about.com/od/testsandprocedures/a/The-Mini-Mental-State-Exam-And-Its-Use-As-An-Alzheimers-Screening-Test.htm

Reference No. 25—Sixense Entertainment (2013) STEM system. Wireless motion tracking. http://sixense.com/hardware/wireless

Reference No. 26—Alzheimer's Association, (2013), Alzheimer's Disease Facts and Figures. http://www.alz.org/downloads/facts_figures_2013.pdf

Reference No. 27—Boulay, M, Benveniste, S, Boespflug, S et al., (2011), A pilot usability study of MINWii, a music therapy game for demented patients, Technol Health Care, 19, 4, pp. 233-246.

Reference No. 28—Burdea, G, Rabin, B, Rethage, D, et. al., (2013a), BrightArm™ Therapy for Patients with Advanced Dementia: A Feasibility Study, Proc. 10th Int. Conf. Virtual Rehab, Philadelphia, pp. 208-209.

Reference No. 29—Burdea, G, Defais, C, Wong, K, et al., (2013b), Feasibility study of a new game-based bimanual integrative therapy, Proc. 10th Int. Conf. Virtual Rehab, Philadelphia, pp. 101-108.

Reference No. 30—Burdea, G, Polistico, K, Krishnamoorthy, A, et al., (2014), A feasibility study of the BrightBrainer™ cognitive therapy system for elderly nursing home residents with dementia. Disability and Rehabilitation—Assistive Technology, 9, 12 pp. Mar. 29 2014 early online.

Reference No. 31—Folstein, M F, Folstein, S E, and Fanjiang, G., (2001), MMSE Mini-Mental State Examination Clinical Guide. Lutz, FL: Psychological Assessment Resources, Inc.

Reference No. 32—Hebert, L E, Beckett, L A, Scherr, P A, et al. (2001), Annual incidence of Alzheimer disease in the United States projected to the years 2000 through 2050. Alzheimer Dis Assoc Disord. 15, 4, pp. 169-173.

Reference No. 33—Laerd Statistics, (2013), Spearman's Rank-Order Correlation using SPSS. Online at https://statistics.laerd.com/spss-tutorials/spearmans-rank-order-correlation-using-spss-statistics.php

Reference No. 34—Lee, J H, Ku, J, Cho, W, et al., (2003), A virtual reality system for the assessment and rehabilitation of the activities of daily living, Cyberpsychol Behav, 6, 4, pp. 383-388.

Reference No. 35—McKhann, G., Knopman, D S, Chertkow, H., et al., (2011), The diagnosis of dementia due to Alzheimer's disease: Recommendations from the National Institute on Aging—Alzheimer's Association workgroups on diagnostic guidelines for Alzheimer's disease. Alzheimer's & Dementia: Journal of Alzheimer's Association. 7, 3, pp. 263-269.

Reference No. 36—O'Bryant S, Lacritz L, Hall J et al. (2010). Validation of the new interpretive guidelines for the clinical dementia rating scale sum of boxes score in the national Alzheimer's coordinating center database. Arch Neurol, 67, 6, pp. 746-749.

Reference No. 37—Perneczky R, Wagenpfeil S, Komossa K, et al. (2006). Mapping scores onto stages: mini-mental state examination and clinical dementia rating. Am J Geriatr Psychiatry, 2, pp. 139-144.

Reference No. 38—Rabin, B, Burdea, G, Roll, D, et al., (2012), Integrative rehabilitation of elderly stroke survivors: The design and evaluation of the BrightArm. Disability and Rehabilitation—Assistive Technology. 7, 4, pp. 323-335.

Reference No. 39—Rosenzweig, A, (2010), The Mini-Mental State Exam and Its Use as an Alzheimer's Screening Test. Online at http://alzheimers.about.com/od/testsandprocedures/a/The-Mini-Mental-State-Exam-And-Its-Use-As-An-Alzheimers-Screening-Test.htm

Reference No. 40—Rothbaum, B O, Hodges, L, Alarcon, R, et al., (1999), Virtual reality exposure therapy for PTSD Vietnam veterans: A case study, J Traumatic Stress, 12, 2, pp. 263-271.

Reference No. 41—Sixense Entertainment, (2011), Razer Hydra Master Guide, 11 pp. Unity Technologies, (2012), User Manual. San Francisco, Calif. http://docs.unity3d.com/Documentation/Manual/index.html

While the invention has been described with respect to specific examples including presently preferred modes of carrying out the invention, those skilled in the art will appreciate that there are numerous variations and permutations of the above described systems and techniques. It is to be understood that other embodiments may be utilized and structural and functional modifications may be made without departing from the scope of the present invention. Thus, the spirit and scope of the invention should be construed broadly as set forth in the appended claims and in view of the specification.

Claims

- A system of screening a patient for cognitive impairment comprising: a computer; a videogame executing on the computer; a display portraying action from the videogame, the action being viewable by the patient; a game controller connected to the computer having one or more hand-held components with a button or trigger, wherein the hand-held components can be moved independently from one another; the game controller adapted to send to the computer one or more signals representative of a position of the hand-held component and a position of the button or trigger on the hand held component; the game controller adapted to send the one or more signals as the patient changes the position of the hand-held component and the position of the button or trigger on the hand held component in response to action from the videogame; the computer adapted to quantify a measure of cognitive impairment based on the one or more signals received by the controller and by compounding performance in one or more games, wherein the computer accounts for the uni-manual or bimanual interaction modality with the one or more video games and adjusts a testing score of cognitive impairment accordingly.

- The system of claim 1 , wherein the computer measures the amount of time it takes a patient to complete a task in the videogame and uses the time to generate a testing score of cognitive impairment.

- The system of claim 1 , wherein the computer measures the precision of movement of the controller during performance of a task on the videogame and uses that quantified precision of movement to generate a testing score of cognitive function.

- The system of claim 1 , wherein the computer measures the precision of movement of the controller during performance of a task in the video game and measures the time it takes a patient to complete the task in the video game and uses the quantified precision and quantified time to generate a testing score of cognitive impairment.

- The system of claim 1 , wherein the computer adjusts the measure of cognitive impairment based on the difficulty of the task performed in the video game.

- The system of claim 1, wherein the computer quantifies cognitive impairment based on the patient's performance in two or more different types of games by computing an aggregate or compound score for the evaluation session.

- The system of claim 1, wherein the computer quantifies cognitive impairment of the patient into one of the following cognitive bands: normal; mild cognitive impairment; moderate cognitive impairment; severe cognitive impairment.

- The system of claim 1, wherein the game controller comprises two hand-held components with a button, wherein the hand-held components, one held in each hand of the patient, can be moved independently from one another.

- A method of screening a patient for cognitive impairment, comprising the steps of: executing a video game on a computer and portraying action from the videogame on a display, the action on the display being viewable by the patient; the patient holding a game controller connected to the computer, the game controller comprising one or more hand-held components having a button or trigger, wherein the one or more hand-held components can be moved independently from one another; the patient performing an action in the video game by moving the relative position of the one or more hand-held components and manipulating the button or trigger on the one or more hand-held components; the game controller sending, and the computer receiving, one or more signals representative of the position of the one or more hand-held components and the position of the button or trigger on the one or more hand-held components as the patient changes the position of the one or more hand-held components and the position of the button or trigger on the hand-held component in response to action from the videogame; the computer quantifying a measure of cognitive impairment based on the one or more signals received from the controller and by compounding performance in one or more games, the computer accounting for the unimanual or bimanual interaction modality with the one or more video games and adjusting a testing score of cognitive impairment accordingly.

- The method of claim 9, further comprising the computer measuring the amount of time it takes a patient to complete a task in the videogame and using that time to generate the testing score of cognitive impairment.

- The method of claim 9, further comprising the computer measuring the precision of movement of the controller during performance of a task in the videogame and using that quantified precision of movement to generate the testing score of cognitive impairment.

- The method of claim 9, further comprising the computer measuring the precision of movement of the controller during performance of a task in the video game and measuring the time it takes a patient to complete the task in the video game and using the quantified precision and quantified time to generate the testing score of cognitive impairment.

- The method of claim 9, further comprising the computer adjusting the measure of cognitive impairment based on the difficulty of the task performed in the video game, and averaging performance scoring over a multitude of games played at different difficulty levels in a given evaluation session.

- The method of claim 9, further comprising the computer quantifying cognitive impairment based on the patient's performance in two or more different types of games.

- The method of claim 9, further comprising the computer quantifying cognitive impairment based on the patient's performance, said performance being measured after the patient has undergone a tutorial session such that learning artifacts are minimized.

- The method of claim 9, further comprising the computer quantifying cognitive impairment of the patient into one of the following cognitive bands: normal; mild cognitive impairment; moderate cognitive impairment; severe cognitive impairment.

- The method of claim 9, wherein the controller comprises two hand-held components with a button, wherein the hand-held components, one held in each hand of the patient, can be moved independently from one another.

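
Claims 1 and 9 require the score to account for whether each game was played with one hand or with both, but they do not say how that adjustment is made. A minimal sketch, assuming each game yields a raw score on a 0-100 scale and that bimanual games earn a hypothetical multiplicative credit (`MODALITY_FACTOR`, `GameResult` and `compound_score` are illustrative names, not taken from the patent), could compound per-game results as follows:

```python
from dataclasses import dataclass
from statistics import mean

# Hypothetical weights: the claims say modality is accounted for,
# but not how. These values are illustrative only.
MODALITY_FACTOR = {"unimanual": 1.0, "bimanual": 1.25}


@dataclass
class GameResult:
    raw_score: float  # per-game score on an assumed 0-100 scale
    modality: str     # "unimanual" or "bimanual"


def compound_score(results: list[GameResult]) -> float:
    """Average the modality-adjusted game scores, each capped at 100."""
    if not results:
        raise ValueError("at least one game result is required")
    adjusted = [
        min(100.0, r.raw_score * MODALITY_FACTOR[r.modality]) for r in results
    ]
    return mean(adjusted)
```

Capping each adjusted score at 100 keeps every game on the same scale before averaging; in practice the factor would need calibrating against clinical reference data rather than being chosen by hand.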
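
Claims 2 and 10 turn task completion time into a testing score. One simple mapping, offered here only as an assumption, is linear: a completion at or below a hypothetical "best" time scores 100, one at or beyond a hypothetical "worst" time scores 0, and times in between are interpolated.

```python
def time_score(completion_s: float, t_best: float, t_worst: float) -> float:
    """Linearly map a completion time (seconds) to a 0-100 score.

    t_best and t_worst are hypothetical per-task calibration bounds,
    not values disclosed in the patent.
    """
    if t_worst <= t_best:
        raise ValueError("t_worst must exceed t_best")
    frac = (t_worst - completion_s) / (t_worst - t_best)
    # Clamp so very fast or very slow completions stay within 0-100.
    return 100.0 * max(0.0, min(1.0, frac))
```

For example, a task finished in 30 s with bounds of 20 s and 60 s scores 75.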
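
Claims 3 and 11 leave "precision of movement" undefined. One common way to quantify it, used here purely as an assumption, is the root-mean-square distance of the tracked pendant positions from the straight-line path between a start point and the target. The helper names below are hypothetical:

```python
import math

Point = tuple[float, float, float]


def distance_to_segment(p: Point, a: Point, b: Point) -> float:
    """Euclidean distance from point p to the line segment a-b."""
    ab = [b[i] - a[i] for i in range(3)]
    ap = [p[i] - a[i] for i in range(3)]
    length_sq = sum(c * c for c in ab)
    # Parameter of the closest point along a-b, clamped onto the segment.
    t = 0.0 if length_sq == 0 else sum(ap[i] * ab[i] for i in range(3)) / length_sq
    t = max(0.0, min(1.0, t))
    closest = tuple(a[i] + t * ab[i] for i in range(3))
    return math.dist(p, closest)


def path_rms_deviation(samples: list[Point], start: Point, target: Point) -> float:
    """RMS deviation of tracked hand positions from the straight
    start-to-target path; smaller values mean more precise movement."""
    if not samples:
        raise ValueError("no position samples")
    squared = [distance_to_segment(p, start, target) ** 2 for p in samples]
    return math.sqrt(sum(squared) / len(samples))
```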
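
Claims 4 and 12 combine precision and completion time into one testing score. A sketch, assuming a precision deviation is first mapped to 0-100 against a hypothetical `rms_limit` and then blended with a 0-100 time score using a hypothetical weight `w_time`:

```python
def precision_score(rms_dev: float, rms_limit: float) -> float:
    """Map an RMS path deviation to 0-100; rms_limit (hypothetical) is the
    deviation at or beyond which the precision score drops to zero."""
    if rms_limit <= 0:
        raise ValueError("rms_limit must be positive")
    return 100.0 * max(0.0, 1.0 - rms_dev / rms_limit)


def task_score(time_pts: float, precision_pts: float, w_time: float = 0.5) -> float:
    """Weighted blend of a 0-100 time score and a 0-100 precision score."""
    if not 0.0 <= w_time <= 1.0:
        raise ValueError("w_time must lie in [0, 1]")
    return w_time * time_pts + (1.0 - w_time) * precision_pts
```

A weighted sum is only one option; the claims would equally cover, for instance, a product of the two normalized measures.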
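
Claims 5 and 13 adjust the measure for task difficulty. A minimal sketch, assuming discrete difficulty levels and hypothetical per-level weights so that a given raw score earns more credit on a harder level:

```python
# Hypothetical weights: harder levels earn more credit per point scored.
DIFFICULTY_WEIGHT = {1: 0.75, 2: 1.0, 3: 1.25}


def difficulty_adjusted(score: float, level: int) -> float:
    """Scale a 0-100 task score by its difficulty weight, capped at 100."""
    if level not in DIFFICULTY_WEIGHT:
        raise ValueError(f"unknown difficulty level: {level}")
    return min(100.0, score * DIFFICULTY_WEIGHT[level])
```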
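
Claims 6 and 14 base the result on two or more different types of games, and the abstract refers to a Composite Game Score. The patent does not give the aggregation rule; one plausible choice, assumed here, is to average the mean score of each game type so that a type played many times does not dominate the session result:

```python
from collections import defaultdict
from collections.abc import Iterable
from statistics import mean


def session_composite(scores: Iterable[tuple[str, float]]) -> float:
    """Average the per-game-type means so each game type counts equally,
    however many times it was played in the session."""
    by_type: dict[str, list[float]] = defaultdict(list)
    for game_type, score in scores:
        by_type[game_type].append(score)
    if len(by_type) < 2:
        raise ValueError("need results from two or more game types")
    return mean(mean(values) for values in by_type.values())
```

Here the game-type labels (for instance `"memory"` or `"executive"`) are hypothetical.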
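
Claims 7 and 16 sort the patient into one of four cognitive bands. The patent names the bands but gives no cut-offs, so the thresholds below are placeholders on an assumed 0-100 composite scale. They would have to be fitted, for example against MMSE scores, before any clinical use.

```python
# Hypothetical cut-offs, checked from the highest band down.
BAND_CUTOFFS = [
    (80.0, "normal"),
    (60.0, "mild cognitive impairment"),
    (40.0, "moderate cognitive impairment"),
]


def cognitive_band(composite: float) -> str:
    """Return the first band whose cut-off the composite score reaches."""
    for cutoff, band in BAND_CUTOFFS:
        if composite >= cutoff:
            return band
    return "severe cognitive impairment"
```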
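
Claims 8 and 17 have each hand hold its own independently tracked pendant. A minimal sketch of one tracking frame follows. It assumes positions in arbitrary units and an analog trigger normalized to [0, 1], in line with the description of the trigger as sensing index-finger flexion. `GRASP_THRESHOLD` and the class names are hypothetical.

```python
from dataclasses import dataclass

# Hypothetical trigger level above which finger flexion counts as a grasp.
GRASP_THRESHOLD = 0.5


@dataclass(frozen=True)
class PendantSample:
    """One hand-held component at one instant."""
    position: tuple[float, float, float]  # tracked 3D position
    trigger: float  # analog trigger reading, assumed normalized to [0, 1]


@dataclass(frozen=True)
class BimanualSample:
    """Simultaneous readings from the two independently moved pendants."""
    left: PendantSample
    right: PendantSample

    def grasping(self) -> tuple[bool, bool]:
        """Return (left_grasping, right_grasping) using GRASP_THRESHOLD."""
        return (
            self.left.trigger >= GRASP_THRESHOLD,
            self.right.trigger >= GRASP_THRESHOLD,
        )
```

Keeping both hands in one frozen record makes it easy to check bimanual conditions, such as both hands grasping at once, on a single timestamp.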
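
Claim 13 also averages performance over games played at different difficulty levels in one session. One possible approach, swapped in here as an assumption rather than taken from the patent, divides each raw score by a hypothetical reference score for its level. This puts games at different levels on a common scale before averaging.

```python
from collections.abc import Iterable
from statistics import mean

# Hypothetical reference scores: the score an unimpaired player might be
# expected to reach at each difficulty level.
REFERENCE_SCORE = {1: 90.0, 2: 75.0, 3: 60.0}


def session_average(games: Iterable[tuple[int, float]]) -> float:
    """Normalize each (difficulty_level, raw_score) pair by its level's
    reference score, then average the ratios and scale to 100."""
    ratios = [raw / REFERENCE_SCORE[level] for level, raw in games]
    if not ratios:
        raise ValueError("no games in session")
    return 100.0 * mean(ratios)
```

Because the ratio is not capped, a player who beats the reference score can exceed 100. Whether to cap it is a design choice left open here.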
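
Claim 15 measures performance only after a tutorial session, to minimize learning artifacts. A sketch, assuming each trial is tagged with a hypothetical phase label, simply excludes tutorial trials from the score:

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class Trial:
    phase: str    # hypothetical labels: "tutorial" or "evaluation"
    score: float  # per-trial score on an assumed 0-100 scale


def evaluation_score(trials: list[Trial]) -> float:
    """Score only the trials played after the tutorial, so practice
    effects from first exposure to the games are left out."""
    scored = [t.score for t in trials if t.phase == "evaluation"]
    if not scored:
        raise ValueError("no evaluation trials recorded")
    return mean(scored)
```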

Disclaimer: Data collected from the USPTO may be malformed, incomplete, and/or otherwise inaccurate.