U.S. Pat. No. 10,668,376

ELECTRONIC GAME DEVICE AND ELECTRONIC GAME PROGRAM

Assignee: DeNA Co., Ltd.

Issue Date: June 7, 2018


Abstract

An operation detector detects the start of either a slide operation, which is associated with a command to move a three-dimensional character operated by a player in a virtual space, or a flick operation, which is associated with another command. The two operations share a common operation portion, in which the operation content is identical for a certain length of time after the start of the operation. After the start of an operation is detected, a modeling processor moves the three-dimensional character in the virtual space during the common operation period. If the operation detector then detects a slide operation, the modeling processor continues moving the three-dimensional character without interrupting the movement begun in the common operation period.

Description

DETAILED DESCRIPTION OF THE INVENTION

Detailed Description

FIG. 1 is a simplified diagram of the configuration of an electronic game device 10 pertaining to this embodiment. The electronic game device 10 is a computer that provides an electronic game to a player by executing an electronic game program (e.g., instructions stored on a non-transitory computer-readable medium and executed by a processor). The electronic game device 10 is a terminal having a touch panel, such as a tablet terminal or a smartphone.

In this embodiment, the electronic game executed by the electronic game device 10 is a game in which the player operates a character (an object). In particular, it is a game that must respond to the player's operations in real time, for example a fighting game in which a character moves, attacks, defends, and so on while fighting other characters according to the player's operations.

As described in detail below, in the electronic game of this embodiment, three-dimensional characters are defined as three-dimensional objects in a three-dimensional virtual space that serves as the game space and has a world coordinate system, and the player can manipulate these characters. The position, line-of-sight direction, and upward direction of a virtual camera are defined in this virtual space, and they serve as the reference for projecting the three-dimensional space, including the characters, onto a two-dimensional screen. The resulting two-dimensional image, which includes the projected images of the characters, is displayed on the electronic game device 10.

The display component 12 is constituted by a liquid crystal panel, for example. Various game screens are displayed on the display component 12. Of these game screens, the play screen displayed during play includes the characters that the player can manipulate.

An input component 16 (operation acceptance component) includes a touch panel, for example. The input component 16 can accept various operations from the player (slide, flick, tap, long tap, etc.). More specifically, the player performs an operation by bringing a finger (operation means) into contact with the touch panel. A stylus or the like may be used in place of the player's finger as the operation means. The various kinds of operation content are described in detail below.

A storage component 14 is constituted by a ROM (read-only memory) or a RAM (random-access memory), for example. The storage component 14 stores the electronic game program (e.g., instructions stored on a non-transitory computer-readable medium and executed by a processor). In this program, the commands used in the electronic game are defined for the various operations that the player inputs to the input component 16. The storage component 14 also stores various kinds of information related to the electronic game.

A controller 18 includes a CPU (central processing unit), a microcontroller, a dedicated IC for image processing, or the like. The controller 18 executes the processing of the electronic game in accordance with the electronic game program (e.g., instructions stored on a non-transitory computer-readable medium and executed by a processor) and also controls the various parts of the electronic game device 10. For example, the controller 18 processes and manages game parameters (hit points, etc.) of the characters operated by the player, determines whether attacks on other characters succeed, determines whether attacks from other characters succeed, and so forth. As shown in FIG. 1, the controller 18 also functions as a modeling processor 20, a virtual camera setting component 22, a projection converter 24, a display processor 26, and an operation detector 28.

The modeling processor 20 defines the three-dimensional characters used in the electronic game in a virtual space having a world coordinate system. For example, a three-dimensional character is constituted as an aggregate of numerous polygons, each defined by three points (coordinates) in the world coordinate system. The modeling processor 20 can also move three-dimensional characters in the virtual space according to the player's operations. A three-dimensional character is moved in the virtual space by applying a coordinate transformation to the polygons that constitute it. The movement processing performed by the modeling processor 20 is discussed below.
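As a rough illustration of moving a character by a coordinate transformation of its polygons, the Python sketch below translates every vertex by the same offset. The `Polygon` class and the `translate_polygon` and `move_character` functions are hypothetical names chosen for this example; a real engine would more likely apply a 4×4 transformation matrix that also covers rotation.

```python
from dataclasses import dataclass

Vec3 = tuple[float, float, float]


@dataclass(frozen=True)
class Polygon:
    # Three vertices (x, y, z) in the world coordinate system.
    vertices: tuple[Vec3, Vec3, Vec3]


def translate_polygon(poly: Polygon, offset: Vec3) -> Polygon:
    """Return a new polygon whose vertices are shifted by offset."""
    dx, dy, dz = offset
    moved = tuple((x + dx, y + dy, z + dz) for (x, y, z) in poly.vertices)
    return Polygon(moved)


def move_character(polygons: list[Polygon], offset: Vec3) -> list[Polygon]:
    """Move a whole character by translating each of its polygons.

    Translation is the simplest coordinate transformation; rotation or
    scaling would instead multiply each vertex by a matrix.
    """
    return [translate_polygon(p, offset) for p in polygons]


# Usage: shift a one-triangle "character" 2 units along the negative X_W axis.
body = [Polygon(((0.0, 0.0, 0.0), (1.0, 0.0, 0.0), (0.0, 1.0, 0.0)))]
moved = move_character(body, (-2.0, 0.0, 0.0))
print(moved[0].vertices)
```

Returning new polygons instead of mutating the originals keeps the previous frame's geometry intact, which can be convenient for interpolation or collision checks.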

The virtual camera setting component 22 sets a virtual camera in the virtual space. More specifically, it sets the position, line-of-sight direction, and upward direction of the virtual camera. Because the orientation of the virtual camera is determined by its line-of-sight direction and upward direction, this Specification refers to the two together as the “orientation.” The virtual camera setting component 22 can also change the position and/or orientation of the virtual camera according to the player's operations. This processing by the virtual camera setting component 22 is described below.

The projection converter 24 projects a three-dimensional character onto a two-dimensional screen defined in the virtual space on the basis of the position and orientation of the virtual camera set by the virtual camera setting component 22, thereby producing a two-dimensional image that includes the projected image of the three-dimensional object.

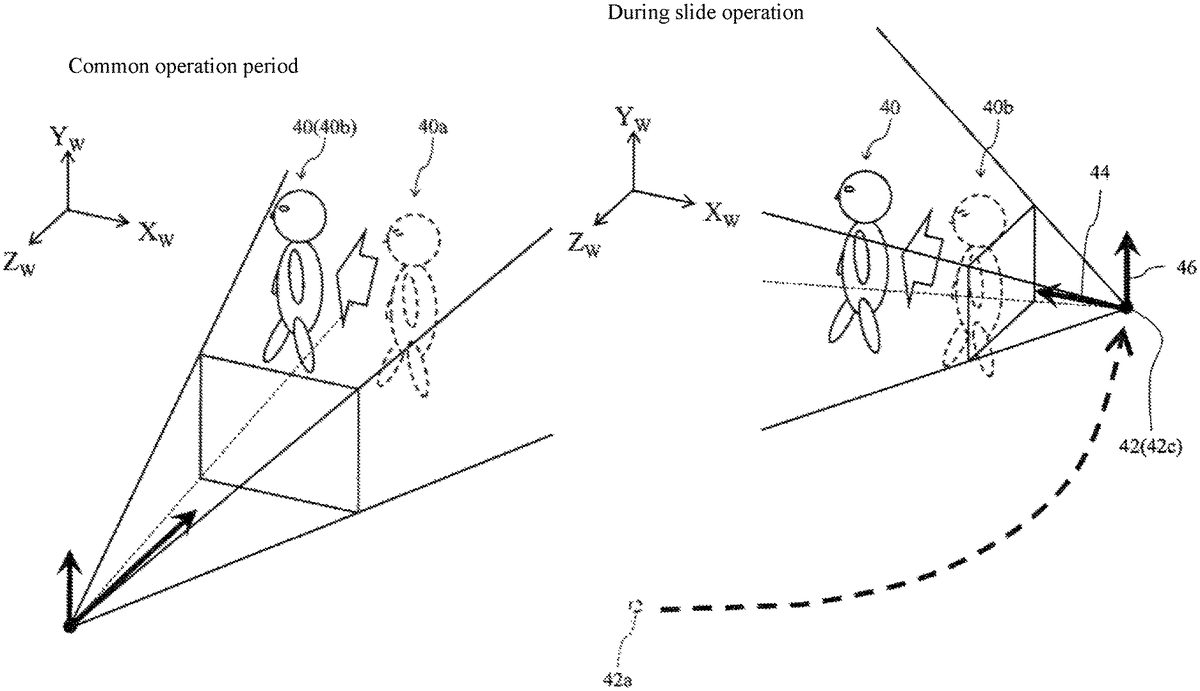

FIG. 2 shows a three-dimensional character 40 and a virtual camera 42 defined in the virtual space. In FIG. 2, the world coordinate system is indicated by the X_W axis, the Y_W axis, and the Z_W axis. FIG. 2 also shows the position of the virtual camera 42, the line-of-sight direction 44 of the virtual camera 42, and the upward direction 46 of the virtual camera 42. The line-of-sight direction 44 and the upward direction 46 are each defined by a three-dimensional vector originating at the position of the virtual camera 42. A field of view with a constant viewing angle is defined in the line-of-sight direction 44 from the virtual camera 42, and a two-dimensional screen 48, a plane perpendicular to the line-of-sight direction 44, is defined in this field of view. The upward direction of the two-dimensional screen 48 (the upper side of the two-dimensional image) is determined by the upward direction 46 of the virtual camera 42. Objects in the virtual space, including the three-dimensional character 40, are projected onto the two-dimensional screen 48 by a projection method such as perspective projection. As a result, a two-dimensional image including the projected image of the three-dimensional character 40 is formed on the two-dimensional screen 48.
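The projection described for FIG. 2 can be sketched in Python as a look-at camera followed by a perspective divide. This is a minimal illustration under stated assumptions, not the patented implementation: the function name `project`, the 60-degree default viewing angle, and the convention that screen coordinates (u, v) lie in [-1, 1] inside the field of view are choices made for this example. The screen's right and up axes are derived from the line-of-sight direction 44 and the upward direction 46 with cross products, so the upward direction does not have to be exactly perpendicular to the line of sight.

```python
import math
from typing import Optional

Vec3 = tuple[float, float, float]


def sub(a: Vec3, b: Vec3) -> Vec3:
    return (a[0] - b[0], a[1] - b[1], a[2] - b[2])


def dot(a: Vec3, b: Vec3) -> float:
    return a[0] * b[0] + a[1] * b[1] + a[2] * b[2]


def cross(a: Vec3, b: Vec3) -> Vec3:
    return (a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0])


def normalize(a: Vec3) -> Vec3:
    n = math.sqrt(dot(a, a))
    return (a[0] / n, a[1] / n, a[2] / n)


def project(point: Vec3, cam_pos: Vec3, line_of_sight: Vec3, up: Vec3,
            view_angle_deg: float = 60.0) -> Optional[tuple[float, float]]:
    """Perspective-project a world-space point onto the camera's 2D screen.

    Returns (u, v), where u grows to the right and v grows upward on the
    screen, or None if the point is not in front of the camera.
    """
    forward = normalize(line_of_sight)
    right = normalize(cross(forward, up))  # screen x axis
    screen_up = cross(right, forward)      # screen y axis, perpendicular to forward
    rel = sub(point, cam_pos)
    depth = dot(rel, forward)              # distance along the line of sight
    if depth <= 0.0:
        return None
    # Scale so that the edges of the viewing angle map to u or v = +/-1.
    f = 1.0 / math.tan(math.radians(view_angle_deg) / 2.0)
    return (f * dot(rel, right) / depth, f * dot(rel, screen_up) / depth)


# A camera on the +Z_W side looking back along -Z_W with +Y_W as up,
# i.e. viewing a character at the origin from the front.
print(project((-1.0, 0.0, 5.0), (0.0, 0.0, 10.0), (0.0, 0.0, -1.0), (0.0, 1.0, 0.0), 90.0))
```

In this setup a point on the negative X_W side lands at negative u, on the left of the screen, which is consistent with the front view described for FIG. 2.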

FIG. 2 shows only the three-dimensional character 40 manipulated by the player, but other three-dimensional characters (such as opponents to be battled) are also defined in the virtual space. Whether an attack on another three-dimensional character, or an attack from one, succeeds is determined by also taking into account the positional relationship in the virtual space between the three-dimensional character 40 manipulated by the player and the other three-dimensional characters.

The display processor 26 causes the display component 12 to display the two-dimensional image, formed on the two-dimensional screen 48, that includes the projected image of the three-dimensional character 40. Consequently, a character that can be manipulated by the player is displayed on the display component 12. This processing may include cropping out part of the two-dimensional image on the two-dimensional screen 48, size conversion, or other such processing (viewport conversion).
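The viewport conversion mentioned above can be illustrated with a small Python function that maps normalized screen coordinates to pixel coordinates and crops anything outside the visible region. The function name `to_viewport`, the [-1, 1] normalized range, and the top-left pixel origin are assumptions for this example; the text does not specify how the display processor 26 performs the conversion.

```python
from typing import Optional


def to_viewport(u: float, v: float, width: int, height: int) -> Optional[tuple[float, float]]:
    """Map normalized screen coordinates (u, v) in [-1, 1] to pixel coordinates.

    u grows to the right and v grows upward; pixel rows grow downward from a
    top-left origin. Points outside the cropped region return None.
    """
    if not (-1.0 <= u <= 1.0 and -1.0 <= v <= 1.0):
        return None  # cropped out of the displayed image
    x = (u + 1.0) / 2.0 * width   # size conversion to the display width
    y = (1.0 - v) / 2.0 * height  # flip v so that "up" is toward row 0
    return (x, y)


print(to_viewport(0.0, 0.0, 1280, 720))   # center of the display
print(to_viewport(-1.0, 1.0, 1280, 720))  # top-left corner
print(to_viewport(1.5, 0.0, 1280, 720))   # outside the field of view
```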

The projection converter 24 and the display processor 26 execute the above-mentioned projection processing and display processing every time an object in the field of view moves and every time the position or orientation of the virtual camera 42 is changed.

The operation detector 28 detects input operations by the player on the input component 16. More specifically, it senses the touch (contact), movement, removal, contact time, and the like of the player's finger on the touch panel, which allows the content of the player's operation to be identified. As discussed above, the player can perform various operations on the input component 16; this embodiment describes a case in which the first operation is a slide operation and the second operation is a flick operation.

A slide operation is begun by bringing the finger of the player into contact with the touch panel and involves moving the finger while keeping it in contact with the touch panel, with this contact maintained for at least a specific length of time from the start of the operation. A flick operation is begun by bringing the finger of the player into contact with the touch panel and involves moving the finger while keeping it in contact with the touch panel, with the finger being removed from the touch panel within a specific length of time from the start of the operation. The above-mentioned specific length of time may be set in advance as appropriate; in this embodiment it is a few tenths of a second.

FIG. 3 shows the player touching a finger to position P1 on the touch panel constituting the input component 16 and moving the finger from there to position P2 on the touch panel. The operation detector 28 detects that either a slide operation or a flick operation has begun when the finger that touched position P1 starts to move. If the specific length of time elapses after the start of the operation while the finger is still in contact with the touch panel on its way to position P2, the operation detector 28 determines that the operation is a slide operation. On the other hand, if the finger is removed from the touch panel at position P2 within the specific length of time after the start of the operation, the operation detector 28 determines that the operation is a flick operation.

In a slide operation and a flick operation, the operation content is shared for a certain length of time after the start of the operation. More precisely, a slide operation and a flick operation have common operation content in that the operation is started by contact between the touch panel and a finger, and the finger is moved from there while in contact with the touch panel for a fixed period within a specific length of time. This common part is referred to as the “common operation portion” in this Specification. Also, the time during which the player is operating the common operation portion is referred to in this Specification as the “common operation period.”

In the common operation period, the operation detector 28 cannot determine whether a player operation is a slide operation or a flick operation. More precisely, if the player has performed a slide operation, the operation detector 28 cannot determine that the accepted operation is a slide operation until the specific length of time has elapsed since the start of the operation, and if the player has performed a flick operation, the operation detector 28 cannot determine that the accepted operation is a flick operation until the flick operation is completed.
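The determination rule of the operation detector 28 can be sketched as a function that returns one of three states: still undetermined (the common operation period), slide, or flick. This is a minimal Python illustration, not the patented detector; the `Gesture` enum, the `classify` function, and the 0.3-second threshold are hypothetical, the last picked only because the text puts the specific length of time at a few tenths of a second.

```python
from enum import Enum
from typing import Optional

THRESHOLD_S = 0.3  # hypothetical "specific length of time", in seconds


class Gesture(Enum):
    PENDING = "pending"  # common operation period: slide and flick look identical
    SLIDE = "slide"
    FLICK = "flick"


def classify(start_t: float, now: float, release_t: Optional[float],
             threshold: float = THRESHOLD_S) -> Gesture:
    """Classify an operation that began at start_t, as seen at time now.

    release_t is the time the finger left the panel, or None if it is still
    in contact.
    """
    if release_t is not None and release_t - start_t <= threshold:
        return Gesture.FLICK    # lifted within the specific length of time
    if now - start_t >= threshold:
        return Gesture.SLIDE    # contact held for the specific length of time
    return Gesture.PENDING      # cannot be distinguished yet


print(classify(0.0, 0.1, None))  # still touching after 0.1 s
print(classify(0.0, 0.2, 0.2))   # lifted after 0.2 s
print(classify(0.0, 0.4, None))  # still touching after 0.4 s
```

Treating a release at exactly the threshold as a flick is an arbitrary tie-break; the text does not say which way the boundary falls.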

As described above, the electronic game program in this embodiment (e.g., instructions stored on a non-transitory computer-readable medium and executed by a processor) can accept various operations performed by the player on the input component 16, and different commands for the electronic game are associated with the various kinds of operation that the operation detector 28 can detect. In this embodiment, a slide operation is associated with a command to move the three-dimensional character 40 in the virtual space and to slowly change the position and orientation of the virtual camera 42 so that it follows the three-dimensional character 40 from behind. A flick operation is associated with a command not to move the three-dimensional character 40, but to immediately move the virtual camera 42 to a position behind the three-dimensional character 40 and turn the virtual camera 42 toward the three-dimensional character 40. The modeling processor 20 and the virtual camera setting component 22 therefore perform processing according to the operation detected by the operation detector 28. This processing is described in detail below.

In this embodiment, after the operation detector 28 has detected the start of either a slide operation or a flick operation, the modeling processor 20 moves the three-dimensional character 40 in the virtual space while the operation detector 28 is detecting the common operation portion (that is, during the common operation period). The modeling processor 20 thus functions as an in-operation processor. FIG. 4 shows how the three-dimensional character 40 moves in the common operation period. In FIG. 4, the three-dimensional character 40 is at position 40a when the slide or flick operation begins and moves to position 40b during the common operation period. As described above, whenever the three-dimensional character 40 moves, the projection converter 24 and the display processor 26 perform their processing, so the movement of the three-dimensional character 40 in the common operation period is also reflected on the display component 12.

The movement direction of the three-dimensional character 40 in the common operation period is determined on the basis of the operation content of the common operation portion. In this embodiment, it is determined by the direction in which the finger moves from the position on the touch panel where the player placed it. For example, as shown in FIG. 2, when the three-dimensional character 40 is facing in the positive Z_W direction and an image of the three-dimensional character 40 seen from the front is displayed on the display component 12, if the finger is moved from right to left on the touch panel, the modeling processor 20 moves the three-dimensional character 40 in the negative X_W direction during the common operation period, as shown in FIG. 4.
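One way to turn the finger's movement on the touch panel into a movement direction in the virtual space is to combine the camera's right and forward axes, flattened onto the ground plane. The Python sketch below assumes that the Y_W axis is vertical, that the drag is given as (dx, dy) with dy positive when the finger moves up the screen (many touch panels report y growing downward, so the raw value would be negated), and that the camera axes are already known; the function name `drag_to_world_direction` is hypothetical.

```python
import math
from typing import Optional

Vec3 = tuple[float, float, float]


def drag_to_world_direction(dx: float, dy: float, cam_forward: Vec3,
                            cam_right: Vec3) -> Optional[Vec3]:
    """Convert a screen-space drag into a unit movement direction on the X_W-Z_W plane.

    dx > 0: finger moved right on the screen; dy > 0: finger moved up.
    Returns None when the finger has not moved.
    """
    # Keep only the horizontal (X_W, Z_W) parts of the camera axes.
    wx = dx * cam_right[0] + dy * cam_forward[0]
    wz = dx * cam_right[2] + dy * cam_forward[2]
    length = math.hypot(wx, wz)
    if length == 0.0:
        return None
    return (wx / length, 0.0, wz / length)


# Camera in front of a character that faces +Z_W: the camera looks along -Z_W
# and the right-hand side of the screen corresponds to +X_W.
forward, right = (0.0, 0.0, -1.0), (1.0, 0.0, 0.0)
print(drag_to_world_direction(-40.0, 0.0, forward, right))  # right-to-left drag
```

With the camera facing the character from the front, a right-to-left drag yields the negative X_W direction, matching the example given for FIG. 4.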

If the player's finger is removed from the touch panel within the above-mentioned specific length of time after the start of an operation is detected, the operation detector 28 determines that the player's operation is a flick operation. In response, the modeling processor 20 and the virtual camera setting component 22 execute the processing associated with a flick operation. As described above, a flick operation is associated with a command not to move the three-dimensional character 40 (that is, to stop its movement), to immediately move the virtual camera 42 to a position behind the three-dimensional character 40, and to turn the virtual camera 42 toward the three-dimensional character 40, so the modeling processor 20 and the virtual camera setting component 22 execute processing to accomplish this. In this manner, the modeling processor 20 and the virtual camera setting component 22 function as a post-operation processor.

FIG. 5 shows the position and orientation of the virtual camera 42 after a flick operation. Assume that, as a result of the movement in the common operation period, the three-dimensional character 40 has moved to position 40b and is facing in the negative X_W direction. At this point, if the finger is removed from the touch panel and the operation detector 28 determines that the operation is a flick operation, the modeling processor 20 stops the movement of the three-dimensional character 40. In addition, the virtual camera setting component 22 immediately moves the virtual camera 42 to position 42b, which is behind the three-dimensional character 40 at position 40b facing the negative X_W direction. Furthermore, the virtual camera setting component 22 sets the line-of-sight direction 44 and the upward direction 46 so that the virtual camera 42 is oriented toward the three-dimensional character 40.
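The camera placement after a flick can be sketched as follows: take the character's horizontal facing direction, step back from the character against it, raise the camera a little, and aim the line of sight at the character. The `camera_behind` function, the 5-unit distance, the 2-unit height, the example coordinates, and the assumption that Y_W is vertical are illustrative choices, not values from the text.

```python
import math

Vec3 = tuple[float, float, float]


def camera_behind(char_pos: Vec3, char_facing: Vec3,
                  distance: float = 5.0, height: float = 2.0) -> tuple[Vec3, Vec3, Vec3]:
    """Return (position, line_of_sight, up) for a camera placed behind the character."""
    # Horizontal facing direction of the character, normalized.
    fx, fz = char_facing[0], char_facing[2]
    n = math.hypot(fx, fz)
    fx, fz = fx / n, fz / n
    # Step back against the facing direction and raise the camera.
    pos = (char_pos[0] - fx * distance,
           char_pos[1] + height,
           char_pos[2] - fz * distance)
    # The line of sight points from the camera to the character.
    d = (char_pos[0] - pos[0], char_pos[1] - pos[1], char_pos[2] - pos[2])
    dn = math.sqrt(d[0] ** 2 + d[1] ** 2 + d[2] ** 2)
    line_of_sight = (d[0] / dn, d[1] / dn, d[2] / dn)
    # World up is used as the upward direction; it is not exactly perpendicular
    # to a downward-tilted line of sight, so a renderer would re-orthogonalize it.
    up = (0.0, 1.0, 0.0)
    return pos, line_of_sight, up


# Hypothetical coordinates for position 40b, with the character facing -X_W.
pos, los, up = camera_behind((-3.0, 0.0, 0.0), (-1.0, 0.0, 0.0))
print(pos, los)
```

Because the result is computed in a single call, the camera jumps straight to its new pose, which corresponds to the immediate move described for a flick.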

If the above-mentioned specific length of time elapses while contact with the touch panel is maintained after the start of the operation is detected, the operation detector 28 determines that the player's operation is a slide operation. In response, the modeling processor 20 and the virtual camera setting component 22 execute the processing associated with a slide operation. As described above, a slide operation is associated with a command to move the three-dimensional character 40 and to slowly change the position and orientation of the virtual camera 42 so that it follows the three-dimensional character 40 from behind, so the modeling processor 20 and the virtual camera setting component 22 (post-operation processors) execute this processing.

FIG. 6 shows how the position of the three-dimensional character 40 and the position and orientation of the virtual camera 42 change during a slide operation. Assume that the movement in the common operation period has brought the three-dimensional character 40 to position 40b, oriented in the negative X_W direction. If the operation detector 28 then detects a slide operation, the modeling processor 20 moves the three-dimensional character 40 according to the direction of the slide operation, continuing the movement from the common operation period without interruption. At the same time, the virtual camera setting component 22 slowly moves the virtual camera 42 from position 42a, where it was when the slide operation was detected, toward position 42c behind the moving three-dimensional character 40. Position 42c moves together with the three-dimensional character 40. While the virtual camera 42 moves, its orientation (the line-of-sight direction 44 and the upward direction 46) is gradually changed so that it keeps facing the three-dimensional character 40.
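The text does not specify how slowly the camera moves during a slide. One common way to realize this kind of following camera, shown here as an assumption rather than as the described method, is frame-rate-independent exponential smoothing: each frame the camera closes a fixed fraction of the remaining gap to the moving target position 42c, and the line of sight is re-aimed at the character. The `follow_step` and `aim_at` functions, the 0.25-second half-life, and the example coordinates are hypothetical.

```python
import math

Vec3 = tuple[float, float, float]


def follow_step(cam_pos: Vec3, target: Vec3, dt: float, half_life: float = 0.25) -> Vec3:
    """Move the camera part of the way toward target for one frame of dt seconds.

    For a fixed target, the remaining distance is halved every half_life
    seconds regardless of the frame rate.
    """
    alpha = 1.0 - 0.5 ** (dt / half_life)
    return (cam_pos[0] + (target[0] - cam_pos[0]) * alpha,
            cam_pos[1] + (target[1] - cam_pos[1]) * alpha,
            cam_pos[2] + (target[2] - cam_pos[2]) * alpha)


def aim_at(cam_pos: Vec3, char_pos: Vec3) -> Vec3:
    """Unit line-of-sight vector from the camera toward the character."""
    d = (char_pos[0] - cam_pos[0], char_pos[1] - cam_pos[1], char_pos[2] - cam_pos[2])
    n = math.sqrt(d[0] ** 2 + d[1] ** 2 + d[2] ** 2)
    return (d[0] / n, d[1] / n, d[2] / n)


# One second at 60 frames per second: the character keeps moving along -X_W and
# the target position stays 5 units behind it, on the +X_W side.
cam = (0.0, 2.0, 5.0)    # hypothetical position 42a
char = (-3.0, 0.0, 0.0)  # hypothetical position 40b
for _ in range(60):
    char = (char[0] - 0.05, char[1], char[2])
    target = (char[0] + 5.0, 2.0, char[2])
    cam = follow_step(cam, target, 1.0 / 60.0)
    line_of_sight = aim_at(cam, char)
print(cam, line_of_sight)
```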

The processing flow of the electronic game device 10 pertaining to this embodiment will now be described with reference to the flowchart shown in FIG. 7. The flowchart in FIG. 7 is executed repeatedly during play of the electronic game provided by the electronic game device 10.

In step S10, the operation detector 28 detects whether the player has started either a slide operation or a flick operation on the input component 16. If the start of an operation is detected, the flow proceeds to step S12; otherwise, the system waits until the start of an operation is detected.

In step S12, the modeling processor 20 moves the three-dimensional character 40 in the virtual space during the common operation period in the player's operation direction, that is, the direction corresponding to the movement direction of the finger on the touch panel.

In step S14, the operation detector 28 determines whether the player's finger has been removed from the touch panel within the specific length of time since the start of the operation. If it has been removed, the flow proceeds to step S16.

In step S16, the operation detector 28 determines that the player's operation is a flick operation.

In step S18, the modeling processor 20 and the virtual camera setting component 22 perform the processing for a flick operation. That is, the modeling processor 20 stops the movement of the three-dimensional character 40, and the virtual camera setting component 22 immediately moves the virtual camera 42 to a position behind the three-dimensional character 40 and orients the virtual camera 42 toward the three-dimensional character 40.

If it is determined in step S14 that the player's finger has not been removed from the touch panel, in step S20 the operation detector 28 determines whether the specific length of time has elapsed since the start of the operation. If it has not, the flow returns to step S14 and the processing is repeated from there. If it has, the flow proceeds to step S22.

In step S22, the operation detector 28 determines that the player's operation is a slide operation.

In step S24, the modeling processor 20 and the virtual camera setting component 22 perform the processing for a slide operation. That is, the modeling processor 20 continues moving the three-dimensional character 40, and the virtual camera setting component 22 slowly moves the virtual camera 42 to a position behind the three-dimensional character 40 while keeping it oriented toward the three-dimensional character 40.
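The FIG. 7 flow can be expressed as a per-frame update. The Python sketch below is a simplified illustration, not the patented program: it tracks the character along the X_W axis only, records the camera behavior as a label instead of moving a camera, and uses a hypothetical `GameState` class, `on_frame` function, 0.3-second threshold, and speed value. Its key property mirrors the text: the same movement branch runs in step S12 and step S24, so the character keeps moving without interruption when a slide is recognized, while a flick stops it.

```python
from dataclasses import dataclass
from typing import Optional

THRESHOLD_S = 0.3  # hypothetical "specific length of time", in seconds


@dataclass
class GameState:
    char_x: float = 0.0               # character position along X_W (1D for brevity)
    camera_mode: str = "idle"         # "snap" after a flick, "follow" after a slide
    op_start: Optional[float] = None  # start time of the current operation
    is_slide: bool = False            # False during the common operation period


def on_frame(s: GameState, now: float, touching: bool, drag_dir: float,
             dt: float, speed: float = 3.0) -> None:
    """Run one pass of the FIG. 7 flow; drag_dir is -1.0 (leftward) or +1.0 (rightward)."""
    if s.op_start is None:                  # S10: wait for an operation to start
        if not touching:
            return
        s.op_start = now
    elapsed = now - s.op_start
    if not s.is_slide:
        if not touching and elapsed <= THRESHOLD_S:  # S14 -> S16: flick
            s.camera_mode = "snap"                   # S18: stop character, snap camera
            s.op_start = None                        # operation finished
            return
        if elapsed >= THRESHOLD_S:                   # S20 -> S22: slide
            s.is_slide = True
            s.camera_mode = "follow"                 # S24: camera follows slowly
    if not touching:                                 # finger lifted after a slide
        s.op_start, s.is_slide = None, False
        return
    # S12 (common period) and S24 (slide) share this branch, so movement never pauses.
    s.char_x += drag_dir * speed * dt


# Flick: touch for four frames, then lift 0.2 s after the start.
flick = GameState()
for i in range(4):
    on_frame(flick, i * 0.05, True, -1.0, 0.05)
on_frame(flick, 0.2, False, -1.0, 0.05)

# Slide: keep touching for ten frames (0.45 s).
slide = GameState()
for i in range(10):
    on_frame(slide, i * 0.05, True, -1.0, 0.05)
print(flick.camera_mode, round(flick.char_x, 3), slide.camera_mode, round(slide.char_x, 3))
```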

The basic configuration of the electronic game device 10 pertaining to this embodiment is as described above. With the electronic game device 10, the modeling processor 20 moves the three-dimensional character 40 during the common operation period, in which the operation detector 28 cannot yet determine whether the player's operation is a slide operation or a flick operation. Consequently, whereas in the past an object could not start moving during the common operation period, in this embodiment an object can start moving within that period, and optionally immediately after the operation starts. That is, when the player performs a slide operation to move the three-dimensional character 40, the three-dimensional character 40 starts moving earlier than before. In other words, the game responds to operations more quickly, which allows the player to better enjoy playing the electronic game.

Also, in the common operation period, the movement direction of the three-dimensional character 40 is determined according to the player's operation direction, so the movement of the three-dimensional character 40 in the common operation period matches the player's intention. Furthermore, if a slide operation is detected after the common operation period, the three-dimensional character 40 continues moving without interrupting the movement begun in the common operation period, so its motion appears as one continuous sequence, with nothing unnatural before or after the point at which the slide operation is detected.

Also, if the player performs a flick operation, the three-dimensional character 40 first moves a little in the operation direction during the common operation period, after which the virtual camera 42 moves behind the three-dimensional character 40 and faces toward it. Consequently, the player can point the virtual camera 42 in any desired direction by choosing the direction of the flick operation. For example, as shown in FIG. 2, when the three-dimensional character 40 is facing in the positive Z_W direction and an image of the three-dimensional character 40 seen from the front is displayed on the display component 12, a player who wants the virtual camera 42 to face left (toward the negative X_W side) can perform a right-to-left flick operation. This turns the three-dimensional character 40 toward the negative X_W side, so the virtual camera 42, which moves behind the three-dimensional character 40, ends up facing the negative X_W direction.

An embodiment of the present invention was described above, but the present invention is not limited to the above embodiment and various modifications are possible without departing from the gist of the present invention.

For example, in the above embodiment, an example was given in which the first operation was a slide operation and the second operation was a flick operation, but as long as there is a common operation portion, the first operation and the second operation may be some other operation. For example, the first operation may be a long tap operation in which the finger is held in contact with the touch panel for an extended period, and the second operation may be a tap operation in which the finger is held in contact with the touch panel for a short time.

In the above embodiment, an example was given in which the operation acceptance component had a touch panel, but as long as a first operation and a second operation having a common operation portion can be accepted, other configurations can be adopted instead. For example, a controller having buttons, a sensor such as a camera that detects movement (gestures) of a player's body, or the like may be used.

In the above embodiment, a command to move the three-dimensional character 40 and change the position and orientation of the virtual camera 42 was associated with the first operation, and a command to change the position and orientation of the virtual camera 42 was associated with the second operation; however, other commands may be associated with the operations as long as a command to move the three-dimensional character 40 is associated with the first operation and/or the second operation.

Also, the present invention can be favorably applied to a case in which three or more operations share a common operation portion and a command to move the three-dimensional character 40 is associated with at least one of these operations.

Also, although the above embodiment described an electronic game in which the three-dimensional character 40 defined in a virtual space is operated, the present invention is not limited to the use of a virtual space or the three-dimensional character 40 and can also be favorably applied to an electronic game processed on a two-dimensional image. In this case, instead of the modeling processor 20, the virtual camera setting component 22, and the projection converter 24, the controller 18 includes a game processor that serves as both the in-operation processor and the post-operation processor. When the operation detector 28 detects the start of either the first operation or the second operation, this game processor moves a two-dimensional character within the two-dimensional game space on the display component 12 during the common operation period. After that, the movement continues if the first operation, which is associated with the movement command, is detected, and the processing for the other command is executed if the second operation, which is associated with another command (such as an attack command), is detected.

DESCRIPTION OF THE REFERENCE NUMERALS

10 electronic game device, 12 display component, 14 storage component, 16 input component, 18 controller, 20 modeling processor, 22 virtual camera setting component, 24 projection converter, 26 display processor, 28 operation detector, 40 three-dimensional character, 42 virtual camera, 44 line-of-sight direction, 46 upward direction, 48 two-dimensional screen.

Claims

- An electronic game device, comprising: a display; and at least one processor that is configured to detect both a first type of input operation and a second type of input operation, wherein a start of the first type of input operation and a start of the second type of input operation are identical, such that the first type of input operation cannot be distinguished from the second type of input operation until an expiration of a common operation period, wherein the at least one processor is further configured to execute an electronic game that displays an object that can be operated by a player on the display, detect a start of an input operation that could be either the first type of input operation or the second type of input operation, and, in response to detecting the start of the input operation, monitor an elapsed time since the start of the input operation, while the elapsed time is within the common operation period and the input operation has not ended, operate the object according to the first type of input operation, and, after the elapsed time exceeds the common operation period or the input operation has ended within the common operation period, determine whether the input operation is the first type or the second type of input operation, when determining that the input operation is the first type of input operation, continue operating the object according to the first type of input operation, and, when determining that the input operation is the second type of input operation, stop operating the object according to the first type of input operation, and start operating the object according to the second type of input operation.

- The electronic game device according to claim 1, wherein operating the object according to the first type of input operation comprises moving the object in a movement direction based on the input operation.

- The electronic game device according to claim 2, wherein continuing operating the object according to the first type of input operation comprises continuing to move the object in the movement direction without interruption.

- The electronic game device according to claim 2, wherein execution of the electronic game displays a game screen on the display, wherein the game screen is formed based on a position and orientation of a virtual camera defined within a three-dimensional virtual game space.

- The electronic game device according to claim 4, wherein operating the object according to the second type of input operation comprises moving the position of the virtual camera to a rear of the object within the virtual game space, and setting the orientation of the virtual camera in a direction of the object.

- The electronic game device according to claim 1, further comprising a touch panel configured to receive the input operation, wherein the first type of input operation is a slide operation in which contact to the touch panel is maintained for at least the common operation period, and wherein the second type of input operation is a flick operation in which the contact to the touch panel is ended within the common operation period.

- A computer-implemented method that comprises using at least one processor to: execute an electronic game that displays an object that can be operated by a player on a display; detect a start of an input operation that could be either a first type of input operation or a second type of input operation, wherein a start of the first type of input operation and a start of the second type of input operation are identical, such that the first type of input operation cannot be distinguished from the second type of input operation until an expiration of a common operation period; and, in response to detecting the start of the input operation, monitor an elapsed time since the start of the input operation, while the elapsed time is within the common operation period and the input operation has not ended, operate the object according to the first type of input operation, and, after the elapsed time exceeds the common operation period or the input operation has ended within the common operation period, determine whether the input operation is the first type or the second type of input operation, when determining that the input operation is the first type of input operation, continue operating the object according to the first type of input operation, and, when determining that the input operation is the second type of input operation, stop operating the object according to the first type of input operation, and start operating the object according to the second type of input operation.

- The computer-implemented method according to claim 7, wherein operating the object according to the first type of input operation comprises moving the object in a movement direction based on the input operation.

- The computer-implemented method according to claim 8, wherein continuing operating the object according to the first type of input operation comprises continuing to move the object in the movement direction without interruption.

- The computer-implemented method according to claim 8, wherein execution of the electronic game displays a game screen on the display, wherein the game screen is formed based on a position and orientation of a virtual camera defined within a three-dimensional virtual game space.

- The computer-implemented method according to claim 10, wherein operating the object according to the second type of input operation comprises moving the position of the virtual camera to a rear of the object within the virtual game space, and setting the orientation of the virtual camera in a direction of the object.

- The computer-implemented method according to claim 7, further comprising receiving the input operation via a touch panel, wherein the first type of input operation is a slide operation in which contact to the touch panel is maintained for at least the common operation period, and wherein the second type of input operation is a flick operation in which the contact to the touch panel is ended within the common operation period.

- A non-transitory computer-readable medium including instructions to be performed on a processor, wherein the instructions, when executed by the processor, cause the processor to: execute an electronic game that displays an object that can be operated by a player on a display; detect a start of an input operation that could be either a first type of input operation or a second type of input operation, wherein a start of the first type of input operation and a start of the second type of input operation are identical, such that the first type of input operation cannot be distinguished from the second type of input operation until an expiration of a common operation period; and, in response to detecting the start of the input operation, monitor an elapsed time since the start of the input operation, while the elapsed time is within the common operation period and the input operation has not ended, operate the object according to the first type of input operation, and, after the elapsed time exceeds the common operation period or the input operation has ended within the common operation period, determine whether the input operation is the first type or the second type of input operation, when determining that the input operation is the first type of input operation, continue operating the object according to the first type of input operation, and, when determining that the input operation is the second type of input operation, stop operating the object according to the first type of input operation, and start operating the object according to the second type of input operation.

- The non-transitory computer-readable medium according to claim 13, wherein operating the object according to the first type of input operation comprises moving the object in a movement direction based on the input operation.

- The non-transitory computer-readable medium according to claim 14, wherein continuing operating the object according to the first type of input operation comprises continuing to move the object in the movement direction without interruption.

- The non-transitory computer-readable medium according to claim 14, wherein execution of the electronic game displays a game screen on the display, wherein the game screen is formed based on a position and orientation of a virtual camera defined within a three-dimensional virtual game space.

- The non-transitory computer-readable medium according to claim 16, wherein operating the object according to the second type of input operation comprises moving the position of the virtual camera to a rear of the object within the virtual game space, and setting the orientation of the virtual camera in a direction of the object.

- The non-transitory computer-readable medium according to claim 13, wherein the instructions further cause the processor to receive the input operation via a touch panel, wherein the first type of input operation is a slide operation in which contact to the touch panel is maintained for at least the common operation period, and wherein the second type of input operation is a flick operation in which the contact to the touch panel is ended within the common operation period.

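
The input handling recited in the claims above can be sketched as a small state machine. This is a minimal Python sketch under stated assumptions, not the patented implementation: the period length (`COMMON_OPERATION_PERIOD = 0.2` seconds), the class and method names, the per-frame `touch_move` callback, movement on a 2D ground plane, and the camera distance are all hypothetical. It shows the character moving as soon as contact begins, continuing without interruption once the input is confirmed as a slide, and, for a flick, the movement stopping while the virtual camera is placed behind the character and pointed toward it (claims 5, 11 and 17).

```python
import math
from dataclasses import dataclass

# Hypothetical length of the common operation period, in seconds.
COMMON_OPERATION_PERIOD = 0.2


@dataclass
class Character:
    x: float = 0.0
    z: float = 0.0
    heading: float = 0.0  # facing angle on the ground plane, radians


@dataclass
class Camera:
    x: float = 0.0
    z: float = 0.0
    look_x: float = 0.0  # unit line-of-sight direction on the ground plane
    look_z: float = 1.0


class InputController:
    """Treats every new touch as a slide until the common operation period
    has elapsed or the touch ends, then confirms it as a slide or a flick."""

    def __init__(self, character, camera, speed=1.0, cam_distance=3.0):
        self.character = character
        self.camera = camera
        self.speed = speed
        self.cam_distance = cam_distance
        self.start_time = None  # None when no touch is in progress
        self.kind = None  # None while undecided, then "slide" or "flick"
        self.direction = (0.0, 0.0)

    def touch_down(self, t):
        self.start_time = t
        self.kind = None
        self.direction = (0.0, 0.0)

    def touch_move(self, t, dx, dz, dt):
        """Per-frame update while contact is held; (dx, dz) is the drag vector."""
        if self.start_time is None:
            return
        length = math.hypot(dx, dz)
        if length > 0:
            self.direction = (dx / length, dz / length)
            self.character.heading = math.atan2(dx, dz)
        # Contact held for the whole common period confirms a slide. The
        # movement below runs the same way before and after, so it never pauses.
        if self.kind is None and t - self.start_time >= COMMON_OPERATION_PERIOD:
            self.kind = "slide"
        self.character.x += self.direction[0] * self.speed * dt
        self.character.z += self.direction[1] * self.speed * dt

    def touch_up(self, t):
        if self.start_time is None:
            return
        if self.kind is None:
            elapsed = t - self.start_time
            self.kind = "flick" if elapsed < COMMON_OPERATION_PERIOD else "slide"
        if self.kind == "flick":
            # Movement ends with the touch; run the flick command instead.
            self._place_camera_behind()
        self.start_time = None

    def _place_camera_behind(self):
        # Facing vector matches heading = atan2(dx, dz).
        fx = math.sin(self.character.heading)
        fz = math.cos(self.character.heading)
        self.camera.x = self.character.x - self.cam_distance * fx
        self.camera.z = self.character.z - self.cam_distance * fz
        self.camera.look_x, self.camera.look_z = fx, fz
```

For example, a drag along +x held for 0.6 s is confirmed as a slide at the 0.2 s mark and the character keeps moving throughout, whereas a touch released after 0.1 s is classified as a flick: the speculative movement stops and the camera is moved behind the character, facing it. Treating the undecided input as a slide is what lets movement begin with no added latency.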
