U.S. Pat. No. 10,653,957

INTERACTIVE VIDEO GAME SYSTEM

Assignee: Universal City Studios LLC

Issue Date: December 6, 2017

Abstract

An interactive video game system includes an array of volumetric sensors disposed around a play area and configured to collect respective volumetric data for each of a plurality of players. The system includes a controller communicatively coupled to the array of volumetric sensors. The controller is configured to: receive, from the array of volumetric sensors, respective volumetric data of each of the plurality of players; to combine the respective volumetric data of each of the plurality of players to generate at least one respective model for each of the plurality of players; to generate a respective virtual representation for each player of the plurality of players based, at least in part, on the generated at least one respective model of each player of the plurality of players; and to present the generated respective virtual representation of each player of the plurality of players in a virtual environment.

Description

DETAILED DESCRIPTION

As used herein, “volumetric scanning data” refers to three-dimensional (3D) data, such as point cloud data, collected by optically measuring (e.g., imaging, ranging) visible outer surfaces of players in a play area. As used herein, a “volumetric model” is a 3D model generated from the volumetric scanning data of a player that generally describes the outer surfaces of the player and may include texture data. A “shadow model,” as used herein, refers to a texture-less volumetric model of a player generated from the volumetric scanning data. As such, when presented on a two-dimensional (2D) surface, such as a display device, the shadow model of a player has a shape substantially similar to a shadow or silhouette of the player when illuminated from behind. A “skeletal model,” as used herein, refers to a 3D model generated from the volumetric scanning data of a player that defines predicted locations and positions of certain bones (e.g., bones associated with the arms, legs, head, spine) of a player to describe the location and pose of the player within a play area.

Present embodiments are directed to an interactive video game system that enables multiple players (e.g., up to 12) to perform actions in a physical play area to control virtual representations of the players in a displayed virtual environment. The disclosed interactive video game system includes an array having two or more volumetric sensors, such as depth cameras and Light Detection and Ranging (LIDAR) devices, capable of volumetrically scanning each of the players. The system includes suitable processing circuitry that generates models (e.g., volumetric models, shadow models, skeletal models) for each player based on the volumetric scanning data collected by the array of sensors, as discussed below. During game play, at least two volumetric sensors capture the actions of the players in the play area, and the system determines the nature of these actions based on the generated player models. Accordingly, the interactive video game system continuously updates the virtual representations of the players and the virtual environment based on the actions of the players and their corresponding in-game effects.

As mentioned, the array of the disclosed interactive video game system includes multiple volumetric sensors arranged around the play area to monitor the actions of the players within the play area. This generally ensures that a skeletal model of each player can be accurately generated and updated throughout game play despite potential occlusion from the perspective of one or more volumetric sensors of the array. Additionally, the processing circuitry of the system may use the volumetric scanning data to generate aspects (e.g., size, shape, outline) of the virtual representations of each player within the virtual environment. In certain embodiments, certain aspects (e.g., color, texture, scale) of the virtual representation of each player may be further adjusted or modified based on information associated with the player. As discussed below, this information may include information related to game play (e.g., items acquired, achievements unlocked), as well as other information regarding activities of the player outside of the game (e.g., player performance in other games, items purchased by the player, locations visited by the player). Furthermore, the volumetric scanning data collected by the array of volumetric sensors can be used by the processing circuitry of the game system to generate additional content, such as souvenir images in which a volumetric model of the player is illustrated as being within the virtual world. Accordingly, the disclosed interactive video game system enables an immersive and engaging experience for multiple simultaneous players.

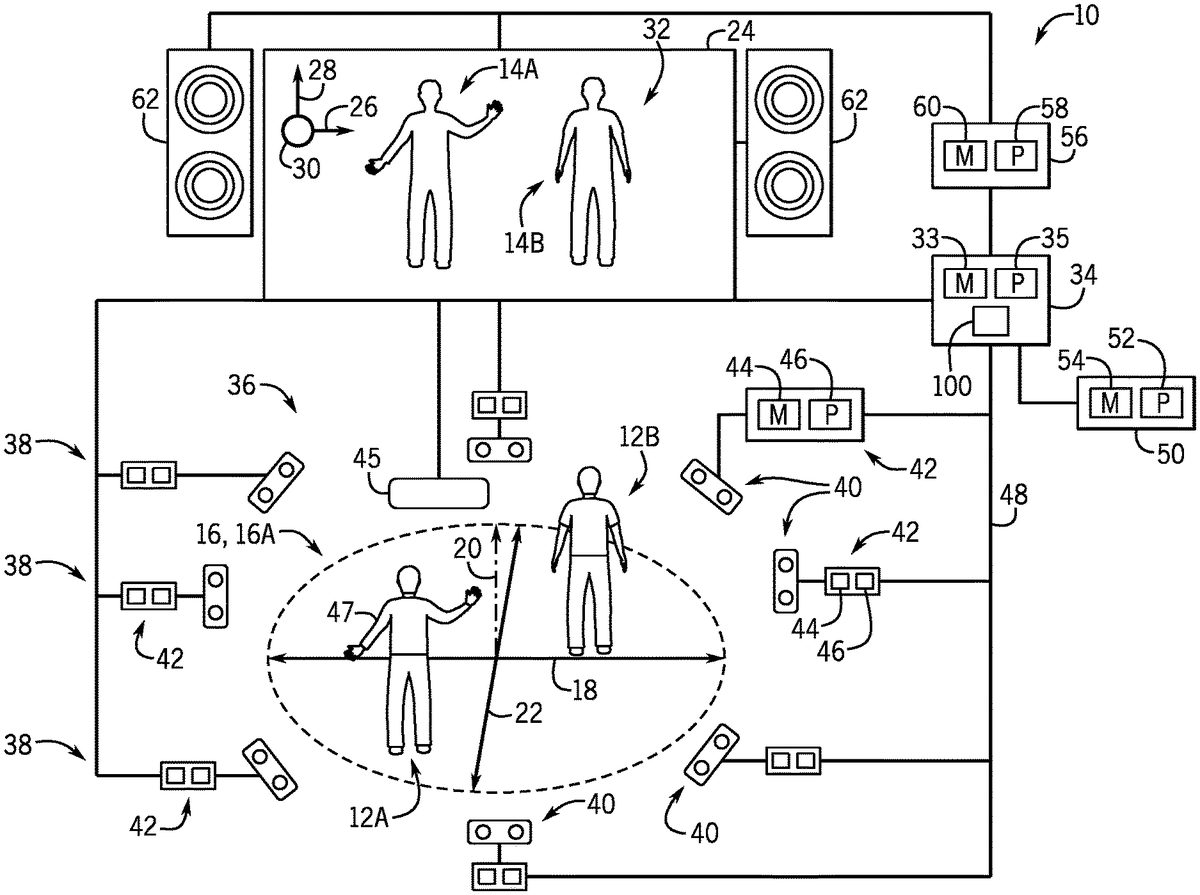

With the foregoing in mind, FIG. 1 is a schematic diagram of an embodiment of an interactive video game system 10 that enables multiple players 12 (e.g., players 12A and 12B) to control respective virtual representations 14 (e.g., virtual representations 14A and 14B) by performing actions in a play area 16. It may be noted that while, for simplicity, the present description is directed to two players 12 using the interactive video game system 10, in other embodiments, the interactive video game system 10 can support more than two (e.g., 6, 8, 10, 12, or more) players 12. The play area 16 of the interactive video game system 10 illustrated in FIG. 1 is described herein as being a 3D play area 16A. The term “3D play area” is used herein to refer to a play area 16 having a width (corresponding to an x-axis 18), a height (corresponding to a y-axis 20), and a depth (corresponding to a z-axis 22), wherein the system 10 generally monitors the movements of each of the players 12 along the x-axis 18, y-axis 20, and z-axis 22. The interactive video game system 10 updates the location of the virtual representations 14 presented on a display device 24 along an x-axis 26, a y-axis 28, and a z-axis 30 in a virtual environment 32 in response to the players 12 moving throughout the play area 16. While the 3D play area 16A is illustrated as being substantially circular, in other embodiments, the 3D play area 16A may be square shaped, rectangular, hexagonal, octagonal, or any other suitable 3D shape.

The embodiment of the interactive video game system 10 illustrated in FIG. 1 includes a primary controller 34, having memory circuitry 33 and processing circuitry 35, that generally provides control signals to control operation of the system 10. As such, the primary controller 34 is communicatively coupled to an array 36 of sensing units 38 disposed around the 3D play area 16A. More specifically, the array 36 of sensing units 38 may be described as symmetrically distributed around a perimeter of the play area 16. In certain embodiments, at least a portion of the array 36 of sensing units 38 may be positioned above the play area 16 (e.g., suspended from a ceiling or on elevated platforms or stands) and pointed at a downward angle to image the play area 16. In other embodiments, at least a portion of the array 36 of sensing units 38 may be positioned near the floor of the play area 16 and pointed at an upward angle to image the play area 16. In certain embodiments, the array 36 of the interactive video game system 10 may include at least two sensing units 38 per player (e.g., players 12A and 12B) in the play area 16. Accordingly, the array 36 of sensing units 38 is suitably positioned to image a substantial portion of potential vantage points around the play area 16 to reduce or eliminate potential player occlusion.

In the illustrated embodiment, each sensing unit 38 includes a respective volumetric sensor 40, which may be an infra-red (IR) depth camera, a LIDAR device, or another suitable ranging and/or imaging device. For example, in certain embodiments, all of the volumetric sensors 40 of the sensing units 38 in the array 36 are either IR depth cameras or LIDAR devices, while in other embodiments, a mixture of both IR depth cameras and LIDAR devices is present within the array 36. It is presently recognized that both IR depth cameras and LIDAR devices can be used to volumetrically scan each of the players 12, and the collected volumetric scanning data can be used to generate various models of the players, as discussed below. For example, in certain embodiments, IR depth cameras in the array 36 may be used to collect data to generate skeletal models, while the data collected by LIDAR devices in the array 36 may be used to generate volumetric and/or shadow models of the players 12, which is discussed in greater detail below. It is also recognized that LIDAR devices, which collect point cloud data, are generally capable of scanning and mapping a larger area than depth cameras, typically with better accuracy and resolution. As such, in certain embodiments, at least one sensing unit 38 of the array 36 includes a corresponding volumetric sensor 40 that is a LIDAR device to enhance the accuracy or resolution of the array 36 and/or to reduce a total number of sensing units 38 present in the array 36.

Further, each illustrated sensing unit 38 includes a sensor controller 42 having suitable memory circuitry 44 and processing circuitry 46. The processing circuitry 46 of each sensing unit 38 executes instructions stored in the memory circuitry 44 to enable the sensing unit 38 to volumetrically scan the players 12 to generate volumetric scanning data for each of the players 12. In the illustrated embodiment, the sensing units 38 are communicatively coupled to the primary controller 34 via a high-speed internet protocol (IP) network 48 that enables low-latency exchange of data between the devices of the interactive video game system 10. Additionally, in certain embodiments, the sensing units 38 may each include a respective housing that packages the sensor controller 42 together with the volumetric sensor 40.

It may be noted that, in other embodiments, the sensing units 38 may not include a respective sensor controller 42. For such embodiments, the processing circuitry 35 of the primary controller 34, or other suitable processing circuitry of the system 10, is communicatively coupled to the respective volumetric sensors 40 of the array 36 to provide control signals directly to, and to receive data signals directly from, the volumetric sensors 40. However, it is presently recognized that processing (e.g., filtering, skeletal mapping) the volumetric scanning data collected by each of these volumetric sensors 40 can be processor-intensive. As such, in certain embodiments, it can be advantageous to divide the workload by utilizing dedicated processors (e.g., processors 46 of each of the sensor controllers 42) to process the volumetric data collected by the respective sensor 40, and then to send processed data to the primary controller 34. For example, in the illustrated embodiment, each of the processors 46 of the sensor controllers 42 processes the volumetric scanning data collected by its respective sensor 40 to generate partial models (e.g., partial volumetric models, partial skeletal models, partial shadow models) of each of the players 12, and the processing circuitry 35 of the primary controller 34 receives and fuses or combines the partial models to generate complete models of each of the players 12, as discussed below.

Additionally, in certain embodiments, the primary controller 34 may also receive information from other sensing devices in and around the play area 16. For example, the illustrated primary controller 34 is communicatively coupled to a radio-frequency (RF) sensor 45 disposed near (e.g., above, below, adjacent to) the 3D play area 16A. The illustrated RF sensor 45 receives a uniquely identifying RF signal from a wearable device 47, such as a bracelet or headband having a radio-frequency identification (RFID) tag worn by each of the players 12. In response, the RF sensor 45 provides signals to the primary controller 34 regarding the identity and the relative positions of the players 12 in the play area 16. As such, for the illustrated embodiment, processing circuitry 35 of the primary controller 34 receives and combines the data collected by the array 36, and potentially other sensors (e.g., RF sensor 45), to determine the identities, locations, and actions of the players 12 in the play area 16 during game play. Additionally, the illustrated primary controller 34 is communicatively coupled to a database system 50, or any other suitable data repository storing player information. The database system 50 includes processing circuitry 52 that executes instructions stored in memory circuitry 54 to store and retrieve information associated with the players 12, such as player models (e.g., volumetric, shadow, skeletal), player statistics (e.g., wins, losses, points, total game play time), player attributes or inventory (e.g., abilities, textures, items), player purchases at a gift shop, player points in a loyalty rewards program, and so forth. The processing circuitry 35 of the primary controller 34 may query, retrieve, and update information stored by the database system 50 related to the players 12 to enable the system 10 to operate as set forth herein.

Additionally, the embodiment of the interactive video game system 10 illustrated in FIG. 1 includes an output controller 56 that is communicatively coupled to the primary controller 34. The output controller 56 generally includes processing circuitry 58 that executes instructions stored in memory circuitry 60 to control the output of stimuli (e.g., audio signals, video signals, lights, physical effects) that are observed and experienced by the players 12 in the play area 16. As such, the illustrated output controller 56 is communicatively coupled to audio devices 62 and display device 24 to provide suitable control signals to operate these devices to provide particular output. In other embodiments, the output controller 56 may be coupled to any suitable number of audio and/or display devices. The display device 24 may be any suitable display device, such as a projector and screen, a flat-screen display device, or an array of flat-screen display devices, which is arranged and designed to provide a suitable view of the virtual environment 32 to the players 12 in the play area 16. In certain embodiments, the audio devices 62 may be arranged into an array about the play area 16 to increase player immersion during game play. In other embodiments, the system 10 may not include the output controller 56, and the processing circuitry 35 of the primary controller 34 may be communicatively coupled to the audio devices 62, display device 24, and so forth, to generate the various stimuli for the players 12 in the play area 16 to observe and experience.

FIG. 2 is a schematic diagram of another embodiment of the interactive video game system 10, which enables multiple players 12 (e.g., players 12A and 12B) to control virtual representations 14 (e.g., virtual representations 14A and 14B) by performing actions in the play area 16. The embodiment of the interactive video game system 10 illustrated in FIG. 2 includes many of the features discussed herein with respect to FIG. 1, including the primary controller 34, the array 36 of sensing units 38, the output controller 56, and the display device 24. However, the embodiment of the interactive video game system 10 illustrated in FIG. 2 is described herein as having a 2D play area 16B. The term “2D play area” is used herein to refer to a play area 16 having a width (corresponding to the x-axis 18) and a height (corresponding to the y-axis 20), wherein the system 10 generally monitors the movements of each of the players 12 along the x-axis 18 and y-axis 20. For the embodiment illustrated in FIG. 2, the players 12A and 12B are respectively assigned sections 70A and 70B of the 2D play area 16B, and the players 12 do not wander outside of their respective assigned sections during game play. The interactive video game system 10 updates the location of the virtual representations 14 presented on the display device 24 along the x-axis 26 and the y-axis 28 in the virtual environment 32 in response to the players 12 moving (e.g., running along the x-axis 18, jumping along the y-axis 20) within the 2D play area 16B.

Additionally, the embodiment of the interactive video game system 10 illustrated in FIG. 2 includes an interface panel 74 that can enable enhanced player interactions. As illustrated in FIG. 2, the interface panel 74 includes a number of input devices 76 (e.g., cranks, wheels, buttons, sliders, blocks) that are designed to receive input from the players 12 during game play. As such, the illustrated interface panel 74 is communicatively coupled to the primary controller 34 to provide signals to the controller 34 indicative of how the players 12 are manipulating the input devices 76 during game play. The illustrated interface panel 74 also includes a number of output devices 78 (e.g., audio output devices, visual output devices, physical stimulation devices) that are designed to provide audio, visual, and/or physical stimuli to the players 12 during game play. As such, the illustrated interface panel 74 is communicatively coupled to the output controller 56 to receive control signals and to provide suitable stimuli to the players 12 in the play area 16 in response to suitable signals from the primary controller 34. For example, the output devices 78 may include audio devices, such as speakers, horns, sirens, and so forth. Output devices 78 may also include visual devices such as lights or display devices of the interface panel 74. In certain embodiments, the output devices 78 of the interface panel 74 include physical effect devices, such as an electronically controlled release valve coupled to a compressed air line, which provides a burst of warm or cold air or mist in response to a suitable control signal from the primary controller 34 or the output controller 56.

As illustrated in FIG. 2, the array 36 of sensing units 38 disposed around the 2D play area 16B of the illustrated embodiment of the interactive video game system 10 includes at least two sensing units 38. That is, while the embodiment of the interactive video game system 10 illustrated in FIG. 1 includes the array 36 having at least two sensing units 38 per player, the embodiment of the interactive video game system 10 illustrated in FIG. 2 includes the array 36 that may include as few as two sensing units 38 regardless of the number of players. In certain embodiments, the array 36 may include at least two sensing units disposed at right angles (90°) with respect to the players 12 in the 2D play area 16B. In certain embodiments, the array 36 may additionally or alternatively include at least two sensing units disposed on opposite sides (180°) with respect to the players 12 in the play area 16B. By way of specific example, in certain embodiments, the array 36 may include only two sensing units 38 disposed on different (e.g., opposite) sides of the players 12 in the 2D play area 16B.

As mentioned, the array 36 illustrated in FIGS. 1 and 2 is capable of collecting volumetric scanning data for each of the players 12 in the play area 16. In certain embodiments, the collected volumetric scanning data can be used to generate various models (e.g., volumetric, shadow, skeletal) for each player, and these models can be subsequently updated based on the movements of the players during game play, as discussed below. However, it is presently recognized that using volumetric models that include texture data is substantially more processor-intensive (e.g., involves additional filtering, additional data processing) than using shadow models that lack this texture data. For example, in certain embodiments, the processing circuitry 35 of the primary controller 34 can generate a shadow model for each of the players 12 from volumetric scanning data collected by the array 36 by using edge detection techniques to differentiate between the edges of the players 12 and their surroundings in the play area 16. It is presently recognized that such edge detection techniques are substantially less processor-intensive and involve substantially less filtering than using a volumetric model that includes texture data. As such, it is presently recognized that certain embodiments of the interactive video game system 10 generate and update shadow models instead of volumetric models that include texture, enabling a reduction in the size, complexity, and cost of the processing circuitry 35 of the primary controller 34. Additionally, as discussed below, the processing circuitry 35 can generate the virtual representations 14 of the players 12 based, at least in part, on the generated shadow models.
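By way of illustration only, the following Python sketch shows one way a texture-less silhouette and its outline might be extracted from a single depth frame. The description does not specify a particular edge detection technique; the depth thresholding against a known background distance, the 4-neighbor boundary test, and the names `silhouette_mask`, `outline`, `background_m`, and `tol_m` are all assumptions made for this example.

```python
from typing import List, Set, Tuple

Grid = List[List[float]]  # depth frame: distance in meters per pixel


def silhouette_mask(depth: Grid, background_m: float,
                    tol_m: float = 0.2) -> List[List[bool]]:
    """Mark pixels clearly nearer than the (assumed known) background."""
    return [[d < background_m - tol_m for d in row] for row in depth]


def outline(mask: List[List[bool]]) -> Set[Tuple[int, int]]:
    """Return silhouette pixels that touch a non-silhouette pixel or the frame edge."""
    rows, cols = len(mask), len(mask[0])
    edge: Set[Tuple[int, int]] = set()
    for r in range(rows):
        for c in range(cols):
            if not mask[r][c]:
                continue
            for dr, dc in ((1, 0), (-1, 0), (0, 1), (0, -1)):
                nr, nc = r + dr, c + dc
                outside = not (0 <= nr < rows and 0 <= nc < cols)
                if outside or not mask[nr][nc]:
                    edge.add((r, c))
                    break
    return edge
```

Because the mask carries no color or texture, each frame reduces to one comparison per pixel plus a neighbor check, which is consistent with the lower processing load attributed to shadow models above.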

As mentioned, the volumetric scanning data collected by the array 36 of the interactive video game system 10 can be used to generate various models (e.g., volumetric, shadow, skeletal) for each player. For example, FIG. 3 is a diagram illustrating skeletal models 80 (e.g., skeletal models 80A and 80B) and shadow models 82 (e.g., shadow models 82A and 82B) representative of players in the 3D play area 16A. FIG. 3 also illustrates corresponding virtual representations 14 (e.g., virtual representations 14A and 14B) of these players presented in the virtual environment 32 on the display device 24, in accordance with the present technique. As illustrated, the represented players are located at different positions within the 3D play area 16A of the interactive video game system 10 during game play, as indicated by the locations of the skeletal models 80 and the shadow models 82. The illustrated virtual representations 14 of the players in the virtual environment 32 are generated, at least in part, based on the shadow models 82 of the players. As the players move within the 3D play area 16A, as mentioned above, the primary controller 34 tracks these movements and accordingly generates updated skeletal models 80 and shadow models 82, as well as the virtual representations 14 of each player.

Additionally, embodiments of the interactive video game system 10 having the 3D play area 16A, as illustrated in FIGS. 1 and 3, enable player movement and tracking along the z-axis 22 and translate it to movement of the virtual representations 14 along the z-axis 30. As illustrated in FIG. 3, this enables the player represented by the skeletal model 80A and shadow model 82A to move to a front edge 84 of the 3D play area 16A, and results in the corresponding virtual representation 14A being presented at a relatively deeper point or level 86 along the z-axis 30 in the virtual environment 32. This also enables the player represented by skeletal model 80B and the shadow model 82B to move to a back edge 88 of the 3D play area 16A, which results in the corresponding virtual representation 14B being presented at a substantially shallower point or level 90 along the z-axis 30 in the virtual environment 32. Further, for the illustrated embodiment, the size of the presented virtual representations 14 is modified based on the position of the players along the z-axis 22 in the 3D play area 16A. That is, the virtual representation 14A positioned relatively deeper along the z-axis 30 in the virtual environment 32 is presented as being substantially smaller than the virtual representation 14B positioned at a shallower depth or layer along the z-axis 30 in the virtual environment 32.
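The relationship between player position along the z-axis 22, depth along the z-axis 30, and presented size can be sketched as a simple linear mapping. This is a minimal illustration, not the disclosed implementation: the linear interpolation, the function names, and the `near_scale` and `far_scale` values are hypothetical.

```python
def virtual_depth(player_z: float, z_front: float, z_back: float) -> float:
    """Map play-area z to normalized virtual depth.

    Returns 1.0 (deepest) at the front edge and 0.0 (shallowest) at the
    back edge, matching the arrangement described for FIG. 3.
    """
    t = (z_back - player_z) / (z_back - z_front)
    return min(1.0, max(0.0, t))  # clamp players who step past an edge


def display_scale(depth: float, near_scale: float = 1.0,
                  far_scale: float = 0.5) -> float:
    """Shrink the virtual representation linearly as virtual depth increases."""
    return near_scale + (far_scale - near_scale) * depth
```

For a hypothetical play area spanning z = 0 m (front edge) to z = 4 m (back edge), a player standing at the front edge maps to depth 1.0 and is drawn at half size, while a player at the back edge maps to depth 0.0 and is drawn at full size.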

It may be noted that, for embodiments of the interactive game system 10 having the 3D play area 16A, as represented in FIGS. 1 and 3, the virtual representations 14 may only be able to interact with virtual objects that are positioned at a similar depth along the z-axis 30 in the virtual environment 32. For example, for the embodiment illustrated in FIG. 3, the virtual representation 14A is capable of interacting with a virtual object 92 that is positioned deeper along the z-axis 30 in the virtual environment 32, while the virtual representation 14B is capable of interacting with another virtual object 94 that is positioned at a relatively shallower depth along the z-axis 30 in the virtual environment 32. That is, the virtual representation 14A is not able to interact with the virtual object 94 unless the player represented by the models 80A and 82A changes position along the z-axis 22 in the 3D play area 16A, such that the virtual representation 14A moves to a similar depth as the virtual object 94 in the virtual environment 32.
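The depth-gated interaction described above amounts to a tolerance check on the z-axis 30. In the sketch below, the `Placed` type, the normalized depth values, and the `tolerance` parameter are hypothetical; the description says only that the depths must be "similar."

```python
from dataclasses import dataclass


@dataclass
class Placed:
    """Anything positioned in the virtual environment (assumed structure)."""
    name: str
    depth: float  # normalized position along the virtual z-axis


def can_interact(rep: Placed, obj: Placed, tolerance: float = 0.25) -> bool:
    """Allow interaction only when both sit at a similar virtual depth."""
    return abs(rep.depth - obj.depth) <= tolerance
```

With illustrative depths of 0.9 for virtual representation 14A, 1.0 for virtual object 92, and 0.1 for virtual object 94, the check permits 14A to interact with object 92 but not with object 94 until the player moves along the z-axis 22.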

For comparison, FIG. 4 is a diagram illustrating an example of skeletal models 80 (e.g., skeletal models 80A and 80B) and shadow models 82 (e.g., shadow models 82A and 82B) representative of players in the 2D play area 16B. FIG. 4 also illustrates virtual representations 14 (e.g., virtual representations 14A and 14B) of the players presented on the display device 24. As the players move within the 2D play area 16B, as mentioned above, the primary controller 34 tracks these movements and accordingly updates the skeletal models 80, the shadow models 82, and the virtual representations 14 of each player. As mentioned, embodiments of the interactive video game system 10 having the 2D play area 16B illustrated in FIGS. 2 and 4 do not track player movement along a z-axis (e.g., z-axis 22 illustrated in FIGS. 1 and 3). Instead, for embodiments with the 2D play area 16B, the size of the presented virtual representations 14 may be modified based on a status or condition of the players inside and/or outside of game play. For example, in FIG. 4, the virtual representation 14A is substantially larger than the virtual representation 14B. In certain embodiments, the size of the virtual representations 14A and 14B may be enhanced or exaggerated in response to the virtual representation 14A or 14B interacting with a particular item, such as in response to the virtual representation 14A obtaining a power-up during a current or previous round of game play. In other embodiments, the exaggerated size of the virtual representation 14A, as well as other modifications of the virtual representations (e.g., texture, color, transparency, items worn or carried by the virtual representation), may be the result of the corresponding player interacting with objects or items outside of the interactive video game system 10, as discussed below.

It is presently recognized that embodiments of the interactive video game system 10 that utilize a 2D play area 16B, as represented in FIGS. 2 and 4, enable particular advantages over embodiments of the interactive video game system 10 that utilize the 3D play area 16A, as illustrated in FIG. 1. For example, as mentioned, the array 36 of sensing units 38 in the interactive video game system 10 having the 2D play area 16B, as illustrated in FIG. 2, includes fewer sensing units 38 than the interactive video game system 10 with the 3D play area 16A, as illustrated in FIG. 1. That is, depth (e.g., location and movement along the z-axis 22, as illustrated in FIG. 1) is not tracked for the interactive video game system 10 having the 2D play area 16B, as represented in FIGS. 2 and 4. Additionally, since players 12A and 12B remain in their respective assigned sections 70A and 70B of the 2D play area 16B, the potential for occlusion is substantially reduced. For example, by having players remain within their assigned sections 70 of the 2D play area 16B, occlusion between players only occurs predictably along the x-axis 18. As such, by using the 2D play area 16B, the embodiment of the interactive video game system 10 illustrated in FIG. 2 enables the use of a smaller array 36 having fewer sensing units 38 to track the players 12, compared to the embodiment of the interactive video game system 10 of FIG. 1.

Accordingly, it is recognized that the smaller array 36 of sensing units 38 used by embodiments of the interactive video game system 10 having the 2D play area 16B also generates considerably less data to be processed than embodiments having the 3D play area 16A. For example, because occlusion between players 12 is significantly more limited and predictable in the 2D play area 16B of FIGS. 2 and 4, fewer sensing units 38 can be used in the array 36 while still covering a substantial portion of potential vantage points around the play area 16. As such, for embodiments of the interactive game system 10 having the 2D play area 16B, the processing circuitry 35 of the primary controller 34 may be smaller, simpler, and/or more energy efficient, relative to the processing circuitry 35 of the primary controller 34 for embodiments of the interactive game system 10 having the 3D play area 16A.

As mentioned, the interactive video game system 10 is capable of generating various models of the players 12. More specifically, in certain embodiments, the processing circuitry 35 of the primary controller 34 is configured to receive partial model data (e.g., partial volumetric, shadow, and/or skeletal models) from the various sensing units 38 of the array 36 and fuse the partial models into complete models (e.g., complete volumetric, shadow, and/or skeletal models) for each of the players 12. Set forth below is an example in which the processing circuitry 35 of the primary controller 34 fuses partial skeletal models received from the various sensing units 38 of the array 36. It may be appreciated that, in certain embodiments, the processing circuitry 35 of the primary controller 34 may use a similar process to fuse partial shadow model data into a shadow model and/or to fuse partial volumetric model data.

In an example, partial skeletal models are generated by each sensing unit 38 of the interactive video game system 10 and are subsequently fused by the processing circuitry 35 of the primary controller 34. In particular, the processing circuitry 35 may perform a one-to-one mapping of corresponding bones of each of the players 12 in each of the partial skeletal models generated by different sensing units 38 positioned at different angles (e.g., opposite sides, perpendicular) relative to the play area 16. In certain embodiments, relatively small differences between the partial skeletal models generated by different sensing units 38 may be averaged when fused by the processing circuitry 35 to provide smoothing and prevent jerky movements of the virtual representations 14. Additionally, when a partial skeletal model generated by a particular sensing unit differs significantly from the partial skeletal models generated by at least two other sensing units, the processing circuitry 35 of the primary controller 34 may determine the data to be erroneous and, therefore, not include the data in the skeletal models 80. For example, if a particular partial skeletal model is missing a bone that is present in the other partial skeletal models, then the processing circuitry 35 may determine that the missing bone is likely the result of occlusion, and may discard all or some of the partial skeletal model in response.
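The fusion step described above can be sketched in Python as follows. This is a simplified illustration under stated assumptions rather than the disclosed method: each partial skeletal model is represented as a hypothetical dictionary mapping a bone name to a single 3D position already expressed in a shared coordinate frame, the outlier test is applied per bone rather than per model, and the `outlier_m` threshold is invented for the example.

```python
from math import dist
from typing import Dict, List, Tuple

Vec3 = Tuple[float, float, float]
PartialSkeleton = Dict[str, Vec3]  # bone name -> estimated position (meters)


def fuse_skeletons(partials: List[PartialSkeleton],
                   outlier_m: float = 0.15) -> PartialSkeleton:
    """Fuse per-sensor bone estimates into one skeleton.

    For each bone, an estimate is dropped when it lies farther than
    outlier_m from at least two other estimates; the rest are averaged.
    """
    fused: PartialSkeleton = {}
    bones = set().union(*partials)  # one-to-one mapping by bone name
    for bone in sorted(bones):
        # Sensors that did not see the bone (e.g., occlusion) are skipped.
        estimates = [p[bone] for p in partials if bone in p]
        kept = [e for e in estimates
                if sum(dist(e, other) > outlier_m for other in estimates) < 2]
        if kept:
            fused[bone] = tuple(sum(axis) / len(kept) for axis in zip(*kept))
    return fused
```

Averaging the agreeing estimates provides the smoothing mentioned above, while requiring disagreement with at least two other sensing units before rejecting an estimate means a single noisy neighbor cannot evict good data.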

It may be noted that precise coordination of the components of the interactive game system 10 is desirable to provide smooth and responsive movements of the virtual representations 14 in the virtual environment 32. In particular, to properly fuse the partial models (e.g., partial skeletal, volumetric, and/or shadow models) generated by the sensing units 38, the processing circuitry 35 may consider the time at which each of the partial models is generated by the sensing units 38. In certain embodiments, the interactive video game system 10 may include a system clock 100, as illustrated in FIGS. 1 and 2, which is used to synchronize operations within the system 10. For example, the system clock 100 may be a component of the primary controller 34 or another suitable electronic device that is capable of generating a time signal that is broadcast over the network 48 of the interactive video game system 10. In certain embodiments, various devices coupled to the network 48 may receive and use a time signal to adjust respective clocks at particular times (e.g., at the start of game play), and the devices may subsequently include timing data based on signals from these respective clocks when providing game play data to the primary controller 34. In other embodiments, the various devices coupled to the network 48 continually receive the time signal from the system clock 100 (e.g., at regular microsecond intervals) throughout game play, and the devices subsequently include timing data from the time signal when providing data (e.g., volumetric scanning data, partial model data) to the primary controller 34. Additionally, the processing circuitry 35 of the primary controller 34 can determine whether a partial model (e.g., a partial volumetric, shadow, or skeletal model) generated by a sensing unit 38 is sufficiently fresh (e.g., recent, contemporary with other data) to be used to generate or update the complete model, or if the data should be discarded as stale. Accordingly, in certain embodiments, the system clock 100 enables the processing circuitry 35 to properly fuse the partial models generated by the various sensing units 38 into suitable volumetric, shadow, and/or skeletal models of the players 12.
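
As a rough illustration of the freshness check described above, the following Python sketch filters timestamped partial models before fusion. It is a minimal sketch under stated assumptions: the `TimedPartialModel` record, the `select_fresh` function, the microsecond timestamp field, and the 50 ms maximum age are hypothetical and are not specified in the disclosure.

```python
from dataclasses import dataclass


@dataclass
class TimedPartialModel:
    unit_id: str        # hypothetical identifier of the sensing unit
    timestamp_us: int   # system-clock time at which the model was generated
    bones: dict         # payload; its structure is not relevant here


def select_fresh(partials, now_us, max_age_us=50_000):
    """Keep partial models generated within max_age_us of the current time.

    Models stamped in the future (negative age) are also rejected, since
    they suggest a clock that is not synchronized with the system clock.
    """
    return [p for p in partials if 0 <= now_us - p.timestamp_us <= max_age_us]
```

Only the models returned by `select_fresh` would then be passed to the fusion step; stale models are discarded rather than blended into the complete model.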

FIG. 5 is a flow diagram illustrating an embodiment of a process 110 for operating the interactive game system 10, in accordance with the present technique. It may be appreciated that, in other embodiments, certain steps of the illustrated process 110 may be performed in a different order, repeated multiple times, or skipped altogether, in accordance with the present disclosure. The process 110 illustrated in FIG. 5 may be executed by the processing circuitry 35 of the primary controller 34 alone, or in combination with other suitable processing circuitry (e.g., processing circuitry 46, 52, and/or 58) of the system 10.

The illustrated embodiment of the process 110 begins with the interactive game system 10 collecting (block 112) volumetric scanning data for each player. In certain embodiments, as illustrated in FIGS. 1-4, the players 12 may be scanned or imaged by the sensing units 38 positioned around the play area 16. For example, in certain embodiments, before game play begins, the players 12 may be prompted to strike a particular pose, while the sensing units 38 of the array 36 collect volumetric scanning data regarding each player. In other embodiments, the players 12 may be volumetrically scanned by a separate system prior to entering the play area 16. For example, a line of waiting players may be directed through a pre-scanning system (e.g., similar to a security scanner at an airport) in which each player is individually volumetrically scanned (e.g., while striking a particular pose) to collect the volumetric scanning data for each player. In certain embodiments, the pre-scanning system may be a smaller version of the 3D play area 16A illustrated in FIG. 1 or the 2D play area 16B in FIG. 2, in which an array 36 of sensing units 38 is positioned about an individual player to collect the volumetric scanning data. In other embodiments, the pre-scanning system may include fewer sensing units 38 (e.g., 1, 2, 3) positioned around the individual player, and the sensing units 38 are rotated around the player to collect the complete volumetric scanning data. It is presently recognized that it may be desirable to collect the volumetric scanning data indicated in block 112 while the players 12 are in the play area 16 to enhance the efficiency of the interactive game system 10 and to reduce player wait times.

Next, the interactive video game system 10 generates (block 114) corresponding models for each player based on the volumetric scanning data collected for each player. As set forth above, in certain embodiments, the processing circuitry 35 of the primary controller 34 may receive partial models for each of the players from each of the sensing units 38 in the array 36, and may suitably fuse these partial models to generate suitable models for each of the players. For example, the processing circuitry 35 of the primary controller 34 may generate a volumetric model for each player that generally defines a 3D shape of each player. Additionally or alternatively, the processing circuitry 35 of the primary controller 34 may generate a shadow model for each player that generally defines a texture-less 3D shape of each player. Furthermore, the processing circuitry 35 may also generate a skeletal model that generally defines predicted skeletal positions and locations of each player within the play area.

Continuing through the example process 110, next, the interactive video game system 10 generates (block 116) a corresponding virtual representation for each player based, at least in part, on the volumetric scanning data collected for each player and/or one or more of the models generated for each player. For example, in certain embodiments, the processing circuitry 35 of the primary controller 34 may use a shadow model generated in block 114 as a basis to generate a virtual representation of a player. It may be appreciated that, in certain embodiments, the virtual representations 14 may have a shape or outline that is substantially similar to the shadow model of the corresponding player, as illustrated in FIGS. 3 and 4. In addition to shape, the virtual representations 14 may have other properties that can be modified to correspond to properties of the represented player. For example, a player may be associated with various properties (e.g., items, statuses, scores, statistics) that reflect their performance in other game systems, their purchases in a gift shop, their membership in a loyalty program, and so forth. Accordingly, properties (e.g., size, color, texture, animations, presence of virtual items) of the virtual representation may be set in response to the various properties associated with the corresponding player, and further modified based on changes to the properties of the player during game play.

It may be noted that, in certain embodiments, the virtual representations 14 of the players 12 may not have an appearance or shape that substantially resembles the generated volumetric or shadow models. For example, in certain embodiments, the interactive video game system 10 may include or be communicatively coupled to a pre-generated library of virtual representations that are based on fictitious characters (e.g., avatars), and the system may select particular virtual representations, or provide recommendations of particular selectable virtual representations, for a player generally based on the generated volumetric or shadow model of the player. For example, if the game involves a larger hero and a smaller sidekick, the interactive video game system 10 may select or recommend from the pre-generated library a relatively larger hero virtual representation for an adult player and a relatively smaller sidekick virtual representation for a child player.
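
The hero and sidekick example above might be sketched in Python as shown below. This is a hypothetical illustration only: the point-list representation of a volumetric model, the use of the vertical (y) extent as a proxy for player size, the 1.5 m threshold, and the names `model_height` and `recommend_avatar` are assumptions and are not taken from the disclosure.

```python
def model_height(points):
    """Height of a player model: extent of its points along the vertical axis."""
    ys = [y for _, y, _ in points]
    return max(ys) - min(ys)


def recommend_avatar(points, library, hero_min_height_m=1.5):
    """Pick the larger 'hero' or the smaller 'sidekick' entry from the library.

    Assumption: the library is a dict keyed by role, and a taller model is
    taken to indicate an adult player.
    """
    role = "hero" if model_height(points) >= hero_min_height_m else "sidekick"
    return library[role]
```

For example, with a library such as `{"hero": "knight", "sidekick": "squire"}`, a model spanning 1.8 m vertically would be matched to the hero entry and a model spanning 1.1 m to the sidekick entry.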

The process 110 continues with the interactive video game system 10 presenting (block 118) the corresponding virtual representations 14 of each of the players in the virtual environment 32 on the display device 24. In addition to presenting the virtual representations 14, in certain embodiments, the actions in block 118 may also include presenting other introductory presentations, such as a welcome message or orientation/instructional information, to the players 12 in the play area 16 before game play begins. Furthermore, in certain embodiments, the processing circuitry 35 of the primary controller 34 may also provide suitable signals to set or modify parameters of the environment within the play area 16. For example, these modifications may include adjusting house light brightness and/or color, playing game music or game sound effects, adjusting the temperature of the play area, activating physical effects in the play area, and so forth.

Once game play begins, the virtual representations 14 generated in block 116 and presented in block 118 are capable of interacting with one another and/or with virtual objects (e.g., virtual objects 92 and 94) in the virtual environment 32, as discussed herein with respect to FIGS. 3 and 4. During game play, the interactive video game system 10 generally determines (block 120) the in-game actions of each of the players 12 in the play area 16 and the corresponding in-game effects of these in-game actions. Additionally, the interactive video game system 10 generally updates (block 122) the corresponding virtual representations 14 of the players 12 and/or the virtual environment 32 based on the in-game actions of the players 12 in the play area 16 and the corresponding in-game effects determined in block 120. As indicated by the arrow 124, the interactive video game system 10 may repeat the steps indicated in blocks 120 and 122 until game play is complete, for example, due to one of the players 12 winning the round of game play or due to an expiration of an allotted game play time.
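
The repetition of blocks 120 and 122 until a win or a timeout can be pictured as a simple loop. The Python sketch below is an illustration under stated assumptions: the `run_game` function, its callback parameters, and the injectable `clock` argument are hypothetical names introduced here and do not describe the actual control flow of the disclosed controller.

```python
import time


def run_game(determine, update, is_complete, time_limit_s, clock=time.monotonic):
    """Repeat blocks 120 and 122 until a player wins or the allotted time expires.

    determine()   -> (actions, effects)   stands in for block 120
    update(a, e)  -> None                 stands in for block 122
    is_complete() -> bool                 e.g., True once a player has won
    """
    start = clock()
    iterations = 0
    while not is_complete() and clock() - start < time_limit_s:
        actions, effects = determine()
        update(actions, effects)
        iterations += 1
    return iterations
```

Passing the clock as a parameter is a design choice made only so that the loop can be exercised deterministically in a test; a real system would presumably rely on its synchronized system clock.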

FIG. 6 is a flow diagram that illustrates an example embodiment of a more detailed process 130 by which the interactive video game system 10 performs the actions indicated in blocks 120 and 122 of FIG. 5. That is, the process 130 indicated in FIG. 6 includes a number of steps to determine the in-game actions of each player in the play area and the corresponding in-game effects of these in-game actions, as indicated by the bracket 120, as well as a number of steps to update the corresponding virtual representation of each player and/or the virtual environment, as indicated by the bracket 122. In certain embodiments, the actions described in the process 130 may be encoded as instructions in a suitable memory, such as the memory circuitry 33 of the primary controller 34, and executed by a suitable processor, such as the processing circuitry 35 of the primary controller 34, of the interactive video game system 10. It should be noted that the illustrated process 130 is merely provided as an example, and that in other embodiments, certain actions described may be performed in different orders, may be repeated, or may be skipped altogether.

The process 130 of FIG. 6 begins with the processing circuitry 35 receiving (block 132) partial models from a plurality of sensing units in the play area. As discussed herein with respect to FIGS. 1 and 2, the interactive video game system 10 includes the array 36 of sensing units 38 disposed in different positions around the play area 16, and each of these sensing units 38 is configured to generate one or more partial models (e.g., partial volumetric, shadow, and/or skeletal models) for at least a portion of the players 12. Additionally, as mentioned, the processing circuitry 35 may also receive data from other devices (e.g., RF scanner 45, input devices 76) regarding the actions of the players 12 disposed within the play area 16. Further, as mentioned, these partial models may be timestamped based on a signal from the clock 100 and provided to the processing circuitry 35 of the primary controller 34 via the high-speed IP network 48.

For the illustrated embodiment of the process 130, after receiving the partial models from the sensing units 38, the processing circuitry 35 fuses the partial models to generate (block 134) updated models (e.g., volumetric, shadow, and/or skeletal) for each player based on the received partial models. For example, the processing circuitry 35 may update a previously generated model, such as an initial skeletal model generated in block 114 of the process 110 of FIG. 5. Additionally, as discussed, when combining the partial models, the processing circuitry 35 may filter or remove data that is inconsistent or delayed to improve accuracy when tracking players despite potential occlusion or network delays.

Next, the illustrated process 130 continues with the processing circuitry 35 identifying (block 136) one or more in-game actions of the corresponding virtual representations 14 of each player 12 based, at least in part, on the updated models of the players generated in block 134. For example, the in-game actions may include jumping, running, sliding, or otherwise moving of the virtual representations 14 within the virtual environment 32. In-game actions may also include interacting with (e.g., moving, obtaining, losing, consuming) an item, such as a virtual object in the virtual environment 32. In-game actions may also include completing a goal, defeating another player, winning a round, or other similar in-game actions.

Next, the processing circuitry 35 may determine (block 138) one or more in-game effects triggered in response to the identified in-game actions of each of the players 12. For example, when the determined in-game action is a movement of a player, then the in-game effect may be a corresponding change in position of the corresponding virtual representation within the virtual environment. When the determined in-game action is a jump, the in-game effect may include moving the virtual representation along the y-axis 20, as illustrated in FIGS. 1-4. When the determined in-game action is activating a particular power-up item, then the in-game effect may include modifying a status (e.g., a health status, a power status) associated with the players 12. Additionally, in certain cases, the movements of the virtual representations 14 may be accentuated or augmented relative to the actual movements of the players 12. For example, as discussed above with respect to modifying the appearance of the virtual representation, the movements of a virtual representation of a player may be temporarily or permanently exaggerated (e.g., able to jump higher, able to jump farther) relative to the actual movements of the player based on properties associated with the player, including items acquired during game play, items acquired during other game play sessions, items purchased in a gift shop, and so forth.
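
The mapping from identified in-game actions to in-game effects, including the exaggerated jump, could be sketched in Python along the following lines. Everything named here is hypothetical: the action and effect dictionaries, the `JUMP_BOOSTS` table, the `spring_boots` item, and the multiplicative boost rule are assumptions made for illustration and are not described in the disclosure.

```python
# Hypothetical table: items associated with a player and the factor by which
# each one exaggerates that player's jumps in the virtual environment.
JUMP_BOOSTS = {"spring_boots": 1.5}


def determine_effect(action, player_properties):
    """Map one identified in-game action to an in-game effect (a plain dict)."""
    kind = action["kind"]
    if kind == "move":
        # A movement of the player becomes a change in position.
        return {"effect": "translate", "dx": action["dx"]}
    if kind == "jump":
        # Assumption: each held item contributes a multiplicative boost, so
        # the virtual jump along the y-axis can exceed the measured jump.
        boost = 1.0
        for item in player_properties.get("items", []):
            boost *= JUMP_BOOSTS.get(item, 1.0)
        return {"effect": "translate", "dy": action["height"] * boost}
    if kind == "power_up":
        return {"effect": "set_status", "status": "powered"}
    return {"effect": "none"}
```

A player holding the hypothetical `spring_boots` item who physically jumps 0.4 m would thus see a virtual jump of about 0.6 m, while a player without items would see the measured 0.4 m.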

The illustrated process 130 continues with the processing circuitry 35 generally updating the presentation to the players in the play area 16 based on the in-game actions of each player and the corresponding in-game effects, as indicated by bracket 122. In particular, the processing circuitry 35 updates (block 140) the corresponding virtual representations 14 of each of the players 12 and the virtual environment 32 based on the updated models (e.g., shadow and skeletal models) of each player generated in block 134, the in-game actions identified in block 136, and/or the in-game effects determined in block 138, to advance game play. For example, for the embodiments illustrated in FIGS. 1 and 2, the processing circuitry 35 may provide suitable signals to the output controller 56, such that the processing circuitry 58 of the output controller 56 updates the virtual representations 14 and the virtual environment 32 presented on the display device 24.

Additionally, the processing circuitry 35 may provide suitable signals to generate one or more sounds (block 142) and/or one or more physical effects (block 144) in the play area 16 based, at least in part, on the determined in-game effects. For example, when the in-game effect is determined to be a particular virtual representation of a player crashing into a virtual pool, the primary controller 34 may cause the output controller 56 to signal the speakers 62 to generate suitable splashing sounds and/or physical effects devices 78 to generate a blast of mist. Additionally, sounds and/or physical effects may be produced in response to any number of in-game effects, including, for example, gaining a power-up, losing a power-up, scoring a point, or moving through particular types of environments. As mentioned with respect to FIG. 5, the process 130 of FIG. 6 may repeat until game play is complete, as indicated by the arrow 124.
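
One way to picture how determined in-game effects are turned into sound and physical-effect signals is a lookup table, as in the Python sketch below. The `CUES` table, the effect names, the cue names, and the `output_commands` function are hypothetical and serve only to illustrate the splash-and-mist example; the disclosure does not specify how the output controller encodes these signals.

```python
# Hypothetical cue table: in-game effect -> sound cue and optional physical cue.
CUES = {
    "splash": {"sound": "splash", "physical": "mist"},
    "power_up_gained": {"sound": "chime", "physical": None},
}


def output_commands(effects):
    """Translate in-game effects into (device, cue) commands for the outputs."""
    commands = []
    for effect in effects:
        cue = CUES.get(effect)
        if cue is None:
            continue  # no sound or physical effect is associated with this effect
        commands.append(("speakers", cue["sound"]))
        if cue["physical"] is not None:
            commands.append(("physical_effects", cue["physical"]))
    return commands
```

Under these assumptions, a virtual representation crashing into a virtual pool would yield a speaker command for the splash sound followed by a physical-effects command for the mist.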

Furthermore, it may be noted that the interactive video game system 10 can also enable other functionality using the volumetric scanning data collected by the array 36 of volumetric sensors 38. For example, as mentioned, in certain embodiments, the processing circuitry 35 of the primary controller 34 may generate a volumetric model that includes both the texture and the shape of each player. At the conclusion of game play, the processing circuitry 35 of the primary controller 34 can generate simulated images that use the volumetric models of the players to render a 3D likeness of the player within a portion of the virtual environment 32, and these can be provided (e.g., printed, electronically transferred) to the players 12 as souvenirs of their game play experience. For example, this may include a print of a simulated image illustrating the volumetric model of a player crossing a finish line within a scene from the virtual environment 32.

The technical effects of the present approach include an interactive video game system that enables multiple players (e.g., two or more, four or more) to perform actions in a physical play area (e.g., a 2D or 3D play area) to control corresponding virtual representations in a virtual environment presented on a display device near the play area. The disclosed system includes a plurality of sensors and suitable processing circuitry configured to collect volumetric scanning data and generate various models, such as volumetric models, shadow models, and/or skeletal models, for each player. The system generates the virtual representations of each player based, at least in part, on the generated player models. Additionally, the interactive video game system may set or modify properties, such as size, texture, and/or color, of the virtual representations based on various properties, such as points, purchases, and power-ups, associated with the players.

While only certain features of the present technique have been illustrated and described herein, many modifications and changes will occur to those skilled in the art. It is, therefore, to be understood that the appended claims are intended to cover all such modifications and changes as fall within the true spirit of the present technique. Additionally, the techniques presented and claimed herein are referenced and applied to material objects and concrete examples of a practical nature that demonstrably improve the present technical field and, as such, are not abstract, intangible or purely theoretical. Further, if any claims appended to the end of this specification contain one or more elements designated as “means for [perform]ing [a function] . . . ” or “step for [perform]ing [a function] . . . ”, it is intended that such elements are to be interpreted under 35 U.S.C. 112(f). However, for any claims containing elements designated in any other manner, it is intended that such elements are not to be interpreted under 35 U.S.C. 112(f).

Claims

- An interactive video game system, comprising: an array of volumetric sensors disposed around a play area and configured to collect respective volumetric data for each player of a plurality of players; and a controller communicatively coupled to the array of volumetric sensors and configured to: receive, from the array of volumetric sensors, the respective volumetric data of each player of the plurality of players; combine the respective volumetric data of each player of the plurality of players to generate at least one respective shadow model of each player of the plurality of players; and present the generated respective shadow model of each player of the plurality of players in a virtual environment; and a display device disposed near the play area, wherein the display device is configured to display the virtual environment to the plurality of players in the play area.

- The interactive video game system of claim 1, wherein the array of volumetric sensors comprises an array of LIDAR devices.

- The interactive video game system of claim 1, wherein the volumetric sensors of the array are symmetrically distributed around a perimeter of the play area.

- The interactive video game system of claim 1, wherein the controller is configured to: combine the respective volumetric data of each player of the plurality of players to generate at least one respective skeletal model of each player of the plurality of players; determine in-game actions of each player of the plurality of players in the play area and corresponding in-game effects based, at least in part, on the generated at least one respective skeletal model; and update the generated respective shadow model of each player of the plurality of players and the virtual environment based on the determined in-game actions and in-game effects.

- The interactive video game system of claim 1, wherein the controller is configured to: generate a respective volumetric model for each player of the plurality of players; and generate a simulated image that includes the volumetric model of a particular player of the plurality of players within the virtual environment when game play concludes.

- The interactive video game system of claim 1, wherein the play area is a three-dimensional (3D) play area and the array of volumetric sensors is configured to collect the respective volumetric data for each player of the plurality of players with respect to a width, a height, and a depth of the 3D play area.

- The interactive video game system of claim 1, wherein the play area is a two-dimensional (2D) play area and the array of volumetric sensors is configured to collect the respective volumetric data for each of the plurality of players with respect to a width and a height of the 2D play area.

- An interactive video game system, comprising: a display device disposed near a play area and configured to display a virtual environment to a plurality of players in the play area; an array of sensing units disposed around the play area, wherein each sensing unit of the array comprises respective processing circuitry configured to determine a partial model of at least one player of the plurality of players; a controller communicatively coupled to the array of sensing units, wherein the controller is configured to: receive, from the array of sensing units, the partial models of each player of the plurality of players; generate a respective model of each player of the plurality of players by fusing the partial models of each player of the plurality of players; determine in-game actions of each player of the plurality of players based, at least in part, on the generated respective model of each player of the plurality of players; and display a respective virtual representation of each player of the plurality of players in the virtual environment on the display device based, at least in part, on the generated respective model and the in-game actions of each player of the plurality of players; and an interface panel communicatively coupled to the controller, wherein the interface panel comprises a plurality of input devices configured to receive input from the plurality of players during game play and electrical circuitry configured to transmit a signal to the controller that corresponds to the input.

- The interactive video game system of claim 8, wherein each sensing unit of the array of sensing units comprises a respective sensor communicatively coupled to the respective processing circuitry, wherein the respective processing circuitry of each sensing unit of the array of sensing units is configured to determine a respective portion of the generated respective model of each player of the plurality of players based on data collected by the respective sensor of the sensing unit.

- The interactive video game system of claim 9, wherein each sensing unit of the array of sensing units is communicatively coupled to the controller via an internet protocol (IP) network, and wherein the interactive video game system comprises a system clock configured to enable the controller to synchronize the partial models of each player of the plurality of players as the partial models are received along the IP network.

- The interactive video game system of claim 8, wherein the array of sensing units comprises an array of depth cameras, LIDAR devices, or a combination thereof, symmetrically distributed around a perimeter of the play area.

- The interactive video game system of claim 11, wherein the array of sensing units is disposed above the plurality of players and is directed at a downward angle toward the play area, and wherein the array of sensing units comprises at least three sensing units.

- The interactive video game system of claim 8, comprising a radio-frequency (RF) sensor communicatively coupled to the controller, wherein the controller is configured to receive data from the RF sensor to determine a relative position of a particular player of the plurality of players relative to remaining players of the plurality of players, the data indicating an identity, a location, or a combination thereof, for each player of the plurality of players.

- The interactive video game system of claim 8, wherein the interface panel comprises at least one physical effects device configured to provide the plurality of players with at least one physical effect based, at least in part, on the determined in-game actions of each player of the plurality of players.

- The interactive video game system of claim 8, comprising a database system communicatively coupled to the controller, wherein the controller is configured to query and receive information related to the plurality of players from the database system.

- The interactive video game system of claim 15, wherein, to present the respective virtual representation of each player of the plurality of players, the controller is configured to: query and receive the information related to the plurality of players; and determine how to modify the virtual representation based on the received information.

- The interactive video game system of claim 8, wherein the controller is configured to generate the respective virtual representation of each player of the plurality of players based on the generated respective model of each player of the plurality of players.

- The interactive video game system of claim 8, wherein each of the partial models of each player of the plurality of players received from the array of sensing units comprises a partial shadow model, wherein the generated respective model of each player of the plurality of players generated by the controller comprises a shadow model, and wherein the respective virtual representation of each player of the plurality of players presented by the controller is directly based on the shadow model.

- A method of operating an interactive video game system, comprising: receiving, via processing circuitry of a controller of the interactive video game system, partial models of a plurality of players positioned within a play area, wherein the partial models are generated by respective sensor processing circuitry of each sensing unit of an array of sensing units disposed around the play area; fusing, via the processing circuitry, the received partial models of each player of the plurality of players to generate a respective model of each player of the plurality of players, wherein each of the generated respective models comprises a respective shadow model; determining, via the processing circuitry, in-game actions of each player of the plurality of players based, at least in part, on the generated respective models of each player of the plurality of players; and presenting, via a display device, the respective shadow model of each player of the plurality of players in a virtual environment based, at least in part, on the generated respective models and the in-game actions of each player of the plurality of players.

- The method of claim 19, comprising: scanning, using a volumetric sensor of each sensing unit of the array of sensing units, each player of the plurality of players positioned within the play area; generating, via the sensor processing circuitry of each sensing unit of the array of sensing units, the partial models of each player of the plurality of players; and providing, via a network, the partial models of each player of the plurality of players to the processing circuitry of the controller.

- The method of claim 20, wherein each of the received partial models comprises a partial volumetric model, a partial skeletal model, a partial shadow model, or a combination thereof.

- The method of claim 19, wherein the plurality of players comprises at least twelve players that are simultaneously playing the interactive video game system.

- The method of claim 21, wherein each of the received partial models comprises the partial shadow model.

- The method of claim 19, wherein the display device is disposed near the play area, and is configured to display the virtual environment to the plurality of players in the play area.

- The method of claim 19, wherein the display device is disposed near the play area, and is configured to display the virtual environment to the plurality of players in the play area.
