U.S. Pat. No. 10,430,646

SYSTEMS AND METHODS FOR OPERATING A VIRTUAL REALITY ENVIRONMENT USING COLORED MARKER LIGHTS ATTACHED TO GAME OBJECTS

Assignee: Zero Latency Pty Ltd

Issue Date: January 26, 2018

Abstract

Systems and methods are disclosed for operating, calibrating, and dynamically optimizing a system for a virtual reality environment where colored marker lights are attached to objects, the objects including players, controllers, and devices related to the game. One or more color cameras are used to view one or more spaces, and track positions and orientations of players and other objects according to the attached marker lights. A hierarchical system of servers may be used to process positions and orientations of objects and provide controls as necessary for the system. A method for color assignment is described as well as a calibration process, and a dynamic optimization process. A synchronization process is also described that ensures that a plurality of cameras and attached servers are properly coordinated. Head-mounted devices may also be used in conjunction with marker lights to provide information regarding players.

Description

DETAILED DESCRIPTION

Embodiments will be described below in more detail with reference to the accompanying drawings. The following detailed descriptions are provided to assist the reader in gaining a comprehensive understanding of the methods, apparatuses, and/or systems described herein and equivalent modifications thereof. Accordingly, various changes, modifications, and equivalents of the methods, apparatuses, and/or systems described herein will be apparent to those of ordinary skill in the art. Moreover, descriptions of well-known functions and constructions may be omitted for increased clarity and conciseness.

The terms used in the description are intended to describe embodiments only, and shall by no means be restrictive. Unless clearly used otherwise, expressions in a singular form include a meaning of a plural form. In the present description, an expression such as “comprising” or “including” is intended to designate a characteristic, a number, a step, an operation, an element, a part or combinations thereof, and shall not be construed to preclude any presence or possibility of one or more other characteristics, numbers, steps, operations, elements, parts or combinations thereof.

Systems and methods are disclosed for operating, calibrating, and dynamically optimizing a system for a virtual reality environment where colored marker lights are attached to objects.

The objects may include players, controllers, and devices related to the game or another virtual reality experience.

One or more color cameras are used to view one or more spaces, and track positions and orientations of players and other objects according to the attached marker lights. A hierarchical system of servers is used to process positions and orientations of objects and provide controls as necessary for the system. A method for color assignment is described as well as a calibration process, and a dynamic optimization process. A synchronization process is also described that ensures that a plurality of cameras and attached servers are properly coordinated. Head-mounted devices may also be used in conjunction with marker lights to provide information regarding players.

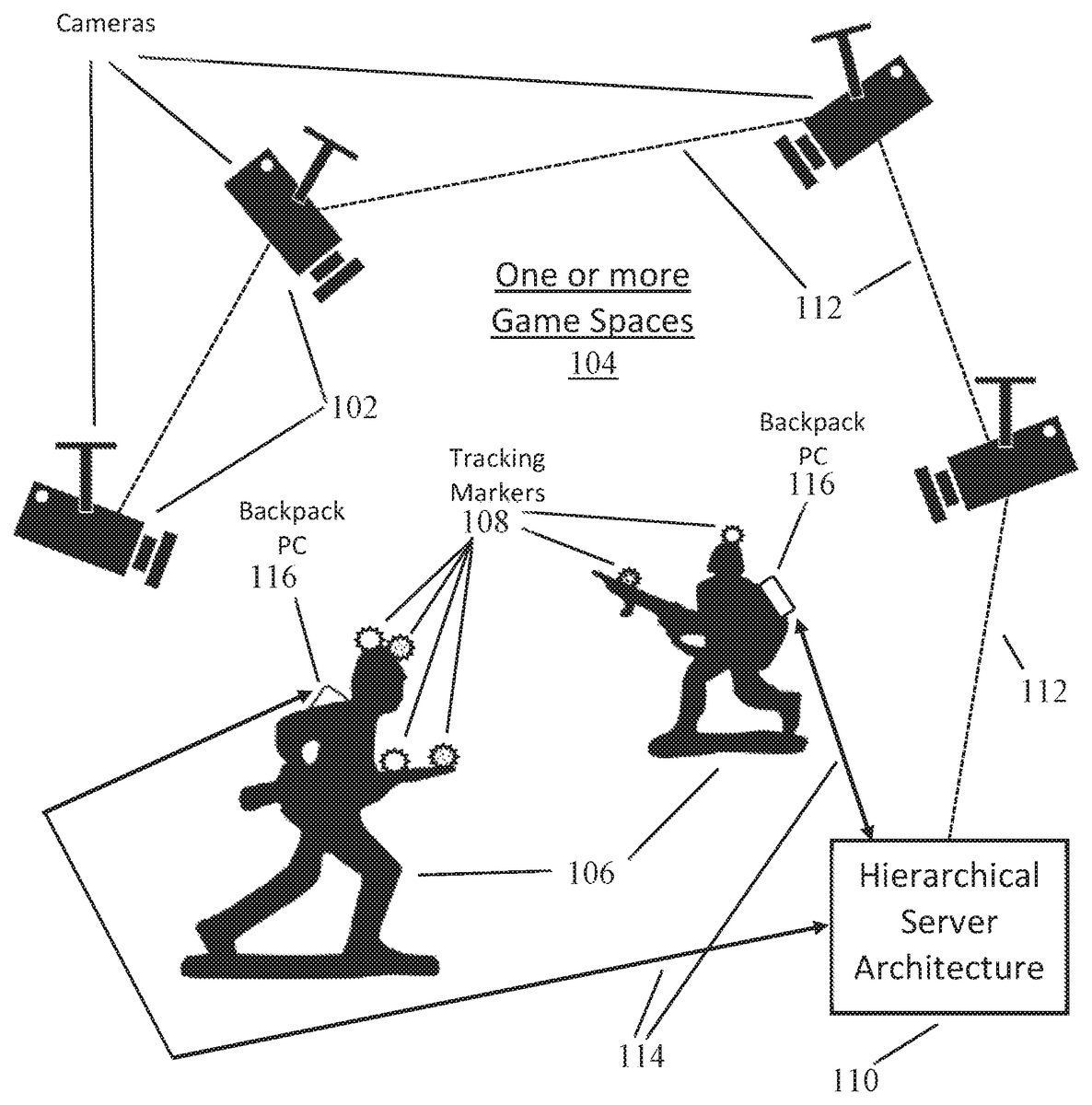

FIG. 1 depicts a system comprising a plurality of cameras which track objects such as players and controllers with tracking markers attached thereto, according to an exemplary embodiment. For instance, pictured in FIG. 1 is a plurality of color cameras 102 viewing one or more spaces 104 of a virtual reality. A plurality of spaces or other virtual reality environments in the same physical space are supported by a logical or virtual division of the physical space into a plurality of virtual spaces, where a single game may be operated in one of the plurality of virtual spaces or other virtual reality environments. Cameras 102 may be any optical detectors capable of detecting radiation from tracking markers 108, including infrared detectors, RGB cameras, hyperspectral sensors, and others.

The space/spaces being viewed by the camera, as described above and hereafter throughout the specification may include any kind of space used by a user/player to participate in the virtual reality experience, the virtual reality experience comprising a virtual reality game or any other form of virtual reality experience.

Typically, at least two cameras 102 are utilized to observe the one or more spaces 104 or other virtual reality environments; however, the number of cameras 102 is not limited thereto, and a single camera or more than two cameras may be utilized to observe the one or more spaces 104. Cameras 102 may be connected to a hierarchical server architecture 110 which analyzes images viewed by cameras 102 and communicates with players 106 and other objects such as game controllers, simulated weapons, etc., all of which include tracking markers 108 for observation by cameras 102. The hierarchical server architecture 110 will be described in more detail below, with reference to FIG. 3 and FIG. 4.

Connections 112 between cameras 102 and server architecture 110 may be either hardwired, such as Ethernet, or alternately wirelessly connected, such as, for example, Wi-Fi connectivity. However, the form of connection 112 is not limited thereto and other forms of establishing a network may be used. Communication between server architecture 110 and players 106 and other game objects for both control and sensing purposes may be performed through wireless connectivity 114, which may include Wi-Fi connectivity or other forms of wireless connectivity.

According to another embodiment, communication between the server architecture 110 and players 106 may be performed through a wired connection. For some embodiments of the invention, players 106 may carry a form of backpack PC 116 which may interface electronically with a form of head-mounted device and/or a controller or simulated weapon device carried by the player. Alternately, backpack PC 116 may communicate wirelessly and directly with the head-mounted device and/or the controller or simulated weapon device carried by the player; however, this form of communication is not limited thereto and the communication may be performed via a wired connection.

An example process for initial color assignment for the tracking marker lights 108 before play, and for dynamic color reassignment for the marker lights 108 during play, is shown in FIG. 2. In step S202, a first marker light 108 is set to white, then is viewed by one or more cameras 102, and is located in the tracking system depicted in FIG. 1. The first marker light 108 is then changed to a first color, for example red. Next, in step S204, a next marker light 108 is set to white and located by the tracking system in the same manner as in step S202. Subsequently, this next marker light 108 is changed to a different available color, for example green.

In other examples, the tracking marker lights 108 may be other light or radiation sources, including fluorescent light sources, infrared bulbs, or other types of light sources.

At this point, it is determined, per step S206, whether all assignable colors have been assigned to marker lights 108. If not, step S204 is executed again with a next marker light 108, which is changed to a next available color; this might be, for example, blue, since red and green have already been assigned. If all assignable colors have been assigned to marker lights 108, the process proceeds to step S208. Note that an exemplary list of assignable colors may comprise White (R,G,B), Red (R), Blue (B), Green (G), Yellow (R,G), Cyan (B,G), Magenta (R,B). This list of assignable colors is merely exemplary and color variations in-between the listed available colors are also possible.
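As a minimal illustrative sketch (not taken from the specification), the exemplary color list can be viewed as the on/off combinations of the R, G and B channels. The function name `assignable_colors` and the `reserve_white` option are assumptions introduced here; reserving white reflects the fact that white is used in the locate step, which the text does not state as a requirement.

```python
from itertools import product

# Names follow the exemplary list in the text; the mapping itself is illustrative.
CHANNEL_NAMES = {
    (1, 1, 1): "White",
    (1, 0, 0): "Red",
    (0, 0, 1): "Blue",
    (0, 1, 0): "Green",
    (1, 1, 0): "Yellow",
    (0, 1, 1): "Cyan",
    (1, 0, 1): "Magenta",
}


def assignable_colors(reserve_white=True):
    """Enumerate on/off RGB combinations, skipping black (all channels off).

    reserve_white is an assumption of this sketch: white is left out so it
    stays available for the "set to white and locate" step.
    """
    colors = []
    for rgb in product((0, 1), repeat=3):
        if rgb == (0, 0, 0):
            continue  # a marker with every channel off emits no light
        if reserve_white and rgb == (1, 1, 1):
            continue
        colors.append(CHANNEL_NAMES[rgb])
    return colors
```

Intermediate intensities (the "color variations in-between" mentioned above) would extend the per-channel values beyond 0 and 1.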

In step S208, the process starts assigning colors to new unassigned marker lights 108 where the color has been previously assigned to at least one other marker light 108. As such, the system considers the distance from the new unassigned marker light 108 to the previously assigned marker lights 108 in making a color choice. Per step S208, a next unassigned marker light 108 is set to white and located in the tracking system. Subsequently, its color is changed to be the same as that of whichever previously assigned tracking marker is farthest from this next unassigned marker light 108. In step S210, it is determined whether all tracking marker lights 108 have been assigned a color. If not, step S208 is repeated until all marker lights 108 have been assigned a color. Otherwise, the process proceeds to cover dynamic color reassignment during operation of a game.
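The initial assignment loop of steps S202 through S210 might be sketched as follows. This is an illustration under stated assumptions rather than the patented implementation: the function name, the dictionary-based inputs, and the use of already-known 3D positions (standing in for the "set to white and locate" step) are all hypothetical.

```python
import math


def assign_initial_colors(positions, colors):
    """Assign a color to each marker in the order the markers are located.

    positions: dict of marker_id -> (x, y, z), assumed to be the result of
               locating each marker while it is set to white.
    colors:    list of distinct assignable colors.
    Returns a dict of marker_id -> color.
    """
    assigned = {}
    for marker_id, pos in positions.items():
        unused = [c for c in colors if c not in assigned.values()]
        if unused:
            # S202-S206: hand out distinct colors while any remain.
            assigned[marker_id] = unused[0]
        else:
            # S208: reuse the color of the farthest already-assigned marker.
            farthest = max(
                assigned,
                key=lambda other: math.dist(pos, positions[other]),
            )
            assigned[marker_id] = assigned[farthest]
    return assigned
```

For example, with two colors and three markers at x = 0, 10 and 1 meters, the third marker takes the color of the marker at x = 10, which is the farther of the two already-assigned markers.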

Per step S212, whenever during a game a tracking marker 108 is determined to have moved within a specified minimum distance of another tracking marker 108 having the same light color, the color of one of the two tracking markers is changed to another color such that distances between markers having the same color are maximized. The specified distance may vary based on the size of the game arena. As such, one of the tasks of the server architecture 110 is to keep track of all distances between tracking markers 108 having the same color, and to compare those distances with the specified minimum distance.
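One way to picture the dynamic reassignment of step S212 is the sketch below. It is only an assumption about how "maximized" could be interpreted: for the marker being recolored, it picks the palette color whose nearest same-colored marker is farthest away. The function and parameter names are hypothetical.

```python
import math


def reassign_if_too_close(positions, colors_by_marker, palette, min_distance):
    """Return an updated copy of colors_by_marker after checking same-color pairs.

    positions:        dict of marker_id -> (x, y, z)
    colors_by_marker: dict of marker_id -> current color
    palette:          list of assignable colors
    min_distance:     the specified minimum distance for same-colored markers
    """
    updated = dict(colors_by_marker)
    ids = sorted(updated)
    for i, a in enumerate(ids):
        for b in ids[i + 1:]:
            if updated[a] != updated[b]:
                continue
            if math.dist(positions[a], positions[b]) >= min_distance:
                continue

            def clearance(color):
                # Distance from marker b to the nearest other marker of this color.
                others = [m for m in ids if m != b and updated[m] == color]
                if not others:
                    return math.inf  # color currently unused
                return min(math.dist(positions[b], positions[m]) for m in others)

            # Recolor marker b with the color that gives it the most clearance.
            updated[b] = max(palette, key=clearance)
    return updated
```

A server would call such a routine each time marker positions are updated; checking every same-colored pair is quadratic in the number of markers, which is acceptable for a sketch but may need spatial indexing in a large arena.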

FIG. 3 depicts a system comprising a plurality of cameras, players, and controllers connected to a hierarchical server architecture, according to an exemplary embodiment. Here, one bank of color cameras 302 connects with slave tracking server 306, while another bank of color cameras 304 connects with slave tracking server 308. Positions and movements of game objects tracked by slave tracking servers 306 and 308 are consolidated in master server 310, which may optionally have one or more local cameras 312 connected to it. Note that calibration of tracking marker 108 positions may be performed locally on the server(s) observing that tracking marker. The number of slave tracking servers and master servers depicted in FIG. 3 is merely exemplary and not limited thereto. Furthermore, the functionality of the slave tracking server and the master tracking server may be combined into a single server, according to an exemplary embodiment.

When a slave tracking server such as 306 or 308 receives an image, it immediately processes the image to identify any tracking markers in the optical data of the image. The slave tracking server 308 immediately sends the processed data to the master server 310 and performs no further processing on that particular image, according to an exemplary embodiment. This processing may include identifying a pixel row and column location of the tracking marker 108, along with a time stamp and a camera identification.
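The per-image work of a slave tracking server could be sketched as below. The record fields mirror the items named in the text (pixel row, pixel column, time stamp, camera identification); the class name, the nested-list image format, and the exact-match color test are simplifying assumptions of this sketch, since a real detector would threshold a color range.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class MarkerDetection:
    camera_id: str    # which camera produced the image
    timestamp: float  # when the image was captured
    row: float        # pixel row of the marker centroid
    col: float        # pixel column of the marker centroid
    color: tuple      # (r, g, b) value that was matched


def detect_marker(image, color, camera_id, timestamp):
    """Return the centroid of all pixels equal to `color`, or None if absent.

    image: list of rows, each row a list of (r, g, b) tuples.
    """
    hits = [
        (r, c)
        for r, line in enumerate(image)
        for c, pixel in enumerate(line)
        if pixel == color
    ]
    if not hits:
        return None
    row = sum(r for r, _ in hits) / len(hits)
    col = sum(c for _, c in hits) / len(hits)
    return MarkerDetection(camera_id, timestamp, row, col, color)
```

The returned record is what would be forwarded to the master server, after which the slave server does no further work on that image.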

Master server 310 interfaces with game server 314, which communicates wirelessly 316 with players 106 and other devices 318, which may include, for example, controller devices including simulated weapons, according to one exemplary embodiment. The communication may also be conducted via a wired connection, according to another exemplary embodiment.

The master server 310 collects all the processed data from both local cameras 312 and slave servers 306 and 308. It continues to store all this information until it has a complete set of data from each camera in the system or until it receives repeated data from the same camera. Once the data set is considered complete, it performs the next stage of processing on each individual camera image to create a list of all the intersections of the data points from the cameras where the tracking marker is a match. Positions of these intersection points are then averaged out to create the final processed position for each tracking marker. Where not enough information is available to create an accurate intersection or the information conflicts within a threshold, the information may be optionally discarded.
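The buffering rule described above (hold data until every camera has reported, or until one camera reports twice) might look like the following sketch. The class name `FrameCollector` and its interface are hypothetical and are introduced only for illustration.

```python
class FrameCollector:
    """Buffer per-camera detections until a data set is considered complete."""

    def __init__(self, camera_ids):
        self.expected = set(camera_ids)
        self.pending = {}

    def add(self, camera_id, detections):
        """Store one camera's data; return a completed set (dict) or None."""
        completed = None
        if camera_id in self.pending:
            # Repeated data from the same camera: close the current set as-is
            # and start a new one with the fresh data.
            completed = self.pending
            self.pending = {}
        self.pending[camera_id] = detections
        if set(self.pending) == self.expected:
            # Every camera in the system has reported.
            completed = self.pending
            self.pending = {}
        return completed
```

A completed set is then passed to the intersection stage; a set closed early by a repeat simply contains fewer cameras, which may leave some markers without enough data for an intersection.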

FIG. 4 depicts a flowchart illustrating this process for synchronizing a plurality of cameras with consistent and accurate locations of objects, according to an exemplary embodiment. In step S402, tracking markers in the space are located using cameras 302 and 304 communicating with slave servers 306, 308. In step S404, positions of tracking markers are communicated from the various slave servers 306, 308 to master server 310. In step S406, a process operating on the master server creates a list of all intersection points where a position of a first marker seen by one camera matches a position of a second marker seen by another camera. Then, in step S408, for each intersection point in the list of intersection points, the positions of the first and second tracking markers are averaged to create a processed position for that intersection point, which represents a position of a composite tracking marker corresponding to both the first and second tracking markers that will be used thenceforth in operation of the game.
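Steps S406 and S408 can be illustrated with a small geometric sketch. It assumes each camera's observation has already been turned into a 3D ray (origin plus direction) using that camera's calibration; the specification does not spell out this representation. The sketch finds the closest points on two rays, discards the pair if they miss each other by more than a hypothetical `max_gap` threshold, and otherwise averages the two points.

```python
import math


def _sub(a, b):
    return tuple(x - y for x, y in zip(a, b))


def _dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def _along(origin, direction, t):
    return tuple(p + t * q for p, q in zip(origin, direction))


def intersect_rays(o1, d1, o2, d2, max_gap=0.05):
    """Average the closest points of two rays, or return None.

    o1, o2: ray origins (camera positions); d1, d2: ray directions.
    max_gap: largest allowed miss distance (same units as the positions).
    """
    w = _sub(o1, o2)
    a, b, c = _dot(d1, d1), _dot(d1, d2), _dot(d2, d2)
    d, e = _dot(d1, w), _dot(d2, w)
    denom = a * c - b * b
    if abs(denom) < 1e-12:
        return None  # rays are (nearly) parallel: no usable intersection
    t1 = (b * e - c * d) / denom
    t2 = (a * e - b * d) / denom
    p1, p2 = _along(o1, d1, t1), _along(o2, d2, t2)
    if math.dist(p1, p2) > max_gap:
        return None  # observations conflict beyond the threshold: discard
    return tuple((x + y) / 2 for x, y in zip(p1, p2))
```

With more than two cameras, the same routine could be applied to each matching pair and the resulting points averaged again to give the final processed position.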

The master server 310 and the slave servers 306, 308 are exemplary embodiments forming part of the hierarchical server architecture, where the master server 310 may have unidirectional control over the slave servers 306, 308. However, the master and the slave servers may be incorporated into a single server which performs the below-defined functions of both the master server 310 and the slave servers 306, 308, according to an exemplary embodiment.

Before a game begins, it is important to calibrate the system such that cameras observing a space or other virtual reality environment are accurately coordinated with respect to positions of game objects. FIG. 5 depicts a system performing an initial calibration using a calibration object 502.

When the system is initially set up, all the cameras are plugged in and nothing is configured for the space. It is important that the tracking servers are programmed with the position and orientation of the cameras in 3D space.

To do this, one exemplary calibration configuration according to the invention involves laying out a 1 meter grid on the flat ground. This could be achieved using masking tape or other available means. This grid is visible to the cameras. The grid serves as a guide for where the virtual space will be defined, and a center point is chosen in the room to be the center in the virtual space (x:0, y:0, z:0).

Then, a calibration device 502 is placed on the 1-meter grid. One exemplary configuration for the calibration device is an L-shape (90 degree angle) with arms each measuring 1 meter long (dimension “d” 504), with a colored ball or calibration marker light 506 at the end of each arm and also at the center. The length mentioned above is merely exemplary and a different shape and size of calibration device with a different number of marker lights 506 may be used.

These colored balls or calibration marker lights 506 may be powered, and set to fixed colors. An exemplary configuration would include Green in the center, Blue on one arm and Red on the other; however, different colors may be used. By placing the calibration device in the grid at the center of the space, with the blue arm oriented in a specific direction, the calibration software can automatically detect the location of the cameras which can see all 3 colored markers on the calibration device.

The calibration process allows the operator to select a camera, and perform the following process:

1) Detect that the 3 colored markers are in the next image to come from the camera, and record their positions in the image.

2) Convert the positions in the image to vectors from a zero origin, pointing to the recorded position.

3) The vectors are analyzed to find the ‘best fit’ which would allow the markers to show up in that position.

4) When the right fit is found, the detected orientation of the calibration device is converted into a position and orientation for the camera.

5) The position and orientation is associated with the camera.
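Steps 2 through 4 above can be sketched under strong simplifying assumptions that are not in the specification: an ideal pinhole camera with a known focal length in pixels, and a "best fit" chosen by scoring a supplied list of candidate camera poses rather than by a full optimization. All function names and parameters below are hypothetical.

```python
import math


def pixel_to_vector(row, col, focal_px, center_row, center_col):
    """Unit vector from the camera's zero origin through pixel (row, col).

    Camera frame assumed here: x to the right, y down, z forward.
    """
    x, y, z = col - center_col, row - center_row, focal_px
    n = math.sqrt(x * x + y * y + z * z)
    return (x / n, y / n, z / n)


def predicted_vector(marker, cam_pos, rot):
    """Direction in which a camera at cam_pos would see a world-space marker.

    rot: 3x3 rows mapping camera-frame vectors to the world frame; its
    transpose is applied to bring the marker offset into the camera frame.
    """
    rel = tuple(m - p for m, p in zip(marker, cam_pos))
    cam = tuple(sum(rot[i][j] * rel[i] for i in range(3)) for j in range(3))
    n = math.sqrt(sum(v * v for v in cam))
    return tuple(v / n for v in cam)


def fit_error(observed, markers, cam_pos, rot):
    """Sum of (1 - cosine) between observed and predicted marker directions."""
    total = 0.0
    for obs, marker in zip(observed, markers):
        pred = predicted_vector(marker, cam_pos, rot)
        total += 1.0 - sum(o * p for o, p in zip(obs, pred))
    return total


def best_fit(observed, markers, candidates):
    """Pick the (cam_pos, rot) candidate whose predictions match best."""
    return min(candidates, key=lambda pose: fit_error(observed, markers, *pose))
```

Here `markers` would hold the known world positions of the three calibration lights (for the exemplary L-shape, the center and the two arm ends 1 meter away), and the winning candidate supplies the position and orientation that is then associated with the camera.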

This initial calibration process is further described in the flowchart of FIG. 6, where step S602 describes placing a calibration object in a space so it is visible to one or more color cameras, the calibration object comprising at least three colored calibration marker lights 506 mounted in a specified orientation on the calibration object, and wherein the calibration object is placed in the space in a specified orientation relative to the one or more cameras. Per step S604, for each camera, a position of each of the calibration marker lights is determined in a captured image, and each of these positions is converted to vectors relative to a zero origin. Then, per step S606, the vectors are analyzed to determine a best fit for the position of each calibration marker light, and the detected orientation of the calibration object is converted into a position and orientation respective of the camera for use thenceforth in operation of the game.

The detected calibration for the camera can be validated by the operator as the system may also draw a dotted line over the video feed to show where it believes the grid on the floor should be. In the instance where the calibration device is not available, the cameras may be configured manually using the dotted line overlay. All camera calibration data is then stored on the tracking system server that the cameras are connected to (be it a slave server, master server or a combination of the two).

FIG. 7 depicts a block diagram of a gaming system 700, according to another exemplary embodiment. The system 700 includes cameras 702 and 704 and VR server 724. The cameras 702 and 704 may be capable of accessing the VR server 724 either directly or indirectly over a network 714. The cameras 702 and 704 may access the VR server 724 over the network 714 using wireless or wired connections supporting one or more point-to-point links, shared local area networks (LAN), wide area networks (WAN), or other access technologies. These cameras 702 and 704 may be transmitting video, audio or other kinds of data to the VR server 724.

According to the exemplary embodiment depicted in FIG. 7, the VR system 700 is a type of system that provides tracking of marker lights on players or their controllers or other game objects using cameras 702 and 704, storage devices 728, 730 and multiple processors 718. However, it should be appreciated that alternate embodiments of the VR system 700 may use a single processor and storage device and the depicted embodiment is merely exemplary. Furthermore, although FIG. 7 depicts a single server 724, the VR system may comprise multiple servers splitting up the functionalities which are performed by the depicted server 724, as described in the exemplary embodiment of FIG. 1.

In the exemplary embodiment depicted in FIG. 7, the VR server 724 may receive, using the cameras 702 and 704, the location information and other action/state information regarding a user holding a controller, colors assigned to the tracking lights on the controller or other game objects, etc., in a space. The VR server 724 may be realized as a software program stored in a memory and executing on a central processing unit (CPU).

The VR server 724 may use video images from the tracking cameras 702, 704. In some embodiments, the VR server 724 receives video images over video cables connected to the cameras; however, the images may also be transferred wirelessly. Possible video cable types include analog formats such as composite video, S-Video and VGA, and digital formats such as HDMI and DVI; however, these are merely exemplary embodiments and the possibilities are not limited thereto. In another embodiment, the slave server receives video images over a wireless communication connection.

The VR server 724 may follow the procedures described in FIG. 2, FIG. 4 and FIG. 6 for assignment and reassignment of colors to the tracking marker lights, and synchronization and calibration of the cameras 702, 704.

The present disclosure emulates a real-world experience for players, and as such the experience players have is quite real, just as pilots in flight simulators experience all the aspects of flying a real airplane. The disclosure is deeply intertwined with computer and networking technology without which it would not be possible. In fact, the functions described herein have an extremely time-sensitive nature to their operation in order to achieve a true virtual reality experience, and without an intimate integration with the described hardware would not function properly, if at all.

The dynamic reassignment of colors during a game based on changing distances between light markers having the same color is a function grounded in reality. The use of a physical calibration device to calibrate distances for each camera as well as the process for synchronizing positions among a plurality of cameras, are all concrete functionalities. The embodiments disclosed herein can be implemented as hardware, firmware, software, or any combination thereof. Moreover, the software is preferably implemented as an application program tangibly embodied on a program storage unit or computer readable medium. The application program may be uploaded to, and executed by, a machine comprising any suitable architecture.

Preferably, the servers are implemented on a computer platform having hardware such as one or more central processing units (“CPUs”), one or more memories, and one or more input/output interfaces. The computer platform may also include an operating system and micro-instruction code. The various processes and functions described herein may be either part of the micro-instruction code or part of the application program, or any combination thereof, which may be executed by a CPU, whether or not such computer or processor is explicitly shown. In addition, various other peripheral units may be connected to the computer platform such as an additional data storage unit and a printing unit.

Although a number of possible implementations have been mentioned, these are presented merely for the sake of explanation and teaching, and are not limitative. Moreover, an implementation of an apparatus that falls within the inventive concept does not necessarily achieve any of the possible benefits outlined above: such benefits are dependent on the specific use case and specific implementation, and the possible benefits mentioned above are simply examples.

Although the concepts have been described above with respect to the various embodiments, it is noted that there can be a variety of permutations and modifications of the described features by those who are familiar with this field, only some of which have been presented above, without departing from the technical ideas and scope of the features, which is defined by the appended claims.

Further, while this specification contains many features, the features should not be construed as limitations on the scope of the disclosure or the appended claims. Certain features described in the context of separate embodiments can also be implemented in combination. Conversely, various features described in the context of a single embodiment can also be implemented in multiple embodiments separately or in any suitable sub-combination.

Although the drawings describe operations in a specific order and/or show specific arrangements of components, and are described in the context of access segments of data centers, one should not interpret that such specific order and/or arrangements are limited, or that all the operations performed and the components disclosed are needed to obtain a desired result. There are numerous hardware and software devices that can be configured to forward data units in the manner described in the present disclosure with respect to various embodiments. Accordingly, other implementations are within the scope of the following claims.

Claims

- A system for operating a virtual reality environment including at least one space, the system comprising: at least one color camera configured to view the at least one space; at least one object, wherein the at least one object comprises at least one of a player, a controller and a device related to the virtual reality; at least one colored light attached to each of the at least one object configured to be used as a tracking marker; at least one server configured to track movements of the at least one object during the virtual reality; a memory containing machine readable medium comprising machine executable code having stored thereon instructions for tracking the movements of the at least one object; and a control system comprising a processor or processors coupled to the memory, the control system configured to execute the machine executable code to cause the control system to: assign a color choice to each of the at least one colored light, wherein the assignment of the first color to the first colored light from among the at least one colored light is based on a spatial proximity of the first colored light to other colored lights in the at least one space.

- The system of claim 1, wherein, if a total number of distinct assignable colors is greater than or equal to a total number of the at least one colored light, the control system is further configured to assign each of the at least one colored light a distinct color from among the total number of distinct assignable colors.

- The system of claim 1, wherein, if a total number of distinct assignable colors is smaller than a total number of the at least one colored light, the control system is further configured to: assign a distinct color from among the total number of distinct assignable colors to a first set of the at least one colored light, and assign a color to each colored light from among a second set of the at least one colored light based on spatial proximity of each colored light from among the second set of the at least one colored light to remaining colored lights with assigned colors.

- The system of claim 1, wherein the color choice for each of the at least one colored lights is selected from the group consisting of Red, Green, and Blue and combinations thereof.

- The system of claim 4, wherein the color choice for each of the at least one colored lights further comprises half intensity secondary and tertiary colors.

- The system of claim 1, wherein the specified distance is 5 meters.

- A method for operating a virtual reality environment including at least one space, at least one color camera, at least one object, at least one colored light attached to each of the at least one object, at least one processor, and at least one server, the method comprising: viewing, using at least one of the at least one color camera, the at least one space; assigning, using at least one of said at least one processor, a first color to a first colored light from among the at least one colored light, within the at least one space based on a spatial proximity of the first colored light to other colored lights in the at least one space; and tracking, using at least one of said at least one server, movements of the at least one object; wherein the at least one object comprises at least one of a player, a controller and a device related to the virtual reality.

- The method of claim 7, wherein, if a total number of distinct assignable colors is greater than or equal to a total number of the at least one colored light, the assigning further comprises assigning each of the at least one colored light a distinct color from among the total number of distinct assignable colors.

- The method of claim 7, wherein, if a total number of distinct assignable colors is smaller than a total number of the at least one colored light, the assigning further comprises: assigning a distinct color from among the total number of distinct assignable colors to a first set of the at least one colored light, and assigning a color to each colored light from among a second set of the at least one colored light based on spatial proximity of each colored light from among the second set of the at least one colored light to remaining colored lights with assigned colors.

- The method of claim 7, wherein the color choice for each of the at least one colored lights is selected from the group consisting of Red, Green, and Blue and combinations thereof.

- The method of claim 10, wherein the color choice for each of the at least one colored lights further comprises half intensity secondary and tertiary colors.

- The method of claim 7, wherein the specified distance is 5 meters.

- A system for operating a virtual reality environment including at least one space, the system comprising: at least one color camera configured to view the at least one space; at least one object, wherein the at least one object comprises at least one of a player, a controller and a device related to the virtual reality; at least one colored light attached to each of the at least one object configured to be used as at least one tracking marker; a communication link; at least one server, comprising a hierarchy of at least one slave server and a master server, configured to track movements of the at least one object during the virtual reality; a memory containing machine readable medium comprising machine executable code having stored thereon instructions for tracking the movements of the at least one object; and a control system comprising a processor or processors coupled to the memory, the control system configured to execute the machine executable code to cause the control system to: locate, using the at least one slave servers, positions of at least a first and second tracking marker within view of the at least one color camera; transmit, via the communication link, a position of the at least one tracking marker to the master server; based on the positions of at least the first and second tracking markers, creating a composite tracking marker corresponding to at least the first and second tracking markers.

- A method for operating a virtual reality environment including at least one space, at least one color camera, a communication link, at least one object, at least one colored light attached to each of the at least one object configured to be used as at least one tracking marker, at least one processor, and at least one server comprising a hierarchy of at least one slave server and a master server, the method comprising: viewing, using at least one of the at least one color camera, the at least one space; tracking, using at least one of said at least one server, movements of the at least one object; locating, using the at least one slave servers, the position of the at least one tracking marker within view of the at least one color camera; transmitting, via the communication link, position of the at least one tracking marker to the master server; and determining, based on the position of the at least one tracking marker as viewed by a plurality of color cameras, an average position for the at least one tracking marker; wherein the at least one object comprises at least one of a player, a controller and a device related to the virtual reality.

- A system for operating a virtual reality environment including at least one space, the system comprising: at least one color camera configured to view the at least one space; at least one object, wherein the at least one object comprises at least one of a player, a controller and a device related to the virtual reality; at least one colored light attached to each of the at least one object configured to be used as at least one tracking marker; at least one server configured to track movements of the at least one object during the virtual reality; a memory containing machine readable medium comprising machine executable code having stored thereon instructions for tracking the movements of the at least one object; and a control system comprising a processor or processors coupled to the memory, the control system configured to execute the machine executable code to cause the control system to: detect a calibration object placed in a space visible to each of the at least one color camera, wherein the calibration object comprises a plurality of colored calibration marker lights, and wherein the calibration object is placed in the space in a specified orientation relative to the at least one color camera; determine, in a captured image at each of the at least one color camera, a position of each of the calibration marker lights; and determine best fit positions and orientations of the calibration marker lights respective to each of the at least one color camera for use thenceforth in operation of the virtual reality.

- The system of claim 15, wherein the calibration object is “L” shaped and comprises three colored calibration marker lights.

- The system of claim 16, wherein each leg of the “L” shape of the calibration object is 1 meter in length.

- The system of claim 16, wherein the three colored calibration marker lights are colored green, blue, and red.

- A method for operating a virtual reality environment including at least one space, at least one color camera, at least one object, at least one colored light attached to each of the at least one object, at least one processor, and at least one server, the method comprising: viewing, using at least one of the at least one color camera, the at least one space; tracking, using at least one of said at least one server, movements of the at least one object; detecting, using at least one of said at least one processor, a calibration object placed in a space visible to each of the at least one color camera; determining, using at least one of said at least one processor, in a captured image at each of the at least one color camera, a position of each of two or more calibration marker lights affixed to the calibration object; and converting, using at least one of said at least one processor, a detected orientation of the calibration object into a position and orientation respective to each of the at least one color camera for use thenceforth in operation of the virtual reality, wherein the at least one object comprises at least one of a player, a controller and a device related to the virtual reality.

- The method of claim 19, wherein the calibration object is “L” shaped and comprises three colored calibration marker lights.

- The method of claim 20, wherein each leg of the “L” shape of the calibration object is 1 meter in length.

- The method of claim 20, wherein the three colored calibration marker lights are colored green, blue, and red.
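
For illustration only, the averaging step recited in the method claim above (slave servers locate a tracking marker in each camera's view, and an average position is determined from the views of a plurality of color cameras) might be sketched as follows. This is a minimal sketch under stated assumptions, not the patented implementation: the function name `average_marker_positions`, the `(camera_id, marker_id, position)` report format, and the use of an unweighted arithmetic mean over 3D coordinates are all hypothetical choices that the claims do not specify.

```python
from collections import defaultdict
from statistics import fmean


def average_marker_positions(reports):
    """Average per-camera position estimates for each tracking marker.

    `reports` is an iterable of (camera_id, marker_id, (x, y, z)) tuples,
    a hypothetical format standing in for what slave servers might send
    to the master server over the communication link.
    """
    by_marker = defaultdict(list)
    for _camera_id, marker_id, position in reports:
        by_marker[marker_id].append(position)

    # zip(*positions) regroups the tuples by axis, so each axis is
    # averaged independently across all cameras that saw the marker.
    return {
        marker_id: tuple(fmean(axis) for axis in zip(*positions))
        for marker_id, positions in by_marker.items()
    }


# Example: two cameras see the "red" marker, one camera sees "blue".
reports = [
    ("cam-1", "red", (1.0, 2.0, 0.5)),
    ("cam-2", "red", (1.2, 2.2, 0.7)),
    ("cam-3", "blue", (4.0, 0.0, 1.0)),
]
averaged = average_marker_positions(reports)
```

A marker seen by only one camera simply keeps that camera's estimate. A real system would likely also need to handle outliers and per-camera confidence, which this sketch leaves out.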
