U.S. Pat. No. 10,380,798

PROJECTILE OBJECT RENDERING FOR A VIRTUAL REALITY SPECTATOR

Assignee: Sony Interactive Entertainment LLC

Issue Date: September 29, 2017

Abstract

A method is provided, including the following operations: receiving at least one video feed from at least one camera disposed in a venue; processing the at least one video feed to generate a video stream that provides a view of the venue; and transmitting the video stream over a network to a client device, for rendering to a head-mounted display; wherein processing the at least one video feed includes analyzing the at least one video feed to identify a projectile object that is launched in the venue, and wherein, in the video stream, the projectile object is replaced with a virtual object, the virtual object being animated in the video stream so as to exhibit a path of travel that is towards the head-mounted display as the video stream is rendered to the head-mounted display.

Description

DETAILED DESCRIPTION

The following implementations of the present disclosure provide devices, methods, and systems relating to projectile object rendering for a virtual reality spectator. It will be obvious, however, to one skilled in the art, that the present disclosure may be practiced without some or all of the specific details presently described. In other instances, well-known process operations have not been described in detail in order not to unnecessarily obscure the present disclosure.

In various implementations, the methods, systems, image capture objects, sensors and associated interface objects (e.g., gloves, controllers, peripheral devices, etc.) are configured to process data that is configured to be rendered in substantial real-time on a display screen. Broadly speaking, implementations are described with reference to the display being of a head-mounted display (HMD). However, in other implementations, the display may be of a second screen, a display of a portable device, a computer display, a display panel, a display of one or more remotely connected users (e.g., who may be viewing content or sharing in an interactive experience), or the like.

FIG. 1A illustrates a view of an electronic sports (e-sports) venue, in accordance with implementations of the disclosure. E-sports generally refers to competitive or professional gaming that is spectated by various spectators or users, especially multi-player video games. As the popularity of e-sports has increased in recent years, so has the interest in live spectating of e-sports events at physical venues, many of which are capable of seating thousands of people. A suitable venue can be any location capable of hosting an e-sports event for live spectating by spectators, including by way of example without limitation, arenas, stadiums, theaters, convention centers, gymnasiums, community centers, etc.

However, the hosting and production of an e-sports event such as a tournament at a discrete physical venue means that not all people who wish to spectate in person will be able to do so. Therefore, it is desirable to provide a live experience to a remote spectator so that the remote spectator can experience the e-sports event as if he/she were present in person at the venue where the e-sports event occurs.

With continued reference to FIG. 1A, a view of a venue 100 that is hosting an e-sports event is shown. A typical e-sports event is a tournament wherein teams of players compete against each other in a multi-player video game. In the illustrated implementation, a first team consists of players 102a, 102b, 102c, and 102d, and a second team consists of players 104a, 104b, 104c, and 104d. The first and second teams are situated on a stage 106, along with an announcer/host 110. The first team and second team are engaged in competitive gameplay of a multi-player video game against each other at the venue 100, and spectators 113 are present to view the event.

Large displays 108a, 108b, and 108c provide views of the gameplay to the spectators 113. It will be appreciated that the displays 108a, 108b, and 108c may be any type of display known in the art that is capable of presenting gameplay content to spectators, including by way of example without limitation, LED displays, LCD displays, DLP, etc. In some implementations, the displays 108a, 108b, and 108c are display screens on which gameplay video/images are projected by one or more projectors (not shown). It should be appreciated that the displays 108a, 108b, and 108c can be configured to present any of various kinds of content, including by way of example without limitation, gameplay content, player views of the video game, game maps, a spectator view of the video game, views of commentators, player/team statistics and scores, advertising, etc.

Additionally, commentators 112a and 112b provide commentary about the gameplay, such as describing the gameplay in real-time as it occurs, providing analysis of the gameplay, highlighting certain activity, etc.

FIG. 1B is a conceptual overhead view of the venue 100, in accordance with implementations of the disclosure. As previously described, a first team and a second team of players are situated on the stage and engaged in gameplay of the multi-player video game. A number of seats 114 are conceptually shown, which are available for spectators to occupy when attending and viewing the e-sports event in person. As noted, there are large displays 108a, 108b, and 108c which provide views of the gameplay and other content for the spectators 113 to view. Additionally, there are a number of speakers 118, which may be distributed throughout the venue to provide audio for listening by the spectators, including audio associated with or related to any content rendered on the displays 108a, 108b, and 108c.

Furthermore, there are any number of cameras 116 distributed throughout the venue 100, which are configured to capture video of the e-sports event for processing, distribution, streaming, and/or viewing by spectators, both live in-person and/or remote, in accordance with implementations of the disclosure. It will be appreciated that some of the cameras 116 may have fixed locations and/or orientations, while some of the cameras 116 may have variable locations and/or orientations and may be capable of being moved to new locations and/or re-oriented to new directions.

In accordance with implementations of the disclosure, a “live” viewing experience of the e-sports event can be provided to a virtual reality spectator 120. That is, the virtual reality spectator 120 is provided with a view through a head-mounted display (HMD) (or virtual reality headset) that simulates the experience of attending the e-sports event in person and occupying a particular seat 122 at the venue 100. Broadly speaking, the three-dimensional (3D) location of the virtual reality spectator's seat 122 can be determined, and video feeds from certain ones of the various cameras 116 can be stitched together to provide a virtual reality view of the venue 100 from the perspective of the seat 122.

Furthermore, though not specifically shown, each camera may include at least one microphone for capturing audio from the venue 100. Also, there may be additional microphones distributed throughout the venue 100. Audio from at least some of these microphones can also be processed based on the 3D location of the virtual reality spectator's seat 122, so as to provide audio that simulates that which would be heard from the perspective of one occupying the seat 122.
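One way such location-based audio processing could be approached is to weight each microphone by its distance from the seat. The following is a minimal sketch only; the inverse-distance falloff, the `min_dist` clamp, the coordinate values, and the name `mic_gains` are illustrative assumptions and are not taken from the disclosure.

```python
import math

def mic_gains(seat, mics, min_dist=1.0):
    """Return normalized per-microphone gains for a listener at `seat`.

    Assumption: gain falls off as 1/distance, with the distance clamped
    to `min_dist` so a microphone at the seat cannot dominate unboundedly.
    Positions are (x, y, z) tuples in arbitrary but consistent units.
    """
    raw = []
    for mic in mics:
        distance = max(math.dist(seat, mic), min_dist)
        raw.append(1.0 / distance)
    total = sum(raw)
    # Normalize so the gains sum to 1 and the mix keeps a stable level.
    return [gain / total for gain in raw]

# Hypothetical coordinates for the seat 122 and two venue microphones.
seat_122 = (10.0, 4.0, 1.2)
microphones = [(10.0, 5.0, 1.2), (0.0, 4.0, 3.0)]
gains = mic_gains(seat_122, microphones)
```

The nearer microphone receives the larger weight, so the mixed audio leans towards what would be heard near the seat.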

FIG. 1C conceptually illustrates a portion 124 of seats from the venue 100 in which the live e-sports event takes place, in accordance with implementations of the disclosure. As shown, the virtual reality spectator 120 is presented with a view through the HMD 150 that simulates occupying the seat 122 in the venue 100. In some implementations, the view of the e-sports event that is provided to the virtual reality spectator 120 is provided from a streaming service 142 over a network 144. That is, the streaming service 142 includes one or more server computers that are configured to stream video for rendering on the HMD 150, wherein the rendered video provides the view of the e-sports event to the virtual reality spectator 120. Though not specifically shown in the illustrated implementation, it should be appreciated that the streaming service 142 may first transmit the video in the form of data over the network 144 to a computing device that is local to the virtual reality spectator 120, wherein the computing device may process the data for rendering to the HMD 150.

It should be appreciated that the view provided is responsive in real-time to the movements of the virtual reality spectator 120, e.g., so that if the virtual reality spectator 120 turns to the left, then the virtual reality spectator 120 sees (through the HMD 150) the view to the left of the seat 122, and if the virtual reality spectator 120 turns to the right, then the virtual reality spectator 120 sees (through the HMD 150) the view to the right of the seat 122, and so forth. In some implementations, the virtual reality spectator 120 is provided with potential views of the e-sports venue 100 in all directions, including a 360-degree horizontal field of view. In some implementations, the virtual reality spectator 120 is provided with potential views of the e-sports venue 100 in a subset of all directions, such as a horizontal field of view of approximately 270 degrees in some implementations, or 180 degrees in some implementations. In some implementations, the provided field of view may exclude a region that is directly overhead or directly below. In some implementations, a region that is excluded from the field of view of the e-sports venue may be provided with other content, e.g. advertising, splash screen, logo content, game-related images or video, etc.
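The choice between venue video and substitute content for a limited horizontal field of view can be illustrated with a small check on head yaw. This is a sketch under stated assumptions: the field of view is taken to be centered on the seat's forward direction, yaw is measured in degrees relative to that direction, and the function name `in_field_of_view` is hypothetical.

```python
def in_field_of_view(yaw_deg, fov_deg):
    """Return True if a head yaw falls inside the provided horizontal view.

    Assumption: the provided field of view is centered on the forward
    direction of the seat (yaw 0), which the disclosure does not specify.
    """
    # Wrap the yaw into the interval [-180, 180).
    yaw = (yaw_deg + 180.0) % 360.0 - 180.0
    return abs(yaw) <= fov_deg / 2.0

# With a 270-degree view, looking 100 degrees to one side shows the venue,
# while looking 170 degrees around would show substitute content instead.
show_venue = in_field_of_view(100.0, 270.0)
show_filler = not in_field_of_view(170.0, 270.0)
```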

In some implementations, the virtual reality spectator 120 is able to select the seat through an interface, so that they may view the e-sports event from the perspective of their choosing. In some implementations, the seats that are available for selection are seats that are not physically occupied by spectators who are present in-person at the e-sports event. In other implementations, both seats that are unoccupied and seats that are occupied are selectable for virtual reality spectating.

In some implementations, the streaming service 142 may automatically assign a virtual reality spectator to a particular seat. In some implementations, this may be the best available seat (e.g. according to a predefined order or ranking of the available seats).
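Assignment of the best available seat according to a predefined ranking can be sketched as a single pass over the ranked list. The seat identifiers and the function name `best_available_seat` below are hypothetical and serve only to illustrate the idea.

```python
def best_available_seat(ranked_seats, occupied):
    """Return the highest-ranked seat that is not occupied, or None.

    `ranked_seats` is ordered from best to worst (the predefined ranking);
    `occupied` is the set of seats that are already taken.
    """
    for seat in ranked_seats:
        if seat not in occupied:
            return seat
    return None

# Hypothetical seat labels, ordered best first.
ranking = ["A1", "A2", "B1", "B2"]
assigned = best_available_seat(ranking, occupied={"A1"})
```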

In some implementations, virtual reality spectators may be assigned to seats in proximity to other spectators based on various characteristics of the spectators. In some implementations, virtual reality spectators are assigned to seats based, at least in part, on their membership in a social network/graph. For example, with continued reference to FIG. 1C, another virtual reality spectator 132 may be a friend of the virtual reality spectator 120 on a social network 130 (e.g. as defined by membership in a social graph). The streaming service 142 may use this information to assign the virtual reality spectator 132 to a seat proximate to the virtual reality spectator 120, such as the seat 134 that is next to the seat 122 to which the virtual reality spectator 120 has been assigned. In the illustrated implementation, thus virtually “seated,” when the virtual reality spectator 120 turns to the right, the virtual reality spectator 120 may see the avatar of the virtual reality spectator 132 seated next to them.

In some implementations, the interface for seat selection and/or assignment may inform a given user that one or more of their friends on the social network 130 are also virtually attending the e-sports event, and provide an option to be automatically assigned to a seat in proximity to one or more of their friends. In this way, friends that are attending the same event as virtual reality spectators may enjoy the event together.
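Automatic assignment to a seat near a friend could, for instance, pick the free seat closest to the friend's seat. The sketch below assumes seats are addressed by (row, column) grid coordinates and uses Manhattan distance as the proximity measure; both choices, and the name `seat_near_friend`, are illustrative assumptions rather than anything stated in the disclosure.

```python
def seat_near_friend(friend_seat, free_seats):
    """Return the free seat closest to `friend_seat`, or None if none is free.

    Seats are (row, column) tuples. Ties are broken by the seat tuple
    itself so that the result is deterministic.
    """
    if not free_seats:
        return None
    friend_row, friend_col = friend_seat

    def proximity(seat):
        row, col = seat
        return (abs(row - friend_row) + abs(col - friend_col), seat)

    return min(free_seats, key=proximity)

# Hypothetical grid positions: the friend sits in row 3, column 5.
chosen = seat_near_friend((3, 5), [(1, 1), (3, 6), (4, 8)])
```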

In various implementations, virtual reality spectators can be assigned to seats in proximity to each other based on any of various factors such as a user profile, age, geo-location, primary language, experience in a given video game, interests, gender, etc.

In some implementations, these concepts can be extended to include in-person “real” spectators (who are physically present, as opposed to virtual reality spectators), when information is known about such real spectators. For example, it may be determined that the real spectator 138 that is seated in seat 136 is a friend of the virtual reality spectator 120, and so the virtual reality spectator 120 may be assigned (or offered to be assigned) to the seat 122 that is next to the seat 136 of the real spectator 138.

It will be appreciated that in order to provide input while viewing content through HMDs, the virtual reality spectators may use one or more controller devices. In the illustrated implementation, the virtual reality spectators 120, 132, and 140 operate controller devices 152, 156, and 160, respectively, to provide input to, for example, start and stop streaming of video for virtual reality spectating, select a seat in the e-sports venue 100 for spectating, etc.

It will be appreciated that spectators, whether virtual or real, may, in some implementations, hear each other if they are in proximity to each other in the e-sports venue 100. For example, the virtual reality spectators 120 and 132 may hear each other as audio captured from their respective local environments (e.g. via microphones of the HMDs 150 and 154, the controllers 152 and 156, or elsewhere in the local environments of the spectators 120 and 132) is provided to the other's audio stream. In some implementations, the virtual reality spectator 120 may hear sound from the real spectator 138 that is captured by a local microphone.

In some implementations, a virtual reality spectator may occupy a seat that is physically occupied by a real spectator. For example, in the illustrated implementation, the virtual reality spectator 140 may occupy the seat 136 that is physically occupied by the real spectator 138. The virtual reality spectator 140 is provided with a view simulating being in the position of the seat 136. When the virtual reality spectator 140 turns to the right, they may see the avatar of the spectator 120; and likewise when the spectator 120 turns to their left, they may see the avatar of the spectator 140 in place of the real spectator 138.

Though implementations of the present disclosure are generally described with reference to e-sports events, it should be appreciated that in other implementations, the principles of the present disclosure can be applied to other types of spectated live events, including by way of example without limitation, sporting events, concerts, ceremonies, presentations, etc.

At e-sports events and other types of live spectated events, it is a common practice for there to be giveaways of various kinds of souvenirs, promotional products, or other items to engage the spectating audience and promote excitement amongst the attendees. Items such as t-shirts, towels, stuffed animals and toys, and other items may be thrown or launched into a spectating audience, so as to be caught by a real spectator at the event. However, a virtual reality spectator is not able to interact with such items because the virtual reality spectator is not physically present at the event, and cannot physically catch such items. To enable virtual reality spectators to engage in such giveaways, implementations of the present disclosure provide for specialized rendering of the view provided to the virtual reality spectator, so that the virtual reality spectator can experience a sensation of participating in such interactions.

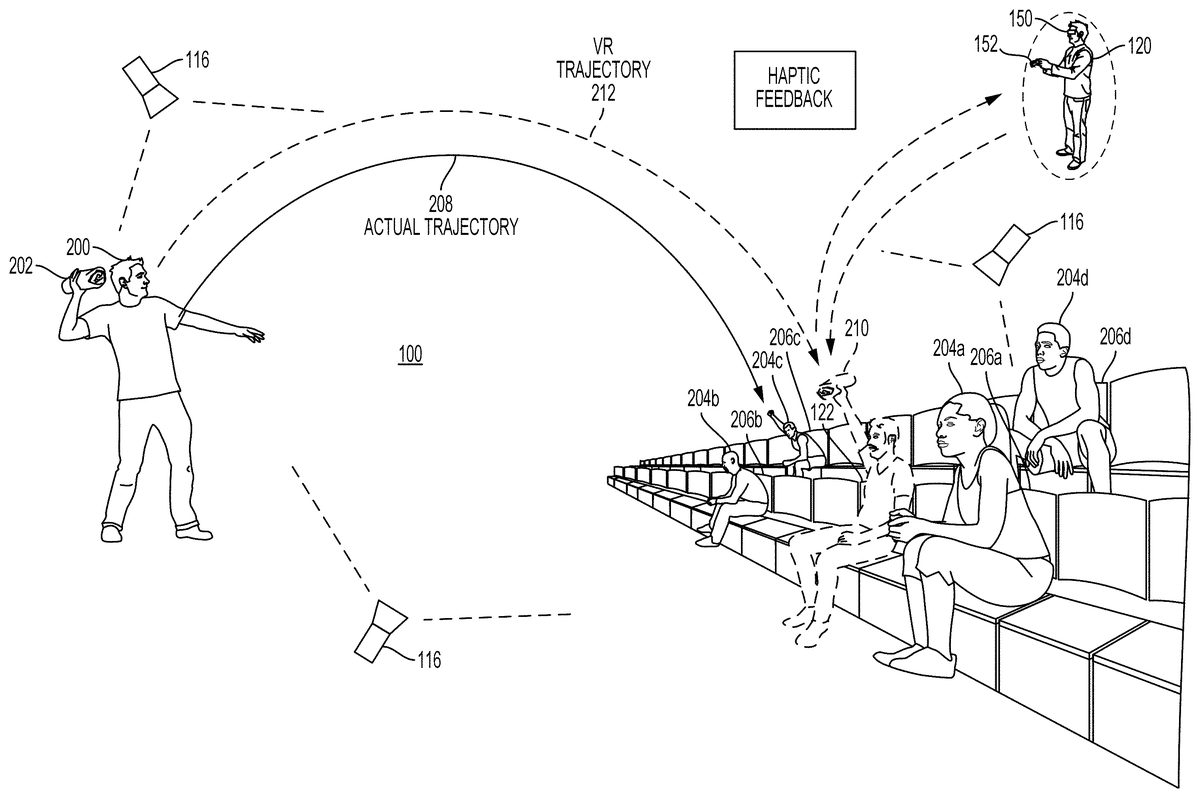

FIG. 2 illustrates an interactive experience for a virtual reality spectator with a projectile object in a venue, in accordance with implementations of the disclosure. In the illustrated implementation, the virtual reality spectator 120 virtually occupies the seat 122 in the venue 100. As previously discussed, the virtual reality spectator 120 is presented a view of the venue 100 that simulates the perspective of viewing the venue from the location of the seat 122, or a location similar to that of the seat 122. Also shown in the illustrated implementation are real spectators 204a, 204b, 204c, and 204d, who are situated proximately to the seat 122 (to which the virtual reality spectator 120 is assigned) and who respectively occupy seats 206a, 206b, 206c, and 206d.

A launcher 200 in the illustrated implementation is a person throwing a projectile object 202 towards the spectators. It will be appreciated that in other implementations, the launcher 200 can also include a mechanized device for launching objects that may be operated by such a person, such as a slingshot, gun, cannon, or any other device capable of launching objects towards spectators in accordance with implementations of the disclosure. In the illustrated implementation, the projectile object 202 is a rolled up t-shirt, by way of example without limitation, but may be any other object that may be launched into a group of spectators, as described above.

The launcher 200 launches/throws the projectile object 202 towards the spectators in the venue 100. Accordingly, the projectile object 202 follows an actual (real) trajectory or flight path, indicated at reference 208 by way of example. However, in some implementations, the view provided to the virtual reality spectator 120 will show the projectile object 202 replaced by a virtual object 210 that will appear to the virtual reality spectator 120 to follow a virtual reality trajectory 212 that is directed towards the virtual reality spectator 120. Thus, even though the actual trajectory 208 of the projectile object 202 is not directed towards the seat 122 to which the virtual reality spectator 120 is assigned, the virtual reality spectator 120 is nonetheless able to experience a view that shows the virtual object 210 being launched/thrown towards the virtual reality spectator 120, thereby providing the virtual reality spectator 120 with the experience of participating in the giveaway.

In some implementations, the virtual object 210 is configured to look similar to the projectile object 202. In other implementations, the virtual object 210 is configured to look like a different object than the projectile object 202. In some implementations, replacing the projectile object 202 with the virtual object 210 includes detecting the projectile object 202 and processing the video feed to remove the projectile object 202 in the video feed, while also inserting the virtual object 210 into the video feed. In some implementations, the virtual object 210 is inserted as an overlay in the video feed. In some implementations, the projectile object 202 is not replaced in the view, but rather the view is augmented and/or overlaid to show the virtual object 210 in addition to the projectile object 202.

Furthermore, to heighten the experience of participation, the virtual reality spectator 120 may “catch” the virtual object 210 by, for example, lifting their hands and making a catching or reaching motion or otherwise moving their hands in a manner so as to indicate a desire on the part of the virtual reality spectator 120 to catch the virtual object 210. The motion of the virtual reality spectator's 120 hands to catch the virtual object 210 can be detected through one or more controller devices 152 that are operated by the virtual reality spectator 120. For example, the virtual reality spectator 120 may operate one or more motion controllers or use a glove interface for supplying input while viewing the venue space through the HMD 150.

As the virtual object 210 is caught, the view rendered to the HMD 150 may show virtual hands, such as the hands of an avatar of the virtual reality spectator 120, catching the virtual object 210. In some implementations, an external camera of the HMD 150 may capture images of the user's hands, and these captured images may be used to show the user's hands catching the virtual object 210. Additionally, haptic feedback can be provided to the virtual reality spectator 120 in response to catching the virtual object 210. Haptic feedback may be rendered to the virtual reality spectator 120, for example, through a controller device 152 operated by the virtual reality spectator 120.

In some implementations, the projectile object 202 is replaced with the virtual object 210 in the virtual reality spectator's 120 view at or prior to launch of the projectile object 202 occurring. In some implementations, the projectile object 202 is replaced with the virtual object 210 upon recognition or identification of the projectile object 202 as described below. In some implementations, replacement of the projectile object 202 with the virtual object 210 does not occur until after launch of the projectile object 202 occurs. In some implementations, the launcher 200 is replaced in the virtual reality spectator's 120 view with a virtual character that is animated to show the virtual object 210 being launched/thrown by the virtual character. In some implementations, the timing of such a launch by a virtual character is coordinated to the launch of the projectile object 202 by the launcher 200.

In some implementations, in order to facilitate the replacement of the projectile object 202 with the virtual object 210 in the view for the virtual reality spectator 120, the projectile object 202 is identified and/or tracked. It will be appreciated that various technologies can be utilized to identify and track the projectile object 202. In some implementations, the video feeds from one or more of the cameras 116 in the venue can be analyzed to identify and track the location and trajectory of the projectile object 202. That is, an image recognition process can be applied to image frames of the video feeds to identify and track the projectile object 202 in the image frames of the video feeds. It will be appreciated that by tracking the projectile object 202 in multiple different video feeds, the accuracy and robustness of the tracking can be improved.

To further improve such identification and tracking via image recognition, the projectile object 202 itself can be configured to be more recognizable. By way of example without limitation, the projectile object 202 may have one or more colors, markers, patterns, designs, shapes, reflective material, retroreflective material, etc. on its surface to enable it to be more easily recognized via image recognition processes. In some implementations, the projectile object 202 includes one or more lights (e.g. LEDs). In some implementations, such configurations are part of the packaging of the projectile object 202.

While image recognition has been described as a technique for tracking the projectile object 202, it will be appreciated that in other implementations, other types of tracking techniques can be employed, such as RF tracking, magnetic tracking, etc. To this end, the projectile object 202 may include various emitters or sensors, and wireless communications devices to enable such tracking.

In some implementations, the launcher 200 can also be identified and tracked through image recognition applied to the video feeds and/or other techniques. For example, the launcher 200 might be tracked to detect a throwing motion or other indication that the projectile object 202 is being launched. In some implementations, when such a throwing or launching motion is detected, then the projectile object 202 is replaced in the view of the virtual reality spectator 120 with the virtual object 210, so that the “launch” of the virtual object 210 coincides with the actual launch of the projectile object 202.

In some implementations, the launcher 200 and projectile object 202 are replaced in the virtual reality spectator's 120 view by a virtual character that is animated so as to launch the virtual object 210 towards the virtual reality spectator 120.

As noted previously, in some implementations, the projectile object 202 is not necessarily replaced by the virtual object 210, but the virtual object 210 is rendered or overlaid in addition to the projectile object 202.

FIG. 3 illustrates prediction of a trajectory of a projectile object 202 for determining a view of a virtual reality spectator 120, in accordance with implementations of the disclosure. In the illustrated implementation, the launcher 200 is shown throwing/launching the projectile object 202. As has been noted, the 3D location/trajectory of the projectile object 202 can be tracked in space. The trajectory of the projectile object 202 is its actual flight path through the real space of the venue 100.

In some implementations, the tracked location of the projectile object 202 in 3D space is used to predict its trajectory or flight path. By way of example without limitation, using the tracked location over time, the 3D vector velocity of the projectile object 202 at a given time can be determined. With reference to the illustrated implementation, at a time T0, the projectile object 202 has an initial position P0, as it is being launched by the launcher 200. After being launched, at a subsequent time T1, the projectile object 202 has a position P1. By tracking the change in position of the projectile object 202, its velocity at time T1 can be determined as V1, which is a 3D velocity vector in some implementations.
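The velocity determination described here amounts to a finite difference between two tracked positions. As a minimal sketch, assuming positions are (x, y, z) tuples in metres and times are in seconds (units and the name `velocity_between` are assumptions, not part of the disclosure):

```python
def velocity_between(p0, t0, p1, t1):
    """Estimate the 3D velocity vector V1 from positions P0 at T0 and P1 at T1."""
    if t1 <= t0:
        raise ValueError("t1 must be later than t0")
    dt = t1 - t0
    # Component-wise change in position divided by elapsed time.
    return tuple((b - a) / dt for a, b in zip(p0, p1))

# Hypothetical tracked samples taken 0.1 s apart.
P0, T0 = (0.0, 0.0, 2.0), 0.0
P1, T1 = (1.0, 0.5, 2.4), 0.1
V1 = velocity_between(P0, T0, P1, T1)  # roughly (10, 5, 4) m/s
```

In practice more than two samples would likely be combined to reduce tracking noise; two are used here only to mirror the T0/T1 description.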

The instantaneous velocity of the projectile object 202 can be utilized to predict the future trajectory of the projectile object 202. With continued reference to FIG. 3, the projectile object 202 is shown to have an actual trajectory 302. Based on determining the velocity of the projectile object 202, it is predicted that the projectile object 202 will follow a predicted trajectory (or flight path) 304, which in the illustrated implementation is towards the real spectator 204b, who is located proximate to the location of seat 122, to which the virtual reality spectator 120 is assigned. The predicted trajectory 304 thus also provides for a predicted landing location 306 of the projectile object 202. In some implementations, the predicted landing location 306 is defined by an identified seat in the venue 100, such as the seat 206b that is occupied by the real spectator 204b in the illustrated implementation.
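One simple way to turn a position and velocity into a predicted landing location is a drag-free ballistic model. The disclosure does not specify a motion model, so the following is only a sketch under these assumptions: the z axis points up, units are metres and seconds, air resistance is ignored, and the object "lands" when it descends to a chosen height. The names `predict_landing` and `G` are hypothetical.

```python
import math

G = 9.81  # gravitational acceleration in m/s^2 (assumed model parameter)

def predict_landing(position, velocity, landing_height=0.0):
    """Return ((x, y, z), time) where the object reaches `landing_height`.

    Solves z0 + vz*t - 0.5*G*t**2 = landing_height for the later root.
    Returns None if the object never reaches that height.
    """
    x0, y0, z0 = position
    vx, vy, vz = velocity
    discriminant = vz * vz + 2.0 * G * (z0 - landing_height)
    if discriminant < 0.0:
        return None
    t = (vz + math.sqrt(discriminant)) / G
    return (x0 + vx * t, y0 + vy * t, landing_height), t

# Hypothetical state at time T1: 2 m above the floor, moving forward and up.
landing, t_land = predict_landing((0.0, 0.0, 2.0), (10.0, 0.0, 4.0))
```

A light object such as a rolled t-shirt is noticeably affected by drag, so a real system would probably refine the estimate as further tracked positions arrive.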

Based in part on the proximity of the location or seat 122 (to which the virtual reality spectator 120 is assigned) to the predicted trajectory 304 and/or the predicted landing location 306 of the projectile object 202, the virtual reality spectator's 120 view may be altered to show the projectile object 202 or a substituted virtual object 210 that appears to follow a path towards the virtual reality spectator 120 (e.g. towards the seat 122). In the illustrated implementation, this occurs at time T2, at which point the view provided to the virtual reality spectator 120 is modified to show the virtual object 210 following a virtual trajectory/path 308 that is directed towards the seat 122.
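A virtual path that departs from the object's position at the switch-over time and ends at the seat can be generated by choosing an initial velocity that makes a ballistic arc land on the target. This is a sketch only; the drag-free model, the z-up axis convention, the chosen flight time, and the name `virtual_trajectory` are assumptions for illustration.

```python
G = 9.81  # gravitational acceleration in m/s^2 (assumed model parameter)

def virtual_trajectory(start, target, flight_time, steps=30):
    """Sample a ballistic arc from `start` to `target` lasting `flight_time` seconds.

    Returns steps + 1 points (x, y, z), the first at `start` and the last
    at `target`, suitable for animating a virtual object along the path.
    """
    if flight_time <= 0.0:
        raise ValueError("flight_time must be positive")
    sx, sy, sz = start
    tx, ty, tz = target
    # Initial velocity that reaches the target exactly at flight_time.
    vx = (tx - sx) / flight_time
    vy = (ty - sy) / flight_time
    vz = (tz - sz) / flight_time + 0.5 * G * flight_time
    path = []
    for i in range(steps + 1):
        t = flight_time * i / steps
        path.append((sx + vx * t, sy + vy * t, sz + vz * t - 0.5 * G * t * t))
    return path

# Hypothetical positions: object location at time T2 and head height at seat 122.
path_308 = virtual_trajectory((0.0, 0.0, 2.0), (12.0, 3.0, 1.2), flight_time=1.5)
```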

FIG. 4 conceptually illustrates an overhead view of a plurality of seats in a venue, illustrating a method for identifying virtual reality spectators that may have their views altered, in accordance with implementations of the disclosure. As shown, a number of spectators, who may be real or virtual reality spectators, are shown occupying various seats in a venue 100. A launcher 200 is shown in the venue launching/throwing a projectile object 202 towards the spectators.

The launched projectile object 202 follows a trajectory 400 through the air towards the spectators. As noted above, the trajectory can be predicted based on tracking the movement of the projectile object 202 and determining its 3D velocity vector, by way of example without limitation. In the illustrated implementation, such a process is performed to determine a predicted (3D) trajectory 402 of the projectile object 202. Based on the predicted trajectory 402, a predicted landing location 408 is determined for the projectile object 202. In some implementations, the predicted landing location can be a location on a physical surface in the venue 100 that intersects with the predicted trajectory 402. In some implementations, the predicted landing location is defined by a point or region in the venue 100. In some implementations, the predicted landing location identifies a seat within the venue 100 which the predicted trajectory 402 intersects, or substantially intersects. In some implementations, the predicted landing location identifies a seat that is nearest to a point/region in the venue 100 that the predicted trajectory 402 intersects.
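Identifying the seat nearest to the point where the predicted trajectory comes down can be sketched as a nearest-neighbour lookup. The seat coordinates, the extra seat labels, and the name `nearest_seat` are hypothetical.

```python
import math

def nearest_seat(landing_point, seats):
    """Return the id of the seat whose position is closest to `landing_point`.

    `seats` maps a seat id to its (x, y, z) position in venue coordinates.
    """
    if not seats:
        raise ValueError("seats must not be empty")
    return min(seats, key=lambda seat_id: math.dist(seats[seat_id], landing_point))

# Hypothetical venue coordinates for three neighbouring seats.
seats = {
    410: (12.0, 6.0, 1.0),
    412: (11.0, 6.0, 1.0),
    416: (13.0, 7.0, 1.0),
}
landing_seat = nearest_seat((12.2, 6.1, 1.0), seats)
```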

In the illustrated implementation, the predicted landing location 408 of the projectile object 202 is shown, which is defined by the location of seat 410, which is occupied by a real spectator 411. In some implementations, a virtual reality spectator that occupies a seat in proximity to the predicted landing location 408 (or seat 410) may have their view of the projectile object 202 modified to show the projectile object 202 or a virtual object 210 following a path that is directed towards them instead of the projectile object's 202 actual path.

For example, in some implementations, a virtual reality spectator occupying a seat that is within a predefined set of proximately located seats may have their view modified. In the illustrated implementation, virtual reality spectators that occupy seats in the region R, which identifies a plurality of seats proximate to the seat 410, may have their views modified. In the illustrated implementation, the seats 412 and 416 are occupied by virtual reality spectators 414 and 418, respectively, and thus the virtual reality spectators 414 and 418 may have their respective views of the venue 100 modified to show a virtual object 210 following trajectories 404 and 406, respectively.

In some implementations, a virtual reality spectator that is located (e.g. occupying a seat) within a predefined distance D of the predicted landing location 408 may have their view modified. In other implementations, various kinds of spatial criteria related to the predicted trajectory 402 and/or predicted landing location 408 of the projectile object 202 may be applied to determine whether a given virtual reality spectator may have their view modified to show a virtual object traveling towards them. By way of example without limitation, in some implementations, virtual reality spectators that occupy locations that are positioned substantially along the predicted trajectory (predicted path of travel) of the projectile object 202 may have their views modified.

In some implementations, the modification of a virtual reality spectator's view takes place automatically based on their location in accordance with the above. In other implementations, the location of the virtual reality spectator only renders him/her eligible to have their view modified, and the modification further depends on additional factors, such as detecting a gesture of the virtual reality spectator (e.g. reaching towards the projectile object 202 as viewed through the HMD).

FIG. 5 illustrates a view of a venue provided to a virtual reality spectator, providing interactivity with a projectile object, in accordance with implementations of the disclosure. The view 500 is rendered through the HMD 150 for viewing by the virtual reality spectator 120, as previously described. The view 500 is a real-time view of the venue 100 from a perspective that is defined by a location or seat in the venue to which the virtual reality spectator 120 is assigned or otherwise virtually positioned or associated.

In the view500, the virtual reality spectator120may see the launcher200throw or launch a projectile object202as previously discussed. However, even though the virtual reality spectator120is not physically present in the venue to participate in the giveaway, as has been described, the view500may be modified to show a virtual object210that is rendered so as to appear to the virtual reality spectator120to follow a trajectory that is towards the virtual reality spectator120location in the venue100. Thus, the virtual reality spectator120is able to virtually catch the virtual object210as shown. By way of example without limitation, the virtual reality spectator120may see rendered images of his/her hands or corresponding virtual hands502aand502bof the virtual reality spectator's120avatar. For example, the virtual hands502aand502bmay be controlled by input from one or more input devices, such as controller devices (including motion controllers), motion sensors, glove interfaces, etc. When the virtual reality spectator120“catches” the virtual object210, haptic feedback can be provided through the input devices that are operated by the virtual reality spectator120in order to simulate or reinforce the sensation of catching the virtual object210.

Additionally, because the virtual reality spectator120is wearing an HMD150, additional functionality can be provided through the HMD display. For example, messages relating to the giveaway can be displayed to the virtual reality spectator120. For example, a message504can be displayed when the launcher200is going to launch the projectile object202, informing the virtual reality spectator120to catch the projectile object202. In some implementations, when the virtual reality spectator120catches the corresponding virtual object210, a confirmation message506can be displayed through the HMD, informing the virtual reality spectator120that he/she caught the item. For example, if a t-shirt giveaway has occurred in the venue, then when the virtual reality spectator120catches the corresponding virtual object210, their account (e.g. an online user account) may be credited to receive a t-shirt through the mail, a digital t-shirt for their avatar, or receive some other physical or digital item. Examples of items that might be received include, coupons, promotion codes, special offers, credit (e.g. for a digital store), video game assets (e.g. items, skins, characters, weapons, etc.), by way of example without limitation.

In some implementations, after catching a virtual object210, an option508to share the experience with others (e.g. via posting to a social network, e-mail, message, etc.) can be provided. By selecting the option508to “Share,” then the virtual reality spectator120view may display an interface to enable sharing about their catching the virtual object210, and/or inviting others to join the event and participate, for example via a social network or other platform. In some implementations, after catching a virtual object210an option510to gift the item that has been credited to their account is provided. That is, the virtual reality spectator120may digitally transfer the item to another user or spectator.

FIG. 6 illustrates a method for enabling a virtual reality spectator to participate in a launched giveaway in a venue, in accordance with implementations of the disclosure. At method operation 600, cameras in a venue space capture video of the venue space, generating a plurality of video feeds. At method operation 602, a view of the venue space is provided to a virtual reality spectator, for example, by assigning the virtual reality spectator to a location in the venue and stitching appropriate video feeds to generate video that provides the view. The video is rendered to an HMD worn by the virtual reality spectator.

At method operation 604, a tracking mode is activated to enable tracking of a projectile object in the video feeds from the cameras. At method operation 606, the projectile object is identified and tracked in the video feeds. At method operation 608, a trajectory and/or landing location of the projectile object is predicted. At method operation 610, it is determined whether the virtual reality spectator's location in the venue is proximate to the predicted trajectory or the predicted landing location of the projectile object. If the virtual reality spectator's location is proximate, then at method operation 612, a gesture of the virtual reality spectator is detected, such as the virtual reality spectator raising their hands to catch the projectile object. In response to detecting the gesture, at method operation 614, a virtual object is rendered in the virtual reality spectator view having a trajectory that is towards the virtual reality spectator.

At method operation 616, the virtual reality spectator view is rendered to show the virtual reality spectator catching the virtual object. At method operation 618, an account of the virtual reality spectator is credited with an item based on catching the virtual object.
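The decision flow of method operations 610 through 618 can be summarized in a short sketch. The class `SpectatorSession` and function `process_launch` are hypothetical, and the early exit when the virtual object is not caught is an assumption, since the method as described does not spell out that branch.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class SpectatorSession:
    """Hypothetical per-spectator state for one launched giveaway."""
    seat_is_proximate: bool       # outcome of operation 610
    gesture_detected: bool        # outcome of operation 612
    caught_virtual_object: bool   # whether the catch shown in operation 616 occurs
    credited_items: List[str] = field(default_factory=list)


def process_launch(session, item_name):
    """Walk operations 610-618 for one launch and return the operations that ran."""
    performed = [610]
    if not session.seat_is_proximate:
        return performed
    performed.append(612)
    if not session.gesture_detected:
        return performed
    performed.append(614)  # virtual object rendered with a trajectory towards the spectator
    if not session.caught_virtual_object:
        return performed   # assumption: no catch means nothing is credited
    performed.append(616)  # view rendered to show the catch
    session.credited_items.append(item_name)
    performed.append(618)  # account credited with the item
    return performed


session = SpectatorSession(True, True, True)
operations = process_launch(session, "t-shirt")
```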

FIG. 7Aconceptually illustrates a system for providing virtual reality spectating of an e-sports event, in accordance with implementations of the disclosure. Though not specifically described in detail for purposes of ease of description, it will be appreciated that the various systems, components, and modules described herein may be defined by one or more computers or servers having one or more processors for executing program instructions, as well as one or more memory devices for storing data and said program instructions. It should be appreciated that any of such systems, components, and modules may communicate with any other of such systems, components, and modules, and/or transmit/receive data, over one or more networks, as necessary, to facilitate the functionality of the implementations of the present disclosure. In various implementations, various portions of the systems, components, and modules may be local to each other or distributed over the one or more networks.

In the illustrated implementation, a virtual reality spectator120interfaces with systems through an HMD150, and uses one or more controller devices152for additional interactivity and input. In some implementations, the video imagery displayed via the HMD to the virtual reality spectator120is received from a computing device700, that communicates over a network702(which may include the Internet) to various systems and devices, as described herein.

In order to initiate access to spectate an e-sports event, the virtual reality spectator120may access an event manager704, which handles requests to spectate an e-sports event. The event manager704can include seat assignment logic705configured to assign the virtual reality spectator120to a particular seat in the venue of the e-sports event. The seat assignment logic705can utilize various types of information to determine which seat to assign the virtual reality spectator120, including based on user profile data708for the spectator that is stored to a user database707. By way of example, such user profile data708can include demographic information about the user such as age, geo-location, gender, nationality, primary language, occupation, etc. and other types of information such as interests, preferences, games played/owned/purchased, game experience levels, Internet browsing history, etc.

In some implementations, the seat assignment logic705can also use information obtained from a social network710to, for example, assign spectators that are friends on the social network to seats that are proximate or next to each other. To obtain such information, the social network710may store social information about users (including social graph membership information) to a social database712as social data714. In some implementations, the seat assignment logic705may access the social data (e.g. accessing a social graph of a given user/spectator) through an API of the social network710.

In some implementations, the seat assignment logic705is configured to determine which seats are available, e.g. not occupied by real and/or virtual spectators, and assign a virtual reality spectator based at least in part on such information. In some implementations, the seat assignment logic705is configured to automatically assign a virtual reality spectator to the best available seat, as determined from a predefined ranking of the seats in the venue.

It will be appreciated that the seat assignment logic705can use any factor described herein in combination with any other factor(s) to determine which seat to assign a given virtual reality spectator. In some implementations, available seats are scored based on various factors, and the seat assignment is determined based on the score (e.g. virtual reality spectator is assigned to highest scoring seat). In some implementations, the seat assignment logic705presents a recommended seat for acceptance by the spectator120, and the spectator120is assigned to the recommended seat upon the acceptance thereof.
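A minimal sketch of score-based seat assignment follows. It combines only two of the factors mentioned above, namely a predefined seat ranking and adjacency to friends from a social graph; the weights, the `SeatInfo` fields, and the function names are hypothetical choices made for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SeatInfo:
    """Hypothetical seat record; rank 1 is the best seat in the predefined ranking."""
    seat_id: str
    row: int
    number: int
    rank: int


def score(seat, friend_seats, rank_weight=1.0, friend_weight=2.0):
    """Higher is better: reward seats next to friends, penalize a worse predefined rank."""
    adjacent_friends = sum(
        1 for f in friend_seats
        if f.row == seat.row and abs(f.number - seat.number) == 1
    )
    return friend_weight * adjacent_friends - rank_weight * seat.rank


def assign_seat(seats, occupied_ids, friend_seats):
    """Return the highest-scoring unoccupied seat, or None if the venue is full."""
    candidates = [s for s in seats if s.seat_id not in occupied_ids]
    if not candidates:
        return None
    return max(candidates, key=lambda s: score(s, friend_seats))


seats = [SeatInfo("A", 1, 1, 1), SeatInfo("B", 1, 2, 3), SeatInfo("C", 2, 5, 2)]
friend = SeatInfo("F", 2, 4, 9)
with_friend = assign_seat(seats, set(), [friend])
without_friend = assign_seat(seats, set(), [])
```

With no friends present, the sketch reduces to assigning the best available seat by rank. The returned seat could equally be presented as a recommendation for the spectator to accept.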

In other implementations, the virtual reality spectator 120 may access an interface provided by a seat selection logic 706 that is configured to enable the virtual reality spectator to select a given seat from available seats.

A venue database716stores data about one or more venues as venue data718. The venue data718can include any data describing the venue, such as a 3D space map, the locations of cameras, speakers, microphones, etc. The venue data718may further include a table720associating seat profiles to unique seat identifiers. In some implementations, each seat has its own seat profile. In some implementations, a group of seats (e.g. in close proximity to each other) may share the same seat profile. An example seat profile722includes information such as the 3D location724of the seat, video processing parameters726, and audio processing parameters728.
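The association between seat identifiers and seat profiles might be represented as in the following sketch, in which several seats may refer to one shared profile. The class names and the parameter keys (`fov_degrees`, `reverb`) are hypothetical; the disclosure does not specify the contents of the video or audio processing parameters.

```python
from dataclasses import dataclass, field
from typing import Dict, Tuple


@dataclass
class SeatProfile:
    """Hypothetical seat profile: 3D location plus video and audio processing parameters."""
    location_3d: Tuple[float, float, float]
    video_params: Dict[str, float] = field(default_factory=dict)
    audio_params: Dict[str, float] = field(default_factory=dict)


class SeatTable:
    """Maps unique seat identifiers to seat profiles; a group of seats may share one profile."""

    def __init__(self):
        self._profile_by_seat: Dict[str, SeatProfile] = {}

    def assign(self, seat_ids, profile):
        for seat_id in seat_ids:
            self._profile_by_seat[seat_id] = profile

    def lookup(self, seat_id):
        return self._profile_by_seat[seat_id]


table = SeatTable()
shared = SeatProfile((12.0, 4.5, 3.0), {"fov_degrees": 100.0}, {"reverb": 0.3})
table.assign(["R1-S1", "R1-S2"], shared)  # two nearby seats share one profile
table.assign(["R9-S7"], SeatProfile((40.0, 20.0, 9.0)))
```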

A video processor732includes a stitch processor734that may use the video processing parameters726and/or the 3D location724of the spectator's assigned seat to stitch together video feeds730from cameras116, so as to generate a composite video that provides the view for the virtual reality spectator120in accordance with the virtual reality spectator's view direction. In some implementations, spatial modeling module738generates or accesses a spatial model of the 3D environment of the venue (e.g. including locations of cameras and the location of the spectator's seat) in order to facilitate stitching of the video feeds730. The stitching of the video feeds may entail spatial projection of the video feeds to provide a perspective-correct video for the spectator. In some implementations, the resulting composite video is a 3D video, whereas in other implementations the composite video is a 2D video.

A compression processor736is configured to compress the raw composite video, employing video compression techniques known in the art, as well as foveated rendering, to reduce the amount of data required for streaming. The compressed video data is then streamed by the streaming server748over the network702to the computing device700, which processes and/or renders the video to the HMD150for viewing by the virtual reality spectator120.

In some implementations, the video feeds are transmitted from the cameras to one or more computing devices that are local to the cameras/venue which also perform the video processing. In some implementations, the cameras are directly connected to such computing devices. In some implementations, the video feeds are transmitted over a local network (e.g. including a local area network (LAN), Wi-Fi network, etc.) to such computing devices. In some implementations, the computing devices are remotely located, and the video feeds may be transmitted over one or more networks, such as the Internet, a LAN, a wide area network (WAN), etc.

An audio processor744is configured to process audio data742from audio sources740to be streamed with the compressed video data. The processing may use the audio processing parameters728and/or the 3D location724of the spectator's seat. In some implementations, an audio modeling module746applies an audio model based on the 3D space of the venue to process the audio data742. Such an audio model may simulate the acoustics of the assigned seat in the venue so that audio is rendered to the virtual reality spectator in a realistic fashion. By way of example without limitation, sounds from other virtual reality spectators may be processed to simulate not only directionality relative to the seat location of the virtual reality spectator120, but also with appropriate acoustics (such as delay, reverb, etc.) for the seat location in the venue. As noted, audio sources can include gameplay audio, commentator(s), house music, audio from microphones in the venue, etc.

FIG. 7B illustrates additional componentry and functionality of the system of FIG. 7A, in accordance with implementations of the disclosure. As shown, the video processor 732 further includes an image recognition module 750 that is configured to analyze the video feeds from the cameras to identify and track a projectile object 202 in the video feeds. Prediction logic 752 is configured to predict the trajectory and/or landing location of the projectile object 202. An augmentation processor 754 is configured to augment the video for the spectator with a virtual object 210 that is rendered so as to show the virtual object 210 having a trajectory towards the virtual reality spectator 120.

In some implementations, the rendering of the virtual object210is processed (e.g. as an overlay) onto a video feed. In some implementations, the rendering of the virtual object210is processed (e.g. as an overlay) onto stitched video that has been stitched by the stitch processor734. In some implementations, lighting in the venue100can be modeled (e.g. locations, intensities, colors of light sources) to enable more realistic rendering of the shading and appearance of the virtual object210. As noted previously, the video for the virtual reality spectator including the virtual object210may be compressed prior to being streamed by the streaming server748over the network702to the computing device700.

In some implementations, the computing device700executes a spectating application758that provides for the interactive virtual reality spectator experience described herein via the HMD150. That is, the spectating application758, when executed by the computing device700, enables access to the event online for virtual reality spectating, and also provides for rendering of video to the HMD150that provides the view of the venue space, and processes input received from the virtual reality spectator120, for example via the controller device152. In some implementations, the spectating application758further defines a gesture recognition module760that is configured to detect/recognize a predefined gesture that indicates the virtual reality spectator's desire to catch the projectile object202, such as raising one or both hands prior to or during launch or during the flight of the projectile object202. In response to detecting such a gesture, the spectating application may send a signal over the network to the streaming server indicating as such. The streaming server748may then trigger the modification of the spectator video by the video processor732to include the virtual object210as has been described.
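One simple heuristic for recognizing a raised-hand gesture is sketched below, assuming tracked heights are available for the HMD and for each hand-held controller. The margin, the requirement of several consecutive samples, and the function names are hypothetical; the disclosure does not prescribe a particular recognition technique.

```python
def hands_raised(hmd_height, left_height, right_height, margin=0.1, require_both=False):
    """True if one hand (or both hands) is at least `margin` metres above the HMD."""
    left_up = left_height >= hmd_height + margin
    right_up = right_height >= hmd_height + margin
    return (left_up and right_up) if require_both else (left_up or right_up)


def should_signal_server(samples, min_consecutive=3, **kwargs):
    """Return True once the gesture holds for min_consecutive samples in a row.

    samples is an iterable of (hmd_height, left_height, right_height) tuples.
    """
    streak = 0
    for hmd_height, left_height, right_height in samples:
        if hands_raised(hmd_height, left_height, right_height, **kwargs):
            streak += 1
        else:
            streak = 0
        if streak >= min_consecutive:
            return True
    return False


tracked = [(1.6, 1.0, 1.0), (1.6, 1.8, 1.0), (1.6, 1.9, 1.0), (1.6, 1.9, 1.2)]
gesture_seen = should_signal_server(tracked)
```

Requiring a short run of consecutive samples reduces spurious triggers from tracking noise. A True result corresponds to the point at which the spectating application would signal the streaming server.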

Additionally, a haptic signal generator 756 is configured to generate a signal transmitted to the computing device 700 to produce haptic feedback, e.g. via the controller device 152. In some implementations, the haptic signal generator 756 generates the haptic feedback signal to coincide with rendering of spectator video that shows the virtual object 210 being caught by a virtual hand of the virtual reality spectator 120.

FIG. 8illustrates a system for interaction with a virtual environment via a head-mounted display (HMD), in accordance with implementations of the disclosure. An HMD may also be referred to as a virtual reality (VR) headset. As used herein, the term “virtual reality” (VR) generally refers to user interaction with a virtual space/environment that involves viewing the virtual space through an HMD (or VR headset) in a manner that is responsive in real-time to the movements of the HMD (as controlled by the user) to provide the sensation to the user of being in the virtual space. For example, the user may see a three-dimensional (3D) view of the virtual space when facing in a given direction, and when the user turns to a side and thereby turns the HMD likewise, then the view to that side in the virtual space is rendered on the HMD. In the illustrated implementation, a user120is shown wearing a head-mounted display (HMD)150. The HMD150is worn in a manner similar to glasses, goggles, or a helmet, and is configured to display a video game or other content to the user120. The HMD150provides a very immersive experience to the user by virtue of its provision of display mechanisms in close proximity to the user's eyes. Thus, the HMD150can provide display regions to each of the user's eyes which occupy large portions or even the entirety of the field of view of the user, and may also provide viewing with three-dimensional depth and perspective.

In the illustrated implementation, the HMD 150 is wirelessly connected to a computer 806. In other implementations, the HMD 150 is connected to the computer 806 through a wired connection. The computer 806 can be any general or special purpose computer known in the art, including but not limited to, a gaming console, personal computer, laptop, tablet computer, mobile device, cellular phone, thin client, set-top box, media streaming device, etc. In some implementations, the computer 806 can be configured to execute a video game, and output the video and audio from the video game for rendering by the HMD 150. In some implementations, the computer 806 is configured to execute any other type of interactive application that provides a virtual space/environment that can be viewed through an HMD. A transceiver 810 is configured to transmit (by wired connection or wireless connection) the video and audio from the video game to the HMD 150 for rendering thereon. The transceiver 810 includes a transmitter for transmission of data to the HMD 150, as well as a receiver for receiving data that is transmitted by the HMD 150.

In some implementations, the HMD 150 may also communicate with the computer through alternative mechanisms or channels, such as via a network 812 to which both the HMD 150 and the computer 806 are connected.

The user120may operate an interface object152to provide input for the video game. Additionally, a camera808can be configured to capture images of the interactive environment in which the user120is located. These captured images can be analyzed to determine the location and movements of the user120, the HMD150, and the interface object152. In various implementations, the interface object152includes a light which can be tracked, and/or inertial sensor(s), to enable determination of the interface object's location and orientation and tracking of movements.

In some implementations, a magnetic source816is provided that emits a magnetic field to enable magnetic tracking of the HMD150and interface object152. Magnetic sensors in the HMD150and the interface object152can be configured to detect the magnetic field (e.g. strength, orientation), and this information can be used to determine and track the location and/or orientation of the HMD150and the interface object152.

In some implementations, the interface object 152 is tracked relative to the HMD 150. For example, the HMD 150 may include an externally facing camera that captures images including the interface object 152. The captured images can be analyzed to determine the location/orientation of the interface object 152 relative to the HMD 150, and, using a known location/orientation of the HMD, thereby determine the location/orientation of the interface object 152 in the local environment.
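The combination of a known HMD pose with a relative pose of the interface object can be illustrated with a standard rigid-transform composition. The sketch below represents orientations as 3x3 rotation matrices and locations as 3-vectors, which is an assumed representation; the helper names are hypothetical.

```python
def mat_vec(m, v):
    """Multiply a 3x3 matrix by a 3-vector."""
    return tuple(sum(m[r][c] * v[c] for c in range(3)) for r in range(3))


def mat_mul(a, b):
    """Multiply two 3x3 matrices."""
    return tuple(
        tuple(sum(a[r][k] * b[k][c] for k in range(3)) for c in range(3))
        for r in range(3)
    )


def to_world(hmd_rotation, hmd_position, rel_rotation, rel_position):
    """Compose the HMD's pose with the object's pose expressed in the HMD's frame.

    Returns (world_rotation, world_position) of the interface object.
    """
    rotated = mat_vec(hmd_rotation, rel_position)
    world_position = tuple(h + r for h, r in zip(hmd_position, rotated))
    world_rotation = mat_mul(hmd_rotation, rel_rotation)
    return world_rotation, world_position


IDENTITY = ((1, 0, 0), (0, 1, 0), (0, 0, 1))
YAW_90 = ((0, -1, 0), (1, 0, 0), (0, 0, 1))  # 90 degree rotation about the z axis

# HMD at (1, 2, 3) turned 90 degrees; object one unit along the HMD's local x axis.
world_rot, world_pos = to_world(YAW_90, (1, 2, 3), IDENTITY, (1, 0, 0))
```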

The way the user interfaces with the virtual reality scene displayed in the HMD150can vary, and other interface devices in addition to interface object152, can be used. For instance, various kinds of single-handed, as well as two-handed controllers can be used. In some implementations, the controllers themselves can be tracked by tracking lights included in the controllers, or tracking of shapes, sensors, and inertial data associated with the controllers. Using these various types of controllers, or even simply hand gestures that are made and captured by one or more cameras, it is possible to interface, control, maneuver, interact with, and participate in the virtual reality environment presented on the HMD150.

Additionally, the HMD150may include one or more lights which can be tracked to determine the location and orientation of the HMD150. The camera808can include one or more microphones to capture sound from the interactive environment. Sound captured by a microphone array may be processed to identify the location of a sound source. Sound from an identified location can be selectively utilized or processed to the exclusion of other sounds not from the identified location. Furthermore, the camera808can be defined to include multiple image capture devices (e.g. stereoscopic pair of cameras), an IR camera, a depth camera, and combinations thereof.

In some implementations, the computer 806 functions as a thin client in communication over a network 812 with a cloud application (e.g. gaming, streaming, spectating, etc.) provider 814. In such an implementation, generally speaking, the cloud application provider 814 maintains and executes the video game being played by the user 120. The computer 806 transmits inputs from the HMD 150, the interface object 152 and the camera 808, to the cloud application provider, which processes the inputs to affect the game state of the executing video game. The output from the executing video game, such as video data, audio data, and haptic feedback data, is transmitted to the computer 806. The computer 806 may further process the data before transmission or may directly transmit the data to the relevant devices. For example, video and audio streams are provided to the HMD 150, whereas a haptic/vibration feedback command is provided to the interface object 152.

In some implementations, the HMD 150, interface object 152, and camera 808 may themselves be networked devices that connect to the network 812, for example to communicate with the cloud application provider 814. In some implementations, the computer 806 may be a local network device, such as a router, that does not otherwise perform video game processing, but which facilitates passage of network traffic. The connections to the network by the HMD 150, interface object 152, and camera 808 may be wired or wireless.

Additionally, though implementations in the present disclosure may be described with reference to a head-mounted display, it will be appreciated that in other implementations, non-head mounted displays may be substituted, including without limitation, portable device screens (e.g. tablet, smartphone, laptop, etc.) or any other type of display that can be configured to render video and/or provide for display of an interactive scene or virtual environment in accordance with the present implementations.

FIGS. 9A-1 and 9A-2illustrate a head-mounted display (HMD), in accordance with an implementation of the disclosure.FIG. 9A-1in particular illustrates the Playstation® VR headset, which is one example of a HMD in accordance with implementations of the disclosure. As shown, the HMD150includes a plurality of lights900A-H. Each of these lights may be configured to have specific shapes, and can be configured to have the same or different colors. The lights900A,900B,900C, and900D are arranged on the front surface of the HMD150. The lights900E and900F are arranged on a side surface of the HMD150. And the lights900G and900H are arranged at corners of the HMD150, so as to span the front surface and a side surface of the HMD150. It will be appreciated that the lights can be identified in captured images of an interactive environment in which a user uses the HMD150. Based on identification and tracking of the lights, the location and orientation of the HMD150in the interactive environment can be determined. It will further be appreciated that some of the lights may or may not be visible depending upon the particular orientation of the HMD150relative to an image capture device. Also, different portions of lights (e.g. lights900G and900H) may be exposed for image capture depending upon the orientation of the HMD150relative to the image capture device.

In one implementation, the lights can be configured to indicate a current status of the HMD to others in the vicinity. For example, some or all of the lights may be configured to have a certain color arrangement, intensity arrangement, be configured to blink, have a certain on/off configuration, or other arrangement indicating a current status of the HMD150. By way of example, the lights can be configured to display different configurations during active gameplay of a video game (generally gameplay occurring during an active timeline or within a scene of the game) versus other non-active gameplay aspects of a video game, such as navigating menu interfaces or configuring game settings (during which the game timeline or scene may be inactive or paused). The lights might also be configured to indicate relative intensity levels of gameplay. For example, the intensity of lights, or a rate of blinking, may increase when the intensity of gameplay increases. In this manner, a person external to the user may view the lights on the HMD150and understand that the user is actively engaged in intense gameplay, and may not wish to be disturbed at that moment.

The HMD150may additionally include one or more microphones. In the illustrated implementation, the HMD150includes microphones904A and904B defined on the front surface of the HMD150, and microphone904C defined on a side surface of the HMD150. By utilizing an array of microphones, sound from each of the microphones can be processed to determine the location of the sound's source. This information can be utilized in various ways, including exclusion of unwanted sound sources, association of a sound source with a visual identification, etc.

The HMD150may also include one or more image capture devices. In the illustrated implementation, the HMD150is shown to include image capture devices902A and902B. By utilizing a stereoscopic pair of image capture devices, three-dimensional (3D) images and video of the environment can be captured from the perspective of the HMD150. Such video can be presented to the user to provide the user with a “video see-through” ability while wearing the HMD150. That is, though the user cannot see through the HMD150in a strict sense, the video captured by the image capture devices902A and902B (e.g., or one or more external facing (e.g. front facing) cameras disposed on the outside body of the HMD150) can nonetheless provide a functional equivalent of being able to see the environment external to the HMD150as if looking through the HMD150. Such video can be augmented with virtual elements to provide an augmented reality experience, or may be combined or blended with virtual elements in other ways. Though in the illustrated implementation, two cameras are shown on the front surface of the HMD150, it will be appreciated that there may be any number of externally facing cameras installed on the HMD150, oriented in any direction. For example, in another implementation, there may be cameras mounted on the sides of the HMD150to provide additional panoramic image capture of the environment. Additionally, in some implementations, such externally facing cameras can be used to track other peripheral devices (e.g. controllers, etc.). That is, the location/orientation of a peripheral device relative to the HMD can be identified and tracked in captured images from such externally facing cameras on the HMD, and using the known location/orientation of the HMD in the local environment, then the true location/orientation of the peripheral device can be determined.

FIG. 9B illustrates one example of a user 120 of the HMD 150 interfacing with a client system 806, and the client system 806 providing content to a second screen display, which is referred to as a second screen 907. The client system 806 may include integrated electronics for processing the sharing of content from the HMD 150 to the second screen 907. Other implementations may include a separate device, module, or connector that interfaces between the client system and each of the HMD 150 and the second screen 907. In this general example, user 120 is wearing HMD 150 and is playing a video game using a controller, which may also be interface object 152. The interactive play by user 120 will produce video game content (VGC), which is displayed interactively to the HMD 150.

In one implementation, the content being displayed in the HMD 150 is shared to the second screen 907. In one example, a person viewing the second screen 907 can view the content being played interactively in the HMD 150 by user 120. In another implementation, another user (e.g. player 2) can interact with the client system 806 to produce second screen content (SSC). The second screen content produced by a player also interacting with the controller 152 (or any type of user interface, gesture, voice, or input) may be provided as SSC to the client system 806, and can be displayed on the second screen 907 along with the VGC received from the HMD 150.

Accordingly, the interactivity by other users who may be co-located or remote from an HMD user can be social, interactive, and more immersive to both the HMD user and users that may be viewing the content played by the HMD user on a second screen907. As illustrated, the client system806can be connected to the Internet910. The Internet can also provide access to the client system806to content from various content sources920. The content sources920can include any type of content that is accessible over the Internet.

Such content, without limitation, can include video content, movie content, streaming content, social media content, news content, friend content, advertisement content, etc. In one implementation, the client system806can be used to simultaneously process content for an HMD user, such that the HMD is provided with multimedia content associated with the interactivity during gameplay. The client system806can then also provide other content, which may be unrelated to the video game content to the second screen. The client system806can, in one implementation receive the second screen content from one of the content sources920, or from a local user, or a remote user.

FIG. 10conceptually illustrates the function of the HMD150in conjunction with an executing video game or other application, in accordance with an implementation of the disclosure. The executing video game/application is defined by a game/application engine1020which receives inputs to update a game/application state of the video game/application. The game state of the video game can be defined, at least in part, by values of various parameters of the video game which define various aspects of the current gameplay, such as the presence and location of objects, the conditions of a virtual environment, the triggering of events, user profiles, view perspectives, etc.

In the illustrated implementation, the game engine receives, by way of example, controller input 1014, audio input 1016 and motion input 1018. The controller input 1014 may be defined from the operation of a gaming controller separate from the HMD 150, such as a handheld gaming controller (e.g. Sony DUALSHOCK®4 wireless controller, Sony PlayStation®Move motion controller) or interface object 152. By way of example, controller input 1014 may include directional inputs, button presses, trigger activation, movements, gestures, or other kinds of inputs processed from the operation of a gaming controller. In some implementations, the movements of a gaming controller are tracked through an externally facing camera 1011 of the HMD 102, which provides the location/orientation of the gaming controller relative to the HMD 102. The audio input 1016 can be processed from a microphone 1002 of the HMD 150, or from a microphone included in the image capture device 808 or elsewhere in the local environment. The motion input 1018 can be processed from a motion sensor 1000 included in the HMD 150, or from image capture device 808 as it captures images of the HMD 150. The game engine 1020 receives inputs which are processed according to the configuration of the game engine to update the game state of the video game. The game engine 1020 outputs game state data to various rendering modules which process the game state data to define content which will be presented to the user.

In the illustrated implementation, a video rendering module 1022 is defined to render a video stream for presentation on the HMD 150. The video stream may be presented by a display/projector mechanism 1010, and viewed through optics 1008 by the eye 1006 of the user. An audio rendering module 1004 is configured to render an audio stream for listening by the user. In one implementation, the audio stream is output through a speaker 1004 associated with the HMD 150. It should be appreciated that speaker 1004 may take the form of an open air speaker, headphones, or any other kind of speaker capable of presenting audio.

In one implementation, a gaze tracking camera 1012 is included in the HMD 150 to enable tracking of the gaze of the user. The gaze tracking camera captures images of the user's eyes, which are analyzed to determine the gaze direction of the user. In one implementation, information about the gaze direction of the user can be utilized to affect the video rendering. For example, if a user's eyes are determined to be looking in a specific direction, then the video rendering for that direction can be prioritized or emphasized, such as by providing greater detail or faster updates in the region where the user is looking. It should be appreciated that the gaze direction of the user can be defined relative to the head mounted display, relative to a real environment in which the user is situated, and/or relative to a virtual environment that is being rendered on the head mounted display.

Broadly speaking, analysis of images captured by the gaze tracking camera 1012, when considered alone, provides for a gaze direction of the user relative to the HMD 150. However, when considered in combination with the tracked location and orientation of the HMD 150, a real-world gaze direction of the user can be determined, as the location and orientation of the HMD 150 is synonymous with the location and orientation of the user's head. That is, the real-world gaze direction of the user can be determined from tracking the positional movements of the user's eyes and tracking the location and orientation of the HMD 150. When a view of a virtual environment is rendered on the HMD 150, the real-world gaze direction of the user can be applied to determine a virtual world gaze direction of the user in the virtual environment.
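By way of illustration only, the combination of an HMD-relative gaze direction with the tracked HMD orientation may be sketched as a rotation of the gaze vector into world coordinates. The function names, the axis convention (x right, y up, z forward), and the restriction to a yaw-only head rotation are assumptions made for this sketch and are not taken from the disclosure.

```python
import math

def yaw_matrix(yaw_rad):
    """Rotation about the vertical (y) axis. Hypothetical helper; a full
    tracker would supply a complete 3x3 orientation matrix instead."""
    c, s = math.cos(yaw_rad), math.sin(yaw_rad)
    return [[c, 0.0, s],
            [0.0, 1.0, 0.0],
            [-s, 0.0, c]]

def apply_rotation(matrix, vec):
    """Multiply a 3x3 matrix (nested lists) by a 3-vector."""
    return tuple(sum(matrix[r][c] * vec[c] for c in range(3)) for r in range(3))

def world_gaze(hmd_rotation, gaze_in_hmd):
    """Rotate an HMD-relative gaze direction into the real-world frame."""
    return apply_rotation(hmd_rotation, gaze_in_hmd)

# Eyes looking straight ahead in the HMD while the head is turned 90 degrees:
# the real-world gaze points along the world x axis.
gaze = world_gaze(yaw_matrix(math.pi / 2), (0.0, 0.0, 1.0))
```

The same rotation, followed by whatever mapping relates the real environment to the virtual environment, could be used to obtain a virtual world gaze direction.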

Additionally, a tactile feedback module 1026 is configured to provide signals to tactile feedback hardware included in either the HMD 150 or another device operated by the user, such as interface object 152. The tactile feedback may take the form of various kinds of tactile sensations, such as vibration feedback, temperature feedback, pressure feedback, etc. The interface object 152 can include corresponding hardware for rendering such forms of tactile feedback.

With reference to FIG. 11, a diagram illustrating components of a head-mounted display 150 is shown, in accordance with an implementation of the disclosure. The head-mounted display 150 includes a processor 1100 for executing program instructions. A memory 1102 is provided for storage purposes, and may include both volatile and non-volatile memory. A display 1104 is included which provides a visual interface that a user may view. A battery 1106 is provided as a power source for the head-mounted display 150. A motion detection module 1108 may include any of various kinds of motion sensitive hardware, such as a magnetometer 1110, an accelerometer 1112, and a gyroscope 1114.

An accelerometer is a device for measuring acceleration and gravity induced reaction forces. Single and multiple axis models are available to detect magnitude and direction of the acceleration in different directions. The accelerometer is used to sense inclination, vibration, and shock. In one implementation, three accelerometers 1112 are used to provide the direction of gravity, which gives an absolute reference for two angles (world-space pitch and world-space roll).
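As a minimal sketch of how a measured gravity direction may yield the two absolute angles, the following assumes a stationary device whose three-axis reading is (0, 0, +1) when level, with x pointing forward. The axis convention and the function name are assumptions for illustration; an actual device may use different signs.

```python
import math

def pitch_roll_from_gravity(ax, ay, az):
    """Estimate pitch and roll (radians) from a static three-axis
    accelerometer reading. Assumed convention: a level, stationary
    device reads (0, 0, +1). Yaw cannot be recovered from gravity alone."""
    roll = math.atan2(ay, az)
    pitch = math.atan2(-ax, math.hypot(ay, az))
    return pitch, roll

level = pitch_roll_from_gravity(0.0, 0.0, 1.0)   # pitch 0, roll 0
rolled = pitch_roll_from_gravity(0.0, 1.0, 0.0)  # roll of 90 degrees
```

Because rotating the device about the gravity vector leaves the reading unchanged, this estimate provides no yaw information, which is why a separate reference is needed for the third angle.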

A magnetometer measures the strength and direction of the magnetic field in the vicinity of the head-mounted display. In one implementation, three magnetometers 1110 are used within the head-mounted display, ensuring an absolute reference for the world-space yaw angle. In one implementation, the magnetometer is designed to span the Earth's magnetic field, which is ±80 microtesla. Magnetometers are affected by metal, and provide a yaw measurement that is monotonic with actual yaw. The magnetic field may be warped due to metal in the environment, which causes a warp in the yaw measurement. If necessary, this warp can be calibrated using information from other sensors such as the gyroscope or the camera. In one implementation, accelerometer 1112 is used together with magnetometer 1110 to obtain the inclination and azimuth of the head-mounted display 150.
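One common way of combining the two sensors, offered here only as a sketch and not as the method of the disclosure, is a tilt-compensated compass: the magnetometer reading is de-rotated by the pitch and roll obtained from the accelerometer, and the azimuth is taken from the resulting horizontal components. The sketch assumes a north-east-down style convention (x forward, y right, z down) and does not model the metal-induced warp discussed above; the function name is hypothetical.

```python
import math

def tilt_compensated_yaw(mx, my, mz, pitch, roll):
    """Azimuth (radians, 0 = magnetic north, positive toward east) from a
    three-axis magnetometer reading and accelerometer-derived pitch/roll.
    Assumed body axes: x forward, y right, z down."""
    # Horizontal field components after removing pitch and roll.
    x_h = (mx * math.cos(pitch)
           + my * math.sin(pitch) * math.sin(roll)
           + mz * math.sin(pitch) * math.cos(roll))
    y_h = mz * math.sin(roll) - my * math.cos(roll)
    return math.atan2(y_h, x_h)

# Level device facing north: the field lies in the x-z plane, so yaw is 0.
north = tilt_compensated_yaw(0.2, 0.0, 0.4, pitch=0.0, roll=0.0)
# Level device facing east: north now lies along the negative y axis.
east = tilt_compensated_yaw(0.0, -0.2, 0.4, pitch=0.0, roll=0.0)
```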

In some implementations, the magnetometers of the head-mounted display are configured so as to be read during times when electromagnets in other nearby devices are inactive.

A gyroscope is a device for measuring or maintaining orientation, based on the principles of angular momentum. In one implementation, three gyroscopes 1114 provide information about movement across the respective axes (x, y and z) based on inertial sensing. The gyroscopes help in detecting fast rotations. However, the gyroscopes can drift over time without the existence of an absolute reference. This requires resetting the gyroscopes periodically, which can be done using other available information, such as positional/orientation determination based on visual tracking of an object, accelerometer, magnetometer, etc.
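The drift and periodic reset described above can be illustrated with a single-axis sketch. The constant rate bias, the sample interval, and the reset period are hypothetical values chosen for the example, and the absolute reference is simply supplied as a list standing in for a visual-tracking, accelerometer, or magnetometer estimate.

```python
def integrate_with_reset(rates, dt, references, reset_every=None):
    """Integrate gyroscope rate samples (rad/s) into an angle (rad).
    Every `reset_every` samples the angle is replaced by the absolute
    reference for that sample; with reset_every=None it is never reset."""
    angle = 0.0
    history = []
    for i, rate in enumerate(rates, start=1):
        angle += rate * dt
        if reset_every is not None and i % reset_every == 0:
            angle = references[i - 1]
        history.append(angle)
    return history

# A stationary device whose gyroscope reports a small constant bias.
biased_rates = [0.01] * 1000          # rad/s, hypothetical bias
true_angles = [0.0] * 1000            # absolute reference: no rotation

drifting = integrate_with_reset(biased_rates, 0.01, true_angles)
corrected = integrate_with_reset(biased_rates, 0.01, true_angles, reset_every=100)
```

Without resets the accumulated error grows in proportion to elapsed time; with resets it is bounded by the error accumulated over one reset period.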

A camera 1116 is provided for capturing images and image streams of a real environment. More than one camera may be included in the head-mounted display 150, including a camera that is rear-facing (directed away from a user when the user is viewing the display of the head-mounted display 150), and a camera that is front-facing (directed towards the user when the user is viewing the display of the head-mounted display 150). Additionally, a depth camera 1118 may be included in the head-mounted display 150 for sensing depth information of objects in a real environment.

The head-mounted display 150 includes speakers 1120 for providing audio output. Also, a microphone 1122 may be included for capturing audio from the real environment, including sounds from the ambient environment, speech made by the user, etc. The head-mounted display 150 includes tactile feedback module 1124 for providing tactile feedback to the user. In one implementation, the tactile feedback module 1124 is capable of causing movement and/or vibration of the head-mounted display 150 so as to provide tactile feedback to the user.

LEDs 1126 are provided as visual indicators of statuses of the head-mounted display 150. For example, an LED may indicate battery level, power on, etc. A card reader 1128 is provided to enable the head-mounted display 150 to read and write information to and from a memory card. A USB interface 1130 is included as one example of an interface for enabling connection of peripheral devices, or connection to other devices, such as other portable devices, computers, etc. In various implementations of the head-mounted display 150, any of various kinds of interfaces may be included to enable greater connectivity of the head-mounted display 150.

A Wi-Fi module 1132 is included for enabling connection to the Internet or a local area network via wireless networking technologies. Also, the head-mounted display 150 includes a Bluetooth module 1134 for enabling wireless connection to other devices. A communications link 1136 may also be included for connection to other devices. In one implementation, the communications link 1136 utilizes infrared transmission for wireless communication. In other implementations, the communications link 1136 may utilize any of various wireless or wired transmission protocols for communication with other devices.

Input buttons/sensors 1138 are included to provide an input interface for the user. Any of various kinds of input interfaces may be included, such as buttons, touchpad, joystick, trackball, etc. An ultra-sonic communication module 1140 may be included in head-mounted display 150 for facilitating communication with other devices via ultra-sonic technologies.

Bio-sensors 1142 are included to enable detection of physiological data from a user. In one implementation, the bio-sensors 1142 include one or more dry electrodes for detecting bio-electric signals of the user through the user's skin.

A video input 1144 is configured to receive a video signal from a primary processing computer (e.g. main game console) for rendering on the HMD. In some implementations, the video input is an HDMI input.

The foregoing components of head-mounted display 150 have been described as merely exemplary components that may be included in head-mounted display 150. In various implementations of the disclosure, the head-mounted display 150 may or may not include some of the various aforementioned components. Implementations of the head-mounted display 150 may additionally include other components not presently described, but known in the art, for purposes of facilitating aspects of the present disclosure as herein described.

FIG. 12 is a block diagram of a Game System 1200, according to various implementations of the disclosure. Game System 1200 is configured to provide a video stream to one or more Clients 1210 via a Network 1215. Game System 1200 typically includes a Video Server System 1220 and an optional game server 1225. Video Server System 1220 is configured to provide the video stream to the one or more Clients 1210 with a minimal quality of service. For example, Video Server System 1220 may receive a game command that changes the state of or a point of view within a video game, and provide Clients 1210 with an updated video stream reflecting this change in state with minimal lag time. The Video Server System 1220 may be configured to provide the video stream in a wide variety of alternative video formats, including formats yet to be defined. Further, the video stream may include video frames configured for presentation to a user at a wide variety of frame rates. Typical frame rates are 30 frames per second, 60 frames per second, and 120 frames per second, although higher or lower frame rates are included in alternative implementations of the disclosure.

Clients 1210, referred to herein individually as 1210A, 1210B, etc., may include head mounted displays, terminals, personal computers, game consoles, tablet computers, telephones, set top boxes, kiosks, wireless devices, digital pads, stand-alone devices, handheld game playing devices, and/or the like. Typically, Clients 1210 are configured to receive encoded video streams, decode the video streams, and present the resulting video to a user, e.g., a player of a game. The processes of receiving encoded video streams and/or decoding the video streams typically include storing individual video frames in a receive buffer of the Client. The video streams may be presented to the user on a display integral to Client 1210 or on a separate device such as a monitor or television. Clients 1210 are optionally configured to support more than one game player. For example, a game console may be configured to support two, three, four or more simultaneous players. Each of these players may receive a separate video stream, or a single video stream may include regions of a frame generated specifically for each player, e.g., generated based on each player's point of view. Clients 1210 are optionally geographically dispersed. The number of clients included in Game System 1200 may vary widely from one or two to thousands, tens of thousands, or more. As used herein, the term “game player” is used to refer to a person that plays a game and the term “game playing device” is used to refer to a device used to play a game. In some implementations, the game playing device may refer to a plurality of computing devices that cooperate to deliver a game experience to the user. For example, a game console and an HMD may cooperate with the video server system 1220 to deliver a game viewed through the HMD. In one implementation, the game console receives the video stream from the video server system 1220, and the game console forwards the video stream, or updates to the video stream, to the HMD for rendering.
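The receive buffer mentioned above is not detailed in the disclosure. Purely as an illustrative assumption, it may be pictured as a bounded first-in, first-out queue of frames in which the oldest frame is discarded when the buffer is full, so that a slow decoder does not accumulate stale video. The class name, its methods, and the drop-oldest policy are hypothetical.

```python
from collections import deque

class ReceiveBuffer:
    """Bounded FIFO of received video frames (hypothetical sketch).
    When full, appending a new frame silently drops the oldest one."""

    def __init__(self, capacity):
        self._frames = deque(maxlen=capacity)

    def push(self, frame):
        """Store a newly received (encoded or decoded) frame."""
        self._frames.append(frame)

    def pop(self):
        """Return the oldest buffered frame, or None if the buffer is empty."""
        return self._frames.popleft() if self._frames else None

    def __len__(self):
        return len(self._frames)

buffer = ReceiveBuffer(capacity=2)
for frame_id in (1, 2, 3):      # frame 1 is dropped when frame 3 arrives
    buffer.push(frame_id)
next_frame = buffer.pop()       # frame 2 is presented next
```

Other policies, such as blocking the sender or dropping the newest frame, are equally possible; the choice trades latency against completeness of the presented video.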

Clients 1210 are configured to receive video streams via Network 1215. Network 1215 may be any type of communication network including a telephone network, the Internet, wireless networks, powerline networks, local area networks, wide area networks, private networks, and/or the like. In typical implementations, the video streams are communicated via standard protocols, such as TCP/IP or UDP/IP. Alternatively, the video streams are communicated via proprietary standards.