U.S. Pat. No. 10,335,685

STORAGE MEDIUM HAVING STORED THEREIN GAME PROGRAM, INFORMATION PROCESSING APPARATUS, INFORMATION PROCESSING SYSTEM, AND GAME PROCESSING METHOD

Assignee: Nintendo Co., Ltd.

Issue Date: October 31, 2017

Abstract

An exemplary information processing apparatus arranges, in a virtual three-dimensional space, first to fourth objects, a first connection object connecting the first object and the second object, and a second connection object connecting the third object and the fourth object. The information processing apparatus controls actions of the first object and the second object, with restriction being imposed on movements of the first object and the second object based on the first connection object. Further, the information processing apparatus controls actions of the third object and the fourth object, with restriction being imposed on movements of the third object and the fourth object based on the second connection object. A virtual camera is arranged in the virtual three-dimensional space such that at least one of the third object and the fourth object is included in a field-of-view range of the virtual camera.

Description

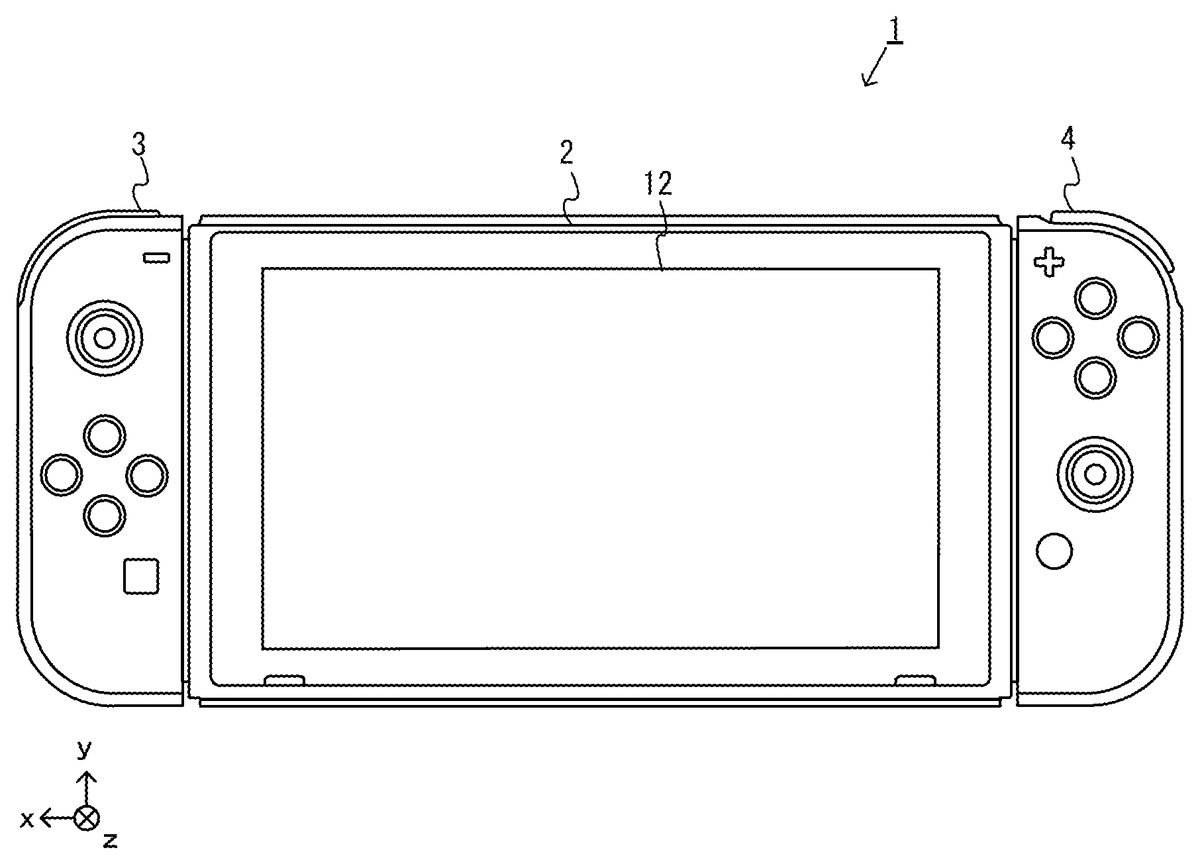

DETAILED DESCRIPTION OF NON-LIMITING EXAMPLE EMBODIMENTS

1. Structure of Information Processing System

A description is given below of an information processing system, an information processing apparatus, a game program, and a game processing method according to an exemplary embodiment. In the exemplary embodiment, an information processing system 1 includes a main body apparatus (information processing apparatus; which functions as a game apparatus main body in the exemplary embodiment) 2, a left controller 3, and a right controller 4. Further, in another form, the information processing system may further include a cradle 5 (see FIGS. 6 and 7 and the like) in addition to the above configuration. In the information processing system 1 according to the exemplary embodiment, the left controller 3 and the right controller 4 are attachable to and detachable from the main body apparatus 2. The information processing system 1 can be used as a unified apparatus obtained by attaching each of the left controller 3 and the right controller 4 to the main body apparatus 2. Further, the main body apparatus 2, the left controller 3, and the right controller 4 can also be used as separate bodies (see FIG. 2). Further, the information processing system 1 can be used in the form in which an image is displayed on the main body apparatus 2, and in the form in which an image is displayed on another display device such as a television. In the first form, the information processing system 1 can be used as a mobile apparatus (e.g., a mobile game apparatus). Further, in the second form, the information processing system 1 can be used as a stationary apparatus (e.g., a stationary game apparatus).

FIG. 1 is a diagram showing the state where the left controller 3 and the right controller 4 are attached to the main body apparatus 2 in an example of the information processing system 1 according to the exemplary embodiment. As shown in FIG. 1, the information processing system 1 includes the main body apparatus 2, the left controller 3, and the right controller 4. Each of the left controller 3 and the right controller 4 is attached to and unified with the main body apparatus 2. The main body apparatus 2 is an apparatus for performing various processes (e.g., game processing) in the information processing system 1. The main body apparatus 2 includes a display 12. Each of the left controller 3 and the right controller 4 is an apparatus including operation sections with which a user provides inputs.

FIG. 2 is a diagram showing an example of the state where each of the left controller 3 and the right controller 4 is detached from the main body apparatus 2. As shown in FIGS. 1 and 2, the left controller 3 and the right controller 4 are attachable to and detachable from the main body apparatus 2. It should be noted that hereinafter, the left controller 3 and the right controller 4 will occasionally be referred to collectively as a “controller”. Further, in the exemplary embodiment, an “operation device” operated by a single user may be a single controller (e.g., one of the left controller 3 and the right controller 4) or a plurality of controllers (e.g., both the left controller 3 and the right controller 4, or these controllers and another controller). That is, the “operation device” can be configured by one or more controllers. A description is given below of examples of the specific configurations of the main body apparatus 2, the left controller 3, and the right controller 4.

FIG. 3 is six orthogonal views showing an example of the main body apparatus 2. As shown in FIG. 3, the main body apparatus 2 includes an approximately plate-shaped housing 11. In the exemplary embodiment, a main surface (in other words, a surface on a front side, i.e., a surface on which the display 12 is provided) of the housing 11 has a generally rectangular shape. In the exemplary embodiment, the housing 11 has a horizontally long shape. That is, in the exemplary embodiment, the longitudinal direction of the main surface of the housing 11 (i.e., an x-axis direction shown in FIG. 1) is referred to as a “horizontal direction” (also as a “left-right direction”), the short direction of the main surface (i.e., a y-axis direction shown in FIG. 1) is referred to as a “vertical direction” (also as an “up-down direction”), and a direction perpendicular to the main surface (i.e., a z-axis direction shown in FIG. 1) is referred to as a “depth direction” (also as a “front-back direction”). The main body apparatus 2 can be used in the orientation in which the main body apparatus 2 is horizontally long. Further, the main body apparatus 2 can also be used in the orientation in which the main body apparatus 2 is vertically long. In this case, the housing 11 may be considered as having a vertically long shape.

It should be noted that the shape and the size of the housing 11 are optional. As an example, the housing 11 may have a portable size. Further, the main body apparatus 2 alone or the unified apparatus obtained by attaching the left controller 3 and the right controller 4 to the main body apparatus 2 may function as a mobile apparatus. The main body apparatus 2 or the unified apparatus may function as a handheld apparatus or a portable apparatus.

As shown in FIG. 3, the main body apparatus 2 includes the display 12, which is provided on the main surface of the housing 11. The display 12 displays an image (a still image or a moving image) acquired or generated by the main body apparatus 2. In the exemplary embodiment, the display 12 is a liquid crystal display device (LCD). The display 12, however, may be a display device of any type.

Further, the main body apparatus 2 includes a touch panel 13 on a screen of the display 12. In the exemplary embodiment, the touch panel 13 is of a type that allows a multi-touch input (e.g., a capacitive type). The touch panel 13, however, may be of any type. For example, the touch panel 13 may be of a type that allows a single-touch input (e.g., a resistive type).

The main body apparatus 2 includes speakers (i.e., speakers 88 shown in FIG. 8) within the housing 11. As shown in FIG. 3, speaker holes 11a and 11b are formed on the main surface of the housing 11. Then, sounds output from the speakers 88 are output through the speaker holes 11a and 11b.

Further, the main body apparatus 2 includes a left terminal 17 for the main body apparatus 2 to perform wired communication with the left controller 3, and a right terminal 21 for the main body apparatus 2 to perform wired communication with the right controller 4.

As shown in FIG. 3, the main body apparatus 2 includes a slot 23. The slot 23 is provided on an upper side surface of the housing 11. The slot 23 is so shaped as to allow a predetermined type of storage medium to be attached to the slot 23. The predetermined type of storage medium is, for example, a dedicated storage medium (e.g., a dedicated memory card) for the information processing system 1 and an information processing apparatus of the same type as the information processing system 1. The predetermined type of storage medium is used to store, for example, data (e.g., saved data of an application or the like) used by the main body apparatus 2 and/or a program (e.g., a program for an application or the like) executed by the main body apparatus 2. Further, the main body apparatus 2 includes a power button 28 and sound volume buttons 26a and 26b.

The main body apparatus 2 includes a lower terminal 27. The lower terminal 27 is a terminal for the main body apparatus 2 to communicate with the cradle 5, which will be described later. In the exemplary embodiment, the lower terminal 27 is a USB connector (more specifically, a female connector).

FIG. 4 is six orthogonal views showing an example of the left controller 3. As shown in FIG. 4, the left controller 3 includes a housing 31. In the exemplary embodiment, the housing 31 is approximately plate-shaped. In the exemplary embodiment, the housing 31 has a vertically long shape, i.e., is shaped to be long in the up-down direction (i.e., the y-axis direction shown in FIG. 1). In the state where the left controller 3 is detached from the main body apparatus 2, the left controller 3 can also be held in the orientation in which the left controller 3 is vertically long. The housing 31 has such a shape and a size that when held in the orientation in which the housing 31 is vertically long, the housing 31 can be held with one hand, particularly the left hand. Further, the left controller 3 can also be held in the orientation in which the left controller 3 is horizontally long. When held in the orientation in which the left controller 3 is horizontally long, the left controller 3 may be held with both hands.

The left controller 3 includes an analog stick 32. As shown in FIG. 4, the analog stick 32 is provided on a main surface of the housing 31. The analog stick 32 can be used as a direction input section with which a direction can be input. The user tilts the analog stick 32 and thereby can input a direction corresponding to the direction of the tilt (and input a magnitude corresponding to the angle of the tilt). It should be noted that the left controller 3 may include a directional pad, a slide stick that allows a slide input, or the like as the direction input section, instead of the analog stick. Further, in the exemplary embodiment, it is possible to provide an input by pressing the analog stick 32.
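As an illustration of how a tilt can yield both a direction and a magnitude, the following is a minimal sketch. It assumes the stick reports normalized x/y deflections in the range -1 to 1; the function name `stick_input` and the `deadzone` parameter are hypothetical and are not taken from the embodiment.

```python
import math


def stick_input(x: float, y: float, deadzone: float = 0.1) -> tuple[float, float]:
    """Convert a stick deflection into (direction in degrees, magnitude).

    x and y are assumed to be normalized deflections in [-1, 1].
    The deadzone is an assumption added to ignore tiny deflections.
    """
    # Magnitude corresponds to how far the stick is tilted, clamped to 1.
    magnitude = min(1.0, math.hypot(x, y))
    if magnitude < deadzone:
        return 0.0, 0.0
    # Direction corresponds to the direction of the tilt, measured
    # counterclockwise from the positive x-axis, in [0, 360).
    direction = math.degrees(math.atan2(y, x)) % 360.0
    return direction, magnitude
```

For example, a full upward tilt `stick_input(0.0, 1.0)` gives a direction of 90 degrees and a magnitude of 1.0, while a deflection inside the assumed deadzone is reported as no input.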

The left controller 3 includes various operation buttons. First, the left controller 3 includes four operation buttons 33 to 36 (specifically, a right direction button 33, a down direction button 34, an up direction button 35, and a left direction button 36) on the main surface of the housing 31. Further, the left controller 3 includes a record button 37 and a “−” (minus) button 47. The left controller 3 includes a first L-button 38 and a ZL-button 39 in an upper left portion of a side surface of the housing 31. Further, the left controller 3 includes a second L-button 43 and a second R-button 44, on the side surface of the housing 31 on which the left controller 3 is attached to the main body apparatus 2. These operation buttons are used to give instructions depending on various programs (e.g., an OS program and an application program) executed by the main body apparatus 2.

Further, the left controller 3 includes a terminal 42 for the left controller 3 to perform wired communication with the main body apparatus 2.

FIG. 5 is six orthogonal views showing an example of the right controller 4. As shown in FIG. 5, the right controller 4 includes a housing 51. In the exemplary embodiment, the housing 51 has a vertically long shape, i.e., is shaped to be long in the up-down direction. In the state where the right controller 4 is detached from the main body apparatus 2, the right controller 4 can also be held in the orientation in which the right controller 4 is vertically long. The housing 51 has such a shape and a size that when held in the orientation in which the housing 51 is vertically long, the housing 51 can be held with one hand, particularly the right hand. Further, the right controller 4 can also be held in the orientation in which the right controller 4 is horizontally long. When held in the orientation in which the right controller 4 is horizontally long, the right controller 4 may be held with both hands.

Similarly to the left controller 3, the right controller 4 includes an analog stick 52 as a direction input section. In the exemplary embodiment, the analog stick 52 has the same configuration as that of the analog stick 32 of the left controller 3. Further, the right controller 4 may include a directional pad, a slide stick that allows a slide input, or the like, instead of the analog stick. Further, similarly to the left controller 3, the right controller 4 includes four operation buttons 53 to 56 (specifically, an A-button 53, a B-button 54, an X-button 55, and a Y-button 56) on a main surface of the housing 51. Further, the right controller 4 includes a “+” (plus) button 57 and a home button 58. Further, the right controller 4 includes a first R-button 60 and a ZR-button 61 in an upper right portion of a side surface of the housing 51. Further, similarly to the left controller 3, the right controller 4 includes a second L-button 65 and a second R-button 66.

Further, the right controller 4 includes a terminal 64 for the right controller 4 to perform wired communication with the main body apparatus 2.

FIG. 6 is a diagram showing the overall configuration of another example of the information processing system according to the exemplary embodiment. As shown in FIG. 6, as an example, the unified apparatus obtained by attaching the left controller 3 and the right controller 4 to the main body apparatus 2 can be mounted on the cradle 5. Further, as yet another example, only the main body apparatus 2 can also be mounted on the cradle 5 in the state where the left controller 3 and the right controller 4 are detached from the main body apparatus 2. Further, the cradle 5 can communicate (through wired communication or wireless communication) with a stationary monitor 6 (e.g., a stationary television), which is an example of an external display device separate from the display 12. Although the details will be described later, when the unified apparatus or the main body apparatus 2 alone is mounted on the cradle 5, the information processing system can display on the stationary monitor 6 an image acquired or generated by the main body apparatus 2. Further, in the exemplary embodiment, the cradle 5 has the function of charging the unified apparatus or the main body apparatus 2 alone mounted on the cradle 5. Further, the cradle 5 has the function of a hub device (specifically, a USB hub).

FIG. 7 is a diagram showing an example of the external configuration of the cradle 5. The cradle 5 includes a housing on which the unified apparatus or the main body apparatus 2 alone can be detachably mounted (or attached). In the exemplary embodiment, as shown in FIG. 7, the housing includes a first supporting portion 71, in which a groove 71a is formed, and an approximately planar second supporting portion 72.

As shown in FIG. 7, the groove 71a formed in the first supporting portion 71 has a shape corresponding to the shape of a lower portion of the unified apparatus. Specifically, the groove 71a is so shaped as to allow the lower portion of the unified apparatus to be inserted into the groove 71a, and more specifically, is so shaped as to approximately coincide with the lower portion of the main body apparatus 2. Thus, the lower portion of the unified apparatus is inserted into the groove 71a, whereby it is possible to mount the unified apparatus on the cradle 5. Further, the second supporting portion 72 supports a front surface of the unified apparatus (i.e., the surface on which the display 12 is provided) of which the lower portion is inserted into the groove 71a. With the second supporting portion 72, the cradle 5 can support the unified apparatus more stably. It should be noted that the shape of the housing shown in FIG. 7 is merely illustrative. In another exemplary embodiment, the housing of the cradle 5 may have any shape that allows the main body apparatus 2 to be mounted on the housing.

Further, as shown in FIG. 7, the cradle 5 includes a main body terminal 73 for the cradle 5 to communicate with the unified apparatus. The main body terminal 73 is provided on a bottom surface of the groove 71a, which is formed in the first supporting portion 71. More specifically, the main body terminal 73 is provided at the position where the lower terminal 27 of the main body apparatus 2 comes into contact with the main body terminal 73 when the unified apparatus is attached to the cradle 5. In the exemplary embodiment, the main body terminal 73 is a USB connector (more specifically, a male connector).

Although not shown in FIG. 7, the cradle 5 includes terminals on a back surface of the housing (in the exemplary embodiment, a plurality of terminals, specifically, a monitor terminal 132, a power supply terminal 134, and extension terminals 137, which are shown in FIG. 10). The details of these terminals will be described later.

FIG. 8 is a block diagram showing an example of the internal configuration of the main body apparatus 2. The main body apparatus 2 includes components 81 to 98 shown in FIG. 8 in addition to the components shown in FIG. 3. Some of the components 81 to 98 may be mounted as electronic components on an electronic circuit board and accommodated in the housing 11.

The main body apparatus 2 includes a CPU (Central Processing Unit) 81. The CPU 81 is an information processing section for executing various types of information processing to be executed by the main body apparatus 2. The CPU 81 executes an information processing program (e.g., a game program) stored in a storage section (specifically, an internal storage medium such as a flash memory 84, an external storage medium attached to the slot 23, or the like), thereby performing the various types of information processing.

The main body apparatus 2 includes a flash memory 84 and a DRAM (Dynamic Random Access Memory) 85 as examples of internal storage media built into the main body apparatus 2. The flash memory 84 and the DRAM 85 are connected to the CPU 81. The flash memory 84 is a memory mainly used to store various data (or programs) to be saved in the main body apparatus 2. The DRAM 85 is a memory used to temporarily store various data used for information processing.

The main body apparatus 2 includes a slot interface (hereinafter abbreviated as “I/F”) 91. The slot I/F 91 is connected to the CPU 81. The slot I/F 91 is connected to the slot 23, and in accordance with an instruction from the CPU 81, reads and writes data from and to the predetermined type of storage medium (e.g., a dedicated memory card) attached to the slot 23.

The CPU 81 appropriately reads and writes data from and to the flash memory 84, the DRAM 85, and each of the above storage media, thereby performing the above information processing.

The main body apparatus 2 includes a network communication section 82. The network communication section 82 is connected to the CPU 81. The network communication section 82 communicates (specifically, through wireless communication) with an external apparatus via a network. In the exemplary embodiment, as a first communication form, the network communication section 82 connects to a wireless LAN and communicates with an external apparatus, using a method compliant with the Wi-Fi standard. Further, as a second communication form, the network communication section 82 wirelessly communicates with another main body apparatus 2 of the same type, using a predetermined communication method (e.g., communication based on a unique protocol or infrared light communication). It should be noted that the wireless communication in the above second communication form achieves the function of enabling so-called “local communication” in which the main body apparatus 2 can wirelessly communicate with another main body apparatus 2 placed in a closed local network area, and the plurality of main body apparatuses 2 directly communicate with each other to transmit and receive data.

The main body apparatus 2 includes a controller communication section 83. The controller communication section 83 is connected to the CPU 81. The controller communication section 83 wirelessly communicates with the left controller 3 and/or the right controller 4. The communication method between the main body apparatus 2 and the left controller 3 and the right controller 4 is optional. In the exemplary embodiment, the controller communication section 83 performs communication compliant with the Bluetooth (registered trademark) standard with the left controller 3 and with the right controller 4.

The CPU 81 is connected to the left terminal 17, the right terminal 21, and the lower terminal 27. When performing wired communication with the left controller 3, the CPU 81 transmits data to the left controller 3 via the left terminal 17 and also receives operation data from the left controller 3 via the left terminal 17. Further, when performing wired communication with the right controller 4, the CPU 81 transmits data to the right controller 4 via the right terminal 21 and also receives operation data from the right controller 4 via the right terminal 21. Further, when communicating with the cradle 5, the CPU 81 transmits data to the cradle 5 via the lower terminal 27. As described above, in the exemplary embodiment, the main body apparatus 2 can perform both wired communication and wireless communication with each of the left controller 3 and the right controller 4. Further, when the unified apparatus obtained by attaching the left controller 3 and the right controller 4 to the main body apparatus 2 or the main body apparatus 2 alone is attached to the cradle 5, the main body apparatus 2 can output data (e.g., image data or sound data) to the stationary monitor 6 via the cradle 5.

Here, the main body apparatus 2 can communicate with a plurality of left controllers 3 simultaneously (in other words, in parallel). Further, the main body apparatus 2 can communicate with a plurality of right controllers 4 simultaneously (in other words, in parallel). Thus, the user can provide inputs to the main body apparatus 2 using a plurality of left controllers 3 and a plurality of right controllers 4.

The main body apparatus 2 includes a touch panel controller 86, which is a circuit for controlling the touch panel 13. The touch panel controller 86 is connected between the touch panel 13 and the CPU 81. Based on a signal from the touch panel 13, the touch panel controller 86 generates, for example, data indicating the position where a touch input is provided. Then, the touch panel controller 86 outputs the data to the CPU 81.

Further, the display 12 is connected to the CPU 81. The CPU 81 displays a generated image (e.g., an image generated by executing the above information processing) and/or an externally acquired image on the display 12.

The main body apparatus 2 includes a codec circuit 87 and speakers (specifically, a left speaker and a right speaker) 88. The codec circuit 87 is connected to the speakers 88 and a sound input/output terminal 25 and also connected to the CPU 81. The codec circuit 87 is a circuit for controlling the input and output of sound data to and from the speakers 88 and the sound input/output terminal 25. That is, if receiving sound data from the CPU 81, the codec circuit 87 outputs sound signals obtained by performing D/A conversion on the sound data to the speakers 88 or the sound input/output terminal 25. Consequently, sounds are output from the speakers 88 or a sound output section (e.g., earphones) connected to the sound input/output terminal 25. Further, if receiving a sound signal from the sound input/output terminal 25, the codec circuit 87 performs A/D conversion on the sound signal and outputs sound data in a predetermined format to the CPU 81. Further, the sound volume buttons 26 are connected to the CPU 81. Based on an input to the sound volume buttons 26, the CPU 81 controls the volume of sounds output from the speakers 88 or the sound output section.

The main body apparatus 2 includes a power control section 97 and a battery 98. The power control section 97 is connected to the battery 98 and the CPU 81. Further, although not shown in FIG. 8, the power control section 97 is connected to components of the main body apparatus 2 (specifically, components that receive power supplied from the battery 98, the left terminal 17, and the right terminal 21). Based on a command from the CPU 81, the power control section 97 controls the supply of power from the battery 98 to the above components.

Further, the battery 98 is connected to the lower terminal 27. When an external charging device (e.g., the cradle 5) is connected to the lower terminal 27, and power is supplied to the main body apparatus 2 via the lower terminal 27, the battery 98 is charged with the supplied power.

FIG. 9 is a block diagram showing an example of the internal configuration of the information processing system 1. It should be noted that the details of the internal configuration of the main body apparatus 2 in the information processing system 1 are shown in FIG. 8 and therefore are omitted in FIG. 9.

The left controller 3 includes a communication control section 101, which communicates with the main body apparatus 2. As shown in FIG. 9, the communication control section 101 is connected to components including the terminal 42. In the exemplary embodiment, the communication control section 101 can communicate with the main body apparatus 2 through both wired communication via the terminal 42 and wireless communication not via the terminal 42. The communication control section 101 controls the method for communication performed by the left controller 3 with the main body apparatus 2. That is, when the left controller 3 is attached to the main body apparatus 2, the communication control section 101 communicates with the main body apparatus 2 via the terminal 42. Further, when the left controller 3 is detached from the main body apparatus 2, the communication control section 101 wirelessly communicates with the main body apparatus 2 (specifically, the controller communication section 83). The wireless communication between the communication control section 101 and the controller communication section 83 is performed in accordance with the Bluetooth (registered trademark) standard, for example.
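The selection between the two communication paths described above can be sketched as follows. This is only an illustrative sketch; the names `Transport` and `select_transport` are hypothetical, and how the attached state is actually detected is not specified here.

```python
from enum import Enum


class Transport(Enum):
    """The two communication paths available to a controller."""
    WIRED = "wired communication via the terminal"
    WIRELESS = "wireless communication (e.g., Bluetooth)"


def select_transport(attached_to_main_body: bool) -> Transport:
    """Pick the communication path based on the attachment state.

    Attached: communicate via the terminal (wired).
    Detached: communicate wirelessly with the main body apparatus.
    """
    return Transport.WIRED if attached_to_main_body else Transport.WIRELESS
```

In this sketch, `select_transport(True)` returns `Transport.WIRED` and `select_transport(False)` returns `Transport.WIRELESS`.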

Further, the left controller 3 includes a memory 102 such as a flash memory. The communication control section 101 includes, for example, a microcomputer (or a microprocessor) and executes firmware stored in the memory 102, thereby performing various processes.

The left controller 3 includes buttons 103 (specifically, the buttons 33 to 39, 43, and 44). Further, the left controller 3 includes the analog stick (“stick” in FIG. 9) 32. Each of the buttons 103 and the analog stick 32 outputs information regarding an operation performed on itself to the communication control section 101 repeatedly at appropriate timing.

The left controller 3 includes an acceleration sensor 104. In the exemplary embodiment, the acceleration sensor 104 detects the magnitudes of linear accelerations along predetermined three axial (e.g., XYZ axes shown in FIG. 11) directions. It should be noted that the acceleration sensor 104 may detect an acceleration along one axial direction or accelerations along two axial directions. Further, the left controller 3 includes an angular velocity sensor 105. In the exemplary embodiment, the angular velocity sensor 105 detects angular velocities about predetermined three axes (e.g., the XYZ axes shown in FIG. 11). It should be noted that the angular velocity sensor 105 may detect an angular velocity about one axis or angular velocities about two axes. Each of the acceleration sensor 104 and the angular velocity sensor 105 is connected to the communication control section 101. Then, the detection results by the acceleration sensor 104 and the angular velocity sensor 105 are output to the communication control section 101 repeatedly at appropriate timing.

The communication control section 101 acquires information regarding an input (specifically, information regarding an operation or the detection result of the sensor) from each of input sections (specifically, the buttons 103, the analog stick 32, and the sensors 104 and 105). The communication control section 101 transmits operation data including the acquired information (or information obtained by performing predetermined processing on the acquired information) to the main body apparatus 2. It should be noted that the operation data is transmitted repeatedly, once every predetermined time. It should be noted that the interval at which the information regarding an input is transmitted from each of the input sections to the main body apparatus 2 may or may not be the same.
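One way to picture such operation data is as a fixed-layout record that is serialized before each transmission. The following sketch is an assumption for illustration only: the field layout, the `OperationData` class, the `pack`/`unpack` helpers, and the byte format are all hypothetical and are not described in the embodiment.

```python
import struct
from dataclasses import dataclass

# Hypothetical wire format: little-endian, one 16-bit button bit field
# followed by eight 32-bit floats (stick x/y, acceleration, angular velocity).
_FORMAT = "<H8f"


@dataclass(frozen=True)
class OperationData:
    buttons: int                                  # one bit per button (assumed)
    stick: tuple[float, float]                    # analog stick x, y
    acceleration: tuple[float, float, float]      # acceleration sensor result
    angular_velocity: tuple[float, float, float]  # angular velocity sensor result


def pack(data: OperationData) -> bytes:
    """Serialize one operation data record for transmission."""
    return struct.pack(_FORMAT, data.buttons, *data.stick,
                       *data.acceleration, *data.angular_velocity)


def unpack(payload: bytes) -> OperationData:
    """Rebuild the record on the receiving (main body) side."""
    v = struct.unpack(_FORMAT, payload)
    return OperationData(v[0], (v[1], v[2]), (v[3], v[4], v[5]), (v[6], v[7], v[8]))
```

With this assumed layout each record occupies 34 bytes (2 bytes of buttons plus 8 floats of 4 bytes each). A controller-side loop would call `pack` once every predetermined time and hand the bytes to whichever communication path is active.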

The above operation data is transmitted to the main body apparatus 2, whereby the main body apparatus 2 can obtain inputs provided to the left controller 3. That is, the main body apparatus 2 can determine operations on the buttons 103 and the analog stick 32 based on the operation data. Further, the main body apparatus 2 can calculate information regarding the motion and/or the orientation of the left controller 3 based on the operation data (specifically, the detection results of the acceleration sensor 104 and the angular velocity sensor 105).
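The embodiment does not state how the orientation is calculated from the two sensors. As one commonly used possibility, and purely as an assumption, a complementary filter can blend the integrated angular velocity with the tilt implied by the measured gravity direction. The function name, the single-axis simplification, and the `alpha` weight below are hypothetical.

```python
import math


def update_pitch(pitch: float, gyro_rate: float, accel_y: float,
                 accel_z: float, dt: float, alpha: float = 0.98) -> float:
    """Return a new pitch estimate (radians) about a single axis.

    pitch      : previous estimate in radians
    gyro_rate  : angular velocity about the axis in rad/s
    accel_y/z  : acceleration components used to infer tilt from gravity
    dt         : time since the previous operation data, in seconds
    alpha      : weight given to the gyro path (assumed tuning value)
    """
    # Short-term estimate: integrate the angular velocity.
    gyro_pitch = pitch + gyro_rate * dt
    # Long-term reference: tilt implied by the direction of gravity.
    accel_pitch = math.atan2(accel_y, accel_z)
    # Blend the two so gyro drift is slowly corrected by the accelerometer.
    return alpha * gyro_pitch + (1.0 - alpha) * accel_pitch
```

Calling `update_pitch` once per received operation data record keeps a running estimate; a full implementation would track all three axes, which this sketch does not attempt.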

The left controller 3 includes a vibrator 107 for giving notification to the user by a vibration. In the exemplary embodiment, the vibrator 107 is controlled by a command from the main body apparatus 2. That is, if receiving the above command from the main body apparatus 2, the communication control section 101 drives the vibrator 107 in accordance with the received command. Here, the left controller 3 includes a codec section 106. If receiving the above command, the communication control section 101 outputs a control signal corresponding to the command to the codec section 106. The codec section 106 generates a driving signal for driving the vibrator 107 by amplifying the control signal from the communication control section 101 and outputs the driving signal to the vibrator 107. Consequently, the vibrator 107 operates.

More specifically, the vibrator107is a linear vibration motor. Unlike a regular motor that rotationally moves, the linear vibration motor is driven in a predetermined direction in accordance with an input voltage and therefore can be vibrated at an amplitude and a frequency corresponding to the waveform of the input voltage. In the exemplary embodiment, a vibration control signal transmitted from the main body apparatus2to the left controller3may be a digital signal representing the frequency and the amplitude every unit of time. In another exemplary embodiment, information indicating the waveform itself may be transmitted. The transmission of only the amplitude and the frequency, however, enables a reduction in the amount of communication data. Additionally, to further reduce the amount of data, only the differences between the numerical values of the amplitude and the frequency at that time and the previous values may be transmitted, instead of the numerical values. In this case, the codec section106converts a digital signal indicating the values of the amplitude and the frequency acquired from the communication control section101into the waveform of an analog voltage and inputs a voltage in accordance with the resulting waveform, thereby driving the vibrator107. Thus, the main body apparatus2changes the amplitude and the frequency to be transmitted every unit of time and thereby can control the amplitude and the frequency at which the vibrator107is to be vibrated at that time. It should be noted that not only a single amplitude and a single frequency, but also two or more amplitudes and two or more frequencies may be transmitted from the main body apparatus2to the left controller3. In this case, the codec section106combines waveforms indicated by the plurality of received amplitudes and frequencies and thereby can generate the waveform of a voltage for controlling the vibrator107.
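The data-reduction idea described above (sending an amplitude and a frequency per unit of time, optionally as differences from the previous values, and combining several amplitude/frequency pairs into one drive waveform) can be sketched as follows. This is only an illustrative sketch: the function names, the sine-wave model, and the 8000 Hz sample rate are assumptions and are not taken from the embodiment.

```python
import math
from typing import List, Tuple

# One (amplitude, frequency_hz) pair per unit of time. Hypothetical representation.
Sample = Tuple[float, float]


def delta_encode(samples: List[Sample]) -> List[Sample]:
    """Replace each pair by its difference from the previous pair (first pair vs. zero)."""
    encoded = []
    prev_amp, prev_freq = 0.0, 0.0
    for amp, freq in samples:
        encoded.append((amp - prev_amp, freq - prev_freq))
        prev_amp, prev_freq = amp, freq
    return encoded


def delta_decode(deltas: List[Sample]) -> List[Sample]:
    """Rebuild absolute amplitude/frequency values by accumulating the differences."""
    decoded = []
    amp, freq = 0.0, 0.0
    for d_amp, d_freq in deltas:
        amp += d_amp
        freq += d_freq
        decoded.append((amp, freq))
    return decoded


def synthesize(components: List[Sample], duration_s: float,
               sample_rate_hz: int = 8000) -> List[float]:
    """Sum one sine wave per (amplitude, frequency) pair for a single unit of time."""
    n = round(duration_s * sample_rate_hz)
    return [
        sum(a * math.sin(2.0 * math.pi * f * i / sample_rate_hz) for a, f in components)
        for i in range(n)
    ]


if __name__ == "__main__":
    stream = [(10, 160), (12, 160), (12, 320)]
    print(delta_encode(stream))
    print(len(synthesize([(1.0, 160.0), (0.5, 320.0)], 0.005)))
```

When the values change slowly, most of the differences are small or zero, which is where the saving in communication data would come from.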

The left controller 3 includes a power supply section 108. In the exemplary embodiment, the power supply section 108 includes a battery and a power control circuit. Although not shown in FIG. 7, the power control circuit is connected to the battery and also connected to components of the left controller 3 (specifically, components that receive power supplied from the battery).

As shown inFIG. 9, the right controller4includes a communication control section111, which communicates with the main body apparatus2. Further, the right controller4includes a memory112, which is connected to the communication control section111. The communication control section111is connected to components including the terminal64. The communication control section111and the memory112have functions similar to those of the communication control section101and the memory102, respectively, of the left controller3. Thus, the communication control section111can communicate with the main body apparatus2through both wired communication via the terminal64and wireless communication not via the terminal64(specifically, communication compliant with the Bluetooth (registered trademark) standard). The communication control section111controls the method for communication performed by the right controller4with the main body apparatus2.

The right controller4includes input sections similar to the input sections (specifically, buttons113, the analog stick52, an acceleration sensor114, and an angular velocity sensor115) of the left controller3. Specifically, the right controller4includes buttons113, the analog stick52, and inertial sensors (an acceleration sensor114and an angular velocity sensor115). These input sections have functions similar to those of the input sections of the left controller3and operate similarly to the input sections of the left controller3.

Further, the right controller 4 includes a vibrator 117 and a codec section 116. The vibrator 117 and the codec section 116 operate similarly to the vibrator 107 and the codec section 106, respectively, of the left controller 3. That is, in accordance with a command from the main body apparatus 2, the communication control section 111 causes the vibrator 117 to operate, using the codec section 116.

The right controller 4 includes a power supply section 118. The power supply section 118 has a function similar to that of the power supply section 108 of the left controller 3 and operates similarly to the power supply section 108.

FIG. 10 is a block diagram showing an example of the internal configuration of the cradle 5. It should be noted that the details of the internal configuration of the main body apparatus 2 are shown in FIG. 8 and therefore are omitted in FIG. 10.

As shown inFIG. 10, the cradle5includes a conversion section131and a monitor terminal132. The conversion section131is connected to the main body terminal73and the monitor terminal132. The conversion section131converts the formats of signals of an image (or video) and a sound received from the main body apparatus2into formats in which the image and the sound are output to the stationary monitor6. Here, in the exemplary embodiment, the main body apparatus2outputs signals of an image and a sound as display port signals (i.e., signals compliant with the DisplayPort standard) to the cradle5. Further, in the exemplary embodiment, as the communication between the cradle5and the stationary monitor6, communication based on the HDMI (registered trademark) standard is used. That is, the monitor terminal132is an HDMI terminal, and the cradle5and the stationary monitor6are connected together by an HDMI cable. Then, the conversion section131converts the display port signals (specifically, the signals representing the video and the sound) received from the main body apparatus2via the main body terminal73into HDMI signals. The converted HDMI signals are output to the stationary monitor6via the monitor terminal132.

The cradle5includes a power control section133and a power supply terminal134. The power supply terminal134is a terminal for connecting a charging device (e.g., an AC adapter or the like) (not shown). In the exemplary embodiment, an AC adapter is connected to the power supply terminal134, and mains electricity is supplied to the cradle5. When the main body apparatus2is attached to the cradle5, the power control section133supplies power from the power supply terminal134to the main body apparatus2via the main body terminal73. Consequently, the battery98of the main body apparatus2is charged.

Further, the cradle5includes a connection processing section136and extension terminals137. Each of the extension terminals137is a terminal for connecting to another apparatus. In the exemplary embodiment, the cradle5includes a plurality of (more specifically, three) USB terminals as the extension terminals137. The connection processing section136is connected to the main body terminal73and the extension terminals137. The connection processing section136has a function as a USB hub and for example, manages the communication between an apparatus connected to each of the extension terminals137and the main body apparatus2connected to the main body terminal73(i.e., transmits a signal from a certain apparatus to another apparatus by appropriately distributing the signal). As described above, in the exemplary embodiment, the information processing system1can communicate with another apparatus via the cradle5. It should be noted that the connection processing section136may be able to change the communication speed, or supply power to the apparatus connected to the extension terminal137.

As described above, in the information processing system 1 according to the exemplary embodiment, the left controller 3 and the right controller 4 are attachable to and detachable from the main body apparatus 2. Further, the unified apparatus obtained by attaching the left controller 3 and the right controller 4 to the main body apparatus 2, or the main body apparatus 2 alone, is attached to the cradle 5 and thereby can output an image (and a sound) to the stationary monitor 6. A description is given below using the information processing system in a use form in which an image (and a sound) is output to the stationary monitor 6 by attaching the main body apparatus 2 alone to the cradle 5 in the state where the left controller 3 and the right controller 4 are detached from the main body apparatus 2.

As described above, in the exemplary embodiment, the information processing system1can also be used in the state where the left controller3and the right controller4are detached from the main body apparatus2(referred to as a “separate state”). As a form in a case where an operation is performed on the same application (e.g., a game application) using the information processing system1in the separate state, a form in which a single user uses both the left controller3and the right controller4is possible. It should be noted that when a plurality of users perform operations using the same application in this use form, a form is possible in which a plurality of sets of the left controller3and the right controller4are prepared, and each user uses one of the plurality of sets.

FIGS. 11 to 13are diagrams showing an example of the state where a single user uses the information processing system1by holding a set of the left controller3and the right controller4in the separate state. As shown inFIGS. 11 to 13, in the separate state, the user can view an image displayed on the stationary monitor6while operating the left controller3and the right controller4by holding the left controller3with their left hand and the right controller4with their right hand.

For example, in the exemplary embodiment, the user holds the left controller 3 with their left hand such that the down direction of the longitudinal direction of the left controller 3 (the down direction (the negative y-axis direction) shown in FIG. 1), which is vertically long and approximately plate-shaped, is the vertical direction, the side surface (the side surface on which a slider 40 is provided) that is in contact with the main body apparatus 2 when the left controller 3 is attached to the main body apparatus 2 is directed forward, and the main surface of the left controller 3 (the surface on which the analog stick 32 is provided) is directed to the right. Further, the user holds the right controller 4 with their right hand such that the down direction of the longitudinal direction of the right controller 4 (the down direction (the negative y-axis direction) shown in FIG. 1), which is vertically long and approximately plate-shaped, is the vertical direction, the side surface (the side surface on which the slider 40 is provided) that is in contact with the main body apparatus 2 when the right controller 4 is attached to the main body apparatus 2 is directed forward, and the main surface of the right controller 4 (the surface on which the analog stick 52 is provided) is directed to the left. In the state where the left controller 3 is held with the left hand, and the right controller 4 is held with the right hand (hereinafter, the orientations of the left controller 3 and the right controller 4 held in these directions will occasionally be referred to as "basic orientations"), each controller is moved in up, down, left, right, front, and back directions, rotated, or swung, whereby game play is performed in accordance with the motion or the orientation of the controller.

It should be noted that to facilitate the understanding of the directions of accelerations and angular velocities generated in the left controller3, a front direction in the above holding state (the direction from a round side surface to the side surface in contact with the main body apparatus2, and the negative x-axis direction shown inFIG. 1) is defined as a positive X-axis direction. A right direction in the above holding state (the direction from a back surface to the main surface, and the negative z-axis direction shown inFIG. 1) is defined as a positive Y-axis direction. An up direction in the above holding state (the direction toward the up direction of the longitudinal direction, and the positive y-axis direction shown inFIG. 1) is defined as a positive Z-axis direction. Then, the acceleration sensor104of the left controller3can detect accelerations in the XYZ-axis directions, and the angular velocity sensor105can detect angular velocities about the XYZ-axis directions. Further, to facilitate the understanding of the directions of accelerations and angular velocities generated in the right controller4, a front direction in the above holding state (the direction from a round side surface to the side surface in contact with the main body apparatus2, and the positive x-axis direction shown inFIG. 1) is defined as a positive X-axis direction. A right direction in the above holding state (the direction from the main surface to a back surface, and the positive z-axis direction shown inFIG. 1) is defined as a positive Y-axis direction. An up direction in the above holding state (the direction toward the up direction of the longitudinal direction, and the positive y-axis direction shown inFIG. 1) is defined as a positive Z-axis direction. Then, the acceleration sensor114of the right controller4can detect accelerations in the XYZ-axis directions, and the angular velocity sensor115can detect angular velocities about the XYZ-axis directions.
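The axis definitions above amount to a fixed relabeling of the FIG. 1 axes (lowercase x, y, z) into the holding-state axes (uppercase X, Y, Z) for each controller. A minimal sketch of that relabeling is given below, assuming a direction or acceleration vector expressed in the FIG. 1 axes; the function name and the string controller identifiers are hypothetical, and the sign handling of angular velocities is not addressed here.

```python
from typing import Tuple

Vec3 = Tuple[float, float, float]


def to_holding_axes(v_fig1: Vec3, controller: str) -> Vec3:
    """Relabel a vector from the FIG. 1 (x, y, z) axes to the holding-state (X, Y, Z) axes.

    Left controller:  +X = -x (front), +Y = -z (right), +Z = +y (up).
    Right controller: +X = +x (front), +Y = +z (right), +Z = +y (up).
    """
    x, y, z = v_fig1
    if controller == "left":
        return (-x, -z, y)
    if controller == "right":
        return (x, z, y)
    raise ValueError("controller must be 'left' or 'right'")


if __name__ == "__main__":
    # A push along the FIG. 1 negative x-axis is a forward push for the left controller.
    print(to_holding_axes((-1.0, 0.0, 0.0), "left"))
    # A push along the FIG. 1 positive x-axis is a forward push for the right controller.
    print(to_holding_axes((1.0, 0.0, 0.0), "right"))
```

With this relabeling, a forward push yields a positive X component for either hand, which is why the later description can speak of swinging "in the positive X-axis direction" for both controllers.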

2. Outline of Game Operation

Next, an outline of a game operation according to the exemplary embodiment is described with reference to FIGS. 14 to 20. In the exemplary embodiment, four objects, namely, first to fourth player objects, to be operated by players (in other words, users of the information processing system) appear in a three-dimensional game space. In the exemplary embodiment, each of four users operates one of the player objects. Specifically, a first user operates the first player object, a second user operates the second player object, a third user operates the third player object, and a fourth user operates the fourth player object. Although the details will be described later, a game of the exemplary embodiment is a competition game, and the respective player objects are separated into two groups to compete. Here, the first player object and the second player object form a first group (a friend group for the first player object), the third player object and the fourth player object form a second group (an enemy group for the first player object), and the first group and the second group compete against each other. A description is given below of the game operation in a case where the first user operates the first player object by using the left controller 3 and the right controller 4.

FIGS. 14 to 19are diagrams showing examples of a game image displayed in a game played by moving the left controller3and the right controller4. As shown inFIG. 14, in this exemplary game, an image of a game (e.g., a boxing game) in which a first player object P1and a second player object (not shown) compete against a third player object P3and a fourth player object P4is displayed on the stationary monitor6.

The user operating the left controller 3 and the right controller 4 can operate the first player object P1 by swinging the main body of the left controller 3 and/or the main body of the right controller 4, or changing the orientations of the main body of the left controller 3 and/or the main body of the right controller 4. For example, the user swings the left controller 3 and thereby can control the action of a left-fist object G1, which represents a left glove (a left fist) of the first player object P1. The user swings the right controller 4 and thereby can control the action of a right-fist object G2, which represents a right glove (a right fist) of the first player object P1. Specifically, when the user performs the operation of swinging so as to throw a left punch using the left hand holding the left controller 3, the left-fist object G1, which represents the left glove of the first player object P1, moves toward the place where the third player object P3 or the fourth player object P4 as an enemy object is placed. Further, when the user performs the operation of swinging so as to throw a right punch using the right hand holding the right controller 4, the right-fist object G2, which represents the right glove of the first player object P1, moves toward the place where the enemy object is placed (see FIGS. 15 and 16).

For example, when the right controller4is swung so as to be pushed forward (in the positive X-axis direction of the right controller4) in the state where neither of the left controller3and the right controller4moves (the state shown inFIG. 14), then as shown inFIG. 15, the right-fist object G2of the first player object P1moves toward the enemy object in accordance with the motion of the right controller4in the state where an effect image Ie1is provided. Consequently, a game image is displayed such that the first player object P1throws a right punch at the enemy object.

Here, the moving direction of the left-fist object G1starting moving is set by the orientation of the left controller3when the left controller3is swung so as to be pushed forward. Further, the moving direction of the right-fist object G2starting moving is set by the orientation of the right controller4when the right controller4is moved so as to be pushed forward. For example, when the right controller4moves in the positive X-axis direction as shown inFIG. 15, a moving direction D1of the right-fist object G2is set in accordance with the orientation in a roll direction of the right controller4in this movement. As an example, in the exemplary embodiment, in the period in which the right controller4moves, the tilt in the Y-axis direction of the right controller4with respect to the direction in which a gravitational acceleration acts in real space is calculated, and the moving direction D1of the right-fist object G2is calculated based on the tilt in the Y-axis direction. Specifically, when the tilt in the Y-axis direction indicates that the right controller4is in the orientation in which the right controller4roll-rotates in the right direction with respect to the above reference orientation, the right-fist object G2moves in the right direction in a virtual space. Further, when the tilt in the Y-axis direction indicates that the right controller4is in the orientation in which the right controller4roll-rotates in the left direction with respect to the reference orientation, the right-fist object G2moves in the left direction in the virtual space. Then, the angle at which the moving direction shifts in the right direction or the left direction is calculated in accordance with the tilt angle in the Y-axis direction.
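One way to picture the calculation above is to derive a roll tilt angle from the direction of gravity expressed in the controller's X, Y, Z axes and to turn that angle into a left/right offset of the initial moving direction. The sketch below is an assumption-laden illustration: the function names, the sign convention (a positive Y component of gravity meaning a roll to the right), the gain, and the clamp limit are all hypothetical and are not specified in the embodiment.

```python
import math
from typing import Tuple


def roll_tilt_rad(gravity_xyz: Tuple[float, float, float]) -> float:
    """Tilt of the controller's Y-axis relative to gravity, in radians.

    In the basic orientation gravity points along -Z, giving a tilt of 0.
    Assumed convention: gravity leaning toward +Y means a roll to the right.
    """
    _, g_y, g_z = gravity_xyz
    return math.atan2(g_y, -g_z)


def initial_punch_direction(gravity_xyz: Tuple[float, float, float],
                            gain: float = 1.0,
                            max_offset_rad: float = math.radians(60)) -> Tuple[float, float]:
    """Return a unit direction (right, forward) in the virtual space."""
    offset = gain * roll_tilt_rad(gravity_xyz)
    offset = max(-max_offset_rad, min(max_offset_rad, offset))
    return (math.sin(offset), math.cos(offset))


if __name__ == "__main__":
    print(initial_punch_direction((0.0, 0.0, -1.0)))   # no roll: straight ahead
    print(initial_punch_direction((0.0, 0.5, -0.5)))   # rolled right: veers right
    print(initial_punch_direction((0.0, -0.5, -0.5)))  # rolled left: veers left
```

The angle-to-offset mapping is linear here only for simplicity; the description states merely that the shift angle is calculated in accordance with the tilt angle.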

In this exemplary game, even when the distance between the first player object P1and the enemy object is relatively long in the virtual space, it is possible to throw a punch. The arms of the first player object P1extend, whereby the left-fist object G1and the right-fist object G2can move by a relatively long distance. Then, the left-fist object G1or the right-fist object G2collides with another object (e.g., the enemy object) or moves by a predetermined distance, then finishes the movement, and returns to a movement start position where the left-fist object G1or the right-fist object G2starts moving (e.g., a hand portion of the first player object P1shown inFIG. 14). The left-fist object G1and the right-fist object G2return to the movement start positions and thereby can make a next movement toward the enemy object. In other words, it is possible to throw a next punch. Thus, the time from when the left-fist object G1or the right-fist object G2starts moving from the movement start position to when the left-fist object G1or the right-fist object G2returns to the movement start position again is longer than in a general boxing game.

In this exemplary game, when the left-fist object G1 and/or the right-fist object G2 move in the virtual space, the controllers for operating the objects vibrate. For example, when the left-fist object G1 moves in accordance with the fact that the left controller 3 is swung so as to be pushed forward, the left controller 3 vibrates in accordance with the fact that the left-fist object G1 moves in the virtual space. Further, when the right-fist object G2 moves in accordance with the fact that the right controller 4 is swung so as to be pushed forward, the right controller 4 vibrates in accordance with the fact that the right-fist object G2 moves in the virtual space (the state in FIG. 15). Specifically, when the left controller 3 and/or the right controller 4 are swung so as to be pushed forward, the main body apparatus 2 generates outward vibration data in accordance with the types, the moving velocities, the moving directions, and the like of the left-fist object G1 and/or the right-fist object G2 moving in the virtual space, and transmits the outward vibration data to the left controller 3 and/or the right controller 4. Further, when the left-fist object G1 and/or the right-fist object G2 collide with another object, the main body apparatus 2 generates vibration data indicating vibrations corresponding to the collision and transmits the vibration data to the left controller 3 and/or the right controller 4. Further, when the left-fist object G1 and/or the right-fist object G2 move on homeward paths for returning to the movement start positions, the main body apparatus 2 generates homeward vibration data in accordance with the types, the moving velocities, the moving directions, and the like of the left-fist object G1 and/or the right-fist object G2 moving on the homeward paths and transmits the homeward vibration data to the left controller 3 and/or the right controller 4. Consequently, the left controller 3 and/or the right controller 4 receiving various vibration data vibrate based on the vibration data.

In this exemplary game, even while the left-fist object G1 or the right-fist object G2 is moving, it is possible, using the movement time of the left-fist object G1 or the right-fist object G2 (typically, the period in which the left-fist object G1 or the right-fist object G2 is moving in the direction of the enemy object), to change its trajectory in accordance with the orientation or the motion of the left controller 3 or the right controller 4. For example, when the left controller 3 or the right controller 4 rotates in the roll direction or rotates in a yaw direction from the orientation of the left controller 3 or the right controller 4 when the left-fist object G1 or the right-fist object G2 starts moving, the trajectory of the left-fist object G1 or the right-fist object G2 is changed in accordance with the rotation. For example, in the example shown in FIG. 16, after the right controller 4 is swung so as to be pushed forward in the positive X-axis direction, the moving direction of the right-fist object G2 changes to D2 in accordance with the fact that the right controller 4 rotationally moves in the left roll direction (θx in FIG. 16) during the movement of the right-fist object G2. Further, when the moving direction of the right-fist object G2 changes, an effect image Ie2 is provided for the right-fist object G2.

As an example, in the exemplary embodiment, in the state where the rotational velocity (the angular velocity) about the X-axis of the left controller3or the right controller4after the left-fist object G1or the right-fist object G2starts moving is the rotation in the roll direction, the trajectory of the left-fist object G1or the right-fist object G2moving based on this rotational velocity about the X-axis is changed. Specifically, when the rotational velocity of the left controller3roll-rotating in the right direction about the X-axis while the left-fist object G1is moving is obtained, the trajectory of the left-fist object G1is changed in the right direction in the virtual space. When the rotational velocity of the left controller3roll-rotating in the left direction about the X-axis is obtained, the trajectory of the left-fist object G1is changed in the left direction in the virtual space. Further, when the rotational velocity of the right controller4roll-rotating in the right direction about the X-axis while the right-fist object G2is moving is obtained, the trajectory of the right-fist object G2is changed in the right direction in the virtual space. When the rotational velocity of the right controller4roll-rotating in the left direction about the X-axis is obtained, the trajectory of the right-fist object G2is changed in the left direction in the virtual space.

As another example, in the exemplary embodiment, in the state where the rotational velocity (the angular velocity) of the left controller3or the right controller4with respect to the direction of gravity in real space after the left-fist object G1or the right-fist object G2starts moving is the rotation in the yaw direction, the trajectory of the left-fist object G1or the right-fist object G2moving based on this rotational velocity about the direction of gravity is changed. Specifically, when the rotational velocity of the left controller3yaw-rotating in the right direction about the direction of gravity while the left-fist object G1is moving is obtained, the trajectory of the left-fist object G1is changed in the right direction in the virtual space. When the rotational velocity of the left controller3yaw-rotating in the left direction about the direction of gravity is obtained, the trajectory of the left-fist object G1is changed in the left direction in the virtual space. Further, when the rotational velocity of the right controller4yaw-rotating in the right direction about the direction of gravity while the right-fist object G2is moving is obtained, the trajectory of the right-fist object G2is changed in the right direction in the virtual space. When the rotational velocity of the right controller4yaw-rotating in the left direction about the direction of gravity is obtained, the trajectory of the right-fist object G2is changed in the left direction in the virtual space.
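The two trajectory-change examples above (roll about the X-axis and yaw about the direction of gravity) can both be read as a per-frame adjustment of the fist object's heading driven by an angular velocity. The following sketch assumes scalar rates in which a positive value means a rotation to the right; the function names, the gains, and the frame time are hypothetical choices made only for illustration.

```python
import math
from typing import Tuple


def steer(heading_rad: float, roll_rate: float, yaw_rate: float, dt: float,
          roll_gain: float = 0.5, yaw_gain: float = 0.5) -> float:
    """Update the heading of a moving fist object for one frame.

    heading_rad: 0 is straight ahead, positive is to the right (assumed convention).
    roll_rate / yaw_rate: angular velocities in rad/s, positive = rotating right.
    """
    return heading_rad + (roll_gain * roll_rate + yaw_gain * yaw_rate) * dt


def advance(position: Tuple[float, float], heading_rad: float,
            speed: float, dt: float) -> Tuple[float, float]:
    """Move the fist object along its current heading; position is (right, forward)."""
    right, forward = position
    return (right + speed * math.sin(heading_rad) * dt,
            forward + speed * math.cos(heading_rad) * dt)


if __name__ == "__main__":
    pos, heading = (0.0, 0.0), 0.0
    for frame in range(30):
        # Hypothetical input: the controller roll-rotates left during frames 10 to 19.
        roll = -2.0 if 10 <= frame < 20 else 0.0
        heading = steer(heading, roll, 0.0, dt=1 / 60)
        pos = advance(pos, heading, speed=10.0, dt=1 / 60)
    print(pos)  # the path has curved to the left (negative 'right' coordinate)
```

Because the heading persists after the rotation stops, the fist keeps flying along the changed direction rather than snapping back, which matches the curved trajectory toward D2 suggested by FIG. 16.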

As described above, when the trajectories of the left-fist object G1 and/or the right-fist object G2 change, the vibrations of the left controller 3 and/or the right controller 4 also change. For example, when the outward trajectories of the left-fist object G1 and/or the right-fist object G2 change, the main body apparatus 2 calculates change parameters for the amplitudes and/or the frequencies of the vibrations in accordance with changes in the motions or the orientations of the left controller 3 and/or the right controller 4 (e.g., the angular velocities in the roll direction or the yaw direction), temporarily changes the outward vibration data using the change parameters, and then restores the outward vibration data. Consequently, receiving the outward vibration data that temporarily changes, the left controller 3 and/or the right controller 4 change the amplitudes and/or the frequencies of the vibrations based on the outward vibration data.
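A temporary change of this kind can be sketched as a function that leaves the base outward vibration untouched while the controller is not rotating and scales it while a rotation is detected. Everything concrete in the sketch (the function name, the threshold, and the linear gains) is an assumption chosen for illustration; the embodiment states only that change parameters are calculated from changes in motion or orientation.

```python
from typing import Tuple


def modulate_outward_vibration(base_amp: float, base_freq_hz: float,
                               angular_rate: float,
                               threshold: float = 0.5,
                               amp_gain: float = 0.1,
                               freq_gain: float = 5.0) -> Tuple[float, float]:
    """Return the (amplitude, frequency) to transmit for the current unit of time.

    While |angular_rate| (rad/s, roll or yaw) is below the threshold, the base
    values are sent unchanged; above it, both are raised in proportion to the rate.
    Once the rotation stops, the base values are sent again.
    """
    rate = abs(angular_rate)
    if rate < threshold:
        return (base_amp, base_freq_hz)
    return (base_amp * (1.0 + amp_gain * rate), base_freq_hz + freq_gain * rate)


if __name__ == "__main__":
    # Hypothetical per-frame angular rates: still, rotating, still again.
    for rate in (0.0, 2.0, 3.0, 0.0):
        print(modulate_outward_vibration(0.5, 160.0, rate))
```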

Further, in this exemplary game, using the magnitude of an acceleration generated in the left controller3or the right controller4, it is determined whether or not the left controller3or the right controller4is swung. Then, when it is determined that the left controller3is swung in the positive X-axis direction in the state where the left-fist object G1is placed at the movement start position (hereinafter referred to as a “first movement-start-allowed state”), the left-fist object G1starts moving from the movement start position toward the enemy object. Further, when it is determined that the right controller4is swung in the positive X-axis direction in the state where the right-fist object G2is placed at the movement start position (hereinafter referred to as a “second movement-start-allowed state”), the right-fist object G2starts moving from the movement start position toward the enemy object. In the exemplary embodiment, however, even when the left controller3is not in the first movement-start-allowed state, but when the left controller3enters the first movement-start-allowed state within a predetermined time from when it is determined that the left controller3is swung in the positive X-axis direction, it is possible to start the movement of the left-fist object G1from the movement start position toward the enemy object in accordance with the operation of swinging the left controller3. Further, even when the right controller4is not in the second movement-start-allowed state, but when the right controller4enters the second movement-start-allowed state within a predetermined time from when it is determined that the right controller4is swung in the positive X-axis direction, it is possible to start the movement of the right-fist object G2from the movement start position toward the enemy object in accordance with the operation of swinging the right controller4. 
As described above, in the exemplary embodiment, even when the left controller 3 and/or the right controller 4 are not in the first movement-start-allowed state and/or the second movement-start-allowed state, swinging the left controller 3 and/or the right controller 4 makes it possible to give an instruction to start the movements of the left-fist object G1 and/or the right-fist object G2. Thus, it is possible to facilitate an operation even in a game where the state in which an operation instruction can be given occurs only intermittently. That is, as described above, in the exemplary game, the time from when the left-fist object G1 or the right-fist object G2 starts moving from the movement start position to when it returns to the movement start position again is longer than that in a general boxing game. Thus, a user who cannot wait for the first movement-start-allowed state or the second movement-start-allowed state may swing the left controller 3 or the right controller 4 before that state is reached. Even when such a preceding operation is performed, it is possible to aid the preceding operation without invalidating it and to make use of it for a game operation.
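The handling of a preceding swing described above resembles a small input buffer: a detected swing is remembered for a predetermined time and is consumed as soon as the fist object is back at its movement start position. The sketch below illustrates this for one fist; the class and function names, the acceleration threshold, and the buffer length in frames are hypothetical.

```python
import math
from typing import Tuple


def is_swing(accel_xyz: Tuple[float, float, float], threshold: float = 2.0) -> bool:
    """Treat a large acceleration with a forward (+X) component as a swing."""
    magnitude = math.sqrt(sum(a * a for a in accel_xyz))
    return accel_xyz[0] > 0 and magnitude >= threshold


class SwingBuffer:
    """Per-fist state: remembers a swing until the fist may start moving."""

    def __init__(self, buffer_frames: int = 15) -> None:
        self.buffer_frames = buffer_frames  # the 'predetermined time', in frames
        self.at_start = True                # movement-start-allowed state
        self.frames_left = 0                # remaining lifetime of a buffered swing

    def on_returned(self) -> None:
        """Call when the fist object has returned to its movement start position."""
        self.at_start = True

    def update(self, swing_detected: bool) -> bool:
        """Advance one frame; return True if the fist starts moving this frame."""
        if swing_detected:
            self.frames_left = self.buffer_frames
        if self.frames_left <= 0:
            return False
        if self.at_start:
            self.at_start = False
            self.frames_left = 0
            return True
        self.frames_left -= 1
        return False


if __name__ == "__main__":
    fist = SwingBuffer(buffer_frames=3)
    print(fist.update(is_swing((3.0, 0.0, 0.0))))  # starts immediately
    print(fist.update(is_swing((3.0, 0.0, 0.0))))  # fist is away: swing is buffered
    fist.on_returned()
    print(fist.update(False))                       # buffered swing is used now
```

If the fist does not return within the buffer length, the remembered swing simply expires, so a stale swing does not trigger an unexpected punch later.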

In this exemplary game, as shown in FIG. 17, the left-fist object G1 and the right-fist object G2 are caused to simultaneously start moving from the movement start positions, whereby a predetermined action is performed. For example, when, within a predetermined period from when one of the left-fist object G1 and the right-fist object G2 starts moving, the other starts moving, a “both-hand punch action” is started in which the left-fist object G1 and the right-fist object G2 are a set. Here, in the “both-hand punch action”, the state where a collision area A is formed between the left-fist object G1 and the right-fist object G2 moving in the virtual space is represented as a game image, and the left-fist object G1 and the right-fist object G2 move toward the enemy object in the state where the collision area A is formed. Then, when the left-fist object G1 or the right-fist object G2 that is moving, or the collision area A, collides with the enemy object, a predetermined action is performed in which damage greater than that in the case where the left-fist object G1 or the right-fist object G2 alone collides with the enemy object is imparted to the enemy object. In the exemplary embodiment, in the “both-hand punch action”, when the left-fist object G1, the right-fist object G2, or the collision area A collides with the enemy object, the action of throwing the enemy object is performed. The “both-hand punch action” may be an action of putting the enemy object out of action. It should be noted that even during the execution of the “both-hand punch action”, the trajectories of the movements of the left-fist object G1 and/or the right-fist object G2 can be changed in accordance with the orientations or the motions of the left controller 3 and/or the right controller 4. Thus, by changing the trajectories of the movements of the left-fist object G1 and/or the right-fist object G2, it is also possible to change the range of the collision area A. Thus, it is possible to make a more strategic attack on the enemy object.
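The start condition for the both-hand punch action can be sketched as a comparison of the frames at which the two fist objects started moving. In the Python sketch below, the function names, the window length, and the damage values are all hypothetical. The description only requires that the period be predetermined and that the both-hand damage exceed the single-punch damage.

```python
from typing import Optional

BOTH_HAND_WINDOW_FRAMES = 4   # assumed length of the "predetermined period"
SINGLE_PUNCH_DAMAGE = 10      # assumed value
BOTH_HAND_DAMAGE = 25         # assumed value; only needs to exceed the single punch


def is_both_hand_punch(left_start: Optional[int],
                       right_start: Optional[int],
                       window: int = BOTH_HAND_WINDOW_FRAMES) -> bool:
    """True if both fists started moving within `window` frames of each other.

    Each argument is the frame at which that fist started moving,
    or None if it has not started.
    """
    if left_start is None or right_start is None:
        return False
    return abs(left_start - right_start) <= window


def damage_on_hit(both_hand: bool) -> int:
    """Damage imparted to the enemy object when a hit lands."""
    return BOTH_HAND_DAMAGE if both_hand else SINGLE_PUNCH_DAMAGE
```

For example, under these assumed values, fists started at frames 100 and 103 would form a both-hand punch, while fists started at frames 100 and 110 would be treated as two separate punches.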

As described above, in the exemplary embodiment, the operation display mode in the case where the actions of both the left-fist object G1 and the right-fist object G2 are performed (FIG. 17) is different from the operation display mode in the case where the punching action of one of the left-fist object G1 and the right-fist object G2 is performed (FIG. 15). That is, in the latter case, an effect image Ie2 is provided for the first object performing the action. In the former case, an effect image (i.e., the collision area A) different from the effect image Ie2 is provided for the first object. Thus, which action is being performed can be presented in an easy-to-understand manner to the user.

Even when the left-fist object G1 and the right-fist object G2 move in the state where such an action is performed, the left controller 3 and/or the right controller 4 vibrate in accordance with the movements of the objects. Specifically, when the left-fist object G1 and the right-fist object G2 move by the both-hand punch action, the main body apparatus 2 generates action outward vibration data in accordance with the types, the moving velocities, the moving directions, and the like of the left-fist object G1 and the right-fist object G2 moving in the virtual space and transmits the action outward vibration data to the left controller 3 and the right controller 4. Further, when the collision area A, the left-fist object G1, or the right-fist object G2 collides with another object, the main body apparatus 2 generates vibration data indicating a vibration corresponding to the collision and transmits the vibration data to the left controller 3 and the right controller 4. Further, when the left-fist object G1 and the right-fist object G2 move on the homeward paths for returning to the movement start positions, the main body apparatus 2 generates homeward vibration data in accordance with the types, the moving velocities, the moving directions, and the like of the left-fist object G1 and the right-fist object G2 moving on the homeward paths and transmits the homeward vibration data to the left controller 3 and the right controller 4. Consequently, even when the both-hand punch action is performed, the left controller 3 and the right controller 4 receiving various vibration data vibrate based on the vibration data.

Further, when the trajectories of the left-fist object G1 and the right-fist object G2 change during the execution of the both-hand punch action, the vibrations of the left controller 3 and the right controller 4 also change. For example, when the outward trajectories of the left-fist object G1 and the right-fist object G2 change during the execution of the both-hand punch action, the main body apparatus 2 calculates change parameters for the amplitudes and/or the frequencies of the vibrations in accordance with the changes in the motions or the orientations of the left controller 3 and/or the right controller 4, temporarily changes the action outward vibration data using the change parameters, and then restores the action outward vibration data. Consequently, receiving the action outward vibration data that temporarily changes, the left controller 3 and the right controller 4 change the amplitudes and/or the frequencies of the vibrations based on the action outward vibration data.
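The temporary change of the action outward vibration data can be illustrated as a gain that is applied for a short time and then dropped. The Python sketch below is one possible reading only. The `Vibration` type, the mapping from orientation change to gains in `change_parameters`, and the duration `CHANGE_FRAMES` are assumptions introduced for the example. The description does not specify how the change parameters are computed.

```python
from dataclasses import dataclass
from typing import Optional, Tuple

CHANGE_FRAMES = 6  # assumed duration of the temporary change


@dataclass(frozen=True)
class Vibration:
    """Hypothetical vibration sample: amplitude (0..1) and frequency (Hz)."""
    amplitude: float
    frequency: float


def change_parameters(orientation_delta_deg: float) -> Tuple[float, float]:
    """Map a change in controller orientation to (amplitude gain, frequency gain).

    Assumed mapping: gains grow linearly with the change, capped at 90 degrees.
    """
    magnitude = min(abs(orientation_delta_deg) / 90.0, 1.0)
    return 1.0 + 0.5 * magnitude, 1.0 + 0.25 * magnitude


def outward_vibration(base: Vibration,
                      frames_since_change: Optional[int],
                      gains: Tuple[float, float]) -> Vibration:
    """Return the vibration to transmit this frame.

    While the trajectory change is recent, the base data is scaled by the
    change parameters; afterwards the unmodified base data is used again.
    """
    if frames_since_change is not None and 0 <= frames_since_change < CHANGE_FRAMES:
        amp_gain, freq_gain = gains
        return Vibration(base.amplitude * amp_gain, base.frequency * freq_gain)
    return base
```

Keeping the base data untouched and deriving the changed data per frame means that changing back requires no extra bookkeeping, which is why this form was chosen for the sketch.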

In this exemplary game, it is possible to move the first player object P1 in the virtual space in accordance with the motions and/or the orientations of both the left controller 3 and the right controller 4. For example, when both the left controller 3 and the right controller 4 rotate in the pitch direction or the roll direction in real space, the first player object P1 is caused to move in accordance with the tilts of the rotations (e.g., θx shown in FIG. 18). Specifically, the tilts in the X-axis direction and the Y-axis direction of the left controller 3 and the tilts in the X-axis direction and the Y-axis direction of the right controller 4 with respect to the direction of gravity in real space are calculated. Then, based on these tilts, when it is determined that both the left controller 3 and the right controller 4 are in the orientations in which the left controller 3 and the right controller 4 are tilted forward, the first player object P1 is caused to move forward in the virtual space by the amount of movement corresponding to the angles at which both the left controller 3 and the right controller 4 are tilted forward (e.g., the average value of the tilt angle of the left controller 3 and the tilt angle of the right controller 4). In the exemplary embodiment, the first player object P1 moves in a direction with respect to the front direction of the first player object P1. That is, in the above case, the first player object P1 moves in the front direction of the first player object P1. In another exemplary embodiment, the first player object P1 may move in a direction with respect to a direction in accordance with a line-of-sight direction of a virtual camera (i.e., a depth direction of the screen).

Further, based on these tilts, when it is determined that both the left controller 3 and the right controller 4 are in the orientations in which the left controller 3 and the right controller 4 are tilted backward, the first player object P1 is caused to move backward (i.e., backward with respect to the front direction of the first player object P1) in the virtual space by the amount of movement corresponding to the angles at which both the left controller 3 and the right controller 4 are tilted backward (e.g., the average value of these angles). Further, based on these tilts, when it is determined that both the left controller 3 and the right controller 4 are in the orientations in which the left controller 3 and the right controller 4 are tilted to the left, the first player object P1 is caused to move to the left (i.e., to the left with respect to the front direction of the first player object P1) in the virtual space by the amount of movement corresponding to the angles at which both the left controller 3 and the right controller 4 are tilted to the left (e.g., the average value of these angles) (see FIG. 18). Further, based on these tilts, when it is determined that both the left controller 3 and the right controller 4 are in the orientations in which the left controller 3 and the right controller 4 are tilted to the right, the first player object P1 is caused to move to the right (i.e., to the right with respect to the front direction of the first player object P1) in the virtual space by the amount of movement corresponding to the angles at which both the left controller 3 and the right controller 4 are tilted to the right (e.g., the average value of these angles).
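The tilt-based movement described above can be sketched as follows. The Python example is illustrative and rests on several assumptions not stated in the description: signed angles in degrees (positive pitch meaning forward, positive roll meaning right), a dead zone `DEAD_ZONE_DEG`, a linear scale `SPEED_PER_DEG`, and a facing yaw measured from the +Z axis toward the +X axis. Only the use of the average of the two tilt angles and movement relative to the front direction of the player object come from the text.

```python
import math
from typing import Tuple

DEAD_ZONE_DEG = 5.0    # assumed minimum tilt before movement starts
SPEED_PER_DEG = 0.01   # assumed movement per degree of average tilt


def _axis(left_deg: float, right_deg: float) -> float:
    """Movement along one axis; both controllers must tilt the same way."""
    both_positive = left_deg > DEAD_ZONE_DEG and right_deg > DEAD_ZONE_DEG
    both_negative = left_deg < -DEAD_ZONE_DEG and right_deg < -DEAD_ZONE_DEG
    if both_positive or both_negative:
        # Amount of movement follows the average of the two tilt angles.
        return SPEED_PER_DEG * (left_deg + right_deg) / 2.0
    return 0.0


def movement_from_tilts(left_pitch: float, left_roll: float,
                        right_pitch: float, right_roll: float) -> Tuple[float, float]:
    """Return (rightward, forward) movement in the player object's local frame."""
    return _axis(left_roll, right_roll), _axis(left_pitch, right_pitch)


def to_world(local_right: float, local_forward: float,
             facing_yaw_rad: float) -> Tuple[float, float]:
    """Rotate a local movement into world (x, z) using the facing direction."""
    fx, fz = math.sin(facing_yaw_rad), math.cos(facing_yaw_rad)   # front vector
    rx, rz = math.cos(facing_yaw_rad), -math.sin(facing_yaw_rad)  # right vector
    return (local_right * rx + local_forward * fx,
            local_right * rz + local_forward * fz)
```

To move relative to the line-of-sight direction of the virtual camera instead, as in the other exemplary embodiment, the camera yaw could be passed to `to_world` in place of the facing yaw of the player object.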

In the exemplary game, it is possible to cause the first player object P1 to perform a guard action in accordance with the motions and/or the orientations of both the left controller 3 and the right controller 4. While performing the guard action, the first player object P1 is not damaged even if a punch of another object hits the first player object P1. However, the first player object P1 cannot avoid, even with the guard action, the throw action due to the both-hand punch action of another player object (that is, the first player object P1, even during the guard action, is thrown by the both-hand punch action of the other player object). In addition, while performing the guard action, the first player object P1 cannot throw a punch, and cannot perform the both-hand punch action.

In the exemplary embodiment, based on the tilts described above, when it is determined that both the left controller 3 and the right controller 4 are in the orientations in which the left controller 3 and the right controller 4 are tilted inward, the first player object P1 performs the guard action (FIG. 19). Specifically, the main body apparatus 2 calculates a tilt of the left controller 3 in the X-axis direction and a tilt of the right controller 4 in the X-axis direction with respect to the direction of gravity in the real space. Then, based on these tilts, when it is determined that both the left controller 3 and the right controller 4 are in the orientations in which the left controller 3 and the right controller 4 are tilted inward at a predetermined angle or more (e.g., θx shown in FIG. 19), the main body apparatus 2 causes the first player object P1 to perform the guard action.
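The guard determination and the guard rules described above can be sketched as two small functions. In the Python example below, the threshold `GUARD_ANGLE_DEG`, the sign convention (positive roll meaning tilted to the right, so that inward is positive for the left controller and negative for the right controller), and the outcome labels are assumptions introduced for illustration.

```python
GUARD_ANGLE_DEG = 30.0  # assumed "predetermined angle"


def is_guard_pose(left_roll_deg: float, right_roll_deg: float,
                  threshold: float = GUARD_ANGLE_DEG) -> bool:
    """True if both controllers are tilted inward by at least `threshold`.

    Assumed convention: positive roll is a tilt to the right, so the left
    controller tilts inward with positive roll and the right controller
    tilts inward with negative roll.
    """
    return left_roll_deg >= threshold and right_roll_deg <= -threshold


def resolve_attack(guarding: bool, attack: str) -> str:
    """Outcome for the defending player object.

    `attack` is "punch" or "both_hand" (hypothetical labels).
    """
    if attack == "both_hand":
        return "thrown"                       # the guard does not prevent the throw
    if attack == "punch":
        return "blocked" if guarding else "hit"
    raise ValueError(f"unknown attack type: {attack!r}")
```

A game loop built on this sketch would also ignore punch instructions while `is_guard_pose` returns True, since a player object performing the guard action cannot throw a punch or perform the both-hand punch action.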

In the exemplary game, it is possible to cause the first player object P1 to perform an action in accordance with an operation using the analog stick and/or the operation buttons of the controller, in addition to the operation of moving the controller. For example, the main body apparatus 2 causes the first player object P1 to perform a jumping action in accordance with a pressing input to a predetermined operation button of the controller.

While the operation method for the first player object P1 has been described above, operation methods for the second to fourth player objects are the same as the operation method for the first player object P1 in the exemplary embodiment. That is, in the exemplary embodiment, each user performs an operation for the corresponding player object by using one set of controllers composed of a left controller and a right controller. In another exemplary embodiment, the operation methods for the second to fourth player objects may be different from the operation method for the first player object P1. For example, actions of some of the second to fourth player objects may be controlled based on an input (e.g., an input to the analog stick or a button) to one left controller or one right controller.

Further, the game of the exemplary embodiment may be a communication competition game. In this case, some of the second to fourth player objects (e.g., the third player object P3 and the fourth player object P4 as enemy objects) may be controlled by a user of another external device capable of communicating with the main body apparatus 2 via a network. For example, the external device may be another main body apparatus of the same type as the main body apparatus 2 shown in FIG. 1, and the actions of the player objects may be controlled based on inputs to controllers communicable with the other main body apparatus. At this time, the main body apparatus 2 performs communication with two sets of controllers to acquire operation data from the two sets of controllers, while the other main body apparatus performs communication with two other sets of controllers to acquire operation data from those two sets of controllers. It should be noted that, when a plurality of users use one main body apparatus, the display area of the stationary monitor 6 may be split into two display areas, and an image for each user (i.e., an image in which the game space is viewed in the line-of-sight direction from a player object operated by one user to a player object operated by the other user) may be displayed on each split display area.

In the exemplary embodiment, non-player characters may be used instead of some of the second to fourth player objects. That is, the main body apparatus 2 may use, instead of some of the second to fourth player objects, an object whose action is automatically controlled based on an algorithm determined in the game program. Accordingly, the number of users who play the game of the exemplary embodiment is not limited to four, and may be one to three.

In the exemplary embodiment, it is also possible to play the above game using an attachment (an accessory device) for joining the left controller 3 and the right controller 4 to cause the left controller 3 and the right controller 4 to function as a single operation apparatus.

FIG. 20 is a diagram showing an example of an accessory device to which the left controller 3 and the right controller 4 are attachable. As shown in FIG. 20, an extension grip 210, which is an example of the accessory device, is an accessory device used by the user to perform an operation. The left controller 3 is attachable to the extension grip 210, and the right controller 4 is also attachable to the extension grip 210. Thus, with the extension grip 210, the user can perform an operation by holding, in a unified manner, the two controllers 3 and 4 detached from the main body apparatus 2.

The extension grip 210 has mechanisms similar to those of the main body apparatus 2 (specifically, the left rail member 15, the right rail member 19, and the like) as mechanisms for attaching the left controller 3 and the right controller 4. Thus, similarly to the case where the left controller 3 and the right controller 4 are attached to the main body apparatus 2, the left controller 3 and the right controller 4 can be attached to the extension grip 210. Specifically, in the extension grip 210, mechanisms for attaching the left controller 3 and the right controller 4 are provided on both left and right sides across a main body portion having a predetermined width, and rail members for attaching the left controller 3 and the right controller 4 are provided in parallel. Consequently, the left controller 3 and the right controller 4 are attached to the extension grip 210 such that the xyz-axis directions of the left controller 3 and the right controller 4 are parallel to each other. Then, the user holds with both hands the left controller 3 and the right controller 4 attached to the extension grip 210 and unified. Consequently, the user can hold in a unified manner the two controllers, namely the left controller 3 and the right controller 4, detached from the main body apparatus 2.