U.S. Pat. No. 10,297,090

INFORMATION PROCESSING SYSTEM, APPARATUS AND METHOD FOR GENERATING AN OBJECT IN A VIRTUAL SPACE

Assignee: Nintendo Co., Ltd.

Issue Date: June 12, 2017

Illustrative Figure

Abstract

An example information processing apparatus includes a processing system configured to control the information processing apparatus to: determine a base and a base direction in a virtual space; and determine whether an object can be generated in the virtual space relative to the base in the base direction. Based on determining that the object can be generated relative to the base in the base direction, an animated indicator may be displayed indicating at least the base direction for generating the object in the virtual space. Based on determining that a specified condition is satisfied, the object may be progressively generated relative to the base in the base direction.

Description

DETAILED DESCRIPTION OF EXAMPLE EMBODIMENTS

The figures discussed herein, and the various embodiments used to describe the principles of the present disclosure in this patent document are by way of illustration only and should not be construed in any way to limit the scope of the disclosure. Those skilled in the art will understand that the principles of the present disclosure may be implemented in any of a variety of suitably arranged electronic devices. Hereinafter, example embodiments of the present disclosure are described in detail with reference to the accompanying drawings. In the following description, detailed descriptions of well-known functions or configurations are omitted to avoid unnecessarily obscuring the subject matter of the present disclosure.

The present disclosure relates to generating objects, such as, but not limited to, blocks or pillars, in a virtual space (e.g., a virtual 3D world). Generating the objects may allow a player character in the virtual space to move between different areas of the virtual space, place obstacles in the virtual space, bypass obstacles in the virtual space, and/or move other objects in the virtual space. The technology described herein can provide, for example, an efficient manner to generate objects in the virtual space, present various characteristics of the object to be generated (e.g., its size, dimensions, location in the virtual world, etc.) before the object is generated, and/or provide an efficient manner to change the location and orientation of the object to be generated.

By way of illustration and without limitation, before an object is generated in the virtual space, a determination may be made as to whether the object can be generated at a designated location and, based on determining that the object can be generated at the designated location, an indicator may be displayed. The indicator may be displayed such that the indicator represents the object to be generated without having to generate the object in the virtual space. Displaying the indicator according to various illustrative embodiments of this disclosure may provide a user an indication of where the object will be placed in the virtual space, how the object will look in the virtual space, and/or how the object will be generated in the virtual space before the object is generated. In some instances, displaying the indicator before the object is generated may provide information about how the generated object will interact with other movable and/or non-movable objects in the virtual space when the object is generated. Displaying the indicator according to various illustrative embodiments of this disclosure may prevent the problem of an unexpected collision between the generated object and other objects or characters in the virtual space. In a non-limiting example embodiment, the indicator may be displayed so as to pass through movable objects. For example, an ice pillar can be generated even when generating the ice pillar will cause a collision with a movable treasure chest and, in this case, the indicator is displayed so as to pass through the treasure chest. As explained in greater detail below, a movable object such as a treasure chest can be moved in accordance with the growing pillar. In case of a collision with stationary (non-movable) objects (e.g., a ceiling, a floor), the indicator does not grow and the ice pillar is not generated at all. If the ice pillar collides with a stationary object after it is generated (e.g., the indicator did not collide with the stationary object at the time of generation but the stationary object moves and collides with the ice pillar after the generation), the ice pillar is destroyed.

By way of illustration, for a game, a player character may come to a body of water in the virtual world which needs to be crossed. In accordance with the technology described herein, the player may generate an object to assist in crossing the water to reach another part of the game world. For example, the player may grow an ice pillar to which the character can jump from the current position. From the ice pillar, the character can then jump to the other part of the game world. The disclosed technology displays an indicator prior to generating the object so that the player can better ascertain various characteristics of the object to be generated (e.g., its size, dimensions, location in the virtual world, etc.). While the indicator is displayed, the player can change the location at which the object will be generated to, for example, confirm that it is positioned to allow for the jumping action described above. Displaying the indicator before the ice pillar is generated allows a user to accurately place the object at a desired location. Of course, the example ice pillar may be generated for purposes other than reaching different parts of a game world. For example, the ice pillar may be generated to provide an obstacle to other game objects in the virtual game world or to move one or more other objects in the virtual game world.

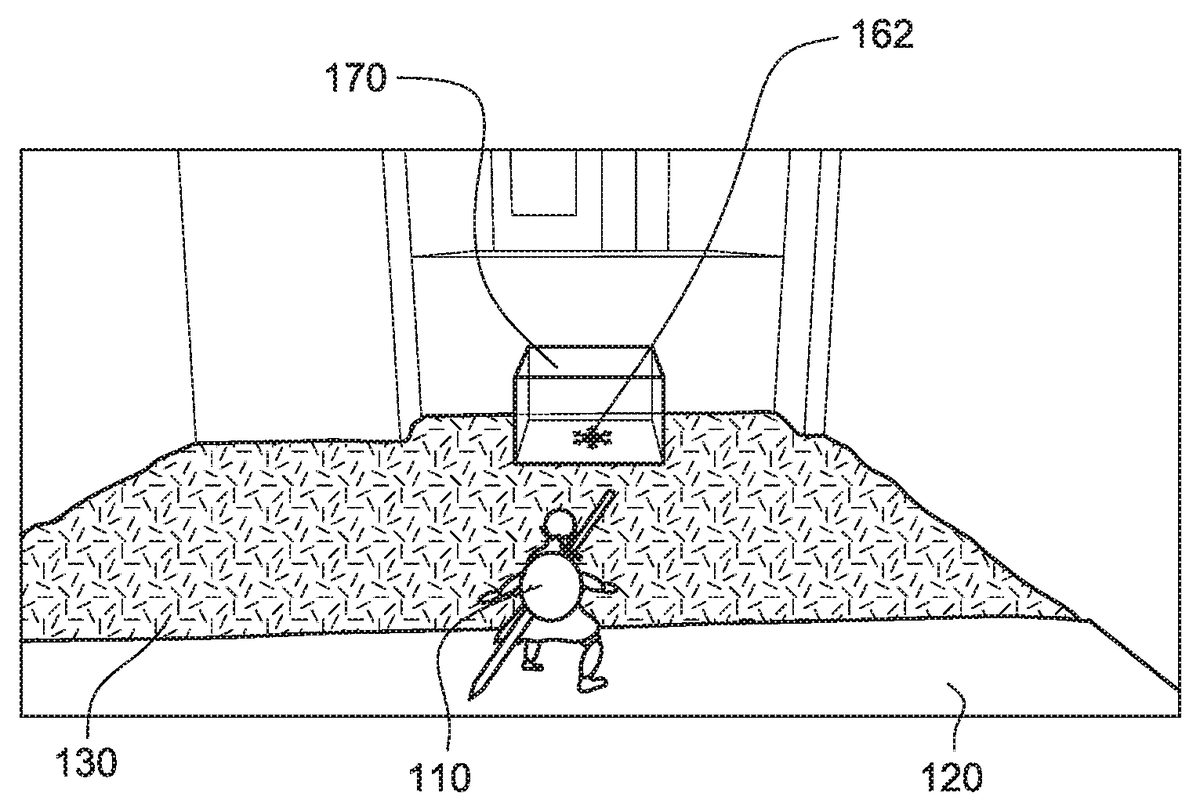

FIGS. 1-13 show non-limiting examples of displaying an indicator and generating an ice pillar 180 in a virtual game world during game play. While this example describes an ice pillar, the ice may take other shapes including blocks, cylinders and the like. FIG. 1 shows an image of a virtual game world as captured by a virtual camera. The virtual game world includes a player character 110 controlled based on progress of the game and/or user controls. The images of the virtual game world change with changes in the position and/or orientation of the virtual camera based on progress of the game, movement of the player character 110, and/or control of the virtual camera based on user controls. In FIG. 1, the player character 110 is standing on a first surface 120 and a second surface 130 is present adjacent to the first surface 120 and in front of the player character 110. For example, the first surface 120 may be ground (earth) or a floor and the second surface 130 may be a water surface covering a bottom surface at a certain distance below the water surface.

FIG. 2 illustrates a virtual space in an object generating mode. The object generating mode may be entered, for example, based on a user inputting a specified command (e.g., pressing a particular button on a remote control) during game play. As shown in FIGS. 1 and 2, when a user activates the object generating mode, a surface from which an object can be generated (e.g., the water surface 130) is displayed in a manner different from its normal appearance in the game world (e.g., with a different color, brightness, and/or surface texture) to show where it may be possible to generate the ice pillar 180. Textual and/or aural output may also provide information about the object generating mode and the possibility of generating objects. A user may also be provided with aural and/or visual feedback to indicate that it is not possible to generate a new object at the current location in the game world or in the current game conditions. While the virtual game world includes a plurality of different surfaces, in the example embodiment, only the surface on which the ice pillar 180 can be generated is changed when the object generating mode is activated.

As shown in FIG. 2, a marker 160 is displayed in an image of the virtual space. In an example embodiment, the marker 160 is displayed at the center of the image. The marker 160 may represent an imaging direction of the virtual camera in the virtual space. The marker 160 may correspond to an intersection of an imaging axis of the virtual camera capturing the displayed image and a surface of another object in the virtual space. The location in the virtual space corresponding to the location of the marker 160 may change if the orientation and/or location of the virtual camera in the virtual space is changed. The virtual camera orientation and/or location in the virtual space may change in response to movement of the player character 110, specific user controls for moving the camera and/or progress of a game. Of course, the location in the virtual space corresponding to the marker may be determined in ways not involving the imaging axis of the virtual camera. For example, the display of the marker may be invoked based on a specified user input and thereafter positioned based on marker movement inputs to a remote control.

The marker 160 is displayed at the center of the image until the marker 160 coincides with a surface in the virtual space from which an ice pillar could be generated, at which point the marker 162 is displayed in the virtual space. FIG. 3 shows a marker 162 displayed at a location of the intersection of the imaging axis of the virtual camera and another surface in the virtual space (the water surface 130). The marker 162 may be visually different (e.g., different size, shape, color, etc.) from the marker 160 shown in FIG. 2 because the marker 162 is displayed on a water surface from which an ice pillar 180 could be generated. In one example embodiment, the marker 160 indicating an object in the virtual space will change to the marker 162 when the object is a movable object and a water surface from which an ice pillar 180 can be generated is located below the movable object.

FIGS. 4 and 5 show an example indicator 170 extending from the water surface 130. Different portions of the indicator 170 may be displayed based on satisfaction of one or more conditions. For example, the indicator 170 may include a square or rectangle near the water surface 130 and may extend from the bottom to provide a pillar. The indicator 170 may be displayed as a square when the game processing determines that the imaging axis of the virtual camera intersects a surface (e.g., the water surface 130) from which it may be possible to generate an object (e.g., the ice pillar 180). The marker 162 may be displayed along with the indicator 170. The indicator 170 may be displayed as the pillar (e.g., progressively extending from the square or the water surface 130) when the game processing determines that an ice pillar 180 can be generated at the location of the marker 162. In one example embodiment, the user may change the position of the marker 162 by moving the marker 162 over the surface of the water.

In an illustrative embodiment, the indicator 170 is displayed progressively extending from the square or the water surface 130 to a predetermined height repeatedly, in a base direction, when the game processing determines that an ice pillar 180 can be generated at the location of the marker 162 or indicator 170. The indicator 170 is shown having a shape that corresponds to the shape of the ice pillar 180 to be generated and with transparent surfaces. In some example embodiments, the indicator 170 may not be transparent (e.g., may have one or more solid surfaces).

The position and/or orientation of the indicator 170 may change in response to changes in the position and/or orientation of the virtual camera. Thus, the user may “fine tune” the position of the ice pillar 180 that will be generated by making changes to the position of the indicator 170 and/or the marker 162. In one embodiment, displaying the indicator 170 progressively extending may end, while still displaying the indicator 170 as the square, if the position of the indicator 170 on the water surface 130 changes to another position on the water surface 130 at which the object (in this case, the ice pillar) cannot be generated (e.g., due to a collision of the ice pillar with another non-movable object in the virtual space). In one embodiment, display of the indicator 170 may end if the position of the marker 162 is moved to another surface from which the object cannot be formed.

FIGS. 6 and 7 show ice pillar 180 being progressively generated in the virtual space from the water surface 130. The ice pillar 180 is generated when a specific condition is satisfied (e.g., user input is made while in the object generating mode). A gauge 174 may be displayed representing a time until the next ice pillar 180 can be generated. As illustrated in FIGS. 5 and 6, the water surface 130 from which the object is generated is displayed in a different manner (e.g., with a different color, brightness, and/or surface texture) from the time of entering an object generating mode and until the instructions are received to start generating the object. For example, the manner in which the water surface 130 is displayed while the ice pillar 180 is generated may correspond to the manner in which the water surface 130 was displayed before the object generating mode was entered. In some embodiments, the water surface 130 may be displayed in a different manner while the indicator 170 is not displayed on the water surface 130.
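One way to think about the gauge 174 is as a cooldown timer. The following is a minimal sketch under that assumption; the disclosure does not state a cooldown duration or how the gauge is drawn, so `CooldownGauge`, its fields, and the fill convention are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class CooldownGauge:
    """Hypothetical gauge tracking the time until the next object can be generated."""

    cooldown: float         # assumed seconds between generations, must be > 0
    remaining: float = 0.0  # seconds left before generation is allowed again

    def start(self) -> None:
        # Called when an object starts being generated.
        self.remaining = self.cooldown

    def tick(self, dt: float) -> None:
        # Advance the timer by one frame of dt seconds, clamping at zero.
        self.remaining = max(0.0, self.remaining - dt)

    @property
    def ready(self) -> bool:
        # True once the full cooldown has elapsed.
        return self.remaining == 0.0

    @property
    def fill(self) -> float:
        # Fraction of the gauge to draw: 0.0 right after generating, 1.0 when ready.
        return 1.0 - self.remaining / self.cooldown
```

A game loop would call `tick` once per frame and only accept a generate input while `ready` is true.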

The ice pillar 180 may be generated such that the ice pillar 180 starts being generated below the water surface 130 (e.g., from the ground surface below the surface of the water) and extends through the water surface 130 and in a direction that is away from the water surface 130 into the air (away from the bottom of the water). The direction in which the ice pillar 180 is generated may be perpendicular to the water surface, but is not so limited. The ice pillar 180 may be progressively generated until the ice pillar 180 reaches a specific height.
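Progressive generation can be illustrated as a per-frame height update that is clamped at the final height. This is a sketch only: the growth rate, the frame time, and the convention of measuring height along the generation direction from the water surface (negative values being below it) are assumptions, and `grow_step` is a hypothetical name.

```python
def grow_step(height: float, target_height: float, rate: float, dt: float) -> float:
    """Return the pillar height after one frame, never exceeding target_height.

    height        -- current top of the pillar, measured from the water surface
                     (negative while the top is still below the surface)
    target_height -- the specific height at which growth stops
    rate          -- assumed growth speed in height units per second
    dt            -- frame time in seconds
    """
    return min(target_height, height + rate * dt)


def simulate(start: float, target_height: float, rate: float, dt: float, frames: int) -> float:
    """Run grow_step for a number of frames and return the resulting height."""
    height = start
    for _ in range(frames):
        height = grow_step(height, target_height, rate, dt)
    return height
```

Starting from a negative `start` models a pillar that begins growing from below the water surface and then extends through it.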

FIG. 8 shows a plurality of ice pillars 180, 182, and 184 generated in a virtual world from the water surface 130. The ice pillars 180, 182, and 184 may be sequentially generated in response to user inputs. FIG. 8 shows ice pillar 184 being generated after ice pillars 180 and 182. The ice pillars 180, 182, and 184 may be generated such that they are spaced from each other or provided directly adjacent to each other. The game processing may provide an object generating limit so that only up to a certain number of user-generated objects may be present in the game world at the same time. The game may provide for one object generating limit for the game world as a whole and one or more other object generating limits for particular sections of the game world. For example, when an object generating limit is reached, the input of an instruction to generate a subsequent ice pillar may result in removal of the first generated ice pillar (e.g., ice pillar 180) from the virtual game world. Of course, the game may allow a user to select which prior object should be removed and the game may also be configured so that no object generating limits are present.
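The oldest-first removal described above behaves like a bounded first-in, first-out queue. The sketch below assumes a single world-wide limit and string identifiers for objects; `GeneratedObjectPool` and its methods are hypothetical names, and a limit of `None` models the configuration with no object generating limit.

```python
from collections import deque
from typing import Deque, List, Optional


class GeneratedObjectPool:
    """Hypothetical tracker for user-generated objects with an optional limit."""

    def __init__(self, limit: Optional[int] = None) -> None:
        self.limit = limit                    # None means no object generating limit
        self.objects: Deque[str] = deque()    # oldest object at the left

    def generate(self, obj_id: str) -> List[str]:
        """Register a new object and return the ids removed to respect the limit."""
        removed: List[str] = []
        self.objects.append(obj_id)
        # Evict the earliest generated objects until the count fits the limit.
        while self.limit is not None and len(self.objects) > self.limit:
            removed.append(self.objects.popleft())
        return removed
```

With a limit of two, generating pillars "180", "182", and then "184" removes "180", matching the example in the text. Letting the user choose which prior object to remove would replace the `popleft` call with a selection step.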

The game may also provide for personalization of a generated object. For example, a user-generated object may include text or graphics identifying the user or game character that created the object or may provide a message for subsequent characters that arrive in the same section of the game world.

The player character 110 may be controlled to climb side surfaces of the ice pillars 180, 182, and 184, and/or move across the top surfaces of the ice pillars 180, 182, and 184. The user may control the generating of the ice pillars 180, 182, and 184 at specific location(s) to allow the player character 110 to move from one area of the virtual world to another area of the virtual world. The user may additionally or alternatively generate the ice pillars 180, 182, and 184 at specific locations to provide obstacles for other player characters or enemy characters.

FIGS. 9 and 10 show generating an ice pillar 280 in the virtual world such that the ice pillar 280 moves another object 290 in the virtual space. In this way, a user may generate combinations of one or more objects to move and/or support another object in the virtual space. Moving and/or supporting other objects in the virtual space by generating the object may provide a game character with additional paths for movement, provide shelter, uncover items hidden by the other object, remove obstacles for the player character, or create obstacles for other characters in the virtual space. In some example embodiments, generating the object may move the player character or another character (e.g., enemy character) if the object is generated at the position of the player character or the other character. As shown in FIG. 9, an indicator 270 is displayed extending from a water surface 230 under another object 290. The indicator 270 shows the position, orientation, size of the object that will be generated, and direction of movement of the growing ice pillar 280 as it will be generated. In addition, the indicator 270 is illustrated with a transparent surface to show at what position the generated ice pillar 280 will interact with the other object 290 when the ice pillar 280 is generated.

FIG. 10 shows the ice pillar 280 being progressively generated from the water surface 230 until the ice pillar 280 reaches a specific height. When the ice pillar 280 being generated reaches the other object 290, the ice pillar 280 makes contact with the other object 290 and causes the other object 290 to move from its original location. The other object 290 may be moved in an upward direction (e.g., direction in which the ice pillar 280 is being generated) and/or to the side of the ice pillar 280.

The other object 290 is moved in response to generating the ice pillar 280 because the other object 290 is a movable object in the virtual world. If the other object 290 is a non-movable object (e.g., object that is not movable by the ice pillar 280), placing the indicator under the other object 290 may display the indicator 270, but without progressively extending from the square, to let the user know that the ice pillar 280 cannot be generated at this location.
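The movable versus non-movable distinction can be sketched in one dimension along the generation direction. This is an illustrative model only: it assumes each obstacle is described by the height of its underside above the base, and `Obstacle`, `can_generate`, and `lift` are hypothetical names not taken from the disclosure.

```python
from dataclasses import dataclass
from typing import Iterable


@dataclass
class Obstacle:
    """Hypothetical obstacle located above the base of the pillar."""

    bottom: float   # height of the obstacle's underside above the base
    movable: bool   # whether a growing pillar is allowed to push it


def can_generate(pillar_height: float, obstacles: Iterable[Obstacle]) -> bool:
    """A pillar is blocked only by non-movable obstacles inside its final extent."""
    return not any(o.bottom < pillar_height and not o.movable for o in obstacles)


def lift(obstacle: Obstacle, pillar_top: float) -> None:
    """Push a movable obstacle upward so it rests on top of the growing pillar."""
    if obstacle.movable and pillar_top > obstacle.bottom:
        obstacle.bottom = pillar_top
```

In this model a movable treasure chest never prevents generation and is carried upward once the pillar top reaches it, while a ceiling lower than the final pillar height makes `can_generate` return `False`, which corresponds to the indicator staying a square.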

In an example embodiment, if the ice pillar 280 makes contact with a non-movable object after generating the ice pillar 280 is started (e.g., the indicator 270 did not collide with the non-movable object at the time generating the ice pillar 280 is started but the non-movable object is moved and collides with the ice pillar 280 after the generating is started), the ice pillar 280 is destroyed (e.g., when the ice pillar 280 makes contact with the non-movable object). In this example, the non-movable object may be an object that is not movable by the ice pillar 280 being generated but is moved by the game (e.g., a moving ceiling or a moving floor). The non-movable object may be an object that is not movable in one direction (e.g., direction in which the ice pillar 280 is generated) but is movable in one or more other directions based on instructions in the game.

FIGS. 11 and 12 show an example embodiment in which an ice pillar 380 is generated in the virtual world such that the generated ice pillar 380 moves another object 390 in the virtual space. As shown in FIG. 11, the other object 390 may be provided on the water surface 330. A portion of the other object 390 may be partially submerged below the surface 330.

In the virtual space, the player character 310 may not be able to safely move across or through the water surface 330 to the object 390 which may, for example, be a weapon, a coin, and the like. To move from the ground surface 320 to the location of the other object 390, the user may generate a plurality of ice blocks or pillars from the water surface 330. As illustrated in FIG. 12, a user may generate one ice pillar 380 to lift the other object 390 above the water surface 330 to facilitate obtaining the object 390 by the player character.

FIG. 11 shows an indicator 370 displayed at the water surface 330. A user may control the positioning of the indicator 370 such that the indicator 370 is displayed at the location of the other object 390. Once the indicator 370 is displayed at the location of the other object 390, instructions may be received to generate the object based on a specified user input.

FIG. 12 shows the ice pillar 380 being generated in the virtual space from the water surface 330. The ice pillar 380 is generated in response to a specific condition being satisfied (e.g., a user input is made while in the object generating mode). The ice pillar 380 may be generated such that the ice pillar 380 starts being generated below the water surface 330 (e.g., from a predetermined distance below the surface of the water) and extends in the direction away from the water surface 330 (e.g., direction that is perpendicular to the water surface). The ice pillar 380 may be progressively generated until the ice pillar 380 reaches a specific height set for the ice pillar 380.

As the ice pillar 380 is generated, the other object 390 may be moved in an upward direction when the generated ice pillar 380 contacts the other object 390. The other object 390 may remain on a top planar surface of the ice pillar 380 until the generated ice pillar 380 is removed from the virtual world.

Previously generated ice pillars may be removed from the virtual world in response to specific user commands. In one example, previously generated ice pillars may be removed from the virtual world by controlling the imaging axis of the virtual camera to intersect with the previously generated ice pillar and receiving a user input to remove the previously generated ice pillar. FIG. 13 illustrates an imaging axis of the virtual camera intersecting a generated ice pillar 380. When the virtual camera is moved such that the imaging axis intersects with the previously generated ice pillar 380, a marker 388 is displayed on or near a surface of the generated ice pillar 380 and/or a color, brightness and/or texture of the generated ice pillar 380 is changed. The marker 388 indicates that the ice pillar can be removed (e.g., shattered) if a specific input is received. Receiving the specific input after this marker 388 is displayed on the ice pillar 380, or after changing the manner in which the ice pillar 380 is displayed, will remove the previously generated ice pillar 380. In response to removing the ice pillar 380, the other object 390 may fall back to the water surface 330. Here again, marker 388 is not limited to being associated with the imaging axis of the virtual camera and may be invoked and moved based on user inputs to a remote control device.

While FIGS. 1-13 are discussed with reference to generating an ice pillar from a water surface, this disclosure is not so limited and may include other objects being generated from other surfaces. For example, a sand pillar may be generated from a sand surface or a cloud pillar may be generated from a cloud surface.

FIG. 14 illustrates an example screen of an information processing device 1400 displaying an image of a virtual space. The information processing device 1400 may execute an application program (e.g., a game application) and control a player character 1410 in a virtual space based on instructions in the application program and/or inputs (e.g., inputs to remote control device(s) 1450 supplied by one or more users). Additional details about remote control devices 1450 are provided in U.S. application Ser. No. 15/179,022, which corresponds to U.S. Patent Publication No. 2016/0361641. The contents of the '022 application are incorporated herein in their entirety.

As shown in FIG. 14, an image of a virtual space, as seen from a virtual camera, is displayed on the screen of the information processing device 1400. A virtual camera (not shown in FIG. 14) may be set at a position in the virtual space above and/or behind the player character 1410 to provide a view of the virtual space in a direction forward of the player character 1410. The position of the virtual camera may be determined in accordance with the position of the player character 1410, which may be controlled based on user inputs. The orientation of the virtual camera in the virtual space may change based on changes in the orientation of the player character 1410 made based on instructions in the application program and/or inputs (e.g., inputs to remote control device(s) 1450 supplied by one or more users). In some embodiments, the orientation and/or position of the virtual camera may be changed based on other conditions (e.g., specific user controls, progress of a game, and/or interaction of the player character 1410 with other objects in the virtual space). For example, control may be provided to allow the user to move the virtual camera independently from the movement of the player character 1410 (e.g., allow the player character 1410 to look around the virtual space or at least a part of the virtual space from a current location of the player character in the virtual space).

Based on changes in the orientation and/or position of the virtual camera, the image of the virtual space displayed on the screen will change. In some example embodiments, the image of the virtual space may be displayed with the viewpoint of the player character 1410 (e.g., the position of the virtual camera may be set at the first person view of a player character).

The user of the information processing device 1400 may need to achieve certain goals in the virtual space. For example, a user may need to move the player character 1410 from one area of the virtual space to another area of the virtual space or may need to move specific objects in the virtual space. The user may achieve these tasks by generating objects in accordance with the technology described in this application. For example, objects may be generated on a specific surface (e.g., horizontal surface, vertical surface, or slanted surface in the virtual space) to allow the player character 1410 to use the objects to move from one side of the surface to another side of the surface, where the player character 1410 cannot otherwise move across the surface. In another example, objects may be generated in a growing manner from a base point in the virtual space (e.g., a growing ice ball is generated from a base point). In another example, a specific object may be moved in the virtual space by generating an object such that the generated object will contact the specific object to be moved. In other example embodiments, objects may be generated in the virtual space to create obstacles (e.g., for enemy characters in the virtual space).

FIGS. 15A-15C illustrate an example process for generating an object 1580 in a virtual space. The process may, for example, be implemented by instructions contained in a game application program which is stored in a non-volatile memory and executed by a processor. FIG. 15A shows a player character 1510 movable in accordance with user inputs on a surface 1520 in a virtual space. Virtual camera 1550 captures images of the virtual space. The location for generating an object in the virtual space may be determined based on position and/or orientation of the virtual camera. For example, the location for generating the object in the virtual space may be determined based on an imaging axis 1552 of the virtual camera 1550. Based on the imaging axis 1552 of the virtual camera 1550, a base for the object 1580 may be determined. In particular, as discussed above, the position of the base can be moved by changing a direction and/or orientation of the virtual camera 1550. The base for the object may be a base point or a base plane. As shown in FIG. 15A, the base for the object 1580 may be a base point 1554 or a base plane 1556 corresponding to a point (or a planar area around such point) where the imaging axis 1552 intersects the surface 1520. The position of the base may of course be determined in ways not involving the imaging axis of the virtual camera.
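Finding the point where the imaging axis meets the surface can be illustrated with a standard ray-plane intersection. The sketch below assumes the surface 1520 is locally a plane given by a point and a normal, and that the imaging axis is a ray from the camera position; the disclosure does not specify the geometry test, and `base_point` and the helper names are hypothetical.

```python
from typing import Optional, Tuple

Vec3 = Tuple[float, float, float]


def dot(a: Vec3, b: Vec3) -> float:
    return a[0] * b[0] + a[1] * b[1] + a[2] * b[2]


def sub(a: Vec3, b: Vec3) -> Vec3:
    return (a[0] - b[0], a[1] - b[1], a[2] - b[2])


def base_point(
    cam_pos: Vec3,
    axis_dir: Vec3,
    plane_point: Vec3,
    plane_normal: Vec3,
    eps: float = 1e-9,
) -> Optional[Vec3]:
    """Return where the imaging axis hits the plane, or None if it never does."""
    denom = dot(axis_dir, plane_normal)
    if abs(denom) < eps:
        return None  # axis is parallel to the surface
    t = dot(sub(plane_point, cam_pos), plane_normal) / denom
    if t < 0:
        return None  # surface lies behind the camera
    return (
        cam_pos[0] + t * axis_dir[0],
        cam_pos[1] + t * axis_dir[1],
        cam_pos[2] + t * axis_dir[2],
    )
```

For a camera at height 5 looking forward and down at 45 degrees onto a horizontal surface at height 0, the returned base point lies 5 units in front of the camera. A base plane could then be taken as a small planar area around that point.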

The surface 1520 may include a plurality of different types of surfaces. For example, the surface 1520 may include a ground surface in the virtual world and a liquid (e.g., water) surface in the virtual world. While the surface 1520 is illustrated in FIGS. 15A-15C as being provided on a same plane, the various types of surfaces may be provided on different planes and/or each different type of surface may have a planar or varying profile.

With the base of the object 1580 to be generated in the virtual space determined, the process may determine a base direction. The base direction may provide the direction relative to the base in which the object 1580 will be generated in the virtual space. The base direction may be determined based on the direction of the surface 1520 at the location of the base. The base direction may be an upward direction from the surface 1520 (e.g., into the air of the virtual space) or a downward direction from the surface 1520 (e.g., gravity direction). The base direction may, for example, be a direction that is perpendicular to the surface 1520, but is not so limited.
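For the perpendicular case, the base direction can be sketched as the unit normal of the surface at the base, optionally flipped for the downward case. This covers only one of the options the text allows; `base_direction` and its `downward` flag are hypothetical names.

```python
import math
from typing import Tuple

Vec3 = Tuple[float, float, float]


def base_direction(surface_normal: Vec3, downward: bool = False) -> Vec3:
    """Return a unit vector perpendicular to the surface at the base.

    surface_normal -- normal of the surface at the base, any non-zero length
    downward       -- if True, point away from the normal (e.g., gravity direction)
    """
    length = math.sqrt(sum(c * c for c in surface_normal))
    if length == 0.0:
        raise ValueError("surface normal must be non-zero")
    sign = -1.0 if downward else 1.0
    return (
        sign * surface_normal[0] / length,
        sign * surface_normal[1] / length,
        sign * surface_normal[2] / length,
    )
```

On a slanted surface the same function yields a tilted base direction, so a pillar generated along it would lean with the surface rather than stand vertically.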

An indicator 1570 is displayed at the base. The indicator may be a visual display element for providing one or more characteristics of the object to be generated, how the object will be added to the virtual space, and/or how the object will interact with other objects in the virtual space. The indicator 1570 may be displayed based on determining that the type of surface in the virtual space on which the indicator 1570 will be displayed is a type of surface from which an object could be generated. In FIG. 15B, the indicator 1570 is displayed with a two-dimensional shape (e.g., a square) and a three-dimensional shape (e.g., a pillar) corresponding to the shape of the object 1580 to be generated. The indicator 1570 may be displayed as a static indicator or a dynamic indicator providing an animation. In an example embodiment, different portions of the indicator 1570 and/or the indicator 1570 may be displayed in a different manner (e.g., statically or dynamically) based on satisfying one or more conditions (e.g., determining that the object could be generated from the surface and/or determining that the object can be generated from the base and/or in the base direction).

In an example embodiment, based on the determined base and/or base direction, a static indicator 1570 (e.g., a square) is displayed when the surface is a surface from which the object could be generated. The indicator 1570 may be displayed dynamically (e.g., a pillar progressively extending in an upward direction from the square) after the determination is made that the object 1580 can be generated at the base and/or in the base direction. Such a determination may include determining whether the type of surface in the virtual space is a type of surface from which an object can be generated, whether specific conditions at the base on the surface (e.g., distance to another surface below the surface) are satisfied, and/or how the object to be generated will interact with other objects in the virtual space when the object is generated.

The indicator1570may be animated such that the indicator1570repeatedly grows from the base until it reaches a specific height. The animation of the indicator may provide a direction in which the object will expand when it is generated. The height of the indicator1570may correspond to the height of the object to be generated. In this way, the indicator1570may allow the user to better ascertain various characteristics of the object to be generated (e.g., its size, dimensions, and/or location in the virtual space, etc.).

The indicator1570is not limited to examples discussed above and may include other 2-dimensional or 3-dimensional objects. For example, the indicator1570may include an object having a shape that corresponds to the object to be generated, a circle or sphere that expands from the base point in the base direction, a rectangle, a cube, a cylinder, rectangular prism, a cone, a pyramid, or a triangular prism. In an example embodiment, the indicator1570may be depicted as an arrow provided at the location of the base and pointing in the determined base direction. The arrow may be a static arrow depicting a direction in which the object will be generated (e.g., a base direction) and/or the height of the object after it is generated. In another example, the arrow may be a dynamic arrow depicting a direction in which the object will be generated by progressively extending from the base in the base direction and/or depicting the height of the object to be generated by extending to a height that corresponds to the height of the object to be generated.

The user may supply inputs to a remote control in order to change the position of the indicator 1570.

FIG. 15Cillustrates the generated object1580in the virtual space. The object1580may be generated, for example, after the user positions the indicator1570at a particular location and when a specific condition is satisfied. For example, the specific condition may be a specific user input. The object1580may be progressively generated from the base in the base direction. For example, the object may appear from the base at the surface1520and progressively extend in the base direction until the object1580reaches a specific height set for the object1580.

After the object 1580 is generated, subsequent objects may be generated with the same process at other locations in the virtual space. The object 1580 may remain in the virtual space until a specific condition is satisfied. After the specific condition is satisfied, the generated object may be removed from the virtual space. The specific condition may, for example, be the elapse of a predetermined amount of time, receipt of user instructions to remove the generated object, or generation of a subsequent object after a user-generated object limit is reached. By way of illustration, if three user-generated objects are present in a virtual space in which the user-generated object limit is three, upon receiving instructions to generate a fourth object, one of the three objects previously generated may be removed from the virtual space. In one example, the object that was first generated (earliest generated) out of the three objects may be automatically removed upon receiving instructions to generate the fourth object.
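The earliest-generated removal rule described above can be illustrated with a short sketch. This is only one possible arrangement, not the implementation of the disclosure: the class name `GeneratedObjects`, its `generate` method, and the use of a queue are assumptions introduced for illustration.

```python
from collections import deque


class GeneratedObjects:
    """Hypothetical tracker for user-generated objects (illustrative only)."""

    def __init__(self, limit=3):
        self.limit = limit      # user-generated object limit (three in the example)
        self.active = deque()   # oldest object sits at the left end

    def generate(self, obj_id):
        """Register a new object; return the id of any object removed to make room."""
        removed = None
        if len(self.active) >= self.limit:
            # Limit reached: remove the earliest-generated object.
            removed = self.active.popleft()
        self.active.append(obj_id)
        return removed
```

With a limit of three, generating a fourth object in this sketch removes the first one and leaves the second, third, and fourth objects in the virtual space.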

FIGS. 16A-16Cillustrate another example process of generating an object1680in a virtual space.FIG. 16Ashows a player character1610positioned on a surface1620in a virtual space. A virtual camera1650is provided in the virtual space and captures images of the virtual space. The position for generating the object1680in the virtual space may be determined based on an imaging axis1652of the virtual camera1650. Based on the imaging axis1652of the virtual camera1650, a base1656for the object1680may be determined.

As shown inFIG. 16A, another object1690may be included in the virtual space. If the imaging axis1652of the virtual camera1650intersects with the other object1690at a first point, the base1656for the object1680may be determined by locating a second point relative to the first point, e.g., by generating a line from the first point in the downward direction (e.g., direction towards the surface). The base may correspond to a point on the surface1620intersected by the generated line.

With the base of the object1680to be generated in the virtual space determined, the process determines a base direction. The base direction may correspond to a direction in which the object1680will be generated in the virtual space relative to the base1656. The base direction may be determined based on the direction of the surface1620at the location of the base1656. For example, the base direction may be a direction that is perpendicular to the surface1620.

Based on the determined base and/or base direction, the process determines whether an object 1680 can be generated at the base and/or in the base direction. Such a determination may include, for example, determining whether the surface is a type of surface from which the object can be generated, whether specific conditions at the base on the surface (e.g., distance to another surface below the surface) are satisfied, and/or how the object to be generated will interact with other objects in the virtual space when the object is generated.

As shown inFIGS. 16A-16C, generating the object1680in the virtual space will cause the object1680to make contact with the other object1690. Determining whether the object1680can be generated at the base1656and/or in the base direction may include determining whether the other object1690is a movable object in the virtual space. If the other object1690is a movable object and is movable in the base direction, the process determines that the object1680can be generated at the base and in the base direction. However, if the other object1690is a stationary object or not movable in the base direction, the process may determine that the object1680cannot be generated at the base and in the base direction. Determining whether the object1680can be generated at the base and/or in the base direction may further include determining whether the other object1690is movable in the base direction for a distance that corresponds to the height of the object1680. If the other object1690is movable but will prevent the object1680being generated to the height of the object1680, the process may determine that the object1680cannot be generated in the base direction at the base.
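The movability determination above may be sketched as follows. The `Obstacle` type, its fields, and the `gap` parameter (the space between the surface and the other object) are hypothetical names introduced here, and subtracting the gap from the required travel distance is an assumption rather than something stated in the description.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Obstacle:
    """Hypothetical description of another object located in the base direction."""
    movable: bool        # False for a stationary object
    free_travel: float   # distance it can move in the base direction


def can_generate(object_height: float,
                 obstacle: Optional[Obstacle],
                 gap: float = 0.0) -> bool:
    """Return True if the object can grow to its full height at the base."""
    if obstacle is None:
        return True                      # nothing in the way
    if not obstacle.movable:
        return False                     # stationary object blocks generation
    # Assumption: the obstacle only has to move for the part of the height
    # that extends beyond the gap between the surface and the obstacle.
    required_travel = max(0.0, object_height - gap)
    return obstacle.free_travel >= required_travel
```

For instance, under these assumptions a movable gate that can rise 4.0 m permits a 4.0 m pillar, while one that can rise only 3.0 m does not unless there is at least 1.0 m of space below it.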

Based on determining that the object1680can be generated from the base and/or in the base direction, an indicator1670may be displayed at the base1656. As shown inFIG. 16B, the indicator1670may be provided at the location of the base and extend in the determined base direction. The indicator1670may have a shape that corresponds to the shape of object1680.

The indicator1670may be animated such that the indicator1670repeatedly extends from the surface1620or base until it reaches a specific height. The animation may continue until instructions to generate the object1680are received or an object generation mode is terminated. The height of the indicator1670may correspond to the height of the object1680to be generated. The indicator1670may allow the user to better ascertain various characteristics of the object to be generated (e.g., its size, dimensions, and/or location in the virtual space, etc.), without having to generate the object1680. In this way, if the location of the indicator1670and/or the interaction of the object1680with other objects in the virtual space is not desired, a user may want to change the location of where the object1680will be generated before the object1680is generated (e.g., by moving indicator1670in accordance with user inputs). As shown inFIG. 16B, the indicator1670having transparent surfaces is displayed in the virtual space.

FIG. 16Cillustrates the generated object1680in the virtual space. The object1680may be generated, for example, after the user positions the indicator1670at a particular location and when a specific condition is satisfied. For example, the specific condition may be a specific user input. The object1680may be progressively generated from the base in the base direction. For example, the object may appear from the base1656at the surface1620and progressively grow in the base direction until the object1680reaches a specific height.

Generating the object1680in the virtual space may cause the object1680to make contact with one or more other objects in the virtual space. As shown inFIG. 16C, generating the object1680from the base in the base direction will cause object1680to make contact with the other object1690. Because the other object1690is a movable object in the virtual space, generating the object1680will cause the other object1690to move in the virtual space (e.g., in the base direction).

If there is a space between the surface 1620 and the other object 1690, movement of the other object 1690 will occur when the generated object 1680 reaches and contacts the other object 1690.

Being able to move other objects by generating an object in the virtual space from a surface may allow a user to lift objects that the player character 1610 may not otherwise be able to lift. For example, the player character 1610 may generate an object at a location of a gate to lift the gate to allow the player character to pass under the gate. In other embodiments, moving another object by generating an object may provide a path for the player character to move across. In some embodiments, generating an object may move another object (e.g., a sphere or a block) to a side of the generated object.

An example process for generating an object in a virtual space will now be described with reference to FIG. 17. One or more steps of the process may be performed by a computer system including one or more processors and one or more software modules including program instructions. At Step 1710, a processing system of an information processing device may enter an object generating mode. The object generating mode may be entered in response to a specific condition being satisfied (e.g., specific user input). For example, the processing system may activate the object generating mode in response to receiving a specific user input from a controller during execution of a game application program. Upon entering the object generating mode, the processing system may change a surface in the virtual space on which the object can be generated by displaying the surface in a manner different from the manner in which the surface is displayed in a mode other than the object generating mode. For example, when the object generating mode is entered, a surface on which the object can be generated may be displayed with a different color, different brightness, and/or additional features (e.g., guidelines or a grid).

At Step1720, a base of a surface may be determined. The base may be determined for a surface in the virtual space based on an imaging direction of a virtual camera in the virtual space. For example, the base may be determined based on where an imaging axis of a virtual camera intersects a surface in a virtual space. For example, the processing system may receive coordinates of the virtual camera in the virtual space and based on the coordinates and the orientation of the virtual camera determine a point in the virtual space where the imaging axis of the virtual camera intersects the surface.
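By way of illustration only, the determination of a base point from the imaging axis may be sketched as a ray-plane intersection. The sketch assumes a locally planar surface given by a point and a normal; the function name `find_base_point` and its parameters are hypothetical and do not appear in the disclosure.

```python
def _dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def find_base_point(camera_pos, imaging_axis, surface_point, surface_normal,
                    eps=1e-9):
    """Intersect the camera's imaging axis with a planar surface.

    Returns the intersection point as a tuple, or None if the axis is
    parallel to the surface or the surface lies behind the camera.
    """
    denom = _dot(imaging_axis, surface_normal)
    if abs(denom) < eps:
        return None                      # axis runs parallel to the surface
    to_surface = tuple(s - c for s, c in zip(surface_point, camera_pos))
    t = _dot(to_surface, surface_normal) / denom
    if t < 0:
        return None                      # intersection is behind the camera
    return tuple(c + t * d for c, d in zip(camera_pos, imaging_axis))
```

A camera 5 units above a flat ground surface and looking diagonally downward would, in this sketch, yield a base point 5 units ahead of the camera on the ground.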

The base may be a base point, a base plane, or a base having a shape that corresponds to a cross section or bottom surface of the object to be generated in the virtual space. Determining the base may include determining an orientation of the base based on the angle of the surface in the virtual space, the imaging axis of the virtual camera and/or horizontal direction in the virtual space.

At Step1730, a base direction may be determined. The base direction specifies the direction in which the object will be generated in the virtual space relative to the base. The base direction may be determined based on the direction of the surface at the location of the base. The base direction may be, for example, a direction that is perpendicular to the surface.

At Step1740, a first portion of the indicator (e.g., a square) may be displayed at the base. The first portion of the indicator may be displayed when the base is at a location that corresponds to a surface near which an object could be generated. At Step1740, the first portion of the indicator may be displayed as a static indicator.

At Step 1750, a determination is made as to whether the object can be generated. The determination may include determining whether the object can be generated at the base and/or in the base direction. Such a determination may include determining whether specific conditions at the base (e.g., distance to another surface below the surface) are satisfied, and/or how the object to be generated will interact with other objects in the virtual space when the object is generated, but is not so limited. If the object cannot be generated at the base (NO in Step 1750), the process may repeat Steps 1720, 1730, and 1740 until the location of the base is changed (e.g., by changing the position and/or orientation of the virtual camera) to a location where the object can be generated, or until the object generating mode is terminated.

If the object can be generated at the base (YES in Step1750), a second portion of the indicator may be displayed in Step1760. The second portion of the indicator may be displayed so as to extend from the base in the base direction. The second portion of the indicator may be displayed as an extension of the first portion of the indicator already displayed. The second portion of the indicator may, for example, provide a preview of how the object will look in the virtual space, the location where the object will be generated, demonstrate how the object will be generated in the virtual space, and/or how the object will interact with other objects in the virtual space, without generating the object. The second portion of the indicator may be displayed repeatedly extending from near the first portion of the indicator in the base direction. In an example embodiment, the first portion of the indicator may be a primary indicator and a second portion of the indicator may be a secondary indicator, which is different from the primary indicator.

If a specific condition is satisfied after the indicator is displayed (YES in Step 1770), the object may be generated in Step 1780. The specific condition may be a specific user input. The object may be generated when the specific user input is received after the initial display of the indicator. The object may be progressively generated from the base in the base direction. The position and/or orientation for the object being generated may correspond to the position and/or the orientation of the base. If the specific condition is not satisfied after the indicator is displayed (NO in Step 1770), the display of the indicator may continue until the specific condition is satisfied (YES in Step 1770) (e.g., after the position and/or orientation of the camera is changed) or until the object generating mode is terminated.
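The branching of Steps 1740 through 1780 can be summarized in a small decision function. This is a simplified sketch evaluated once per update; the function name, the string labels, and the three Boolean inputs are assumptions made for illustration and are not part of the described process.

```python
def object_generation_step(base_on_valid_surface, can_generate, trigger_received):
    """Evaluate one pass through Steps 1740-1780 (illustrative sketch).

    Returns (indicator_portions_shown, generate_object).
    """
    shown = []
    if base_on_valid_surface:
        shown.append("first_portion")       # Step 1740: static part at the base
    if not (base_on_valid_surface and can_generate):
        return shown, False                 # NO in Step 1750: re-determine the base
    shown.append("second_portion")          # Step 1760: part extending from the base
    # Step 1770: generate only when the specific condition (e.g., user input)
    # is satisfied; otherwise the indicator simply stays displayed.
    return shown, bool(trigger_received)    # True corresponds to Step 1780
```

Calling the function repeatedly with updated inputs corresponds to looping back through Steps 1720 to 1740 while the object generating mode remains active.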

FIGS. 18 and 19illustrate non-limiting examples of how orientation of the base and/or object in the virtual space are determined. Such determinations may be made when the base is determined (e.g., in Step1720) and the orientation of the object may correspond to the orientation determined for the base. As discussed above, the base direction for the object may be a direction that is perpendicular to the surface from which the object is generated. If the object has a planar top surface, generating the object in the base direction will provide a top surface that is parallel to the surface from which the object is generated. As explained further below, the orientation of the base or object in the virtual space may be determined based on the imaging direction of the virtual camera, the angle of the surface in the virtual space, and/or horizontal direction in the virtual space.

FIG. 18illustrates how the orientation of the object1880is determined when an angle of a surface1820, from which the object is generated, is horizontal or does not exceed a specific limit relative to a horizontal direction in the virtual space. The horizontal direction may be a direction that is perpendicular to a gravity direction G in the virtual space. By way of example, and without limitation, the specific limit may be 18 degrees. When the angle of the surface does not exceed the specific limit, the orientation of the object1880may be set such that sides1882and1884of top surface of the object1880are parallel to a direction from the virtual camera. Sides1886and1888of top surface of the object1880may be set such that they are perpendicular to sides1882and1884and/or the gravity direction G. The direction from the virtual camera may correspond to a horizontal component of the imaging axis of the virtual camera.

FIG. 19 illustrates how the orientation of the object 1980 is determined when an angle of the surface 1920, from which the object is generated, equals or exceeds a specific limit relative to a horizontal direction in the virtual space. By way of example, and without limitation, the specific limit may be 18 degrees. When the angle of the surface equals or exceeds the specific limit, the orientation of the object 1980 may be set such that sides 1982 and 1984 of an end surface of the object 1980 are parallel to a horizontal direction in the virtual space. Sides 1986 and 1988 of the top surface of the object 1980 may be set such that they are perpendicular to sides 1982 and 1984.
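The two orientation cases of FIGS. 18 and 19 may be sketched as a single selection based on the surface angle. The sketch assumes a y-up coordinate system (gravity along negative y), measures the surface angle as the angle between the surface normal and the up direction, and, for the steep case, picks the horizontal direction lying in the surface via a cross product. These choices and all function names are assumptions, not details given in the description.

```python
import math

ANGLE_LIMIT_DEG = 18.0  # example limit given in the description


def _dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def _cross(a, b):
    return (a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0])


def _normalize(v):
    length = math.sqrt(_dot(v, v))
    if length == 0.0:
        raise ValueError("zero-length vector has no direction")
    return tuple(x / length for x in v)


def surface_angle_deg(normal, up=(0.0, 1.0, 0.0)):
    """Angle of the surface relative to horizontal, in degrees."""
    cos_angle = max(-1.0, min(1.0, _dot(_normalize(normal), up)))
    return math.degrees(math.acos(cos_angle))


def side_direction(normal, imaging_axis, up=(0.0, 1.0, 0.0)):
    """Direction to which one pair of sides of the object is made parallel."""
    if surface_angle_deg(normal, up) < ANGLE_LIMIT_DEG:
        # FIG. 18 case: use the horizontal component of the imaging axis.
        k = _dot(imaging_axis, up)
        horizontal = tuple(a - k * u for a, u in zip(imaging_axis, up))
        return _normalize(horizontal)
    # FIG. 19 case: use a horizontal direction (assumed to lie in the surface).
    return _normalize(_cross(up, normal))
```

Note that the sketch raises an error when the imaging axis points straight up or down over a near-horizontal surface, since the axis then has no horizontal component; the description does not say how that case is handled.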

As illustrated inFIG. 19, if the object1980is generated from a surface that is parallel or approximately parallel to a gravity direction, a surface of the generated object1980that is perpendicular to the surface from which the object1980is generated may be used as a surface to step on by the player character or to hold another object.

Determining whether an object can be generated in the base direction and/or at the base may include determining whether the surface satisfies one or more predetermined conditions. For example, a condition may be that the surface is a water surface or a sand surface in the virtual space.

In other embodiments, determining whether an object can be generated in the base direction and/or at the base may include determining whether the surface satisfies one or more predetermined conditions at the location of the base. For example, one condition may include that the surface is a specific type of surface (e.g., a water surface) and a second condition may include that the depth of the water surface exceeds a specific value (e.g., 0.25 m in the virtual space). For example, if the depth of the water at the location of the base does not exceed the specific value, the determination may be made that the object cannot be generated at the location of the base. In one example embodiment, the whole surface at the base needs to satisfy this condition. Thus, a portion of the base cannot be located on another type of surface (e.g., a ground surface in the virtual space) for this embodiment.

As discussed above, the height of the indicator and/or the object may be set to a specific height. For example, the height may be set to 4.0 m in the virtual space. In other embodiments, the height of the object may be set based on the height of the indicator when a specific input is received. For example, as the indicator progressively extends in the base direction from the base, the height for the object may be set to the height of the indicator when a specific input is received.

Determining whether an object can be generated in the base direction at the base may include determining whether there is another object (e.g., a non-movable object) above and/or below the base. This determination may be made for a predetermined distance below the surface at the base and a predetermined distance above the surface at the base.

The predetermined distance above the surface may be the set specific height of the object, or to the set specific height plus or minus another value (e.g., 12.5% or 6.25% of the specific height of the object). The predetermined distance below the surface may be a specific value or a percentage of the set specific height (e.g., 12.5% or 6.25% of the specific height of the object). Thus, the search length for the non-movable object at the base may be equal to or exceed the set specific height of the object, and/or the search length for the non-movable object may extend below the surface and/or above a height at which the object will be generated. In one embodiment, if the depth of the water at the location of the base does not exceed the predetermined distance below the surface (i.e., the ground surface below the water is less than the predetermined distance below the surface) then the determination may be made that the object cannot be generated at the location of the base.
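As a worked example of the search distances above, the following sketch computes how far above and below the surface a non-movable object would be searched for, taking both margins as fractions of the set height. The function name and the choice to add (rather than subtract) the upper margin are assumptions made for illustration.

```python
def search_range(object_height, above_margin=0.125, below_margin=0.125):
    """Return (distance_above, distance_below) searched at the base.

    The margins are fractions of the set object height (12.5% here;
    6.25% is the other example value given in the description).
    """
    distance_above = object_height * (1.0 + above_margin)
    distance_below = object_height * below_margin
    return distance_above, distance_below
```

With the 4.0 m example height and 12.5% margins, this gives a search of 4.5 m above and 0.5 m below the surface, so the total search length exceeds the set height of the object.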

In one embodiment, the object may be generated in the virtual space from a location below the surface (e.g., from a predetermined distance below the surface or from another surface below the upper surface). If the depth of the water at the base is in a specific range (e.g., between 0.25 m and 0.5 m), the object may be generated from a lower surface (e.g., ground) that is provided below the main surface (e.g., water surface) in the base direction. If the depth of the water (e.g., distance from the water surface to the ground surface below the water surface) at the base exceeds the range, the object may be generated from a specific depth below the main surface (e.g., 0.5 m) or from the main surface in the base direction.
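The depth-dependent starting location may be sketched as below. The sketch combines the minimum-depth condition and the depth range from the examples in the description (0.25 m and 0.5 m) into one function, which is an assumption, and it picks the "specific depth below the main surface" option for deep water; the function name is hypothetical.

```python
def generation_start_depth(water_depth, min_depth=0.25, max_depth=0.5):
    """Return how far below the water surface generation starts.

    Returns None when the water is too shallow for the object to be generated.
    """
    if water_depth <= min_depth:
        return None            # depth does not exceed the specific value
    if water_depth <= max_depth:
        return water_depth     # within the range: start from the ground below
    return max_depth           # deeper water: start from a fixed depth
```

Under these assumptions, water 0.4 m deep starts the object at the ground 0.4 m below the water surface, while water 2.0 m deep starts it 0.5 m below the water surface.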

FIG. 20 is a block diagram of an example computing device 2000 (which may also be referred to, for example, as a “computing device,” “computer system,” or “computing system”) according to some embodiments. In some embodiments, the computing device 2000 includes one or more of the following: one or more processors 2002; one or more memory devices 2004; one or more network interface devices 2006; one or more display interfaces 2008; and one or more user input adapters 2010. Additionally, in some embodiments, the computing device 2000 is connected to or includes a display device 2012. As will be explained below, these elements (e.g., the processors 2002, memory devices 2004, network interface devices 2006, display interfaces 2008, user input adapters 2010, display device 2012) are hardware devices (for example, electronic circuits or combinations of circuits) that are configured to perform various different functions for the computing device 2000. For example, an application stored in memory devices 2004 may be executed by processors 2002 to generate images of a virtual space that are transmitted by the display interface 2008 to a display device 2012. Inputs to a controller may be received via user input adapters 2010 to control operation of the application. Such operations may change the position of the virtual camera in the virtual space and cause the processors 2002 to generate new images for display on the display device 2012.

In some embodiments, each or any of the processors2002is or includes, for example, a single- or multi-core processor, a microprocessor (e.g., which may be referred to as a central processing unit or CPU), a digital signal processor (DSP), a microprocessor in association with a DSP core, an Application Specific Integrated Circuit (ASIC), a Field Programmable Gate Array (FPGA) circuit, or a system-on-a-chip (SOC) (e.g., an integrated circuit that includes a CPU and other hardware components such as memory, networking interfaces, and the like). And/or, in some embodiments, each or any of the processors2002uses an instruction set architecture such as x86 or Advanced RISC Machine (ARM).

In some embodiments, each or any of the memory devices2004is or includes a random access memory (RAM) (such as a Dynamic RAM (DRAM) or Static RAM (SRAM)), a flash memory (based on, e.g., NAND or NOR technology), a hard disk, a magneto-optical medium, an optical medium, cache memory, a register (e.g., that holds instructions), or other type of device that performs the volatile or non-volatile storage of data and/or instructions (e.g., software that is executed on or by processors2002). Memory devices2004are examples of non-volatile computer-readable storage media.

In some embodiments, each or any of the network interface devices2006includes one or more circuits (such as a baseband processor and/or a wired or wireless transceiver), and implements layer one, layer two, and/or higher layers for one or more wired communications technologies (such as Ethernet (IEEE 802.3)) and/or wireless communications technologies (such as Bluetooth, WiFi (IEEE 802.11), GSM, CDMA2000, UMTS, LTE, LTE-Advanced (LTE-A), and/or other short-range, mid-range, and/or long-range wireless communications technologies). Transceivers may comprise circuitry for a transmitter and a receiver. The transmitter and receiver may share a common housing and may share some or all of the circuitry in the housing to perform transmission and reception. In some embodiments, the transmitter and receiver of a transceiver may not share any common circuitry and/or may be in the same or separate housings.

In some embodiments, each or any of the display interfaces2008is or includes one or more circuits that receive data from the processors2002, generate (e.g., via a discrete GPU, an integrated GPU, a CPU executing graphical processing, or the like) corresponding image data based on the received data, and/or output (e.g., a High-Definition Multimedia Interface (HDMI), a DisplayPort Interface, a Video Graphics Array (VGA) interface, a Digital Video Interface (DVI), or the like), the generated image data to the display device2012, which displays the image data. Alternatively or additionally, in some embodiments, each or any of the display interfaces2008is or includes, for example, a video card, video adapter, or graphics processing unit (GPU).

In some embodiments, each or any of the user input adapters2010is or includes one or more circuits that receive and process user input data from one or more user input devices (not shown inFIG. 20) that are included in, attached to, or otherwise in communication with the computing device2000, and that output data based on the received input data to the processors2002. Alternatively or additionally, in some embodiments each or any of the user input adapters2010is or includes, for example, a PS/2 interface, a USB interface, a touchscreen controller, or the like; and/or the user input adapters2010facilitates input from user input devices (e.g., seeFIG. 14) such as, for example, a keyboard, handheld controller, mouse, trackpad, touchscreen, and the like.

In some embodiments, the display device2012may be a Liquid Crystal Display (LCD) display, Light Emitting Diode (LED) display, or other type of display device. In embodiments where the display device2012is a component of the computing device2000(e.g., the computing device and the display device are included in a unified housing—SeeFIG. 14), the display device2012may be a touchscreen display or non-touchscreen display. In embodiments where the display device2012is connected to the computing device2000(e.g., is external to the computing device2000and communicates with the computing device2000via a wire and/or via wireless communication technology), the display device2012is, for example, an external monitor, projector, television, display screen, and the like.

In various embodiments, the computing device2000includes one, or two, or three, four, or more of each or any of the above-mentioned elements (e.g., the processors2002, memory devices2004, network interface devices2006, display interfaces2008, and user input adapters2010). Alternatively or additionally, in some embodiments, the computing device2000includes one or more of: a processing system that includes the processors2002; a memory or storage system that includes the memory devices2004; and a network interface system that includes the network interface devices2006.

The computing device 2000 may be arranged, in various embodiments, in many different ways. As just one example, the computing device 2000 may be arranged such that the processors 2002 include: a multi (or single)-core processor; a first network interface device (which implements, for example, WiFi, Bluetooth, NFC, etc.); a second network interface device that implements one or more cellular communication technologies (e.g., 3G, 4G LTE, CDMA, etc.); memory or storage devices (e.g., RAM, flash memory, or a hard disk). The processor, the first network interface device, the second network interface device, and the memory devices may be integrated as part of the same SOC (e.g., one integrated circuit chip). As another example, the computing device 2000 may be arranged such that: the processors 2002 include two, three, four, five, or more multi-core processors; the network interface devices 2006 include a first network interface device that implements Ethernet and a second network interface device that implements WiFi and/or Bluetooth; and the memory devices 2004 include a RAM and a flash memory or hard disk.

As previously noted, whenever it is described in this document that a software module or software process performs any action, the action is in actuality performed by underlying hardware elements according to the instructions that comprise the software module. Consistent with the foregoing, in various embodiments, each or any combination of the information processing device 1400 or other devices described in the '022 application, each of which will be referred to individually for clarity as a “component” for the remainder of this paragraph, are implemented using an example of the computing device 2000 of FIG. 20. In such embodiments, the following applies for each component: (a) the elements of the computing device 2000 shown in FIG. 20 (i.e., the one or more processors 2002, one or more memory devices 2004, one or more network interface devices 2006, one or more display interfaces 2008, and one or more user input adapters 2010, or appropriate combinations or subsets of the foregoing) are configured to, adapted to, and/or programmed to implement each or any combination of the actions, activities, or features described herein as performed by the component and/or by any software modules described herein as included within the component; (b) alternatively or additionally, to the extent it is described herein that one or more software modules exist within the component, in some embodiments, such software modules (as well as any data described herein as handled and/or used by the software modules) are stored in the memory devices 2004 (e.g., in various embodiments, in a volatile memory device such as a RAM or an instruction register and/or in a non-volatile memory device such as a flash memory or hard disk) and all actions described herein as performed by the software modules are performed by the processors 2002 in conjunction with, as appropriate, the other elements in and/or connected to the computing device 2000 (i.e., the network interface devices 2006, display interfaces 2008, user input adapters 2010, and/or display device 2012); (c) alternatively or additionally, to the extent it is described herein that the component processes and/or otherwise handles data, in some embodiments, such data is stored in the memory devices 2004 (e.g., in some embodiments, in a volatile memory device such as a RAM and/or in a non-volatile memory device such as a flash memory or hard disk) and/or is processed/handled by the processors 2002 in conjunction with, as appropriate, the other elements in and/or connected to the computing device 2000 (i.e., the network interface devices 2006, display interfaces 2008, user input adapters 2010, and/or display device 2012); (d) alternatively or additionally, in some embodiments, the memory devices 2004 store instructions that, when executed by the processors 2002, cause the processors 2002 to perform, in conjunction with, as appropriate, the other elements in and/or connected to the computing device 2000 (i.e., the memory devices 2004, network interface devices 2006, display interfaces 2008, user input adapters 2010, and/or display device 2012), each or any combination of actions described herein as performed by the component and/or by any software modules described herein as included within the component.

Although the processes illustrated and described herein include series of steps, it will be appreciated that the different embodiments of the present disclosure are not limited by the illustrated ordering of steps, as some steps may occur in different orders, some concurrently with other steps apart from that shown and described herein. In addition, not all illustrated steps may be required to implement a methodology in accordance with the present disclosure. Moreover, it will be appreciated that the processes may be implemented in association with the apparatus and systems illustrated and described herein as well as in association with other systems not illustrated.

Although example embodiments of the present disclosure have been illustrated and described hereinabove, the present disclosure is not limited to the above-mentioned specific example embodiments, but may be variously modified by those skilled in the art to which the present disclosure pertains without departing from the scope and spirit of the disclosure as disclosed in the accompanying claims. These modifications should also be understood to fall within the scope of the present disclosure.

Claims

- An information processing apparatus comprising a processing system including at least one processor, the processing system being configured to control the information processing apparatus to at least: determine a base and a base direction in a virtual space; determine whether an object can be generated in the virtual space relative to the base in the base direction; based on determining that the object can be generated in the virtual space relative to the base in the base direction, display an animated indicator indicating at least a size of the object and the base direction for generating the object in the virtual space, wherein the indicator comprises a repeated animation in which the indicator progressively extends from the base in the base direction until extended in the base direction for a distance corresponding to a dimension in the base direction of the object to be generated; and based on determining that a specified condition is satisfied, progressively generate the object relative to the base in the base direction.

- The information processing apparatus of claim 1, wherein the base is determined based on an intersection location of an imaging axis of a virtual camera provided in the virtual space and a surface in the virtual space.

- The information processing apparatus of claim 2, wherein location and/or orientation of the virtual camera is controlled based on location and/or orientation of a virtual character in the virtual space and/or inputs for controlling the virtual camera.

- The information processing apparatus of claim 2, wherein the processing system is further configured to, based on determining that the object cannot be generated relative to the base in the base direction, display the indicator, without animation indicating the base direction, at the intersection location of the imaging axis of the virtual camera and the surface.

- The information processing apparatus of claim 1, wherein the base is a base point or a base plane.

- The information processing apparatus of claim 5, wherein the indicator is an arrow dynamically extending from the base point or the base plane in the base direction.

- The information processing apparatus of claim 1, wherein the indicator is a pillar or a sphere displayed increasing in size from the base in the base direction.

- The information processing apparatus of claim 1, wherein the processing system is further configured to control the information processing apparatus to: determine whether the object to be generated relative to the base will collide with a non-movable object in the virtual space, and determine that the object cannot be generated relative to the base in the base direction based on determining that the object to be generated relative to the base will collide with the non-movable object in the virtual space.

- The information processing apparatus of claim 1, wherein the processing system is further configured to control the information processing apparatus to, upon the object being generated colliding with another object in the virtual space, move the other object according to the object being generated in the base direction.

- The information processing apparatus of claim 1, wherein the processing system is further configured to control the information processing apparatus to, upon the object being generated colliding with another object in the virtual space that is not movable, stop the generating of the object and remove the object being generated from the virtual space.

- The information processing apparatus of claim 1, wherein the processing system is further configured to control the information processing apparatus to: in response to a specific input, enter an object generating mode; and upon entering the object generating mode, change color, brightness, or texture of a surface on which the object can be generated.

- The information processing apparatus of claim 1, wherein the object is a rectangular prism and the indicator includes a shape that corresponds to the shape of the rectangular prism, and the processing system is further configured to control the information processing apparatus to determine an orientation for the rectangular prism such that two opposite sides of a top surface of the rectangular prism are parallel to a horizontal component of an imaging axis of a virtual camera in the virtual space, and generate the object having the determined orientation.

- The information processing apparatus of claim 1, wherein the processing system is further configured to control the information processing apparatus to move the indicator in the virtual space according to movement of the base, and determine that the specified condition is satisfied when user instructions to generate the object are received.

- The information processing apparatus of claim 1, wherein the indicator indicates a position of the object to be generated in the virtual space.

- The information processing apparatus of claim 1, wherein determining whether the object can be generated in the virtual space includes determining whether the base in the virtual space is located on a surface having a characteristic permitting the object to be generated, and a determination is made that the object can be generated relative to the base in the base direction when the base is located on the surface having the characteristic permitting the object to be generated.

- The information processing apparatus of claim 15, wherein the determination is made that the object cannot be generated relative to the base in the base direction when the base is located on a surface without a characteristic permitting the object to be generated.

- An information processing system comprising: a display; and a computer including at least one processor, the computer being configured to control the information processing system to at least: display, on the display, an image of a virtual space; determine a base and a base direction in the virtual space; determine whether an object can be generated in the virtual space relative to the base in the base direction; based on determining that the object can be generated in the virtual space relative to the base in the base direction, display, on the display, an animated indicator indicating at least a size of the object and the base direction for generating the object in the virtual space, wherein the indicator comprises a repeated animation in which the indicator progressively extends from the base in the base direction until extended in the base direction for a distance corresponding to a dimension in the base direction of the object to be generated; and based on determining that a specified condition is satisfied, progressively generate the object relative to the base in the base direction.

- The system according to claim 17, wherein the base is determined based on an intersection location of an imaging axis of a virtual camera provided in the virtual space and a surface in the virtual space, and the location of the base is changed in response to changes in the location and/or orientation of the virtual camera.

- The system according to claim 17, wherein the object is a rectangular prism and the indicator includes a shape that corresponds to the shape of the rectangular prism, and the computer is further configured to control the information processing system to determine an orientation for the rectangular prism such that two opposite sides of a top surface of the rectangular prism are parallel to a horizontal component of an imaging axis of a virtual camera in the virtual space, and generate the object having the determined orientation.

- A method implemented at least in part by at least one computer including a processor, the method comprising: determining a base and a base direction in a virtual space; determining whether an object can be generated in the virtual space relative to the base in the base direction; based on determining that the object can be generated in the virtual space relative to the base in the base direction, displaying an animated indicator indicating at least a size of the object and the base direction for generating the object in the virtual space, wherein the indicator comprises a repeated animation in which the indicator progressively extends from the base in the base direction until extended in the base direction for a distance corresponding to a dimension in the base direction of the object to be generated; and based on determining that a specified condition is satisfied, progressively generating the object relative to the base in the base direction.

- The method according to claim 20, wherein the base is determined based on an intersection location of an imaging axis of a virtual camera provided in the virtual space and a surface in the virtual space, and the location of the base is changed in response to changes in the location and/or orientation of the virtual camera.

- The method according to claim 20, wherein the object is a rectangular prism and the indicator includes a shape that corresponds to the shape of the rectangular prism, and the method further includes determining an orientation for the rectangular prism such that two opposite sides of a top surface of the rectangular prism are parallel to a horizontal component of an imaging axis of a virtual camera in the virtual space, and generating the object having the determined orientation.

- A non-transitory computer-readable medium storing a program causing an information processing device to at least: determine a base and a base direction in a virtual space; determine whether an object can be generated in the virtual space relative to the base in the base direction; based on determining that the object can be generated in the virtual space relative to the base in the base direction, display an animated indicator indicating at least a size of the object and the base direction for generating the object in the virtual space, wherein the indicator comprises a repeated animation in which the indicator progressively extends from the base in the base direction until extended in the base direction for a distance corresponding to a dimension in the base direction of the object to be generated; and based on determining that a specified condition is satisfied, progressively generate the object relative to the base in the base direction.

- The non-transitory computer-readable medium according to claim 23, wherein the base is determined based on an intersection location of an imaging axis of a virtual camera provided in the virtual space and a surface in the virtual space, and the location of the base is changed in response to changes in the location and/or orientation of the virtual camera.
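
By way of illustration only, and without limiting the claims above, three of the recited operations can be sketched in Python: determining the base from the intersection of the imaging axis of the virtual camera with a surface, orienting a rectangular prism so that two opposite sides of its top surface are parallel to the horizontal component of the imaging axis, and computing the length of the repeatedly extending indicator. The function names, the y-up coordinate convention, the restriction to a flat horizontal surface, the fallback when the camera looks straight up or down, and the grow and hold durations are all assumptions made for this sketch; none of them is taken from the disclosure.

```python
import math


def base_from_camera(cam_pos, cam_dir, ground_y=0.0):
    """Intersect the imaging axis with the horizontal plane y = ground_y.

    Assumes a y-up coordinate system and a flat surface (both hypothetical).
    Returns the intersection point, or None when the axis is parallel to the
    plane or points away from it.
    """
    px, py, pz = cam_pos
    dx, dy, dz = cam_dir
    if abs(dy) < 1e-9:
        return None  # axis parallel to the surface: no intersection
    t = (ground_y - py) / dy
    if t <= 0:
        return None  # surface lies behind the camera
    return (px + t * dx, ground_y, pz + t * dz)


def prism_yaw(cam_dir):
    """Yaw (radians, about the y axis) that aligns two opposite top edges of
    the prism with the horizontal component of the imaging axis."""
    dx, _, dz = cam_dir
    if math.hypot(dx, dz) < 1e-9:
        return 0.0  # assumed fallback: camera looks straight up or down
    return math.atan2(dx, dz)


def indicator_length(elapsed, object_length, grow_time=1.0, hold_time=0.25):
    """Length of the indicator along the base direction at time `elapsed`.

    The indicator grows from zero to `object_length` over `grow_time`, holds
    at full length for `hold_time`, then the animation repeats.
    """
    t = elapsed % (grow_time + hold_time)
    return object_length * min(t / grow_time, 1.0)
```

In this sketch the indicator length is capped at the dimension of the object in the base direction, so the animation previews the size of the object before any generation is triggered. A check of whether the object can be generated (for example, a surface characteristic test or a collision test against non-movable objects) would gate whether the animated form of the indicator is shown; that check is omitted here.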
