U.S. Pat. No. 10,278,001

MULTIPLE LISTENER CLOUD RENDER WITH ENHANCED INSTANT REPLAY

Assignee: Microsoft Technology Licensing, LLC

Issue Date: April 30, 2019

U.S. Patent no. 10,278,001: Multiple listener cloud render with enhanced instant replay

Issued April 30, 2019, to Microsoft Technology Licensing, LLC

Priority Date May 12, 2017

Summary:

U.S. Patent No. 10,278,001 (the ‘001 Patent) relates to streaming video platforms, specifically those used to stream video games, such as Twitch, where people stream playthroughs and esports competitions. The ‘001 Patent details a system that generates recorded video data along with corresponding audio, which can then be streamed to and rendered on multiple computers. The system automatically generates customized scenes of game play by selecting a location and direction for a virtual camera perspective from which to record. The camera perspective can also be used to “follow the action” of the game play. The invention lets spectators observe recorded events or live events streamed in real time, and it can also be used to create an instant replay of relevant game play, improving how streamed game play content is viewed.

Abstract:

The techniques disclosed herein provide a high fidelity, rich, and engaging experience for spectators of streaming video services. The techniques disclosed herein enable a system to receive, process, and store session data defining activity of a virtual reality environment. The system can generate recorded video data of the session activity along with rendered spatial audio data, e.g., render the spatial audio in the cloud, for streaming of the video data and rendered spatial audio data to one or more computers. The video data and rendered spatial audio data can provide high fidelity video clips of salient activity of a virtual reality environment. In one illustrative example, the system can automatically create a video from one or more camera positions and audio data that corresponds to the camera positions.

Illustrative Claim:

1. A computing device, comprising: a processor; a memory having computer-executable instructions stored thereupon which, when executed by the processor, cause the computing device to receive session data defining a virtual reality environment comprising a participant object, the session data allowing a participant to provide a participant input for controlling a location of the participant object and a direction of the participant object, analyze the session data to determine a level of activity associated with the participant object, determine a level of activity associated with one or more virtual objects, select a location and a direction of a virtual camera perspective, wherein the virtual camera perspective is a first-person perspective projecting from the location of the participant object, wherein the direction is towards the one or more virtual objects when the level of activity of one or more virtual objects is greater than the activity level of the participant object, and generate an output file comprising video data having images from the location and direction of the virtual camera perspective, wherein the output file further comprises audio data for causing an output device to emanate an audio output from a speaker object location that is selected based on the location of the virtual camera perspective, wherein the audio output emanating from the speaker object location models the location and direction of the virtual camera perspective.

Illustrative Figure

Description

DETAILED DESCRIPTION

The techniques disclosed herein provide a high fidelity, rich, and engaging experience for spectators of streaming video services. In some configurations, a system can receive or generate session data defining a virtual reality environment comprising a participant object. The session data can allow a participant to provide a participant input for controlling a location of the participant object and a direction of the participant object. A participant object, for example, can include an avatar, vehicle, or other virtual reality object associated with a user.

A system can analyze the session data to determine a level of activity associated with the participant object or another virtual reality object. In some configurations, the system can also analyze the session data to determine a type of activity associated with the participant object or another virtual reality object. The system can then select a location and a direction of a virtual camera perspective based on the level of activity and/or the activity type associated with the participant object or another object. For illustrative purposes, the virtual camera perspective is also referred to herein as a “virtual camera viewing area.” Data defining the perspective can include a two-dimensional or a three-dimensional area of a virtual environment where virtual objects, such as an avatar or a boundary, within the perspective are deemed as visible to a virtual reality object, such as a participant object. The virtual camera perspective can be from a first-person perspective of a participant object or the virtual camera perspective can be from a third-person perspective.

The system can then generate an output file comprising video data having images from the location and direction of the virtual camera perspective. The output file further comprises audio data for causing an output device to emanate an audio output from a speaker object location that is selected based on the location of the virtual camera perspective, wherein the audio output emanating from the speaker object location models the location and direction of the virtual camera perspective.

In some configurations, the system generates an audio output based on the Ambisonics technology. In some configurations, the techniques disclosed herein can generate an audio output based on other technologies including a channel-based technology and/or an object-based technology. Based on such technologies, audio streams associated with individual objects can be rendered from any position and from any viewing direction by the use of the audio output.

When the system generates an Ambisonics-based audio output, such an output can be communicated to a number of client computing devices. In some configurations, a server can render the audio output in accordance with the selected camera perspective orientation and communicate the output to one or more client computing devices. The client computing device can rotate a model of audio objects defined in the audio output. The system can cause a rendering of an audio output that is consistent with the spectator's new orientation. Although other technologies can be used, such as an object-based technology, configurations utilizing the Ambisonics technology may provide additional performance benefits given that an audio output based on the Ambisonics technology can be rotated after the fact, e.g., after the audio output has been generated.

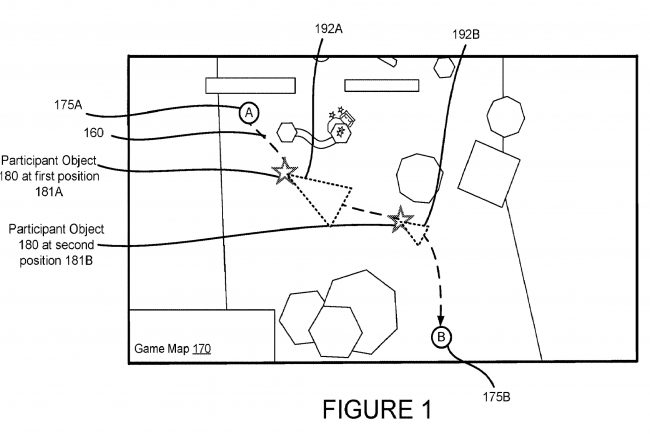

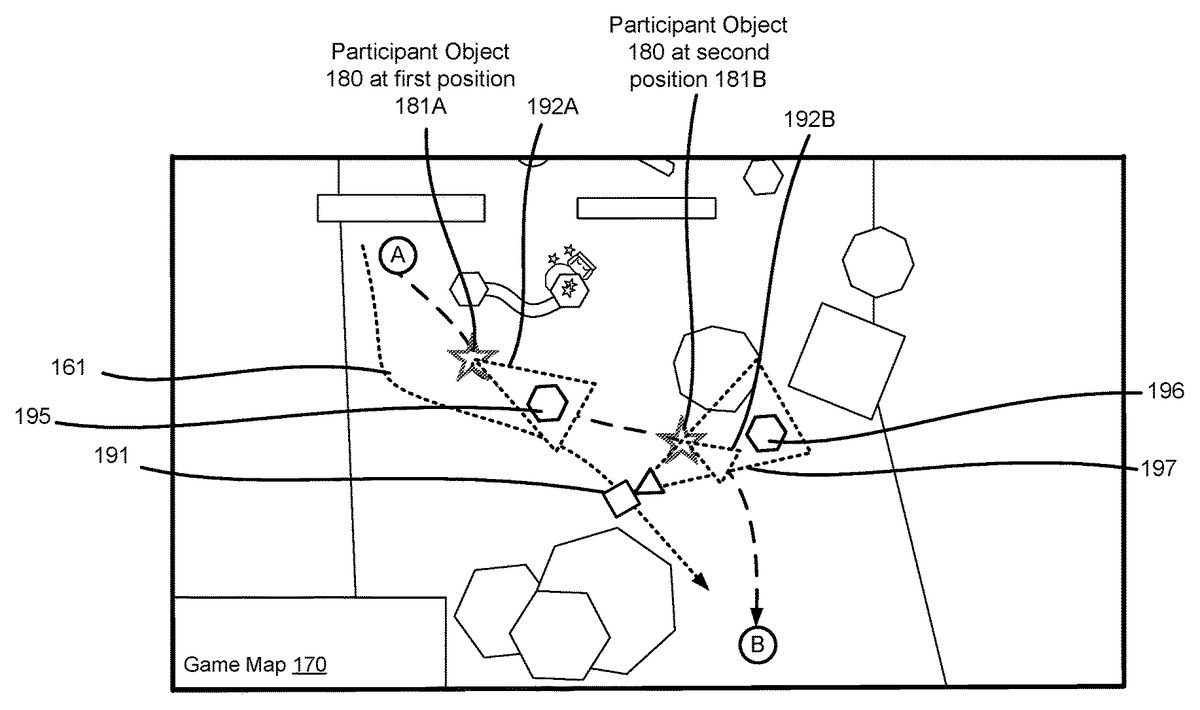

As summarized above, one or more systems can utilize session data defining a virtual reality environment comprising a participant object, the session data allowing a participant to provide a participant input for controlling a location of the participant object and a direction of the participant object. For illustrative purposes, aspects of a virtual reality environment are shown in FIG. 1 and FIG. 2.

FIG. 1 illustrates a top view of a rendering of a game map 170 that is based on session data. In this illustrative example, a participant object 180 is traveling down a path 160 from a first point 175A to a second point 175B. This illustrative example shows the participant object 180 at a first position 181A having a first perspective 192A. This example also shows the participant object 180 at a second position 181B having a second perspective 192B. The participant object 180 can interact with a number of virtual objects, some of which are shown in this example as hexagons and rectangles.

FIG. 2 illustrates a top view of the game map 170 showing a virtual camera 191 having a virtual camera perspective 197. In addition, the game map 170 shown in FIG. 2 comprises virtual objects within the perspective 192, e.g., viewing area, of the participant object 180. More particularly, a first virtual object 195 is within the first perspective 192A when the participant object 180 is at the first position 181A. A second virtual object 196 is not within the second perspective 192B when the participant object 180 is at the second position 181B. However, the virtual object 196 is within the virtual camera perspective 197 of the virtual camera 191.

In some configurations, the virtual camera 191 can follow a camera path 161 that follows the path 160 of the participant object 180. Video data can be recorded from the perspective 192 of the participant object 180 or from the perspective 197 of the virtual camera 191. The location of the virtual camera 191, and thus the virtual camera perspective 197, can be within a threshold distance from the path 160 of the participant object 180. The threshold distance, for example, can be any suitable distance for capturing multiple objects having a threshold level of activity or a threshold level of interaction within the virtual camera perspective 197.
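For illustrative purposes, the threshold-distance constraint can be sketched as follows. This is a minimal sketch, not the patented implementation: the function name `clamp_camera`, the two-dimensional coordinates, and the use of the participant object's current position as a stand-in for the nearest point on the path 160 are all assumptions made for the example.

```python
import math
from typing import Tuple

Point = Tuple[float, float]


def clamp_camera(participant_xy: Point, camera_xy: Point, max_distance: float) -> Point:
    """Keep a hypothetical virtual camera within max_distance of the participant.

    Assumption: the participant's current position approximates the closest
    point on the participant path.
    """
    px, py = participant_xy
    dx = camera_xy[0] - px
    dy = camera_xy[1] - py
    distance = math.hypot(dx, dy)
    # Already close enough (or exactly on the participant): leave the camera alone.
    if distance <= max_distance or distance == 0.0:
        return camera_xy
    # Otherwise pull the camera back along the same direction.
    scale = max_distance / distance
    return (px + dx * scale, py + dy * scale)
```

Calling `clamp_camera` once per frame with the camera's desired position would keep a camera path such as path 161 near the participant while leaving the camera free to move inside the threshold.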

In some configurations, the video data can be generated from a first-person perspective projecting from the location of the participant object 180 when virtual objects within the first-person perspective have a threshold level of activity. For example, when the first object 195 has a threshold level of activity, such as a certain amount of movement or a particular change in a state, the system may select the perspective 192A of the participant object 180 for recording video data.

In some configurations, the video data can be generated from a third-person perspective 197 directed toward the participant object 180 and a virtual object 196 when the participant object 180 has a threshold level of activity or a threshold level of interaction with the virtual object 196. For example, where the participant object 180 is an avatar and the avatar is interacting with another virtual object 196, such as a virtual weapon or a virtual tool, a selected camera angle for the video data may be arranged to record video data depicting the participant object interacting with the virtual object.

In some configurations, the system can analyze and compare a level of activity with respect to each virtual object in a virtual environment. Video data can then be generated to include the virtual object having a greater level of activity. For example, as shown in FIG. 2, a system can analyze the session data to determine a level of activity associated with one or more virtual objects. The virtual camera perspective generating video data can be a first-person perspective 192A projecting from the location 181A of the participant object 180 when the level of activity of one or more virtual objects, such as object 195 within the first-person perspective 192A, is greater than the activity level of the participant object 180.

In some configurations, the virtual camera perspective used for generating video data can be a third-person perspective 197 directed toward the participant object 180 when the participant object 180 has a threshold level of activity. For instance, if the participant object 180 is moving at a threshold velocity or is moving in a particular manner, also referred to herein as a type of activity, the system may select the third-person perspective 197 for generating video data.
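The activity-based selection described in the preceding configurations can be sketched as follows. This is a hedged illustration only: the `TrackedObject` type, the numeric `activity` score, the default threshold of 0.5, and the fallback to a first-person view are assumptions introduced for the example and are not specified by the patent.

```python
from dataclasses import dataclass
from typing import Sequence


@dataclass
class TrackedObject:
    name: str
    activity: float  # hypothetical score, e.g. derived from movement or state changes


def select_perspective(participant: TrackedObject,
                       visible_objects: Sequence[TrackedObject],
                       participant_threshold: float = 0.5) -> str:
    """Return "first_person" or "third_person" for the virtual camera."""
    busiest = max((obj.activity for obj in visible_objects), default=0.0)
    # Objects in view are more active than the participant:
    # record what the participant object sees.
    if busiest > participant.activity:
        return "first_person"
    # The participant itself is the salient activity: record it from outside.
    if participant.activity >= participant_threshold:
        return "third_person"
    # Assumed fallback when nothing crosses a threshold.
    return "first_person"
```

A score per object keeps the comparison in the first branch simple; a real system could derive such a score from velocity, state changes, or interaction counts in the session data.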

In some configurations, the virtual camera perspective used for generating video data can toggle between the first-person perspective 192B and a third-person perspective 197 based on one or more time periods. Such techniques enable periodic switching between different perspectives.

In some configurations, the virtual camera perspective used for generating video data can be based on a type of activity. For example, the virtual camera perspective can be a first-person perspective 192A projecting from the location 181A of the participant object 180 when a first type of activity is detected, and the virtual camera perspective is a third-person perspective 197 directed toward the participant object 180 when a second type of activity is detected. In some illustrative examples, the first type of activity can include, but is not limited to, a category of moves, a type of movement, a gesture, an interaction between objects, etc. More specific examples of a first type of activity may include movement of a player in a predetermined direction, e.g., forward, backward, left, or right. A first type of activity may include a turning motion. A second type of activity can also include, but is not limited to, a category of moves, a type of movement, a gesture, an interaction between objects, etc. More specific examples of a second type of activity may include a player, e.g., the participant object, operating a weapon or tool in association with another virtual object, jumping over another virtual object, etc.

FIG. 3A is an example user interface showing a rendering of a first-person perspective 192 from video data captured by a virtual camera. As shown, the interface of the first-person perspective 192 can include one or more virtual objects. Also shown, the user interface can include graphical elements 304 indicating contextual information regarding the session. For instance, a system can receive the contextual information from a client device controlled by a participant, and renderings of the contextual information can be integrated into the generated video data. FIG. 3B is an example user interface showing a rendering of a third-person perspective 197 from video data captured by a virtual camera. As shown, the interface of the third-person perspective 197 can include one or more virtual objects, including the participant object 180 and a virtual object 196.

As summarized above, media data, such as a video file, can be generated to enable a playback device to play a video having sound that follows selected camera perspectives and renders an audio output from the portions of the object-based audio. The system can generate an output file comprising video data having images from the location and direction of the virtual camera perspective, wherein the output file further comprises audio data for causing an output device to emanate an audio output from a speaker object location that is selected based on the location of the virtual camera perspective, wherein the audio output emanating from the speaker object location models the location and direction of the virtual camera perspective. FIGS. 4-9 illustrate such features in more detail.

FIG. 4 illustrates a scenario where a computer is managing a virtual reality environment that is displayed on a user interface 100. The virtual reality environment comprises a participant object 101, also referred to herein as an “avatar,” that is controlled by a participant. The participant object 101 is moving through the virtual reality environment following a path 151. A system provides a participant viewing area 103 and a camera viewing area 105, also referred to herein as a virtual camera perspective 197. In this example, the participant viewing area 103 is aligned with the camera viewing area 105. More specifically, the participant object 101 is pointing in a first direction 110 and the camera viewing area 105 is also pointing in the first direction 110. In this scenario, data defining the camera viewing area 105 is communicated to computing devices associated with spectators for the display of objects in the virtual reality environment that fall within the camera viewing area 105. Similarly, the computing device associated with the participant displays objects in the virtual reality environment that fall within the viewing area 103.

Also shown in FIG. 4, within the virtual reality environment, a first audio object 120A and a second audio object 120B (collectively referred to herein as audio objects 120) are respectively positioned on the left and the right side of the participant object 101. In such an example, data defining the location of the first audio object 120A can cause a system to render an audio signal of a stream that indicates the location of the first audio object 120A. In addition, data defining the location of the second audio object 120B would cause a system to render an audio signal that indicates the location of the second audio object 120B. More specifically, in this example, the participant and the spectator of the generated video would both hear the stream associated with the first audio object 120A emanating from a speaker on their left. The participant and the spectator would also hear the stream associated with the second audio object 120B emanating from a speaker on their right.

In some configurations, data indicating the direction of a stream can be used to influence how a stream is rendered to a speaker. For instance, in FIG. 4, the stream associated with the second audio object 120B could be directed away from the participant object 101, and in such a scenario, an output of a speaker may include effects, such as an echoing effect or a reverb effect, to indicate that direction.

Referring now to FIG. 5, consider a scenario where the camera viewing area 105 has rotated towards a second direction 111. In this example, the second direction 111 is 180 degrees from the participant viewing area 103. In this scenario, the computing device associated with the spectator would display objects in a virtual reality environment that fall within the camera viewing area 105. The computing device associated with the participant would independently display objects in a virtual reality environment that fall within the participant viewing area 103.

In addition, the system can generate spectator audio output data comprising streams associated with the audio objects 120. The spectator audio output data can cause an output device to emanate audio of the stream from a speaker object location positioned relative to the spectator, where the speaker object location models the direction of the spectator view and the location of the audio object 120 relative to the location of the participant object. Specific to the example shown in FIG. 5, when the system selects a virtual camera direction that is rotated, e.g., the direction of the camera viewing area 105 has changed, the spectator of the video would hear the stream associated with the first audio object 120A emanating from a speaker on the right side of the spectator. In addition, the spectator would hear the stream associated with the second audio object 120B emanating from a speaker on the left side of the spectator.
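The left/right swap in the FIG. 4 and FIG. 5 examples follows from computing each audio object's direction relative to the camera heading. The sketch below illustrates this under stated assumptions: positions are two-dimensional, headings are measured counterclockwise from the +x axis in degrees, and the function names and coordinates are hypothetical.

```python
import math
from typing import Tuple

Point = Tuple[float, float]


def relative_azimuth_deg(camera_xy: Point, camera_heading_deg: float, source_xy: Point) -> float:
    """Angle of the source relative to the camera heading, in [-180, 180).

    Positive values are to the listener's left (counterclockwise),
    negative values are to the listener's right.
    """
    dx = source_xy[0] - camera_xy[0]
    dy = source_xy[1] - camera_xy[1]
    bearing = math.degrees(math.atan2(dy, dx))
    return (bearing - camera_heading_deg + 180.0) % 360.0 - 180.0


def side(relative_deg: float) -> str:
    """Classify a relative azimuth as left, right, or on the front/back axis."""
    if 0.0 < relative_deg < 180.0:
        return "left"
    if -180.0 < relative_deg < 0.0:
        return "right"
    return "center line"


# Hypothetical layout: camera at the origin, audio object 120A at x = -1, 120B at x = +1.
AUDIO_120A = (-1.0, 0.0)
AUDIO_120B = (1.0, 0.0)
```

With the camera facing the +y direction (heading 90), object 120A falls on the left and 120B on the right; with the heading rotated by 180 degrees (heading 270), the sides swap, matching the FIG. 5 scenario.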

Although the example shown in FIG. 4 and FIG. 5 illustrates a two-dimensional representation of a virtual reality environment, it can be appreciated that the techniques disclosed herein can apply to a three-dimensional environment. Thus, a rotation of a viewing area for the participant or the spectator can have a vertical component as well as a horizontal component. FIG. 6 illustrates aspects of such configurations. In the example of FIG. 6, a first direction of a camera viewing area 105 is shown relative to a first audio object 120A and a second audio object 120B. Similar to the example described above, in this arrangement, the spectator of the video that is based on the viewing area 105 would hear streams associated with the first audio object 120A emanating from a speaker on his/her left, and streams associated with the second audio object 120B emanating from a speaker on his/her right.

Now turning to FIG. 7, consider a scenario where the system rotates the camera viewing area 105 both in a horizontal direction and a vertical direction. As shown, a second direction of the camera viewing area 105B is shown relative to a first audio object 120A and a second audio object 120B. Given this example rotation, the spectator of the video would hear streams associated with the first audio object 120A emanating from a location that is located in front of him/her and slightly overhead. In addition, the spectator would hear streams associated with the second audio object 120B emanating from a location that is located behind him/her.

FIG. 8 illustrates a model 500 having a plurality of speaker objects 501-505 (501A-501H, 505A-505H). Each speaker object is associated with a particular location within a three-dimensional area relative to a user, such as a participant or spectator. For example, a particular speaker object can have a location designated by an X, Y and Z value. In this example, the model 500 comprises a number of speaker objects 505A-505H positioned around a perimeter of a plane. In this example, the first speaker object 505A is a front-right speaker object, the second speaker object 505B is the front-center speaker, and the third speaker object 505C is the front-left speaker. The other speaker objects 505D-505H include surrounding speaker locations within the plane. The model 500 also comprises a number of speakers 501A-501H positioned below the plane. The speaker locations can be based on real, physical speakers positioned by an output device, or the speaker locations can be based on virtual speaker objects that provide an audio output simulating a physical speaker at a predetermined position.

As summarized herein, a system can generate a spectator audio output signal of a stream, wherein the spectator audio output signal causes an output device to emanate an audio output of the stream from a speaker object location positioned relative to the spectator 550. The speaker object location can model the direction of the camera viewing area 105 (a “spectator view”) and the location of the audio object 120 relative to the location of the participant object 101. For illustrative purposes, the model 500 is used to illustrate aspects of the example shown in FIG. 2. In such an example, the speaker object 505H can be associated with an audio stream of the first audio object 120A, e.g., on the right side of the spectator. The speaker object 505D can be associated with an audio stream of the second audio object 120B, e.g., on the left side of the spectator.
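One way to picture the association between an audio object and a speaker object is to pick the speaker object whose direction is closest to the direction of the audio object relative to the spectator. The sketch below assumes a ring of eight speaker objects spaced 45 degrees apart. The text only states that 505A is front-right, 505B is front-center, 505C is front-left, 505D is on the left side, and 505H is on the right side; the exact angles and the positions of 505E-505G are assumptions chosen to be consistent with that description.

```python
from typing import Dict

# Assumed azimuths in degrees; 0 is straight ahead, positive is to the left.
SPEAKER_AZIMUTHS_DEG: Dict[str, float] = {
    "505A": -45.0,   # front-right (stated in the text)
    "505B": 0.0,     # front-center (stated in the text)
    "505C": 45.0,    # front-left (stated in the text)
    "505D": 90.0,    # left side (consistent with the text)
    "505E": 135.0,   # assumed rear-left
    "505F": 180.0,   # assumed rear-center
    "505G": -135.0,  # assumed rear-right
    "505H": -90.0,   # right side (consistent with the text)
}


def angular_distance_deg(a: float, b: float) -> float:
    """Smallest absolute difference between two angles, in degrees."""
    return abs((a - b + 180.0) % 360.0 - 180.0)


def nearest_speaker(azimuth_deg: float) -> str:
    """Return the speaker object closest to the given relative azimuth."""
    return min(
        SPEAKER_AZIMUTHS_DEG,
        key=lambda name: angular_distance_deg(azimuth_deg, SPEAKER_AZIMUTHS_DEG[name]),
    )
```

Snapping to a single speaker object is a simplification made for clarity; a renderer could instead pan a stream between adjacent speaker objects.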

FIG. 9 illustrates aspects of a system 600 for implementing the techniques disclosed herein. The system 600 comprises a participant device 601, a plurality of spectator devices 602 (602A up to 602N devices), and a server 620. In this example, the participant device 601, which may be running a gaming application, communicates data defining a 360 canvas 649 and audio data 652 to the server 620. The 360 canvas 649 and audio data 652 can be stored as session data 613 where it can be accessed in real time as the data is generated or accessed after the fact as a recording. A recording can be generated as video data 650 in a manner as described above. For instance, video data 650 can be processed by the recording engine 690 to include scenes from selected virtual camera perspectives. The recording engine 690 can generate audio data 652 that can cause an output device to emanate an audio output from a speaker object location that is selected based on the location of a virtual camera perspective, wherein the audio output emanating from the speaker object location models the location and direction of the virtual camera perspective.

In some embodiments, the audio data 652 can comprise an audio signal to the output device in accordance with an Ambisonics-based technology, wherein the output signal defines at least one sound field modeling the location of an audio object associated with a stream, wherein data defining the sound field can be interpreted by one or more computing devices for causing the output device to emanate the audio output based on the stream from the speaker object location.

The video data 650 and audio data 652 can be communicated to the spectator devices 602. Given that the video data 650 and the audio data 652 include a video of a session, the client computing devices can then play the video and audio so that spectators hear sounds from orientations and locations that are consistent with the video that was recorded.

Generally described, output data, e.g., an audio output, based on the Ambisonics technology involves a full-sphere surround sound technique. In addition to the horizontal plane, the output data covers sound sources above and below the listener. Thus, in addition to defining a number of other properties for each stream, each stream is associated with a location defined by a three-dimensional coordinate system.

An audio output based on the Ambisonics technology can contain a speaker-independent representation of a sound field called the B-format, which is configured to be decoded by a listener's (spectator or participant) output device. This configuration allows the system 100 to record data in terms of source directions rather than loudspeaker positions, and offers the listener a considerable degree of flexibility as to the layout and number of speakers used for playback.
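The property that an Ambisonics-based output can be rotated after it has been generated can be illustrated with first-order B-format. The sketch below uses the traditional first-order encoding equations (W, X, Y, Z with W scaled by 1/√2, X pointing forward, Y pointing left, Z pointing up) and a rotation about the vertical axis. It is a minimal single-sample illustration; the function names are hypothetical, and nothing here is taken from the patent's own implementation.

```python
import math
from typing import Tuple

BFormat = Tuple[float, float, float, float]  # (W, X, Y, Z)


def encode_b_format(sample: float, azimuth_deg: float, elevation_deg: float) -> BFormat:
    """Encode one mono sample arriving from the given direction.

    Azimuth is measured counterclockwise from straight ahead (positive = left).
    """
    az = math.radians(azimuth_deg)
    el = math.radians(elevation_deg)
    w = sample / math.sqrt(2.0)
    x = sample * math.cos(az) * math.cos(el)
    y = sample * math.sin(az) * math.cos(el)
    z = sample * math.sin(el)
    return (w, x, y, z)


def rotate_yaw(b: BFormat, yaw_deg: float) -> BFormat:
    """Rotate the whole sound field counterclockwise about the vertical axis.

    W (omnidirectional) and Z (vertical) are unaffected by a yaw rotation.
    """
    w, x, y, z = b
    t = math.radians(yaw_deg)
    return (w, x * math.cos(t) - y * math.sin(t), x * math.sin(t) + y * math.cos(t), z)
```

For example, a source encoded at azimuth 90 (directly left) and then rotated by 180 degrees matches a source encoded at azimuth -90 (directly right), which corresponds to the swap heard by the spectator when the camera viewing area turns around. To compensate for a listener who turns by some angle, the field would be rotated by the negative of that angle. Because the rotation acts on the four channels only, it does not require access to the original per-object streams.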

Turning now to FIG. 10, aspects of a routine 1000 for providing video data having automatically selected virtual camera positions based on session activity are shown and described below. It should be understood that the operations of the methods disclosed herein are not necessarily presented in any particular order and that performance of some or all of the operations in an alternative order(s) is possible and is contemplated. The operations have been presented in the demonstrated order for ease of description and illustration. Operations may be added, omitted, and/or performed simultaneously, without departing from the scope of the appended claims.

It also should be understood that the illustrated methods can end at any time and need not be performed in their entireties. Some or all operations of the methods, and/or substantially equivalent operations, can be performed by execution of computer-readable instructions included on a computer-storage media, as defined below. The term “computer-readable instructions,” and variants thereof, as used in the description and claims, is used expansively herein to include routines, applications, application modules, program modules, programs, components, data structures, algorithms, and the like. Computer-readable instructions can be implemented on various system configurations, including single-processor or multiprocessor systems, minicomputers, mainframe computers, personal computers, hand-held computing devices, microprocessor-based, programmable consumer electronics, combinations thereof, and the like.

Thus, it should be appreciated that the logical operations described herein are implemented (1) as a sequence of computer implemented acts or program modules running on a computing system and/or (2) as interconnected machine logic circuits or circuit modules within the computing system. The implementation is a matter of choice dependent on the performance and other requirements of the computing system. Accordingly, the logical operations described herein are referred to variously as states, operations, structural devices, acts, or modules. These operations, structural devices, acts, and modules may be implemented in software, in firmware, in special purpose digital logic, and any combination thereof.

For example, the operations of the routine 1000 are described herein as being implemented, at least in part, by system components, which can comprise an application, component and/or a circuit. In some configurations, the system components include a dynamically linked library (DLL), a statically linked library, functionality produced by an application programming interface (API), a compiled program, an interpreted program, a script or any other executable set of instructions. Data, such as the audio data, 360 canvas data and other data, can be stored in a data structure in one or more memory components. Data can be retrieved from the data structure by addressing links or references to the data structure.

Although the following illustration refers to the components of FIG. 9 and FIG. 11, it can be appreciated that the operations of the routine 1000 may be also implemented in many other ways. For example, the routine 1000 may be implemented, at least in part, by a processor of another remote computer or a local circuit. In addition, one or more of the operations of the routine 1000 may alternatively or additionally be implemented, at least in part, by a chipset working alone or in conjunction with other software modules. Any service, circuit or application suitable for providing the techniques disclosed herein can be used in operations described herein.

With reference to FIG. 10, the routine 1000 begins at operation 1001, where a computing device receives session data defining a virtual reality environment comprising a participant object, the session data allowing a participant to provide a participant input for controlling a location of the participant object and a direction of the participant object. The action of receiving the session data can also mean the session data is generated at the computing device, such as a server. In some configurations, the session data is generated at the participant device and communicated to a remote computer such as the server and/or the spectator computers.

Next, at operation 1003, the computing device analyzes the session data to determine a level of activity or activity type. As summarized herein, activity of one or more virtual objects, such as a participant object, can be analyzed to determine if a virtual object made a particular movement or performed some type of predetermined action.
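By way of illustration only, the analysis of operation 1003 could be sketched as follows. The specification does not define how a level of activity is computed, so this sketch assumes that average speed over recorded positions is an acceptable stand-in. The names ObjectSample, activity_level and performed_action, and the threshold parameter, are hypothetical and do not come from the specification.

```python
from dataclasses import dataclass
from math import dist
from typing import List, Tuple


@dataclass
class ObjectSample:
    """One recorded position of a virtual object (hypothetical session-data format)."""
    time: float                            # seconds since the session started
    position: Tuple[float, float, float]   # location in the virtual environment


def activity_level(samples: List[ObjectSample]) -> float:
    """Average speed across the samples, assumed here to represent 'level of activity'."""
    if len(samples) < 2:
        return 0.0
    travelled = sum(
        dist(a.position, b.position) for a, b in zip(samples, samples[1:])
    )
    elapsed = samples[-1].time - samples[0].time
    return travelled / elapsed if elapsed > 0 else 0.0


def performed_action(samples: List[ObjectSample], threshold: float) -> bool:
    """True when the object's activity level meets an assumed threshold."""
    return activity_level(samples) >= threshold
```

Other measures, such as counting predetermined gestures in the session data, could be substituted for average speed without changing the surrounding routine.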

Next, at operation 1005, the computing device selects a virtual camera perspective based on the level of activity and/or activity type. In some configurations, the system can select a first-person perspective or a third-person perspective based on a level of activity and/or an activity type. In one illustrative example, a virtual camera can follow a participant object to capture video footage of salient activity of the participant object. At predetermined times or random times, the virtual camera perspective can toggle between a first-person view and a third-person view. Different types of activity, levels of activity, gestures, or any other action that can take place in a virtual reality environment can cause a computing device to automatically select a particular perspective to capture salient activity.
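By way of illustration only, one possible selection rule for operation 1005 is sketched below. It assumes activity levels are already available as numbers. The function names, the threshold, the default perspective and the ten-second toggle period are hypothetical choices made for this sketch.

```python
from enum import Enum


class Perspective(Enum):
    FIRST_PERSON = "first-person"
    THIRD_PERSON = "third-person"


def select_perspective(participant_level: float,
                       objects_level: float,
                       threshold: float = 1.0) -> Perspective:
    """Pick a view from two activity levels (assumed rule, for illustration)."""
    # Surrounding virtual objects are busier than the participant:
    # look out from the participant's location toward them.
    if objects_level > participant_level:
        return Perspective.FIRST_PERSON
    # The participant object itself is active enough to be worth watching.
    if participant_level >= threshold:
        return Perspective.THIRD_PERSON
    # Hypothetical default when nothing salient is happening.
    return Perspective.FIRST_PERSON


def toggle_by_time(elapsed_seconds: float,
                   period_seconds: float = 10.0) -> Perspective:
    """Alternate between the two views once per period (the 'predetermined times' case)."""
    if int(elapsed_seconds // period_seconds) % 2 == 0:
        return Perspective.FIRST_PERSON
    return Perspective.THIRD_PERSON
```

A selection keyed on activity type rather than level could be handled the same way, for example by mapping each detected type to a perspective.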

Next, at operation 1007, the computing device generates a video output comprising video data from the selected virtual camera perspective. The video output can be in the form of a file, a stream, or any other suitable format for communicating video data to one or more computing devices.

Next, at operation 1009, the computing device generates a spatial audio output based on the selected virtual camera perspective. In some configurations, a spatial audio output is based on the direction of the selected camera perspectives, a location of an audio object associated with a stream, and/or the location of the participant object. In some configurations, the spatial audio output can cause an output device (a speaker system or headphones) to emanate an audio output of the stream from a speaker object location positioned relative to the end user, e.g., a spectator. The speaker object location can model the direction of the spectator view and the location of the audio object relative to the location of the participant object.
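By way of illustration only, the relationship between the camera direction and an audio object location could be sketched as below. The sketch works in two dimensions and assumes the camera direction is given as a yaw angle measured counter-clockwise from the positive x axis. The constant-power stereo pan is a simple stand-in for a spatial renderer and is not the technique of the specification. All function names are hypothetical.

```python
import math


def relative_azimuth_deg(camera_pos, camera_yaw_deg, audio_pos):
    """Angle of the audio object relative to the camera's facing direction.

    0 means straight ahead, positive values are to the left
    (counter-clockwise), and the result lies in [-180, 180).
    """
    dx = audio_pos[0] - camera_pos[0]
    dy = audio_pos[1] - camera_pos[1]
    bearing = math.degrees(math.atan2(dy, dx))
    return (bearing - camera_yaw_deg + 180.0) % 360.0 - 180.0


def stereo_gains(azimuth_deg):
    """Constant-power (left, right) gains for a source at the given azimuth.

    Sources behind the listener are clamped to the nearest side.
    """
    clamped = max(-90.0, min(90.0, azimuth_deg))
    # Map +90 degrees (full left) to theta = 0 and -90 (full right) to pi/2.
    theta = (90.0 - clamped) / 180.0 * (math.pi / 2.0)
    return math.cos(theta), math.sin(theta)
```

When the selected camera perspective changes, recomputing the relative azimuth is enough to move the apparent source, which is the effect the speaker object location is described as modeling.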

Next, at operation 1009, the computing device communicates the video output and the audio output to one or more computing devices for rendering. As described herein, the video output (also referred to herein as video data) and the audio output (also referred to herein as audio data) can be communicated from the server to a plurality of client computing devices associated with spectators.

FIG. 11 shows additional details of an example computer architecture for the components shown in FIG. 9 capable of executing the program components described above. The computer architecture shown in FIG. 11 illustrates aspects of a system, such as a game console, conventional server computer, workstation, desktop computer, laptop, tablet, phablet, network appliance, personal digital assistant (“PDA”), e-reader, digital cellular phone, or other computing device, and may be utilized to execute any of the software components presented herein. For example, the computer architecture shown in FIG. 11 may be utilized to execute any of the software components described above. Although some of the components described herein are specific to the computing devices 601, it can be appreciated that such components, and other components may be part of any suitable remote computer, such as the server 620.

The computing device 601 includes a baseboard 802, or “motherboard,” which is a printed circuit board to which a multitude of components or devices may be connected by way of a system bus or other electrical communication paths. In one illustrative embodiment, one or more central processing units (“CPUs”) 804 operate in conjunction with a chipset 806. The CPUs 804 may be standard programmable processors that perform arithmetic and logical operations necessary for the operation of the computing device 601.

The CPUs 804 perform operations by transitioning from one discrete, physical state to the next through the manipulation of switching elements that differentiate between and change these states. Switching elements may generally include electronic circuits that maintain one of two binary states, such as flip-flops, and electronic circuits that provide an output state based on the logical combination of the states of one or more other switching elements, such as logic gates. These basic switching elements may be combined to create more complex logic circuits, including registers, adders-subtractors, arithmetic logic units, floating-point units, and the like.

The chipset 806 provides an interface between the CPUs 804 and the remainder of the components and devices on the baseboard 802. The chipset 806 may provide an interface to a RAM 808, used as the main memory in the computing device 601. The chipset 806 may further provide an interface to a computer-readable storage medium such as a read-only memory (“ROM”) 810 or non-volatile RAM (“NVRAM”) for storing basic routines that help to start up the computing device 601 and to transfer information between the various components and devices. The ROM 810 or NVRAM may also store other software components necessary for the operation of the computing device 601 in accordance with the embodiments described herein.

The computing device 601 may operate in a networked environment using logical connections to remote computing devices and computer systems through a network 814, such as a local area network. The chipset 806 may include functionality for providing network connectivity through a network interface controller (NIC) 812, such as a gigabit Ethernet adapter. The NIC 812 is capable of connecting the computing device 601 to other computing devices over the network. It should be appreciated that multiple NICs 812 may be present in the computing device 601, connecting the computer to other types of networks and remote computer systems. The network allows the computing device 601 to communicate with remote services and servers, such as the remote computer 801. As can be appreciated, the remote computer 801 may host a number of services such as the XBOX LIVE gaming service provided by MICROSOFT CORPORATION of Redmond, Wash. In addition, as described above, the remote computer 801 may mirror and reflect data stored on the computing device 601 and host services that may provide data or processing for the techniques described herein.

The computing device 601 may be connected to a mass storage device 826 that provides non-volatile storage for the computing device. The mass storage device 826 may store system programs, application programs, other program modules, and data, which have been described in greater detail herein. The mass storage device 826 may be connected to the computing device 601 through a storage controller 815 connected to the chipset 806. The mass storage device 826 may consist of one or more physical storage units. The storage controller 815 may interface with the physical storage units through a serial attached SCSI (“SAS”) interface, a serial advanced technology attachment (“SATA”) interface, a fiber channel (“FC”) interface, or other type of interface for physically connecting and transferring data between computers and physical storage units. It should also be appreciated that the mass storage device 826, other storage media and the storage controller 815 may include MultiMediaCard (MMC) components, eMMC components, Secure Digital (SD) components, PCI Express components, or the like.

The computing device 601 may store data on the mass storage device 826 by transforming the physical state of the physical storage units to reflect the information being stored. The specific transformation of physical state may depend on various factors, in different implementations of this description. Examples of such factors may include, but are not limited to, the technology used to implement the physical storage units, whether the mass storage device 826 is characterized as primary or secondary storage, and the like.

For example, the computing device 601 may store information to the mass storage device 826 by issuing instructions through the storage controller 815 to alter the magnetic characteristics of a particular location within a magnetic disk drive unit, the reflective or refractive characteristics of a particular location in an optical storage unit, or the electrical characteristics of a particular capacitor, transistor, or other discrete component in a solid-state storage unit. Other transformations of physical media are possible without departing from the scope and spirit of the present description, with the foregoing examples provided only to facilitate this description. The computing device 601 may further read information from the mass storage device 826 by detecting the physical states or characteristics of one or more particular locations within the physical storage units.

In addition to the mass storage device 826 described above, the computing device 601 may have access to other computer-readable media to store and retrieve information, such as program modules, data structures, or other data. Thus, although the application 829, other data and other modules are depicted as data and software stored in the mass storage device 826, it should be appreciated that these components and/or other modules may be stored, at least in part, in other computer-readable storage media of the computing device 601. Although the description of computer-readable media contained herein refers to a mass storage device, such as a solid-state drive, a hard disk or CD-ROM drive, it should be appreciated by those skilled in the art that computer-readable media can be any available computer storage media or communication media that can be accessed by the computing device 601.

Communication media includes computer readable instructions, data structures, program modules, or other data in a modulated data signal such as a carrier wave or other transport mechanism and includes any delivery media. The term “modulated data signal” means a signal that has one or more of its characteristics changed or set in a manner so as to encode information in the signal. By way of example, and not limitation, communication media includes wired media such as a wired network or direct-wired connection, and wireless media such as acoustic, RF, infrared and other wireless media. Combinations of any of the above should also be included within the scope of computer-readable media.

By way of example, and not limitation, computer storage media may include volatile and non-volatile, removable and non-removable media implemented in any method or technology for storage of information such as computer-readable instructions, data structures, program modules or other data. For example, computer media includes, but is not limited to, RAM, ROM, EPROM, EEPROM, flash memory or other solid state memory technology, CD-ROM, digital versatile disks (“DVD”), HD-DVD, BLU-RAY, or other optical storage, magnetic cassettes, magnetic tape, magnetic disk storage or other magnetic storage devices, or any other medium that can be used to store the desired information and which can be accessed by the computing device 601. For purposes of the claims, the phrases “computer storage medium,” “computer-readable storage medium,” and variations thereof, do not include waves or signals per se and/or communication media.

The mass storage device 826 may store an operating system 827 utilized to control the operation of the computing device 601. According to one embodiment, the operating system comprises a gaming operating system. According to another embodiment, the operating system comprises the WINDOWS® operating system from MICROSOFT Corporation. According to further embodiments, the operating system may comprise the UNIX, ANDROID, WINDOWS PHONE or iOS operating systems, available from their respective manufacturers. It should be appreciated that other operating systems may also be utilized. The mass storage device 826 may store other system or application programs and data utilized by the computing devices 601, such as any of the other software components and data described above. The mass storage device 826 might also store other programs and data not specifically identified herein.

In one embodiment, the mass storage device 826 or other computer-readable storage media is encoded with computer-executable instructions which, when loaded into the computing device 601 or server, transform the computer from a general-purpose computing system into a special-purpose computer capable of implementing the embodiments described herein. These computer-executable instructions transform the computing device 601 by specifying how the CPUs 804 transition between states, as described above. According to one embodiment, the computing device 601 has access to computer-readable storage media storing computer-executable instructions which, when executed by the computing device 601, perform the various routines described above with regard to FIG. 10 and the other FIGURES. The computing device 601 might also include computer-readable storage media for performing any of the other computer-implemented operations described herein.

The computing device 601 may also include one or more input/output controllers 816 for receiving and processing input from a number of input devices, such as a keyboard, a mouse, a microphone, a headset, a touchpad, a touch screen, an electronic stylus, or any other type of input device. Also shown, the input/output controller 816 is in communication with an input/output device 825. The input/output controller 816 may provide output to a display, such as a computer monitor, a flat-panel display, a digital projector, a printer, a plotter, or other type of output device. The input/output controller 816 may provide input communication with other devices such as a microphone, a speaker, game controllers and/or audio devices.

For example, the input/output controller 816 can be an encoder and the output device 825 can include a full speaker system having a plurality of speakers. The encoder can use a spatialization technology, such as Dolby Atmos, HRTF or another Ambisonics-based technology, and the encoder can process audio output data or output signals received from the application 829. The encoder can utilize a selected spatialization technology to generate a spatially encoded stream that appropriately renders to the output device 825.

The computing device 601 can process audio signals in a number of audio types, including but not limited to 2D bed audio, 3D bed audio, 3D object audio and audio data of an Ambisonics-based technology as described herein.

2D bed audio includes channel-based audio, e.g., stereo, Dolby 5.1, etc. 2D bed audio can be generated by software applications and other resources.

3D bed audio includes channel-based audio, where individual channels are associated with objects. For instance, a Dolby 5.1 signal includes multiple channels of audio and each channel can be associated with one or more positions. Metadata can define one or more positions associated with individual channels of a channel-based audio signal. 3D bed audio can be generated by software applications and other resources.

3D object audio can include any form of object-based audio. In general, object-based audio defines objects that are associated with an audio track. For instance, in a movie, a gunshot can be one object and a person's scream can be another object. Each object can also have an associated position. Metadata of the object-based audio enables applications to specify where each sound object originates and how it should move. 3D object audio can be generated by software applications and other resources.
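By way of illustration only, object-based audio metadata of the kind described above could be represented as follows. The AudioObject structure, its field names and the linear interpolation between keyframes are assumptions made for this sketch and are not taken from any particular object-audio format.

```python
from dataclasses import dataclass, field
from typing import List, Tuple

Position = Tuple[float, float, float]


@dataclass
class AudioObject:
    """A sound object tied to an audio track, with position metadata."""
    name: str
    track: str  # identifier of the associated audio track (hypothetical)
    # (time in seconds, position) pairs describing where the sound
    # originates and how it moves.
    keyframes: List[Tuple[float, Position]] = field(default_factory=list)

    def position_at(self, t: float) -> Position:
        """Position at time t, linearly interpolated between keyframes."""
        if not self.keyframes:
            raise ValueError("audio object has no position metadata")
        frames = sorted(self.keyframes)
        if t <= frames[0][0]:
            return frames[0][1]
        for (t0, p0), (t1, p1) in zip(frames, frames[1:]):
            if t0 <= t < t1:
                w = (t - t0) / (t1 - t0)
                return tuple(a + w * (b - a) for a, b in zip(p0, p1))
        # At or past the last keyframe: hold the final position.
        return frames[-1][1]


# The two objects from the movie example: a static scream and a moving gunshot.
scream = AudioObject("scream", "track-02", [(0.0, (1.0, 1.0, 0.0))])
gunshot = AudioObject(
    "gunshot", "track-01", [(0.0, (0.0, 0.0, 0.0)), (2.0, (4.0, 0.0, 0.0))]
)
```

A renderer would query position_at for each object at playback time and place the sound accordingly.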

Output audio data generated by an application can also define an Ambisonics representation. Some configurations can include generating an Ambisonics representation of a sound field from an audio source signal, such as streams of object-based audio of a video game. The Ambisonics representation can also comprise additional information describing the positions of sound sources, wherein the Ambisonics data can include definitions of a Higher Order Ambisonics representation.
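By way of illustration only, the generation of an Ambisonics representation from object-based sources could be sketched at first order as follows. The sketch assumes the traditional B-format convention, in which the omnidirectional W channel is scaled by 1/√2, azimuth is measured counter-clockwise from the front and elevation upward from the horizontal plane. It covers first order only, not Higher Order Ambisonics, and the function names are hypothetical.

```python
import math


def encode_first_order(sample, azimuth_deg, elevation_deg):
    """Encode one mono sample into first-order B-format (W, X, Y, Z)."""
    az = math.radians(azimuth_deg)
    el = math.radians(elevation_deg)
    w = sample / math.sqrt(2.0)                # omnidirectional component
    x = sample * math.cos(az) * math.cos(el)   # front-back
    y = sample * math.sin(az) * math.cos(el)   # left-right
    z = sample * math.sin(el)                  # up-down
    return w, x, y, z


def mix_sources(sources):
    """Sum the encodings of several (sample, azimuth_deg, elevation_deg) sources.

    Ambisonics encoding is linear, so a sound field containing many
    objects is the channel-wise sum of the individual encodings.
    """
    total = [0.0, 0.0, 0.0, 0.0]
    for sample, azimuth_deg, elevation_deg in sources:
        encoded = encode_first_order(sample, azimuth_deg, elevation_deg)
        total = [t + c for t, c in zip(total, encoded)]
    return tuple(total)
```

Because the encoded channels do not depend on a speaker layout, the same four-channel stream can later be decoded for headphones or for a loudspeaker array.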

Higher Order Ambisonics (HOA) offers the advantage of capturing a complete sound field in the vicinity of a specific location in the three-dimensional space, which location is called a ‘sweet spot’. Such HOA representation is independent of a specific loudspeaker set-up, in contrast to channel-based techniques like stereo or surround. But this flexibility is at the expense of a decoding process required for playback of the HOA representation on a particular loudspeaker set-up.

HOA is based on the description of the complex amplitudes of the air pressure for individual angular wave numbers k for positions x in the vicinity of a desired listener position, which without loss of generality may be assumed to be the origin of a spherical coordinate system, using a truncated Spherical Harmonics (SH) expansion. The spatial resolution of this representation improves with a growing maximum order N of the expansion.
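By way of illustration only, the truncated expansion described above is commonly written in the following form. The symbols are assumptions of this sketch rather than notation from the specification: P is the complex pressure amplitude, the position x is expressed in spherical coordinates (r, θ, φ), j_n denotes the spherical Bessel function of the first kind, Y_n^m denotes the spherical harmonic of order n and degree m, and A_n^m(k) are the expansion coefficients. Normalization factors vary between conventions.

```latex
P(k, \mathbf{x}) = \sum_{n=0}^{N} \sum_{m=-n}^{n} A_n^m(k)\, j_n(kr)\, Y_n^m(\theta, \phi),
\qquad \mathbf{x} = (r, \theta, \phi)
```

An expansion truncated at order N has (N + 1)^2 coefficients per wave number, e.g., 4 at first order and 16 at third order, which is why the spatial resolution and the amount of data both grow with N.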

In addition, or alternatively, a video output 822 may be in communication with the chipset 806 and operate independent of the input/output controllers 816. It will be appreciated that the computing device 601 may not include all of the components shown in the figures, may include other components that are not explicitly shown in FIG. 11, or may utilize an architecture completely different than that shown in FIG. 11.

In closing, although the various configurations have been described in language specific to structural features and/or methodological acts, it is to be understood that the subject matter defined in the appended representations is not necessarily limited to the specific features or acts described. Rather, the specific features and acts are disclosed as example forms of implementing the claimed subject matter.

Claims

- A computing device, comprising: a processor; a memory having computer-executable instructions stored thereupon which, when executed by the processor, cause the computing device to receive session data defining a virtual reality environment comprising a participant object, the session data allowing a participant to provide a participant input for controlling a location of the participant object and a direction of the participant object, analyze the session data to determine a level of activity associated with the participant object, determine a level of activity associated with one or more virtual objects, select a location and a direction of a virtual camera perspective, wherein the virtual camera perspective is a first-person perspective projecting from the location of the participant object, wherein the direction is towards the one or more virtual objects when the level of activity of one or more virtual objects is greater than the activity level of the participant object, and generate an output file comprising video data having images from the location and direction of the virtual camera perspective, wherein the output file further comprises audio data for causing an output device to emanate an audio output from a speaker object location that is selected based on the location of the virtual camera perspective, wherein the audio output emanating from the speaker object location models the location and direction of the virtual camera perspective.

- The computing device of claim 1, wherein the location of the virtual camera perspective is within a threshold distance from a path of the participant object.

- The computing device of claim 1, wherein the virtual camera perspective is a first-person perspective projecting from the location of the participant object when virtual objects within the first-person perspective have a threshold level of activity.

- The computing device of claim 1, wherein the virtual camera perspective is a third-person perspective directed toward the participant object and a virtual object when the participant object has a threshold level of activity with the virtual object.

- The computing device of claim 1, wherein the virtual camera perspective is a third-person perspective directed toward the participant object when the participant object has a threshold level of activity.

- The computing device of claim 1, wherein the virtual camera perspective toggles between a first-person perspective and a third-person perspective based on one or more time periods.

- The computing device of claim 1, wherein generating the audio output comprises configuring an audio signal to the output device in accordance with an Ambisonics-based technology, wherein the output signal defines at least one sound field modeling the location of an audio object associated with a stream, wherein data defining the sound field can be interpreted by one or more computing devices for causing the output device to emanate the audio output based on the stream from the speaker object location.

- A computing device, comprising: a processor; a memory having computer-executable instructions stored thereupon which, when executed by the processor, cause the computing device to receive session data defining a virtual reality environment comprising a participant object, the session data allowing a participant to provide a participant input for controlling a location of the participant object and a direction of the participant object, analyze the session data to determine a level of activity associated with the participant object and a type of activity associated with the participant object; determine a level of activity associated with one or more virtual objects, select a location and a direction of a virtual camera perspective based on the type of activity associated with the participant object, wherein the virtual camera perspective is a first-person perspective projecting from the location of the participant object, wherein the direction is towards the one or more virtual objects when the level of activity of one or more virtual objects is greater than the activity level of the participant object; and generate an output file comprising video data having images from the location and direction of the virtual camera perspective, wherein the output file further comprises audio data for causing an output device to emanate an audio output from a speaker object location that is selected based on the location of the virtual camera perspective, wherein the audio output emanating from the speaker object location models the location and direction of the virtual camera perspective.

- The computing device of claim 8, wherein the location of the virtual camera perspective is within a threshold distance from a path of the participant object.

- The computing device of claim 8, wherein the virtual camera perspective is the first-person perspective projecting from the location of the participant object when a first type of activity is detected, wherein the virtual camera perspective is a third-person perspective directed toward the participant object when a second type of activity is detected.

- The computing device of claim 10, wherein the second type of activity comprises a threshold level of activity of a virtual reality object and the participant object, wherein the virtual camera perspective causes the generation of the video data depicting the virtual reality object and the participant object.

- The computing device of claim 10, wherein the first type of activity comprises movement of the participant object in a predetermined direction.

- The computing device of claim 12, wherein the predetermined direction is at least one of a forward direction or a backward direction.

- The computing device of claim 8, wherein the virtual camera perspective toggles between a first-person perspective and a third-person perspective based on one or more time periods.

- The computing device of claim 8, wherein generating the audio output comprises configuring an audio signal to the output device in accordance with an Ambisonics-based technology, wherein the output signal defines at least one sound field modeling the location of an audio object associated with a stream, wherein data defining the sound field can be interpreted by one or more computing devices for causing the output device to emanate the audio output based on the stream from the speaker object location.

- A method comprising: receiving session data defining a virtual reality environment comprising a participant object, the session data allowing a participant to provide a participant input for controlling a location of the participant object and a direction of the participant object, analyzing the session data to determine a level of activity and an activity type associated with the participant object; determining a level of activity associated with one or more virtual objects, selecting a location and a direction of a virtual camera perspective based on the level of activity and the activity type associated with the participant object, wherein the virtual camera perspective is a first-person perspective projecting from the location of the participant object when the level of activity of one or more virtual objects within the first-person perspective is greater than the activity level of the participant object; and generating an output file comprising video data having images from the location and direction of the virtual camera perspective, wherein the output file further comprises audio data for causing an output device to emanate an audio output from a speaker object location that is selected based on the location of the virtual camera perspective, wherein the audio output emanating from the speaker object location models the location and direction of the virtual camera perspective.

- The method of claim 16, wherein the location of the virtual camera perspective is within a threshold distance from a path of the participant object.

- The method of claim 16, wherein the virtual camera perspective is a first-person perspective projecting from the location of the participant object when the one or more virtual objects within the first-person perspective have a threshold level of activity.

- The method of claim 16, wherein the virtual camera perspective is a third-person perspective directed toward the participant object and the one or more virtual objects when the participant object has a threshold level of activity with the one or more virtual objects.

Disclaimer: Data collected from the USPTO and may be malformed, incomplete, and/or otherwise inaccurate.