U.S. Pat. No. 10,258,888

METHOD AND SYSTEM FOR INTEGRATED REAL AND VIRTUAL GAME PLAY FOR MULTIPLE REMOTELY-CONTROLLED AIRCRAFT

Assignee: QFO Labs Inc

Issue Date: November 16, 2016


Abstract

Gaming systems and methods facilitate integrated real and virtual game play among multiple remote control objects, including multiple remotely-controlled aircraft, within a locally-defined, three-dimensional game space using gaming “apps” (i.e., applications) running on one or more mobile devices, such as tablets or smartphones, and/or a central game controller. The systems and methods permit game play among multiple remotely-controlled aircraft within the game space. Game play may be controlled and/or displayed by a gaming app running on one or more mobile devices and/or a central game controller that keeps track of multiple craft locations and orientations with reference to a locally-defined coordinate frame of reference associated with the game space. The gaming app running on one or more mobile devices and/or central game controller also determine game play among the various craft and objects within the game space in accordance with the rules and parameters for the game being played.

Description


While various embodiments are amenable to various modifications and alternative forms, specifics thereof have been shown by way of example in the drawings and will be described in detail. It should be understood, however, that the intention is not to limit the claimed inventions to the particular embodiments described. On the contrary, the intention is to cover all modifications, equivalents, and alternatives falling within the spirit and scope of the subject matter as defined by the claims.

DETAILED DESCRIPTION OF THE DRAWINGS

Referring to FIG. 1, a block diagram of a gaming system 100 for multiple remotely-controlled aircraft is depicted. Gaming system 100 generally comprises a first craft 102a configured to be controlled by a first controller 104a, a second craft 102b configured to be controlled by a second controller 104b, a mobile device 106 running a gaming app, and a game space recognition subsystem 108. Generally, gaming system 100 allows for enhanced game play between first craft 102a and second craft 102b using integrated virtual components on mobile device 106.

In some embodiments, craft 102 is a hovering flying craft adapted to be selectively and uniquely paired with, and controlled by, a handheld remote controller, such as controller 104. In one embodiment, a hovering flying craft 102 has a frame assembly that includes a plurality of arms extending from a center body, with an electric motor and corresponding propeller on each arm. The frame assembly carries a battery together with electronics, sensors, and communication components. These may include one or more gyroscopes, accelerometers, magnetometers, altimeters, ultrasound sensors, downward-facing cameras and/or optical flow sensors, forward-facing cameras and/or LIDAR sensors, processors, and/or transceivers.

In some embodiments, controller 104 is a single-handed controller used by a user to control a craft 102. In one embodiment, controller 104 has a controller body configured to be handheld, having an angled shape and including a flat top surface for orientation reference of the controller; a trigger mechanism projecting from the controller body adapted to interface with a finger of the user; a top hat projecting from the flat top surface adapted to interface with a thumb of the user; and a body, processor, electronics, and communication components housed within the controller body.

For a more detailed description of aspects of one embodiment of craft 102 and controller 104, reference is made to U.S. Pat. No. 9,004,973, by John Paul Condon et al., titled “Remote-Control Flying Copter and Method,” the detailed specification and figures of which are hereby incorporated by reference.

While embodiments of the present invention are described with respect to a smaller, hovering remotely-controlled aircraft in wireless communication with a paired wireless controller configured for single-handed tilt-control operation, it will be understood that other embodiments of the communications system for game play in accordance with the present invention may include other types of remotely-controlled flying craft, such as planes or helicopters, or non-flying remote-control craft, such as cars or boats. Such embodiments may also include other types of remote controllers, such as a conventional dual-joystick controller, or tilt control based on an “app” (i.e., an application) running on a mobile device or a software program running on a laptop or desktop computer, as will be described.

In an embodiment, second craft 102b is substantially similar to first craft 102a. In such embodiments, second controller 104b is substantially similar to first controller 104a and is configured to control second craft 102b. In other embodiments, second craft 102b is a different type of flying craft than first craft 102a. In embodiments, multiple types of flying craft can be used in the same game space.

Device 106 is configured to execute a gaming application. In an embodiment, device 106 comprises a smartphone, tablet, laptop computer, desktop computer, or other computing device. For example, device 106 can comprise a display, memory, and a processor configured to present a gaming application on the display. In embodiments, device 106 comprises communication or network interface modules for interfacing with other components of gaming system 100. Typically, device 106 is mobile, allowing players to set up a game space in nearly any environment, such as an outdoor space like a field or parking lot, or an indoor space like a gymnasium or open room. However, device 106 can also be stationary, such that players bring their craft to a preexisting game space defined proximate device 106.

In some embodiments, device 106 can further be configured by the player to selectively control one of craft 102a or craft 102b, such that the functionality of controller 104a or controller 104b is integrated into device 106. In other embodiments, device 106 can be integrated with game space recognition subsystem 108 such that the functionality of device 106 and game space recognition subsystem 108 is integrated into one device.

Game space recognition subsystem 108 is configured to determine a relative position and orientation of first craft 102a and second craft 102b within a three-dimensional game space. In an embodiment, game space recognition subsystem 108 comprises a plurality of fiducial elements, a mapping engine, and a locating engine, as will be described.

Referring to FIG. 2, a diagram of a portion of gaming system 100 for multiple remotely-controlled aircraft utilizing computing device 106 is depicted, according to an embodiment. As shown, gaming system 100 partially comprises first craft 102a, first controller 104a, and device 106.

Device 106 comprises computing hardware and software configured to execute application 110. In other embodiments, device 106 further comprises computing hardware and software to store application 110. Application 110 generally comprises software configured for game-based interaction with first craft 102a, including display of first craft 102a relative to the executed game. Application 110 can display first craft 102a at a location in a two-dimensional application display space that approximates the location of first craft 102a in the three-dimensional game space. For example, if first craft 102a is in the northeast corner of the game space, application 110 can depict first craft 102a in the upper right corner of the application display space. Likewise, if first craft 102a is in the southwest corner of the game space, application 110 can depict first craft 102a in the lower left corner of the application display space.
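The corner-to-corner correspondence described above amounts to a linear scaling of the craft's ground position into screen pixels, with the north axis flipped because screen rows grow downward. The following is a minimal sketch under stated assumptions: the game space spans 0 to `space_width` east (x) and 0 to `space_depth` north (y), altitude is simply dropped, and the function name `game_to_display` is hypothetical rather than part of application 110.

```python
def game_to_display(x, y, space_width, space_depth, screen_w, screen_h):
    """Map a craft's ground position (x east, y north) to pixel coordinates.

    Hypothetical helper: assumes the game space spans [0, space_width] x
    [0, space_depth] and that pixel (0, 0) is the upper-left screen corner.
    The craft's altitude (Z) is ignored in this 2D approximation.
    """
    # Clamp so a craft slightly outside the space is drawn at the edge.
    x = min(max(x, 0.0), space_width)
    y = min(max(y, 0.0), space_depth)
    px = x / space_width * (screen_w - 1)
    # North (large y) belongs at the top of the screen (small row index).
    py = (1.0 - y / space_depth) * (screen_h - 1)
    return round(px), round(py)
```

With a 20 ft by 30 ft space on an 800 by 600 display, the northeast corner `(20, 30)` maps to `(799, 0)` (upper right) and the southwest corner `(0, 0)` maps to `(0, 599)` (lower left).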

Application 110 can further include gaming elements relevant to the game being played, such as health zones, danger zones, or safe or “hide-able” zones. For example, referring to FIG. 3A, a diagram of real-world interaction of multiple remotely-controlled aircraft within a game space 200 is depicted, according to an embodiment. As depicted, first craft 102a and second craft 102b are positioned or hovering within game space 200. Game space 200 comprises a three-dimensional gaming area in which first craft 102a and second craft 102b can move along the X, Y, and Z axes. In an embodiment, first craft 102a and second craft 102b are controlled within game space 200 by first controller 104a and second controller 104b (not shown), respectively. In embodiments of game play, leaving game space 200 may be considered entering a restricted or hidden zone, and may be done with or without a penalty. In other embodiments, sensors are positioned within game space 200 to restrict the ability of first craft 102a and second craft 102b to leave game space 200. For example, a craft touching one of the “sides” of game space 200 might engage a sensor configured to transmit a command to the craft that artificially weakens or disables its flying ability.
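The text describes physical sensors at the “sides” of the game space. If the craft's position is already known from the locating engine, a comparable effect can be sketched in software as a bounding-box check. This is an assumed substitute, not the patent's mechanism: the box model, the one-foot `margin`, and the command strings `"WEAKEN"` and `"DISABLE"` are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class GameSpace:
    """Axis-aligned box bounding the game space, in feet (hypothetical model)."""
    width: float   # X extent
    depth: float   # Y extent
    height: float  # Z extent

    def contains(self, x, y, z, margin=0.0):
        # The floor (z = 0) is not treated as a "side", so the margin
        # applies only to the four walls and the ceiling.
        return (margin <= x <= self.width - margin
                and margin <= y <= self.depth - margin
                and 0.0 <= z <= self.height - margin)


def boundary_command(space, pos, margin=1.0):
    """Return a hypothetical command for a craft at pos = (x, y, z)."""
    if not space.contains(*pos):
        return "DISABLE"   # craft has left the game space
    if not space.contains(*pos, margin=margin):
        return "WEAKEN"    # craft is touching a side of the game space
    return "NORMAL"
```

For a 20 by 30 by 10 ft space, a craft at `(0.5, 15, 5)` is within a foot of a wall and receives `"WEAKEN"`, while one at `(25, 15, 5)` is outside and receives `"DISABLE"`.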

FIG. 3B is an illustration of an application such as application 110 that integrates the real-world interaction of first craft 102a and second craft 102b of FIG. 3A with the virtual world of application 110, according to an embodiment. For example, application 110 can visually depict first craft 102a and second craft 102b within the application display. Further, application 110 can include other visual elements with which first craft 102a and second craft 102b can interact within application 110. For example, as depicted, first craft 102a is positioned proximate a health zone 202. A user might recognize health zone 202 within the virtual environment of application 110 and physically command first craft 102a to fly near health zone 202. In an embodiment, within the game play of application 110, first craft 102a could gain a relative advantage over other craft not positioned proximate health zone 202. In embodiments, display communication such as text 204 can visually indicate interaction with health zone 202.

In another example, second craft 102b can be positioned proximate a hiding zone, such as virtual mountains 206. In such embodiments, second craft 102b can be “protected” within the game play from, for example, cannon 208. While proximate mountains 206 within application 110, as interpreted within game space 200, second craft 102b can be safe from the “shots” fired by cannon 208. In other embodiments, while positioned proximate mountains 206 within application 110, second craft 102b can be safe from any “shots” fired by first craft 102a. In embodiments, physical markers or beacons representing the various virtual elements within application 110 can be placed in game space 200. One skilled in the art will readily appreciate that the possible interplay between first craft 102a and second craft 102b in physical game space 200, as represented within application 110, is essentially unlimited. Any number of games or game components can be implemented in both game space 200 and application 110.
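The health-zone and hiding-zone behavior described in the two paragraphs above can be sketched as a per-tick check of the craft's ground position against circular virtual zones. The circular zone shape, the `Zone` and `apply_zones` names, and the healing rate are illustrative assumptions; application 110 may represent zones in any way.

```python
import math
from dataclasses import dataclass


@dataclass
class Zone:
    """Circular virtual zone on the ground plane (hypothetical representation)."""
    kind: str      # "health" or "hide"
    x: float
    y: float
    radius: float

    def contains(self, px, py):
        return math.hypot(px - self.x, py - self.y) <= self.radius


def apply_zones(craft_pos, health, zones, heal_per_tick=5, max_health=100):
    """Return (new_health, is_hidden) for a craft at craft_pos = (x, y).

    A craft inside a health zone regains health each tick (capped at
    max_health); a craft inside a hiding zone cannot be hit by shots.
    """
    hidden = False
    for zone in zones:
        if not zone.contains(*craft_pos):
            continue
        if zone.kind == "health":
            health = min(max_health, health + heal_per_tick)
        elif zone.kind == "hide":
            hidden = True
    return health, hidden
```

A shot from cannon 208 (or from first craft 102a) would then be resolved only against craft whose `is_hidden` flag is false.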

Referring again to FIG. 2, first craft 102a is in wireless communication with first controller 104a over craft control channel 112. Wireless communication over craft control channel 112 can use any suitable protocol, such as infrared (IR), Bluetooth, radio frequency (RF), or any similar protocol. Additionally, first craft 102a is in wireless communication with device 106 over gaming channel 114. Wireless communication over gaming channel 114 can likewise use any suitable protocol, such as IR, Bluetooth, RF, or any similar protocol. In an embodiment, device 106 comprises both controller 104a functionality and application 110 functionality. For ease of explanation, second craft 102b and second controller 104b are not depicted, but they may include interfaces and communication schemes similar to those of first craft 102a and first controller 104a as described herein, or may include interfaces and communication schemes that, while not similar, are compatible with at least the other communication schemes used by other craft and objects in the game space.

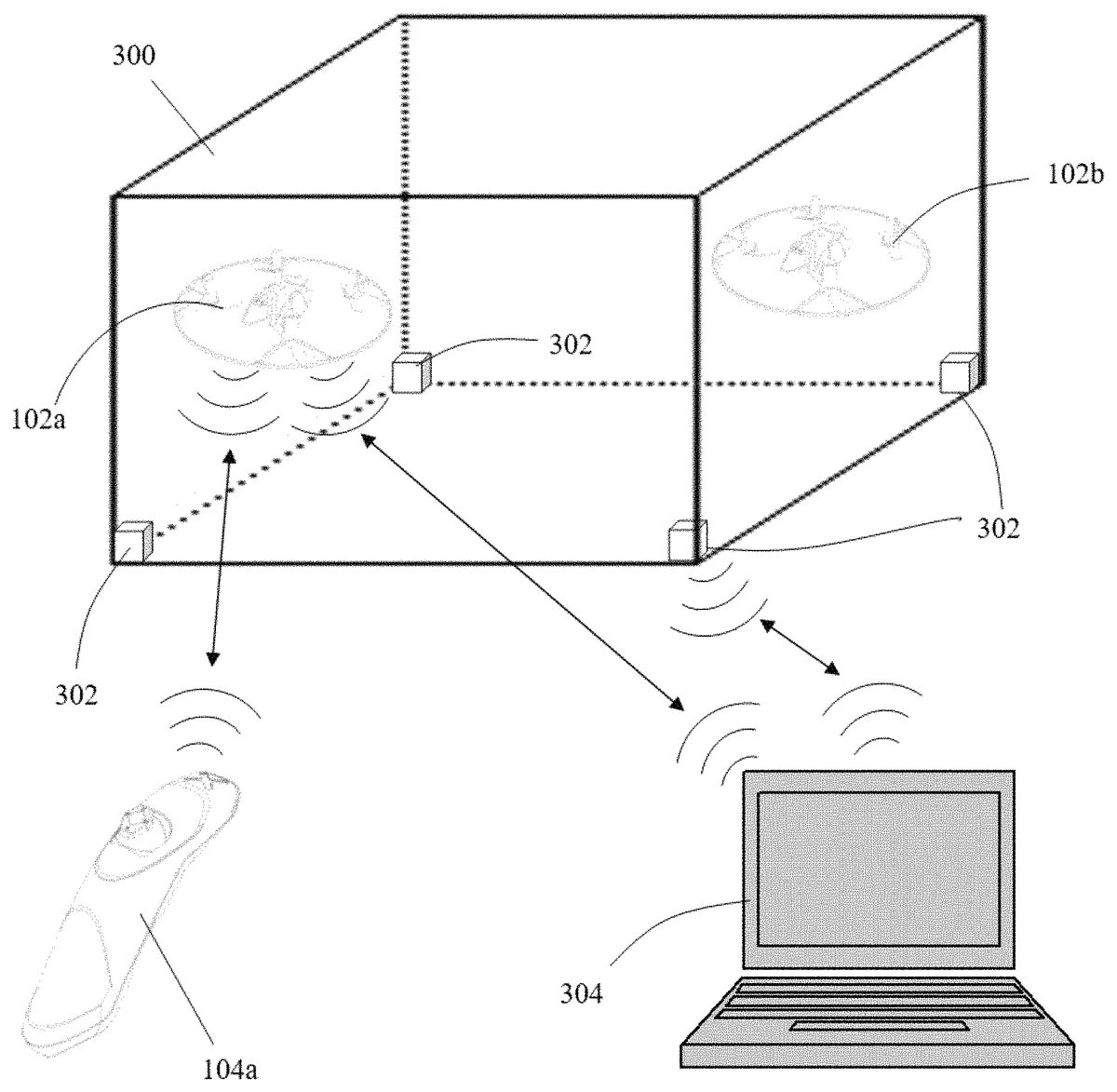

Referring to FIG. 4, a diagram of a portion of a gaming system for multiple remotely-controlled aircraft within a game space 300 is depicted, according to an embodiment. Game space 300 comprises a three-dimensional gaming area similar to game space 200 described with respect to FIG. 3A. In an embodiment, first craft 102a and second craft 102b are controlled within game space 300 by first controller 104a and second controller 104b (not shown), respectively.

A plurality of fiducial beacons 302, 402, 502 are provided to define game space 300. In various configurations and embodiments, these fiducial beacons 302 (active), 402 (passive), and 502 (smart) are used by game space recognition subsystem 108 to locate each of the craft within game space 300, as will be described. It will be understood that game space 300 is bounded and defined at least in part by the fiducial beacons, such that game space 300 represents a finite space. Although game space 300 may be either indoors or outdoors, as will be described, its overall volume is limited and in various embodiments may correspond to the number, size, and range of the remotely-controlled aircraft. For smaller aircraft and a smaller number of such aircraft in play, the overall volume of game space 300 may be suitable for indoor play in an area that could be, for example, 20 feet wide by 30 feet long by 10 feet high. With a larger number of craft, the overall volume of game space 300 may correspond to the typical size of a basketball court (90 feet by 50 feet) together with a height of 20 feet. For outdoor configurations, the overall game volume may be somewhat larger and more irregular to accommodate a variety of terrain and natural obstacles and features, although in various embodiments the game volume is typically constrained both to line-of-sight distances and to distances over which the remotely-controlled aircraft remain reasonably visible to players positioned outside game space 300.
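For a sense of scale, the two example volumes given above work out as follows (plain arithmetic on the stated dimensions):

```latex
\begin{aligned}
V_{\text{indoor}} &= 20\,\text{ft} \times 30\,\text{ft} \times 10\,\text{ft} = 6{,}000\ \text{ft}^3 \\
V_{\text{court}}  &= 90\,\text{ft} \times 50\,\text{ft} \times 20\,\text{ft} = 90{,}000\ \text{ft}^3 = 15\,V_{\text{indoor}}
\end{aligned}
```

The floor areas differ by a smaller factor (600 ft² versus 4,500 ft², i.e. 7.5 times), because the basketball-court example also doubles the height.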

Gaming controller station 304 comprises computing hardware and software configured to execute a gaming application. In other embodiments, gaming controller station 304 further comprises computing hardware and software to store the gaming application. In an embodiment, gaming controller station 304 is substantially similar to device 106 of FIG. 2. As depicted, gaming controller station 304 is communicatively coupled to first craft 102a and second craft 102b. For ease of illustration, only the link between first craft 102a and gaming controller station 304 is shown in FIG. 4, but one skilled in the art will readily understand that second craft 102b is similarly communicatively coupled to gaming controller station 304.

In another embodiment, gaming controller station 304 further comprises computing hardware and software configured to execute game space recognition subsystem 108. In an embodiment, gaming controller station 304 can be communicatively coupled to active fiducial beacons 302 or smart fiducial beacons 502 to transmit and receive location information.

Referring to FIG. 5, a block diagram of game space recognition subsystem 108 is depicted, according to an embodiment. Game space recognition subsystem 108 generally comprises a plurality of fiducial elements 402, a mapping engine 404, and a locating engine 406. It will be appreciated that the finite volume of game space 300 described above affects the complexity of the determinations that game space recognition subsystem 108 must perform. In various embodiments, particularly those that utilize only passive fiducial beacons 402, the volume of game space 300 is smaller, which simplifies the requirements that game space recognition subsystem 108 and mapping engine 404 must fulfill to adequately define the game space volume in which locating engine 406 can effectively identify and locate each relevant craft in game space 300.

The subsystem includes various engines, each of which is constructed, programmed, configured, or otherwise adapted to autonomously carry out a function or set of functions. The term engine as used herein is defined as a real-world device, component, or arrangement of components implemented using hardware, such as by an application specific integrated circuit (ASIC) or field-programmable gate array (FPGA), for example, or as a combination of hardware and software, such as by a microprocessor system and a set of program instructions that adapt the engine to implement the particular functionality, which (while being executed) transform the microprocessor system into a special-purpose device. An engine can also be implemented as a combination of the two, with certain functions facilitated by hardware alone, and other functions facilitated by a combination of hardware and software. In certain implementations, at least a portion, and in some cases all, of an engine can be executed on the processor(s) of one or more computing platforms that are made up of hardware (e.g., one or more processors, data storage devices such as memory or drive storage, input/output facilities such as network interface devices, video devices, keyboard, mouse or touchscreen devices, etc.) that execute an operating system, system programs, and application programs, while also implementing the engine using multitasking, multithreading, distributed (e.g., cluster, peer-to-peer, cloud, etc.) processing where appropriate, or other such techniques. Accordingly, each engine can be realized in a variety of physically realizable configurations, and should generally not be limited to any particular implementation exemplified herein, unless such limitations are expressly called out. In addition, an engine can itself be composed of more than one sub-engine, each of which can be regarded as an engine in its own right.
Moreover, in the embodiments described herein, each of the various engines corresponds to a defined autonomous functionality; however, it should be understood that in other contemplated embodiments, each functionality can be distributed to more than one engine. Likewise, in other contemplated embodiments, multiple defined functionalities may be implemented by a single engine that performs those multiple functions, possibly alongside other functions, or distributed differently among a set of engines than specifically illustrated in the examples herein.

Fiducial elements 302, 402, 502 may each comprise a beacon, marker, and/or other suitable component configured for mapping and locating each of the craft within a game space. In an embodiment, fiducial elements 402 are passive and comprise a unique identifier readable by other components of game space recognition subsystem 108. For example, fiducial elements 402 can comprise a “fiducial strip” having a plurality of unique markings along the strip. In other embodiments, fiducial elements 402 can include individual cards or placards having unique markings. A plurality of low-cost, printed fiducials can be used for entry-level game play.
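The text does not say how the markings on a fiducial strip are made unique. One well-known way to do it (an assumption here, not the patent's stated encoding) is to print a binary de Bruijn sequence as light and dark marks: every window of `n` consecutive marks then occurs exactly once, so a camera that sees any `n` adjacent marks knows its offset along the strip. The helper names `make_strip` and `build_index` are hypothetical.

```python
def de_bruijn(k, n):
    """Cyclic sequence over k symbols in which every length-n word appears once."""
    a = [0] * (k * n)
    sequence = []

    def db(t, p):
        # Standard recursive construction (concatenated Lyndon words).
        if t > n:
            if n % p == 0:
                sequence.extend(a[1:p + 1])
        else:
            a[t] = a[t - p]
            db(t + 1, p)
            for j in range(a[t - p] + 1, k):
                a[t] = j
                db(t + 1, t)

    db(1, 1)
    return sequence


def make_strip(n):
    """Binary marks for a linear strip; wrapping n - 1 marks keeps all 2**n windows."""
    seq = de_bruijn(2, n)
    return seq + seq[:n - 1]


def build_index(strip, n):
    """Map each window of n consecutive marks to its offset along the strip."""
    return {tuple(strip[i:i + n]): i for i in range(len(strip) - n + 1)}
```

With `n = 3` the strip is `[0, 0, 0, 1, 0, 1, 1, 1, 0, 0]`, and the window `(1, 0, 1)` identifies offset 3. With `n = 6` a 69-mark strip yields 64 distinct positions.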

In another embodiment, fiducial elements 302 are active and each include a transceiver configured for IR, RF-pulse, magnetometer, or ultrasound transmission. In embodiments, other active locating technology can also be utilized. In embodiments, active fiducial elements 302 can include “smart” fiducials 502 having a field-of-view camera. Fiducial elements can further be a combination of active fiducial elements 302, passive fiducial elements 402, and/or smart fiducial elements 502. In further embodiments, fiducial elements 302, 402, 502 may include special fiducials that also represent game pieces, such as capture points, health-restoring points, defensive turrets, etc.

In embodiments, fiducial elements 302, 402, 502 can be spaced evenly or unevenly along the horizontal (X-axis) surface of the game space. In embodiments, fiducial elements 302, 402, 502 can be spaced evenly or unevenly along the vertical dimension (Z-axis) of the game space. For example, fiducial elements 302, 402, 502 can be spaced along a vertical pole placed within the game space. In most embodiments, vertical placement of fiducial elements 302, 402, 502 is not needed; in such embodiments, an altitude sensor of the craft provides the relevant Z-axis data. Relative placement of fiducial elements 302, 402, 502 is described in additional detail with respect to FIGS. 8-10.

According to an embodiment, the maximum spacing between fiducials is a trade-off between minimum craft height and camera field of view. After calibration and assuming the height above the ground is known, game play can be implemented with a craft identifying only a single fiducial element continuously. In embodiments, the size of fiducial elements can be a function of the resolution of the camera, its field of view, or the required number of pixels on a particular fiducial element. Accordingly, the less information-dense the fiducial elements are, the smaller the implementation size can be.
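The spacing and size trade-offs above follow from pinhole-camera geometry. A level, downward-facing camera at height h with full field of view θ sees a ground strip of width W = 2h·tan(θ/2). Fiducials of size d placed every s along a line always leave one whole fiducial in view when W ≥ s + d, and W is smallest at the minimum flying height. Conversely, a fiducial covers the fewest pixels at the maximum height, which sets its minimum size. The sketch below assumes a straight-down camera and ignores lens distortion; the function names are hypothetical.

```python
import math


def footprint_width(height, fov_deg):
    """Width of ground seen by a straight-down camera at `height` (same units)."""
    return 2.0 * height * math.tan(math.radians(fov_deg) / 2.0)


def max_fiducial_spacing(min_height, fov_deg, fiducial_size):
    """Largest center-to-center spacing that keeps one whole fiducial in view.

    A window of width W always contains a full fiducial of size d spaced
    s apart when W >= s + d; W is smallest at the lowest flying height.
    """
    return footprint_width(min_height, fov_deg) - fiducial_size


def min_fiducial_size(max_height, fov_deg, image_px, required_px):
    """Smallest fiducial that still spans `required_px` pixels at the highest altitude."""
    pixels_per_unit = image_px / footprint_width(max_height, fov_deg)
    return required_px / pixels_per_unit
```

For a 90° camera flying no lower than 1 m, 0.2 m fiducials can be spaced up to about 1.8 m apart. If a fiducial needs 32 pixels across a 640-pixel image at a 2 m ceiling, it must be at least 0.2 m wide. Lowering `required_px` (a less information-dense marker) shrinks that size, consistent with the last sentence above.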

Mapping engine 404 is configured to map the relative placement of the plurality of fiducial elements 302, 402, 502. In embodiments, mapping engine 404 is configured to map, with high certainty, the relative distances between fiducial elements 302, 402, 502. Any error in fiducial positions can result in error in the estimated position of a craft. One skilled in the art will appreciate that smaller, environmentally-controlled game spaces require fewer fiducial elements. However, outdoor locations and larger areas require a larger quantity of fiducial elements or additional processing to determine the relative positions of fiducial elements 302, 402, 502. In embodiments, mapping engine 404 is configured to perform a calibration sequence of fiducial elements 302, 502.

In an embodiment in which fiducial elements 302, 502 comprise active elements and/or smart elements, mapping engine 404 is configured to receive the relative locations of fiducial elements 302, 502 as discovered by the respective elements. For example, where active fiducial elements 302, 502 comprise transmitting beacons, an IR, RF, or ultrasound pulse can be emitted by one or more of fiducial elements 302, 502 and received by the other fiducial elements 302, 502 and/or gaming controller station 304. The time of the pulse from transmission to reception by another fiducial element 302, 502 (i.e., time-of-flight analysis) can thus be utilized to determine a relative position between fiducial elements 302, 502. This data can be transmitted to mapping engine 404, for example, through gaming controller station 304 in FIG. 4. Mapping engine 404 can thus prepare or otherwise learn a relative map of the positions of active fiducial elements 302, 502.
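Time-of-flight ranging converts each one-way pulse time into a distance (distance = propagation speed × time). Three pairwise distances are then enough to lay out three fiducials in a local plane. The sketch below assumes ultrasound in air at about 343 m/s and synchronized clocks between emitter and receiver. It fixes the map's arbitrary origin, axis, and mirror ambiguity by convention. The helper names are hypothetical.

```python
import math

SPEED_OF_SOUND = 343.0  # m/s in air at about 20 °C (assumes ultrasound pulses)


def tof_distance(t_flight, speed=SPEED_OF_SOUND):
    """One-way time of flight (s) to distance (m); assumes synchronized clocks."""
    return speed * t_flight


def place_from_distances(d_ab, d_ac, d_bc):
    """Place fiducials A, B, C in a local 2D frame from pairwise distances.

    A is the origin and B lies on the +x axis. C is put on the +y side;
    the mirror-image layout fits the same distances equally well.
    """
    a = (0.0, 0.0)
    b = (d_ab, 0.0)
    # Subtracting the two circle equations gives C's x coordinate (law of
    # cosines); Pythagoras then gives its distance from line AB.
    cx = (d_ac ** 2 - d_bc ** 2 + d_ab ** 2) / (2.0 * d_ab)
    cy = math.sqrt(max(d_ac ** 2 - cx ** 2, 0.0))
    return a, b, (cx, cy)
```

For pulse times that yield distances AB = 4 m, AC = 5 m, and BC = 3 m, C lands at (4, 3). Additional fiducials can be placed the same way relative to A and B, using their distance to C to resolve the mirror ambiguity.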

In an embodiment in which fiducial elements 402 comprise passive elements, mapping engine 404 is configured to interface with image data to identify fiducial elements 402 and prepare or otherwise learn a relative map of their positions. In one example, a calibration sequence includes taking an image including fiducial elements 402 using a mobile device, such as device 106 or gaming controller station 304. The image can be transmitted or otherwise sent to mapping engine 404. Mapping engine 404 is configured to parse or otherwise determine the unique identifiers of fiducial elements 402 in order to prepare or otherwise learn a relative map of the positions of fiducial elements 402. In embodiments, multiple images can be taken of the game space. For example, in a large game space, the resolution required to distinguish each unique fiducial element 402 may not be attainable in a single picture. Multiple “zoomed-in” pictures can be taken and stitched together using any number of photo composition techniques in order to generate a full image comprising all of the fiducial elements 402.

In another embodiment, as described above, a craft can include one or more downward-facing cameras. Such a craft is referred to herein as a mapping craft, and a calibration sequence can include flying the mapping craft on a mapping or reconnaissance flight to identify fiducial elements 302, 402, 502. For example, the mapping craft can be automated to fly over the game space and visually capture fiducial elements 302, 402, 502 using the downward-facing camera. In another embodiment, a user can manually fly the craft over the game space to visually capture fiducial elements 302, 402, 502 using the downward-facing camera.
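The text does not specify the route an automated mapping craft flies. A common choice for area coverage (assumed here) is a back-and-forth "lawnmower" pattern whose lane spacing is the camera's ground footprint minus some overlap, so every fiducial passes under the camera at least once. The function name, the 20% overlap, and the straight-down camera are illustrative assumptions.

```python
import math


def lawnmower_waypoints(width, depth, altitude, fov_deg, overlap=0.2):
    """Back-and-forth waypoints (x, y, z) covering a width x depth game space.

    Lane spacing is the straight-down camera footprint at `altitude`,
    reduced by `overlap` so neighbouring passes share some imagery.
    """
    footprint = 2.0 * altitude * math.tan(math.radians(fov_deg) / 2.0)
    spacing = footprint * (1.0 - overlap)
    lanes = max(1, math.ceil(width / spacing))
    waypoints = []
    for i in range(lanes):
        # Center each lane in its strip, never beyond the far edge.
        x = min((i + 0.5) * spacing, width)
        # Alternate direction on each lane to avoid dead-heading back.
        ys = (0.0, depth) if i % 2 == 0 else (depth, 0.0)
        waypoints.extend((x, y, altitude) for y in ys)
    return waypoints
```

A 6 m by 10 m space flown at 1 m with a 90° camera needs four lanes (eight waypoints), since each pass covers about 1.6 m of fresh ground.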

According to an embodiment, during calibration, at least two, and preferably three, individual fiducial elements can be identified simultaneously. Using an estimated craft height (for example, from an altimeter) and the angle between the fiducials, a mapping can be created between all fiducial elements. In another embodiment, if mapping engine 404 can identify or otherwise know the size of the fiducial elements themselves, an altimeter is not necessary.
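Under a pinhole model with a level, downward-facing camera, a pixel offset `u` from the image center corresponds to a ground offset of `h * u / f`, where `h` is the craft height and `f` is the focal length in pixels. Two fiducials in the same frame therefore give their separation directly. If the fiducial size `S` is known, its imaged size `s` gives `h = f * S / s`, which is why the altimeter becomes optional. This is a sketch under those assumptions (level camera, no lens distortion, hypothetical function names).

```python
import math


def ground_offset(u_px, v_px, height, focal_px):
    """Ground-plane offset of a point imaged at (u_px, v_px) from the image
    center, for a level downward camera at `height` (pinhole model)."""
    return height * u_px / focal_px, height * v_px / focal_px


def fiducial_separation(p1_px, p2_px, height, focal_px):
    """Distance between two fiducials seen in the same frame."""
    x1, y1 = ground_offset(*p1_px, height, focal_px)
    x2, y2 = ground_offset(*p2_px, height, focal_px)
    return math.hypot(x2 - x1, y2 - y1)


def height_from_size(size_px, size_true, focal_px):
    """Height above a fiducial of known physical size, replacing the altimeter."""
    return focal_px * size_true / size_px
```

At 2 m with a 500-pixel focal length, fiducials imaged at pixel offsets (-100, 0) and (150, 0) are 1.0 m apart. A 0.1 m fiducial that appears 50 pixels wide implies a height of 1.0 m.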

In another embodiment, a joint calibration of fiducial elements 302, 402 can include using a “smart” fiducial 502. For example, each smart fiducial 502 can include a 180-degree field-of-view camera to “see” other fiducial elements. In an embodiment, a map can be created with at least two smart fiducials 502 that can each independently view the other fiducials. In further embodiments, one or more smart fiducials 502 can be combined with a mapping craft as described above, and/or with time-of-flight position calibration of fiducials 302 as described above.
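When two smart fiducials at known positions each measure a bearing to the same third fiducial, that fiducial lies where the two bearing rays cross. The sketch assumes a shared 2D frame: for example, one smart fiducial at the origin, the other on the +x axis at a baseline length known from measurement or time of flight. Bearings are counterclockwise from +x. Without a known baseline the map is only determined up to scale. `intersect_bearings` is a hypothetical name.

```python
import math


def intersect_bearings(p1, theta1, p2, theta2):
    """Intersect two bearing rays in a shared 2D frame.

    p1, p2 are the smart fiducials' positions; theta1, theta2 are their
    bearing angles (radians, counterclockwise from +x) to the same target.
    """
    d1 = (math.cos(theta1), math.sin(theta1))
    d2 = (math.cos(theta2), math.sin(theta2))
    # 2D cross product of the ray directions; zero means parallel rays.
    denom = d1[0] * d2[1] - d1[1] * d2[0]
    if abs(denom) < 1e-9:
        raise ValueError("bearings are parallel; target position is ambiguous")
    dx, dy = p2[0] - p1[0], p2[1] - p1[1]
    # Solve p1 + t1*d1 = p2 + t2*d2 for t1 by crossing both sides with d2.
    t1 = (dx * d2[1] - dy * d2[0]) / denom
    return p1[0] + t1 * d1[0], p1[1] + t1 * d1[1]
```

With smart fiducials at (0, 0) and (4, 0) reporting bearings of 45° and 135°, the target fiducial is placed at (2, 2).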

As mentioned, fiducial elements can combine passive elements 402 and active elements 302. For example, four “corner” fiducial elements 302 and two “encoded” strings of passive elements 402, placed to crisscross between opposite corners, can be utilized. In a further embodiment, encoded strings can be placed to crisscross between adjacent corners. According to an embodiment, for example, if the corners of the game space are no more than 30 feet apart and the craft is three feet above the ground, a downward-facing camera on the craft can locate at least one string. Corner elements can be passive or active, as described herein.
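Whether a downward camera always sees a corner-to-corner string depends on the camera's field of view, which the text does not state. The sketch below models the game space as a square of side `side` with strings along both diagonals. It checks whether the nearest string falls inside a circular camera footprint of radius `height * tan(fov / 2)`, which is conservative for a rectangular image. The square layout, circular footprint, and function names are assumptions.

```python
import math


def dist_to_nearest_diagonal(x, y, side):
    """Distance from (x, y) to the nearer of two corner-to-corner strings
    in a square game space [0, side] x [0, side]."""
    d1 = abs(y - x) / math.sqrt(2.0)         # string from (0, 0) to (side, side)
    d2 = abs(x + y - side) / math.sqrt(2.0)  # string from (side, 0) to (0, side)
    return min(d1, d2)


def string_in_view(x, y, side, height, fov_deg):
    """True if a straight-down camera at `height` above (x, y) sees a string."""
    radius = height * math.tan(math.radians(fov_deg) / 2.0)
    return dist_to_nearest_diagonal(x, y, side) <= radius
```

The worst case is the midpoint of an edge, at `side / (2 * sqrt(2))`, roughly 0.35 times the side, from both strings. That figure, together with the flying height, sets the field of view the camera needs.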

Locating engine 406 is configured to locate the position of craft within the game space using the data from mapping engine 404 and, optionally, craft data. For example, IR, RF, or ultrasound beacons can emit a pulse that is received or returned by a particular craft. A triangulation calculation or measurement can be utilized to determine the distances and relative positions of craft over the game space using the pulse data. In an embodiment, at least two beacons are utilized in combination with a sensor reading from the craft in order to triangulate the relative position of the craft in the game space. In another embodiment, at least three beacons are utilized to determine the relative position of the craft in the game space. Additional beacons can be combined to improve accuracy, but most game play does not require it.
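For the three-beacon case, the position follows from range-only trilateration. Subtracting the first circle equation (x − xᵢ)² + (y − yᵢ)² = rᵢ² from the other two cancels the quadratic terms and leaves a 2×2 linear system. This sketch works in the horizontal plane. It assumes pulse ranges to ground-level beacons have already been reduced to horizontal ranges, e.g. √(r² − z²) using the craft's altitude. `trilaterate_2d` is a hypothetical name.

```python
def trilaterate_2d(beacons, ranges):
    """Position (x, y) from horizontal ranges to three beacons at known positions.

    Subtracting the first circle equation from the other two removes the
    quadratic terms, leaving a 2x2 linear system solved by Cramer's rule.
    """
    (x1, y1), (x2, y2), (x3, y3) = beacons
    r1, r2, r3 = ranges
    a11, a12 = 2.0 * (x2 - x1), 2.0 * (y2 - y1)
    a21, a22 = 2.0 * (x3 - x1), 2.0 * (y3 - y1)
    b1 = r1 ** 2 - r2 ** 2 + x2 ** 2 - x1 ** 2 + y2 ** 2 - y1 ** 2
    b2 = r1 ** 2 - r3 ** 2 + x3 ** 2 - x1 ** 2 + y3 ** 2 - y1 ** 2
    det = a11 * a22 - a12 * a21
    if abs(det) < 1e-12:
        raise ValueError("beacons are collinear; position is ambiguous")
    return (b1 * a22 - a12 * b2) / det, (a11 * b2 - b1 * a21) / det
```

With beacons at (0, 0), (10, 0), and (0, 10) and ranges 5, √65, and √45, the craft is located at (3, 4). More than three beacons would turn this into an over-determined least-squares problem, which is one way to use the extra accuracy mentioned above.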

In other embodiments in which the craft has a downward-facing camera, a relative location can be determined from the data returned by the camera. Relative craft position can be determined based on the map of the game space created during calibration by mapping engine 404.
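With a calibrated map, one fiducial in view is enough when height and heading are known. The fiducial's pixel offset gives its ground offset from the craft in body axes. Rotating that offset by the heading and subtracting it from the fiducial's mapped position yields the craft's position. The sketch assumes a level camera whose image u-axis points along the craft's forward axis and v-axis along its left axis. Yaw is counterclockwise from the game-space +x axis. The function name is hypothetical.

```python
import math


def craft_position_from_fiducial(fiducial_xy, u_px, v_px, height, focal_px, yaw_rad):
    """Estimate the craft's ground position from one mapped fiducial.

    (u_px, v_px): the fiducial's pixel offset from the image center, with u
    along the craft's forward axis and v along its left axis (assumed mounting).
    yaw_rad: craft heading, counterclockwise from the game-space +x axis.
    """
    # Fiducial offset from the craft in body axes (level pinhole camera).
    fwd = height * u_px / focal_px
    left = height * v_px / focal_px
    # Rotate the body-frame offset into the game-space frame.
    dx = fwd * math.cos(yaw_rad) - left * math.sin(yaw_rad)
    dy = fwd * math.sin(yaw_rad) + left * math.cos(yaw_rad)
    # The craft sits at the fiducial's mapped position minus that offset.
    return fiducial_xy[0] - dx, fiducial_xy[1] - dy
```

A fiducial mapped at (5, 5) and seen 100 pixels straight ahead from 2 m (focal length 400 pixels) puts the craft at (4.5, 5) when facing +x, or at (5, 4.5) when facing +y.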

In an embodiment, the craft can be launched within the game space at a predetermined or known location relative to the fiducial elements. This gives embodiments of locating engine 406 an additional data point for locating the craft within the game space. See, for example, FIG. 10.

Referring to FIG. 5, a block diagram of one embodiment of locating engine 406 is described that utilizes both sensors and/or information obtained from onboard craft 102 and sensors and/or information from external references and/or sources, which are combined in a data fusion process to provide the necessary location information. This information consists of both positional information indicating a relative position in game space 300 and orientation information of the craft at the point indicated by the positional information.
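The text does not name the data fusion algorithm. A Kalman filter is a common choice and is swapped in here purely as an illustration. Onboard data (here an IMU acceleration) drives the prediction step, and an external position fix (for example, from a fiducial) drives the correction step. The sketch tracks one axis with a constant-velocity model. The class name, the simple diagonal process noise, and the noise values are assumptions.

```python
from dataclasses import dataclass


@dataclass
class Kalman1D:
    """Constant-velocity Kalman filter along one axis (illustrative values)."""
    x: float = 0.0      # position estimate
    v: float = 0.0      # velocity estimate
    p_xx: float = 1.0   # covariance entries (symmetric 2x2)
    p_xv: float = 0.0
    p_vv: float = 1.0
    q: float = 0.01     # process noise intensity (assumed)
    r: float = 0.04     # variance of the external position fix (assumed)

    def predict(self, dt, accel=0.0):
        """Propagate the state with onboard data, e.g. an IMU acceleration."""
        self.x += self.v * dt + 0.5 * accel * dt * dt
        self.v += accel * dt
        # P = F P F^T + Q with F = [[1, dt], [0, 1]] and a simple diagonal Q.
        p_xx = self.p_xx + 2.0 * dt * self.p_xv + dt * dt * self.p_vv + self.q * dt
        p_xv = self.p_xv + dt * self.p_vv
        p_vv = self.p_vv + self.q * dt
        self.p_xx, self.p_xv, self.p_vv = p_xx, p_xv, p_vv

    def update(self, z):
        """Correct with an external position measurement z."""
        s = self.p_xx + self.r                     # innovation variance
        k_x, k_v = self.p_xx / s, self.p_xv / s    # Kalman gain for H = [1, 0]
        innovation = z - self.x
        self.x += k_x * innovation
        self.v += k_v * innovation
        # P = (I - K H) P, written out for the symmetric 2x2 case.
        self.p_xx, self.p_xv, self.p_vv = (
            (1.0 - k_x) * self.p_xx,
            (1.0 - k_x) * self.p_xv,
            self.p_vv - k_v * self.p_xv,
        )
```

Running `predict(0.1)` then `update(2.0)` repeatedly pulls the estimate from 0 to about 2.0, and the position variance falls below the fix's own variance `r`.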

With respect to the onboard sensors in this embodiment, during initialization, an initial pitch and roll of craft 102 are estimated by using the accelerometers to observe the orientation of craft 102 relative to gravity, under the assumption that no other accelerations are present. The craft heading, or yaw, can be initialized using the magnetometer or may simply be set to zero. As is typical of Attitude and Heading Reference Systems (AHRS), data from the gyroscope is integrated at each time step to provide a continual estimate of the attitude of craft 102. Noise present in the gyroscope signal accumulates over time, resulting in an increasing error that may be corrected and/or normalized using information from other sensors. The accelerometer data is used to measure the orientation of craft 102 relative to gravity. Because the accelerometers measure both the effect of gravity and linear accelerations, linear accelerations corrupt this measurement. If an estimate of the body accelerations is available, they can be removed. Additionally, if an estimate of the craft orientation is available, it can be used to remove the gravity vector from the signal, and the resulting body acceleration measurements can be used to aid the position tracking estimate.
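The initialization and drift correction described above can be sketched with the usual accelerometer tilt formulas and a complementary filter. The complementary filter is a simple stand-in for a full AHRS: it trusts the integrated gyro rate over short time scales and the gravity-referenced accelerometer angle over long ones. The sketch assumes an accelerometer that reads about +g on its z axis when level and at rest (a common MEMS convention; others flip signs). It also assumes small angles, so gyro rates are treated directly as roll and pitch rates.

```python
import math


def initial_roll_pitch(ax, ay, az):
    """Roll and pitch (radians) from an accelerometer reading at rest.

    Assumes the sensor reads about +g on z when level; any other
    acceleration present at initialization corrupts the estimate.
    """
    roll = math.atan2(ay, az)
    pitch = math.atan2(-ax, math.sqrt(ay * ay + az * az))
    return roll, pitch


def complementary_update(angle, gyro_rate, accel_angle, dt, alpha=0.98):
    """Blend the integrated gyro rate (smooth but drifting) with the
    accelerometer angle (noisy but drift-free) for one axis."""
    return alpha * (angle + gyro_rate * dt) + (1.0 - alpha) * accel_angle
```

With a 0.01 rad/s gyro bias, pure integration drifts 0.1 rad in 10 s at 100 Hz. The filtered angle instead settles near 0.005 rad, because the accelerometer term keeps pulling it back toward the gravity reference.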

In some embodiments, a magnetometer on craft 102 can be used to measure the orientation of the craft relative to the earth's magnetic field vector. Using both the magnetic and gravitational vectors provides an absolute reference frame for estimating the three-dimensional orientation of the craft.
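Combining the two vectors typically means tilt compensation. The roll and pitch from the gravity reference are used to rotate the measured magnetic field back into the horizontal plane, and the heading is read from the horizontal components. The sketch assumes body axes x forward, y right, z down, with roll and pitch in radians. It returns heading relative to magnetic north, not true north, and ignores declination and hard/soft-iron calibration.

```python
import math


def tilt_compensated_heading(mx, my, mz, roll, pitch):
    """Heading in degrees clockwise from magnetic north, in [0, 360).

    Assumes body axes x forward, y right, z down; roll and pitch (radians)
    come from the gravity-referenced attitude estimate.
    """
    # De-rotate the measured field by roll, then pitch, into the level frame.
    xh = (mx * math.cos(pitch)
          + my * math.sin(roll) * math.sin(pitch)
          + mz * math.cos(roll) * math.sin(pitch))
    yh = my * math.cos(roll) - mz * math.sin(roll)
    return math.degrees(math.atan2(-yh, xh)) % 360.0
```

A level craft measuring (20, 0, 40) microtesla faces magnetic north (0°). One measuring (0, −20, 40) faces east (90°), because north then lies to its left.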

In some embodiments, a dynamic measurement of a relative altitude of craft 102 may be obtained. In various embodiments, a barometer can be used to measure the air pressure at the craft. As the craft rises in altitude, the air pressure drops. Using the relationship between altitude and barometric pressure, an estimate of the craft's altitude relative to sea level can be made. Typically, the barometric pressure is measured while the craft is on the ground to determine the ground's altitude above sea level, which allows for a rough estimate of the craft's height above ground. In other embodiments, an ultrasonic range finder can be used to measure the craft's height above the ground and/or its distance to walls by transmitting and receiving sound pulses. Typically, the ultrasonic sensor is fixed to the craft, so the current attitude estimate for craft 102 is used to correct the range estimate. Similar to an ultrasonic range finder, in some embodiments a time-of-flight sensor can use LED or laser light to transmit a coded signal. The reflection of the light is received and correlated with the transmitted signal to determine the time of flight of the signal and thereby the distance to the reflecting target.
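Both altitude sources above can be sketched in a few lines. The barometric part uses the common standard-atmosphere approximation h = 44330 · (1 − (p / p₀)^(1/5.255)) meters, differenced against the ground reading. The ultrasonic part halves the round-trip echo time and projects the slanted range onto the vertical with cos(roll)·cos(pitch). The speed of sound (343 m/s at about 20 °C) and a flat floor are assumptions.

```python
import math

SEA_LEVEL_HPA = 1013.25
SPEED_OF_SOUND = 343.0  # m/s, air at about 20 °C (assumed)


def pressure_altitude(p_hpa, p0_hpa=SEA_LEVEL_HPA):
    """Altitude (m) from pressure via the standard-atmosphere approximation."""
    return 44330.0 * (1.0 - (p_hpa / p0_hpa) ** (1.0 / 5.255))


def height_above_ground(p_hpa, p_ground_hpa):
    """Height relative to the pressure recorded while the craft sat on the ground."""
    return pressure_altitude(p_hpa) - pressure_altitude(p_ground_hpa)


def ultrasonic_height(echo_time_s, roll, pitch, speed=SPEED_OF_SOUND):
    """Height from a body-fixed downward range finder, corrected for tilt.

    The pulse travels down and back, so the range is half the round trip;
    cos(roll) * cos(pitch) projects the slanted range onto the vertical
    (assumes a flat floor).
    """
    slant_range = speed * echo_time_s / 2.0
    return slant_range * math.cos(roll) * math.cos(pitch)
```

Near sea level, a 1 hPa drop from the ground reading corresponds to roughly 8 m of climb. A 10 ms echo with the craft level corresponds to about 1.7 m.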

In some embodiments, a downward-facing camera or an optical flow sensor may be used to monitor the motion of a scene that can include one or more fiducials. This information can be analyzed and processed together with knowledge of the craft's orientation and its distance to a fiducial, for example, to calculate the relative position and velocity between the craft and the fiducial and/or another known object in the scene within the game space (e.g., the floor or walls).
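
A minimal sketch of turning optical flow into velocity along one image axis, assuming a downward-facing sensor over a flat floor and matching sign conventions between the flow axis and the gyro axis. The rotation compensation step is a common practice assumed here, not something this disclosure specifies, and the function name is hypothetical.

```python
def flow_velocity_m_s(flow_px_s: float, focal_px: float,
                      body_rate_rad_s: float, height_m: float) -> float:
    """Ground-relative speed along one image axis from optical flow.

    The measured flow includes apparent motion caused by the craft rotating;
    the gyro rate about the matching axis is subtracted before scaling by the
    distance to the floor.
    """
    angular_flow = flow_px_s / focal_px              # flow as an angular rate (rad/s)
    translational = angular_flow - body_rate_rad_s   # remove rotation-induced flow
    return translational * height_m
```

Integrating this velocity over time gives a relative position estimate, which drifts unless it is periodically corrected against a fiducial with a known location.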

In other embodiments, an onboard camera on the craft can be used to image visual targets such as fiducials on the ground or floor, or even other remotely-controlled aircraft or other remote control objects within the game space. For each identified visual target, a vector connecting the focal point of the camera with the target can be determined from the pixel location of the target. If the locations of the targets are known in the local coordinate frame of the game space, the position of the remotely-controlled aircraft within the three-dimensional game space can be estimated from the intersection of these vectors. If the size of the targets is known, their imaged size in pixels and the camera's intrinsic properties can also be used to estimate the distance to each target.
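
Under an ideal pinhole camera model (an assumption: lens distortion is ignored), both steps reduce to a few lines. The sketch assumes the common computer-vision camera frame (x right, y down, z along the optical axis) and intrinsics `fx`, `fy`, `cx`, `cy` obtained from a prior calibration; the names are illustrative.

```python
import math


def pixel_to_ray(u: float, v: float, fx: float, fy: float,
                 cx: float, cy: float) -> tuple[float, float, float]:
    """Unit vector in the camera frame from the focal point through pixel (u, v)."""
    x = (u - cx) / fx
    y = (v - cy) / fy
    n = math.sqrt(x * x + y * y + 1.0)
    return (x / n, y / n, 1.0 / n)


def distance_from_size(real_size_m: float, imaged_size_px: float, focal_px: float) -> float:
    """Approximate depth to a target of known size (similar triangles).

    Most accurate when the target faces the camera and lies near the optical axis.
    """
    return focal_px * real_size_m / imaged_size_px
```

For example, a 0.2 m fiducial imaged 50 px wide by a camera with a 500 px focal length is roughly 2 m away.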

In various embodiments, a commanded thrust output for the craft can be used along with a motion model of the craft to aid the tracking system by providing estimates of the craft's future orientation, acceleration, velocity and position.
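
As a minimal sketch of such a motion model, the vertical channel alone can be propagated from commanded thrust. It assumes total rotor thrust acts along the body z axis, z measured upward, and no aerodynamic drag; these simplifications and the function name are illustrative, not part of this disclosure.

```python
import math

G = 9.81  # gravitational acceleration, m/s^2


def predict_vertical(z_m: float, vz_m_s: float, thrust_n: float, mass_kg: float,
                     roll: float, pitch: float, dt: float) -> tuple[float, float]:
    """Propagate height and vertical speed one step using commanded thrust.

    Only the cos(roll) * cos(pitch) share of body-axis thrust opposes gravity.
    """
    az = thrust_n * math.cos(roll) * math.cos(pitch) / mass_kg - G
    z_next = z_m + vz_m_s * dt + 0.5 * az * dt * dt
    vz_next = vz_m_s + az * dt
    return z_next, vz_next
```

In a filter, this prediction would play the role of the process model, with sensor measurements correcting it as they arrive.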

With respect to the external references in various embodiments, a video camera can be used with computer vision algorithms in one or more of the smart fiducials 502 and/or the gaming controller to determine the location of a craft in the field of view of the video camera along with its relative orientation. Using the pixel location of the identified craft, a vector from the camera, at a known location within the game space, to the craft can be estimated. Additionally, if the physical size of the craft and the camera's intrinsic imaging properties are known, the distance to the craft can also be estimated. Using multiple cameras, multiple vectors to each craft can be determined and their intersections used to estimate the three-dimensional position of the remotely-controlled aircraft within the game space.
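
Because measured rays rarely meet exactly, one common way to "intersect" them is a least-squares point that minimizes the summed squared perpendicular distance to every ray. The sketch below is that technique under the assumption that each camera's position and ray direction are already expressed in the game-space frame; the function names are illustrative.

```python
import math


def intersect_rays(origins, directions):
    """Least-squares point closest to every ray (camera position + bearing).

    Solves sum_i (I - d_i d_i^T) p = sum_i (I - d_i d_i^T) o_i.
    """
    A = [[0.0] * 3 for _ in range(3)]
    b = [0.0] * 3
    for o, d in zip(origins, directions):
        n = math.sqrt(sum(c * c for c in d))
        d = [c / n for c in d]
        for i in range(3):
            for j in range(3):
                p_ij = (1.0 if i == j else 0.0) - d[i] * d[j]  # projector off the ray
                A[i][j] += p_ij
                b[i] += p_ij * o[j]
    return _solve3(A, b)


def _det3(m):
    return (m[0][0] * (m[1][1] * m[2][2] - m[1][2] * m[2][1])
            - m[0][1] * (m[1][0] * m[2][2] - m[1][2] * m[2][0])
            + m[0][2] * (m[1][0] * m[2][1] - m[1][1] * m[2][0]))


def _solve3(A, b):
    """Cramer's rule for a 3x3 system."""
    det = _det3(A)
    if abs(det) < 1e-12:
        raise ValueError("rays are (nearly) parallel; position is unobservable")
    result = []
    for k in range(3):
        m = [row[:] for row in A]
        for i in range(3):
            m[i][k] = b[i]
        result.append(_det3(m) / det)
    return tuple(result)
```

Two cameras at (0, 0, 0) and (2, 0, 0) sighting along (1, 1, 1) and (-1, 1, 1) place the craft at (1, 1, 1); with noisy rays, the same call returns the best-fit point.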

In other embodiments, the visual fiducial markers 402 may be used to aid the computer vision tracking of the craft by external references. These markers are designed to be easily seen and located in the field of view of the external reference camera. Arranging the fiducials on the craft in a known pattern allows orientation and distance tracking to be improved.

A time-of-flight camera or a LIDAR array sensor may also be used in various embodiments of the external references to generate a distance per pixel to the target. Such a sensor illuminates the scene with a coded light signal and correlates the returns with the transmitted signal to determine its time of flight. A time-of-flight camera or LIDAR array sensor can provide a more accurate distance measurement than one derived from the imaged craft size alone. An example of a LIDAR array sensor that may be small and light enough to be suitable for use in some embodiments described herein is shown in U.S. Publ. No. 2015/0293228, the contents of which are hereby incorporated by reference.
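
The coded-signal correlation described above can be sketched for a single pixel or receiver. This assumes the transmitted code and the sampled return share one sample clock and that the strongest correlation peak is the true echo; practical time-of-flight cameras often use phase-measurement schemes instead, so this is illustrative only.

```python
SPEED_OF_LIGHT_M_S = 299_792_458.0


def correlation_delay(tx, rx):
    """Sample lag at which the received signal best matches the transmitted code."""
    best_lag, best_score = 0, float("-inf")
    for lag in range(len(rx) - len(tx) + 1):
        score = sum(t * rx[lag + i] for i, t in enumerate(tx))
        if score > best_score:
            best_lag, best_score = lag, score
    return best_lag


def round_trip_distance_m(lag_samples, sample_period_s, speed=SPEED_OF_LIGHT_M_S):
    """Distance to the reflector: the signal travels out and back, hence / 2."""
    return speed * lag_samples * sample_period_s / 2.0
```

At a 1 ns sample period each lag step corresponds to about 15 cm of range, which is why light-based sensors need very fast sampling or sub-sample peak interpolation.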

In various embodiments, once the available sensor measurements and information from onboard and/or external references are obtained, this information, together with the mathematical models applicable to it, may be combined in a data fusion process to create estimates of location, position and orientation with lower error than any single sensor provides. This estimate may also be propagated through time and continually updated as new sensor measurements are made. In various embodiments, the data fusion process is accomplished using an Extended Kalman Filter (EKF) or a related variant (e.g., an Unscented Kalman Filter). In various embodiments, the data fusion process is performed by the locating engine 406.
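
Why fusion lowers error can be seen in its simplest case: two independent estimates of the same quantity combined by inverse-variance weighting, which is what a Kalman update reduces to for a static scalar. The sketch assumes independent, unbiased measurements with known variances; the example numbers are hypothetical.

```python
def fuse(estimates):
    """Combine independent (value, variance) estimates of the same quantity.

    Each estimate is weighted by the inverse of its variance; the fused
    variance is never larger than the smallest input variance.
    """
    weights = [1.0 / var for _, var in estimates]
    total = sum(weights)
    value = sum(w * v for w, (v, _) in zip(weights, estimates)) / total
    return value, 1.0 / total
```

For instance, a hypothetical barometric height of 1.0 m (variance 0.04 m²) fused with an ultrasonic height of 1.2 m (variance 0.01 m²) gives 1.16 m with variance 0.008 m², tighter than either sensor alone.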

FIG. 6 is a block diagram of a locating engine 601 that determines position, orientation and location information of multiple remotely-controlled aircraft, according to an embodiment. In some embodiments, locating engine 601 is an implementation of locating engine 406 described above.

FIGS. 7A and 7B, which are adapted from the Wikipedia entry for the Kalman filter, together depict an exemplary set 701 of algorithms and formulas that may be implemented, in some embodiments, by the locating engine 601 to perform the data fusion process, both for the initial prediction and for subsequent updates of the location information.
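
The figures are not reproduced in this text, but the standard linear Kalman filter they are adapted from alternates a predict step (x <- F x, P <- F P F^T + Q) with an update step (K = P H^T S^-1, x <- x + K y, P <- (I - K H) P). The sketch applies those equations to one axis of craft motion with a constant-velocity model; the model, the diagonal process noise, and the parameter values are assumptions for illustration, and a full implementation would track all axes and orientation, typically with an EKF.

```python
class ConstantVelocityKF:
    """Kalman filter for one axis with state [position, velocity].

    Assumed model: F = [[1, dt], [0, 1]], H = [1, 0], Q = q*dt*I, R = r.
    """

    def __init__(self, q: float, r: float, p0: float = 1.0) -> None:
        self.x = [0.0, 0.0]                  # state estimate [position, velocity]
        self.P = [[p0, 0.0], [0.0, p0]]      # estimate covariance
        self.q = q                           # process-noise density
        self.r = r                           # measurement-noise variance

    def predict(self, dt: float) -> None:
        pos, vel = self.x
        self.x = [pos + vel * dt, vel]       # x <- F x
        P = self.P
        # P <- F P F^T + Q, expanded for the 2x2 case
        p00 = P[0][0] + dt * (P[0][1] + P[1][0]) + dt * dt * P[1][1]
        p01 = P[0][1] + dt * P[1][1]
        p10 = P[1][0] + dt * P[1][1]
        p11 = P[1][1]
        self.P = [[p00 + self.q * dt, p01], [p10, p11 + self.q * dt]]

    def update(self, z: float) -> None:
        P = self.P
        y = z - self.x[0]                    # innovation y = z - H x
        s = P[0][0] + self.r                 # innovation covariance S = H P H^T + R
        k0, k1 = P[0][0] / s, P[1][0] / s    # Kalman gain K = P H^T S^-1
        self.x = [self.x[0] + k0 * y, self.x[1] + k1 * y]
        # P <- (I - K H) P
        self.P = [[(1 - k0) * P[0][0], (1 - k0) * P[0][1]],
                  [P[1][0] - k1 * P[0][0], P[1][1] - k1 * P[0][1]]]
```

Feeding it position fixes of a craft moving at 1 m/s every 0.1 s, the velocity estimate converges toward 1 m/s even though velocity is never measured directly.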

Referring to FIG. 8, a diagram of a fiducial marker mat 502 for a game space 500 is depicted, according to an embodiment. As shown, game space 500 comprises a three-dimensional space in which two or more craft can be launched and fly in accordance with a particular game. When deployed onto game space 500, fiducial marker mat 502 comprises a net of visual targets 504 evenly spaced at the respective intersections of the netting. In an embodiment, fiducial marker mat 502 is unrolled on the playing surface “floor.” Each visual target 504 comprises a unique identifier readable by an image-recognition device. Because the targets are evenly spaced and each is uniquely identifiable, the relative distance and angle of each visual target 504 from the other visual targets 504 can be easily determined. In other embodiments, visual targets 504 are not positioned at respective intersections of the netting, but are otherwise spaced along the surface of fiducial marker mat 502. As shown in FIG. 8, fiducial marker mat 502 is stretched out on the floor of game space 500.
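
How uniquely identified, evenly spaced targets yield relative distances and angles can be sketched assuming a hypothetical numbering scheme in which target IDs are assigned row by row across a mat with a fixed number of columns and a fixed spacing. The actual encoding of the identifiers is not specified here.

```python
import math


def target_position_m(target_id: int, columns: int, spacing_m: float) -> tuple[float, float]:
    """Floor coordinates of a mat target, assuming IDs are assigned row by row."""
    row, col = divmod(target_id, columns)
    return col * spacing_m, row * spacing_m


def distance_and_angle(id_a: int, id_b: int, columns: int,
                       spacing_m: float) -> tuple[float, float]:
    """Distance (m) and direction (radians from the mat's x axis) from target a to b."""
    xa, ya = target_position_m(id_a, columns, spacing_m)
    xb, yb = target_position_m(id_b, columns, spacing_m)
    return math.hypot(xb - xa, yb - ya), math.atan2(yb - ya, xb - xa)
```

With 10 columns at 0.5 m spacing, target 13 sits at (1.5 m, 0.5 m), so a camera that reads any two IDs immediately knows their separation without measuring the floor.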

Referring to FIG. 9, a diagram of a plurality of fiducial marker ribbon strips for a game space for a gaming system for multiple remotely-controlled aircraft is depicted, according to an embodiment. As shown, game space 600 comprises a three-dimensional space in which two or more craft can be launched and fly in accordance with a particular game.

A plurality of one-dimensional (1-D) ribbon strips are positioned in a fiducial grid along the playing surface “floor.” For example, first strip 602 and second strip 604 are positioned adjacent and parallel to each other, but spaced from each other along the floor. Likewise, third strip 606 and fourth strip 608 are positioned adjacent and parallel to each other, but spaced from each other along the floor. Third strip 606 and fourth strip 608 are positioned orthogonal to first strip 602 and second strip 604, so that the strips form known angles with respect to each other.

Similar to visual targets 504, each of first strip 602, second strip 604, third strip 606, and fourth strip 608 can comprise a plurality of unique identifiers along the surface of the respective strip that are readable by an image-recognition device.
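
Once a strip's placement is known, an identifier read from it maps to a floor coordinate. The sketch assumes a hypothetical layout record per strip (origin, unit direction, and identifier spacing) and identifiers numbered consecutively from the strip's origin; none of these details are specified in this disclosure.

```python
import math
from dataclasses import dataclass


@dataclass
class Strip:
    origin: tuple[float, float]     # floor coordinates of the strip's first identifier (m)
    direction: tuple[float, float]  # unit vector along the strip
    spacing_m: float                # distance between consecutive identifiers


def marker_floor_position(strip: Strip, index: int) -> tuple[float, float]:
    """Floor coordinates of the index-th identifier printed along a strip."""
    ox, oy = strip.origin
    dx, dy = strip.direction
    return ox + index * strip.spacing_m * dx, oy + index * strip.spacing_m * dy


def angle_between_deg(a: Strip, b: Strip) -> float:
    """Angle between two strips, e.g. 90 for orthogonally placed strips."""
    dot = a.direction[0] * b.direction[0] + a.direction[1] * b.direction[1]
    return math.degrees(math.acos(max(-1.0, min(1.0, dot))))
```

Because orthogonal strips cross at known angles, a camera that sees identifiers from two strips can recover both position and heading on the floor.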

Referring to FIG. 10, a diagram of a fiducial beacon grid for a game space for a gaming system for multiple remotely-controlled aircraft is depicted, according to an embodiment. As shown, game space 700 comprises a three-dimensional space in which two or more craft can be launched and fly in accordance with a particular game.

A plurality of fiducial beacons are positioned in a fiducial grid along the playing surface “floor.” For example, fiducial beacon 702 and fiducial beacon 704 are positioned largely within the main area of the playing surface floor. Fiducial beacon 706 is positioned proximate one of the corners of game space 700. In an embodiment, a craft launch area 708 is adjacent to fiducial beacon 706. Because launch area 708 is positioned at a site known to fiducial beacon 706, each craft starts from a known initial data point, which makes locating the craft easier.
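
If beacons measure range to a craft (for example, from pulse timing), three non-collinear beacons fix the craft's floor position. A minimal sketch, assuming the ranges have already been reduced to horizontal distances using the craft's altitude estimate; subtracting the first circle equation from the other two linearizes the problem into a 2x2 system.

```python
def trilaterate_2d(beacons, ranges):
    """Floor position (x, y) from horizontal ranges to three known beacons."""
    (x1, y1), (x2, y2), (x3, y3) = beacons
    r1, r2, r3 = ranges
    # Linear system from subtracting circle 1 from circles 2 and 3.
    a11, a12 = 2 * (x2 - x1), 2 * (y2 - y1)
    a21, a22 = 2 * (x3 - x1), 2 * (y3 - y1)
    b1 = r1 ** 2 - r2 ** 2 + x2 ** 2 - x1 ** 2 + y2 ** 2 - y1 ** 2
    b2 = r1 ** 2 - r3 ** 2 + x3 ** 2 - x1 ** 2 + y3 ** 2 - y1 ** 2
    det = a11 * a22 - a12 * a21
    if abs(det) < 1e-12:
        raise ValueError("beacons are collinear; position is ambiguous")
    x = (b1 * a22 - a12 * b2) / det
    y = (a11 * b2 - b1 * a21) / det
    return x, y
```

With more than three beacons, the same linearization yields an overdetermined system that is usually solved by least squares to average out range noise.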

Referring to FIG. 11, a diagram of a game space having an out-of-bounds area for a gaming system for multiple remotely-controlled aircraft is depicted, according to an embodiment. As shown, game space 800 comprises a three-dimensional space in which two or more craft can be launched and fly in accordance with a particular game.

Game space 800 generally comprises a three-dimensional upper out-of-bounds area 802, a three-dimensional lower out-of-bounds area 804, and a three-dimensional in-play area 806. In an embodiment, upper out-of-bounds area 802 comprises the three-dimensional area within game space 800 in which the Z coordinate is above 10 feet. In other embodiments, the lower boundary of upper out-of-bounds area 802 is set above or below 10 feet.

In an embodiment, lower out-of-bounds area 804 comprises the three-dimensional area within game space 800 in which the Z coordinate is under 1 foot. In other embodiments, the upper boundary of lower out-of-bounds area 804 is set above or below 1 foot.

As described herein with respect to a craft touching one of the sides of a game space, when a craft moves into an out-of-bounds location, such as upper out-of-bounds area 802 or lower out-of-bounds area 804, a command can be transmitted to the craft that artificially weakens or disables its flying ability. In other embodiments, other penalties or actions can be taken with respect to the craft or game play when a craft moves into these out-of-bounds locations.
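
The zone test and penalty can be sketched as follows. The 10-foot and 1-foot defaults come from the embodiment above; expressing the penalty as a cap on available thrust is a hypothetical stand-in for the "weaken or disable" command, whose format is not specified.

```python
from enum import Enum


class AltitudeZone(Enum):
    UPPER_OUT = "upper out-of-bounds"
    IN_PLAY = "in play"
    LOWER_OUT = "lower out-of-bounds"


def classify_altitude(z_ft: float, upper_ft: float = 10.0,
                      lower_ft: float = 1.0) -> AltitudeZone:
    """Map a craft's height (Z coordinate, feet) onto the zones of FIG. 11."""
    if z_ft > upper_ft:
        return AltitudeZone.UPPER_OUT
    if z_ft < lower_ft:
        return AltitudeZone.LOWER_OUT
    return AltitudeZone.IN_PLAY


def throttle_cap(zone: AltitudeZone, penalty_cap: float = 0.5) -> float:
    """Hypothetical penalty: fraction of full thrust the craft may use in this zone."""
    return 1.0 if zone is AltitudeZone.IN_PLAY else penalty_cap
```

Keeping the thresholds as parameters matches the embodiments in which the boundaries sit above or below the 10-foot and 1-foot defaults.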

In operation, referring to FIG. 12, a flowchart of a method 900 of integrating three-dimensional game play among multiple remote control aeronautical vehicles on a gaming application for a mobile device is depicted, according to an embodiment.

At 902, a game space is prepared. In embodiments, two or more users can physically bring two or more remote control aeronautical vehicles to an area in which the vehicles can be flown as part of game play. Further, referring to FIG. 4, a gaming controller station to coordinate the positional data of the craft can be set up adjacent to the game space. In embodiments, one or more mobile devices implementing a gaming application are powered on, initiated, or otherwise prepared for use. Finally, at least two fiducial elements are deployed on the horizontal (X-Y plane) surface of the game space. Fiducial elements can include both active and passive elements and can have various placements or configurations as described in, for example, FIGS. 5 and 8-10.

At 904, the fiducial elements are mapped. Referring to mapping engine 404, a map of the fiducial elements in the game space is generated. In embodiments, both active and passive fiducial elements can be mapped according to their respective mapping processes. The generated map data can be transmitted to the mobile device and/or the gaming controller station for use with the gaming application.
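
One simple way to build a relative map is to chain pairwise observations outward from an anchor fiducial. The sketch assumes hypothetical inputs: each observation gives the offset of one uniquely identified fiducial from another, already expressed in a shared floor orientation. It keeps the first path found to each fiducial; a fuller mapping engine would average redundant observations.

```python
from collections import deque


def build_relative_map(observations, anchor_id):
    """Place every reachable fiducial relative to anchor_id from pairwise offsets.

    observations: iterable of (id_a, id_b, (dx, dy)) meaning fiducial id_b was
    seen at offset (dx, dy) from fiducial id_a in the shared floor frame.
    """
    neighbours = {}
    for a, b, (dx, dy) in observations:
        neighbours.setdefault(a, []).append((b, dx, dy))
        neighbours.setdefault(b, []).append((a, -dx, -dy))  # reverse edge
    positions = {anchor_id: (0.0, 0.0)}
    queue = deque([anchor_id])
    while queue:  # breadth-first walk outward from the anchor
        current = queue.popleft()
        cx, cy = positions[current]
        for other, dx, dy in neighbours.get(current, []):
            if other not in positions:
                positions[other] = (cx + dx, cy + dy)
                queue.append(other)
    return positions
```

Fiducials that were never observed together with the connected set are simply absent from the result, which signals that more images are needed before play begins.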

At 906, two or more craft are launched into the game space. In an embodiment, the craft are launched at a specified launch area such as launch area 708 in FIG. 10.

At 908, the craft are located within the game space. Referring to locating engine 406, active fiducial elements, passive fiducial elements, and/or a downward-facing camera on the craft can be used to triangulate the craft position within the game space. This data is aggregated as position data and transmitted to one or more of the mobile device, the gaming controller station, or other system components.

At 910, the position data of the craft is integrated into the gaming application and displayed on the mobile device. The three-dimensional position data is filtered or otherwise converted into a two-dimensional representation for display. In embodiments, the three-dimensional position data can be further integrated into game play features, such as health, power boost, “hiding” locations, and others, depending on the game.
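
The display and game-feature steps can be sketched with a top-down projection and axis-aligned zones, such as the health and hiding zones recited in the claims. The zone geometry, the `z_max` rule (a virtual mountain that hides only craft flying below its peak), and the screen mapping are hypothetical choices for illustration.

```python
from dataclasses import dataclass


@dataclass
class GameZone:
    name: str
    x_min: float
    x_max: float
    y_min: float
    y_max: float
    z_max: float = float("inf")  # e.g. a virtual mountain hides craft only below its peak

    def contains(self, x: float, y: float, z: float) -> bool:
        return (self.x_min <= x <= self.x_max and self.y_min <= y <= self.y_max
                and z <= self.z_max)


def to_screen(x: float, y: float, space_w: float, space_d: float,
              screen_w: int, screen_h: int) -> tuple[int, int]:
    """Top-down projection: drop z and scale floor coordinates to pixels."""
    px = int(round(x / space_w * (screen_w - 1)))
    py = int(round((1.0 - y / space_d) * (screen_h - 1)))  # screen y grows downward
    return px, py


def zones_for(position, zones):
    """Names of every zone containing a craft at (x, y, z)."""
    x, y, z = position
    return [zone.name for zone in zones if zone.contains(x, y, z)]
```

Each time steps 908 and 910 repeat, the application can re-project every craft and re-evaluate zone membership to apply health or protection effects.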

Steps 908 and 910 can be iterated to update the position of the craft within the gaming application at any desired update rate.

Various embodiments of systems, devices, and methods have been described herein. These embodiments are given only by way of example and are not intended to limit the scope of the claimed inventions. It should be appreciated, moreover, that the various features of the embodiments that have been described may be combined in various ways to produce numerous additional embodiments. Moreover, while various materials, dimensions, shapes, configurations and locations, etc. have been described for use with disclosed embodiments, others besides those disclosed may be utilized without exceeding the scope of the claimed inventions.

Persons of ordinary skill in the relevant arts will recognize that the subject matter hereof may comprise fewer features than illustrated in any individual embodiment described above. The embodiments described herein are not meant to be an exhaustive presentation of the ways in which the various features of the subject matter hereof may be combined. Accordingly, the embodiments are not mutually exclusive combinations of features; rather, the various embodiments can comprise a combination of different individual features selected from different individual embodiments, as understood by persons of ordinary skill in the art. Moreover, elements described with respect to one embodiment can be implemented in other embodiments even when not described in such embodiments unless otherwise noted.

Although a dependent claim may refer in the claims to a specific combination with one or more other claims, other embodiments can also include a combination of the dependent claim with the subject matter of each other dependent claim or a combination of one or more features with other dependent or independent claims. Such combinations are proposed herein unless it is stated that a specific combination is not intended.

Any incorporation by reference of documents above is limited such that no subject matter is incorporated that is contrary to the explicit disclosure herein. Any incorporation by reference of documents above is further limited such that no claims included in the documents are incorporated by reference herein. Any incorporation by reference of documents above is yet further limited such that any definitions provided in the documents are not incorporated by reference herein unless expressly included herein.

For purposes of interpreting the claims, it is expressly intended that the provisions of 35 U.S.C. § 112(f) are not to be invoked unless the specific terms “means for” or “step for” are recited in a claim.

Claims

- A gaming system integrating three-dimensional real-world game play and virtual game play, the system comprising: at least two remotely-controlled aircraft and at least two controllers, each aircraft uniquely paired with and controlled by a corresponding one of the at least two controllers via a radio-frequency (RF) communication protocol implemented between the corresponding controller and the remote-control craft that transmits at least craft control communications between a particular pair of a controller and a remotely-controlled aircraft based on a pair identification information contained in the RF communication protocol, the RF communication protocol further including communication of sensor and positional information; a game space recognition system including: at least two fiducial elements placed relative to one another to define a three-dimensional game space, and a locating engine configured to utilize the at least two fiducial elements and sensor and positional information received via the RF communication protocol to determine a relative position and orientation of each of the at least two remotely-controlled aircraft within a locally-defined coordinate frame of reference associated with the game space; at least one mobile computing device configured to execute a gaming application wherein the relative position of each of the at least two remotely-controlled aircraft is utilized by the gaming application to determine game play associated with an integrated real and virtual game played among at least two remotely-controlled aircraft within the three-dimensional game space in accordance with a set of rules and parameters for the integrated real and virtual game, wherein the integrated real and virtual game defines at least one zone selected from the group consisting of: a health zone that, when a first one of the at least two remotely-controlled aircraft is located within the health zone, provides a relative advantage over a second one of the at least two remotely-controlled aircraft that is not located within the health zone, and a hiding zone of virtual mountains that, when a first one of the at least two remotely-controlled aircraft is located within the hiding zone, protects the first aircraft from shots fired by a virtual cannon.

- The gaming system of claim 1, wherein the integrated real and virtual game defines the hiding zone of virtual mountains.

- The gaming system of claim 1, further comprising a plurality of physical markers, wherein the integrated real and virtual game defines a virtual element corresponding to each of the plurality of physical markers.

- The gaming system of claim 1, further comprising: a beacon that emits pulses that are received or returned by a selected one of the at least two remotely-controlled aircraft, and a measurement based on the emitted pulses is used by the locating engine to locate a position of the selected aircraft.

- The gaming system of claim 1, wherein the at least one mobile computing device executing the gaming application is a smartphone device physically separate from the at least two remotely-controlled aircraft.

- A gaming system integrating three-dimensional real-world game play and virtual game play, the system comprising: at least two remotely-controlled aircraft and at least two controllers, each aircraft uniquely paired with and controlled by a corresponding one of the at least two controllers via a radio-frequency (RF) communication protocol implemented between the corresponding controller and the remote-control craft that transmits at least craft control communications between a particular pair of a controller and a remotely-controlled aircraft based on a pair identification information contained in the RF communication protocol, the RF communication protocol further including communication of sensor and positional information; a game space recognition system including: at least two fiducial elements placed relative to one another to define a three-dimensional game space, and a locating engine configured to utilize the at least two fiducial elements and sensor and positional information received via the RF communication protocol to determine a relative position and orientation of each of the at least two remotely-controlled aircraft within a locally-defined coordinate frame of reference associated with the game space; at least one mobile computing device configured to execute a gaming application wherein the relative position of each of the at least two remotely-controlled aircraft is utilized by the gaming application to determine game play associated with an integrated real and virtual game played among at least two remotely-controlled aircraft within the three-dimensional game space in accordance with a set of rules and parameters for the integrated real and virtual game; and a mapping engine, wherein the game space recognition system further includes at least one image-acquisition device selected from the group consisting of: a camera on at least one of the at least two remotely-controlled aircraft, an ultrasonic range finder altimeter and a camera on at least one of the at least two remotely-controlled aircraft, and a 180-degree field-of-view camera on each of the at least two fiducial elements, wherein the image-acquisition device is operable to obtain at least one image of the at least two fiducial elements, and wherein the mapping engine is configured to determine unique identifiers of the at least two fiducial elements in order to prepare a relative map of positions of the at least two fiducial elements.

- The gaming system of claim 6, wherein the game space recognition system includes the camera on at least one of the at least two remotely-controlled aircraft.

- The gaming system of claim 6, further comprising: wherein the game space recognition system includes the ultrasonic range finder altimeter and a camera on at least one of the at least two remotely-controlled aircraft, and wherein the mapping engine is further configured to determine an estimated craft height, and angles between the fiducial elements.

- A gaming system integrating three-dimensional real-world game play and virtual game play, the system comprising: at least two remotely-controlled aircraft and at least two controllers, each aircraft uniquely paired with and controlled by a corresponding one of the at least two controllers via a radio-frequency (RF) communication protocol implemented between the corresponding controller and the remote-control craft that transmits at least craft control communications between a particular pair of a controller and a remotely-controlled aircraft based on a pair identification information contained in the RF communication protocol, the RF communication protocol further including communication of sensor and positional information; a game space recognition system including: at least two fiducial elements placed relative to one another to define a three-dimensional game space, and a locating engine configured to utilize the at least two fiducial elements and sensor and positional information received via the RF communication protocol to determine a relative position and orientation of each of the at least two remotely-controlled aircraft within a locally-defined coordinate frame of reference associated with the game space; and at least one mobile computing device configured to execute a gaming application wherein the relative position of each of the at least two remotely-controlled aircraft is utilized by the gaming application to determine game play associated with an integrated real and virtual game played among at least two remotely-controlled aircraft within the three-dimensional game space in accordance with a set of rules and parameters for the integrated real and virtual game, wherein the at least two fiducial elements include a plurality of encoded strings of passive fiducial elements placed to crisscross between corners of the game space.

- A method of integrating three-dimensional real-world game play and virtual game play, the method comprising: providing at least one mobile computing device, at least two remotely-controlled aircraft and at least two controllers; uniquely pairing each aircraft with a corresponding one of the at least two controllers via a radio-frequency (RF) communication protocol implemented between the corresponding controller and the remote-control craft; transmitting at least craft-control communications between a particular pair that includes one of the at least two controllers and a corresponding one of the at least two remotely-controlled aircraft based on pair-identification information contained in the RF communication protocol, the RF communication protocol further including communication of sensor and positional information; defining a three-dimensional game space using at least two fiducial elements placed relative to one another to define the game space; associating a locally-defined coordinate frame of reference with the three-dimensional game space; utilizing the at least two fiducial elements and sensor and positional information received via the RF communication protocol to determine a relative position and orientation of each of the at least two remotely-controlled aircraft within the locally-defined coordinate frame of reference associated with the three-dimensional game space; executing a gaming application on the at least one mobile computing device, wherein the relative positions and orientations of each of the at least two remotely-controlled aircraft are utilized by the gaming application to determine game play associated with an integrated real and virtual game played among at least two remotely-controlled aircraft within the three-dimensional game space in accordance with a set of rules and parameters for the integrated real and virtual game play; and defining at least one zone selected from the group consisting of: a hiding zone of virtual mountains that, when a first one of the at least two remotely-controlled aircraft is located within the hiding zone, protects the first aircraft from shots fired by a virtual cannon, and a health zone that, when a first one of the at least two remotely-controlled aircraft is located within the health zone, provides a relative advantage over a second one of the at least two remotely-controlled aircraft that is not located within the health zone.

- The method of claim 10, further comprising: defining a virtual element corresponding to each of a plurality of physical markers placed on the game space.

- The method of claim 10, further comprising: executing the gaming application on a smartphone device physically separate from the at least two remotely-controlled aircraft.

- A method of integrating three-dimensional real-world game play and virtual game play, the method comprising: providing at least one mobile computing device, at least two remotely-controlled aircraft and at least two controllers; uniquely pairing each aircraft with a corresponding one of the at least two controllers via a radio-frequency (RF) communication protocol implemented between the corresponding controller and the remote-control craft; transmitting at least craft-control communications between a particular pair that includes one of the at least two controllers and a corresponding one of the at least two remotely-controlled aircraft based on pair-identification information contained in the RF communication protocol, the RF communication protocol further including communication of sensor and positional information; defining a three-dimensional game space using at least two fiducial elements placed relative to one another to define the game space; associating a locally-defined coordinate frame of reference with the three-dimensional game space; utilizing the at least two fiducial elements and sensor and positional information received via the RF communication protocol to determine a relative position and orientation of each of the at least two remotely-controlled aircraft within the locally-defined coordinate frame of reference associated with the three-dimensional game space; executing a gaming application on the at least one mobile computing device, wherein the relative positions and orientations of each of the at least two remotely-controlled aircraft are utilized by the gaming application to determine game play associated with an integrated real and virtual game played among at least two remotely-controlled aircraft within the three-dimensional game space in accordance with a set of rules and parameters for the integrated real and virtual game play; obtaining images selected from the group consisting of: images from each of the at least two remotely-controlled aircraft, and images from each of the at least two fiducial elements; and using the images to create a map of the game space.

- The method of claim 13, further comprising: obtaining the images from each of the at least two fiducial elements.

- The method of claim 13, further comprising: obtaining the images from each of the at least two remotely-controlled aircraft; and obtaining estimated height data from each of the at least two remotely-controlled aircraft.

- The method of claim 13, further comprising: obtaining the images from each of the at least two remotely-controlled aircraft; and using the images to locate three-dimensional location and orientation information for each of the at least two remotely-controlled aircraft.

- The method of claim 13, further comprising: executing the gaming application on a smartphone device physically separate from the at least two remotely-controlled aircraft.

- A method of integrating three-dimensional real-world game play and virtual game play, the method comprising: providing at least one mobile computing device, at least two remotely-controlled aircraft and at least two controllers; uniquely pairing each aircraft with a corresponding one of the at least two controllers via a radio-frequency (RF) communication protocol implemented between the corresponding controller and the remote-control craft; transmitting at least craft-control communications between a particular pair that includes one of the at least two controllers and a corresponding one of the at least two remotely-controlled aircraft based on pair-identification information contained in the RF communication protocol, the RF communication protocol further including communication of sensor and positional information; defining a three-dimensional game space using at least two fiducial elements placed relative to one another to define the game space; associating a locally-defined coordinate frame of reference with the three-dimensional game space; utilizing the at least two fiducial elements and sensor and positional information received via the RF communication protocol to determine a relative position and orientation of each of the at least two remotely-controlled aircraft within the locally-defined coordinate frame of reference associated with the three-dimensional game space; executing a gaming application on the at least one mobile computing device, wherein the relative positions and orientations of each of the at least two remotely-controlled aircraft are utilized by the gaming application to determine game play associated with an integrated real and virtual game played among at least two remotely-controlled aircraft within the three-dimensional game space in accordance with a set of rules and parameters for the integrated real and virtual game play; and obtaining magnetic and gravitational vectors from each respective one of the at least two remotely-controlled aircraft to provide an absolute reference frame to estimate a three-dimensional orientation of the respective aircraft.
