U.S. Pat. No. 10,242,241

ADVANCED MOBILE COMMUNICATION DEVICE GAMEPLAY SYSTEM

Assignee: Open Invention Network LLC

Issue Date: November 9, 2011


Abstract

A mobile communications device including a transceiver, a motion input or other optical device, and a system for supporting single-player or multiplayer gameplay is described. A method of playing a game is provided, including transmitting and receiving information between one or more devices through a mobile communications system using one or more tethered and/or wireless links, and playing a game on the mobile communications device. Further, one or more players may create or receive one or more motions, thereby communicating through the device; the device may receive and/or transmit some or all of the game data, may also receive and/or transmit one or more motion results, and may send and/or receive one or more motion instructions to and/or from one or more other devices.

Description

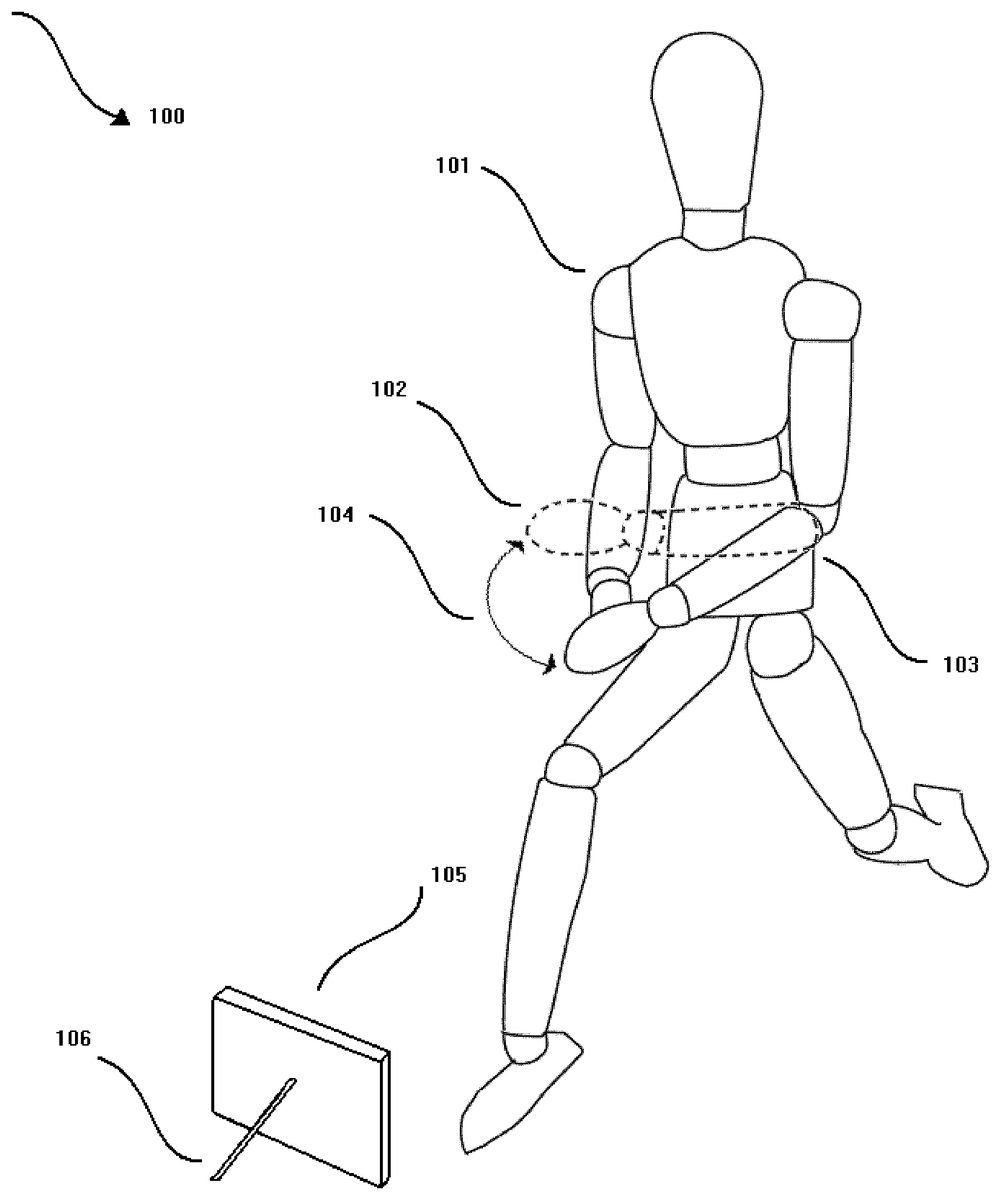


DETAILED DESCRIPTION OF THE INVENTION

Referring to FIG. 1, a player 101 (described in a system 100, shown in more detail in a system 800) is in front of a mobile communication device 105, which may be supported by a stand 106 or other object. While in front of the mobile communication device 105, the player 101 may change a facial feature or move a body part, such as an arm, from the first position of the arm 103 to the second position of the arm 102. The arc of change 104 is measured from the original first position of the arm 103 to the second position of the arm 102. The arc 104, detected by the mobile communication device 105, can be interpreted as a motion instruction.
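One way to quantify the arc of change is as the angle swept by the arm's endpoint about a pivot such as the shoulder. The Python sketch below assumes that reading; the pivot, the function name `arc_of_change`, and the 20-degree threshold `MIN_ARC` are illustrative assumptions, not taken from the patent.

```python
import math

MIN_ARC = math.radians(20)  # assumed threshold for a deliberate gesture


def arc_of_change(pivot, first, second):
    """Return (signed_angle_radians, arc_length) swept about a pivot.

    pivot, first and second are (x, y) points; first and second are the
    arm endpoint (e.g. the hand) in its first and second positions.
    """
    a1 = math.atan2(first[1] - pivot[1], first[0] - pivot[0])
    a2 = math.atan2(second[1] - pivot[1], second[0] - pivot[0])
    # Wrap the difference into [-pi, pi) so the shorter arc is reported.
    delta = (a2 - a1 + math.pi) % (2 * math.pi) - math.pi
    radius = math.dist(pivot, first)
    return delta, abs(delta) * radius


# Arm swings from pointing right to pointing up around a shoulder at (0, 0).
angle, length = arc_of_change((0.0, 0.0), (1.0, 0.0), (0.0, 1.0))
is_instruction = abs(angle) >= MIN_ARC
```

Treating only arcs above a threshold as instructions is one simple way to ignore small, unintended movements.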

Now referring to FIG. 2, a player 201 (described in a system 200, shown in more detail in a system 800) is holding a mobile communication device 204. While in front of the mobile communication device 204, the player 201 may change a facial feature, issue a voice command, or move a body part, such as an arm, from the first position of the arm 203 to the second position of the arm 202. The arc of change 205 is measured from the original first position of the arm 203 to the second position of the arm 202. The arc 205, detected by the mobile communication device 204, can be interpreted as a motion instruction.

Now referring to FIG. 3, a player 301 (described in a system 300, shown in more detail in a system 800) is holding a mobile communication device 302. While in front of the mobile communication device 302, the player 301 may change a facial feature, issue a voice command, or move a body part (described in a system 300 of the instant invention, not fully shown). The mobile communication device 302 is communicatively coupled to a device 304 (e.g., a console unit, personal computer, game unit, storage device, server, etc.) via a tethered or wireless communication protocol 303. The device 304 may be connected to zero or more other devices, such as a server (not fully shown), through a tethered or wireless communication line 305.

In the connected state, in which the mobile communication device 302 is communicatively coupled to the device 304, the mobile communication device 302 may transmit game information to the device 304 via the communication protocol 303, the information then being stored on the device 304.

In addition, in the connected state, the mobile communication device 302 may receive game information from the device 304 via the communication protocol 303, the information then being stored on the mobile communication device 302.

Finally, in the connected state, the mobile communication device 302 may both transmit and receive game information to and from the device 304 via the communication protocol 303, the information being delivered to and stored on the mobile communication device 302, the device 304, or both.

Now referring to FIG. 4, a player 401 (described in a system 400, shown in more detail in a system 800) is holding a mobile communication device 402. While in front of the mobile communication device 402, the player 401 may change a facial feature, issue a voice command, or move a body part (described in a system 400 of the instant invention, not fully shown). The mobile communication device 402 is communicatively coupled to a device 404 (e.g., a console unit, personal computer, game unit, storage device, server, etc.) via a tethered or wireless communication protocol 403. The device 404 may be connected to zero or more other devices, such as a server (not fully shown), through a tethered or wireless communication line 405.

In the connected state, in which the mobile communication device 402 is communicatively coupled to the device 404, the mobile communication device 402 may transmit one or more motion instructions to the device 404 via the communication protocol 403, the instructions then being stored on the device 404.

In addition, in the connected state, the mobile communication device 402 may receive one or more motion instructions from the device 404 via the communication protocol 403, the instructions then being stored on the mobile communication device 402.

Finally, in the connected state, the mobile communication device 402 may both transmit and receive one or more motion instructions to and from the device 404 via the communication protocol 403, the instructions being delivered to and stored on the mobile communication device 402, the device 404, or both.
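The transmit, receive, and store exchange of FIG. 4 needs some wire format for a motion instruction. The sketch below assumes a JSON encoding and a hypothetical `MotionInstruction` record; the patent does not specify fields or serialization, so every name here is illustrative.

```python
import json
from dataclasses import dataclass, asdict


@dataclass
class MotionInstruction:
    # Hypothetical fields; the source does not define a wire format.
    name: str
    frames: list  # list of frames, each a list of [x, y] points
    source_device: str


def encode(instruction: MotionInstruction) -> bytes:
    """Serialize an instruction for a tethered or wireless link."""
    return json.dumps(asdict(instruction)).encode("utf-8")


def decode(payload: bytes) -> MotionInstruction:
    """Rebuild the instruction on the receiving device."""
    return MotionInstruction(**json.loads(payload.decode("utf-8")))


class DeviceStore:
    """Local instruction store on either side of the link (e.g. 402 or 404)."""

    def __init__(self):
        self.instructions = {}

    def receive(self, payload: bytes) -> MotionInstruction:
        instruction = decode(payload)
        self.instructions[instruction.name] = instruction  # store on arrival
        return instruction


store_404 = DeviceStore()  # receiving side, e.g. device 404
sent = MotionInstruction("spiral_up_down", [[[0, 0], [1, 1]]], "402")
store_404.receive(encode(sent))
```

Because both sides share the same encoder and decoder, the same code serves the transmit, receive, and bidirectional cases.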

Now referring to FIG. 5, a player (described in a system 500, shown in more detail in a system 800) may use their voice, a finger, or another body part 503 with a mobile communication device 501. The mobile communication device 501 is exposed to an optical device, microphone, and/or other device or combination of devices 502, either built into the mobile communication device 501 or simply attached to it, which may be located anywhere on or embedded in the mobile communication device 501. The player may apply one or more motions 505 to the mobile communication device 501 through a change in the position of a first object, in this case the player's finger 503, relative to a second position 504 of the player's finger 503, without touching the mobile communication device 501, thereby generating one or more motion instructions (not fully shown).

For example, if the player wants their avatar to attack another avatar using a kick-jump-triple-spin move, the player may move their finger in a spiral, then straight up, and then down to initiate the move, whereas in a traditional system the player would be required to successfully press a series of one or more buttons to produce the same move. The game software may interpret the one or more motion instructions and the button commands in the same manner, but the motion instructions, which may be fully customizable by the player, could give the player additional freedom and flexibility.

For example, using the conventional method of pressing one or more buttons on the player's controller, the kick-jump-triple-spin move could require the player to swirl the left stick and then press the red and blue buttons on the controller one after the other, producing a sequence of signals from the player, through the controller, to the game software, which interprets the sequence as a command to produce the move.

With the method of the instant invention, the player could simply swirl their finger three times and then point up. The motion could be received by the mobile communication device and converted to one or more motion instructions, and the avatar could then move accordingly without the player pressing any buttons. In this manner, the avatar performs the same move while the player only moves their finger in the air, without touching the controller.
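A minimal sketch of how player-customizable gestures and conventional button combinations might resolve to the same in-game move. The token names (`swirl`, `point_up`, `red`, `blue`) and the `GestureMap` class are hypothetical, and recognizing the tokens from camera input is out of scope.

```python
# Hypothetical vocabularies; the source does not name gesture tokens.
BUTTON_COMBOS = {
    ("swirl_left_stick", "red", "blue"): "kick_jump_triple_spin",
}
DEFAULT_GESTURES = {
    ("swirl", "swirl", "swirl", "point_up"): "kick_jump_triple_spin",
}


class GestureMap:
    """Per-player gesture bindings, starting from the defaults."""

    def __init__(self):
        self.bindings = dict(DEFAULT_GESTURES)

    def customize(self, gesture, move):
        """Let the player bind any gesture sequence to a move."""
        self.bindings[tuple(gesture)] = move

    def resolve(self, gesture):
        return self.bindings.get(tuple(gesture))


def resolve_buttons(buttons):
    """Conventional path: a button sequence resolves to the same move name."""
    return BUTTON_COMBOS.get(tuple(buttons))
```

Because both paths yield the same move name, the rest of the game software can treat them identically, while the gesture table stays editable by the player.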

In addition, the mobile communication device could contain a gyroscope or other device, for example, which could be used to measure one or more bodily motions of the player. For example, lunging right, left, up, down, forwards, or backwards, or shaking the device, could produce one or more motion instructions directed at one or more avatars, either locally on the mobile communication device or on a remotely connected device.

For instance, while the player's avatar is in the cockpit of a fighter jet, lunging back could be interpreted by the game software and/or the mobile communication device as the avatar, as a pilot, being propelled through the air by the jet's afterburners with increased g-force, so that the mobile communication device interprets this motion differently from the jet's usual flight speed.

Likewise, in a race car, the player could lunge back and the mobile communication device could interpret the motion command as the avatar being pressed into the driver's seat as the turbo boost on the race car engages. The mobile communication device could vibrate as the car speeds on.

In order to process this lunging motion, for example, the instant invention would receive motions from the player through the gyroscope or other device in the mobile communication device, which would send signals to the game software. These signals would then be compared with signals stored on the mobile communication device that are related to known results, which the mobile communication device and/or game software use to effect the movements of the avatar. The game software would convert the received signals to motion instructions and apply them to the one or more avatars, and the one or more avatars would move as directed by the one or more motion instructions.
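The compare-against-known-signals step could look like the following sketch. It assumes three-axis motion samples, classifies a lunge by its dominant averaged axis, and then looks up a context-dependent result, so that the same backward lunge yields an afterburner in the jet and a turbo boost in the car. The axis convention, the noise threshold, and all names are assumptions.

```python
from statistics import fmean

# Assumed convention: axis 0 = x (left/right), 1 = y (up/down),
# 2 = z (forward/back); the key is (axis, sign of the mean reading).
DIRECTIONS = {(0, 1): "right", (0, -1): "left", (1, 1): "up",
              (1, -1): "down", (2, 1): "forward", (2, -1): "back"}

# Hypothetical context-dependent results for the same lunge.
RESULTS = {("fighter_jet", "back"): "afterburner",
           ("race_car", "back"): "turbo_boost"}


def classify_lunge(samples, threshold=2.0):
    """Return a lunge direction from (x, y, z) sensor samples, or None.

    Averages each axis over the window and picks the dominant axis; the
    threshold (in sensor units) is an assumed noise floor.
    """
    means = [fmean(s[i] for s in samples) for i in range(3)]
    axis = max(range(3), key=lambda i: abs(means[i]))
    if abs(means[axis]) < threshold:
        return None
    return DIRECTIONS[(axis, 1 if means[axis] > 0 else -1)]


def motion_result(context, samples):
    """Map a lunge to the stored result for the current game context."""
    return RESULTS.get((context, classify_lunge(samples)))
```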

A further important aspect of the instant invention is that the one or more motions of the finger or other body parts do not necessarily correspond to an equivalent motion of the player's avatar on the screen. For example, a triple somersault dive from a diving board may simply be a motion of three clockwise spins of the player's finger followed by a point downward. This simple motion produced by the player could result in the avatar running along the diving board, jumping into the air, spinning three times, and then diving into the pool. The motion produced by the player is not equivalent to the motions the avatar performs as a result of the command given by the player.

Also, the one or more motions made by the player may need to be sufficiently different to be distinguishable by the game software, so that one motion instruction can be distinguished from another. For example, using the same diving motion, the player could make the diving avatar jump higher from the diving board by pointing up and then down, in contrast to the simple motion described previously.
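To keep motions distinguishable, a game could refuse to register a new motion whose signature lies too close to an existing one. This sketch assumes signatures already resampled to equal-length lists of (x, y) points and uses mean point distance; both the metric and the `min_separation` value are assumptions.

```python
import math


def signature_distance(a, b):
    """Mean Euclidean distance between corresponding points of two
    equal-length motion signatures (lists of (x, y) points)."""
    if len(a) != len(b):
        raise ValueError("signatures must be resampled to the same length")
    return sum(math.dist(p, q) for p, q in zip(a, b)) / len(a)


def can_register(new_sig, existing, min_separation=0.5):
    """Reject a new motion that is too close to any registered one.

    existing maps motion names to signatures; min_separation is an
    assumed tuning value, not from the source.
    """
    return all(signature_distance(new_sig, sig) >= min_separation
               for sig in existing.values())
```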

At the same time, two motion instructions may be identical when they apply in different contexts within the game, or when the game software is in a different mode, for example a training, tutorial, option, or setup mode as opposed to regular gameplay; a motion instruction may also be treated differently in a multiplayer mode. In addition, the same motion instruction may be useful in more than one game and may be treated in the same manner or a different manner depending on how the game is programmed and how the player has customized the game or the motion instruction.

For example, a player may raise their hand in a stopping motion, and the game software may interpret this as a command to pause or stop the game. Once the game is paused, it could be in a setup or option mode. The player could then rotate their right finger counterclockwise once to signify that they want the game software to roll back to the prior checkpoint in the game. During gameplay, however, the same motion could mean something completely different: the counterclockwise wind of the finger could be interpreted as commanding the diving avatar to flip counterclockwise in its dive. Because the game software knows that the game system is in gameplay mode, it handles the motion command differently than it would while the system is in another mode, such as option mode.
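The mode-dependent handling described above amounts to keying the lookup on both the current mode and the motion. The bindings and motion names in this sketch are hypothetical.

```python
from enum import Enum


class Mode(Enum):
    GAMEPLAY = "gameplay"
    OPTIONS = "options"


# Hypothetical bindings: the same motion maps to different commands per mode.
BINDINGS = {
    (Mode.GAMEPLAY, "raise_hand_stop"): "pause",
    (Mode.GAMEPLAY, "finger_ccw_once"): "dive_flip_ccw",
    (Mode.OPTIONS, "finger_ccw_once"): "rollback_to_checkpoint",
}


class GameState:
    def __init__(self):
        self.mode = Mode.GAMEPLAY

    def handle(self, motion):
        """Interpret a motion according to the current mode."""
        command = BINDINGS.get((self.mode, motion))
        if command == "pause":
            self.mode = Mode.OPTIONS  # a paused game enters option mode
        return command
```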

An example of this could be demonstrated by the avatar performing a kick-jump-triple-spin move in one game when the player moves their finger in a spiral, then straight up, and then down, but in another game when the player only has to move the finger up and down; moreover, the motion that produced the kick-jump-triple-spin move in the first game could mean something completely different in the second game. Once the game software receives the one or more motion instructions, it can use them to perform a lookup in a database, for example, comparing each motion instruction with the motion instructions available in the database. The database can hold a setting that associates each motion instruction with the one or more games in which it is used. If the game the player is playing and the motion instruction find a match, for example, the motion result associated with that entry, which is the actual action to be applied to the one or more avatars, is retrieved from the database and applied to the one or more avatars, and the resulting move or other activity appears on the screen or produces other anticipated results.
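The database lookup could be sketched with Python's built-in `sqlite3` module, using a hypothetical `motion_results` table keyed on game and motion instruction. The table layout and the `block` result are illustrative assumptions; the patent does not specify a schema.

```python
import sqlite3


def build_db():
    """In-memory stand-in for the motion database (hypothetical schema)."""
    db = sqlite3.connect(":memory:")
    db.execute("CREATE TABLE motion_results ("
               " game TEXT, motion TEXT, result TEXT,"
               " PRIMARY KEY (game, motion))")
    db.executemany("INSERT INTO motion_results VALUES (?, ?, ?)", [
        ("game_one", "spiral_up_down", "kick_jump_triple_spin"),
        ("game_two", "up_down", "kick_jump_triple_spin"),
        ("game_two", "spiral_up_down", "block"),  # same motion, new meaning
    ])
    return db


def lookup_result(db, game, motion):
    """Return the motion result for this game and motion, or None."""
    row = db.execute("SELECT result FROM motion_results"
                     " WHERE game = ? AND motion = ?",
                     (game, motion)).fetchone()
    return row[0] if row else None
```

Keying on the (game, motion) pair is what lets one motion carry different meanings in different games.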

Now referring to FIG. 6, a first player 601 (described in a system 600, shown in more detail in a system 800) may be holding, or be in front of, a mobile communication device 602. While in front of the mobile communication device 602, the player 601 may change a facial feature, issue a voice command, or move a body part (described in a system 600 of the instant invention, not fully shown). The mobile communication device 602 is communicatively coupled to a device 604 (e.g., a console unit, personal computer, game unit, storage device, server, etc.) via a tethered or wireless communication protocol 603. One or more second users 605 may be holding, sitting, or standing in front of the mobile communication device 604, playing or observing the game being played.

In the connected state, in which the mobile communication device 602 is communicatively coupled to the device 604, the mobile communication device 602 may transmit one or more motion instructions to the device 604 via the communication protocol 603, the instructions then being stored on the device 604.

In addition, in the connected state, the mobile communication device 602 may receive one or more motion instructions from the device 604 via the communication protocol 603, the instructions then being stored on the mobile communication device 602.

Finally, in the connected state, the mobile communication device 602 may both transmit and receive one or more motion instructions to and from the device 604 via the communication protocol 603, the instructions being delivered to and stored on the mobile communication device 602, the device 604, or both.

The first player 601 may transmit the one or more motion instructions from the mobile communication device 602 to be received by one or more mobile communication devices 604 and viewed by the one or more second players 605. The one or more second players 605 have the same ability, using their one or more mobile communication devices 604 to transmit one or more motion instructions to the receiving mobile communication device 602, with the resulting motion viewed by the first player 601.

Now referring to FIG. 7, a first player 701 (described in a system 700, shown in more detail in a system 800) may be holding, or standing or sitting in front of, a mobile communication device 702. While in front of the mobile communication device 702, the player 701 may change a facial feature, issue a voice command, or move a body part (described in a system 700 of the instant invention, not fully shown). The mobile communication device 702 is communicatively coupled to a device 704 (e.g., a console unit, personal computer, game unit, storage device, server, etc.) via a tethered or wireless communication protocol 703. One or more second users 705 may be holding, sitting, or standing in front of the communication device 704, playing or observing the game being played.

In an example embodiment of the instant invention, the communication device 704 may have a connection 706 to yet another device and may be connected to one or more screens 708 through another wired or wireless connection 707.

In the connected state, in which the mobile communication device 702 is communicatively coupled to the device 704, the mobile communication device 702 may transmit one or more motion instructions to the device 704 via the communication protocol 703, the instructions then being stored on the device 704.

In addition, in the connected state, the mobile communication device 702 may receive one or more motion instructions from the device 704 via the communication protocol 703, the instructions then being stored on the mobile communication device 702.

Finally, in the connected state, the mobile communication device 702 may both transmit and receive one or more motion instructions to and from the device 704 via the communication protocol 703, the instructions being delivered to and stored on the mobile communication device 702, the device 704, or both.

The first player 701 may transmit the one or more motion instructions from the mobile communication device 702 to be received by one or more communication devices 704 and viewed by the one or more second players 705. The one or more second players 705 have the same ability, using their one or more communication devices 704 to transmit one or more motion instructions to the receiving mobile communication device 702, with the resulting motion viewed by the first player 701.

Now referring to FIG. 8, a first player 801 (described in a system 800) may be holding, or standing or sitting in front of, a mobile communication device 802. While in front of the mobile communication device 802, the player 801 may change a facial feature, issue a voice command, or move a body part (described in a system 800 of the instant invention). The mobile communication device 802 is communicatively coupled to a device 806 (e.g., a console unit, personal computer, game unit, storage device, server, etc.) via a tethered or wireless communication protocol 803, which connects to a network 804 that is in turn connected to the device 806 by a tethered or wireless connection 805. One or more second users 808 may be holding, sitting, or standing in front of the communication device 809, playing or observing the game being played, or receiving one or more motion instructions stored on the one or more devices 806, with the communication device 809 communicatively coupled to the device 806 or to the device 802 through said network connections, which may include zero or more of the connections 803, 805, and/or 807.

In an example embodiment of the instant invention, the communication device 809 may have a connection 807 to yet another device 806 through a connection 805, which may provide one or more downloadable motion instructions from the one or more servers 806. In addition, the one or more motion instructions may be uploaded to the one or more servers 806 by the player 808 or 801 through their individual or collective devices 802 and 809, communicatively coupled through the connections 803 and/or 807 and 805 to the one or more servers 806.
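The upload and download of shared motion instructions could be modeled as a simple library held on the server 806. The `MotionServer` class and its methods below are hypothetical stand-ins; the network transport between the devices and the server is omitted.

```python
class MotionServer:
    """Stand-in for server 806 holding shared motion instructions.

    Hypothetical: the source says only that instructions may be uploaded
    to and downloaded from the one or more servers.
    """

    def __init__(self):
        self._library = {}

    def upload(self, player_id, name, instruction):
        """Store (or replace) a named instruction, remembering its owner."""
        self._library[name] = {"owner": player_id, "instruction": instruction}

    def available(self):
        """List the names of instructions that can be downloaded."""
        return sorted(self._library)

    def download(self, name):
        entry = self._library.get(name)
        return None if entry is None else entry["instruction"]
```

For example, player 801 could upload `spiral_up_down`, and device 809 could then fetch everything listed by `available()` into its local store.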

In the connected state, in which the mobile communication device 802 may be further communicatively coupled to a device 806 and/or 809, the mobile communication device 802 may transmit one or more motion instructions to the device 806 and/or 809 via a communication protocol through the connections 803, 805, and/or 807, the instructions optionally being stored on the device 806 and/or 809.

In addition, in the connected state, the mobile communication device 802 may receive one or more motion instructions from the device 806 and/or 809 via a communication protocol through the connections 803, 805, and/or 807, the instructions then being stored on the mobile communication device 802.

Finally, in the connected state, the mobile communication device 802 may both transmit and receive one or more motion instructions to and from the one or more servers 806 and/or the mobile communication device 809 via a communication protocol through the connections 803, 805, and/or 807, the instructions being delivered to and/or stored on the communication device 802, the server 806 and/or the mobile communication device 809, or both.

The first player 801 may transmit the one or more motion instructions from the mobile communication device 802 to be received by one or more communication devices 806 and/or 809 and viewed by the one or more second players 808. The one or more second players 808 have the same ability, using their one or more communication devices 809 to transmit one or more motion instructions to the receiving device 802 or 806, with the resulting motion viewed by the first player 801.

Now referring to FIG. 9, when a motion, for example running while dribbling a ball, is being received by a communication device, separate frames may be captured into the system while the motion is occurring. System 900 describes an example embodiment with five frames, 901, 902, 903, 904, and 905. Each of the described frames is collected for a motion instruction. The frames may be captured at various time intervals (seconds, milliseconds, microseconds, etc.). Some identical frames may be captured repeatedly as motions are repeated, and some motions may be so slow that multiple frames of the same pose are captured; such frames can be dropped and/or later interpolated by the game software, which can derive differences in relative motion points along particular axes (further described in FIG. 11, FIG. 12, and FIG. 13 by partial references to the systems 1100, 1200, and 1300 of the instant invention, respectively).
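Dropping repeated frames and later filling gaps might be sketched as follows. The sketch assumes each frame is a timestamp paired with a list of (x, y) points and uses an assumed tolerance to decide that a frame repeats the previous one; plain linear interpolation is a swapped-in choice that the patent does not prescribe.

```python
import math


def drop_repeats(frames, tol=1e-3):
    """Drop frames whose points barely differ from the last kept frame.

    frames: list of (t, points), points a list of (x, y); tol is assumed.
    """
    kept = [frames[0]]
    for t, pts in frames[1:]:
        prev = kept[-1][1]
        if max(math.dist(p, q) for p, q in zip(pts, prev)) > tol:
            kept.append((t, pts))
    return kept


def interpolate(frame_a, frame_b, t):
    """Linearly interpolate every point between two kept frames at time t."""
    (ta, pa), (tb, pb) = frame_a, frame_b
    w = (t - ta) / (tb - ta)
    return [(xa + w * (xb - xa), ya + w * (yb - ya))
            for (xa, ya), (xb, yb) in zip(pa, pb)]
```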

In addition, the number of points could range from one to thousands. Capturing a higher number of points results in a more detailed translation of the subject. For example, the twelve points captured in 901 cannot describe changes in facial features or hand details. Multiple mathematical references and coordinate groups may be needed to describe more intricate motions, thereby producing more intricate motion vectors. For example, one mathematical model may be used to generate the motion points of the hand, another to generate the motion points of the body, and still another for the face (further described in FIG. 13 by a partial reference to a system 1300 of the instant invention).

Furthermore, each of these groups of motion points could exist in its own coordinate system, with levels of precision unrelated to one another (further described in FIG. 13 by a partial reference to a system 1300 of the instant invention).

What the image in 901 does describe in this example is a body with arms and legs raised and/or moving in one or more directions. In 902, the arms are in the same place, but the legs have moved. Based on the distance (points A and B) that the legs have moved relative to each other, it can be discerned that the person is running. In 903, the arms and legs have moved; the arm has been lowered relative to the frame shown in 902. In 904, the person has lowered their arm and is still running. Finally, in 905, the person is still running and is raising their arm. In the context of a basketball game, the system can infer that the player is dribbling the basketball and approaching a virtual basket. On the screen, the avatar can move the ball down the court at the same relative speed at which the player is moving in the real world. The sequence of the five described frames would constitute the described motion and be registered in the system as such. Once the player repeats this motion in a gameplay scenario within the given game context, the avatar may appear to be dribbling a ball down the court. Therefore, the relative distances between body parts, point A versus point B for example, may be used to describe the range of motion, which can be used to determine the intended one or more motion instructions and to apply them to the one or more associated movements or actions (further described in FIG. 13 by a partial reference to a system 1300 of the instant invention).
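A rough heuristic for the point A versus point B reasoning: running shows up as the A-B separation swinging widely and reversing direction over successive frames. The keypoint names, the thresholds, and the reversal count are assumptions for illustration only.

```python
import math


def leg_separation(frame):
    """Distance between hypothetical keypoints 'A' and 'B' (e.g. the feet)."""
    return math.dist(frame["A"], frame["B"])


def looks_like_running(frames, min_swing=0.3, min_reversals=2):
    """Heuristic: the A-B separation must swing widely and reverse direction.

    Thresholds are assumptions; the source says only that the relative
    distance between A and B indicates running.
    """
    seps = [leg_separation(f) for f in frames]
    if max(seps) - min(seps) < min_swing:
        return False
    deltas = [b - a for a, b in zip(seps, seps[1:]) if b != a]
    reversals = sum(1 for d1, d2 in zip(deltas, deltas[1:]) if d1 * d2 < 0)
    return reversals >= min_reversals
```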

Other items of interest include the direction in which the player is running within a confined area, relative to the unconfined space of a computer program. If a player, for example, must turn around in their room, the system must determine that they are only turning within the room and are still progressing in the same direction in the game. If, however, the player reaches a virtual boundary within the computer system and switches direction, the avatar on the screen can be inferred to change direction as well.

Referring now to FIG. 10, a system 1000 of the instant invention (not fully shown) appears as several points taken from the objects captured in system 900. Frames 1001, 1002, 1003, 1004, and 1005 represent the motion points captured from the described motion. Later, these points, or close approximations of them, may be captured during normal gameplay and compared with all of the groups of points previously captured in a previous mode until a match is found, so that the system may infer which motion should be attributed to the avatar at that moment.

If a match is not found, a configurable set of options may be performed. These can include matching the motion to a similar action within the context of the motion, defaulting the motion result to a preset motion result, prompting the player for the action they would like to take, or simply processing the motion as it is performed by the player.
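The match-or-fallback behavior of FIG. 10 might be sketched as a nearest-neighbor comparison with a tolerance, followed by one of the configurable fallback options. The frame layout, the distance metric, the tolerance, and the returned labels are all assumptions.

```python
import math
from enum import Enum


class Fallback(Enum):
    NEAREST = "nearest"  # match to the most similar known action
    DEFAULT = "default"  # use a preset motion result
    PROMPT = "prompt"    # ask the player what they intended
    RAW = "raw"          # process the motion as performed


def frame_distance(a, b):
    """Mean point distance across all frames of two equal-shaped captures."""
    pairs = [(p, q) for fa, fb in zip(a, b) for p, q in zip(fa, fb)]
    return sum(math.dist(p, q) for p, q in pairs) / len(pairs)


def match_motion(captured, known, tolerance=0.25,
                 fallback=Fallback.NEAREST, default="idle"):
    """Return (result, matched) for a captured motion.

    known maps a result name to its stored frames (a non-empty dict);
    tolerance, default, and the labels below are illustrative.
    """
    best_dist, best_name = min((frame_distance(captured, frames), name)
                               for name, frames in known.items())
    if best_dist <= tolerance:
        return best_name, True
    if fallback is Fallback.NEAREST:
        return best_name, False
    if fallback is Fallback.DEFAULT:
        return default, False
    if fallback is Fallback.PROMPT:
        return "prompt_player", False
    return "raw_motion", False
```

Returning a `matched` flag alongside the result lets the caller distinguish a confident match from a fallback.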

In addition to letting the player run and act as if they are dribbling a ball, the system can be programmed to have the avatar run while dribbling the ball without requiring the player to actually run. In this way, the speed of the avatar's run could be based on the speed of the dribble, for example.

In the same manner, a player without the ability to run, for example, could virtually run by directing their own custom avatar through other methods, such as moving just their hands or fingers. The motion of the hands or fingers could be related to the full-body movement of an avatar on the screen. This might be performed by the system learning the motions of the player's hands that the player relates to certain movements made by the avatar. In other words, the player's hand could jump up into the air: if the system has recorded a hand jumping up into the air and related it to the avatar jumping up into the air, the avatar would appear to jump up into the air on the screen based on the player's motions. The player's motions would be translated to motion frames, vector signatures, and edge points, and then compared with the edge points stored in the game console's database. In this case, for example, a match would be found because the edge points for the avatar jumping up into the air would approximately match the edge points produced when the player's hand moves up into the air. The match results in the avatar jumping up into the air on the screen, and this is what the system uses to play out the player's motions.

Referring now to FIG. 11, several collections of points of captured objects 1100 of the instant invention (not fully shown) appear as collections of points from the objects captured in system 900: frame 901 is shown as frame 1101, frame 902 is shown as frame 1102, and the overlay of frames 1101 and 1102 is shown as frame overlay 1103. The overlay frames 1103 show the changes in motion of the legs and arms between the captured images 1101 and 1102. Using the overlay frames 1103, for example, the game software can determine the changes, direction, and angle of motion of each forearm, arm, wrist, thigh, leg, etc. by measuring the changes in motion about several independent coordinate systems (shown further in FIG. 12 and FIG. 13).
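The overlay comparison can be approximated by computing each keypoint's displacement between two frames. For simplicity this sketch measures everything in one shared image coordinate system rather than in per-limb coordinate systems, and the keypoint names are hypothetical.

```python
import math


def overlay_changes(frame_a, frame_b):
    """Per-keypoint displacement between two frames, as in an overlay.

    Frames map hypothetical keypoint names to (x, y). For each keypoint
    present in both frames, returns (dx, dy, distance, direction_degrees),
    with direction measured counterclockwise from the +x axis.
    """
    changes = {}
    for name in frame_a.keys() & frame_b.keys():
        (x0, y0), (x1, y1) = frame_a[name], frame_b[name]
        dx, dy = x1 - x0, y1 - y0
        changes[name] = (dx, dy, math.hypot(dx, dy),
                         math.degrees(math.atan2(dy, dx)))
    return changes
```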

Referring now to FIG. 12, several collections of points of captured objects 1200 of the instant invention (not fully shown) resemble the overlay figure of the collections of points of captured objects 1100. In this example, the left arm of the figure 1201 is further shown in FIG. 13.

Referring now to FIG. 13, an overlay figure 1300 of the instant invention (not fully shown) resembles the overlay figures of the collections of points of captured objects 1100 and 1200, and has an independent coordinate system A and another independent coordinate system B, which are used to calculate the angle of rotation of the upper arm 1302 and the forearm 1306 of the first arm 1303 relative to the upper arm 1301 and the forearm 1304. Points A(x, y) and A′(x′, y′), each being a center point along the x-axis of coordinate system A, are found about the angle θ in coordinate system A. Points B(x″, y″) and B′(x‴, y‴), each being a center point along the x-axis of coordinate system B, are found about the angle θ′ in coordinate system B. The tangent of θ is taken as the difference of x and x′ divided by the difference of y and y′, and the tangent of θ′ as the difference of x″ and x‴ divided by the difference of y″ and y‴, that is, tan θ = (x − x′) / (y − y′) and tan θ′ = (x″ − x‴) / (y″ − y‴).
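The stated tangent relations can be evaluated directly. The sketch uses `math.atan2(x - x', y - y')`, whose tangent equals (x − x′)/(y − y′) as stated, while also handling the case y = y′ without dividing by zero. The function name and sample coordinates are invented for illustration.

```python
import math


def segment_angle(p, p_prime):
    """Angle θ with tan θ = (x - x') / (y - y'), as stated for FIG. 13.

    atan2 keeps the quadrant and avoids division by zero when y == y'.
    The result is in radians and, with this argument order, is measured
    from the y-axis rather than the x-axis.
    """
    (x, y), (xp, yp) = p, p_prime
    return math.atan2(x - xp, y - yp)


# Illustrative coordinates only; they are not taken from the figure.
theta = segment_angle((1.0, 1.0), (0.0, 0.0))        # system A: A, A'
theta_prime = segment_angle((2.0, 0.5), (1.0, 0.0))  # system B: B, B'
```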

Therefore, based on the example of the slight upward motion of the player's arm described in 1300, the angle θ constitutes the motion of the upper arm within the independent coordinate system A, while the angle θ′ constitutes the motion of the lower arm within the independent coordinate system B. The game software could use the angles θ and θ′ and the vectors A and B to form a motion instruction, relating these angles, within a range, to a numerical value that may be used to search for a match in the database located in the mobile communication device.

Therefore, the summation of the given motion angles θ and θ′ and the vectors A and B could be stored in the database of the mobile communication device in the form of a matrix that constitutes a motion instruction. In this manner, as motions are read into the game system, each received motion may be converted into a motion instruction query, which is searched against the known list of motion instructions. A match, if found, results in one or more equivalent responses by the game software to the resulting motion instruction.
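The query-and-match step can be sketched as follows, assuming each motion instruction is stored as a small matrix with one (angle in radians, extent in centimeters) row per independent coordinate system, and that "within a range" means a fixed per-field tolerance. The class `MotionInstruction`, the functions `matches` and `find_match`, the tolerance values and the sample data are hypothetical, not taken from the patent.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class MotionInstruction:
    """A stored motion instruction: one (angle_rad, extent_cm) row per coordinate system."""
    name: str
    matrix: tuple


def matches(query, known, angle_tol=0.15, extent_tol=5.0):
    """True if every row of the query lies within tolerance of the same row of `known`."""
    if len(query) != len(known.matrix):
        return False
    return all(
        abs(q_angle - k_angle) <= angle_tol and abs(q_extent - k_extent) <= extent_tol
        for (q_angle, q_extent), (k_angle, k_extent) in zip(query, known.matrix)
    )


def find_match(query, known_list, **tolerances):
    """Search the known list and return the first matching instruction, or None."""
    for known in known_list:
        if matches(query, known, **tolerances):
            return known
    return None


# Hypothetical database contents and a query built from a captured arm motion.
known_list = [MotionInstruction("dribble", ((0.60, 30.0), (0.40, 25.0)))]
query = ((0.62, 31.0), (0.45, 24.0))
result = find_match(query, known_list)
```

A linear scan keeps the sketch short; a larger motion database would more likely index instructions by quantized angle values before comparing.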

This same process could be used with the same or varying numbers of points, body parts, motions, etc., including the feet, head, facial features, eyes, torso, legs, etc., as well as the motions of other objects such as a ball, a skateboard, drawings on a whiteboard, and numerous other objects.

Along the same lines, a collection of a given number of points attributed to one or more motions could also be used in a close proximity mode, where only the hands are used to explicitly form the one or more motion instructions, each equivalent to a full-body motion instruction. The intent would be to make the gameplay more private while still maintaining the ability to control the mobile communication device without a control pad. In this manner, the game may be played while sitting with the device rather than standing in front of it and producing full-body motions.

Note also that the motion described in the aforementioned example involves only two-dimensional motion; however, the game software is capable of processing motions captured with three-dimensional capture equipment and can distinguish motions in the x, y and z directions along multiple independent axes.
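The text does not specify how three-dimensional motions are reduced to angles. One simple possibility, offered only as an assumption, is to apply the same two-dimensional tangent rule in each coordinate plane. `plane_angles` is a hypothetical helper; a real system might instead use full 3-D rotations.

```python
import math


def plane_angles(p, p_prime):
    """Per-plane rotation angles (radians) for one point moving from p to p_prime in 3-D.

    The 2-D tangent rule (difference along the first axis divided by the difference
    along the second axis) is applied separately in the xy, yz and xz planes.
    """
    dx, dy, dz = (b - a for a, b in zip(p, p_prime))
    return {
        "xy": math.atan2(dx, dy),
        "yz": math.atan2(dy, dz),
        "xz": math.atan2(dx, dz),
    }


# Hypothetical hand positions in centimeters from a 3-D capture.
angles = plane_angles((0.0, 0.0, 0.0), (1.0, 1.0, 0.0))
```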

Referring now to FIG. 14, a captured motion 1400 of the instant invention shows a hand moving upward about a new coordinate system Q 1403. In this example, the first hand 1402 moves in a counterclockwise direction about the axis Q 1403 to the position of the second hand 1401, producing a vector segment κ 1405 and an angle γ 1404.

As shown in the captured motion 1400, this motion requires much less effort than a full-body motion. However, based on an equivalence index, described further in FIG. 15, this motion is directly equivalent to the motion instruction described in 900, 1000, 1100, 1200 and 1300, and it produces the same motion result of the avatar dribbling a ball, given the same game context. The instant invention translates between a full-body motion and a close proximity motion by associating the two motion instructions with each other through the equivalence index. Given this association, the game may be played using either the full-body motion or the close proximity motion, with the same desired results.

In addition to playing the game through the normal motion instruction (NMI), based on full-body motion, the system can interpret one or more associated close proximity motion instructions (CMI), collectively called the motion instructions, and can send and/or receive these same or other motion instructions to/from one or more other devices.

The motion instructions, as well as other game data, may be transferred from the first mobile communication device to another device, or from another device such as a console system or other mobile communication device to the first mobile communication device, using any number of transmission protocols. Because the motion instructions and other data may be simple text or binary data streams, protocols specified in the future could be used as well.

As an example, communication protocols could include an infrared connection, Bluetooth, wireless download, internet connection, or memory card.
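Since the motion instructions may be simple text or binary data streams, the same instruction can be carried over any of these links. The sketch below encodes one instruction both ways. The JSON field names and the binary layout (little-endian unsigned 16-bit motion index and row count, followed by one pair of 64-bit floats per row) are assumptions for illustration, not a format defined by the patent.

```python
import json
import struct

# Assumed binary layout: little-endian uint16 motion index and uint16 row count,
# followed by one (angle_rad, extent_cm) pair of float64 values per row.
_HEADER = struct.Struct("<HH")
_ROW = struct.Struct("<dd")


def to_text(motion_index, rows):
    """Encode a motion instruction as a JSON text stream."""
    return json.dumps({"motion_index": motion_index, "rows": rows})


def from_text(data):
    """Decode a JSON text stream back into (motion_index, rows)."""
    obj = json.loads(data)
    return obj["motion_index"], [tuple(row) for row in obj["rows"]]


def to_binary(motion_index, rows):
    """Encode the same instruction as a compact binary stream."""
    out = bytearray(_HEADER.pack(motion_index, len(rows)))
    for angle, extent in rows:
        out += _ROW.pack(angle, extent)
    return bytes(out)


def from_binary(data):
    """Decode a binary stream back into (motion_index, rows)."""
    motion_index, count = _HEADER.unpack_from(data, 0)
    rows = [_ROW.unpack_from(data, _HEADER.size + i * _ROW.size) for i in range(count)]
    return motion_index, rows


rows = [(0.60, 30.0), (0.40, 25.0)]  # hypothetical rows for one instruction
text_stream = to_text(1, rows)
binary_stream = to_binary(1, rows)
```

The text form is easy to inspect and share; the binary form is smaller, which may matter over infrared or Bluetooth links.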

In the case of an infrared connection, the mobile communication device could be held up to a console, for example, and the console would transfer the intended information to the mobile communication device. Once the mobile communication device receives the information from the console through the infrared connection, the information can be placed into the database or other storage means in the mobile communication device so that the game can be continued.

In the case of a Bluetooth connection, the mobile communication device could be set to contact, for example, a console. The console could then transfer the intended information to the mobile communication device. Once the mobile communication device receives the information from the console through the Bluetooth connection, the information can be placed into the database or other storage means in the mobile communication device so that the game can be continued.

In the case of a wireless or internet connection, the mobile communication device could be set to contact, for example, a console. The console could then transfer the intended information to the mobile communication device. Once the mobile communication device receives the information from the console through the wireless or internet connection, the information can be placed into the database or other storage means in the mobile communication device so that the game can be continued.

Through the available transmission protocols, for example an internet or wireless connection, the instant invention may also allow the player to find and download motion instructions and/or other data from a remote or local server, another machine, a console with an internet or wireless connection, or another mobile communication device. Likewise, the player could upload one or more motion instructions to these devices for the purpose of storing, sharing or selling them.

In the case of a memory card or game cartridge, for example, the player could connect the card to a first device, such as a console, mobile communication device, or other device, load the motion instructions and/or other data onto the card, remove the card and connect it to the mobile communication device or second device and the motion information and/or other data would be downloaded to the second device. The player could also receive the card through a purchase, sharing from a friend, as well as other possible means. Once the mobile communication device or second device receives the information from the card, the information can be placed into the database or other storage means in the mobile communication device so that the game can be continued or the game may be played using the card so that no database is involved.

Other methods may be used to transfer the motion instructions and/or other data to the mobile communication device for the purpose of playing a game. The instant invention also makes it possible to transfer the motion instructions and/or other data using one protocol and then download the same information using another protocol. For example, a motion instruction may be transferred to a server using an internet connection and then downloaded to a mobile communication device using a wireless connection.

Referring now to FIG. 15, an example database vector object record 1500 of the instant invention is shown with rows and columns. The full set of rows and columns constitutes a series of multiple vector sequences that describe a portion of a single motion instruction.

In the given example, the motion instruction shown in FIG. 13 may be stored in the gameplay system in the first and second rows of the vector object record as the normal motion instruction (NMI).

The motion index 1504 for the NMI is 1, and the NMI contains coordinate system A 1505 on the first row 1501 and coordinate system B 1505 on the second row 1502. The range 1506 of the upper arm is given, in radians, for coordinate system A 1505 along the first row 1501 and describes the range of motion of the NMI in coordinate system A 1505. This range 1506 is equivalent to the angle θ shown in FIG. 13. The associated extent 1507 on the first row 1501 is given in centimeters in this example and provides the length of vector A in coordinate system A 1505.

The second portion of the NMI, based on coordinate system B 1505 on the second row 1502, also has a motion index 1504 of 1, because coordinate system B 1505 belongs to the same NMI as coordinate system A 1505. The range 1506 of the lower arm is given, in radians, for coordinate system B 1505 along the second row 1502 and describes the range of motion of the NMI in coordinate system B 1505. This range 1506 is equivalent to the angle θ′ shown in FIG. 13. The associated extent 1507 on the second row 1502 is given in centimeters in this example and provides the length of vector B in coordinate system B 1505.

Other important aspects of the NMI may include the repeat cycle 1508, the motion form 1509, the storage type 1510, the aggregation 1511 and the close proximity index 1512, among others.
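The rows and columns of the vector object record map naturally onto a small record type. In this sketch the field names, the use of `None` for an INF repeat cycle, and the motion index 2 given to the CMI row are assumptions; all numeric and categorical values are placeholders because the text does not reproduce the FIG. 15 values.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class VectorRow:
    """One row of a FIG. 15-style vector object record (field names are illustrative)."""
    motion_index: int                  # 1504: rows sharing an index form one instruction
    coordinate_system: str             # 1505: e.g. "A", "B" or "Q"
    range_rad: float                   # 1506: range of motion, in radians
    extent_cm: float                   # 1507: vector length, in centimeters
    repeat_cycle: Optional[int]        # 1508: None stands in for INF
    motion_form: str                   # 1509: "linear" or "circular"
    storage_type: str                  # 1510: "complex" (matrix) or "basic" (one row)
    aggregation: str                   # 1511: e.g. "sum"
    close_proximity_index: Optional[int] = None  # 1512: link from an NMI to a CMI


def rows_for(record: List[VectorRow], motion_index: int) -> List[VectorRow]:
    """Collect the rows that together make up one motion instruction."""
    return [row for row in record if row.motion_index == motion_index]


# Placeholder values only; the actual FIG. 15 numbers are not given in the text.
record = [
    VectorRow(1, "A", 0.60, 30.0, None, "circular", "complex", "sum", 2),
    VectorRow(1, "B", 0.40, 25.0, None, "circular", "complex", "sum", 2),
    VectorRow(2, "Q", 0.50, 10.0, None, "linear", "basic", "sum"),
]
nmi_rows = rows_for(record, 1)
```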

The repeat cycle 1508 may be based on the number of times a motion must be repeated before it satisfies the motion instruction requirements. If the repeat cycle 1508 is set to INF, for example, the game software could treat the motion as matched without checking the number of repeated motions. If, however, the repeat cycle 1508 is set to a number, the game software could treat the motion instruction query as not matching the intended motion instruction until the motion has been repeated at least the number of times required by the repeat cycle 1508.
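The repeat-cycle rule reduces to a simple comparison. In this sketch, `math.inf` stands in for INF, and `observed_repeats` is assumed to be a count of matched repetitions kept by the game software; both the function name and the counting scheme are hypothetical.

```python
import math

INF = math.inf  # stands in for the "INF" repeat-cycle setting


def satisfies_repeat_cycle(observed_repeats: int, repeat_cycle: float) -> bool:
    """Apply the repeat-cycle rule to a motion that has already matched.

    With INF, one matched motion is enough and repeats are not counted.
    Otherwise the motion must have been repeated at least repeat_cycle times.
    """
    if repeat_cycle == INF:
        return observed_repeats >= 1
    return observed_repeats >= repeat_cycle
```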

The motion form 1509 may be used to designate the motion type. In the example shown, the motion form 1509 includes both linear and circular motion types. A linear motion is a straight motion, such as a vertical or horizontal motion. A circular motion follows a rotated or spherical path. Other motion types could be available.
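The text does not say how the motion form is determined. One possible heuristic, shown here purely as an assumption, labels a sampled 2-D path as linear when every sample lies close to the straight chord between the first and last samples, and otherwise treats it as circular (rotated or curved). The function `motion_form` and its tolerance are hypothetical.

```python
import math


def motion_form(points, tol=0.05):
    """Label a sampled 2-D path "linear" or "circular" (hypothetical heuristic).

    tol is the allowed perpendicular deviation from the chord, as a fraction
    of the chord length.
    """
    (x0, y0), (x1, y1) = points[0], points[-1]
    chord = math.hypot(x1 - x0, y1 - y0)
    if chord == 0:
        return "circular"  # start and end coincide, as in a full loop
    for x, y in points:
        # Perpendicular distance from (x, y) to the line through the chord.
        dist = abs((x1 - x0) * (y0 - y) - (x0 - x) * (y1 - y0)) / chord
        if dist > tol * chord:
            return "circular"
    return "linear"
```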

The storage type 1510 may be used to assign the type of storage array used to hold the motion instruction. In the example NMI 1500, the complex storage type can be equivalent to a matrix. The matrix can include more than one independent motion and/or motion coordinate system making up one motion instruction. A basic storage type can describe a single row, which may define a motion instruction. For example, in the motion table 1500, the third row 1503 shows the full independent motion for the close proximity motion instruction (CMI). Because the CMI is a simple linear motion, it can be described in one row 1503 as a single vector segment.

The aggregation column 1511 may specify that the sum of the motion vectors, such as those in rows 1501 and 1502, makes up the motion instruction NMI. Other aggregation types may be available.
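A minimal sketch of a "sum" aggregation, assuming each row's range is an angle measured from the y-axis (matching the tan θ = Δx/Δy rule of FIG. 13) and assuming, for simplicity, that the per-row vectors can be expressed in one common frame before summing; the text does not say how the independent coordinate systems are reconciled. The function names and sample rows are hypothetical.

```python
import math


def segment_vector(range_rad, extent_cm):
    """Convert one row (angle from the y-axis, length) into a (dx, dy) vector.

    dx = L*sin(angle) and dy = L*cos(angle), so dx / dy = tan(angle).
    """
    return (extent_cm * math.sin(range_rad), extent_cm * math.cos(range_rad))


def aggregate_sum(rows):
    """'sum' aggregation: add the per-row motion vectors into one resultant vector."""
    vectors = [segment_vector(angle, extent) for angle, extent in rows]
    return (sum(v[0] for v in vectors), sum(v[1] for v in vectors))


# Hypothetical rows for coordinate systems A and B: (range in radians, extent in cm).
total = aggregate_sum([(0.0, 30.0), (math.pi / 2, 25.0)])
```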

The close proximity index 1512 may link an NMI motion instruction to a CMI motion instruction. In this manner, the game software can treat both motions as equivalent with respect to a given motion result. The player may therefore perform either a full-body motion that fits the NMI or a close proximity motion that fits the CMI, producing the same motion result. In the given example, this result would show the avatar dribbling a ball.
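The equivalence provided by the close proximity index can be sketched as a lookup: whichever instruction matched, the linked NMI's motion result is returned. The dictionaries, the function `resolve_result` and the sample indices are hypothetical; a stand-alone CMI simply carries its own entry in `results`.

```python
from typing import Dict, Optional


def resolve_result(matched_index: int,
                   results: Dict[int, str],
                   close_proximity: Dict[int, int]) -> Optional[str]:
    """Return the motion result for a matched motion index.

    results maps a motion index to its motion result; close_proximity maps an
    NMI motion index to the linked CMI motion index (column 1512). A matched
    CMI with no result of its own inherits the result of its linked NMI.
    """
    if matched_index in results:
        return results[matched_index]
    for nmi_index, cmi_index in close_proximity.items():
        if cmi_index == matched_index:
            return results.get(nmi_index)
    return None  # unknown index, or a stand-alone CMI with no assigned result


# Hypothetical tables: NMI 1 is linked to CMI 2.
results = {1: "avatar dribbles ball"}
close_proximity = {1: 2}
```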

The items described in FIG. 15 are a simple example of a portion of a normal motion instruction (NMI) extended with a close proximity motion instruction (CMI). However, motion instructions are not required to have close proximity motion instructions tied directly to them, and close proximity motion instructions may stand alone without being tied to a normal motion instruction.

The values shown in FIG. 15 may be generated by any of many methods, including by a manufacturer, a player, a gaming system, a programmed system, or another entity knowledgeable in the art, through a motion receiving and/or motion association session using the said device, a user interface, an editor, or other means.

One of the methods can include the player making one or more motions, the said device receiving the motions, converting the motions to one or more motion instructions and/or other data and the said device storing the motion instructions and/or other data to a database or other storage means in the said device.

At any point in time, the player, the game developer, the game system or another entity may assign the one or more motion instructions and/or other data to an intended action by the game software and/or game system. For example, the player could assign the motion of moving a hand up and down, as shown in FIG. 14, to the action of the avatar dribbling a ball. Once the assignment is made, it can be associated with the given motion instruction using, for example, the motion index 1504, by the player or another entity through a voice command, by answering a prompt, or by another method, either locally, remotely, manually or programmatically.
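The record-and-assign workflow described above could be backed by any local database. The sketch below uses Python's built-in `sqlite3` module; the table names, columns and helper functions are hypothetical and only illustrate storing a converted motion instruction and associating it with a game action through its motion index.

```python
import sqlite3


def open_store(path=":memory:"):
    """Create a minimal motion-instruction store (the schema is an assumption)."""
    db = sqlite3.connect(path)
    db.execute("""CREATE TABLE IF NOT EXISTS vector_rows (
        motion_index INTEGER, coordinate_system TEXT,
        range_rad REAL, extent_cm REAL)""")
    db.execute("""CREATE TABLE IF NOT EXISTS assignments (
        motion_index INTEGER PRIMARY KEY, action TEXT)""")
    return db


def record_motion(db, motion_index, rows):
    """Store the rows converted from a captured motion under one motion index."""
    db.executemany(
        "INSERT INTO vector_rows VALUES (?, ?, ?, ?)",
        [(motion_index, cs, rng, ext) for cs, rng, ext in rows],
    )
    db.commit()


def assign_action(db, motion_index, action):
    """Associate a game action (e.g. dribbling) with a stored motion index."""
    db.execute("INSERT OR REPLACE INTO assignments VALUES (?, ?)", (motion_index, action))
    db.commit()


def action_for(db, motion_index):
    """Look up the action assigned to a motion index, or None if unassigned."""
    row = db.execute("SELECT action FROM assignments WHERE motion_index = ?",
                     (motion_index,)).fetchone()
    return row[0] if row else None


db = open_store()
record_motion(db, 1, [("A", 0.60, 30.0), ("B", 0.40, 25.0)])  # hypothetical rows
assign_action(db, 1, "avatar dribbles ball")
```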

Once the said device receives the one or more motions, the game software may convert them into one or more motion instructions, which may include the assignment of one or more motion indices 1504, coordinate systems 1505, vector ranges 1506, vector extents 1507, repeat cycles 1508, motion forms 1509, storage types 1510 and aggregation models 1511, among other items, and may include close proximity indices 1512. Other data, not shown, may be provided and/or defined by a player or other entity.

Advanced players or other entities may even have the ability to create motion instruction data and/or action assignments manually using an editor or other means and load them into the said device.

In one embodiment of the instant invention, there is a mobile communications device comprising: a transceiver; an optical device; a system for playing a game; a system for receiving motion instructions so that the game may be played without the physical use of a controller or pad; and a system which also has the ability to send and/or receive one or more motion instructions to/from a second device; wherein the mobile communications device has the ability to interact with the game software and affect the game settings and/or avatar movement; wherein a first mobile communications device has the ability to interact with the game software on a second device and affect the game settings and/or avatar movement on the second device; wherein the mobile communications device has the ability to send and/or receive game information to/from another device; wherein the mobile communications device has the ability to read and/or store game information to/from another device; wherein the mobile communications device has the ability to send and/or receive motion instructions to/from another device; wherein the mobile communications device has the ability to read and/or store motion instructions to/from another device; wherein the mobile communications device has the ability to send and/or receive voice or other audio commands to/from another device; wherein the mobile communications device has the ability to read and/or store voice commands to/from another device; and wherein a described motion is produced using at least one of: one or more fingers; the face; the lips; one or more of the eyes; other body parts; or non-body parts such as a wand or stylus.

It should be understood that the foregoing description is only illustrative of the instant invention. Various alternatives and modifications can be devised by those skilled in the art without departing from the claims of the instant invention including, but not limited to, the use of 2-dimensional motion instructions as well as 3-dimensional motion instructions and motion instructions with additional data combined or intermingled with it. Accordingly, the present invention is intended to embrace all such alternatives, modifications and variances which fall within the scope of the appended claims.

Claims

- A method of processing user movement via a mobile computing device, the method comprising: receiving at least one user movement via the mobile computing device; detecting a portion of a user's body via a plurality of points referencing the portion of the user's body; capturing a plurality of separate frames while a motion performed by the portion of the user's body is detected, wherein the separate frames comprise changes in the plurality of points as measured along predefined axes; identifying motion vectors corresponding to the changes in the plurality of points identified among the plurality of separate frames; measuring, via the mobile computing device, an arc of change based on a change between a first user position of the detected portion of the user's body and a second user position of the detected portion of the user's body resulting from changes in locations of the plurality of points of the detected portion of the user's body due to the at least one user movement as measured from the separate frames over a predetermined period of time, and wherein the changes in the locations of the plurality of points is further determined based on identifying an angle between a first position of a first point of the plurality of points of the portion of the user's body and a second position of the first point; defining the arc of change based on the angle and the motion vector; interpreting the arc of change as at least one motion instruction applied to a movement of a digital avatar of an active game session operating within a digital computing game; creating packet data representing the at least one motion instruction; comparing the motion instruction to a pre-stored known list of motion instructions; determining at least the angle of the motion instruction matches an angle of one of the pre-stored known motion instructions within a numerical range of certainty; and transmitting the packet data to a separate communication device to conduct the digital avatar movement of the active gaming session.

- The method of claim 1, wherein the at least one user movement comprises at least one of a change in a user facial feature, a user voice command, and a moved user body part.

- The method of claim 1, wherein the transmitting the packet data to the separate communication device comprises communicating over at least one of a tethered communication link and a wireless communication link.

- The method of claim 1, wherein the at least one user movement comprises a series of movements which are interpreted as a game movement and applied to the active game session.

- The method of claim 4, wherein the game movement is interpreted from the series of movements without the user providing button selections.

- The method of claim 1, further comprising: comparing the at least one motion instruction to at least one known result signal pre-stored in memory to determine a type of game movement to apply to the active game session.

- An apparatus configured to process user movement, the apparatus comprising: a receiver configured to receive at least one user movement; a processor configured to detect at least a portion of a user's body via a plurality of points referencing the portion of the user's body, capture a plurality of separate frames while a motion performed by the portion of the user's body is detected, wherein the separate frames comprise changes in the plurality of points as measured along predefined axes, identify motion vectors corresponding to the changes in the plurality of points identified among the plurality of separate frames, measure an arc of change based on a change between a first user position of the detected portion of the user's body and a second user position of the detected portion of the user's body resulting from changes in locations of the plurality of points of the detected portion of the user's body due to the at least one user movement as measured from the separate frames over a predetermined period of time, and wherein the changes in the locations of the plurality of points is further determined based on identifying an angle between a first position of a first point of the plurality of points of the portion of the user's body and a second position of the first point, interpret the arc of change as at least one motion instruction applied to a movement of a digital avatar of an active game session operating within a digital computing game, and create packet data representing the at least one motion instruction; compare the motion instruction to a pre-stored known list of motion instructions; determine at least the angle of the motion instruction matches an angle of one of the pre-stored known motion instructions within a numerical range of certainty; and a transmitter configured to transmit the packet data to a separate communication device to conduct the digital avatar movement of the active gaming session.

- The apparatus of claim 7, wherein the at least one user movement comprises at least one of a change in a user facial feature, a user voice command, and a moved user body part.

- The apparatus of claim 7, wherein the packet data is transmitted to the separate communication device by communicating over at least one of a tethered communication link and a wireless communication link.

- The apparatus of claim 7, wherein the at least one user movement comprises a series of movements which are interpreted as a game movement and which is applied to the active game session.

- The apparatus of claim 10, wherein the game movement is interpreted from the series of movements without the user providing button selections.

- The apparatus of claim 7, wherein the processor is further configured to compare the at least one motion instruction to at least one known result signal pre-stored in memory to determine a type of game movement to apply to the active game session.

- A non-transitory computer readable storage medium configured to store instructions that when executed cause a processor to perform processing user movement via a mobile computing device, the processor being further configured to perform: receiving at least one user movement via the mobile computing device; detecting at least a portion of a user's body via a plurality of points referencing the portion of the user's body; capturing a plurality of separate frames while a motion performed by the portion of the user's body is detected, wherein the separate frames comprise changes in the plurality of points as measured along predefined axes; identifying motion vectors corresponding to the changes in the plurality of points identified among the plurality of separate frames; measuring, via the mobile computing device, an arc of change based on a change between a first user position of the detected portion of the user's body and a second user position of the detected portion of the user's body resulting from changes in locations of the plurality of points of the detected portion of the user's body due to the at least one user movement as measured from the separate frames over a predetermined period of time, and wherein the changes in the locations of the plurality of points is further determined based on identifying an angle between a first position of a first point of the plurality of points of the portion of the user's body and a second position of the first point; interpreting the arc of change as at least one motion instruction applied to a movement of a digital avatar of an active game session operating within a digital computing game; creating packet data representing the at least one motion instruction; comparing the motion instruction to a pre-stored known list of motion instructions; determining at least the angle of the motion instruction matches an angle of one of the pre-stored known motion instructions within a numerical range of certainty; and transmitting the packet data to a separate communication device to conduct the digital avatar movement of the active gaming session.

- The non-transitory computer readable storage medium of claim 13, wherein the at least one user movement comprises at least one of a change in a user facial feature, a user voice command, and a moved user body part.

- The non-transitory computer readable storage medium of claim 13, wherein the transmitting the packet data to the separate communication device comprises communicating over at least one of a tethered communication link and a wireless communication link.

- The non-transitory computer readable storage medium of claim 13, wherein the at least one user movement comprises a series of movements which are interpreted as a game movement and applied to the active game session.

- The non-transitory computer readable storage medium of claim 16, wherein the game movement is interpreted from the series of movements without the user providing button selections.

- The non-transitory computer readable storage medium of claim 13, wherein the processor is further configured to perform: comparing the at least one motion instruction to at least one known result signal pre-stored in memory to determine a type of game movement to apply to the active game session.
