U.S. Pat. No. 10,183,222

SYSTEMS AND METHODS FOR TRIGGERING ACTION CHARACTER COVER IN A VIDEO GAME

Assignee: Glu Mobile Inc.

Issue Date: April 1, 2016


Abstract

Systems and methods are provided in which a character possessing a weapon is displayed in a scene along with first and second affordances. Responsive to first affordance user contact, scene orientation is changed. Responsive to second affordance user contact with a first status of the weapon, a firing process is performed in which the weapon is fired and the weapon status is updated. When (i) the first and second affordances are user contact free or (ii) the second affordance is presently in user contact and there is a second weapon status, firing is terminated. Alternatively, when the second affordance is presently in user contact and there is a first weapon status, the firing is repeated. Alternatively still, with (i) present first affordance user contact, (ii) a first weapon status, and (iii) no present second affordance user contact, the firing is repeated upon second affordance user contact.

Description


Like reference numerals refer to corresponding parts throughout the several views of the drawings.

DETAILED DESCRIPTION

With reference to FIG. 6, disclosed are systems and methods for hosting a video game in which a virtual character 602 possessing a weapon 604 is displayed in a scene 606 along with a first affordance 608. As is typically the case, in FIG. 6 this first affordance 608 is not visible to the user and typically encompasses a specified region of the touch screen display illustrated in FIG. 6. Responsive to first affordance 608 user contact (e.g., the user dragging a finger across the first affordance 608 as illustrated in FIG. 6), the orientation (e.g., pitch and/or yaw) of scene 606 is changed. This is useful for aiming the weapon 604 into the scene 606.
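The aiming behavior described above can be sketched as follows. This is a minimal illustration under stated assumptions, not the patented implementation: it assumes the first affordance 608 is an invisible rectangular screen region, and the names `Rect`, `Camera`, `apply_aim_drag`, and the `sensitivity` and `max_pitch` parameters are hypothetical. A drag that starts inside the region is converted into yaw and pitch changes for the scene view.

```python
from dataclasses import dataclass


@dataclass
class Rect:
    """Axis-aligned screen region in pixels; it can be hit-tested without being drawn."""
    x: float
    y: float
    w: float
    h: float

    def contains(self, px: float, py: float) -> bool:
        return self.x <= px < self.x + self.w and self.y <= py < self.y + self.h


@dataclass
class Camera:
    yaw: float = 0.0    # degrees about the vertical axis, kept in [0, 360)
    pitch: float = 0.0  # degrees; positive looks up


def apply_aim_drag(cam: Camera, region: Rect, start: tuple, end: tuple,
                   sensitivity: float = 0.2, max_pitch: float = 60.0) -> bool:
    """Rotate the view for a drag that begins inside the (hypothetical) aim region.

    Returns True if the drag was consumed by the region, False otherwise.
    """
    if not region.contains(*start):
        return False
    dx = end[0] - start[0]
    dy = end[1] - start[1]
    cam.yaw = (cam.yaw + dx * sensitivity) % 360.0
    # Screen y grows downward, so an upward drag (negative dy) raises the pitch.
    cam.pitch = max(-max_pitch, min(max_pitch, cam.pitch - dy * sensitivity))
    return True
```

For example, with `region = Rect(0, 0, 100, 100)`, a 50-pixel rightward drag starting at (10, 10) turns the view 10 degrees to the right; a drag starting outside the region is ignored and can be routed to another control instead.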

Referring to FIG. 8, responsive to second affordance user contact (e.g., pressing the second affordance 702) while the weapon has a first status (e.g., the weapon is loaded or partially loaded), a firing process is performed in which the weapon is fired and the weapon status is updated (e.g., if the weapon is a gun, the number of bullets fired is subtracted from a weapon value, thereby affecting the weapon status). Advantageously, when either (i) the first 608 and second 702 affordances are free of user contact or (ii) the second affordance 702 is presently in user contact and there is a second weapon status (e.g., the weapon needs to be recharged or reloaded), firing is automatically terminated without any requirement that the user interact with a “cover” button.
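A minimal sketch of the firing process and weapon status update, assuming a magazine-style gun whose first status means at least one round remains and whose second status means a reload is needed. `Weapon`, `WeaponStatus`, `firing_process`, and the polled `second_affordance_held` callable are hypothetical names chosen for illustration.

```python
from dataclasses import dataclass
from enum import Enum


class WeaponStatus(Enum):
    FIRST = "loaded"         # fully or partially loaded; firing allowed
    SECOND = "needs_reload"  # empty; firing must stop


@dataclass
class Weapon:
    capacity: int
    rounds: int
    rounds_per_shot: int = 1

    @property
    def status(self) -> WeaponStatus:
        return WeaponStatus.FIRST if self.rounds > 0 else WeaponStatus.SECOND

    def fire(self) -> int:
        """Fire once and subtract the rounds fired from the weapon value.

        Returns the number of rounds actually fired (0 if a reload is needed).
        """
        if self.status is not WeaponStatus.FIRST:
            return 0
        fired = min(self.rounds_per_shot, self.rounds)
        self.rounds -= fired
        return fired

    def reload(self) -> None:
        self.rounds = self.capacity


def firing_process(weapon: Weapon, second_affordance_held) -> int:
    """Repeat firing while the trigger is held and the weapon keeps the first status.

    `second_affordance_held` is a hypothetical zero-argument callable polled once
    per step. Returns the total number of rounds fired before termination.
    """
    total = 0
    while second_affordance_held() and weapon.status is WeaponStatus.FIRST:
        total += weapon.fire()
    return total
```

Because the loop re-checks both the trigger and the weapon status on every step, firing ends by itself when either condition fails, which mirrors the automatic termination described above without a separate “cover” button.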

In some embodiments, as a follow-up to and/or concurrently with the firing process, when the second affordance 702 is presently in user contact (e.g., the user is pressing the second affordance 702) and there is a first weapon status (e.g., the weapon 604 remains loaded or partially loaded), the firing process described above is repeated and/or seamlessly continued. Additionally, as a follow-up to and/or concurrently with the firing process, when all three of the following conditions are satisfied, the firing process is paused but is immediately repeated (or resumed) upon second affordance user contact: (i) present first affordance 608 user contact (e.g., the user is dragging their thumb across the first affordance 608), (ii) a first weapon status (e.g., the weapon is fully or partially loaded), and (iii) no present second affordance 702 user contact (e.g., the user is not touching the second affordance). That is, the virtual character 602 maintains the “active” cover status but does not fire. Then either user contact with the first affordance 608 subsides, in which case the virtual character 602 assumes the cover status “cover” as illustrated in FIG. 9, or user contact with the second affordance 702 is made, in which case the virtual character 602 assumes the cover status “active” and fires the weapon 604 as illustrated in FIG. 8.

Now that an overview of user controls in accordance with an embodiment has been described, a truth table for the user controls in a more complex embodiment is disclosed with reference to the table illustrated in FIG. 12.

With reference to row 1 of the table illustrated in FIG. 12, if no targets remain to shoot at, then the virtual character 602 is advanced to a new position within a scene 606, the present level/campaign that the virtual character 602 is in is terminated, or the virtual character 602 is advanced to a new scene 606. With reference to FIG. 7, examples of targets are opponents 704-1 and 704-2 in scene 606.

With reference to row 2 of the table illustrated in FIG. 12, if there are targets remaining to shoot at and the weapon 604 is not in a first value range (e.g., the weapon is in need of reloading), the virtual character 602 status is involuntarily changed to “cover”, thereby terminating weapon fire and forcing weapon reloading.

With reference to row 3 of the table illustrated in FIG. 12, if the virtual character 602 status is “cover” (as illustrated for instance in FIGS. 6, 7, and 9), there are targets remaining to shoot at, the weapon 604 is in a first value range (e.g., the weapon is loaded or at least partially loaded), and the first affordance 608 is engaged by the user but the second affordance 702 is not, then the scene 606 is adjusted in accordance with the user's interaction with the first affordance 608 and the virtual character 602 status is not changed (e.g., it remains “cover”).

With reference to row 4 of the table illustrated in FIG. 12, if the virtual character 602 status is “cover” (as illustrated for instance in FIGS. 6, 7, and 9), there are targets remaining to shoot at, the weapon 604 is in a first value range (e.g., the weapon is loaded or at least partially loaded), and the second affordance 702 is engaged by the user, then the virtual character 602 status is either changed to, or maintained as, “active” and the weapon 604 is fired as illustrated in FIG. 8.

With reference to row 5 of the table illustrated in FIG. 12, if the virtual character 602 status is “active” (e.g., not “cover”), there are targets remaining to shoot at, the weapon 604 is in a first value range (e.g., the weapon is loaded or at least partially loaded), and the first affordance 608 is engaged by the user but the second affordance 702 is not, then the scene 606 is adjusted in accordance with the user's interaction with the first affordance 608, the virtual character 602 status is maintained as “active”, and the weapon 604 is not fired.

With reference to row 6 of the table illustrated in FIG. 12, if the virtual character 602 status is “active” (e.g., not “cover”), there are targets remaining to shoot at, the weapon 604 is in a first value range (e.g., the weapon is loaded or at least partially loaded), the first affordance 608 is not engaged by the user, and the second affordance 702 is engaged by the user, then the virtual character 602 status is maintained as “active” with the weapon 604 firing.

With reference to row 7 of the table illustrated in FIG. 12, if the virtual character 602 status is “active” (e.g., not “cover”), there are targets remaining to shoot at, the weapon 604 is in a first value range (e.g., the weapon is loaded or at least partially loaded), and both the first affordance 608 and the second affordance 702 are engaged by the user, then the scene 606 is adjusted in accordance with the user's interaction with the first affordance 608 while the virtual character 602 status is kept “active” with the weapon 604 firing.

With reference to row 8 of the table illustrated in FIG. 12, if the virtual character 602 status is “active” (e.g., not “cover”), there are targets remaining to shoot at, the weapon 604 is in a first value range (e.g., the weapon is loaded or at least partially loaded), and neither the first affordance 608 nor the second affordance 702 is engaged by the user, then an automatic “cover” is enacted in which the virtual character 602 status is changed to, or maintained as, “cover” with the weapon 604 not firing.
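The eight rows above can be collapsed into one decision function evaluated each frame. The sketch below is an illustrative reading of the table, not code from the disclosure; the names `CoverStatus`, `Outcome`, and `resolve_controls` are hypothetical, and two cases the text does not spell out (aiming while reloading, and a character already in cover with neither affordance engaged) are resolved by labeled assumptions.

```python
from dataclasses import dataclass
from enum import Enum


class CoverStatus(Enum):
    COVER = "cover"
    ACTIVE = "active"


@dataclass(frozen=True)
class Outcome:
    status: CoverStatus
    firing: bool
    adjust_scene: bool          # rotate scene 606 per first-affordance input
    advance: bool = False       # row 1: new position, new scene, or end of level
    force_reload: bool = False  # row 2: involuntary cover plus reload


def resolve_controls(status: CoverStatus, targets_remaining: bool,
                     weapon_in_first_range: bool, first_engaged: bool,
                     second_engaged: bool) -> Outcome:
    """Evaluate the FIG. 12 truth table for one frame (row numbers noted inline)."""
    if not targets_remaining:                                   # row 1
        return Outcome(status, firing=False, adjust_scene=False, advance=True)
    if not weapon_in_first_range:                               # row 2
        # Assumption: aiming input is still honored while the reload happens.
        return Outcome(CoverStatus.COVER, firing=False,
                       adjust_scene=first_engaged, force_reload=True)
    if second_engaged:                                          # rows 4, 6, 7
        # Assumption: the scene is adjusted whenever the first affordance is engaged.
        return Outcome(CoverStatus.ACTIVE, firing=True, adjust_scene=first_engaged)
    if first_engaged:                                           # rows 3, 5
        return Outcome(status, firing=False, adjust_scene=True)
    # Row 8; assumption: a character already in cover with no contact stays in cover.
    return Outcome(CoverStatus.COVER, firing=False, adjust_scene=False)
```

Ordering the checks from row 1 downward lets each earlier row override the later ones, so an empty weapon forces cover even while the trigger is held.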

Additional details of systems, devices, and/or computers in accordance with the present disclosure are now described in relation to FIGS. 1-3.

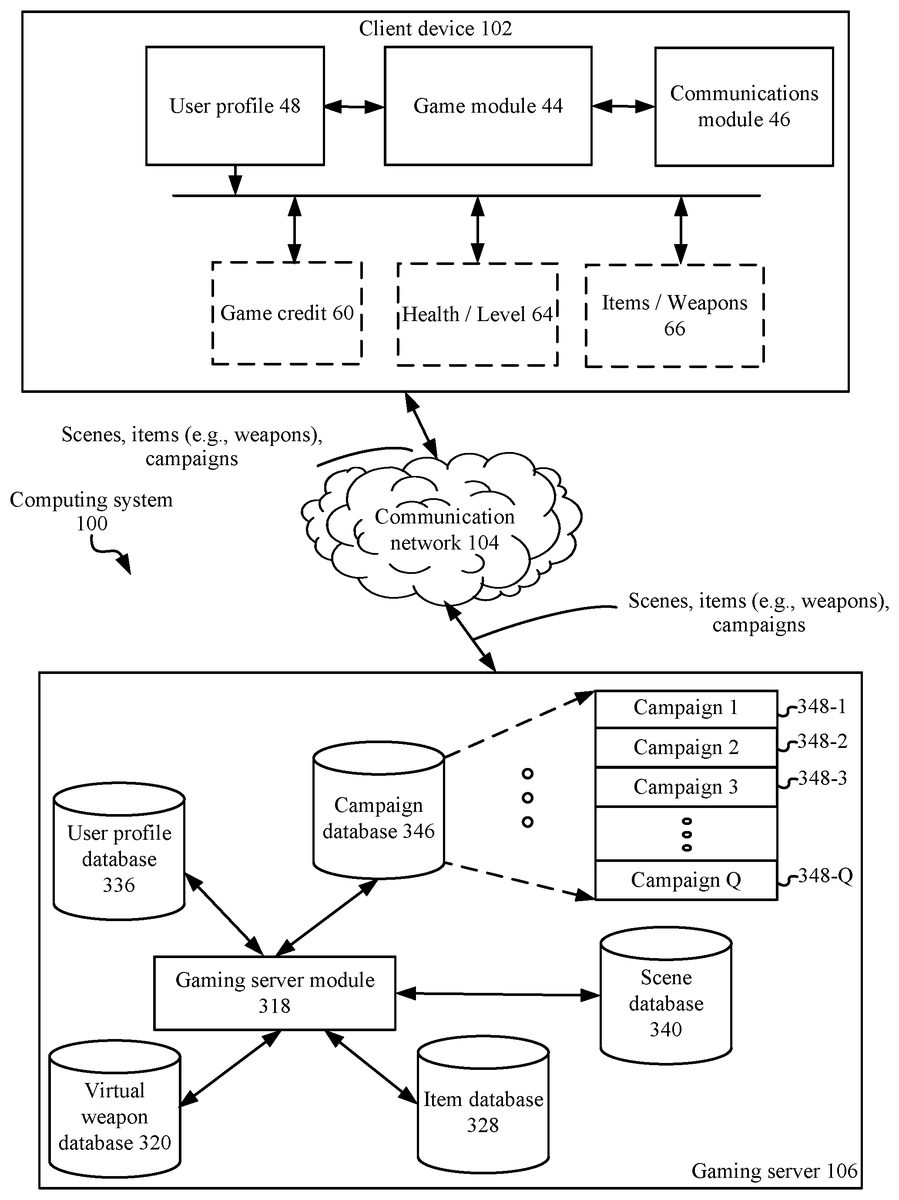

FIG. 1 is a block diagram illustrating a computing system 100, in accordance with some implementations. In some implementations, the computing system 100 includes one or more computing devices 102 (e.g., computing devices 102A, 102B, 102C, 102D . . . , and 102N), a communication network 104, and one or more gaming server systems 106. In some implementations, a computing device 102 is a phone (mobile or landline, smart phone or otherwise), a tablet, a computer (mobile or otherwise), or a hands-free computing device. In some embodiments, a computing device 102 is any device having a touch screen display.

In some implementations, a computing device 102 provides a mechanism by which a user earns game credit through successful completion of one or more campaigns within a game. In these campaigns, a virtual character 602 is pitted against multiple adverse characters (e.g., defenders of a base not associated with the user). For instance, in some embodiments the user must use their virtual character 602 to infiltrate a base (or other defensive or offensive scene 606) associated with another user. The virtual character 602 infiltrates the opposing base (or other defensive or offensive scene 606) in a three-dimensional action format in which the virtual character 602 and the defenders (e.g., opponents 704 of FIG. 7) of the base or other form of scene 606 are adverse to each other and use weapons against each other. Advantageously, in some embodiments the virtual character 602 has the ability to fire a weapon 604 in three dimensions, and explore scenes 606 in three dimensions, during such campaigns. Advantageously, in other embodiments the virtual character 602 has the ability to swing a weapon (e.g., a sword or knife) in three dimensions, and explore the base in three dimensions, during such campaigns. In some embodiments, successful completion of campaigns leads to the award of game credit to the user. In some such embodiments, the user has the option to use such game credit to buy better items (e.g., weapons) or upgrade the characteristics of existing items. This allows the user to conduct campaigns of greater difficulty (e.g., neutralize scenes that have more opponents or opponents with greater health, damage, or other characteristics).

Referring to FIG. 1, in some implementations, the computing device 102 includes a game module 44 that facilitates the above-identified actions. In some implementations, the computing device 102 also includes a user profile 48. The user profile 48 stores characteristics of the user such as the game credit 60 that the user has acquired, the health of the user in a given campaign and the level 64 that the user has attained through successful campaign completions, and the items (e.g., weapons, armor, etc.) 66 that the user has acquired. In some implementations, the computing device 102 also includes a communications module 46. The communications module 46 is used to communicate with the gaming server 106, for instance, to acquire additional campaigns, look up available item upgrades, report game credit, identify available items and/or weapons or their characteristics, and receive new campaigns and/or new events within such campaigns (e.g., special new adversaries, mystery boxes, or awards).

In some implementations, the communication network 104 interconnects one or more computing devices 102 with each other, and with the gaming server system 106. In some implementations, the communication network 104 optionally includes the Internet, one or more local area networks (LANs), one or more wide area networks (WANs), other types of networks, or a combination of such networks.

In some implementations, the gaming server system 106 includes a gaming server module 318, a user profile database 336, a campaign database 346 comprising a plurality of campaigns 348, a scene database 340, an item database 328, and/or a virtual weapon database 320. In some embodiments, the virtual weapon database 320 is subsumed by the item database 328. In some embodiments, the gaming server module 318, through the game module 44, provides players (users) with campaigns 348. Typically, a campaign 348 challenges a user to infiltrate and/or compromise one or more scenes 606, such as the scene illustrated in FIG. 6. Advantageously, in some embodiments, the gaming server module 318 can draw from any of the scenes 606 in the scene database 340 to build campaigns 348. In alternative embodiments, the gaming server module 318 draws upon a predetermined set of scenes 606 in the scene database 340 to build one or more predetermined campaigns 348.

In some embodiments, as each player progresses in the game, they improve their scores or other characteristics. In some embodiments, such scores or other characteristics are used to select which scenes 606 and/or opponents 704 are used in the campaigns offered by the gaming server module 318 to any given player. The goal is to match the skill level and/or experience level of a given player to the campaigns the player participates in, so that the player is appropriately challenged and motivated to continue game play. Thus, in some embodiments, the difficulty of the campaigns offered to a given user matches the skill level and/or experience level of the user. As the user successfully completes campaigns, their skill level and/or experience level advances. In some embodiments, the gaming server module 318 allows the user to select campaigns 348.
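One simple way to realize skill-matched campaign selection is a tolerance window around the player's skill level. The sketch assumes an integer difficulty scale and uses hypothetical names (`Campaign`, `offer_campaigns`, `record_completion`, `tolerance`, `limit`); the disclosure does not specify a matching rule, so treat this as one possible choice.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Campaign:
    campaign_id: int
    difficulty: int  # hypothetical scale, e.g. 1 (beginner) to 10 (advanced)


def offer_campaigns(campaigns, skill_level: int, tolerance: int = 1, limit: int = 3):
    """Return up to `limit` campaigns within `tolerance` of the player's skill level.

    Closest difficulty comes first; ties are broken by campaign id so results are stable.
    """
    eligible = [c for c in campaigns if abs(c.difficulty - skill_level) <= tolerance]
    eligible.sort(key=lambda c: (abs(c.difficulty - skill_level), c.campaign_id))
    return eligible[:limit]


def record_completion(skill_level: int, success: bool, max_level: int = 10) -> int:
    """Advance the skill level by one after a successful campaign, up to `max_level`."""
    return min(max_level, skill_level + 1) if success else skill_level
```

A narrow window keeps players challenged without overwhelming them; widening `tolerance` trades match quality for a larger pool of campaigns to choose from.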

In some embodiments, the campaign database 346 is used to store the campaigns 348 that may be offered by the gaming server module 318. In some embodiments, some or all of the campaigns 348 in the campaign database 346 are system-created rather than user-created. This provides a measure of quality control by ensuring a good spectrum of campaigns of varying degrees of difficulty. In this way, there are campaigns 348 available for both beginners and more advanced users. In some embodiments, a campaign 348 is a series of scenes, and thus in some embodiments the scene database 340 is subsumed by the campaign database 346 and/or the campaign database 346 accesses scenes 606 from the scene database 340.

As referenced above, in some embodiments, the scene database 340 stores a description of each of the scenes 606 that may be used in campaigns offered by the gaming server module 318 and/or the game module 44. In some embodiments, each campaign has, at a minimum, a target. The target represents the aspect of the campaign that must be compromised in order to win the campaign. In some embodiments, the target is the location of a special henchman that is uniquely associated with the campaign. The henchman is the lead character of the campaign. In some embodiments, killing the henchman is required to win a campaign. In some embodiments, each scene 606 is three-dimensional in that the user can adjust the pitch and yaw of the scene in order to aim a weapon into the scene. In some embodiments, each scene 606 is three-dimensional in that the user can adjust the pitch, yaw, and roll of the scene in order to aim a weapon into the scene. As such, a three-dimensional scene 606 can be manipulated in three dimensions by users as their virtual characters 602 traverse the scene. More specifically, in some embodiments, the virtual character 602 is given sufficient viewing controls to view the three-dimensional scene in three dimensions. For example, in some embodiments, the three dimensions are depth, left-right, and up-down. In some embodiments, the three dimensions are controlled by pitch and yaw. In some embodiments, the three dimensions are controlled by pitch, yaw, and roll. Examples of possible three-dimensional scenes 606 include, but are not limited to, a parking lot, a vehicle garage, a warehouse space, a laboratory, an armory, a passageway, an office, and/or a missile silo. In typical embodiments, a campaign stored in the campaign database 346 has more than one three-dimensional scene 606, and the three-dimensional scenes 606 of a campaign 348 are interconnected in the sense that once the user neutralizes the opponents in one scene 606, they are advanced to another scene in the campaign.
In some embodiments, such advancement occurs through a passageway (e.g., doorway, elevator, window, tunnel, pathway, walkway, etc.). In some embodiments, such advancement occurs instantly in that the user's virtual character 602 is abruptly moved to a second scene in a campaign when the user has neutralized the opponents in the first scene of the campaign.
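Campaign progression through interconnected scenes, with the henchman as the win condition, might be modeled as below. This sketch assumes instant advancement once a scene's opponents are neutralized; `Scene`, `CampaignRun`, and `neutralize` are hypothetical names, and the scene objects are mutated in place for brevity.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class Scene:
    name: str
    opponents: set[str]


@dataclass
class CampaignRun:
    scenes: list[Scene]
    henchman: str                      # hypothetical: the campaign's target character
    index: int = 0                     # position of the current scene
    defeated: set[str] = field(default_factory=set)

    @property
    def current(self) -> Scene:
        return self.scenes[self.index]

    def neutralize(self, opponent: str) -> None:
        """Remove an opponent from the current scene; advance when the scene is clear."""
        scene = self.current
        if opponent in scene.opponents:
            scene.opponents.discard(opponent)
            self.defeated.add(opponent)
        # Instant advancement: move to the next scene once no opponents remain.
        if not scene.opponents and self.index < len(self.scenes) - 1:
            self.index += 1

    @property
    def won(self) -> bool:
        # In this sketch, killing the henchman is what wins the campaign.
        return self.henchman in self.defeated
```

A passageway-based variant would replace the automatic `self.index += 1` with a transition that fires only when the character reaches a doorway or elevator.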

In some embodiments, each game user is provided with one or more items (e.g., weapons, armor, food, potions, etc.) to use in the campaigns 348. In some embodiments, a user may purchase item upgrades or new items altogether. In some embodiments, a user may not purchase item upgrades or new items but may acquire such upgrades and new items by earning game credit through the successful completion of one or more of the campaigns 348. In some embodiments, a user may not purchase item upgrades or new items but may acquire such upgrades and new items by earning game credit through both successful and unsuccessful attempts at one or more of the campaigns 348.

In some embodiments, the gaming server module 318 provides users with an interface for acquiring item (e.g., weapon) upgrades or new items. In some embodiments, the gaming server module 318 uses the item database 328 to track which items and which item upgrades (item characteristics) are supported by the game. In some embodiments, the item database 328 provides categories of items, and the user first selects an item category and then an item in the selected category. Where the items include virtual weapons, exemplary virtual weapon categories include, but are not limited to, assault rifles, sniper rifles, shotguns, Tesla rifles, grenades, and knife-packs.

In some embodiments, users of the video game are ranked into tiers. In one example, tier 1 is a beginner level whereas tier 10 represents the most advanced level. Users begin at an initial tier (e.g., tier 1), and as they successfully complete campaigns 348 their tier level advances (e.g., to tier 2 and so forth). In some such embodiments, the weapons available to users in each item category are a function of their tier level. In this way, as the user advances to more advanced tiers, more advanced items are unlocked in the item database 328 and thus made available to the user. As a non-limiting example solely to illustrate this point, in some embodiments, at the tier 1 level, the item database 328 and/or virtual weapon database 320 provides: in the assault rifles category, a Commando XM-7, a Raptor Mar-21, and a Viper X-72; in the sniper rifles category, a Scout M390, a Talon SR-9, and a Ranger 338LM; in the shotguns category, a SWAT 1200, a Tactical 871, and a Defender; and in the Tesla rifles category, an M-25 Terminator, a Tesla Rifle 2, and a Tesla Rifle 3. In some embodiments, the item database 328 and/or virtual weapon database 320 further provides grenades (e.g., frag grenades for damaging groups of enemies crowded together and flushing out enemies hiding behind doors or corners) and knife-packs. In some embodiments, the item database 328, depending on the particular game implementation, further provides magic spells, potions, recipes, bombs, food, clothes, vehicles, space ships, and/or medicinal items. In some embodiments, the characteristics of these items are tiered. For example, in some embodiments where an item is a weapon, the accuracy of the weapon may be upgraded up to a certain point, that point being determined by the user's tier level.
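Tier-gated unlocking and tier-capped upgrades can be sketched with a small catalog. The first seven entries reuse tier 1 weapon names from the example above; the tier 3 entry, the `min_tier` field, and the accuracy rule in `max_accuracy` (base value, per-tier step, cap) are hypothetical values for illustration only.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CatalogItem:
    name: str
    category: str
    min_tier: int  # hypothetical: lowest tier at which the item is unlocked


CATALOG = [
    CatalogItem("Commando XM-7", "assault rifles", 1),
    CatalogItem("Raptor Mar-21", "assault rifles", 1),
    CatalogItem("Viper X-72", "assault rifles", 1),
    CatalogItem("Scout M390", "sniper rifles", 1),
    CatalogItem("Talon SR-9", "sniper rifles", 1),
    CatalogItem("SWAT 1200", "shotguns", 1),
    CatalogItem("M-25 Terminator", "Tesla rifles", 1),
    CatalogItem("Hypothetical Tier-3 Rifle", "assault rifles", 3),  # illustrative only
]


def unlocked(catalog, category: str, tier: int) -> list[str]:
    """Names in the chosen category that the user's tier has unlocked, in catalog order."""
    return [i.name for i in catalog if i.category == category and i.min_tier <= tier]


def max_accuracy(tier: int, base: int = 50, per_tier: int = 5, cap: int = 100) -> int:
    """Hypothetical rule: the accuracy upgrade ceiling rises with the user's tier."""
    return min(cap, base + per_tier * tier)


def upgrade_accuracy(current: int, requested: int, tier: int) -> int:
    """Apply an upgrade request, never exceeding the tier cap and never downgrading."""
    return max(current, min(requested, max_accuracy(tier)))
```

Filtering by category first and tier second mirrors the select-a-category-then-an-item flow described earlier.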

In some embodiments, the gaming server module 318 maintains a profile in the user profile database 336 for each user playing the game on a computing device 102. In some embodiments, there are hundreds, thousands, tens of thousands, or more users playing instances of the game on corresponding computing devices 102, and the gaming server module 318 stores a profile for each such user in the user profile database 336. In some embodiments, the user profile database 336 does not store the actual identity of such users, but rather a simple login and password. In some embodiments, the profiles in the user profile database 336 are limited to the logins and passwords of users. In some embodiments, the profiles in the user profile database 336 are limited to the logins, passwords, and tier levels of users. In some embodiments, the profiles in the user profile database 336 store more information about each user, such as amounts of game credit, types of weapons owned, characteristics of such weapons, and descriptions of the bases built. In some embodiments, rather than storing a full description of each base in a user profile, the user profile contains a link to the base database 340 where the user's bases are stored. In this way, the user's bases may be quickly retrieved using the base database 340 link in the user profile. In some embodiments, the user profile in the user profile database 336 includes a limited amount of information, whereas a user profile 48 on a computing device 102 associated with the user contains more information. For example, in some embodiments, the user profile in the user profile database 336 includes the user login, password, and game credit acquired, whereas the user profile 48 on the computing device 102 for the same user includes information on weapons and bases associated with the user.
It will be appreciated that any variation of this is possible, with the profile for the user in the user profile database 336 including all or any subset of the data associated with the user and the user profile 48 for the user on the corresponding computing device 102 including all or any subset of the data associated with the user. In some embodiments, there is no user profile 48 stored on the computing device 102, and the only profile for the user is stored on the gaming server 106 in the user profile database 336.

FIG. 2 is an example block diagram illustrating a computing device 102, in accordance with some implementations of the present disclosure. It has one or more processing units (CPUs) 402, a peripherals interface 470, a memory controller 468, a network or other communications interface 420, a memory 407 (e.g., random access memory), a user interface 406, the user interface 406 including a display 408 and input 410 (e.g., keyboard, keypad, touch screen), an optional accelerometer 417, an optional GPS 419, optional audio circuitry 472, an optional speaker and/or audio jack 460, an optional microphone 462, one or more optional intensity sensors 464 for detecting intensity of contacts on the device 102 (e.g., a touch-sensitive surface such as a touch-sensitive display system 408 of the device 102), an optional input/output (I/O) subsystem 466, one or more optional optical sensors 473, one or more communication buses 412 for interconnecting the aforementioned components, and a power system 418 for powering the aforementioned components.

In typical embodiments, the input 410 is a touch-sensitive display, such as a touch-sensitive surface. In some embodiments, the user interface 406 includes one or more soft keyboard embodiments. The soft keyboard embodiments may include standard (QWERTY) and/or non-standard configurations of symbols on the displayed icons.

Device 102 optionally includes, in addition to accelerometer(s) 417, a magnetometer (not shown) and a GPS 419 (or GLONASS or other global navigation system) receiver for obtaining information concerning the location and orientation (e.g., portrait or landscape) of device 102.

It should be appreciated that device 102 is only one example of a multifunction device that may be used, and that device 102 optionally has more or fewer components than shown, optionally combines two or more components, or optionally has a different configuration or arrangement of the components. The various components shown in FIG. 2 are implemented in hardware, software, firmware, or a combination thereof, including one or more signal processing and/or application-specific integrated circuits.

Memory 407 optionally includes high-speed random access memory and optionally also includes non-volatile memory, such as one or more magnetic disk storage devices, flash memory devices, or other non-volatile solid-state memory devices. Access to memory 407 by other components of device 102, such as CPU(s) 402, is optionally controlled by memory controller 468.

Peripherals interface 470 can be used to couple input and output peripherals of the device to CPU(s) 402 and memory 407. The one or more processors 402 run or execute various software programs and/or sets of instructions stored in memory 407 to perform various functions for device 102 and to process data.

In some embodiments, peripherals interface 470, CPU(s) 402, and memory controller 468 are, optionally, implemented on a single chip. In some other embodiments, they are, optionally, implemented on separate chips.

RF (radio frequency) circuitry 108 of network interface 420 receives and sends RF signals, also called electromagnetic signals. RF circuitry 108 converts electrical signals to/from electromagnetic signals and communicates with communications networks and other communications devices via the electromagnetic signals. RF circuitry 420 optionally includes well-known circuitry for performing these functions, including but not limited to an antenna system, an RF transceiver, one or more amplifiers, a tuner, one or more oscillators, a digital signal processor, a CODEC chipset, a subscriber identity module (SIM) card, memory, and so forth. RF circuitry 108 optionally communicates with networks 106. In some embodiments, circuitry 108 does not include RF circuitry and, in fact, is connected to network 106 through one or more hard wires (e.g., an optical cable, a coaxial cable, or the like).

Examples of networks 106 include, but are not limited to, the World Wide Web (WWW), an intranet and/or a wireless network, such as a cellular telephone network, a wireless local area network (LAN) and/or a metropolitan area network (MAN), and other devices by wireless communication. The wireless communication optionally uses any of a plurality of communications standards, protocols and technologies, including but not limited to Global System for Mobile Communications (GSM), Enhanced Data GSM Environment (EDGE), high-speed downlink packet access (HSDPA), high-speed uplink packet access (HSUPA), Evolution, Data-Only (EV-DO), HSPA, HSPA+, Dual-Cell HSPA (DC-HSPA), long term evolution (LTE), near field communication (NFC), wideband code division multiple access (W-CDMA), code division multiple access (CDMA), time division multiple access (TDMA), Bluetooth, Wireless Fidelity (Wi-Fi) (e.g., IEEE 802.11a, IEEE 802.11ac, IEEE 802.11ax, IEEE 802.11b, IEEE 802.11g and/or IEEE 802.11n), voice over Internet Protocol (VoIP), Wi-MAX, a protocol for e-mail (e.g., Internet message access protocol (IMAP) and/or post office protocol (POP)), instant messaging (e.g., extensible messaging and presence protocol (XMPP), Session Initiation Protocol for Instant Messaging and Presence Leveraging Extensions (SIMPLE), Instant Messaging and Presence Service (IMPS)), and/or Short Message Service (SMS), or any other suitable communication protocol, including communication protocols not yet developed as of the filing date of this document.

In some embodiments, audio circuitry 472, speaker 460, and microphone 462 provide an audio interface between a user and device 102. The audio circuitry 472 receives audio data from peripherals interface 470, converts the audio data to an electrical signal, and transmits the electrical signal to speaker 460. Speaker 460 converts the electrical signal to human-audible sound waves. Audio circuitry 472 also receives electrical signals converted by microphone 462 from sound waves. Audio circuitry 472 converts the electrical signal to audio data and transmits the audio data to peripherals interface 470 for processing. Audio data is, optionally, retrieved from and/or transmitted to memory 407 and/or RF circuitry 420 by peripherals interface 470.

In some embodiments, power system 418 optionally includes a power management system, one or more power sources (e.g., battery, alternating current (AC)), a recharging system, a power failure detection circuit, a power converter or inverter, a power status indicator (e.g., a light-emitting diode (LED)) and any other components associated with the generation, management and distribution of power in portable devices.

In some embodiments, the device 102 optionally also includes one or more optical sensors 473. Optical sensor(s) 473 optionally include charge-coupled device (CCD) or complementary metal-oxide semiconductor (CMOS) phototransistors. Optical sensor(s) 473 receive light from the environment, projected through one or more lenses, and convert the light to data representing an image. In conjunction with imaging module 431 (also called a camera module), optical sensor(s) 473 optionally capture still images and/or video. In some embodiments, an optical sensor is located on the back of device 102, opposite display system 408 on the front of the device, so that the touch screen is enabled for use as a viewfinder for still and/or video image acquisition.

As illustrated in FIG. 2, a device 102 preferably comprises an operating system 40 (e.g., iOS, DARWIN, RTXC, LINUX, UNIX, OS X, WINDOWS, or an embedded operating system such as VxWorks), which includes various software components and/or drivers for controlling and managing general system tasks (e.g., memory management, storage device control, power management, etc.) and facilitates communication between various hardware and software components. The device further optionally comprises a file system 42, which may be a component of operating system 40, for managing files stored or accessed by the computing device 102. Further still, the device 102 comprises a game module 44 for providing a user with a game having the disclosed improved shooter controls. In some embodiments, the device 102 comprises a communications module (or instructions) 46 for connecting the device 102 with other devices (e.g., the gaming server 106 and the devices 102B . . . 102N) via one or more network interfaces 420 (wired or wireless), and/or the communication network 104 (FIG. 1).

Further still, in some embodiments, the device 102 comprises a user profile 48 for tracking aspects of the user. Exemplary aspects include a description of one or more virtual weapons 604-K and, for each such virtual weapon, a virtual weapon status 52 and/or virtual weapon characteristics 54 (e.g., firing rate, firepower, reload rate, etc.); game credit 60 across one or more game credit classes 62 (e.g., a first game credit class through an Nth game credit class, where N is a positive integer greater than one); a health/tier level 64; and/or a description of one or more items 66 and, for each such item 66, the item characteristics.
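The profile contents listed above can be pictured as a small record type. The following Python sketch is illustrative only: the class names, field names, and the `total_credit` helper are hypothetical and are not part of the disclosure; only the correspondence to elements 604-K, 52, 54, 60, 62, 64, and 66 comes from the text.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class WeaponEntry:
    """One virtual weapon 604-K held by the user (hypothetical field names)."""
    name: str
    status: int  # weapon status 52, e.g. rounds left in the clip
    characteristics: Dict[str, float] = field(default_factory=dict)  # characteristics 54


@dataclass
class UserProfile:
    """Illustrative layout of user profile 48 (or 338 on the server)."""
    weapons: List[WeaponEntry] = field(default_factory=list)
    game_credit: Dict[str, int] = field(default_factory=dict)  # credit 60 keyed by class 62
    tier_level: int = 1  # health/tier level 64
    items: Dict[str, Dict[str, float]] = field(default_factory=dict)  # item 66 -> characteristics

    def total_credit(self) -> int:
        """Sum game credit across all credit classes."""
        return sum(self.game_credit.values())


profile = UserProfile(
    weapons=[WeaponEntry("Commando XM-7", status=30,
                         characteristics={"firing_rate": 10.0, "reload_rate": 2.0})],
    game_credit={"class_1": 500, "class_2": 20},
)
print(profile.total_credit())  # 520
```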

In some implementations, one or more of the above identified elements are stored in one or more of the previously mentioned memory devices, and correspond to a set of instructions for performing a function described above. The above identified modules or programs (e.g., sets of instructions) need not be implemented as separate software programs, procedures or modules, and thus various subsets of these modules may be combined or otherwise re-arranged in various implementations. In some implementations, the memory 407 optionally stores a subset of the modules and data structures identified above. Furthermore, the memory 407 may store additional modules and data structures not described above.

FIG. 3 is an example block diagram illustrating a gaming server 106 in accordance with some implementations of the present disclosure. The gaming server 106 typically includes one or more processing units CPU(s) 302 (also referred to as processors), one or more network interfaces 304, memory 310, and one or more communication buses 308 for interconnecting these components. The communication buses 308 optionally include circuitry (sometimes called a chipset) that interconnects and controls communications between system components. The memory 310 includes high-speed random access memory, such as DRAM, SRAM, DDR RAM or other random access solid state memory devices, and optionally includes non-volatile memory, such as one or more magnetic disk storage devices, optical disk storage devices, flash memory devices, or other non-volatile solid state storage devices. The memory 310 optionally includes one or more storage devices remotely located from CPU(s) 302. The memory 310, or alternatively the non-volatile memory device(s) within the memory 310, comprises a non-transitory computer readable storage medium. In some implementations, the memory 310 or alternatively the non-transitory computer readable storage medium stores the following programs, modules and data structures, or a subset thereof:

- an operating system 312, which includes procedures for handling various basic system services and for performing hardware dependent tasks;
- optionally, a file system 314, which may be a component of operating system 312, for managing files stored or accessed by the gaming server 106;
- a network communication module (or instructions) 316 for connecting the server 106 with other devices (e.g., the computing devices 102) via the one or more network interfaces 304 (wired or wireless) or the communication network 104 (FIG. 1);
- a gaming server module 318 for managing a plurality of instances of a game, each instance corresponding to a different participant (user of a device 102), and for tracking user activities within such games;
- an optional virtual weapon database 320 for storing information regarding a plurality of virtual weapons 604 and, for each such virtual weapon 604, information such as the cost 324 and/or the characteristics 326 of the virtual weapon;
- an item database 328 to track the items 330 that are supported by the game as well as the costs 332 and the characteristics 334 of such items;
- a user profile database 336 that stores a user profile 338 for each user of the game;
- an optional scene database 340 that stores a description of each scene 606 (e.g., the scene illustrated in FIG. 6) that is hosted by the system (e.g., by campaigns 348), including, for each such scene 606, information 344 regarding the scene such as the opponents 704 (e.g., as illustrated in FIG. 7) that appear in the scene 606; and
- a campaign database 346 for storing the campaigns 348 that may be offered to the gaming server module 318.

In some implementations, one or more of the above identified elements are stored in one or more of the previously mentioned memory devices, and correspond to a set of instructions for performing a function described above. The above identified modules or programs (e.g., sets of instructions) need not be implemented as separate software programs, procedures or modules, and thus various subsets of these modules may be combined or otherwise re-arranged in various implementations. In some implementations, the memory 310 optionally stores a subset of the modules and data structures identified above. Furthermore, the memory 310 may store additional modules and data structures not described above.

Although FIGS. 2 and 3 show a “computing device 102” and a “gaming server 106,” respectively, FIGS. 2 and 3 are intended more as a functional description of the various features that may be present in computer systems than as a structural schematic of the implementations described herein. In practice, and as recognized by those of ordinary skill in the art, items shown separately could be combined and some items could be separated.

FIG. 4 is a flow chart illustrating a method for playing a game, e.g., using a computing device 102 and/or gaming server 106, in accordance with some implementations. In some implementations, a user initiates (401), at the computing device 102 (e.g., computing device 102A), an instruction to start the video game. In response, gaming server 106 identifies (411) the user profile 338 associated with the user who just initiated the video game. In some alternative embodiments (not shown), some or all of the components of the user profile are obtained from user profile 48 stored locally on the device 102 rather than from the server. In still other embodiments, some components of the user profile are obtained from user profile 48 of device 102, whereas other components of the user profile are obtained from user profile 338.
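For the embodiments in which some profile components come from the local user profile 48 and others from the server-side user profile 338, one possible merge policy is sketched below in Python. The function name `assemble_profile`, the dictionary representation, and the rule of falling back to the server copy when the device lacks a component are all assumptions for illustration.

```python
from typing import Any, Dict, Iterable


def assemble_profile(local: Dict[str, Any], server: Dict[str, Any],
                     local_keys: Iterable[str]) -> Dict[str, Any]:
    """Build one profile view from local profile 48 and server profile 338.

    Components named in local_keys are taken from the device; every other
    component comes from the server (hypothetical policy).
    """
    local_keys = set(local_keys)
    merged = {k: v for k, v in server.items() if k not in local_keys}
    for key in local_keys:
        if key in local:
            merged[key] = local[key]
        elif key in server:
            # The device does not hold this component, so use the server copy.
            merged[key] = server[key]
    return merged


local_profile = {"weapons": ["Commando XM-7"], "credit": 10}
server_profile = {"credit": 500, "tier": 3, "title": "Rookie"}
print(assemble_profile(local_profile, server_profile, ["weapons", "nickname"]))
```

Here the credit balance comes from the server because "credit" is not listed as a locally sourced component, and "nickname" is omitted because neither profile holds it.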

Next, an initial user interface (e.g., a scene 606, FIG. 6) is provided to the user to enable the user to earn items, item upgrades, weapons, or weapon upgrades (403). FIG. 6 provides a screen shot of an example initial scene 606 that is presented to the user via computing device 102 when the user has initiated the game. Referring to FIG. 6, a virtual character 602 that is controlled by the user is depicted. In some embodiments, the user is able to customize characteristics of the virtual character 602, and these customizations are stored in the user profile of the user on computing device 102 and/or gaming server 106. In some embodiments, the name of the user is displayed. When the user selects the name, the user is able to designate a title for the name. Accordingly, in some embodiments, aspects of the user profile are updated in accordance with customizations and/or purchases made by the user (405).

Continuing in FIG. 4 and with reference to FIG. 7, in some embodiments, a goal of the game is to accumulate game credit by neutralizing opponents 704 and other defenses in scenes 606 of campaigns 348 (407). Exemplary defenses include, but are not limited to, guards that are adverse to the user and use weapons against the user's virtual character 602. To ward off and neutralize these defenses, in some embodiments, the user selects an item, such as a weapon 604. For instance, referring to FIG. 6, in the initial user interface, the default weapon for the illustrated user could be a Commando XM-7. Neutralizing a scene 606 is not an easy task; it requires skill on the part of the user as well as good weapons. In some embodiments, the user has no choice as to which weapon 604 they may use. In other embodiments, the user can upgrade the weapon 604 and/or characteristics of the weapon in exchange for game credit. In some embodiments, some of the weapon characteristic upgrades (or other forms of item characteristic upgrades) are locked until the user advances to a higher tier. As such, in some embodiments of the present disclosure, some item characteristic upgrades are not available or are locked even though the user may have sufficient game credit. In some embodiments, a weapon 604 characteristic is the amount of damage the weapon will inflict when it hits a target. In some embodiments, damage is rated on a numeric scale, such as 1 to 24, with higher numbers representing more significant damage, and the user is able to exchange game credit for a larger number on this scale. Referring to FIG. 2, in some embodiments, the fact that a user possesses a particular virtual weapon is stored as element 604 in their user profile 48, and the weapon characteristics, such as the damage number, are stored as weapon characteristics 54.
In some embodiments, other characteristics of a weapon 604 that are numerically ranked and individually stored as weapon characteristics 54 include recoil power, range, accuracy, critical hit chance, reload time, ammunition clip size, and/or critical damage multiplier.
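The tier lock and the credit-for-damage exchange described above can be illustrated with a short Python sketch. The one-step-per-purchase rule, the `UpgradeOffer` type, and its `cost` and `required_tier` fields are hypothetical; only the 1-to-24 damage scale is the example given in the text.

```python
from dataclasses import dataclass
from typing import Tuple

DAMAGE_MAX = 24  # top of the example 1-to-24 damage scale in the text


@dataclass(frozen=True)
class UpgradeOffer:
    cost: int           # game credit required (hypothetical)
    required_tier: int  # tier at which the upgrade unlocks (hypothetical)


def try_upgrade_damage(damage: int, credit: int, tier: int,
                       offer: UpgradeOffer) -> Tuple[int, int, bool]:
    """Return (new_damage, remaining_credit, upgraded)."""
    if tier < offer.required_tier:
        # Locked until a higher tier, even when credit is sufficient.
        return damage, credit, False
    if credit < offer.cost or damage >= DAMAGE_MAX:
        return damage, credit, False
    # Exchange game credit for one step up the damage scale.
    return damage + 1, credit - offer.cost, True


offer = UpgradeOffer(cost=50, required_tier=2)
print(try_upgrade_damage(10, 100, 1, offer))  # (10, 100, False): tier-locked
print(try_upgrade_damage(10, 100, 2, offer))  # (11, 50, True)
```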

Referring back to FIG. 4, in some embodiments, upon successful completion of a campaign 348 (409) or upon unsuccessful completion of a campaign 348 (411), aspects of the user profile 48/338 are updated in accordance with the outcome of the most recent campaign (413).

In some embodiments, the user can select new weapons 604 (e.g., assault rifles, sniper rifles, shotguns, tesla rifles, “equipment” such as knives, etc.). Items purchased by the user and item upgrades made by the user are stored in the user's profile 48/338. Further, the user's profile 48/338 is updated to reflect the usage of game credit for these items and/or item upgrades. In one example, the item is armor, the item characteristic is armor strength on a numeric scale, and the item upgrade is an improvement in the armor strength on that scale. The user selects a campaign 348 in order to acquire game credit (406). In a campaign, the user manipulates the virtual character 602, which is posed against a plurality of opponents 704 in a scene 606 in an action format in which the virtual character 602 and the plurality of opponents 704 are adverse to each other and use weapons against each other (e.g., fire weapons at each other).

In some such embodiments, the virtual character 602 has an ability to fire a projectile weapon (e.g., fire a gun, light crossbow, sling, heavy crossbow, shortbow, composite shortbow, longbow, composite longbow, hand crossbow, repeating crossbow, etc.) at opponents 704.

In some such embodiments, the virtual character 602 has an ability to swing a weapon (e.g., glaive, guisarme, lance, longspear, ranseur, spiked chain, whip, shuriken, gauntlet, dagger, shortspear, falchion, longsword, bastard sword, greataxe, greatsword, dire flail, dwarven urgrosh, gnome hooked hammer, orc double axe, quarterstaff, two-bladed sword, etc.) at opponents 704 in the scene 606.

In some such embodiments, the virtual character 602 has an ability to throw a weapon (e.g., daggers, clubs, shortspears, spears, darts, javelins, throwing axes, light hammers, tridents, shuriken, net, etc.) at opponents 704 in the scene 606.

FIG. 5 is an example flow chart illustrating a method (500), in accordance with embodiments of the present disclosure, that provides improved shooter controls for moving virtual characters in and out of cover in action video games involving adverse targets. FIG. 5 makes reference to FIG. 13, which illustrates a campaign database 346 associated with the video game in accordance with an embodiment of the present disclosure.

Referring to block 502, a method is performed at a client device 102 comprising a touch screen display 408, one or more processors 402, and memory 407, in an application (e.g., game module 44 of FIG. 2) running on the client device 102. The application 44 is associated with a user. For instance, the user has provided a login and/or password to the client device 102 for identification purposes and to access the profile of the user.

The application 44 includes a first virtual character 602 that is associated with a categorical cover status 1304 selected from the set {“cover” and “active”}. The cover status “cover” is illustrated by the virtual character 602 in FIGS. 6, 7, and 9.

The cover status “cover” is associated with a first plurality of images 1306 of the first virtual character 602 stored in the client device 102 or accessible to the client device from a remote location (e.g., in the campaign database 346 of gaming server 106). Each image 1308 of the first plurality of images 1306 is of the first virtual character 602 in a cover position. For instance, the portion of the figure illustrating the virtual character 602 in each of FIGS. 6, 7, and 9 constitutes an image 1308 in the first plurality of images 1306.

The cover status “active” is associated with a second plurality of images 1310 of the first virtual character 602 stored in the client device 102 or accessible to the client device from a remote location (e.g., in the campaign database 346 of gaming server 106). Each image 1312 of the second plurality of images 1310 is of the first virtual character 602 in an active position. For instance, the portion of the figure illustrating the virtual character 602 in FIG. 8 constitutes an image 1312 in the second plurality of images 1310.
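One way to tie the categorical cover status 1304 to the two image sets is a lookup table keyed by status, as in this Python sketch. The file names stand in for images 1308 and 1312 and are invented for illustration, as is the frame-cycling helper `frame_for`.

```python
from enum import Enum
from typing import Dict, List


class CoverStatus(Enum):
    COVER = "cover"
    ACTIVE = "active"


# Hypothetical file names standing in for images 1308 (cover) and 1312 (active).
CHARACTER_IMAGES: Dict[CoverStatus, List[str]] = {
    CoverStatus.COVER: ["cover_0.png", "cover_1.png"],                     # first plurality 1306
    CoverStatus.ACTIVE: ["active_0.png", "active_1.png", "active_2.png"],  # second plurality 1310
}


def frame_for(status: CoverStatus, tick: int) -> str:
    """Cycle through the image set for the current cover status.

    Showing successive images conveys movement; a set with a single
    image simply returns that image every tick.
    """
    frames = CHARACTER_IMAGES[status]
    return frames[tick % len(frames)]


print(frame_for(CoverStatus.ACTIVE, 4))  # active_1.png
```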

Referring to block 504 of FIG. 5A, a scene 606 is displayed on the touch screen display 408 of the client device 102. The scene 606 is obtained from a plurality of scene images 1314 that are stored in the client device 102 or are accessible to the client device from a remote location. For instance, in some embodiments, the scenes 606 are stored in campaign database 346 of the gaming server 106. In still other embodiments, the scenes 606 are stored in scene database 340 of the gaming server 106. Each of FIGS. 6 through 9 illustrates a different scene 606 in a campaign 348 of the game module 44. Referring to block 506 of FIG. 5, non-limiting examples of scenes 606 include parking lots, vehicle garages, warehouse spaces, vaults, factories, laboratories, armories, passageways, offices, torture chambers, jail cells, missile silos, and dungeons. In FIGS. 6 through 9, the scene 606 is that of a passageway between buildings.

Referring to block 508 of FIG. 5A, the method continues by obtaining a weapon status 52 of a virtual weapon 604 in the gaming application (e.g., game module 44) from a user profile 48 and/or 338 associated with the user. The user profile is stored in the client device (as user profile 48) and/or is accessible to the client device 102 from a remote location (e.g., from the gaming server 106 as user profile 338). In some embodiments, the user profile refers to the campaign database 346, such as the campaign database illustrated in FIG. 13, to obtain the weapon status 52 of a virtual weapon 604. In some embodiments, the user profile refers to the virtual weapon database 320 on the gaming server 106 to obtain the weapon status 52 of a virtual weapon 604.

Referring to block 510 of FIG. 5A, the method continues with the provision of a first affordance region 608 and a second affordance region 702 on the scene 606. In some embodiments, the first and second affordance regions are visible to the user (512). That is, there is some demarcation on the display to denote the boundaries of the affordances. In some embodiments, the first affordance region 608 is not visible to the user and the second affordance region is visible to the user (514). Such an embodiment is illustrated in FIG. 7. That is, in FIG. 7, the first affordance region 608 is not visible to the user, in the sense that the user does not see any markings of the boundary of the first affordance, whereas the second affordance region 702 is visible to the user in that the user can see the boundary of the second affordance 702. For completeness, in some embodiments, the first affordance region 608 is visible to the user and the second affordance region 702 is not visible to the user.
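Because visibility only controls whether a boundary is drawn, touch handling can treat visible and invisible affordance regions identically. The Python sketch below assumes rectangular regions and a hypothetical layout in which the second affordance 702 is a fire button lying inside a full-screen first affordance 608; the fire button is checked first so that it takes precedence where the two overlap. The `Affordance` type and `hit_test` function are illustrative names, not part of the disclosure.

```python
from dataclasses import dataclass
from typing import Optional, Sequence


@dataclass(frozen=True)
class Affordance:
    name: str
    x: float
    y: float
    width: float
    height: float
    visible: bool  # whether a boundary is drawn; does not affect hit testing

    def contains(self, px: float, py: float) -> bool:
        return (self.x <= px < self.x + self.width
                and self.y <= py < self.y + self.height)


def hit_test(affordances: Sequence[Affordance], px: float, py: float) -> Optional[str]:
    """Return the name of the first affordance containing the touch point."""
    for a in affordances:
        if a.contains(px, py):
            return a.name
    return None


# Hypothetical 1000 x 600 layout: a visible fire button over an invisible aim area.
fire = Affordance("second", 850, 450, 120, 120, visible=True)
aim = Affordance("first", 0, 0, 1000, 600, visible=False)
print(hit_test([fire, aim], 900, 500))  # second
print(hit_test([fire, aim], 100, 100))  # first
```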

Referring to block 518 of FIG. 5A, the first affordance is used to change the orientation of the scene 606. Responsive to contact with the first affordance region by the user, the orientation of the scene changes (e.g., in pitch and/or yaw and/or roll). This is illustrated in FIG. 6, for example, where the user is instructed to drag their thumb across the screen to aim their virtual weapon 604. Because the first affordance 608 is not visible to the user, a picture of a thumb over the first affordance and the direction in which the thumb should be dragged is provided.
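The text does not specify how a drag across the first affordance 608 maps to a change in scene orientation. A minimal Python sketch, assuming a linear sensitivity in degrees per pixel, yaw that wraps at 360 degrees, and pitch clamped to a hypothetical limit, could look like this (roll is omitted). The `Orientation` type, `apply_drag`, and both default parameters are illustrative assumptions.

```python
from dataclasses import dataclass


@dataclass
class Orientation:
    yaw: float = 0.0    # degrees
    pitch: float = 0.0  # degrees


def apply_drag(o: Orientation, dx: float, dy: float,
               sensitivity: float = 0.1, pitch_limit: float = 60.0) -> Orientation:
    """Map a drag delta in screen pixels to a new scene orientation.

    Screen y grows downward, so dragging up (dy < 0) raises the pitch.
    Yaw wraps around 360 degrees; pitch is clamped to +/- pitch_limit.
    """
    yaw = (o.yaw + dx * sensitivity) % 360.0
    pitch = max(-pitch_limit, min(pitch_limit, o.pitch - dy * sensitivity))
    return Orientation(yaw, pitch)


print(apply_drag(Orientation(yaw=355.0), dx=100, dy=0))  # yaw wraps to about 5 degrees
```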

Referring to block 520 of FIG. 5B, responsive to contact with the second affordance region 702 by the user when the virtual weapon status 52 is in a first value range that is distinct from a second value range, a firing process is performed. For instance, consider the case where the weapon 604 is a gun. The first value range may represent the gun being fully or partially loaded, while the second value range may represent the gun being out of bullets. In another case, the weapon is a laser, and the first value range indicates that the laser is sufficiently charged for use as a weapon, whereas the second value range indicates that the laser needs recharging.
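The two value ranges can be expressed as a classification function per weapon type. In this Python sketch the gun rule (any rounds left means the first value range) follows the example in the text, while the laser's `min_charge` threshold of 0.2 is a hypothetical value standing in for "sufficiently charged"; the function names are likewise illustrative.

```python
from enum import Enum


class WeaponRange(Enum):
    FIRST = "first value range"    # usable: loaded, partially loaded, or charged
    SECOND = "second value range"  # needs reloading or recharging


def gun_range(rounds_in_clip: int) -> WeaponRange:
    """A gun with any rounds left is in the first value range."""
    return WeaponRange.FIRST if rounds_in_clip > 0 else WeaponRange.SECOND


def laser_range(charge: float, min_charge: float = 0.2) -> WeaponRange:
    """charge is a fraction in [0, 1]; min_charge is a hypothetical threshold."""
    return WeaponRange.FIRST if charge >= min_charge else WeaponRange.SECOND


print(gun_range(0).value)  # second value range
```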

Referring to block 522 of FIG. 5B, in some embodiments the firing process comprises i) displaying one or more images 1312 from the second plurality of images 1310 of the first virtual character 602 on the scene 606, ii) firing the virtual weapon 604 into the scene 606, iii) updating the weapon status 52 to account for the firing of the virtual weapon, and iv) performing a status check. The second plurality of images 1310 of the first virtual character 602 are images of the character 602 in an active position. In some embodiments, there is only one image 1312 of the virtual character 602 in the active state. In some embodiments, there are several images of the virtual character 602 in the active state, and these images are shown in succession to convey movement by the virtual character 602 during the firing process.

Referring to block 524 of FIG. 5B, in typical embodiments a third plurality of images is stored in the client device 102 or is accessible to the client device from a remote location. For instance, in some embodiments the third plurality of images is stored in the campaign database 346 illustrated in FIG. 13 as images 1316 under defense/offense mechanisms 1314. The third plurality of images represents one or more defense (or offense) mechanisms 1314 that shoot at the first virtual character 602. The method further comprises displaying one or more images 1316 from the third plurality of images on the scene 606. In typical embodiments, the action of the defense mechanisms 1314 is independent of the cover status of the first virtual character 602. FIG. 7 illustrates this: two opponents 704 attack the virtual character 602 regardless of whether the virtual character 602 is active or in cover. As such, referring to block 526 of FIG. 5B, FIG. 7 illustrates an embodiment of the present disclosure in which the displaying of one or more images 1316 from the third plurality of images displays a second virtual character 704 that moves around in the scene 606. FIGS. 8 and 9 provide further illustrations of such an embodiment of the present disclosure.

Referring to block 528 of FIG. 5B, in some embodiments, the displaying of the one or more images 1316 from the third plurality of images displays a gun or cannon that is fixed within the scene 606. Although this is not illustrated in FIGS. 6 through 9, it will be appreciated that in some embodiments, in addition to or instead of opponents 704, there are fixed weapons that shoot at the virtual character 602.

Referring to block 530 of FIG. 5B, in some embodiments the first virtual character 602 is subject to termination in a campaign 348 when shot a predetermined number of times by the one or more defense mechanisms.
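Termination after a predetermined number of hits amounts to a simple counter. This Python sketch is illustrative; the class name and the `max_hits` parameter are hypothetical, and the predetermined number itself is not given in the text.

```python
class CharacterHealth:
    """Track hits on the first virtual character (hypothetical helper)."""

    def __init__(self, max_hits: int) -> None:
        self.max_hits = max_hits  # the predetermined number of hits
        self.hits = 0

    def register_hit(self) -> bool:
        """Record one hit from a defense mechanism; return True once terminated."""
        self.hits += 1
        return self.is_terminated()

    def is_terminated(self) -> bool:
        return self.hits >= self.max_hits


health = CharacterHealth(max_hits=3)
print([health.register_hit() for _ in range(3)])  # [False, False, True]
```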

Referring to block 532 of FIG. 5B, in some embodiments the firing of the virtual weapon 604 into the scene 606 destroys the one or more defense mechanisms. In such embodiments, the method further comprises advancing the first virtual character 602 from a first position in the scene to a second position in the scene. For instance, referring to FIG. 7, after the first virtual character 602 shoots opponents 704-1 and 704-2, the virtual character is advanced from position 740A to position 740B in the scene 606.

Referring to block 534 of FIG. 5B, in some embodiments, the firing of the virtual weapon 604 into the scene 606 destroys the one or more defense mechanisms, and the method further comprises changing the scene 606 on the touch screen display to a new scene 606 from the plurality of scene images 1314. For instance, referring to FIG. 7, after the first virtual character 602 shoots opponents 704-1 and 704-2, the virtual character is advanced from position 740A of FIG. 7 to a new position in a new scene 606.
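Blocks 532 and 534 together suggest a simple progression rule: once the defense mechanisms at the current position are destroyed, move to the next position in the scene, or to a new scene when the positions in the current scene are used up. The Python sketch below encodes that rule under a hypothetical layout in which each scene has a fixed, known number of positions (for example, positions 740A and 740B); the text does not say how positions are represented, and `next_location` is an illustrative name.

```python
from typing import List, Tuple


def next_location(scene_index: int, position_index: int,
                  positions_per_scene: List[int]) -> Tuple[int, int]:
    """Return the (scene, position) to advance to after a position is cleared.

    positions_per_scene[i] is the number of positions in scene i (hypothetical).
    A returned scene index equal to len(positions_per_scene) means the
    campaign has no further scenes.
    """
    if position_index + 1 < positions_per_scene[scene_index]:
        # Advance within the same scene (e.g., from 740A to 740B).
        return scene_index, position_index + 1
    # Change to the first position of the next scene.
    return scene_index + 1, 0


print(next_location(0, 1, [2, 3]))  # (1, 0)
```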

Referring to block 536 of FIG. 5C, in some embodiments, the firing of the virtual weapon 604 in the firing process takes place only while the second affordance 702 is in contact with the user, and the firing process terminates immediately when the second affordance 702 becomes free of user contact.

Referring to block 538 of FIG. 5C, in some embodiments, the firing of the virtual weapon 604 completes and the weapon status 52 is updated upon the earlier of (i) the second affordance 702 becoming free of user contact and (ii) the elapse of a predetermined amount of time (e.g., one second or less, 500 milliseconds or less, 100 milliseconds or less, etc.). Such embodiments are useful when the virtual weapon 604 has a predetermined amount of firing power that can be measured in time. An example of such an embodiment is a machine gun with a set firing rate and a predetermined clip size. Such embodiments are not useful when the virtual weapon 604 has a predetermined amount of firing power that is measured in a number of shots fired and each shot is fired on a non-automatic basis.
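For a time-metered weapon such as the machine gun in this example, the outcome of block 538 can be computed from the hold time, the predetermined time limit, the firing rate, and the clip size. The Python sketch below assumes whole rounds fired at a constant rate; the function name and its parameters are hypothetical.

```python
import math
from typing import Tuple


def complete_burst(hold_ms: float, max_burst_ms: float,
                   rounds_per_second: float, rounds_in_clip: int) -> Tuple[float, int]:
    """Return (burst_duration_ms, rounds_remaining).

    The burst ends at the earlier of the user's release (hold_ms) and the
    predetermined limit (max_burst_ms). Rounds are fired at a fixed rate and
    can never exceed what is left in the clip.
    """
    duration_ms = min(hold_ms, max_burst_ms)
    rounds_fired = min(rounds_in_clip,
                       math.floor(duration_ms * rounds_per_second / 1000.0))
    return duration_ms, rounds_in_clip - rounds_fired


print(complete_burst(300, 500, 10, 30))   # released early: (300, 27)
print(complete_burst(2000, 500, 10, 30))  # time limit reached: (500, 25)
```

The updated round count is what would be written back to the weapon status 52.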

Block 540 of FIG. 5C refers to a status check that is part of the firing process of block 522 of FIG. 5B. In some embodiments, this status check is performed on a continual basis throughout the firing process 522. In some embodiments, this status check is performed on a recurring basis (e.g., every millisecond, every 10 milliseconds, every 500 milliseconds, and so forth). In some embodiments, the status check comprises evaluating the state of the first affordance region 608, the second affordance region 702, and the weapon 604. The status check further comprises evaluating the number of defense/offense mechanisms (targets) 1314 remaining and the cover status of the virtual character 602. In some embodiments, the status check is embodied in the truth table illustrated in FIG. 12. The status check of block 540 of FIG. 5C has the following outcomes.

When (i) the first affordance region 608 and the second affordance region 702 are free of user contact or (ii) the second affordance 702 is presently in contact with the user and the weapon status 52 is deemed to be in the second value range (indicating that the weapon needs to be reloaded), the cover status 1304 of the virtual character 602 is deemed to be “cover”, firing of the virtual weapon 604 into the scene 606 is terminated, display of the one or more images 1312 from the second plurality of images 1310 of the first virtual character 602 is terminated, and one or more images 1308 from the first plurality of images 1306 of the first virtual character 602 are displayed. Thus, for instance, the virtual character 602 stops firing the weapon 604 and ducks into cover (e.g., a transition from FIG. 8 to FIG. 9 happens).

Or, when the second affordance 702 is presently in contact with the user and the weapon status 52 is in the first value range (indicating that the weapon is loaded or partially loaded), the firing process is repeated. For instance, the process illustrated in FIG. 8 is either continued for a period of time or repeated.

Or, when (i) the first affordance 608 is presently in contact with the user, (ii) the weapon status 52 is in the first value range (indicating that the weapon is loaded or partially loaded), and (iii) the second affordance 702 is not in contact with the user, the firing process is repeated upon user contact with the second affordance region 702. That is, the cover status 1304 is not changed (the virtual character remains either active or in cover), but the weapon is not fired until the user contacts the second affordance region 702.
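The three outcomes of the status check can be written as a single decision function. This Python sketch is an interpretation of the prose description, not a reproduction of the truth table of FIG. 12, which is not included in this text. The type and function names are hypothetical, and the combination of first-affordance contact with a weapon in the second value range, which the text does not address, is assumed here to leave the cover status unchanged without firing.

```python
from dataclasses import dataclass
from enum import Enum


class CoverStatus(Enum):
    COVER = "cover"
    ACTIVE = "active"


@dataclass(frozen=True)
class StatusResult:
    cover_status: CoverStatus
    firing: bool


def status_check(first_touched: bool, second_touched: bool,
                 weapon_in_first_range: bool, current: CoverStatus) -> StatusResult:
    # (i) neither affordance is touched, or (ii) fire is held but the weapon
    # needs reloading: stop firing and duck into cover.
    if (not first_touched and not second_touched) or \
            (second_touched and not weapon_in_first_range):
        return StatusResult(CoverStatus.COVER, firing=False)
    # Fire is held and the weapon is usable: repeat or continue firing.
    if second_touched:
        return StatusResult(CoverStatus.ACTIVE, firing=True)
    # Only the aim affordance is touched: keep the current cover status and
    # hold fire until the second affordance is contacted. (Assumed to apply
    # regardless of weapon range; the text covers only the first range.)
    return StatusResult(current, firing=False)


print(status_check(False, True, True, CoverStatus.COVER))
```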

In some embodiments, when a user neutralizes the opponents (defense/offense mechanisms 1314) in a scene 606, or all the opponents in a campaign, within a predefined time period (e.g., the campaign 348 is successfully completed), the user is awarded a first amount of game credit. In some embodiments, when a user fails to neutralize the opponents (defense/offense mechanisms 1314) in a scene 606, or all the opponents in a campaign, within a predefined time period (e.g., the campaign 348 is not successfully completed), the user is awarded no game credit. In some embodiments, no time constraint is imposed on the user, and the user can take as long as they want to complete a campaign 348.
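The award rule above reduces to a single condition on completion and elapsed time. In this Python sketch, `award_credit` and its parameters are hypothetical names; passing `None` as the time limit models the embodiments with no time constraint.

```python
from typing import Optional


def award_credit(all_neutralized: bool, elapsed_s: float,
                 time_limit_s: Optional[float], reward: int) -> int:
    """Return the game credit earned for a scene or campaign.

    reward is the first amount of game credit; time_limit_s=None means
    no time constraint is imposed.
    """
    within_time = time_limit_s is None or elapsed_s <= time_limit_s
    return reward if all_neutralized and within_time else 0


print(award_credit(True, 95.0, 120.0, 250))   # 250
print(award_credit(True, 130.0, 120.0, 250))  # 0
```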

Throughout this disclosure, the terms profile 48 and profile 338 have been used interchangeably. While a profile 48 is found on a computing device 102 associated with a particular user and a profile 338 is found in a user profile database 336 on a gaming server 106, the present disclosure encompasses all possible variants of such a schema, including embodiments in which profile 48 does not exist or profile 338 does not exist and including embodiments in which some user information is found in profile 48 and some user information is found in profile 338. It is for this reason that the terms profile 48 and profile 338 have been used interchangeably in the present disclosure. Likewise, the terms “player” and “user” have been used interchangeably throughout the present disclosure.

Plural instances may be provided for components, operations or structures described herein as a single instance. Finally, boundaries between various components, operations, and data stores are somewhat arbitrary, and particular operations are illustrated in the context of specific illustrative configurations. Other allocations of functionality are envisioned and may fall within the scope of the implementation(s). In general, structures and functionality presented as separate components in the example configurations may be implemented as a combined structure or component. Similarly, structures and functionality presented as a single component may be implemented as separate components. These and other variations, modifications, additions, and improvements fall within the scope of the implementation(s).

It will also be understood that, although the terms “first,” “second,” etc. may be used herein to describe various elements, these elements should not be limited by these terms. These terms are only used to distinguish one element from another. For example, a first mark could be termed a second mark, and, similarly, a second mark could be termed a first mark, without changing the meaning of the description, so long as all occurrences of the “first mark” are renamed consistently and all occurrences of the “second mark” are renamed consistently. The first mark and the second mark are both marks, but they are not the same mark.

The terminology used herein is for the purpose of describing particular implementations only and is not intended to be limiting of the claims. As used in the description of the implementations and the appended claims, the singular forms “a”, “an” and “the” are intended to include the plural forms as well, unless the context clearly indicates otherwise. It will also be understood that the term “and/or” as used herein refers to and encompasses any and all possible combinations of one or more of the associated listed items. It will be further understood that the terms “comprises” and/or “comprising,” when used in this specification, specify the presence of stated features, integers, steps, operations, elements, and/or components, but do not preclude the presence or addition of one or more other features, integers, steps, operations, elements, components, and/or groups thereof.

As used herein, the term “if” may be construed to mean “when” or “upon” or “in response to determining” or “in accordance with a determination” or “in response to detecting,” that a stated condition precedent is true, depending on the context. Similarly, the phrase “if it is determined (that a stated condition precedent is true)” or “if (a stated condition precedent is true)” or “when (a stated condition precedent is true)” may be construed to mean “upon determining” or “in response to determining” or “in accordance with a determination” or “upon detecting” or “in response to detecting” that the stated condition precedent is true, depending on the context.

The foregoing description included example systems, methods, techniques, instruction sequences, and computing machine program products that embody illustrative implementations. For purposes of explanation, numerous specific details were set forth in order to provide an understanding of various implementations of the inventive subject matter. It will be evident, however, to those skilled in the art that implementations of the inventive subject matter may be practiced without these specific details. In general, well-known instruction instances, protocols, structures and techniques have not been shown in detail.

The foregoing description, for purpose of explanation, has been described with reference to specific implementations. However, the illustrative discussions above are not intended to be exhaustive or to limit the implementations to the precise forms disclosed. Many modifications and variations are possible in view of the above teachings. The implementations were chosen and described in order to best explain the principles and their practical applications, to thereby enable others skilled in the art to best utilize the implementations and various implementations with various modifications as are suited to the particular use contemplated.

Claims

- A method, comprising: at a client device comprising a touch screen display, one or more processors and memory: in an application running on the client device, wherein the application includes a first virtual character that is associated with a categorical cover status selected from the set {“cover” and “active”}, a virtual weapon that is associated with a categorical weapon status selected from the set {“first value range” and “second value range”}, a first affordance region configured to adjust a camera view in the application responsive to contact with the first affordance region by the user, and a second affordance region that is different than the first affordance region, the second affordance region configured to perform a firing process responsive to contact with the second affordance region by the user, the firing process consisting of: i) in accordance with a determination that the second affordance region is free of user contact and the weapon status is deemed to be in the second value range, deeming the cover status to be “cover” and deeming the weapon status to be “first value range”, ii) in accordance with a determination that the second affordance region is free of user contact and the weapon status is deemed to be in the first value range, deeming the cover status to be “cover”, iii) in accordance with a determination that the second affordance is presently in contact with the user and the weapon status is in the first value range, deeming the cover status to be “active” and firing the virtual weapon, and iv) in accordance with a determination that the second affordance is in contact with the user and the weapon status is in the second value range, deeming the cover status to be “cover” and deeming the weapon status to be “first value range”.

- The method of claim 1, wherein the first and second affordance regions are visible to the user.

- The method of claim 1, wherein the first affordance region is not visible to the user and the second affordance region is visible to the user.

- The method of claim 1, wherein the first affordance region is visible to the user and the second affordance region is not visible to the user.

- The method of claim 1, further comprising displaying a scene on the touch screen display, wherein the scene is obtained from a plurality of scene images that are stored in the client device or that are accessible to the client device from a remote location.

- The method of claim 1, wherein a first plurality of images are stored in the client device or are accessible to the client device from a remote location, wherein the first plurality of images represent one or more defense mechanisms that shoot at the first virtual character, the method further comprising: displaying one or more images from the first plurality of images in the application independent of the cover status of the first virtual character.

- The method of claim 6, wherein the displaying one or more images from the first plurality of images displays a second virtual character that moves around in the application.

- The method of claim 6, wherein the displaying one or more images from the first plurality of images displays a gun or cannon that is fixed within the scene.

- The method of claim 6, wherein the first virtual character is subject to termination in a campaign when shot a predetermined number of times by the one or more defense mechanisms.

- The method of claim 6, wherein, when the firing of the virtual weapon into the scene destroys the one or more defense mechanisms, the method further comprises advancing the first virtual character from a first position in the scene to a second position in the scene.

- The method of claim 6, wherein, when the firing of the virtual weapon into the scene destroys the one or more defense mechanisms, the method further comprises changing the scene on the touch screen display to a new scene from the plurality of scene images.

- The method of claim 1, wherein the firing of the virtual weapon takes place only while the second affordance is in contact with the user, and the firing process terminates immediately when the second affordance becomes free of user contact.

- The method of claim 1, wherein the firing of the virtual weapon completes, and the weapon status is updated, upon the earlier of (a) the second affordance becoming free of user contact and (b) the elapse of a predetermined amount of time.

- The method of claim 13, wherein the predetermined amount of time is one second or less.

- The method of claim 13, wherein the predetermined amount of time is 500 milliseconds or less.

- The method of claim 13, wherein the predetermined amount of time is 100 milliseconds or less.

- A non-transitory computer readable storage medium stored on a computing device, the computing device comprising a touch screen display, one or more processors and memory storing one or more programs for execution by the one or more processors, wherein the one or more programs singularly or collectively comprise instructions for running an application on the computing device, wherein the application includes a first virtual character that is associated with a categorical cover status selected from the set {“cover” and “active”}, a virtual weapon that is associated with a categorical weapon status selected from the set {“first value range” and “second value range”}, a first affordance region configured to adjust a camera view in the application responsive to contact with the first affordance region by the user, and a second affordance region that is different than the first affordance region, the second affordance region configured to perform a firing process responsive to contact with the second affordance region by the user, the firing process consisting of: (i) in accordance with a determination that the second affordance region is free of user contact and the weapon status is deemed to be in the second value range, deeming the cover status to be “cover” and deeming the weapon status to be “first value range”, (ii) in accordance with a determination that the second affordance region is free of user contact and the weapon status is deemed to be in the first value range, deeming the cover status to be “cover”, (iii) in accordance with a determination that the second affordance is presently in contact with the user and the weapon status is in the first value range, deeming the cover status to be “active” and firing the virtual weapon, and (iv) in accordance with a determination that the second affordance is in contact with the user and the weapon status is in the second value range, deeming the cover status to be “cover” and deeming the weapon status to be “first value range”.

- A computing system comprising a touch screen display, one or more processors, and memory, the memory storing one or more programs for execution by the one or more processors, the one or more programs singularly or collectively comprising instructions for running an application on the computing system, wherein the application includes a first virtual character that is associated with a categorical cover status selected from the set {“cover” and “active”}, a virtual weapon that is associated with a categorical weapon status selected from the set {“first value range” and “second value range”}, a first affordance region configured to adjust a camera view in the application responsive to contact with the first affordance region by the user, and a second affordance region that is different than the first affordance region, the second affordance region configured to perform a firing process responsive to contact with the second affordance region by the user, the firing process consisting of: (i) in accordance with a determination that the second affordance region is free of user contact and the weapon status is deemed to be in the second value range, deeming the cover status to be “cover”, deeming the weapon status to be “first value range”, and terminating the firing of the virtual weapon into the scene, (ii) in accordance with a determination that the second affordance region is free of user contact and the weapon status is deemed to be in the first value range, deeming the cover status to be “cover”, (iii) in accordance with a determination that the second affordance is presently in contact with the user and the weapon status is in the first value range, deeming the cover status to be “active” and firing the virtual weapon, and (iv) in accordance with a determination that the second affordance is in contact with the user and the weapon status is in the second value range, deeming the cover status to be “cover” and deeming the weapon status to be “first value range”.

- A method of serving an application at a server comprising one or more processors, and memory for storing one or more programs to be executed by the one or more processors, the one or more programs singularly or collectively encoding the application, wherein the application includes a first virtual character that is associated with a categorical cover status selected from the set {“cover” and “active”}, a virtual weapon that is associated with a categorical weapon status selected from the set {“first value range” and “second value range”}, a first affordance region configured to adjust a camera view in the application responsive to contact with the first affordance region by the user, and a second affordance region that is different than the first affordance region, the second affordance region configured to perform a firing process responsive to contact with the second affordance region by the user, the firing process consisting of: (i) in accordance with a determination that the second affordance region is free of user contact and the weapon status is deemed to be in the second value range, deeming the cover status to be “cover” and deeming the weapon status to be “first value range”, (ii) in accordance with a determination that the second affordance region is free of user contact and the weapon status is deemed to be in the first value range, deeming the cover status to be “cover”, (iii) in accordance with a determination that the second affordance is presently in contact with the user and the weapon status is in the first value range, deeming the cover status to be “active” and firing the virtual weapon, and (iv) in accordance with a determination that the second affordance is in contact with the user and the weapon status is in the second value range, deeming the cover status to be “cover” and deeming the weapon status to be “first value range”.
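
The four-branch firing process recited in claim 1 (and repeated in the medium, system, and server claims) amounts to a decision table over two inputs: whether the second affordance region is in contact, and which value range the weapon status is in. The following Python sketch is illustrative only. It assumes that the weapon status is a round count, that a positive count is the “first value range” (loaded) and zero is the “second value range” (needs reloading), and that deeming the weapon status to be “first value range” means refilling to a hypothetical `MAGAZINE_SIZE`. The names `GameState` and `firing_step`, and firing one round per evaluation, are likewise assumptions rather than anything stated in the claims.

```python
from dataclasses import dataclass

# Hypothetical magazine capacity; the claims speak only of value ranges.
MAGAZINE_SIZE = 6


@dataclass
class GameState:
    cover_status: str  # "cover" or "active"
    ammo: int          # weapon status modeled as a round count (assumption)


def in_first_value_range(ammo: int) -> bool:
    # Assumption: any remaining rounds = "first value range" (loaded);
    # zero rounds = "second value range" (needs reloading).
    return ammo > 0


def firing_step(state: GameState, second_region_touched: bool) -> GameState:
    """Apply one evaluation of the four-branch firing process."""
    loaded = in_first_value_range(state.ammo)
    if not second_region_touched and not loaded:
        # (i) released and empty: take cover and reload.
        return GameState("cover", MAGAZINE_SIZE)
    if not second_region_touched and loaded:
        # (ii) released and loaded: take cover, weapon unchanged.
        return GameState("cover", state.ammo)
    if second_region_touched and loaded:
        # (iii) pressed and loaded: leave cover and fire one round.
        return GameState("active", state.ammo - 1)
    # (iv) pressed but empty: return to cover and reload.
    return GameState("cover", MAGAZINE_SIZE)
```

Under these assumptions, holding the second affordance region with two rounds loaded keeps the character “active” for two evaluations; on the third, the empty weapon triggers branch (iv) and the character drops back to “cover” with a refilled weapon. Releasing at any point returns the character to “cover” through branch (i) or (ii), so no separate cover button is needed.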
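
Claim 9 makes the first virtual character subject to termination once it has been shot a predetermined number of times by the defense mechanisms. A minimal sketch, assuming a per-character hit counter. The names `CharacterHealth`, `max_hits`, and `register_hit` are hypothetical. The claims do not say whether hits landing while the character is in cover count, so this sketch simply counts every hit it is given.

```python
from dataclasses import dataclass


@dataclass
class CharacterHealth:
    max_hits: int        # hypothetical "predetermined number of times"
    hits_taken: int = 0

    def register_hit(self) -> bool:
        """Record one shot from a defense mechanism.

        Returns True once the character has reached max_hits and is terminated.
        """
        self.hits_taken += 1
        return self.hits_taken >= self.max_hits
```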
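
Claims 10 and 11 recite two alternative responses to destroying the defense mechanisms: advancing the character to a second position in the same scene, or changing to a new scene. The sketch combines them under an assumed campaign layout in which each scene is a list of positions and each position is guarded by some number of defense mechanisms. The character advances within a scene while positions remain and otherwise moves to the next scene. That ordering, and the names `Campaign` and `destroy_defense`, are assumptions for illustration only.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Campaign:
    # Hypothetical layout: scenes[s][p] is the number of defense mechanisms
    # guarding position p of scene s.
    scenes: List[List[int]]
    scene_index: int = 0
    position_index: int = 0
    remaining_defenses: int = field(init=False, default=0)

    def __post_init__(self) -> None:
        self.remaining_defenses = self.scenes[self.scene_index][self.position_index]

    def destroy_defense(self) -> None:
        """Record one defense mechanism destroyed by weapon fire."""
        if self.remaining_defenses > 0:
            self.remaining_defenses -= 1
        if self.remaining_defenses > 0:
            return  # defenses still active at this position
        if self.position_index + 1 < len(self.scenes[self.scene_index]):
            # Claim 10 behavior: advance to a second position in the scene.
            self.position_index += 1
        elif self.scene_index + 1 < len(self.scenes):
            # Claim 11 behavior: change to a new scene.
            self.scene_index += 1
            self.position_index = 0
        else:
            return  # last position of the last scene cleared
        self.remaining_defenses = self.scenes[self.scene_index][self.position_index]
```

For example, with `Campaign(scenes=[[2, 1], [1]])`, the second destroyed defense moves the character to the second position of the first scene, and the third moves it to the first position of the second scene.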
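
Claim 13 completes the firing and updates the weapon status at the earlier of two events: the second affordance becoming free of user contact, or the elapse of a predetermined time. Claims 14 to 16 bound that time at one second or less, 500 milliseconds or less, and 100 milliseconds or less. A sketch under stated assumptions: times are integer milliseconds, which avoids floating-point rounding when counting rounds; the cap is a hypothetical `MAX_BURST_MS = 500`; and the weapon fires one round per hypothetical `ms_per_round` interval. None of these values come from the claims.

```python
from typing import Optional

# Hypothetical cap; 500 ms satisfies the bounds of claims 14 and 15.
MAX_BURST_MS = 500


def burst_completion_ms(press_ms: int, release_ms: Optional[int],
                        max_burst_ms: int = MAX_BURST_MS) -> int:
    """Time at which firing completes: the earlier of release or timeout.

    release_ms is None while the second affordance is still held.
    """
    timeout_ms = press_ms + max_burst_ms
    if release_ms is None:
        return timeout_ms
    return min(release_ms, timeout_ms)


def update_weapon_status(ammo: int, press_ms: int, release_ms: Optional[int],
                         ms_per_round: int = 100) -> int:
    """Subtract the rounds fired during the burst from the remaining ammo."""
    end_ms = burst_completion_ms(press_ms, release_ms)
    # Count only whole firing intervals completed before the burst ended.
    rounds_fired = (end_ms - press_ms) // ms_per_round
    return max(ammo - rounds_fired, 0)
```

For a press at 1000 ms, a release at 1200 ms ends the burst at 1200 ms. Holding past 1500 ms ends it at the 1500 ms timeout instead.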
