U.S. Pat. No. 10,124,256

REAL-TIME VIDEO FEED BASED MULTIPLAYER GAMING ENVIRONMENT

Assignee: Garbowski Peter

Issue Date: May 17, 2018

Abstract

Methods and systems for real-time video-based multiplayer gaming environments enable operators to remotely control vehicles over a network comprising a base station and a server. Cameras may record and transmit encoded video relating to the vehicles for display at a remote console. In response, the operator is able to input commands to remotely control the operation of the vehicle.

Description

DETAILED DESCRIPTION

Disclosed embodiments generally relate to methods and systems for real-time video feed-based multiplayer gaming environments. For example, the systems and methods may enable users to compete over a network in a real-time racing environment. Certain embodiments may relate to vehicles, each comprising a camera that transmits a real-time video feed through a base station and to a server for transmission to a remote console for viewing by the user. In response, the user is able to input commands to remotely control the operation of the vehicle.

By utilizing real-time video feeds transmitted to the user rather than rendered environments, several advantages may be realized. For example, in certain embodiments, the remote console need not render complex virtual environments. Rather, the console need only be configured to display video data, receive user input commands, and perform basic functions. Such a remote console would need fewer resources than would be necessary to render a complex virtual environment.

As another example, controlling a vehicle in a physical environment may obviate the need for the system to implement an anti-cheat system. Anti-cheat systems are often used in virtual multi-player games to prevent a user from modifying the game or game environment in order to gain an unfair advantage. Such systems often require additional networking and processing resources from the server and remote console. By contrast, in a real-time video system, the vehicles may be physical objects and therefore their physical modification through traditional hacking is unlikely. As such, the system need not implement sophisticated anti-cheat functionality. This may reduce system resource consumption.

Despite certain advantages, real-time video feed-based systems present their own challenges. These challenges may be overcome using some of the systems and methods disclosed herein. For instance, certain disclosed embodiments may utilize various methods to decrease the perceived effect of performance issues and improve the experience of the user 404.

One perceived effect of performance issues is unacceptably long time delay, which may cause jittering, freezing, errors, and other issues. A time delay may be described as the time it takes for an event at one part of the system to be reflected at another end of the system. Increased delay may result in the user providing input that is no longer relevant. For example, if the vehicle is approaching a turn, the operator may input a command to instruct the vehicle to perform the turn. However, if the delay is too high, then the operator may respond to the situation too late and crash the vehicle. An appropriate amount of delay to make the control of the vehicle feel responsive and accurate may be as high as 200 milliseconds (ms); however, this number may be higher or lower depending on the various operating conditions, such as the speed of the vehicle or the preferences of the user.
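As an illustration of the delay described above, the following minimal sketch sums per-stage delays along the video path and compares the total to a limit. The stage names, the example figures, and the `DelayBudget` class are hypothetical; only the 200 ms figure comes from the description, and it may be higher or lower in practice.

```python
from dataclasses import dataclass


@dataclass
class DelayBudget:
    """Hypothetical per-stage delays, in milliseconds, along the video path."""
    capture_encode_ms: float
    vehicle_to_base_ms: float
    base_to_server_ms: float
    server_to_console_ms: float
    decode_display_ms: float

    def total_ms(self) -> float:
        # The overall delay is the sum of every stage the frame passes through.
        return (self.capture_encode_ms + self.vehicle_to_base_ms
                + self.base_to_server_ms + self.server_to_console_ms
                + self.decode_display_ms)

    def within(self, limit_ms: float = 200.0) -> bool:
        # 200 ms is the example limit from the description; it is adjustable.
        return self.total_ms() <= limit_ms


budget = DelayBudget(30, 5, 40, 60, 25)  # assumed example measurements
print(budget.total_ms(), budget.within())
```

A faster vehicle or a more demanding user might call `within()` with a smaller limit.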

Performance may generally be classified into two different categories: processing performance and network performance. Processing performance is the rate at which the components can complete an action in response to an input. For example, processing performance may include the rate at which a remote console may convert received video packets into displayed information for the user. The remote console may need to decode, buffer, or otherwise process the video packets before they are displayed. As another example, the vehicle's camera may need to convert or encode the captured images into video data for transmission.

Network performance is a broad concept that encompasses many factors of network quality. These factors may include network latency, bandwidth, jitter, error rate, dropped packets, out of order delivery, and other factors. Depending on the configuration of the system, different factors may have a greater weight in affecting performance. For example, when downloading a large file, bandwidth may be the most important factor because delay caused by network latency may be small compared to delay caused by waiting for the file to be transmitted at the particular bandwidth rate. By contrast, real-time streaming video may be more affected by latency because each packet is being sent for near-immediate display and latency may set the time distance between each packet being received (e.g., the packet of data may be video information sent from the camera to the remote console for presentation to the operator).
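The contrast between a large download and a real-time video packet can be sketched with a simple model in which delivery time is latency plus serialization time. The model, the function names, and the example sizes and rates are assumptions chosen for illustration.

```python
def transfer_time_ms(size_bytes: int, bandwidth_bps: float, latency_ms: float) -> float:
    """Simplified delivery time: one-way latency plus time to push the bits out."""
    return latency_ms + size_bytes * 8 * 1000 / bandwidth_bps


def latency_share(size_bytes: int, bandwidth_bps: float, latency_ms: float) -> float:
    """Fraction of the delivery time that is due to latency alone."""
    return latency_ms / transfer_time_ms(size_bytes, bandwidth_bps, latency_ms)


# Assumed link: 100 Mbit/s with 50 ms latency.
large_file = latency_share(1_000_000_000, 100_000_000, 50)  # 1 GB download
video_packet = latency_share(10_000, 100_000_000, 50)       # 10 KB video packet
print(f"file: {large_file:.4%}  packet: {video_packet:.1%}")
```

Under these assumed numbers, latency is a negligible share of the file download but nearly all of the delivery time for the video packet.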

In addition, there is interplay between network performance and processing performance for user experience. For example, there may be a method of encoding video data so that the transmitted packets of information are small enough to travel through the network quickly; however, if additional processing resources are needed to encode and decode the video data, any gains achieved in network performance may be moot in light of decreased processing performance. As shown in this example, certain elements of the system, such as encoding video data, may affect performance in multiple ways.
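The trade-off in this example can be made concrete with a rough per-frame model. The two encoder profiles below ("light" and "heavy"), their frame sizes, and their processing times are hypothetical values, not measurements of any real codec.

```python
def end_to_end_ms(frame_bytes, bandwidth_bps, latency_ms, encode_ms, decode_ms):
    """Per-frame delay: encode, cross the network, then decode."""
    transmit_ms = frame_bytes * 8 * 1000 / bandwidth_bps
    return encode_ms + latency_ms + transmit_ms + decode_ms


# Hypothetical profiles: (frame size in bytes, encode ms, decode ms).
LIGHT = (60_000, 2, 2)    # larger frames, cheap to process
HEAVY = (15_000, 25, 15)  # smaller frames, expensive to process


def compare(bandwidth_bps, latency_ms=30):
    light = end_to_end_ms(LIGHT[0], bandwidth_bps, latency_ms, LIGHT[1], LIGHT[2])
    heavy = end_to_end_ms(HEAVY[0], bandwidth_bps, latency_ms, HEAVY[1], HEAVY[2])
    return light, heavy


print(compare(20_000_000))  # fast link: the processing cost outweighs the smaller frames
print(compare(5_000_000))   # slow link: the smaller frames win despite the processing cost
```

With these assumed figures, heavier compression helps only when the link is slow enough that transmission time dominates.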

Certain disclosed embodiments may relate to methods of correcting or alleviating both network and processing performance issues. These embodiments may include encoding or transcoding the video data into particular formats in order to better suit the real-time nature of the system. For example, the video data may be encoded in a format that generates key frames and predictive frames to reduce the amount of network resources needed to transmit high-quality video data in real time. In addition, the video data may be encoded so that the video frames may be processed or decoded in parallel, rather than in series, for example, by splitting each frame into multiple slices. In addition, forward error correction may be utilized to prevent or limit the effect of errors on the experience of the user.
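The idea of key frames and predictive frames can be sketched with a toy encoder in which a key frame carries every sample and a predictive frame carries only the samples that changed since the previous frame. This is a simplified illustration, not the format of any particular codec; frames are modeled as equal-length lists of integers, and `key_interval` is an assumed parameter.

```python
def encode_stream(frames, key_interval=4):
    """Emit ("K", full_frame) every key_interval frames, else ("P", changes)."""
    encoded, prev = [], None
    for i, frame in enumerate(frames):
        if prev is None or i % key_interval == 0:
            encoded.append(("K", list(frame)))
        else:
            # Store only the positions whose value differs from the previous frame.
            diff = {j: v for j, (v, p) in enumerate(zip(frame, prev)) if v != p}
            encoded.append(("P", diff))
        prev = frame
    return encoded


def decode_stream(encoded):
    """Rebuild each frame by applying predictive changes to the prior frame."""
    frames, prev = [], None
    for kind, payload in encoded:
        cur = list(payload) if kind == "K" else list(prev)
        if kind == "P":
            for j, v in payload.items():
                cur[j] = v
        frames.append(cur)
        prev = cur
    return frames
```

When little changes between frames, the predictive frames are much smaller than the key frames, which is the source of the network savings.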

FIG. 1 illustrates a network diagram of a system 1000, including an operations area 100, a vehicle 110, a base station 130, a network 250, a server 300, a network 350, a remote console 400, a controller 402, and a user 404. In certain embodiments, the vehicle 110 is communicatively coupled to the base station 130, which is communicatively coupled to the server 300 over the network 250. The server 300 is also communicatively coupled via the network 350 to the remote console 400, which is communicatively coupled to the controller 402. The controller 402 may be configured to receive input from user 404. In addition, the remote console 400 may be configured for providing information to the user 404. As such, an input from the user 404 to the controller 402 may be sent to the vehicle 110, and the vehicle 110 may respond accordingly. In addition, the vehicle 110 may be configured to transmit data to the user 404 or other components of the system 1000. For example, the vehicle 110 may transmit haptic feedback to the user 404 via controller 402 or the vehicle 110 may capture video and send the video to the remote console 400 for presentation to the user 404.

The communications between the various components of the system 1000 may be through various means, networks, or combinations thereof. While the server 300 has been shown connecting to the base station 130 and the remote console 400 over the network 250 and the network 350, respectively, these networks 250, 350 need not be present at all or may be the same network. All connections shown may be direct or through one or more networks, such as local area, wide area, metropolitan area, cellular or other kinds of networks, including the Internet. The communications may be made through various protocols. In addition, although the components of the system 1000 have been shown as separate blocks, the components may be combined in various combinations, including the same housing. For example, a single device may provide the functionality of both the base station 130 and the server 300.

Remote Console 400

The remote console 400 may be a hardware or software system that, when combined with the controller 402, enables the user 404 to communicate with various components of the system 1000. For example, the remote console 400 may be a mobile device, smart phone, tablet, website, software application, headset, video game console, computer, or other system or combinations of systems. The remote console 400 may include a monitor or other means for displaying real-time video or otherwise communicating information to the user 404.

Controller 402

The controller 402 may be a device, portion of a device, or a combination of devices configured for receiving input from the user 404. The controller 402 may then transmit representations of the input (which may be referred to as simply “input”) to the remote console 400 for use throughout the system 1000. The controller 402 may be, for example, a video game controller, a touch screen user interface (e.g., the touchscreen of a phone), keyboard, mouse, microphone, gesture sensor, camera, other device, or combinations thereof. The controller 402 may include various components including but not limited to a steering wheel, a touch screen, a button, a trigger, a pedal, user interface elements, and/or a lever.

Operations Area 100

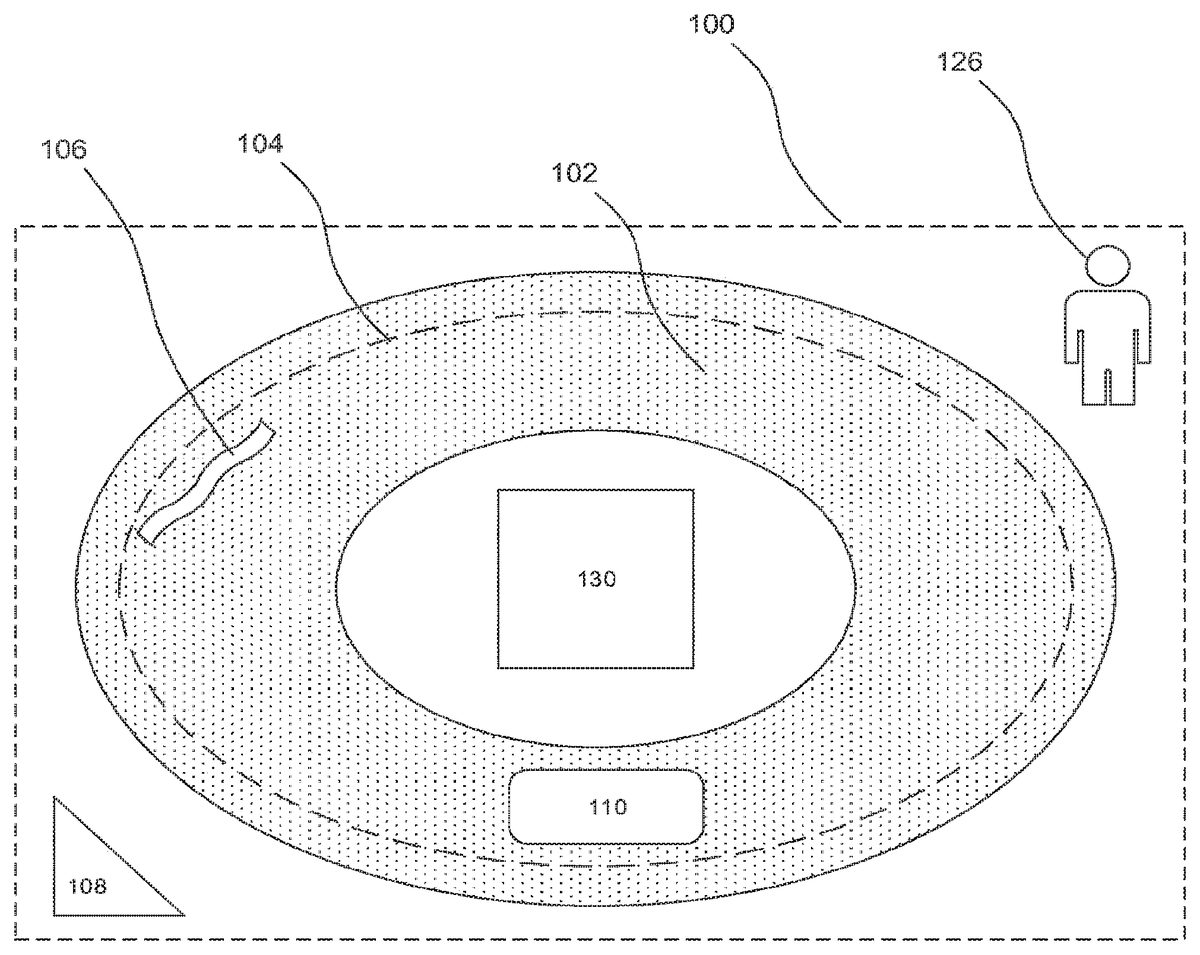

FIG. 2 illustrates an overview of the operations area 100 according to certain implementations, including a track 102, a barrier 104, a gameplay element 106, a sensor 108, the vehicle 110, a referee 126, and the base station 130. The operations area 100 may be described as the area in which the vehicle 110 operates. The operations area 100 may be defined by various boundaries, such as a range of a wireless signal from the base station 130, a range of the sensors 108, an agreed-upon boundary (such as a boundary set in game rules), a physical boundary (such as a wall), or other boundaries or combinations of boundaries. The operations area 100 as a whole and various components within it may cooperate in order to deliver a particular look, feel, and/or user experience. For example, portions of the operations area 100 may be scaled to match the scale of the vehicle 110 so as to appear that the vehicle 110 is traveling through an appropriately sized real-life environment such as a city, forest, canyon, or other environment.

Track 102

The track 102 may be a predetermined course or route within the operations area 100. For example, the track 102 may be a paved area, a particularly bounded area, an area with particular game features (e.g., a maze, ramps, and jumps), or other regions or combinations of regions. The track 102 may include various sensors, wires, or other components incorporated in the track itself. For example, the track 102 may comprise guide wires or transmitters to update the vehicle 110 or other component of the system to various conditions of the vehicle 110 or operations area 100.

Barrier 104

The barrier 104 may be a virtual or physical boundary that defines a region within the operations area 100. In certain embodiments, the barrier 104 may take the form of a region that interacts with the vehicle 110 to warn of particular conditions. For example, the barrier 104 may comprise a rumble strip to warn the user 404 (for instance through haptic feedback) that the vehicle 110 may soon be leaving the operations area 100. In certain embodiments, the barrier 104 may be a virtual system that transmits warnings to the remote console 400 or otherwise informs the user 404 of particular conditions. These may prevent or dissuade the vehicle 110 from entering a dangerous area, leaving the game area, or otherwise. In certain embodiments, the barrier 104 may also be a boundary between various tracks or operations areas 100. Such barriers 104 may include physical boundaries or special boundaries to prevent interference from wireless communications from base stations 130 of other tracks 102 or operations areas 100.

Gameplay Element 106

Various gameplay elements 106 may be present within the operations area 100. The gameplay element 106 may be an object designed to have an effect on vehicles 110 in the operations area 100. The gameplay element 106 may be something that vehicle 110 may need to drive through, around, over, or otherwise take into account. For example, the gameplay element 106 may be an obstacle such as a pillar, rock, ramp, pole, bumpy area, or water. The gameplay element 106 may be designed so as to be easily removed or configurable. For example, the gameplay element 106 may have actuators that allow the element 106 to be raised, lowered, put away, deactivated, engaged, deployed or otherwise modified. The gameplay element 106 may be communicatively coupled to the base station 130 or other part of the system 1000, such that it may be remotely controlled.

While the gameplay element 106 may physically interact with the vehicle 110 to produce a certain effect, it need not. For example, the gameplay element 106 may be a virtual space defined by a transmitter or other means that, when interacted with, produces an effect on the vehicle. For example, when a component of the system 1000 detects that the vehicle 110 passes near the gameplay element 106, the server 300 sends a command to cause a change in the vehicle 110 or match. For example, the command may be to slow down the vehicle 110 while it is near a particular gameplay element 106. As another example, the user 404 may gain or lose points when the vehicle 110 is near the gameplay element 106.
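A virtual gameplay element of this kind can be sketched as a circular zone that scales down the permitted speed of any vehicle inside it. The `GameplayElement` fields, the circular shape, and the speed-factor convention are all assumptions made for illustration.

```python
import math
from dataclasses import dataclass


@dataclass
class GameplayElement:
    """Hypothetical virtual zone: center, radius, and speed multiplier inside it."""
    x: float
    y: float
    radius_m: float
    speed_factor: float  # e.g., 0.5 halves the permitted speed


def speed_limit_factor(vehicle_xy, elements):
    """Return the most restrictive factor among the zones containing the vehicle."""
    factor = 1.0
    for e in elements:
        distance = math.hypot(vehicle_xy[0] - e.x, vehicle_xy[1] - e.y)
        if distance <= e.radius_m:
            factor = min(factor, e.speed_factor)
    return factor


zones = [GameplayElement(0.0, 0.0, 2.0, 0.5)]
print(speed_limit_factor((1.0, 1.0), zones))  # inside the zone
print(speed_limit_factor((5.0, 5.0), zones))  # outside the zone
```

A server could apply the returned factor to throttle commands before forwarding them, or use the same containment test to award or deduct points.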

Sensor 108

Near or within the operations area 100, a sensor 108 or a plurality of sensors 108 may be provided. The sensors 108 may be communicatively coupled to various components of system 1000 and may be used to collect information about conditions within or around the operations area 100. This may include conditions of the vehicle 110 (e.g., speed or location), weather conditions (e.g., wind speed), conditions near the track 102 (e.g., potential obstacles, interferences, and crashes), potential sources of wireless interference, and other information. The sensor 108 may take various forms. For example, in certain implementations, the sensor 108 is a self-contained computing unit with its own processor, communication system, and other components. In certain implementations, the sensor 108 may take a simpler form and cooperate with other systems or components in order to produce, interpret, and/or communicate data. In some embodiments, the sensor 108 may be a camera or system of cameras placed around the track 102, such as an overhead camera. The sensor 108 may also be a sensor disposed in a region of the track 102 (e.g., a finish line or an out-of-bounds area) to determine whether or in what order one or more vehicles 110 cross the region.

Vehicle 110

The vehicle 110 may generally be any kind of machine configured for motion. The vehicle 110 may be a remotely operated vehicle, such as a remote control car, a flying machine (e.g., a drone or a quad-copter), a boat, a submersible vehicle, or other machine configured for motion. The vehicle 110 may include various means for locomotion, communication, sensing, or other systems.

Referee 126

The referee 126 may be a person who is designated to assist in the operation of the system 1000. The referee 126 may perform various tasks including but not limited to preparing the operations area 100 for play, preparing the vehicles 110 for play, correcting crashes or other problems within the operations area 100, and other activities. A user 404 may also be the referee 126.

Base Station 130

The base station 130 may be located within or near the operations area 100 and may provide various functionalities, including communication with and between the vehicle 110 and the server 300. For example, the base station 130 may be configured to send communications to and receive communications from the vehicle 110. These communications may take the form of wireless transmissions via an antenna operably coupled to the base station 130. The antenna may be configured for transmitting and/or receiving various wireless communications, including but not limited to wireless local area network communications, communications within the 2.4 GHz ultra-high frequency and 5 GHz super high frequency radio bands (e.g., WiFi™ or IEEE™ 802.11 standards compatible communications), frequencies reserved for use with radio controlled vehicles (e.g., 72 MHz, 75 MHz, and 53 MHz), communications compatible with Bluetooth™ standards, near field communications, and other wireless technologies configured for transmitting data. Non-antenna based forms of communication are also possible, including line-of-sight based communication (e.g., infra-red communication) and wired connections. The base station 130 may also use this communication functionality to communicate with the sensor 108. The base station 130 may also include a network interface connection for connecting to a network 250 to which the server 300 is connected.

Depending on the configuration of the various devices, the base station 130 may take various roles. In certain embodiments, the base station 130 may act as a router that passes communication between the server 300, vehicle 110, and/or sensors 108. For example, the base station 130 may receive a turn-left input from the server 300 that is associated with the vehicle 110. The base station 130 may then appropriately pass the input to the vehicle 110, which then interprets and responds to the turn-left input by turning to the left. As another example, the sensor 108 may produce a live, top-down video feed of the track 102, which the sensor 108 transmits to the base station 130, which, in turn, passes the video feed to the server 300. In certain other embodiments, the base station 130 may take a more active role. For example, the base station 130 may be a computer system (or operably coupled to a computer system) that may be configured to perform certain tasks. These tasks may include activities that may be more suited to be performed closer to the vehicle 110 and sensor 108, rather than at the server 300. For example, the base station 130 may be configured for detecting a loss of signal between the base station 130 and the server 300 and responding accordingly.

The response by the base station 130 to loss of signal may include assuming control of the vehicle 110 and buffering certain events occurring in the operations area 100 for recovery at a later time. Buffering certain events may include taking readings from the sensor 108, recording the time the vehicle 110 crossed a finish line, and other events. For example, more than one vehicle 110 may be racing towards a photo-finish when the connection with the server 300 is lost. The base station 130 may receive and buffer input from one or more sensors 108 (e.g., a sensor 108 monitoring which vehicle 110 crosses the finish line first), so that when the connection is reestablished, a winner is able to be accurately determined. As another example, the base station 130 may buffer the video data recorded by the vehicle 110 in order to show the user 404 what happened while the real-time signal was interrupted.
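The buffering behavior described above might be sketched as follows. The `EventBuffer` class, its method names, and the `send` callback (standing in for the link to the server) are hypothetical; the sketch only shows timestamped events being held during an outage and replayed in order once the connection returns.

```python
from collections import deque


class EventBuffer:
    """Forward events while connected; hold them in order while disconnected."""

    def __init__(self, send):
        self._send = send          # assumed callable that delivers one event upstream
        self._pending = deque()
        self.connected = True

    def record(self, timestamp_ms, event):
        item = (timestamp_ms, event)
        if self.connected:
            self._send(item)
        else:
            self._pending.append(item)

    def on_disconnect(self):
        self.connected = False

    def on_reconnect(self):
        # Replay buffered events oldest-first so the receiver sees the true order.
        self.connected = True
        while self._pending:
            self._send(self._pending.popleft())


received = []
buf = EventBuffer(received.append)
buf.on_disconnect()
buf.record(200, "vehicle A crossed finish line")
buf.record(205, "vehicle B crossed finish line")
buf.on_reconnect()
print(received)
```

Because each event carries the time it was recorded at the base station, the finishing order can still be determined after the outage.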

Vehicle 110

FIG. 3 illustrates a block diagram of components of the vehicle 110, including a processor 112, a media 114, a sensor 116, a camera 118, an antenna 120, and a control system 122. The processor 112 may comprise circuitry configured for performing instructions, such as a computer central processing unit. The processor 112 may be operably connected to the various components 114, 116, 118, 120, 122 of the vehicle 110 to assist in their operations.

In certain implementations, the media 114 is a computer-readable media operably coupled to the processor 112, including but not limited to transitory or non-transitory memory modules, disk-based memory, solid-state memory, flash memory, and network attached memory. The media 114 may store or otherwise comprise data, modules, programs, instructions, and other data, configured for execution or use by the processor 112 to produce results. For example, the media 114 may comprise instructions for the operation of the various components of the vehicle 110 and other methods, systems, implementations, and embodiments described herein.

The sensor 116 may be one or more sensors configured for generating data. For example, the sensor 116 may be configured to collect data related to the speed, direction, orientation, or other characteristics of the vehicle 110. After collection, the data may be transmitted directly to the antenna 120, or transmitted to the processor 112. In certain implementations, the processor 112 may take certain actions based on the data. For example, if the vehicle 110 is traveling over a set speed, the processor 112 may send a signal to the control system 122 to slow down the vehicle, or ignore further acceleration commands until the speed decreases. In certain implementations, the processor 112 may package or otherwise transmit the data via the antenna 120 to the base station 130 for communication to other portions of the system 1000. For example, there may be a sensor 116 configured for detecting data relating to vibrations that the vehicle 110 may be experiencing. The processor 112 could direct the vibration data to be transmitted to the controller 402 to provide haptic feedback to the user 404 via the controller 402. In certain instances, the vehicle 110 may include a sensor 116 for collecting or assisting the base station 130 to determine the location of the vehicle 110. For example, the vehicle 110 may include a sensor 116 configured for receiving Global Positioning System (GPS) data to provide a rough location of where the vehicle 110 is located. Certain implementations of the vehicle 110 may include a beacon or other transmitter configured for transmitting a signal that one or more sensors 108 may be able to use to determine the location of the vehicle 110 (e.g., via triangulation).
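The speed-limiting behavior described above, in which acceleration commands are ignored while the vehicle exceeds a set speed, could look roughly like the following. The function name, the throttle convention (positive accelerates, negative brakes), and the units are assumptions.

```python
def filter_throttle(requested_throttle: float, speed_mps: float, max_speed_mps: float) -> float:
    """Drop acceleration requests while over the set speed; always allow braking."""
    if speed_mps > max_speed_mps and requested_throttle > 0:
        return 0.0  # ignore further acceleration until the speed decreases
    return requested_throttle


print(filter_throttle(0.8, speed_mps=12.0, max_speed_mps=10.0))   # over the limit
print(filter_throttle(0.8, speed_mps=8.0, max_speed_mps=10.0))    # under the limit
print(filter_throttle(-0.5, speed_mps=12.0, max_speed_mps=10.0))  # braking passes through
```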

The antenna 120 may be a component or system of components configured for performing wireless communications. For example, the antenna 120 may enable wireless communication compatible with the base station 130.

The control system 122 may be a system or combination of systems configured for controlling various aspects of the vehicle 110. For example, if the vehicle 110 is a wheeled vehicle, the control system 122 may be configured to send a signal to the motor of the vehicle 110 to increase or decrease speed, send a signal to a steering system to change the direction of motion of the vehicle, and other such controls. The control system 122 may be configured to operate various accessories of the vehicle 110 as well. For example, the control system 122 may be configured to cause the camera 118 to rotate, elevate, or otherwise change. If the vehicle 110 has arms, legs, graspers, cargo, lasers, lights, or other accessories, the control system 122 may be configured to control their activities as well. The control system 122 may receive commands from the processor 112 and/or directly from the antenna 120.

The camera 118 may be a sensor configured for collecting image or video data relating to a scene of interest, including but not limited to a CMOS sensor, a rolling-shutter sensor, and other systems. This video data may be from one or more cameras 118 on the vehicle 110. In addition to or instead of capturing the video data from the camera 118 on the vehicle 110, the video data may be received from a sensor 108 within the operations area 100 configured as a camera. The video may be collected in various formats, including but not limited to a native camera format (e.g., a default or otherwise standard setting for output from a video-enabled camera), a compressed format, a block-oriented motion-compensation-based video compression format (e.g., H.264), a format where each frame is compressed separately as an image (e.g., Motion JPEG), and other formats. The camera 118 may be connected to the processor 112 such that the data may be transmitted to other parts of the system 1000. For example, the camera 118 may be a camera connected to the processor 112 through a USB or other wired connection.

The camera 118 may be attached to an internal or external portion of the vehicle 110 such that the camera 118 is aimed at a scene of interest. Additional cameras 118 may be coupled to the vehicle 110 in various locations and directed to additional scenes of interest. For example, there may be cameras 118 directed to capture scenes to the left, right, top, bottom, front, or rear of the vehicle 110. These cameras 118 may be configured to collect data all at once, or be selectively activated by the processor 112 in response to particular events (e.g., the rear-facing camera 118 activates when the vehicle 110 is traveling in reverse). The camera 118 may also be able to capture audio data through a microphone. In addition, one or more cameras 118 may be used to capture three-dimensional video.

Server 300

FIG. 4 illustrates a block diagram of the server 300 of the system 1000. The server 300 of FIG. 4 includes a processor 312 operably coupled to a media 314 and a network interface 318. The processor 312 may be circuitry configured for performing instructions, for example as described above in reference to processor 112. Similarly, the media 314 may be a computer-readable media, for example, as described above in reference to media 114. The network interface 318 may be a hardware component or a combination of hardware and software coupled to the processor 312 that enables the server 300 to communicate with a network, for example the networks 250, 350.

The media 314 may comprise various modules, such as a video encoding module 322, an error correction module 324, an auto-pilot module 326, a hosting module 328, a vehicle database 330, and a player database 332. While the contents of the media 314 are shown as being a part of the server 300, one or more of the contents may be located on and/or executed on media in various other portions of the system 1000, such as media 114 or media located on the base station 130.

The video encoding module 322 may be a module or combination of modules comprising instructions for encoding, decoding, and/or transcoding video data. This may include the various methods described herein and elsewhere, such as multi-slice parallelism, and key and predicted frames.
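Multi-slice parallelism can be illustrated structurally by splitting a frame into horizontal slices and processing each slice independently. In the sketch below, `decode_slice` is a stand-in transformation rather than a real decoder, and the frame is modeled as a list of rows of 8-bit samples. Python threads are used only to show the structure; a production decoder would typically rely on native code or hardware for true parallel speedup.

```python
from concurrent.futures import ThreadPoolExecutor


def split_into_slices(rows, n_slices):
    """Divide the frame's rows into at most n_slices contiguous groups."""
    size = max(1, -(-len(rows) // n_slices))  # ceiling division, at least one row
    return [rows[i:i + size] for i in range(0, len(rows), size)]


def decode_slice(slice_rows):
    """Placeholder per-slice work (inverts 8-bit samples); not a real decoder."""
    return [[255 - px for px in row] for row in slice_rows]


def decode_frame_parallel(rows, n_slices=4):
    """Process the slices concurrently, then reassemble them in original order."""
    slices = split_into_slices(rows, n_slices)
    with ThreadPoolExecutor(max_workers=n_slices) as pool:
        decoded = list(pool.map(decode_slice, slices))  # map preserves slice order
    return [row for s in decoded for row in s]
```

Because each slice depends only on its own rows in this model, the result matches what a serial pass over the whole frame would produce.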

The error correction module 324 may be a module or combination of modules comprising instructions for correcting errors. The errors may include transmission errors, encoding errors, decoding errors, transcoding errors, corrupted data, artifacting errors, compression errors, or various other kinds of errors that may be present or introduced into the system 1000.
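One simple form of forward error correction, which this description mentions as an option, is to send an XOR parity packet with each group of data packets so that a single lost packet can be rebuilt without retransmission. The sketch below assumes equal-length packets and at most one loss per group; it illustrates the general technique and is not necessarily the scheme used by the disclosed embodiments.

```python
def xor_bytes(a: bytes, b: bytes) -> bytes:
    return bytes(x ^ y for x, y in zip(a, b))


def make_parity(packets):
    """XOR all equal-length packets in the group into one parity packet."""
    parity = bytes(len(packets[0]))
    for p in packets:
        parity = xor_bytes(parity, p)
    return parity


def recover(received, parity):
    """Rebuild the single missing packet (marked None) from the others plus parity."""
    missing = [i for i, p in enumerate(received) if p is None]
    if len(missing) != 1:
        raise ValueError("this sketch recovers exactly one lost packet per group")
    rebuilt = parity
    for p in received:
        if p is not None:
            rebuilt = xor_bytes(rebuilt, p)
    restored = list(received)
    restored[missing[0]] = rebuilt
    return restored


group = [b"abcd", b"efgh", b"ijkl"]
parity = make_parity(group)
print(recover([b"abcd", None, b"ijkl"], parity))
```

The cost is one extra packet per group, which trades a little bandwidth for avoiding a round trip to request the lost data.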

The auto-pilot module 326 may be a module or combination of modules comprising instructions for controlling or otherwise directing the movement of one or more vehicles 110. The auto-pilot module 326 may use various means for controlling the vehicles 110 including data from sensor 108, sensor 116, camera 118, combined with image recognition, position tracking, artificial intelligence, and other means for understanding the state of the vehicle 110 and directing its behavior.

The hosting module 328 may be a module or combinations of modules that contain instructions for operating the server 300 as a server or host for the operation of the real-time video feed based multiplayer game. For example, the hosting module 328 may comprise instructions for establishing network communications with the various components of the system 1000. These network communications may enable the server 300 to receive input from various components of the system 1000 and forward, process, or otherwise interact with input from the other components. In certain implementations, the hosting module 328 may enable the server 300 to host a game with particular rules. One set of rules may comprise instructions for operating a race with a group of four vehicles 110 on track 102 and determining a winner based on sensor data from the sensor 108 and/or the sensor 116.

For example, users 404 may initially log in to the server 300 using particular information, such as a user name and password. Based on the user name and password, the server 300 may be able to authenticate the connected user 404 with a particular user identifier stored in the player database 332. The server 300 may then be able to load particular user data associated with the user identifier from the player database 332.
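A minimal sketch of such a login check follows. The in-memory `player_db` dictionary stands in for the player database, and the salted PBKDF2 hashing is an assumption added for illustration; the description does not specify how credentials are stored or verified.

```python
import hashlib
import hmac
import os

# Hypothetical in-memory stand-in for the player database:
# user_name -> {"user_id": ..., "salt": ..., "digest": ...}
player_db = {}

ITERATIONS = 100_000  # assumed work factor


def _hash(password: str, salt: bytes) -> bytes:
    return hashlib.pbkdf2_hmac("sha256", password.encode("utf-8"), salt, ITERATIONS)


def register(user_name: str, password: str, user_id: str) -> None:
    salt = os.urandom(16)
    player_db[user_name] = {"user_id": user_id, "salt": salt,
                            "digest": _hash(password, salt)}


def authenticate(user_name: str, password: str):
    """Return the stored user identifier on success, otherwise None."""
    record = player_db.get(user_name)
    if record is None:
        return None
    candidate = _hash(password, record["salt"])
    # Constant-time comparison avoids leaking how many bytes matched.
    if hmac.compare_digest(candidate, record["digest"]):
        return record["user_id"]
    return None
```

On success, the returned identifier could then be used to load the user's settings and history.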

In certain implementations, the hosting module328may comprise instructions for preparing to host a game. This may include opening a game lobby and allowing users404to join and wait for a game to begin.

In certain implementations, the hosting module328comprises instructions for preparing the operations area100for a race. This may include moving one or more vehicles110to a starting location. Moving the vehicles110may be performed by a referee126manually moving the vehicles110into place, activating the vehicles110, and/or performing other initial vehicle setup. In certain implementations, the server300may send a signal to the vehicles110(e.g., through base station130), containing instructions for automatically preparing the vehicles110for a match. This may be performed by, for example, instructions contained within the auto-pilot module326and may additionally include running initial diagnostics on the vehicles, commands to move the vehicles110into particular locations, and other initial preparation of the vehicles110for the particular match. In certain game modes, initialization may include preparing the operations area100for a particular game mode. For example, automatically or manually moving or initializing the gameplay elements106or other features of a game mode. In certain implementations, the initialization may include activating, testing, calibrating or otherwise initializing the sensors108or the base station130.

The vehicle database330may be a database or combination of databases comprising information relating to the vehicles110and their operation. The vehicle database330may comprise information such as: vehicle type, vehicle location, vehicle capabilities, price, rental price, usage fees, maintenance history, ownership, usage status, usage history, and other information.

The player database 332 may be a database or combination of databases comprising information about the users 404. For example, the database may contain information relating to: user login information, user name, user identifier, user password, remote console identifier, user network connection preferences, user settings, user internet protocol address, user network performance preferences, user processing performance preferences, user controller preferences, vehicle preferences, history of past games, preferred operations areas 100, vehicle information, base station region information, and other client data.

Decreasing Perceived Network Performance Issues and Improving Operator Experience

The system 1000 may be configured through various systems and methods to provide real-time video to the user 404 with limited impact from network performance issues, thereby improving operator experience. These systems and methods may take various forms, such as the ones described below.

Video Encoding and Decoding

Certain implementations may utilize particular video encoding methods and systems to optimize the video data for real-time video feed based multiplayer gaming. The video data may be encoded at the camera118and/or encoded, transcoded, and decoded at different locations throughout the system1000, such as at the server300via the video encoding module322. For example, the camera118may comprise an onboard processor configured for converting the video data from the sensor on the camera118to an initial encoding.

The encoding of the video data may change as the data is sent through the system1000. For example, the video data may be captured and encoded at a high-quality setting at the camera118and subsequently transcoded to a lower-quality or more compressed format. High-quality video data may be described as video data that more accurately reflects the scene of interest than low-quality video data. For example, high-quality video data may be in a high-definition format, such as 1080p, while the low-quality video is in a standard definition format, such as 480i. As another example, high-quality video data may be encoded at a high-frame rate such as 144 frames per second or 60 frames per second, while low-quality video data may be encoded at a low frame rate such as 24 frames per second or lower.

The transcoding process may be used to take into account various conditions within the system 1000, such as bandwidth. If the connection from the vehicle 110 to the remote console 400 has low bandwidth, high-quality video information may be too large to be sent without an unacceptable delay. The video data may be kept in a high-quality format until the system 1000 detects latency or other issues and then transcodes the video data to a more compressed format. For example, the video data may be transmitted at the highest quality possible until network performance issues are detected by the sender, which then transcodes the video based on the perceived network issues. As a specific example, the connection between the vehicle 110 and the base station 130 may be configured for supporting the transfer of high-quality video without significantly affecting network performance, but sending the same data to the server 300 over network 250 would result in unacceptable latency, so the base station 130 transcodes the video data to a format more suitable for the conditions of the network 250. This transcoding may continue until it is detected that the network 250 or other conditions have improved.
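The bandwidth-driven transcoding decision described above can be illustrated with a minimal sketch. The profile names, bitrates, and the `headroom` factor are hypothetical values chosen for illustration and do not come from the disclosure; an actual sender might weigh latency, jitter, and other conditions as well.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Profile:
    name: str
    bitrate_kbps: int  # hypothetical bitrate needed to carry this profile


# Ordered from highest to lowest quality; all values are illustrative only.
PROFILES = [
    Profile("1080p60", 8000),
    Profile("720p60", 4000),
    Profile("480p30", 1500),
    Profile("240p24", 500),
]


def choose_profile(available_kbps, headroom=0.8):
    """Return the highest-quality profile that fits the measured bandwidth.

    Only a fraction (headroom) of the measured bandwidth is budgeted so that
    ordinary fluctuations do not immediately force another transcode.
    """
    budget = available_kbps * headroom
    for profile in PROFILES:
        if profile.bitrate_kbps <= budget:
            return profile
    # Nothing fits: fall back to the most compressed profile.
    return PROFILES[-1]
```

Under these assumptions, a sender measuring roughly 3,000 kbps toward the next hop would select the "480p30" profile and could move back up once the measured bandwidth recovers.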

Multi-Slice Parallelism

In certain implementations, multi-slice parallelism may be used to reduce latency within the system1000. While certain video data systems split video data into frames, multi-slice parallelism may be described as the further division of the video data frames into one or more slices. Multi-slice parallelism may help improve processing performance. For example, the slices may be processed concurrently at multiple processors or multiple cores of a single processor. Many modern processors have more than one core (virtual or physical) with each core configured for running a separate process. While, typically, a single frame may only be processed by a single processor, by breaking each frame into slices, separate cores may be able to process different slices of the same frame at the same time. Once each slice is finished processing, the slices are combined into a frame for display or other use. This process may be described as “multi-slice parallelism” and can be used to speed up the processing of frames and enable shorter delay.
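As a rough illustration of multi-slice parallelism, the sketch below divides a frame into horizontal slices, processes the slices concurrently, and recombines them in order. The frame is modeled as a list of pixel rows and `process_slice` is a placeholder transform (pixel inversion); both are assumptions made for the example. In CPython, threads do not run pure-Python computation in parallel, so a practical encoder would more likely rely on native code or separate processes; the structure of the split and merge is the point here.

```python
from concurrent.futures import ThreadPoolExecutor


def split_into_slices(frame, n_slices):
    """Divide a frame (list of pixel rows) into contiguous horizontal slices."""
    rows = len(frame)
    n = max(1, min(n_slices, rows))
    base, extra = divmod(rows, n)
    slices, start = [], 0
    for i in range(n):
        end = start + base + (1 if i < extra else 0)
        slices.append(frame[start:end])
        start = end
    return slices


def process_slice(rows):
    """Placeholder per-slice work: invert 8-bit pixel values."""
    return [[255 - px for px in row] for row in rows]


def process_frame(frame, n_slices=4):
    """Process the slices of one frame concurrently, then recombine in order."""
    slices = split_into_slices(frame, n_slices)
    with ThreadPoolExecutor(max_workers=len(slices)) as pool:
        processed = list(pool.map(process_slice, slices))  # map preserves order
    return [row for piece in processed for row in piece]
```

Because `pool.map` returns results in submission order, the recombined frame has the same row order as the input regardless of which slice finishes first.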

Key and Predicted Frames

In certain implementations, the video data may be encoded such that it utilizes special kinds of frames, such as key frames, predicted frames, and/or bi-directional predicted frames. The encoder (e.g., the encoding module 322) may generate predicted frames by examining previous frames and then encoding the predicted frame based on differences between the previous frame and the current frame being encoded. Because the predicted frame is based on changes from the previous frames, a predicted frame may have a smaller size. Bi-directional predicted frames are generated based not only on previous frames, but also on frames that come next. Because they are based on both forward and backward differences, bi-directional predicted frames may be even smaller than predicted frames. Key frames are frames that contain data that is not dependent on the content of other frames.

While encoding video data with these frames may result in a small encoded video size, their use in real-time streaming video may introduce certain problems. For example, because bi-directional predicted frames require information based on frames that come next, waiting for additional frames may result in undesirable delay in the system 1000. For example, a bi-directional predicted frame may be received by the remote console 400, which waits for the next frame before the bi-directional frame can be decoded. However, if the next frame is delayed or dropped, the remote console 400 may be stuck waiting.

In certain embodiments, the video data may be encoded using key frames and predicted frames. As discussed above, the predicted frames may have a smaller size, which may decrease the transmission time. This may result in a situation where the remote console displays multiple predicted frames quickly, but then needs to wait for a key frame. This may cause undesirable periodic stutter in video playback. To alleviate this, the remote console400may buffer frames in memory to smooth playback.

Forward Error Correction

During typical networking activity, some packets of data may be delayed, lost, or include an error. While some recipient devices may simply automatically send a request back to the sending device to ask for the particular packet to be sent again, this process may introduce additional delay in the system 1000. In certain embodiments, system 1000 may utilize forward error correction (e.g., as implemented in the error correction module 324) to avoid the need for retransmission of packets. Forward error correction may take various forms. For example, the packets of information may include redundant information such that errors in transmission may be detected and corrected. For example, certain embodiments may utilize parity bits, checksums, cyclic redundancy checks, and other methods of detecting or correcting errors. In certain circumstances, the redundant information may be used to infer information about the affected packet and substantially correct the error to avoid retransmission. In certain other circumstances, the packets may be uncorrectable or take too long to process (e.g., the processing time is longer than the useful life of the packet), so the error correction module 324 may decide to ignore the packet. Similarly, in certain embodiments, the system 1000 may be configured to detect stale or otherwise erroneous user 404 input and engage in corrective action. For example, if a packet containing a command is delayed and arrives after a subsequent command has been received (such as when the user 404 sends a left-turn command and then a right-turn command, but the right-turn command is received by the server 300 or base station 130 first), a component of the system 1000 may detect the late-arriving earlier command and disregard it as stale.
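One simple form of redundancy that allows a lost packet to be rebuilt without retransmission is an XOR parity packet sent alongside a group of data packets. The sketch below is only an illustration of that general idea, not the scheme used by the error correction module; it assumes equal-length packets and can repair at most one missing packet per group. The function names are hypothetical.

```python
def xor_bytes(a, b):
    """Byte-wise XOR of two equal-length byte strings."""
    return bytes(x ^ y for x, y in zip(a, b))


def make_parity(packets):
    """Build one parity packet covering a group of equal-length packets."""
    parity = bytes(len(packets[0]))  # all-zero starting value
    for packet in packets:
        parity = xor_bytes(parity, packet)
    return parity


def recover(received, parity):
    """Fill in a single missing packet (marked None) using the parity packet.

    Returns the completed list, or None when more than one packet is missing
    and the group cannot be repaired from a single parity packet.
    """
    missing = [i for i, packet in enumerate(received) if packet is None]
    if not missing:
        return list(received)
    if len(missing) > 1:
        return None
    rebuilt = parity
    for packet in received:
        if packet is not None:
            rebuilt = xor_bytes(rebuilt, packet)
    repaired = list(received)
    repaired[missing[0]] = rebuilt
    return repaired
```

When `recover` returns None, the receiver in this sketch would face the choice described above: request retransmission or ignore the group if its useful life has passed.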

Resource Requirements and Balancing Techniques

The encoding techniques described above and other performance settings of the system need not be static or applied the same across all users. The performance and gameplay settings may be dynamically updated to take into account real-time resource requirements and performance. For example, encoding and transmitting the real-time video data may involve processing, time, and bandwidth resources. While the video data may be encoded in a highly-compressed format, additional time and processor cycles may be needed to achieve the high levels of compression. This may be because the encoding processor may need to spend additional time analyzing the file in order to compress the data to a small size. Similarly, the decoding processor may need to spend additional time reconstructing the video data from a highly compressed file. The server300or other parts of the system1000may be able to analyze and predict the effect of certain resource requirements to achieve beneficial results.

For instance, reducing transport time by 2 ms by spending an additional 5 ms compressing the data may not be worthwhile. However, if the remote console400has significant processing resources but reduced bandwidth or other network resources, the situation may be different. For example, particular encoding methods may be beneficial if the method reduces transport time by 10 ms even though it requires an additional 5 ms to encode and decode the data.
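The trade-off in the two cases above reduces to comparing the transport time saved against the extra time spent encoding and decoding. A minimal sketch, with hypothetical function names:

```python
def net_latency_change_ms(transport_saved_ms, extra_codec_ms):
    """Net change in end-to-end delay; a negative value means an improvement."""
    return extra_codec_ms - transport_saved_ms


def is_worthwhile(transport_saved_ms, extra_codec_ms):
    """True when the encoding method lowers total delay."""
    return net_latency_change_ms(transport_saved_ms, extra_codec_ms) < 0
```

With the figures from the text, saving 2 ms of transport at a cost of 5 ms adds 3 ms overall, while saving 10 ms at a cost of 5 ms removes 5 ms overall.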

As another example, for unreliable connections that frequently lose or damage packets, including additional error correction information or other redundancies may be worthwhile. While encoding and decoding the video data packets with such information may increase processing time and bandwidth requirements, the ability for the system1000to recover the information may improve the user experience. By contrast, if a user404has a generally stable connection, the user404may be able to tolerate the effect of a few lost frames if processing and network resource requirements are eased.

In addition, certain compression or encoding methods may have an effect on video quality. For example, certain compression or encoding methods may be both network and processor resource efficient but produce undesirable artifacts or compression errors that reduce quality. Tolerance for artifacting and other compression errors may vary on a user-by-user basis. As such, the server300may give users404the option of selecting the level of quality that they prefer.

FIG. 5 illustrates an example method for dynamically adjusting performance 500. The method 500 begins at the start step 502. The method 500 may be started at various points in time or in response to various events. For example, the method 500 may be started when a user logs in to the service, at the user's request, when a match is selected, when a match is started, during a match, after a period of instability, during a benchmark, at a scheduled time, or at other times.

At step 504, the server 300 is initialized and prepared for adjusting performance. In certain implementations, the initialization includes receiving user data. User data may be data relating to a connected or connecting user 404, such as user login information, user name, user identifier, user password, remote console identifier, network connection preferences or settings, internet protocol address, network performance preferences, processing performance preferences, control preferences, vehicle preferences, history of past games, a target operations area, vehicle information, base station region information, and other user data. The user data may be received from media within the remote console 400, loaded from the player database 332, received as a response to a query, received as part of a connection, or obtained through other means.

At step506, the server300receives performance data. The performance data may be related to one or both of processing performance and network performance of various components of the system1000, including but not limited to the vehicle110, the base station130, the server300, and the remote console400. Network performance data may include data relating to network latency, bandwidth, jitter, error rate, dropped packets, out of order delivery, and other factors affecting network performance. Processing performance data may include data relating to processor speed, processor usage, RAM speed, RAM availability, video decoding speed, number of frames in a buffer, time between a frame arriving at the remote console400and the frame being displayed, and other factors affecting processing performance. Receiving performance data may continue for a particular amount of time, until a particular event occurs, or based on another factor. For example, the performance data may be analyzed every 10 seconds or once a change over a particular threshold is reached.

The server 300 may receive performance data in different ways. In certain implementations, the server 300 may passively receive data from one or more components of the system 1000. For example, a component of the system 1000 may periodically send performance data to the server 300. The server 300 may also obtain information from the packets that it receives as a part of its operation. For example, a packet of video data received from a component may be analyzed for information relating to performance (e.g., a timestamp, or artifacting or other errors in the data that may indicate an issue). The server 300 may also receive the information through direct requests. For example, the server 300 may ping a component to determine latency, send a file to test bandwidth, send a video file for decoding to test decoding speed, request that a component perform a diagnostic test, request that a component ping another component of the system 1000, or use other means of requesting information.

At step508, some or all of the received performance data is analyzed. This may include various steps or processes to determine the meaning of the received data. For example, with regard to diagnosing network performance based on a ping, several factors may be analyzed, including whether the packets are being received at all, the amount of the delay in the round-trip of the ping (e.g. whether the response time is greater than 400 ms), the duration of a particular delay (e.g. whether the response time is greater than 400 ms for longer than 10 seconds), or other factors.

In certain implementations, step508may include comparing raw performance numbers. For example, the current latency between the server300and the remote console400may be 80 ms and the previous value was 60 ms. This may indicate that there is a latency issue in the network and that one or more settings may need to be updated. However, this fluctuation may be a normal fluctuation in the performance of the network that will stabilize or otherwise be unnecessary to address. In that situation, updating performance settings for every change in performance may be undesirable to the user404or introduce its own performance issues. As such, the server300may use historical data or statistical analysis to determine the appropriateness or desirability of changing settings.

For example, the server 300 may compare the current latency with the typical historical average latency, median latency, standard deviation, or other data. Historical data may include data from previous gameplay sessions, the same session, other users 404 in similar circumstances, and other previously collected information. Further, the server 300 may use the duration of a performance change to determine whether a settings change should be made. For example, in some circumstances, an increase in latency lasting 2 seconds may be short enough to be ignored, while an increase in latency lasting 5 seconds may be long enough to need to be addressed.

In certain embodiments, this time interval may be based on historical performance or stored settings. A particular remote console400may typically undergo increases in latency for 5 seconds before returning to an approximately normal setting that need not be addressed. However, there may be another user404with a more stable connection where an increase in latency for even 1 second is atypical, and therefore the server300may update performance settings after only 1 second. This is because there may be natural fluctuations in network performance. In some stable connections (e.g., a T1 connection), fluctuations may be rare and short, while unstable connections (e.g., connecting over cellular data) may frequently have large fluctuations. In certain embodiments, the severity of the change may be analyzed. This may include determining whether certain change thresholds have been reached.
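The duration-based test described above might be sketched as follows. The per-user tolerated duration, the latency margin, and the sample format are assumptions made for illustration; the disclosure leaves the exact statistics open.

```python
def sustained_increase(samples, baseline_ms, margin_ms, tolerated_s):
    """Report whether latency stayed elevated for at least tolerated_s seconds.

    samples: list of (time_s, latency_ms) pairs in chronological order.
    A sample counts as elevated when it exceeds baseline_ms + margin_ms.
    tolerated_s would be larger for a connection that historically fluctuates
    (e.g., 5 s) and smaller for a historically stable one (e.g., 1 s).
    """
    elevated_since = None
    for time_s, latency_ms in samples:
        if latency_ms > baseline_ms + margin_ms:
            if elevated_since is None:
                elevated_since = time_s
            if time_s - elevated_since >= tolerated_s:
                return True
        else:
            elevated_since = None  # the spike ended; reset the timer
    return False
```

The same two-second spike can therefore be ignored for one user and acted on for another, depending only on the tolerated duration stored for that user.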

In certain implementations, step 508 may include calculating a performance score for one or both of the processing performance and the network performance. The performance score may include heuristic calculations based on received performance data. For example, a latency of between 0 ms and 50 ms receives a score of 10, between 50 ms and 100 ms receives a score of 5, between 100 ms and 200 ms receives a score of 2, and a latency greater than 200 ms receives a score of 0. The score need not be tied to directly measured values. For example, scores can be given based on the stability of the connection (e.g., the standard deviation of measured latency is small), or other factors. The server 300 may utilize a change in score to determine if an alteration should be made. The server 300 may make alterations to attempt to maximize or minimize certain scores.
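The example latency bands can be expressed as a small lookup. The text does not say which band a boundary value such as exactly 50 ms belongs to; the sketch below assumes each boundary belongs to the better-scoring band, and treats anything above 200 ms as a score of 0.

```python
# (upper bound in ms, score); boundaries are assumed inclusive on the upper end.
LATENCY_BANDS = [(50, 10), (100, 5), (200, 2)]


def latency_score(latency_ms):
    """Map a measured latency to the heuristic score from the example bands."""
    for upper_ms, score in LATENCY_BANDS:
        if latency_ms <= upper_ms:
            return score
    return 0
```

A drop from a score of 10 to a score of 2 between measurements could then serve as the change in score that prompts an alteration.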

In certain implementations, the analysis includes determining the location of performance issues. For example, the server 300 may determine the network performance between the base station 130 and the server 300 or between the server 300 and the remote console 400. As another example, the server 300 may determine where a processing performance issue is located, for example, at the vehicle 110, at the base station 130, at the server 300, at the remote console 400, or in other locations.

Based on the analyzed performance data, the server300determines whether to change the operation of the system1000or to leave it substantially the same. If the server300determines that the performance parameters need to be changed, then the process goes to step510.

At step 510, performance preferences and/or settings are updated. How the performance preferences are changed may be based on the analysis of the performance data in step 508.

For example, the server300may take different actions depending on the location of the problem. In certain implementations, the server300may dynamically allocate resources or resource requirements throughout the system1000. Resources may include additional bandwidth, priority processing, and other resources available to the server300, base station130, or other parts of the system1000. For example, if the issue is between the server300and base station130, then the server300may instruct the base station130to automatically increase compression to ease network issues at the cost of requiring additional resources to decode. Once the data packets reach the server300, the server300may utilize its increased processing performance to transcode the data into an easier to decode format for use by the remote consoles400.

As another example, if the base station130is having a processing or networking issue, the server300may send an alert to the referee126, decrease the number of vehicles110that may be playing the game concurrently at the base station130(e.g., at the start of the next game), activate an additional or backup base station130, deactivate one or more sensors, send an instruction to the vehicle110to send video of lower quality, alter an encoding parameter at the base station130, or make other changes.

If the server300is having a processing or network issue, the server300may send an alert to a referee126, decrease the number of vehicles110that may be playing the game concurrently at the base station130(e.g., at the start of the next game), activate an additional or backup server300, deactivate one or more sensors108, send an instruction to the vehicle110or base station130to send video of lower quality, alter an encoding parameter at the server300, or make other changes.

In certain implementations, the server 300 may address network issues by altering the performance of the vehicles 110. For example, game rules or difficulty settings may dictate that all users 404 should have at least 1 second to identify a gameplay element 106 and determine what to do. For instance, the operations area 100 may have a quickest reaction time moment where, after rounding a corner, there is a 10 meter straightaway and then an obstacle that must be avoided. If a maximum speed for the vehicles 110 is 9 meters per second, a vehicle 110 traveling at maximum speed would travel the 10 meters in approximately 1.11 seconds. If a user 404 has a delay of 100 ms, then the user 404 has approximately 1.11 seconds minus 100 ms, or 1.01 seconds, to make a decision. However, if the user 404 with the highest delay has a delay of 200 ms, then that user 404 would have only 0.91 seconds to react, which is below the threshold. The server 300 may detect this scenario based on the latency of the user 404 and readjust the speed of some or all of the vehicles 110 in order to ensure that all users 404 meet the minimum time. For example, the server 300 may reduce the maximum speed of all vehicles 110 to 8 meters per second. At this speed, the user with 200 ms of delay would have 1.25 seconds minus 200 ms, or 1.05 seconds, to make a decision, a value above the threshold.
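The reaction-time arithmetic in this example can be checked with a short sketch. The function names are hypothetical, and the model assumes the vehicle covers the straightaway at a constant maximum speed.

```python
def reaction_time_s(distance_m, speed_mps, delay_ms):
    """Time left to decide: travel time over the straightaway minus user delay."""
    return distance_m / speed_mps - delay_ms / 1000.0


def max_speed_for(distance_m, worst_delay_ms, min_reaction_s):
    """Highest speed at which the highest-delay user still gets min_reaction_s."""
    return distance_m / (min_reaction_s + worst_delay_ms / 1000.0)
```

For the 10 meter straightaway, a 200 ms delay, and a 1 second minimum, `max_speed_for` gives roughly 8.33 meters per second, which is consistent with the reduction to 8 meters per second described above.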

As another example, the speed of the vehicles 110 may be adjusted so as to ensure that all users 404 must make a decision within substantially the same calculated reaction time. For example, a variance of up to 10% may be acceptable, as could other values depending on chosen rules or user preferences. That is, vehicles 110 with lower delays or fewer network performance issues may have their maximum speed increased to reduce the amount of time that the user 404 has to make a decision. As another example, the server 300 could personalize the speed of the vehicles 110 and then adjust the time or score at the completion of the match based on the speed of a vehicle 110.

For example, a vehicle 110 that completed the course in 3 minutes at a speed of 10 meters per second may reach the finish first against a vehicle 110 that completed the course in 3 minutes 10 seconds at a speed of 8 meters per second; however, the server 300 may determine that the second-place vehicle 110 is the winner because it performed better considering its speed. This may be determined by, for example, declaring the winner based on how little equivalent distance was traveled (that is, completion time multiplied by speed). In the above scenario, the finished-first vehicle 110 may have traveled the equivalent of 1,800 meters, but the finished-second vehicle 110 traveled only 1,520 meters and therefore would be declared the winner.
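The speed-adjusted ranking in this example amounts to multiplying each vehicle's completion time by its speed and choosing the smallest product. A minimal sketch, with hypothetical names and a simple dictionary of results:

```python
def equivalent_distance_m(finish_time_s, speed_mps):
    """Distance the vehicle would have covered at its speed for its full time."""
    return finish_time_s * speed_mps


def pick_winner(results):
    """results maps a vehicle name to (finish_time_s, speed_mps).

    The winner is the vehicle with the smallest equivalent distance.
    """
    return min(results, key=lambda name: equivalent_distance_m(*results[name]))
```

With the figures above, 180 seconds at 10 meters per second gives 1,800 meters and 190 seconds at 8 meters per second gives 1,520 meters, so the vehicle that crossed the line second is selected.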

In certain cases, the server 300 may dynamically update performance parameters on a per-vehicle 110 basis, for example, by determining whether the delay should be applied to all of the vehicles 110 or just some. For example, if the highest-delay user 404 has been disqualified, lapped, or is otherwise unlikely or unable to win, the server 300 may exclude that vehicle 110 from the highest-delay vehicle calculation or only slow that vehicle's speed.

Dynamic update of performance parameters need not be limited to altering the speed of vehicles110. It may include altering the allocation of resources to vehicles110. As an example, the server300may detect that two or more vehicles110are racing towards a photo-finish and direct additional resources in order to improve the performance of the two or more vehicles110. This may be done at the expense of other vehicles110. As yet another example, the server300may shift resources away from vehicles110that have already completed the match and are still operating within the operations area100(e.g. because the vehicle110has crossed a finish line after completing a required number of laps and is taking a victory lap while the other vehicles110finish the match).

In certain embodiments, a digital representation of the operations area100may be generated and made available to the remote console400to allow for accurate control even during periods of increased latency. For example, by default the user404sees the video data that is streamed from the vehicle110, but when experiencing increased latency, the server300may detect the latency, cease sending video data, and instead send signals to the console400to display a portion of the digital representation based on position information of the vehicle110until the latency improves. This may be advantageous because the position data may require less bandwidth and may need to be transmitted less frequently and therefore may be more suitable for high-latency situations.

As another example, an auto-pilot feature (e.g., enabled by the auto-pilot module326) may activate if the server300or another part of the system1000detects performance issues. The auto-pilot feature may be provided at various levels of sophistication. The system1000may detect high latency with the user404and cause the vehicle110to gracefully stop motion. This may be useful to prevent the vehicle110from crashing and being damaged.

For instance, upon detecting performance issues, a component of the system 1000 (e.g., the base station 130) may transmit a signal to the vehicle 110 that is experiencing the issue to cause the vehicle 110 to gracefully stop. As a specific example, the vehicle 110 may be heading towards a wall of the track 102 at a high speed. If the vehicle 110 does not receive any instructions (e.g., because the user 404 has not sent any instructions, or because of a loss of connection with the server 300), the vehicle 110 may be directed by the base station 130 to coast to a stop. However, given a particular measured velocity of the vehicle 110, the vehicle 110 may hit the wall even if the vehicle 110 coasts to a stop. The base station 130 may be able to detect this collision scenario and the loss of connection with the server 300 and send instructions to the vehicle 110 to take maneuvers to avoid the collision and then come to a stop.
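One way a base station could decide whether coasting is enough is to compare the coasting distance with the distance to the wall. The sketch below assumes a constant coasting deceleration, so the stopping distance is v²/(2a); that model, the parameter names, and the two action labels are illustrative assumptions rather than details from the disclosure.

```python
def coasting_distance_m(speed_mps, decel_mps2):
    """Distance needed to coast to rest under constant deceleration."""
    return speed_mps ** 2 / (2.0 * decel_mps2)


def choose_stop_action(speed_mps, decel_mps2, distance_to_wall_m):
    """Pick between simply coasting and an avoidance maneuver before stopping."""
    if coasting_distance_m(speed_mps, decel_mps2) < distance_to_wall_m:
        return "coast_to_stop"
    return "avoid_then_stop"
```

For instance, at 5 meters per second with a 1 m/s² coasting deceleration the vehicle needs 12.5 meters, so a wall 10 meters ahead would call for the avoidance maneuver.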

In certain embodiments, the auto-pilot feature may direct the vehicle110to travel through the operations area100in a controlled manner. For example, the auto-pilot may send signals to the vehicle110to direct it to a nearby track exit or extraction point. As another example, the auto-pilot may send signals directing the vehicle110to travel through the operations area100on a predetermined path, such as following the track102, until connection is restored.

If the server300determines at step508that it is not desirable to update the performance preferences, then the flow returns to the step of receiving performance data506. This step506may be performed immediately, after a particular delay (e.g., a number of seconds), or on the occurrence of a particular event (e.g., the events described in relation to step502). While the steps of the method500are shown as discrete elements, one or more of the steps may be performed concurrently or substantially at the same time. For example, the server300may receive performance data, even while performance data is being analyzed, performance preferences are being updated, and/or the server300is being initialized.

Usage Example

The following paragraphs describe an example use of certain embodiments of the systems and methods described herein.

According to certain implementations, four users 404 are interested in playing a game using the system 1000: “PlayerOne”, “PlayerTwo”, “PlayerThree”, and “PlayerFour”. The users 404 begin by launching a game client on their respective remote consoles 400. The game client may be software installed or otherwise accessible from the user's remote console 400 that enables the user to control the game using a controller 402 and view video data. PlayerOne is playing the game on a smartphone and is using a touch screen on the smartphone as a controller 402. The video data is played on the screen as well. PlayerTwo is playing the game from a game client running on a video game console, is using a gamepad as a controller 402, and is viewing the video data using a virtual reality headset, which also serves as a secondary controller 402. PlayerThree is running the game client from a web application on a computer and begins by launching a web browser and navigating to a particular web address. PlayerThree is using a keyboard and mouse communicatively coupled to the computer as a controller 402 and will see the video data displayed on a computer monitor. PlayerFour is also running the game on a video game console, but does not have a virtual reality headset and will instead be viewing the video data on a television connected to the video game console.

The users404begin by logging into the server300using authentication information. PlayerOne logs in using a fingerprint scanner located on the smartphone. PlayerTwo and PlayerThree log in using a username and password. PlayerFour does not have an account yet, but registers with the server300by specifying a username, password, player information, and some initial preferences. The server300stores PlayerFour's registration information within a player database332. The information for the other users404is already present in the database332and is used to confirm their log in credentials. The server300facilitates the login by ensuring the users404have properly authenticated themselves, for example, by comparing the received authentication information with stored values in a database.

PlayerOne has upgraded his smartphone since the last time he played but is currently playing over a low-bandwidth cellular connection. The server 300 detects this change by analyzing an information packet sent from the game client that contains information relating to the remote console 400 and the connection. The server 300 updates his entry in the player database 332 to include data about the new remote console 400 and alters his settings so PlayerOne receives highly compressed video data in order to alleviate the bandwidth constraints. The server 300 chooses an advanced video encoding format to take advantage of the faster processor of PlayerOne's new smartphone. The server 300 compares the remote consoles 400 and connections of PlayerTwo and PlayerThree with historical data and determines that no change is necessary from previous settings. The server 300 detects that PlayerFour is a first-time user and automatically configures PlayerFour's initial performance settings based on the settings of other users 404 playing on similar remote consoles 400 on similar connections.

The users 404 each specify that they would like to find a game. PlayerOne and PlayerTwo are friends, so they specify that they would like to join a game together and have no operations area 100 preference. PlayerThree owns a vehicle 110 located at a location called “RegionOne” and requests that she be connected to the next game played at that location. RegionOne is a location that comprises multiple operations areas 100 where users 404 may play. PlayerFour specifies that he has no location preference and would like to be connected to the soonest-available location. The server 300 receives the find-game requests from the users 404 and adds them to a pool of other users 404 also looking for games. The server 300 places PlayerThree in a virtual lobby for the next game at RegionOne. RegionOne also happens to be the location with the next available opening, so PlayerOne, PlayerTwo, and PlayerFour are all placed in the lobby. While the users 404 are in the lobby, the server 300 enables the users 404 to communicate with each other through voice chat and written messages.

The server300detects that everyone has successfully joined by querying their remote consoles400. The server300then sends instructions to the remote consoles400of the users404to display a vote prompt, enabling the users404to vote on which operations area100of RegionOne the users404would like to use and which gameplay mode. The server300determines which voting choices to display by querying the base stations130within RegionOne for a status.

The server300receives responses from the base stations130that indicate that OperationsAreaOne is unavailable due to a tournament, OperationsAreaTwo is available for race and obstacle game modes, OperationsAreaThree is available for race, maze, and capture-the-flag game modes, and that OperationsAreaFour is unavailable because it is being cleaned. The server300then populates the vote options based on the status information. The users404vote and, after tallying the vote, the server300informs them that OperationsAreaTwo and the obstacle game mode have been chosen. The server300sends the results of the vote to the base station130at OperationsAreaTwo with instructions to prepare the operations area100for the obstacle game mode. The server300also sends the results to a referee126in charge of OperationsAreaTwo.
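The vote flow in this example can be sketched as follows, under the assumption that each ballot names one (operations area, game mode) pair. The data shapes and the tie-breaking rule are illustrative choices, not part of the disclosure.

```python
from collections import Counter


def vote_options(area_status):
    """Build (area, mode) choices from base-station status reports.

    area_status maps an area name to its list of available game modes;
    an unavailable area maps to an empty list and contributes no options.
    """
    return [(area, mode) for area, modes in area_status.items() for mode in modes]


def tally(votes, options):
    """Return the winning option, ignoring ballots that were not on offer.

    Ties go to the option listed first (an assumed rule).
    """
    counts = Counter(v for v in votes if v in options)
    if not counts:
        return None
    return max(options, key=lambda o: counts[o])
```

With the statuses described above, OperationsAreaOne and OperationsAreaFour produce no options, so only the two available areas appear on the ballot.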

The base station130checks its state and determines that the previous game mode played there was the race game mode. The base station130then changes its game mode to the obstacle game mode. The base station130sends signals to actuators that control game elements106to move them into position. In response, an actuator controlling a ramp game element106raises the ramp from an undeployed position to a deployed position, and the base station130alters the properties of the virtual barrier114to cause the track102to change course and go through a region of bumpy terrain. The referee126, in response to the instructions from the server300, increases the difficulty of the track by scattering gravel throughout a particular part of the track102to reduce traction.

While OperationsAreaTwo is being prepared, the server300sends instructions to the users404to choose a vehicle110from a list of vehicles. The server300populates the list of vehicles110by querying the base station130for a list of the vehicles110at RegionOne and comparing the list to the selected operations area100, game mode, vehicle database330, and player database332. The available vehicles110include air, water, and land vehicles, but the server300excludes water vehicles110because OperationsAreaTwo does not contain water. The server300also excludes air vehicles110from competing because the game mode does not support air vehicles; however, the server300leaves the air vehicles110available for spectating. The server300also analyzes the properties of certain vehicles110and customizes the vehicle list for each user404.
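The exclusion logic described above might look like the following sketch. The `Vehicle` type, the `kind` labels, and the two set parameters are hypothetical names introduced only for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Vehicle:
    name: str
    kind: str  # "land", "water", or "air"


def build_vehicle_list(vehicles, area_kinds, mode_kinds):
    """Split vehicles into those that may compete and those limited to spectating.

    area_kinds: vehicle kinds the operations area can physically host
    mode_kinds: vehicle kinds the game mode allows to compete
    """
    compete, spectate = [], []
    for v in vehicles:
        if v.kind not in area_kinds:
            continue  # e.g. water vehicles where the area contains no water
        if v.kind in mode_kinds:
            compete.append(v)
        elif v.kind == "air":
            spectate.append(v)  # air vehicles remain available for spectating
    return compete, spectate
```

Per-user customization (ownership, membership level, rental pricing) would be applied on top of this shared list.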

The server300queries for PlayerOne's entry in the player database332and determines that PlayerOne is a premium member and has access to premium level vehicles110in addition to base-level vehicles. As such, the premium vehicles110are available to PlayerOne at no additional cost, and displayed in the vehicle list for PlayerOne as free options. Through a similar method, the server300determines that PlayerTwo, PlayerThree, and PlayerFour may choose the base level vehicles110, choose to rent premium level vehicles110, or purchase vehicles110. PlayerOne chooses a premium level vehicle110. PlayerTwo chooses to rent a premium level vehicle110. The server300receives the rental request, loads PlayerTwo's stored payment information in the player database332, and confirms that PlayerTwo would like to pay a fee to rent the vehicle. PlayerTwo confirms this, her choice is accepted, and the server300causes her to be billed for the cost of renting the vehicle110. Because PlayerThree owns a vehicle110at RegionOne, that vehicle110is available to her but not to the other users404. She chooses that vehicle110. PlayerFour, being new, chooses to spectate the match by flying a base-level drone vehicle110.

The server300receives these requests and sends instructions to the base station130to prepare the selected vehicles. The base station130responds by sending activation signals to the vehicles110, running diagnostic checks to confirm the vehicles110are operational, and replying to the server300with connection information for the vehicles110. The server300receives the connection information and uses it to establish connections between the vehicles110and their respective users404. The server300detects that the vehicles110for PlayerOne and PlayerThree have autopilot options that enable the server300or base station130to drive the vehicles110to the starting line. The server300engages the autopilot feature and uses sensor data from the vehicles110to drive them to the starting line. The server300queries vehicle database330for information about PlayerTwo's vehicle110and determines that the vehicle110does not have an autopilot feature, so the server300sends a message to the referee126to move the vehicle110to the starting line manually. The referee126receives the message and does so. The server300also determines that PlayerFour's vehicle110is already on a launch pad and no further changes are needed before the match begins.

According to certain implementations, the server300confirms the vehicles are in the correct position for the competition to start. For instance, the server300may receive confirmation from sensors positioned on or near the track, such as sensors at or just behind the starting line, that the vehicles110for PlayerOne and PlayerThree have successfully been autopiloted to the starting line. In addition or alternatively, the vehicles110may confirm their positioning. In another example, such as PlayerTwo's vehicle110, the referee126may transmit confirmation that the vehicle has been manually placed on the starting line. If the server300is unable to confirm correct positioning of each of the vehicles110within a specified period of time, e.g., one or two minutes before or after the competition is to begin, those vehicles with unconfirmed positioning may be disqualified, the referee126may be prompted to correctly position one of the vehicles, and/or the vehicle and associated player may be required to take a penalty in the competition.

Once the vehicles110are in the correct position, the server300begins to forward the video data from the vehicles110to the remote consoles of their respective operators. Each vehicle110has a camera, which collects video data of the area around the vehicle. Because PlayerTwo has a virtual reality headset with input controls, she enables a setting that causes her head movement to translate to movement of the camera118. For example, if she turns her head to the left, a controller402in the headset sends a signal through the server300to the control system122of the vehicle110, causing the camera118to rotate to the left.

The video data from a camera118attached to each vehicle110is encoded according to initial configuration instructions received from the server300that correspond to the preferences and settings for each of the users404stored in the player database332. This video data is then wirelessly transmitted from the vehicle110to the base station130, which performs additional processing, if necessary, and transmits the video data to the server300. The server300receives the video data, performs additional processing, if necessary, and transmits the video data to the remote consoles of the users404for any necessary processing and display. The server300then performs diagnostics on the network and processing performance of each of the remote consoles400to determine whether parameters need to be changed before the match begins. The server does so by undergoing the method shown and described in FIG. 5, above. The server300detects that no change is necessary and that the match is ready to begin. As such, the server300sends a message that instructs the users404that the race will begin soon and starts a countdown clock. When the clock reaches zero, the users404are informed that the race has begun and the server300enables the users404to directly control their vehicles110.

During the match, the users404use their respective controllers402to control the motion of their vehicles. The input is transmitted from the controller402to the remote console400to the server300. The server300then transmits the input to the base station130, which sends the input to the vehicle110.

During the match, in addition to processing commands, the server300may synthesize match data for presentation to the users404. For example, the server300may collect position data of the vehicles110and display it on a heads up display (HUD) that is overlaid on the real-time video data. The HUD may display information relating to current match time, current lap number, context-sensitive information (e.g., the vehicle110is leaving the gameplay area, the user404received an in-game message, the user404has earned an achievement, advertising information, or other context-sensitive information), score, speed, and other information. The HUD may also display a compass showing orientation, an arrow indicating a direction that the user404should travel, a mini-map (e.g., a top-down map that shows the location of the user404in relation to operations area100elements and other users404), a picture-in-picture element (e.g., for showing video data from a secondary camera118), and other display elements.

During the course of the match, PlayerOne takes an early lead with PlayerTwo not far behind. PlayerTwo attempts to pass PlayerOne by taking a turn sharply. While turning, PlayerTwo begins to head off course. As her vehicle110approaches the barrier104, the server300receives information from the barrier104detailing how close PlayerTwo is to the barrier104. As the distance decreases, the server300sends increasingly aggressive warning messages to PlayerTwo. First, PlayerTwo's screen flashes red, warning her that she may be heading off course. Then, as she continues to approach the barrier104, the server300sends instructions to her remote console400to cause PlayerTwo's controller402to vibrate. PlayerTwo uses this information to correct her orientation and successfully passes PlayerOne.
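The escalating warnings can be sketched as a mapping from barrier distance to a set of cues. The distance thresholds and the cue names below are assumptions chosen for illustration; the disclosure does not specify values.

```python
def warning_cues(distance_m, flash_at_m=2.0, vibrate_at_m=1.0):
    """Return the warning cues to send for a given distance to the barrier.

    Thresholds are assumed values; the screen flash is triggered first and the
    controller vibration is added as the vehicle gets closer.
    """
    if distance_m <= vibrate_at_m:
        return ["flash", "vibrate"]
    if distance_m <= flash_at_m:
        return ["flash"]
    return []
```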

PlayerFour watches the pass from above and switches to a view from a sensor108on the ground to get a closer look. The server300receives the camera switch request, activates the chosen sensor108and transmits the video to PlayerFour's remote console400. In addition, the server300detects that PlayerFour may not be able to control his vehicle110as easily from this view, so the server300engages autopilot for the vehicle110. The vehicle110hovers and ignores control signals from PlayerFour until he switches back to the camera118on the vehicle110.

Because PlayerFour is spectating, rather than directly competing, the server300prioritizes video data quality over control responsiveness for PlayerFour. The server300does so by enabling a one-second delay for the otherwise live video data. This additional time enables video data to be encoded using bi-directional frames because the server300has time to buffer and wait to see what the next frame is. The additional level of encoding means that the video quality is able to be increased for PlayerFour.
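The buffering side of this trade-off can be illustrated with a fixed-delay frame queue. This sketch shows only how a delay gives an encoder look-ahead over later frames; it does not perform bi-directional encoding, and the class and method names are hypothetical.

```python
from collections import deque


class DelayBuffer:
    """Hold frames for a fixed delay before releasing them downstream."""

    def __init__(self, delay_s):
        self.delay_s = delay_s
        self._frames = deque()  # (capture_timestamp, frame), oldest first

    def push(self, timestamp, frame):
        self._frames.append((timestamp, frame))

    def pop_ready(self, now):
        """Return frames whose age is at least delay_s, oldest first."""
        ready = []
        while self._frames and now - self._frames[0][0] >= self.delay_s:
            ready.append(self._frames.popleft()[1])
        return ready
```

While a frame waits in the buffer, the frames captured after it are already available, which is the condition an encoder needs in order to reference a following frame.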

While being passed, PlayerOne's camera118becomes obscured by dirt and dust kicked up from the wheels of PlayerTwo's vehicle. The server300, while performing image recognition on PlayerOne's video data, detects that the video is obscured beyond a 25% obfuscation threshold and takes action to correct PlayerOne's view. Specifically, the server300sends instructions to the base station130to activate various cameras108around the operations area100and send the video data to the server300. The server300then sends a message to PlayerOne, informing him that a change will be made to his video data. The server300then uses position data for PlayerOne's vehicle110in order to determine which cameras108around the operations area100will show a view of the vehicle110. The server300uses this data to act as a director and switch PlayerOne's video feed to cameras108around the track102that will show his vehicle110. The server300also moves the camera feed from the camera118on the vehicle110to a picture-in-picture window on the screen of PlayerOne's phone. The server300detects that this will require additional bandwidth resources and reduces the quality of the picture-in-picture to balance the resource cost of adding the video data in this mode.
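A minimal sketch of the two decisions in this passage follows: whether the view is obscured beyond the 25% threshold, and which trackside camera to switch to. Choosing the nearest camera within a fixed range is an assumed selection rule, and the coordinate layout and function names are hypothetical.

```python
import math

OBSCURED_THRESHOLD = 0.25  # the 25% threshold from the example


def needs_view_change(obscured_fraction):
    """True when the obscured share of the image exceeds the threshold."""
    return obscured_fraction > OBSCURED_THRESHOLD


def pick_camera(vehicle_xy, cameras, max_range_m):
    """Return the name of the nearest camera within range, or None.

    cameras maps a camera name to its (x, y) position in metres.
    """
    best, best_d = None, max_range_m
    for name, (cx, cy) in cameras.items():
        d = math.hypot(cx - vehicle_xy[0], cy - vehicle_xy[1])
        if d <= best_d:
            best, best_d = name, d
    return best
```

Calling `pick_camera` again as the vehicle's position updates would let the feed follow the vehicle from camera to camera around the track.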

In addition, while playing, PlayerOne's cell phone picks up WiFi™ reception and his network performance improves significantly. The server300detects that PlayerOne has increased bandwidth and decreased latency through the method described in FIG. 5, so the server300causes the video data quality to improve from 24 frames per second to 48 frames per second.

During the course of the match, PlayerThree's computer crashes, causing her to lose connection with the server300. The server300detects that it is not receiving input information from PlayerThree and that PlayerThree's remote console400is not responding to ping requests. In response, the server300activates the auto-pilot module326and takes control of PlayerThree's vehicle110. The server300uses position data, location data, and other information relating to the vehicle110to guide the vehicle110at a leisurely pace around the track102. PlayerThree reboots her computer, and logs back in to the server300. The server300detects that PlayerThree is attempting to reconnect and has a match in progress, so the server300sends a message to her remote console asking her if she would like to reconnect to her match in progress or forfeit the match. PlayerThree chooses to reconnect. The server300reestablishes the connection, runs a diagnostic test on the remote console400to ensure that it is in a playable state, and then displays a countdown timer showing how long until PlayerThree will resume control of the vehicle110. PlayerThree resumes control and continues the match.
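The disconnect check in this example combines two signals: missing input and unanswered pings. A sketch under the assumption of a single shared timeout (the three-second value and the function names are hypothetical) is:

```python
def is_disconnected(now, last_input_at, last_pong_at, timeout_s=3.0):
    """True when neither input nor a ping reply has arrived within the timeout."""
    no_input = now - last_input_at > timeout_s
    no_pong = now - last_pong_at > timeout_s
    return no_input and no_pong


def controller_for(now, last_input_at, last_pong_at, timeout_s=3.0):
    """Decide who drives the vehicle: the player or the autopilot."""
    if is_disconnected(now, last_input_at, last_pong_at, timeout_s):
        return "autopilot"
    return "player"
```

Requiring both signals avoids handing control to the autopilot when a player is connected but simply not pressing anything.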

Claims