U.S. Pat. No. 10,099,147

Using a Portable Device to Interface with a Video Game Rendered on a Main Display

Assignee: Sony Interactive Entertainment Inc.

Issue Date: September 30, 2013

Abstract

A method for interfacing with a video game is provided, including: rendering display data on a main display, the display data defining a scene rendered by the video game, the display data being configured to include a visual cue; capturing the display data by an image capture device incorporated into a portable device; analyzing the captured display data to identify the visual cue; in response to identification of the visual cue, determining additional information that is in addition to the scene of the video game that is displayed on the main display, the additional information defining graphics or text to be added to the scene of the video game; presenting the additional information on a personal display incorporated into the portable device, the presentation of the additional information being synchronized to the rendering of the display data on the main display.

Description

DETAILED DESCRIPTION OF THE PREFERRED EMBODIMENTS

An invention is described for a system, device and method that provide an enhanced augmented reality environment. It will be obvious, however, to one skilled in the art, that the present invention may be practiced without some or all of these specific details. In other instances, well known process operations have not been described in detail in order not to unnecessarily obscure the present invention.

The embodiments of the present invention provide a system and method for enabling a low-cost consumer application related to augmented reality for entertainment and informational purposes. In one embodiment, a portable device with a display, a camera, and software configured to execute the functionality described below is provided. One exemplary illustration of the portable device is the PLAYSTATION PORTABLE (PSP) entertainment product combined with a universal serial bus (USB) 2.0 camera attachment and application software delivered on a Universal Media Disc (UMD) or some other suitable optical disc media. However, the invention could also apply to cell phones or PDAs with cameras. In another embodiment, the portable device can be further augmented through the use of wireless networking, which is a standard feature of the PSP. One skilled in the art will appreciate that Augmented Reality (AR) is a general term for mixing computer graphics with real video in such a way that the computer graphics add extra information to the real scene.

In one aspect of the invention, a user points the portable device, which has a display and a camera, at a real-world scene. The camera shows the scene on the portable device so that the user seems to be seeing the world through the device. Software stored on the device or accessed through a wireless network displays the real-world image and uses image processing techniques to recognize certain objects in the camera's field of view. Based on this recognition, the portable device constructs appropriate computer graphics and overlays them on the real-world image on the display.
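The recognize-then-overlay loop described above can be sketched in a few lines. This is a minimal illustration only: it assumes frames are small grids of integer pixel values and uses naive exact template matching as a stand-in for real image recognition, and the names `find_template`, `overlay`, and `augment` are hypothetical.

```python
from typing import List, Optional, Tuple

Frame = List[List[int]]  # a frame as rows of integer pixel values (simplifying assumption)


def find_template(frame: Frame, template: Frame) -> Optional[Tuple[int, int]]:
    """Return the (row, col) of the first exact match of `template` in `frame`, or None."""
    th, tw = len(template), len(template[0])
    for r in range(len(frame) - th + 1):
        for c in range(len(frame[0]) - tw + 1):
            if all(frame[r + i][c + j] == template[i][j]
                   for i in range(th) for j in range(tw)):
                return r, c
    return None


def overlay(frame: Frame, sprite: Frame, at: Tuple[int, int]) -> Frame:
    """Return a copy of `frame` with `sprite` drawn at `at`; sprite value 0 is transparent."""
    out = [row[:] for row in frame]
    r0, c0 = at
    for i, row in enumerate(sprite):
        for j, px in enumerate(row):
            r, c = r0 + i, c0 + j
            if px != 0 and 0 <= r < len(out) and 0 <= c < len(out[0]):
                out[r][c] = px
    return out


def augment(frame: Frame, marker: Frame, sprite: Frame) -> Frame:
    """Recognize `marker` in the camera frame and, if found, overlay `sprite` on it."""
    hit = find_template(frame, marker)
    return frame if hit is None else overlay(frame, sprite, hit)


# Usage: a 3x4 "camera frame" containing the marker pattern [9, 9] at row 1, column 2.
camera = [[1, 1, 1, 1],
          [1, 1, 9, 9],
          [1, 1, 1, 1]]
print(augment(camera, marker=[[9, 9]], sprite=[[7, 0]]))
# [[1, 1, 1, 1], [1, 1, 7, 9], [1, 1, 1, 1]]
```

Copying the frame in `overlay` keeps the captured image intact, so the same capture could be re-augmented with different graphics.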

Because the device is a portable handheld device with limited computing resources, objects may be chosen that the image recognition software can recognize with relative ease, i.e., in a manner suitable for the limited processing capabilities of the portable device. Some exemplary objects are listed below. This list is not exhaustive, and other recognizable objects may be used with the embodiments described herein.

Collectable or regular playing cards are one suitable object. In one embodiment, the playing cards have a fixed, high-contrast colored design. The design graphics are easy for the image recognition software to recognize. In addition, the graphics may be chosen so that the device can easily determine the orientation of the card. The portable device can then take the real image, remove the recognized special graphic, replace it with a computer-generated image, and show the resulting combination of real and computer graphics to the user on the display. As the card or the camera moves, the computer graphics move in the same way. In one embodiment, an animated character could be superimposed on the card. Alternatively, a book could be used. As with the cards, a clear design is used, and the portable device overlays registered computer graphics before displaying the scene to the user.
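Choosing a design whose appearance changes under rotation is what lets the device recover the card's orientation. A minimal sketch, assuming the recognized card region has already been cropped to the same grid size as the reference design and that only 90-degree rotations need to be distinguished (a real card can lie at any angle); `rotate90` and `detect_orientation` are hypothetical names:

```python
from typing import List, Optional

Grid = List[List[int]]


def rotate90(grid: Grid) -> Grid:
    """Rotate a grid 90 degrees clockwise."""
    return [list(row) for row in zip(*grid[::-1])]


def detect_orientation(patch: Grid, design: Grid) -> Optional[int]:
    """Return the clockwise rotation (0, 90, 180 or 270 degrees) that maps the
    reference `design` onto the observed `patch`, or None if none matches."""
    candidate = design
    for quarter_turns in range(4):
        if candidate == patch:
            return 90 * quarter_turns
        candidate = rotate90(candidate)
    return None


# An asymmetric design: only one corner is marked, so every rotation looks different.
design = [[1, 0],
          [0, 0]]
print(detect_orientation([[0, 0], [0, 1]], design))  # 180
```

A rotationally symmetric design would match at several angles and this function would simply return the first one, which is why the graphics should be chosen to make the orientation unambiguous. Once the angle is known, the replacement graphic can be rotated by the same amount so that it follows the card.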

In another embodiment, the clear graphic images can be displayed on a television (TV) either from a computer game, the Internet or broadcast TV. Depending upon the software application on the device, the user would see different superimposed computer graphics on the portable display as described further below.

In yet another embodiment, a user with the device can get additional product information by analyzing the standard bar code with the camera attachment. The additional product information may include price, size, color, quantity in stock, or any other suitable physical or merchandise attribute. Alternatively, by using a special graphic design recognized by the portable device, graphics can be superimposed on the retail packaging as seen by the portable device. In addition, catalogue information about the merchandise may be obtained through the wireless network of the store in which the merchandise is located. In one embodiment, the image data captured by the portable device is used to search a library of data, accessed through the wireless network, for a matching product. It should be appreciated that the embodiments described herein enable a user to obtain the information from a bar code without the use of special-purpose laser scanning equipment. The user would also own the device and could take it from store to store, which would make comparison shopping easier. Also, the device would be capable of much richer graphics than the bar code scanners available in-store. In one embodiment, retailers or manufacturers could provide optical disc media with catalogues of product information. The user would put the disc in the device, point the camera at a bar code, and see detailed product information.

With respect to music and video, the bar code would enable the portable device to access and play a sample of the music, so the user can effectively listen to part of the CD simply by capturing an image of the bar code. Similarly, for DVD and VHS videos, a trailer can be stored in the product catalogue on the removable media of the device. This trailer can be played back to the user after the user captures the bar code and the portable device processes the captured image and matches it to the corresponding trailer associated with the bar code. Likewise, a demo of a video game could be played for video game products. There are other possible uses, including product reviews, cross promotions, etc. Furthermore, it should be appreciated that the portable device does not scan the bar code the way conventional scanners do. Instead, the portable device performs image processing on a captured image of the bar code and matches it with a corresponding image to access the relevant data. Moreover, with an in-store wireless network and a portable device like the PSP (which is wireless network enabled), there is no need for a special removable disc media catalogue; the catalogue can be provided directly by the in-store wireless network.
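The difference between scanning a bar code and matching an image of one can be illustrated with a small sketch. It assumes, hypothetically, that one horizontal row of pixels across the captured bar code is available, turns that row into a bar/space bit pattern, and picks the catalogue entry whose stored pattern is closest by Hamming distance, so a few misread pixels are tolerated. The catalogue layout and the function names are illustrative assumptions; this is not a decoder for a real symbology such as UPC.

```python
from typing import Dict, List, Optional


def binarize(scanline: List[int], threshold: int = 128) -> str:
    """Map dark pixels (bars) to '1' and light pixels (spaces) to '0'."""
    return "".join("1" if px < threshold else "0" for px in scanline)


def hamming(a: str, b: str) -> int:
    """Count differing positions, treating any length difference as extra errors."""
    return sum(x != y for x, y in zip(a, b)) + abs(len(a) - len(b))


def match_product(scanline: List[int], catalogue: Dict[str, dict],
                  max_errors: int = 2) -> Optional[dict]:
    """Return the catalogue entry whose bar pattern best matches the captured
    scanline, or None if even the best match differs in more than `max_errors` bits."""
    bits = binarize(scanline)
    best = min(catalogue, key=lambda pattern: hamming(bits, pattern), default=None)
    if best is None or hamming(bits, best) > max_errors:
        return None
    return catalogue[best]


# Hypothetical catalogue shipped on removable media or served by an in-store network.
catalogue = {
    "1100110": {"title": "Example CD", "sample": "track01_preview"},
    "1010101": {"title": "Example DVD", "trailer": "trailer_preview"},
}
captured = [12, 30, 220, 90, 25, 40, 240]  # one noisy pixel: 90 reads as a bar
print(match_product(captured, catalogue)["title"])  # Example CD
```

Returning `None` when the best candidate is still too far away lets the device ask the user to re-aim the camera instead of showing the wrong product.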

In another embodiment, the portable device may be used as a secondary personal display in conjunction with a main display that is shared by several users. For example, several people may play a video game on a single TV and use the portable devices for additional information that is unique to each player. Likewise, with broadcast TV (e.g., a game show), several people in the home may watch a single broadcast but see different personal information on their portable devices depending upon their preferences. The portable device may also be used to obtain additional information from the main display. For example, with respect to a sports game, additional player information or statistics may be displayed for a selected player. It may be necessary to synchronize the graphics on the main display with those on the portable display. One approach is to use a wireless network or broadcast to send information to each display. An alternative method is to use visual cues from the main display to drive the synchronization with the portable display. As such, no additional, expensive network connections are required.
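One way to picture cue-driven synchronization is for the main display to embed a small machine-readable pattern in its frames and for the portable device to switch its personal content whenever it sees a new pattern. The sketch below assumes a hypothetical encoding in which the first pixel of the top row is a bright start flag and the next four pixels encode a cue ID in binary; neither the encoding nor the names come from the source.

```python
from typing import Dict, Iterable, Iterator, List, Optional, Tuple

Frame = List[List[int]]


def decode_cue(frame: Frame, bits: int = 4, threshold: int = 128) -> Optional[int]:
    """Read a cue ID from the top row of a captured frame, or None if no start flag."""
    row = frame[0]
    if row[0] < threshold:          # no bright start flag: this frame carries no cue
        return None
    cue = 0
    for px in row[1:1 + bits]:      # bright = 1, dark = 0, most significant bit first
        cue = (cue << 1) | (1 if px >= threshold else 0)
    return cue


def sync_overlays(frames: Iterable[Frame],
                  content: Dict[int, str]) -> Iterator[Tuple[int, str]]:
    """Yield (frame_index, personal_content) each time a new, known cue appears,
    so the portable display changes in step with the main display."""
    current = None
    for index, frame in enumerate(frames):
        cue = decode_cue(frame)
        if cue is not None and cue != current:
            current = cue
            if cue in content:
                yield index, content[cue]


frames = [
    [[0, 0, 0, 0, 0]],          # no cue
    [[255, 0, 0, 255, 255]],    # cue 0b0011 = 3
    [[255, 0, 0, 255, 255]],    # same cue again: nothing new to show
    [[255, 0, 255, 0, 0]],      # cue 0b0100 = 4
]
content = {3: "Player 7: 21 points, 4 assists", 4: "Replay angle B"}
print(list(sync_overlays(frames, content)))
```

Because the timing comes from the frames themselves, no network link between the main display and the portable device is needed for this kind of synchronization.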

FIG. 1 is a simplified schematic diagram of a device having image capture capability, which may be used in an augmented reality application in accordance with one embodiment of the invention. Portable device 100 includes navigation buttons 104 and display screen 102. Device 100 is capable of accepting memory card 106 and image capture device 108. Image capture device 108 may include a charge-coupled device (CCD) in order to capture an image of a real-world scene. Alternatively, the camera functionality may be provided by a complementary metal-oxide-semiconductor (CMOS) chip that uses an active pixel architecture to perform camera functions on-chip. In one embodiment, device 100 is a PSP device having image capture capability.

FIGS. 2A and 2B are side views of the portable device illustrated in FIG. 1. FIG. 2A shows device 100 with memory card slot 110 and display panel 102. Image capture device 108 is located on a top surface of device 100. It should be appreciated that image capture device 108 may be a pluggable device or may be hard-wired into device 100. FIG. 2B illustrates an alternative embodiment of device 100 of FIG. 1. Here, image capture device 108 is located on a backside of device 100. Therefore, a user viewing display screen 102 may have the same viewing angle as image capture device 108. As illustrated, device 100 of FIG. 2B also includes memory card slot 110. It should be appreciated that the memory card may be interchanged between users in order to swap information with other users.

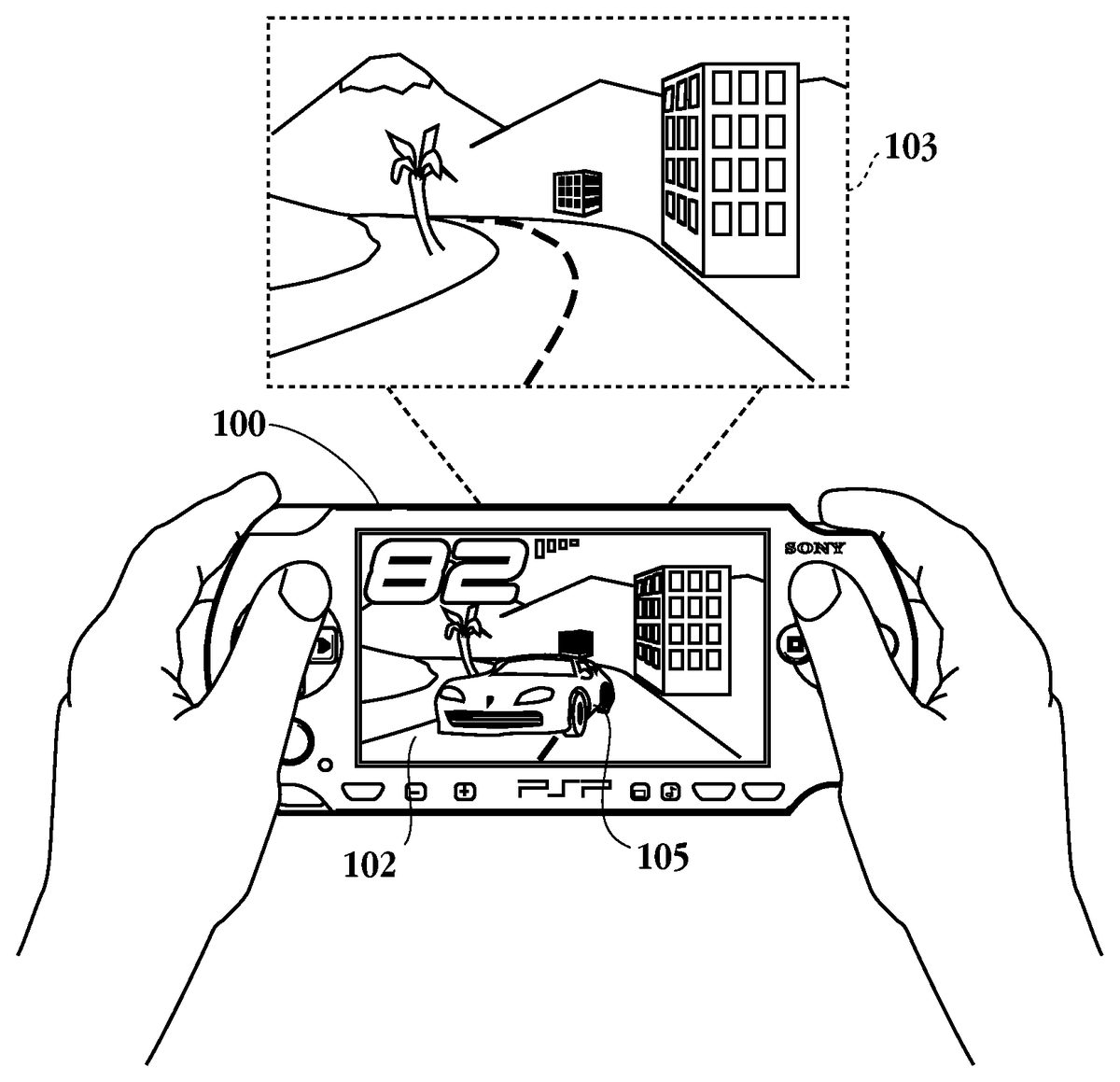

FIG. 3 is a simplified schematic diagram of an image capture device being utilized in an augmented reality application in accordance with one embodiment of the invention. Here, device 100 is being held by a user, with real-world scene 103 being augmented with computer graphics on display screen 102. Real-world scene 103 includes a street bordered by buildings, with mountain scenery in the background. The computer graphic incorporated into real-world scene 103 is car 105. In one embodiment, logic within the portable device recognizes the road or a marker on the road, e.g., the dividing line of the road, and incorporates the car into the scene. It should be appreciated that while a PLAYSTATION PORTABLE device is illustrated in FIGS. 1-3, the embodiments described herein may be incorporated into any handheld device having camera capability. Other suitable devices include a cell phone, a personal digital assistant, a web tablet, and a pocket PC.

FIG. 4 is a simplified schematic diagram illustrating yet another application of the incorporation of computer graphics into a real-world scene in accordance with one embodiment of the invention. Here, a user is holding portable device 100, which includes display 102. It should be noted that display 102 is expanded relative to device 100 for ease of explanation. An image capture device incorporated into device 100 captures a scene being displayed on display device 112, which may be a television. Here, display device 112 shows a tree 114. Device 100 captures the image being displayed on device 112 and displays tree 114 on display screen 102. In addition to showing tree 114 on display screen 102, device 100 incorporates additional objects into the scene. For example, sun 116 is incorporated into the scene being displayed on display screen 102. As described above, a marker, such as marker 115 of the first display device, may cause the incorporation of additional objects such as sun 116 into the second display device. It should be appreciated that device 100 includes logic capable of recognizing objects such as tree 114 or marker 115 and thereafter responding to the recognition of such objects or markers by adding appropriate computer graphics, such as sun 116, into the scene being displayed on device 100. Furthermore, the image capture device incorporated into portable device 100 may be a video capture device that continuously captures the changing frames on display device 112 and incorporates additional objects accordingly. As mentioned above, visual cues from the main display may be used to drive the synchronization with the portable display.

FIG. 5 is a simplified schematic diagram showing a plurality of users viewing a display monitor with handheld devices in accordance with one embodiment of the invention. Here, display device 120 is a single display device but is illustrated three different times for ease of explanation. Users 101a through 101c have corresponding handheld portable devices 100a through 100c, respectively. It should be appreciated that a game show, computer game, sporting event, or some other suitable display may be presented on display screen 120. Portable devices 100a, 100b, and 100c capture the image being displayed on display screen 120 and augment the captured image with image data or graphics in order to provide additional information to users 101a through 101c. In one embodiment, a game show being displayed on display device 120 is viewed by each of users 101a through 101c, so that users 101a through 101c may compete with each other. In another embodiment, the display on display screen 120, which is captured by devices 100a through 100c, includes data that may be analyzed by logic within devices 100a through 100c so that each of the users sees a somewhat different display on the corresponding display screen. For example, in a game of charades, one of the users 101a through 101c may have access to the answer while the other users do not. In this embodiment, the television broadcast system may be used to incorporate extra data into the display data being shown by display 120 in order to provide extra functionality for users 101a through 101c. In essence, devices 100a through 100c enable extra data in the image being displayed on display 120 to be turned on. The extra data may be triggered by graphics within display 120 that are recognized by the image recognition logic of the portable device.
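The charades example, in which the same captured cue produces different personal displays, amounts to looking up content by both the recognized cue and something about the individual user. A minimal sketch, assuming a hypothetical content table keyed by cue ID and by a per-player role (a user preference setting could serve as the second key in the same way):

```python
from dataclasses import dataclass
from typing import Dict, Optional


@dataclass(frozen=True)
class Player:
    name: str
    role: str  # hypothetical roles, e.g. "actor" (knows the answer) or "guesser"


# Hypothetical table: cue ID -> role -> text for that player's personal display.
CONTENT: Dict[int, Dict[str, str]] = {
    7: {"actor": "Answer: 'Jaws'. Act it out!", "guesser": "Guess the movie title."},
}


def personal_view(player: Player, cue: int,
                  content: Dict[int, Dict[str, str]] = CONTENT) -> Optional[str]:
    """Return what this player's portable device should show for a recognized cue."""
    return content.get(cue, {}).get(player.role)


players = [Player("101a", "actor"), Player("101b", "guesser"), Player("101c", "guesser")]
for p in players:
    print(p.name, "->", personal_view(p, cue=7))
```

All three devices recognize the same cue on display 120; only the second lookup key differs, which is what lets one broadcast drive different personal displays.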

FIGS. 6A and 6B show yet another application of a portable device capable of recognizing graphical data in accordance with one embodiment of the invention. Here, a user has a portable device 100a with display screen 102a. As mentioned above, display screen 102a is enlarged for ease of explanation. Device 100a is capable of being networked to in-store network 131a. Device 100a captures an image of a barcode 132a associated with product 130a. By recognizing barcode 132a and communicating with in-store network 131a wirelessly, device 100a is enabled to download information concerning the characteristics of item 130a. It should be appreciated that, in place of barcode 132a, device 100a may recognize a storage box containing item 130a or item 130a itself. Then, by communicating with in-store network 131a and comparing the captured image data with a library from in-store network 131a, device 100a is able to locate the characteristics, such as price, size, color, etc., of item 130a. The user then may move to store Y and use device 100a to download characteristics associated with item 130b. Here again, a barcode 132b or image data of item 130b or its storage container may be used to access the item characteristics, which can be any catalogue characteristics from in-store network 133a. From this data, the user is then able to compare the characteristics of item 130a in store X and item 130b in store Y. Thus, where items 130a and 130b are the same items, the user is able to perform comparison shopping in the different locations.

FIG. 7 is a simplified schematic diagram illustrating the use of a portable device and a card game application in accordance with one embodiment of the invention. Here, the user is pointing device 100 toward cards 140a and 140b. Cards 140a and 140b may have symbols or some kind of graphical data that is recognized by logic within device 100. For example, card 140a has image 142a and numbers 142b, which may be recognized by device 100. Card 140b includes barcode 142c and marker 142d, which also may be recognized by device 100. In one application, these markings may indicate the value of the cards in order to determine which card is the highest. Once the images/markings of cards 140a and 140b are processed by the logic within device 100, a simulated fight may take place on display screen 102, where the winner of the fight will be associated with the higher of cards 140a and 140b. With respect to collectable cards, by using portable device 100 and a special recognizable design on the card (possibly the back of the card), a new computer-generated graphic can be superimposed on the card and displayed on the portable display. For example, for sports cards, the sports person or team on the card can be superimposed in a real 3D view and animated throwing the ball, etc. For role-playing games, it is possible to combine the cards and a video game on the portable device so that collecting physical cards becomes an important part of the game. In this case, a character of the game may be personalized by the player, and this information could be swapped with other players via a wireless network or via removable media (e.g., Memory Stick).
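Deciding the simulated fight reduces to turning each card's recognized markings into a value and comparing the values. The sketch assumes, hypothetically, that recognition yields either a printed number (as with numbers 142b on card 140a) or a bar code ID that is looked up in a value table (as with barcode 142c on card 140b); the table, the ID strings, and the tie rule are illustrative choices.

```python
from typing import Dict, Optional

# Hypothetical lookup table for cards whose value is encoded by a bar code.
BARCODE_VALUES: Dict[str, int] = {"CARD-0042": 9, "CARD-0007": 3}


def card_value(markings: Dict[str, str]) -> int:
    """Derive a card's value from its recognized markings."""
    if "number" in markings:                     # printed numbers are read directly
        return int(markings["number"])
    return BARCODE_VALUES[markings["barcode"]]   # otherwise resolve the bar code


def fight_winner(cards: Dict[str, Dict[str, str]]) -> Optional[str]:
    """Return the ID of the highest-valued card, or None on a tie for the top value."""
    values = {card_id: card_value(m) for card_id, m in cards.items()}
    top = max(values.values())
    leaders = [card_id for card_id, v in values.items() if v == top]
    return leaders[0] if len(leaders) == 1 else None


print(fight_winner({"140a": {"number": "7"}, "140b": {"barcode": "CARD-0042"}}))  # 140b
```

The animation of the fight would then be chosen so that the character associated with the returned card wins.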

A similar technique could be used to augment business cards. In addition to the normal printed material on a business (or personal) card, a special identifying graphic could be included. This graphic can be associated with the individual and will reference information about that person, potentially including photos, video, and audio as well as the normal contact information. The personal information could be exchanged via removable media. In another embodiment, a unique graphic is used to index an online database via a wireless network to get the information about that person. Having accessed the information, a superimposed graphic, e.g., the person's photo, can be created in place of the graphic on the portable display.

FIG. 8 is a flow chart illustrating the method operations for augmenting display data presented to a viewer in accordance with one embodiment of the invention. The method initiates with operation 150, where the display data is presented on a first display device. Here, the display is shown on a television, computer monitor, or some other suitable display device. Then, in operation 152, the display data on the display device is captured with an image capture device. For example, the portable device discussed above is one exemplary device having image capture capability, which includes video capture capability. The captured display data is then analyzed in operation 154. This analysis is performed by logic within the portable device. The logic includes software, hardware, or some combination of the two. In operation 156, a marker within the captured display data is identified. The marker may be any suitable marker, such as the markers illustrated in FIGS. 4 and 7. In operation 158, additional display data is defined in response to identifying the marker. The additional display data is generated by image generation logic of the portable device. Alternatively, the additional data may be downloaded from a wireless network. The captured display data and the additional display data are then presented on a display screen of the image capture device in operation 160.
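Operations 150 through 160 of FIG. 8 can be strung together as a short pipeline. In this sketch, assumed for illustration, the captured display data is reduced to a list of recognized scene labels, and the marker-identification, local-generation, and download steps are passed in as functions. Trying local generation first and the wireless download second is one possible policy, not one the flow chart prescribes. The labels reuse the FIG. 4 example.

```python
from typing import Callable, List, Optional

Scene = List[str]  # captured display data, reduced to recognized element labels


def augment_display(captured: Scene,
                    identify_marker: Callable[[Scene], Optional[str]],
                    generate: Callable[[str], Optional[Scene]],
                    download: Callable[[str], Optional[Scene]]) -> Scene:
    """Return the scene to show on the portable device's screen."""
    marker = identify_marker(captured)        # operations 154 and 156
    if marker is None:
        return captured                       # nothing recognized: show the capture as-is
    extra = generate(marker) or download(marker) or []   # operation 158
    return captured + extra                   # operation 160: captured + additional data


# FIG. 4 style example: marker 115 on the TV triggers the sun 116 graphic.
local_graphics = {"marker 115": ["sun 116"]}
result = augment_display(
    captured=["tree 114", "marker 115"],      # operations 150 and 152 happen upstream
    identify_marker=lambda scene: next((s for s in scene if s.startswith("marker")), None),
    generate=local_graphics.get,
    download=lambda marker: None,             # hypothetical: no network available
)
print(result)  # ['tree 114', 'marker 115', 'sun 116']
```

Passing the steps in as functions keeps the flow independent of whether recognition and generation run in dedicated hardware or in software.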

FIG. 9 is a flow chart illustrating the method operations for providing information in a portable environment in accordance with one embodiment of the invention. The method initiates with operation 170, where an image of a first object is captured in a first location. For example, an image of an item in a first store may be captured here. In operation 172, the object characteristics of the first object are accessed based upon the image of the first object. For example, a wireless network may be accessed within the store in order to obtain the object characteristics of the first object. Then, in operation 174, the user may move to a second location. In operation 176, an image of a second object in the second location is captured. The object characteristics of the second object are accessed based upon the image of the second object in operation 178. It should be appreciated that in operations 172 and 178 the image data, not laser scan data, is used to access the object characteristics. In operation 180, the object characteristics of the first object and of the second object are presented to a user. Thus, the user may perform comparison shopping with a portable device based upon the recognition of video image data and access to in-store networks.
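The comparison-shopping flow of FIG. 9 can be sketched as two image-based lookups against two different store catalogues followed by a side-by-side presentation. The sketch assumes each in-store network exposes its catalogue as a mapping from an image signature (for example, one derived from the captured bar code or packaging image) to item characteristics; the signature strings, prices, and function names are hypothetical.

```python
from typing import Dict, Tuple

Characteristics = Dict[str, object]
Catalogue = Dict[str, Characteristics]  # image signature -> item characteristics


def access_characteristics(signature: str, catalogue: Catalogue) -> Characteristics:
    """Operations 172/178: look up an item by its image signature, not laser-scan data."""
    return catalogue[signature]


def compare(first: Characteristics,
            second: Characteristics) -> Dict[str, Tuple[object, object]]:
    """Operation 180: pair up every characteristic reported by either store."""
    return {key: (first.get(key), second.get(key))
            for key in sorted(set(first) | set(second))}


# Hypothetical catalogues served by the in-store networks of store X and store Y.
store_x: Catalogue = {"sig-130": {"price": 19.99, "color": "black", "in_stock": 4}}
store_y: Catalogue = {"sig-130": {"price": 17.49, "color": "black"}}

item_a = access_characteristics("sig-130", store_x)  # operations 170 and 172, store X
item_b = access_characteristics("sig-130", store_y)  # operations 174 to 178, store Y
for key, (x, y) in compare(item_a, item_b).items():
    print(f"{key:9} store X: {x!s:6} store Y: {y}")
```

A characteristic that only one store reports shows up paired with `None`, so the user can see at a glance which data is missing rather than having it silently dropped.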

FIG. 10 is a simplified schematic diagram illustrating the modules within the portable device in accordance with one embodiment of the invention. Portable device 100 includes central processing unit (CPU) 200, augmented reality logic block 202, memory 210, and charge-coupled device (CCD) logic 212. As mentioned above, a complementary metal-oxide-semiconductor (CMOS) image sensor may perform the camera functions on-chip in place of CCD logic 212. One skilled in the art will appreciate that a CMOS image sensor draws less power than a CCD. The modules communicate with each other through bus 208. Augmented reality logic block 202 includes image recognition logic 204 and image generation logic 206. It should be appreciated that augmented reality logic block 202 may be a semiconductor chip incorporating the logic to execute the functionality described herein. Alternatively, the functionality described with respect to augmented reality logic block 202, image recognition logic 204, and image generation logic 206 may be performed in software. Here, the code may be stored within memory 210.
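When the functionality of augmented reality logic block 202 is performed in software, the module split of FIG. 10 can be mirrored directly. A minimal sketch under stated assumptions: the sensor is modeled as any object with a `read_frame` method (so a CCD or a CMOS driver could be swapped in), frames are lists of recognized labels, and all class names are hypothetical. The example reuses car 105 and the road's dividing line from FIG. 3.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional, Protocol

Frame = List[str]  # simplified: a captured frame as recognized element labels


class ImageSensor(Protocol):
    """Anything that can deliver frames, e.g. a CCD- or a CMOS-based camera driver."""
    def read_frame(self) -> Frame: ...


@dataclass
class ImageRecognitionLogic:                 # corresponds to block 204
    markers: Dict[str, str]                  # element label -> marker ID

    def recognize(self, frame: Frame) -> Optional[str]:
        return next((self.markers[e] for e in frame if e in self.markers), None)


@dataclass
class ImageGenerationLogic:                  # corresponds to block 206
    graphics: Dict[str, Frame]               # marker ID -> graphics to add

    def generate(self, marker_id: str) -> Frame:
        return self.graphics.get(marker_id, [])


@dataclass
class AugmentedRealityLogic:                 # corresponds to block 202
    recognition: ImageRecognitionLogic
    generation: ImageGenerationLogic

    def process(self, sensor: ImageSensor) -> Frame:
        frame = sensor.read_frame()
        marker_id = self.recognition.recognize(frame)
        return frame if marker_id is None else frame + self.generation.generate(marker_id)


class FakeCmosSensor:                        # stand-in sensor for the usage example
    def read_frame(self) -> Frame:
        return ["street", "dividing line"]


ar = AugmentedRealityLogic(ImageRecognitionLogic({"dividing line": "road"}),
                           ImageGenerationLogic({"road": ["car 105"]}))
print(ar.process(FakeCmosSensor()))  # ['street', 'dividing line', 'car 105']
```

Typing the sensor as a structural `Protocol` means the augmented reality logic never needs to know which image sensor technology is installed.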

In summary, the above-described invention provides a portable device capable of delivering an enriched augmented reality experience. It should be appreciated that while the markers and graphics recognized by the system in the embodiments above are computer generated, the invention is not limited to computer-generated markers. For example, a set of pre-authored symbols and a set of user-definable symbols can be created that can be recognized even when drawn by hand, provided they are drawn in a manner recognizable to the camera of the image capture device. In this way, players could create complex 3D computer graphics by drawing simple symbols. In one embodiment, a player might draw a smiley face character, and this might be recognized by the device and shown on the display as a popular cartoon or game character smiling. With user-definable designs, users can also establish secret communications using these symbols.
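Recognizing hand-drawn, user-definable symbols calls for tolerant matching rather than the exact matching that suits printed markers. A minimal sketch, assuming each drawing has already been scaled onto a small fixed-size grid of 0/1 cells; the `SymbolBook` class, the 0.8 agreement threshold, and the example symbol are hypothetical.

```python
from typing import Dict, Optional, Tuple

Glyph = Tuple[Tuple[int, ...], ...]  # a drawing scaled to a fixed grid of 0/1 cells


def agreement(a: Glyph, b: Glyph) -> float:
    """Fraction of cells on which two equally sized glyphs agree."""
    cells = [(x, y) for row_a, row_b in zip(a, b) for x, y in zip(row_a, row_b)]
    return sum(x == y for x, y in cells) / len(cells)


class SymbolBook:
    """Pre-authored and user-defined symbols, each mapped to what it should display."""

    def __init__(self, min_agreement: float = 0.8) -> None:
        self.min_agreement = min_agreement
        self.symbols: Dict[Glyph, str] = {}

    def register(self, glyph: Glyph, meaning: str) -> None:
        self.symbols[glyph] = meaning

    def recognize(self, drawing: Glyph) -> Optional[str]:
        """Return the meaning of the closest registered symbol, if it is close enough."""
        scored = [(agreement(drawing, g), m) for g, m in self.symbols.items()]
        if not scored:
            return None
        score, meaning = max(scored)
        return meaning if score >= self.min_agreement else None


book = SymbolBook()
smiley: Glyph = ((0, 1, 0, 1, 0),
                 (0, 0, 0, 0, 0),
                 (1, 0, 0, 0, 1),
                 (0, 1, 1, 1, 0))
book.register(smiley, "popular cartoon character, smiling")
wobbly = ((0, 1, 0, 1, 0),   # a hand-drawn version with one stray mark
          (0, 0, 1, 0, 0),
          (1, 0, 0, 0, 1),
          (0, 1, 1, 1, 0))
print(book.recognize(wobbly))  # popular cartoon character, smiling
```

Because the codebook is just a mapping that users can extend, two players who register the same private symbols could use them as the secret communication channel mentioned above.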

With the above embodiments in mind, it should be understood that the invention may employ various computer-implemented operations involving data stored in computer systems. These operations include operations requiring physical manipulation of physical quantities. Usually, though not necessarily, these quantities take the form of electrical or magnetic signals capable of being stored, transferred, combined, compared, and otherwise manipulated. Further, the manipulations performed are often referred to in terms such as producing, identifying, determining, or comparing.

The above-described invention may be practiced with other computer system configurations, including handheld devices, microprocessor systems, microprocessor-based or programmable consumer electronics, minicomputers, mainframe computers, and the like. The invention may also be practiced in distributed computing environments where tasks are performed by remote processing devices that are linked through a communications network.

The invention can also be embodied as computer readable code on a computer readable medium. The computer readable medium is any data storage device that can store data, which can be thereafter read by a computer system. Examples of the computer readable medium include hard drives, network attached storage (NAS), read-only memory, random-access memory, CD-ROMs, CD-Rs, CD-RWs, magnetic tapes, and other optical and non-optical data storage devices. The computer readable medium can also be distributed over a network coupled computer system so that the computer readable code is stored and executed in a distributed fashion.

Although the foregoing invention has been described in some detail for purposes of clarity of understanding, it will be apparent that certain changes and modifications may be practiced within the scope of the appended claims. Accordingly, the present embodiments are to be considered as illustrative and not restrictive, and the invention is not to be limited to the details given herein, but may be modified within the scope and equivalents of the appended claims. In the claims, elements and/or steps do not imply any particular order of operation, unless explicitly stated in the claims.

Claims

- A method for interfacing with a video game, comprising: rendering display data on a main display, the display data defining a scene rendered by the video game, the display data being configured to include a visual cue; capturing the display data by an image capture device incorporated into a portable device while being directed toward the main display to enable the capturing; analyzing the captured display data to identify the visual cue as depicted in the scene of the video game as depicted in the display data; in response to identification of the visual cue, determining additional information that is in addition to the scene of the video game that is displayed on the main display, the additional information defining graphics or text to be added to the scene of the video game; presenting the additional information on a personal display incorporated into the portable device, the presentation of the additional information being synchronized to the rendering of the display data on the main display, the synchronization being driven by the identification of the visual cue.

- The method of claim 1, wherein the image capture device is a video capture device configured to continuously capture changing frames defined by the display data as it is rendered on the main display, wherein the additional information presented on the personal display is updated accordingly based on identification of the visual cue or additional visual cues defined in the changing frames by the display data.

- The method of claim 1, wherein the visual cue is defined by an object of the video game or a marker.

- The method of claim 1, wherein the additional information defines at least one additional object of the video game.

- The method of claim 1, wherein determining the additional information includes receiving the additional information over a wireless connection by the portable device.

- The method of claim 1, wherein determining the additional information includes generating the additional information by the portable device.

- The method of claim 1, wherein presenting the additional information includes incorporating the graphics into the scene of the video game to provide extra functionality for the video game.

- The method of claim 1, wherein determining additional information includes accessing a user preference setting defined for a user of the portable device, the additional information being defined based on the user preference setting.

- A method for interfacing with a video game, comprising: rendering display data on a main display, the display data defining a scene rendered by the video game, the display data being configured to include a visual cue;capturing the display data by a first image capture device incorporated into a first portable device while being directed toward the main display to enable the capturing;analyzing the captured display data of the first image capture device to identify the visual cue as depicted in the scene of the video game as depicted in the display data, and, in response to identification of the visual cue, determining first additional information that is in addition to the scene of the video game that is displayed on the main display, the first additional information defining graphics or text to be added to the scene of the video game;presenting the first additional information on a first personal display incorporated into the first portable device, the presentation of the first additional information being synchronized to the rendering of the display data on the main display, the synchronization being driven by the identification of the visual cue;capturing the display data by a second image capture device incorporated into a second portable device while being directed toward the main display to enable the capturing;analyzing the captured display data of the second image capture device to identify the visual cue as depicted in the scene of the video game as depicted in the display data, and, in response to identification of the visual cue, determining second additional information that is in addition to the scene of the video game that is displayed on the main display, the second additional information defining graphics or text to be added to the scene of the video game, the second additional information being different from the first additional information;presenting the second additional information on a second personal display incorporated into the second 
portable device, the presentation of the second additional information being synchronized to the rendering of the display data on the main display, the synchronization being driven by the identification of the visual cue.

- The method of claim 9, wherein the first image capture device is a first video capture device configured to continuously capture changing frames defined by the display data as it is rendered on the main display, wherein the first additional information presented on the first personal display is updated accordingly based on identification of the visual cue or additional visual cues defined in the changing frames by the display data; wherein the second image capture device is a second video capture device configured to continuously capture the changing frames defined by the display data as it is rendered on the main display, wherein the second additional information presented on the second personal display is updated accordingly based on identification of the visual cue or additional visual cues defined in the changing frames by the display data.

- The method of claim 9, wherein the visual cue is defined by an object of the video game or a marker.

- The method of claim 9, wherein the first additional information or the second additional information defines at least one additional object of the video game.

- The method of claim 9, wherein determining the first additional information or the second additional information includes receiving the additional information over a wireless connection by the first portable device or the second portable device, respectively.

- The method of claim 9, wherein determining the first additional information or the second additional information includes generating the first additional information or the second additional information, respectively, by the first portable device or the second portable device, respectively.

- The method of claim 9, wherein presenting the first additional information or the second additional information includes incorporating the graphics or text of the first additional information, or the graphics or text of the second additional information, respectively, into the scene of the video game to provide extra functionality for the video game.

- The method of claim 9, wherein determining the first additional information or the second additional information includes accessing a user preference setting defined for a user of the first portable device or the second portable device, respectively, the first additional information or the second additional information being respectively defined based on the user preference setting.

- A portable device for interfacing with a video game, comprising: an image capture device configured to capture display data rendered on a main display while being directed toward the main display to enable the capturing, the display data defining a scene rendered by the video game, the display data being configured to include a visual cue; image recognition logic configured to analyze the captured display data to identify the visual cue as depicted in the scene of the video game as depicted in the display data; image generation logic, configured in response to identification of the visual cue, to determine additional information that is in addition to the scene of the video game that is displayed on the main display, the additional information defining graphics or text to be added to the scene of the video game; a personal display configured to present the additional information, the presentation of the additional information being synchronized to the rendering of the display data on the main display, the synchronization being driven by the identification of the visual cue.

- The portable device of claim 17, wherein the image capture device is a video capture device configured to continuously capture changing frames defined by the display data as it is rendered on the main display, wherein the additional information presented on the personal display is updated accordingly based on identification of the visual cue or additional visual cues defined in the changing frames by the display data.

- The portable device of claim 17, wherein the image generation logic is configured to determine the additional information by receiving the additional information over a wireless connection or by generating the additional information at the portable device.

- The portable device of claim 17, further comprising: a head mount for enabling the portable device to be worn by a user.

- A method for interfacing with a video game, comprising: capturing display data, rendered on a main display, using an image capture device incorporated into a portable device while being directed toward the main display to enable the capturing, the display data defining a scene rendered by the video game, the display data being configured to include a visual cue; analyzing the captured display data to identify the visual cue as depicted in the scene of the video game as depicted in the display data; in response to identification of the visual cue, determining additional information that is in addition to the scene of the video game that is displayed on the main display, the additional information including graphics or text related to the scene of the video game; presenting the additional information on a display of the portable device, the presentation of the additional information being responsive to the identification of the visual cue.

- The method of claim 21, wherein the image capture device is a video capture device configured to continuously capture changing frames defined by the display data as it is rendered on the main display, wherein the additional information presented on the personal display is updated accordingly based on identification of the visual cue or additional visual cues defined in the changing frames by the display data.

- The method of claim 21, wherein the visual cue is defined by an object of the video game or a marker.

- The method of claim 21, wherein the additional information includes at least one additional object of the video game.

- The method of claim 21, wherein determining the additional information includes receiving the additional information over a wireless connection by the portable device.

- The method of claim 21, wherein determining the additional information includes generating the additional information by the portable device.

- The method of claim 21, wherein determining additional information includes accessing a user preference setting defined for a user of the portable device, the additional information being defined based on the user preference setting.
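The claims leave the recognition technique open. The Python sketch below is a minimal, non-authoritative illustration of the flow in claims 9, 10, 11 and 16 and their counterparts in claims 21, 22, 23 and 28. It assumes that frames arrive as small grayscale pixel grids and that the visual cue is a known marker pattern. Cue detection uses exhaustive template matching by sum of absolute differences (SAD). This technique is a stand-in; the patent does not name it. `Cue`, `find_cue`, `OVERLAYS`, and `PortableDevice` are hypothetical names. Each device checks every captured frame and changes its overlay only on the frame where the set of identified cues changes, so cue identification drives the update. Two devices with different preference settings receive different text for the same cue.

```python
from dataclasses import dataclass
from typing import Iterable, Iterator, List, Optional, Tuple

# Hypothetical frame representation: rows of grayscale pixel values.
Frame = List[List[int]]


@dataclass
class Cue:
    cue_id: str
    template: Frame  # marker pattern the game embeds in the rendered scene


def find_cue(frame: Frame, cue: Cue, max_sad: int = 0) -> Optional[Tuple[int, int]]:
    """Template matching by sum of absolute differences (SAD).

    Scans placements in row-major order and returns the (row, col) of the
    first one whose SAD against the template is <= max_sad, or None if the
    cue is not visible in the frame.
    """
    th, tw = len(cue.template), len(cue.template[0])
    fh, fw = len(frame), len(frame[0])
    for r in range(fh - th + 1):
        for c in range(fw - tw + 1):
            sad = sum(
                abs(frame[r + i][c + j] - cue.template[i][j])
                for i in range(th)
                for j in range(tw)
            )
            if sad <= max_sad:
                return (r, c)
    return None


# Hypothetical content table: the same cue maps to different additional
# information depending on the user's preference setting.
OVERLAYS = {
    ("enemy_base", "hints"): "Weak point: north gate",
    ("enemy_base", "stats"): "Base HP: 1200",
}


@dataclass
class PortableDevice:
    name: str
    preference: str

    def overlays_for(
        self, frames: Iterable[Frame], cues: List[Cue]
    ) -> Iterator[Tuple[int, List[str]]]:
        """Scan every captured frame and yield (frame_index, texts) whenever
        the additional information to present changes."""
        shown: Optional[List[str]] = None
        for idx, frame in enumerate(frames):
            visible = [cue for cue in cues if find_cue(frame, cue) is not None]
            texts = [
                OVERLAYS[(cue.cue_id, self.preference)]
                for cue in visible
                if (cue.cue_id, self.preference) in OVERLAYS
            ]
            if texts != shown:  # update the personal display only on change
                shown = texts
                yield idx, texts


if __name__ == "__main__":
    marker = Cue("enemy_base", [[9, 9], [9, 0]])
    blank = [[0] * 4 for _ in range(3)]
    with_cue = [[0, 0, 0, 0], [0, 9, 9, 0], [0, 9, 0, 0]]
    frames = [blank, with_cue, with_cue, blank]
    for device in (PortableDevice("player_a", "hints"), PortableDevice("player_b", "stats")):
        for idx, texts in device.overlays_for(frames, [marker]):
            print(device.name, idx, texts)
```

In this four-frame example the marker is visible only in frames 1 and 2. `player_a` (preference `"hints"`) shows the weak-point hint from frame 1 and clears it at frame 3. Over the same frames, `player_b` (preference `"stats"`) shows the base HP instead. A camera pointed at a screen never reproduces pixel values exactly, so a practical detector would need a tolerance (`max_sad > 0`) or a more robust matching method.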
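Claims 13, 14, 19, 24 and 25 allow the additional information either to be received over a wireless connection or to be generated on the portable device. One way to support both, sketched here in Python under stated assumptions, is to try the wireless source first and fall back to local generation when the link is down or has no entry for the cue. The fallback order, the `RemoteFetch` callable type, and the helpers `generate_locally` and `determine_additional_info` are hypothetical. The claims present the two sources only as alternatives.

```python
from typing import Callable, Optional

# Hypothetical wireless lookup: returns the information for a cue ID, or None
# if the server has nothing for it. May raise OSError if the link is down.
RemoteFetch = Callable[[str], Optional[str]]


def generate_locally(cue_id: str) -> str:
    """Placeholder for on-device generation of the graphics or text."""
    return f"[local] {cue_id.replace('_', ' ').title()}"


def determine_additional_info(cue_id: str, fetch: Optional[RemoteFetch] = None) -> str:
    """Prefer information received wirelessly; otherwise generate it locally."""
    if fetch is not None:
        try:
            received = fetch(cue_id)
        except OSError:  # includes ConnectionError and TimeoutError
            received = None
        if received is not None:
            return received
    return generate_locally(cue_id)


if __name__ == "__main__":
    server = {"enemy_base": "Base HP: 1200"}

    def online_fetch(cue_id: str) -> Optional[str]:
        return server.get(cue_id)

    def offline_fetch(cue_id: str) -> Optional[str]:
        raise ConnectionError("wireless link unavailable")

    print(determine_additional_info("enemy_base", online_fetch))
    print(determine_additional_info("treasure_chest", online_fetch))
    print(determine_additional_info("enemy_base", offline_fetch))
```

When run as a script, the first call returns the server's `"Base HP: 1200"`. The second call (no server entry) and the third (link down) both fall back to locally generated labels.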
