DETAILED DESCRIPTION

This disclosure relates generally to computer ecosystems including aspects of consumer electronics (CE) device networks such as but not limited to distributed computer game networks.

The disclosure teaches that elements of an entertainment asset, and in specific embodiments textures of computer games, are remastered and presented on the fly using the legacy game software without recompiling the software. Thus, for example, a computer game designed to be played on a relatively lower resolution display such as a cell phone display, portable game display such as a PlayStation® Portable (PSP), or an older low resolution game display can be played on a relatively higher resolution display such as a high definition (HD) or ultra high definition (UHD) display or higher resolution game display such as a PlayStation®-3 or PlayStation®-4 display without modifying the legacy game software but still providing high quality remastered images and audio. Remastering is desirable because low resolution textures presented on high resolution displays have blurry or otherwise disagreeable appearances.

A system herein may include server and client components, connected over a network such that data may be exchanged between the client and server components. The client components may include one or more computing devices including game consoles such as but not limited to Sony PlayStation™ and Microsoft Xbox™, portable televisions (e.g. smart TVs, Internet-enabled TVs), portable computers such as laptops and tablet computers, and other mobile devices including smart phones and additional examples discussed below. These client devices may operate with a variety of operating environments. For example, some of the client computers may employ, as examples, Orbis or Linux operating systems, operating systems from Microsoft, or a Unix operating system, or operating systems produced by Apple Computer or Google. These operating environments may be used to execute one or more browsing programs, such as a browser made by Microsoft or Google or Mozilla or other browser program that can access websites hosted by the Internet servers discussed below. Also, an operating environment according to present principles may be used to execute one or more computer game programs.

Servers and/or gateways may include one or more processors executing instructions that configure the servers to receive and transmit data over a network such as the Internet. Or, a client and server can be connected over a local intranet or a virtual private network. A server or controller may be instantiated by a game console such as a Sony PlayStation®, a personal computer, etc.

Information may be exchanged over a network between the clients and servers. To this end, servers and/or clients can include firewalls, load balancers, temporary storages, proxies, and other network infrastructure for reliability and security. One or more servers may form an apparatus that implements methods of providing a secure community such as an online social website to network members.

As used herein, instructions refer to computer-implemented steps for processing information in the system. Instructions can be implemented in software, firmware or hardware and include any type of programmed step undertaken by components of the system.

A processor may be any conventional general purpose single- or multi-chip processor that can execute logic by means of various lines such as address lines, data lines, and control lines and registers and shift registers.

Software modules described by way of the flow charts and user interfaces herein can include various sub-routines, procedures, etc. Without limiting the disclosure, logic stated to be executed by a particular module can be redistributed to other software modules and/or combined together in a single module and/or made available in a shareable library.

Present principles described herein can be implemented as hardware, software, firmware, or combinations thereof; hence, illustrative components, blocks, modules, circuits, and steps are set forth in terms of their functionality.

Further to what has been alluded to above, logical blocks, modules, and circuits described below can be implemented or performed with a general purpose processor, a digital signal processor (DSP), a field programmable gate array (FPGA) or other programmable logic device such as an application specific integrated circuit (ASIC), discrete gate or transistor logic, discrete hardware components, or any combination thereof designed to perform the functions described herein. A processor can be implemented by a controller or state machine or a combination of computing devices. Thus, the methods herein may be implemented as software instructions executed by a processor, suitably configured application specific integrated circuits (ASIC) or field programmable gate array (FPGA) modules, or any other convenient manner as would be appreciated by those skilled in the art. Where employed, the software instructions may be embodied in a non-transitory device such as a CD ROM or Flash drive. The software code instructions may alternatively be embodied in a transitory arrangement such as a radio or optical signal, or via a download over the internet.

The functions and methods described below, when implemented in software, can be written in an appropriate language such as but not limited to Java, C# or C++, and can be stored on or transmitted through a computer-readable storage medium such as a random access memory (RAM), read-only memory (ROM), electrically erasable programmable read-only memory (EEPROM), compact disk read-only memory (CD-ROM) or other optical disk storage such as digital versatile disc (DVD), magnetic disk storage or other magnetic storage devices including removable thumb drives, etc. A connection may establish a computer-readable medium. Such connections can include, as examples, hard-wired cables including fiber optics and coaxial wires and digital subscriber line (DSL) and twisted pair wires. Such connections may include wireless communication connections including infrared and radio.

Components included in one embodiment can be used in other embodiments in any appropriate combination. For example, any of the various components described herein and/or depicted in the Figures may be combined, interchanged or excluded from other embodiments.

“A system having at least one of A, B, and C” (likewise “a system having at least one of A, B, or C” and “a system having at least one of A, B, C”) includes systems that have A alone, B alone, C alone, A and B together, A and C together, B and C together, and/or A, B, and C together, etc.

Now specifically referring to FIG. 1, an example system 10 is shown, which may include one or more of the example devices mentioned above and described further below in accordance with present principles. The first of the example devices included in the system 10 is a consumer electronics (CE) device such as an audio video device (AVD) 12 such as but not limited to a computer game console system with display or an Internet-enabled TV with a TV tuner (equivalently, set top box controlling a TV). However, the AVD 12 alternatively may be an appliance or household item, e.g. computerized Internet enabled refrigerator, washer, or dryer. The AVD 12 alternatively may also be a computerized Internet enabled (“smart”) telephone, a tablet computer, a notebook computer, a wearable computerized device such as e.g. computerized Internet-enabled watch, a computerized Internet-enabled bracelet, other computerized Internet-enabled devices, a computerized Internet-enabled music player, computerized Internet-enabled head phones, a computerized Internet-enabled implantable device such as an implantable skin device, etc. Regardless, it is to be understood that the AVD 12 is configured to undertake present principles (e.g. communicate with other CE devices to undertake present principles, execute the logic described herein, and perform any other functions and/or operations described herein).

Accordingly, to undertake such principles the AVD 12 can be established by some or all of the components shown in FIG. 1. For example, the AVD 12 can include one or more displays 14 that may be implemented by a high definition or ultra-high definition “4K” or higher flat screen and that may be touch-enabled for receiving user input signals via touches on the display. The AVD 12 may include one or more speakers 16 for outputting audio in accordance with present principles, and at least one additional input device 18 such as e.g. an audio receiver/microphone for e.g. entering audible commands to the AVD 12 to control the AVD 12. The example AVD 12 may also include one or more network interfaces 20 for communication over at least one network 22 such as the Internet, a WAN, a LAN, etc. under control of one or more processors 24. Thus, the interface 20 may be, without limitation, a Wi-Fi transceiver, which is an example of a wireless computer network interface, such as but not limited to a mesh network transceiver. It is to be understood that the processor 24 controls the AVD 12 to undertake present principles, including the other elements of the AVD 12 described herein such as e.g. controlling the display 14 to present images thereon and receiving input therefrom. Furthermore, note the network interface 20 may be, e.g., a wired or wireless modem or router, or other appropriate interface such as, e.g., a wireless telephony transceiver, or Wi-Fi transceiver as mentioned above, etc.

In addition to the foregoing, the AVD 12 may also include one or more input ports 26 such as, e.g., a high definition multimedia interface (HDMI) port or a USB port to physically connect (e.g. using a wired connection) to another CE device and/or a headphone port to connect headphones to the AVD 12 for presentation of audio from the AVD 12 to a user through the headphones. For example, the input port 26 may be connected via wire or wirelessly to a cable or satellite source 26a of audio video content. Thus, the source 26a may be, e.g., a separate or integrated set top box, or a satellite receiver. Or, the source 26a may be a game console or disk player containing content such as computer game software and databases. The source 26a when implemented as a game console may include some or all of the components described below in relation to the CE device 44.

The AVD 12 may further include one or more computer memories 28 such as disk-based or solid state storage that are not transitory signals, in some cases embodied in the chassis of the AVD as standalone devices or as a personal video recording device (PVR) or video disk player either internal or external to the chassis of the AVD for playing back AV programs or as removable memory media. Also in some embodiments, the AVD 12 can include a position or location receiver such as but not limited to a cellphone receiver, GPS receiver and/or altimeter 30 that is configured to e.g. receive geographic position information from at least one satellite or cellphone tower and provide the information to the processor 24 and/or determine an altitude at which the AVD 12 is disposed in conjunction with the processor 24. However, it is to be understood that another suitable position receiver other than a cellphone receiver, GPS receiver and/or altimeter may be used in accordance with present principles to e.g. determine the location of the AVD 12 in e.g. all three dimensions.

Continuing the description of the AVD 12, in some embodiments the AVD 12 may include one or more cameras 32 that may be, e.g., a thermal imaging camera, a digital camera such as a webcam, and/or a camera integrated into the AVD 12 and controllable by the processor 24 to gather pictures/images and/or video in accordance with present principles. Also included on the AVD 12 may be a Bluetooth transceiver 34 and other Near Field Communication (NFC) element 36 for communication with other devices using Bluetooth and/or NFC technology, respectively. An example NFC element can be a radio frequency identification (RFID) element.

Further still, the AVD 12 may include one or more auxiliary sensors 37 (e.g., a motion sensor such as an accelerometer, gyroscope, cyclometer, or a magnetic sensor, an infrared (IR) sensor, an optical sensor, a speed and/or cadence sensor, a gesture sensor (e.g. for sensing gesture command), etc.) providing input to the processor 24. The AVD 12 may include an over-the-air TV broadcast port 38 for receiving OTA TV broadcasts providing input to the processor 24. In addition to the foregoing, it is noted that the AVD 12 may also include an infrared (IR) transmitter and/or IR receiver and/or IR transceiver 42 such as an IR data association (IRDA) device. A battery (not shown) may be provided for powering the AVD 12.

Still referring to FIG. 1, in addition to the AVD 12, the system 10 may include one or more other CE device types. In one example, a first CE device 44 may be used to control the display via commands sent through the below-described server while a second CE device 46 may include similar components as the first CE device 44 and hence will not be discussed in detail. In the example shown, only two CE devices 44, 46 are shown, it being understood that fewer or greater devices may be used. As alluded to above, the CE device 44/46 and/or the source 26a may be implemented by a game console. Or, one or more of the CE devices 44/46 may be implemented by devices sold under the trademarks Google Chromecast, Roku, Amazon FireTV.

In the example shown, to illustrate present principles all three devices 12, 44, 46 are assumed to be members of an entertainment network in, e.g., a home, or at least to be present in proximity to each other in a location such as a house. However, present principles are not limited to a particular location, illustrated by dashed lines 48, unless explicitly claimed otherwise.

The example non-limiting first CE device 44 may be established by any one of the above-mentioned devices, for example, a portable wireless laptop computer or notebook computer or game controller (also referred to as “console”), and accordingly may have one or more of the components described below. The second CE device 46 without limitation may be established by a video disk player such as a Blu-ray player, a game console, and the like. The first CE device 44 may be a remote control (RC) for, e.g., issuing AV play and pause commands to the AVD 12, or it may be a more sophisticated device such as a tablet computer, a game controller communicating via wired or wireless link with a game console implemented by the second CE device 46 and controlling video game presentation on the AVD 12, a personal computer, a wireless telephone, etc.

Accordingly, the first CE device 44 may include one or more displays 50 that may be touch-enabled for receiving user input signals via touches on the display. The first CE device 44 may include one or more speakers 52 for outputting audio in accordance with present principles, and at least one additional input device 54 such as e.g. an audio receiver/microphone for e.g. entering audible commands to the first CE device 44 to control the device 44. The example first CE device 44 may also include one or more network interfaces 56 for communication over the network 22 under control of one or more CE device processors 58. Thus, the interface 56 may be, without limitation, a Wi-Fi transceiver, which is an example of a wireless computer network interface, including mesh network interfaces. It is to be understood that the processor 58 controls the first CE device 44 to undertake present principles, including the other elements of the first CE device 44 described herein such as e.g. controlling the display 50 to present images thereon and receiving input therefrom. Furthermore, note the network interface 56 may be, e.g., a wired or wireless modem or router, or other appropriate interface such as, e.g., a wireless telephony transceiver, or Wi-Fi transceiver as mentioned above, etc.

In addition to the foregoing, the first CE device 44 may also include one or more input ports 60 such as, e.g., an HDMI port or a USB port to physically connect (e.g. using a wired connection) to another CE device and/or a headphone port to connect headphones to the first CE device 44 for presentation of audio from the first CE device 44 to a user through the headphones. The first CE device 44 may further include one or more tangible computer readable storage media 62 such as disk-based or solid state storage. Also in some embodiments, the first CE device 44 can include a position or location receiver such as but not limited to a cellphone and/or GPS receiver and/or altimeter 64 that is configured to e.g. receive geographic position information from at least one satellite and/or cell tower, using triangulation, and provide the information to the CE device processor 58 and/or determine an altitude at which the first CE device 44 is disposed in conjunction with the CE device processor 58. However, it is to be understood that another suitable position receiver other than a cellphone and/or GPS receiver and/or altimeter may be used in accordance with present principles to e.g. determine the location of the first CE device 44 in e.g. all three dimensions.

Continuing the description of the first CE device 44, in some embodiments the first CE device 44 may include one or more cameras 66 that may be, e.g., a thermal imaging camera, a digital camera such as a webcam, and/or a camera integrated into the first CE device 44 and controllable by the CE device processor 58 to gather pictures/images and/or video in accordance with present principles. Also included on the first CE device 44 may be a Bluetooth transceiver 68 and other Near Field Communication (NFC) element 70 for communication with other devices using Bluetooth and/or NFC technology, respectively. An example NFC element can be a radio frequency identification (RFID) element.

Further still, the first CE device 44 may include one or more auxiliary sensors 72 (e.g., a motion sensor such as an accelerometer, gyroscope, cyclometer, or a magnetic sensor, an infrared (IR) sensor, an optical sensor, a speed and/or cadence sensor, a gesture sensor (e.g. for sensing gesture command), etc.) providing input to the CE device processor 58. The first CE device 44 may include still other sensors such as e.g. one or more climate sensors 74 (e.g. barometers, humidity sensors, wind sensors, light sensors, temperature sensors, etc.) and/or one or more biometric sensors 76 providing input to the CE device processor 58. In addition to the foregoing, it is noted that in some embodiments the first CE device 44 may also include an infrared (IR) transmitter and/or IR receiver and/or IR transceiver 78 such as an IR data association (IRDA) device. A battery (not shown) may be provided for powering the first CE device 44. The CE device 44 may communicate with the AVD 12 through any of the above-described communication modes and related components.

The second CE device 46 may include some or all of the components shown for the CE device 44. Either one or both CE devices may be powered by one or more batteries.

Now in reference to the afore-mentioned at least one server 80, it includes at least one server processor 82, at least one tangible computer readable storage medium 84 such as disk-based or solid state storage, and at least one network interface 86 that, under control of the server processor 82, allows for communication with the other devices of FIG. 1 over the network 22, and indeed may facilitate communication between servers and client devices in accordance with present principles. Note that the network interface 86 may be, e.g., a wired or wireless modem or router, Wi-Fi transceiver, or other appropriate interface such as, e.g., a wireless telephony transceiver. Typically, the server 80 includes multiple processors in multiple computers referred to as “blades”.

Accordingly, in some embodiments the server 80 may be an Internet server or an entire server “farm”, and may include and perform “cloud” functions such that the devices of the system 10 may access a “cloud” environment via the server 80 in example embodiments for, e.g., network gaming applications. Or, the server 80 may be implemented by one or more game consoles or other computers in the same room as the other devices shown in FIG. 1 or nearby.

FIG. 2 illustrates an example system which may include any of the appropriate components described above. A processor 200 such as a computer game console processor executes a legacy game controller 202 typically implemented in software to present a computer game on one or more audio and/or video displays 204. As indicated previously, the game software, which is but an example of an entertainment asset that may leverage present principles, is assumed for disclosure purposes to have been designed for playing a computer game on a relatively low resolution display. The A/V display 204, on the other hand, is assumed to have a higher resolution than the display for which the legacy software was targeted.

As disclosed further below, the processor 200 may execute an emulator engine 206, typically embodied in software, to insert remastered textures from a data store 208 into the game on the fly as it is being presented on the A/V display 204. As also discussed in greater detail below, the remastered textures in the data store 208 are produced by one or more remastering computers 210.

The emulator engine 206 may be regarded as a software interpreter that effects emulation without recompiling code to replace substantially all of the low resolution textures called for by the game 202 with higher resolution remastered versions of those textures. By “substantially all” of the textures is meant all of the low resolution legacy textures except for possibly a small percentage, under 10%, that are not remastered either by design or inadvertence.
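As a non-limiting illustration of the “substantially all” criterion, the following Python sketch checks whether fewer than 10% of the legacy textures lack a remastered counterpart. The function name, the `max_missing_percent` parameter, and the use of string tags to identify textures are assumptions made for the example only and are not part of the disclosure.

```python
from typing import Iterable


def is_substantially_all(legacy_tags: Iterable[str],
                         remastered_tags: Iterable[str],
                         max_missing_percent: int = 10) -> bool:
    """Return True when under max_missing_percent of legacy textures are not remastered.

    Hypothetical helper: textures are identified by opaque string tags.
    """
    legacy = set(legacy_tags)
    if not legacy:
        # Nothing to remaster, so nothing is missing.
        return True
    missing = legacy - set(remastered_tags)
    # Integer comparison avoids floating-point rounding at exactly 10%.
    return len(missing) * 100 < max_missing_percent * len(legacy)
```

Under these assumptions, a game with twenty legacy textures of which nineteen are remastered (5% missing) satisfies the criterion, whereas eighteen of twenty (exactly 10% missing) does not, because the stated bound is “under 10%”.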

FIGS. 3 and 4 illustrate logic for creating remastered textures, and FIGS. 5-7 present a visual example of a portion of the remastering process. Commencing at block 300, the legacy game 202 is executed on a remastering computer 210. As the game runs, it issues calls for assets such as computer game textures, which calls are intercepted at block 302. At block 304, an algorithm is executed on information pertaining to the called-for textures to render respective results. In example implementations, the algorithm includes a hash such as but not limited to a secure hash algorithm, it being understood that other hash functions are contemplated. The hash is executed on all or part of the texture. For example, the hash may be executed on a texture identification or other portion of the texture. The result of the hash is a hash value or hash tag.

Proceeding to diamond 306, it is determined whether the hash tag resulting from the hash in block 304 matches a hash tag in a data structure accessible to the processor. Of course, the test at decision diamond 306 will be negative the first time the game is played because the data structure initializes in an unpopulated state. A negative test causes the hash tag and associated texture to be added to the data structure (such as a database) at block 308.

Because computer games often entail multiple branches, it is usually necessary to run the game multiple times to follow each branch, to ensure that all (or substantially all) of the legacy textures with associated hash tags are added to the data structure. Accordingly, it will be the case that from time to time a call for a previously stored texture will be intercepted and thus cause the test at diamond 306 to be positive, which simply loops the logic back to the next texture call.
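The capture logic of FIG. 3 may be sketched as follows, by way of example only. The sketch assumes that the hash is SHA-256 executed over all of the texture bytes and that the data structure is an in-memory dictionary keyed by hash tag; the function names `hash_tag`, `capture_texture`, and `capture_run` are hypothetical, and the mechanism by which texture calls are intercepted is outside the scope of the sketch.

```python
import hashlib
from typing import Dict, Iterable


def hash_tag(texture: bytes) -> str:
    """Block 304: hash the texture (assumed here: SHA-256 over all bytes)."""
    return hashlib.sha256(texture).hexdigest()


def capture_texture(store: Dict[str, bytes], texture: bytes) -> bool:
    """Diamond 306 and block 308: add the texture if its hash tag is new.

    Returns True when the texture was newly added to the data structure.
    """
    tag = hash_tag(texture)
    if tag in store:
        # Positive test at diamond 306: already captured, move to the next call.
        return False
    # Negative test: store the hash tag with its legacy texture (block 308).
    store[tag] = texture
    return True


def capture_run(store: Dict[str, bytes], texture_calls: Iterable[bytes]) -> int:
    """One play-through: process each intercepted call, count new textures."""
    return sum(capture_texture(store, texture) for texture in texture_calls)
```

Calling `capture_run` once per play-through with the same `store` mirrors running the game multiple times to follow each branch: textures already captured on an earlier run are skipped, and only textures first encountered on the new branch are added.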

FIG. 4 shows that as legacy low-resolution textures are added to the data structure in FIG. 3, an artist operating the same or a different remastering computer may employ the process shown in FIG. 4 to remaster the low resolution textures into higher resolution textures. The artist accesses the data structure to retrieve a legacy texture at block 400. The legacy texture is presented on a higher definition display at block 402, preferably on the display of the game console targeted for presenting the remastered textures. The artist remasters the texture at block 404 and saves the remastered texture and associated hash tag at block 406 back to the storage location from whence the original texture came, preferably replacing the original texture with the remastered texture. The hash tag may include a date/time stamp that indicates when the associated texture was remastered.
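The save step at block 406 may be sketched as follows, without limitation. The `TextureRecord` type and the `save_remastered` and `pending` helpers are hypothetical names introduced for illustration. The sketch assumes the date/time stamp is kept alongside the hash tag rather than embedded in the lookup key, so that the tag computed from the legacy texture continues to serve as the key under which the remastered texture is stored.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Dict, List, Optional


@dataclass
class TextureRecord:
    pixels: bytes                             # legacy or remastered texture data
    remastered_at: Optional[datetime] = None  # None until the artist remasters it


def save_remastered(store: Dict[str, TextureRecord], tag: str,
                    remastered_pixels: bytes,
                    now: Optional[datetime] = None) -> TextureRecord:
    """Block 406: replace the legacy texture under the same hash tag."""
    if tag not in store:
        raise KeyError(f"no legacy texture captured for hash tag {tag!r}")
    stamp = now if now is not None else datetime.now(timezone.utc)
    store[tag] = TextureRecord(remastered_pixels, stamp)
    return store[tag]


def pending(store: Dict[str, TextureRecord]) -> List[str]:
    """Hash tags whose textures have not yet been remastered."""
    return [tag for tag, rec in store.items() if rec.remastered_at is None]
```

In this sketch, `pending` gives the artist a worklist of legacy textures still awaiting remastering, and the stored timestamp records when each replacement was made.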

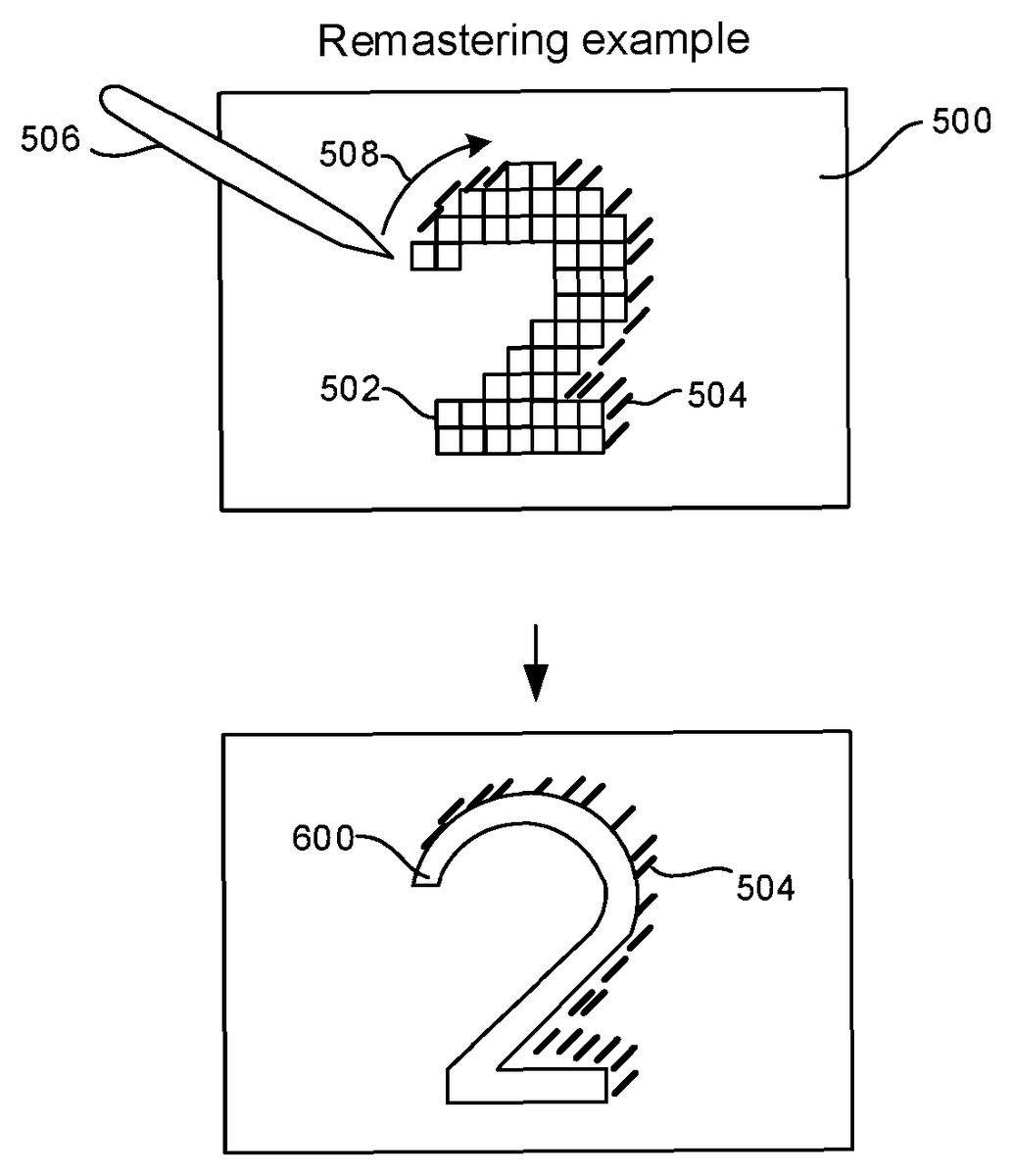

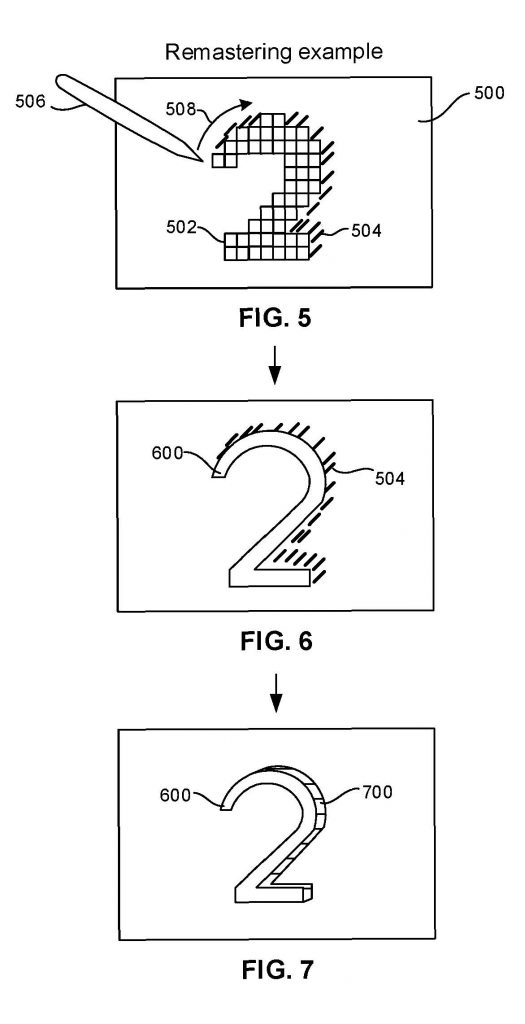

FIGS. 5-7 illustrate. A high resolution display 500 which may be a touch screen display presents an original low-resolution texture 502, in this case, a 3D numeral “2”. Because the original low-resolution texture 502 is being presented on a higher resolution display than originally intended, its appearance, as shown, is blocky or blurry, and the shading 504 intended to give the numeral “2” a 3D appearance is likewise blocky/blurry.

To remaster the texture, an artist may employ her finger or a stylus 506 to trace a numeral “2” over the original texture, as indicated by the arrow 508. This input causes the graphic editing software to present a high resolution texture 600 as shown in FIG. 6, which shows a smooth, non-blocky, non-blurring remastered portion of the original texture.

The blocky/blurry shading 504, however, remains to be remastered. This is also effected by the artist drawing a shading border 700 as shown in FIG. 7 that accurately captures, for the human eye, a 3D presentation of the numeral “2”. The example remastered texture shown in FIG. 7 is then saved back to the data structure with its hash tag with updated timestamp as indicated at block 406 in FIG. 4. The process is repeated to remaster the original textures in the data structure until all (or substantially all) original textures have been replaced by remastered versions.

FIG. 8 illustrates game play using the legacy game software 202 to present a remastered computer game on the high resolution display 204 without recompiling the game software. At block 800, as each call from the software for a texture is made, it is intercepted and hashed at block 802 using the same function that was used to generate the remastered textures. The resulting hash tag is used as an entering argument to the data structure 208, which is a copy of (or otherwise derived from) the data structure to which remastered textures are added in block 308 of FIG. 3. If the hash tag has a match in the data structure, as it is expected to, the remastered texture associated with the matching hash tag is retrieved at block 806 and presented in the computer game display on the fly at block 808. The logic then loops back to block 800 to intercept the next texture call from the legacy game software.

Recognizing the possibility that not all original textures may have been remastered, in the event that the hash tag at diamond 804 has no match in the data structure, the legacy texture may be presented at block 810. Or, no texture at all may be presented. In either case, since a texture is presented for only a short moment of time until an ensuing texture is called, the temporary presentation of a low resolution texture or no texture at all may not be unduly distracting.
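The game-play logic of FIG. 8, including both fallback options, may be sketched as follows by way of example. The sketch assumes SHA-256 over the whole legacy texture as the hash function and a mapping from hash tag to remastered texture bytes as the data structure 208; the function name `resolve_texture` and the `fall_back_to_legacy` flag are hypothetical.

```python
import hashlib
from typing import Mapping, Optional


def resolve_texture(remastered: Mapping[str, bytes],
                    legacy_texture: bytes,
                    fall_back_to_legacy: bool = True) -> Optional[bytes]:
    """Choose what to present for one intercepted texture call (block 800)."""
    # Block 802: hash with the same function used when the store was built.
    tag = hashlib.sha256(legacy_texture).hexdigest()
    # Diamond 804: look for a matching hash tag in the data structure.
    hit = remastered.get(tag)
    if hit is not None:
        # Blocks 806 and 808: retrieve and present the remastered texture.
        return hit
    # Block 810: present the legacy texture, or alternatively no texture.
    return legacy_texture if fall_back_to_legacy else None
```

A return value of `None` corresponds to presenting no texture at all. Because the lookup depends only on the bytes the legacy game already requests, the game software itself is neither modified nor recompiled in this sketch.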

The above principles may be used in conjunction with remastering lower resolution original audio that accompanied the original entertainment asset. FIG. 9 illustrates. A call for an audio asset such as but not limited to an audio track or audio sample is intercepted at block 900. If it is determined at diamond 902 that a higher resolution remastered version of the audio is present in a data structure such as that containing the remastered textures or another, separate audio database, the remastered audio is presented at block 904. Otherwise, the original audio is presented at block 906, or no audio at all may be presented. Audio fingerprints, for instance, may be used to match the audio called for at block 900 with matching remastered audio. As an example, audio samples originally provided in a computer game at 11 kHz can be remastered to 44.1 kHz.

Principles above pertaining to textures may also be applied to 3D geometries called for by an entertainment asset.

It will be appreciated that whilst present principles have been described with reference to some example embodiments, these are not intended to be limiting, and that various alternative arrangements may be used to implement the subject matter claimed herein.