U.S. Pat. No. 10,058,779

GAME APPARATUS, STORAGE MEDIUM HAVING GAME PROGRAM STORED THEREON, GAME SYSTEM, AND GAME PROCESSING METHOD

Assignee: NINTENDO CO., LTD.

Issue Date: May 5, 2017

Abstract

When a first swing input is determined to have been made in a first movement start-possible state, in which a first object is allowed to start moving, and when the first object is put into the first movement start-possible state within a predetermined time period after the first swing input is determined to have been made, the first object is started to move in a virtual space based on at least the first swing input; and when a second swing input is determined to have been made in a second movement start-possible state, in which a second object is allowed to start moving, and when the second object is put into the second movement start-possible state within a predetermined time period after the second swing input is determined to have been made, the second object is started to move based on at least the second swing input.
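The timing rule in the abstract can be read as a form of input buffering: a swing made shortly before an object becomes movable is still honored. The following is a minimal sketch under assumptions that do not come from the patent text. The class name `SwingBuffer`, the frame-based clock, the 10-frame window, and the inclusive comparison are all hypothetical choices made for illustration.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class SwingBuffer:
    """Hypothetical per-object buffer for one swing input (not from the patent)."""

    window_frames: int = 10              # assumed "predetermined time period"
    can_start: bool = False              # True while in the movement start-possible state
    pending_since: Optional[int] = None  # frame at which an early swing was detected
    moving: bool = False

    def on_swing(self, frame: int) -> None:
        """Handle a detected swing: start at once if allowed, else remember it."""
        if self.moving:
            return
        if self.can_start:
            self._start()
        else:
            self.pending_since = frame

    def set_start_possible(self, frame: int) -> None:
        """Enter the start-possible state and consume a sufficiently recent swing."""
        self.can_start = True
        if self.pending_since is not None:
            if frame - self.pending_since <= self.window_frames:
                self._start()
            # A swing older than the window is discarded.
            self.pending_since = None

    def _start(self) -> None:
        """Begin moving the object and leave the start-possible state."""
        self.moving = True
        self.can_start = False
        self.pending_since = None
```

One buffer per object (for example, one for the first object and one for the second) keeps the two swing inputs independent, which mirrors the parallel wording of the abstract.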

Description

DETAILED DESCRIPTION OF NON-LIMITING EXAMPLE EMBODIMENTS

A description is given below of a game apparatus, a game program, a game system, and a game processing method according to an exemplary embodiment. An information processing system 1, as an example of a game system according to the exemplary embodiment, includes a main body apparatus 2 (an information processing apparatus, which acts as a game apparatus main body in the exemplary embodiment), a left controller 3, and a right controller 4. In another form, the information processing system 1 may further include a cradle 5 (see FIG. 6, FIG. 7, and the like) in addition to the above elements. In the information processing system 1 according to the exemplary embodiment, the left controller 3 and the right controller 4 are attachable to, and detachable from, the main body apparatus 2. The information processing system 1 is usable as an integrated apparatus in a state where the left controller 3 and the right controller 4 are attached to the main body apparatus 2. Alternatively, the main body apparatus 2, the left controller 3, and the right controller 4 are usable as separate bodies (see FIG. 2). The information processing system 1 is usable in a form in which an image is displayed on the main body apparatus 2, and in a form in which an image is displayed on another display apparatus such as a TV. In the former form, the information processing system 1 is usable as a mobile apparatus (e.g., a mobile game apparatus). In the latter form, the information processing system 1 is usable as a stationary apparatus (e.g., a stationary game apparatus).

FIG. 1 shows a state where the left controller 3 and the right controller 4 are attached to the main body apparatus 2 in an example of the information processing system 1 according to the exemplary embodiment. As shown in FIG. 1, the information processing system 1 includes the main body apparatus 2, the left controller 3, and the right controller 4. The left controller 3 and the right controller 4 are attached to, and integrated with, the main body apparatus 2. The main body apparatus 2 is an apparatus that executes various processes (e.g., a game process) in the information processing system 1. The main body apparatus 2 includes a display 12. The left controller 3 and the right controller 4 are each a device including an operation section allowing a user to make an input thereto.

FIG. 2 shows an example of a state where the left controller 3 and the right controller 4 are detached from the main body apparatus 2. As shown in FIG. 1 and FIG. 2, the left controller 3 and the right controller 4 are attachable to, and detachable from, the main body apparatus 2. The left controller 3 is attachable to a left side surface (side surface on a positive side in an x-axis direction shown in FIG. 1) of the main body apparatus 2, and is attachable to, and detachable from, the main body apparatus 2 by being slid along the left side surface of the main body apparatus 2 in a y-axis direction shown in FIG. 1. The right controller 4 is attachable to a right side surface (side surface on a negative side in the x-axis direction shown in FIG. 1) of the main body apparatus 2, and is attachable to, and detachable from, the main body apparatus 2 by being slid along the right side surface of the main body apparatus 2 in the y-axis direction shown in FIG. 1. Hereinafter, the left controller 3 and the right controller 4 will occasionally be referred to collectively as “controllers”. In the exemplary embodiment, an “operation device” operable by a single user may be a single controller (e.g., one of the left controller 3 and the right controller 4) or a plurality of controllers (e.g., both the left controller 3 and the right controller 4, or at least either the left controller 3 or the right controller 4 and another controller). The “operation device” includes at least one controller. Hereinafter, an example of a specific configuration of the main body apparatus 2, the left controller 3, and the right controller 4 will be described.

FIG. 3 provides six orthogonal views showing an example of the main body apparatus 2. As shown in FIG. 3, the main body apparatus 2 includes a generally plate-shaped housing 11. In the exemplary embodiment, a main surface of the housing 11 (in other words, a front surface, i.e., a surface on which the display 12 is provided) has a roughly rectangular shape. In the exemplary embodiment, the housing 11 is longer in a left-right direction as described below. In the exemplary embodiment, a longer direction of the main surface of the housing 11 (i.e., x-axis direction shown in FIG. 1) will be referred to as a “width direction” (also referred to as the “left-right direction”), and a shorter direction of the main surface (i.e., y-axis direction shown in FIG. 1) will be referred to as a “length direction” (also referred to as an “up-down direction”). A direction perpendicular to the main surface (i.e., z-axis direction shown in FIG. 1) will be referred to as a “depth direction” (also referred to as a “front-rear direction”). The main body apparatus 2 is usable in an orientation in which the width direction extends in the horizontal direction. The main body apparatus 2 is also usable in an orientation in which the length direction extends in the horizontal direction. In this case, the housing 11 may be considered as being longer in the vertical direction.

The housing 11 may have any shape and size. For example, the housing 11 may have a mobile size. A single body of the main body apparatus 2, or an integrated apparatus including the main body apparatus 2 and the left and right controllers 3 and 4 attached thereto, may act as a mobile apparatus. Alternatively, the main body apparatus 2 or the integrated apparatus may act as a handheld apparatus. Still alternatively, the main body apparatus 2 or the integrated apparatus may act as a portable apparatus.

As shown in FIG. 3, the main body apparatus 2 includes the display 12 provided on the main surface of the housing 11. The display 12 displays an image (a still image or a moving image) acquired or generated by the main body apparatus 2. In the exemplary embodiment, the display 12 is a liquid crystal display device (LCD). Alternatively, the display 12 may be a display apparatus of any type.

The main body apparatus 2 includes a touch panel 13 provided on a screen of the display 12. In the exemplary embodiment, the touch panel 13 is of a type that allows a multi-touch input to be made (e.g., of an electrostatic capacitance type). Alternatively, the touch panel 13 may be of any type. For example, the touch panel 13 may be of a type that allows a single-touch input to be made (e.g., of a resistive type).

The main body apparatus 2 includes speakers (speakers 88 shown in FIG. 8) accommodated in the housing 11. As shown in FIG. 3, the main surface of the housing 11 has speaker holes 11a and 11b formed therein. The speakers 88 output a sound through the speaker holes 11a and 11b.

As shown in FIG. 3, the main body apparatus 2 includes a left rail member 15 provided on the left side surface of the housing 11. The left rail member 15 is provided to allow the left controller 3 to be detachably attached to the main body apparatus 2. The left rail member 15 extends in the up-down direction on the left side surface of the housing 11. The left rail member 15 is so shaped as to be engageable with a slider in the left controller 3 (slider 40 shown in FIG. 4), and a slide mechanism includes the left rail member 15 and the slider 40. The slide mechanism allows the left controller 3 to be slidably and detachably attached to the main body apparatus 2.

The main body apparatus 2 includes a left terminal 17. The left terminal 17 allows the main body apparatus 2 to communicate with the left controller 3 in a wired manner. The left terminal 17 is provided at a position where, in a case where the left controller 3 is attached to the main body apparatus 2, the left terminal 17 comes into contact with a terminal in the left controller 3 (terminal 42 shown in FIG. 4). The specific position of the left terminal 17 is optional. In the exemplary embodiment, as shown in FIG. 3, the left terminal 17 is provided on a bottom surface of a groove in the left rail member 15. In the exemplary embodiment, the left terminal 17 is provided near a lower end of the bottom surface of the groove of the left rail member 15.

As shown in FIG. 3, components similar to the components provided on the left side surface of the housing 11 are provided on the right side surface of the housing 11. Specifically, the main body apparatus 2 includes a right rail member 19 provided on the right side surface of the housing 11. The right rail member 19 extends in the up-down direction on the right side surface of the housing 11. The right rail member 19 is so shaped as to be engageable with a slider in the right controller 4 (slider 62 shown in FIG. 5), and a slide mechanism includes the right rail member 19 and the slider 62. The slide mechanism allows the right controller 4 to be slidably and detachably attached to the main body apparatus 2.

The main body apparatus 2 includes a right terminal 21. The right terminal 21 is provided to allow the main body apparatus 2 to communicate with the right controller 4 in a wired manner. The right terminal 21 is provided at a position where, in a case where the right controller 4 is attached to the main body apparatus 2, the right terminal 21 comes into contact with a terminal in the right controller 4 (terminal 64 shown in FIG. 5). The specific position of the right terminal 21 is optional. In the exemplary embodiment, as shown in FIG. 3, the right terminal 21 is provided on a bottom surface of a groove in the right rail member 19. In the exemplary embodiment, the right terminal 21 is provided near a lower end of the bottom surface of the groove of the right rail member 19.

As shown in FIG. 3, the main body apparatus 2 includes a first slot 23. The first slot 23 is provided in an upper side surface of the housing 11. The first slot 23 is so shaped as to allow a first type storage medium to be attached to the first slot 23. The first type storage medium is, for example, a dedicated storage medium (e.g., dedicated memory card) for the information processing system 1 and an information processing apparatus of the same type as that of the information processing system 1. The first type storage medium is used to, for example, store data usable by the main body apparatus 2 (e.g., saved data of an application or the like) and/or a program executable by the main body apparatus 2 (e.g., program for an application or the like).

The main body apparatus 2 includes a power button 28. As shown in FIG. 3, the power button 28 is provided on the upper side surface of the housing 11. The power button 28 is provided to switch the power supply of the main body apparatus 2 between an on-state and an off-state.

The main body apparatus 2 includes a sound input/output terminal 25 (specifically, an earphone jack). That is, the main body apparatus 2 allows a microphone or an earphone to be attached to the sound input/output terminal 25. As shown in FIG. 3, the sound input/output terminal 25 is provided on the upper side surface of the housing 11.

The main body apparatus 2 includes sound volume buttons 26a and 26b. As shown in FIG. 3, the sound volume buttons 26a and 26b are provided on the upper side surface of the housing 11. The sound volume buttons 26a and 26b are provided to give an instruction to adjust the volume of a sound output from the main body apparatus 2. The sound volume button 26a is provided to give an instruction to turn down the sound volume, and the sound volume button 26b is provided to give an instruction to turn up the sound volume.

The housing 11 includes an exhaust hole 11c formed therein. As shown in FIG. 3, the exhaust hole 11c is formed in the upper side surface of the housing 11. The exhaust hole 11c is formed to exhaust (in other words, release) heat generated inside the housing 11 to outside the housing 11. That is, the exhaust hole 11c may be called a heat discharge hole.

The main body apparatus 2 includes a lower terminal 27. The lower terminal 27 is provided to allow the main body apparatus 2 to communicate with the cradle 5 described below. As shown in FIG. 3, the lower terminal 27 is provided on a lower side surface of the housing 11. In a case where the main body apparatus 2 is attached to the cradle 5, the lower terminal 27 is connected to a terminal of the cradle 5 (main body terminal 73 shown in FIG. 7). In the exemplary embodiment, the lower terminal 27 is a USB connector (more specifically, a female connector).

The main body apparatus 2 includes a second slot 24. In the exemplary embodiment, the second slot 24 is provided in the lower side surface of the housing 11. In another exemplary embodiment, the second slot 24 may be provided in the same surface as the first slot 23. The second slot 24 is so shaped as to allow a second type storage medium, different from the first type storage medium, to be attached to the second slot 24. The second type storage medium may be, for example, a general-purpose storage medium. For example, the second type storage medium may be an SD card. Similarly to the first type storage medium, the second type storage medium is used to, for example, store data usable by the main body apparatus 2 (e.g., saved data of an application or the like) and/or a program executable by the main body apparatus 2 (e.g., program for an application or the like).

The housing 11 includes air absorption holes 11d formed therein. As shown in FIG. 3, the air absorption holes 11d are formed in the lower side surface of the housing 11. The air absorption holes 11d are formed to absorb (in other words, introduce) air outside the housing 11 into the housing 11. In the exemplary embodiment, the air absorption holes 11d are formed in the surface opposite to the surface in which the exhaust hole 11c is formed. Thus, heat in the housing 11 is released efficiently.

The shapes, the numbers, and the installation positions of the above-described components provided in the housing 11 (specifically, the buttons, the slots, the terminals, and the like) are optional. For example, in another exemplary embodiment, at least one of the power button 28 and the slots 23 and 24 may be provided on/in another side surface or a rear surface of the housing 11. Alternatively, in another exemplary embodiment, the main body apparatus 2 may not include at least one of the above-described components.

FIG. 4 provides six orthogonal views showing an example of the left controller 3. As shown in FIG. 4, the left controller 3 includes a housing 31. In the exemplary embodiment, the housing 31 is generally plate-shaped. A main surface of the housing 31 (in other words, a front surface, i.e., a surface on a negative side in the z-axis direction shown in FIG. 1) has a roughly rectangular shape. In the exemplary embodiment, the housing 31 is longer in the up-down direction in FIG. 1A (i.e., in the y-axis direction shown in FIG. 1). In a state of being detached from the main body apparatus 2, the left controller 3 may be held in an orientation in which the longer side extends in the vertical direction. The housing 31 has such a shape and such a size as to be held by one hand, particularly with the left hand, when being held in an orientation in which the longer side extends in the vertical direction. The left controller 3 may also be held in an orientation in which the longer side extends in the horizontal direction. In a case of being held in an orientation in which the longer side extends in the horizontal direction, the left controller 3 may be held with both hands of the user. The housing 31 may have any shape. In another exemplary embodiment, the housing 31 may not be generally plate-shaped. The housing 31 may not be rectangular, and may be, for example, semicircular. The housing 31 may not be vertically long.

The length in the up-down direction of the housing 31 is approximately equal to the length in the up-down direction of the housing 11 of the main body apparatus 2. The thickness of the housing 31 (i.e., length in the front-rear direction, in other words, the length in the z-axis direction shown in FIG. 1) is approximately equal to the thickness of the housing 11 of the main body apparatus 2. Thus, in a case where the left controller 3 is attached to the main body apparatus 2 (see FIG. 1), the user can hold the main body apparatus 2 and the left controller 3 with a feeling that he/she holds an integrated apparatus.

As shown in FIG. 4, the main surface of the housing 31 is shaped such that left corners thereof are more rounded than right corners thereof. Specifically, a connection portion between an upper side surface and a left side surface of the housing 31 and a connection portion between a lower side surface and the left side surface of the housing 31 are more rounded (in other words, are chamfered to have a greater roundness) than a connection portion between the upper side surface and a right side surface of the housing 31 and a connection portion between the lower side surface and the right side surface of the housing 31. Thus, in a case where the left controller 3 is attached to the main body apparatus 2 (see FIG. 1), the information processing system 1 as the integrated apparatus has a rounded shape on the left side. The information processing system 1 having such a shape is easy for the user to hold.

The left controller 3 includes an analog stick 32. As shown in FIG. 4, the analog stick 32 is provided on the main surface of the housing 31. The analog stick 32 is an example of a direction input section usable to input a direction. The analog stick 32 includes a stick member that can be inclined in all directions parallel to the main surface of the housing 31 (i.e., 360° directions including up, down, left, right, and oblique directions). The user may incline the stick member to input a direction corresponding to a direction of the inclination (and to input a magnitude corresponding to an angle of the inclination). The direction input section may instead be a cross key, a slide stick, or the like. In the exemplary embodiment, the stick member may be pressed (in a direction perpendicular to the housing 31) to make an input operation. That is, the analog stick 32 is an input section usable to input a direction and a magnitude corresponding to the direction of inclination and the amount of inclination of the stick member, and also usable to make a press input operation on the stick member.
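How an inclination of the stick member maps to a direction and a magnitude can be sketched as follows. This is an illustration only: the function name `stick_to_input`, the normalized tilt range of -1 to 1 on each axis, and the dead zone of 0.1 are assumptions not stated in the description.

```python
import math
from typing import Tuple


def stick_to_input(x: float, y: float, dead_zone: float = 0.1) -> Tuple[float, float]:
    """Convert a raw tilt (x, y), each assumed to lie in [-1, 1], into
    (direction in degrees within [0, 360), magnitude within [0, 1])."""
    # The magnitude corresponds to the amount of inclination, clamped to 1.
    magnitude = min(math.hypot(x, y), 1.0)
    # Hypothetical dead zone: a very small tilt counts as no input.
    if magnitude < dead_zone:
        return 0.0, 0.0
    # The direction corresponds to the direction of inclination,
    # measured counterclockwise from the positive x-axis.
    direction = math.degrees(math.atan2(y, x)) % 360.0
    return direction, magnitude
```

For example, `stick_to_input(0.0, -1.0)` yields a direction of 270 degrees at full magnitude under these assumptions.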

The left controller 3 includes four operation buttons 33 through 36 (specifically, a right direction button 33, a down direction button 34, an up direction button 35, and a left direction button 36). As shown in FIG. 4, the four operation buttons 33 through 36 are provided below the analog stick 32 on the main surface of the housing 31. In the exemplary embodiment, the four operation buttons are provided on the main surface of the left controller 3. The number of operation buttons is optional. The operation buttons 33 through 36 are used to give instructions corresponding to various programs executable by the main body apparatus 2 (e.g., an OS program and an application program). In the exemplary embodiment, the operation buttons 33 through 36 are usable to input directions, and thus are termed the right direction button 33, the down direction button 34, the up direction button 35, and the left direction button 36. Alternatively, the operation buttons 33 through 36 may be used to give instructions other than directions.

The left controller 3 includes a “−” (minus) button 47. As shown in FIG. 4, the “−” button 47 is provided on the main surface of the housing 31, more specifically, on an upper right area of the main surface. The “−” button 47 is used to give instructions corresponding to various programs executable by the main body apparatus 2 (e.g., an OS program and an application program). The “−” button 47 is used as, for example, a select button in a game application (e.g., as a button used to switch a selectable item).

In a case where the left controller 3 is attached to the main body apparatus 2, the operation sections provided on the main surface of the left controller 3 (specifically, the analog stick 32 and the buttons 33 through 36 and 47) are operated with, for example, the thumb of the left hand of the user holding the information processing system 1 as the integrated apparatus. In a case where the left controller 3 is used while being detached from the main body apparatus 2 and held in a horizontal orientation with both hands of the user, the above-described operation sections are operated with, for example, the thumbs of the left and right hands of the user holding the left controller 3. Specifically, in this case, the analog stick 32 is operated with the thumb of the left hand of the user, and the operation buttons 33 through 36 are operated with the thumb of the right hand of the user.

The left controller 3 includes a first L-button 38. The left controller 3 includes a ZL-button 39. Similarly to the operation buttons 33 through 36, the operation buttons 38 and 39 are used to give instructions corresponding to various programs executable by the main body apparatus 2. As shown in FIG. 4, the first L-button 38 is provided on an upper left portion of the side surface of the housing 31. The ZL-button 39 is provided on an upper left portion from the side surface to a rear surface of the housing 31 (more precisely, an upper left portion when the housing 31 is viewed from the front side thereof). That is, the ZL-button 39 is provided to the rear of the first L-button 38 (on a positive side in the z-axis direction shown in FIG. 1). In the exemplary embodiment, the upper left portion of the housing 31 has a rounded shape. Therefore, the first L-button 38 and the ZL-button 39 each have a rounded shape corresponding to the roundness of the upper left portion of the housing 31. In a case where the left controller 3 is attached to the main body apparatus 2, the first L-button 38 and the ZL-button 39 are located on an upper left portion of the information processing system 1 as the integrated apparatus.

The left controller 3 includes the slider 40 described above. As shown in FIG. 4, the slider 40 extends in the up-down direction on the right side surface of the housing 31. The slider 40 is so shaped as to be engageable with the left rail member 15 of the main body apparatus 2 (more specifically, with the groove in the left rail member 15). Thus, the slider 40, when engaged with the left rail member 15, is secured so as not to be detached in a direction perpendicular to a slide direction (the slide direction is, in other words, the direction in which the left rail member 15 extends).

The left controller 3 includes the terminal 42 usable by the left controller 3 to communicate with the main body apparatus 2 in a wired manner. The terminal 42 is provided at a position where, in a case where the left controller 3 is attached to the main body apparatus 2, the terminal 42 comes into contact with the left terminal 17 (FIG. 3) of the main body apparatus 2. The specific position of the terminal 42 is optional. In the exemplary embodiment, as shown in FIG. 4, the terminal 42 is provided on an attachment surface to which the slider 40 is attached. In the exemplary embodiment, the terminal 42 is provided near a lower end of the attachment surface of the slider 40.

FIG. 5 provides six orthogonal views showing an example of the right controller 4. As shown in FIG. 5, the right controller 4 includes a housing 51. In the exemplary embodiment, the housing 51 is generally plate-shaped. A main surface of the housing 51 (in other words, a front surface, i.e., a surface on the negative side in the z-axis direction shown in FIG. 1) has a roughly rectangular shape. In the exemplary embodiment, the housing 51 is longer in the up-down direction in FIG. 1A. In a state of being detached from the main body apparatus 2, the right controller 4 may be held in an orientation in which the longer side extends in the vertical direction. The housing 51 has such a shape and such a size as to be held by one hand, particularly with the right hand, when being held in an orientation in which the longer side extends in the vertical direction. The right controller 4 may also be held in an orientation in which the longer side extends in the horizontal direction. In a case of being held in an orientation in which the longer side extends in the horizontal direction, the right controller 4 may be held with both hands of the user.

Similarly to the case of the housing 31 of the left controller 3, the length in the up-down direction of the housing 51 of the right controller 4 is approximately equal to the length in the up-down direction of the housing 11 of the main body apparatus 2, and the thickness of the housing 51 is approximately equal to the thickness of the housing 11 of the main body apparatus 2. Thus, in a case where the right controller 4 is attached to the main body apparatus 2 (see FIG. 1), the user can hold the main body apparatus 2 and the right controller 4 with a feeling that he/she holds an integrated apparatus.

As shown in FIG. 5, the main surface of the housing 51 is shaped such that right corners thereof are more rounded than left corners thereof. Specifically, a connection portion between an upper side surface and a right side surface of the housing 51 and a connection portion between a lower side surface and the right side surface of the housing 51 are more rounded (in other words, are chamfered to have a greater roundness) than a connection portion between the upper side surface and a left side surface of the housing 51 and a connection portion between the lower side surface and the left side surface of the housing 51. Thus, in a case where the right controller 4 is attached to the main body apparatus 2 (see FIG. 1), the information processing system 1 as the integrated apparatus has a rounded shape on the right side. The information processing system 1 having such a shape is easy for the user to hold.

Similarly to the left controller 3, the right controller 4 includes an analog stick 52 as a direction input section. In the exemplary embodiment, the analog stick 52 has the same configuration as that of the analog stick 32 of the left controller 3. Similarly to the left controller 3, the right controller 4 includes four operation buttons 53 through 56 (specifically, an A-button 53, a B-button 54, an X-button 55, and a Y-button 56). In the exemplary embodiment, the four operation buttons 53 through 56 have the same mechanism as that of the four operation buttons 33 through 36 of the left controller 3. As shown in FIG. 5, the analog stick 52 and the operation buttons 53 through 56 are provided on the main surface of the housing 51. In the exemplary embodiment, the four operation buttons are provided on the main surface of the right controller 4. The number of operation buttons is optional.

In the exemplary embodiment, the positional relationship between the two types of operation sections (the analog stick and the operation buttons) of the right controller 4 is opposite to the positional relationship between the corresponding two types of operation sections of the left controller 3. That is, in the right controller 4, the analog stick 52 is located below the operation buttons 53 through 56, whereas in the left controller 3, the analog stick 32 is located above the operation buttons 33 through 36. With such a positional arrangement, the left controller 3 and the right controller 4 are usable with similar operation feelings to each other when being detached from the main body apparatus 2.

The right controller 4 includes a “+” (plus) button 57. As shown in FIG. 5, the “+” button 57 is provided on the main surface of the housing 51, more specifically, on an upper left area of the main surface. Similarly to the other operation buttons 53 through 56, the “+” button 57 is used to give instructions corresponding to various programs executable by the main body apparatus 2 (e.g., an OS program and an application program). The “+” button 57 is used as, for example, a start button in a game application (e.g., as a button used to give an instruction to start a game).

The right controller 4 includes a home button 58. As shown in FIG. 5, the home button 58 is provided on the main surface of the housing 51, more specifically, on a lower left area of the main surface. The home button 58 is used to display a predetermined menu screen on the display 12 of the main body apparatus 2. The menu screen, for example, allows an application, specified by the user from one or more applications executable by the main body apparatus 2, to be started. The menu screen may be displayed, for example, when the main body apparatus 2 is started. In the exemplary embodiment, when the home button 58 is pressed in the state where an application is being executed by the main body apparatus 2 (i.e., in the state where an image of the application is displayed on the display 12), a predetermined operation screen may be displayed on the display 12 (at this point, the menu screen may be displayed instead of the operation screen). The operation screen, for example, allows an instruction to finish the application and display the menu screen on the display 12, an instruction to resume the application, or the like, to be given.
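The screen transitions described for the home button 58 can be sketched as a small state machine. This is a non-authoritative illustration: the names `Screen`, `on_home_pressed`, and `on_operation_choice`, and the choice strings "finish" and "resume", are hypothetical, and the behavior of the home button outside a running application is an assumption.

```python
from enum import Enum, auto


class Screen(Enum):
    """Screens named in the description; the enum itself is hypothetical."""
    MENU = auto()         # menu screen for starting an application
    APPLICATION = auto()  # image of the running application
    OPERATION = auto()    # operation screen shown over a running application


def on_home_pressed(current: Screen) -> Screen:
    """Pressing the home button while an application runs shows the operation screen."""
    if current is Screen.APPLICATION:
        return Screen.OPERATION
    # Assumption: on the other screens the press leaves the screen unchanged.
    return current


def on_operation_choice(choice: str) -> Screen:
    """Resolve an instruction given on the operation screen."""
    if choice == "finish":
        return Screen.MENU         # finish the application and display the menu screen
    if choice == "resume":
        return Screen.APPLICATION  # resume the application
    raise ValueError(f"unknown instruction: {choice!r}")
```

The variant mentioned in the description, in which the menu screen is displayed instead of the operation screen, would correspond to returning `Screen.MENU` directly from `on_home_pressed`.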

In a case where the right controller4is attached to the main body apparatus2, the operation sections (specifically, the analog stick52and the buttons53through58) provided on the main surface of the right controller4are operated with, for example, the thumb of the right hand of the user holding the information processing system1. In a case where the right controller4is used while being detached from the main body apparatus2and held in a horizontal orientation with both of two hands of the user, the above-described operation sections are operated with, for example, the thumbs of the left and right hands of the user holding the right controller4. Specifically, in this case, the analog stick52is operated with the thumb of the left hand of the user, and the operation buttons53through56are operated with the thumb of the right hand of the user.

The right controller 4 includes a first R-button 60. The right controller 4 includes a ZR-button 61. As shown in FIG. 5, the first R-button 60 is provided on an upper right portion of the side surface of the housing 51. The ZR-button 61 is provided on an upper right portion from the side surface to a rear surface of the housing 51 (more precisely, an upper right portion when the housing 51 is viewed from the front side thereof). That is, the ZR-button 61 is provided to the rear of the first R-button 60 (on the positive side in the z-axis direction shown in FIG. 1). In the exemplary embodiment, the upper right portion of the housing 51 has a rounded shape. Therefore, the first R-button 60 and the ZR-button 61 each have a rounded shape corresponding to the roundness of the upper right portion of the housing 51. In a case where the right controller 4 is attached to the main body apparatus 2, the first R-button 60 and the ZR-button 61 are located on an upper right portion of the information processing system 1.

The right controller 4 includes a slider mechanism similar to that of the left controller 3. That is, the right controller 4 includes the slider 62 described above. As shown in FIG. 5, the slider 62 extends in the up-down direction on the left side surface of the housing 51. The slider 62 is so shaped as to be engageable with the right rail member 19 of the main body apparatus 2 (more specifically, with the groove in the right rail member 19). Thus, the slider 62, when engaged with the right rail member 19, is secured so as not to be detached in a direction perpendicular to the slide direction (the slide direction is, in other words, the direction in which the right rail member 19 extends).

The right controller 4 includes the terminal 64 usable by the right controller 4 to communicate with the main body apparatus 2 in a wired manner. The terminal 64 is provided at a position where, in a case where the right controller 4 is attached to the main body apparatus 2, the terminal 64 comes into contact with the right terminal 21 (FIG. 3) of the main body apparatus 2. The specific position of the terminal 64 is optional. In the exemplary embodiment, as shown in FIG. 5, the terminal 64 is provided on an attachment surface to which the slider 62 is attached. In the exemplary embodiment, the terminal 64 is provided near a lower end on the attachment surface of the slider 62.

Regarding the left controller3and the right controller4, the shapes, the numbers, and the installation positions of the above-described components provided in the housings31and51(specifically, the sliders, the sticks, the buttons, and the like) are optional. For example, in another exemplary embodiment, the left controller3and the right controller4may each include a direction input section of a type different from that of the analog stick. The slider40or62may be located at a position corresponding to the position of the rail member15or19provided in the main body apparatus2, for example, on the main surface or the rear surface of the housing31or51. In still another exemplary embodiment, the left controller3and the right controller4may not include at least one of the above-described components.

FIG. 6shows an overall configuration of another example of information processing system according to the exemplary embodiment. As shown inFIG. 6, for example, only the main body apparatus2, with the left controller3and the right controller4being detached therefrom, may be mounted on the cradle5. In another example, the integrated apparatus including the main body apparatus2and the left and right controllers3and4attached thereto may be mounted on the cradle5. The cradle5is communicable (via wired communication or wireless communication) with the stationary monitor6(e.g., stationary TV), which is an example of external display apparatus separate from the display12. As described below in detail, in a case where the integrated apparatus or a single body of the main body apparatus2is mounted on the cradle5, the information processing system1displays, on the stationary monitor6, an image acquired or generated by the main body apparatus2. In the exemplary embodiment, the cradle5has a function of charging the integrated apparatus or a single body of the main body apparatus2mounted thereon. The cradle5has a function of a hub apparatus (specifically, a USB hub).

FIG. 7shows an example of external configuration of the cradle5. The cradle5includes a housing on which the integrated apparatus or only the main body apparatus2is detachably mountable (or attachable). In the exemplary embodiment, as shown inFIG. 7, the housing includes a first supporting portion71including a groove71aformed therein, and a generally planar second supporting portion72.

As shown inFIG. 7, the groove71aformed in the first supporting portion71has a shape corresponding to the shape of a lower portion of the above-described integrated apparatus. Specifically, the groove71ais so shaped as to allow the lower portion of the integrated apparatus to be inserted thereto. More specifically, the shape of the groove71ais generally matched to the shape of the lower portion of the main body apparatus2. Therefore, the lower portion of the integrated apparatus may be inserted into the groove71a,so that the integrated apparatus is mounted on the cradle5. The second supporting portion72supports a front surface of the integrated apparatus having the lower portion inserted into the groove71a(i.e., supports the surface on which the display12is provided). The second supporting portion72allows the cradle5to support the integrated apparatus more stably. The shape of the housing shown inFIG. 7is merely illustrative. In another exemplary embodiment, the housing of the cradle5may have any shape that allows the main body apparatus2to be mounted thereon.

As shown in FIG. 7, the cradle 5 includes the main body terminal 73 usable by the cradle 5 to communicate with the integrated apparatus. As shown in FIG. 7, the main body terminal 73 is provided on a bottom surface of the groove 71a, which is formed in the first supporting portion 71. More specifically, the main body terminal 73 is provided at a position where, in a case where the integrated apparatus is attached to the cradle 5, the lower terminal 27 of the main body apparatus 2 comes into contact with the main body terminal 73. In the exemplary embodiment, the main body terminal 73 is a USB connector (more specifically, a male connector). In the exemplary embodiment, the integrated apparatus is attachable to the cradle 5 in either of two orientations in the depth direction, namely, regardless of whether the front surface of the integrated apparatus faces the second supporting portion 72 or a rear surface of the integrated apparatus faces the second supporting portion 72. The lower terminal 27 of the main body apparatus 2 and the main body terminal 73 of the cradle 5 have symmetrical shapes in the depth direction (i.e., the z-axis direction shown in FIG. 1), and thus the main body apparatus 2 and the cradle 5 are communicable with each other in whichever of the above-mentioned two orientations the integrated apparatus is mounted on the cradle 5.

Although not shown inFIG. 7, the cradle5includes a terminal on a rear surface of the housing (in the exemplary embodiment, includes a plurality of terminals, specifically, a monitor terminal132, a power supply terminal134, and extension terminals137shown inFIG. 10). The details of these terminals will be described below.

Regarding the cradle5, the shapes, the numbers, and the installation positions of the above-described components (specifically, the housing, the terminals, the buttons, and the like) are optional. For example, in another exemplary embodiment, the housing may have another shape with which the integrated apparatus including the main body apparatus2and the left and right controllers3and4attached thereto, or a single body of the main body apparatus2, is supported. Some of the terminals provided in the housing may be provided on a front surface of the housing. In still another exemplary embodiment, the cradle5may not include at least one of the above-described components.

FIG. 8is a block diagram showing an example of internal configuration of the main body apparatus2. The main body apparatus2includes components81through98shown inFIG. 8in addition to the components shown inFIG. 3. At least one of the components81through98may be mounted as an electronic component on an electronic circuit board and accommodated in the housing11.

The main body apparatus2includes a CPU (Central Processing Unit)81. The CPU81is an information processing section that executes various types of information process executable by the main body apparatus2. The CPU81executes an information processing program (e.g., game program) stored on a storage section (specifically, an internal storage medium such as a flash memory84or the like, an external storage medium attached to each of the slots23and24, or the like) to execute various types of information process.

The main body apparatus2includes the flash memory84and the DRAM (Dynamic Random Access Memory)85as examples of internal storage medium built in the main body apparatus2. The flash memory84and the DRAM85are connected with the CPU81. The flash memory84is mainly usable to store various pieces of data (or programs) to be saved on the main body apparatus2. The DRAM85is usable to temporarily store various pieces of data used for the information process.

The main body apparatus2includes a first slot interface (hereinafter, the “interface” will be abbreviated as “I/F”)91. The main body apparatus2includes a second slot I/F92. The first slot I/F91and the second slot I/F92are connected with the CPU81. The first slot I/F91is connected with the first slot23, and follows an instruction from the CPU81to read and write data from and to the first type storage medium (e.g., SD card) attached to the first slot23. The second slot I/F92is connected with the second slot24, and follows an instruction from the CPU81to read and write data from and to the second type storage medium (e.g., dedicated memory card) attached to the second slot24.

The CPU81appropriately transfers data between the flash memory84/the DRAM85and the above-described storage mediums to execute the above-described information process.

The main body apparatus 2 includes a network communication section 82. The network communication section 82 is connected with the CPU 81. The network communication section 82 communicates (specifically, via wireless communication) with an external apparatus via a network. In the exemplary embodiment, in a first communication form, the network communication section 82 is connected with a wireless LAN by a system compliant with the Wi-Fi standards to communicate with an external apparatus. In a second communication form, the network communication section 82 wirelessly communicates with another main body apparatus 2 of the same type by a predetermined communication system (e.g., communication based on an original protocol or infrared light communication). The wireless communication in the second communication form may be performed with another main body apparatus 2 located in a closed local network area and thus realizes a so-called “local communication”, in which a plurality of the main body apparatuses 2 communicate directly with each other to transmit and receive data.

The main body apparatus2includes a controller communication section83. The controller communication section83is connected with the CPU81. The controller communication section83wirelessly communicates with the left controller3and/or the right controller4. The communication system between the main body apparatus2and the left controller3or the right controller4is optional. In the exemplary embodiment, the controller communication section83performs communication compliant with the Bluetooth (registered trademark) standards with the left controller3and with the right controller4.

The CPU81is connected with the left terminal17, the right terminal21, and the lower terminal27. When communicating with the left controller3in a wired manner, the CPU81transmits data to the left controller3via the left terminal17and also receives operation data from the left controller3via the left terminal17. When communicating with the right controller4in a wired manner, the CPU81transmits data to the right controller4via the right terminal21and also receives operation data from the right controller4via the right terminal21. When communicating with the cradle5, the CPU81transmits data to the cradle5via the lower terminal27. As described above, in the exemplary embodiment, the main body apparatus2can perform both wired communication and wireless communication with each of the left controller3and the right controller4. In a case where the integrated apparatus including the main body apparatus2and the left and right controllers3and4attached thereto, or a single body of the main body apparatus2, is attached to the cradle5, the main body apparatus2outputs data (e.g., image data or sound data) to the stationary monitor6via the cradle5.

The main body apparatus2can communicate with a plurality of the left controllers3simultaneously (in other words, in parallel). The main body apparatus2can communicate with a plurality of the right controllers4simultaneously (in other words, in parallel). Thus, the user can input data to the main body apparatus2using the plurality of left controllers3and the plurality of right controllers4.

The main body apparatus2includes a touch panel controller86, which is a circuit that controls the touch panel13. The touch panel controller86is connected between the touch panel13and the CPU81. Based on a signal from the touch panel13, the touch panel controller86generates data indicating, for example, the position where a touch input has been provided. Then, the touch panel controller86outputs the data to the CPU81.

The display 12 is connected with the CPU 81. The CPU 81 displays, on the display 12, a generated image (e.g., an image generated by executing the above-described information process) and/or an externally acquired image.

The main body apparatus2includes a codec circuit87and the speakers (specifically, a left speaker and a right speaker)88. The codec circuit87is connected with the speakers88and the sound input/output terminal25and also connected with the CPU81. The codec circuit87controls the input and output of sound data to and from the speakers88and the sound input/output terminal25. Specifically, when receiving sound data from the CPU81, the codec circuit87performs D/A conversion on the sound data and outputs a resultant sound signal to the speakers88or the sound input/output terminal25. As a result, a sound is output from the speakers88or a sound output section (e.g., earphone) connected with the sound input/output terminal25. When receiving a sound signal from the sound input/output terminal25, the codec circuit87performs A/D conversion on the sound signal and outputs resultant sound data in a predetermined format to the CPU81. The sound volume buttons26are connected with the CPU81. Based on an input to the sound volume buttons26, the CPU81controls the volume of the sound to be output from the speakers88or the sound output section.

The main body apparatus2includes a power control section97and a battery98. The power control section97is connected with the battery98and the CPU81. Although not shown inFIG. 8, the power control section97is connected with various components of the main body apparatus2(specifically, components that receive power supplied from the battery98, the left terminal17, and the right terminal21). Based on a command from the CPU81, the power control section97controls the supply of power from the battery98to the above-mentioned components. The power control section97is connected with the power button28. Based on an input to the power button28, the power control section97controls the supply of power to the above-mentioned components. Specifically, in a case where an operation of turning off the power supply is performed on the power button28, the power control section97stops the supply of power to all or a part of the above-mentioned components. In a case where an operation of turning on the power supply is performed on the power button28, the power control section97starts the supply of power to all or a part of the above-mentioned components. The power control section97outputs, to the CPU81, information indicating an input to the power button28(specifically, information indicating whether or not the power button28has been pressed).

The battery98is connected with the lower terminal27. In a case where an external charging apparatus (e.g., cradle5) is connected with the lower terminal27and power is supplied to the main body apparatus2via the lower terminal27, the battery98is charged with the supplied power.

The main body apparatus2includes a cooling fan96usable to release heat inside the main body apparatus2. The cooling fan96is operated to introduce air outside the housing11through the absorption holes11dand also to release air inside the housing11through the exhaust hole11c,so that heat inside the housing11is released. The cooling fan96is connected with the CPU81, and the operation of the cooling fan96is controlled by the CPU81. The main body apparatus2includes a temperature sensor95, which detects the temperature inside the main body apparatus2. The temperature sensor95is connected with the CPU81, and a detection result provided by the temperature sensor95is output to the CPU81. Based on the detection result provided by the temperature sensor95, the CPU81controls the operation of the cooling fan96.
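As a non-limiting illustration, the control of the cooling fan 96 based on the detection result of the temperature sensor 95 may be sketched as follows. The threshold values, the use of two thresholds (hysteresis), and the names `CoolingFan` and `update_fan` are assumptions made only for this sketch; the exemplary embodiment does not specify a particular control rule.

```python
from dataclasses import dataclass

# Hypothetical thresholds in degrees Celsius; the embodiment gives no values.
FAN_ON_THRESHOLD_C = 60.0
FAN_OFF_THRESHOLD_C = 50.0


@dataclass
class CoolingFan:
    """Stand-in for the cooling fan 96; it only tracks whether it is running."""
    running: bool = False


def update_fan(fan: CoolingFan, temperature_c: float) -> None:
    """Start the fan at or above the upper threshold, stop it at or below the lower one.

    The gap between the two thresholds is an illustrative choice that keeps
    the fan from toggling rapidly when the temperature hovers near one value.
    """
    if temperature_c >= FAN_ON_THRESHOLD_C:
        fan.running = True
    elif temperature_c <= FAN_OFF_THRESHOLD_C:
        fan.running = False
    # Between the two thresholds the previous state is kept unchanged.
```

In such a sketch, `update_fan` would be called each time a new detection result is output to the CPU 81.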

FIG. 9is a block diagram showing an example of internal configuration of the information processing system1. Among the components of the information processing system1, the components of the main body apparatus2are shown in detail inFIG. 8and thus are omitted inFIG. 9.

The left controller 3 includes a communication control section 101, which communicates with the main body apparatus 2. As shown in FIG. 9, the communication control section 101 is connected with components including the terminal 42. In the exemplary embodiment, the communication control section 101 can communicate with the main body apparatus 2 by wired communication via the terminal 42 and also by wireless communication with no use of the terminal 42. The communication control section 101 controls a method of communication performed by the left controller 3 with the main body apparatus 2. In a case where the left controller 3 is attached to the main body apparatus 2, the communication control section 101 communicates with the main body apparatus 2 via the terminal 42. In a case where the left controller 3 is detached from the main body apparatus 2, the communication control section 101 wirelessly communicates with the main body apparatus 2 (specifically, the controller communication section 83). The wireless communication between the controller communication section 83 and the communication control section 101 is performed in conformity to, for example, the Bluetooth (registered trademark) standards.
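As a non-limiting illustration, the selection of the communication method by the communication control section 101 may be sketched as follows. The names `Transport` and `select_transport` are hypothetical and are used only for this sketch.

```python
from enum import Enum


class Transport(Enum):
    """Communication methods available to the left controller 3."""
    WIRED = "wired"        # via the terminal 42
    WIRELESS = "wireless"  # e.g., Bluetooth, with no use of the terminal 42


def select_transport(attached_to_main_body: bool) -> Transport:
    """Choose wired communication when attached, wireless communication when detached."""
    return Transport.WIRED if attached_to_main_body else Transport.WIRELESS
```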

The left controller3includes a memory102such as, for example, a flash memory or the like. The communication control section101includes, for example, a microcomputer (or a microprocessor) and executes firmware stored on the memory102to perform various types of process.

The left controller3includes buttons103(specifically, the buttons33through39,43and44). The left controller3includes the analog stick (“stick” inFIG. 9)32. The buttons103and the analog stick32each output information regarding an operation performed thereon to the communication control section101repeatedly at appropriate timing.

The left controller3includes an acceleration sensor104. In the exemplary embodiment, the acceleration sensor104detects magnitudes of linear accelerations in predetermined three axis directions (e.g., X-, Y- and Z-axis directions shown inFIG. 11). The acceleration sensor104may detect an acceleration in one axis direction or accelerations in two axis directions. The left controller3includes an angular velocity sensor105. In the exemplary embodiment, the angular velocity sensor105detects angular velocities about predetermined three axes (e.g., X, Y and Z axes shown inFIG. 11). The angular velocity sensor105may detect an angular velocity about one axis or angular velocities about two axes. The acceleration sensor104and the angular velocity sensor105are connected with the communication control section101. Detection results provided by the acceleration sensor104and the angular velocity sensor105are each output to the communication control section101repeatedly at appropriate timing.

The communication control section101acquires information regarding an input (specifically, information regarding an operation or a detection result provided by any of the sensors) from each of the input sections (specifically, the buttons103, the analog stick32, and the sensors104and105). The communication control section101transmits, to the main body apparatus2, operation data including the acquired information (or information obtained by performing a predetermined process on the acquired information). The operation data is transmitted repeatedly at a rate of once every predetermined time period. The interval at which information regarding an input is transmitted to the main body apparatus2may or may not be the same among the input sections.
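As a non-limiting illustration, operation data combining the button, stick, and sensor information might be serialized as follows before transmission. The byte layout, the field widths, and the name `OperationData` are assumptions made only for this sketch; the exemplary embodiment does not define a data format.

```python
import struct
from dataclasses import dataclass
from typing import Tuple

# Hypothetical little-endian layout: a 16-bit button mask, two signed 16-bit
# stick axes, three float32 accelerations, three float32 angular velocities.
_FORMAT = "<Hhh3f3f"


@dataclass
class OperationData:
    buttons: int                                   # one bit per button
    stick: Tuple[int, int]                         # analog stick (x, y)
    acceleration: Tuple[float, float, float]       # acceleration sensor output
    angular_velocity: Tuple[float, float, float]   # angular velocity sensor output

    def pack(self) -> bytes:
        """Serialize the fields into a fixed-size payload."""
        return struct.pack(_FORMAT, self.buttons, *self.stick,
                           *self.acceleration, *self.angular_velocity)

    @classmethod
    def unpack(cls, payload: bytes) -> "OperationData":
        """Rebuild the fields from a payload produced by pack()."""
        f = struct.unpack(_FORMAT, payload)
        return cls(f[0], (f[1], f[2]), tuple(f[3:6]), tuple(f[6:9]))
```

In such a sketch, the controller side would call `pack()` once every predetermined time period, and the main body apparatus 2 would call `unpack()` on each received payload.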

The above-mentioned operation data is transmitted to the main body apparatus2, so that the main body apparatus2obtains the inputs provided to the left controller3. That is, the main body apparatus2distinguishes operations made on the buttons103and the analog stick32from each other, based on the operation data. The main body apparatus2computes information regarding the motion and/or the attitude of the left controller3based on the operation data (specifically, the detection results provided by the acceleration sensor104and the angular velocity sensor105).
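As a non-limiting illustration, one common way of estimating an attitude angle from the detection results of an acceleration sensor and an angular velocity sensor is a complementary filter, sketched below for a single angle. The exemplary embodiment does not state which computation is used; the axis convention, the blend factor `alpha`, and all function names are assumptions made only for this sketch.

```python
import math


def tilt_from_acceleration(ax: float, ay: float, az: float) -> float:
    """Tilt angle in radians estimated from the direction of gravity.

    This is meaningful only while the controller is nearly at rest, so that
    the measured acceleration is dominated by gravity.
    """
    return math.atan2(ax, math.sqrt(ay * ay + az * az))


def integrate_angular_velocity(angle: float, rate: float, dt: float) -> float:
    """Advance an angle by an angular velocity (rad/s) over dt seconds."""
    return angle + rate * dt


def complementary_filter(prev_angle: float, gyro_rate: float,
                         accel_angle: float, dt: float,
                         alpha: float = 0.98) -> float:
    """Blend the integrated angular velocity with the acceleration-based tilt.

    The angular velocity term tracks fast motion; the acceleration term
    slowly corrects the drift that accumulates from integration.
    """
    gyro_angle = integrate_angular_velocity(prev_angle, gyro_rate, dt)
    return alpha * gyro_angle + (1.0 - alpha) * accel_angle
```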

The left controller 3 includes a vibrator 107 usable to give notification to the user by a vibration. In the exemplary embodiment, the vibrator 107 is controlled by a command from the main body apparatus 2. Specifically, upon receipt of the above-mentioned command from the main body apparatus 2, the communication control section 101 drives the vibrator 107 in accordance with the command. The left controller 3 includes an amplifier 106. Upon receipt of the above-mentioned command, the communication control section 101 outputs a control signal corresponding to the command to the amplifier 106. The amplifier 106 amplifies the control signal from the communication control section 101, generates a driving signal for driving the vibrator 107, and outputs the driving signal to the vibrator 107. As a result, the vibrator 107 is operated.
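As a non-limiting illustration, the generation of a driving signal from a control signal may be modeled in software as a gain followed by clipping. The gain, the output limit, and the name `amplify` are assumptions made only for this sketch; the amplifier 106 of the exemplary embodiment is a hardware component whose characteristics are not specified.

```python
from typing import List


def amplify(control_signal: List[float], gain: float = 4.0,
            limit: float = 1.0) -> List[float]:
    """Scale each control sample by the gain and clip it to [-limit, limit]."""
    return [max(-limit, min(limit, gain * sample)) for sample in control_signal]
```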

The left controller3includes a power supply section108. In the exemplary embodiment, the power supply section108includes a battery and a power control circuit. Although not shown inFIG. 9, the power control circuit is connected with the battery and also connected with components of the left controller3(specifically, components that receive power supplied from the battery). The power control circuit controls the supply of power from the battery to the above-mentioned components. The battery is connected with the terminal42. In the exemplary embodiment, in a case where the left controller3is attached to the main body apparatus2, the battery is charged via the terminal42with power supplied from the main body apparatus2under a predetermined condition.

As shown inFIG. 9, the right controller4includes a communication control section111, which communicates with the main body apparatus2. The right controller4includes a memory112, which is connected with the communication control section111. The communication control section111is connected with components including the terminal64. The communication control section111and the memory112have functions similar to those of the communication control section101and the memory102, respectively, of the left controller3. Thus, the communication control section111can communicate with the main body apparatus2by wired communication via the terminal64and also by wireless communication with no use of the terminal64(specifically, communication compliant with the Bluetooth (registered trademark) standards). The communication control section111controls a method of communication performed by the right controller4with the main body apparatus2.

The right controller4includes input sections similar to the input sections of the left controller3(specifically, buttons113, the analog stick52, an acceleration sensor114, and an angular velocity sensor115). These input sections have functions similar to those of the input sections of the left controller3and operate similarly to the input sections of the left controller3.

The right controller4includes a vibrator117and an amplifier116. The vibrator117and the amplifier116operate similarly to the vibrator107and the amplifier106, respectively, of the left controller3. Specifically, the communication control section111, in accordance with a command from the main body apparatus2, uses the amplifier116to cause the vibrator117to operate.

The right controller4includes a power supply section118. The power supply section118has a function similar to that of the power supply section108of the left controller3, and operates similarly to the power supply section108. That is, the power supply section118controls the supply of power to components that receive power supplied from a battery. In a case where the right controller4is attached to the main body apparatus2, the battery is charged via the terminal64with power supplied from the main body apparatus2under a predetermined condition.

The right controller4includes a processing section121. The processing section121is connected with the communication control section111and is also connected with an NFC communication section122. The processing section121, in accordance with a command from the main body apparatus2, performs a process of managing the NFC communication section122. For example, the processing section121controls an operation of the NFC communication section122in accordance with a command from the main body apparatus2. The processing section121controls the start of the NFC communication section122or controls an operation of the NFC communication section122(specifically, reading, writing, or the like) performed on a communication partner thereof (e.g., NFC tag). The processing section121receives, from the main body apparatus2via the communication control section111, information to be transmitted to the communication partner and passes the information to the NFC communication section122. The processing section121also acquires, via the NFC communication section122, information received from the communication partner and transmits the information to the main body apparatus2via the communication control section111. In accordance with a command from the main body apparatus2, the processing section121performs a process of managing an infrared image capturing section123. For example, the processing section121causes the infrared image capturing section123to perform an image capturing operation, or acquires information based on an image capturing result (information of a captured image, information computed from such information, or the like) and transmits the information to the main body apparatus2via the communication control section111.

FIG. 10is a block diagram showing an example of internal configuration of the cradle5. The internal configuration of the main body apparatus2is shown in detail inFIG. 8and thus is omitted inFIG. 10.

As shown inFIG. 10, the cradle5includes a conversion section131and a monitor terminal132. The conversion section131is connected with the main body terminal73and the monitor terminal132. The conversion section131converts formats of signals of an image (or video) and a sound received from the main body apparatus2into formats in which the image and the sound are to be output to the stationary monitor6. In the exemplary embodiment, the main body apparatus2outputs an image signal and a sound signal as display port signals (i.e., signals compliant with the DisplayPort standard) to the cradle5. In the exemplary embodiment, the communication between the cradle5and the stationary monitor6is performed based on the HDMI (registered trademark) standard. That is, the monitor terminal132is an HDMI terminal, and the cradle5and the stationary monitor6are connected to each other by an HDMI cable. The conversion section131converts display port signals (specifically, signals representing the video and the sound) received from the main body apparatus2via the main body terminal73into HDMI signals. The HDMI signals obtained as a result of the conversion are output to the stationary monitor6via the monitor terminal132.

The cradle5includes a power control section133and a power supply terminal134. The power supply terminal134is connectable with a charging apparatus (e.g., an AC adapter or the like; not shown). In the exemplary embodiment, the power supply terminal134is connected with an AC adapter, and mains electricity is supplied to the cradle5. In a case where the main body apparatus2is attached to the cradle5, the power control section133supplies power from the power supply terminal134to the main body apparatus2via the main body terminal73. As a result, the battery98of the main body apparatus2is charged.

The cradle 5 includes a connection processing section 136 and extension terminals 137. The extension terminals 137 are each connectable with another apparatus. In the exemplary embodiment, the cradle 5 includes a plurality of (more specifically, three) USB terminals as the extension terminals 137. The connection processing section 136 is connected with the main body terminal 73 and the extension terminals 137. The connection processing section 136 has a function of a USB hub and, for example, manages the communication between an apparatus connected with any of the extension terminals 137 and the main body apparatus 2 connected with the main body terminal 73 (i.e., transmits a signal from a certain apparatus to other apparatuses while distributing the signal appropriately). As described above, in the exemplary embodiment, the information processing system 1 is communicable with another apparatus via the cradle 5. The connection processing section 136 may be capable of changing the communication speed, or of supplying power to an apparatus connected to any of the extension terminals 137.

As described above, in the information processing system1according to the exemplary embodiment, the left controller3and the right controller4are attachable to, and detachable from, the main body apparatus2. The integrated apparatus including the main body apparatus2and the left and right controllers3and4attached thereto, or a single body of the main body apparatus2, may be attached to the cradle5to output an image (and a sound) to the stationary monitor6. Hereinafter, an operation of the information processing system1will be described in which the main body apparatus2, in a state where the left controller3and the right controller4are detached therefrom, is attached to the cradle5to output an image (or a sound) to the stationary monitor6.

As described above, in the exemplary embodiment, the information processing system 1 is usable in the state where the left controller 3 and the right controller 4 are detached from the main body apparatus 2 (referred to as a “separate state”). The information processing system 1 in the separate state is usable to make an operation on the same application (e.g., a game application) in a case where a single user uses both of the left controller 3 and the right controller 4. In a case where a plurality of users make an operation on the same application, a plurality of pairs of the left controller 3 and the right controller 4 may be prepared, so that each of the users uses one of such pairs.

FIG. 11 and FIG. 12 show an example in which a single user uses the information processing system 1 in the separate state while holding a pair of the left controller 3 and the right controller 4. As shown in FIG. 11 and FIG. 12, in the separate state, the user can view an image displayed on the stationary monitor 6 while holding the left controller 3 with his/her left hand and holding the right controller 4 with his/her right hand to make an operation.

For example, in the exemplary embodiment, the user holds the left controller 3, which is longer in the up-down direction in FIG. 1A and is generally plate-shaped, with his/her left hand such that the left controller 3 is oriented as follows: a downward direction in the longer direction (the negative y-axis direction shown in FIG. 1) is downward in the vertical direction, the side surface facing the main body apparatus 2 when the left controller 3 is attached to the main body apparatus 2 (side surface on which the slider 40 is provided) is directed forward (direction away from the user), and the main surface (surface on which the analog stick 32 and the like are provided) is directed rightward. The user holds the right controller 4, which is longer in the up-down direction in FIG. 1A and is generally plate-shaped, with his/her right hand such that the right controller 4 is oriented as follows: a downward direction in the longer direction (the negative y-axis direction shown in FIG. 1) is downward in the vertical direction, the side surface facing the main body apparatus 2 when the right controller 4 is attached to the main body apparatus 2 (side surface on which the slider 62 is provided) is directed forward, and the main surface (surface on which the analog stick 52 and the like are provided) is directed leftward. From the state of holding the left controller 3 with his/her left hand and holding the right controller 4 with his/her right hand (hereinafter, the attitude of each of the left controller 3 and the right controller 4 in the above-described orientation may be referred to as a “reference attitude”), the user moves each of the controllers 3 and 4 upward, downward, leftward, rightward, forward or rearward, rotates each of the controllers 3 and 4, or swings each of the controllers 3 and 4. Thus, a game is played in accordance with the motion or the attitude of each of the controllers 3 and 4.

For easier understanding of the direction of acceleration or angular velocity caused in the left controller 3, the following directions will be defined for the left controller 3.

The forward direction in the above-described held state (direction from the rounded side surface toward the side surface attachable to the main body apparatus 2; the negative x-axis direction shown in FIG. 1) will be referred to as a “positive X-axis direction”. The rightward direction in the above-described held state (direction from the rear surface toward the main surface; the negative z-axis direction shown in FIG. 1) will be referred to as a “positive Y-axis direction”. The upward direction in the above-described held state (upward direction in the longer direction; the positive y-axis direction shown in FIG. 1) will be referred to as a “positive Z-axis direction”. The acceleration sensor 104 of the left controller 3 is capable of detecting an acceleration in each of the X-, Y- and Z-axis directions. The angular velocity sensor 105 is capable of detecting an angular velocity about each of the X-, Y- and Z-axis directions.

For easier understanding of the direction of acceleration or angular velocity caused in the right controller 4, the following directions will be defined for the right controller 4.

The forward direction in the above-described held state (direction from the rounded side surface toward the side surface attachable to the main body apparatus 2; the positive x-axis direction shown in FIG. 1) will be referred to as a “positive X-axis direction”. The rightward direction in the above-described held state (direction from the main surface toward the rear surface; the positive z-axis direction shown in FIG. 1) will be referred to as a “positive Y-axis direction”. The upward direction in the above-described held state (upward direction in the longer direction; the positive y-axis direction shown in FIG. 1) will be referred to as a “positive Z-axis direction”. The acceleration sensor 114 of the right controller 4 is capable of detecting an acceleration in each of the X-, Y- and Z-axis directions. The angular velocity sensor 115 is capable of detecting an angular velocity about each of the X-, Y- and Z-axis directions.
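As a non-limiting illustration, the axis definitions above may be summarized as a fixed remapping from the lowercase x-, y- and z-axes of FIG. 1 to the uppercase X-, Y- and Z-axes of the held state. The following Python sketch is not part of the embodiment; the function name `to_held_axes` and the tuple representation are assumptions introduced only for illustration.

```python
from typing import Tuple

Vec3 = Tuple[float, float, float]


def to_held_axes(body: Vec3, controller: str) -> Vec3:
    """Remap a vector from FIG. 1 axes (x, y, z) to held-state axes (X, Y, Z).

    Hypothetical helper: the mapping follows the definitions in the text,
    but the name and signature are illustrative assumptions.
    """
    x, y, z = body
    if controller == "left":
        # Left controller: +X is -x, +Y is -z, +Z is +y.
        return (-x, -z, y)
    if controller == "right":
        # Right controller: +X is +x, +Y is +z, +Z is +y.
        return (x, z, y)
    raise ValueError("controller must be 'left' or 'right'")
```

With this remapping, a forward thrust of either controller yields a positive X component, so that the same downstream processing may be applied to both hands.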

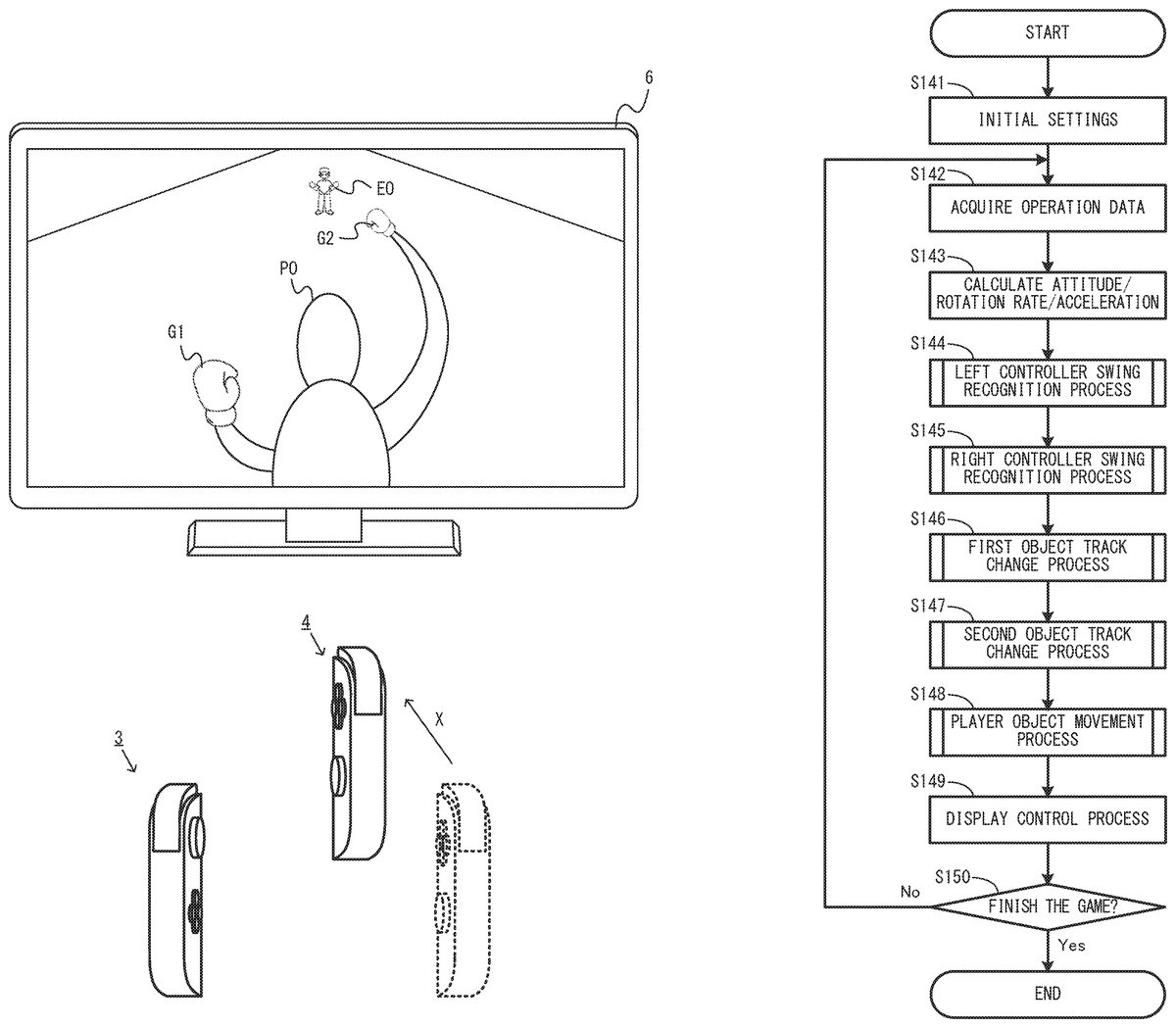

FIG. 13 through FIG. 15 each show an example of a game image displayed in a game played by moving the left controller 3 and the right controller 4. As shown in FIG. 13, in this game example, an image of a game in which a player object PO and an opponent object EO fight against each other (e.g., a boxing game) is displayed on the stationary monitor 6. The user operating the left controller 3 and the right controller 4 may swing the left controller 3 and/or the right controller 4 or change the attitude of the left controller 3 and/or the right controller 4 to operate the player object PO. For example, the user may swing the left controller 3 to control the motion of a first object G1, which represents the left glove (left fist) of the player object PO, and may swing the right controller 4 to control the motion of a second object G2, which represents the right glove (right fist) of the player object PO. Specifically, in a case where the user makes an operation of swinging his/her left hand holding the left controller 3 as if throwing a left punch, the first object G1 representing the left glove of the player object PO moves toward a position where the opponent object EO is located. In a case where the user makes an operation of swinging his/her right hand holding the right controller 4 as if throwing a right punch, the second object G2 representing the right glove of the player object PO moves toward the position where the opponent object EO is located.

For example, as shown in FIG. 14, in a case where the right controller 4 is swung as if being protruded forward (in the positive X-axis direction of the right controller 4) from a state shown in FIG. 13 where neither the left controller 3 nor the right controller 4 is moved, the second object G2 of the player object PO moves toward the opponent object EO in accordance with the movement of the right controller 4. As a result, a game image showing that the player object PO throws a right punch to the opponent object EO is displayed.

The direction of the movement of the first object G1 is set by the attitude of the left controller 3 when the left controller 3 is swung as if being protruded. The direction of the movement of the second object G2 is set by the attitude of the right controller 4 when the right controller 4 is swung as if being protruded. In a case where, for example, the right controller 4 is moved in the positive X-axis direction as shown in FIG. 14, the movement direction of the second object G2 is set in accordance with the attitude in the roll direction of the right controller 4 at the time of the movement. For example, in the exemplary embodiment, an inclination of the Y-axis direction of the right controller 4 with respect to the direction in which the gravitational acceleration acts in a real space while the right controller 4 is moving is calculated, and the movement direction of the second object G2 is calculated based on the resultant inclination of the Y-axis direction. Specifically, in a case where the inclination of the Y-axis direction indicates that the right controller 4 is rotated rightward in the roll direction with respect to the reference attitude, the second object G2 moves rightward in a virtual space. In a case where the inclination of the Y-axis direction indicates that the right controller 4 is rotated leftward in the roll direction with respect to the reference attitude, the second object G2 moves leftward in the virtual space. The angle at which the movement direction is shifted in the rightward direction or the leftward direction is calculated in accordance with the angle of inclination of the Y-axis direction.
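As a non-limiting illustration, the calculation of the movement direction from the inclination of the Y-axis direction may be sketched as follows. The sketch assumes that the gravity direction has already been expressed in the controller's X-, Y- and Z-axes, that a rightward roll tilts the positive Y-axis direction toward the gravity direction, and that the shift angle is proportional to the inclination up to a limit. The names `roll_inclination` and `movement_direction_offset`, the parameter `gain`, and the 45-degree limit are hypothetical and do not appear in the embodiment.

```python
import math


def roll_inclination(gravity_y: float, gravity_z: float) -> float:
    """Tilt of the Y-axis from horizontal, in radians.

    Assumed convention: in the reference attitude gravity lies along -Z,
    so the result is 0; a rightward roll gives a positive Y component of
    gravity and therefore a positive angle.
    """
    return math.atan2(gravity_y, -gravity_z)


def movement_direction_offset(
    gravity_y: float,
    gravity_z: float,
    gain: float = 1.0,                      # hypothetical scaling factor
    max_offset: float = math.radians(45.0)  # hypothetical limit
) -> float:
    """Rightward (+) or leftward (-) shift of the movement direction."""
    angle = gain * roll_inclination(gravity_y, gravity_z)
    # Clamp so that an extreme roll cannot send the object sideways.
    return max(-max_offset, min(max_offset, angle))
```

For example, a controller rolled rightward by 30 degrees would, under these assumptions, shift the movement direction of the second object G2 rightward by 30 degrees, while a controller held in the reference attitude would produce no shift.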

In this game example, even in a case where the distance between the player object PO and the opponent object EO is relatively long in the virtual space, a punch may be thrown. One of the arms of the player object PO is extended, so that the first object G1 or the second object G2 moves a relatively long distance. The first object G1 or the second object G2 finishes moving after colliding against another object (e.g., opponent object EO) or after moving a predetermined distance, and returns to a movement start position, from which the first object G1 or the second object G2 started moving (e.g., the position of the left hand or the right hand of the player object PO shown in FIG. 13). The first object G1 or the second object G2 returns to the movement start position, and thus a next movement toward the opponent object EO is permitted to be made. In other words, the next punch is permitted to be thrown. Therefore, a time period from the start of the movement of the first object G1 or the second object G2 from the movement start position until the return thereof to the movement start position is longer than such a time period of a general boxing game.
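As a non-limiting illustration, the cycle described above (start of movement, end of movement upon a collision or upon reaching a predetermined distance, return to the movement start position, and permission of the next movement) may be modeled as a small state machine. The class name `Glove`, the state names, and the numeric values of `max_distance` and `speed` are assumptions made for this sketch only.

```python
from dataclasses import dataclass
from enum import Enum, auto


class GloveState(Enum):
    READY = auto()      # at the movement start position; a punch may start
    OUTBOUND = auto()   # moving away from the movement start position
    RETURNING = auto()  # moving back to the movement start position


@dataclass
class Glove:
    max_distance: float = 10.0  # hypothetical "predetermined distance"
    speed: float = 1.0          # hypothetical distance moved per frame
    distance: float = 0.0       # current distance from the start position
    state: GloveState = GloveState.READY

    def start_punch(self) -> bool:
        """Start moving only if the object is at the start position."""
        if self.state is not GloveState.READY:
            return False
        self.state = GloveState.OUTBOUND
        return True

    def update(self, collided: bool = False) -> None:
        """Advance one frame; `collided` reports a hit on another object."""
        if self.state is GloveState.OUTBOUND:
            self.distance = min(self.distance + self.speed, self.max_distance)
            if collided or self.distance >= self.max_distance:
                self.state = GloveState.RETURNING
        elif self.state is GloveState.RETURNING:
            self.distance = max(self.distance - self.speed, 0.0)
            if self.distance == 0.0:
                self.state = GloveState.READY
```

In this sketch, a call to `start_punch()` made while the object is outbound or returning is simply rejected, which reflects that the next punch is permitted only after the return to the movement start position.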

In this game example, such a movement time period may be utilized to change a track of the movement in accordance with the attitude or the motion of the left controller 3 or the right controller 4 even while the first object G1 or the second object G2 is moving (typically, while the first object G1 or the second object G2 is moving toward the opponent object EO). For example, in a case where the left controller 3 or the right controller 4 is rotated in the roll direction or in the yaw direction from the attitude thereof at the time of start of the movement of the first object G1 or the second object G2, the track of the first object G1 or the second object G2 is changed in accordance with the rotation.

In an example, in the exemplary embodiment, the rotation rate (angular velocity) of the left controller 3 or the right controller 4 about the X axis after the first object G1 or the second object G2 starts moving is set as the rotation rate in the roll direction. The track of the first object G1 or the second object G2 is changed based on the rotation rate about the X axis while the first object G1 or the second object G2 is moving. Specifically, in a case where the rotation rate at which the left controller 3 is rotated about the X axis rightward in the roll direction while the first object G1 is moving is obtained, the track of the first object G1 is changed rightward in the virtual space. In a case where the rotation rate at which the left controller 3 is rotated about the X axis leftward in the roll direction while the first object G1 is moving is obtained, the track of the first object G1 is changed leftward in the virtual space. In a case where the rotation rate at which the right controller 4 is rotated about the X axis rightward in the roll direction while the second object G2 is moving is obtained, the track of the second object G2 is changed rightward in the virtual space. In a case where the rotation rate at which the right controller 4 is rotated about the X axis leftward in the roll direction while the second object G2 is moving is obtained, the track of the second object G2 is changed leftward in the virtual space.
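As a non-limiting illustration, the change of the track based on the rotation rate about the X axis may be sketched as a per-frame update in which the rotation rate turns the heading of the moving object. The sketch assumes a two-dimensional virtual space with coordinates (rightward, forward), a heading of 0 pointing toward the opponent object EO, and a positive rotation rate denoting a rightward roll. The function name `advance_track` and the parameters `speed` and `steer_gain` are hypothetical.

```python
import math
from typing import Tuple


def advance_track(
    position: Tuple[float, float],
    heading: float,
    roll_rate: float,
    dt: float,
    speed: float = 5.0,       # hypothetical movement speed (units/s)
    steer_gain: float = 1.0,  # hypothetical roll-rate-to-turn factor
) -> Tuple[Tuple[float, float], float]:
    """Advance a moving object by one frame.

    heading: radians, 0 = straight ahead, positive = rightward (assumed).
    roll_rate: angular velocity about the X axis in rad/s, positive for a
    rightward roll (assumed sign convention).
    """
    # A rightward roll rate bends the track rightward, and vice versa.
    new_heading = heading + steer_gain * roll_rate * dt
    x, z = position
    x += speed * math.sin(new_heading) * dt  # rightward component
    z += speed * math.cos(new_heading) * dt  # forward component
    return (x, z), new_heading
```

Calling `advance_track` once per frame with the most recent rotation rate yields a track that curves rightward while the controller is rolled rightward and leftward while the controller is rolled leftward.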

In another example, in the exemplary embodiment, the rotation rate (angular velocity) of the left controller 3 or the right controller 4 about the gravity direction in the real space after the first object G1 or the second object G2 starts moving is set as the rotation rate in the yaw direction. The track of the first object G1 or the second object G2 is changed based on the rotation rate about the gravity direction while the first object G1 or the second object G2 is moving. Specifically, in a case where the rotation rate at which the left controller 3 is rotated about the gravity direction rightward in the yaw direction while the first object G1 is moving is obtained, the track of the first object G1 is changed rightward in the virtual space. In a case where the rotation rate at which the left controller 3 is rotated about the gravity direction leftward in the yaw direction while the first object G1 is moving is obtained, the track of the first object G1 is changed leftward in the virtual space. In a case where the rotation rate at which the right controller 4 is rotated about the gravity direction rightward in the yaw direction while the second object G2 is moving is obtained, the track of the second object G2 is changed rightward in the virtual space. In a case where the rotation rate at which the right controller 4 is rotated about the gravity direction leftward in the yaw direction while the second object G2 is moving is obtained, the track of the second object G2 is changed leftward in the virtual space.
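As a non-limiting illustration, the rotation rate about the gravity direction may be obtained by projecting the angular velocity detected about the X-, Y- and Z-axes onto a unit vector along the gravity direction, so that the result does not depend on how the controller is tilted. The sketch assumes that both vectors are expressed in the same controller axes; whether a positive result corresponds to a rightward or a leftward yaw depends on the sign conventions of the sensors, which the text does not specify. The function name `yaw_rate_about_gravity` is hypothetical.

```python
import math
from typing import Tuple

Vec3 = Tuple[float, float, float]


def yaw_rate_about_gravity(angular_velocity: Vec3, gravity: Vec3) -> float:
    """Component of the angular velocity about the gravity direction.

    Both vectors are assumed to be expressed in the controller's X-, Y-
    and Z-axes. The sign of the result follows the sensor conventions,
    which are an assumption of this sketch.
    """
    gx, gy, gz = gravity
    norm = math.sqrt(gx * gx + gy * gy + gz * gz)
    if norm == 0.0:
        raise ValueError("gravity direction is undefined")
    wx, wy, wz = angular_velocity
    # Dot product with the unit gravity vector gives the yaw rate.
    return (wx * gx + wy * gy + wz * gz) / norm
```

The resulting rotation rate may then be used to change the track in the same manner as the rotation rate in the roll direction.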