U.S. Pat. No. 10,035,063

GAME CONTROLLER ON MOBILE TOUCH-ENABLED DEVICES

Assignee: MICROSOFT TECHNOLOGY LICENSING, LLC

Issue Date: October 20, 2015

Abstract

Various technologies described herein pertain to controlling a game with a mobile touch-enabled device. A thumbstick and a mode selection button can be rendered on a display of the mobile touch-enabled device. The thumbstick can be rendered on a reassignable area of the display and the mode selection button can be rendered on a mode selection area of the display. A touch (e.g., drag) from the mode selection area to the reassignable area can be detected, and an operation in the game can be controlled with the thumbstick represented as being at a depressed height in response to the touch in the reassignable area while the touch is detected without discontinuity of contact from starting the drag. Further, upon detecting discontinuation of the touch, a different operation in the game can be controlled with the thumbstick represented as being at a default height in response to a subsequent touch.

Description

DETAILED DESCRIPTION

Various technologies pertaining to controlling a game with a mobile touch-enabled device are now described with reference to the drawings, wherein like reference numerals are used to refer to like elements throughout. In the following description, for purposes of explanation, numerous specific details are set forth in order to provide a thorough understanding of one or more aspects. It may be evident, however, that such aspect(s) may be practiced without these specific details. In other instances, well-known structures and devices are shown in block diagram form in order to facilitate describing one or more aspects. Further, it is to be understood that functionality that is described as being carried out by certain system components may be performed by multiple components. Similarly, for instance, a component may be configured to perform functionality that is described as being carried out by multiple components.

Moreover, the term “or” is intended to mean an inclusive “or” rather than an exclusive “or.” That is, unless specified otherwise, or clear from the context, the phrase “X employs A or B” is intended to mean any of the natural inclusive permutations. That is, the phrase “X employs A or B” is satisfied by any of the following instances: X employs A; X employs B; or X employs both A and B. In addition, the articles “a” and “an” as used in this application and the appended claims should generally be construed to mean “one or more” unless specified otherwise or clear from the context to be directed to a singular form.

As set forth herein, a game can be controlled with a mobile touch-enabled device. More particularly, an interaction model designed for the mobile touch-enabled device described herein can provide an increased number of analog and/or discrete inputs that can be utilized to manipulate the game as compared to conventional interaction models employed with mobile touch-enabled devices. Accordingly, in comparison to traditional approaches, the interaction model described herein can enable a user to manipulate a game via a mobile touch-enabled device in a manner that is more similar to manipulating a game via a controller used in conjunction with a video game console.

Referring now to the drawings, FIG. 1 illustrates an exemplary graphical user interface 100 that can be rendered on a display. The graphical user interface 100 can be rendered on a display of a mobile touch-enabled device, for instance. Examples of mobile touch-enabled devices include phones (e.g., smartphones, etc.), handheld computers, tablet computers, and the like.

Further, the graphical user interface 100 can be rendered to enable a user to interact with a game. For example, although not shown, it is to be appreciated that the graphical user interface 100 can include game data (e.g., visual information related to the game); accordingly, the game data can be rendered on at least a portion of the display of the mobile touch-enabled device. By way of illustration, visual indicators included in the exemplary graphical user interface 100 can be rendered on a layer above the game data on the display of the mobile touch-enabled device; however, the claimed subject matter is not so limited. Moreover, inputs represented by visual indicators included in the exemplary graphical user interface 100 can be utilized by the user to manipulate the game data rendered on the display.

The graphical user interface 100 includes various visual indicators that visually represent different analog and discrete inputs. The visual indicators are positioned in the graphical user interface 100 to be located on different respective areas of a display on which the graphical user interface 100 is rendered. Moreover, according to one or more exemplary embodiments, it is contemplated that an area of the display can be reassignable; accordingly, the graphical user interface 100 can include a first visual indicator located on a reassignable area of the display during a first period of time, and a second visual indicator located on the reassignable area of the display during a second period of time. For example, a touch detected within the reassignable area of the display can control different operations during the first period of time compared to the second period of time. According to a further example, different types of inputs (e.g., analog input versus discrete input) can map to touch detected within the reassignable area of the display during the first period of time compared to the second period of time. Following this example, it is contemplated that the different types of inputs can be utilized to control different operations.

According to an example, one or more of the inputs represented by the visual indicators in the graphical user interface 100 can map to touch detected within corresponding areas of the display of the mobile touch-enabled device; thus, detected touch within the corresponding areas of the display can be used to control various operations of the game. Additionally or alternatively, at least one of the inputs represented by a visual indicator in the graphical user interface 100 can map to an output of one or more disparate sensors included in the mobile touch-enabled device; accordingly, the output from the disparate sensor(s) can be used to control various operations of the game. A camera, an accelerometer, a gyroscope, and the like are examples of the disparate sensors that can be included in the mobile touch-enabled device; however, it is to be appreciated that the claimed subject matter is not so limited. By using the disparate sensor(s), more points of control (e.g., in addition to the two thumbs) can be supported.

The visual indicators included in the exemplary graphical user interface 100 are further described below. It is to be appreciated, however, that a graphical user interface that lacks one or more of the visual indicators illustrated herein and/or includes one or more visual indicators that differ from the visual indicators illustrated herein is intended to fall within the scope of the hereto appended claims. As depicted, the exemplary graphical user interface 100 includes two thumbsticks: namely, a left thumbstick 102 and a right thumbstick 104. Further, the left thumbstick 102 is located within a left thumbstick zone 106 and the right thumbstick 104 is located within a right thumbstick zone 108 in the graphical user interface 100. The graphical user interface 100 also includes a left mode selection button 110 and a right mode selection button 112. A left trough 114 is positioned between the left mode selection button 110 and the left thumbstick zone 106, and a right trough 116 is positioned between the right mode selection button 112 and the right thumbstick zone 108. Moreover, the graphical user interface 100 includes a toggle button 118 and four tap buttons (e.g., an A button 120, a B button 122, an X button 124, and a Y button 126). The graphical user interface 100 additionally includes a left trigger button 128, a right trigger button 130, a left bumper 132, and a right bumper 134.

The visual indicators depicted in FIG. 1 are positioned in the graphical user interface 100 to be rendered on respective areas of the display of the mobile touch-enabled device. Accordingly, the left thumbstick 102 (and the left thumbstick zone 106) can be rendered on a left reassignable area of the display, and the right thumbstick 104 (and the right thumbstick zone 108) can be rendered on a right reassignable area of the display. Further, the left mode selection button 110 can be rendered on a left mode selection area of the display, and the right mode selection button 112 can be rendered on a right mode selection area of the display. The left trough 114 can be rendered on a left trough area of the display, and the right trough 116 can be rendered on a right trough area of the display. Moreover, the toggle button 118 can be rendered on a toggle area of the display, the four tap buttons can be rendered on corresponding, respective tap areas of the display, the left trigger button 128 can be rendered on a left trigger area of the display, the right trigger button 130 can be rendered on a right trigger area of the display, the left bumper 132 can be rendered on a left bumper area of the display, and the right bumper 134 can be rendered on a right bumper area of the display.

Moreover, inputs used to control operations in the game represented by the left thumbstick 102, the right thumbstick 104, the left mode selection button 110, the right mode selection button 112, the toggle button 118, the A button 120, the B button 122, the X button 124, and the Y button 126 can map to touch detected within corresponding areas of the display of the mobile touch-enabled device. Further, inputs used to control operations in the game represented by the left trigger button 128 and the right trigger button 130 can map to a measured amount of tilt (e.g., measured angle of tilt) of the mobile touch-enabled device detected from output of a sensor. Moreover, inputs used to control operations in the game represented by the left bumper 132 and the right bumper 134 can map to rotation of the mobile touch-enabled device detected from output of a sensor. According to an example, the sensor utilized to detect the amount of tilt and the sensor utilized to detect the rotation can be the same sensor. Pursuant to another example, different sensors can be employed to detect the amount of tilt and the rotation of the mobile touch-enabled device. In accordance with yet a further example, it is contemplated that more than one sensor can be utilized to detect the amount of tilt and/or the rotation of the mobile touch-enabled device.

Further, a subset of the inputs utilized to control operations in the game are analog inputs and a remainder of the inputs utilized to control operations in the game are discrete inputs. More particularly, the inputs represented by the left thumbstick 102, the right thumbstick 104, the left trigger button 128, and the right trigger button 130 are analog inputs, while a remainder of the inputs can be discrete inputs. An analog input can receive analog input information, which can be a value within a continuous range. Further, a discrete input can receive discrete input information; the discrete input information can be an on or off value, an up, down, left, or right value, or any other discrete value. A discrete input, for instance, can be responsive to a tap of a user.

As noted above, the input represented by the right thumbstick 104 is an analog input that can receive analog input information. According to an example, a user can touch a portion of the right reassignable area of the display on which the right thumbstick 104 is rendered with his right thumb. While continuing to touch the display, the user can move his right thumb within the right reassignable area (e.g., within the right thumbstick zone 108). As the user moves his right thumb within the right reassignable area of the display, the right thumbstick 104 can move within the graphical user interface 100 rendered on the display to provide visual feedback to the user, for instance; however, the claimed subject matter is not so limited. Moreover, following this example, the touch of the user can be detected and mapped to analog input information. The analog input information can vary within a continuous range of values as a function of a location on the display at which the touch is detected (e.g., the analog input information can change within the range of values based on movement of the right thumb on the display, removal of the right thumb from the display, etc.). Moreover, it is contemplated that the input represented by the left thumbstick 102 can be substantially similar to the input represented by the right thumbstick 104.
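By way of a non-limiting sketch, the mapping from a touch location to analog input information could resemble the following. The function name `thumbstick_value`, the circular thumbstick zone, and the normalized range of -1.0 to 1.0 per axis are assumptions made for illustration only; the description above does not prescribe a particular range or zone geometry.

```python
import math


def thumbstick_value(touch_x, touch_y, center_x, center_y, zone_radius):
    """Map a touch location to a hypothetical analog (x, y) pair.

    Assumption: the thumbstick zone is a circle of radius `zone_radius`
    around (center_x, center_y), and each axis is normalized to [-1.0, 1.0].
    """
    dx = (touch_x - center_x) / zone_radius
    dy = (touch_y - center_y) / zone_radius
    magnitude = math.hypot(dx, dy)
    if magnitude > 1.0:
        # A touch outside the zone is clamped onto the zone boundary.
        dx /= magnitude
        dy /= magnitude
    return dx, dy


# Thumb halfway between the zone center and its right edge.
half_right = thumbstick_value(125, 100, 100, 100, 50)
```

In this sketch the value varies continuously as the thumb moves, and a touch outside the zone saturates at the boundary rather than being ignored; either behavior is a design choice not dictated by the description.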

Further, as described above, the input represented by the A button 120 is an example of a discrete input that can receive discrete input information. Pursuant to an example, whether a user is touching or not touching a tap area of the display on which the A button 120 is rendered can be detected. In accordance with this example, whether or not a touch is detected in the tap area of the display on which the A button 120 is rendered can be mapped to discrete input information. For instance, the input represented by the A button 120 can function as a single press or a press and hold button. In accordance with an illustration, if the input represented by the A button 120 functions as a single press button, then the discrete input information can switch from a first state to a second state (e.g., on to off, off to on, etc.) in response to a detected transition from the user not touching to the user touching the tap area of the display on which the A button 120 is rendered (or alternatively the transition from the user touching to the user not touching such tap area). By way of yet another illustration, if the input represented by the A button 120 functions as a press and hold button, then the discrete input information can switch from a first state to a second state (e.g., off to on, on to off, etc.) in response to a detected transition from the user not touching to the user touching the tap area of the display on which the A button 120 is rendered, and can switch back from the second state to the first state (e.g., on to off, off to on, etc.) in response to a detected transition from the user touching to the user not touching the tap area of the display on which the A button 120 is rendered. Further, it is to be appreciated that inputs represented by the B button 122, the X button 124, and the Y button 126 can be substantially similar to the input represented by the A button 120.
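The two button behaviors described above can be illustrated with a minimal sketch. The class name `TapButton`, its `update` method, and the choice to switch state on the not-touching to touching transition for the single press case are hypothetical and chosen only for illustration.

```python
class TapButton:
    """Hypothetical discrete input driven by touch / no-touch samples."""

    def __init__(self, press_and_hold):
        self.press_and_hold = press_and_hold
        self.state = False       # False is the "first state" (off)
        self._touching = False   # previous touch sample

    def update(self, touching):
        """Feed one sample: is the tap area currently being touched?"""
        went_down = touching and not self._touching
        went_up = self._touching and not touching
        self._touching = touching
        if self.press_and_hold:
            # On while touched; switches back when the touch is released.
            if went_down:
                self.state = True
            elif went_up:
                self.state = False
        elif went_down:
            # Single press: each new touch switches between the two states.
            self.state = not self.state
        return self.state


single = TapButton(press_and_hold=False)
single_states = [single.update(t) for t in (True, False, True, False)]

hold = TapButton(press_and_hold=True)
hold_states = [hold.update(t) for t in (True, True, False)]
```

The single press button keeps its state after the thumb lifts, whereas the press and hold button reverts as soon as the touch ends.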

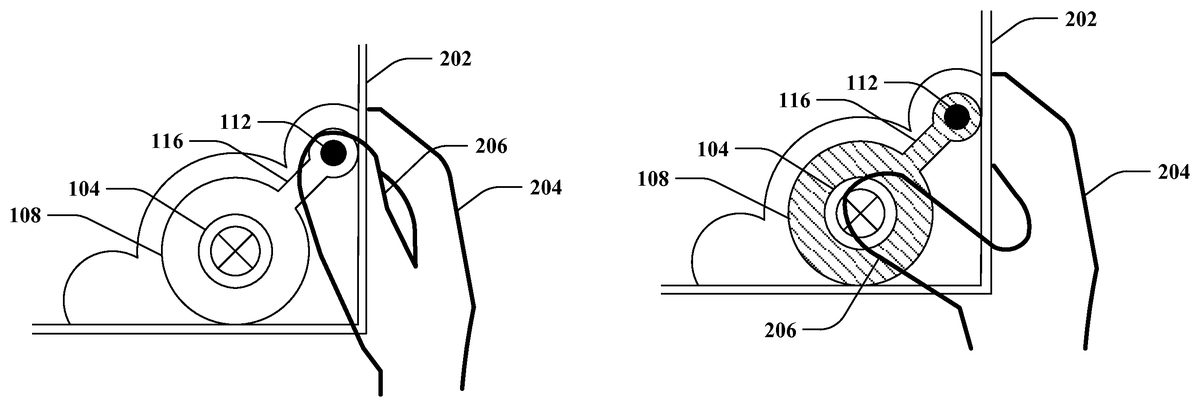

With reference to FIGS. 2-4, illustrated is an exemplary user interaction with a mobile touch-enabled device 202 to switch a mode of the input represented by the right thumbstick 104. In this exemplary user interaction, the mobile touch-enabled device 202 is held in a right hand 204 of a user. Although not shown, it is contemplated that the mobile touch-enabled device 202 can additionally or alternatively be held in a left hand of the user. For illustration purposes, FIGS. 2-4 show a portion of the graphical user interface 100 of FIG. 1 being rendered on a portion of a display of the mobile touch-enabled device 202; yet, it is contemplated that a remainder of the graphical user interface 100 of FIG. 1 can be rendered on a remainder of the display of the mobile touch-enabled device 202, which is not depicted. The exemplary user interaction illustrates a right thumb 206 of the right hand 204 of the user touching various areas of the display of the mobile touch-enabled device 202 to switch the mode of the input represented by the right thumbstick 104. For instance, the exemplary user interaction with the mobile touch-enabled device 202 shown in FIGS. 2-4 can simulate interacting with a depressible analog thumbstick on a conventional controller for a video game console. Further, different operations in a game can be controlled by the input represented by the right thumbstick 104 as a function of the mode (e.g., whether the right thumbstick 104 is represented as being at a default height in a thumbstick up mode or a depressed height in a thumbstick down mode). Moreover, it is contemplated that the user can similarly interact with the input represented by the left thumbstick 102 of FIG. 1 (e.g., using a left thumb of a left hand).

In the exemplary user interaction, FIGS. 2-4 show the right thumbstick 104 and the right thumbstick zone 108 being rendered on a right reassignable area of the display of the mobile touch-enabled device 202. Moreover, the right mode selection button 112 is rendered on a right mode selection area of the display of the mobile touch-enabled device 202. The right reassignable area of the display and the right mode selection area of the display are non-overlapping. Further, the right trough 116 is rendered on a right trough area of the display of the mobile touch-enabled device 202. According to the illustrated example, the right trough area is between the right reassignable area and the right mode selection area. It is to be appreciated, however, that the exemplary user interaction is presented for illustration purposes, and the claimed subject matter is not so limited.

Turning to FIG. 2, the right thumbstick 104 is represented as being at a default height on the display of the mobile touch-enabled device 202 (e.g., the input represented by the right thumbstick 104 being in a thumbstick up mode). Further, the right thumb 206 of the user is touching the right reassignable area of the display, on which the right thumbstick 104 and the right thumbstick zone 108 are rendered. The mobile touch-enabled device 202 can detect the touch of the right thumb 206 within the right reassignable area of the display (e.g., a first touch can be detected). Moreover, a first operation in a game can be controlled in response to the detected touch of the right thumb 206 within the right reassignable area of the display when the right thumbstick 104 is represented as being at the default height as illustrated in FIG. 2. For instance, the detected touch can map to analog input information; thus, analog input information can be received via the right reassignable area of the display with the right thumbstick 104 rendered thereupon.

FIG. 3 again illustrates the right thumbstick 104 being at the default height on the display of the mobile touch-enabled device 202. Moreover, the right thumb 206 of the user is touching the right mode selection area of the display, on which the right mode selection button 112 is rendered. According to an example, the right thumb 206 of the user may have been dragged from the right reassignable area of the display as shown in FIG. 2 to the right mode selection area of the display as shown in FIG. 3 (e.g., without discontinuity of contact between the right thumb 206 and the display of the mobile touch-enabled device 202, without discontinuity of contact from a beginning of the first touch, etc.). By way of another example, the right thumb 206 of the user may have been removed from being in contact with the display subsequent to touching the right reassignable area of the display as shown in FIG. 2, and thereafter placed in contact with the right mode selection area of the display as shown in FIG. 3 (e.g., discontinuity of contact after the first touch).

Moreover, the right thumb 206 of the user can be dragged from the right mode selection area of the display as shown in FIG. 3 to the right reassignable area (e.g., without discontinuity of contact between the right thumb 206 and the display of the mobile touch-enabled device 202). The mobile touch-enabled device 202 can detect such a touch (e.g., a second touch). Thus, the second touch detected is a drag from the right mode selection area of the display to the right reassignable area of the display. According to an example, the drag of the second touch can be detected to pass through at least a portion of the right trough area; however, the claimed subject matter is not so limited. Further, the mobile touch-enabled device 202 can switch the mode of the input represented by the right thumbstick 104 upon detecting the second touch (e.g., switch the input represented by the right thumbstick 104 to be in a thumbstick down mode).

As depicted in FIG. 4, the right thumbstick 104 is represented as being at a depressed height. According to the illustrated example, at least a portion of a visual indicator rendered on the right reassignable area of the display is changed to represent the right thumbstick 104 being switched to the depressed height in response to detecting the second touch (e.g., the aforementioned drag). By way of example, a first visual indicator can be rendered on the right reassignable area of the display when the right thumbstick 104 is represented as being at the default height (e.g., as shown in FIGS. 2 and 3), and a second visual indicator can be rendered on the right reassignable area of the display when the right thumbstick 104 is represented as being at the depressed height (e.g., as shown in FIG. 4). Following this example, at least a portion of the first visual indicator and the second visual indicator differ.

FIG. 4 shows the right thumb 206 of the user touching the right reassignable area of the display of the mobile device. The mobile touch-enabled device 202 can detect the touch of the right thumb 206 within the right reassignable area of the display (e.g., a third touch) after the right thumb 206 is dragged from the right mode selection area to the right reassignable area of the display without discontinuity of contact. Thus, the second touch and the third touch are detected by the mobile touch-enabled device without detecting discontinuity of contact. Further, a second operation in the game can be controlled in response to the third touch detected within the right reassignable area of the display when the right thumbstick 104 is represented as being at the depressed height as illustrated in FIG. 4.

Moreover, the third touch can be detected to be discontinued (e.g., the right thumb 206 of the user touching the right reassignable area of the display as shown in FIG. 4 can be detected to be released from the display). Upon detecting the discontinuation of the third touch, the mobile touch-enabled device 202 can switch the mode of the input represented by the right thumbstick 104 back to the mode depicted in FIG. 2 (e.g., switch the input represented by the right thumbstick 104 to be in a thumbstick up mode). Accordingly, the right thumbstick 104 can mimic being spring loaded, for instance (e.g., simulate automatically popping up upon being released).

Thereafter, if the mobile touch-enabled device 202 detects the right thumb 206 of the user touching the right reassignable area of the display (e.g., a fourth touch) after discontinuation of the third touch, then the first operation in the game can again be controlled in response to the fourth touch. The fourth touch can be detected within the right reassignable area of the display when the right thumbstick 104 is represented as being at the default height as shown in FIG. 2.
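The sequence of FIGS. 2-4 (first touch, drag from the mode selection area, depressed-height control, release) can be sketched as a small state machine. The class `ThumbstickMode`, the area labels, and the event method names are hypothetical; an actual implementation would hit-test touch coordinates against the rendered areas, which is omitted here.

```python
class ThumbstickMode:
    """Hypothetical tracker for the thumbstick up / thumbstick down mode."""

    UP = "up"      # thumbstick represented at the default height
    DOWN = "down"  # thumbstick represented at the depressed height

    def __init__(self):
        self.mode = self.UP
        self._in_contact = False
        self._armed = False  # contact has visited the mode selection area

    def touch_down(self, area):
        self._in_contact = True
        self._armed = area == "mode_selection"

    def touch_move(self, area):
        if not self._in_contact:
            return
        if area == "mode_selection":
            self._armed = True
        elif area == "reassignable" and self._armed:
            # Drag from the mode selection area reached the reassignable
            # area without discontinuity of contact.
            self.mode = self.DOWN

    def touch_up(self):
        # Discontinuation of the touch: the thumbstick "pops up" again.
        self._in_contact = False
        self._armed = False
        self.mode = self.UP

    def operation(self):
        """Which game operation a touch in the reassignable area controls."""
        return "second" if self.mode == self.DOWN else "first"


stick = ThumbstickMode()
stick.touch_down("reassignable")    # FIG. 2: first touch
first = stick.operation()
stick.touch_move("mode_selection")  # FIG. 3: drag onto the mode button
stick.touch_move("trough")
stick.touch_move("reassignable")    # FIG. 4: drag back
during_drag = stick.operation()
stick.touch_up()                    # release
stick.touch_down("reassignable")    # fourth touch
after_release = stick.operation()
```

Resetting the mode in `touch_up` is what gives the spring-loaded behavior: the depressed height persists only while contact from the drag continues.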

Referring again to FIG. 1, the input represented by the toggle button 118 can switch the type of input that maps to touch detected within the left reassignable area of the display (e.g., on which the left thumbstick 102 and the left thumbstick zone 106 are rendered in the graphical user interface 100). According to an example, a touch within the toggle area of the display (e.g., on which the toggle button 118 is rendered) can be detected when the left thumbstick 102 is rendered on the left reassignable area of the display (e.g., as shown in FIG. 1). The touch within the toggle area of the display can cause the type of input that maps to touch detected within the left reassignable area of the display to switch from an analog input to a discrete input.

With reference to FIG. 5, illustrated is another exemplary graphical user interface 500 that can be rendered on the display of the mobile touch-enabled device. The mobile touch-enabled device can switch from rendering the graphical user interface 100 of FIG. 1 to rendering the graphical user interface 500 on the display when the touch within the toggle area of the display as described above is detected. Thus, the mobile touch-enabled device can switch from rendering the left thumbstick 102 of FIG. 1 (e.g., included in the graphical user interface 100 of FIG. 1) to rendering a directional pad 502 on the left reassignable area of the display upon detecting the touch within the toggle area of the display. Further, when the directional pad 502 is rendered on the left reassignable area of the display, the left reassignable area of the display can be configured as a discrete input.

According to an example, if a disparate touch within the toggle area of the display (e.g., on which the toggle button 118 is rendered) is detected when the directional pad 502 is rendered on the left reassignable area of the display (e.g., as shown in FIG. 5), then the mobile touch-enabled device can switch from rendering the directional pad 502 to rendering the left thumbstick 102 of FIG. 1 on the left reassignable area of the display. Hence, the graphical user interface 100 of FIG. 1 can again be rendered on the display of the mobile touch-enabled device. Accordingly, following this example, the left reassignable area of the display can be configured as an analog input when the left thumbstick 102 of FIG. 1 is rendered thereupon. It is thus contemplated that the input represented by the toggle button 118 can cause the left reassignable area of the display of the mobile touch-enabled device to switch between being an analog input (e.g., receiving analog input information) and a discrete input (e.g., receiving discrete input information). Additionally or alternatively, although not shown, it is to be appreciated that the right reassignable area of the display can similarly be associated with an input similar to the input represented by the toggle button 118.
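The toggle behavior described above amounts to swapping both the visual indicator and the input type of the reassignable area. A minimal sketch, using the hypothetical names `ReassignableArea` and `on_toggle_touch`:

```python
class ReassignableArea:
    """Hypothetical model of the left reassignable area of the display."""

    def __init__(self):
        # Assumption: the area starts out rendering the thumbstick (FIG. 1).
        self.indicator = "thumbstick"

    @property
    def input_type(self):
        # Thumbstick -> analog input; directional pad -> discrete input.
        return "analog" if self.indicator == "thumbstick" else "discrete"

    def on_toggle_touch(self):
        """Called when a touch is detected within the toggle area."""
        if self.indicator == "thumbstick":
            self.indicator = "directional_pad"
        else:
            self.indicator = "thumbstick"


area = ReassignableArea()
before = (area.indicator, area.input_type)
area.on_toggle_touch()
toggled = (area.indicator, area.input_type)
area.on_toggle_touch()
restored = (area.indicator, area.input_type)
```

Deriving `input_type` from the rendered indicator keeps the two from drifting apart, which is one possible way to honor the pairing described above.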

Pursuant to an example, it is contemplated that the input represented by the left mode selection button 110 can be disabled when the directional pad 502 is rendered on the display. According to an alternative example, the input represented by the left mode selection button 110 can be enabled when the directional pad 502 is rendered on the display. Following this example, a touch detected within the left mode selection area of the display when the directional pad 502 is rendered on the display can cause the left reassignable area of the display of the mobile touch-enabled device to switch from rendering the directional pad 502 to rendering the left thumbstick 102 of FIG. 1 on the left reassignable area of the display (e.g., similar to detecting a touch within the toggle area of the display as set forth above).

According to another example, a touch (e.g., a drag similar to the drag described above in relation to FIGS. 3 and 4) from the left mode selection area of the display to the left reassignable area of the display can be detected. Upon detecting such a touch, the mobile touch-enabled device can switch from rendering the directional pad 502 on the reassignable area of the display to rendering the left thumbstick 102 of FIG. 1 on the reassignable area of the display (e.g., the graphical user interface 100 of FIG. 1 can be rendered on the display). Moreover, following this example, it is to be appreciated that the left thumbstick 102 can be directly represented as being at the depressed height (e.g., similar to the example shown in FIG. 4). Accordingly, similar to the example of FIG. 4, an operation in the game can be controlled with the left thumbstick 102 of FIG. 1 represented as being at the depressed height in response to a subsequent touch detected within the left reassignable area of the display while the touch continues to be detected (e.g., without discontinuity of contact starting at a beginning of the drag). Further, after the touch is detected to be discontinued, then a different operation in the game can be controlled with the left thumbstick 102 of FIG. 1 represented as being at the default height in response to a further subsequent touch within the left reassignable area of the display.

With reference to FIGS. 6-9, illustrated are various exemplary orientations of the mobile touch-enabled device 202 that can be utilized to manipulate operations in a game rendered on a display of the mobile touch-enabled device 202. According to an example, various inputs used to control operations in the game can map to measured amounts of tilt of the mobile touch-enabled device 202. For instance, the amount of tilt of the mobile touch-enabled device 202 can be measured by a sensor (not shown) included in the mobile touch-enabled device 202.

FIG. 6 depicts the mobile touch-enabled device 202 in a rest pose.

Further, a surface of the display of the mobile touch-enabled device 202 in the rest pose can define a predetermined plane referred to in FIGS. 7-9. It is to be appreciated that the rest pose of the mobile touch-enabled device 202 can be identified in substantially any manner. According to various illustrations, the rest pose can be identified as being a preset orientation of the mobile touch-enabled device 202, an orientation at a time of powering on the mobile touch-enabled device 202, an orientation at a time of starting a game, an orientation of the mobile touch-enabled device 202 after a predetermined amount of time has lapsed without tilting the mobile touch-enabled device 202, or the like. Yet, it is to be appreciated that the claimed subject matter contemplates identifying the rest pose in substantially any other manner.

The amount of the tilt of the mobile touch-enabled device 202 relative to the predetermined plane can be measured. Moreover, an operation in the game can be controlled as a function of the amount of the tilt of the mobile touch-enabled device 202. It is contemplated that the amount of the tilt of the mobile touch-enabled device 202 can map to analog input information for the game. Further, a level of the analog input information for the game can remain constant when the amount of the tilt of the mobile touch-enabled device 202 remains constant relative to the predetermined plane.

FIG. 7 shows a top right corner of the mobile touch-enabled device 202 being tilted relative to the predetermined plane defined by the rest pose. According to the depicted example, the input represented by the right trigger button 130 included in the exemplary graphical user interface 100 of FIG. 1 can map to the amount of the tilt of the mobile touch-enabled device 202 as measured relative to the predetermined plane. Thus, the upper right corner of the mobile touch-enabled device 202 can be tilted downwards to varying orientations by a user to activate the input represented by the right trigger button 130 of FIG. 1. Accordingly, the amount of tilting of the upper right corner of the mobile touch-enabled device 202 can mimic squeezing a trigger of a conventional controller utilized with a video game console with varying degrees of pressure. By way of further example, it is contemplated that depression of the right trigger button 130 of FIG. 1 rendered on the display of the mobile touch-enabled device 202 can be depicted as a function of the amount of the tilt as measured (e.g., visual feedback can be supplied to the user).

Similarly, FIG. 8 depicts a top left corner of the mobile touch-enabled device 202 being tilted relative to the predetermined plane defined by the rest pose. It is to be appreciated that the input represented by the left trigger button 128 of FIG. 1 can be manipulated as a function of the amount of the tilt of the top left corner in a substantially similar manner as compared to the example of FIG. 7 described above. Moreover, FIG. 9 shows both the top left corner and the top right corner of the mobile touch-enabled device 202 being tilted together relative to the predetermined plane defined by the rest pose. Pursuant to this example, both the input represented by the left trigger button 128 of FIG. 1 and the input represented by the right trigger button 130 of FIG. 1 can be simultaneously manipulated in accordance with the example described above with respect to FIG. 7.
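The corner-tilt examples of FIGS. 7-9 can be sketched, purely for illustration, as a mapping from per-corner tilt to analog trigger levels. The names `CornerTilt`, `tilt_to_trigger`, and `trigger_levels`, the linear mapping, and the 30-degree full-squeeze value are all assumptions of this sketch rather than details taken from the description.

```python
from dataclasses import dataclass
from typing import Dict

MAX_TILT_DEG = 30.0  # assumed tilt at which a trigger reads as fully squeezed


@dataclass
class CornerTilt:
    """Downward tilt of each top corner relative to the rest-pose plane, in degrees."""
    top_left_deg: float
    top_right_deg: float


def tilt_to_trigger(tilt_deg: float, max_tilt_deg: float = MAX_TILT_DEG) -> float:
    """Map a tilt angle to an analog trigger level clamped to [0.0, 1.0]."""
    return max(0.0, min(1.0, tilt_deg / max_tilt_deg))


def trigger_levels(tilt: CornerTilt) -> Dict[str, float]:
    """Top left corner drives the left trigger; top right corner drives the right trigger."""
    return {
        "left_trigger": tilt_to_trigger(tilt.top_left_deg),
        "right_trigger": tilt_to_trigger(tilt.top_right_deg),
    }
```

Under these assumptions, tilting only the top right corner halfway to the assumed maximum, `trigger_levels(CornerTilt(0.0, 15.0))`, leaves the left trigger at 0.0 and sets the right trigger to 0.5, while tilting both corners together raises both levels at once as in FIG. 9.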

Turning to FIGS. 10-12, illustrated are additional exemplary orientations of the mobile touch-enabled device 202 that can be utilized to manipulate operations in the game rendered on a display of the mobile touch-enabled device 202. For instance, the mobile touch-enabled device 202 can be moved from the rest pose depicted in FIG. 6 to the orientations shown in FIGS. 10-12; such movement can be mapped to one or more inputs used to control operations in the game. As illustrated, FIGS. 10 and 11 depict the mobile touch-enabled device 202 being rotated in a plane defined by a surface of a display of the mobile touch-enabled device 202 relative to the rest pose. Further, FIG. 12 shows the mobile touch-enabled device 202 being moved downwards in the plane defined by the surface of the display of the mobile touch-enabled device 202 relative to the rest pose.

According to an example, whether the mobile touch-enabled device 202 is rotated in the plane defined by the surface of the display can be detected. Moreover, an operation in the game can be controlled based on whether the mobile touch-enabled device 202 is detected to be rotated. Further, whether the mobile touch-enabled device 202 is rotated can be mapped to discrete input information for the game.

For instance, a clockwise rotation of the mobile touch-enabled device 202 as shown in FIG. 10 (e.g., compared to the orientation from FIG. 6) or a counterclockwise rotation of the mobile touch-enabled device 202 as shown in FIG. 11 (e.g., compared to the orientation from FIG. 6) can be detected. By way of illustration, whether a clockwise rotation is detected can map to the input represented by the right bumper 134 of FIG. 1 (e.g., a discrete input), and whether a counterclockwise rotation is detected can map to the input represented by the left bumper 132 of FIG. 1 (e.g., a discrete input). Pursuant to an illustration, the clockwise rotation or the counterclockwise rotation can be caused by the user tapping a top right corner or a top left corner of the mobile touch-enabled device 202; however, it is to be appreciated that such a tap need not be employed to rotate the mobile touch-enabled device 202.

Pursuant to a further example, whether the mobile touch-enabled device 202 is moved downwards in the plane defined by the surface of the display as depicted in FIG. 12 can be detected. Following this example, if the mobile touch-enabled device 202 is detected to have been moved downwards, then both the input represented by the left bumper 132 of FIG. 1 and the input represented by the right bumper 134 of FIG. 1 can be activated. Moreover, although not shown, it is further contemplated that movement of the mobile touch-enabled device 202 upwards can additionally or alternatively be detected and utilized in a similar manner as compared to the aforementioned discussion related to the downwards movement.
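The discrete mappings of FIGS. 10-12 can be sketched as follows. The function name `bumper_states`, the sign convention (positive rotation meaning clockwise), and both threshold values are hypothetical choices made for this illustration only; the description does not specify how rotation or downward movement is thresholded.

```python
from typing import Dict

ROTATION_THRESHOLD_DEG = 10.0  # assumed in-plane rotation needed to register a bumper
DROP_THRESHOLD_CM = 2.0        # assumed downward in-plane movement that registers both


def bumper_states(rotation_deg: float, downward_cm: float) -> Dict[str, bool]:
    """Map in-plane motion relative to the rest pose to discrete bumper inputs.

    rotation_deg: rotation in the plane of the display, positive = clockwise.
    downward_cm: downward displacement in the plane of the display.
    """
    moved_down = downward_cm >= DROP_THRESHOLD_CM
    return {
        # Counterclockwise rotation maps to the left bumper.
        "left_bumper": moved_down or rotation_deg <= -ROTATION_THRESHOLD_DEG,
        # Clockwise rotation maps to the right bumper.
        "right_bumper": moved_down or rotation_deg >= ROTATION_THRESHOLD_DEG,
    }
```

Unlike the tilt-based trigger inputs, each output here is a Boolean, reflecting that rotation and downward movement map to discrete rather than analog input information.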

Now referring to FIG. 13, illustrated is an exemplary system 1300 that controls a game executed by a mobile touch-enabled device (e.g., the mobile touch-enabled device 202 of FIG. 2). The system 1300 includes an output component 1302 that renders a graphical user interface 1304 (e.g., the graphical user interface 100 of FIG. 1, the graphical user interface 500 of FIG. 5, etc.) on a display 1306 of the mobile touch-enabled device. For instance, the graphical user interface 1304 rendered by the output component 1302 can include game data. According to an illustration, the game data can be generated by the output component 1302; however, it is further contemplated that the game data can be generated by a disparate component (not shown).

Moreover, the system 1300 includes an interaction analysis component 1308 that can receive touch related data from the display 1306. The interaction analysis component 1308 can be configured to detect the various touches described herein based upon the touch related data received from the display 1306. Moreover, the interaction analysis component 1308 can provide the various inputs represented by the visual indicators included in the graphical user interface 1304 as set forth herein.

The system 1300 further includes a manipulation component 1310 that can control the graphical user interface 1304 rendered by the output component 1302. More particularly, the manipulation component 1310 can employ input information (e.g., analog input information, discrete input information, etc.) received from the interaction analysis component 1308 to control corresponding operations in a game, which can cause the game data rendered as part of the graphical user interface 1304 (or outputted by the output component 1302 in substantially any other manner) to be changed.

According to an example, the output component 1302 can render at least a thumbstick (e.g., the left thumbstick 102 of FIG. 1, the right thumbstick 104 of FIG. 1, etc.) on a reassignable area of the display 1306 and a mode selection button (e.g., the left mode selection button 110 of FIG. 1, the right mode selection button 112, etc.) on a mode selection area of the display 1306. Further, the reassignable area and the mode selection area of the display 1306 are non-overlapping. Following this example, the interaction analysis component 1308 can be configured to detect a drag from the mode selection area of the display 1306 to the reassignable area of the display 1306. Moreover, the interaction analysis component 1308 can be configured to detect a touch within the reassignable area of the display 1306.

Further, the manipulation component 1310 can control a first operation in the game in response to the touch detected within the reassignable area of the display 1306 when the drag (e.g., from the mode selection area of the display 1306 to the reassignable area of the display 1306) and the touch (e.g., within the reassignable area of the display 1306) are detected without discontinuity of contact by the interaction analysis component 1308. The first operation in the game can be controlled based on the touch detected within the reassignable area of the display 1306 while the thumbstick rendered on the display by the output component 1302 is represented as being at a depressed height. Thus, the first operation in the game can be an operation that can typically be performed by moving an analog thumbstick while depressed on a conventional controller utilized with a video game console. According to an illustration, the first operation in the game that can be controlled as described above can be a crouch operation in a first person shooter or role-playing game (RPG) type of game; however, the claimed subject matter is not so limited.

Moreover, the manipulation component 1310 can otherwise control a second operation in the game when the thumbstick is rendered on the display 1306 by the output component 1302 (e.g., when the drag and the touch within the reassignable area are not detected without discontinuity of contact). In such a scenario, the thumbstick rendered on the display 1306 by the output component 1302 is represented as being at a default height. The second operation in the game can be controlled in response to the touch detected within the reassignable area of the display 1306 by the interaction analysis component 1308. For instance, the second operation in the game can be an operation that can typically be performed by moving an analog thumbstick (e.g., without being depressed) on a conventional controller utilized with a video game console. By way of illustration, the second operation in the game that can be controlled as described above can be a walking or running operation in a first person shooter or RPG type of game; yet, the claimed subject matter is not limited to the foregoing illustration.

In various exemplary embodiments, the interaction analysis component 1308 can optionally include an input alteration component 1312. According to such exemplary embodiments, the output component 1302 can further render a toggle button (e.g., the toggle button 118 of FIG. 1) on a toggle area of the display 1306. Additionally, the interaction analysis component 1308 can further be configured to detect a touch within the toggle area of the display 1306. Moreover, the input alteration component 1312 can switch between the touch detected within the reassignable area of the display 1306 mapping to analog input information for the game and mapping to discrete input information for the game in response to the touch detected within the toggle area of the display 1306. Hence, the output component 1302 can render the thumbstick on the reassignable area of the display 1306 when mapped to the analog input information and can render a directional pad (e.g., the directional pad 502 of FIG. 5) on the reassignable area of the display 1306 when mapped to the discrete input information. Further, the manipulation component 1310 can control a third operation in the game in response to the touch detected within the reassignable area of the display 1306 by the interaction analysis component 1308 when mapped to the discrete input information.
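The switching performed by the input alteration component can be sketched as a single area whose reading changes with its mode. The class name `ReassignableArea`, the circular geometry, the mode labels, and the dominant-axis rule for choosing a directional pad direction are all assumptions of this sketch and are not drawn from the description; screen coordinates are assumed to grow rightwards and downwards.

```python
import math
from typing import Tuple, Union

Point = Tuple[float, float]


class ReassignableArea:
    """One display area that reads as a thumbstick (analog) or a directional pad (discrete)."""

    def __init__(self, center: Point, radius: float) -> None:
        self.center = center
        self.radius = radius
        self.mode = "thumbstick"  # assumed starting mode

    def toggle(self) -> None:
        """Called when a touch is detected within the toggle area."""
        self.mode = "dpad" if self.mode == "thumbstick" else "thumbstick"

    def read(self, touch: Point) -> Union[Point, str]:
        """Return an analog (x, y) pair in thumbstick mode or a direction name in dpad mode."""
        dx = (touch[0] - self.center[0]) / self.radius
        dy = (touch[1] - self.center[1]) / self.radius
        if self.mode == "thumbstick":
            magnitude = math.hypot(dx, dy)
            if magnitude > 1.0:  # clamp the deflection to the unit circle
                dx, dy = dx / magnitude, dy / magnitude
            return (dx, dy)
        # Discrete mode: report the direction of the dominant axis.
        if abs(dx) >= abs(dy):
            return "right" if dx >= 0 else "left"
        return "down" if dy > 0 else "up"
```

The same touch position therefore yields analog input information in one mode and discrete input information in the other, which is the distinction the toggle button controls.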

According to other exemplary embodiments, the system 1300 can optionally include a sensor 1314 that can supply data to the interaction analysis component 1308. The sensor 1314, for example, can be a gyroscope, an accelerometer, a camera, or the like. Moreover, it is contemplated that the system 1300 can optionally include a plurality of sensors. It is to be appreciated that the sensor 1314 can be included in the mobile touch-enabled device.

By way of illustration, the interaction analysis component 1308 can use the data received from the sensor 1314 to measure a degree of tilt of the mobile touch-enabled device relative to a predetermined plane (e.g., as depicted in FIGS. 6-9). The predetermined plane can be set based upon a rest pose of the mobile touch-enabled device. Further, the interaction analysis component 1308 can use the data received from the sensor 1314 to detect whether the mobile touch-enabled device is rotated in a plane defined by a surface of the display 1306 (e.g., as depicted in FIGS. 10-12). The manipulation component 1310 can control an operation in the game in response to the degree of the tilt of the mobile touch-enabled device and a disparate operation in the game in response to whether the mobile touch-enabled device is rotated.

In accordance with yet another example, the sensor 1314 can be a camera coupled with the mobile touch-enabled device (e.g., incorporated into, etc.). Following this example, the interaction analysis component 1308 can receive a series of images from the camera. Further, the interaction analysis component 1308 can detect a gesture of a user from the series of images received from the camera (e.g., perform signal processing on the series of images, etc.). Moreover, the manipulation component 1310 can control an operation in the game in response to the gesture of the user detected by the interaction analysis component 1308. By way of illustration, the gesture can be movement of a head of the user (e.g., up, down, left, or right, etc.), a facial gesture (e.g., micro gesture such as gritting teeth, blinking, opening or closing a mouth, etc.), a hand gesture, or the like. By way of further example, it is contemplated that different gestures detected by the interaction analysis component 1308 can be utilized to respectively control different operations.

Pursuant to yet another example, the output component 1302 can vibrate the mobile touch-enabled device to provide force feedback related to the control of the game. According to an illustration, referring to the exemplary user interaction described in FIGS. 2-4, upon detecting the discontinuation of the third touch, the output component 1302 can vibrate the mobile touch-enabled device to provide haptic feedback that signifies that the thumbstick has been switched back to the default height; however, it is to be appreciated that the claimed subject matter is not limited to the foregoing example of haptic feedback, and it is contemplated that haptic feedback responsive to substantially any other user interaction additionally or alternatively can be provided by the output component 1302.

FIGS. 14-15 illustrate exemplary methodologies relating to controlling a game with a mobile touch-enabled device. While the methodologies are shown and described as being a series of acts that are performed in a sequence, it is to be understood and appreciated that the methodologies are not limited by the order of the sequence. For example, some acts can occur in a different order than what is described herein. In addition, an act can occur concurrently with another act. Further, in some instances, not all acts may be required to implement a methodology described herein.

Moreover, the acts described herein may be computer-executable instructions that can be implemented by one or more processors and/or stored on a computer-readable medium or media. The computer-executable instructions can include a routine, a sub-routine, programs, a thread of execution, and/or the like. Still further, results of acts of the methodologies can be stored in a computer-readable medium, displayed on a display device, and/or the like.

FIG. 14 illustrates a methodology 1400 for controlling a game with a mobile touch-enabled device. At 1402, a thumbstick can be rendered on a reassignable area of a display of the mobile touch-enabled device. At 1404, a mode selection button can be rendered on a mode selection area of the display of the mobile touch-enabled device. Further, the reassignable area and the mode selection area are non-overlapping. At 1406, a first operation in the game can be controlled with the thumbstick represented as being at a default height in response to a first touch detected within the reassignable area of the display.

At 1408, a second touch can be detected. The second touch can be a drag from the mode selection area of the display to the reassignable area of the display. At 1410, a second operation in the game can be controlled with the thumbstick represented as being at a depressed height in response to a third touch detected within the reassignable area of the display. Moreover, the second touch and the third touch are detected without discontinuity of contact (e.g., continuous contact detected from a beginning of the second touch until an end of the third touch). For instance, the second touch and the third touch can be a single touch of the display without a release of the display. At 1412, a discontinuation of the third touch can be detected. At 1414, the first operation in the game can be controlled with the thumbstick represented as being at the default height in response to a fourth touch detected within the reassignable area of the display upon detecting the discontinuation of the third touch.
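The acts of the methodology 1400 can be sketched as a small state tracker driven by touch events. The class name `ThumbstickTracker`, the rectangle representation of the display areas, the event method names, and the returned operation labels are hypothetical and chosen only for this illustration; an actual touch framework would supply its own event types.

```python
from enum import Enum
from typing import Tuple

Point = Tuple[float, float]
Rect = Tuple[float, float, float, float]  # left, top, right, bottom


def contains(rect: Rect, point: Point) -> bool:
    left, top, right, bottom = rect
    return left <= point[0] <= right and top <= point[1] <= bottom


class StickHeight(Enum):
    DEFAULT = "default"
    DEPRESSED = "depressed"


class ThumbstickTracker:
    """Tracks whether the thumbstick is represented at the default or depressed height."""

    def __init__(self, mode_area: Rect, reassignable_area: Rect) -> None:
        self.mode_area = mode_area                  # assumed non-overlapping with
        self.reassignable_area = reassignable_area  # the reassignable area
        self.height = StickHeight.DEFAULT
        self._began_in_mode_area = False

    def touch_down(self, point: Point) -> None:
        # A drag that can depress the thumbstick must start on the mode selection area.
        self._began_in_mode_area = contains(self.mode_area, point)

    def touch_move(self, point: Point) -> None:
        # Reaching the reassignable area without releasing depresses the thumbstick.
        if self._began_in_mode_area and contains(self.reassignable_area, point):
            self.height = StickHeight.DEPRESSED

    def touch_up(self) -> None:
        # Discontinuation of contact returns the thumbstick to the default height.
        self._began_in_mode_area = False
        self.height = StickHeight.DEFAULT

    def operation(self) -> str:
        """Label of the operation a touch in the reassignable area currently controls."""
        if self.height is StickHeight.DEPRESSED:
            return "second_operation"
        return "first_operation"
```

In this sketch, a touch that begins directly within the reassignable area controls the first operation, a drag from the mode selection area controls the second operation for as long as contact continues, and any release restores the first operation for the next touch.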

Turning to FIG. 15, illustrated is a methodology 1500 for varying an input for a game receivable via a reassignable area of a display of a mobile touch-enabled device. At 1502, a toggle button, a thumbstick, and a mode selection button can be rendered on the display of the mobile touch-enabled device. The toggle button can be rendered on a toggle area of the display of the mobile touch-enabled device. Further, the thumbstick can be rendered on the reassignable area of the display of the mobile touch-enabled device. Moreover, the mode selection button can be rendered on a mode selection area of the display of the mobile touch-enabled device, where the mode selection area of the display and the reassignable area of the display are non-overlapping.

At 1504, a first touch can be detected. The first touch can be a drag from the mode selection area of the display to the reassignable area of the display. At 1506, a second touch can be detected within the reassignable area of the display. Further, the first touch and the second touch are detected without discontinuity of contact. At 1508, a first operation in the game can be controlled with the thumbstick represented as being at a depressed height in response to the second touch detected within the reassignable area of the display.

At 1510, a discontinuation of the second touch can be detected (e.g., a thumb of a user can be detected to be released from the display). At 1512, a third touch can be detected within the reassignable area of the display after detecting the discontinuation of the second touch. At 1514, a second operation in the game can be controlled with the thumbstick represented as being at a default height in response to the third touch detected within the reassignable area of the display.

At 1516, a fourth touch can be detected within the toggle area of the display when the thumbstick is rendered on the reassignable area of the display. At 1518, a switch from rendering the thumbstick to rendering a directional pad on the reassignable area of the display can be effectuated in response to the fourth touch detected within the toggle area of the display. At 1520, a fifth touch can be detected within the reassignable area of the display with the directional pad rendered on the reassignable area of the display. At 1522, a third operation in the game can be controlled in response to the fifth touch detected within the reassignable area of the display.

Referring now to FIG. 16, a high-level illustration of an exemplary computing device 1600 that can be used in accordance with the systems and methodologies disclosed herein is illustrated. For instance, the computing device 1600 may be used in a system that controls a game executed by a mobile touch-enabled device. The computing device 1600 includes at least one processor 1602 that executes instructions that are stored in a memory 1604. The instructions may be, for instance, instructions for implementing functionality described as being carried out by one or more components discussed above or instructions for implementing one or more of the methods described above. The processor 1602 may access the memory 1604 by way of a system bus 1606. In addition to storing executable instructions, the memory 1604 may also store game data, visual indicators, and so forth.

The computing device 1600 additionally includes a data store 1608 that is accessible by the processor 1602 by way of the system bus 1606. The data store 1608 may include executable instructions, game data, visual indicators, etc. The computing device 1600 also includes an input interface 1610 that allows external devices to communicate with the computing device 1600. For instance, the input interface 1610 may be used to receive instructions from an external computer device, from a user, etc. The computing device 1600 also includes an output interface 1612 that interfaces the computing device 1600 with one or more external devices. For example, the computing device 1600 may display text, images, etc. by way of the output interface 1612.

Additionally, while illustrated as a single system, it is to be understood that the computing device 1600 may be a distributed system. Thus, for instance, several devices may be in communication by way of a network connection and may collectively perform tasks described as being performed by the computing device 1600.

As used herein, the terms “component” and “system” are intended to encompass computer-readable data storage that is configured with computer-executable instructions that cause certain functionality to be performed when executed by a processor. The computer-executable instructions may include a routine, a function, or the like. It is also to be understood that a component or system may be localized on a single device or distributed across several devices.

Further, as used herein, the term “exemplary” is intended to mean “serving as an illustration or example of something.”

Various functions described herein can be implemented in hardware, software, or any combination thereof. If implemented in software, the functions can be stored on or transmitted over as one or more instructions or code on a computer-readable medium. Computer-readable media includes computer-readable storage media. A computer-readable storage medium can be any available storage medium that can be accessed by a computer. By way of example, and not limitation, such computer-readable storage media can comprise RAM, ROM, EEPROM, CD-ROM or other optical disk storage, magnetic disk storage or other magnetic storage devices, or any other medium that can be used to carry or store desired program code in the form of instructions or data structures and that can be accessed by a computer. Disk and disc, as used herein, include compact disc (CD), laser disc, optical disc, digital versatile disc (DVD), floppy disk, and Blu-ray disc (BD), where disks usually reproduce data magnetically and discs usually reproduce data optically with lasers. Further, a propagated signal is not included within the scope of computer-readable storage media. Computer-readable media also includes communication media including any medium that facilitates transfer of a computer program from one place to another. A connection, for instance, can be a communication medium. For example, if the software is transmitted from a website, server, or other remote source using a coaxial cable, fiber optic cable, twisted pair, digital subscriber line (DSL), or wireless technologies such as infrared, radio, and microwave, then the coaxial cable, fiber optic cable, twisted pair, DSL, or wireless technologies such as infrared, radio, and microwave are included in the definition of communication medium. Combinations of the above should also be included within the scope of computer-readable media.

What has been described above includes examples of one or more embodiments. It is, of course, not possible to describe every conceivable modification and alteration of the above devices or methodologies for purposes of describing the aforementioned aspects, but one of ordinary skill in the art can recognize that many further modifications and permutations of various aspects are possible. Accordingly, the described aspects are intended to embrace all such alterations, modifications, and variations that fall within the spirit and scope of the appended claims. Furthermore, to the extent that the term “includes” is used in either the detailed description or the claims, such term is intended to be inclusive in a manner similar to the term “comprising” as “comprising” is interpreted when employed as a transitional word in a claim.

Claims

- A method of controlling a game with a mobile touch-enabled device, comprising: rendering a thumbstick within an area of a display of the mobile touch-enabled device, wherein the thumbstick is moveable within the area of the display;controlling whether the thumbstick is represented within the area of the display as being at a default height or a depressed height, wherein the area of the display outside the thumbstick comprises a first visual indicator when the thumbstick is at the default height and a differing, second visual indicator when the thumbstick is at the depressed height;detecting a touch within the area of the display;controlling a first operation in the game based on the touch within the area of the display, wherein the first operation in the game differs based on whether the thumbstick is represented as being at the default height or the depressed height;changing visual information for the game based on the first operation in the game;and outputting the visual information for the game.

- The method of claim 1 , further comprising: receiving first data from a sensor of the mobile touch-enabled device indicative of an amount of tilt of a corner of the mobile touch-enabled device relative to a predetermined plane;controlling a second operation in the game based on the amount of the tilt of the corner of the mobile touch-enabled device;and changing the visual information for the game based on the second operation in the game.

- The method of claim 1 , wherein the amount of the tilt of the corner of the mobile touch-enabled device maps to analog input information for the game, and a level of the analog input information for the game remains constant when the amount of the tilt of the corner of the mobile touch-enabled device remains constant relative to the predetermined plane.

- The method of claim 1 , wherein a surface of the display of the mobile touch-enabled device for a preset orientation of the mobile touch-enabled device defines the predetermined plane.

- The method of claim 1 , further comprising identifying an orientation of the mobile touch-enabled device at a time of powering on the mobile touch-enabled device, wherein a surface of the display of the mobile touch-enabled device at the orientation at the time of powering on the mobile touch-enabled device defines the predetermined plane.

- The method of claim 1 , further comprising identifying an orientation of the mobile touch-enabled device at a time of starting the game, wherein a surface of the display of the mobile touch-enabled device at the orientation at the time of starting the game defines the predetermined plane.

- The method of claim 1 , further comprising identifying an orientation of the mobile touch-enabled device after a predetermined amount of time has lapsed without tilting the mobile touch-enabled device, wherein a surface of the display of the mobile touch-enabled device at the orientation identified after the predetermined amount of time has lapsed defines the predetermined plane.

- The method of claim 1 , further comprising: receiving second data from the sensor of the mobile touch-enabled device indicative of a second amount of tilt of a second corner of the mobile touch-enabled device relative to the predetermined plane;controlling a third operation in the game based on the second amount of the tilt of the second corner of the mobile touch-enabled device;and changing the visual information for the game based on the third operation in the game.

- The method of claim 8 , wherein the corner and the second corner are tilted together relative to the predetermined plane.

- The method of claim 1 , wherein outputting the visual information for the game further comprises rendering the visual information for the game on the display of the mobile touch-enabled device.

- The method of claim 1 , further comprising: receiving data from a sensor of the mobile touch-enabled device, the data indicative of rotation of the mobile touch-enabled device in a plane defined by a surface of the display of the mobile touch-enabled device;controlling a second operation in the game based on the rotation of the mobile touch-enabled device;and changing the visual information for the game based on the second operation in the game.

- The method of claim 1 , further comprising: receiving data from a sensor of the mobile touch-enabled device, the data indicative of movement of the mobile touch-enabled device one of upwards or downwards in a plane defined by a surface of the display of the mobile touch-enabled device;controlling a second operation in the game based on the movement of the mobile touch-enabled device;and changing the visual information for the game based on the second operation in the game.

- The method of claim 1 , further comprising: receiving a series of images from a camera of the mobile touch-enabled device;tracking movement of a head of a user from the series of images;controlling a second operation in the game based on the movement of the head of the user;and changing the visual information for the game based on the second operation in the game.

- The method of claim 1 , further comprising: receiving a series of images from a camera of the mobile touch-enabled device;detecting a facial gesture of a user from the series of images;controlling a second operation in the game based on the facial gesture of the user;and changing the visual information for the game based on the second operation in the game.

- A mobile touch-enabled device, comprising: a display;at least one processor;and memory that comprises computer-executable instructions that, when executed by the at least one processor, cause the at least one processor to perform acts including: rendering a thumbstick within an area of the display of the mobile touch-enabled device, wherein the thumbstick is moveable within the area of the display;controlling whether the thumbstick is represented within the area of the display as being at a default height or a depressed height, wherein the area of the display outside the thumbstick comprises a first visual indicator when the thumbstick is at the default height and a differing, second visual indicator when the thumbstick is at the depressed height;detecting a touch within the area of the display;controlling a first operation in a game based on the touch within the area of the display, wherein the first operation in the game differs based on whether the thumbstick is represented as being at the default height or the depressed height;changing visual information for the game based on the first operation in the game;and outputting the visual information for the game.

- The mobile touch-enabled device of claim 15 , further comprising: a sensor;wherein the memory further comprises computer-executable instructions that, when executed by the at least one processor, cause the at least one processor to perform acts including: receiving data from the sensor of the mobile touch-enabled device indicative of an amount of tilt of a corner of the mobile touch-enabled device relative to a predetermined plane;controlling a second operation in the game based on the amount of the tilt of the corner of the mobile touch-enabled device;and changing the visual information for the game based on the second operation in the game.

- A mobile touch-enabled device, comprising: a display;at least one processor;and memory that comprises computer-executable instructions that, when executed by the at least one processor, cause the at least one processor to perform acts including: displaying a thumbstick at a default height within an area of the display of the mobile touch-enabled device during a first time period, wherein the thumbstick is moveable within the area of the display, and wherein the area of the display outside the thumbstick comprises a first visual indicator when the thumbstick is at the default height;controlling a game during the first time period based on the thumbstick being at the default height and a first touch within the area of the display when the thumbstick is at the default height;displaying the thumbstick at a depressed height within the area of the display of the mobile touch-enabled device during a second time period, wherein the area of the display outside the thumbstick comprises a differing, second visual indicator when the thumbstick is at the depressed height;and controlling the game during the second time period based on the thumbstick being at the depressed height and a second touch within the area of the display when the thumbstick is at the depressed height.

- The mobile touch-enabled device of claim 17 , further comprising: a sensor;wherein the memory further comprises computer-executable instructions that, when executed by the at least one processor, cause the at least one processor to perform acts including: receiving data from the sensor of the mobile touch-enabled device indicative of an amount of tilt of a corner of the mobile touch-enabled device relative to a predetermined plane;and controlling the game based on the amount of the tilt of the corner of the mobile touch-enabled device.

- The mobile touch-enabled device of claim 17 , further comprising: a sensor;wherein the memory further comprises computer-executable instructions that, when executed by the at least one processor, cause the at least one processor to perform acts including: receiving data from the sensor of the mobile touch-enabled device indicative of rotation of the mobile touch-enabled device in a plane defined by a surface of the display of the mobile touch-enabled device;and controlling the game based on the rotation of the mobile touch-enabled device.

- The mobile touch-enabled device of claim 17 , further comprising: a sensor;wherein the memory further comprises computer-executable instructions that, when executed by the at least one processor, cause the at least one processor to perform acts including: receiving data from the sensor of the mobile touch-enabled device indicative of movement of the mobile touch-enabled device one of upwards or downwards in a plane defined by a surface of the display of the mobile touch-enabled device;and controlling the game based on the movement of the mobile touch-enabled device.
