U.S. Pat. No. 10,004,981

INPUT METHOD AND APPARATUS

Assignee: Nintendo Co., Ltd.

Issue Date: December 4, 2017

U.S. Patent No. 10,004,981: Input method and apparatus

Summary:

U.S. Patent No. 10,004,981 (the ‘981 Patent) describes a method for displaying a virtual steering wheel on a touch screen to control a car in driving games. The ‘981 Patent specifically relates to the Nintendo DS system and how to use its bottom touch screen in driving games. The DS is a dual-screen handheld console: the top screen is a standard display, while the bottom screen is touch-sensitive. Driving games can display a steering wheel on the bottom screen, and a player can choose to control the vehicle with this virtual wheel. The virtual steering wheel is intended to give players finer control over their car than the traditional D-pad control scheme.

Abstract:

A vehicle simulation such as for example a driving game can be provided by displaying an image of a steering wheel on a touch sensitive screen. Touch inputs are used to control the rotational orientation of displayed steering wheel. The rotational orientation of the displayed steering wheel is used to apply course correction effects to a simulated vehicle. Selective application of driver assist and different scaling of touch inputs may be provided.

Illustrative Claim:

1. A handheld device comprising: a housing shaped and dimensioned for being held by a hand; a touch sensitive screen disposed on the housing; a graphics processor operatively connected to the touch sensitive screen, the graphics processor generating display of a virtual object on the touch sensitive screen; and a processor operatively connected to the touch sensitive screen and to the graphics processor, the processor being responsive to input sensed by the touch sensitive screen to detect movements along a multiplicity of paths on the screen, the processor defining vectors in response to said detected touch movements and causing the orientation of the displayed virtual object to change in response to the defined vectors.

Illustrative Figure

Description

FIG. 1A shows a further illustrative view of the exemplary illustrative non-limiting video game play platform of FIG. 1;

FIG. 1B shows a schematic block architectural diagram of the FIG. 1 exemplary illustrative non-limiting video game play platform;

FIG. 2 shows a more detailed view of exemplary top and bottom screen displays of FIG. 1;

FIG. 2A shows an example alternative view of exemplary top and bottom screen displays in which the steering wheel view of FIG. 2 is replaced with a zone pattern;

FIGS. 3A and 3B show exemplary illustrative non-limiting control settings/input mode selection;

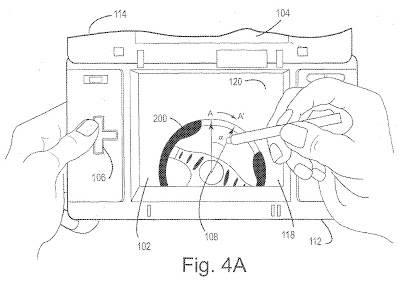

FIGS. 4A and 4B show exemplary user input control using a stylus;

FIG. 5 shows exemplary illustrative non-limiting control input zones selectively providing user assist and non-assist regions;

FIG. 6 shows exemplary user input control using a wearable thumb-mounted touch actuator;

FIG. 6A shows a more detailed view of an exemplary wearable thumb-mounted touch actuator;

FIGS. 7A and 7B show exemplary illustrative non-limiting visual effects; and

FIG. 8 shows a flowchart of an exemplary illustrative non-limiting steering-control routine.

DETAILED DESCRIPTION

FIG. 1 shows an exemplary illustrative non-limiting implementation of a touch screen based handheld video game platform 100 that provides vehicle simulation such as, for example, a driving game. In the particular example shown, handheld video game platform 100 may comprise, for example, a Nintendo DS video game system including dual screens 102, 104. In the example shown, the lower screen 102 provides a touch-sensitive surface. In this particular example, graphic 200 comprising the image of a steering wheel is displayed on the touch-sensitive screen 102. Steering wheel graphic 200 represents the steering wheel of a vehicle 202, which in this particular case is displayed on the upper screen 104. As will be understood by those skilled in the art, upper screen 104 may display the driver's view of the vehicle (i.e., as looking out of the vehicle's windshield from the driver's position), or it may display the third person view showing the vehicle's position as the vehicle travels down a path such as, for example, a racetrack.

In the exemplary illustrative non-limiting implementation shown, rotating the displayed steering wheel image 200 clockwise causes the simulated vehicle 202 to turn to the right. Similarly, rotating the displayed steering wheel image 200 counterclockwise causes the simulated vehicle 202 to steer to the left. The amount of steering wheel 200 rotation determines the amount of correction to the course that simulated vehicle 202 steers. Just as in a real motor vehicle, turning the steering wheel 200 more to the left causes the simulated vehicle 202 to steer more toward the left, while turning the steering wheel more to the right causes the simulated vehicle to steer more to the right.

In the exemplary illustrative non-limiting implementation, the user may apply different types of control inputs to specify the rotational orientation of displayed steering wheel 200. In the mode and configuration shown in FIG. 1, the user may depress a momentary on/off button 106 with his or her left thumb to cause the displayed steering wheel 200 to rotate counterclockwise. Similarly, the user may depress a momentary on button 108 with his or her right thumb to control displayed steering wheel 200 to rotate in a clockwise direction. In the example shown, depressing one of momentary on buttons 106, 108 for a longer period of time causes more rotation of displayed steering wheel 200. For example, depressing button 106 for a very short period of time may cause displayed steering wheel 200 to rotate counterclockwise a small number of degrees, and then return to an initial neutral position. Depressing button 106 for a longer time period may cause displayed steering wheel 200 to rotate by a further angle counterclockwise. Holding the button 106 or 108 down may cause the displayed steering wheel 200 to rotate to a maximum counterclockwise or clockwise position, respectively, and remain in that position until the button is released. Release of both buttons 106, 108 may cause steering wheel 200 to rotate (at a predetermined rate) back to an initial and/or neutral position. In this way, using a simple momentary on-button depression, it is possible to provide a realistic simulated steering wheel control for a vehicle simulation such as a racing car, boat, spacecraft, aircraft, or any other type of vehicle.
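The button-driven behavior described above can be sketched roughly as follows. This is a minimal illustration, not the patented implementation: the class name `ButtonWheel`, the maximum angle, and both rates are hypothetical values chosen for the example, and the handling of both buttons held at once is an assumption the text does not address.

```python
from dataclasses import dataclass

MAX_ANGLE = 90.0     # assumed maximum wheel rotation, in degrees
TURN_RATE = 180.0    # assumed degrees per second while a button is held
RETURN_RATE = 120.0  # assumed "predetermined rate" for returning to neutral


@dataclass
class ButtonWheel:
    angle: float = 0.0  # positive = clockwise (steer right)

    def update(self, left_held: bool, right_held: bool, dt: float) -> float:
        """Advance the wheel by one time step of dt seconds."""
        if left_held and not right_held:
            # Longer press means more counterclockwise rotation, up to the limit.
            self.angle = max(-MAX_ANGLE, self.angle - TURN_RATE * dt)
        elif right_held and not left_held:
            self.angle = min(MAX_ANGLE, self.angle + TURN_RATE * dt)
        elif self.angle > 0:
            # No single button held (assumption: both held counts as released).
            self.angle = max(0.0, self.angle - RETURN_RATE * dt)
        else:
            self.angle = min(0.0, self.angle + RETURN_RATE * dt)
        return self.angle
```

Calling `update` once per frame with the current button states yields a wheel that swings further the longer a button is held and drifts back to neutral after release.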

FIG. 1A shows the exemplary illustrative non-limiting video game platform 100 in more detail. As shown in FIG. 1A, the lower, touch-sensitive screen 102 may be mounted on a lower housing portion 112, whereas an upper screen 104 may be mounted on an upper housing portion 114 that is attached to the lower housing portion by a hinge 116. The lower and upper screens 102, 104 may each comprise color liquid crystal displays. In the example shown, the lower screen 102 includes a touch-sensitive surface 118 that covers substantially the entire surface area of the lower screen. A stylus 120 (which may be stored when not in use within a stylus cavity 122 within the lower or upper housing 112, 114) may be used to provide touch inputs via touch-sensitive surface 118. Various input devices mounted on the lower housing 112, including previously-mentioned push button 106 (which may be a conventional cross-switch having four momentary on positions and one mutual off position), momentary on buttons 108, 110, buttons 124, 126 and other input devices, may also be provided. Such buttons may be used to control steering wheel position (as explained above) as well as for controlling simulated acceleration, simulated brakes, and the like.

The video game platform 100 shown in FIG. 1A may play a video game and provide corresponding interactive video game displays and audio based on contents of a memory card 128 that may be removably inserted into the video game platform 100. In more detail, referring to FIG. 1B, the memory cartridge 128 may include a read only memory 128a and a random access memory 128b. The read only memory 128a may store a variety of information including video game software to be executed by the video game platform 100. As further shown in FIG. 1B, the exemplary illustrative non-limiting video game platform 100 may include first and second graphics processing units 24, 26 coupled to corresponding video RAMs 23, 25, respectively. A CPU core 21 may access the contents of cartridge 128 via a connector 28 and a bus 28a. CPU core 21 reads video game instructions and other information from the cartridge 128, and controls the graphics processors 24, 26 to display resulting video game images on liquid crystal displays 102, 104. Inputs provided by touch panel 118, operating switches 106, 108 and other inputs are provided to CPU core 21 via an interface circuit 27. Audio can also be played back via a loudspeaker 15.

FIG. 2 shows in more detail the exemplary illustrative non-limiting screen 102, 104 displays shown in FIG. 1. As shown in FIG. 2, lower screen 102 displays graphical image 200 of the steering wheel. The steering wheel image 200 shown includes a conventional circular rim portion 204 and an inner hub portion 206. A button 208 at the center of the hub portion 206 may represent a conventional vehicle horn button. Grip areas 210, 212 are provided to resemble portions of a steering wheel that are typically gripped by the driver's hands. In the exemplary illustrative non-limiting implementation shown, user input controls the displayed steering wheel 200 to rotate clockwise or counterclockwise by a controllable amount. Also displayed on display screens 102, 104 is a variety of other interesting interactive graphics. For example, FIG. 2 shows an image of a race car or other vehicle 202 navigating a track 214. The track may pass through a three-dimensional landscape such as buildings 216, mountains 218 and the like. Other vehicles 220 may be displayed on the track, and the object of the game may be to maneuver simulated vehicle 202 as rapidly as possible down track 214 in order to win a race against other simulated vehicles 220. A virtual speedometer 222 may be displayed to show the speed at which the simulated vehicle 202 is traveling down the track. A simulated gear shift display 224 may be displayed showing the transmission gear that the simulated vehicle is operated in. An image of track 214 as if viewed from an airplane or satellite may be displayed to inform the user of the position of simulated vehicle 202 on the track. Various other informational displays may be provided showing lap number, lap time, lap record and the like.

FIG. 2A shows an exemplary illustrative non-limiting variation of the FIG. 2 display in which the steering wheel graphic 200 is replaced with a series of zones 400a-400e. In the particular example shown, zones 400a-400e may comprise concentric circular regions such as the top half of an archery target. These zones 400a-400e may, for example, define different sensitivity levels for touch provided within the zones. For example, a given change in touch position within the center zone 400a may exert greater change to the path of simulated vehicle 202, whereas this same given change in touch position within an outer zone 400d may exert less change to the vehicle's path.
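One way to picture zone-dependent sensitivity is the sketch below. It is only an illustration under stated assumptions: the zone radii, the sensitivity multipliers, and the function names are hypothetical, and the text does not specify how zones 400a-400e are actually sized or weighted.

```python
import math

# Hypothetical (outer radius in pixels, sensitivity multiplier) pairs,
# innermost zone first. The values are illustrative only.
ZONES = [(20.0, 1.0), (40.0, 0.8), (60.0, 0.6), (80.0, 0.4), (100.0, 0.2)]


def zone_sensitivity(x, y, cx, cy):
    """Return the multiplier of the concentric zone containing (x, y)."""
    r = math.hypot(x - cx, y - cy)
    for outer_radius, sensitivity in ZONES:
        if r <= outer_radius:
            return sensitivity
    return ZONES[-1][1]  # beyond the last ring: treat as the outermost zone


def steering_change(dx, x, y, cx, cy):
    """Scale a horizontal touch displacement dx by the zone it occurs in."""
    return dx * zone_sensitivity(x, y, cx, cy)
```

With these placeholder values, the same 10-pixel drag changes steering by 10 units in the center zone but by only 4 units in the fourth zone.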

In the exemplary illustrative non-limiting implementation shown, the user may select different input modes for controlling and/or selecting the input controls used to determine and control the rotational orientation of displayed steering wheel 200. As shown in FIG. 3A, an initial screen may display a variety of options including control settings 230. Selecting the control settings option 230 may allow the user to select between three different control input modes: control pad mode 232, stylus mode 234, and wrist strap mode 236.

In the example shown, the control pad mode 232 operates as described in connection with FIG. 1; that is, depressing the momentary on buttons 106, 108 causes the displayed steering wheel image 200 to rotate counterclockwise and clockwise, respectively. The stylus mode 234 is used when the user wishes to control the orientation of displayed steering wheel 200 using the stylus 120 and touch-screen 118. The user can select the wrist strap mode 236 to control the orientation of displayed steering wheel 200 using a thumb-mounted nib that the user moves in contact with touch-sensitive screen 118.

As shown in FIG. 4A, in the stylus mode 234, the user may use stylus 120 in contact with touch-sensitive screen 118 to control the rotational orientation of the displayed steering wheel 200. The technology herein provides a method for implementing a steering-wheel simulator for a touch-panel device. This idea has proven very effective in a video-game environment. This implementation has been tested on an LCD-based touch-panel device 100. Three exemplary illustrative sections are provided in an exemplary illustrative non-limiting implementation:

(a) A graphic 200 of a steering-wheel is drawn on the LCD display 102. The input from the stylus 120 is used to determine a vector from the center 208 of the steering-wheel 200 to the point being touched on the LCD display 102. The angle between any two consecutive vectors is used to determine the direction and amount of rotation of the steering-wheel.

(b) While the input from the steering-wheel itself is sufficient to simulate, for example, the driving of an automobile, the input is further analyzed to make the simulated vehicle more controllable for the user. Specific zones are defined (centered around the wheel at approximately 45 degrees from center in either direction). See FIG. 5. The user input is constantly compared to these zones to determine exactly how “frantically” the user is responding to the current position and orientation of the simulated vehicle. This allows the simulation to actually “take over” (for short periods of time) when it deems it appropriate to keep the vehicle centered on the road.

(c) As the simulation progresses, the user is given visual feedback (via translation and scaling of the steering-wheel graphic 200) about the current environment that the vehicle is experiencing. See FIGS. 7A and 7B. This may include collisions with other cars or static objects, rough road, etc. This feedback allows the user to have a much more vivid experience in the simulation than merely seeing other cars or objects close to his or her vehicle.

In more detail, referring to FIGS. 4A and 4B, in an example illustrative non-limiting implementation, starting and ending positions of stylus 120 can be sensed by touch screen 118 and used to define starting and ending vectors A, A′ or B, B′. The angle α between initial and final vectors may be used to determine the amount of rotation of steering wheel 200. The larger the angle α, the more the steering wheel 200 rotates. Note that in this example, the further away stylus 120 is placed from the center 208 of steering wheel 200, the more linear motion of the stylus 120 is required to rotate the steering wheel 200 by a given amount. For example, when the stylus 120 is placed directly onto the image of steering wheel 200, it is relatively close to the center 208 of the steering wheel, and so a relatively small displacement between beginning and ending stylus points is needed to rotate the steering wheel by a given angle α. In contrast, as shown in FIG. 4B, when the stylus 120 position is relatively far away from the center 208 of steering wheel 200, the stylus needs to be moved a relatively large distance to achieve the same rotation angle α of steering wheel 200.
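The angle α between the starting and ending vectors can be computed with standard two-dimensional vector math, as in the sketch below. The function name `rotation_angle` and the sign convention are assumptions made for this example: with screen coordinates in which y grows downward, a positive result corresponds to clockwise rotation as seen on the screen.

```python
import math


def rotation_angle(center, start, end):
    """Signed angle in degrees between the vectors center->start and center->end.

    Assumes screen coordinates (y grows downward), so a positive value
    means clockwise rotation on screen.
    """
    ax, ay = start[0] - center[0], start[1] - center[1]
    bx, by = end[0] - center[0], end[1] - center[1]
    cross = ax * by - ay * bx  # proportional to sin(alpha)
    dot = ax * bx + ay * by    # proportional to cos(alpha)
    return math.degrees(math.atan2(cross, dot))


center = (0, 0)
# The same 10-pixel horizontal drag, close to and far from the wheel center.
near = rotation_angle(center, (0, -10), (10, -10))
far = rotation_angle(center, (0, -50), (10, -50))
```

Here `near` is 45 degrees while `far` is roughly 11.3 degrees, which matches the observation that a touch farther from the center needs more linear motion for the same rotation.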

This exemplary illustrative functionality allows the user to flexibly determine the amount of control (finer or coarser) over the orientation of steering wheel 200 by simply locating stylus 120 relative to the center 208 of steering wheel 200. The further away the user places the tip of stylus 120 from the center 208 of the steering wheel 200, the more the user needs to move the stylus tip to achieve the same amount of steering wheel rotation. The user does not need to place the tip of the stylus 120 directly on the steering wheel 200 to move the steering wheel; placing the stylus anywhere on the touch screen 118 in the exemplary illustrative non-limiting implementation is sufficient to effect rotation of the steering wheel 200. The user's ability to select the proportionality between the amount of movement of the stylus 120 tip and the amount of rotation of steering wheel 200, depending upon the distance of the stylus tip from the steering wheel center 208, gives users ergonomic choices to match their skill level, hand-to-eye coordination and other ergonomic effects. Note also that in the exemplary illustrative non-limiting implementation, it is not necessary for the stylus 120 tip to be moved arcuately in order to effect rotation of steering wheel 200. The user may move the tip of stylus 120 in an entirely linear fashion or along any convenient path in any desired direction and the exemplary illustrative non-limiting implementation will detect such movement, automatically define a vector from the center 208 of the steering wheel to the current stylus position, and effect rotation of steering wheel 200 accordingly.

It should be understood that the display of steering wheel 200 is not essential to the control of the game. Display of a graphic of steering wheel 200 is in some contexts very convenient in that it gives the user an immediate intuitive understanding of the different ways in which different touch screen inputs steer the simulated vehicle 202. However, players often find that once they understand this steering phenomenon and functionality, they stop looking at the displayed steering wheel 200 and concentrate their view on the simulated vehicle 202. This is especially true when the player controls the simulated vehicle 202 to travel down the track at high simulated speed (e.g., over 100 miles per hour). In such high speed operation, the player's eye may be extremely focused on the horizon of the top screen 104 and the player may cease looking at steering wheel 200 altogether or he or she may only see the simulated steering wheel from peripheral vision. In such instances, it may sometimes be desirable to replace the view of steering wheel 200 with some other view or to allow the user to select a different view.

As illustrated in FIG. 5, exemplary illustrative non-limiting implementations may define different zones on touch screen 118. For example, FIG. 5 shows four zones: “zone 1,” “zone 2,” “zone 3” and “zone 4.” The illustrated zone 1 and zone 4 may be defined as “frantic” zones in the sense that when a user's stylus has entered those zones, it is likely that the user is not very skilled and is trying to exert too much correction or too rapid correction of the steering wheel 200 orientation to be successful in playing the vehicle simulation. While realism is generally desired in vehicle simulation and driving games, it is also important in general for video game play to be fun. Therefore, the exemplary illustrative non-limiting implementation, upon detecting that the stylus input has entered zone 1 or zone 4 shown in FIG. 5, may exert an additional “assist” force that in part saves the user from the consequences of his or her oversteering. In this exemplary illustrative non-limiting implementation, this active assist may, for example, prevent the simulated vehicle 202 from leaving the track even though the extreme amount of steering correction the user effects by placing the stylus in zone 1 or zone 4 would otherwise immediately cause the vehicle to crash. Such assist functions can make game play more fun and rewarding for inexperienced users while generally not affecting game play of more experienced users, who will generally not attempt to oversteer by causing their stylus 120 to enter zone 1 or zone 4. The amount of assist can be carefully selected to permit experienced players to rapidly control the course of vehicle 202 while saving less experienced players from frustrating crashes and accidents.
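A rough sketch of zone-based assist follows. The mapping of zones to angle ranges is an assumption for illustration (the text only says the zones are placed at approximately 45 degrees from center in either direction); `ASSIST_STRENGTH`, `classify_zone`, `apply_assist` and the `track_correction` parameter are hypothetical names and values.

```python
FRANTIC_LIMIT = 45.0   # degrees from center; "approximately 45 degrees" in the text
ASSIST_STRENGTH = 0.5  # hypothetical fraction of the correction applied as assist


def classify_zone(wheel_angle):
    """Map a wheel angle (degrees, negative = left) to zones 1..4.

    Assumed layout: zone 1 = far left, zone 2 = left, zone 3 = right,
    zone 4 = far right. Zones 1 and 4 are the "frantic" zones.
    """
    if wheel_angle < -FRANTIC_LIMIT:
        return 1
    if wheel_angle < 0:
        return 2
    if wheel_angle <= FRANTIC_LIMIT:
        return 3
    return 4


def apply_assist(wheel_angle, track_correction):
    """Pull a frantic steering input part of the way toward track_correction,
    the steering angle that would keep the vehicle on the track (assumed known).
    Inputs in zones 2 and 3 pass through unchanged.
    """
    if classify_zone(wheel_angle) in (1, 4):
        return wheel_angle + ASSIST_STRENGTH * (track_correction - wheel_angle)
    return wheel_angle
```

With these placeholder values, an extreme input of 80 degrees when 20 degrees would hold the track is softened to 50 degrees, while a moderate 30-degree input is left alone.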

FIG. 6 shows an additional exemplary illustrative non-limiting user input mode 236 that uses a wrist strap-based wearable touch screen actuator. In the example shown, a wrist strap 280 attached to the lower housing 112, and generally used to carry the platform 100 in a suspended manner from the user's wrist when not in use, may provide a thumb loop 282 to which a thumb nib 284 is attached (see FIG. 6A). The user can insert his or her thumb into the thumb loop 282 and use the thumb nib 284 in contact with touch screen 118 to provide the touch input for controlling the steering wheel 200 orientation. In the example shown, the user may but need not place the thumb nib 284 in direct contact with the steering wheel 200 image. In this mode, the exemplary illustrative non-limiting implementation scales the amount of input from the thumb nib relative to stylus 120 motion so that a smaller amount of thumb movement accomplishes the same rotational orientation effect on steering wheel 200 as a larger amount of stylus 120 motion. The exemplary illustrative non-limiting implementation performs this scaling because most users will not move their thumbs as much as they will move a stylus 120. The same sort of vector control shown in FIGS. 4A and 4B may be applied to the wrist strap-based control input mode 236, or alternatively, scaling may be provided so the same amount of steering wheel 200 orientation change may be effected for a given displacement of the thumb nib 284 on touch screen 118 irrespective of the starting and ending positions of the thumb nib relative to steering wheel center 208.

FIGS. 7A and 7B show example additional visual effects indicating collisions with virtual objects, passage over steep cliffs or speed bumps or other obstacles, etc. As shown in FIGS. 7A and 7B, the image of steering wheel 200 may appear to vibrate (e.g., become smaller and larger in an alternating fashion or moving from side to side) to provide additional realism. The effect is somewhat similar to what a race car driver would see as his head and body vibrated or moved relative to the position of the steering wheel when passing over speed bumps or other obstacles.
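As a very small sketch of such a vibration effect, the wheel graphic could alternate between a slightly larger and a slightly smaller scale, and between a right and a left offset, on successive frames. The function name `wheel_shake` and the default amplitude and offset are hypothetical; the text does not give concrete values.

```python
def wheel_shake(frame, amplitude=0.1, offset_px=3):
    """Return (scale, x_offset) for the wheel graphic on a given frame.

    Even frames draw the wheel larger and shifted right; odd frames draw it
    smaller and shifted left, which reads as vibration when animated.
    """
    sign = 1 if frame % 2 == 0 else -1
    return 1.0 + sign * amplitude, sign * offset_px
```

A renderer would apply the returned scale and offset to the wheel sprite only while a collision or rough-road event is active.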

The flowchart of the exemplary illustrative non-limiting steering-control routine shown in FIG. 8 takes input from the touch-panel 118 by examining the changes in input from one frame to the next. Touch is sensed (block 502). The initial point of contact (“touch”) is saved (blocks 506, 508) in a touch pad initial position register (block 552) and used as the “center” for all future steering calculations. Each time the user lifts the stylus 120 from the screen 118, the “virtual steering wheel” 200 is centered (block 504). Such recentering can take place over time at a predetermined rate of reducing current steering angle toward zero. Each new touch determines a new center for the steering input (blocks 510, 512). Any dragging of the stylus 120 to the left or right is scaled (block 514) and passed on to the functions described below. This scaling is done for two reasons: (1) it allows the designer to adjust the feel of the steering input to suit the simulation, and (2) it allows different types of styluses 120 to be supported, some of which do not have the range of movement that a typical “pen” device might have. For example, a “thumb-pad” 284 is limited in range by the user's thumb and, therefore, has a much larger scaling factor applied to it, because most users simply cannot move it as far to the left or right as a pen.
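The per-frame routine described above might be sketched as follows. This is an illustrative approximation of the described flow, not the flowchart itself: the class name `TouchSteering`, the use of only the x coordinate, and the `scale` and `recenter_rate` values are assumptions.

```python
class TouchSteering:
    def __init__(self, scale=1.0, recenter_rate=5.0):
        self.scale = scale                  # assumed per-device scaling factor
        self.recenter_rate = recenter_rate  # assumed steering units removed per frame
        self.origin_x = None                # initial touch position (the "center")
        self.steering = 0.0

    def update(self, touch_x):
        """Process one frame; touch_x is None when nothing touches the screen."""
        if touch_x is None:
            # Stylus lifted: forget the center and ease steering back to zero.
            self.origin_x = None
            if self.steering > 0:
                self.steering = max(0.0, self.steering - self.recenter_rate)
            else:
                self.steering = min(0.0, self.steering + self.recenter_rate)
        elif self.origin_x is None:
            # New touch: its position becomes the center for later drags.
            self.origin_x = touch_x
        else:
            # Drag: scaled left/right displacement from the saved center.
            self.steering = (touch_x - self.origin_x) * self.scale
        return self.steering
```

A thumb-operated input could use the same class with a larger `scale`, so that a short thumb movement produces the same steering value as a longer pen stroke.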

At any point along the track 214, the steering-control routine can determine the angle that the car 202 should be pointed in order to be oriented directly along the road (block 516). It then uses this angle, along with the current orientation of the player's car 202, to determine a “helper” adjustment angle that can be scaled and applied to (blended with) the current user input (block 518). This allows the player to feel as though he or she is driving the car 202 by moving a stylus left and right on the touch-pad but still not require precise inputs to keep the simulation fun. Note that in the exemplary illustrative non-limiting implementation described, the scaling factor (amount of scaling) is very important and must be determined by repeated play-testing. Too little scaling requires the user to make too many small adjustments; too much scaling masks any user input and makes the simulation “automatic” and not much fun!
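The blending of a “helper” angle with user input could look something like the sketch below. The additive blend, the function names, and the default `helper_scale` of 0.3 are assumptions for illustration; the text states only that the helper angle is scaled and blended, with the factor found by play-testing.

```python
def helper_angle(road_angle, car_angle):
    """Smallest signed turn (degrees) that points the car along the road.

    Both inputs are headings in degrees; the result lies in [-180, 180).
    """
    return (road_angle - car_angle + 180.0) % 360.0 - 180.0


def blend_steering(user_input, road_angle, car_angle, helper_scale=0.3):
    """Add a scaled helper correction to the user's steering input.

    helper_scale is a hypothetical tuning value: near 0 the player gets no
    help, near 1 the correction dominates and the game "drives itself".
    """
    return user_input + helper_scale * helper_angle(road_angle, car_angle)
```

The wrap-around in `helper_angle` matters near heading 0: a car at 350 degrees on a road at 10 degrees needs a 20-degree correction, not a 340-degree one.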

The “helper” adjustment is not applied to the user's input at all times. When the player makes an extreme input (i.e., moves the stylus 120 more than one-half of the screen width), the “helper” function is disabled completely and only the user's “real” input is used. This allows the player to turn the car sharply to the left or right as required by the game. This particular exemplary illustrative non-limiting application implements a “drift-mode” that allows the player to slide around corners in a similar fashion to real auto racing. This move would not be possible if the car 202 were kept oriented along the direction of the track.
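The rule for disabling the helper can be expressed as a simple threshold check, sketched below. The function name, its parameters, and the default width of 256 pixels (the Nintendo DS screen width, which the text does not state) are assumptions for this example.

```python
def combined_steering(drag_px, user_input, helper, screen_width=256):
    """Return the steering value to apply this frame.

    drag_px is the horizontal stylus displacement since the touch began.
    If it exceeds half the screen width, the input counts as extreme and
    the helper correction is dropped, leaving only the user's own input.
    """
    if abs(drag_px) > screen_width / 2:
        return user_input
    return user_input + helper
```

With the assumed width, a 200-pixel drag bypasses the helper entirely, which is what would let a sharp turn or drift go through unmodified.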

While the technology herein has been described in connection with exemplary illustrative non-limiting embodiments, the invention is not to be limited by the disclosure. For example, although a race simulation is shown, the input may be used to control any sort of vehicle or object or any other simulation or game input parameter. While a simulated steering wheel 200 has been described for purpose of illustration, other simulated input devices (e.g., joy sticks, control levers, etc.) may be displayed and controlled instead or in addition, or display of the steering wheel can be eliminated. The invention is intended to be defined by the claims and to cover all corresponding and equivalent arrangements whether or not specifically disclosed herein.

Claims

- A handheld device comprising: a housing shaped and dimensioned for being held by a hand; a touch sensitive screen disposed on the housing; a graphics processor operatively connected to the touch sensitive screen, the graphics processor generating display of a virtual object on the touch sensitive screen; and a processor operatively connected to the touch sensitive screen and to the graphics processor, the processor being responsive to input sensed by the touch sensitive screen to detect movements along a multiplicity of paths on the screen, the processor defining vectors in response to said detected touch movements and causing the orientation of the displayed virtual object to change in response to the defined vectors.

- The handheld device of claim 1 wherein the housing is shaped and dimensioned such that when the hand grasps the housing, a thumb of the hand can reach and touch at least a portion of the touch sensitive screen, and the processor and the graphics processor are disposed in the housing.

- The handheld device of claim 1 wherein the virtual object comprises a virtual steering wheel.

- The handheld device of claim 1 wherein the processor changes orientation of the virtual object by smaller amounts in response to the processor detecting touch movements along paths on the screen that are disposed further from a user.

- The handheld device of claim 1 wherein the processor measures an angle α between starting and ending vectors of touch movement along a path on the screen, and uses the angle α to determine an amount to change the orientation of the virtual object.

- The handheld device of claim 1 wherein the processor selects proportionality between amount of detected touch movement and amount of change of orientation of the virtual object depending on distance of the detected touch movement relative to a reference.

- The handheld device of claim 1 wherein the processor calculates an angle between successive vectors to determine direction and amount to change the orientation of the virtual object.

- The handheld device of claim 1 wherein the processor controls the graphics processor to provide at least one of translating and scaling the virtual object.

- The handheld device of claim 1 wherein the touch sensitive screen is sensitive to touch by a stylus or by a digit of a human hand.

- The handheld device of claim 1 wherein the displayed virtual object changes orientation by rotating about an axis that is perpendicular to the screen.

- In a handheld device of the type comprising a housing shaped and dimensioned for being held by at least one hand, a screen disposed on the housing, and a graphics processor operatively connected to the screen, the graphics processor generating display of a virtual object on the screen, a processor operatively connected to the graphics processor, and a non-transitory memory operatively connected to the processor, the non-transitory memory storing information comprising instructions that when executed by the processor control the processor to: (a) detect input movements along a multiplicity of paths on the screen, (b) define vectors in response to said detected input movements on the screen, and (c) cause the orientation of the displayed virtual object to change in response to the defined vectors.

- The handheld device of claim 11 wherein the housing is shaped and dimensioned such that when the hand grasps the housing, a thumb of the hand can reach and touch at least a portion of the screen, and the processor and the graphics processor are disposed in the housing.

- The handheld device of claim 11 wherein the virtual object comprises a virtual steering wheel.

- The handheld device of claim 11 wherein the instructions control the processor to change orientation of the virtual object by smaller amounts in response to the processor detecting input movements along paths on the screen further from a user.

- The handheld device of claim 11 wherein the instructions control the processor to measure an angle α between starting and ending vectors of movement along a path on the screen, and to use the angle α to determine an amount to change the orientation of the virtual object.

- The handheld device of claim 11 wherein the instructions control the processor to select proportionality between amount of movement on the screen and amount of change of orientation of the virtual object depending on distance of the input movement on the screen relative to a reference.

- The handheld device of claim 16 wherein the reference comprises a position on the screen such as a part of the displayed virtual object.

- The handheld device of claim 11 wherein the instructions control the processor to calculate an angle between successively-defined vectors to determine direction and amount to change the orientation of the virtual object.

- The handheld device of claim 11 wherein the instructions control the graphics processor to provide at least one of translating and scaling the virtual object.

- The handheld device of claim 11 wherein the screen is sensitive to touch by a stylus or a digit of a human hand.

- The handheld device of claim 11 wherein the displayed virtual object changes orientation by rotating about an axis that is perpendicular to the screen.

- A method of operating a handheld device of the type comprising a housing shaped and dimensioned for being held by at least one hand, a screen disposed on the housing, and a processing arrangement including a processor and a graphics processor operatively connected to the screen, the graphics processor generating display of a virtual object on the screen, the method comprising performing with the processing arrangement: detecting input movements along a multiplicity of paths on the screen, defining vectors in response to said detected input movements on the screen, and causing the orientation of the displayed virtual object to change in response to the defined vectors.

- The method of claim 22 wherein the virtual object comprises a virtual steering wheel.

- The method of claim 22 further including changing orientation of the virtual object by smaller amounts in response to the processor detecting input movements along paths on the screen further from a user.

- The method of claim 22 further including measuring an angle α between starting and ending vectors of movement along a path on the screen, and using the angle α to determine an amount to change the orientation of the virtual object.

- The method of claim 22 further including selecting proportionality between amount of movement on the screen and amount of change of orientation of the virtual object depending on distance of the input movement on the screen relative to a reference position on the screen.

- The method of claim 22 further including calculating an angle between successively-defined vectors, and specifying direction and amount to change the orientation of the virtual object in response to the calculated angle.

- The method of claim 22 further including providing visual feedback comprising at least one of translating and scaling the virtual object.

- The method of claim 22 wherein the screen is sensitive to touch by a stylus or by a digit of a human hand.

- The method of claim 22 further including changing orientation of the displayed virtual object by rotating the displayed virtual object about an axis that is perpendicular to the screen.

Disclaimer: Data collected from the USPTO and may be malformed, incomplete, and/or otherwise inaccurate.